
Jared Wen committed

Update README.md

Update 3 benchmark results, WeChat, Discord, and Z.ai API links

README.md CHANGED

@@ -1,201 +1,219 @@


---
license: mit
language:
- zh
- en
- fr
- es
- ru
- de
- ja
- ko
pipeline_tag: image-to-text
library_name: transformers
---

# GLM-OCR

<div align="center">
<img src=https://raw.githubusercontent.com/zai-org/GLM-OCR/refs/heads/main/resources/logo.svg width="40%"/>
</div>
<p align="center">
    👋 Join our <a href="https://raw.githubusercontent.com/zai-org/GLM-OCR/refs/heads/main/resources/wechat.jpg" target="_blank">WeChat</a> and <a href="https://discord.gg/QR7SARHRxK" target="_blank">Discord</a> community
    <br>
    📍 Use GLM-OCR's <a href="https://docs.z.ai/guides/vlm/glm-ocr" target="_blank">API</a>
</p>

| 28 |

+

## Introduction

|

| 29 |

+

|

| 30 |

+

GLM-OCR is a multimodal OCR model for complex document understanding, built on the GLM-V encoder–decoder architecture. It introduces Multi-Token Prediction (MTP) loss and stable full-task reinforcement learning to improve training efficiency, recognition accuracy, and generalization. The model integrates the CogViT visual encoder pre-trained on large-scale image–text data, a lightweight cross-modal connector with efficient token downsampling, and a GLM-0.5B language decoder. Combined with a two-stage pipeline of layout analysis and parallel recognition based on PP-DocLayout-V3, GLM-OCR delivers robust and high-quality OCR performance across diverse document layouts.

|

| 31 |

+

|

| 32 |

+

**Key Features**

|

| 33 |

+

|

| 34 |

+

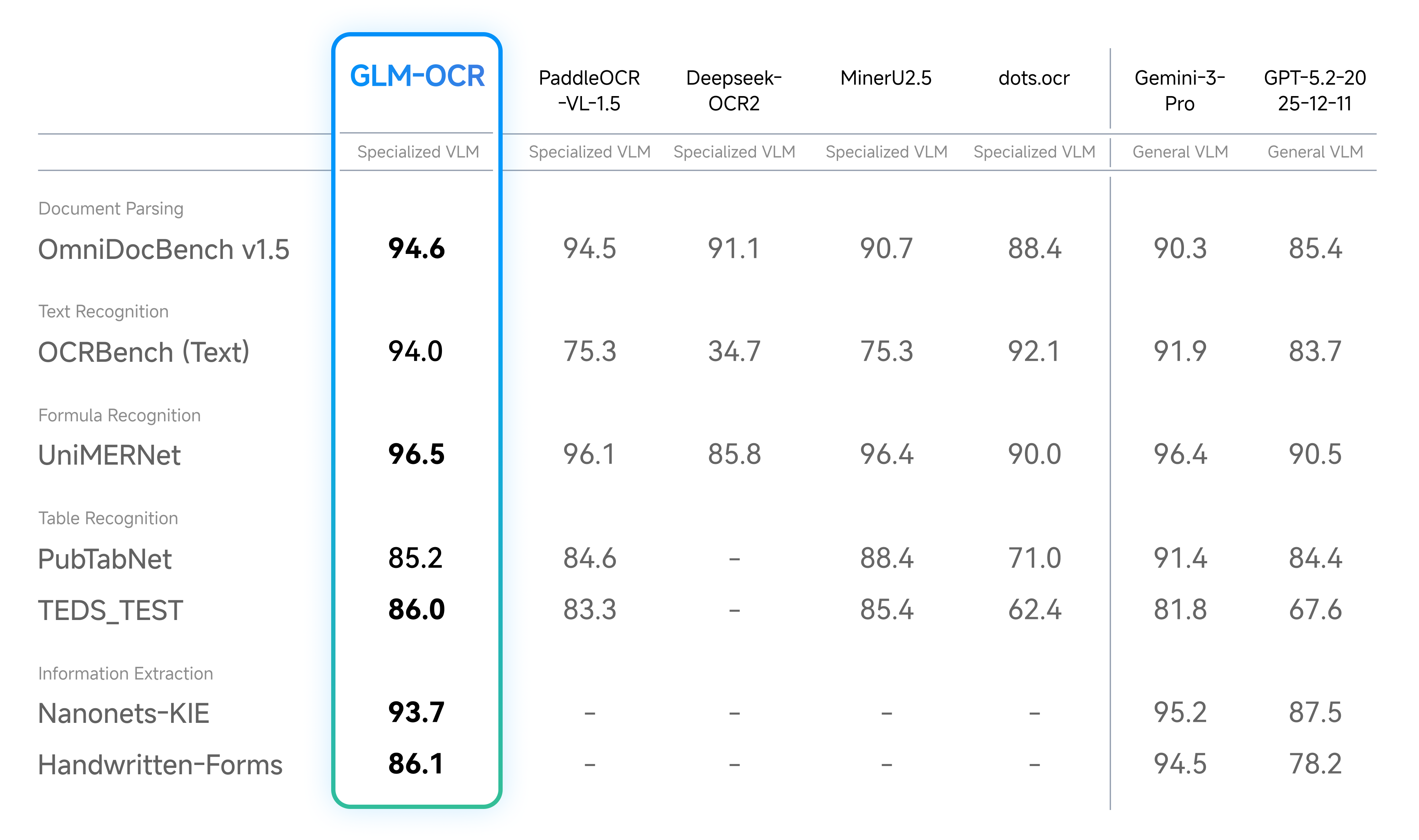

- **State-of-the-Art Performance**: Achieves a score of 94.62 on OmniDocBench V1.5, ranking #1 overall, and delivers state-of-the-art results across major document understanding benchmarks, including formula recognition, table recognition, and information extraction.

|

| 35 |

+

|

| 36 |

+

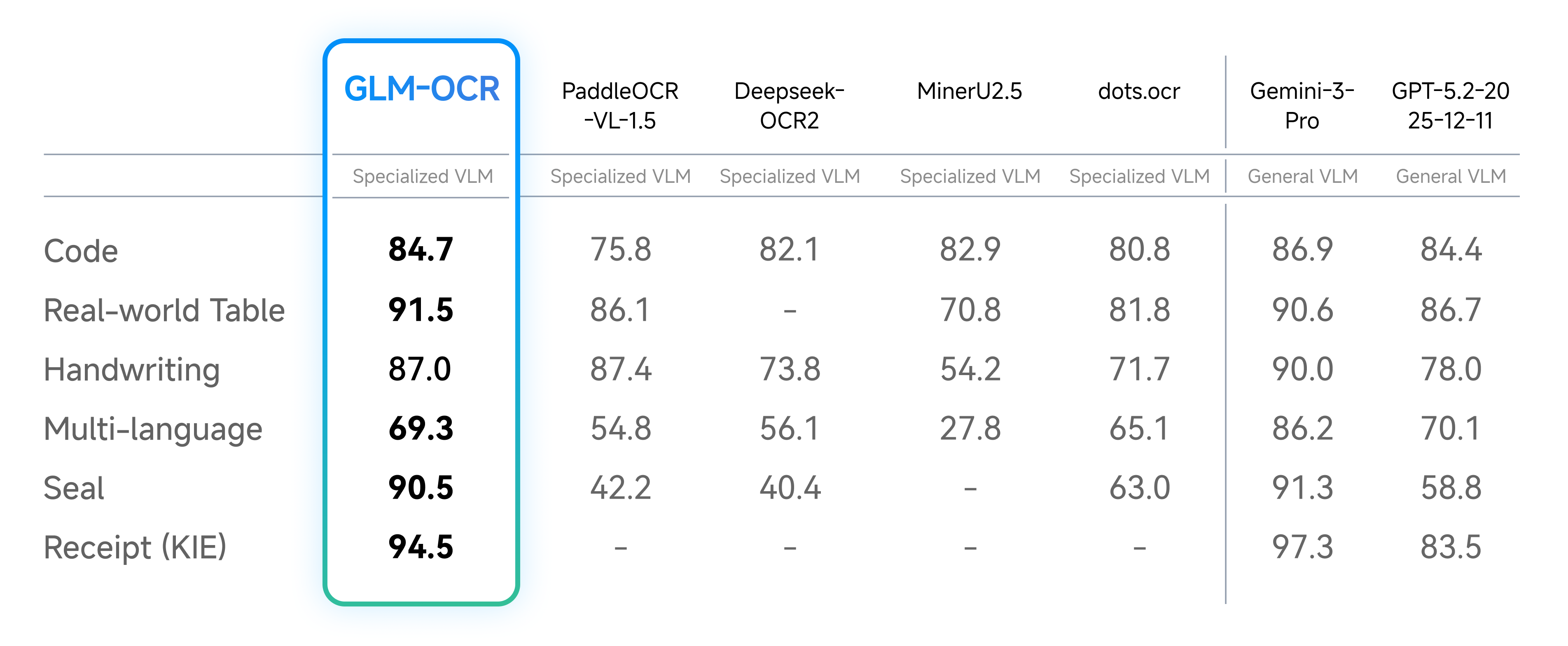

- **Optimized for Real-World Scenarios**: Designed and optimized for practical business use cases, maintaining robust performance on complex tables, code-heavy documents, seals, and other challenging real-world layouts.

|

| 37 |

+

|

| 38 |

+

- **Efficient Inference**: With only 0.9B parameters, GLM-OCR supports deployment via vLLM, SGLang, and Ollama, significantly reducing inference latency and compute cost, making it ideal for high-concurrency services and edge deployments.

|

| 39 |

+

|

| 40 |

+

- **Easy to Use**: Fully open-sourced and equipped with a comprehensive [SDK](https://github.com/zai-org/GLM-OCR) and inference toolchain, offering simple installation, one-line invocation, and smooth integration into existing production pipelines.

|

| 41 |

+

|

| 42 |

+

## Performance

|

| 43 |

+

|

| 44 |

+

- Document Parsing & Information Extraction

|

| 45 |

+

|

| 46 |

+

|

| 47 |

+

|

| 48 |

+

|

| 49 |

+

- Real-World Scenarios Performance

|

| 50 |

+

|

| 51 |

+

|

| 52 |

+

|

| 53 |

+

|

| 54 |

+

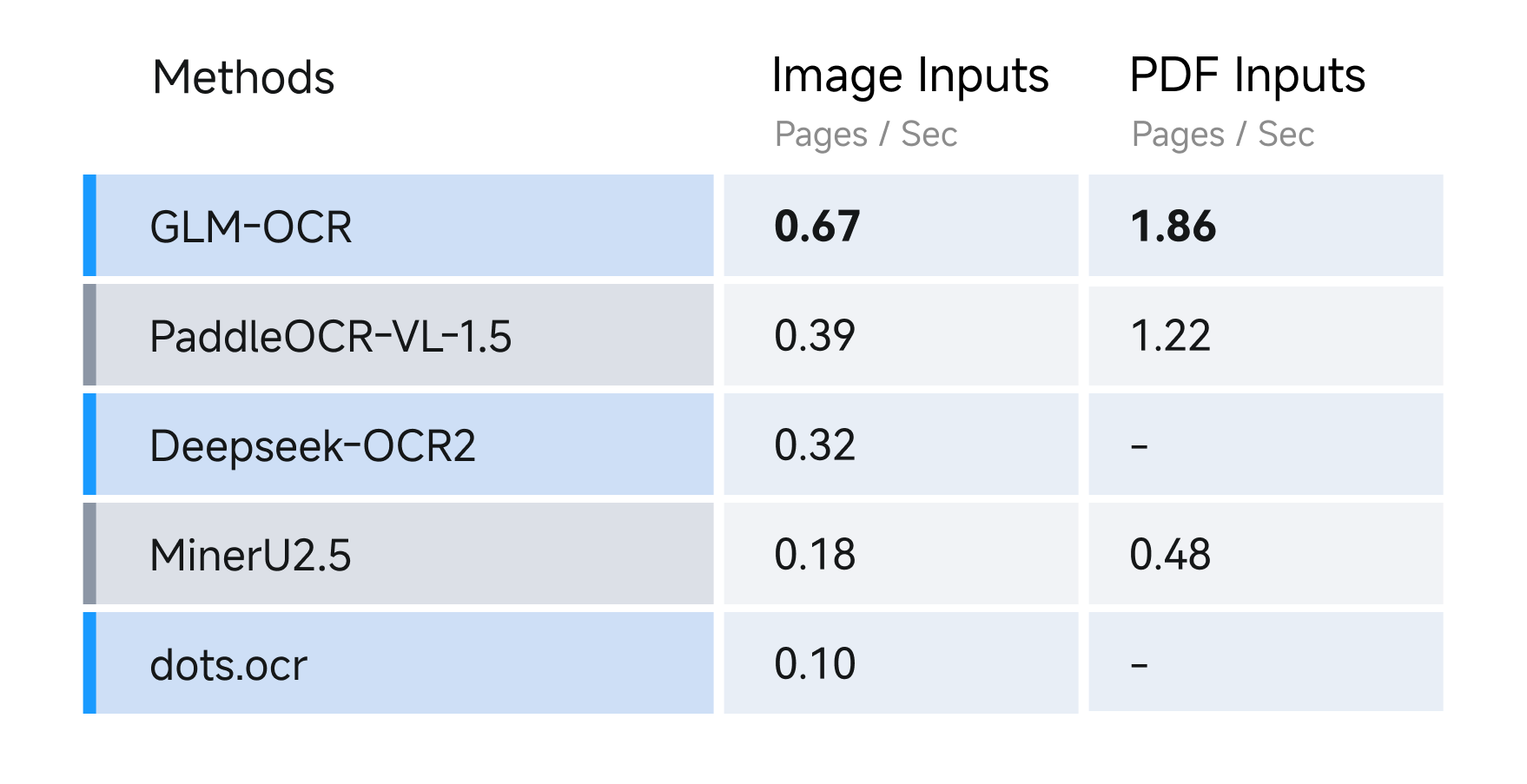

- Speed Test

|

| 55 |

+

|

| 56 |

+

For speed, we compared different OCR methods under identical hardware and testing conditions (single replica, single concurrency), evaluating their performance in parsing and exporting Markdown files from both image and PDF inputs. Results show GLM-OCR achieves a throughput of 1.86 pages/second for PDF documents and 0.67 images/second for images, significantly outperforming comparable models.

|

| 57 |

+

|

| 58 |

+

|

| 59 |

+

|
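As a rough reading of the speed figures, throughput converts to per-item latency by taking the reciprocal. The sketch below assumes strictly sequential processing at the reported rates; the helper names and the 100-page workload are illustrative and not part of the benchmark.

```python
# Reported throughputs from the speed test (single replica, single concurrency).
PDF_PAGES_PER_SECOND = 1.86
IMAGES_PER_SECOND = 0.67


def seconds_per_item(throughput: float) -> float:
    """Convert a throughput (items/second) into latency (seconds/item)."""
    if throughput <= 0:
        raise ValueError("throughput must be positive")
    return 1.0 / throughput


def estimated_seconds(n_items: int, throughput: float) -> float:
    """Estimate wall-clock time for n_items at a constant throughput."""
    return n_items * seconds_per_item(throughput)


if __name__ == "__main__":
    print(f"{seconds_per_item(PDF_PAGES_PER_SECOND):.2f} s per PDF page")
    print(f"{seconds_per_item(IMAGES_PER_SECOND):.2f} s per image")
    # Hypothetical workload: a 100-page PDF processed sequentially.
    print(f"{estimated_seconds(100, PDF_PAGES_PER_SECOND):.1f} s for 100 PDF pages")
```

Under these assumptions a PDF page takes a little over half a second and a standalone image about one and a half seconds; real deployments with batching or higher concurrency will behave differently.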

## Usage

### vLLM

1. Install the nightly build of vLLM:

```bash
pip install -U vllm --extra-index-url https://wheels.vllm.ai/nightly
```

or pull the Docker image:
```bash
docker pull vllm/vllm-openai:nightly
```

2. Install Transformers from source and start the server:

```bash
pip install git+https://github.com/huggingface/transformers.git
vllm serve zai-org/GLM-OCR --allowed-local-media-path / --port 8080
```
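Once the server is running, it can be queried through vLLM's OpenAI-compatible chat completions endpoint. The following is a minimal client sketch using only the Python standard library. It assumes the server listens on `localhost:8080` as in the command above; the function names and the example image URL are hypothetical.

```python
import json
import urllib.request

# Assumption: the server was started with `--port 8080` as shown above.
BASE_URL = "http://localhost:8080/v1"
MODEL = "zai-org/GLM-OCR"


def build_chat_request(image_url: str, prompt: str = "Text Recognition:") -> dict:
    """Build an OpenAI-style chat payload with one image and one task prompt."""
    return {
        "model": MODEL,
        "messages": [
            {
                "role": "user",
                "content": [
                    {"type": "image_url", "image_url": {"url": image_url}},
                    {"type": "text", "text": prompt},
                ],
            }
        ],
    }


def recognize(image_url: str, prompt: str = "Text Recognition:") -> str:
    """POST the payload to the running server and return the generated text."""
    request = urllib.request.Request(
        f"{BASE_URL}/chat/completions",
        data=json.dumps(build_chat_request(image_url, prompt)).encode("utf-8"),
        headers={"Content-Type": "application/json"},
    )
    with urllib.request.urlopen(request) as response:
        body = json.load(response)
    return body["choices"][0]["message"]["content"]


if __name__ == "__main__":
    # Print the payload only; call recognize(...) once the server is up.
    # The URL is a placeholder, not a real document image.
    print(json.dumps(build_chat_request("https://example.com/page.png"), indent=2))
```

With the server up, `recognize("https://example.com/page.png")` would return the recognized text for an image reachable at that URL.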

### SGLang

1. Pull the Docker image:

```bash
docker pull lmsysorg/sglang:dev
```

or install SGLang from source:

```bash
pip install git+https://github.com/sgl-project/sglang.git#subdirectory=python
```

2. Install Transformers from source and start the server:

```bash
pip install git+https://github.com/huggingface/transformers.git
python -m sglang.launch_server --model zai-org/GLM-OCR --port 8080
```
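A local image can be sent to an OpenAI-compatible endpoint by embedding it inline as a base64 data URL instead of pointing at a file path. The sketch below shows one way to build such a message; the helper names are hypothetical, and it assumes the server accepts data URLs in `image_url` fields.

```python
import base64
import mimetypes
from pathlib import Path


def image_to_data_url(path: str) -> str:
    """Encode a local image file as a base64 data URL."""
    mime_type, _ = mimetypes.guess_type(path)
    if mime_type is None or not mime_type.startswith("image/"):
        raise ValueError(f"not a recognized image file: {path}")
    encoded = base64.b64encode(Path(path).read_bytes()).decode("ascii")
    return f"data:{mime_type};base64,{encoded}"


def build_message(path: str, prompt: str = "Text Recognition:") -> dict:
    """Build one user message that carries the local image inline."""
    return {
        "role": "user",
        "content": [
            {"type": "image_url", "image_url": {"url": image_to_data_url(path)}},
            {"type": "text", "text": prompt},
        ],
    }
```

The resulting message can be placed in the `messages` list of a chat completions request sent to the server on port 8080.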

### Ollama

1. Download [Ollama](https://ollama.com/download).
2. Run the model:

```bash
ollama run glm-ocr
```

When you drag an image into the terminal, Ollama automatically inserts its file path into the prompt:

```bash
ollama run glm-ocr Text Recognition: ./image.png
```
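Besides the interactive terminal, Ollama exposes a local REST API that accepts base64-encoded images. The sketch below builds a request for the `/api/generate` endpoint. It assumes Ollama is listening on its default port (11434) and that the model is available under the name `glm-ocr`; the function names are hypothetical.

```python
import base64
import json
import urllib.request

# Assumptions: default local Ollama address and the model name used above.
OLLAMA_URL = "http://localhost:11434/api/generate"
MODEL = "glm-ocr"


def build_generate_request(image_bytes: bytes, prompt: str = "Text Recognition:") -> dict:
    """Build a non-streaming generate payload with one base64-encoded image."""
    return {
        "model": MODEL,
        "prompt": prompt,
        "images": [base64.b64encode(image_bytes).decode("ascii")],
        "stream": False,
    }


def recognize(image_path: str, prompt: str = "Text Recognition:") -> str:
    """Send a local image to the Ollama server and return the generated text."""
    with open(image_path, "rb") as image_file:
        payload = build_generate_request(image_file.read(), prompt)
    request = urllib.request.Request(
        OLLAMA_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
    )
    with urllib.request.urlopen(request) as response:
        return json.load(response)["response"]
```

With Ollama running, `recognize("./image.png")` corresponds to the terminal command shown above.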

### Transformers

```bash
pip install git+https://github.com/huggingface/transformers.git
```

```python
from transformers import AutoProcessor, AutoModelForImageTextToText
import torch

MODEL_PATH = "zai-org/GLM-OCR"

# One user turn: the image to read, followed by the task prompt.
messages = [
    {
        "role": "user",
        "content": [
            {
                "type": "image",
                "url": "test_image.png"
            },
            {
                "type": "text",
                "text": "Text Recognition:"
            }
        ],
    }
]

# Load the processor and the model (dtype and device placement chosen automatically).
processor = AutoProcessor.from_pretrained(MODEL_PATH)
model = AutoModelForImageTextToText.from_pretrained(
    pretrained_model_name_or_path=MODEL_PATH,
    torch_dtype="auto",
    device_map="auto",
)

# Apply the chat template and tokenize the text and image inputs.
inputs = processor.apply_chat_template(
    messages,
    tokenize=True,
    add_generation_prompt=True,
    return_dict=True,
    return_tensors="pt"
).to(model.device)
inputs.pop("token_type_ids", None)

# Generate, then decode only the newly generated tokens (skipping the prompt).
generated_ids = model.generate(**inputs, max_new_tokens=8192)
output_text = processor.decode(generated_ids[0][inputs["input_ids"].shape[1]:], skip_special_tokens=False)
print(output_text)
```

### Prompt Limitations

GLM-OCR currently supports two types of prompt scenarios:

1. **Document Parsing**: extract raw content from documents. Supported tasks include:

```python
{
    "text": "Text Recognition:",
    "formula": "Formula Recognition:",
    "table": "Table Recognition:"
}
```

2. **Information Extraction**: extract structured information from documents. Prompts must follow a strict JSON schema. For example, to extract personal ID information (the first line of the prompt means "Please output the information in the image in the following JSON format:"):

```text
请按下列JSON格式输出图中信息:
{
    "id_number": "",
    "last_name": "",
    "first_name": "",
    "date_of_birth": "",
    "address": {
        "street": "",
        "city": "",
        "state": "",
        "zip_code": ""
    },
    "dates": {
        "issue_date": "",
        "expiration_date": ""
    },
    "sex": ""
}
```

⚠️ Note: When using information extraction, the output must strictly adhere to the defined JSON schema to ensure downstream processing compatibility.
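One way to work with the two prompt types is to keep the task prompts in a lookup table and to generate the information-extraction prompt from a schema dictionary, then check that a parsed model output has the same keys. This is a minimal sketch; `build_extraction_prompt` and `same_shape` are hypothetical helpers, not part of the GLM-OCR SDK.

```python
import json

# Task prompts for document parsing, as listed in the README.
TASK_PROMPTS = {
    "text": "Text Recognition:",
    "formula": "Formula Recognition:",
    "table": "Table Recognition:",
}

# Instruction line from the README's information-extraction example.
EXTRACTION_INSTRUCTION = "请按下列JSON格式输出图中信息:"


def build_extraction_prompt(schema: dict) -> str:
    """Combine the instruction line with the empty JSON template."""
    template = json.dumps(schema, ensure_ascii=False, indent=4)
    return f"{EXTRACTION_INSTRUCTION}\n{template}"


def same_shape(schema, output) -> bool:
    """Return True if output has exactly the schema's keys, recursively."""
    if isinstance(schema, dict):
        return (
            isinstance(output, dict)
            and schema.keys() == output.keys()
            and all(same_shape(schema[key], output[key]) for key in schema)
        )
    # Leaf fields in the schema must not be replaced by nested objects.
    return not isinstance(output, dict)


if __name__ == "__main__":
    # Reduced, illustrative schema; the README example has more fields.
    schema = {"id_number": "", "address": {"city": "", "zip_code": ""}}
    print(build_extraction_prompt(schema))
```

Checking `same_shape(schema, json.loads(model_output))` before passing results downstream catches missing or extra fields early.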

## GLM-OCR SDK

We provide an easy-to-use SDK for using GLM-OCR more efficiently and conveniently. Please see our [GitHub repository](https://github.com/zai-org/GLM-OCR) for more details.

## Acknowledgement

This project is inspired by the excellent work of the following projects and communities:

- [PP-DocLayout-V3](https://huggingface.co/PaddlePaddle/PP-DocLayoutV3)
- [PaddleOCR](https://github.com/PaddlePaddle/PaddleOCR)
- [MinerU](https://github.com/opendatalab/MinerU)

## License

The GLM-OCR model is released under the MIT License.

The complete OCR pipeline integrates [PP-DocLayoutV3](https://huggingface.co/PaddlePaddle/PP-DocLayoutV3) for document layout analysis, which is licensed under the Apache License 2.0. Users should comply with both licenses when using this project.