---
library_name: transformers
license: apache-2.0
license_link: https://huggingface.co/Qwen/Qwen3-Coder-30B-A3B-Instruct/blob/main/LICENSE
pipeline_tag: text-generation
---

# Comparison between Qwen3-Coder-30B and Qwen3-235B Models

## Performance Comparison

| Benchmark | Qwen3-Coder-30B-A3B-Instruct-FP8-Dynamic | Qwen3-235B-A22B | Qwen3-235B-A22B-FP8-dynamic |
|-----------|------------------------------------------|-----------------|------------------------------|
| MMLU | 78.07% | 84.77% | 84.61% |
| ARC Challenge | 65.36% | 71.84% | 70.90% |
| GSM-8K (strict-match) | 88.93% | 74.22% | 74.98% |
| Hellaswag | 50.25% | 76.56% | 76.10% |
| Winogrande | 62.75% | 73.95% | 75.06% |
| TruthfulQA MC2 | 59.00% | 61.18% | 60.93% |

## Key Observations

1. **GSM-8K Performance**: Qwen3-Coder-30B significantly outperforms the larger Qwen3-235B models on GSM-8K (mathematical reasoning), scoring 88.93% compared to ~74% for the 235B models.
2. **Hellaswag Performance**: The 30B model scores far lower on Hellaswag (commonsense reasoning), at only 50.25% compared to ~76% for the 235B models.
3. **TruthfulQA Performance**: All models perform similarly on TruthfulQA, with the 30B model slightly behind at 59.00% compared to ~61% for the 235B models.
4. **Winogrande Performance**: The 30B model scores 62.75% on Winogrande compared to ~74-75% for the 235B models.
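The sketch below makes the gaps cited above concrete. It recomputes the per-benchmark difference, in percentage points, between the 30B FP8-Dynamic scores and the BF16 Qwen3-235B-A22B scores, using only the values from the table. The names `SCORES` and `gaps` are illustrative and are not part of any published evaluation tooling.

```python
# Scores copied from the Performance Comparison table (percent).
# Tuple order: (Qwen3-Coder-30B-A3B-Instruct-FP8-Dynamic, Qwen3-235B-A22B)
SCORES = {
    "MMLU": (78.07, 84.77),
    "ARC Challenge": (65.36, 71.84),
    "GSM-8K (strict-match)": (88.93, 74.22),
    "Hellaswag": (50.25, 76.56),
    "Winogrande": (62.75, 73.95),
    "TruthfulQA MC2": (59.00, 61.18),
}


def gaps(scores):
    """Return {benchmark: 30B score minus 235B score}, rounded to 2 decimals.

    A positive value means the 30B model scores higher.
    """
    return {name: round(small - large, 2) for name, (small, large) in scores.items()}


if __name__ == "__main__":
    # Print benchmarks from the largest 30B advantage to the largest 30B deficit.
    for name, delta in sorted(gaps(SCORES).items(), key=lambda kv: kv[1], reverse=True):
        print(f"{name:<24} {delta:+.2f} pp")
```

With these numbers, GSM-8K is the only benchmark where the 30B model leads, by about 14.7 points. Hellaswag shows the largest deficit, about 26.3 points.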

## Summary

The Qwen3-Coder-30B model shows particular strength in mathematical reasoning (GSM-8K), where it significantly outperforms the larger models. However, it lags behind the 235B models in general knowledge (MMLU), science question answering (ARC Challenge), commonsense reasoning (Hellaswag), and pronoun disambiguation (Winogrande). This suggests that the 30B model may have been optimized for coding and mathematical tasks at the expense of some general capabilities.

-----

# Qwen3-Coder-30B-A3B-Instruct

## Highlights

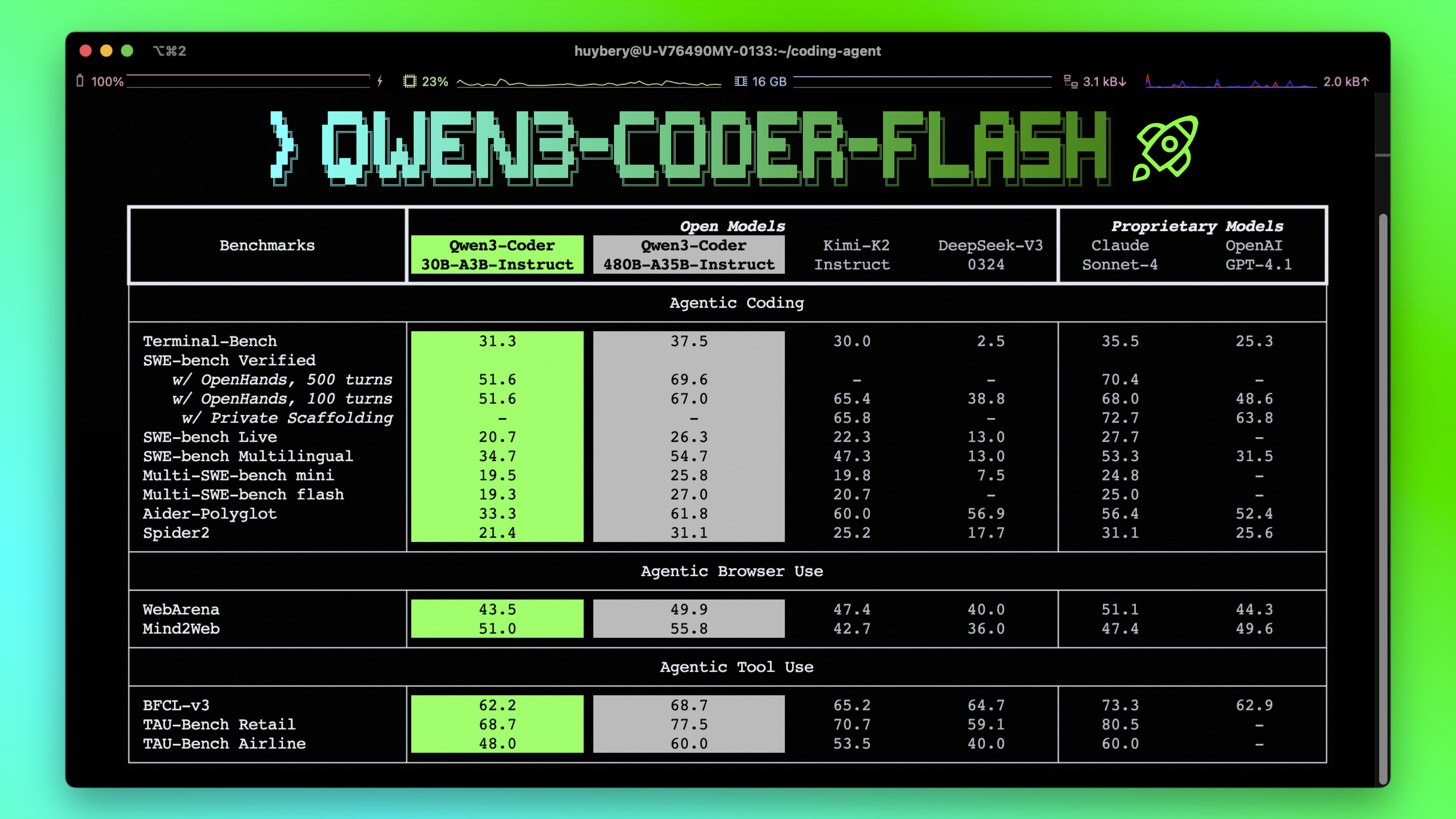

**Qwen3-Coder** is available in multiple sizes. Today, we're excited to introduce **Qwen3-Coder-30B-A3B-Instruct**. This streamlined model maintains impressive performance and efficiency, featuring the following key enhancements:

- **Strong Performance** among open models on **Agentic Coding**, **Agentic Browser-Use**, and other foundational coding tasks.
- **Long-context Capabilities** with native support for **256K** tokens, extendable up to **1M** tokens using YaRN, optimized for repository-scale understanding.
- **Agentic Coding** support for most platforms, such as **Qwen Code** and **CLINE**, featuring a specially designed function call format.
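Extending from 256K to 1M tokens works out to a YaRN scaling factor of 1,048,576 / 262,144 = 4. The sketch below builds such an entry. It assumes the Hugging Face `rope_scaling` convention (`rope_type`, `factor`, `original_max_position_embeddings`) that Qwen documents for earlier Qwen3 checkpoints. Whether this checkpoint expects exactly these keys and values for 1M-token inference has not been confirmed, so check it against the official documentation before editing `config.json`. The helper name `yarn_rope_scaling` is hypothetical.

```python
import json


def yarn_rope_scaling(target_len: int, native_len: int = 262_144) -> dict:
    """Build a YaRN `rope_scaling` entry that stretches native_len to target_len.

    Assumption: key names follow the Hugging Face `rope_scaling` convention
    used for earlier Qwen3 checkpoints; verify before editing config.json.
    """
    if target_len <= native_len:
        raise ValueError("YaRN scaling is only needed beyond the native context length")
    return {
        "rope_type": "yarn",
        # YaRN factor: how many times longer than the native window
        "factor": target_len / native_len,
        "original_max_position_embeddings": native_len,
    }


if __name__ == "__main__":
    # 1M tokens (1,048,576) over the 256K native window (262,144)
    print(json.dumps({"rope_scaling": yarn_rope_scaling(1_048_576)}, indent=2))
```

Static YaRN applies the same scaling regardless of input length, so it is usually worth enabling only when long inputs are actually needed.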

## Model Overview

**Qwen3-Coder-30B-A3B-Instruct** has the following features:

- Type: Causal Language Model
- Training Stage: Pretraining & Post-training
- Number of Parameters: 30.5B in total and 3.3B activated
- Number of Layers: 48
- Number of Attention Heads (GQA): 32 for Q and 4 for KV
- Number of Experts: 128
- Number of Activated Experts: 8
- Context Length: **262,144 natively**.
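With the expert counts above, each token is routed to 8 of the 128 experts. The sketch below illustrates generic top-k routing and rests on a few assumptions. It computes a softmax over one router logit per expert, keeps the 8 largest probabilities, and renormalizes them to sum to 1. This illustrates the technique only and is not Qwen's implementation. The random logits stand in for a real router's output, and `route_token` is a hypothetical name.

```python
import math
import random

NUM_EXPERTS = 128  # from the Model Overview
TOP_K = 8          # activated experts per token


def route_token(logits, k=TOP_K):
    """Return [(expert_index, weight), ...] for the k highest-scoring experts.

    Assumption: softmax over all experts, then top-k, then renormalize the kept
    probabilities so the weights sum to 1.
    """
    # Numerically stable softmax over the router logits.
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    probs = [e / total for e in exps]
    # Keep the k experts with the largest probability, highest first.
    top = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:k]
    kept = sum(probs[i] for i in top)
    return [(i, probs[i] / kept) for i in top]


if __name__ == "__main__":
    rng = random.Random(0)
    logits = [rng.gauss(0.0, 1.0) for _ in range(NUM_EXPERTS)]
    chosen = route_token(logits)
    print("experts:", [i for i, _ in chosen])
    print("weights sum:", round(sum(w for _, w in chosen), 6))
    print("expert fraction:", TOP_K / NUM_EXPERTS)
```

Routing uses 8/128 = 6.25% of the experts, yet about 3.3B/30.5B ≈ 10.8% of the parameters are active. The difference plausibly comes from components that every token uses regardless of routing, such as attention and embeddings.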

**NOTE: This model supports only non-thinking mode and does not generate ``<think></think>`` blocks in its output. As a result, specifying `enable_thinking=False` is no longer required.**

For more details, including benchmark evaluation, hardware requirements, and inference performance, please refer to our [blog](https://qwenlm.github.io/blog/qwen3-coder/), [GitHub](https://github.com/QwenLM/Qwen3-Coder), and [Documentation](https://qwen.readthedocs.io/en/latest/).

## Quickstart

We advise you to use the latest version of `transformers`.

With `transformers<4.51.0`, you will encounter the following error:

```
KeyError: 'qwen3_moe'
```
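You can check the installed version up front and avoid hitting this error at load time. The sketch below reads the installed version with the standard-library `importlib.metadata` and compares its leading numeric release against 4.51.0. The version parsing is deliberately simplified and counts a `4.51.0.dev0` build as 4.51.0. Use `packaging.version.Version` if you need full PEP 440 ordering. The helper names are hypothetical.

```python
import re
from importlib import metadata

MIN_TRANSFORMERS = (4, 51, 0)  # older versions raise KeyError: 'qwen3_moe'


def parse_version(text: str) -> tuple:
    """Parse the leading numeric release, e.g. '4.51.0.dev0' -> (4, 51, 0).

    Simplified: pre-release/dev suffixes are ignored, so a 4.51.0 dev build
    counts as 4.51.0.
    """
    match = re.match(r"(\d+)(?:\.(\d+))?(?:\.(\d+))?", text)
    if match is None:
        raise ValueError(f"unrecognized version string: {text!r}")
    return tuple(int(part or 0) for part in match.groups())


def transformers_is_recent_enough() -> bool:
    """True if transformers is installed and at least 4.51.0."""
    try:
        installed = metadata.version("transformers")
    except metadata.PackageNotFoundError:
        return False
    return parse_version(installed) >= MIN_TRANSFORMERS


if __name__ == "__main__":
    print("transformers >= 4.51.0:", transformers_is_recent_enough())
```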

The following code snippet illustrates how to use the model to generate content based on given inputs.

```python
from transformers import AutoModelForCausalLM, AutoTokenizer

model_name = "Qwen/Qwen3-Coder-30B-A3B-Instruct"

# load the tokenizer and the model
tokenizer = AutoTokenizer.from_pretrained(model_name)
model = AutoModelForCausalLM.from_pretrained(
    model_name,
    torch_dtype="auto",
    device_map="auto"
)

# prepare the model input
prompt = "Write a quick sort algorithm."
messages = [
    {"role": "user", "content": prompt}
]
text = tokenizer.apply_chat_template(
    messages,
    tokenize=False,
    add_generation_prompt=True,
)
model_inputs = tokenizer([text], return_tensors="pt").to(model.device)

# conduct text completion
generated_ids = model.generate(
    **model_inputs,
    max_new_tokens=65536
)
# keep only the newly generated tokens (drop the prompt tokens)
output_ids = generated_ids[0][len(model_inputs.input_ids[0]):].tolist()

content = tokenizer.decode(output_ids, skip_special_tokens=True)

print("content:", content)
```

**Note: If you encounter out-of-memory (OOM) issues, consider reducing the context length to a shorter value, such as `32,768`.**
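Shorter contexts help with OOM partly because the key/value cache grows linearly with sequence length. The estimate below uses the layer count and KV-head count from the Model Overview. The head dimension of 128 and the 2-byte (bf16) cache dtype are assumptions, since this card does not state them. The estimate also leaves out model weights and activations.

```python
NUM_LAYERS = 48      # from the Model Overview
NUM_KV_HEADS = 4     # GQA: 4 key/value heads
HEAD_DIM = 128       # ASSUMPTION: not stated in this card
BYTES_PER_VALUE = 2  # ASSUMPTION: bf16/fp16 KV cache


def kv_cache_bytes(num_tokens: int, batch_size: int = 1) -> int:
    """Bytes needed to cache keys and values for num_tokens tokens."""
    # Factor 2 = one key tensor plus one value tensor in every layer.
    per_token = 2 * NUM_LAYERS * NUM_KV_HEADS * HEAD_DIM * BYTES_PER_VALUE
    return per_token * num_tokens * batch_size


if __name__ == "__main__":
    for tokens in (262_144, 32_768):
        gib = kv_cache_bytes(tokens) / 2**30
        print(f"{tokens:>7} tokens -> {gib:.1f} GiB of KV cache per sequence")
```

Under these assumptions, the full 262,144-token window needs about 24 GiB of cache per sequence, compared with about 3 GiB at 32,768 tokens. That 8x reduction matches the 8x shorter context.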

For local use, applications such as Ollama, LMStudio, MLX-LM, llama.cpp, and KTransformers also support Qwen3.

## Agentic Coding

Qwen3-Coder excels at tool calling.

You can define or use any tools, as in the following example.

```python
from openai import OpenAI

# Your tool implementation
# (the parameter name matches the schema's "input_num" so parsed arguments can be passed as keywords)
def square_the_number(input_num: float) -> float:
    return input_num ** 2

# Define Tools
tools = [
    {
        "type": "function",
        "function": {
            "name": "square_the_number",
            "description": "output the square of the number.",
            "parameters": {
                "type": "object",
                "required": ["input_num"],
                "properties": {
                    "input_num": {
                        "type": "number",
                        "description": "input_num is a number that will be squared"
                    }
                },
            }
        }
    }
]

# Define LLM
client = OpenAI(
    # Use a custom endpoint compatible with OpenAI API
    base_url="http://localhost:8000/v1",  # api_base
    api_key="EMPTY"
)

messages = [{"role": "user", "content": "square the number 1024"}]

completion = client.chat.completions.create(
    messages=messages,
    model="Qwen3-Coder-30B-A3B-Instruct",
    max_tokens=65536,
    tools=tools,
)

print(completion.choices[0])
```
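When the model decides to call a tool, the response contains a function name and a JSON-encoded argument string in place of a final answer. The sketch below shows one way to handle the next step. It assumes the OpenAI chat-completions tool-calling shape: `tool_calls` entries carry `id`, `function.name`, and `function.arguments`, and each call is answered with a `role: "tool"` message. The code parses the arguments, runs the matching local function, and builds the message to send back. The helper `run_tool_calls`, the `TOOL_REGISTRY` dict, and the sample id `call_abc123` are hypothetical.

```python
import json


def square_the_number(input_num: float) -> float:
    return input_num ** 2


# Hypothetical registry mapping tool names (as declared in `tools`) to callables.
TOOL_REGISTRY = {"square_the_number": square_the_number}


def run_tool_calls(tool_calls):
    """Execute each tool call and return the `role: "tool"` messages to send back.

    `tool_calls` is a list of plain dicts in the chat-completions shape:
    {"id": ..., "type": "function", "function": {"name": ..., "arguments": "<json>"}}
    """
    replies = []
    for call in tool_calls:
        fn = TOOL_REGISTRY[call["function"]["name"]]
        # Arguments arrive as a JSON string whose keys match the schema's properties.
        kwargs = json.loads(call["function"]["arguments"])
        result = fn(**kwargs)
        replies.append({
            "role": "tool",
            "tool_call_id": call["id"],
            "content": json.dumps(result),
        })
    return replies


if __name__ == "__main__":
    # Hand-written tool call shaped like a possible reply to "square the number 1024".
    sample = [{
        "id": "call_abc123",
        "type": "function",
        "function": {"name": "square_the_number", "arguments": '{"input_num": 1024}'},
    }]
    print(run_tool_calls(sample))
```

The sample produces one tool message with content `1048576`, the square of 1024. To continue the conversation, append the assistant message that contained the tool calls, then these tool messages. Then call `client.chat.completions.create` again with the same `tools`. With the OpenAI Python client, the same fields are attributes on `completion.choices[0].message.tool_calls`, and each entry's `model_dump()` should produce this dict form.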

## Best Practices

To achieve optimal performance, we recommend the following settings:

1. **Sampling Parameters**:
   - We suggest using `temperature=0.7`, `top_p=0.8`, `top_k=20`, and `repetition_penalty=1.05`.

2. **Adequate Output Length**: We recommend using an output length of 65,536 tokens for most queries, which is adequate for instruct models.
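The sketch below wires these settings into both quickstart paths and computes the prompt budget left within the native 262,144-token context after reserving the recommended output length. The `generate` keyword names (`do_sample`, `max_new_tokens`, `temperature`, `top_p`, `top_k`, `repetition_penalty`) are standard in transformers. For the OpenAI-compatible path, the code assumes the server (for example vLLM) accepts `top_k` and `repetition_penalty` through the client's `extra_body`, because the OpenAI API has no such fields. The helper names are hypothetical.

```python
CONTEXT_LENGTH = 262_144  # native context length from the Model Overview
MAX_NEW_TOKENS = 65_536   # recommended output length

# Recommended sampling settings from Best Practices.
SAMPLING = {"temperature": 0.7, "top_p": 0.8, "top_k": 20, "repetition_penalty": 1.05}


def hf_generate_kwargs() -> dict:
    """Keyword arguments for `model.generate(**model_inputs, **kwargs)`."""
    # Set do_sample=True explicitly: under greedy decoding, temperature/top_p/top_k have no effect.
    return {"do_sample": True, "max_new_tokens": MAX_NEW_TOKENS, **SAMPLING}


def openai_request_kwargs() -> dict:
    """Keyword arguments for `client.chat.completions.create(...)`.

    Assumption: the OpenAI-compatible server reads top_k and repetition_penalty
    from `extra_body`.
    """
    return {
        "temperature": SAMPLING["temperature"],
        "top_p": SAMPLING["top_p"],
        "max_tokens": MAX_NEW_TOKENS,
        "extra_body": {
            "top_k": SAMPLING["top_k"],
            "repetition_penalty": SAMPLING["repetition_penalty"],
        },
    }


def max_prompt_tokens(context: int = CONTEXT_LENGTH, reserve: int = MAX_NEW_TOKENS) -> int:
    """Prompt tokens that still fit after reserving room for the full output."""
    return context - reserve


if __name__ == "__main__":
    print(hf_generate_kwargs())
    print(openai_request_kwargs())
    print("prompt budget:", max_prompt_tokens())
```

Reserving 65,536 output tokens leaves 196,608 tokens for the prompt. If you shorten the context to avoid OOM, the output reservation takes a correspondingly larger share.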

### Citation

If you find our work helpful, feel free to cite us.

```
@misc{qwen3technicalreport,
      title={Qwen3 Technical Report},
      author={Qwen Team},
      year={2025},
      eprint={2505.09388},
      archivePrefix={arXiv},
      primaryClass={cs.CL},
      url={https://arxiv.org/abs/2505.09388},
}
```