---
license: apache-2.0
library_name: diffusers
pipeline_tag: image-to-image
tags:
- controlnet
- remote-sensing
- openstreetmap
widget:
- src: demo_images/input.jpeg
  prompt: convert this openstreetmap into its satellite view
  output:
    url: demo_images/output.jpeg
---

We have not fully validated the checkpoint conversion. If you encounter pipeline loading failures or undesired output, please contact me at bili_sakura@zju.edu.cn.

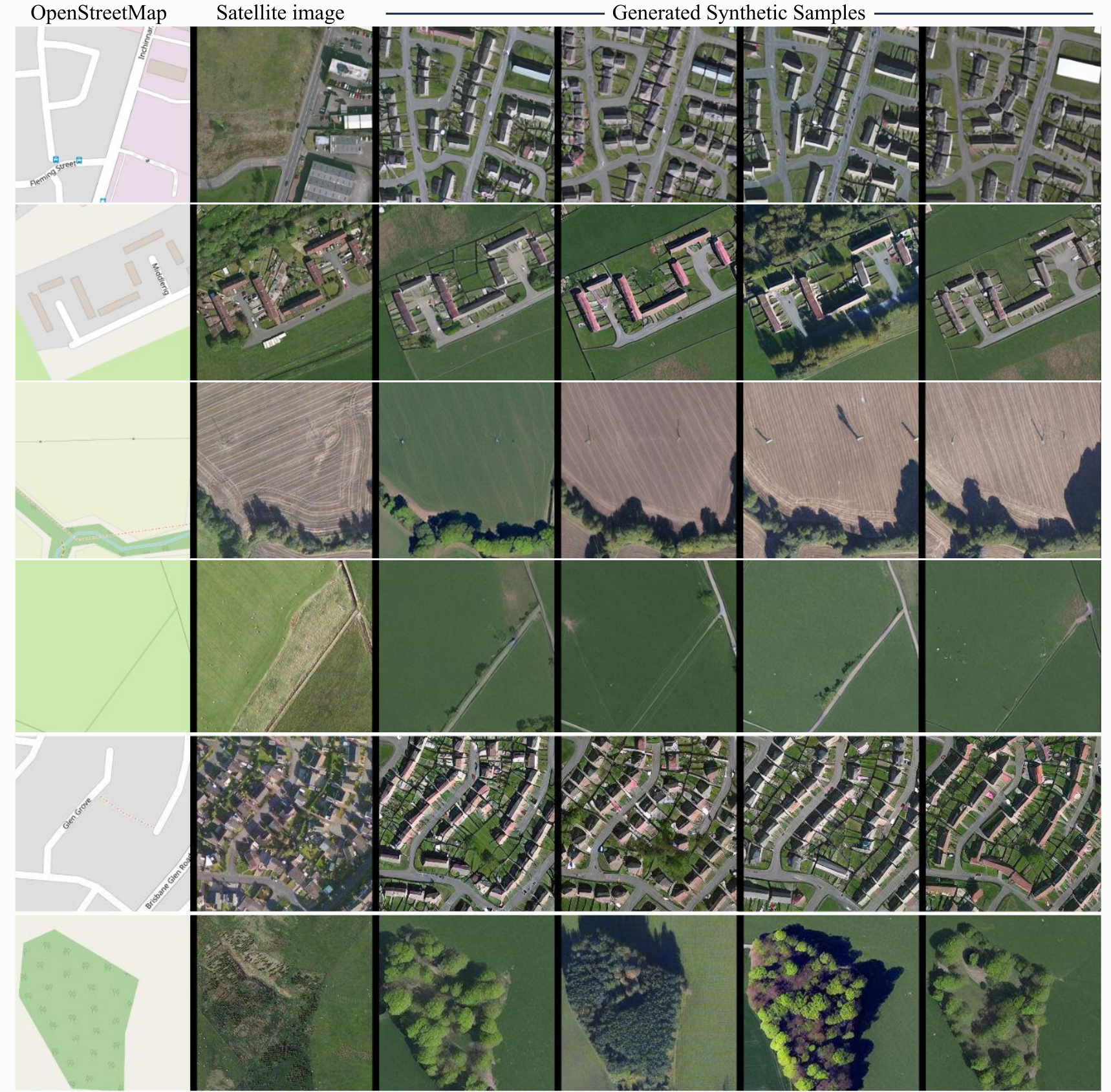

ControlEarth

A ControlNet model conditioned on OpenStreetMap (OSM) images that generates the corresponding satellite images.

Trained on data from the Central Belt region.

Repo structure

This repo is self-contained and includes:

- controlnet/ — ControlNet weights (OSM → satellite)

- text_encoder/, unet/, vae/, scheduler/, tokenizer/ — Stable Diffusion v1-5 base

- demo_images/ — Placeholder for input OSM images

- inference_demo.py — Full diffusers inference script (uses only this repo, no external downloads)
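Because the checkpoint conversion has not been fully validated, a quick pre-flight check can catch missing folders or mismatched configs before loading the pipeline. The sketch below is a minimal example, assuming the `controlnet/` and `unet/` folders each hold a diffusers-style `config.json` (`scheduler/` and `tokenizer/` use other file names, so only their presence is checked). The helper names `missing_components` and `config_mismatches` are hypothetical, and the compared keys are an illustrative subset, not a full validation.

```python
import json
from pathlib import Path

# Component folders listed in the repo structure of this model card
EXPECTED_DIRS = ["controlnet", "text_encoder", "unet", "vae", "scheduler", "tokenizer"]

# Config keys a ControlNet and its base UNet are expected to agree on
# (illustrative subset only, not an exhaustive compatibility check)
SHARED_KEYS = ["in_channels", "cross_attention_dim", "block_out_channels"]


def missing_components(repo: str) -> list:
    """Return the expected component folders that are absent from `repo`."""
    root = Path(repo)
    return [name for name in EXPECTED_DIRS if not (root / name).is_dir()]


def config_mismatches(repo: str) -> dict:
    """Compare selected keys of controlnet/config.json and unet/config.json.

    Returns {key: (controlnet_value, unet_value)} for every key that differs.
    """
    root = Path(repo)
    cn_cfg = json.loads((root / "controlnet" / "config.json").read_text())
    unet_cfg = json.loads((root / "unet" / "config.json").read_text())
    return {
        key: (cn_cfg.get(key), unet_cfg.get(key))
        for key in SHARED_KEYS
        if cn_cfg.get(key) != unet_cfg.get(key)
    }
```

Run from the repo root, `missing_components(".")` should return an empty list. A non-empty `config_mismatches(".")` result may indicate that the ControlNet and the base UNet were not converted from matching checkpoints.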

Dataset used for training

The model was trained on the WorldImagery Clarity dataset.

The code used to construct the dataset is available at https://github.com/miquel-espinosa/map-sat.

Usage

```bash
# From the repo root
python inference_demo.py
```

Or load programmatically:

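```python
import torch
from diffusers import StableDiffusionControlNetPipeline, ControlNetModel, UniPCMultistepScheduler
from diffusers.utils import load_image

repo = "/path/to/controlearth"  # or "." when run from the repo root

# Load the OSM-conditioned ControlNet and the bundled Stable Diffusion v1-5 components
controlnet = ControlNetModel.from_pretrained(f"{repo}/controlnet", torch_dtype=torch.float16)
pipe = StableDiffusionControlNetPipeline.from_pretrained(
    repo,
    controlnet=controlnet,
    torch_dtype=torch.float16,
    safety_checker=None,
    requires_safety_checker=False,
)
pipe.scheduler = UniPCMultistepScheduler.from_config(pipe.scheduler.config)
pipe.enable_model_cpu_offload()  # requires `accelerate`; moves idle submodules to CPU

# OSM image used as the ControlNet condition (the demo input; replace with your own)
control_image = load_image(f"{repo}/demo_images/input.jpeg")

image = pipe(
    "convert this openstreetmap into its satellite view",
    image=control_image,
    num_inference_steps=50,
).images[0]
image.save("output.png")
```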
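The pipeline accepts the control image as a PIL image, so OSM inputs can be normalised beforehand. The sketch below converts the input to RGB (exported map images may be RGBA or palette-based), center-crops it to a square and resizes it. The helper name `prepare_osm_tile` and the 512×512 default (the native resolution of Stable Diffusion v1-5) are assumptions for illustration; check `inference_demo.py` for the resolution this checkpoint actually expects.

```python
from PIL import Image


def prepare_osm_tile(path: str, size: int = 512) -> Image.Image:
    """Load an OSM image and return a size x size RGB image.

    Non-square inputs are center-cropped to a square before resizing,
    so the map is not stretched.
    """
    # The SD v1-5 VAE downsamples by a factor of 8
    if size % 8 != 0:
        raise ValueError("size must be a multiple of 8")
    img = Image.open(path).convert("RGB")  # drop alpha / palette modes
    w, h = img.size
    side = min(w, h)
    left, top = (w - side) // 2, (h - side) // 2
    img = img.crop((left, top, left + side, top + side))  # center square crop
    return img.resize((size, size), Image.BICUBIC)
```

The result can be passed as `image=prepare_osm_tile("demo_images/input.jpeg")` in the call above; adding `generator=torch.Generator("cpu").manual_seed(0)` to the same call makes repeated runs reproducible.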