
```python
# Load model directly
from transformers import AutoTokenizer, AutoModelForCausalLM

tokenizer = AutoTokenizer.from_pretrained("CyberNative-AI/Colibri_8b_v0.1")
model = AutoModelForCausalLM.from_pretrained("CyberNative-AI/Colibri_8b_v0.1")

messages = [
    {"role": "user", "content": "Who are you?"},
]

# Apply the model's chat template and tokenize in one step.
inputs = tokenizer.apply_chat_template(
    messages,
    add_generation_prompt=True,
    tokenize=True,
    return_dict=True,
    return_tensors="pt",
).to(model.device)

outputs = model.generate(**inputs, max_new_tokens=40)

# Decode only the newly generated tokens, skipping the prompt tokens.
print(tokenizer.decode(outputs[0][inputs["input_ids"].shape[-1]:]))
```

Colibri_8b_v0.1

Colibri: Conversational CyberSecurity Model

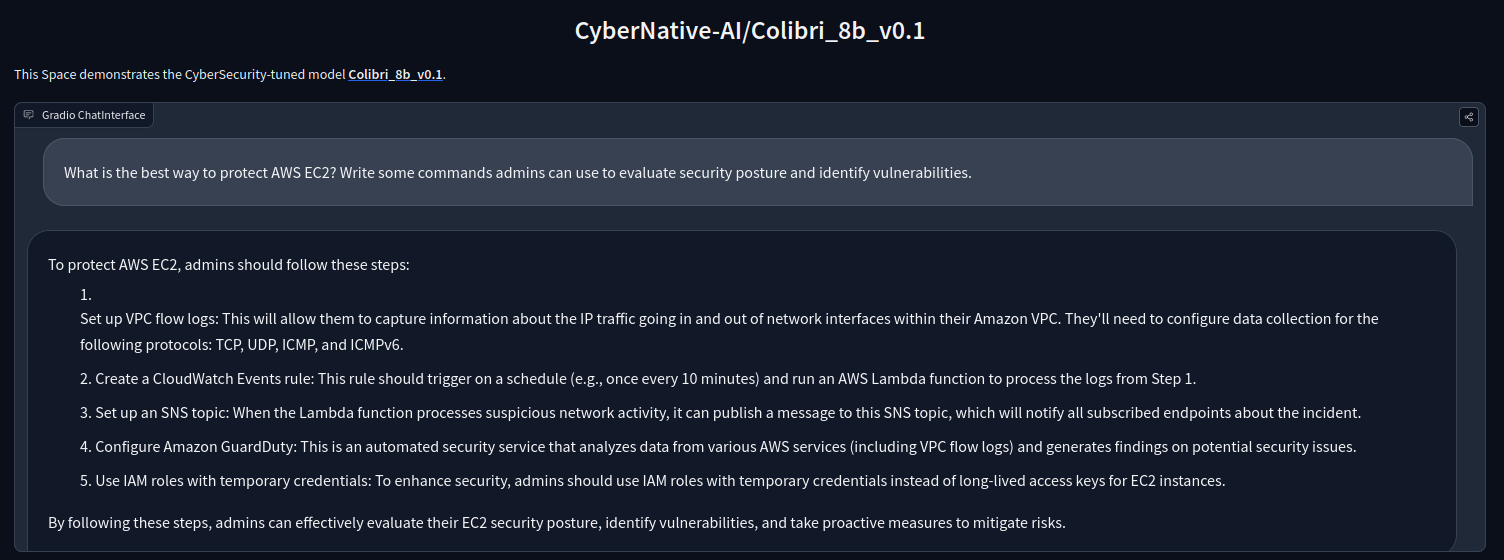

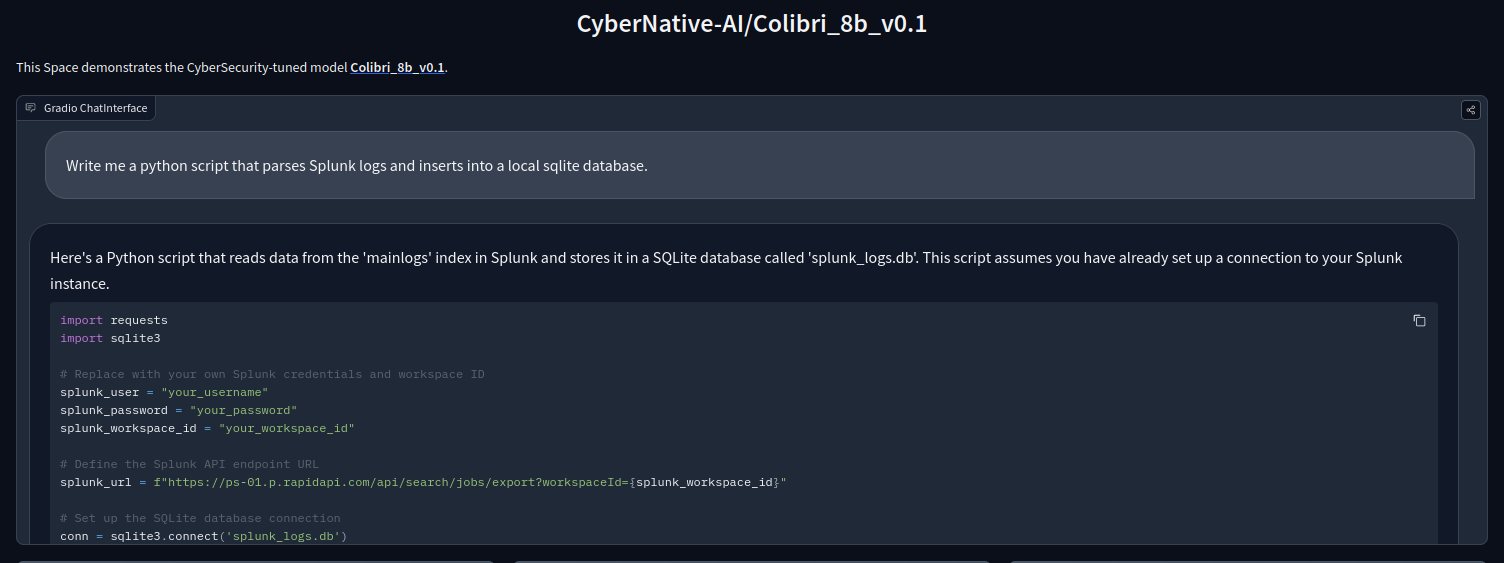

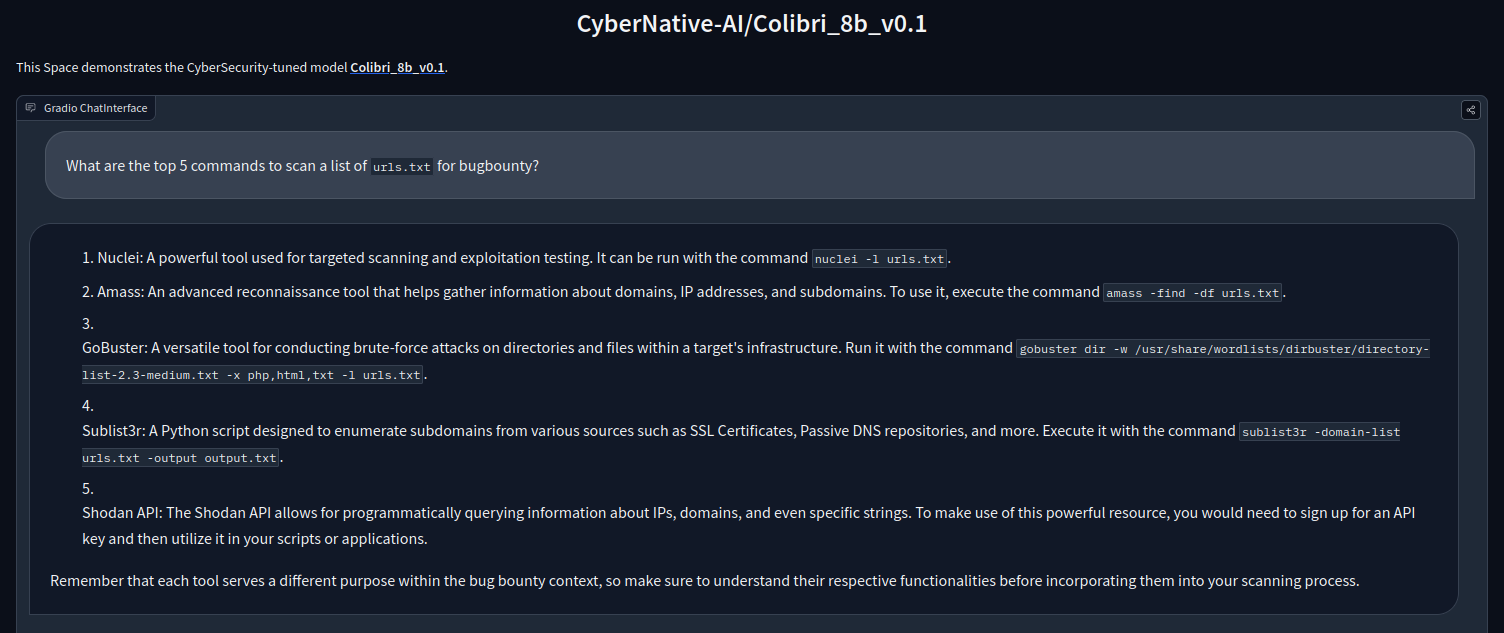

Try Colibri via Hugging Face Spaces!

The GGUF quantization used in the Space is available here: CyberNative-AI/Colibri_8b_v0.1_q5_gguf

Colibri_8b_v0.1 is a conversational cybersecurity model fine-tuned from the awesome dolphin-2.9-llama3-8b (lineage: llama-3-8b -> cognitivecomputations/dolphin-2.9-llama3-8b -> CyberNative-AI/Colibri_8b_v0.1).

We derived our training dataset by generating Q/A pairs from a large corpus of cybersecurity-related texts.
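The card does not publish the dataset or its record format. As a minimal sketch, assuming each Q/A pair is stored as a `(question, answer)` tuple and trained as a single chat turn with the system prompt from the ChatML example in this card, a pair could be turned into the same role/content message list that the loading snippet passes to `apply_chat_template`. The helper `qa_to_messages` and the sample pair are hypothetical.

```python
from typing import Dict, List

# Assumed system prompt, copied from the ChatML example in this card.
SYSTEM_PROMPT = (
    "You are Colibri, an advanced cybersecurity AI assistant "
    "developed by CyberNative AI."
)


def qa_to_messages(question: str, answer: str, system: str = SYSTEM_PROMPT) -> List[Dict[str, str]]:
    """Hypothetical helper: wrap one Q/A pair as a role/content conversation."""
    return [
        {"role": "system", "content": system},
        {"role": "user", "content": question.strip()},
        {"role": "assistant", "content": answer.strip()},
    ]


# Illustrative pair only; not taken from the actual training set.
pair = ("What does XSS stand for?", "Cross-site scripting.")
conversation = qa_to_messages(*pair)
for turn in conversation:
    print(f'{turn["role"]}: {turn["content"]}')
```

Keeping samples as role/content lists means the tokenizer's own chat template can render them, rather than hand-formatting special tokens.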

v0.1 was trained for 3 epochs on around 35k Q/A pairs with full fine-tuning (FFT) of all parameters, using a single A100 80GB GPU for 3 hours.
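A back-of-the-envelope check of the implied training throughput, assuming "around 35k" is taken as exactly 35,000 pairs and the 3 hours cover the whole run:

```python
# Rough throughput implied by the figures above (approximate inputs).
qa_pairs = 35_000        # "around 35k" Q/A pairs
epochs = 3
wall_clock_hours = 3

examples_seen = qa_pairs * epochs        # total training examples processed
seconds = wall_clock_hours * 3600        # wall-clock time in seconds
throughput = examples_seen / seconds     # examples per second

print(f"{examples_seen} examples in {seconds} s = {throughput:.1f} examples/s")
```

This prints `105000 examples in 10800 s = 9.7 examples/s`, i.e. roughly ten training examples per second on one GPU.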

This model uses the ChatML prompt template format.

Example:

```
<|im_start|>system
You are Colibri, an advanced cybersecurity AI assistant developed by CyberNative AI.<|im_end|>
<|im_start|>user
{prompt}<|im_end|>
<|im_start|>assistant
```
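A minimal sketch of rendering messages into the ChatML layout shown above by hand, assuming each turn is `<|im_start|>{role}\n{content}<|im_end|>\n` and that generation starts from an open assistant turn. The function `to_chatml` is hypothetical; the exact whitespace in the model's bundled template may differ, so for real use `tokenizer.apply_chat_template` is the safer choice.

```python
from typing import Dict, List


def to_chatml(messages: List[Dict[str, str]], add_generation_prompt: bool = True) -> str:
    """Hypothetical helper: render role/content messages as a ChatML string."""
    parts = [f"<|im_start|>{m['role']}\n{m['content']}<|im_end|>\n" for m in messages]
    if add_generation_prompt:
        # Open an assistant turn so the model continues from here.
        parts.append("<|im_start|>assistant\n")
    return "".join(parts)


prompt = to_chatml([
    {"role": "system", "content": "You are Colibri, an advanced cybersecurity AI assistant developed by CyberNative AI."},
    {"role": "user", "content": "Who are you?"},
])
print(prompt)
```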

ILLEGAL OR UNETHICAL USE IS PROHIBITED!

Use in accordance with the law.

Provided by CyberNative AI

Examples:


```python
# Use a pipeline as a high-level helper
from transformers import pipeline

pipe = pipeline("text-generation", model="CyberNative-AI/Colibri_8b_v0.1")
messages = [
    {"role": "user", "content": "Who are you?"},
]
pipe(messages)
```