
How to use HiTZ/mbert-argmining-abstrct-multilingual with Transformers:

    # Use a pipeline as a high-level helper
    from transformers import pipeline

    pipe = pipeline("token-classification", model="HiTZ/mbert-argmining-abstrct-multilingual")

    # Load model directly
    from transformers import AutoTokenizer, AutoModelForTokenClassification

    tokenizer = AutoTokenizer.from_pretrained("HiTZ/mbert-argmining-abstrct-multilingual")
    model = AutoModelForTokenClassification.from_pretrained("HiTZ/mbert-argmining-abstrct-multilingual")

This model is a fine-tuned version of bert-base-multilingual-cased for the argument component detection task on AbstRCT data in English, Spanish, French and Italian (https://huggingface.co/datasets/HiTZ/multilingual-abstrct).
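Argument component detection is framed here as token classification, so per-token tags still have to be grouped into component spans. The following is a minimal sketch of that grouping step, assuming the model emits BIO-style tags. The label names `Claim` and `Premise` and the helper `bio_to_spans` are illustrative assumptions, not taken from the model's configuration.

```python
from typing import List, Tuple


def bio_to_spans(tags: List[str]) -> List[Tuple[str, int, int]]:
    """Group BIO tags into (label, start, end) spans; end is exclusive."""
    spans = []
    label, start = None, 0
    for i, tag in enumerate(tags):
        prefix, _, name = tag.partition("-")
        # A span continues only on an I- tag carrying the same label.
        if prefix == "I" and name == label:
            continue
        # Any other tag closes the currently open span, if there is one.
        if label is not None:
            spans.append((label, start, i))
            label = None
        # B- opens a new span; a stray I- is treated as an opening tag too.
        if prefix in ("B", "I") and name:
            label, start = name, i
    if label is not None:
        spans.append((label, start, len(tags)))
    return spans


# Hypothetical tag sequence; the label names are assumptions.
tags = ["O", "B-Premise", "I-Premise", "O", "B-Claim", "I-Claim", "I-Claim"]
spans = bio_to_spans(tags)
print(spans)
```

When running the model through the Transformers pipeline, passing `aggregation_strategy="simple"` asks the pipeline to do a comparable grouping of sub-word predictions itself.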

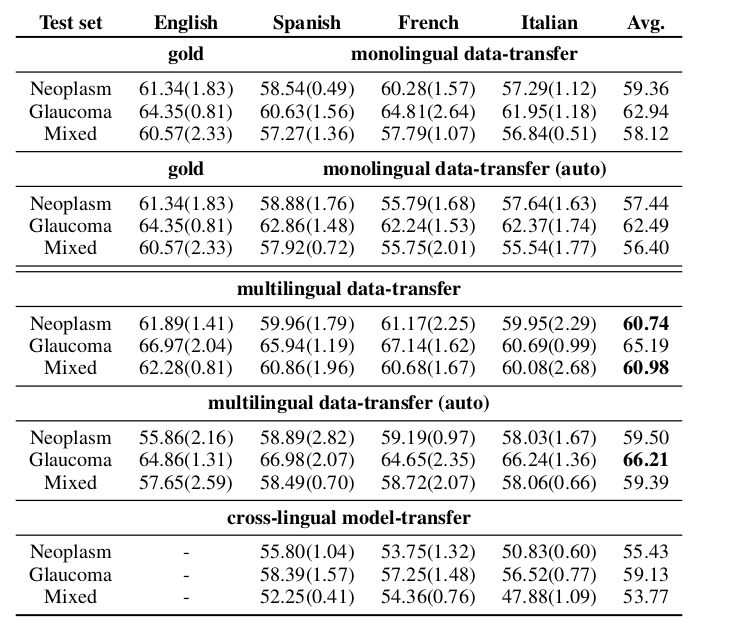

F1-macro scores (at sequence level) and their averages per test set from the argument component detection results of monolingual, monolingual automatically post-processed, multilingual, multilingual automatically post-processed, and crosslingual experiments.
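To make the metric concrete, here is a minimal sketch of a macro-averaged F1 over component types. It assumes that "sequence level" means exact-match scoring of whole component spans (as in seqeval-style evaluation); the card does not spell this out. The `Span` type, the function name `macro_f1`, and the example labels are hypothetical.

```python
from collections import Counter
from typing import List, Set, Tuple

Span = Tuple[str, int, int]  # (label, start, end), assumed span encoding


def macro_f1(gold: List[Set[Span]], pred: List[Set[Span]]) -> float:
    """Unweighted mean of per-label F1, counting only exact span matches."""
    tp, fp, fn = Counter(), Counter(), Counter()
    for g, p in zip(gold, pred):
        for label, _, _ in g & p:  # predicted and correct
            tp[label] += 1
        for label, _, _ in p - g:  # predicted but not in gold
            fp[label] += 1
        for label, _, _ in g - p:  # in gold but missed
            fn[label] += 1
    labels = set(tp) | set(fp) | set(fn)
    scores = []
    for label in labels:
        denom = 2 * tp[label] + fp[label] + fn[label]
        scores.append(2 * tp[label] / denom if denom else 0.0)
    return sum(scores) / len(scores) if scores else 0.0


# Hypothetical example: the premise matches exactly, the claim boundary is off.
gold = [{("Premise", 1, 3), ("Claim", 4, 7)}]
pred = [{("Premise", 1, 3), ("Claim", 5, 7)}]
score = macro_f1(gold, pred)
print(score)
```

Because every label contributes equally to the average, a rare component type that is poorly detected lowers the score as much as a frequent one.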

The following hyperparameters were used during training:

Contact: Anar Yeginbergen and Rodrigo Agerri, HiTZ Center - Ixa, University of the Basque Country UPV/EHU

Base model: google-bert/bert-base-multilingual-cased
