Instructions to use In2Training/FILM-7B with libraries, inference providers, notebooks, and local apps.

- Libraries

- Transformers

How to use In2Training/FILM-7B with Transformers:

```python
# Use a pipeline as a high-level helper
from transformers import pipeline

pipe = pipeline("text-generation", model="In2Training/FILM-7B")
messages = [
    {"role": "user", "content": "Who are you?"},
]
pipe(messages)
```

```python
# Load model directly
from transformers import AutoTokenizer, AutoModelForCausalLM

tokenizer = AutoTokenizer.from_pretrained("In2Training/FILM-7B")
model = AutoModelForCausalLM.from_pretrained("In2Training/FILM-7B")
messages = [
    {"role": "user", "content": "Who are you?"},
]
inputs = tokenizer.apply_chat_template(
    messages,
    add_generation_prompt=True,
    tokenize=True,
    return_dict=True,
    return_tensors="pt",
).to(model.device)

outputs = model.generate(**inputs, max_new_tokens=40)
# Decode only the newly generated tokens, skipping the prompt.
print(tokenizer.decode(outputs[0][inputs["input_ids"].shape[-1]:]))
```

- Notebooks

- Google Colab

- Kaggle

- Local Apps

- vLLM

How to use In2Training/FILM-7B with vLLM:

Install from pip and serve model

```shell
# Install vLLM from pip:
pip install vllm

# Start the vLLM server:
vllm serve "In2Training/FILM-7B"

# Call the server using curl (OpenAI-compatible API):
curl -X POST "http://localhost:8000/v1/chat/completions" \
    -H "Content-Type: application/json" \
    --data '{
        "model": "In2Training/FILM-7B",
        "messages": [
            {
                "role": "user",
                "content": "What is the capital of France?"
            }
        ]
    }'
```

Use Docker

docker model run hf.co/In2Training/FILM-7B
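The curl call to the vLLM server above can also be made from Python. The following is a minimal sketch using only the standard library; `build_chat_request` and `chat` are hypothetical helper names, and the response parsing assumes the server returns the usual OpenAI-style chat completion JSON.

```python
import json
import urllib.request


def build_chat_request(
    prompt: str,
    base_url: str = "http://localhost:8000",
    model: str = "In2Training/FILM-7B",
) -> urllib.request.Request:
    """Build (but do not send) a POST request for /v1/chat/completions."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=f"{base_url}/v1/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def chat(prompt: str, **kwargs) -> str:
    """Send the request and return the assistant's reply text."""
    with urllib.request.urlopen(build_chat_request(prompt, **kwargs)) as resp:
        body = json.load(resp)
    # Assumption: OpenAI-style response, reply under choices[0].message.content.
    return body["choices"][0]["message"]["content"]
```

With the server running, `chat("What is the capital of France?")` returns the reply text. For a server listening on another port, such as the SGLang example on port 30000, pass `base_url="http://localhost:30000"`.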

- SGLang

How to use In2Training/FILM-7B with SGLang:

Install from pip and serve model

```shell
# Install SGLang from pip:
pip install sglang

# Start the SGLang server:
python3 -m sglang.launch_server \
    --model-path "In2Training/FILM-7B" \
    --host 0.0.0.0 \
    --port 30000

# Call the server using curl (OpenAI-compatible API):
curl -X POST "http://localhost:30000/v1/chat/completions" \
    -H "Content-Type: application/json" \
    --data '{
        "model": "In2Training/FILM-7B",
        "messages": [
            {
                "role": "user",
                "content": "What is the capital of France?"
            }
        ]
    }'
```

Use Docker images

```shell
docker run --gpus all \
    --shm-size 32g \
    -p 30000:30000 \
    -v ~/.cache/huggingface:/root/.cache/huggingface \
    --env "HF_TOKEN=<secret>" \
    --ipc=host \
    lmsysorg/sglang:latest \
    python3 -m sglang.launch_server \
        --model-path "In2Training/FILM-7B" \
        --host 0.0.0.0 \
        --port 30000

# Call the server using curl (OpenAI-compatible API):
curl -X POST "http://localhost:30000/v1/chat/completions" \
    -H "Content-Type: application/json" \
    --data '{
        "model": "In2Training/FILM-7B",
        "messages": [
            {
                "role": "user",
                "content": "What is the capital of France?"
            }
        ]
    }'
```

- Docker Model Runner

How to use In2Training/FILM-7B with Docker Model Runner:

docker model run hf.co/In2Training/FILM-7B

FILM-7B

💻 [Github Repo] • 📃 [Paper] • ⚓ [VaLProbing-32K]

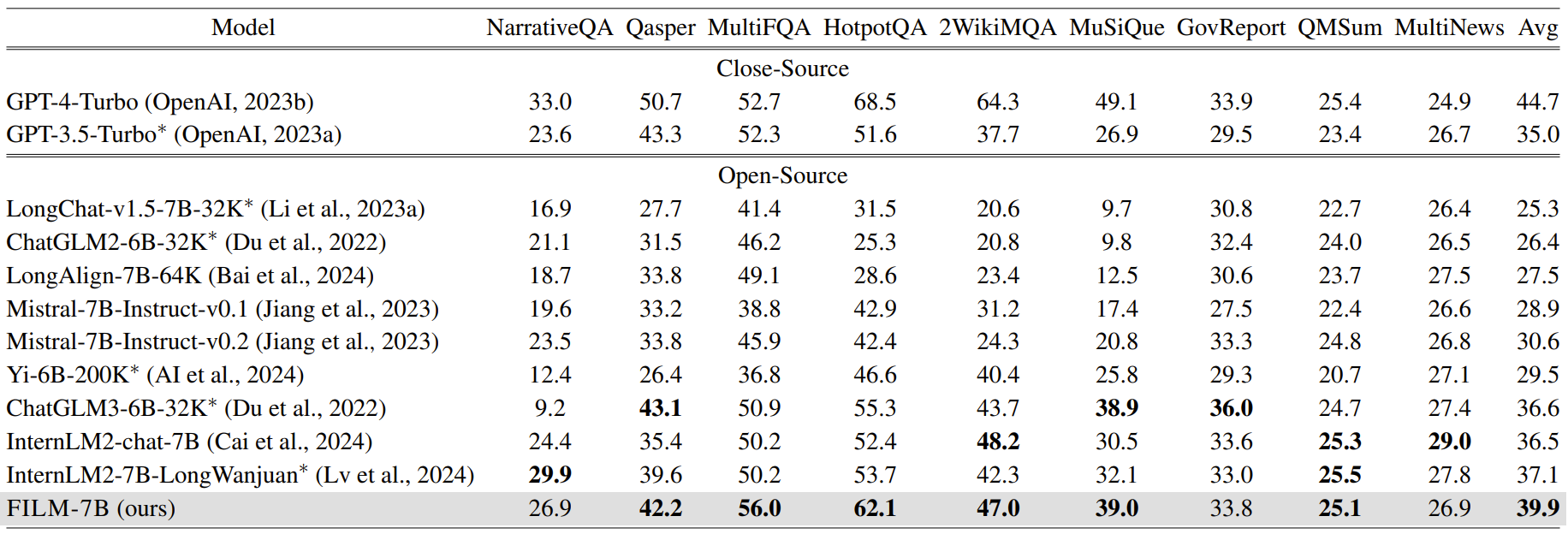

FILM-7B is a 32K-context LLM that overcomes the lost-in-the-middle problem. It is trained from Mistral-7B-Instruct-v0.2 by applying Information-Intensive (In2) Training. FILM-7B achieves near-perfect performance on probing tasks and SOTA-level performance on real-world long-context tasks among ~7B-size LLMs, without compromising short-context performance.

Model Usage

The system template for FILM-7B:

'''[INST] Below is a context and an instruction. Based on the information provided in the context, write a response for the instruction.

### Context:

{YOUR LONG CONTEXT}

### Instruction:

{YOUR QUESTION & INSTRUCTION} [/INST]

'''
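The template above can be filled in programmatically. The sketch below is one way to do it, not the authors' code: `build_film_prompt` is a hypothetical helper name, and the exact line breaks between sections are an assumption read off the template as displayed, so check the GitHub repository if exact formatting matters.

```python
def build_film_prompt(context: str, instruction: str) -> str:
    """Fill the FILM-7B prompt template with a context and an instruction.

    Hypothetical helper; the whitespace between sections is an assumption
    based on the template as shown in this model card.
    """
    return (
        "[INST] Below is a context and an instruction. "
        "Based on the information provided in the context, "
        "write a response for the instruction.\n\n"
        f"### Context:\n{context}\n\n"
        f"### Instruction:\n{instruction} [/INST]"
    )


# Example usage with a toy context.
prompt = build_film_prompt(
    context="The Eiffel Tower is located in Paris.",
    instruction="Where is the Eiffel Tower?",
)
print(prompt)
```

Because the resulting string already contains the `[INST] ... [/INST]` markers, it is presumably meant to be tokenized as raw text rather than wrapped a second time by a chat template.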

Probing Results

To reproduce the results on our VaL Probing, see the guidance in https://github.com/microsoft/FILM/tree/main/VaLProbing.

Real-World Long-Context Tasks

To reproduce the results on real-world long-context tasks, see the guidance in https://github.com/microsoft/FILM/tree/main/real_world_long.

Short-Context Tasks

To reproduce the results on short-context tasks, see the guidance in https://github.com/microsoft/FILM/tree/main/short_tasks.

📝 Citation

@misc{an2024make,

title={Make Your LLM Fully Utilize the Context},

author={Shengnan An and Zexiong Ma and Zeqi Lin and Nanning Zheng and Jian-Guang Lou},

year={2024},

eprint={2404.16811},

archivePrefix={arXiv},

primaryClass={cs.CL}

}

Disclaimer: This model is strictly for research purposes, and not an official product or service from Microsoft.
