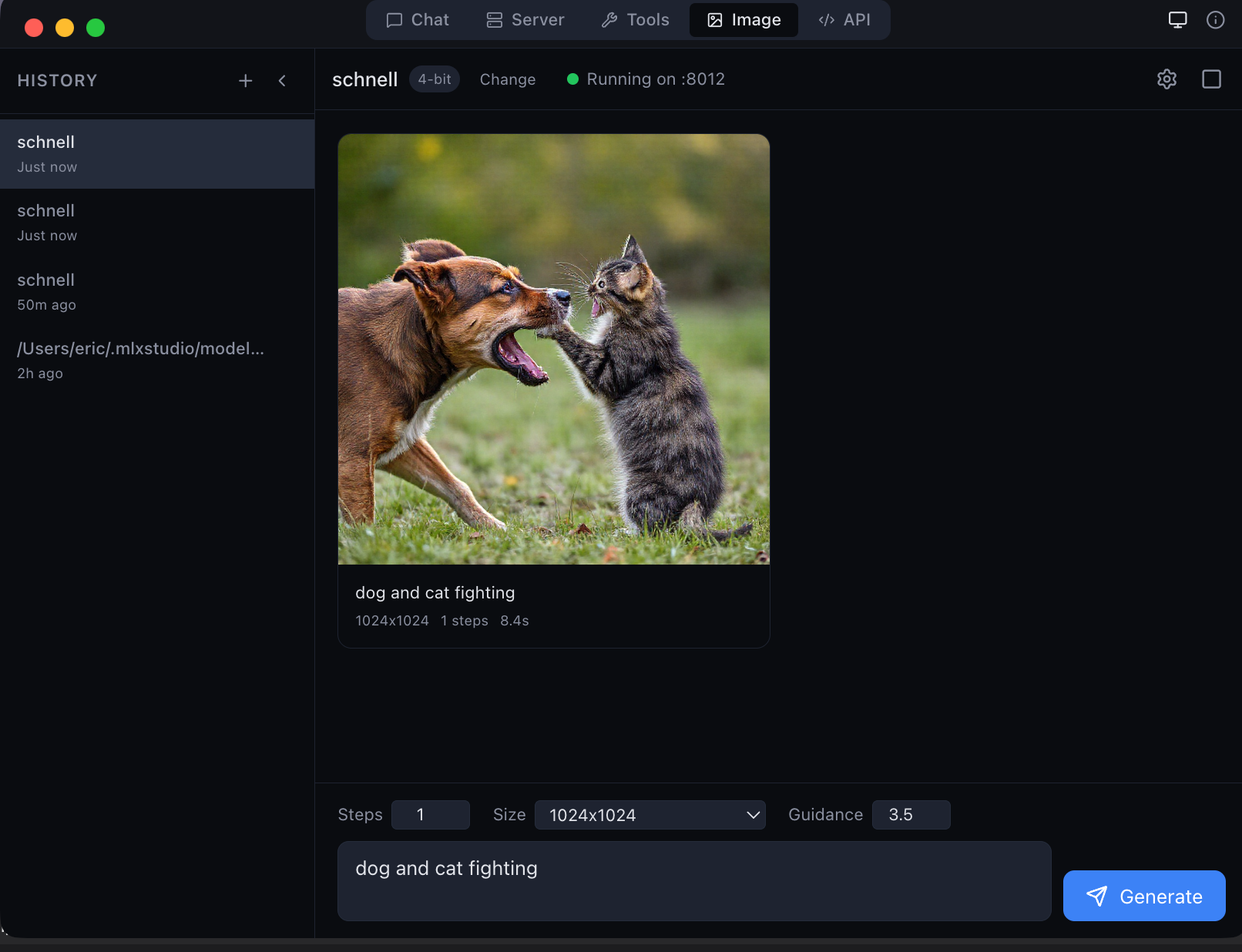

MLX Studio — the only app that natively supports JANG models

LM Studio, Ollama, oMLX, Inferencer and other MLX apps do not support JANG yet. Use MLX Studio for native JANG support, or run pip install jang for Python inference. Ask your favorite app's creators to add JANG support!

JANGQ-AI — JANG Quantized Models for Apple Silicon

JANG (Jang Adaptive N-bit Grading) — the GGUF equivalent for MLX.

Same size as a standard MLX quant, but with smarter bit allocation. Models stay quantized in GPU memory at full Metal speed.

Install

pip install "jang[mlx]"
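To confirm the install worked, a quick check with the Python standard library is enough. The sketch below only assumes that the distribution is named `jang`, as in the pip command above; the helper `installed_version` is a hypothetical name used for illustration and does not call any JANG API.

```python
from importlib import metadata


def installed_version(dist_name: str = "jang"):
    """Return the installed version of a distribution, or None if it is absent."""
    try:
        return metadata.version(dist_name)
    except metadata.PackageNotFoundError:
        return None


if __name__ == "__main__":
    version = installed_version()
    if version is None:
        # Assumption: the package name matches the pip command shown above.
        print('jang is not installed; run: pip install "jang[mlx]"')
    else:
        print(f"jang {version} is installed")
```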

Models

| Model | Profile | MMLU | HumanEval | Size |
|---|---|---|---|---|
| Qwen3.5-122B-A10B-JANG_2S | 2-bit | 84% | 90% | 38 GB |
| Qwen3.5-35B-A3B-JANG_4K | 4-bit K-quant | 84% | 90% | 16.7 GB |
| Qwen3.5-35B-A3B-JANG_2S | 2-bit | 62% | — | 12 GB |
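As a rough way to read the Size column, the average number of bits stored per weight can be estimated from the file size and the parameter count. The sketch below is an approximation under stated assumptions: the parameter counts (122B and 35B) are read off the model names, 1 GB is taken as 10^9 bytes, and the whole file is treated as weights. The function name `effective_bits_per_weight` is hypothetical and not part of JANG.

```python
def effective_bits_per_weight(size_gb: float, params_billion: float) -> float:
    """Estimate average bits per weight from file size and parameter count.

    Assumes 1 GB = 10**9 bytes and that the entire file is model weights.
    """
    total_bits = size_gb * 1e9 * 8
    total_params = params_billion * 1e9
    return total_bits / total_params


# (model name, size in GB from the table, parameters in billions from the name)
MODELS = [
    ("Qwen3.5-122B-A10B-JANG_2S", 38.0, 122.0),
    ("Qwen3.5-35B-A3B-JANG_4K", 16.7, 35.0),
    ("Qwen3.5-35B-A3B-JANG_2S", 12.0, 35.0),
]

if __name__ == "__main__":
    for name, size_gb, params_b in MODELS:
        bits = effective_bits_per_weight(size_gb, params_b)
        print(f"{name}: {bits:.2f} bits/weight")
```

Because a profile mixes bit widths across layers, the average need not match the nominal bit width in the Profile column.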

Links

GitHub · PyPI · MLX Studio

Created by Jinho Jang — jangq.ai · @dealignai
