DiT-IC: Aligned Diffusion Transformer for Efficient Image Compression

Paper • 2603.13162 • Published

Source code is available at Github.

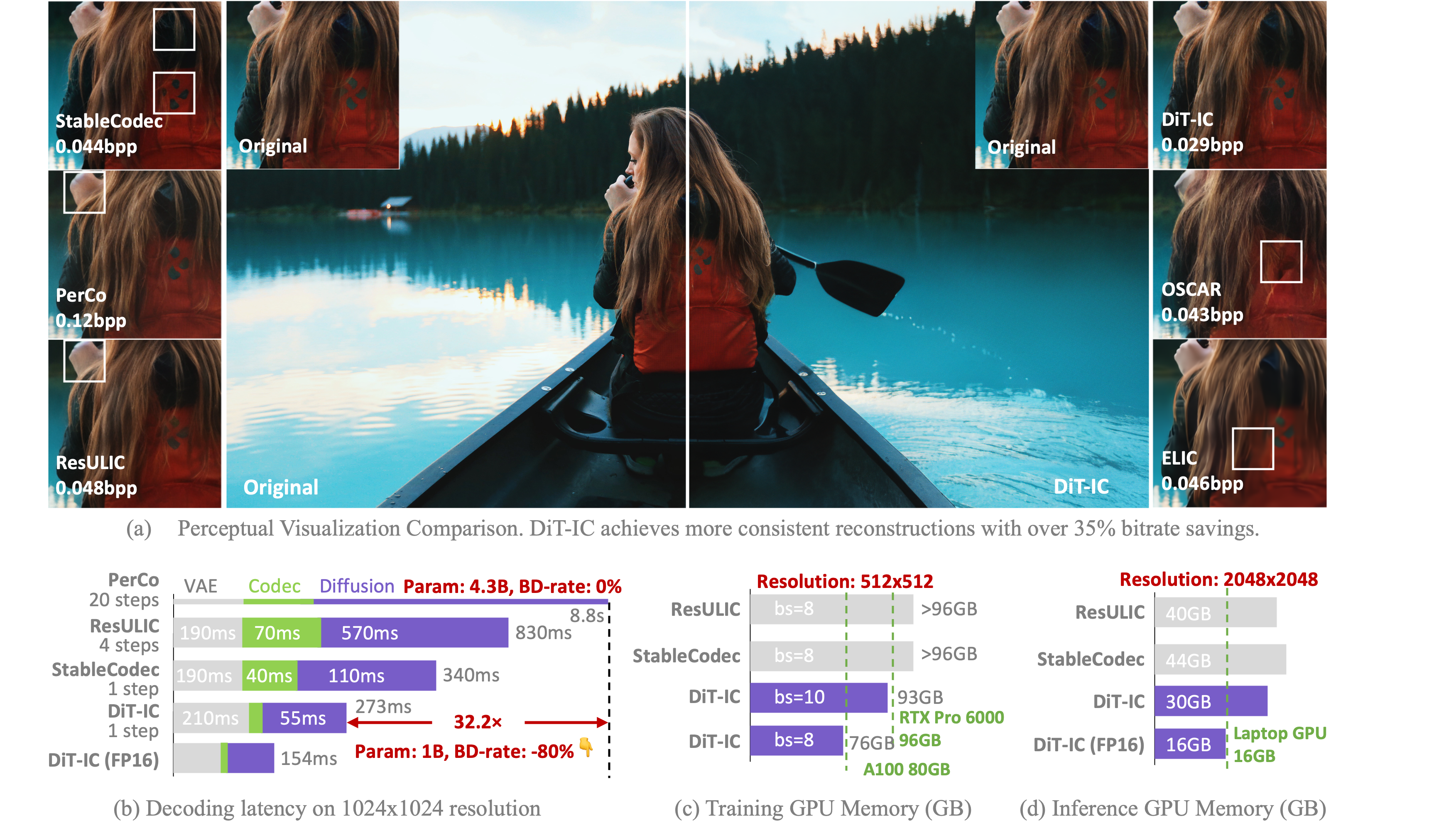

This model performs the diffusion process in a 32× latent space to reduce memory usage and accelerate inference.

The model is based on the pretrained text-to-image generative model SANA-600M.

For training and inference scripts, please visit our GitHub Repository.

If you use this model, please cite:

@inproceedings{shi2026ditic,

title={DiT-IC: Aligned Diffusion Transformer for Efficient Image Compression},

author={Shi Junqi, Lu Ming, Li Xingchen, Ke Anle, Zhang Ruiqi and Ma Zhan},

booktitle={CVPR},

year={2026}

}

Unable to build the model tree, the base model loops to the model itself. Learn more.