---
library_name: peft
base_model: LSX-UniWue/LLaMmlein_7B
tags:
- trl
- sft
- generated_from_trainer
datasets:
- LSX-UniWue/Guanako
- FreedomIntelligence/sharegpt-deutsch
- FreedomIntelligence/alpaca-gpt4-deutsch
language:
- de
license: other
---

# LLäMmlein 7B Chat

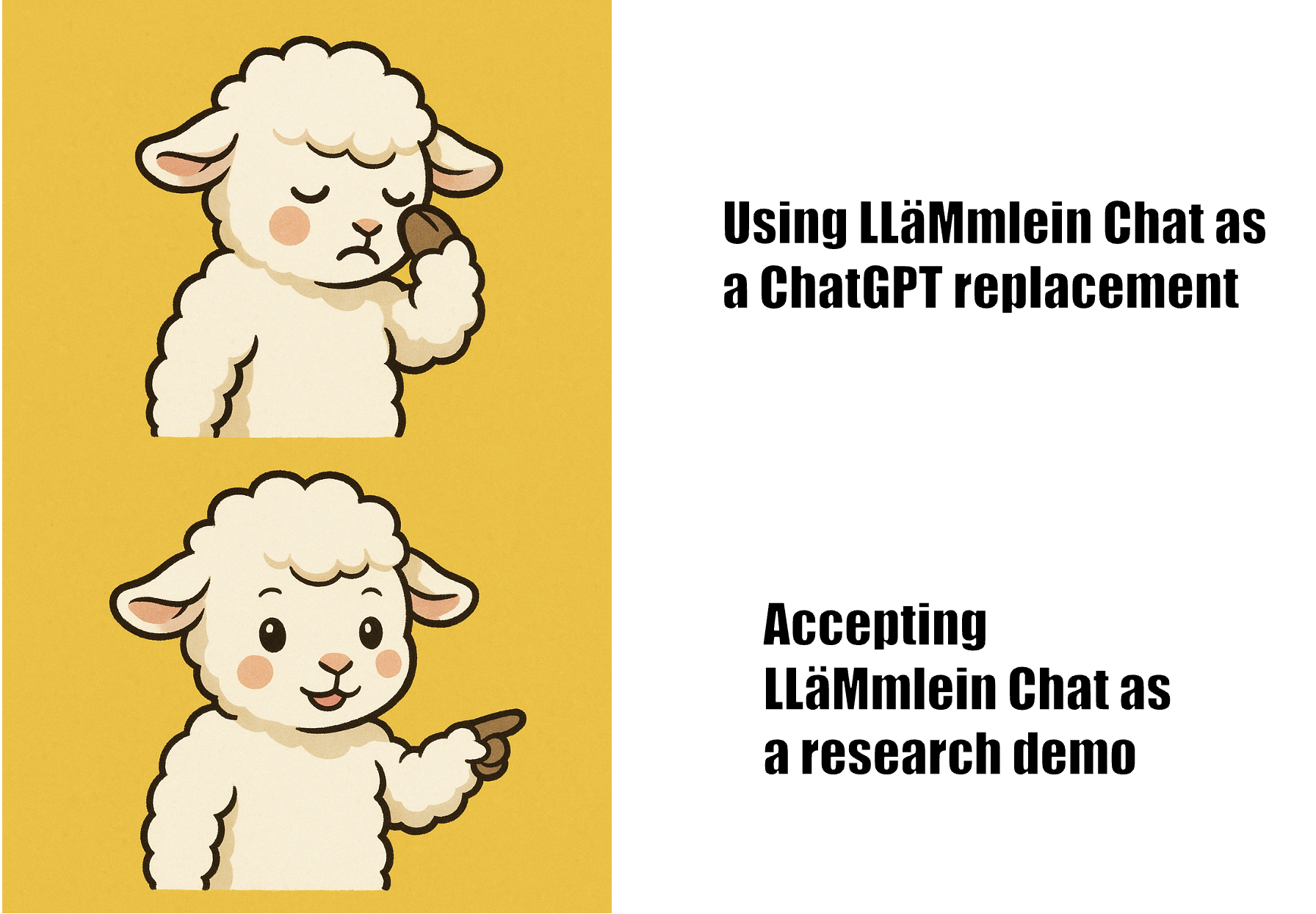

> [!WARNING]
> While the base versions of our LLäMmlein are quite good, our chat versions are research demonstrations and are not ready to be used in settings where close instruction following is necessary. Please check the paper for more details.

This is an early preview of our instruction-tuned 7B model, trained using limited German-language resources.
Please note that it is not the final version - we are actively working on improvements!

Find more details on our [page](https://www.informatik.uni-wuerzburg.de/datascience/projects/nlp/llammlein/) and our [preprint](https://arxiv.org/abs/2411.11171)!

## Example Usage

```python
from transformers import AutoModelForCausalLM, AutoTokenizer

# Load the chat model and its tokenizer from the Hugging Face Hub.
model = AutoModelForCausalLM.from_pretrained("LSX-UniWue/LLaMmlein_7B_chat")
tokenizer = AutoTokenizer.from_pretrained("LSX-UniWue/LLaMmlein_7B_chat")
# "mps" targets Apple Silicon; use "cuda" or "cpu" on other hardware.
model = model.to("mps")

# A single-turn conversation in the chat-message format.
messages = [
    {
        "role": "user",
        "content": "Was sind die wichtigsten Sehenswürdigkeiten von Berlin?",
    },
]

# Render the messages with the model's chat template and tokenize them.
chat = tokenizer.apply_chat_template(
    messages,
    return_tensors="pt",
    add_generation_prompt=True,
).to("mps")

# Generate up to 100 new tokens and print prompt plus reply,
# keeping special tokens visible in the output.
print(
    tokenizer.decode(
        model.generate(
            chat,
            max_new_tokens=100,
            pad_token_id=tokenizer.pad_token_id,
            eos_token_id=tokenizer.eos_token_id,
            repetition_penalty=1.1,
        )[0],
        skip_special_tokens=False,
    )
)
```
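
The example above hard-codes the `"mps"` device, which is only available on Apple Silicon. Below is a minimal sketch of one way to pick a device at runtime instead. The helper names `pick_device` and `detect_device` are hypothetical and not part of this model card or of `transformers`; the sketch assumes PyTorch's standard `torch.cuda.is_available()` and `torch.backends.mps.is_available()` checks, and falls back to `"cpu"` if PyTorch is not installed.

```python
import importlib.util


def pick_device(cuda_available: bool, mps_available: bool) -> str:
    """Return a device string, preferring CUDA, then MPS, then CPU."""
    if cuda_available:
        return "cuda"
    if mps_available:
        return "mps"
    return "cpu"


def detect_device() -> str:
    """Ask PyTorch which backends are usable; fall back to CPU without torch."""
    if importlib.util.find_spec("torch") is None:
        return "cpu"
    import torch

    # Older PyTorch builds may not expose the MPS backend at all.
    mps = getattr(torch.backends, "mps", None)
    return pick_device(
        cuda_available=torch.cuda.is_available(),
        mps_available=mps is not None and mps.is_available(),
    )


device = detect_device()
print(device)
```

With this in place, the two `"mps"` literals in the example could be replaced by `device`, i.e. `model.to(device)` and `.to(device)` on the tokenized chat.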

[Data Take Down](https://www.informatik.uni-wuerzburg.de/datascience/projects/nlp/llammlein/) |