---
license: mit
base_model: Qwen/Qwen3-VL-4B-Instruct
pipeline_tag: image-text-to-text
library_name: transformers
tags:
- gui-agent
- mobile-agent
- android-world
- vision-language-model
---
UI-Voyager: A Self-Evolving GUI Agent Learning via Failed Experience

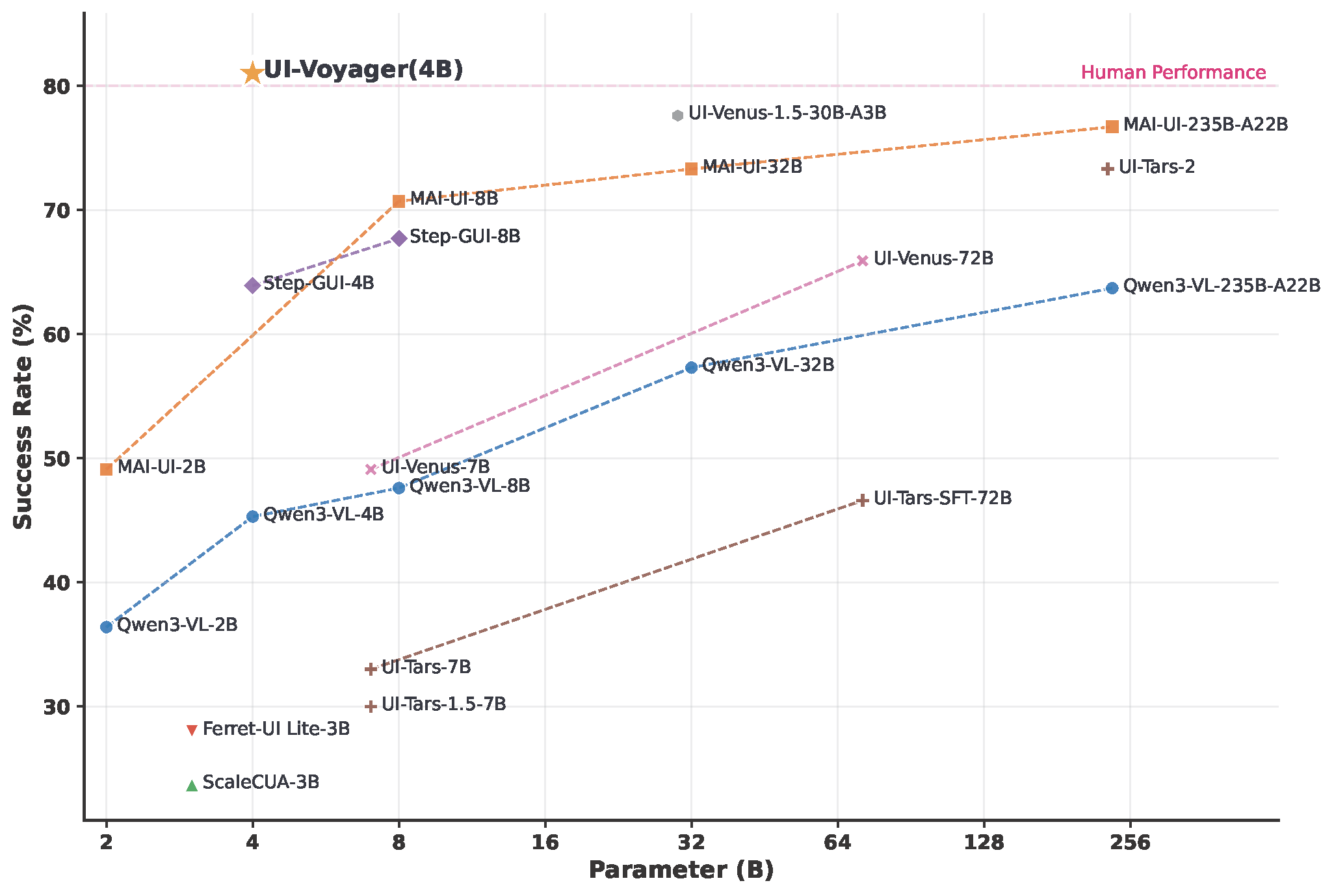

UI-Voyager is a two-stage self-evolving mobile GUI agent fine-tuned from Qwen3-VL-4B-Instruct. Our 4B model achieves an 81.0% success rate on the AndroidWorld benchmark, outperforming numerous recent baselines and exceeding human-level performance.

Overview of UI-Voyager performance on AndroidWorld

Highlights

- 🏆 State-of-the-Art Performance: Achieves 81.0% success rate on AndroidWorld, surpassing human-level performance.

- 🔄 Self-Evolving: A two-stage training paradigm that learns from failed experiences to continuously improve.

- 📱 Mobile GUI Agent: Specialized for operating mobile device interfaces: recognizing UI elements, understanding their functions, and completing tasks autonomously.

- 🧠 Built on Qwen3-VL-4B: Inherits the powerful vision-language capabilities of Qwen3-VL-4B-Instruct, including advanced visual perception, OCR, and multimodal reasoning.

Model Details

| Attribute | Detail |
|---|---|
| Base Model | Qwen3-VL-4B-Instruct |
| Parameters | ~4B |
| License | MIT |
| Task | Mobile GUI Agent / Image-Text-to-Text |
| Benchmark | AndroidWorld |
| Success Rate | 81.0% |
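Because the card lists `transformers` as the library and `image-text-to-text` as the task, loading the model for a single screenshot-plus-instruction query might look like the sketch below. This is a generic Qwen3-VL-style chat call, not the agent loop, prompt, or action format used for the AndroidWorld results; those live in the GitHub repository. The helper names `build_messages` and `generate_reply` are hypothetical, and `model_id` must be set to this repository's Hub id, which is not stated here. The sketch assumes a recent `transformers` release with Qwen3-VL support, plus `accelerate` for `device_map="auto"`.

```python
from typing import Any


def build_messages(screenshot: str, instruction: str) -> list[dict[str, Any]]:
    """Build a single-turn chat with one screenshot and one text instruction."""
    return [
        {
            "role": "user",
            "content": [
                {"type": "image", "image": screenshot},
                {"type": "text", "text": instruction},
            ],
        }
    ]


def generate_reply(model_id: str, screenshot: str, instruction: str,
                   max_new_tokens: int = 128) -> str:
    """Run one generation step (hypothetical helper, not the official agent loop)."""
    # Imported lazily so build_messages works without the heavy dependencies.
    from transformers import AutoModelForImageTextToText, AutoProcessor

    processor = AutoProcessor.from_pretrained(model_id)
    model = AutoModelForImageTextToText.from_pretrained(
        model_id, dtype="auto", device_map="auto"
    )
    inputs = processor.apply_chat_template(
        build_messages(screenshot, instruction),
        tokenize=True,
        add_generation_prompt=True,
        return_dict=True,
        return_tensors="pt",
    ).to(model.device)
    output_ids = model.generate(**inputs, max_new_tokens=max_new_tokens)
    # Drop the prompt tokens so only the newly generated text is decoded.
    new_tokens = output_ids[:, inputs["input_ids"].shape[1]:]
    return processor.batch_decode(new_tokens, skip_special_tokens=True)[0]
```

Calling `generate_reply(model_id, "screenshot.png", "Open the Settings app.")` returns the raw model text; turning that text into device actions is left to the evaluation code in the repository.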

Evaluation on AndroidWorld

For full evaluation instructions using AndroidWorld with parallel emulators, please refer to our GitHub repository.

```bash
# Start parallel evaluation (4 emulators)
NUM_WORKERS=4 CONFIG_NAME=UI-Voyager MODEL_NAME=UI-Voyager ./run_android_world.sh
```
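The success rate reported on this card is a task-level metric. As a rough illustration of the arithmetic, the sketch below aggregates per-task pass/fail outcomes into a percentage. The `success_rate` helper, the dictionary layout, and the task names are assumptions made for this example; the output format of `run_android_world.sh` is not described here, and the real harness may aggregate differently (for example, across several trials per task).

```python
def success_rate(results: dict[str, bool]) -> float:
    """Return the percentage of tasks marked successful."""
    if not results:
        raise ValueError("no task results to aggregate")
    # True counts as 1 and False as 0, so sum() gives the number of successes.
    return 100.0 * sum(results.values()) / len(results)


# Hypothetical outcomes for four tasks; not real evaluation data.
example_results = {
    "task_a": True,
    "task_b": True,
    "task_c": False,
    "task_d": True,
}

print(f"{success_rate(example_results):.1f}%")  # 3 of 4 tasks succeeded: 75.0%
```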

Citation

If you find this work useful, please consider starring the repository ⭐ and citing the paper:

```bibtex
@misc{lin2026uivoyager,
  title={UI-Voyager: A Self-Evolving GUI Agent Learning via Failed Experience},
  author={Zichuan Lin and Feiyu Liu and Yijun Yang and Jiafei Lyu and Yiming Gao and Yicheng Liu and Zhicong Lu and Yangbin Yu and Mingyu Yang and Junyou Li and Deheng Ye and Jie Jiang},
  year={2026},
  eprint={2603.24533},
  archivePrefix={arXiv},
  primaryClass={cs.LG},
  url={https://arxiv.org/abs/2603.24533},
}
```