---

license: apache-2.0

tags:

- mistral

- Uncensored

- text-generation-inference

- transformers

- unsloth

- trl

- roleplay

- conversational

- rp

datasets:

- N-Bot-Int/Iris-Uncensored-R2

- N-Bot-Int/Millie-R1_DPO

- N-Bot-Int/Millia-R1_DPO

language:

- en

base_model:

- unsloth/mistral-7b-instruct-v0.3-bnb-4bit

pipeline_tag: text-generation

library_name: transformers

metrics:

- character

---

# Official Quants are Uploaded By Us

- [MistThena7BV2-GGUF](https://huggingface.co/N-Bot-Int/MistThena7BV2-GGUF)

# Support Us on Ko-fi!

- [Ko-fi](https://ko-fi.com/J3J61D8NHV)

# MistThena7B - V2

- Introducing our mind-boggling MistThena7B **V2**! This version offers an upgraded RP experience beyond the other AI models we've made, outcompeting our 3B and 1B models, as well as MythoMax, DeepSeek, and Hermes, for roleplaying!

- **MistThena7B-V2** offers expanded roleplay capabilities: we used our EmojiEmulsifyer program to train MistThena7B to use emojis, expanding roleplay immersiveness and the set of actions MistThena7B can perform!

- Activate MistThena's expanded actions by mirroring them (i.e., using emojis in your own prompts) to encourage MistThena's use of emojis and actions!

- MistThena7B-V2 is also trained on 160K examples from our latest corpus, **IRIS_UNCENSORED_R2**! This shows that Iris_Uncensored_R2 has huge potential to produce good outputs, and it reveals MistThena7B's strong roleplaying capabilities, which were obtained through rigorous training combined with preventive measures against overfitting.

- MistThena7B contains more fine-tuned data, so please report any issues, such as overfitting, or suggestions for the future **V3** model to our email:

  [nexus.networkinteractives@gmail.com](mailto:nexus.networkinteractives@gmail.com)

  Once again, feel free to modify the LoRA to your liking. However, please consider adding this page for credits, and if you expand its **dataset**, please handle it with care and ethical consideration.

- MistThena is:

  - **Developed by:** N-Bot-Int
  - **License:** apache-2.0
  - **Finetuned from model:** unsloth/mistral-7b-instruct-v0.3-bnb-4bit
  - **Sequentially trained from model:** N-Bot-Int/OpenElla3-Llama3.2A
  - **Dataset combined using:** Mosher-R1 (proprietary software)
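The emoji-mirroring tip can be sketched as a chat payload in which every user turn carries its own emoji and *action*. This is a minimal sketch assuming you run the model through transformers: the helper `build_roleplay_messages` and the sample dialogue are hypothetical, and the output is the role/content message list that `tokenizer.apply_chat_template` accepts. Loading the model itself is left out.

```python
from typing import Dict, List

# Hypothetical helper: the card only says to mirror emoji in your own prompts;
# the function name and sample turns below are illustrative assumptions.
Message = Dict[str, str]


def build_roleplay_messages(turns: List[str], replies: List[str]) -> List[Message]:
    """Interleave user turns with prior assistant replies as role/content dicts.

    The conversation starts and ends on a user turn, so there must be exactly
    one more user turn than assistant replies.
    """
    if len(turns) != len(replies) + 1:
        raise ValueError("expected exactly one more user turn than assistant replies")
    messages: List[Message] = []
    for i, user_text in enumerate(turns):
        messages.append({"role": "user", "content": user_text})
        if i < len(replies):
            messages.append({"role": "assistant", "content": replies[i]})
    return messages


if __name__ == "__main__":
    # Each user turn pairs an *action* with an emoji, mirroring the style
    # the card says MistThena should answer in.
    convo = build_roleplay_messages(
        turns=[
            "*pushes open the tavern door* 🍺 Evening! Any rooms left?",
            "*drops a few coins on the counter* 💰 I'll take it.",
        ],
        replies=["*looks up from the ledger* 😊 Just one, traveler."],
    )
    for m in convo:
        print(m["role"], "->", m["content"])
```

Mistral instruct chat templates generally expect turns to alternate between user and assistant, starting with the user, which is why the helper checks the turn counts before building the list.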

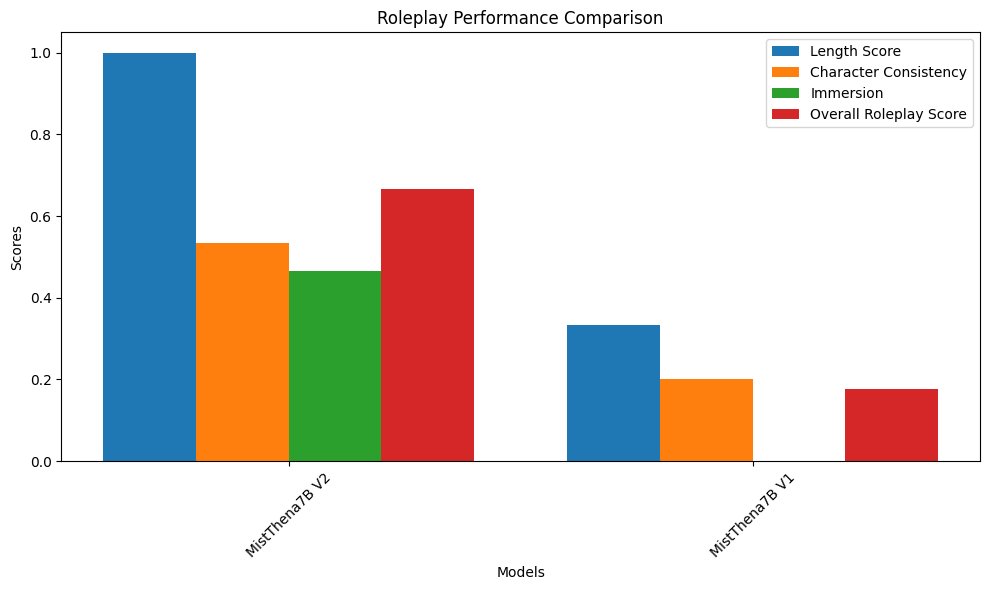

- Comparison Metric Scores

  - Metrics made by **ItsMeDevRoland**, comparing:

    - **MistThena7B-V1**
    - **MistThena7B-V2: 60-Step Version**

  Both models were ranked with the same prompt, the same temperature, and the same hardware (Google Colab) to properly showcase the differences and strengths of the models.

---

# 🌀 MistThena7B V2: Slower Beats, Stronger Bonds, a Roleplay Revival

> "She may not win the speed race, but when it comes to presence and performance — she owns the stage."

---

MistThena7B V2 isn’t just an upgrade; she’s a reinvention. Built on V1’s storytelling roots, V2 shifts her focus inward: **longer scenes, deeper characters, and dialogue that breathes.**

- 💬 **Roleplay Evaluation** (V1 → V2)

  - ✍️ **Length Score**: 0.34 → **1.00** (🚀)
  - 🧠 **Character Consistency**: 0.20 → **0.53**
  - 🌌 **Immersion**: 0.00 → **0.47**
  - 🎭 **Overall RP Score**: 0.17 → **0.67**

> She no longer just responds; she *inhabits*. MistThena7B V2 is the method actor of models, channeling roles with vivid coherence and creative depth.

---

# ⚙️ The Cost of Craft: Time for Thought

- 🕒 **Inference Time**: 114s → **179s** (↑)

- ⚡ **Tokens/sec**: 1.51 → **1.28** (↓)

> Yes, she’s slower — but that’s not a bug. That’s intention. Every word is more considered, every output more deliberate.
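The speed figures can be cross-checked with a little arithmetic. This sketch assumes that Tokens/sec means generated tokens divided by the reported inference time for the same run (an assumption, not a stated fact); under it, time × throughput gives the implied output length of each version. The helper names are illustrative.

```python
# Worked arithmetic on the V1 -> V2 speed figures.
# Assumption: Tokens/sec = generated tokens / inference time, measured on the same run.


def implied_tokens(seconds: float, tokens_per_sec: float) -> float:
    """Tokens generated, if throughput was measured over the whole inference time."""
    return seconds * tokens_per_sec


def pct_change(old: float, new: float) -> float:
    """Relative change from old to new, in percent."""
    return (new - old) / old * 100.0


v1_time, v1_tps = 114.0, 1.51
v2_time, v2_tps = 179.0, 1.28

v1_tokens = implied_tokens(v1_time, v1_tps)
v2_tokens = implied_tokens(v2_time, v2_tps)

print(f"implied tokens: V1 ~{v1_tokens:.0f}, V2 ~{v2_tokens:.0f}")
print(f"inference time: {pct_change(v1_time, v2_time):+.1f}%")
print(f"throughput:     {pct_change(v1_tps, v2_tps):+.1f}%")
print(f"output length:  {pct_change(v1_tokens, v2_tokens):+.1f}%")
```

Under that assumption, V2 produces roughly 229 tokens against about 172 for V1 (about 33% more), so its 57% longer runtime reflects both longer outputs and a roughly 15% drop in throughput.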

---

# 📏 Traditional Metrics? A Trade-off

- 📘 **BLEU Score**: 0.43 → **0.18**

- 📕 **ROUGE-L**: 0.60 → **0.32**

> While V1 outperforms on surface-level matching, V2 is optimized for *experiential fidelity*, not rigid overlap.
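To make "surface-level matching" concrete, here is a simplified sketch of ROUGE-L: an F1 score over the longest common subsequence (LCS) of tokens shared by an output and a reference. It assumes lowercase whitespace tokenization with no stemming; the exact implementation and settings behind the scores above are not specified, so treat this as illustrative. The sample sentences are invented.

```python
from typing import List


def lcs_length(a: List[str], b: List[str]) -> int:
    """Length of the longest common subsequence of two token lists (row-by-row DP)."""
    prev = [0] * (len(b) + 1)
    for x in a:
        curr = [0] * (len(b) + 1)
        for j, y in enumerate(b, start=1):
            if x == y:
                curr[j] = prev[j - 1] + 1
            else:
                curr[j] = max(prev[j], curr[j - 1])
        prev = curr
    return prev[-1]


def rouge_l_f1(candidate: str, reference: str) -> float:
    """Simplified ROUGE-L F1: lowercase whitespace tokens, no stemming."""
    cand = candidate.lower().split()
    ref = reference.lower().split()
    if not cand or not ref:
        return 0.0
    lcs = lcs_length(cand, ref)
    if lcs == 0:
        return 0.0
    precision = lcs / len(cand)
    recall = lcs / len(ref)
    return 2 * precision * recall / (precision + recall)


reference = "she smiles and offers you a cup of tea"
close = "she smiles and offers you some tea"
vivid = "*her eyes light up* she pours steaming tea into a chipped cup and slides it over"

print(round(rouge_l_f1(close, reference), 2))
print(round(rouge_l_f1(vivid, reference), 2))
```

Here the close paraphrase scores 0.75 and the more descriptive reply scores 0.24, even though a roleplay reader may prefer the second; overlap metrics reward matching wording, not immersion.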

---

# 🎯 Reimagined for Realness

MistThena7B V2 isn’t trying to mimic; she’s trying to *immerse*. She is designed to tell stories, embody roles, and hold character in long-form exchanges.

- 🧩 Tailored for:

  - Narrative-heavy use cases
  - Emotional continuity and consistency
  - Richer, longer interactions

---

> “MistThena7B V2 trades benchmarks for believability. Less about matching, more about meaning. Less polished, more *present*.”

# MistThena7B V2 is where slower feels *stronger*.

---

# Notice

- **For a good experience, please use:**

  - For KoboldCpp users: the **Coherent Creativity Legacy** preset.

- TL;DR: I'm still looking for ways to improve the settings. If you have any good settings, feel free to share them in the community! I'll credit you!

# Detail Card

- Parameters

  - 7 billion parameters
  - (Please check with your GPU vendor whether your hardware can run 7B models.)

- Training

  - 250 steps: N-Bot-Int/Iris_Uncensored_R2
  - 60 steps: N-Bot-Int/Millie_DPO

- Finetuning tool:

  - Unsloth AI
  - This Mistral model was trained 2x faster with [Unsloth](https://github.com/unslothai/unsloth) and Hugging Face's TRL library.

    [<img src="https://raw.githubusercontent.com/unslothai/unsloth/main/images/unsloth%20made%20with%20love.png" width="200"/>](https://github.com/unslothai/unsloth)

- Fine-tuned using:

  - Google Colab