license: apache-2.0

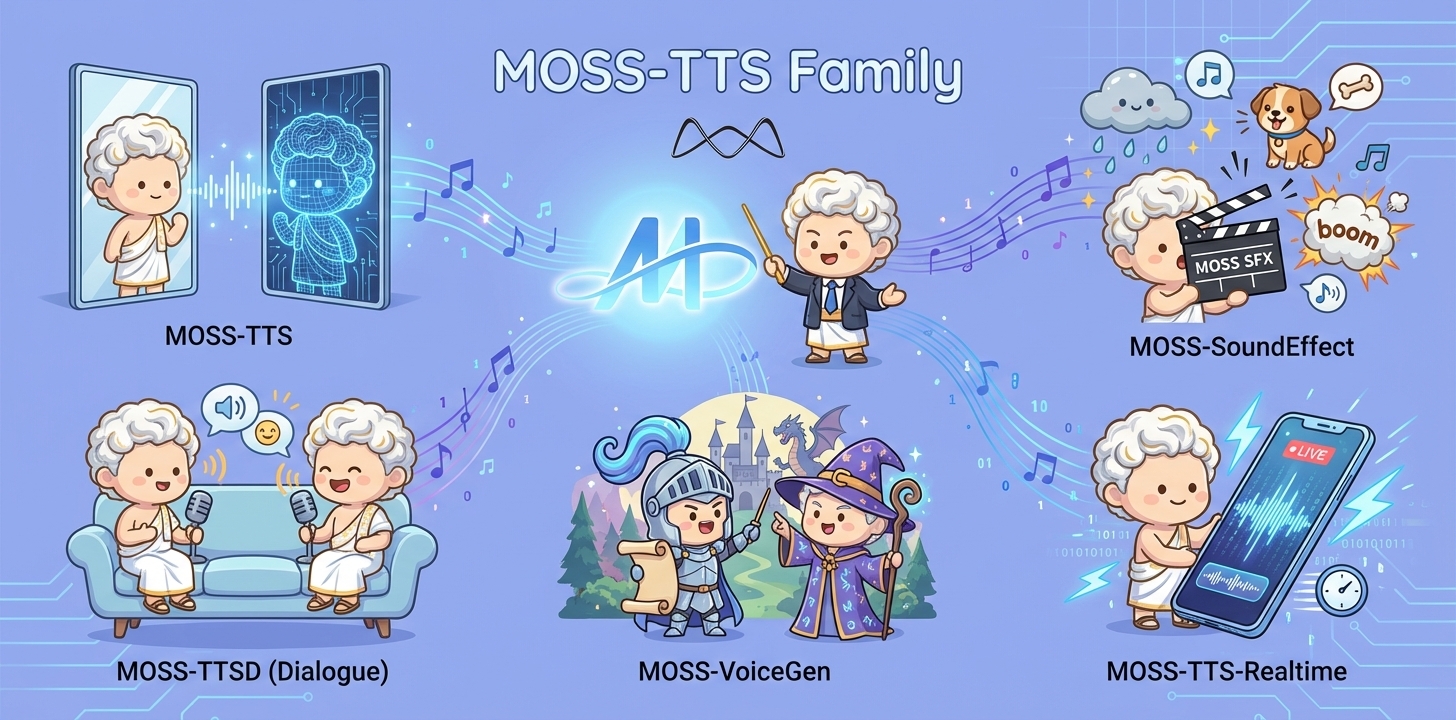

MOSS-TTS Family

Overview

MOSS‑TTS Family is an open‑source speech and sound generation model family from MOSI.AI and the OpenMOSS team. It targets high‑fidelity, highly expressive generation in complex real‑world scenarios, covering stable long‑form speech, multi‑speaker dialogue, voice/character design, environmental sound effects, and real‑time streaming TTS.

Introduction

When a single piece of audio needs to sound like a real person, pronounce every word accurately, switch speaking styles across content, remain stable over tens of minutes, and support dialogue, role‑play, and real‑time interaction, a single TTS model is often not enough. The MOSS‑TTS Family breaks the workflow into five production‑ready models that can be used independently or composed into a complete pipeline.

- MOSS‑TTS: MOSS-TTS is the flagship, production-ready Text-to-Speech foundation model in the MOSS-TTS Family, built to ship, scale, and deliver real-world voice applications beyond demos. It provides high-fidelity zero-shot voice cloning as the core capability, along with ultra-long speech generation, token-level duration control, multilingual and code-switched synthesis, and fine-grained Pinyin/phoneme pronunciation control. Together, these features make it a robust base model for scalable narration, dubbing, and voice-driven products.

- MOSS‑TTSD: MOSS-TTSD is a production-oriented long-form spoken dialogue generation model for creating highly expressive, multi-party conversational audio at scale. It supports continuous long-duration generation, flexible multi-speaker turn-taking control, and zero-shot voice cloning from short reference audio, enabling natural conversations with rich interaction dynamics. It is designed for real-world long-form content such as podcasts, audiobooks, commentary, dubbing, and entertainment dialogue.

- MOSS‑VoiceGenerator: MOSS-VoiceGenerator is an open-source voice design system that generates speaker timbres directly from free-form text descriptions, enabling fast creation of voices for characters, personalities, and emotions—without requiring reference audio. It unifies timbre design, style control, and content synthesis in a single instruction-driven model, producing high-fidelity, emotionally expressive speech that feels naturally human. It can be used standalone for creative production, or as a voice design layer that improves integration and usability for downstream TTS systems.

- MOSS‑SoundEffect: MOSS-SoundEffect is a high-fidelity sound effect generation model built for real-world content creation, offering strong environmental richness, broad category coverage, and reliable duration controllability. Trained on large-scale, high-quality data, it generates consistent audio from text prompts across natural ambience, urban scenes, creatures, human actions, and music-like clips. It is well suited for film and game production, interactive experiences, and data synthesis pipelines.

- MOSS‑TTS‑Realtime: MOSS-TTS-Realtime is a context-aware, multi-turn streaming TTS foundation model designed for real-time voice agents. Unlike conventional TTS that synthesizes replies in isolation, it conditions generation on multi-turn dialogue history—including both textual and acoustic signals from prior user speech—so responses stay coherent, consistent, and natural across turns. With low-latency incremental synthesis and strong voice stability, it enables truly conversational, human-like real-time speech experiences.

Released Models

| Model | Architecture | Size | Model Card | Hugging Face |
|---|---|---|---|---|
| MOSS-TTS | MossTTSDelay | 8B | moss_tts_model_card.md | 🤗 Huggingface |
| MOSS-TTS | MossTTSLocal | 1.7B | moss_tts_model_card.md | 🤗 Huggingface |
| MOSS‑TTSD‑V1.0 | MossTTSDelay | 8B | moss_ttsd_model_card.md | 🤗 Huggingface |
| MOSS‑VoiceGenerator | MossTTSDelay | 1.7B | moss_voice_generator_model_card.md | 🤗 Huggingface |
| MOSS‑SoundEffect | MossTTSDelay | 8B | moss_sound_effect_model_card.md | 🤗 Huggingface |
| MOSS‑TTS‑Realtime | MossTTSRealtime | 1.7B | moss_tts_realtime_model_card.md | 🤗 Huggingface |

MOSS-TTS-Realtime

1. Overview

1.1 TTS Family Positioning

MOSS-TTS-Realtime is a high-performance, real-time speech synthesis model within the broader MOSS-TTS Family. It is designed for interactive voice agents that require low-latency, continuous speech generation across multi-turn conversations. Unlike conventional streaming TTS systems that synthesize each response in isolation, MOSS-TTS-Realtime natively models dialogue context by conditioning speech generation on both textual and acoustic information from previous turns. By combining multi-turn context awareness with incremental streaming synthesis, it produces natural, coherent, and voice-consistent audio responses for fluid, human-like spoken interaction in real-time applications.
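To make the idea of multi-turn conditioning concrete, the sketch below shows one way a caller might hold dialogue history that carries both text and acoustic tokens per turn, and trim it to a fixed budget before each synthesis request. Everything here is hypothetical and for illustration only: the `Turn` class, the `trim_history` helper, and the crude cost measure (characters plus audio tokens) are assumptions, not part of the MOSS-TTS-Realtime API.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Turn:
    """One dialogue turn (hypothetical structure, not the model's real input format)."""
    role: str                                              # "user" or "assistant"
    text: str                                              # transcript of the turn
    audio_tokens: List[int] = field(default_factory=list)  # placeholder acoustic token ids

    def cost(self) -> int:
        # Crude stand-in for context usage: characters plus acoustic tokens.
        return len(self.text) + len(self.audio_tokens)


def trim_history(turns: List[Turn], budget: int) -> List[Turn]:
    """Keep the most recent turns whose combined cost fits within the budget."""
    kept: List[Turn] = []
    used = 0
    for turn in reversed(turns):       # walk from newest to oldest
        if used + turn.cost() > budget:
            break                      # older turns no longer fit
        kept.append(turn)
        used += turn.cost()
    kept.reverse()                     # restore chronological order
    return kept


history = [
    Turn("user", "Hi", [1, 2, 3]),
    Turn("assistant", "Hello there", [4, 5]),
    Turn("user", "Tell me a story", [6, 7, 8, 9]),
]
recent = trim_history(history, budget=32)
print([t.role for t in recent])
```

Dropping whole turns from the oldest end keeps each remaining turn's text and audio paired, which matters when both signals are used as context. A real integration would follow the input format described in the usage guide.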

Key Capabilities

- Context-Aware & Expressive Speech Generation: Generates expressive and coherent speech by modeling both textual and acoustic context across multiple dialogue turns.

- High-Fidelity Voice Cloning with Multi-Turn Consistency: Achieves high voice similarity to the reference speaker while keeping speaker identity consistent across multiple dialogue turns.

- Long Context: Supports a maximum context length of 32K tokens (about 40 minutes of audio), enabling stable and consistent speech generation in extended conversations.

- Highly Human-Like Speech with Natural Prosody: Trained on over 2.5 million hours of single-speaker speech and more than 1 million hours of two-speaker and multi-speaker conversational data, resulting in natural prosody and human-like expressiveness.

- Multilingual Speech Support: Supports more than 10 languages in addition to Chinese and English, including Korean, Japanese, German, and French, with consistent and expressive speech across languages.
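As a rough check on the long-context figure above, the following sketch derives the token rate implied by "32K" of context covering about 40 minutes. It assumes that 32K means 32 × 1024 tokens and that the whole window is spent on audio; in practice text tokens also occupy context, so the result is only a back-of-the-envelope estimate, not a published specification of the model's audio tokenizer.

```python
# Assumptions (not stated in the model card): 32K = 32 * 1024 tokens,
# and the entire context window is filled with audio tokens.
CONTEXT_TOKENS = 32 * 1024
APPROX_MINUTES = 40


def implied_tokens_per_second(context_tokens: int, minutes: float) -> float:
    """Average tokens consumed per second of audio under the assumptions above."""
    return context_tokens / (minutes * 60)


def seconds_of_audio(tokens: int, tokens_per_second: float) -> float:
    """How many seconds of audio a given token count would cover at that rate."""
    return tokens / tokens_per_second


rate = implied_tokens_per_second(CONTEXT_TOKENS, APPROX_MINUTES)
print(f"{rate:.2f} tokens per second")  # prints "13.65 tokens per second"
```

An estimate like this is useful for sizing how much conversation history fits in a session before older turns need to be dropped.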

1.2 Model Architecture

2. Usage

Please refer to the following GitHub repository for detailed usage instructions and examples:

👉 Usage Guide:

https://github.com/OpenMOSS/MOSS-TTS/blob/main/moss_tts_realtime_model_card.md