---
base_model: facebook/vit-mae-base
library_name: transformers
pipeline_tag: image-classification
tags:
- probex
- model-j
- weight-space-learning
---

Model-J: MAE Model (model_idx_0199)

This model is part of the Model-J dataset, introduced in:

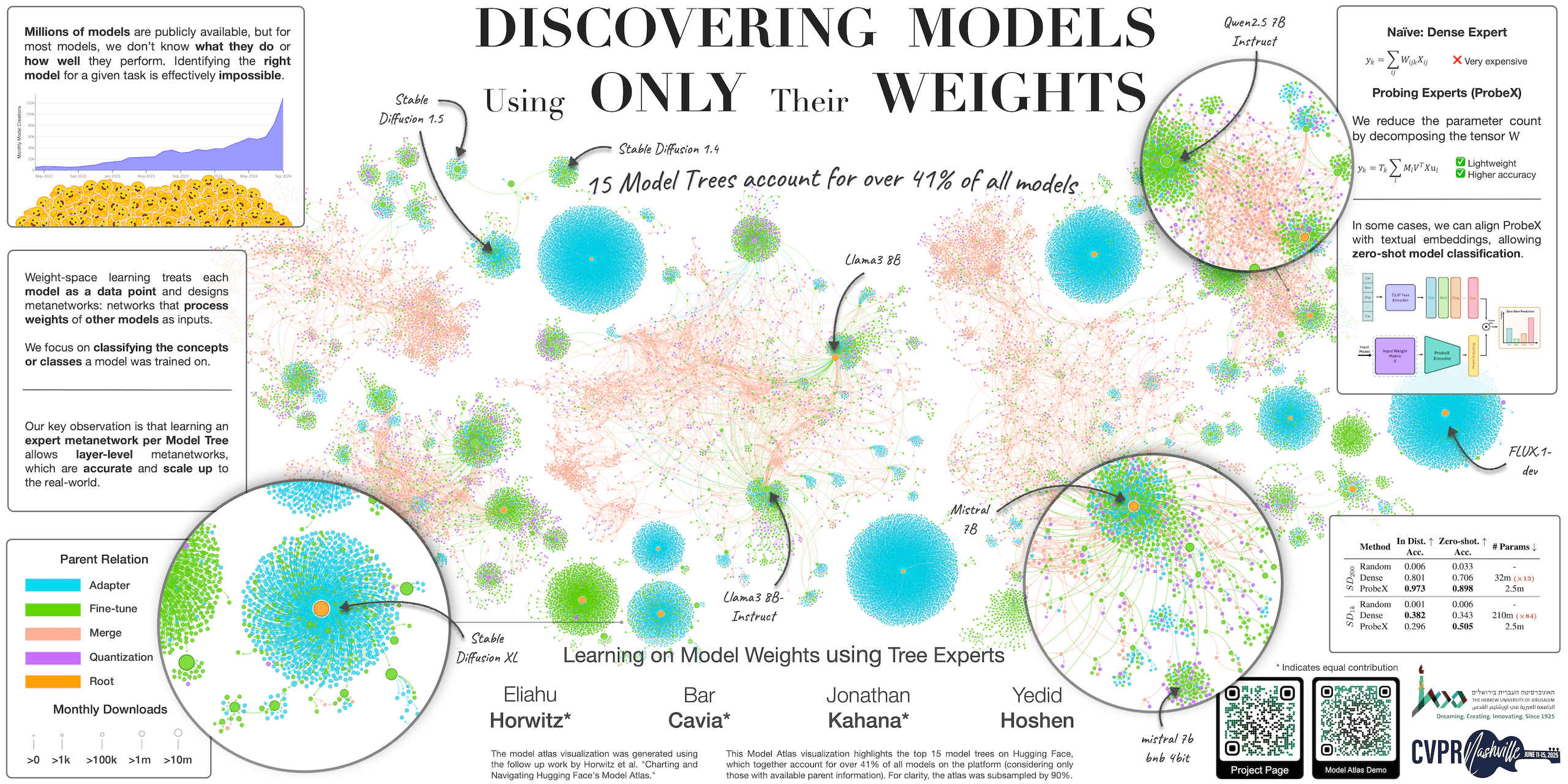

Learning on Model Weights using Tree Experts (CVPR 2025) by Eliahu Horwitz*, Bar Cavia*, Jonathan Kahana*, Yedid Hoshen

🌐 Project | 📃 Paper | 💻 GitHub | 🤗 Dataset

Model Details

| Attribute | Value |
|---|---|
| Subset | MAE |
| Split | val |
| Base Model | facebook/vit-mae-base |
| Dataset | CIFAR100 (50 classes) |

Training Hyperparameters

| Parameter | Value |
|---|---|
| Learning Rate | 0.0005 |
| LR Scheduler | constant |
| Epochs | 8 |
| Max Train Steps | 2664 |
| Batch Size | 64 |
| Weight Decay | 0.007 |
| Seed | 199 |
| Random Crop | False |
| Random Flip | False |
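
The step count, epoch count, and batch size in the table are linked by simple arithmetic. The sketch below works that out and bounds the training-set size the numbers imply. It assumes one optimizer step per batch (no gradient accumulation) and that the last, possibly partial, batch of each epoch is kept. Neither assumption is stated in this card, and the variable names are illustrative only.

```python
# Values copied from the hyperparameter table above.
EPOCHS = 8
MAX_TRAIN_STEPS = 2664
BATCH_SIZE = 64

# Steps per epoch, assuming the step budget is split evenly across epochs.
steps_per_epoch, remainder = divmod(MAX_TRAIN_STEPS, EPOCHS)
print(steps_per_epoch, remainder)  # 333 0

# Assumption: one optimizer step per batch and the final partial batch is kept.
# Then the number of training images N satisfies ceil(N / BATCH_SIZE) == steps_per_epoch.
min_train_images = (steps_per_epoch - 1) * BATCH_SIZE + 1
max_train_images = steps_per_epoch * BATCH_SIZE
print(min_train_images, max_train_images)  # 21249 21312
```

CIFAR-100 ships 500 training images per class, so 50 classes give 25,000 images. The range above is smaller than that, which would be consistent with part of the training data being held out (for example for validation), although the card does not state the split.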

Performance

| Metric | Value |
|---|---|
| Train Accuracy | 0.9073 |
| Val Accuracy | 0.5832 |
| Test Accuracy | 0.6044 |
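
To put these accuracies in context, the short sketch below computes the gap between train and held-out accuracy and compares test accuracy with uniform random guessing over 50 classes. The chance baseline assumes a class-balanced evaluation set, which this card does not state explicitly. The variable names are illustrative.

```python
# Values copied from the performance table above.
train_acc, val_acc, test_acc = 0.9073, 0.5832, 0.6044
NUM_CLASSES = 50

# Assumption: balanced classes, so uniform guessing scores 1 / NUM_CLASSES.
chance_acc = 1 / NUM_CLASSES

# Gap between training accuracy and held-out accuracy.
train_val_gap = round(train_acc - val_acc, 4)
train_test_gap = round(train_acc - test_acc, 4)

# How many times better than chance the model is on the test set.
lift_over_chance = round(test_acc / chance_acc, 2)

print(f"chance accuracy:  {chance_acc:.2f}")
print(f"train - val gap:  {train_val_gap:.4f}")
print(f"train - test gap: {train_test_gap:.4f}")
print(f"test / chance:    {lift_over_chance:.2f}x")
```

The roughly 0.30 to 0.32 gap between train and held-out accuracy suggests substantial overfitting, while test accuracy remains far above the 0.02 chance level.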

Training Categories

The model was fine-tuned on the following 50 CIFAR100 classes:

dolphin, poppy, porcupine, plain, can, pickup_truck, trout, raccoon, wardrobe, house, kangaroo, bear, bowl, mountain, bee, telephone, dinosaur, whale, tank, wolf, rose, turtle, snail, woman, skunk, cloud, train, elephant, chair, sunflower, caterpillar, crocodile, mouse, beetle, otter, motorcycle, bus, leopard, cup, television, chimpanzee, tractor, boy, castle, flatfish, hamster, bed, snake, sea, oak_tree
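
When working with the predictions it can help to have the class list in machine-readable form. The sketch below parses the list above, checks that it contains 50 distinct names, and builds hypothetical `label2id` / `id2label` dictionaries. The index order used here is simply the order of the list and is an assumption: the checkpoint's own configuration, if it defines a label mapping, should take precedence.

```python
# Class names copied verbatim from the list above.
CLASS_LIST = (
    "dolphin, poppy, porcupine, plain, can, pickup_truck, trout, raccoon, "
    "wardrobe, house, kangaroo, bear, bowl, mountain, bee, telephone, dinosaur, "
    "whale, tank, wolf, rose, turtle, snail, woman, skunk, cloud, train, "
    "elephant, chair, sunflower, caterpillar, crocodile, mouse, beetle, otter, "
    "motorcycle, bus, leopard, cup, television, chimpanzee, tractor, boy, "
    "castle, flatfish, hamster, bed, snake, sea, oak_tree"
)

classes = [name.strip() for name in CLASS_LIST.split(",")]

# Sanity check: the card states 50 classes, all distinct.
assert len(classes) == 50
assert len(set(classes)) == 50

# Assumption: indices follow the order of the list above (hypothetical mapping).
label2id = {name: idx for idx, name in enumerate(classes)}
id2label = {idx: name for name, idx in label2id.items()}

print(len(classes), id2label[0], id2label[49])
```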