---
library_name: transformers
tags:
- generated_from_trainer
- deep-search
- web-agent
- rag
model-index:
- name: Online-Searcher-QwQ-32B
  results: []
license: mit
pipeline_tag: text-generation
---

Online-Searcher-QwQ-32B (SimpleDeepSearcher)

Online-Searcher-QwQ-32B is part of SimpleDeepSearcher, a lightweight yet effective framework for enhancing large language models (LLMs) on deep search tasks. The framework was presented in the paper SimpleDeepSearcher: Deep Information Seeking via Web-Powered Reasoning Trajectory Synthesis.

Code: https://github.com/RUCAIBox/SimpleDeepSearcher

Model description

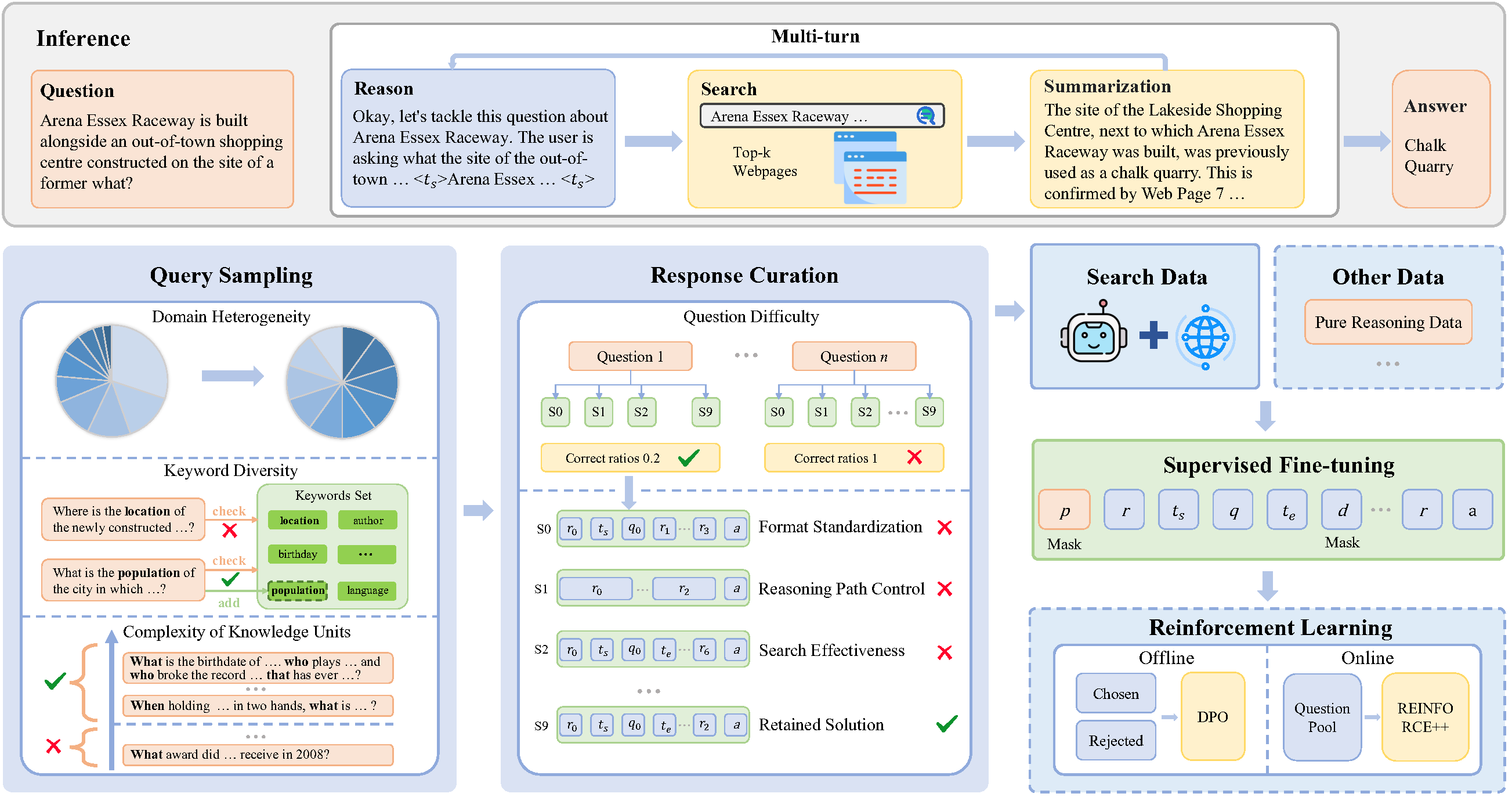

SimpleDeepSearcher addresses three critical limitations of existing retrieval-augmented generation (RAG) systems in complex deep search scenarios: the lack of high-quality training trajectories, distributional mismatches in simulated environments, and prohibitive computational costs.

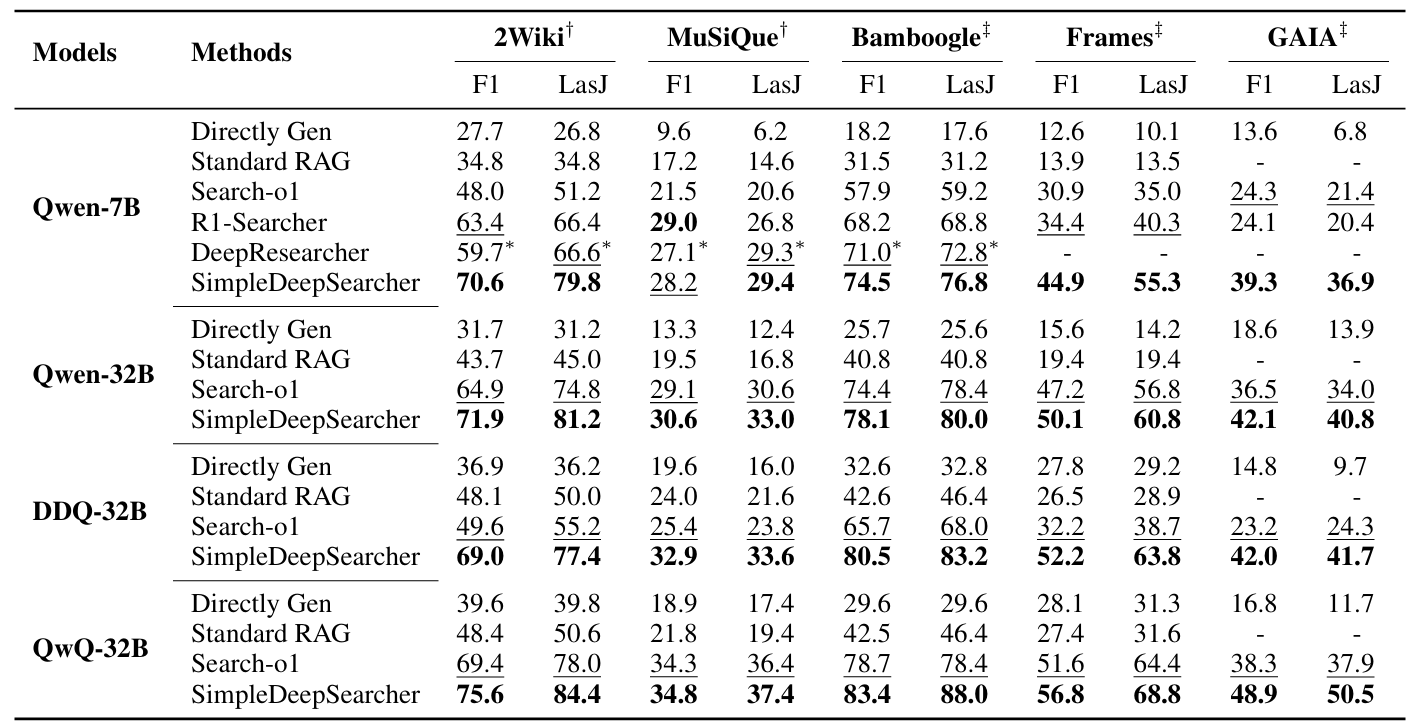

The framework focuses on data engineering. It synthesizes high-quality training data by simulating realistic user interactions in live web search environments, then applies a multi-criteria curation strategy that optimizes the diversity and quality of both the input questions and the output responses. Experiments on five benchmarks show that supervised fine-tuning (SFT) on only 871 curated samples yields significant improvements over RL-based baselines.

Online-Searcher-QwQ-32B is a 32B-parameter model fine-tuned within the SimpleDeepSearcher framework; its config.json points to a Qwen2 backbone. By systematically addressing the data-scarcity bottleneck, our work establishes SFT as a viable pathway to deep search capability and offers practical insights for building efficient deep search systems.

Key Contributions

- A real web-based data synthesis framework that simulates realistic user search behaviors, generating multi-turn reasoning and search trajectories.

- A multi-criteria data curation strategy that jointly optimizes both input question selection and output response filtering through orthogonal filtering dimensions.

- Experimental results showing that SFT on only 871 samples enables SimpleDeepSearcher to outperform strong baselines (especially RL-based baselines) on both in-domain and out-of-domain benchmarks.

Overall Performance

Framework Overview

Intended uses & limitations

This model is primarily intended for research and development in areas related to deep information seeking, web-powered reasoning, retrieval-augmented generation (RAG) systems, and multi-step complex reasoning tasks. It is designed to be a lightweight yet effective solution for scenarios requiring iterative information retrieval from the web.

Limitations: SimpleDeepSearcher achieves strong performance from a small curated dataset, but its effectiveness in highly dynamic or adversarial web environments still needs further evaluation. The model's performance also depends on the quality and diversity of its synthesized training trajectories.

Training and evaluation data

The model was trained on a high-quality dataset of 871 curated samples. This training data was synthesized by simulating realistic user interactions within live web search environments. A multi-criteria curation strategy was applied to optimize both input question selection and output response filtering, ensuring data diversity and quality across various domains.
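The two-sided curation described above can be pictured as a set of independent predicates, some applied to the input question and some to the output response, where a sample is kept only if it passes all of them. The sketch below is illustrative only: the `Sample` fields, the three criteria, and their thresholds are hypothetical placeholders and are not the criteria used to build the 871-sample dataset.

```python
from dataclasses import dataclass
from typing import Callable, Iterable, List


@dataclass
class Sample:
    question: str
    response: str
    num_search_calls: int    # hypothetical field: searches issued in the trajectory
    answer_is_correct: bool  # hypothetical field: final answer matched the reference


Criterion = Callable[[Sample], bool]


# Hypothetical input-side criterion: drop very short questions.
def question_is_nontrivial(sample: Sample) -> bool:
    return len(sample.question.split()) >= 5


# Hypothetical output-side criteria: correct answer, bounded number of searches.
def response_is_correct(sample: Sample) -> bool:
    return sample.answer_is_correct


def search_budget_ok(sample: Sample) -> bool:
    return 1 <= sample.num_search_calls <= 5


def curate(
    samples: Iterable[Sample],
    question_criteria: Iterable[Criterion],
    response_criteria: Iterable[Criterion],
) -> List[Sample]:
    """Keep only samples that satisfy every criterion on both sides."""
    criteria = list(question_criteria) + list(response_criteria)
    return [s for s in samples if all(c(s) for c in criteria)]


pool = [
    Sample("Who directed the film that won Best Picture in 1995?", "...", 2, True),
    Sample("Capital of France?", "...", 1, True),  # fails the question criterion
    Sample("Which river flows through the city where Kafka was born?", "...", 9, True),  # too many searches
    Sample("What year did the author of Dune publish his first novel?", "...", 2, False),  # wrong answer
]
kept = curate(pool, [question_is_nontrivial], [response_is_correct, search_budget_ok])
print(len(kept))  # 1
```

Because each criterion is a separate function, dimensions can be added or removed without touching the others, which is one simple way to keep filtering dimensions independent.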

Sample Usage

For detailed instructions on using SimpleDeepSearcher for inference or training, see the Quick Start section of the official GitHub repository (https://github.com/RUCAIBox/SimpleDeepSearcher). The repository provides scripts for environment setup, data construction, and inference generation.
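As a rough starting point, the checkpoint can presumably be loaded like any other causal language model in Transformers. The sketch below makes several assumptions: that the tokenizer ships a chat template, that `accelerate` is installed (required for `device_map="auto"`), and that enough GPU memory is available for a 32B model. The function names and `model_path` are placeholders, since this card does not state the Hub identifier. Plain generation like this does not perform any web search; the search loop lives in the repository's inference scripts.

```python
from typing import Dict, List


def build_messages(question: str) -> List[Dict[str, str]]:
    """Wrap a question in the chat-message format used by apply_chat_template."""
    return [{"role": "user", "content": question}]


def generate_answer(model_path: str, question: str, max_new_tokens: int = 512) -> str:
    """Load the checkpoint at `model_path` and generate a reply to `question`."""
    # Imported lazily so build_messages works without transformers/torch installed.
    from transformers import AutoModelForCausalLM, AutoTokenizer

    tokenizer = AutoTokenizer.from_pretrained(model_path)
    model = AutoModelForCausalLM.from_pretrained(
        model_path,
        torch_dtype="auto",  # use the dtype stored in the checkpoint
        device_map="auto",   # assumption: accelerate is installed
    )

    inputs = tokenizer.apply_chat_template(
        build_messages(question),
        add_generation_prompt=True,
        return_dict=True,
        return_tensors="pt",
    ).to(model.device)

    output_ids = model.generate(**inputs, max_new_tokens=max_new_tokens)
    # Decode only the newly generated tokens, not the prompt.
    prompt_length = inputs["input_ids"].shape[-1]
    return tokenizer.decode(output_ids[0][prompt_length:], skip_special_tokens=True)
```

Here `model_path` would be a local directory or Hub identifier that holds the weights; it is deliberately left unspecified.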

Training procedure

Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 5e-05

- train_batch_size: 8

- eval_batch_size: 8

- seed: 42

- optimizer: adamw_torch with betas=(0.9, 0.999) and epsilon=1e-08 (no additional optimizer arguments)

- lr_scheduler_type: linear

- num_epochs: 3.0
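To give a sense of scale, the hyperparameters above imply a very short training run. The sketch below works through the arithmetic under assumptions that this card does not confirm: all 871 samples are used for training, the batch size of 8 is the effective batch size (one device, no gradient accumulation), the last partial batch is kept, and the linear schedule has no warmup. If any of these differ, the step count changes accordingly.

```python
import math

NUM_SAMPLES = 871      # curated samples reported in this card
TRAIN_BATCH_SIZE = 8   # assumption: effective batch size
NUM_EPOCHS = 3
BASE_LR = 5e-5


def total_optimizer_steps(num_samples: int, batch_size: int, num_epochs: int) -> int:
    """Optimizer steps when the last partial batch of each epoch is kept."""
    steps_per_epoch = math.ceil(num_samples / batch_size)
    return steps_per_epoch * num_epochs


def linear_lr(step: int, total_steps: int, base_lr: float, warmup_steps: int = 0) -> float:
    """Linear warmup followed by linear decay to zero."""
    if step < warmup_steps:
        return base_lr * step / max(1, warmup_steps)
    remaining = max(0, total_steps - step)
    return base_lr * remaining / max(1, total_steps - warmup_steps)


total = total_optimizer_steps(NUM_SAMPLES, TRAIN_BATCH_SIZE, NUM_EPOCHS)
print(total)                              # 327
print(linear_lr(total, total, BASE_LR))   # 0.0
print(linear_lr(total // 2, total, BASE_LR))
```

Under these assumptions each epoch is 109 steps, so the whole run is 327 optimizer updates, with the learning rate decaying from 5e-05 to zero.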

Framework versions

- Transformers 4.46.3

- PyTorch 2.5.1+cu124

- Datasets 2.19.0

- Tokenizers 0.20.3

Citation

If you find our work useful, please cite our paper:

@article{sun2025simpledeepsearcher,
  title={SimpleDeepSearcher: Deep Information Seeking via Web-Powered Reasoning Trajectory Synthesis},
  author={Sun, Shuang and Song, Huatong and Wang, Yuhao and Ren, Ruiyang and Jiang, Jinhao and Zhang, Junjie and Bai, Fei and Deng, Jia and Zhao, Wayne Xin and Liu, Zheng and others},
  journal={arXiv preprint arXiv:2505.16834},
  year={2025}
}

License

This project is released under the MIT License.