Add comprehensive model card for Legal$\Delta$

#1

by nielsr HF Staff - opened

README.md

ADDED

|

@@ -0,0 +1,116 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

---

|

| 2 |

+

license: apache-2.0

|

| 3 |

+

pipeline_tag: text-generation

|

| 4 |

+

library_name: transformers

|

| 5 |

+

---

|

| 6 |

+

|

| 7 |

+

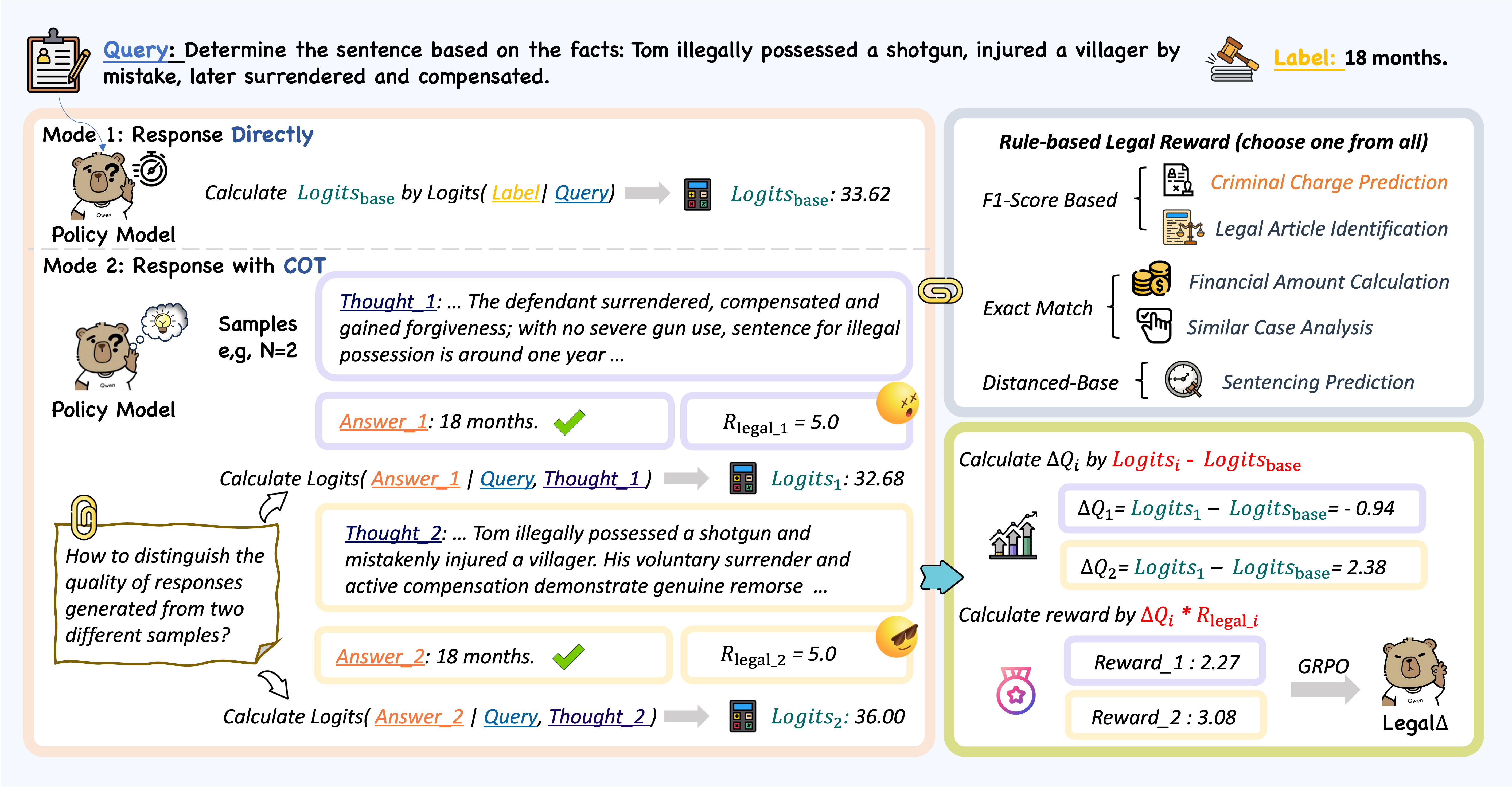

# Legal$\Delta$: Enhancing Legal Reasoning in LLMs via Reinforcement Learning with Chain-of-Thought Guided Information Gain

|

| 8 |

+

|

| 9 |

+

Legal$\Delta$ is a novel reinforcement learning framework designed to enhance legal reasoning in Large Language Models (LLMs) through chain-of-thought guided information gain. It addresses the critical challenge of existing legal LLMs that often struggle to generate reliable and interpretable reasoning processes, frequently defaulting to direct answers without explicit multi-step reasoning. Legal$\Delta$ promotes the acquisition of meaningful reasoning patterns, ensuring more robust and trustworthy legal judgments without relying on labeled preference data.

|

| 10 |

+

|

| 11 |

+

- 📚 Paper: [Legal$\Delta$: Enhancing Legal Reasoning in LLMs via Reinforcement Learning with Chain-of-Thought Guided Information Gain](https://huggingface.co/papers/2508.12281)

|

| 12 |

+

- 💻 Code: [https://github.com/NEUIR/LegalDelta](https://github.com/NEUIR/LegalDelta)

|

| 13 |

+

|

| 14 |

+

## Abstract

|

| 15 |

+

|

| 16 |

+

Legal Artificial Intelligence (LegalAI) has achieved notable advances in automating judicial decision-making with the support of Large Language Models (LLMs). However, existing legal LLMs still struggle to generate reliable and interpretable reasoning processes. They often default to fast-thinking behavior by producing direct answers without explicit multi-step reasoning, limiting their effectiveness in complex legal scenarios that demand rigorous justification. To address this challenge, we propose Legal$\Delta$, a reinforcement learning framework designed to enhance legal reasoning through chain-of-thought guided information gain. During training, Legal$\Delta$ employs a dual-mode input setup-comprising direct answer and reasoning-augmented modes-and maximizes the information gain between them. This encourages the model to acquire meaningful reasoning patterns rather than generating superficial or redundant explanations. Legal$\Delta$ follows a two-stage approach: (1) distilling latent reasoning capabilities from a powerful Large Reasoning Model (LRM), DeepSeek-R1, and (2) refining reasoning quality via differential comparisons, combined with a multidimensional reward mechanism that assesses both structural coherence and legal-domain specificity. Experimental results on multiple legal reasoning tasks demonstrate that Legal$\Delta$ outperforms strong baselines in both accuracy and interpretability. It consistently produces more robust and trustworthy legal judgments without relying on labeled preference data. All code and data will be released at this https URL .

|

| 17 |

+

|

| 18 |

+

## Overview

|

| 19 |

+

|

| 20 |

+

|

| 21 |

+

## Usage

|

| 22 |

+

|

| 23 |

+

The provided weights are LoRA checkpoints. **Before using this model for inference or evaluation, you must merge these LoRA checkpoints with the base model, such as [Qwen2.5-14B-Instruct](https://huggingface.co/Qwen/Qwen2.5-14B-Instruct).**

|

| 24 |

+

|

| 25 |

+

Please follow the detailed setup and merging instructions provided in the [official GitHub repository](https://github.com/NEUIR/LegalDelta).

|

| 26 |

+

|

| 27 |

+

### Installation

|

| 28 |

+

Clone the repository and set up the recommended Conda environments:

|

| 29 |

+

|

| 30 |

+

```bash

|

| 31 |

+

git clone https://github.com/NEUIR/LegalDelta.git

|

| 32 |

+

cd LegalDelta

|

| 33 |

+

|

| 34 |

+

# For model inference

|

| 35 |

+

conda env create -n qwen_inf -f inference_environment.yml

|

| 36 |

+

conda activate qwen_inf

|

| 37 |

+

|

| 38 |

+

# For model training (if you intend to fine-tune further)

|

| 39 |

+

conda create -n legal_delta python=3.11

|

| 40 |

+

conda activate legal_delta

|

| 41 |

+

pip install -r requirements.txt --force-reinstall --no-deps --no-cache-dir

|

| 42 |

+

```

|

| 43 |

+

|

| 44 |

+

### Merging LoRA Checkpoints

|

| 45 |

+

After setting up the environment, download the LoRA checkpoints from the Hugging Face Hub (this repository) and the base model (e.g., Qwen2.5-14B-Instruct). Then, use the provided script in the GitHub repository to merge them:

|

| 46 |

+

|

| 47 |

+

```bash

|

| 48 |

+

# Assuming you are in the LegalDelta directory from the git clone

|

| 49 |

+

python src/merge_lora.py

|

| 50 |

+

```

|

| 51 |

+

Refer to the GitHub repository for detailed arguments and usage of `merge_lora.py`.

|

| 52 |

+

|

| 53 |

+

### Inference with the Merged Model

|

| 54 |

+

Once the LoRA checkpoints are merged into a full model, you can load and use it with the `transformers` library:

|

| 55 |

+

|

| 56 |

+

```python

|

| 57 |

+

import torch

|

| 58 |

+

from transformers import AutoModelForCausalLM, AutoTokenizer

|

| 59 |

+

|

| 60 |

+

# Replace "path/to/your/merged/LegalDelta_model" with the actual path to your merged checkpoint

|

| 61 |

+

model_path = "path/to/your/merged/LegalDelta_model"

|

| 62 |

+

|

| 63 |

+

# Load tokenizer and model

|

| 64 |

+

tokenizer = AutoTokenizer.from_pretrained(model_path, trust_remote_code=True)

|

| 65 |

+

model = AutoModelForCausalLM.from_pretrained(

|

| 66 |

+

model_path,

|

| 67 |

+

torch_dtype=torch.bfloat16, # or torch.float16 depending on your hardware

|

| 68 |

+

device_map="auto",

|

| 69 |

+

trust_remote_code=True

|

| 70 |

+

).eval()

|

| 71 |

+

|

| 72 |

+

# Example for a legal reasoning query (adjust format as per model's chat template/prompting style)

|

| 73 |

+

prompt = "<|im_start|>system\

|

| 74 |

+

You are a helpful legal assistant.\

|

| 75 |

+

<|im_end|>\

|

| 76 |

+

<|im_start|>user\

|

| 77 |

+

What is the legal consequence of breach of contract?\

|

| 78 |

+

<|im_end|>\

|

| 79 |

+

<|im_start|>assistant\

|

| 80 |

+

"

|

| 81 |

+

|

| 82 |

+

input_ids = tokenizer.encode(prompt, return_tensors="pt").to(model.device)

|

| 83 |

+

|

| 84 |

+

# Generate response

|

| 85 |

+

output_ids = model.generate(

|

| 86 |

+

input_ids,

|

| 87 |

+

max_new_tokens=512,

|

| 88 |

+

do_sample=True,

|

| 89 |

+

temperature=0.7,

|

| 90 |

+

top_p=0.8,

|

| 91 |

+

repetition_penalty=1.05,

|

| 92 |

+

eos_token_id=tokenizer.eos_token_id,

|

| 93 |

+

pad_token_id=tokenizer.pad_token_id

|

| 94 |

+

)

|

| 95 |

+

|

| 96 |

+

generated_text = tokenizer.decode(output_ids[0], skip_special_tokens=True)

|

| 97 |

+

print(generated_text)

|

| 98 |

+

```

|

| 99 |

+

|

| 100 |

+

For comprehensive evaluation scripts and specific task formats (e.g., Lawbench, Lexeval, Disclaw), please refer to the [official GitHub repository](https://github.com/NEUIR/LegalDelta).

|

| 101 |

+

|

| 102 |

+

## Citation

|

| 103 |

+

|

| 104 |

+

If you find this work useful, please cite our paper:

|

| 105 |

+

|

| 106 |

+

```bibtex

|

| 107 |

+

@misc{dai2025legaldeltaenhancinglegalreasoning,

|

| 108 |

+

title={Legal$\Delta$: Enhancing Legal Reasoning in LLMs via Reinforcement Learning with Chain-of-Thought Guided Information Gain},

|

| 109 |

+

author={Xin Dai and Buqiang Xu and Zhenghao Liu and Yukun Yan and Huiyuan Xie and Xiaoyuan Yi and Shuo Wang and Ge Yu},

|

| 110 |

+

year={2025},

|

| 111 |

+

eprint={2508.12281},

|

| 112 |

+

archivePrefix={arXiv},

|

| 113 |

+

primaryClass={cs.CL},

|

| 114 |

+

url={https://arxiv.org/abs/2508.12281},

|

| 115 |

+

}

|

| 116 |

+

```

|