PRISM-TP2M-1.4B

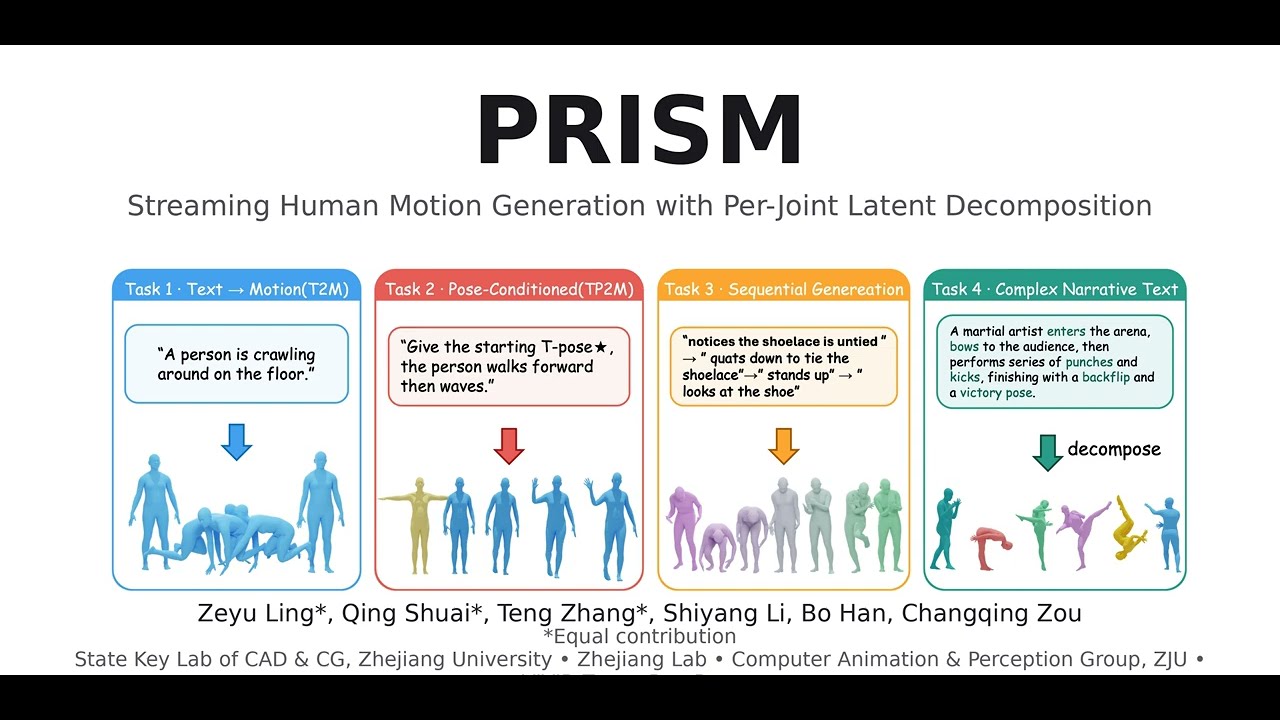

PRISM: Streaming Human Motion Generation with Per-Joint Latent Decomposition

Zeyu Ling, Qing Shuai, Teng Zhang, Shiyang Li, Bo Han, Changqing Zou

Abstract

Text-to-motion generation has advanced rapidly, yet two challenges persist. First, existing motion autoencoders compress each frame into a single monolithic latent vector, entangling trajectory and per-joint rotations in an unstructured representation that downstream generators struggle to model faithfully. Second, text-to-motion, pose-conditioned generation, and long-horizon sequential synthesis typically require separate models or task-specific mechanisms, with autoregressive approaches suffering from severe error accumulation over extended rollouts.

We present PRISM, addressing each challenge with a dedicated contribution. (1) A joint-factorized motion latent space: each body joint occupies its own token, forming a structured 2D grid (time × joints) compressed by a causal VAE with forward-kinematics supervision. This simple change to the latent space, without modifying the generator, substantially improves generation quality, revealing that latent space design has been an underestimated bottleneck. (2) Noise-free condition injection: each latent token carries its own timestep embedding, allowing conditioning frames to be injected as clean tokens (timestep 0) while the remaining tokens are denoised. This unifies text-to-motion and pose-conditioned generation in a single model, and directly enables autoregressive segment chaining for streaming synthesis. Self-forcing training further suppresses drift in long rollouts. With these two components, we train a single motion generation foundation model that seamlessly handles text-to-motion, pose-conditioned generation, autoregressive sequential generation, and narrative motion composition, achieving state-of-the-art on HumanML3D, MotionHub, BABEL, and a 50-scenario user study.

Demo

Model Details

| Architecture | Flow-matching DiT transformer with causal spatio-temporal Motion VAE |

| Text encoder | UMT5 (T5-style) |

| Parameters | ~1.4B |

| Output | SMPL/SMPL-X body parameters (22 joints, rotation_6d, 30 fps) |

Download

This Hugging Face repo contains the pretrained weights. Download to use locally:

pip install huggingface_hub

huggingface-cli download ZeyuLing/PRISM-TP2M-1.4B --local-dir pretrained_models/prism_1.4b

Or in Python:

from huggingface_hub import snapshot_download

snapshot_download("ZeyuLing/PRISM-TP2M-1.4B", local_dir="pretrained_models/prism_1.4b")

For full inference scripts and setup, use the GitHub repository (designed to run inside versatilemotion).

Usage

Load from local checkpoint:

from mmotion.pipelines.prism_from_pretrained import load_prism_pipeline_from_pretrained

pipe = load_prism_pipeline_from_pretrained("path/to/pretrained_models/prism_1.4b")

Text-to-Motion (single segment):

smplx_dict = pipe(

prompts="A person walks forward and waves.",

negative_prompt="",

num_frames_per_segment=129,

num_joints=23,

guidance_scale=5.0,

)

Sequential multi-segment:

smplx_dict = pipe(

prompts=["A person waves.", "A person walks.", "A person bows."],

num_frames_per_segment=[97, 129, 97],

guidance_scale=5.0,

)

Pose-conditioned (TP2M):

smplx_dict = pipe(

prompts="The person stands up and walks.",

first_frame_motion_path="/path/to/first_frame.npz",

num_frames_per_segment=129,

guidance_scale=5.0,

)

Requirements

- Python ≥ 3.9

- PyTorch (CUDA recommended)

- transformers, diffusers, einops, mmengine

- SMPL/SMPL-X body model (for full mesh rendering)

Citation

@article{ling2026prism,

title={PRISM: Streaming Human Motion Generation with Per-Joint Latent Decomposition},

author={Ling, Zeyu and Shuai, Qing and Zhang, Teng and Li, Shiyang and Han, Bo and Zou, Changqing},

journal={arXiv preprint arXiv:2603.08590},

year={2026},

url={https://arxiv.org/abs/2603.08590}

}

License

See the main repository for license terms.