Update README.md


README.md


|

@@ -101,13 +101,17 @@ pipeline_tag: text-classification

|

|

| 101 |

metrics:

|

| 102 |

- accuracy

|

| 103 |

- f1

|


| 104 |

tags:

|

| 105 |

- sentiment-analysis

|

| 106 |

- thai

|

| 107 |

- classification

|

| 108 |

- fine-tuned

|

| 109 |

- multilingual

|

| 110 |

-

new_version: ZombitX64/

|


| 111 |

---

|

| 112 |

|

| 113 |

# MultiSent-E5

|

|

@@ -141,7 +145,7 @@ The model is particularly effective at:

|

|

| 141 |

|

| 142 |

### Model Sources

|

| 143 |

|

| 144 |

-

* **Repository:** [https://huggingface.co/ZombitX64/Thai-sentiment-e5](https://huggingface.co/ZombitX64/

|

| 145 |

* **Base Model:** [https://huggingface.co/intfloat/multilingual-e5-large](https://huggingface.co/intfloat/multilingual-e5-large)

|

| 146 |

|

| 147 |

## Uses

|

|

@@ -359,29 +363,6 @@ The model showed excellent convergence with minimal overfitting:

|

|

| 359 |

- Accuracy plateaued at 99.63% from epoch 3 onwards

|

| 360 |

- Early convergence suggests effective transfer learning from the base model
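
The plateau described in the two bullets above can be checked mechanically from a per-epoch accuracy log. The following is a minimal sketch, not the authors' training code: `plateau_start` is a hypothetical helper, and the `history` list is illustrative. Only the 99.63% value from epoch 3 onwards comes from the text; the first two values and the total number of epochs are assumptions.

```python
def plateau_start(accuracies, tol=1e-9):
    """Return the 1-based epoch from which accuracy stays at its final value.

    A plateau here means every epoch from the returned one to the last is
    within `tol` of the final accuracy. Returns None for an empty history.
    """
    if not accuracies:
        return None
    final = accuracies[-1]
    start = len(accuracies)
    # Walk backwards while values stay within tolerance of the final one.
    for epoch in range(len(accuracies), 0, -1):
        if abs(accuracies[epoch - 1] - final) <= tol:
            start = epoch
        else:
            break
    return start


# Hypothetical validation accuracy (%) per epoch. Only the 99.63 plateau
# from epoch 3 is stated in the text; the other values are made up.
history = [97.10, 99.20, 99.63, 99.63, 99.63]

if __name__ == "__main__":
    print("Plateau starts at epoch:", plateau_start(history))
```

A larger `tol` (for example `0.05`) would treat small fluctuations as part of the plateau, which may be closer to how a real training log behaves.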

|

| 361 |

|

| 362 |

-

## Evaluation

|

| 363 |

-

|

| 364 |

-

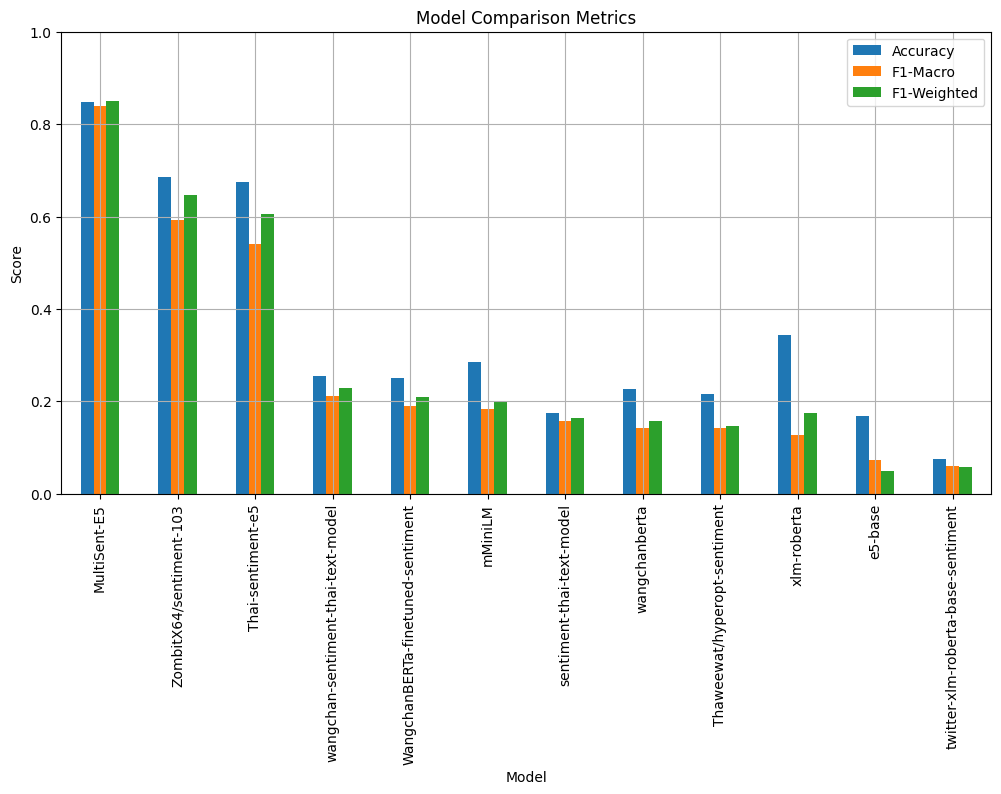

### Model Comparison Metrics

|

| 365 |

-

|

| 366 |

-

|

| 367 |

-

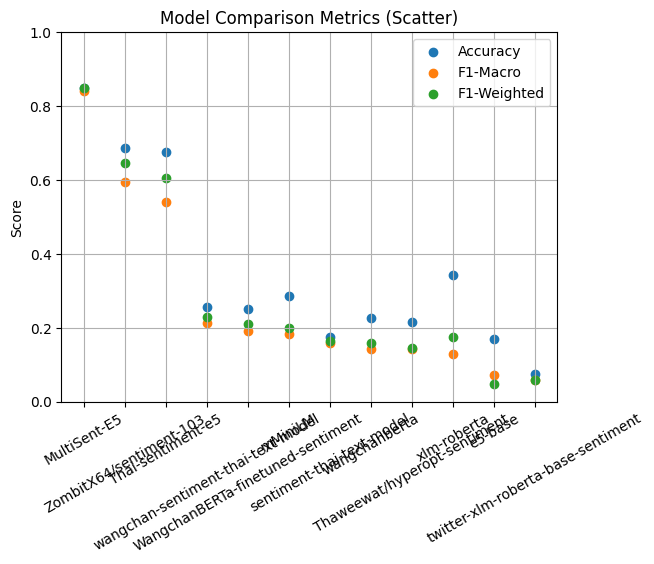

### Model Comparison Metrics (Scatter)

|

| 368 |

-

|

| 369 |

-

|

| 370 |

-

| 🥇 Rank | Model | Accuracy (%) | Notes |

|

| 371 |

-

| -------- | ---------------------------------- | ------------ | ------------------------------ |

|

| 372 |

-

| 1 | **MultiSent-E5** | **84.88** | ★ Most accurate model |

|

| 373 |

-

| 2 | ZombitX64/sentiment-103 | 68.60 | Runner-up |

|

| 374 |

-

| 3 | Thai-sentiment-e5 | 67.44 | Strong Thai language understanding |

|

| 375 |

-

| 4 | xlm-roberta | 34.30 | multilingual baseline |

|

| 376 |

-

| 5 | mMiniLM | 28.49 | Small, low resource usage |

|

| 377 |

-

| 6 | wangchan-sentiment-thai-text-model | 25.58 | Thai-specific |

|

| 378 |

-

| 7 | WangchanBERTa-finetuned-sentiment | 25.00 | fine-tuned Thai BERT |

|

| 379 |

-

| 8 | wangchanberta | 22.67 | Thai BERT base |

|

| 380 |

-

| 9 | Thaweewat/hyperopt-sentiment | 21.51 | Tuned with hyperopt |

|

| 381 |

-

| 10 | sentiment-thai-text-model | 17.44 | baseline keyword model |

|

| 382 |

-

| 11 | e5-base | 16.86 | multilingual encoder |

|

| 383 |

-

| 12 | twitter-xlm-roberta-base-sentiment | 7.56 | Fine-tuned on Twitter (English) |
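
The ranking in the table above can be reproduced from the accuracy column alone. The sketch below copies the twelve accuracy values from the table; the helper names `rank_models` and `margin_over_runner_up` are hypothetical and are not part of the model card or any library. It also shows how far the top model is ahead of the runner-up in percentage points.

```python
# Accuracy values (%) copied from the comparison table.
ACCURACY = {
    "MultiSent-E5": 84.88,
    "ZombitX64/sentiment-103": 68.60,
    "Thai-sentiment-e5": 67.44,
    "xlm-roberta": 34.30,
    "mMiniLM": 28.49,
    "wangchan-sentiment-thai-text-model": 25.58,
    "WangchanBERTa-finetuned-sentiment": 25.00,
    "wangchanberta": 22.67,
    "Thaweewat/hyperopt-sentiment": 21.51,
    "sentiment-thai-text-model": 17.44,
    "e5-base": 16.86,
    "twitter-xlm-roberta-base-sentiment": 7.56,
}


def rank_models(scores):
    """Return (rank, name, accuracy) tuples sorted by accuracy, highest first."""
    ordered = sorted(scores.items(), key=lambda item: item[1], reverse=True)
    return [(i, name, acc) for i, (name, acc) in enumerate(ordered, start=1)]


def margin_over_runner_up(scores):
    """Gap in percentage points between the best and second-best model."""
    ranked = rank_models(scores)
    return round(ranked[0][2] - ranked[1][2], 2)


if __name__ == "__main__":
    for rank, name, acc in rank_models(ACCURACY):
        print(f"{rank:>2}  {name:<36} {acc:6.2f}")
    print("Margin over runner-up:", margin_over_runner_up(ACCURACY))
```

Accuracy alone does not say which classes a model gets wrong, so a per-class breakdown would be a natural complement where the evaluation data is available.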

|

| 384 |

-

|

| 385 |

============================================================

|

| 386 |

Evaluating Model: MultiSent-E5

|

| 387 |

============================================================

|

|

@@ -936,16 +917,6 @@ ZombitX64, K. Janutsaha, and C. Saengwichain, "MultiSent-E5: A Fine-tuned Multil

|

|

| 936 |

|

| 937 |

If you use this model in your research or applications, please cite both this model and the base model:

|

| 938 |

|

| 939 |

-

```bibtex

|

| 940 |

-

@misc{wang2022text,

|

| 941 |

-

title={Text Embeddings by Weakly-Supervised Contrastive Pre-training},

|

| 942 |

-

author={Liang Wang and Nan Yang and Xiaolong Huang and Binxing Jiao and Linjun Yang and Daxin Jiang and Rangan Majumder and Furu Wei},

|

| 943 |

-

year={2022},

|

| 944 |

-

eprint={2212.03533},

|

| 945 |

-

archivePrefix={arXiv},

|

| 946 |

-

primaryClass={cs.CL}

|

| 947 |

-

}

|

| 948 |

-

```

|

| 949 |

```bibtex

|

| 950 |

@article{wang2024multilingual,

|

| 951 |

title={Multilingual E5 Text Embeddings: A Technical Report},

|

|

@@ -990,5 +961,5 @@ Your feedback helps improve this model. Please report:

|

|

| 990 |

---

|

| 991 |

|

| 992 |

*Last updated: 2024*

|

| 993 |

-

*Model version: 1.

|

| 994 |

*Documentation version: 2.0*

|

|

|

|

| 101 |

metrics:

|

| 102 |

- accuracy

|

| 103 |

- f1

|

| 104 |

+

- bertscore

|

| 105 |

tags:

|

| 106 |

- sentiment-analysis

|

| 107 |

- thai

|

| 108 |

- classification

|

| 109 |

- fine-tuned

|

| 110 |

- multilingual

|

| 111 |

+

new_version: ZombitX64/MultiSent-E5-Pro

|

| 112 |

+

datasets:

|

| 113 |

+

- ZombitX64/SEACrowdWongnaiReviews

|

| 114 |

+

- ZombitX64/Sentiment-Benchmark

|

| 115 |

---

|

| 116 |

|

| 117 |

# MultiSent-E5

|

|

|

|

| 145 |

|

| 146 |

### Model Sources

|

| 147 |

|

| 148 |

+

* **Repository:** [https://huggingface.co/ZombitX64/Thai-sentiment-e5](https://huggingface.co/ZombitX64/ZombitX64/MultiSent-E5-Pro)

|

| 149 |

* **Base Model:** [https://huggingface.co/intfloat/multilingual-e5-large](https://huggingface.co/intfloat/multilingual-e5-large)

|

| 150 |

|

| 151 |

## Uses

|

|

|

|

| 363 |

- Accuracy plateaued at 99.63% from epoch 3 onwards

|

| 364 |

- Early convergence suggests effective transfer learning from the base model

|

| 365 |

|


| 366 |

============================================================

|

| 367 |

Evaluating Model: MultiSent-E5

|

| 368 |

============================================================

|

|

|

|

| 917 |

|

| 918 |

If you use this model in your research or applications, please cite both this model and the base model:

|

| 919 |

|


| 920 |

```bibtex

|

| 921 |

@article{wang2024multilingual,

|

| 922 |

title={Multilingual E5 Text Embeddings: A Technical Report},

|

|

|

|

| 961 |

---

|

| 962 |

|

| 963 |

*Last updated: 2024*

|

| 964 |

+

*Model version: 1.1*

|

| 965 |

*Documentation version: 2.0*

|