This is a sentence-transformers model fine-tuned from BAAI/bge-m3. It maps sentences and paragraphs to a 1024-dimensional dense vector space and can be used for semantic textual similarity, semantic search, paraphrase mining, text classification, clustering, and more.

from sentence_transformers import SentenceTransformer

# Download from the 🤗 Hub

model = SentenceTransformer("aaa961/finetuned-bge-m3-base-en")

# Run inference

sentences = [

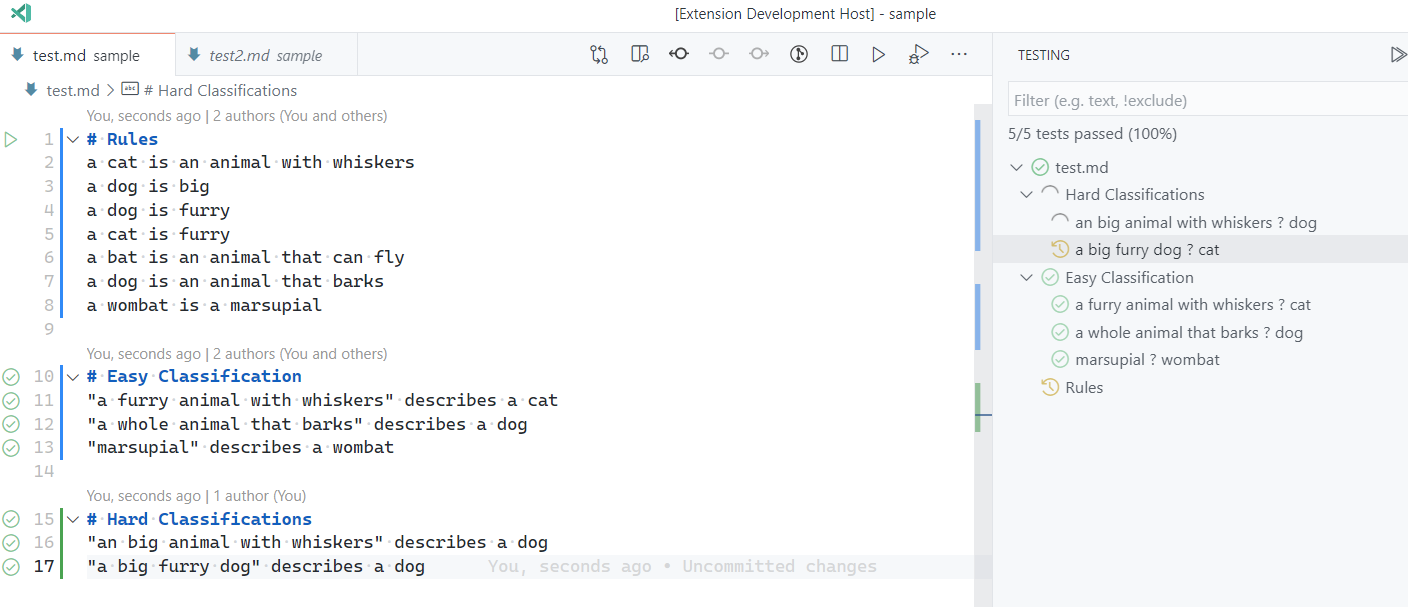

'Shell integration: bash and zsh don\'t serialize \\n and ; characters Part of https://github.com/microsoft/vscode/issues/155639\r\n\r\nRepro:\r\n\r\n1. Open a bash or zsh session\r\n2. Run:\r\n ```sh\r\n echo "a\r\n … b"\r\n ```\r\n \r\n3. ctrl+alt+r to run recent command, select the last command, 🐛 it\'s run without the new line\r\n \r\n',

'TreeView state out of sync Testing #117304\r\n\r\nRepro: Not Sure\r\n\r\nTest state shows passed in file but still running in tree view.\r\n\r\n\r\n',

'Setting icon and color in createTerminal API no longer works correctly See https://github.com/fabiospampinato/vscode-terminals/issues/77\r\n\r\nLooks like the default tab color/icon change probably regressed this.\r\n\r\n',

]

embeddings = model.encode(sentences)

print(embeddings.shape)

# [3, 1024]# Get the similarity scores for the embeddings

similarities = model.similarity(embeddings, embeddings)

print(similarities)

# tensor([[1.0000, 0.4264, 0.4315],# [0.4264, 1.0000, 0.4278],# [0.4315, 0.4278, 1.0000]])
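As a rough illustration of what the similarity matrix above contains, the sketch below computes pairwise cosine similarity with NumPy. It assumes the model's similarity function is cosine similarity (the sentence-transformers default; this model's saved configuration may differ). It uses random stand-in vectors instead of real embeddings, so its values are not comparable to the scores printed above. The names `cosine_similarity_matrix` and `toy_embeddings` are hypothetical.

```python
import numpy as np


def cosine_similarity_matrix(a: np.ndarray, b: np.ndarray) -> np.ndarray:
    """Return the pairwise cosine similarity between rows of a and rows of b."""
    a_unit = a / np.linalg.norm(a, axis=1, keepdims=True)
    b_unit = b / np.linalg.norm(b, axis=1, keepdims=True)
    return a_unit @ b_unit.T


# Hypothetical stand-in for the output of model.encode(sentences):
# 3 vectors with 1024 dimensions each.
rng = np.random.default_rng(0)
toy_embeddings = rng.normal(size=(3, 1024))

sims = cosine_similarity_matrix(toy_embeddings, toy_embeddings)
print(sims.shape)  # (3, 3)

# Rank the other rows by similarity to row 0, most similar first.
query_index = 0
ranking = [int(i) for i in np.argsort(-sims[query_index]) if i != query_index]
print(ranking)
```

The diagonal is 1 because every vector is identical to itself, and the matrix is symmetric, which matches the shape of the tensor shown in the usage snippet.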

Git Branch Picker Race Condition If I paste the branch too quickly and then press enter, it does not switch to it, but creates a new branch.

This breaks muscle memory, as it works when you do it slowly.

Once loading completes, it should select the branch again.

218

links aren't discoverable to screen reader users in markdown documents They're only discoverable via visual distinction and the action that can be taken (IE opening them) is only indicated in the tooltip AFAICT.

Approximate statistics based on the first 70 samples:

| | texts | label |
|---|---|---|
| type | string | int |
| details | min: 58 tokens, mean: 303.57 tokens, max: 864 tokens | 1: ~2.86%, 2: ~2.86%, 6: ~2.86%, 11: ~5.71%, 14: ~2.86%, 23: ~2.86%, 32: ~5.71%, 35: ~2.86%, 39: ~2.86%, 40: ~2.86%, 46: ~2.86%, 54: ~2.86%, 83: ~2.86%, 102: ~2.86%, 104: ~4.29%, 111: ~2.86%, 122: ~2.86%, 123: ~2.86%, 125: ~2.86%, 145: ~2.86%, 146: ~2.86%, 162: ~2.86%, 166: ~2.86%, 169: ~2.86%, 184: ~2.86%, 188: ~2.86%, 190: ~2.86%, 200: ~2.86%, 201: ~4.29%, 203: ~2.86%, 206: ~2.86%, 217: ~2.86% |
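Because the statistics are computed over 70 samples, each label percentage corresponds to a small whole number of samples. The sketch below converts the three distinct percentages in the label column back to counts and checks that they add up to the sample size. The variable names are illustrative, and the per-share label tallies (28, 2, and 2) are counted from the listing above.

```python
SAMPLE_SIZE = 70  # "the first 70 samples" in the statistics above

# The three distinct label shares that appear in the label column.
reported_shares = [2.86, 4.29, 5.71]

# Convert each rounded percentage back to a whole number of samples.
implied_counts = [round(share / 100 * SAMPLE_SIZE) for share in reported_shares]
print(implied_counts)  # [2, 3, 4]

# Sanity check: 28 labels are listed at ~2.86%, 2 at ~4.29%, and 2 at ~5.71%.
labels_per_share = [28, 2, 2]
total = sum(n * c for n, c in zip(labels_per_share, implied_counts))
print(total)  # 70
```

In other words, most labels in this slice are represented by only two samples, which is worth keeping in mind when reading the distribution.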

Samples:

| texts | label |
|---|---|
| Ctrl+I stopped working after first hold+talk+release Testing #213355<br><br>Screencast shows that it seems to be in the wrong context and is trying to stop the session?<br><br>Repro was just asking "Testing testing" and then trying to ask something else | 217 |
| Ctrl + I does not work when chat input field has focus Testing #213355<br><br>Ctrl + I works in the editor and when I hold it, I get into speech mode. But when the chat input field (panel or inline chat) already has focus, Ctrl + I does not work.<br><br>(Connected to Windows through Remote Desktop in case that matters.) | 217 |
| Terminal renaming not functioning as expected in editor area<br><br>Does this issue occur when all extensions are disabled?: Yes | |

@inproceedings{reimers-2019-sentence-bert,
    title = "Sentence-BERT: Sentence Embeddings using Siamese BERT-Networks",
    author = "Reimers, Nils and Gurevych, Iryna",
    booktitle = "Proceedings of the 2019 Conference on Empirical Methods in Natural Language Processing",
    month = "11",
    year = "2019",
    publisher = "Association for Computational Linguistics",
    url = "https://arxiv.org/abs/1908.10084",
}

BatchSemiHardTripletLoss

@misc{hermans2017defense,
    title={In Defense of the Triplet Loss for Person Re-Identification},
    author={Alexander Hermans and Lucas Beyer and Bastian Leibe},
    year={2017},
    eprint={1703.07737},
    archivePrefix={arXiv},
    primaryClass={cs.CV}
}
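To give a sense of what a batch semi-hard triplet loss does with the (texts, label) pairs in this dataset, here is a simplified, loop-based NumPy sketch. It is not the sentence-transformers implementation of BatchSemiHardTripletLoss. The function name, the Euclidean distance, the fallback to the farthest negative, and the margin value are all assumptions made for illustration.

```python
import numpy as np


def batch_semi_hard_triplet_loss(embeddings, labels, margin=2.0):
    """Average triplet loss over all anchor-positive pairs in a batch.

    Illustrative sketch only. For each pair, the negative is the closest
    sample with a different label that is still farther from the anchor than
    the positive; if none exists, the farthest negative is used instead.
    """
    embeddings = np.asarray(embeddings, dtype=float)
    labels = np.asarray(labels)

    # Pairwise Euclidean distances between all embeddings in the batch.
    diffs = embeddings[:, None, :] - embeddings[None, :, :]
    dist = np.sqrt((diffs ** 2).sum(axis=-1))

    losses = []
    for a in range(len(labels)):
        neg_dists = dist[a][labels != labels[a]]
        if neg_dists.size == 0:
            continue  # no negatives for this anchor
        for p in range(len(labels)):
            if p == a or labels[p] != labels[a]:
                continue  # not a positive for this anchor
            d_ap = dist[a, p]
            farther = neg_dists[neg_dists > d_ap]
            d_an = farther.min() if farther.size else neg_dists.max()
            losses.append(max(d_ap - d_an + margin, 0.0))
    return float(np.mean(losses)) if losses else 0.0


# Toy batch: two samples that share label 217 and one with label 218.
toy_embeddings = [[0.0, 0.0], [1.0, 0.0], [3.0, 0.0]]
toy_labels = [217, 217, 218]
loss = batch_semi_hard_triplet_loss(toy_embeddings, toy_labels, margin=2.0)
print(loss)  # 0.5
```

In the toy batch, the anchor at the origin already has its negative more than a margin farther away than its positive, so it contributes zero; the second anchor does not, so it contributes 1.0, giving a mean of 0.5.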