CoPaw-Flash-9B

CoPaw-Flash is a lightweight model deeply optimized for the CoPaw autonomous agent scenario. The model is tuned specifically for CoPaw tasks during training, which improves its agentic performance in tool invocation, command execution, memory management, and multi-step planning.

Capability

The core strength of CoPaw-Flash stems from its native integration with the CoPaw ecosystem. We have constructed extensive, high-quality agent trajectory data sampled from real CoPaw environments, systematically enhancing the model's proficiency in high-frequency daily scenarios. Key features include:

- Active Memory Management: Autonomously identifies, stores, and retrieves persistent user preferences and task states, ensuring high logical consistency across multi-turn interactions.

- Native File Parsing: Optimized for terminal operations and file system orchestration. Excels at generating precise CLI commands and executing complex, multi-step file I/O tasks.

- Efficient Information Search: Enhanced for web-search tool invocation. Features precise search intent recognition and multi-step web navigation to effectively identify and query online information.

- Intelligent Guidance: Built-in awareness of the CoPaw feature map. Proactively suggests functional paths and troubleshooting based on real-time operational context.

Model Overview

CoPaw-Flash-2B/4B/9B are fine-tuned from Qwen3.5-2B/4B/9B and share the same architectural parameters. Slash-separated values below refer to the 2B/4B/9B variants, in that order.

- Type: Causal Language Model with Vision Encoder

- Training Stage: Post-training

- Number of Parameters: 2B/4B/9B

- Hidden Dimension: 2048/2560/4096

- Token Embedding: 248320 (Padded)

- Number of Layers: 24/32/32

- Hidden Layout: 6/8/8 × (3 × (Gated DeltaNet → FFN) → 1 × (Gated Attention → FFN))

- Gated DeltaNet:

  - Number of Linear Attention Heads: 16/32/32 for V and 16/16/16 for QK

  - Head Dimension: 128

- Gated Attention:

  - Number of Attention Heads: 8/16/16 for Q and 2/4/4 for KV

  - Head Dimension: 256

  - Rotary Position Embedding Dimension: 64

- Feed Forward Network: Intermediate Dimension: 6144/9216/12288

- LM Output: 248320 (Tied to token embedding)

- Context Length: 262,144 tokens natively
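The layer counts and the hidden layout above can be cross-checked with a little arithmetic. The sketch below expands the `groups × (3 × Gated DeltaNet + 1 × Gated Attention)` pattern and gives a rough KV-cache estimate at the full context length. It rests on assumptions that are not stated in this card: that only the Gated Attention layers keep a per-token KV cache (the Gated DeltaNet layers are treated as holding a fixed-size state), and that cache entries take 2 bytes each (for example bf16). The names `Variant`, `layer_pattern`, and `kv_cache_bytes` are illustrative only.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Variant:
    name: str
    groups: int          # repeats of the 4-layer pattern (6/8/8 above)
    kv_heads: int        # Gated Attention KV heads (2/4/4 above)
    head_dim: int = 256  # Gated Attention head dimension


VARIANTS = [
    Variant("2B", groups=6, kv_heads=2),
    Variant("4B", groups=8, kv_heads=4),
    Variant("9B", groups=8, kv_heads=4),
]


def layer_pattern(v):
    """Expand groups x (3 x Gated DeltaNet + 1 x Gated Attention) into a flat list."""
    return (["gated_deltanet"] * 3 + ["gated_attention"]) * v.groups


def kv_cache_bytes(v, tokens, bytes_per_value=2):
    """Rough KV-cache size: K and V for each Gated Attention layer only.

    Assumption: 2-byte cache entries and no cache quantization.
    """
    attention_layers = layer_pattern(v).count("gated_attention")
    return 2 * v.kv_heads * v.head_dim * attention_layers * tokens * bytes_per_value


for v in VARIANTS:
    pattern = layer_pattern(v)
    gib = kv_cache_bytes(v, tokens=262_144) / 2**30
    print(f"{v.name}: {len(pattern)} layers, "
          f"{pattern.count('gated_attention')} attention layers, "
          f"~{gib:.0f} GiB KV cache at 262,144 tokens")
```

Under these assumptions the estimate covers a single sequence at the full context length and excludes model weights, activations, and the vision encoder.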

Benchmark Results

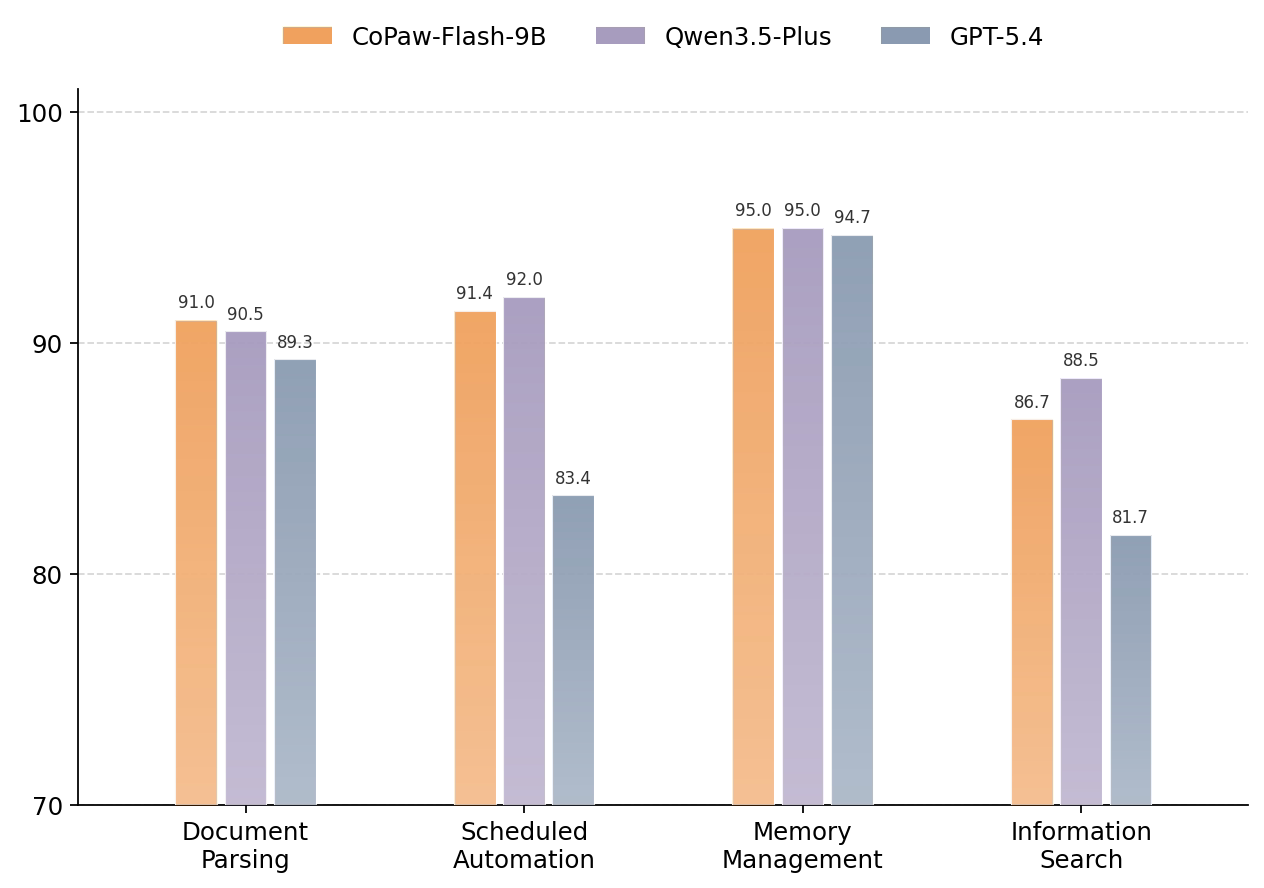

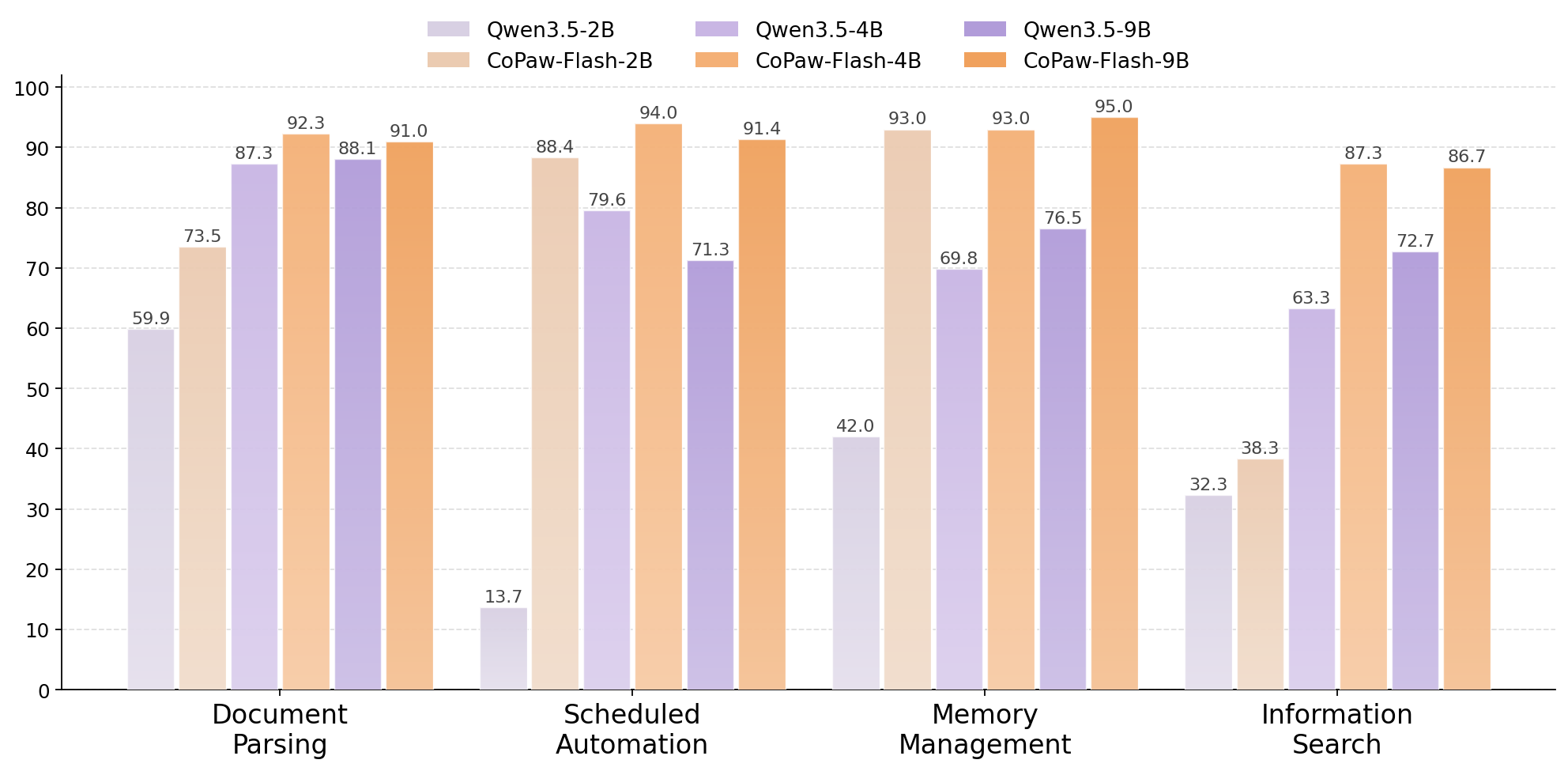

The complexity of CoPaw's context engineering and tool usage poses heightened challenges for model evaluation. To address this, we have developed a dedicated benchmark tailored to the CoPaw environment. This benchmark systematically evaluates model performance across five high-frequency usage scenarios, covering key operational dimensions.

Results indicate that CoPaw-Flash delivers substantial improvements across multiple task categories, achieving performance comparable to leading flagship models—all while maintaining significantly lower resource requirements.

Figure 1: CoPaw-Flash-9B compared with other models.

Figure 2: CoPaw-Flash-2B/4B/9B compared with their respective baseline models.

Quickstart

Serving CoPaw-Flash

CoPaw-Flash can be served via APIs using popular inference frameworks. Below are example commands to launch OpenAI-compatible API servers for CoPaw-Flash.

vLLM

vLLM is a high-throughput and memory-efficient inference and serving engine for LLMs. Since CoPaw-Flash leverages the Qwen3.5 architecture, the latest vLLM version is required for optimal compatibility. You can install it in a fresh environment using:

uv pip install vllm --torch-backend=auto --extra-index-url https://wheels.vllm.ai/nightly

Note: For more detailed usage guides and advanced configurations, please refer to the vLLM Documentation and the Qwen3.5 Documentation.

The following command creates API endpoints at http://localhost:8000/v1. This example demonstrates launching the standard version with a maximum context length of 262,144 tokens using tensor parallelism across 8 GPUs.

vllm serve <your_model_path> \
  --port 8000 \
  --tensor-parallel-size 8 \
  --max-model-len 262144 \
  --reasoning-parser qwen3 \
  --enable-auto-tool-choice \
  --tool-call-parser qwen3_xml
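Before sending chat requests, it can help to confirm that the server has finished loading. The following is a minimal sketch that queries the OpenAI-compatible `/v1/models` route using only the Python standard library. It assumes the default address from the command above. The helper names `models_url` and `list_served_models` are illustrative and not part of vLLM or CoPaw.

```python
import json
import urllib.request


def models_url(base_url):
    """Build the model-listing URL from an OpenAI-compatible base URL."""
    return base_url.rstrip("/") + "/models"


def list_served_models(base_url="http://localhost:8000/v1", timeout=5.0):
    """Return the IDs of the models the server reports as available."""
    with urllib.request.urlopen(models_url(base_url), timeout=timeout) as resp:
        payload = json.load(resp)
    return [entry["id"] for entry in payload.get("data", [])]


if __name__ == "__main__":
    try:
        print("Served models:", list_served_models())
    except OSError as exc:  # covers connection refused, timeouts, HTTP errors
        print("Server not reachable yet:", exc)
```

The reported model ID is the value to pass as `model` in later requests.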

Using CoPaw-Flash via Chat Completions API

Once the server is running, you can access CoPaw-Flash via standard HTTP requests or OpenAI-compatible SDKs.

Prerequisites

Ensure the OpenAI Python SDK is installed and your environment variables are configured:

pip install -U openai

# Set the following accordingly
export OPENAI_BASE_URL="http://localhost:8000/v1"
export OPENAI_API_KEY="EMPTY"

Text-Only Input Example

The following Python script demonstrates how to interact with the model using the OpenAI SDK:

from openai import OpenAI

# Configured by environment variables (OPENAI_BASE_URL, OPENAI_API_KEY)
client = OpenAI()

messages = [
    {"role": "user", "content": "Hello, CoPaw!"},
]

chat_response = client.chat.completions.create(
    model="<your_model_path>",  # the same path passed to `vllm serve`
    messages=messages,
    max_tokens=81920,
    temperature=1.0,
    top_p=0.95,
    presence_penalty=1.5,
    # top_k is not a standard OpenAI parameter, so it is passed via extra_body
    extra_body={
        "top_k": 20,
    },
)

print("Chat response:", chat_response)
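Because the server above is launched with `--enable-auto-tool-choice`, the same endpoint can also return tool calls. The sketch below shows one possible round trip through the Chat Completions `tools` parameter. The `list_directory` tool, its schema, and the helper names are hypothetical and exist only for illustration; they are not CoPaw's actual tool set. The network call sits inside a function and reuses the environment variables from the prerequisites.

```python
import json
import os


def list_directory(path):
    """Hypothetical tool for illustration: list the entries of a directory."""
    return sorted(os.listdir(path))


# JSON-schema description of the hypothetical tool, in the Chat Completions format.
TOOLS = [{
    "type": "function",
    "function": {
        "name": "list_directory",
        "description": "List the entries of a directory.",
        "parameters": {
            "type": "object",
            "properties": {"path": {"type": "string"}},
            "required": ["path"],
        },
    },
}]

REGISTRY = {"list_directory": list_directory}


def run_tool_call(name, arguments_json):
    """Dispatch one tool call; the arguments arrive as a JSON string."""
    args = json.loads(arguments_json)
    return json.dumps(REGISTRY[name](**args))


def ask_with_tools(model, prompt):
    """Send a prompt, execute any requested tools, and return the final text."""
    from openai import OpenAI  # lazy import; reads OPENAI_BASE_URL / OPENAI_API_KEY

    client = OpenAI()
    messages = [{"role": "user", "content": prompt}]
    response = client.chat.completions.create(model=model, messages=messages, tools=TOOLS)
    message = response.choices[0].message
    if not message.tool_calls:
        return message.content

    # Echo the assistant turn back, then append one tool result per call.
    messages.append({
        "role": "assistant",
        "content": message.content or "",
        "tool_calls": [
            {"id": c.id, "type": "function",
             "function": {"name": c.function.name, "arguments": c.function.arguments}}
            for c in message.tool_calls
        ],
    })
    for call in message.tool_calls:
        messages.append({
            "role": "tool",
            "tool_call_id": call.id,
            "content": run_tool_call(call.function.name, call.function.arguments),
        })
    final = client.chat.completions.create(model=model, messages=messages, tools=TOOLS)
    return final.choices[0].message.content
```

A call such as `ask_with_tools("<your_model_path>", "What files are in /tmp?")` would exercise the full loop against a running server. A real agent would typically repeat the loop until the model stops requesting tools.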

Contact Us

CoPaw-Flash is developed by the AgentScope Team. If you would like to leave us a message, feel free to get in touch through the channels below.

