This folder contains LoRA models trained for two characters, Oyama Mahiro and Oyama Mihari.

Trigger words:

- `oyama mahiro`
- `oyama mihari`

To get an anime style, you can add `aniscreen` to the prompt.

In hindsight, I think including `oyama` in both trigger words is probably a bad idea, because the shared word seems to cause more blending between the two characters.

### Dataset

Total size: 338 images

Screenshots: 127

- Mahiro: 51
- Mihari: 46
- Mahiro + Mihari: 30

Fanart: 92

- Mahiro: 68
- Mihari: 8
- Mahiro + Mihari: 16

Regularization: 119

The following repeat counts are used for training:

- 1 for Mahiro and for the regularization images
- 2 for Mihari
- 4 for Mahiro + Mihari
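As a rough sanity check on these repeat counts, the sketch below multiplies each group's image count (screenshots + fanart) by its repeat factor to get the number of samples it contributes per epoch. It assumes that a repeat of N simply means the image is used N times per epoch and that regularization images are counted like ordinary images; some trainers handle regularization images differently, so treat the totals as approximate. The names `counts`, `repeats` and `per_epoch` are illustrative only. With these numbers, the two characters end up with similar exposure per epoch.

```python
# Image counts per group, from the dataset breakdown above
# (screenshots + fanart; regularization images listed separately).
counts = {
    "mahiro": 51 + 68,
    "mihari": 46 + 8,
    "mahiro_mihari": 30 + 16,
    "reg": 119,
}

# Repeat factor used for each group during training.
repeats = {
    "mahiro": 1,
    "mihari": 2,
    "mahiro_mihari": 4,
    "reg": 1,
}

# Samples contributed by each group in one epoch (images x repeats),
# assuming every repeat is one extra use of the image per epoch.
per_epoch = {name: counts[name] * repeats[name] for name in counts}

# Samples in which each character appears: solo images plus duo images.
mahiro_exposure = per_epoch["mahiro"] + per_epoch["mahiro_mihari"]
mihari_exposure = per_epoch["mihari"] + per_epoch["mahiro_mihari"]

if __name__ == "__main__":
    print(per_epoch)  # {'mahiro': 119, 'mihari': 108, 'mahiro_mihari': 184, 'reg': 119}
    print("total per epoch:", sum(per_epoch.values()))  # total per epoch: 530
    print("mahiro:", mahiro_exposure, "mihari:", mihari_exposure)  # mahiro: 303 mihari: 292
```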

### Base model

[NMFSAN](https://huggingface.co/Crosstyan/BPModel/blob/main/NMFSAN/README.md)

### LoRA

Please refer to the [LoRA Training Guide](https://rentry.org/lora_train) for details. Settings used:

- text encoder training: on
- network dimension: 64
- learning rate scheduler: constant
- learning rate: 1e-4 and 1e-5 (two separate runs)
- batch size: 7
- clip skip: 2
- number of training epochs: 45
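To get a feel for how long these runs are, the sketch below estimates optimizer steps from the batch size and epoch count. It hardcodes the per-epoch sample count derived from the Dataset section (images x repeats for each group, 530 in total) and assumes a final partial batch still counts as one step. Aspect-ratio bucketing and trainer-specific handling of regularization images can change the real number, so this is only an estimate; the helper names are hypothetical.

```python
import math

# Per-epoch samples: images x repeats for each group in the Dataset section
# (Mahiro, Mihari, Mahiro + Mihari, regularization). Assumes regularization
# images are used once per epoch like ordinary training images.
SAMPLES_PER_EPOCH = 119 * 1 + 54 * 2 + 46 * 4 + 119 * 1
BATCH_SIZE = 7
EPOCHS = 45


def steps_per_epoch(samples: int, batch_size: int) -> int:
    """Optimizer steps per epoch, counting a final partial batch as a step."""
    return math.ceil(samples / batch_size)


def total_steps(samples: int, batch_size: int, epochs: int) -> int:
    """Total optimizer steps over the whole run."""
    return steps_per_epoch(samples, batch_size) * epochs


if __name__ == "__main__":
    print(steps_per_epoch(SAMPLES_PER_EPOCH, BATCH_SIZE))  # 76
    print(total_steps(SAMPLES_PER_EPOCH, BATCH_SIZE, EPOCHS))  # 3420
```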

### Comparison

Learning rate 1e-4:


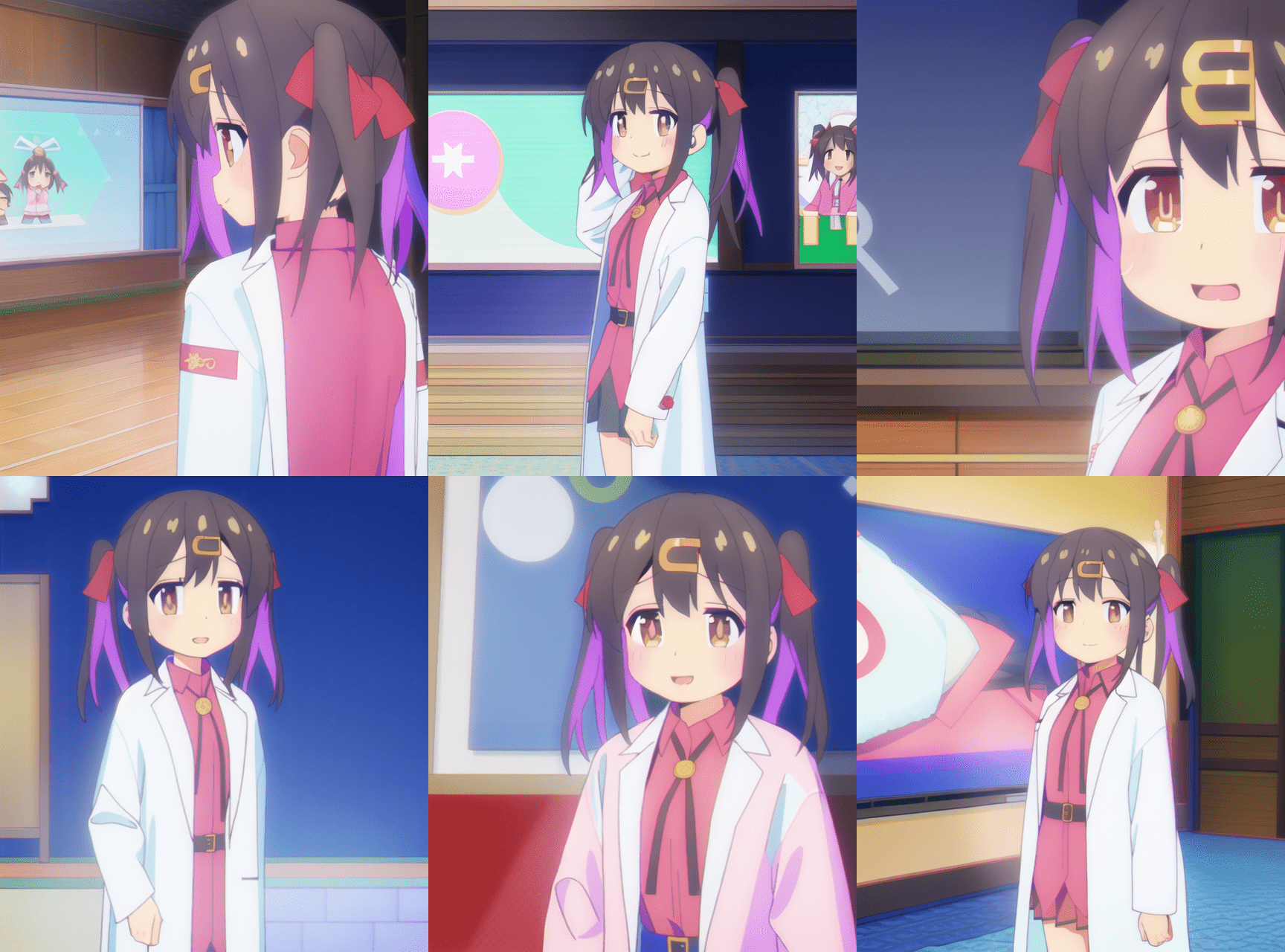

Learning rate 1e-5:


Normally, 2 repeats and 45 epochs would be enough to learn the character perfectly with DreamBooth (typically using lr=1e-6), but here, with LoRA, lr=1e-5 does not seem to work very well. lr=1e-4 produces fairly accurate results, but there is a risk of overfitting.

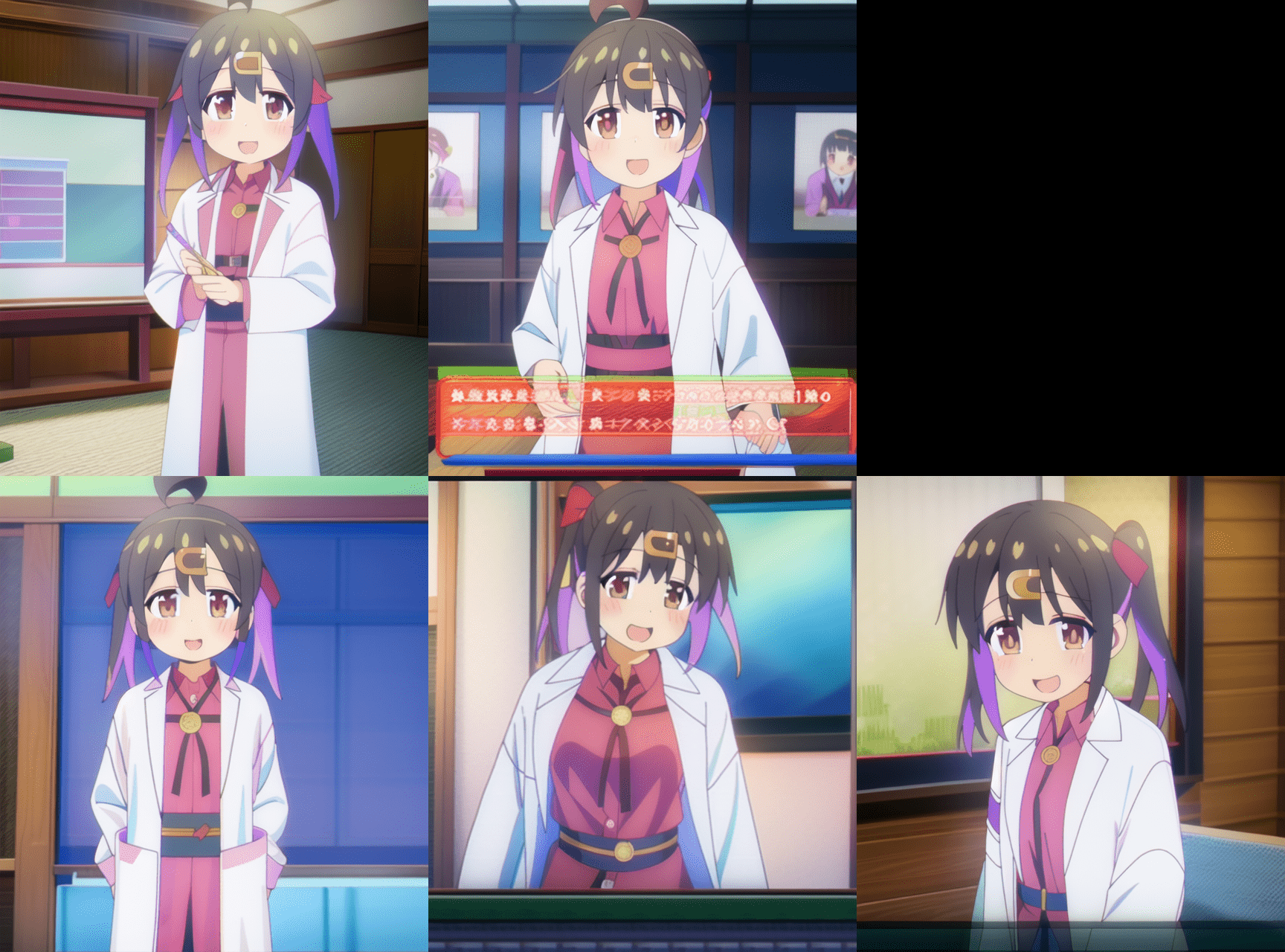
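To make the "2 repeats and 45 epochs" figure concrete, the sketch below counts how many times a single image from each group is seen over the whole run, assuming each repeat means one extra use of the image per epoch. The `views_per_image` helper is hypothetical; for Mihari (2 repeats) it gives 90 views per image.

```python
EPOCHS = 45

# Repeat factors from the Dataset section.
REPEATS = {"mahiro": 1, "mihari": 2, "mahiro_mihari": 4, "reg": 1}


def views_per_image(repeats: int, epochs: int) -> int:
    """Number of times one image is used over the run (repeats x epochs)."""
    return repeats * epochs


views = {name: views_per_image(r, EPOCHS) for name, r in REPEATS.items()}

if __name__ == "__main__":
    print(views)  # {'mahiro': 45, 'mihari': 90, 'mahiro_mihari': 180, 'reg': 45}
```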

### Examples
