Instructions for using athirdpath/Harmonia-20b-GGUF with libraries, notebooks, and local apps.

- Libraries

- llama-cpp-python

How to use athirdpath/Harmonia-20b-GGUF with llama-cpp-python:

    # !pip install llama-cpp-python
    from llama_cpp import Llama

    llm = Llama.from_pretrained(
        repo_id="athirdpath/Harmonia-20b-GGUF",
        filename="Harmonia-20b.fp16.gguf",
    )

    output = llm(
        "Once upon a time,",
        max_tokens=512,
        echo=True
    )
    print(output)

- Notebooks

- Google Colab

- Kaggle

- Local Apps

- llama.cpp

How to use athirdpath/Harmonia-20b-GGUF with llama.cpp:

Install from brew

    brew install llama.cpp
    # Start a local OpenAI-compatible server with a web UI:
    llama-server -hf athirdpath/Harmonia-20b-GGUF:Q4_K_M
    # Run inference directly in the terminal:
    llama-cli -hf athirdpath/Harmonia-20b-GGUF:Q4_K_M

Install from WinGet (Windows)

    winget install llama.cpp
    # Start a local OpenAI-compatible server with a web UI:
    llama-server -hf athirdpath/Harmonia-20b-GGUF:Q4_K_M
    # Run inference directly in the terminal:
    llama-cli -hf athirdpath/Harmonia-20b-GGUF:Q4_K_M

Use pre-built binary

    # Download pre-built binary from:
    # https://github.com/ggerganov/llama.cpp/releases
    # Start a local OpenAI-compatible server with a web UI:
    ./llama-server -hf athirdpath/Harmonia-20b-GGUF:Q4_K_M
    # Run inference directly in the terminal:
    ./llama-cli -hf athirdpath/Harmonia-20b-GGUF:Q4_K_M

Build from source code

    git clone https://github.com/ggerganov/llama.cpp.git
    cd llama.cpp
    cmake -B build
    cmake --build build -j --target llama-server llama-cli
    # Start a local OpenAI-compatible server with a web UI:
    ./build/bin/llama-server -hf athirdpath/Harmonia-20b-GGUF:Q4_K_M
    # Run inference directly in the terminal:
    ./build/bin/llama-cli -hf athirdpath/Harmonia-20b-GGUF:Q4_K_M

Use Docker

docker model run hf.co/athirdpath/Harmonia-20b-GGUF:Q4_K_M
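Once `llama-server` is running through any of the options above, it can be queried over HTTP. The following is a minimal standard-library sketch, not an official client. It assumes the server listens on `http://localhost:8080` (the default port when `--port` is not given) and uses the OpenAI-style `/v1/chat/completions` route. The helper names `build_chat_request` and `chat` are hypothetical. The chat route applies whatever chat template the server selects, which may differ from the Alpaca format documented in this card.

```python
import json
import urllib.request

# Assumption: llama-server is running locally on its default port (8080).
BASE_URL = "http://localhost:8080"


def build_chat_request(user_message, max_tokens=256, base_url=BASE_URL):
    """Build (but do not send) an OpenAI-style chat completion request."""
    payload = {
        "messages": [{"role": "user", "content": user_message}],
        "max_tokens": max_tokens,
    }
    return urllib.request.Request(
        url=f"{base_url}/v1/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def chat(user_message, **kwargs):
    """Send the request and return the assistant's reply text."""
    request = build_chat_request(user_message, **kwargs)
    with urllib.request.urlopen(request) as response:
        body = json.load(response)
    return body["choices"][0]["message"]["content"]
```

With a server running, `print(chat("Write one sentence about a lighthouse."))` prints the model's reply. Building the request separately from sending it makes the payload easy to inspect without a live server.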

- LM Studio

- Jan

- Ollama

How to use athirdpath/Harmonia-20b-GGUF with Ollama:

ollama run hf.co/athirdpath/Harmonia-20b-GGUF:Q4_K_M

- Unsloth Studio

How to use athirdpath/Harmonia-20b-GGUF with Unsloth Studio:

Install Unsloth Studio (macOS, Linux, WSL)

    curl -fsSL https://unsloth.ai/install.sh | sh
    # Run unsloth studio
    unsloth studio -H 0.0.0.0 -p 8888
    # Then open http://localhost:8888 in your browser
    # Search for athirdpath/Harmonia-20b-GGUF to start chatting

Install Unsloth Studio (Windows)

    irm https://unsloth.ai/install.ps1 | iex
    # Run unsloth studio
    unsloth studio -H 0.0.0.0 -p 8888
    # Then open http://localhost:8888 in your browser
    # Search for athirdpath/Harmonia-20b-GGUF to start chatting

Using Hugging Face Spaces for Unsloth

    # No setup required
    # Open https://huggingface.co/spaces/unsloth/studio in your browser
    # Search for athirdpath/Harmonia-20b-GGUF to start chatting

- Docker Model Runner

How to use athirdpath/Harmonia-20b-GGUF with Docker Model Runner:

docker model run hf.co/athirdpath/Harmonia-20b-GGUF:Q4_K_M

- Lemonade

How to use athirdpath/Harmonia-20b-GGUF with Lemonade:

Pull the model

    # Download Lemonade from https://lemonade-server.ai/
    lemonade pull athirdpath/Harmonia-20b-GGUF:Q4_K_M

Run and chat with the model

lemonade run user.Harmonia-20b-GGUF-Q4_K_M

List all available models

lemonade list

Description

These are fp16, q8_0, q5_k_m, and q4_k_m GGUF quants of Harmonia-20B.

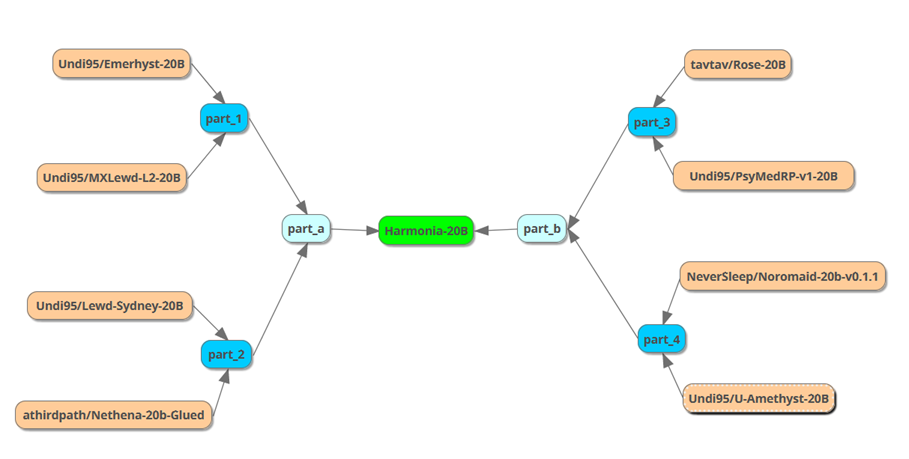

Harmonia-20B is a unified 20B model created through a multi-step SLERP merge of eight 20B models. The aim was to develop a versatile "base model" for task-arithmetic merges in this size class.

Merging Process:

Models:

- model: Undi95/Emerhyst-20B

- model: Undi95/MXLewd-L2-20B

- model: Undi95/Lewd-Sydney-20B

- model: athirdpath/Nethena-20b-Glued

- model: tavtav/Rose-20B

- model: Undi95/PsyMedRP-v1-20B

- model: NeverSleep/Noromaid-20b-v0.1.1

- model: Undi95/U-Amethyst-20B
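The card does not give the merge schedule or the interpolation factors, so the following is only a rough sketch of what a SLERP merge computes, using flat Python lists in place of real weight tensors. The pairwise schedule in `merge_pairwise` and the fixed factor `t=0.5` are assumptions for illustration, not the recipe used for Harmonia-20B. Both function names are hypothetical.

```python
import math


def slerp(t, v0, v1, eps=1e-8):
    """Spherical linear interpolation between two flat weight vectors."""
    norm0 = math.sqrt(sum(x * x for x in v0))
    norm1 = math.sqrt(sum(x * x for x in v1))
    if norm0 < eps or norm1 < eps:
        # A zero vector has no direction: use plain linear interpolation.
        return [(1 - t) * a + t * b for a, b in zip(v0, v1)]
    # Cosine of the angle between the vectors, clamped against rounding error.
    dot = sum(a * b for a, b in zip(v0, v1)) / (norm0 * norm1)
    dot = max(-1.0, min(1.0, dot))
    theta = math.acos(dot)
    sin_theta = math.sin(theta)
    if sin_theta < eps:
        # Nearly parallel vectors: SLERP degenerates to linear interpolation.
        return [(1 - t) * a + t * b for a, b in zip(v0, v1)]
    w0 = math.sin((1 - t) * theta) / sin_theta
    w1 = math.sin(t * theta) / sin_theta
    return [w0 * a + w1 * b for a, b in zip(v0, v1)]


def merge_pairwise(vectors, t=0.5):
    """Hypothetical multi-step schedule: merge neighbours until one remains.

    Assumes the number of vectors is a power of two (eight, in this card).
    """
    while len(vectors) > 1:
        vectors = [
            slerp(t, vectors[i], vectors[i + 1])
            for i in range(0, len(vectors), 2)
        ]
    return vectors[0]
```

For example, `slerp(0.5, [1.0, 0.0], [0.0, 1.0])` returns a vector close to `[0.707, 0.707]`, which stays on the unit circle, whereas linear interpolation would give `[0.5, 0.5]`. With eight inputs, `merge_pairwise` takes three rounds (8 to 4 to 2 to 1).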

Concept:

The idea behind this process was to blend the unique attributes of each model while minimizing individual quirks. The merge has also shown promising results as a standalone RP model, combining high-quality writing with situational awareness and problem-solving.

Prompt template: Alpaca

Below is an instruction that describes a task. Write a response that appropriately completes the request.

### Instruction:

{prompt}

### Response:
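The template above can be filled in programmatically. This is a minimal sketch: the helper name `format_alpaca` is hypothetical, and the exact whitespace (a single newline after each `###` header, a blank line between sections) follows the common Alpaca layout rather than anything this card specifies.

```python
# Alpaca-style template; {prompt} is replaced with the user's instruction.
ALPACA_TEMPLATE = (
    "Below is an instruction that describes a task. "
    "Write a response that appropriately completes the request.\n\n"
    "### Instruction:\n"
    "{prompt}\n\n"
    "### Response:\n"
)


def format_alpaca(prompt):
    """Fill the Alpaca template with a single instruction."""
    return ALPACA_TEMPLATE.format(prompt=prompt.strip())
```

The resulting string can be passed as the prompt in the llama-cpp-python example, for instance `llm(format_alpaca("Describe a lighthouse."), max_tokens=512, stop=["### Instruction:"])`. The stop string is a suggestion to keep the model from starting a new turn.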

Thanks to Undi95 for pioneering the 20B recipe, and for most of the models involved.
