Buckets:

Title: Text-image Alignment for Diffusion-based Perception

URL Source: https://arxiv.org/html/2310.00031

Published Time: Thu, 02 May 2024 18:37:05 GMT

Markdown Content: Neehar Kondapaneni 1 Markus Marks 1∗ Manuel Knott 1,2∗

Rogerio Guimaraes 1 Pietro Perona 1 1 California Institute of Technology

2 ETH Zurich, Swiss Data Science Center, Empa

Abstract

Diffusion models are generative models with impressive text-to-image synthesis capabilities and have spurred a new wave of creative methods for classical machine learning tasks. However, the best way to harness the perceptual knowledge of these generative models for visual tasks is still an open question. Specifically, it is unclear how to use the prompting interface when applying diffusion backbones to vision tasks. We find that automatically generated captions can improve text-image alignment and significantly enhance a model’s cross-attention maps, leading to better perceptual performance. Our approach improves upon the current state-of-the-art (SOTA) in diffusion-based semantic segmentation on ADE20K and the current overall SOTA for depth estimation on NYUv2. Furthermore, our method generalizes to the cross-domain setting. We use model personalization and caption modifications to align our model to the target domain and find improvements over unaligned baselines. Our cross-domain object detection model, trained on Pascal VOC, achieves SOTA results on Watercolor2K. Our cross-domain segmentation method, trained on Cityscapes, achieves SOTA results on Dark Zurich-val and Nighttime Driving. Project page: vision.caltech.edu/TADP/ Code page: github.com/damaggu/TADP

1 Introduction

Figure 1: Text-Aligned Diffusion Perception (TADP). In TADP, image captions align the text prompts and images passed to diffusion-based vision models. In cross-domain tasks, target domain information is incorporated into the prompt to boost performance.

Diffusion models have set the state-of-the-art (SOTA) for image generation [52, 32, 38, 35]. Recently, a few works have shown diffusion pre-trained backbones have a strong prior for scene understanding that allows them to perform well in advanced discriminative vision tasks, such as semantic segmentation [17, 53], monocular depth estimation [53], and keypoint estimation [28, 43]. We refer to these works as diffusion-based perception methods. Unlike contrastive vision language models (e.g., CLIP) [31, 22, 26], generative models have a causal relationship with text, in which text guides image generation. In latent diffusion models, text prompts control the denoising U-Net [36], moving the image latent in a semantically meaningful direction [5].

We explore this relationship and find that text-image alignment significantly improves the performance of diffusion-based perception. We then investigate text-target domain alignment in cross-domain vision tasks, finding that aligning to the target domain while training on the source domain can improve a model’s target domain performance (Fig.1).

We first study prompting for diffusion-based perceptual models and find that increasing text-image alignment improves semantic segmentation and depth estimation performance. We find that unaligned text prompts can introduce semantic shifts to the feature maps of the diffusion model [5] and that these shifts can make it more difficult for the task-specific head to solve the target task. Specifically, we ask whether unaligned text prompts, such as averaging class-specific sentence embeddings ([31, 53]), hinder performance by interfering with feature maps through the cross-attention mechanism. Through ablation experiments on Pascal VOC2012 segmentation [14] and ADE20K [55], we find that off-target and missing class names degrade image segmentation quality. We show automated image captioning [25] achieves sufficient text-image alignment for perception. Our approach (along with latent representation scaling, see Sec.4.1) improves performance for semantic segmentation on Pascal and ADE20k by 4.0 mIoU and 1.7 mIoU, respectively, and depth estimation on NYUv2 [42] by 0.2 RMSE (+8% relative) setting the new SOTA.

Next, we focus on cross-domain adaptation: can appropriate image captioning help visual perception when the model is trained in one domain and tested on a different domain? Training models on the source domain with the appropriate prompting strategy leads to excellent unsupervised cross-domain performance on several benchmarks. We evaluate our cross-domain method on Pascal VOC [13, 14] to Watercolor2k (W2K) and Comic2k (C2K) [21] for object detection and Cityscapes (CS) [9] to Dark Zurich (DZ) [39] and Nighttime (ND) Driving [10] for semantic segmentation. We explore varying degrees of text-target domain alignment and find that improved alignment results in better performance. We also demonstrate using two diffusion personalization methods, Textual Inversion [16] and DreamBooth [37], for better target domain alignment and performance. We find that diffusion pre-training is sufficient to achieve SOTA (+5.8 mIoU on CS→→\to→DZ, +4.0 mIoU on CS→→\to→ND, +0.7 mIoU on VOC→→\to→W2k) or near SOTA results on all cross-domain datasets with no text-target domain alignment, and including our best text-target domain alignment method further improves +1.4 AP on Watercolor2k, +2.1 AP on Comic2k, and +3.3 mIoU on Nighttime Driving.

Overall, our contributions are as follows:

- •We propose a new method using automated caption generation that significantly improves performance on several diffusion-based vision tasks through increased text-image alignment.

- •We systematically study how prompting affects diffusion-based vision performance, elucidating the impact of class presence, grammar in the prompt, and previously used average embeddings.

- •We demonstrate that diffusion-based perception effectively generalizes across domains, with text-target domain alignment improving performance, which can be further boosted by model personalization.

2 Related Work

2.1 Diffusion models for single-domain vision tasks

Diffusion models are trained to reverse a step-wise forward noising process. Once trained, they can generate highly realistic images from pure noise [35, 32, 52, 38]. To control image generation, diffusion models are trained with text prompts/captions that guide the diffusion process. These prompts are passed through a text encoder to generate text embeddings that are incorporated into the reverse diffusion process via cross-attention layers.

Recently, some works have explored using diffusion models for discriminative vision tasks. This can be done by either utilizing the diffusion model as a backbone for the task [53, 17, 43, 28] or through fine-tuning the diffusion model for a specific task and then using it to generate synthetic data for a downstream model [50, 2]. We use the diffusion model as a backbone for downstream vision tasks.

VPD [53] encodes images into latent representations and passes them through one step of the Stable Diffusion model. The cross-attention maps, multi-scale features, and output latent code are concatenated and passed to a task-specific head. Text prompts influence all these maps through the cross-attention mechanism, which guides the reverse diffusion process. The cross-attention maps are incorporated into the multi-scale feature maps and the output latent representation. The text guides the diffusion process and can accordingly shift the latent representation in semantic directions [5, 16, 18, 1]. The details of how VPD uses the prompting interface are described in Sec.3. In short, VPD uses unaligned text prompts. In our work, we show how aligning the text to the image by using a captioner can significantly improve semantic segmentation and depth estimation performance.

2.2 Image captioning

CLIP [31] introduced a novel learning paradigm to align images with their captions. Shortly after, the LAION-5B dataset [41] was released with 5B image-text pairs; this dataset was used to train Stable Diffusion. We hypothesize that text-image alignment is important for diffusion-pretrained vision models. However, images used in advanced vision tasks (like segmentation and depth estimation) are not naturally paired with text captions. To obtain image-aligned captions, we use BLIP-2 [25], a model that inverts the CLIP latent space to generate captions for novel images.

2.3 Diffusion models for cross-domain vision tasks

A few works explore the cross-domain setting with diffusion models [2, 17]. Benigmim et al. [2] use a diffusion model to generate data for a downstream unsupervised domain adaptation (UDA) architecture. In [17], the diffusion backbone is frozen, and the segmentation head is trained with a consistency loss with category and scene prompts guiding the latent code towards target cross-domains. Similar to VPD, the category prompts consist of token embeddings for all classes present in the dataset, irrespective of their presence in any specific image. The consistency loss forces the model to predict the same output mask for all the different scene prompts, helping the segmentation head become invariant to the scene type. Instead of using a consistency loss, we train the diffusion model backbone and task head on the source domain data with and without incorporating the style of the target domain in the caption. We find that better alignment with the target domain (i.e., target domain information included in the prompt) results in better cross-domain performance.

2.4 Cross-domain object detection

Cross-domain object detection can be divided into multiple subcategories, depending on what data/labels are at train/test time available. Unsupervised domain adaptation objection detection (UDAOD) tries to improve detection performance by training on unlabeled target domain data with approaches such as self-training [11, 44], adversarial distribution alignment [54] or generating pseudo labels for self-training [23]. Cross-domain weakly supervised object detection (CDWSOD) assumes the availability of image-level annotations at training time and utilizes pseudo labeling [30, 21], alignment [51] or correspondence mining [19]. Recently, [46] used CLIP [31] for Single Domain Generalization, which aims to generalize from a single domain to multiple unseen target domains. Our text-based method defines a new category of cross-domain object detection that tries to adapt from a single source to an unseen target domain by only having the broad semantic context of the target domain (e.g., foggy/night/comic/watercolor) as text input to our method. When we incorporate model personalization, our method can be considered a UDAOD method since we train a token based on unlabeled images from the target domain.

3 Methods

Figure 2: Overview of TADP. We test several prompting strategies and evaluate their impact on downstream vision task performance. Our method concatenates the cross-attention and multi-scale feature maps before passing them to the vision-specific decoder. In the blue box, we show three single-domain captioning strategies with differing levels of text-image alignment. We propose using BLIP [25] captioning to improve image-text alignment. We extend our analysis to the cross-domain setting (yellow box), exploring whether aligning the source domain text captions to the target domain may impact model performance by appending caption modifiers to image captions generated in the source domain and find model personalization modifiers (Textual Inversion/Dreambooth) work best.

Stable Diffusion [35]. The text-to-image Stable Diffusion model is composed of four networks: an encoder ℰ ℰ\mathcal{E}caligraphic_E, a conditional denoising autoencoder (a U-Net in Stable Diffusion) ϵ θ subscript italic-ϵ 𝜃\epsilon_{\theta}italic_ϵ start_POSTSUBSCRIPT italic_θ end_POSTSUBSCRIPT, a language encoder τ θ subscript 𝜏 𝜃\tau_{\theta}italic_τ start_POSTSUBSCRIPT italic_θ end_POSTSUBSCRIPT (the CLIP text encoder in Stable Diffusion), and a decoder 𝒟 𝒟\mathcal{D}caligraphic_D. ℰ ℰ\mathcal{E}caligraphic_E and 𝒟 𝒟\mathcal{D}caligraphic_D are trained before ϵ θ subscript italic-ϵ 𝜃\epsilon_{\theta}italic_ϵ start_POSTSUBSCRIPT italic_θ end_POSTSUBSCRIPT, such that 𝒟(ℰ(x))=x≈x 𝒟 ℰ 𝑥𝑥 𝑥\mathcal{D}(\mathcal{E}(x))=\tilde{x}\approx x caligraphic_D ( caligraphic_E ( italic_x ) ) = over~ start_ARG italic_x end_ARG ≈ italic_x. Training ϵ θ subscript italic-ϵ 𝜃\epsilon_{\theta}italic_ϵ start_POSTSUBSCRIPT italic_θ end_POSTSUBSCRIPT is composed of a pre-defined forward process and a learned reverse process. The reverse process is learned using LAION-400M [40], a dataset of 400 million images (x∈X 𝑥 𝑋 x\in X italic_x ∈ italic_X) and captions (y∈Y 𝑦 𝑌 y\in Y italic_y ∈ italic_Y). In the forward process, an image x 𝑥 x italic_x is encoded into a latent z 0=ℰ(x)subscript 𝑧 0 ℰ 𝑥 z_{0}=\mathcal{E}(x)italic_z start_POSTSUBSCRIPT 0 end_POSTSUBSCRIPT = caligraphic_E ( italic_x ), and t 𝑡 t italic_t steps of a forward noise process are executed to generate a noised latent z t subscript 𝑧 𝑡 z_{t}italic_z start_POSTSUBSCRIPT italic_t end_POSTSUBSCRIPT. Then, to learn the reverse process, the latent z t subscript 𝑧 𝑡 z_{t}italic_z start_POSTSUBSCRIPT italic_t end_POSTSUBSCRIPT is passed to the denoising autoencoder ϵ θ subscript italic-ϵ 𝜃\epsilon_{\theta}italic_ϵ start_POSTSUBSCRIPT italic_θ end_POSTSUBSCRIPT, along with the time-step t 𝑡 t italic_t and the image caption’s representation 𝒞=τ θ(y)𝒞 subscript 𝜏 𝜃 𝑦\mathcal{C}=\tau_{\theta}(y)caligraphic_C = italic_τ start_POSTSUBSCRIPT italic_θ end_POSTSUBSCRIPT ( italic_y ). τ θ subscript 𝜏 𝜃\tau_{\theta}italic_τ start_POSTSUBSCRIPT italic_θ end_POSTSUBSCRIPT adds information about y 𝑦 y italic_y to ϵ θ subscript italic-ϵ 𝜃\epsilon_{\theta}italic_ϵ start_POSTSUBSCRIPT italic_θ end_POSTSUBSCRIPT using a cross-attention mechanism, in which the query is derived from the image, and the key and value are transformations of the caption representation. The model ϵ θ subscript italic-ϵ 𝜃\epsilon_{\theta}italic_ϵ start_POSTSUBSCRIPT italic_θ end_POSTSUBSCRIPT is trained to predict the noise added to the latent in step t 𝑡 t italic_t of the forward process:

L LDM:=𝔼 ℰ(x),y,ϵ∼𝒩(0,1),t[‖ϵ−ϵ θ(z t,t,τ θ(y))‖2 2],assign subscript 𝐿 𝐿 𝐷 𝑀 subscript 𝔼 formulae-sequence similar-to ℰ 𝑥 𝑦 italic-ϵ 𝒩 0 1 𝑡 delimited-[]superscript subscript norm italic-ϵ subscript italic-ϵ 𝜃 subscript 𝑧 𝑡 𝑡 subscript 𝜏 𝜃 𝑦 2 2 L_{LDM}:=\mathbb{E}{\mathcal{E}(x),y,\epsilon\sim\mathcal{N}(0,1),t}\Big{[}|% \epsilon-\epsilon{\theta}(z_{t},t,\tau_{\theta}(y))|_{2}^{2}\Big{]},,italic_L start_POSTSUBSCRIPT italic_L italic_D italic_M end_POSTSUBSCRIPT := blackboard_E start_POSTSUBSCRIPT caligraphic_E ( italic_x ) , italic_y , italic_ϵ ∼ caligraphic_N ( 0 , 1 ) , italic_t end_POSTSUBSCRIPT [ ∥ italic_ϵ - italic_ϵ start_POSTSUBSCRIPT italic_θ end_POSTSUBSCRIPT ( italic_z start_POSTSUBSCRIPT italic_t end_POSTSUBSCRIPT , italic_t , italic_τ start_POSTSUBSCRIPT italic_θ end_POSTSUBSCRIPT ( italic_y ) ) ∥ start_POSTSUBSCRIPT 2 end_POSTSUBSCRIPT start_POSTSUPERSCRIPT 2 end_POSTSUPERSCRIPT ] ,(1)

where t∈{0,…,T}𝑡 0…𝑇 t\in{0,...,T}italic_t ∈ { 0 , … , italic_T }. During generation, a pure noise latent z T subscript 𝑧 𝑇 z_{T}italic_z start_POSTSUBSCRIPT italic_T end_POSTSUBSCRIPT and a user-specified prompt are passed through the denoising autoencoder ϵ θ subscript italic-ϵ 𝜃\epsilon_{\theta}italic_ϵ start_POSTSUBSCRIPT italic_θ end_POSTSUBSCRIPT for T 𝑇 T italic_T steps and decoded 𝒟(z 0)𝒟 subscript 𝑧 0\mathcal{D}(z_{0})caligraphic_D ( italic_z start_POSTSUBSCRIPT 0 end_POSTSUBSCRIPT ) to generate an image guided by the text prompt.

Diffusion for Feature Extraction. Diffusion backbones have been used for downstream vision tasks in several recent works[53, 17, 28, 43]. Due to its public availability and performance in perception tasks, we use a modified version (see Sec.4.1) of the feature extraction method in VPD. An image latent z 0=ℰ(x)subscript 𝑧 0 ℰ 𝑥 z_{0}=\mathcal{E}(x)italic_z start_POSTSUBSCRIPT 0 end_POSTSUBSCRIPT = caligraphic_E ( italic_x ) and a conditioning 𝒞 𝒞\mathcal{C}caligraphic_C are passed through the last step of the denoising process ϵ θ(z 0,0,𝒞)subscript italic-ϵ 𝜃 subscript 𝑧 0 0 𝒞\epsilon_{\theta}(z_{0},0,\mathcal{C})italic_ϵ start_POSTSUBSCRIPT italic_θ end_POSTSUBSCRIPT ( italic_z start_POSTSUBSCRIPT 0 end_POSTSUBSCRIPT , 0 , caligraphic_C ). The cross-attention maps A 𝐴 A italic_A and the multi-scale feature maps F 𝐹 F italic_F of the U-Net are concatenated V=A⊕F 𝑉 direct-sum 𝐴 𝐹 V=A\oplus F italic_V = italic_A ⊕ italic_F and passed to a task-specific head H 𝐻 H italic_H to generate a prediction p^=H(V)^𝑝 𝐻 𝑉\hat{p}=H(V)over^ start_ARG italic_p end_ARG = italic_H ( italic_V ). The backbone ϵ θ subscript italic-ϵ 𝜃\epsilon_{\theta}italic_ϵ start_POSTSUBSCRIPT italic_θ end_POSTSUBSCRIPT and head H 𝐻 H italic_H are trained with a task-specific loss ℒ H(p^,p)subscript ℒ 𝐻^𝑝 𝑝\mathcal{L}_{H}(\hat{p},p)caligraphic_L start_POSTSUBSCRIPT italic_H end_POSTSUBSCRIPT ( over^ start_ARG italic_p end_ARG , italic_p ).

Average EOS Tokens. To generate 𝒞 𝒞\mathcal{C}caligraphic_C, previous methods[53, 17] rely on a method from CLIP[31] to use averaged text embeddings as representations for the classes in a dataset. A list of 80 sentence templates for each class of interest (such as “a photo of a ”) are passed through the CLIP text encoder. We use ℬ ℬ\mathcal{B}caligraphic_B to denote the set of class names in a dataset. For a specific class (b∈ℬ 𝑏 ℬ b\in\mathcal{B}italic_b ∈ caligraphic_B), the CLIP text encoder returns an 80×N×D 80 𝑁 𝐷 80\times N\times D 80 × italic_N × italic_D tensor, where N is the maximum number of tokens over all the templates, and D is 768 (the dimension of each token embedding). Shorter sentences are padded with EOS tokens to fill out the maximum number of tokens. The first EOS token from each sentence template is averaged and used as the representative embedding for the class such that 𝒞∈ℛ|ℬ|×768 𝒞 superscript ℛ ℬ 768\mathcal{C}\in\mathcal{R}^{|\mathcal{B}|\times 768}caligraphic_C ∈ caligraphic_R start_POSTSUPERSCRIPT | caligraphic_B | × 768 end_POSTSUPERSCRIPT. This method is used in[53, 17], we denote it as 𝒞 avg subscript 𝒞 𝑎 𝑣 𝑔\mathcal{C}_{avg}caligraphic_C start_POSTSUBSCRIPT italic_a italic_v italic_g end_POSTSUBSCRIPT and use it as a baseline. For semantic segmentation, all of the class embeddings, irrespective of presence in the image, are passed to the cross-attention layers. Only the class embedding of the room type is passed to the cross-attention layers for depth estimation.

3.1 Text-Aligned Diffusion Perception (TADP)

Our work proposes a novel method for prompting diffusion-pretrained perception models. Specifically, we explore different prompting methods 𝒢 𝒢\mathcal{G}caligraphic_G to generate 𝒞 𝒞\mathcal{C}caligraphic_C. In the single-domain setting, we show the effectiveness of a method that uses BLIP-2 [25], an image captioning algorithm, to generate a caption as the conditioning for the model: 𝒢(x)=y→𝒞 𝒢 𝑥𝑦→𝒞\mathcal{G}(x)=\tilde{y}\rightarrow\mathcal{C}caligraphic_G ( italic_x ) = over~ start_ARG italic_y end_ARG → caligraphic_C. We then extend our method to the cross-domain setting by incorporating target domain information to 𝒞=𝒞+ℳ(𝒫)s 𝒞 𝒞 ℳ subscript 𝒫 𝑠\mathcal{C}=\mathcal{C}+\mathcal{M}(\mathcal{P}){s}caligraphic_C = caligraphic_C + caligraphic_M ( caligraphic_P ) start_POSTSUBSCRIPT italic_s end_POSTSUBSCRIPT, where ℳ ℳ\mathcal{M}caligraphic_M is a caption modifier that takes target domain information 𝒫 𝒫\mathcal{P}caligraphic_P as input and outputs a caption modification ℳ(𝒫)s ℳ subscript 𝒫 𝑠\mathcal{M}(\mathcal{P}){s}caligraphic_M ( caligraphic_P ) start_POSTSUBSCRIPT italic_s end_POSTSUBSCRIPT and a model modification ℳ(𝒫)ϵ θ ℳ subscript 𝒫 subscript italic-ϵ 𝜃\mathcal{M}(\mathcal{P}){\epsilon{\theta}}caligraphic_M ( caligraphic_P ) start_POSTSUBSCRIPT italic_ϵ start_POSTSUBSCRIPT italic_θ end_POSTSUBSCRIPT end_POSTSUBSCRIPT. In Sec.4, we analyze the text-image interface of the diffusion model by varying the captioner 𝒢 𝒢\mathcal{G}caligraphic_G and caption modifier ℳ ℳ\mathcal{M}caligraphic_M in a systematic manner for three different vision tasks: semantic segmentation, object detection, and monocular depth estimation. Our method and experiments are presented in Fig.2. Following[53], we train our ADE20k segmentation and NYUv2 depth estimation models with fast and regular schedules. On ADE20k, we train using 4k steps (fast), 8k steps (fast), and 80k steps (normal). For NYUv2 depth, we train on a 1-epoch (fast) schedule and a 25-epoch (normal) schedule. For implementation details, refer to AppendixD.

4 Results

Table 1: Prompting for Pascal VOC2012 Segmentation. We report the single-scale validation mIoU for Pascal experiments. (R): Reproduction of VPD, Avg: EOS token averaging, LS: Latent Scaling, G: Grammar, OT: Off-target information. For our method, we indicate the minimum length of the BLIP caption with TADP-X 𝑋 X italic_X and nouns only with (NO).

4.1 Latent scaling

Before exploring image-text alignment, we apply latent scaling to encoded images (Appendix G of Rombach et al. [35]). This normalizes the image latents to have a standard normal distribution. The scaling factor is fixed at 0.18215 0.18215 0.18215 0.18215. We find that latent scaling improves performance using 𝒞 avg subscript 𝒞 𝑎 𝑣 𝑔\mathcal{C}_{avg}caligraphic_C start_POSTSUBSCRIPT italic_a italic_v italic_g end_POSTSUBSCRIPT for segmentation and depth estimation (Fig.3). Specifically, latent scaling improves ∼similar-to\sim∼0.8%mIoU on Pascal, ∼similar-to\sim∼0.3% mIoU on ADE20K, and a relative ∼similar-to\sim∼5.5% RMSE on NYUv2 Depth (Fig.3).

4.2 Single-domain alignment

Average EOS Tokens. We scrutinize the use of average EOS tokens for 𝒞 𝒞\mathcal{C}caligraphic_C (see Sec.3). While average EOS tokens are sensible when measuring cosine similarities in the CLIP latent space, it is unsuitable in diffusion models, where the text guides the diffusion process through cross-attention. In our qualitative analysis, we find that average EOS tokens degrade the cross-attention maps (Fig.4). Instead, we explore using CLIP to embed each class name independently and use the tokens corresponding to the actual word (not the EOS token) and pass this as input to the cross-attention layer:

𝒢 ClassEmbs(ℬ)=concat(CLIP(b)|b∈ℬ)→𝒞 ClassEmbs subscript 𝒢 ClassEmbs ℬ 𝑐 𝑜 𝑛 𝑐 𝑎 𝑡 conditional CLIP 𝑏 𝑏 ℬ→subscript 𝒞 ClassEmbs\mathcal{G}{\text{ClassEmbs}}(\mathcal{B})=concat(\textit{CLIP}(b)|b\in% \mathcal{B})\rightarrow\mathcal{C}{\text{ClassEmbs}}caligraphic_G start_POSTSUBSCRIPT ClassEmbs end_POSTSUBSCRIPT ( caligraphic_B ) = italic_c italic_o italic_n italic_c italic_a italic_t ( CLIP ( italic_b ) | italic_b ∈ caligraphic_B ) → caligraphic_C start_POSTSUBSCRIPT ClassEmbs end_POSTSUBSCRIPT(2)

Second, we explore a generic prompt, a string of class names separated by spaces:

𝒢 ClassNames(ℬ)={‘ ’+b|b∈ℬ}→𝒞 ClassNames subscript 𝒢 ClassNames ℬ conditional-set‘ ’𝑏 𝑏 ℬ→subscript 𝒞 ClassNames\mathcal{G}{\text{ClassNames}}(\mathcal{B})={\text{` '}+b|b\in\mathcal{B}}% \rightarrow\mathcal{C}{\text{ClassNames}}caligraphic_G start_POSTSUBSCRIPT ClassNames end_POSTSUBSCRIPT ( caligraphic_B ) = { ‘ ’ + italic_b | italic_b ∈ caligraphic_B } → caligraphic_C start_POSTSUBSCRIPT ClassNames end_POSTSUBSCRIPT(3)

These prompts are similar to the ones used for averaged EOS tokens 𝒞 avg subscript 𝒞 𝑎 𝑣 𝑔\mathcal{C}{avg}caligraphic_C start_POSTSUBSCRIPT italic_a italic_v italic_g end_POSTSUBSCRIPT w.r.t. overall text-image alignment but instead use the token corresponding to the word representing the class name. We evaluate these variations on Pascal VOC2012 segmentation. We find that 𝒞 ClassNames subscript 𝒞 ClassNames\mathcal{C}{\text{ClassNames}}caligraphic_C start_POSTSUBSCRIPT ClassNames end_POSTSUBSCRIPT improves performance by 1.0 mIoU, but 𝒞 ClassEmbs subscript 𝒞 ClassEmbs\mathcal{C}_{\text{ClassEmbs}}caligraphic_C start_POSTSUBSCRIPT ClassEmbs end_POSTSUBSCRIPT reduces performance by 0.3 mIoU (see Tab.1). We perform more in-depth analyses of the effect of text-image alignment on the diffusion model’s cross-attention maps and image generation properties in AppendixA.

Figure 3: Effects of Latent Scaling (LS) and BLIP caption minimum length. We report mIoU for Pascal, mIoU for ADE20K, and RMSE for NYUv2 depth (right). (Top) Latent scaling improves performance on Pascal ∼0.8 similar-to absent 0.8{\sim}0.8∼ 0.8 mIoU (higher is better), ∼0.3 similar-to absent 0.3{\sim}0.3∼ 0.3 mIoU, and ∼5.5%similar-to absent percent 5.5{\sim}5.5%∼ 5.5 % relative RMSE (lower is better). (Bottom) We see a similar effect for BLIP minimum token length, with longer captions performing better, improving ∼0.8 similar-to absent 0.8{\sim}0.8∼ 0.8 mIoU on Pascal, ∼0.9 similar-to absent 0.9{\sim}0.9∼ 0.9 mIoU on ADE20K, and ∼0.6%similar-to absent percent 0.6{\sim}0.6%∼ 0.6 % relative RMSE.

TADP. To align the diffusion model text input to the image, we use BLIP-2[25] to generate captions for every image in our single-domain datasets (Pascal, ADE20K, and NYUv2).

𝒢 TADP(x)=BLIP-2(x)→𝒞 TADP(x)subscript 𝒢 TADP 𝑥 BLIP-2 𝑥→subscript 𝒞 TADP 𝑥\mathcal{G}{\text{TADP}}(x)=\textit{BLIP-2}(x)\rightarrow\mathcal{C}{\text{% TADP}}(x)caligraphic_G start_POSTSUBSCRIPT TADP end_POSTSUBSCRIPT ( italic_x ) = BLIP-2 ( italic_x ) → caligraphic_C start_POSTSUBSCRIPT TADP end_POSTSUBSCRIPT ( italic_x )(4)

BLIP-2 is trained to produce image-aligned text captions and is designed around the CLIP latent space. However, other vision-language algorithms that produce captions could also be used. We find that these text captions improve performance in all datasets and tasks (Tabs. 1, 2, 3). Performance improves on Pascal segmentation by ∼similar-to\sim∼4% mIoU, ADE20K by ∼similar-to\sim∼1.4% mIoU, and NYUv2 Depth by a relative RMSE improvement of 4%. We see stronger effects on the fast schedules for ADE20K with an improvement of ∼similar-to\sim∼5 mIoU at (4k), ∼similar-to\sim∼2.4 mIoU (8K). On NYUv2 Depth, we see a smaller gain on the fast schedule ∼similar-to\sim∼2.4%. All numbers are reported relative to VPD with latent scaling.

Method#Params FLOPs Crop mIoU ss mIoU ms self-supervised pre-training EVA [15]1.01B-896 2 61.2 61.5 InternImage-L [48]256M 2526G 640 2 53.9 54.1 InternImage-H [48]1.31B 4635G 896 2 62.5 62.9 multi-modal pre-training CLIP-ViT-B [33]105M 1043G 640 2 50.6 51.3 ViT-Adapter [8]571M-896 2 61.2 61.5 BEiT-3 [49]1.01B-896 2 62.0 62.8 ONE-PEACE [47]1.52B-896 2 62.0 63.0 diffusion-based pre-training VPD A32[53]862M 891G 512 2 53.7 54.6 VPD(R)862M 891G 512 2 53.1 54.2 VPD(LS)862M 891G 512 2 53.7 54.4 TADP-40 (Ours)862M 2168G 512 2 54.8 55.9 TADP-Oracle 862M-512 2 72.0-

Table 2: Semantic segmentation with different methods for ADE20k. Our method (green) achieves SOTA within the diffusion-pretrained models category. The results of our oracle indicate the potential of diffusion-based models for future research as it is significantly higher than the overall SOTA (highlighted in yellow). See Tab.1 for a notation key and Tab.S1 for fast schedule results.

Table 3: Depth estimation in NYUv2. We find latent scaling accounts for a relative gain of ∼5.5%similar-to absent percent 5.5\sim 5.5%∼ 5.5 % on the RMSE metric. Additionally, image-text alignment improves ∼4%similar-to absent percent 4\sim 4%∼ 4 % relative on the RMSE metric. A minimum caption length of 40 tokens performs the best.We also explore adding a text-adapter (TA) to TADP, but find no significant gain. See Table1 for a notation key.

Figure 4: Cross-attention maps for different types of prompting (before training). We compare the cross-attention maps for four types of prompting: oracle, BLIP, Average EOS tokens, and class names as space-separated strings. The cross-attention maps for different heads at all different scales are upsampled to 64x64 and averaged. When comparing Average Template EOS and Class Names, we see (qualitatively) averaging degrades the quality of the cross-attention maps. Furthermore, we find that class names that are not present in the image can have highly localized attention maps (e.g., ‘bottle’). Further analysis of the cross-attention maps is available in Sec.A, where we explore image-to-image generation, copy-paste image modifications, and more.

We perform some ablations to analyze what aspects of the captions are important. We explore the minimum token number hyperparameter for BLIP-2 to explore if longer captions can produce more useful feature maps for the downstream task. We try a minimum token number of 0, 20, and 40 tokens (denoted as 𝒞 TADP-N subscript 𝒞 TADP-N\mathcal{C}{\text{TADP-N}}caligraphic_C start_POSTSUBSCRIPT TADP-N end_POSTSUBSCRIPT) and find small but consistent gains with longer captions, resulting on average 0.75% relative gain for 40 tokens vs. 0 tokens (Fig.3). Next, we ablate the Pascal 𝒞 TADP-20 subscript 𝒞 TADP-20\mathcal{C}{\text{TADP-20}}caligraphic_C start_POSTSUBSCRIPT TADP-20 end_POSTSUBSCRIPT captions to understand what in the caption is necessary for the performance gains we observe. We use NLTK [4] to filter for the nouns in the captions. In the 𝒞 TADP(NO)-20 subscript 𝒞 TADP(NO)-20\mathcal{C}{\text{TADP(NO)-20}}caligraphic_C start_POSTSUBSCRIPT TADP(NO)-20 end_POSTSUBSCRIPT nouns-only caption setting, we achieve 86.4% mIoU, similar to 86.2% mIoU with 𝒞 TADP-20 subscript 𝒞 TADP-20\mathcal{C}{\text{TADP-20}}caligraphic_C start_POSTSUBSCRIPT TADP-20 end_POSTSUBSCRIPT (Tab.1), suggesting nouns are sufficient.

Oracle. This insight about nouns leads us to ask if an oracle caption, in which all the object class names in an image are provided as a caption, can improve performance further. We define ℬ(x)ℬ 𝑥\mathcal{B}(x)caligraphic_B ( italic_x ) as the set of class names present in image x 𝑥 x italic_x.

𝒢 Oracle(x)={‘ ’+b|b∈ℬ(x)}→𝒞 Oracle(x)subscript 𝒢 Oracle 𝑥 conditional-set‘ ’𝑏 𝑏 ℬ 𝑥→subscript 𝒞 Oracle 𝑥\mathcal{G}{\text{Oracle}}(x)={\text{` '}+b|b\in\mathcal{B}(x)}\rightarrow% \mathcal{C}{\text{Oracle}}(x)caligraphic_G start_POSTSUBSCRIPT Oracle end_POSTSUBSCRIPT ( italic_x ) = { ‘ ’ + italic_b | italic_b ∈ caligraphic_B ( italic_x ) } → caligraphic_C start_POSTSUBSCRIPT Oracle end_POSTSUBSCRIPT ( italic_x )(5)

While this is not a realistic setting, it serves as an approximate upper bound on performance for our method on the segmentation task. We find a large improvement in performance in segmentation, achieving 89% mIoU on Pascal and 72.2% mIoU on ADE20K. For depth estimation, multi-class segmentation masks are only provided for a smaller subset of the images, so we cannot generate a comparable oracle. We perform ablations on the oracle captions to evaluate the model’s sensitivity to alignment. For ADE20K, on the 4k iteration schedule, we modify the oracle captions by randomly adding and removing classes such that the recall and precision are at 0.5, 0.75, and 1.0 (independently) (Tab.S2). We find that both precision and recall have an effect, but recall is significantly more important. When recall is lower (0.50), improving precision has minimal impact (<1% mIoU). However, precision has progressively larger impacts as recall increases to 0.75 and 1.00 (∼similar-to\sim∼3% mIoU and ∼similar-to\sim∼7% mIoU). In contrast, recall has large impacts at every precision level: 0.5 - (∼similar-to\sim∼6% mIoU), 0.75 - (∼similar-to\sim∼9% mIoU), and 1.00 - (∼similar-to\sim∼13% mIoU). BLIP-2 captioning performs similarly to a precision of 1.00 and a recall of 0.5 (Tab.2). Additional analyses w.r.t. precision, recall, and object sizes can be found in AppendixB.

4.3 Cross-domain alignment

Next, we ask if text-image alignment can benefit cross-domain tasks. In cross-domain, we train a model on a source domain and test it on a different target domain. There are two aspects of alignment in the cross-domain setting: the first is also present in single-domain, which is image-text alignment; the second is unique to the cross-domain setting, which is text-target domain alignment. The second is challenging because there is a large domain shift between the source and target domain. Our intuition is that while the model has no information on the target domain from the training images, an appropriate text prompt may carry some general information about the target domain. Our cross-domain experiments focus on the text-target domain alignment and use 𝒢 TADP subscript 𝒢 TADP\mathcal{G}_{\text{TADP}}caligraphic_G start_POSTSUBSCRIPT TADP end_POSTSUBSCRIPT for image-text alignment (following our insights from the single-domain setting).

Training. Our experiments in this setting are designed in the following manner: we train a diffusion model on the source domain captions 𝒞 TADP(x)subscript 𝒞 TADP 𝑥\mathcal{C}{\text{TADP}}(x)caligraphic_C start_POSTSUBSCRIPT TADP end_POSTSUBSCRIPT ( italic_x ). With these source domain captions, we experiment with four different caption modifications (each increasing in alignment to the target domain), a null ℳ null(𝒫)subscript ℳ null 𝒫\mathcal{M}{\textit{null}}(\mathcal{P})caligraphic_M start_POSTSUBSCRIPT null end_POSTSUBSCRIPT ( caligraphic_P ) caption modification where ℳ null(𝒫)s=∅=ℳ null(𝒫)ϵ θ=∅subscript ℳ null subscript 𝒫 𝑠 subscript ℳ null subscript 𝒫 subscript italic-ϵ 𝜃\mathcal{M}{\textit{null}}(\mathcal{P}){s}=\varnothing=\mathcal{M}{\textit{% null}}(\mathcal{P}){\epsilon_{\theta}}=\varnothing caligraphic_M start_POSTSUBSCRIPT null end_POSTSUBSCRIPT ( caligraphic_P ) start_POSTSUBSCRIPT italic_s end_POSTSUBSCRIPT = ∅ = caligraphic_M start_POSTSUBSCRIPT null end_POSTSUBSCRIPT ( caligraphic_P ) start_POSTSUBSCRIPT italic_ϵ start_POSTSUBSCRIPT italic_θ end_POSTSUBSCRIPT end_POSTSUBSCRIPT = ∅, a simple ℳ simple(𝒫)subscript ℳ simple 𝒫\mathcal{M}{\textit{simple}}(\mathcal{P})caligraphic_M start_POSTSUBSCRIPT simple end_POSTSUBSCRIPT ( caligraphic_P ) caption modifier where ℳ simple(𝒫)s subscript ℳ simple subscript 𝒫 𝑠\mathcal{M}{\textit{simple}}(\mathcal{P}){s}caligraphic_M start_POSTSUBSCRIPT simple end_POSTSUBSCRIPT ( caligraphic_P ) start_POSTSUBSCRIPT italic_s end_POSTSUBSCRIPT is a hand-crafted string describing the style of the target domain appended to the end and ℳ simple(𝒫)ϵ θ=∅subscript ℳ simple subscript 𝒫 subscript italic-ϵ 𝜃\mathcal{M}{\textit{simple}}(\mathcal{P}){\epsilon{\theta}}=\varnothing caligraphic_M start_POSTSUBSCRIPT simple end_POSTSUBSCRIPT ( caligraphic_P ) start_POSTSUBSCRIPT italic_ϵ start_POSTSUBSCRIPT italic_θ end_POSTSUBSCRIPT end_POSTSUBSCRIPT = ∅, a Textual Inversion [16]ℳ TI(𝒫)subscript ℳ TI 𝒫\mathcal{M}{\textit{TI}}(\mathcal{P})caligraphic_M start_POSTSUBSCRIPT TI end_POSTSUBSCRIPT ( caligraphic_P ) caption modifier where the output ℳ TI(𝒫)s subscript ℳ TI subscript 𝒫 𝑠\mathcal{M}{\textit{TI}}(\mathcal{P}){s}caligraphic_M start_POSTSUBSCRIPT TI end_POSTSUBSCRIPT ( caligraphic_P ) start_POSTSUBSCRIPT italic_s end_POSTSUBSCRIPT is a learned Textual Inversion token <<<*>>> and ℳ TI(𝒫)ϵ θ=∅subscript ℳ TI subscript 𝒫 subscript italic-ϵ 𝜃\mathcal{M}{\textit{TI}}(\mathcal{P}){\epsilon{\theta}}=\varnothing caligraphic_M start_POSTSUBSCRIPT TI end_POSTSUBSCRIPT ( caligraphic_P ) start_POSTSUBSCRIPT italic_ϵ start_POSTSUBSCRIPT italic_θ end_POSTSUBSCRIPT end_POSTSUBSCRIPT = ∅, and a DreamBooth [37]ℳ DB(𝒫)subscript ℳ DB 𝒫\mathcal{M}{\textit{DB}}(\mathcal{P})caligraphic_M start_POSTSUBSCRIPT DB end_POSTSUBSCRIPT ( caligraphic_P ) caption modifier where ℳ DB(𝒫)s subscript ℳ DB subscript 𝒫 𝑠\mathcal{M}{\textit{DB}}(\mathcal{P}){s}caligraphic_M start_POSTSUBSCRIPT DB end_POSTSUBSCRIPT ( caligraphic_P ) start_POSTSUBSCRIPT italic_s end_POSTSUBSCRIPT is a learned DreamBooth token <<>> and ℳ DB(𝒫)ϵ θ subscript ℳ DB subscript 𝒫 subscript italic-ϵ 𝜃\mathcal{M}{\textit{DB}}(\mathcal{P}){\epsilon{\theta}}caligraphic_M start_POSTSUBSCRIPT DB end_POSTSUBSCRIPT ( caligraphic_P ) start_POSTSUBSCRIPT italic_ϵ start_POSTSUBSCRIPT italic_θ end_POSTSUBSCRIPT end_POSTSUBSCRIPT is a DreamBoothed diffusion backbone. We also include two additional control experiments. In the first, ℳ ud(𝒫)subscript ℳ ud 𝒫\mathcal{M}{\textit{ud}}(\mathcal{P})caligraphic_M start_POSTSUBSCRIPT ud end_POSTSUBSCRIPT ( caligraphic_P ) an unrelated target domain style is appended to the end of the string. In the second, ℳ nd(𝒫)subscript ℳ nd 𝒫\mathcal{M}{\textit{nd}}(\mathcal{P})caligraphic_M start_POSTSUBSCRIPT nd end_POSTSUBSCRIPT ( caligraphic_P ) a nearby but a different target domain style is appended to the caption. ℳ TI(𝒫)subscript ℳ TI 𝒫\mathcal{M}{\textit{TI}}(\mathcal{P})caligraphic_M start_POSTSUBSCRIPT TI end_POSTSUBSCRIPT ( caligraphic_P ) and ℳ DB(𝒫)subscript ℳ DB 𝒫\mathcal{M}{\textit{DB}}(\mathcal{P})caligraphic_M start_POSTSUBSCRIPT DB end_POSTSUBSCRIPT ( caligraphic_P ) require more information than the other methods, such that 𝒫 𝒫\mathcal{P}caligraphic_P represents a subset of unlabelled images from the target domain.

Table 4: Cross-domain semantic segmentation. Cityscapes (CD) to Dark Zurich (DZ) val and Nighttime Driving (ND). We report the mIoU. Our method sets a new SOTA for DarkZurich and Nighttime Driving.

Table 5: Cross-domain object detection. Pascal VOC to Watercolor2k and Comic2k. We report the AP and AP 50 𝐴 subscript 𝑃 50 AP_{50}italic_A italic_P start_POSTSUBSCRIPT 50 end_POSTSUBSCRIPT. Our method sets a new SOTA for Watercolor2K.

Testing. When testing the trained models on the target domain images, we want to use the same captioning modification for the test images as in the training setup. However, 𝒢 TADP subscript 𝒢 TADP\mathcal{G}{\text{TADP}}caligraphic_G start_POSTSUBSCRIPT TADP end_POSTSUBSCRIPT introduces a confound since it naturally incorporates target domain information. For example, 𝒢 TADP(x)subscript 𝒢 TADP 𝑥\mathcal{G}{\text{TADP}}(x)caligraphic_G start_POSTSUBSCRIPT TADP end_POSTSUBSCRIPT ( italic_x ) might produce the caption “a watercolor painting of a dog and a bird" for an image from the Watercolor2K dataset. Using the ℳ simple(𝒫)subscript ℳ simple 𝒫\mathcal{M}_{\textit{simple}}(\mathcal{P})caligraphic_M start_POSTSUBSCRIPT simple end_POSTSUBSCRIPT ( caligraphic_P ) captioning modification on this prompt would introduce redundant information and would not match the caption format used during training. In order to remove target domain information and get a plain caption that can be modified in the same manner as in the training data, we use GPT-3.5 [6] to remove all mentions of the target domain shift. For example, after using GPT-3.5 to remove mentions of the watercolor style in the above sentence, we are left with “an image of a bird and a dog”. With these GPT-3.5 cleaned captions, we can match the caption modifications used during training when evaluating test images. This caption-cleaning strategy lets us control how target domain information is included in the test image captions, ensuring that test captions are in the same domain as train captions.

Evaluation. We evaluate cross-domain transfer on several datasets. We train our model on Pascal VOC [13, 14] object detection and evaluate on Watercolor2K (W2K) [21] and Comic2K (C2K) [21]. We also train our model on the Cityscapes [9] dataset and evaluate on the Nighttime Driving (ND) [10] and Dark Zurich-val (DZ-val) [39] datasets. We show results in Tabs.4,5. In the following sections, we also report the average performance of each method on the cross-domain segmentation datasets (average mIoU) and the cross-domain object detection datasets (average AP).

Null caption modifier. The null captions have no target domain information. In this setting, the model is trained with captions with no target domain information and tested with GPT-3.5 cleaned target domain captions. We find diffusion pre-training to be extraordinarily powerful on its own, with just plain captions (no target domain information); the model already achieves SOTA on VOC→→\to→W2K with 72.1 AP 50 𝐴 subscript 𝑃 50 AP_{50}italic_A italic_P start_POSTSUBSCRIPT 50 end_POSTSUBSCRIPT, SOTA on CD→→\to→DZ-val with 42.8 mIoU and SOTA on CD→→\to→ND with 60.8 mIoU. Our model performs better than the current SOTA [44] on VOC→→\to→W2K and worse on VOC→→\to→C2K (highlighted in yellow in Tab.5). However, [44] uses a large extra training dataset from the target (comic) domain, so we highlight in bold our results in Tab.5 to show they outperform all other methods that use only images in C2K as examples from the target domain. Furthermore, these results are with a lightweight FPN [24] head, in contrast to other competitive methods like Refign [7], which uses a heavier decoder head. These captions achieve 50.5 average mIoU and 36.6 average AP.

Simple caption modifier. We then add target domain information to our captions by prepending the target domain’s semantic shift to the generic captions. These caption modifiers are hand-crafted. For example, “a dog and a bird” becomes “a X style painting of a dog and a bird” (where X is watercolor for W2K and comic for C2K) and “a dark night photo of a dog and a bird” for DZ. These captions achieve 48.0 average mIoU and 37.7 average AP.

Textual Inversion caption modifier. Textual inversion [16] is a method that learns a target concept (an object or style) from a set of images and encodes it into a new token. We learn a novel token from target domain image samples to further increase image-text alignment (for details, see Sec.D.1). In this setting, the sentence template becomes “a <<>> style painting of a dog and a bird”. We find that, on average, Textual Inversion captions perform the best, achieving 51.1 average mIoU and 38.2 average AP.

DreamBooth caption modifier. DreamBooth-ing [37] aims to achieve the same goal as textual inversion. Along with learning a new token, the stable-diffusion backbone itself is fine-tuned with a set of target domain images (for details, see Sec.D.1). We swap the stable diffusion backbone with the DreamBooth-ed backbone before training. We use the same template as in textual inversion. These captions achieve 49.7 average mIoU and 38.1 average AP.

Ablations. We ablate our target domain alignment strategy by introducing unrelated and nearby target-domain style modifications. For example, this would be “a dashcam photo of a dog and a bird" (unrelated) and “a constructivism painting of a dog and a bird" (nearby) for the W2K and C2K datasets. “A watercolor painting of a car on the street" (unrelated) and “a foggy photo of a car on the street" for the ND and DZ-val datasets. We find these off-target domains reduce performance on all datasets.

5 Discussion

We present a method for image-text alignment that is general, fully automated, and can be applied to any diffusion-based perception model. To achieve this, we systematically explore the impact of text-image alignment on semantic segmentation, depth estimation, and object detection. We investigate whether similar principles apply in the cross-domain setting and find that alignment towards the target domain during training improves downstream cross-domain performance.

We find that EOS token averaging for prompting does not work as effectively as strings for the objects in the image. Our oracle ablation experiments show that our diffusion pre-trained segmentation model is particularly sensitive to missing classes (reduced recall) and less sensitive to off-target classes (reduced precision), and both have a negative impact. Our results show that aligning text prompts to the image is important in identifying/generating good multi-scale feature maps for the downstream segmentation head. This implies that the multi-scale features and latent representations do not naturally identify semantic concepts without the guidance of the text in diffusion models. Moreover, proper latent scaling is crucial for downstream vision tasks. Lastly, we show how using a captioner, which has the benefit of being open vocabulary, high precision, and downstream task agnostic, to prompt the diffusion pre-trained segmentation model automatically improves performance significantly over providing all possible class names.

We also find that diffusion models can be used effectively for cross-domain tasks. Our model, without any captions, already surpasses several SOTA results in cross-domain tasks due to the diffusion backbone’s generalizability. We find that good target domain alignment can help with cross-domain performance for some domains, and misalignment leads to worse performance. Capturing information about target domain styles in words alone can be difficult. For these cases, we show that model personalization through Textual Inversion or Dreambooth can bridge the gap without requiring labeled data. Future work could explore how to expand our framework to generalize to multiple unseen domains. Future work may also explore closed vocabulary captioners that are more task-specific to get closer to oracle-level performance.

Acknowledgements.

Pietro Perona and Markus Marks were supported by the National Institutes of Health (NIH R01 MH123612A) and the Caltech Chen Institute (Neuroscience Research Grant Award). Pietro Perona, Neehar Kondapaneni, Rogerio Guimaraes, and Markus Marks were supported by the Simons Foundation (NC-GB-CULM-00002953-02). Manuel Knott was supported by an ETH Zurich Doc.Mobility Fellowship. We thank Oisin Mac Aodha, Yisong Yue, and Mathieu Salzmann for their valuable inputs that helped improve this work.

References

- Balaji et al. [2022] Yogesh Balaji, Seungjun Nah, Xun Huang, Arash Vahdat, Jiaming Song, Qinsheng Zhang, Karsten Kreis, Miika Aittala, Timo Aila, Samuli Laine, Bryan Catanzaro, Tero Karras, and Ming-Yu Liu. eDiff-I: Text-to-Image Diffusion Models with an Ensemble of Expert Denoisers. arXiv preprint arXiv:2211.01324, 2022.

- Benigmim et al. [2023] Yasser Benigmim, Subhankar Roy, Slim Essid, Vicky Kalogeiton, and Stéphane Lathuilière. One-shot Unsupervised Domain Adaptation with Personalized Diffusion Models. arXiv preprint arXiv:2303.18080, 2023.

- Bhat et al. [2023] Shariq Farooq Bhat, Reiner Birkl, Diana Wofk, Peter Wonka, and Matthias Müller. ZoeDepth: Zero-shot Transfer by Combining Relative and Metric Depth. arXiv preprint arXiv:2302.12288, 2023.

- Bird et al. [2009] Steven Bird, Ewan Klein, and Edward Loper. Natural language processing with Python: analyzing text with the natural language toolkit. O’Reilly Media, Inc., 2009.

- Brack et al. [2023] Manuel Brack, Felix Friedrich, Dominik Hintersdorf, Lukas Struppek, Patrick Schramowski, and Kristian Kersting. SEGA: Instructing Diffusion using Semantic Dimensions. arXiv preprint arXiv:2301.12247, 2023.

- Brown et al. [2020] Tom Brown, Benjamin Mann, Nick Ryder, Melanie Subbiah, Jared D Kaplan, Prafulla Dhariwal, Arvind Neelakantan, Pranav Shyam, Girish Sastry, Amanda Askell, et al. Language models are few-shot learners. Advances in neural information processing systems, 33:1877–1901, 2020.

- Brüggemann et al. [2022] David Brüggemann, Christos Sakaridis, Prune Truong, and Luc Van Gool. Refign: Align and Refine for Adaptation of Semantic Segmentation to Adverse Conditions. 2023 IEEE/CVF Winter Conference on Applications of Computer Vision (WACV), 2022.

- Chen et al. [2022] Zhe Chen, Yuchen Duan, Wenhai Wang, Junjun He, Tong Lu, Jifeng Dai, and Y. Qiao. Vision Transformer Adapter for Dense Predictions. arXiv preprint arXiv:2205.08534, 2022.

- Cordts et al. [2016] Marius Cordts, Mohamed Omran, Sebastian Ramos, Timo Rehfeld, Markus Enzweiler, Rodrigo Benenson, Uwe Franke, Stefan Roth, and Bernt Schiele. The Cityscapes Dataset for Semantic Urban Scene Understanding. 2016 IEEE Conference on Computer Vision and Pattern Recognition (CVPR), pages 3213–3223, 2016.

- Dai and Gool [2018] Dengxin Dai and Luc Van Gool. Dark Model Adaptation: Semantic Image Segmentation from Daytime to Nighttime. 2018 21st International Conference on Intelligent Transportation Systems (ITSC), pages 3819–3824, 2018.

- Deng et al. [2021] Jinhong Deng, Wen Li, Yuhua Chen, and Lixin Duan. Unbiased mean teacher for cross-domain object detection. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, pages 4091–4101, 2021.

- Eigen et al. [2014] David Eigen, Christian Puhrsch, and Rob Fergus. Depth Map Prediction from a Single Image using a Multi-Scale Deep Network. In Advances in Neural Information Processing Systems 27 (NIPS 2014), 2014.

- [13] M. Everingham, L. Van Gool, C.K.I. Williams, J. Winn, and A. Zisserman. The PASCAL Visual Object Classes Challenge 2007 (VOC2007) Results. http://www.pascal-network.org/challenges/VOC/voc2007/workshop/index.html.

- Everingham et al. [2012] M. Everingham, L. Van Gool, C.K.I. Williams, J. Winn, and A. Zisserman. The PASCAL Visual Object Classes Challenge 2012 (VOC2012), 2012.

- Fang et al. [2022] Yuxin Fang, Wen Wang, Binhui Xie, Quan-Sen Sun, Ledell Yu Wu, Xinggang Wang, Tiejun Huang, Xinlong Wang, and Yue Cao. EVA: Exploring the Limits of Masked Visual Representation Learning at Scale. 2023 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), pages 19358–19369, 2022.

- Gal et al. [2022] Rinon Gal, Yuval Alaluf, Yuval Atzmon, Or Patashnik, Amit H. Bermano, Gal Chechik, and Daniel Cohen-Or. An Image is Worth One Word: Personalizing Text-to-Image Generation using Textual Inversion. arXiv preprint arXiv:2208.01618, 2022.

- Gong et al. [2023] Rui Gong, Martin Danelljan, Han Sun, Julio Delgado Mangas, and Luc Van Gool. Prompting Diffusion Representations for Cross-Domain Semantic Segmentation. arXiv preprint arXiv:2307.02138, 2023.

- Hertz et al. [2022] Amir Hertz, Ron Mokady, Jay Tenenbaum, Kfir Aberman, Yael Pritch, and Daniel Cohen-Or. Prompt-to-Prompt Image Editing with Cross Attention Control. arXiv preprint arXiv:2208.01626, 2022.

- Hou et al. [2021] Luwei Hou, Yu Zhang, Kui Fu, and Jia Li. Informative and consistent correspondence mining for cross-domain weakly supervised object detection. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, pages 9929–9938, 2021.

- Hoyer et al. [2022] Lukas Hoyer, Dengxin Dai, and Luc Van Gool. DAFormer: Improving Network Architectures and Training Strategies for Domain-Adaptive Semantic Segmentation. 2022 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), pages 9914–9925, 2022.

- Inoue et al. [2018] Naoto Inoue, Ryosuke Furuta, Toshihiko Yamasaki, and Kiyoharu Aizawa. Cross-Domain Weakly-Supervised Object Detection Through Progressive Domain Adaptation. In IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), pages 5001–5009, 2018.

- Jia et al. [2021] Chao Jia, Yinfei Yang, Ye Xia, Yi-Ting Chen, Zarana Parekh, Hieu Pham, Quoc V. Le, Yunhsuan Sung, Zhen Li, and Tom Duerig. Scaling Up Visual and Vision-Language Representation Learning With Noisy Text Supervision. arXiv preprint arXiv:2102.05918, 2021.

- Jiang et al. [2021] Junguang Jiang, Baixu Chen, Jianmin Wang, and Mingsheng Long. Decoupled adaptation for cross-domain object detection. arXiv preprint arXiv:2110.02578, 2021.

- Kirillov et al. [2019] Alexander Kirillov, Ross B. Girshick, Kaiming He, and Piotr Dollár. Panoptic Feature Pyramid Networks. 2019 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), pages 6392–6401, 2019.

- Li et al. [2023] Junnan Li, Dongxu Li, Silvio Savarese, and Steven Hoi. BLIP-2: Bootstrapping Language-Image Pre-training with Frozen Image Encoders and Large Language Models. arXiv preprint arXiv:2301.12597, 2023.

- Li et al. [2022] Yangguang Li, Feng Liang, Lichen Zhao, Yufeng Cui, Wanli Ouyang, Jing Shao, Fengwei Yu, and Junjie Yan. Supervision Exists Everywhere: A Data Efficient Contrastive Language-Image Pre-training Paradigm. arXiv preprint arXiv:2110.05208, 2022.

- Liu et al. [2021] Ze Liu, Han Hu, Yutong Lin, Zhuliang Yao, Zhenda Xie, Yixuan Wei, Jia Ning, Yue Cao, Zheng Zhang, Li Dong, Furu Wei, and Baining Guo. Swin Transformer V2: Scaling Up Capacity and Resolution. 2022 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), pages 11999–12009, 2021.

- Luo et al. [2023] Grace Luo, Lisa Dunlap, Dong Huk Park, Aleksander Holynski, and Trevor Darrell. Diffusion hyperfeatures: Searching through time and space for semantic correspondence. arXiv preprint arXiv:2305.14334, 2023.

- Ning et al. [2023] Jia Ning, Chen Li, Zheng Zhang, Zigang Geng, Qi Dai, Kun He, and Han Hu. All in Tokens: Unifying Output Space of Visual Tasks via Soft Token. arXiv preprint arXiv:2301.02229, 2023.

- Ouyang et al. [2021] Shengxiong Ouyang, Xinglu Wang, Kejie Lyu, and Yingming Li. Pseudo-label generation-evaluation framework for cross domain weakly supervised object detection. In 2021 IEEE International Conference on Image Processing (ICIP), pages 724–728. IEEE, 2021.

- Radford et al. [2021] Alec Radford, Jong Wook Kim, Chris Hallacy, Aditya Ramesh, Gabriel Goh, Sandhini Agarwal, Girish Sastry, Amanda Askell, Pamela Mishkin, Jack Clark, Gretchen Krueger, and Ilya Sutskever. Learning Transferable Visual Models From Natural Language Supervision. International Conference on Machine Learning, pages 8748–8763, 2021.

- Ramesh et al. [2022] Aditya Ramesh, Prafulla Dhariwal, Alex Nichol, Casey Chu, and Mark Chen. Hierarchical Text-Conditional Image Generation with CLIP Latents. arXiv preprint arXiv:2204.06125, 2022.

- Rao et al. [2022] Yongming Rao, Wenliang Zhao, Guangyi Chen, Yansong Tang, Zheng Zhu, Guan Huang, Jie Zhou, and Jiwen Lu. DenseCLIP: Language-Guided Dense Prediction with Context-Aware Prompting. 2022 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), pages 18061–18070, 2022.

- Ren et al. [2017] Shaoqing Ren, Kaiming He, Ross Girshick, and Jian Sun. Faster R-CNN: Towards Real-Time Object Detection with Region Proposal Networks. IEEE Transactions on Pattern Analysis and Machine Intelligence, 39(6):1137–1149, 2017.

- Rombach et al. [2022] Robin Rombach, Andreas Blattmann, Dominik Lorenz, Patrick Esser, and Bjorn Ommer. High-Resolution Image Synthesis with Latent Diffusion Models. 2022 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), pages 10674–10685, 2022.

- Ronneberger et al. [2015] Olaf Ronneberger, Philipp Fischer, and Thomas Brox. U-Net: Convolutional Networks for Biomedical Image Segmentation. In Medical Image Computing and Computer-Assisted Intervention – MICCAI 2015, pages 234–241. Springer International Publishing, Cham, 2015.

- Ruiz et al. [2022] Nataniel Ruiz, Yuanzhen Li, Varun Jampani, Yael Pritch, Michael Rubinstein, and Kfir Aberman. DreamBooth: Fine Tuning Text-to-Image Diffusion Models for Subject-Driven Generation. arXiv preprint arXiv:2208.12242, 2022.

- Saharia et al. [2022] Chitwan Saharia, William Chan, Saurabh Saxena, Lala Li, Jay Whang, Emily L. Denton, Seyed Kamyar Seyed Ghasemipour, Burcu Karagol Ayan, Seyedeh Sara Mahdavi, Raphael Gontijo Lopes, Tim Salimans, Jonathan Ho, David J. Fleet, and Mohammad Norouzi. Photorealistic Text-to-Image Diffusion Models with Deep Language Understanding. arXiv preprint arXiv:2205.11487, 2022.

- Sakaridis et al. [2019] Christos Sakaridis, Dengxin Dai, and Luc Van Gool. Guided Curriculum Model Adaptation and Uncertainty-Aware Evaluation for Semantic Nighttime Image Segmentation. 2019 IEEE/CVF International Conference on Computer Vision (ICCV), pages 7373–7382, 2019.

- Schuhmann et al. [2021] Christoph Schuhmann, Richard Vencu, Romain Beaumont, Robert Kaczmarczyk, Clayton Mullis, Aarush Katta, Theo Coombes, Jenia Jitsev, and Aran Komatsuzaki. LAION-400M: Open Dataset of CLIP-Filtered 400 Million Image-Text Pairs. arXiv preprint arXiv:2111.02114, 2021.

- Schuhmann et al. [2022] Christoph Schuhmann, Romain Beaumont, Richard Vencu, Cade Gordon, Ross Wightman, Mehdi Cherti, Theo Coombes, Aarush Katta, Clayton Mullis, Mitchell Wortsman, Patrick Schramowski, Srivatsa Kundurthy, Katherine Crowson, Ludwig Schmidt, Robert Kaczmarczyk, and Jenia Jitsev. LAION-5B: An open large-scale dataset for training next generation image-text models. arXiv preprint arXiv:2210.08402, 2022.

- Silberman et al. [2012] Nathan Silberman, Derek Hoiem, Pushmeet Kohli, and Rob Fergus. Indoor Segmentation and Support Inference from RGBD Images. European Conference on Computer Vision (ECCV), 2012.

- Tang et al. [2023] Luming Tang, Menglin Jia, Qianqian Wang, Cheng Perng Phoo, and Bharath Hariharan. Emergent correspondence from image diffusion. arXiv preprint arXiv:2306.03881, 2023.

- Topal et al. [2022] Barış Batuhan Topal, Deniz Yuret, and Tevfik Metin Sezgin. Domain-adaptive self-supervised pre-training for face & body detection in drawings. arXiv preprint arXiv:2211.10641, 2022.

- Tzeng et al. [2017] Eric Tzeng, Judy Hoffman, Kate Saenko, and Trevor Darrell. Adversarial discriminative domain adaptation. In Proceedings of the IEEE conference on computer vision and pattern recognition, pages 7167–7176, 2017.

- Vidit et al. [2023] Vidit Vidit, Martin Engilberge, and Mathieu Salzmann. CLIP the Gap: A Single Domain Generalization Approach for Object Detection. 2023 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), pages 3219–3229, 2023.

- Wang et al. [2023a] Peng Wang, Shijie Wang, Junyang Lin, Shuai Bai, Xiaohuan Zhou, Jingren Zhou, Xinggang Wang, and Chang Zhou. ONE-PEACE: Exploring One General Representation Model Toward Unlimited Modalities. arXiv preprint arXiv:2305.11172, 2023a.

- Wang et al. [2022] Wenhai Wang, Jifeng Dai, Zhe Chen, Zhenhang Huang, Zhiqi Li, Xizhou Zhu, Xiao-hua Hu, Tong Lu, Lewei Lu, Hongsheng Li, Xiaogang Wang, and Y. Qiao. InternImage: Exploring Large-Scale Vision Foundation Models with Deformable Convolutions. 2023 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), pages 14408–14419, 2022.

- Wang et al. [2023b] Wen Wang, Hangbo Bao, Li Dong, Johan Bjorck, Zhiliang Peng, Qiangbo Liu, Kriti Aggarwal, Owais Khan Mohammed, Saksham Singhal, Subhojit Som, and Furu Wei. Image as a Foreign Language: BEIT Pretraining for Vision and Vision-Language Tasks. 2023 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), pages 19175–19186, 2023b.

- Wu et al. [2023] Weijia Wu, Yuzhong Zhao, Mike Zheng Shou, Hong Zhou, and Chunhua Shen. DiffuMask: Synthesizing Images with Pixel-level Annotations for Semantic Segmentation Using Diffusion Models. arXiv preprint arXiv:2303.11681, 2023.

- Xu et al. [2022] Yunqiu Xu, Yifan Sun, Zongxin Yang, Jiaxu Miao, and Yi Yang. H2fa r-cnn: Holistic and hierarchical feature alignment for cross-domain weakly supervised object detection. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, pages 14329–14339, 2022.

- Yu et al. [2022] Jiahui Yu, Yuanzhong Xu, Jing Yu Koh, Thang Luong, Gunjan Baid, Zirui Wang, Vijay Vasudevan, Alexander Ku, Yinfei Yang, Burcu Karagol Ayan, Benton C. Hutchinson, Wei Han, Zarana Parekh, Xin Li, Han Zhang, Jason Baldridge, and Yonghui Wu. Scaling Autoregressive Models for Content-Rich Text-to-Image Generation. arXiv preprint arXiv:2206.10789, 2022.

- Zhao et al. [2023] Wenliang Zhao, Yongming Rao, Zuyan Liu, Benlin Liu, Jie Zhou, and Jiwen Lu. Unleashing Text-to-Image Diffusion Models for Visual Perception. arXiv preprint arXiv:2303.02153, 2023.

- Zhao et al. [2020] Zhen Zhao, Yuhong Guo, Haifeng Shen, and Jieping Ye. Adaptive object detection with dual multi-label prediction. In Computer Vision–ECCV 2020: 16th European Conference, Glasgow, UK, August 23–28, 2020, Proceedings, Part XXVIII 16, pages 54–69. Springer, 2020.

- Zhou et al. [2017] Bolei Zhou, Hang Zhao, Xavier Puig, Sanja Fidler, Adela Barriuso, and Antonio Torralba. Scene Parsing through ADE20K Dataset. 2017 IEEE Conference on Computer Vision and Pattern Recognition (CVPR), pages 5122–5130, 2017.

\thetitle

Supplementary Materials

A Cross-attention analysis

Qualitative image-to-image variation analysis. We present a qualitative and quantitative analysis of the effect of off-target class names added to the prompt. In Fig.S1, we use the stable diffusion image to image (img2img) variation pipeline (with the original Stable Diffusion 1.5 weights) to qualitatively analyze the effects of prompts with off-target classes. The img2img variation pipeline encodes a real image into a latent representation, adds a user-specified amount of noise to the latent representation, and de-noises it (according to a user-specified prompt) to generate a variation on the original image. The amount of noise added is dictated by a strength ratio indicating how much variation should occur. A higher ratio results in more added noise and more denoising steps, allowing a relatively higher impact of the new text prompt on the image. We find that

𝒞 ClassNames subscript 𝒞 ClassNames\mathcal{C}_{\text{ClassNames}}caligraphic_C start_POSTSUBSCRIPT ClassNames end_POSTSUBSCRIPT (see caption for details) results in variations that incorporate the off-target classes. This effect is most clear looking across the panels left to right in which objects belonging to off-target classes (an airplane and a train) become more prominent. These qualitative results imply that this prompt modifies the latent representation to incorporate information about off-target classes, potentially making the downstream task more difficult. In contrast, using the BLIP prompt changes the image, but the semantics (position of objects, classes present) of the image variation are significantly closer to the original. These results suggest a mechanism for how off-target classes may impact our vision models. We quantitatively measure this effect using a fully trained Oracle model in the following section.

Copy-Paste Experiment. An interesting property in Fig.4 is that the word bottle has strong cross-attention over the neck of the bird. We hypothesize that diffusion models seek to find the nearest match for each token since they are trained to generate images that correspond to the prompt. We test this hypothesis on a base image of a dog and a bird. We first visualize the cross-attention maps for a set of object labels. We find that the words bottle, cat, and horse have a strong cross-attention to the bird, dog, and dog, respectively. We paste a bottle, cat, and horse into the base image to see if the diffusion model will localize the “correct” objects if they are present. In Fig.S2, we show that the cross-attention maps prefer to localize the “correct” object, suggesting our hypothesis is correct.

Averaged EOS Tokens: Averaging vs. EOS? Averaged EOS Tokens create diffuse attention maps that empirically harm performance. What is the actual cause of the decrease in performance? Is it averaging, or is it the usage of many EOS tokens? We replace the averaged EOS tokens with single prompt EOS tokens and find that the attention maps are still diffuse. This indicates that the usage of EOS tokens is the primary cause of the diffuse attention maps and not the averaging.

Quantitative effect of 𝒞 ClassNames subscript 𝒞 ClassNames\mathcal{C}_{\text{ClassNames}}caligraphic_C start_POSTSUBSCRIPT ClassNames end_POSTSUBSCRIPT on Oracle model. To quantify the impact of the off-target classes on the downstream vision task, we measure the averaged pixel-wise scores (normalized via Softmax) per class when passing the

𝒞 ClassNames subscript 𝒞 ClassNames\mathcal{C}_{\text{ClassNames}}caligraphic_C start_POSTSUBSCRIPT ClassNames end_POSTSUBSCRIPT to the Oracle segmentation model for Pascal VOC 2012 (Fig.S4). We compare this to the original oracle prompt. We find that including the off-target prompts significantly increases the probability of a pixel being misclassified as one of the semantically nearby off-target classes. For example, if the original image contains a cow, including the words dog and sheep, it significantly raises the probability of misclassifying the pixels belonging to the cow as pixels belonging to a dog or a sheep. These results indicate that the task-specific head picks up the effect of off-target classes and is incorporated into the output.

Figure S1: Qualitative image-to-image variation. An untrained stable diffusion model is passed an image to perform image-to-image variation. The number of denoising steps conducted increases from left to right (5 to 45 out of a total of 50). On the top row, we pass all the class names in Pascal VOC 2012: “background airplane bicycle bird boat bottle bus car cat chair cow dining table dog horse motorcycle person potted plant sheep sofa train television”. In the bottom row we pass the BLIP caption “a bird and a dog”.

Figure S2: Copy-Paste Experiment. A bottle, a cat, and a horse from different images are copied and pasted into our base image to see how the cross-attention maps change. The label on the left describes the category of the item that has been pasted into the image. The labels above each map describe the cross-attention map corresponding to the token for that label.

Figure S3: Averaging vs. EOS. In [53], for each class name, the EOS token from 80 prompts (containing the class name) was averaged together. The averaged EOS tokens for each class were concatenated together and passed to the diffusion model as text input. We explore if averaging drives the diffuse nature of the cross-attention maps. We replace the 80 prompt templates with a single prompt template: “a photo of a {class name}” and visualize the cross-attention maps. In the top row, we show the averaged template EOS tokens. In the bottom row, we show the single template EOS tokens.

Figure S4: Impact of off-target classes on semantic segmentation performance. The matrices show normalized scores averaged over pixels on Pascal VOC 2012 for an oracle-trained model when receiving either present class names (left) or all class names (right).

B Additional ADE20K Results

Table S1: Semantic segmentation fast schedule on ADE20K. Our method has a large advantage over prior work on the fast schedule with significantly better performance in both the single-scale and multi-scale evaluations for 4k and 8k iterations.

Table S2: ADE20K - Oracle Precision-Recall Ablations We modify the oracle captions by randomly adding or removing classes such that the precision and recall are 0.50, 0.75, or 1.00. We train models on ADE20K on a fast schedule (4K) using these captions. The 4k iteration oracle equivalent is highlighted in blue.

Figure S5: Recall analysis. ADE20k mIOU per image with respect to the recall of classes present in the caption. We embedded each word in our caption with CLIP’s text encoder. We considered a cosine similarity of ≥0.9 absent 0.9\geq 0.9≥ 0.9 with the embedded class name as a match. Linear regression analysis shows positive correlations between recall and mIoU (r=0.28 𝑟 0.28 r=0.28 italic_r = 0.28).

Figure S6: Object size analysis. ADE20k IOU per object image with respect to the relative object size (pixels divided by total pixels). Linear regression analysis shows positive correlations between relative object size and the IoU-score of a class (r=0.40 𝑟 0.40 r=0.40 italic_r = 0.40).

C Qualitative Examples

Figure S7: Ground truth examples of the tokenized datasets.

Figure S8: Textual inversion and Dreambooth tokens of Cityscapes to Dark Zurich.

Figure S9: Textual inversion and Dreambooth tokens of VOC to Comic.

Figure S10: Textual inversion and Dreambooth tokens of VOC to Watercolor.

Figure S11: Predictions (top) and Ground Truth (bottom) visualizations for Pascal VOC2012.

Figure S12: Predictions (top) and Ground Truth (bottom) visualizations for ADE20K.

Figure S13: Predictions (top) and Ground Truth (bottom) visualizations for NYUv2 Depth.

Figure S14: Depth Estimation Comparison: Image, Ground Truth, and Prediction visualizations for Midas, VPD, and TADP (ours) in NYUv2 Depth. Black boxes (red on original image) show where TADP is better than Midas and/or VPD.

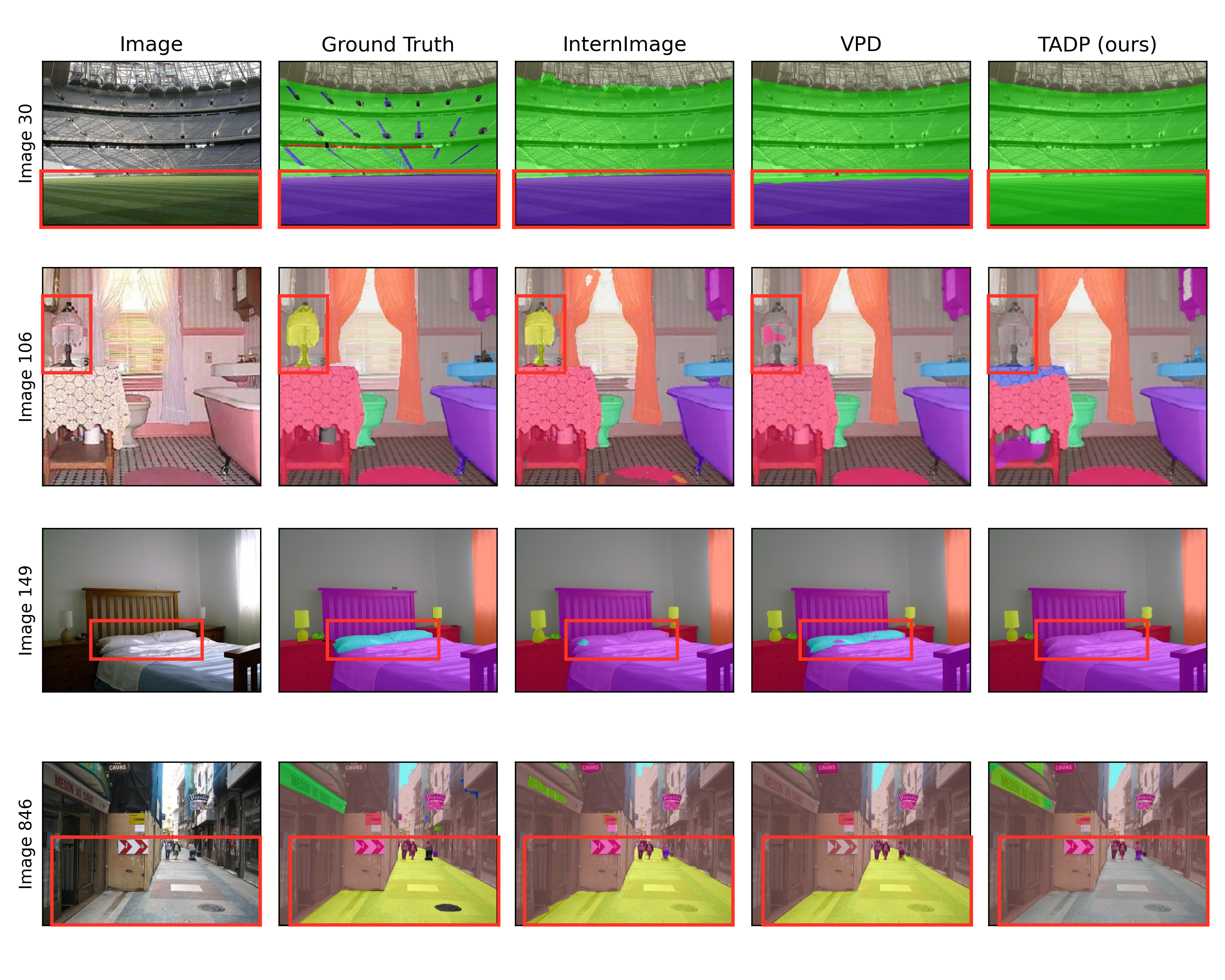

Figure S15: Image Segmentation Comparison: Image, Ground Truth, and Prediction visualizations for InternImage, VPD, and TADP (ours) in ADE20K. Red boxes show where TADP is better than InternImage and/or VPD.

Figure S16: Image Segmentation Comparison: Image, Ground Truth, and Prediction visualizations for InternImage, VPD, and TADP (ours) in ADE20K. Red boxes show where TADP is better than InternImage and/or VPD.

Figure S17: Depth Estimation Comparison: Image, Ground Truth, and Prediction visualizations for Midas, VPD, and TADP (ours) in NYUv2 Depth. TADP is worse than Midas and/or VPD in these images in terms of the general scale

Figure S18: Image Segmentation Comparison: Image, Ground Truth, and Prediction visualizations for InternImage, VPD, and TADP (ours) in ADE20K. Red boxes show where TADP is worse than InternImage and/or VPD.

Figure S19: Cross-domain Image Segmentation Comparison: Image, Ground Truth, and Prediction visualizations for Refign-DAFormer, and TADP (ours) for Cityscapes to Dark Zurich Val. Red boxes show where TADP is better than Refign-DAFormer.

Figure S20: Cross-domain Object Detection Comparison: Image, Ground Truth, and Prediction visualizations for DASS, and TADP (ours) for Pascal VOC to Watercolor2k. Red boxes show the detections of each model. Notice that TADP not only beats DASS mostly, but also finds more objects than the ones annotated in the ground truth.

D Implementation Details

To isolate the effects of our text-image alignment method, we ensure our model setup precisely follows prior work. Following VPD [53], we jointly train the task-specific head and the diffusion backbone. The learning rate of the backbone is set to 1/10 the learning rate of the head to preserve the benefits of pre-training better. We describe the different tasks by describing H 𝐻 H italic_H and ℒ H subscript ℒ 𝐻\mathcal{L}_{H}caligraphic_L start_POSTSUBSCRIPT italic_H end_POSTSUBSCRIPT. We use an FPN [24] head with a cross-entropy loss for segmentation. We use the same convolutional head used in VPD for monocular depth estimation with a Scale-Invariant loss [12]. For object detection, we use a Faster-RCNN head with the standard Faster-RCNN loss [34]1 1 1 Object detection was not explored in VPD.. Further details of the training setup can be found in Tab.S3 and Tab.S4. In our single-domain tables, we include our reproduction of VPD, denoted with a (R). We compute our relative gains with our reproduced numbers, with the same seed for all experiments.

(a)ADE20k - full schedule

(b)ADE20k - fast schedule 8k

(c)ADE20k - fast schedule 4k

(d)NYUv2

(e)NYUv2 - fast schedule

(f)Pascal VOC

Table S3: Single-Domain Hyperparameters.

(a)Cityscapes →→\rightarrow→ Dark Zurich & NightTime Driving

(b)Pascal VOC →→\rightarrow→ Watercolor & Comic

(c)Dreambooth Hyperparameters

(d)Textual Inversion Hyperparameters

Table S4: Cross-Domain Hyperparameters.

D.1 Model personalization

For textual inversion, we use 500 images from DZ-train and five images for W2K and C2K and train all tokens for 1000 steps. We use a constant learning rate scheduler with a learning rate of 5e−4 5 𝑒 4 5e-4 5 italic_e - 4 and no warmup. For Dreambooth, we use the same images as in textual inversion but train the model for 500 steps (DZ) steps or 1000 steps (W2K and C2K). We use a learning rate of 2e−6 2 𝑒 6 2e-6 2 italic_e - 6 with a constant learning rate scheduler and no warmup. We use no prior preservation loss.

Xet Storage Details

- Size:

- 86.3 kB

- Xet hash:

- 93d1314fddd1a6d2ea8d9b21aa7c065549f8f2a4f4fa501e1ba9e3fd8305462b

Xet efficiently stores files, intelligently splitting them into unique chunks and accelerating uploads and downloads. More info.