Buckets:

Title: High-Fidelity Subject-Driven Fine-Tuning for Diffusion Models

URL Source: https://arxiv.org/html/2312.00079

Markdown Content: Zhonghao Wang 1,2 1 2{}^{1,2}start_FLOATSUPERSCRIPT 1 , 2 end_FLOATSUPERSCRIPT, Wei Wei 4 4{}^{4}start_FLOATSUPERSCRIPT 4 end_FLOATSUPERSCRIPT, Yang Zhao 1 1{}^{1}start_FLOATSUPERSCRIPT 1 end_FLOATSUPERSCRIPT, Zhisheng Xiao 1 1{}^{1}start_FLOATSUPERSCRIPT 1 end_FLOATSUPERSCRIPT,

Mark Hasegawa-Johnson 2 2{}^{2}start_FLOATSUPERSCRIPT 2 end_FLOATSUPERSCRIPT, Humphrey Shi 2,3 2 3{}^{2,3}start_FLOATSUPERSCRIPT 2 , 3 end_FLOATSUPERSCRIPT, Tingbo Hou 1 1{}^{1}start_FLOATSUPERSCRIPT 1 end_FLOATSUPERSCRIPT

1 1{}^{1}start_FLOATSUPERSCRIPT 1 end_FLOATSUPERSCRIPT Google, 2 2{}^{2}start_FLOATSUPERSCRIPT 2 end_FLOATSUPERSCRIPT UIUC, 3 3{}^{3}start_FLOATSUPERSCRIPT 3 end_FLOATSUPERSCRIPT Georgia Tech, 4 4{}^{4}start_FLOATSUPERSCRIPT 4 end_FLOATSUPERSCRIPT Accenture

Abstract

This paper explores advancements in high-fidelity personalized image generation through the utilization of pre-trained text-to-image diffusion models. While previous approaches have made significant strides in generating versatile scenes based on text descriptions and a few input images, challenges persist in maintaining the subject fidelity within the generated images. In this work, we introduce an innovative algorithm named HiFi Tuner to enhance the appearance preservation of objects during personalized image generation. Our proposed method employs a parameter-efficient fine-tuning framework, comprising a denoising process and a pivotal inversion process. Key enhancements include the utilization of mask guidance, a novel parameter regularization technique, and the incorporation of step-wise subject representations to elevate the sample fidelity. Additionally, we propose a reference-guided generation approach that leverages the pivotal inversion of a reference image to mitigate unwanted subject variations and artifacts. We further extend our method to a novel image editing task: substituting the subject in an image through textual manipulations. Experimental evaluations conducted on the DreamBooth dataset using the Stable Diffusion model showcase promising results. Fine-tuning solely on textual embeddings improves CLIP-T score by 3.6 points and improves DINO score by 9.6 points over Textual Inversion. When fine-tuning all parameters, HiFi Tuner improves CLIP-T score by 1.2 points and improves DINO score by 1.2 points over DreamBooth, establishing a new state of the art.

1 Introduction

Figure 1: Illustration of HiFi Tuner. We first learn the step-wise subject representations with subject source images and masks. Then we select and transform the reference image, and use DDIM inversion to obtain its noise latent trajectory. Finally, we generate an image controlled by the prompt, the step-wise subject representations and the reference subject guidance.

Diffusion models [37, 14] have demonstrated a remarkable success in producing realistic and diverse images. The advent of large-scale text-to-image diffusion models [33, 30, 29], leveraging expansive web-scale training datasets [34, 7], has enabled the generation of high-quality images that align closely with textual guidance. Despite this achievement, the training data remains inherently limited in its coverage of all possible subjects. Consequently, it becomes infeasible for diffusion models to accurately generate images of specific, unseen subjects based solely on textual descriptions. As a result, personalized generation has emerged as a pivotal research problem. This approach seeks to fine-tune the model with minimal additional costs, aiming to generate images of user-specified subjects that seamlessly align with the provided text descriptions.

We identify three drawbacks of existing popular methods for subject-driven fine-tuning [31, 15, 9, 32]. Firstly, a notable imbalance exists between sample quality and parameter efficiency in the fine-tuning process. For example, Textual Inversion optimizes only a few parameters in the text embedding space, resulting in poor sample fidelity. Conversely, DreamBooth achieves commendable sample fidelity but at the cost of optimizing a substantial number of parameters. Ideally, there should be a parameter-efficient method that facilitates the generation of images with satisfactory sample fidelity while remaining lightweight for improved portability. Secondly, achieving a equilibrium between sample fidelity and the flexibility to render objects in diverse scenes poses a significant challenge. Typically, as fine-tuning iterations increase, the sample fidelity improves, but the flexibility of the scene coverage diminishes. Thirdly, current methods struggle to accurately preserve the appearance of the input object. Due to the extraction of subject representations from limited data, these representations offer weak constraints to the diffusion model. Consequently, unwanted variations and artifacts may appear in the generated subject.

In this study, we introduce a novel framework named HiFi Tuner for subject fine-tuning that prioritizes the parameter efficiency, thereby enhancing sample fidelity, preserving the scene coverage, and mitigating undesired subject variations and artifacts. Our denoising process incorporates a mask guidance to reduce the influence of the image background on subject representations. Additionally, we introduce a novel parameter regularization method to sustain the model’s scene coverage capability and design a step-wise subject representation mechanism that adapts to parameter functions at different denoising steps. We further propose a reference-guided generation method that leverages pivotal inversion of a reference image. By integrating guiding information into the step-wise denoising process, we effectively address issues related to unwanted variations and artifacts in the generated subjects. Notably, our framework demonstrates versatility by extending its application to a novel image editing task: substituting the subject in an image with a user-specified subject through textual manipulations.

We summarize the contributions of our work as follows. Firstly, we identify and leverage three effective techniques to enhance the subject representation capability of textual embeddings. This improvement significantly aids the diffusion model in generating samples with heightened fidelity. Secondly, we introduce a novel reference-guided generation process that successfully addresses unwanted subject variations and artifacts in the generated images. Thirdly, we extend the application of our methodology to a new subject-driven image editing task, showcasing its versatility and applicability in diverse scenarios. Finally, we demonstrate the generic nature of HiFi Tuner by showcasing its effectiveness in enhancing the performance of both the Textual Inversion and the DreamBooth.

2 Related Works

Subject-driven text-to-image generation. This task requires the generative models generate the subject provided by users in accordance with the textual prompt description. Pioneer works [4, 26] utilize Generative Adversarial Networks (GAN) [10] to synthesize images of a particular instance. Later works benefit from the success of diffusion models [30, 33] to achieve a superior faithfulness in the personalized generation. Some works [6, 35] rely on retrieval-augmented architecture to generate rare subjects. However, they use weakly-supervised data which results in an unsatisfying faithfullness for the generated images. There are encoder-based methods [5, 16, 36] that encode the reference subjects as a guidance for the diffusion process. However, these methods consume a huge amount of time and resources to train the encoder and does not perform well for out-of-domain subjects. Other works [31, 9] fine-tune the components of diffusion models with the provided subject images. Our method follows this line of works as our models are faithful and generic in generating rare and unseen subjects.

Text-guided image editing. This task requires the model to edit an input image according to the modifications described by the text. Early works [27, 9] based on diffusion models [30, 33] prove the effectiveness of manipulating textual inputs for editing an image. Further works [1, 24] propose to blend noise with the input image for the generation process to maintain the layout of the input image. Prompt-to-Prompt [12, 25] manipulates the cross attention maps from the image latent to the textual embedding to edit an image and maintain its layout. InstructPix2Pix [2] distills the diffusion model with image editing pairs synthesized by Prompt-to-Prompt to implement the image editing based on instructions.

3 Methods

Figure 2: The framework of HiFi Tuner. The grey arrows stand for the data flow direction. The red arrows stand for the gradient back propagation direction. SAM 𝑆 𝐴 𝑀 SAM italic_S italic_A italic_M stands for the Segment Anything [18] model. DM 𝐷 𝑀 DM italic_D italic_M stands for the Stable Diffusion [30] model. DDIM 𝐷 𝐷 𝐼 𝑀 DDIM italic_D italic_D italic_I italic_M and DDIM−1 𝐷 𝐷 𝐼 superscript 𝑀 1{DDIM}^{-1}italic_D italic_D italic_I italic_M start_POSTSUPERSCRIPT - 1 end_POSTSUPERSCRIPT stands for the DDIM denoising step and inversion step respectively.

In this section, we elaborate HiFi Tuner in details. We use the denoising process to generate subjects with appearance variations and the inversion process to preserve the details of subjects. In section 3.1, we present some necessary backgrounds for our work. In section 3.2, we introduce the three proposed techniques that help preserving the subject identity. In section 3.3, we introduce the reference-guided generation technique, which merits the image inversion process to further preserve subject details. In section 3.4, we introduce an extension of our work on a novel image editing application – personalized subject replacement with only textual prompt edition.

3.1 Backgrounds

Stable diffusion[30] is a widely adopted framework in the realm of text-to-image diffusion models. Unlike other methods [33, 29], Stable diffusion is a latent diffusion model, where the diffusion model is trained within the latent space of a Variational Autoencoder (VAE). To accomplish text-to-image generation, a text prompt undergoes encoding into textual embeddings c 𝑐 c italic_c using a CLIP text encoder[28]. Subsequently, a random Gaussian noise latent x T subscript 𝑥 𝑇 x_{T}italic_x start_POSTSUBSCRIPT italic_T end_POSTSUBSCRIPT is initialized. The process then recursively denoises noisy latent x t subscript 𝑥 𝑡 x_{t}italic_x start_POSTSUBSCRIPT italic_t end_POSTSUBSCRIPT through a noise predictor network ϵ θ subscript italic-ϵ 𝜃\epsilon_{\theta}italic_ϵ start_POSTSUBSCRIPT italic_θ end_POSTSUBSCRIPT with the conditioning of c 𝑐 c italic_c. Finally, the VAE decoder is employed to project the denoised latent x 0 subscript 𝑥 0 x_{0}italic_x start_POSTSUBSCRIPT 0 end_POSTSUBSCRIPT onto an image. During the sampling process, a commonly applied mechanism involves classifier-free guidance [13] to enhance sample quality. Additionally, deterministic samplers, such as DDIM [38], are employed to improve sampling efficiency. The denoising process can be expressed as

x t−1=F(t)(x t,c,ϕ)=β tx t−γ t(wϵ θ(x t,c)+(1−w)ϵ θ(x t,ϕ)).subscript 𝑥 𝑡 1 superscript 𝐹 𝑡 subscript 𝑥 𝑡 𝑐 italic-ϕ subscript 𝛽 𝑡 subscript 𝑥 𝑡 subscript 𝛾 𝑡 𝑤 subscript italic-ϵ 𝜃 subscript 𝑥 𝑡 𝑐 1 𝑤 subscript italic-ϵ 𝜃 subscript 𝑥 𝑡 italic-ϕ\begin{split}x_{t-1}&=F^{(t)}(x_{t},c,\phi)\ &=\beta_{t}x_{t}-\gamma_{t}(w\epsilon_{\theta}(x_{t},c)+(1-w)\epsilon_{\theta}% (x_{t},\phi)).\end{split}start_ROW start_CELL italic_x start_POSTSUBSCRIPT italic_t - 1 end_POSTSUBSCRIPT end_CELL start_CELL = italic_F start_POSTSUPERSCRIPT ( italic_t ) end_POSTSUPERSCRIPT ( italic_x start_POSTSUBSCRIPT italic_t end_POSTSUBSCRIPT , italic_c , italic_ϕ ) end_CELL end_ROW start_ROW start_CELL end_CELL start_CELL = italic_β start_POSTSUBSCRIPT italic_t end_POSTSUBSCRIPT italic_x start_POSTSUBSCRIPT italic_t end_POSTSUBSCRIPT - italic_γ start_POSTSUBSCRIPT italic_t end_POSTSUBSCRIPT ( italic_w italic_ϵ start_POSTSUBSCRIPT italic_θ end_POSTSUBSCRIPT ( italic_x start_POSTSUBSCRIPT italic_t end_POSTSUBSCRIPT , italic_c ) + ( 1 - italic_w ) italic_ϵ start_POSTSUBSCRIPT italic_θ end_POSTSUBSCRIPT ( italic_x start_POSTSUBSCRIPT italic_t end_POSTSUBSCRIPT , italic_ϕ ) ) . end_CELL end_ROW(1)

where β t subscript 𝛽 𝑡\beta_{t}italic_β start_POSTSUBSCRIPT italic_t end_POSTSUBSCRIPT and γ t subscript 𝛾 𝑡\gamma_{t}italic_γ start_POSTSUBSCRIPT italic_t end_POSTSUBSCRIPT are time-dependent constants; w 𝑤 w italic_w is the classifier-free guidance weight; ϕ italic-ϕ\phi italic_ϕ is the CLIP embedding for a null string.

Textual inversion[9]. As a pioneer work in personalized generation, Textual Inversion introduced the novel concept that a singular learnable textual token is adequate to represent a subject for the personalization. Specifically, the method keeps all the parameters of the diffusion model frozen, exclusively training a word embedding vector c s subscript 𝑐 𝑠 c_{s}italic_c start_POSTSUBSCRIPT italic_s end_POSTSUBSCRIPT using the diffusion objective:

𝔏 s(c s)=min c s‖ϵ θ(x t,[c,c s])−ϵ‖2 2,subscript 𝔏 𝑠 subscript 𝑐 𝑠 subscript subscript 𝑐 𝑠 superscript subscript norm subscript italic-ϵ 𝜃 subscript 𝑥 𝑡 𝑐 subscript 𝑐 𝑠 italic-ϵ 2 2\displaystyle\mathfrak{L}{s}(c{s})=\min_{c_{s}}|\epsilon_{\theta}(x_{t},[c,% c_{s}])-\epsilon|_{2}^{2},fraktur_L start_POSTSUBSCRIPT italic_s end_POSTSUBSCRIPT ( italic_c start_POSTSUBSCRIPT italic_s end_POSTSUBSCRIPT ) = roman_min start_POSTSUBSCRIPT italic_c start_POSTSUBSCRIPT italic_s end_POSTSUBSCRIPT end_POSTSUBSCRIPT ∥ italic_ϵ start_POSTSUBSCRIPT italic_θ end_POSTSUBSCRIPT ( italic_x start_POSTSUBSCRIPT italic_t end_POSTSUBSCRIPT , [ italic_c , italic_c start_POSTSUBSCRIPT italic_s end_POSTSUBSCRIPT ] ) - italic_ϵ ∥ start_POSTSUBSCRIPT 2 end_POSTSUBSCRIPT start_POSTSUPERSCRIPT 2 end_POSTSUPERSCRIPT ,(2)

where [c,c s]𝑐 subscript 𝑐 𝑠[c,c_{s}][ italic_c , italic_c start_POSTSUBSCRIPT italic_s end_POSTSUBSCRIPT ] represents replacing the object-related word embedding in the embedding sequence of the training caption (e.g. “a photo of A”) with the learnable embedding c s subscript 𝑐 𝑠 c_{s}italic_c start_POSTSUBSCRIPT italic_s end_POSTSUBSCRIPT. After c s subscript 𝑐 𝑠 c_{s}italic_c start_POSTSUBSCRIPT italic_s end_POSTSUBSCRIPT is optimized, this work applies F(t)(x t,[c,c s],ϕ)superscript 𝐹 𝑡 subscript 𝑥 𝑡 𝑐 subscript 𝑐 𝑠 italic-ϕ F^{(t)}(x_{t},[c,c_{s}],\phi)italic_F start_POSTSUPERSCRIPT ( italic_t ) end_POSTSUPERSCRIPT ( italic_x start_POSTSUBSCRIPT italic_t end_POSTSUBSCRIPT , [ italic_c , italic_c start_POSTSUBSCRIPT italic_s end_POSTSUBSCRIPT ] , italic_ϕ ) for generating personalized images from prompts.

Null-text inversion[25] method introduces an inversion-based approach to image editing, entailing the initial inversion of an image input to the latent space, followed by denoising with a user-provided prompt. This method comprises two crucial processes: a pivotal inversion process and a null-text optimization process. The pivotal inversion involves the reversal of the latent representation of an input image, denoted as x 0 subscript 𝑥 0 x_{0}italic_x start_POSTSUBSCRIPT 0 end_POSTSUBSCRIPT, back to a noise latent representation, x T subscript 𝑥 𝑇 x_{T}italic_x start_POSTSUBSCRIPT italic_T end_POSTSUBSCRIPT, achieved through the application of reverse DDIM. This process can be formulated as reparameterizing Eqn. (1) with w=1 𝑤 1 w=1 italic_w = 1:

x t+1=F−1(t)(x t,c)=β t¯x t+γ t¯ϵ θ(x t,c)subscript 𝑥 𝑡 1 superscript superscript 𝐹 1 𝑡 subscript 𝑥 𝑡 𝑐¯subscript 𝛽 𝑡 subscript 𝑥 𝑡¯subscript 𝛾 𝑡 subscript italic-ϵ 𝜃 subscript 𝑥 𝑡 𝑐 x_{t+1}={F^{-1}}^{(t)}(x_{t},c)=\overline{\beta_{t}}x_{t}+\overline{\gamma_{t}% }\epsilon_{\theta}(x_{t},c)italic_x start_POSTSUBSCRIPT italic_t + 1 end_POSTSUBSCRIPT = italic_F start_POSTSUPERSCRIPT - 1 end_POSTSUPERSCRIPT start_POSTSUPERSCRIPT ( italic_t ) end_POSTSUPERSCRIPT ( italic_x start_POSTSUBSCRIPT italic_t end_POSTSUBSCRIPT , italic_c ) = over¯ start_ARG italic_β start_POSTSUBSCRIPT italic_t end_POSTSUBSCRIPT end_ARG italic_x start_POSTSUBSCRIPT italic_t end_POSTSUBSCRIPT + over¯ start_ARG italic_γ start_POSTSUBSCRIPT italic_t end_POSTSUBSCRIPT end_ARG italic_ϵ start_POSTSUBSCRIPT italic_θ end_POSTSUBSCRIPT ( italic_x start_POSTSUBSCRIPT italic_t end_POSTSUBSCRIPT , italic_c )(3)

We denote the latent trajectory attained from the pivotal inversion as [x 0*,…,x T*]superscript subscript 𝑥 0…superscript subscript 𝑥 𝑇[x_{0}^{},...,x_{T}^{}][ italic_x start_POSTSUBSCRIPT 0 end_POSTSUBSCRIPT start_POSTSUPERSCRIPT * end_POSTSUPERSCRIPT , … , italic_x start_POSTSUBSCRIPT italic_T end_POSTSUBSCRIPT start_POSTSUPERSCRIPT * end_POSTSUPERSCRIPT ]. However, naively applying Eqn. (1) for x Tsuperscript subscript 𝑥 𝑇 x_{T}^{}italic_x start_POSTSUBSCRIPT italic_T end_POSTSUBSCRIPT start_POSTSUPERSCRIPT * end_POSTSUPERSCRIPT will not restore x 0superscript subscript 𝑥 0 x_{0}^{}italic_x start_POSTSUBSCRIPT 0 end_POSTSUBSCRIPT start_POSTSUPERSCRIPT * end_POSTSUPERSCRIPT, because ϵ θ(x t,c)≠ϵ θ(x t−1*,c)subscript italic-ϵ 𝜃 subscript 𝑥 𝑡 𝑐 subscript italic-ϵ 𝜃 superscript subscript 𝑥 𝑡 1 𝑐\epsilon_{\theta}(x_{t},c)\neq\epsilon_{\theta}(x_{t-1}^{},c)italic_ϵ start_POSTSUBSCRIPT italic_θ end_POSTSUBSCRIPT ( italic_x start_POSTSUBSCRIPT italic_t end_POSTSUBSCRIPT , italic_c ) ≠ italic_ϵ start_POSTSUBSCRIPT italic_θ end_POSTSUBSCRIPT ( italic_x start_POSTSUBSCRIPT italic_t - 1 end_POSTSUBSCRIPT start_POSTSUPERSCRIPT * end_POSTSUPERSCRIPT , italic_c ). To recover the original image, Null-text inversion trains a null-text embedding ϕ t subscript italic-ϕ 𝑡\phi_{t}italic_ϕ start_POSTSUBSCRIPT italic_t end_POSTSUBSCRIPT for each timestep t 𝑡 t italic_t force the the denoising trajectory to stay close to the forward trajectory [x 0,…,x T*]superscript subscript 𝑥 0…superscript subscript 𝑥 𝑇[x_{0}^{},...,x_{T}^{}][ italic_x start_POSTSUBSCRIPT 0 end_POSTSUBSCRIPT start_POSTSUPERSCRIPT * end_POSTSUPERSCRIPT , … , italic_x start_POSTSUBSCRIPT italic_T end_POSTSUBSCRIPT start_POSTSUPERSCRIPT * end_POSTSUPERSCRIPT ]. The learning objective is

𝔏 h(t)(ϕ t)=min ϕ t‖x t−1−F(t)(x t,c,ϕ t)‖2 2.superscript subscript 𝔏 ℎ 𝑡 subscript italic-ϕ 𝑡 subscript subscript italic-ϕ 𝑡 superscript subscript norm superscript subscript 𝑥 𝑡 1 superscript 𝐹 𝑡 subscript 𝑥 𝑡 𝑐 subscript italic-ϕ 𝑡 2 2\displaystyle\mathfrak{L}{h}^{(t)}(\phi{t})=\min_{\phi_{t}}|x_{t-1}^{}-F^{% (t)}(x_{t},c,\phi_{t})|_{2}^{2}.fraktur_L start_POSTSUBSCRIPT italic_h end_POSTSUBSCRIPT start_POSTSUPERSCRIPT ( italic_t ) end_POSTSUPERSCRIPT ( italic_ϕ start_POSTSUBSCRIPT italic_t end_POSTSUBSCRIPT ) = roman_min start_POSTSUBSCRIPT italic_ϕ start_POSTSUBSCRIPT italic_t end_POSTSUBSCRIPT end_POSTSUBSCRIPT ∥ italic_x start_POSTSUBSCRIPT italic_t - 1 end_POSTSUBSCRIPT start_POSTSUPERSCRIPT * end_POSTSUPERSCRIPT - italic_F start_POSTSUPERSCRIPT ( italic_t ) end_POSTSUPERSCRIPT ( italic_x start_POSTSUBSCRIPT italic_t end_POSTSUBSCRIPT , italic_c , italic_ϕ start_POSTSUBSCRIPT italic_t end_POSTSUBSCRIPT ) ∥ start_POSTSUBSCRIPT 2 end_POSTSUBSCRIPT start_POSTSUPERSCRIPT 2 end_POSTSUPERSCRIPT .(4)

After training, image editing techniques such as the prompt-to-prompt [12] can be applied with the learned null-text embeddings {ϕ t*}superscript subscript italic-ϕ 𝑡{\phi_{t}^{*}}{ italic_ϕ start_POSTSUBSCRIPT italic_t end_POSTSUBSCRIPT start_POSTSUPERSCRIPT * end_POSTSUPERSCRIPT } to allow manipulations of the input image.

3.2 Learning subject representations

We introduce three techniques for improved learning of the representations that better capture the given object.

Mask guidance One evident issue we observed in Textual Inversion is the susceptibility of the learned textual embedding, c s subscript 𝑐 𝑠 c_{s}italic_c start_POSTSUBSCRIPT italic_s end_POSTSUBSCRIPT, to significant influence from the backgrounds of training images. This influence often imposes constraints on the style and scene of generated samples and makes identity preservation more challenging due to the limited capacity of the textual embedding, which is spent on unwanted background details. We present a failure analysis of Textual Inversion in the Appendix A. To address this issue, we propose a solution involving the use of subject masks to confine the loss during the learning process of c s subscript 𝑐 𝑠 c_{s}italic_c start_POSTSUBSCRIPT italic_s end_POSTSUBSCRIPT. This approach ensures that the training of c s subscript 𝑐 𝑠 c_{s}italic_c start_POSTSUBSCRIPT italic_s end_POSTSUBSCRIPT predominantly focuses on subject regions within the source images. Specifically, binary masks of the subjects in the source images are obtained using Segment Anything (SAM) [18], an off-the-shelf instance segmentation model. The Eqn.(2) is updated to a masked loss:

𝔏 s(c s)=min c s‖M⊙(ϵ θ(x t,[c,c s])−ϵ)‖2 2,subscript 𝔏 𝑠 subscript 𝑐 𝑠 subscript subscript 𝑐 𝑠 superscript subscript norm direct-product 𝑀 subscript italic-ϵ 𝜃 subscript 𝑥 𝑡 𝑐 subscript 𝑐 𝑠 italic-ϵ 2 2\mathfrak{L}{s}(c{s})=\min_{c_{s}}|M\odot(\epsilon_{\theta}(x_{t},[c,c_{s}]% )-\epsilon)|_{2}^{2},fraktur_L start_POSTSUBSCRIPT italic_s end_POSTSUBSCRIPT ( italic_c start_POSTSUBSCRIPT italic_s end_POSTSUBSCRIPT ) = roman_min start_POSTSUBSCRIPT italic_c start_POSTSUBSCRIPT italic_s end_POSTSUBSCRIPT end_POSTSUBSCRIPT ∥ italic_M ⊙ ( italic_ϵ start_POSTSUBSCRIPT italic_θ end_POSTSUBSCRIPT ( italic_x start_POSTSUBSCRIPT italic_t end_POSTSUBSCRIPT , [ italic_c , italic_c start_POSTSUBSCRIPT italic_s end_POSTSUBSCRIPT ] ) - italic_ϵ ) ∥ start_POSTSUBSCRIPT 2 end_POSTSUBSCRIPT start_POSTSUPERSCRIPT 2 end_POSTSUPERSCRIPT ,(5)

where ⊙direct-product\odot⊙ stands for element-wise product, and M 𝑀 M italic_M stands for a binary mask of the subject. This simple technique mitigates the adverse impact of background influences and enhancing the specificity of the learned textual embeddings.

Parameter regularization We aim for the learned embedding, c s subscript 𝑐 𝑠 c_{s}italic_c start_POSTSUBSCRIPT italic_s end_POSTSUBSCRIPT, to obtain equilibrium between identity preservation and the ability to generate diverse scenes. To achieve this balance, we suggest initializing c s subscript 𝑐 𝑠 c_{s}italic_c start_POSTSUBSCRIPT italic_s end_POSTSUBSCRIPT with a portion of the null-text embedding, ϕ s subscript italic-ϕ 𝑠\phi_{s}italic_ϕ start_POSTSUBSCRIPT italic_s end_POSTSUBSCRIPT, and introducing an L2 regularization term. This regularization term is designed to incentivize the optimized c s subscript 𝑐 𝑠 c_{s}italic_c start_POSTSUBSCRIPT italic_s end_POSTSUBSCRIPT to closely align with ϕ s subscript italic-ϕ 𝑠\phi_{s}italic_ϕ start_POSTSUBSCRIPT italic_s end_POSTSUBSCRIPT:

𝔏 s(c s)=min c s‖M⊙(ϵ θ(x t,[c,c s])−ϵ)‖2 2+w s‖c s−ϕ s‖2 2.subscript 𝔏 𝑠 subscript 𝑐 𝑠 subscript subscript 𝑐 𝑠 superscript subscript norm direct-product 𝑀 subscript italic-ϵ 𝜃 subscript 𝑥 𝑡 𝑐 subscript 𝑐 𝑠 italic-ϵ 2 2 subscript 𝑤 𝑠 superscript subscript norm subscript 𝑐 𝑠 subscript italic-ϕ 𝑠 2 2\small\mathfrak{L}{s}(c{s})=\min_{c_{s}}|M\odot(\epsilon_{\theta}(x_{t},[c,% c_{s}])-\epsilon)|{2}^{2}+w{s}|c_{s}-\phi_{s}|_{2}^{2}.fraktur_L start_POSTSUBSCRIPT italic_s end_POSTSUBSCRIPT ( italic_c start_POSTSUBSCRIPT italic_s end_POSTSUBSCRIPT ) = roman_min start_POSTSUBSCRIPT italic_c start_POSTSUBSCRIPT italic_s end_POSTSUBSCRIPT end_POSTSUBSCRIPT ∥ italic_M ⊙ ( italic_ϵ start_POSTSUBSCRIPT italic_θ end_POSTSUBSCRIPT ( italic_x start_POSTSUBSCRIPT italic_t end_POSTSUBSCRIPT , [ italic_c , italic_c start_POSTSUBSCRIPT italic_s end_POSTSUBSCRIPT ] ) - italic_ϵ ) ∥ start_POSTSUBSCRIPT 2 end_POSTSUBSCRIPT start_POSTSUPERSCRIPT 2 end_POSTSUPERSCRIPT + italic_w start_POSTSUBSCRIPT italic_s end_POSTSUBSCRIPT ∥ italic_c start_POSTSUBSCRIPT italic_s end_POSTSUBSCRIPT - italic_ϕ start_POSTSUBSCRIPT italic_s end_POSTSUBSCRIPT ∥ start_POSTSUBSCRIPT 2 end_POSTSUBSCRIPT start_POSTSUPERSCRIPT 2 end_POSTSUPERSCRIPT .(6)

Here, c s∈ℝ n×d subscript 𝑐 𝑠 superscript ℝ 𝑛 𝑑 c_{s}\in\mathbb{R}^{n\times d}italic_c start_POSTSUBSCRIPT italic_s end_POSTSUBSCRIPT ∈ blackboard_R start_POSTSUPERSCRIPT italic_n × italic_d end_POSTSUPERSCRIPT where n 𝑛 n italic_n is the number of tokens and d 𝑑 d italic_d is the embedding dimension, and w s subscript 𝑤 𝑠 w_{s}italic_w start_POSTSUBSCRIPT italic_s end_POSTSUBSCRIPT is a regularization hyper-parameter. We define ϕ s subscript italic-ϕ 𝑠\phi_{s}italic_ϕ start_POSTSUBSCRIPT italic_s end_POSTSUBSCRIPT as the last n 𝑛 n italic_n embeddings of ϕ italic-ϕ\phi italic_ϕ and substitute the last n 𝑛 n italic_n embeddings in c 𝑐 c italic_c with c s subscript 𝑐 𝑠 c_{s}italic_c start_POSTSUBSCRIPT italic_s end_POSTSUBSCRIPT, forming [c,c s]𝑐 subscript 𝑐 𝑠[c,c_{s}][ italic_c , italic_c start_POSTSUBSCRIPT italic_s end_POSTSUBSCRIPT ]. It is noteworthy that [c,c s]=c 𝑐 subscript 𝑐 𝑠 𝑐[c,c_{s}]=c[ italic_c , italic_c start_POSTSUBSCRIPT italic_s end_POSTSUBSCRIPT ] = italic_c if c s subscript 𝑐 𝑠 c_{s}italic_c start_POSTSUBSCRIPT italic_s end_POSTSUBSCRIPT is not optimized, given that ϕ italic-ϕ\phi italic_ϕ constitutes the padding part of the embedding. This regularization serves two primary purposes. Firstly, the stable diffusion model is trained with a 10%percent 10 10%10 % caption drop, simplifying the conditioning to ϕ italic-ϕ\phi italic_ϕ and facilitating classifier-free guidance [13]. Consequently, ϕ italic-ϕ\phi italic_ϕ is adept at guiding the diffusion model to generate a diverse array of scenes, making it an ideal anchor point for the learned embedding. Secondly, due to the limited data used for training the embedding, unconstrained parameters may lead to overfitting with erratic scales. This overfitting poses a risk of generating severely out-of-distribution textual embeddings.

Step-wise subject representations We observe that the learned textual embedding, c s subscript 𝑐 𝑠 c_{s}italic_c start_POSTSUBSCRIPT italic_s end_POSTSUBSCRIPT, plays distinct roles across various denoising time steps. It is widely acknowledged that during the sampling process. In early time steps where t 𝑡 t italic_t is large, the primary focus is on generating high-level image structures, while at smaller values of t 𝑡 t italic_t, the denoising process shifts its emphasis toward refining finer details. Analogous functional distinctions exist for the role of c s subscript 𝑐 𝑠 c_{s}italic_c start_POSTSUBSCRIPT italic_s end_POSTSUBSCRIPT. Our analysis of c s subscript 𝑐 𝑠 c_{s}italic_c start_POSTSUBSCRIPT italic_s end_POSTSUBSCRIPT across time steps, presented in Fig. 3, underscores these variations. Motivated by this observation, we propose introducing time-dependent embeddings, c s t superscript subscript 𝑐 𝑠 𝑡 c_{s}^{t}italic_c start_POSTSUBSCRIPT italic_s end_POSTSUBSCRIPT start_POSTSUPERSCRIPT italic_t end_POSTSUPERSCRIPT, at each time step instead of a single c s subscript 𝑐 𝑠 c_{s}italic_c start_POSTSUBSCRIPT italic_s end_POSTSUBSCRIPT to represent the subject. This leads to a set of embeddings, [c s 1,…,c s T]superscript subscript 𝑐 𝑠 1…superscript subscript 𝑐 𝑠 𝑇[c_{s}^{1},...,c_{s}^{T}][ italic_c start_POSTSUBSCRIPT italic_s end_POSTSUBSCRIPT start_POSTSUPERSCRIPT 1 end_POSTSUPERSCRIPT , … , italic_c start_POSTSUBSCRIPT italic_s end_POSTSUBSCRIPT start_POSTSUPERSCRIPT italic_T end_POSTSUPERSCRIPT ], working collectively to generate images. To ensure smooth transitions between time-dependent embeddings, we initially train a single c s subscript 𝑐 𝑠 c_{s}italic_c start_POSTSUBSCRIPT italic_s end_POSTSUBSCRIPT across all time steps. Subsequently, we recursively optimize c s t superscript subscript 𝑐 𝑠 𝑡{c_{s}^{t}}italic_c start_POSTSUBSCRIPT italic_s end_POSTSUBSCRIPT start_POSTSUPERSCRIPT italic_t end_POSTSUPERSCRIPT following DDIM time steps, as illustrated in Algorithm 1. This approach ensures that c s t superscript subscript 𝑐 𝑠 𝑡 c_{s}^{t}italic_c start_POSTSUBSCRIPT italic_s end_POSTSUBSCRIPT start_POSTSUPERSCRIPT italic_t end_POSTSUPERSCRIPT is proximate to c s t+1 superscript subscript 𝑐 𝑠 𝑡 1 c_{s}^{t+1}italic_c start_POSTSUBSCRIPT italic_s end_POSTSUBSCRIPT start_POSTSUPERSCRIPT italic_t + 1 end_POSTSUPERSCRIPT by initializing it with c s t+1 superscript subscript 𝑐 𝑠 𝑡 1 c_{s}^{t+1}italic_c start_POSTSUBSCRIPT italic_s end_POSTSUBSCRIPT start_POSTSUPERSCRIPT italic_t + 1 end_POSTSUPERSCRIPT and optimizing it for a few steps. After training, we apply

x t−1=F(t)(x t,[c,c s t],ϕ)subscript 𝑥 𝑡 1 superscript 𝐹 𝑡 subscript 𝑥 𝑡 𝑐 superscript subscript 𝑐 𝑠 𝑡 italic-ϕ x_{t-1}=F^{(t)}(x_{t},[c,c_{s}^{t}],\phi)italic_x start_POSTSUBSCRIPT italic_t - 1 end_POSTSUBSCRIPT = italic_F start_POSTSUPERSCRIPT ( italic_t ) end_POSTSUPERSCRIPT ( italic_x start_POSTSUBSCRIPT italic_t end_POSTSUBSCRIPT , [ italic_c , italic_c start_POSTSUBSCRIPT italic_s end_POSTSUBSCRIPT start_POSTSUPERSCRIPT italic_t end_POSTSUPERSCRIPT ] , italic_ϕ )(7)

with the optimized [c s 1,…,c s T]superscript subscript 𝑐 𝑠 1…superscript subscript 𝑐 𝑠 𝑇[c_{s}^{1},...,c_{s}^{T}][ italic_c start_POSTSUBSCRIPT italic_s end_POSTSUBSCRIPT start_POSTSUPERSCRIPT 1 end_POSTSUPERSCRIPT , … , italic_c start_POSTSUBSCRIPT italic_s end_POSTSUBSCRIPT start_POSTSUPERSCRIPT italic_T end_POSTSUPERSCRIPT ] to generate images.

Figure 3: Step-wise function analysis of c s subscript 𝑐 𝑠 c_{s}italic_c start_POSTSUBSCRIPT italic_s end_POSTSUBSCRIPT. We generate an image from a noise latent with DDIM and an optimized c s subscript 𝑐 𝑠 c_{s}italic_c start_POSTSUBSCRIPT italic_s end_POSTSUBSCRIPT representing a subject dog. The text prompt is ”A sitting dog”. The top image is the result generated image. We follow [12] to obtain the attention maps with respect to the 5 token embeddings of c s subscript 𝑐 𝑠 c_{s}italic_c start_POSTSUBSCRIPT italic_s end_POSTSUBSCRIPT as shown in the below images. The numbers to the left refer to the corresponding DDIM denoising steps. In time step 50, the 5 token embeddings of c s subscript 𝑐 𝑠 c_{s}italic_c start_POSTSUBSCRIPT italic_s end_POSTSUBSCRIPT are attended homogeneously across the latent vectors. In time step 1, these token embeddings are attended mostly by the subject detailed regions such as the forehead, the eyes, the ears, etc.

Result:C s subscript 𝐶 𝑠 C_{s}italic_C start_POSTSUBSCRIPT italic_s end_POSTSUBSCRIPT

C s={}subscript 𝐶 𝑠 C_{s}={}italic_C start_POSTSUBSCRIPT italic_s end_POSTSUBSCRIPT = { } ,

c s T+1=c s superscript subscript 𝑐 𝑠 𝑇 1 subscript 𝑐 𝑠 c_{s}^{T+1}=c_{s}italic_c start_POSTSUBSCRIPT italic_s end_POSTSUBSCRIPT start_POSTSUPERSCRIPT italic_T + 1 end_POSTSUPERSCRIPT = italic_c start_POSTSUBSCRIPT italic_s end_POSTSUBSCRIPT

for t=[T,…,1]𝑡 𝑇 normal-…1 t=[T,...,1]italic_t = [ italic_T , … , 1 ] do

for i=[1,…,I]𝑖 1 normal-…𝐼 i=[1,...,I]italic_i = [ 1 , … , italic_I ] do

ϵ∼𝒩(0,1)similar-to italic-ϵ 𝒩 0 1\epsilon\sim\mathcal{N}(0,1)italic_ϵ ∼ caligraphic_N ( 0 , 1 ) ,

x 0∈X 0 subscript 𝑥 0 subscript 𝑋 0 x_{0}\in X_{0}italic_x start_POSTSUBSCRIPT 0 end_POSTSUBSCRIPT ∈ italic_X start_POSTSUBSCRIPT 0 end_POSTSUBSCRIPT ,

x t=N s(x 0,ϵ,t)subscript 𝑥 𝑡 subscript 𝑁 𝑠 subscript 𝑥 0 italic-ϵ 𝑡 x_{t}=N_{s}(x_{0},\epsilon,t)italic_x start_POSTSUBSCRIPT italic_t end_POSTSUBSCRIPT = italic_N start_POSTSUBSCRIPT italic_s end_POSTSUBSCRIPT ( italic_x start_POSTSUBSCRIPT 0 end_POSTSUBSCRIPT , italic_ϵ , italic_t )

Algorithm 1 Optimization algorithm for c s t superscript subscript 𝑐 𝑠 𝑡 c_{s}^{t}italic_c start_POSTSUBSCRIPT italic_s end_POSTSUBSCRIPT start_POSTSUPERSCRIPT italic_t end_POSTSUPERSCRIPT. T 𝑇 T italic_T is DDIM time steps. I 𝐼 I italic_I is the optimization steps per DDIM time step. X 0 subscript 𝑋 0 X_{0}italic_X start_POSTSUBSCRIPT 0 end_POSTSUBSCRIPT is the set of encoded latents of the source images. N s(⋅)subscript 𝑁 𝑠⋅N_{s}(\cdot)italic_N start_POSTSUBSCRIPT italic_s end_POSTSUBSCRIPT ( ⋅ ) is the DDIM noise scheduler. 𝔏 s(⋅)subscript 𝔏 𝑠⋅\mathfrak{L}_{s}(\cdot)fraktur_L start_POSTSUBSCRIPT italic_s end_POSTSUBSCRIPT ( ⋅ ) refers to the loss function in Eqn. (6).

3.3 Reference-guided generation

Shown in Figure 2, we perform our reference-guided generation in three steps. First, we determine the initial latent x T subscript 𝑥 𝑇 x_{T}italic_x start_POSTSUBSCRIPT italic_T end_POSTSUBSCRIPT and follow the DDIM denoising process to generate an image. Thus, we can determine the subject regions of {x t}subscript 𝑥 𝑡{x_{t}}{ italic_x start_POSTSUBSCRIPT italic_t end_POSTSUBSCRIPT } requiring guiding information and the corresponding reference image. Second, we transform the reference image and inverse the latent of the transformed image to obtain a reference latent trajectory, [x 0*,…,x T*]superscript subscript 𝑥 0…superscript subscript 𝑥 𝑇[x_{0}^{},...,x_{T}^{}][ italic_x start_POSTSUBSCRIPT 0 end_POSTSUBSCRIPT start_POSTSUPERSCRIPT * end_POSTSUPERSCRIPT , … , italic_x start_POSTSUBSCRIPT italic_T end_POSTSUBSCRIPT start_POSTSUPERSCRIPT * end_POSTSUPERSCRIPT ]. Third, we start a new denoising process from x T subscript 𝑥 𝑇 x_{T}italic_x start_POSTSUBSCRIPT italic_T end_POSTSUBSCRIPT and apply the guiding information from [x 0*,…,x T*]superscript subscript 𝑥 0…superscript subscript 𝑥 𝑇[x_{0}^{},...,x_{T}^{}][ italic_x start_POSTSUBSCRIPT 0 end_POSTSUBSCRIPT start_POSTSUPERSCRIPT * end_POSTSUPERSCRIPT , … , italic_x start_POSTSUBSCRIPT italic_T end_POSTSUBSCRIPT start_POSTSUPERSCRIPT * end_POSTSUPERSCRIPT ] to the guided regions of {x t}subscript 𝑥 𝑡{x_{t}}{ italic_x start_POSTSUBSCRIPT italic_t end_POSTSUBSCRIPT }. Thereby, we get a reference-guided generated image.

Guided regions and reference image. First, we determine the subject regions of x t subscript 𝑥 𝑡 x_{t}italic_x start_POSTSUBSCRIPT italic_t end_POSTSUBSCRIPT that need the guiding information. Notice that x t∈ℝ H×W×C subscript 𝑥 𝑡 superscript ℝ 𝐻 𝑊 𝐶 x_{t}\in\mathbb{R}^{H\times W\times C}italic_x start_POSTSUBSCRIPT italic_t end_POSTSUBSCRIPT ∈ blackboard_R start_POSTSUPERSCRIPT italic_H × italic_W × italic_C end_POSTSUPERSCRIPT, where H 𝐻 H italic_H, W 𝑊 W italic_W and C 𝐶 C italic_C are the height, width and channels of the latent x t subscript 𝑥 𝑡 x_{t}italic_x start_POSTSUBSCRIPT italic_t end_POSTSUBSCRIPT respectively. Following the instance segmentation methods [11, 22], we aim to find a subject binary mask M g subscript 𝑀 𝑔 M_{g}italic_M start_POSTSUBSCRIPT italic_g end_POSTSUBSCRIPT to determine the subset x t s∈ℝ m×C superscript subscript 𝑥 𝑡 𝑠 superscript ℝ 𝑚 𝐶 x_{t}^{s}\in\mathbb{R}^{m\times C}italic_x start_POSTSUBSCRIPT italic_t end_POSTSUBSCRIPT start_POSTSUPERSCRIPT italic_s end_POSTSUPERSCRIPT ∈ blackboard_R start_POSTSUPERSCRIPT italic_m × italic_C end_POSTSUPERSCRIPT corresponding to the subject regions. Because DDIM [38] is a deterministic denoising process as shown in Eqn. (1), once x T subscript 𝑥 𝑇 x_{T}italic_x start_POSTSUBSCRIPT italic_T end_POSTSUBSCRIPT, c 𝑐 c italic_c and ϕ italic-ϕ\phi italic_ϕ are determined, the image to be generated is already determined. Therefore, we random initialize x T subscript 𝑥 𝑇 x_{T}italic_x start_POSTSUBSCRIPT italic_T end_POSTSUBSCRIPT with Gaussian noise; then, we follow Eqn. (7) and apply the decoder of the stable diffusion model to obtain a generated image, I g1 subscript 𝐼 𝑔 1 I_{g1}italic_I start_POSTSUBSCRIPT italic_g 1 end_POSTSUBSCRIPT; by applying Grounding SAM [21, 18] with the subject name to I g1 subscript 𝐼 𝑔 1 I_{g1}italic_I start_POSTSUBSCRIPT italic_g 1 end_POSTSUBSCRIPT and resizing the result to H×W 𝐻 𝑊 H\times W italic_H × italic_W, we obtain the subject binary mask M g subscript 𝑀 𝑔 M_{g}italic_M start_POSTSUBSCRIPT italic_g end_POSTSUBSCRIPT. Second, we determine the reference image by choosing the source image with the closest subject appearance to the subject in I g1 subscript 𝐼 𝑔 1 I_{g1}italic_I start_POSTSUBSCRIPT italic_g 1 end_POSTSUBSCRIPT, since the reference-guided generation should modify {x t}subscript 𝑥 𝑡{x_{t}}{ italic_x start_POSTSUBSCRIPT italic_t end_POSTSUBSCRIPT } as small as possible to preserve the image structure. As pointed out by DreamBooth[31], DINO [3] score is a better metric than CLIP-I [28] score in measuring the subject similarity between two images. Hence, we use ViT-S/16 DINO model [3] to extract the embedding of I g1 subscript 𝐼 𝑔 1 I_{g1}italic_I start_POSTSUBSCRIPT italic_g 1 end_POSTSUBSCRIPT and all source images. We choose the source image whose DINO embedding have the highest cosine similarity to the DINO embedding of I g1 subscript 𝐼 𝑔 1 I_{g1}italic_I start_POSTSUBSCRIPT italic_g 1 end_POSTSUBSCRIPT as the reference image, I r subscript 𝐼 𝑟 I_{r}italic_I start_POSTSUBSCRIPT italic_r end_POSTSUBSCRIPT. We use Grounding SAM [21, 18] to obtain the subject binary mask M r subscript 𝑀 𝑟 M_{r}italic_M start_POSTSUBSCRIPT italic_r end_POSTSUBSCRIPT of I r subscript 𝐼 𝑟 I_{r}italic_I start_POSTSUBSCRIPT italic_r end_POSTSUBSCRIPT.

Reference image transformation and inversion. First, we discuss the transformation of I r subscript 𝐼 𝑟 I_{r}italic_I start_POSTSUBSCRIPT italic_r end_POSTSUBSCRIPT. Because the subject in I g1 subscript 𝐼 𝑔 1 I_{g1}italic_I start_POSTSUBSCRIPT italic_g 1 end_POSTSUBSCRIPT and the subject in I r subscript 𝐼 𝑟 I_{r}italic_I start_POSTSUBSCRIPT italic_r end_POSTSUBSCRIPT are spatially correlated with each other, we need to transform I r subscript 𝐼 𝑟 I_{r}italic_I start_POSTSUBSCRIPT italic_r end_POSTSUBSCRIPT to let the subject better align with the subject in I g1 subscript 𝐼 𝑔 1 I_{g1}italic_I start_POSTSUBSCRIPT italic_g 1 end_POSTSUBSCRIPT. As the generated subject is prone to have large appearance variations, it is noneffective to use image registration algorithms, e.g. RANSAC [8], based on local feature alignment. We propose to optimize a transformation matrix

T θ=[θ 1 0 0 0 θ 1 0 0 0 1][cos(θ 2)−sinθ 2 0 sinθ 2 cos(θ 2)0 0 0 1][1 0 θ 3 0 1 θ 4 0 0 1]subscript 𝑇 𝜃 matrix subscript 𝜃 1 0 0 0 subscript 𝜃 1 0 0 0 1 matrix subscript 𝜃 2 subscript 𝜃 2 0 subscript 𝜃 2 subscript 𝜃 2 0 0 0 1 matrix 1 0 subscript 𝜃 3 0 1 subscript 𝜃 4 0 0 1\footnotesize T_{\theta}=\begin{bmatrix}\theta_{1}&0&0\ 0&\theta_{1}&0\ 0&0&1\end{bmatrix}\begin{bmatrix}\cos(\theta_{2})&-\sin{\theta_{2}}&0\ \sin{\theta_{2}}&\cos(\theta_{2})&0\ 0&0&1\end{bmatrix}\begin{bmatrix}1&0&\theta_{3}\ 0&1&\theta_{4}\ 0&0&1\end{bmatrix}italic_T start_POSTSUBSCRIPT italic_θ end_POSTSUBSCRIPT = [ start_ARG start_ROW start_CELL italic_θ start_POSTSUBSCRIPT 1 end_POSTSUBSCRIPT end_CELL start_CELL 0 end_CELL start_CELL 0 end_CELL end_ROW start_ROW start_CELL 0 end_CELL start_CELL italic_θ start_POSTSUBSCRIPT 1 end_POSTSUBSCRIPT end_CELL start_CELL 0 end_CELL end_ROW start_ROW start_CELL 0 end_CELL start_CELL 0 end_CELL start_CELL 1 end_CELL end_ROW end_ARG ] [ start_ARG start_ROW start_CELL roman_cos ( italic_θ start_POSTSUBSCRIPT 2 end_POSTSUBSCRIPT ) end_CELL start_CELL - roman_sin italic_θ start_POSTSUBSCRIPT 2 end_POSTSUBSCRIPT end_CELL start_CELL 0 end_CELL end_ROW start_ROW start_CELL roman_sin italic_θ start_POSTSUBSCRIPT 2 end_POSTSUBSCRIPT end_CELL start_CELL roman_cos ( italic_θ start_POSTSUBSCRIPT 2 end_POSTSUBSCRIPT ) end_CELL start_CELL 0 end_CELL end_ROW start_ROW start_CELL 0 end_CELL start_CELL 0 end_CELL start_CELL 1 end_CELL end_ROW end_ARG ] start_ARG start_ROW start_CELL 1 end_CELL start_CELL 0 end_CELL start_CELL italic_θ start_POSTSUBSCRIPT 3 end_POSTSUBSCRIPT end_CELL end_ROW start_ROW start_CELL 0 end_CELL start_CELL 1 end_CELL start_CELL italic_θ start_POSTSUBSCRIPT 4 end_POSTSUBSCRIPT end_CELL end_ROW start_ROW start_CELL 0 end_CELL start_CELL 0 end_CELL start_CELL 1 end_CELL end_ROW end_ARG

composed of scaling, rotation and translation such that T θ(M r)subscript 𝑇 𝜃 subscript 𝑀 𝑟 T_{\theta}(M_{r})italic_T start_POSTSUBSCRIPT italic_θ end_POSTSUBSCRIPT ( italic_M start_POSTSUBSCRIPT italic_r end_POSTSUBSCRIPT ) best aligns with M g subscript 𝑀 𝑔 M_{g}italic_M start_POSTSUBSCRIPT italic_g end_POSTSUBSCRIPT. Here, {θ i}subscript 𝜃 𝑖{\theta_{i}}{ italic_θ start_POSTSUBSCRIPT italic_i end_POSTSUBSCRIPT } are learnable parameters, and T θ(⋅)subscript 𝑇 𝜃⋅T_{\theta}(\cdot)italic_T start_POSTSUBSCRIPT italic_θ end_POSTSUBSCRIPT ( ⋅ ) is the function of applying the transformation to an image. T θ subscript 𝑇 𝜃 T_{\theta}italic_T start_POSTSUBSCRIPT italic_θ end_POSTSUBSCRIPT can be optimized with

𝔏 t=min θ‖T θ(M r)−M g‖1 1.subscript 𝔏 𝑡 subscript 𝜃 superscript subscript norm subscript 𝑇 𝜃 subscript 𝑀 𝑟 subscript 𝑀 𝑔 1 1\mathfrak{L}{t}=\min{\theta}|T_{\theta}(M_{r})-M_{g}|_{1}^{1}.fraktur_L start_POSTSUBSCRIPT italic_t end_POSTSUBSCRIPT = roman_min start_POSTSUBSCRIPT italic_θ end_POSTSUBSCRIPT ∥ italic_T start_POSTSUBSCRIPT italic_θ end_POSTSUBSCRIPT ( italic_M start_POSTSUBSCRIPT italic_r end_POSTSUBSCRIPT ) - italic_M start_POSTSUBSCRIPT italic_g end_POSTSUBSCRIPT ∥ start_POSTSUBSCRIPT 1 end_POSTSUBSCRIPT start_POSTSUPERSCRIPT 1 end_POSTSUPERSCRIPT .(9)

Please refer to the Appendix B for a specific algorithm optimizing T θ subscript 𝑇 𝜃 T_{\theta}italic_T start_POSTSUBSCRIPT italic_θ end_POSTSUBSCRIPT. We denote the optimized T θ subscript 𝑇 𝜃 T_{\theta}italic_T start_POSTSUBSCRIPT italic_θ end_POSTSUBSCRIPT as T θsuperscript subscript 𝑇 𝜃 T_{\theta}^{}italic_T start_POSTSUBSCRIPT italic_θ end_POSTSUBSCRIPT start_POSTSUPERSCRIPT * end_POSTSUPERSCRIPT and the result of T θ(M r)superscript subscript 𝑇 𝜃 subscript 𝑀 𝑟 T_{\theta}^{}(M_{r})italic_T start_POSTSUBSCRIPT italic_θ end_POSTSUBSCRIPT start_POSTSUPERSCRIPT * end_POSTSUPERSCRIPT ( italic_M start_POSTSUBSCRIPT italic_r end_POSTSUBSCRIPT ) as M rsuperscript subscript 𝑀 𝑟 M_{r}^{}italic_M start_POSTSUBSCRIPT italic_r end_POSTSUBSCRIPT start_POSTSUPERSCRIPT * end_POSTSUPERSCRIPT. Thereafter, we can transform I r subscript 𝐼 𝑟 I_{r}italic_I start_POSTSUBSCRIPT italic_r end_POSTSUBSCRIPT with T θ(I r)superscript subscript 𝑇 𝜃 subscript 𝐼 𝑟 T_{\theta}^{}(I_{r})italic_T start_POSTSUBSCRIPT italic_θ end_POSTSUBSCRIPT start_POSTSUPERSCRIPT * end_POSTSUPERSCRIPT ( italic_I start_POSTSUBSCRIPT italic_r end_POSTSUBSCRIPT ) to align the subject with the subject in I g1 subscript 𝐼 𝑔 1 I_{g1}italic_I start_POSTSUBSCRIPT italic_g 1 end_POSTSUBSCRIPT. Notice that the subject in T θ(I r)superscript subscript 𝑇 𝜃 subscript 𝐼 𝑟 T_{\theta}^{}(I_{r})italic_T start_POSTSUBSCRIPT italic_θ end_POSTSUBSCRIPT start_POSTSUPERSCRIPT * end_POSTSUPERSCRIPT ( italic_I start_POSTSUBSCRIPT italic_r end_POSTSUBSCRIPT ) usually does not perfectly align with the subject in I g1 subscript 𝐼 𝑔 1 I_{g1}italic_I start_POSTSUBSCRIPT italic_g 1 end_POSTSUBSCRIPT. A rough spatial location for placing the reference subject should suffice for the reference guiding purpose in our case. Second, we discuss the inversion of T θ(I r)superscript subscript 𝑇 𝜃 subscript 𝐼 𝑟 T_{\theta}^{}(I_{r})italic_T start_POSTSUBSCRIPT italic_θ end_POSTSUBSCRIPT start_POSTSUPERSCRIPT * end_POSTSUPERSCRIPT ( italic_I start_POSTSUBSCRIPT italic_r end_POSTSUBSCRIPT ). We use BLIP-2 model [19] to caption I r subscript 𝐼 𝑟 I_{r}italic_I start_POSTSUBSCRIPT italic_r end_POSTSUBSCRIPT and use a CLIP text encoder to encode the caption to c r subscript 𝑐 𝑟 c_{r}italic_c start_POSTSUBSCRIPT italic_r end_POSTSUBSCRIPT. Then, we encode T θ(I r)superscript subscript 𝑇 𝜃 subscript 𝐼 𝑟 T_{\theta}^{}(I_{r})italic_T start_POSTSUBSCRIPT italic_θ end_POSTSUBSCRIPT start_POSTSUPERSCRIPT * end_POSTSUPERSCRIPT ( italic_I start_POSTSUBSCRIPT italic_r end_POSTSUBSCRIPT ) into x 0superscript subscript 𝑥 0 x_{0}^{}italic_x start_POSTSUBSCRIPT 0 end_POSTSUBSCRIPT start_POSTSUPERSCRIPT * end_POSTSUPERSCRIPT with a Stable Diffusion image encoder. Finally, we recursively apply Eqn. (3) to obtain the reference latent trajectory, [x 0*,…,x T*]superscript subscript 𝑥 0…superscript subscript 𝑥 𝑇[x_{0}^{},...,x_{T}^{}][ italic_x start_POSTSUBSCRIPT 0 end_POSTSUBSCRIPT start_POSTSUPERSCRIPT * end_POSTSUPERSCRIPT , … , italic_x start_POSTSUBSCRIPT italic_T end_POSTSUBSCRIPT start_POSTSUPERSCRIPT * end_POSTSUPERSCRIPT ].

Generation process. There are two problems with the reference-guided generation: 1) the image structure needs to be preserved; 2) the subject generated needs to conform with the context of the image. We reuse x T subscript 𝑥 𝑇 x_{T}italic_x start_POSTSUBSCRIPT italic_T end_POSTSUBSCRIPT in step 1 as the initial latent. If we follow Eqn. (7) for the denoising process, we will obtain I g1 subscript 𝐼 𝑔 1 I_{g1}italic_I start_POSTSUBSCRIPT italic_g 1 end_POSTSUBSCRIPT. We aim to add guiding information to the denoising process and obtain a new image I g2 subscript 𝐼 𝑔 2 I_{g2}italic_I start_POSTSUBSCRIPT italic_g 2 end_POSTSUBSCRIPT such that the subject in I g2 subscript 𝐼 𝑔 2 I_{g2}italic_I start_POSTSUBSCRIPT italic_g 2 end_POSTSUBSCRIPT has better fidelity and the image structure is similar to I g1 subscript 𝐼 𝑔 1 I_{g1}italic_I start_POSTSUBSCRIPT italic_g 1 end_POSTSUBSCRIPT. Please refer to Algorithm 2 for the specific reference-guided generation process. As discussed in Section 3.2, the stable diffusion model focuses on the image structure formation at early denoising steps and the detail polishing at later steps. If we incur the guiding information in early steps, I g2 subscript 𝐼 𝑔 2 I_{g2}italic_I start_POSTSUBSCRIPT italic_g 2 end_POSTSUBSCRIPT is subject to have structural change such that M rsuperscript subscript 𝑀 𝑟 M_{r}^{}italic_M start_POSTSUBSCRIPT italic_r end_POSTSUBSCRIPT start_POSTSUPERSCRIPT * end_POSTSUPERSCRIPT cannot accurately indicate the subject regions. It is harmful to enforce the guiding information at later steps either, because the denoising at this stage gathers useful information mostly from the current latent. Therefore, we start and end the guiding process at middle time steps t s subscript 𝑡 𝑠 t_{s}italic_t start_POSTSUBSCRIPT italic_s end_POSTSUBSCRIPT and t e subscript 𝑡 𝑒 t_{e}italic_t start_POSTSUBSCRIPT italic_e end_POSTSUBSCRIPT respectively. At time step t s subscript 𝑡 𝑠 t_{s}italic_t start_POSTSUBSCRIPT italic_s end_POSTSUBSCRIPT, we substitute the latent variables corresponding to the subject region in x t subscript 𝑥 𝑡 x_{t}italic_x start_POSTSUBSCRIPT italic_t end_POSTSUBSCRIPT with those in x tsuperscript subscript 𝑥 𝑡 x_{t}^{}italic_x start_POSTSUBSCRIPT italic_t end_POSTSUBSCRIPT start_POSTSUPERSCRIPT * end_POSTSUPERSCRIPT. We do this for three reasons: 1) the substitution enables the denoising process to assimilate the subject to be generated to the reference subject; 2) the latent variables at time step t s subscript 𝑡 𝑠 t_{s}italic_t start_POSTSUBSCRIPT italic_s end_POSTSUBSCRIPT are close to the noise space so that they are largely influenced by the textual guidance as well; 3) the substitution does not drastically change the image structure because latent variables have small global effect at middle denoising steps. We modify Eqn. (4) to Eqn. (10) for guiding the subject generation.

𝔏 h(t)(ϕ h)=min ϕ h‖x t−1[M r]−F(t)(x t,[c,c s t],ϕ h)[M r*]‖2 2 superscript subscript 𝔏 ℎ 𝑡 subscript italic-ϕ ℎ subscript subscript italic-ϕ ℎ superscript subscript delimited-∥∥superscript subscript 𝑥 𝑡 1 delimited-[]superscript subscript 𝑀 𝑟 superscript 𝐹 𝑡 subscript 𝑥 𝑡 𝑐 superscript subscript 𝑐 𝑠 𝑡 subscript italic-ϕ ℎ delimited-[]superscript subscript 𝑀 𝑟 2 2\begin{split}\mathfrak{L}{h}^{(t)}(\phi{h})=\min_{\phi_{h}}|x_{t-1}^{}[M_{% r}^{}]-F^{(t)}(x_{t},[c,c_{s}^{t}],\phi_{h})[M_{r}^{*}]|_{2}^{2}\end{split}start_ROW start_CELL fraktur_L start_POSTSUBSCRIPT italic_h end_POSTSUBSCRIPT start_POSTSUPERSCRIPT ( italic_t ) end_POSTSUPERSCRIPT ( italic_ϕ start_POSTSUBSCRIPT italic_h end_POSTSUBSCRIPT ) = roman_min start_POSTSUBSCRIPT italic_ϕ start_POSTSUBSCRIPT italic_h end_POSTSUBSCRIPT end_POSTSUBSCRIPT ∥ italic_x start_POSTSUBSCRIPT italic_t - 1 end_POSTSUBSCRIPT start_POSTSUPERSCRIPT * end_POSTSUPERSCRIPT [ italic_M start_POSTSUBSCRIPT italic_r end_POSTSUBSCRIPT start_POSTSUPERSCRIPT * end_POSTSUPERSCRIPT ] - italic_F start_POSTSUPERSCRIPT ( italic_t ) end_POSTSUPERSCRIPT ( italic_x start_POSTSUBSCRIPT italic_t end_POSTSUBSCRIPT , [ italic_c , italic_c start_POSTSUBSCRIPT italic_s end_POSTSUBSCRIPT start_POSTSUPERSCRIPT italic_t end_POSTSUPERSCRIPT ] , italic_ϕ start_POSTSUBSCRIPT italic_h end_POSTSUBSCRIPT ) [ italic_M start_POSTSUBSCRIPT italic_r end_POSTSUBSCRIPT start_POSTSUPERSCRIPT * end_POSTSUPERSCRIPT ] ∥ start_POSTSUBSCRIPT 2 end_POSTSUBSCRIPT start_POSTSUPERSCRIPT 2 end_POSTSUPERSCRIPT end_CELL end_ROW(10)

Here, x t[M]subscript 𝑥 𝑡 delimited-[]𝑀 x_{t}[M]italic_x start_POSTSUBSCRIPT italic_t end_POSTSUBSCRIPT [ italic_M ] refers to latent variables in x t subscript 𝑥 𝑡 x_{t}italic_x start_POSTSUBSCRIPT italic_t end_POSTSUBSCRIPT indicated by the mask M 𝑀 M italic_M. Because ϕ h subscript italic-ϕ ℎ\phi_{h}italic_ϕ start_POSTSUBSCRIPT italic_h end_POSTSUBSCRIPT is optimized with a few steps per denoising time step, the latent variables corresponding to the subject regions change mildly within the denoising time step. Therefore, at the next denoising time step, the stable diffusion model can adapt the latent variables corresponding to non-subject regions to conform with the change of the latent variables corresponding to the subject regions. Furthermore, we can adjust the optimization steps for ϕ h subscript italic-ϕ ℎ\phi_{h}italic_ϕ start_POSTSUBSCRIPT italic_h end_POSTSUBSCRIPT to determine the weight of the reference guidance. More reference guidance will lead to a higher resemblance to the reference subject while less reference guidance will result in more variations for the generated subject.

Result:x 0 subscript 𝑥 0 x_{0}italic_x start_POSTSUBSCRIPT 0 end_POSTSUBSCRIPT

Inputs:

t s subscript 𝑡 𝑠 t_{s}italic_t start_POSTSUBSCRIPT italic_s end_POSTSUBSCRIPT ,

t e subscript 𝑡 𝑒 t_{e}italic_t start_POSTSUBSCRIPT italic_e end_POSTSUBSCRIPT ,

x T subscript 𝑥 𝑇 x_{T}italic_x start_POSTSUBSCRIPT italic_T end_POSTSUBSCRIPT ,

M rsuperscript subscript 𝑀 𝑟 M_{r}^{}italic_M start_POSTSUBSCRIPT italic_r end_POSTSUBSCRIPT start_POSTSUPERSCRIPT * end_POSTSUPERSCRIPT ,

c 𝑐 c italic_c ,

ϕ italic-ϕ\phi italic_ϕ ,

[c s 1,…,c s T]superscript subscript 𝑐 𝑠 1…superscript subscript 𝑐 𝑠 𝑇[c_{s}^{1},...,c_{s}^{T}][ italic_c start_POSTSUBSCRIPT italic_s end_POSTSUBSCRIPT start_POSTSUPERSCRIPT 1 end_POSTSUPERSCRIPT , … , italic_c start_POSTSUBSCRIPT italic_s end_POSTSUBSCRIPT start_POSTSUPERSCRIPT italic_T end_POSTSUPERSCRIPT ] ,

[x 0*,…,x T*]superscript subscript 𝑥 0…superscript subscript 𝑥 𝑇[x_{0}^{},...,x_{T}^{}][ italic_x start_POSTSUBSCRIPT 0 end_POSTSUBSCRIPT start_POSTSUPERSCRIPT * end_POSTSUPERSCRIPT , … , italic_x start_POSTSUBSCRIPT italic_T end_POSTSUBSCRIPT start_POSTSUPERSCRIPT * end_POSTSUPERSCRIPT ]

for t=[T,…,1]𝑡 𝑇 normal-…1 t=[T,...,1]italic_t = [ italic_T , … , 1 ] do

if t==t s t==t_{s}italic_t = = italic_t start_POSTSUBSCRIPT italic_s end_POSTSUBSCRIPT then

if t⩽t s 𝑡 subscript 𝑡 𝑠 t\leqslant t_{s}italic_t ⩽ italic_t start_POSTSUBSCRIPT italic_s end_POSTSUBSCRIPT and t⩾t e 𝑡 subscript 𝑡 𝑒 t\geqslant t_{e}italic_t ⩾ italic_t start_POSTSUBSCRIPT italic_e end_POSTSUBSCRIPT then

for j=[1,…,J]𝑗 1 normal-…𝐽 j=[1,...,J]italic_j = [ 1 , … , italic_J ] do

Algorithm 2 Reference-guided generation algorithm. J 𝐽 J italic_J is the number of optimization steps for ϕ h subscript italic-ϕ ℎ\phi_{h}italic_ϕ start_POSTSUBSCRIPT italic_h end_POSTSUBSCRIPT per denoising step. 𝔏 h(t)(⋅)superscript subscript 𝔏 ℎ 𝑡⋅\mathfrak{L}_{h}^{(t)}(\cdot)fraktur_L start_POSTSUBSCRIPT italic_h end_POSTSUBSCRIPT start_POSTSUPERSCRIPT ( italic_t ) end_POSTSUPERSCRIPT ( ⋅ ) refers to the loss function in Eqn. (10).

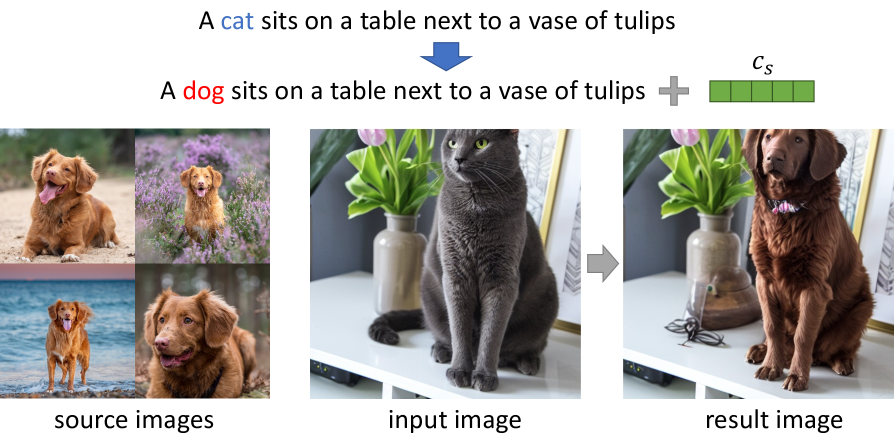

3.4 Personalized subject replacement

We aim to use the learned subject textual representations to replace the subject in an image with the user-specified subject. Although there are methods [23, 39, 40, 20] inpainting the image area with a user-specified subject, our method has two advantages over them. First, we do not specify the inpainting area of the image; instead, our method utilize the correlation between the textual embeddings and the latent variables to identify the subject area. Second, our method can generate a subject with various pose and appearance such that the added subject better conforms to the image context.

We first follow the fine-tuning method in Section 3.2 to obtain the step-wise subject representations [c s 1,…,c s T]superscript subscript 𝑐 𝑠 1…superscript subscript 𝑐 𝑠 𝑇[c_{s}^{1},...,c_{s}^{T}][ italic_c start_POSTSUBSCRIPT italic_s end_POSTSUBSCRIPT start_POSTSUPERSCRIPT 1 end_POSTSUPERSCRIPT , … , italic_c start_POSTSUBSCRIPT italic_s end_POSTSUBSCRIPT start_POSTSUPERSCRIPT italic_T end_POSTSUPERSCRIPT ]. We encode the original image I r subscript 𝐼 𝑟 I_{r}italic_I start_POSTSUBSCRIPT italic_r end_POSTSUBSCRIPT to x 0 r superscript subscript 𝑥 0 𝑟 x_{0}^{r}italic_x start_POSTSUBSCRIPT 0 end_POSTSUBSCRIPT start_POSTSUPERSCRIPT italic_r end_POSTSUPERSCRIPT with the Stable Diffusion image encoder; then we use BLIP-2 model [19] to caption I r subscript 𝐼 𝑟 I_{r}italic_I start_POSTSUBSCRIPT italic_r end_POSTSUBSCRIPT and encode the caption into c r superscript 𝑐 𝑟 c^{r}italic_c start_POSTSUPERSCRIPT italic_r end_POSTSUPERSCRIPT with the Stable Diffusion language encoder. We identify the original subject word embedding in c r superscript 𝑐 𝑟 c^{r}italic_c start_POSTSUPERSCRIPT italic_r end_POSTSUPERSCRIPT and substitute that with the new subject word embedding w g subscript 𝑤 𝑔 w_{g}italic_w start_POSTSUBSCRIPT italic_g end_POSTSUBSCRIPT to attain a c g superscript 𝑐 𝑔 c^{g}italic_c start_POSTSUPERSCRIPT italic_g end_POSTSUPERSCRIPT (e.g. ‘cat’ →→\rightarrow→ ‘dog’ in the sentence ‘a photo of siting cat’). Then we follow Algorithm 3 to generate the image with the subject replaced. Referring to the prompt-to-prompt paper [12], we store the step-wise cross attention weights with regard to the word embeddings in c r superscript 𝑐 𝑟 c^{r}italic_c start_POSTSUPERSCRIPT italic_r end_POSTSUPERSCRIPT to a t rsuperscript superscript subscript 𝑎 𝑡 𝑟{a_{t}^{r}}^{}italic_a start_POSTSUBSCRIPT italic_t end_POSTSUBSCRIPT start_POSTSUPERSCRIPT italic_r end_POSTSUPERSCRIPT start_POSTSUPERSCRIPT * end_POSTSUPERSCRIPT. A(t)(⋅,⋅,⋅)superscript 𝐴 𝑡⋅⋅⋅A^{(t)}(\cdot,\cdot,\cdot)italic_A start_POSTSUPERSCRIPT ( italic_t ) end_POSTSUPERSCRIPT ( ⋅ , ⋅ , ⋅ ) performs the same operations as F(t)(⋅,⋅,⋅)superscript 𝐹 𝑡⋅⋅⋅F^{(t)}(\cdot,\cdot,\cdot)italic_F start_POSTSUPERSCRIPT ( italic_t ) end_POSTSUPERSCRIPT ( ⋅ , ⋅ , ⋅ ) in Eqn. (1) but returns x t−1 subscript 𝑥 𝑡 1 x_{t-1}italic_x start_POSTSUBSCRIPT italic_t - 1 end_POSTSUBSCRIPT and a t rsuperscript superscript subscript 𝑎 𝑡 𝑟{a_{t}^{r}}^{}italic_a start_POSTSUBSCRIPT italic_t end_POSTSUBSCRIPT start_POSTSUPERSCRIPT italic_r end_POSTSUPERSCRIPT start_POSTSUPERSCRIPT * end_POSTSUPERSCRIPT. We also modify F(t)(⋅,⋅,⋅)superscript 𝐹 𝑡⋅⋅⋅F^{(t)}(\cdot,\cdot,\cdot)italic_F start_POSTSUPERSCRIPT ( italic_t ) end_POSTSUPERSCRIPT ( ⋅ , ⋅ , ⋅ ) to Fc s t,w g(⋅,⋅,⋅,a t r*)superscript subscript𝐹 superscript subscript 𝑐 𝑠 𝑡 subscript 𝑤 𝑔 𝑡⋅⋅⋅superscript superscript subscript 𝑎 𝑡 𝑟\tilde{F}{[c{s}^{t},w_{g}]}^{(t)}(\cdot,\cdot,\cdot,{a_{t}^{r}}^{})over~ start_ARG italic_F end_ARG start_POSTSUBSCRIPT [ italic_c start_POSTSUBSCRIPT italic_s end_POSTSUBSCRIPT start_POSTSUPERSCRIPT italic_t end_POSTSUPERSCRIPT , italic_w start_POSTSUBSCRIPT italic_g end_POSTSUBSCRIPT ] end_POSTSUBSCRIPT start_POSTSUPERSCRIPT ( italic_t ) end_POSTSUPERSCRIPT ( ⋅ , ⋅ , ⋅ , italic_a start_POSTSUBSCRIPT italic_t end_POSTSUBSCRIPT start_POSTSUPERSCRIPT italic_r end_POSTSUPERSCRIPT start_POSTSUPERSCRIPT * end_POSTSUPERSCRIPT ) such that all token embeddings use fixed cross attention weights a t rsuperscript superscript subscript 𝑎 𝑡 𝑟{a_{t}^{r}}^{*}italic_a start_POSTSUBSCRIPT italic_t end_POSTSUBSCRIPT start_POSTSUPERSCRIPT italic_r end_POSTSUPERSCRIPT start_POSTSUPERSCRIPT * end_POSTSUPERSCRIPT except that [c s t,w g]superscript subscript 𝑐 𝑠 𝑡 subscript 𝑤 𝑔[c_{s}^{t},w_{g}][ italic_c start_POSTSUBSCRIPT italic_s end_POSTSUBSCRIPT start_POSTSUPERSCRIPT italic_t end_POSTSUPERSCRIPT , italic_w start_POSTSUBSCRIPT italic_g end_POSTSUBSCRIPT ] use the cross attention weights of the new denoising process.

Result:x 0 g superscript subscript 𝑥 0 𝑔 x_{0}^{g}italic_x start_POSTSUBSCRIPT 0 end_POSTSUBSCRIPT start_POSTSUPERSCRIPT italic_g end_POSTSUPERSCRIPT

Inputs:

x 0 r superscript subscript 𝑥 0 𝑟 x_{0}^{r}italic_x start_POSTSUBSCRIPT 0 end_POSTSUBSCRIPT start_POSTSUPERSCRIPT italic_r end_POSTSUPERSCRIPT ,

c r superscript 𝑐 𝑟 c^{r}italic_c start_POSTSUPERSCRIPT italic_r end_POSTSUPERSCRIPT ,

c g superscript 𝑐 𝑔 c^{g}italic_c start_POSTSUPERSCRIPT italic_g end_POSTSUPERSCRIPT ,

[c s 1,…,c s T]superscript subscript 𝑐 𝑠 1…superscript subscript 𝑐 𝑠 𝑇[c_{s}^{1},...,c_{s}^{T}][ italic_c start_POSTSUBSCRIPT italic_s end_POSTSUBSCRIPT start_POSTSUPERSCRIPT 1 end_POSTSUPERSCRIPT , … , italic_c start_POSTSUBSCRIPT italic_s end_POSTSUBSCRIPT start_POSTSUPERSCRIPT italic_T end_POSTSUPERSCRIPT ]

for t=[0,…,T−1]𝑡 0 normal-…𝑇 1 t=[0,...,T-1]italic_t = [ 0 , … , italic_T - 1 ] do

x T r=x T rsuperscript subscript 𝑥 𝑇 𝑟 superscript superscript subscript 𝑥 𝑇 𝑟 x_{T}^{r}={x_{T}^{r}}^{}italic_x start_POSTSUBSCRIPT italic_T end_POSTSUBSCRIPT start_POSTSUPERSCRIPT italic_r end_POSTSUPERSCRIPT = italic_x start_POSTSUBSCRIPT italic_T end_POSTSUBSCRIPT start_POSTSUPERSCRIPT italic_r end_POSTSUPERSCRIPT start_POSTSUPERSCRIPT * end_POSTSUPERSCRIPT ,

ϕ T=ϕ subscript italic-ϕ 𝑇 italic-ϕ\phi_{T}=\phi italic_ϕ start_POSTSUBSCRIPT italic_T end_POSTSUBSCRIPT = italic_ϕ

for t=[T,…,1]𝑡 𝑇 normal-…1 t=[T,...,1]italic_t = [ italic_T , … , 1 ] do

for k=[1,…,K]𝑘 1 normal-…𝐾 k=[1,...,K]italic_k = [ 1 , … , italic_K ] do

for t=[T,…,1]𝑡 𝑇 normal-…1 t=[T,...,1]italic_t = [ italic_T , … , 1 ] do

x t−1 g=Fc s t,w g(x t g,[c g,c s t],ϕ t*,a t r*)superscript subscript 𝑥 𝑡 1 𝑔 superscript subscript𝐹 superscript subscript 𝑐 𝑠 𝑡 subscript 𝑤 𝑔 𝑡 superscript subscript 𝑥 𝑡 𝑔 superscript 𝑐 𝑔 superscript subscript 𝑐 𝑠 𝑡 superscript subscript italic-ϕ 𝑡 superscript superscript subscript 𝑎 𝑡 𝑟 x_{t-1}^{g}=\tilde{F}{[c{s}^{t},w_{g}]}^{(t)}(x_{t}^{g},[c^{g},c_{s}^{t}],% \phi_{t}^{},{a_{t}^{r}}^{})italic_x start_POSTSUBSCRIPT italic_t - 1 end_POSTSUBSCRIPT start_POSTSUPERSCRIPT italic_g end_POSTSUPERSCRIPT = over~ start_ARG italic_F end_ARG start_POSTSUBSCRIPT [ italic_c start_POSTSUBSCRIPT italic_s end_POSTSUBSCRIPT start_POSTSUPERSCRIPT italic_t end_POSTSUPERSCRIPT , italic_w start_POSTSUBSCRIPT italic_g end_POSTSUBSCRIPT ] end_POSTSUBSCRIPT start_POSTSUPERSCRIPT ( italic_t ) end_POSTSUPERSCRIPT ( italic_x start_POSTSUBSCRIPT italic_t end_POSTSUBSCRIPT start_POSTSUPERSCRIPT italic_g end_POSTSUPERSCRIPT , [ italic_c start_POSTSUPERSCRIPT italic_g end_POSTSUPERSCRIPT , italic_c start_POSTSUBSCRIPT italic_s end_POSTSUBSCRIPT start_POSTSUPERSCRIPT italic_t end_POSTSUPERSCRIPT ] , italic_ϕ start_POSTSUBSCRIPT italic_t end_POSTSUBSCRIPT start_POSTSUPERSCRIPT * end_POSTSUPERSCRIPT , italic_a start_POSTSUBSCRIPT italic_t end_POSTSUBSCRIPT start_POSTSUPERSCRIPT italic_r end_POSTSUPERSCRIPT start_POSTSUPERSCRIPT * end_POSTSUPERSCRIPT )

Algorithm 3 Personalized subject replacement algorithm. F−1(t)superscript superscript 𝐹 1 𝑡{F^{-1}}^{(t)}italic_F start_POSTSUPERSCRIPT - 1 end_POSTSUPERSCRIPT start_POSTSUPERSCRIPT ( italic_t ) end_POSTSUPERSCRIPT refers to Eqn. (3). K 𝐾 K italic_K is the optimization steps for null-text optimization. 𝔏 h(t)(⋅)superscript subscript 𝔏 ℎ 𝑡⋅\mathfrak{L}_{h}^{(t)}(\cdot)fraktur_L start_POSTSUBSCRIPT italic_h end_POSTSUBSCRIPT start_POSTSUPERSCRIPT ( italic_t ) end_POSTSUPERSCRIPT ( ⋅ ) refers to Eqn. (4)

4 Experiments

Figure 4: Qualitative comparison. We implement our fine-tuning method based on both Textual Inversion (TI) and DreamBooth (DB). A visible improvement is made by comparing the images in the third column with those in the second column and comparing the images in the fifth column and those in the forth column.

Figure 5: Results for personalized subject replacement.

Dataset. We use the DreamBooth [31] dataset for evaluation. It contains 30 subjects: 21 of them are rigid objects and 9 of them are live animals subject to large appearance variations. The dataset provides 25 prompt templates for generating images. Following DreamBooth, we fine-tune our framework for each subject and generate 4 images for each prompt template, totaling 3,000 images.

Settings. We adopt the pretrained Stable Diffusion [30] version 1.4 as the text-to-image framework. We use DDIM with 50 steps for the generation process. For HiFi Tuner based on Textual Inversion, we implement both the learning of subject textual embeddings described in Section 3.2 and the reference-guided generation described in Section 3.3. We use 5 tokens for c s subscript 𝑐 𝑠 c_{s}italic_c start_POSTSUBSCRIPT italic_s end_POSTSUBSCRIPT and adopts an ADAM [17] optimizer with a learning rate 5e−3 5 superscript 𝑒 3 5e^{-3}5 italic_e start_POSTSUPERSCRIPT - 3 end_POSTSUPERSCRIPT to optimize it. We first optimize c s subscript 𝑐 𝑠 c_{s}italic_c start_POSTSUBSCRIPT italic_s end_POSTSUBSCRIPT for 1000 steps and then recursively optimize c s t superscript subscript 𝑐 𝑠 𝑡 c_{s}^{t}italic_c start_POSTSUBSCRIPT italic_s end_POSTSUBSCRIPT start_POSTSUPERSCRIPT italic_t end_POSTSUPERSCRIPT for 10 steps per denoising step. We set t s=40 subscript 𝑡 𝑠 40 t_{s}=40 italic_t start_POSTSUBSCRIPT italic_s end_POSTSUBSCRIPT = 40 and t e=10 subscript 𝑡 𝑒 10 t_{e}=10 italic_t start_POSTSUBSCRIPT italic_e end_POSTSUBSCRIPT = 10 and use an ADAM [17] optimizer with a learning rate 1e−2 1 superscript 𝑒 2 1e^{-2}1 italic_e start_POSTSUPERSCRIPT - 2 end_POSTSUPERSCRIPT to optimize ϕ h subscript italic-ϕ ℎ\phi_{h}italic_ϕ start_POSTSUBSCRIPT italic_h end_POSTSUBSCRIPT. We optimize ϕ h subscript italic-ϕ ℎ\phi_{h}italic_ϕ start_POSTSUBSCRIPT italic_h end_POSTSUBSCRIPT for 10 steps per DDIM denoising step. For HiFi Tuner based on DreamBooth, we follow the original subject representation learning process and implement the reference-guided generation described in Section 3.3. We use the same optimization schedule to optimize ϕ h subscript italic-ϕ ℎ\phi_{h}italic_ϕ start_POSTSUBSCRIPT italic_h end_POSTSUBSCRIPT as mentioned above. For the reference-guided generation, we only apply HiFi Tuner to the 21 rigid objects, because their appearances vary little and have strong need for the detail preservation.

Evaluation metrics. Following DreamBooth [31], we use DINO score and CLIP-I score to measure the subject fidelity and use CLIP-T score the measure the prompt fidelity. CLIP-I score is the average pairwise cosine similarity between CLIP [28] embeddings of generated images and real images, while DINO score calculates the same cosine similarity but uses DINO [3] embeddings instead of CLIP embeddings. As pointed out in the DreamBooth paper [31], DINO score is a better means than CLIP-I score in measuring the subject detail preservation. CLIP-T score is the average cosine similarity between CLIP [28] embeddings of the pairwise prompts and generated images.

Qualitative comparison. Fig.4 shows the qualitative comparison between HiFi Tuner and other fine-tuning frameworks. HiFi Tuner possesses three advantages compared to other methods. First, HiFi Tuner is able to diminish the unwanted style change for the generated subjects. As shown in Fig.4 (a) & (b), DreamBooth blends sun flowers with the backpack, and both DreamBooth and Textual Inversion generate backpacks with incorrect colors; HiFi Tuner maintains the styles of the two backpacks. Second, HiFi Tuner can better preserve details of the subjects. In Fig.4 (c), Textual Inversion cannot generate the whale on the can while DreamBooth generate the yellow part above the whale differently compared to the original image; In Fig.4 (d), DreamBooth generates a candle with a white candle wick but the candle wick is brown in the original image. Our method outperforms Textual Inversion and DreamBooth in preserving these details. Third, HiFi Tuner can better preserve the structure of the subjects. In Fig.4 (e) & (f), the toy car and the toy robot both have complex structures to preserve, and Textual Inversion and DreamBooth generate subjects with apparent structural differences. HiFi Tuner makes improvements on the model’s structural preservation capability.

Quantitative comparison. We show the quantitative improvements HiFi Tuner makes in Table 1. HiFi Tuner improves Textual Inversion for 9.6 points in DINO score and 3.6 points in CLIP-T score, and improves DreamBooth for 1.2 points in DINO score and 1.2 points in CLIP-T score.

Table 1: Quantitative comparison.

Table 2: Ablation study.

Ablation studies. We present the quantitative improvements of adding our proposed techniques in Table 2. We observe that fine-tuning either DreamBooth or Textual Inversion with more steps leads to a worse prompt fidelity. Therefore, we fine-tune the networks with fewer steps than the original implementations, which results in higher CLIP-T scores but lower DINO scores for the baselines. Thereafter, we can use our techniques to improve the subject fidelity so that both DINO scores and CLIP-T scores can surpass the original implementations. For HiFi Tuner based on Textual Inversion, we fine-tune the textual embeddings with 1000 steps. The four proposed techniques make steady improvements over the baseline in DINO score while maintain CLIP-T score. The method utilizing all of our proposed techniques makes a remarkable 9.8-point improvement in DINO score over the baseline. For HiFi Tuner based on DreamBooth, we fine-tune all the diffusion model weights with 400 steps. By utilizing the reference-guided generation, HiFi Tuner achieves a 1.8-point improvement over the baseline in DINO score.

Results for personalized subject replacement. We show the qualitative results in Figure 5. More results can be found in the Appendix C.

5 Conclusions

In this work, we introduce a parameter-efficient fine-tuning method that can boost the sample fidelity and the prompt fidelity based on either Textual Inversion or DreamBooth. We propose to use a mask guidance, a novel parameter regularization technique and step-wise subject representations to improve the sample fidelity. We invents a reference-guided generation technique to mitigate the unwanted variations and artifacts for the generated subjects. We also exemplify that our method can be extended to substitute a subject in an image with personalized item by textual manipulations.

References

- Avrahami et al. [2022] Omri Avrahami, Dani Lischinski, and Ohad Fried. Blended diffusion for text-driven editing of natural images. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), pages 18208–18218, 2022.

- Brooks et al. [2023] Tim Brooks, Aleksander Holynski, and Alexei A Efros. Instructpix2pix: Learning to follow image editing instructions. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, pages 18392–18402, 2023.

- Caron et al. [2021] Mathilde Caron, Hugo Touvron, Ishan Misra, Hervé Jégou, Julien Mairal, Piotr Bojanowski, and Armand Joulin. Emerging properties in self-supervised vision transformers. In Proceedings of the IEEE/CVF international conference on computer vision (ICCV), pages 9650–9660, 2021.

- Casanova et al. [2021] Arantxa Casanova, Marlene Careil, Jakob Verbeek, Michal Drozdzal, and Adriana Romero Soriano. Instance-conditioned gan. Advances in Neural Information Processing Systems, 34:27517–27529, 2021.

- Chen et al. [2023a] Wenhu Chen, Hexiang Hu, Yandong Li, Nataniel Rui, Xuhui Jia, Ming-Wei Chang, and William W Cohen. Subject-driven text-to-image generation via apprenticeship learning. arXiv preprint arXiv:2304.00186, 2023a.

- Chen et al. [2023b] Wenhu Chen, Hexiang Hu, Chitwan Saharia, and William W. Cohen. Re-imagen: Retrieval-augmented text-to-image generator. In The Eleventh International Conference on Learning Representations (ICLR), 2023b.

- Chen et al. [2022] Xi Chen, Xiao Wang, Soravit Changpinyo, AJ Piergiovanni, Piotr Padlewski, Daniel Salz, Sebastian Goodman, Adam Grycner, Basil Mustafa, Lucas Beyer, et al. Pali: A jointly-scaled multilingual language-image model. In The Eleventh International Conference on Learning Representations (ICLR), 2022.

- Fischler and Bolles [1981] Martin A Fischler and Robert C Bolles. Random sample consensus: a paradigm for model fitting with applications to image analysis and automated cartography. Communications of the ACM, 24(6):381–395, 1981.

- Gal et al. [2023] Rinon Gal, Yuval Alaluf, Yuval Atzmon, Or Patashnik, Amit Haim Bermano, Gal Chechik, and Daniel Cohen-or. An image is worth one word: Personalizing text-to-image generation using textual inversion. In International Conference on Learning Representations (ICLR), 2023.

- Goodfellow et al. [2020] Ian Goodfellow, Jean Pouget-Abadie, Mehdi Mirza, Bing Xu, David Warde-Farley, Sherjil Ozair, Aaron Courville, and Yoshua Bengio. Generative adversarial networks. Communications of the ACM, 63(11):139–144, 2020.

- He et al. [2017] Kaiming He, Georgia Gkioxari, Piotr Dollár, and Ross Girshick. Mask r-cnn. In Proceedings of the IEEE/CVF international conference on computer vision (ICCV), pages 2961–2969, 2017.

- Hertz et al. [2023] Amir Hertz, Ron Mokady, Jay Tenenbaum, Kfir Aberman, Yael Pritch, and Daniel Cohen-or. Prompt-to-prompt image editing with cross-attention control. In International Conference on Learning Representations (ICLR), 2023.

- Ho and Salimans [2022] Jonathan Ho and Tim Salimans. Classifier-free diffusion guidance. arXiv preprint arXiv:2207.12598, 2022.

- Ho et al. [2020] Jonathan Ho, Ajay Jain, and Pieter Abbeel. Denoising diffusion probabilistic models. Advances in neural information processing systems (NeurIPS), 33:6840–6851, 2020.

- Hu et al. [2022] Edward J Hu, Yelong Shen, Phillip Wallis, Zeyuan Allen-Zhu, Yuanzhi Li, Shean Wang, Lu Wang, and Weizhu Chen. LoRA: Low-rank adaptation of large language models. In International Conference on Learning Representations (ICLR), 2022.

- Jia et al. [2023] Xuhui Jia, Yang Zhao, Kelvin CK Chan, Yandong Li, Han Zhang, Boqing Gong, Tingbo Hou, Huisheng Wang, and Yu-Chuan Su. Taming encoder for zero fine-tuning image customization with text-to-image diffusion models. arXiv preprint arXiv:2304.02642, 2023.

- Kingma and Ba [2014] Diederik P Kingma and Jimmy Ba. Adam: A method for stochastic optimization. arXiv preprint arXiv:1412.6980, 2014.

- Kirillov et al. [2023] Alexander Kirillov, Eric Mintun, Nikhila Ravi, Hanzi Mao, Chloe Rolland, Laura Gustafson, Tete Xiao, Spencer Whitehead, Alexander C. Berg, Wan-Yen Lo, Piotr Dollár, and Ross Girshick. Segment anything. arXiv:2304.02643, 2023.

- Li et al. [2023a] Junnan Li, Dongxu Li, Silvio Savarese, and Steven Hoi. BLIP-2: bootstrapping language-image pre-training with frozen image encoders and large language models. In International conference on machine learning (ICML), 2023a.

- Li et al. [2023b] Tianle Li, Max Ku, Cong Wei, and Wenhu Chen. Dreamedit: Subject-driven image editing. arXiv preprint arXiv:2306.12624, 2023b.

- Liu et al. [2023] Shilong Liu, Zhaoyang Zeng, Tianhe Ren, Feng Li, Hao Zhang, Jie Yang, Chunyuan Li, Jianwei Yang, Hang Su, Jun Zhu, et al. Grounding dino: Marrying dino with grounded pre-training for open-set object detection. arXiv preprint arXiv:2303.05499, 2023.

- Liu et al. [2021] Ze Liu, Yutong Lin, Yue Cao, Han Hu, Yixuan Wei, Zheng Zhang, Stephen Lin, and Baining Guo. Swin transformer: Hierarchical vision transformer using shifted windows. In Proceedings of the IEEE/CVF international conference on computer vision (ICCV), pages 10012–10022, 2021.

- Lugmayr et al. [2022] Andreas Lugmayr, Martin Danelljan, Andres Romero, Fisher Yu, Radu Timofte, and Luc Van Gool. Repaint: Inpainting using denoising diffusion probabilistic models. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), pages 11461–11471, 2022.

- Meng et al. [2022] Chenlin Meng, Yutong He, Yang Song, Jiaming Song, Jiajun Wu, Jun-Yan Zhu, and Stefano Ermon. SDEdit: Guided image synthesis and editing with stochastic differential equations. In International Conference on Learning Representations (ICLR), 2022.

- Mokady et al. [2023] Ron Mokady, Amir Hertz, Kfir Aberman, Yael Pritch, and Daniel Cohen-Or. Null-text inversion for editing real images using guided diffusion models. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), pages 6038–6047, 2023.

- Nitzan et al. [2022] Yotam Nitzan, Kfir Aberman, Qiurui He, Orly Liba, Michal Yarom, Yossi Gandelsman, Inbar Mosseri, Yael Pritch, and Daniel Cohen-Or. Mystyle: A personalized generative prior. ACM Transactions on Graphics (TOG), 41(6):1–10, 2022.

- Patashnik et al. [2021] Or Patashnik, Zongze Wu, Eli Shechtman, Daniel Cohen-Or, and Dani Lischinski. Styleclip: Text-driven manipulation of stylegan imagery. In Proceedings of the IEEE/CVF International Conference on Computer Vision (ICCV), pages 2085–2094, 2021.

- Radford et al. [2021] Alec Radford, Jong Wook Kim, Chris Hallacy, Aditya Ramesh, Gabriel Goh, Sandhini Agarwal, Girish Sastry, Amanda Askell, Pamela Mishkin, Jack Clark, et al. Learning transferable visual models from natural language supervision. In International conference on machine learning (ICML), pages 8748–8763. PMLR, 2021.

- Ramesh et al. [2022] Aditya Ramesh, Prafulla Dhariwal, Alex Nichol, Casey Chu, and Mark Chen. Hierarchical text-conditional image generation with clip latents. arXiv preprint arXiv:2204.06125, 2022.

- Rombach et al. [2022] Robin Rombach, Andreas Blattmann, Dominik Lorenz, Patrick Esser, and Björn Ommer. High-resolution image synthesis with latent diffusion models. In Proceedings of the IEEE/CVF conference on computer vision and pattern recognition (CVPR), pages 10684–10695, 2022.

- Ruiz et al. [2023a] Nataniel Ruiz, Yuanzhen Li, Varun Jampani, Yael Pritch, Michael Rubinstein, and Kfir Aberman. Dreambooth: Fine tuning text-to-image diffusion models for subject-driven generation. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), pages 22500–22510, 2023a.

- Ruiz et al. [2023b] Nataniel Ruiz, Yuanzhen Li, Varun Jampani, Wei Wei, Tingbo Hou, Yael Pritch, Neal Wadhwa, Michael Rubinstein, and Kfir Aberman. Hyperdreambooth: Hypernetworks for fast personalization of text-to-image models, 2023b.

- Saharia et al. [2022] Chitwan Saharia, William Chan, Saurabh Saxena, Lala Li, Jay Whang, Emily L Denton, Kamyar Ghasemipour, Raphael Gontijo Lopes, Burcu Karagol Ayan, Tim Salimans, et al. Photorealistic text-to-image diffusion models with deep language understanding. Advances in Neural Information Processing Systems (NeurIPS), 35:36479–36494, 2022.

- Schuhmann et al. [2022] Christoph Schuhmann, Romain Beaumont, Richard Vencu, Cade Gordon, Ross Wightman, Mehdi Cherti, Theo Coombes, Aarush Katta, Clayton Mullis, Mitchell Wortsman, et al. Laion-5b: An open large-scale dataset for training next generation image-text models. Advances in Neural Information Processing Systems (NeurIPS), 35:25278–25294, 2022.

- Sheynin et al. [2022] Shelly Sheynin, Oron Ashual, Adam Polyak, Uriel Singer, Oran Gafni, Eliya Nachmani, and Yaniv Taigman. Knn-diffusion: Image generation via large-scale retrieval. arXiv preprint arXiv:2204.02849, 2022.

- Shi et al. [2023] Jing Shi, Wei Xiong, Zhe Lin, and Hyun Joon Jung. Instantbooth: Personalized text-to-image generation without test-time finetuning. arXiv preprint arXiv:2304.03411, 2023.

- Sohl-Dickstein et al. [2015] Jascha Sohl-Dickstein, Eric Weiss, Niru Maheswaranathan, and Surya Ganguli. Deep unsupervised learning using nonequilibrium thermodynamics. In International conference on machine learning, pages 2256–2265. PMLR, 2015.

- Song et al. [2020] Jiaming Song, Chenlin Meng, and Stefano Ermon. Denoising diffusion implicit models. In International Conference on Learning Representations (ICLR), 2020.

- Xie et al. [2023] Shaoan Xie, Zhifei Zhang, Zhe Lin, Tobias Hinz, and Kun Zhang. Smartbrush: Text and shape guided object inpainting with diffusion model. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), pages 22428–22437, 2023.

- Zhang et al. [2023] Xin Zhang, Jiaxian Guo, Paul Yoo, Yutaka Matsuo, and Yusuke Iwasawa. Paste, inpaint and harmonize via denoising: Subject-driven image editing with pre-trained diffusion model. arXiv preprint arXiv:2306.07596, 2023.

Appendix A Failure analysis of Textual Inversion

Figure 6: A failure analysis of Textual Inversion [9] method.

We present a failure analysis of Textual Inversion as shown in Figure 6. The source image is a dog sitting on a meadow. For a prompt “a sitting dog”, the generated images mostly contain a dog sitting on a meadow and the dog’s appearance is not well preserved.

Appendix B Algorithm of optimizing T θ subscript 𝑇 𝜃 T_{\theta}italic_T start_POSTSUBSCRIPT italic_θ end_POSTSUBSCRIPT

Please refer to Algorithm 4 for optimizing T θ subscript 𝑇 𝜃 T_{\theta}italic_T start_POSTSUBSCRIPT italic_θ end_POSTSUBSCRIPT.

Result:T θsuperscript subscript 𝑇 𝜃 T_{\theta}^{}italic_T start_POSTSUBSCRIPT italic_θ end_POSTSUBSCRIPT start_POSTSUPERSCRIPT * end_POSTSUPERSCRIPT

Inputs:

M r subscript 𝑀 𝑟 M_{r}italic_M start_POSTSUBSCRIPT italic_r end_POSTSUBSCRIPT ,

M g subscript 𝑀 𝑔 M_{g}italic_M start_POSTSUBSCRIPT italic_g end_POSTSUBSCRIPT

P r=P(M r)subscript 𝑃 𝑟 𝑃 subscript 𝑀 𝑟 P_{r}=P(M_{r})italic_P start_POSTSUBSCRIPT italic_r end_POSTSUBSCRIPT = italic_P ( italic_M start_POSTSUBSCRIPT italic_r end_POSTSUBSCRIPT ) ,

P g=P(M g)subscript 𝑃 𝑔 𝑃 subscript 𝑀 𝑔 P_{g}=P(M_{g})italic_P start_POSTSUBSCRIPT italic_g end_POSTSUBSCRIPT = italic_P ( italic_M start_POSTSUBSCRIPT italic_g end_POSTSUBSCRIPT )

for l=[1,…,L]𝑙 1 normal-…𝐿 l=[1,...,L]italic_l = [ 1 , … , italic_L ] do

for p t∈P t subscript 𝑝 𝑡 subscript 𝑃 𝑡 p_{t}\in P_{t}italic_p start_POSTSUBSCRIPT italic_t end_POSTSUBSCRIPT ∈ italic_P start_POSTSUBSCRIPT italic_t end_POSTSUBSCRIPT do

for p g∈P g subscript 𝑝 𝑔 subscript 𝑃 𝑔 p_{g}\in P_{g}italic_p start_POSTSUBSCRIPT italic_g end_POSTSUBSCRIPT ∈ italic_P start_POSTSUBSCRIPT italic_g end_POSTSUBSCRIPT do

if x<m 𝑥 𝑚 x<m italic_x < italic_m then

Algorithm 4 Algorithm of optimizing T θ subscript 𝑇 𝜃 T_{\theta}italic_T start_POSTSUBSCRIPT italic_θ end_POSTSUBSCRIPT. P(M)∈ℝ N×3 𝑃 𝑀 superscript ℝ 𝑁 3 P(M)\in\mathbb{R}^{N\times 3}italic_P ( italic_M ) ∈ blackboard_R start_POSTSUPERSCRIPT italic_N × 3 end_POSTSUPERSCRIPT returns the coordinates where M==1 M==1 italic_M = = 1 and appends 1 1 1 1’s after the coordinates.

Appendix C Results for personalized subject replacement

We show more results for the personalized subject replacement in Figure 7.

Figure 7: Results for personalized subject replacement.

Xet Storage Details

- Size:

- 94 kB

- Xet hash:

- fe793b5ece0de162386f143164f594ecbe76471fe71f287979ea87547bbe7fdf

Xet efficiently stores files, intelligently splitting them into unique chunks and accelerating uploads and downloads. More info.