Buckets:

Title: Creating High-quality 3D Content by Bridging the Gap Between Text-to-2D and Text-to-3D Generation

URL Source: https://arxiv.org/html/2312.00085

Published Time: Wed, 31 Jul 2024 00:24:30 GMT

Markdown Content: \useunder

\ul

(2024)

Abstract.

In recent times, automatic text-to-3D content creation has made significant progress, driven by the development of pretrained 2D diffusion models. Existing text-to-3D methods typically optimize the 3D representation to ensure that the rendered image aligns well with the given text, as evaluated by the pretrained 2D diffusion model. Nevertheless, a substantial domain gap exists between 2D images and 3D assets, primarily attributed to variations in camera-related attributes and the exclusive presence of foreground objects. Consequently, employing 2D diffusion models directly for optimizing 3D representations may lead to suboptimal outcomes. To address this issue, we present X-Dreamer, a novel approach for high-quality text-to-3D content creation that effectively bridges the gap between text-to-2D and text-to-3D synthesis. The key components of X-Dreamer are two innovative designs: Camera-Guided Low-Rank Adaptation (CG-LoRA) and Attention-Mask Alignment (AMA) Loss. CG-LoRA dynamically incorporates camera information into the pretrained diffusion models by employing camera-dependent generation for trainable parameters. This integration makes the 2D diffusion model camera-sensitive. AMA loss guides the attention map of the pretrained diffusion model using the binary mask of the 3D object, prioritizing the creation of the foreground object. This module ensures that the model focuses on generating accurate and detailed foreground objects. Extensive evaluations demonstrate the effectiveness of our proposed method compared to existing text-to-3D approaches. Our project webpage: https://anonymous-11111.github.io/. Our code is available at https://github.com/xmu-xiaoma666/X-Dreamer.

Text-to-3D Generation, Vision and Language, Domain Gap

††copyright: acmlicensed††journalyear: 2024††doi: XXXXXXX.XXXXXXX††journal: JACM††journalvolume: 37††journalnumber: 4††article: 111††publicationmonth: 8††ccs: Applied computing Media arts††ccs: Applied computing Computer-aided design††ccs: Applied computing Computer-Fine arts 1. Introduction

Figure 1. The outputs of the text-to-2D generation model (left) and the text-to-3D generation model (right) under the same text prompt, i.e., “A statue of Leonardo DiCaprio’s head”.

Figure 2. Text-to-3D generation results of the proposed X-Dreamer.

The field of text-to-3D synthesis, which seeks to generate superior 3D content predicated on input textual descriptions, has shown significant potential to impact a diverse range of applications. These applications extend beyond traditional areas such as architecture, animation, and gaming, and encompass contemporary domains like virtual and augmented reality.

In recent years, extensive research(Chen et al., 2023b; Yu et al., 2023; Huang et al., 2023b) has demonstrated significant performance improvement in the text-to-2D generation task(Ramesh et al., 2022; Saharia et al., 2022; Rombach et al., 2022; Balaji et al., 2022; Wu et al., 2024) by leveraging pretrained diffusion models(Sohl-Dickstein et al., 2015; Ho et al., 2020; Song et al., 2020) on a large-scale text-image dataset(Schuhmann et al., 2022). Building on these advancements, DreamFusion(Poole et al., 2022) introduces an effective approach that utilizes a pretrained 2D diffusion model(Saharia et al., 2022) to autonomously generate 3D assets from text, eliminating the need for a dedicated 3D asset dataset. A key innovation introduced by DreamFusion is the Score Distillation Sampling (SDS) algorithm. This algorithm aims to optimize a single 3D representation, such as NeRF(Mildenhall et al., 2021), to ensure that rendered images from any camera perspective maintain a high likelihood with the given text, as evaluated by the pretrained 2D diffusion model. Inspired by the groundbreaking SDS algorithm, several recent works(Lin et al., 2023; Chen et al., 2023a; Metzer et al., 2023; Wang et al., 2023a, c) have emerged, envisioning the text-to-3D generation task through the application of pretrained 2D diffusion models.

While text-to-3D generation has made significant strides through the utilization of pretrained text-to-2D diffusion models(Saharia et al., 2022; Zhang et al., 2023; Kumari et al., 2023), it is crucial to recognize and address the persistent and substantial domain gap that remains between text-to-2D and text-to-3D generation. This distinction is clearly illustrated in Fig.1. To begin with, the text-to-2D model produces camera-independent generation results, focusing on generating high-quality images from specific angles while disregarding other angles. In contrast, 3D content creation is intricately tied to camera parameters such as position, shooting angle, and field of view. As a result, a text-to-3D model must generate high-quality results across all possible camera parameters. This fundamental difference emphasizes the necessity for innovative approaches that enable the pretrained diffusion model to consider camera parameters. Furthermore, a text-to-2D generation model(Sun et al., 2024; Chen et al., 2023c; Ruan et al., 2023; Qu et al., 2023; Lu et al., 2023; Fei et al., 2024a, c) must simultaneously generate both foreground and background elements while maintaining the overall coherence of the image. Conversely, a text-to-3D generation model(Raj et al., 2023; Xu et al., 2023; Lorraine et al., 2023) only needs to concentrate on creating the foreground object. This distinction allows text-to-3D models to allocate more resources and attention to precisely represent and generate the foreground object. Consequently, the domain gap between text-to-2D and text-to-3D generation poses a significant performance obstacle when directly employing pretrained 2D diffusion models for 3D asset creation.

In this study, we present a pioneering framework, X-Dreamer, designed to address the domain gap between text-to-2D and text-to-3D generation, thereby facilitating the creation of high-quality text-to-3D content. Our framework incorporates two innovative designs that are specifically tailored to address the aforementioned challenges. Firstly, existing approaches(Lin et al., 2023; Chen et al., 2023a; Metzer et al., 2023; Wang et al., 2023a; Ma et al., 2022, 2023a) commonly employ 2D pretrained diffusion models(Saharia et al., 2022; Rombach et al., 2022) for text-to-3D generation, which lack inherent linkage to camera parameters. To address this limitation and ensure that our text-to-3D model produces results that are directly influenced by camera parameters, we introduce Camera-Guided Low-Rank Adaptation (CG-LoRA) to fine-tune the pretrained 2D diffusion model. Notably, the parameters of CG-LoRA are dynamically generated based on the camera information during each iteration, establishing a robust relationship between the text-to-3D model and camera parameters. Furthermore, pretrained text-to-2D diffusion models allocate attention to both foreground and background generation, whereas the creation of 3D assets necessitates a stronger focus on accurately generating foreground objects. To address this requirement, we introduce Attention-Mask Alignment (AMA) Loss, which leverages the rendered binary mask of the 3D object to guide the attention map of the pretrained 2D stable diffusion model(Rombach et al., 2022). By incorporating this module, X-Dreamer prioritizes the generation of foreground objects, resulting in a significant enhancement of the overall quality of the generated 3D content.

We present a compelling demonstration of the effectiveness of X-Dreamer in synthesizing high-quality 3D assets based on textual cues, as shown in Fig.2. By incorporating CG-LoRA and AMA loss to address the domain gap between text-to-2D and text-to-3D generation, our proposed framework exhibits substantial advancements over prior methods in text-to-3D generation. In summary, our study contributes to the field in three key aspects:

- •We propose a novel method, X-Dreamer, for high-quality text-to-3D content creation, effectively bridging the domain gap between text-to-2D and text-to-3D generation.

- •To enhance the alignment between the generated results and the camera perspective, we propose CG-LoRA, which leverages camera information to dynamically generate CG-LoRA parameters for 2D diffusion models.

- •To prioritize the creation of foreground objects in the text-to-3D model, we introduce AMA loss, which utilizes binary masks of the foreground 3D object to guide the attention maps of the 2D diffusion model.

- Related Work

2.1. Text-to-3D Content Creation

In recent years, there has been a significant surge in interest surrounding the evolution of text-to-3D generation(Michel et al., 2022; Poole et al., 2022; Li et al., 2023a; Chen et al., 2023d; Li et al., 2024a; Sella et al., 2023; Wu et al., 2024; Yang et al., 2023; Fei et al., 2024b; Babu et al., 2023; Fei et al., 2024d). This growing field has been propelled, in part, by advancements in pretrained vision-and-language models, such as CLIP(Radford et al., 2021), as well as diffusion models like Stable Diffusion(Rombach et al., 2022) and Imagen(Saharia et al., 2022). Contemporary text-to-3D models can generally be classified into two distinct categories: the CLIP-based text-to-3D approach and the diffusion-based text-to-3D approach. The CLIP-based text-to-3D approach(Michel et al., 2022; Ma et al., 2023b; Lei et al., 2022; Jain et al., 2022; Mohammad Khalid et al., 2022; Wei et al., 2023) employs CLIP encoders(Radford et al., 2021) to project textual descriptions and rendered images derived from the 3D object into a modal-shared feature space. Subsequently, CLIP loss(Jiang et al., 2023a; Wang et al., 2023b; Ding et al., 2023) is harnessed to align features from both modalities, optimizing the 3D representation to conform to the textual description. Various scholars have made significant contributions to this field. For instance, Michel et al.(Michel et al., 2022) are pioneers in proposing the use of CLIP loss to harmonize the text prompt with the rendered images of the 3D object, thereby enhancing text-to-3D generation. Ma et al.(Ma et al., 2023b) introduce dynamic textual guidance during 3D object synthesis to improve convergence speed and generation performance. However, these approaches have inherent limitations, as they tend to generate 3D representations with a scarcity of geometry and appearance detail. To overcome this shortcoming, the diffusion-based text-to-3D approach(Poole et al., 2022; Lin et al., 2023; Chen et al., 2023a; Tang et al., 2023; Jiang et al., 2023b; Huang et al., 2023a) leverages pretrained text-to-2D diffusion models(Rombach et al., 2022; Saharia et al., 2022) to guide the optimization of 3D representations. Central to these models(Cao et al., 2023; Richardson et al., 2023; Huang et al., 2024; Tang et al., 2023; Li et al., 2023b) is the application of SDS loss(Poole et al., 2022) to align the rendered images stemming from a variety of camera perspectives with the textual description. Specifically, given the target text prompt, Lin et al.(Lin et al., 2023) leverage a coarse-to-fine pipeline to generate high-resolution 3D content. Chen et al.(Chen et al., 2023a) decouple geometry modeling and appearance modeling to generate realistic 3D assets. For specific purposes, some researchers(Seo et al., 2023; Wang et al., 2023c) integrate trainable LoRA(Hu et al., 2021) branches into pretrained diffusion models. For instance, Seo et al.(Seo et al., 2023) put forth 3DFuse, a model that harnesses the power of LoRA to comprehend object semantics. Wang et al.(Wang et al., 2023c) introduce ProlificDreamer, where the role of LoRA is to evaluate the score of the variational distribution for 3D parameters. However, the LoRA parameter begins its journey from random initialization and maintains its independence from the camera and text. To address these limitations, we present two innovative modules: CG-LoRA and AMA loss. These modules are designed to enhance the model’s ability to consider important camera parameters and prioritize the generation of foreground objects throughout the text-to-3D creation process.

Figure 3. Overview of the proposed X-Dreamer, which consists of geometry learning and appearance learning.

2.2. Low-Rank Adaptation (LoRA)

Low-Rank Adaptation (LoRA)(Hu et al., 2022) is a technique used to reduce memory requirements when fine-tuning a large model(Wang et al., 2024; Achiam et al., 2023; Li et al., 2024b; Chen et al., 2023e; Liu et al., 2024; Luo et al., 2024; Li et al., 2023c). It involves injecting only a small set of trainable parameters into the pretrained model, while keeping the original parameters fixed. During the optimization process, gradients are passed through the fixed pretrained model weights to the LoRA adapter, which is then updated to optimize the loss function. LoRA has been applied in various fields, including natural language processing(Hu et al., 2021; Brown et al., 2020), image synthesis(Zhang et al., 2023) and 3D generation(Seo et al., 2023; Wang et al., 2023c). To achieve low-rank adaptation, a linear projection with a pretrained weight matrix 𝐖 0∈ℝ d in×d out subscript 𝐖 0 superscript ℝ subscript 𝑑 𝑖 𝑛 subscript 𝑑 𝑜 𝑢 𝑡\mathbf{W}{0}\in\mathbb{R}^{d{in}\times d_{out}}bold_W start_POSTSUBSCRIPT 0 end_POSTSUBSCRIPT ∈ blackboard_R start_POSTSUPERSCRIPT italic_d start_POSTSUBSCRIPT italic_i italic_n end_POSTSUBSCRIPT × italic_d start_POSTSUBSCRIPT italic_o italic_u italic_t end_POSTSUBSCRIPT end_POSTSUPERSCRIPT is augmented with an additional low-rank factorized projection. This augmentation is represented as 𝐖 0+Δ𝐖=𝐖 0+𝐀𝐁 subscript 𝐖 0 Δ 𝐖 subscript 𝐖 0 𝐀𝐁\mathbf{W}{0}+\Delta\mathbf{W}=\mathbf{W}{0}+\mathbf{A}\mathbf{B}bold_W start_POSTSUBSCRIPT 0 end_POSTSUBSCRIPT + roman_Δ bold_W = bold_W start_POSTSUBSCRIPT 0 end_POSTSUBSCRIPT + bold_AB, where 𝐀∈ℝ d in×r 𝐀 superscript ℝ subscript 𝑑 𝑖 𝑛 𝑟\mathbf{A}\in\mathbb{R}^{d_{in}\times r}bold_A ∈ blackboard_R start_POSTSUPERSCRIPT italic_d start_POSTSUBSCRIPT italic_i italic_n end_POSTSUBSCRIPT × italic_r end_POSTSUPERSCRIPT, 𝐁∈ℝ r×d out 𝐁 superscript ℝ 𝑟 subscript 𝑑 𝑜 𝑢 𝑡\mathbf{B}\in\mathbb{R}^{r\times d_{out}}bold_B ∈ blackboard_R start_POSTSUPERSCRIPT italic_r × italic_d start_POSTSUBSCRIPT italic_o italic_u italic_t end_POSTSUBSCRIPT end_POSTSUPERSCRIPT, and r≪min(d in,d out)much-less-than 𝑟 subscript 𝑑 𝑖 𝑛 subscript 𝑑 𝑜 𝑢 𝑡 r\ll\min(d_{in},d_{out})italic_r ≪ roman_min ( italic_d start_POSTSUBSCRIPT italic_i italic_n end_POSTSUBSCRIPT , italic_d start_POSTSUBSCRIPT italic_o italic_u italic_t end_POSTSUBSCRIPT ). During training, 𝐖 0 subscript 𝐖 0\mathbf{W}{0}bold_W start_POSTSUBSCRIPT 0 end_POSTSUBSCRIPT remains fixed, while 𝐀 𝐀\mathbf{A}bold_A and 𝐁 𝐁\mathbf{B}bold_B are trainable. The modified forward pass, given the original forward pass 𝐘=𝐗𝐖 0 𝐘 subscript 𝐗𝐖 0\mathbf{Y}=\mathbf{X}\mathbf{W}{0}bold_Y = bold_XW start_POSTSUBSCRIPT 0 end_POSTSUBSCRIPT, can be formulated as follows:

(1)𝐘=𝐗𝐖 0+𝐗𝐀𝐁.𝐘 subscript 𝐗𝐖 0 𝐗𝐀𝐁\mathbf{Y}=\mathbf{X}\mathbf{W}_{0}+\mathbf{X}\mathbf{A}\mathbf{B}.bold_Y = bold_XW start_POSTSUBSCRIPT 0 end_POSTSUBSCRIPT + bold_XAB .

In this paper, we introduce CG-LoRA, which involves the dynamic generation of trainable parameters for 𝐀 𝐀\mathbf{A}bold_A based on camera information. This technique allows for integrating perspective information, including camera parameters and direction-aware descriptions, into the pretrained text-to-2D diffusion model. As a result, our method significantly enhances text-to-3D generation capabilities.

- Approach

3.1. Architecture

In this section, we present a comprehensive introduction to the proposed X-Dreamer, which consists of two main stages: geometry learning and appearance learning. For geometry learning, we employ DMTet(Shen et al., 2021) as the 3D representation. DMTet is an MLP parameterized with Φ dmt subscript Φ 𝑑 𝑚 𝑡\Phi_{dmt}roman_Φ start_POSTSUBSCRIPT italic_d italic_m italic_t end_POSTSUBSCRIPT and is initialized with a 3D ellipsoid using the mean squared error (MSE) loss ℒ MSE subscript ℒ 𝑀 𝑆 𝐸\mathcal{L}{MSE}caligraphic_L start_POSTSUBSCRIPT italic_M italic_S italic_E end_POSTSUBSCRIPT. Subsequently, we optimize DMTet and CG-LoRA using the SDS loss(Poole et al., 2022)ℒ SDS subscript ℒ 𝑆 𝐷 𝑆\mathcal{L}{SDS}caligraphic_L start_POSTSUBSCRIPT italic_S italic_D italic_S end_POSTSUBSCRIPT and the proposed AMA loss ℒ AMA subscript ℒ 𝐴 𝑀 𝐴\mathcal{L}{AMA}caligraphic_L start_POSTSUBSCRIPT italic_A italic_M italic_A end_POSTSUBSCRIPT to ensure the alignment between the 3D representation and the input text prompt. For appearance learning, we leverage bidirectional reflectance distribution function (BRDF) modeling(Torrance and Sparrow, 1967) following the previous approach(Chen et al., 2023a). Specifically, we utilize an MLP with trainable parameters Φ mat subscript Φ 𝑚 𝑎 𝑡\Phi{mat}roman_Φ start_POSTSUBSCRIPT italic_m italic_a italic_t end_POSTSUBSCRIPT to predict surface materials. Similar to the geometry learning stage, we optimize Φ mat subscript Φ 𝑚 𝑎 𝑡\Phi_{mat}roman_Φ start_POSTSUBSCRIPT italic_m italic_a italic_t end_POSTSUBSCRIPT and CG-LoRA using the SDS loss ℒ SDS subscript ℒ 𝑆 𝐷 𝑆\mathcal{L}{SDS}caligraphic_L start_POSTSUBSCRIPT italic_S italic_D italic_S end_POSTSUBSCRIPT and the AMA loss ℒ AMA subscript ℒ 𝐴 𝑀 𝐴\mathcal{L}{AMA}caligraphic_L start_POSTSUBSCRIPT italic_A italic_M italic_A end_POSTSUBSCRIPT to achieve alignment between the 3D representation and the text prompt. Fig.3 provides a detailed depiction of our proposed X-Dreamer.

3.1.1. Geometry Learning

For geometry learning, an MLP network Φ dmt subscript Φ 𝑑 𝑚 𝑡\Phi_{dmt}roman_Φ start_POSTSUBSCRIPT italic_d italic_m italic_t end_POSTSUBSCRIPT is utilized to parameterize DMTet as a 3D representation. To enhance the stability of geometry modeling, we employ a 3D ellipsoid as the initial configuration for DMTet Φ dmt subscript Φ 𝑑 𝑚 𝑡\Phi_{dmt}roman_Φ start_POSTSUBSCRIPT italic_d italic_m italic_t end_POSTSUBSCRIPT. For each vertex v i∈V T subscript 𝑣 𝑖 subscript 𝑉 𝑇 v_{i}\in V_{T}italic_v start_POSTSUBSCRIPT italic_i end_POSTSUBSCRIPT ∈ italic_V start_POSTSUBSCRIPT italic_T end_POSTSUBSCRIPT belonging to the tetrahedral grid T 𝑇 T italic_T, we train Φ dmt subscript Φ 𝑑 𝑚 𝑡\Phi_{dmt}roman_Φ start_POSTSUBSCRIPT italic_d italic_m italic_t end_POSTSUBSCRIPT to predict two important values: the SDF value s(v i)𝑠 subscript 𝑣 𝑖 s(v_{i})italic_s ( italic_v start_POSTSUBSCRIPT italic_i end_POSTSUBSCRIPT ) and the deformation offset δ(v i)𝛿 subscript 𝑣 𝑖\delta(v_{i})italic_δ ( italic_v start_POSTSUBSCRIPT italic_i end_POSTSUBSCRIPT ). To initialize Φ dmt subscript Φ 𝑑 𝑚 𝑡\Phi_{dmt}roman_Φ start_POSTSUBSCRIPT italic_d italic_m italic_t end_POSTSUBSCRIPT with the 3D ellipsoid, we sample a set of N 𝑁 N italic_N points {p i∈ℝ 3}|i=1 N evaluated-at subscript 𝑝 𝑖 superscript ℝ 3 𝑖 1 𝑁{p_{i}\in\mathbb{R}^{3}}|{i=1}^{N}{ italic_p start_POSTSUBSCRIPT italic_i end_POSTSUBSCRIPT ∈ blackboard_R start_POSTSUPERSCRIPT 3 end_POSTSUPERSCRIPT } | start_POSTSUBSCRIPT italic_i = 1 end_POSTSUBSCRIPT start_POSTSUPERSCRIPT italic_N end_POSTSUPERSCRIPT approximately distributed on the surface of an ellipsoid and compute the corresponding SDF values {SDF(p i)}|i=1 N evaluated-at 𝑆 𝐷 𝐹 subscript 𝑝 𝑖 𝑖 1 𝑁{{SDF}(p{i})}|{i=1}^{N}{ italic_S italic_D italic_F ( italic_p start_POSTSUBSCRIPT italic_i end_POSTSUBSCRIPT ) } | start_POSTSUBSCRIPT italic_i = 1 end_POSTSUBSCRIPT start_POSTSUPERSCRIPT italic_N end_POSTSUPERSCRIPT. Subsequently, we optimize Φ dmt subscript Φ 𝑑 𝑚 𝑡\Phi{dmt}roman_Φ start_POSTSUBSCRIPT italic_d italic_m italic_t end_POSTSUBSCRIPT using MSE loss. This optimization process ensures that Φ dmt subscript Φ 𝑑 𝑚 𝑡\Phi_{dmt}roman_Φ start_POSTSUBSCRIPT italic_d italic_m italic_t end_POSTSUBSCRIPT effectively initializes DMTet to resemble the 3D ellipsoid. The formulation of the MSE loss is given by:

(2)ℒ MSE=1 N∑i=1 N(s(p i;Φ dmt)−SDF(p i))2.subscript ℒ 𝑀 𝑆 𝐸 1 𝑁 superscript subscript 𝑖 1 𝑁 superscript 𝑠 subscript 𝑝 𝑖 subscript Φ 𝑑 𝑚 𝑡 𝑆 𝐷 𝐹 subscript 𝑝 𝑖 2\mathcal{L}{MSE}=\frac{1}{N}\sum{i=1}^{N}\left(s(p_{i};\Phi_{dmt})-SDF(p_{i}% )\right)^{2}.caligraphic_L start_POSTSUBSCRIPT italic_M italic_S italic_E end_POSTSUBSCRIPT = divide start_ARG 1 end_ARG start_ARG italic_N end_ARG ∑ start_POSTSUBSCRIPT italic_i = 1 end_POSTSUBSCRIPT start_POSTSUPERSCRIPT italic_N end_POSTSUPERSCRIPT ( italic_s ( italic_p start_POSTSUBSCRIPT italic_i end_POSTSUBSCRIPT ; roman_Φ start_POSTSUBSCRIPT italic_d italic_m italic_t end_POSTSUBSCRIPT ) - italic_S italic_D italic_F ( italic_p start_POSTSUBSCRIPT italic_i end_POSTSUBSCRIPT ) ) start_POSTSUPERSCRIPT 2 end_POSTSUPERSCRIPT .

After initializing the geometry, our objective is to align the geometry of DMTet with the input text prompt. Specifically, we generate the normal map 𝒏 𝒏\boldsymbol{n}bold_italic_n and the object mask 𝒎 𝒎\boldsymbol{m}bold_italic_m from the initialized DMTet Φ dmt subscript Φ 𝑑 𝑚 𝑡\Phi_{dmt}roman_Φ start_POSTSUBSCRIPT italic_d italic_m italic_t end_POSTSUBSCRIPT by employing a differentiable rendering technique(Torrance and Sparrow, 1967), given a randomly sampled camera pose 𝒄 𝒄\boldsymbol{c}bold_italic_c. Subsequently, we input the normal map 𝒏 𝒏\boldsymbol{n}bold_italic_n into the frozen stable diffusion (SD) with a trainable CG-LoRA and update Φ dmt subscript Φ 𝑑 𝑚 𝑡\Phi_{dmt}roman_Φ start_POSTSUBSCRIPT italic_d italic_m italic_t end_POSTSUBSCRIPT using the SDS loss, which is defined as follows:

(3)∇Φ dmt ℒ SDS=𝔼 t,ϵ[w(t)(ϵ^Θ(𝒏 t;𝒚,t)−ϵ)∂𝒏∂Φ dmt],subscript∇subscript Φ 𝑑 𝑚 𝑡 subscript ℒ SDS subscript 𝔼 𝑡 bold-italic-ϵ delimited-[]𝑤 𝑡 subscript^bold-italic-ϵ Θ subscript 𝒏 𝑡 𝒚 𝑡 bold-italic-ϵ 𝒏 subscript Φ 𝑑 𝑚 𝑡\small\nabla_{\Phi_{dmt}}\mathcal{L}{\mathrm{SDS}}=\mathbb{E}{t,\boldsymbol{% \epsilon}}\left[w(t)\left(\hat{\boldsymbol{\epsilon}}{\Theta}(\boldsymbol{n}% {t};\boldsymbol{y},t)-\boldsymbol{\epsilon}\right)\frac{\partial\boldsymbol{n}% }{\partial{\Phi_{dmt}}}\right],∇ start_POSTSUBSCRIPT roman_Φ start_POSTSUBSCRIPT italic_d italic_m italic_t end_POSTSUBSCRIPT end_POSTSUBSCRIPT caligraphic_L start_POSTSUBSCRIPT roman_SDS end_POSTSUBSCRIPT = blackboard_E start_POSTSUBSCRIPT italic_t , bold_italic_ϵ end_POSTSUBSCRIPT [ italic_w ( italic_t ) ( over^ start_ARG bold_italic_ϵ end_ARG start_POSTSUBSCRIPT roman_Θ end_POSTSUBSCRIPT ( bold_italic_n start_POSTSUBSCRIPT italic_t end_POSTSUBSCRIPT ; bold_italic_y , italic_t ) - bold_italic_ϵ ) divide start_ARG ∂ bold_italic_n end_ARG start_ARG ∂ roman_Φ start_POSTSUBSCRIPT italic_d italic_m italic_t end_POSTSUBSCRIPT end_ARG ] ,

where Θ Θ\Theta roman_Θ represents the parameter of SD, ϵ^Θ(𝒏 t;𝒚,t)subscript^bold-italic-ϵ Θ subscript 𝒏 𝑡 𝒚 𝑡\hat{\boldsymbol{\epsilon}}{\Theta}(\boldsymbol{n}{t};\boldsymbol{y},t)over^ start_ARG bold_italic_ϵ end_ARG start_POSTSUBSCRIPT roman_Θ end_POSTSUBSCRIPT ( bold_italic_n start_POSTSUBSCRIPT italic_t end_POSTSUBSCRIPT ; bold_italic_y , italic_t ) denotes the predicted noise of SD given the noise level t 𝑡 t italic_t and text embedding 𝒚 𝒚\boldsymbol{y}bold_italic_y. Additionally, 𝒏 t=α t𝒏+σ tϵ subscript 𝒏 𝑡 subscript 𝛼 𝑡 𝒏 subscript 𝜎 𝑡 bold-italic-ϵ\boldsymbol{n}{t}=\alpha{t}\boldsymbol{n}+\sigma_{t}\boldsymbol{\epsilon}bold_italic_n start_POSTSUBSCRIPT italic_t end_POSTSUBSCRIPT = italic_α start_POSTSUBSCRIPT italic_t end_POSTSUBSCRIPT bold_italic_n + italic_σ start_POSTSUBSCRIPT italic_t end_POSTSUBSCRIPT bold_italic_ϵ, where ϵ∼𝒩(𝟎,𝑰)similar-to bold-italic-ϵ 𝒩 0 𝑰\boldsymbol{\epsilon}\sim\mathcal{N}(\mathbf{0},\boldsymbol{I})bold_italic_ϵ ∼ caligraphic_N ( bold_0 , bold_italic_I ) represents noise sampled from a normal distribution. The implementation of w(t)𝑤 𝑡 w(t)italic_w ( italic_t ), α t subscript 𝛼 𝑡\alpha_{t}italic_α start_POSTSUBSCRIPT italic_t end_POSTSUBSCRIPT, and σ t subscript 𝜎 𝑡\sigma_{t}italic_σ start_POSTSUBSCRIPT italic_t end_POSTSUBSCRIPT is based on the DreamFusion(Poole et al., 2022).

Furthermore, to focus SD on generating foreground objects, we introduce an additional AMA loss to align the object mask 𝒎 𝒎\boldsymbol{m}bold_italic_m with the attention map of SD, given by:

(4)ℒ AMA=1 L∑i=1 L|𝒂 i−η(𝒎)|,subscript ℒ 𝐴 𝑀 𝐴 1 𝐿 superscript subscript 𝑖 1 𝐿 subscript 𝒂 𝑖 𝜂 𝒎\mathcal{L}{AMA}=\frac{1}{L}\sum{i=1}^{L}|\boldsymbol{a}_{i}-\eta(% \boldsymbol{m})|,caligraphic_L start_POSTSUBSCRIPT italic_A italic_M italic_A end_POSTSUBSCRIPT = divide start_ARG 1 end_ARG start_ARG italic_L end_ARG ∑ start_POSTSUBSCRIPT italic_i = 1 end_POSTSUBSCRIPT start_POSTSUPERSCRIPT italic_L end_POSTSUPERSCRIPT | bold_italic_a start_POSTSUBSCRIPT italic_i end_POSTSUBSCRIPT - italic_η ( bold_italic_m ) | ,

where L 𝐿 L italic_L denotes the number of attention layers, and 𝒂 i subscript 𝒂 𝑖\boldsymbol{a}_{i}bold_italic_a start_POSTSUBSCRIPT italic_i end_POSTSUBSCRIPT is the attention map of i 𝑖 i italic_i-th attention layer. The function η(⋅)𝜂⋅\eta(\cdot)italic_η ( ⋅ ) is employed to resize the rendered mask, ensuring its dimensions align with those of the attention maps.

Figure 4. Illustration of Camera-Guided Low-Rank Adaptation (CG-LoRA).

3.1.2. Appearance Learning

After obtaining the geometry of the 3D object, our objective is to compute its appearance using the Physically-Based Rendering (PBR) material model(McAuley et al., 2012). The material model comprises the diffuse term 𝒌 d∈ℝ 3 subscript 𝒌 𝑑 superscript ℝ 3\boldsymbol{k}{d}\in\mathbb{R}^{3}bold_italic_k start_POSTSUBSCRIPT italic_d end_POSTSUBSCRIPT ∈ blackboard_R start_POSTSUPERSCRIPT 3 end_POSTSUPERSCRIPT, the roughness and metallic term 𝒌 rm∈ℝ 2 subscript 𝒌 𝑟 𝑚 superscript ℝ 2\boldsymbol{k}{rm}\in\mathbb{R}^{2}bold_italic_k start_POSTSUBSCRIPT italic_r italic_m end_POSTSUBSCRIPT ∈ blackboard_R start_POSTSUPERSCRIPT 2 end_POSTSUPERSCRIPT, and the normal variation term 𝒌 n∈ℝ 3 subscript 𝒌 𝑛 superscript ℝ 3\boldsymbol{k}{n}\in\mathbb{R}^{3}bold_italic_k start_POSTSUBSCRIPT italic_n end_POSTSUBSCRIPT ∈ blackboard_R start_POSTSUPERSCRIPT 3 end_POSTSUPERSCRIPT. Firstly, the Diffuse Term 𝒌 d∈ℝ 3 subscript 𝒌 𝑑 superscript ℝ 3\boldsymbol{k}{d}\in\mathbb{R}^{3}bold_italic_k start_POSTSUBSCRIPT italic_d end_POSTSUBSCRIPT ∈ blackboard_R start_POSTSUPERSCRIPT 3 end_POSTSUPERSCRIPT accurately represents how the material responds to diffuse lighting. This term is captured by a three-dimensional vector that denotes the material’s diffuse color. Secondly, the Roughness and Metallic Term 𝒌 rm∈ℝ 2 subscript 𝒌 𝑟 𝑚 superscript ℝ 2\boldsymbol{k}{rm}\in\mathbb{R}^{2}bold_italic_k start_POSTSUBSCRIPT italic_r italic_m end_POSTSUBSCRIPT ∈ blackboard_R start_POSTSUPERSCRIPT 2 end_POSTSUPERSCRIPT effectively captures the material’s roughness and metallic properties. It is represented by a two-dimensional vector that conveys the values of roughness and metallicness. Roughness quantifies the surface smoothness, while metallicness indicates the presence of metallic properties in the material. These terms collectively contribute to the overall visual realism of the rendered scene. Additionally, the Normal Variation Term 𝒌 n∈ℝ 3 subscript 𝒌 𝑛 superscript ℝ 3\boldsymbol{k}{n}\in\mathbb{R}^{3}bold_italic_k start_POSTSUBSCRIPT italic_n end_POSTSUBSCRIPT ∈ blackboard_R start_POSTSUPERSCRIPT 3 end_POSTSUPERSCRIPT plays a crucial role in characterizing variations in surface normals. This term, typically used in conjunction with a normal map, enables the simulation of fine details and textures on the material’s surface. During the rendering process, these three PBR material terms interact to accurately simulate the optical properties exhibited by real-world materials. This integration facilitates rendering engines in achieving high-quality rendering results and generating realistic virtual scenes.

For any point p∈ℝ 3 𝑝 superscript ℝ 3 p\in\mathbb{R}^{3}italic_p ∈ blackboard_R start_POSTSUPERSCRIPT 3 end_POSTSUPERSCRIPT on the surface of the geometry, we utilize an MLP parameterized by Φ mat subscript Φ 𝑚 𝑎 𝑡\Phi_{mat}roman_Φ start_POSTSUBSCRIPT italic_m italic_a italic_t end_POSTSUBSCRIPT to obtain the three material terms, which can be expressed as follows:

(5)(𝒌 d,𝒌 n,𝒌 rm)=MLP(𝒫(p);Φ mat),subscript 𝒌 𝑑 subscript 𝒌 𝑛 subscript 𝒌 𝑟 𝑚 MLP 𝒫 𝑝 subscript Φ 𝑚 𝑎 𝑡(\boldsymbol{k}{d},\boldsymbol{k}{n},\boldsymbol{k}{rm})=\text{MLP}\left(% \mathcal{P}(p);\Phi{mat}\right),( bold_italic_k start_POSTSUBSCRIPT italic_d end_POSTSUBSCRIPT , bold_italic_k start_POSTSUBSCRIPT italic_n end_POSTSUBSCRIPT , bold_italic_k start_POSTSUBSCRIPT italic_r italic_m end_POSTSUBSCRIPT ) = MLP ( caligraphic_P ( italic_p ) ; roman_Φ start_POSTSUBSCRIPT italic_m italic_a italic_t end_POSTSUBSCRIPT ) ,

where 𝒫(⋅)𝒫⋅\mathcal{P}(\cdot)caligraphic_P ( ⋅ ) represents the positional encoding using a hash-grid technique(Müller et al., 2022). Subsequently, each pixel of the rendered image can be computed as follows:

(6)V(p,ω)=∫Ω L i(p,ω i)f(p,ω i,ω)(ω i⋅n p)d ω i,𝑉 𝑝 𝜔 subscript Ω subscript 𝐿 𝑖 𝑝 subscript 𝜔 𝑖 𝑓 𝑝 subscript 𝜔 𝑖 𝜔⋅subscript 𝜔 𝑖 subscript 𝑛 𝑝 differential-d subscript 𝜔 𝑖 V(p,\omega)=\int_{\Omega}L_{i}(p,\omega_{i})f(p,\omega_{i},\omega)(\omega_{i}% \cdot n_{p})\mathrm{d}\omega_{i},italic_V ( italic_p , italic_ω ) = ∫ start_POSTSUBSCRIPT roman_Ω end_POSTSUBSCRIPT italic_L start_POSTSUBSCRIPT italic_i end_POSTSUBSCRIPT ( italic_p , italic_ω start_POSTSUBSCRIPT italic_i end_POSTSUBSCRIPT ) italic_f ( italic_p , italic_ω start_POSTSUBSCRIPT italic_i end_POSTSUBSCRIPT , italic_ω ) ( italic_ω start_POSTSUBSCRIPT italic_i end_POSTSUBSCRIPT ⋅ italic_n start_POSTSUBSCRIPT italic_p end_POSTSUBSCRIPT ) roman_d italic_ω start_POSTSUBSCRIPT italic_i end_POSTSUBSCRIPT ,

where V(p,ω)𝑉 𝑝 𝜔 V(p,\omega)italic_V ( italic_p , italic_ω ) denotes the rendered pixel value from the direction ω 𝜔\omega italic_ω for the surface point p 𝑝 p italic_p. Ω Ω\Omega roman_Ω denotes a hemisphere defined by the set of incident directions ω i subscript 𝜔 𝑖\omega_{i}italic_ω start_POSTSUBSCRIPT italic_i end_POSTSUBSCRIPT satisfying the condition ω i⋅n p≥0⋅subscript 𝜔 𝑖 subscript 𝑛 𝑝 0\omega_{i}\cdot n_{p}\geq 0 italic_ω start_POSTSUBSCRIPT italic_i end_POSTSUBSCRIPT ⋅ italic_n start_POSTSUBSCRIPT italic_p end_POSTSUBSCRIPT ≥ 0, where ω i subscript 𝜔 𝑖\omega_{i}italic_ω start_POSTSUBSCRIPT italic_i end_POSTSUBSCRIPT denotes the incident direction, and n p subscript 𝑛 𝑝 n_{p}italic_n start_POSTSUBSCRIPT italic_p end_POSTSUBSCRIPT represents the surface normal at point p 𝑝 p italic_p. L i(⋅)subscript 𝐿 𝑖⋅L_{i}(\cdot)italic_L start_POSTSUBSCRIPT italic_i end_POSTSUBSCRIPT ( ⋅ ) corresponds to the incident light from an off-the-shelf environment map, and f(⋅)𝑓⋅f(\cdot)italic_f ( ⋅ ) is the Bidirectional Reflectance Distribution Function (BRDF) related to the material properties (i.e., 𝒌 d,𝒌 n,𝒌 rm subscript 𝒌 𝑑 subscript 𝒌 𝑛 subscript 𝒌 𝑟 𝑚\boldsymbol{k}{d},\boldsymbol{k}{n},\boldsymbol{k}{rm}bold_italic_k start_POSTSUBSCRIPT italic_d end_POSTSUBSCRIPT , bold_italic_k start_POSTSUBSCRIPT italic_n end_POSTSUBSCRIPT , bold_italic_k start_POSTSUBSCRIPT italic_r italic_m end_POSTSUBSCRIPT). By aggregating all rendered pixel colors, we obtain a rendered image 𝒙={V(p,ω)}𝒙 𝑉 𝑝 𝜔\boldsymbol{x}={V(p,\omega)}bold_italic_x = { italic_V ( italic_p , italic_ω ) }. Similar to the geometry modeling stage, we feed the rendered image 𝒙 𝒙\boldsymbol{x}bold_italic_x into SD. The optimization objective remains the same as Equ.3 and Equ.4, where the rendered normal map 𝒏 𝒏\boldsymbol{n}bold_italic_n and the parameters of DMTet Φ dmt subscript Φ 𝑑 𝑚 𝑡\Phi{dmt}roman_Φ start_POSTSUBSCRIPT italic_d italic_m italic_t end_POSTSUBSCRIPT are replaced with the rendered image 𝒙 𝒙\boldsymbol{x}bold_italic_x and the parameters of the material encoder Φ mat subscript Φ 𝑚 𝑎 𝑡\Phi_{mat}roman_Φ start_POSTSUBSCRIPT italic_m italic_a italic_t end_POSTSUBSCRIPT, respectively.

3.2. Camera-Guided Low-Rank Adaptation

The domain gap between text-to-2D and text-to-3D generation presents a significant challenge, as discussed in Sec.1, which leads to unsatisfied generated results. To address these issues, we propose Camera-Guided Low-Rank Adaptation (CG-LoRA) as a solution to bridge the domain gap. As depicted in Fig.4, we leverage camera parameters and direction-aware text to guide the generation of parameters in CG-LoRA, enabling X-Dreamer to effectively incorporate camera perspective and direction information.

Specifically, given a text prompt T 𝑇 T italic_T and camera parameters C={x,y,z,ϕ yaw,ϕ pit,θ fov}𝐶 𝑥 𝑦 𝑧 subscript italic-ϕ 𝑦 𝑎 𝑤 subscript italic-ϕ 𝑝 𝑖 𝑡 subscript 𝜃 𝑓 𝑜 𝑣 C={x,y,z,\phi_{yaw},\phi_{pit},\theta_{fov}}italic_C = { italic_x , italic_y , italic_z , italic_ϕ start_POSTSUBSCRIPT italic_y italic_a italic_w end_POSTSUBSCRIPT , italic_ϕ start_POSTSUBSCRIPT italic_p italic_i italic_t end_POSTSUBSCRIPT , italic_θ start_POSTSUBSCRIPT italic_f italic_o italic_v end_POSTSUBSCRIPT }1 1 1 The variables x,y,z,ϕ yaw,ϕ pit,θ fov 𝑥 𝑦 𝑧 subscript italic-ϕ 𝑦 𝑎 𝑤 subscript italic-ϕ 𝑝 𝑖 𝑡 subscript 𝜃 𝑓 𝑜 𝑣 x,y,z,\phi_{yaw},\phi_{pit},\theta_{fov}italic_x , italic_y , italic_z , italic_ϕ start_POSTSUBSCRIPT italic_y italic_a italic_w end_POSTSUBSCRIPT , italic_ϕ start_POSTSUBSCRIPT italic_p italic_i italic_t end_POSTSUBSCRIPT , italic_θ start_POSTSUBSCRIPT italic_f italic_o italic_v end_POSTSUBSCRIPT represent the x, y, z coordinates, yaw angle, pitch angle of the camera, and field of view, respectively. The roll angle ϕ roll subscript italic-ϕ 𝑟 𝑜 𝑙 𝑙\phi_{roll}italic_ϕ start_POSTSUBSCRIPT italic_r italic_o italic_l italic_l end_POSTSUBSCRIPT is intentionally set to 0 to ensure the stability of the object in the rendered image., we initially project these inputs into a feature space using the pretrained textual CLIP encoder ℰ txt(⋅)subscript ℰ 𝑡 𝑥 𝑡⋅\mathcal{E}{txt}(\cdot)caligraphic_E start_POSTSUBSCRIPT italic_t italic_x italic_t end_POSTSUBSCRIPT ( ⋅ ) and a trainable MLP ℰ pos(⋅)subscript ℰ 𝑝 𝑜 𝑠⋅\mathcal{E}{pos}(\cdot)caligraphic_E start_POSTSUBSCRIPT italic_p italic_o italic_s end_POSTSUBSCRIPT ( ⋅ ):

(7)𝒕 𝒕\displaystyle\boldsymbol{t}bold_italic_t=ℰ txt(T),absent subscript ℰ 𝑡 𝑥 𝑡 𝑇\displaystyle=\mathcal{E}{txt}(T),= caligraphic_E start_POSTSUBSCRIPT italic_t italic_x italic_t end_POSTSUBSCRIPT ( italic_T ) , (8)𝒄 𝒄\displaystyle\boldsymbol{c}bold_italic_c=ℰ pos(C),absent subscript ℰ 𝑝 𝑜 𝑠 𝐶\displaystyle=\mathcal{E}{pos}(C),= caligraphic_E start_POSTSUBSCRIPT italic_p italic_o italic_s end_POSTSUBSCRIPT ( italic_C ) ,

where 𝒕∈ℝ d txt 𝒕 superscript ℝ subscript 𝑑 𝑡 𝑥 𝑡\boldsymbol{t}\in\mathbb{R}^{d_{txt}}bold_italic_t ∈ blackboard_R start_POSTSUPERSCRIPT italic_d start_POSTSUBSCRIPT italic_t italic_x italic_t end_POSTSUBSCRIPT end_POSTSUPERSCRIPT and 𝒄∈ℝ d cam 𝒄 superscript ℝ subscript 𝑑 𝑐 𝑎 𝑚\boldsymbol{c}\in\mathbb{R}^{d_{cam}}bold_italic_c ∈ blackboard_R start_POSTSUPERSCRIPT italic_d start_POSTSUBSCRIPT italic_c italic_a italic_m end_POSTSUBSCRIPT end_POSTSUPERSCRIPT are textual features and camera features. Subsequently, we employ two low-rank matrices to project 𝒕 𝒕\boldsymbol{t}bold_italic_t and 𝒄 𝒄\boldsymbol{c}bold_italic_c into trainable dimensionality-reduction matrices within CG-LoRA:

(9)𝑨 txt subscript 𝑨 𝑡 𝑥 𝑡\displaystyle\boldsymbol{A}{txt}bold_italic_A start_POSTSUBSCRIPT italic_t italic_x italic_t end_POSTSUBSCRIPT=Reshape(𝒕𝑾 txt),absent Reshape 𝒕 subscript 𝑾 𝑡 𝑥 𝑡\displaystyle=\text{Reshape}(\boldsymbol{t}\boldsymbol{W}{txt}),= Reshape ( bold_italic_t bold_italic_W start_POSTSUBSCRIPT italic_t italic_x italic_t end_POSTSUBSCRIPT ) , (10)𝑨 cam subscript 𝑨 𝑐 𝑎 𝑚\displaystyle\boldsymbol{A}{cam}bold_italic_A start_POSTSUBSCRIPT italic_c italic_a italic_m end_POSTSUBSCRIPT=Reshape(𝒄𝑾 cam),absent Reshape 𝒄 subscript 𝑾 𝑐 𝑎 𝑚\displaystyle=\text{Reshape}(\boldsymbol{c}\boldsymbol{W}{cam}),= Reshape ( bold_italic_c bold_italic_W start_POSTSUBSCRIPT italic_c italic_a italic_m end_POSTSUBSCRIPT ) ,

where 𝑨 txt∈ℝ d×r 2 subscript 𝑨 𝑡 𝑥 𝑡 superscript ℝ 𝑑 𝑟 2\boldsymbol{A}{txt}\in\mathbb{R}^{d\times\frac{r}{2}}bold_italic_A start_POSTSUBSCRIPT italic_t italic_x italic_t end_POSTSUBSCRIPT ∈ blackboard_R start_POSTSUPERSCRIPT italic_d × divide start_ARG italic_r end_ARG start_ARG 2 end_ARG end_POSTSUPERSCRIPT and 𝑨 cam∈ℝ d×r 2 subscript 𝑨 𝑐 𝑎 𝑚 superscript ℝ 𝑑 𝑟 2\boldsymbol{A}{cam}\in\mathbb{R}^{d\times\frac{r}{2}}bold_italic_A start_POSTSUBSCRIPT italic_c italic_a italic_m end_POSTSUBSCRIPT ∈ blackboard_R start_POSTSUPERSCRIPT italic_d × divide start_ARG italic_r end_ARG start_ARG 2 end_ARG end_POSTSUPERSCRIPT are two dimensionality-reduction matrices of CG-LoRA. The function Reshape(⋅)Reshape⋅\text{Reshape}(\cdot)Reshape ( ⋅ ) is used to transform the shape of a tensor from ℝ d∗r 2 superscript ℝ 𝑑 𝑟 2\mathbb{R}^{d\frac{r}{2}}blackboard_R start_POSTSUPERSCRIPT italic_d ∗ divide start_ARG italic_r end_ARG start_ARG 2 end_ARG end_POSTSUPERSCRIPT to ℝ d×r 2 superscript ℝ 𝑑 𝑟 2\mathbb{R}^{d\times\frac{r}{2}}blackboard_R start_POSTSUPERSCRIPT italic_d × divide start_ARG italic_r end_ARG start_ARG 2 end_ARG end_POSTSUPERSCRIPT. 2 2 2 ℝ d∗r 2 superscript ℝ 𝑑 𝑟 2\mathbb{R}^{d\frac{r}{2}}blackboard_R start_POSTSUPERSCRIPT italic_d ∗ divide start_ARG italic_r end_ARG start_ARG 2 end_ARG end_POSTSUPERSCRIPT denotes a one-dimensional vector. ℝ d×r 2 superscript ℝ 𝑑 𝑟 2\mathbb{R}^{d\times\frac{r}{2}}blackboard_R start_POSTSUPERSCRIPT italic_d × divide start_ARG italic_r end_ARG start_ARG 2 end_ARG end_POSTSUPERSCRIPT represents a two-dimensional matrix.𝑾 txt∈ℝ d txt×(d∗r 2)subscript 𝑾 𝑡 𝑥 𝑡 superscript ℝ subscript 𝑑 𝑡 𝑥 𝑡 𝑑 𝑟 2\boldsymbol{W}{txt}\in\mathbb{R}^{d{txt}\times\left(d\frac{r}{2}\right)}bold_italic_W start_POSTSUBSCRIPT italic_t italic_x italic_t end_POSTSUBSCRIPT ∈ blackboard_R start_POSTSUPERSCRIPT italic_d start_POSTSUBSCRIPT italic_t italic_x italic_t end_POSTSUBSCRIPT × ( italic_d ∗ divide start_ARG italic_r end_ARG start_ARG 2 end_ARG ) end_POSTSUPERSCRIPT and 𝑾 cam∈ℝ d cam×(d∗r 2)subscript 𝑾 𝑐 𝑎 𝑚 superscript ℝ subscript 𝑑 𝑐 𝑎 𝑚 𝑑 𝑟 2\boldsymbol{W}{cam}\in\mathbb{R}^{d{cam}\times\left(d\frac{r}{2}\right)}bold_italic_W start_POSTSUBSCRIPT italic_c italic_a italic_m end_POSTSUBSCRIPT ∈ blackboard_R start_POSTSUPERSCRIPT italic_d start_POSTSUBSCRIPT italic_c italic_a italic_m end_POSTSUBSCRIPT × ( italic_d ∗ divide start_ARG italic_r end_ARG start_ARG 2 end_ARG ) end_POSTSUPERSCRIPT are two low-rank matrices. Thus, we decompose them into the product of two matrices to reduce the trainable parameters in our implementation, i.e., 𝑾 txt=𝑼 txt𝑽 txt subscript 𝑾 𝑡 𝑥 𝑡 subscript 𝑼 𝑡 𝑥 𝑡 subscript 𝑽 𝑡 𝑥 𝑡\boldsymbol{W}{txt}=\boldsymbol{U}{txt}\boldsymbol{V}{txt}bold_italic_W start_POSTSUBSCRIPT italic_t italic_x italic_t end_POSTSUBSCRIPT = bold_italic_U start_POSTSUBSCRIPT italic_t italic_x italic_t end_POSTSUBSCRIPT bold_italic_V start_POSTSUBSCRIPT italic_t italic_x italic_t end_POSTSUBSCRIPT and 𝑾 cam=𝑼 cam𝑽 cam subscript 𝑾 𝑐 𝑎 𝑚 subscript 𝑼 𝑐 𝑎 𝑚 subscript 𝑽 𝑐 𝑎 𝑚\boldsymbol{W}{cam}=\boldsymbol{U}{cam}\boldsymbol{V}{cam}bold_italic_W start_POSTSUBSCRIPT italic_c italic_a italic_m end_POSTSUBSCRIPT = bold_italic_U start_POSTSUBSCRIPT italic_c italic_a italic_m end_POSTSUBSCRIPT bold_italic_V start_POSTSUBSCRIPT italic_c italic_a italic_m end_POSTSUBSCRIPT, where 𝑼 txt∈ℝ d txt×r′subscript 𝑼 𝑡 𝑥 𝑡 superscript ℝ subscript 𝑑 𝑡 𝑥 𝑡 superscript 𝑟′\boldsymbol{U}{txt}\in\mathbb{R}^{d{txt}\times r^{\prime}}bold_italic_U start_POSTSUBSCRIPT italic_t italic_x italic_t end_POSTSUBSCRIPT ∈ blackboard_R start_POSTSUPERSCRIPT italic_d start_POSTSUBSCRIPT italic_t italic_x italic_t end_POSTSUBSCRIPT × italic_r start_POSTSUPERSCRIPT ′ end_POSTSUPERSCRIPT end_POSTSUPERSCRIPT, 𝑽 txt∈ℝ r′×(d∗r 2)subscript 𝑽 𝑡 𝑥 𝑡 superscript ℝ superscript 𝑟′𝑑 𝑟 2\boldsymbol{V}{txt}\in\mathbb{R}^{r^{\prime}\times(d*\frac{r}{2})}bold_italic_V start_POSTSUBSCRIPT italic_t italic_x italic_t end_POSTSUBSCRIPT ∈ blackboard_R start_POSTSUPERSCRIPT italic_r start_POSTSUPERSCRIPT ′ end_POSTSUPERSCRIPT × ( italic_d ∗ divide start_ARG italic_r end_ARG start_ARG 2 end_ARG ) end_POSTSUPERSCRIPT, 𝑼 cam∈ℝ d cam×r′subscript 𝑼 𝑐 𝑎 𝑚 superscript ℝ subscript 𝑑 𝑐 𝑎 𝑚 superscript 𝑟′\boldsymbol{U}{cam}\in\mathbb{R}^{d_{cam}\times r^{\prime}}bold_italic_U start_POSTSUBSCRIPT italic_c italic_a italic_m end_POSTSUBSCRIPT ∈ blackboard_R start_POSTSUPERSCRIPT italic_d start_POSTSUBSCRIPT italic_c italic_a italic_m end_POSTSUBSCRIPT × italic_r start_POSTSUPERSCRIPT ′ end_POSTSUPERSCRIPT end_POSTSUPERSCRIPT, 𝑽 cam∈ℝ r′×(d∗r 2)subscript 𝑽 𝑐 𝑎 𝑚 superscript ℝ superscript 𝑟′𝑑 𝑟 2\boldsymbol{V}_{cam}\in\mathbb{R}^{r^{\prime}\times(d*\frac{r}{2})}bold_italic_V start_POSTSUBSCRIPT italic_c italic_a italic_m end_POSTSUBSCRIPT ∈ blackboard_R start_POSTSUPERSCRIPT italic_r start_POSTSUPERSCRIPT ′ end_POSTSUPERSCRIPT × ( italic_d ∗ divide start_ARG italic_r end_ARG start_ARG 2 end_ARG ) end_POSTSUPERSCRIPT, r′superscript 𝑟′r^{\prime}italic_r start_POSTSUPERSCRIPT ′ end_POSTSUPERSCRIPT is a small number (i.e., 4). In accordance with LoRA(Hu et al., 2021), we initialize the dimensionality-expansion matrix 𝑩∈ℝ r×d 𝑩 superscript ℝ 𝑟 𝑑\boldsymbol{B}\in\mathbb{R}^{r\times d}bold_italic_B ∈ blackboard_R start_POSTSUPERSCRIPT italic_r × italic_d end_POSTSUPERSCRIPT with zero values to ensure that the model begins training from the pretrained parameters of SD. Thus, the feed-forward process of CG-LoRA is formulated as follows:

(11)𝒚=𝒙𝑾+[𝒙𝑨 txt;𝒙𝑨 cam]𝑩,𝒚 𝒙 𝑾 𝒙 subscript 𝑨 𝑡 𝑥 𝑡 𝒙 subscript 𝑨 𝑐 𝑎 𝑚 𝑩\displaystyle\boldsymbol{y}=\boldsymbol{x}\boldsymbol{W}+[\boldsymbol{x}% \boldsymbol{A}{txt};\boldsymbol{x}\boldsymbol{A}{cam}]\boldsymbol{B},bold_italic_y = bold_italic_x bold_italic_W + [ bold_italic_x bold_italic_A start_POSTSUBSCRIPT italic_t italic_x italic_t end_POSTSUBSCRIPT ; bold_italic_x bold_italic_A start_POSTSUBSCRIPT italic_c italic_a italic_m end_POSTSUBSCRIPT ] bold_italic_B ,

where 𝑾∈ℝ d×d 𝑾 superscript ℝ 𝑑 𝑑\boldsymbol{W}\in\mathbb{R}^{d\times d}bold_italic_W ∈ blackboard_R start_POSTSUPERSCRIPT italic_d × italic_d end_POSTSUPERSCRIPT represents the frozen parameters of the pretrained SD model, and [⋅;⋅]⋅⋅[\cdot;\cdot][ ⋅ ; ⋅ ] is the concatenation operation alone the channel dimension. In our implementation, we integrate CG-LoRA into the linear embedding layers of the attention modules in SD to effectively capture direction and camera information.

3.3. Attention-Mask Alignment Loss

Although SD is pretrained to generate 2D images that encompass both foreground and background elements, the task of text-to-3D generation demands a stronger focus on generating foreground objects. To address this specific requirement, we introduce Attention-Mask Alignment (AMA) Loss, which aims to align the attention map of SD with the rendered mask image of the 3D object. Specifically, for each attention layer in the pretrained SD, we compute the attention map between the query image feature 𝑸∈ℝ H×h×w×d H 𝑸 superscript ℝ 𝐻 ℎ 𝑤 𝑑 𝐻\boldsymbol{Q}\in\mathbb{R}^{H\times h\times w\times\frac{d}{H}}bold_italic_Q ∈ blackboard_R start_POSTSUPERSCRIPT italic_H × italic_h × italic_w × divide start_ARG italic_d end_ARG start_ARG italic_H end_ARG end_POSTSUPERSCRIPT and the key CLS token feature 𝑲∈ℝ H×d H 𝑲 superscript ℝ 𝐻 𝑑 𝐻\boldsymbol{K}\in\mathbb{R}^{H\times\frac{d}{H}}bold_italic_K ∈ blackboard_R start_POSTSUPERSCRIPT italic_H × divide start_ARG italic_d end_ARG start_ARG italic_H end_ARG end_POSTSUPERSCRIPT. The calculation is formulated as follows:

(12)𝒂¯=Softmax(𝑸𝑲⊤d),¯𝒂 Softmax 𝑸 superscript 𝑲 top 𝑑\displaystyle\bar{\boldsymbol{a}}=\mathrm{Softmax}(\frac{\boldsymbol{Q}% \boldsymbol{K}^{\top}}{\sqrt{d}}),over¯ start_ARG bold_italic_a end_ARG = roman_Softmax ( divide start_ARG bold_italic_Q bold_italic_K start_POSTSUPERSCRIPT ⊤ end_POSTSUPERSCRIPT end_ARG start_ARG square-root start_ARG italic_d end_ARG end_ARG ) ,

where H 𝐻 H italic_H denotes the number of attention heads in SD. h ℎ h italic_h, w 𝑤 w italic_w, and d 𝑑 d italic_d represent the height, width, and channel dimensions of the image features, respectively. 𝒂¯∈ℝ H×h×w¯𝒂 superscript ℝ 𝐻 ℎ 𝑤\bar{\boldsymbol{a}}\in\mathbb{R}^{H\times h\times w}over¯ start_ARG bold_italic_a end_ARG ∈ blackboard_R start_POSTSUPERSCRIPT italic_H × italic_h × italic_w end_POSTSUPERSCRIPT represents the attention map. Subsequently, we proceed to compute the overall attention map 𝒂^∈ℝ h×w^𝒂 superscript ℝ ℎ 𝑤\hat{\boldsymbol{a}}\in\mathbb{R}^{h\times w}over^ start_ARG bold_italic_a end_ARG ∈ blackboard_R start_POSTSUPERSCRIPT italic_h × italic_w end_POSTSUPERSCRIPT by averaging the attention values of 𝒂¯¯𝒂\bar{\boldsymbol{a}}over¯ start_ARG bold_italic_a end_ARG across all attention heads. Since the attention map values are normalized using the softmax function, the activation values in the attention map may become very small when the image feature resolution is high. However, considering that each element in the rendered mask has a binary value of either 0 or 1, directly aligning the attention map with the rendered mask is not optimal. To address this, we propose a normalization technique that maps the values in the attention map from 0 to 1. This normalization process is formulated as follows:

(13)𝒂=𝒂^−min(𝒂^)max(𝒂^)−min(𝒂^)+ν,𝒂^𝒂^𝒂^𝒂^𝒂 𝜈\displaystyle\boldsymbol{a}=\frac{\hat{\boldsymbol{a}}-\min(\hat{\boldsymbol{a% }})}{\max(\hat{\boldsymbol{a}})-\min(\hat{\boldsymbol{a}})+\nu},bold_italic_a = divide start_ARG over^ start_ARG bold_italic_a end_ARG - roman_min ( over^ start_ARG bold_italic_a end_ARG ) end_ARG start_ARG roman_max ( over^ start_ARG bold_italic_a end_ARG ) - roman_min ( over^ start_ARG bold_italic_a end_ARG ) + italic_ν end_ARG ,

where ν 𝜈\nu italic_ν represents a small constant value (e.g., 1e-6 1 𝑒-6 1e\textrm{-}6 1 italic_e - 6) that prevents division by zero in the denominator. Finally, we align the attention maps of all attention layers with the rendered mask of the 3D object using the AMA loss. The formulation of this alignment is presented in Equ.4.

- Experiments

4.1. Implementation Details.

We conduct the experiments using four Nvidia RTX 3090 GPUs and the PyTorch library(Paszke et al., 2019). To calculate the SDS loss, we utilize the Stable Diffusion implemented by HuggingFace Diffusers(von Platen et al., 2022). For the DMTet Φ dmt subscript Φ 𝑑 𝑚 𝑡\Phi_{dmt}roman_Φ start_POSTSUBSCRIPT italic_d italic_m italic_t end_POSTSUBSCRIPT and material encoder Φ mat subscript Φ 𝑚 𝑎 𝑡\Phi_{mat}roman_Φ start_POSTSUBSCRIPT italic_m italic_a italic_t end_POSTSUBSCRIPT, we implement them as a two-layer MLP and a single-layer MLP, respectively, with a hidden dimension of 32. The values of d cam subscript 𝑑 𝑐 𝑎 𝑚 d_{cam}italic_d start_POSTSUBSCRIPT italic_c italic_a italic_m end_POSTSUBSCRIPT, d txt subscript 𝑑 𝑡 𝑥 𝑡 d_{txt}italic_d start_POSTSUBSCRIPT italic_t italic_x italic_t end_POSTSUBSCRIPT, r 𝑟 r italic_r, r′superscript 𝑟′r^{\prime}italic_r start_POSTSUPERSCRIPT ′ end_POSTSUPERSCRIPT, the batch size, the SDS loss weight, the AMA loss weight, and the aspect ratio of the perspective projection plane are set to 1024, 1024, 4, 4, 4, 1, 0.1, and 1 respectively. AMA loss is used after half of the training iterations, where CG-LoRA has a certain degree of perspective alignment ability. We optimize X-Dreamer for 2000 iterations for geometry learning and 1000 iterations for appearance learning. For each iteration, ϕ pit subscript italic-ϕ 𝑝 𝑖 𝑡\phi_{pit}italic_ϕ start_POSTSUBSCRIPT italic_p italic_i italic_t end_POSTSUBSCRIPT, ϕ yaw subscript italic-ϕ 𝑦 𝑎 𝑤\phi_{yaw}italic_ϕ start_POSTSUBSCRIPT italic_y italic_a italic_w end_POSTSUBSCRIPT, and θ fov subscript 𝜃 𝑓 𝑜 𝑣\theta_{fov}italic_θ start_POSTSUBSCRIPT italic_f italic_o italic_v end_POSTSUBSCRIPT are randomly sampled from (−15∘,45∘)superscript 15 superscript 45(-15^{\circ},45^{\circ})( - 15 start_POSTSUPERSCRIPT ∘ end_POSTSUPERSCRIPT , 45 start_POSTSUPERSCRIPT ∘ end_POSTSUPERSCRIPT ), (−180∘,180∘)superscript 180 superscript 180(-180^{\circ},180^{\circ})( - 180 start_POSTSUPERSCRIPT ∘ end_POSTSUPERSCRIPT , 180 start_POSTSUPERSCRIPT ∘ end_POSTSUPERSCRIPT ), and (25∘,45∘)superscript 25 superscript 45(25^{\circ},45^{\circ})( 25 start_POSTSUPERSCRIPT ∘ end_POSTSUPERSCRIPT , 45 start_POSTSUPERSCRIPT ∘ end_POSTSUPERSCRIPT ), respectively.

Figure 5. Text-to-3D generation results from an ellipsoid.

Figure 6. Text-to-3D generation results from coarse-grained guided meshes.

4.2. Results of X-Dreamer

4.2.1. Text-to-3D generation from an ellipsoid

We present representative results of X-Dreamer for text-to-3D generation, utilizing an ellipsoid as the initial geometry, as shown in Fig.5. The results demonstrate the ability of X-Dreamer to generate high-quality and photo-realistic outputs that accurately correspond to the input text prompts.

4.2.2. Text-to-3D generation from coarse-grained meshes

While there is a wide availability of coarse-grained meshes for download from the internet, directly utilizing these meshes for 3D content creation often results in poor performance due to the lack of geometric details. However, when compared to a 3D ellipsoid, these meshes may provide better 3D shape prior information for X-Dreamer. Hence, instead of using ellipsoids, we can initialize DMTet with coarse-grained guided meshes as well. As shown in Fig.6, X-Dreamer can generate 3D assets with precise geometric details based on the given text, even when the provided coarse-grained mesh lacks details. For instance, in the last column of Fig.6, X-Dreamer accurately transforms the geometry from a cow to a corgi based on the text prompt “A corgi, highly detailed.” Therefore, X-Dreamer is also an exceptionally powerful tool for editing coarse-grained mesh geometry using textual inputs.

Table 1. Quantitative comparison of SOTA Methods: The top-performing and second-best results are highlighted in bolded and underlined, respectively.

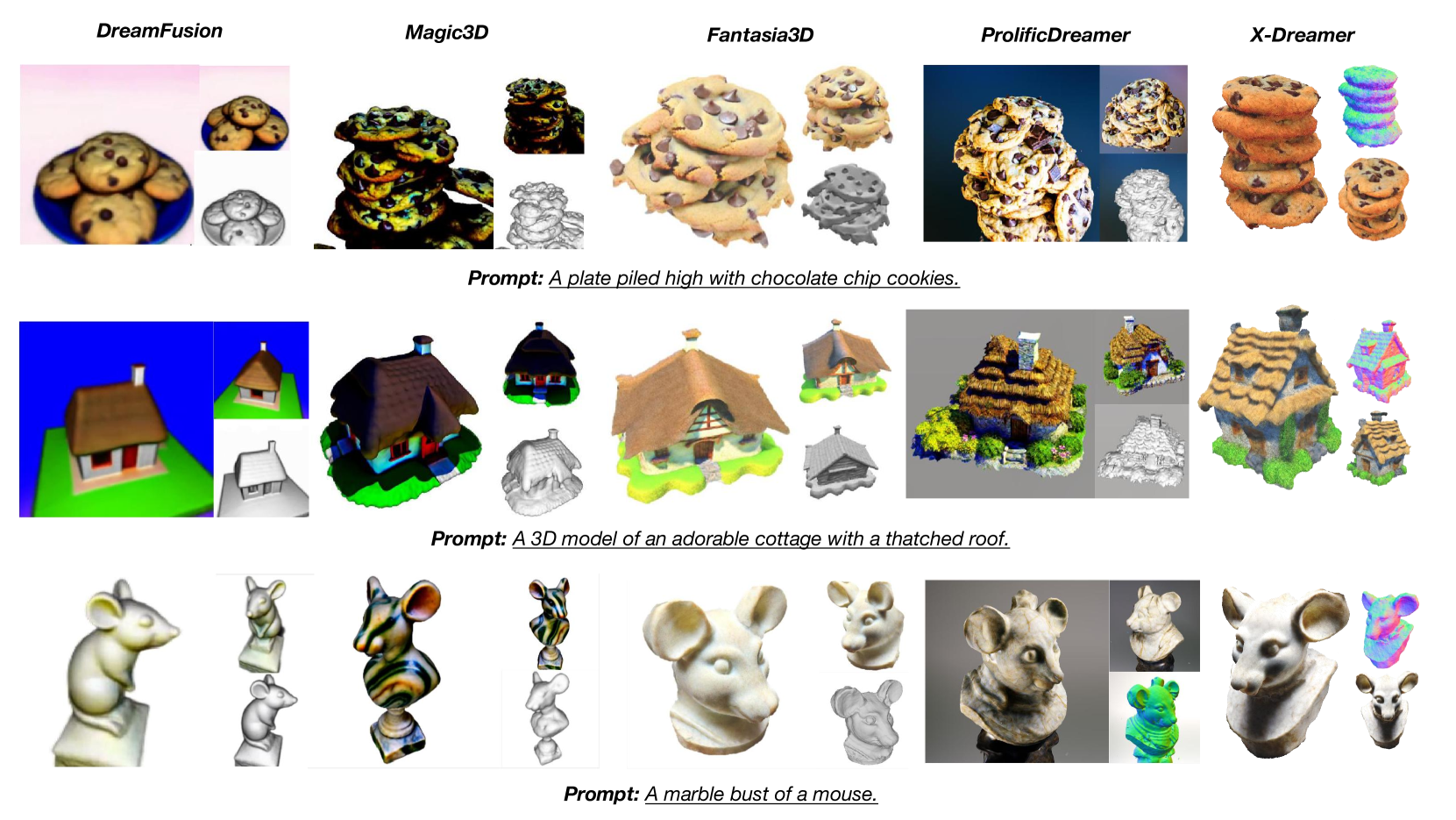

Figure 7. Comparison with SOTA methods. Our method yields results that exhibit enhanced fidelity and more details.

Figure 8. More comparison with State-of-the-Art (SOTA) methods.

4.2.3. Quantitative Comparison

We conducted a meticulously organized user study involving 50 individuals to evaluate the performance of four state-of-the-art (SOTA) methods by assessing 50 generated results, i.e., DreamFusion(Poole et al., 2022), Magic3D(Lin et al., 2023), Fantasia3D(Chen et al., 2023a), and ProlificDreamer(Wang et al., 2023c). Participants were asked to compare and select the best result based on two criteria: Geometry Quality (Geo. Qua.) and Appearance Quality (App. Qua.). The results, as presented in Tab.1, indicate that our approach garnered a preference from 75.0% of the participants. This overwhelming preference underscores our method’s exceptional quality and superiority, establishing it as a pioneering solution in the field. The primary objective of these user studies was to evaluate the geometric and visual quality of the generated results, without specifically assessing their alignment with the text. To provide a more comprehensive evaluation, we further calculated the CLIP score and OpenCLIP score for both the generated results and the corresponding text prompts. As depicted in Tab.1, our method outperformed other approaches in terms of both the CLIP score and OpenCLIP score. These findings demonstrate that the results generated by our method exhibit superior alignment with the text, further strengthening the efficacy of our approach.

4.2.4. Qualitative Comparison

To assess the effectiveness of X-Dreamer, we compare it with four SOTA methods. Since the codes of some methods(Poole et al., 2022; Lin et al., 2023; Wang et al., 2023c) have not been publicly available, we present the results obtained by implementing these methods in threestudio(Guo et al., 2023). The results are depicted in Fig.7. In Fig.8, we present a comprehensive comparison of our methods with four baselines, using the images provided in their original papers. When compared to the SDS-based methods(Poole et al., 2022; Lin et al., 2023; Chen et al., 2023a), X-Dreamer outperforms them in generating superior-quality and realistic 3D assets. In addition, when compared to the VSD-based method(Wang et al., 2023c), X-Dreamer produces 3D content with comparable or even better visual effects, while requiring significantly less optimization time. Specifically, the geometry and appearance learning process of X-Dreamer requires only approximately 27 minutes, whereas ProlificDreamer exceeds 8 hours.

Figure 9. Camera Control Comparison with Zero-1-to-3(Liu et al., 2023).

4.2.5. Camera Control Comparison

During training, CG-LoRA aims to control the camera parameters. To investigate the effectiveness of this control, we visualize the image generated by Stable Diffusion (SD) with a pretrained CG-LoRA. As shown in Fig.9, given a specific camera parameter, SD with CG-LoRA can generate images from that perspective. To make a comparative analysis, we also compare our results with Zero-1-to-3(Liu et al., 2023), a method capable of generating images with desired viewing angles based on relative camera angles and reference images. Using the front view produced by our approach as a reference image, we leverage Zero-1-to-3 to generate images with varying camera parameters. Our observations indicate that our method can generate images that exhibit a general alignment with the angles produced by Zero-1-to-3.

4.3. Ablation Study

Figure 10. Ablation studies of the proposed X-Dreamer.

Figure 11. Visualization of Attention Map, Rendered Mask, and Rendered Image with and without AMA Loss. For clarity, we only visualize the attention map of the first attention layer in SD.

4.3.1. Ablation on the proposed modules

To gain insights into the abilities of CG-LoRA and AMA loss, we perform ablation studies wherein each module is incorporated individually to assess its impact. As depicted in Fig.10, the ablation results demonstrate a notable decline in the geometry and appearance quality of the generated 3D objects when CG-LoRA is excluded from X-Dreamer. For instance, as shown in the second row of Fig.10, the generated Batman lacks an ear on the top of its head in the absence of CG-LoRA. This observation highlights the crucial role of CG-LoRA in injecting camera-relevant information into the model, thereby enhancing the 3D consistency. Furthermore, the omission of AMA loss from X-Dreamer also has a deleterious effect on the geometry and appearance fidelity of the generated 3D assets. Specifically, as illustrated in the first row of Fig.10, X-Dreamer successfully generates a photorealistic texture for the rocket, whereas the texture quality noticeably deteriorates in the absence of AMA loss. This disparity can be attributed to AMA loss, which directs the focus of the model towards foreground object generation, ensuring the realistic representation of both geometry and appearance of foreground objects. These ablation studies provide valuable insights into the individual contributions of CG-LoRA and AMA loss in enhancing the geometry, appearance, and overall quality of the generated 3D objects.

4.3.2. Attention map comparisons w/ and w/o AMA loss

AMA loss is introduced with the aim of guiding attention during the denoising process towards the foreground object. This objective is achieved by aligning the attention map of SD with the rendered mask of the 3D object. To evaluate the effectiveness of AMA loss in accomplishing this goal, we visualize the attention maps of SD with and without AMA loss at both the geometry learning and appearance learning stages. As depicted in Fig.11, it can be observed that incorporating AMA loss not only results in improved geometry and appearance of the generated 3D asset, but also concentrates the attention of SD specifically on the foreground object area. The visualizations confirm the efficacy of AMA loss in directing the attention of SD, resulting in improved quality and foreground object focus during geometry and appearance learning stages.

4.3.3. Ablation on the parameter generation of CG-LoRA

Figure 12. Ablation Study of CG-LoRA.

In our implementation, the parameters of CG-LoRA are dynamically generated based on two key factors related to camera information: camera parameters and direction-aware text. To thoroughly investigate the impact of these camera information terms on X-Dreamer, we conducted ablation experiments, dynamically generating CG-LoRA parameters based solely on one of these terms. The results, as illustrated in Fig.12, clearly demonstrate that when using only one type of camera information term, the geometry and appearance quality of the generated results are significantly diminished compared to those produced by X-Dreamer. For example, as shown in the third row of Fig.12, when utilizing the CG-LoRA solely related to direction-aware text, the geometry quality of the generated cookies appears poor. Similarly, as depicted in the last row of Fig.12, employing the CG-LoRA solely based on camera parameters results in diminished geometry and appearance quality for the pumpkin. These findings highlight the importance of both camera parameters and direction-aware text in generating high-quality 3D assets. The successful generation of parameters for CG-LoRA relies on the synergy between these two camera information terms, emphasizing their crucial role in achieving superior geometry and appearance in the generated assets.

4.4. Attention Map of Different Layers

Figure 13. Attention maps of different layers of SD for the geometry learning stage.

Figure 14. Attention maps of different layers of SD for the appearance learning stage.

Figure 15. Rendered images of generated 3D assets under different environment maps.

Figure 16. Bad cases of X-Dreamer.

In this section, we present visualizations of the attention maps from various attention layers during the geometry learning stage and the appearance learning stage. As illustrated in Fig.13 and Fig.14, the attention maps generated by different attention layers consistently exhibit the ability to accurately locate the shape of the foreground object. This demonstrates the robustness and effectiveness of the attention mechanism in capturing the essential features of the foreground object. Furthermore, we observe that attention maps from different layers seem to exhibit variations in focus, with some emphasizing the foreground, some highlighting the edges, and some concentrating on the interior of the object. This divergence in focus can be attributed to the distinct roles played by different layers in the stable diffusion training process. Each layer contributes its unique perspective and emphasis, leading to variations in attention focus. Nevertheless, despite these variations, all attention maps generated by X-Dreamer successfully locate the foreground objects with precision. This outcome provides strong evidence that our proposed module effectively guides the model’s attention towards the foreground objects. By incorporating the attention mechanism into the training process, X-Dreamer ensures that the model focuses on the most relevant regions, resulting in accurate and reliable detection of the foreground objects throughout the geometry and appearance learning stages.

4.5. Rendered Images under Different Environment Maps

Our method, while employing a fixed environment for training the model, offers significant advantages and flexibility in the rendering of 3D assets generated by X-Dreamer. It is worth noting that these assets are not limited to a single environment; instead, they possess the capability to be rendered in diverse environments, expanding their applicability and adaptability. The visual evidence presented in Fig.15 reinforces our claim. By utilizing different environment maps, we can readily observe the striking variations in the appearance of the rendered images of these 3D assets. This compelling demonstration showcases the inherent versatility and potential of X-Dreamer’s generated assets to seamlessly integrate into various rendering engines. The ability to effectively address the challenges posed by different lighting conditions is a crucial aspect of any rendering engine. By leveraging the capabilities of X-Dreamer, we unlock the possibility of using its 3D assets in a multitude of rendering engines. This versatility ensures that the assets can adapt and thrive in diverse lighting scenarios, enabling them to meet the demands of real-world applications in fields such as architecture, virtual reality, and computer graphics.

4.6. Limitation

One limitation of the proposed X-Dreamer is its inability to generate multiple objects simultaneously. When the input text prompt contains two distinct objects, X-Dreamer may produce a mixed object that combines properties of both objects. A clearer understanding of this limitation can be gained by referring to Fig.16. In the first row of Fig.16, our intention is for X-Dreamer to generate “A sliced loaf of fresh bread and a cabbage.” However, the resulting output consists of a bread with a few cabbage leaves, indicating the model’s difficulty in generating separate objects accurately. Similarly, in the second row of Fig.16, we aim to generate “A blue and white porcelain vase and an apple.” However, the output depicts a blue and white porcelain vase with a shape resembling an apple. Nevertheless, it is important to note that this limitation does not significantly undermine the value of our work. In applications such as games and movies, each object functions as an independent entity, capable of creating complex scenes through interaction with other objects. Our primary objective is to generate high-quality 3D objects, and while the current limitation exists, it does not diminish the overall quality and utility of our approach.

- Conclusion

This study introduces a groundbreaking framework called X-Dreamer, which is designed to enhance text-to-3D synthesis by addressing the domain gap between text-to-2D and text-to-3D generation. To achieve this, we first propose CG-LoRA, a module that incorporates 3D-associated information, including direction-aware text and camera parameters, into the pretrained Stable Diffusion (SD) model. By doing so, we enable the effective capture of information relevant to the 3D domain. Furthermore, we design AMA loss to align the attention map generated by SD with the rendered mask of the 3D object. The primary objective of AMA loss is to guide the focus of the text-to-3D model towards the generation of foreground objects. Through extensive experiments, we have thoroughly evaluated the efficacy of our proposed method, which has consistently demonstrated its ability to synthesize high-quality and photorealistic 3D content from given text prompts.

References

- (1)

- Achiam et al. (2023) Josh Achiam, Steven Adler, Sandhini Agarwal, Lama Ahmad, Ilge Akkaya, Florencia Leoni Aleman, Diogo Almeida, Janko Altenschmidt, Sam Altman, Shyamal Anadkat, et al. 2023. Gpt-4 technical report. arXiv preprint arXiv:2303.08774 (2023).

- Babu et al. (2023) Sudarshan Babu, Richard Liu, Avery Zhou, Michael Maire, Greg Shakhnarovich, and Rana Hanocka. 2023. Hyperfields: Towards zero-shot generation of nerfs from text. arXiv preprint arXiv:2310.17075 (2023).

- Balaji et al. (2022) Yogesh Balaji, Seungjun Nah, Xun Huang, Arash Vahdat, Jiaming Song, Karsten Kreis, Miika Aittala, Timo Aila, Samuli Laine, Bryan Catanzaro, et al. 2022. ediffi: Text-to-image diffusion models with an ensemble of expert denoisers. arXiv preprint arXiv:2211.01324 (2022).

- Brown et al. (2020) Tom Brown, Benjamin Mann, Nick Ryder, Melanie Subbiah, Jared D Kaplan, Prafulla Dhariwal, Arvind Neelakantan, Pranav Shyam, Girish Sastry, Amanda Askell, et al. 2020. Language models are few-shot learners. Advances in neural information processing systems 33 (2020), 1877–1901.

- Cao et al. (2023) Tianshi Cao, Karsten Kreis, Sanja Fidler, Nicholas Sharp, and Kangxue Yin. 2023. Texfusion: Synthesizing 3d textures with text-guided image diffusion models. In Proceedings of the IEEE/CVF International Conference on Computer Vision. 4169–4181.

- Chen et al. (2023c) Jingwen Chen, Yingwei Pan, Ting Yao, and Tao Mei. 2023c. Controlstyle: Text-driven stylized image generation using diffusion priors. In Proceedings of the 31st ACM International Conference on Multimedia. 7540–7548.

- Chen et al. (2023a) Rui Chen, Yongwei Chen, Ningxin Jiao, and Kui Jia. 2023a. Fantasia3d: Disentangling geometry and appearance for high-quality text-to-3d content creation. arXiv preprint arXiv:2303.13873 (2023).

- Chen et al. (2023b) Yang Chen, Yingwei Pan, Yehao Li, Ting Yao, and Tao Mei. 2023b. Control3d: Towards controllable text-to-3d generation. In Proceedings of the 31st ACM International Conference on Multimedia. 1148–1156.

- Chen et al. (2023d) Zilong Chen, Feng Wang, and Huaping Liu. 2023d. Text-to-3D using Gaussian Splatting. arXiv preprint arXiv:2309.16585 (2023).

- Chen et al. (2023e) Zhe Chen, Jiannan Wu, Wenhai Wang, Weijie Su, Guo Chen, Sen Xing, Zhong Muyan, Qinglong Zhang, Xizhou Zhu, Lewei Lu, et al. 2023e. Internvl: Scaling up vision foundation models and aligning for generic visual-linguistic tasks. arXiv preprint arXiv:2312.14238 (2023).

- Ding et al. (2023) Ziluo Ding, Hao Luo, Ke Li, Junpeng Yue, Tiejun Huang, and Zongqing Lu. 2023. Clip4mc: An rl-friendly vision-language model for minecraft. arXiv preprint arXiv:2303.10571 (2023).

- Fei et al. (2024a) Hao Fei, Shengqiong Wu, Wei Ji, Hanwang Zhang, and Tat-Seng Chua. 2024a. Dysen-VDM: Empowering Dynamics-aware Text-to-Video Diffusion with LLMs. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition. 7641–7653.

- Fei et al. (2024b) Hao Fei, Shengqiong Wu, Wei Ji, Hanwang Zhang, Meishan Zhang, Mong-Li Lee, and Wynne Hsu. 2024b. Video-of-thought: Step-by-step video reasoning from perception to cognition. In Proceedings of the International Conference on Machine Learning.

- Fei et al. (2024c) Hao Fei, Shengqiong Wu, Hanwang Zhang, Tat-Seng Chua, and Shuicheng Yan. 2024c. VITRON: A Unified Pixel-level Vision LLM for Understanding, Generating, Segmenting, Editing. (2024).

- Fei et al. (2024d) Hao Fei, Shengqiong Wu, Meishan Zhang, Min Zhang, Tat-Seng Chua, and Shuicheng Yan. 2024d. Enhancing video-language representations with structural spatio-temporal alignment. IEEE Transactions on Pattern Analysis and Machine Intelligence (2024).

- Guo et al. (2023) Yuan-Chen Guo, Ying-Tian Liu, Ruizhi Shao, Christian Laforte, Vikram Voleti, Guan Luo, Chia-Hao Chen, Zi-Xin Zou, Chen Wang, Yan-Pei Cao, and Song-Hai Zhang. 2023. threestudio: A unified framework for 3D content generation. https://github.com/threestudio-project/threestudio.

- Ho et al. (2020) Jonathan Ho, Ajay Jain, and Pieter Abbeel. 2020. Denoising diffusion probabilistic models. Advances in neural information processing systems 33 (2020), 6840–6851.

- Hu et al. (2021) Edward J Hu, Yelong Shen, Phillip Wallis, Zeyuan Allen-Zhu, Yuanzhi Li, Shean Wang, Lu Wang, and Weizhu Chen. 2021. Lora: Low-rank adaptation of large language models. arXiv preprint arXiv:2106.09685 (2021).

- Hu et al. (2022) Edward J Hu, Yelong Shen, Phillip Wallis, Zeyuan Allen-Zhu, Yuanzhi Li, Shean Wang, Lu Wang, and Weizhu Chen. 2022. LoRA: Low-Rank Adaptation of Large Language Models. In International Conference on Learning Representations. https://openreview.net/forum?id=nZeVKeeFYf9

- Huang et al. (2023b) Shuo Huang, Zongxin Yang, Liangting Li, Yi Yang, and Jia Jia. 2023b. AvatarFusion: Zero-shot Generation of Clothing-Decoupled 3D Avatars Using 2D Diffusion. In Proceedings of the 31st ACM International Conference on Multimedia. 5734–5745.

- Huang et al. (2023a) Yukun Huang, Jianan Wang, Ailing Zeng, He Cao, Xianbiao Qi, Yukai Shi, Zheng-Jun Zha, and Lei Zhang. 2023a. DreamWaltz: Make a Scene with Complex 3D Animatable Avatars. arXiv preprint arXiv:2305.12529 (2023).

- Huang et al. (2024) Yukun Huang, Jianan Wang, Ailing Zeng, He Cao, Xianbiao Qi, Yukai Shi, Zheng-Jun Zha, and Lei Zhang. 2024. Dreamwaltz: Make a scene with complex 3d animatable avatars. Advances in Neural Information Processing Systems 36 (2024).

- Jain et al. (2022) Ajay Jain, Ben Mildenhall, Jonathan T Barron, Pieter Abbeel, and Ben Poole. 2022. Zero-shot text-guided object generation with dream fields. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition. 867–876.

- Jiang et al. (2023a) Ruixiang Jiang, Lingbo Liu, and Changwen Chen. 2023a. Clip-count: Towards text-guided zero-shot object counting. In Proceedings of the 31st ACM International Conference on Multimedia. 4535–4545.

- Jiang et al. (2023b) Ruixiang Jiang, Can Wang, Jingbo Zhang, Menglei Chai, Mingming He, Dongdong Chen, and Jing Liao. 2023b. AvatarCraft: Transforming Text into Neural Human Avatars with Parameterized Shape and Pose Control. arXiv preprint arXiv:2303.17606 (2023).

- Kumari et al. (2023) Nupur Kumari, Bingliang Zhang, Richard Zhang, Eli Shechtman, and Jun-Yan Zhu. 2023. Multi-concept customization of text-to-image diffusion. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition. 1931–1941.

- Lei et al. (2022) Jiabao Lei, Yabin Zhang, Kui Jia, et al. 2022. Tango: Text-driven photorealistic and robust 3d stylization via lighting decomposition. Advances in Neural Information Processing Systems 35 (2022), 30923–30936.

- Li et al. (2024b) Chunyuan Li, Cliff Wong, Sheng Zhang, Naoto Usuyama, Haotian Liu, Jianwei Yang, Tristan Naumann, Hoifung Poon, and Jianfeng Gao. 2024b. Llava-med: Training a large language-and-vision assistant for biomedicine in one day. Advances in Neural Information Processing Systems 36 (2024).

- Li et al. (2023b) Muheng Li, Yueqi Duan, Jie Zhou, and Jiwen Lu. 2023b. Diffusion-sdf: Text-to-shape via voxelized diffusion. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition. 12642–12651.

- Li et al. (2023a) Weiyu Li, Rui Chen, Xuelin Chen, and Ping Tan. 2023a. SWEETDREAMER: ALIGNING GEOMETRIC PRIORS IN 2D DIFFUSION FOR CONSISTENT TEXT-TO-3D. arXiv preprint arXiv:2310.02596 (2023).

- Li et al. (2024a) Yuhan Li, Yishun Dou, Yue Shi, Yu Lei, Xuanhong Chen, Yi Zhang, Peng Zhou, and Bingbing Ni. 2024a. Focaldreamer: Text-driven 3d editing via focal-fusion assembly. In Proceedings of the AAAI Conference on Artificial Intelligence, Vol.38. 3279–3287.

- Li et al. (2023c) Zhang Li, Biao Yang, Qiang Liu, Zhiyin Ma, Shuo Zhang, Jingxu Yang, Yabo Sun, Yuliang Liu, and Xiang Bai. 2023c. Monkey: Image resolution and text label are important things for large multi-modal models. arXiv preprint arXiv:2311.06607 (2023).

- Lin et al. (2023) Chen-Hsuan Lin, Jun Gao, Luming Tang, Towaki Takikawa, Xiaohui Zeng, Xun Huang, Karsten Kreis, Sanja Fidler, Ming-Yu Liu, and Tsung-Yi Lin. 2023. Magic3d: High-resolution text-to-3d content creation. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition. 300–309.

- Liu et al. (2024) Haotian Liu, Chunyuan Li, Qingyang Wu, and Yong Jae Lee. 2024. Visual instruction tuning. Advances in neural information processing systems 36 (2024).

- Liu et al. (2023) Ruoshi Liu, Rundi Wu, Basile Van Hoorick, Pavel Tokmakov, Sergey Zakharov, and Carl Vondrick. 2023. Zero-1-to-3: Zero-shot one image to 3d object. In Proceedings of the IEEE/CVF International Conference on Computer Vision. 9298–9309.

- Lorraine et al. (2023) Jonathan Lorraine, Kevin Xie, Xiaohui Zeng, Chen-Hsuan Lin, Towaki Takikawa, Nicholas Sharp, Tsung-Yi Lin, Ming-Yu Liu, Sanja Fidler, and James Lucas. 2023. Att3d: Amortized text-to-3d object synthesis. In Proceedings of the IEEE/CVF International Conference on Computer Vision. 17946–17956.

- Lu et al. (2023) Lingxiao Lu, Jiangtong Li, Junyan Cao, Li Niu, and Liqing Zhang. 2023. Painterly image harmonization using diffusion model. In Proceedings of the 31st ACM International Conference on Multimedia. 233–241.

- Luo et al. (2024) Gen Luo, Yiyi Zhou, Yuxin Zhang, Xiawu Zheng, Xiaoshuai Sun, and Rongrong Ji. 2024. Feast Your Eyes: Mixture-of-Resolution Adaptation for Multimodal Large Language Models. arXiv preprint arXiv:2403.03003 (2024).

- Ma et al. (2023a) Yiwei Ma, Jiayi Ji, Xiaoshuai Sun, Yiyi Zhou, and Rongrong Ji. 2023a. Towards local visual modeling for image captioning. Pattern Recognition 138 (2023), 109420.

- Ma et al. (2022) Yiwei Ma, Guohai Xu, Xiaoshuai Sun, Ming Yan, Ji Zhang, and Rongrong Ji. 2022. X-clip: End-to-end multi-grained contrastive learning for video-text retrieval. In Proceedings of the 30th ACM International Conference on Multimedia. 638–647.

- Ma et al. (2023b) Yiwei Ma, Xiaoqing Zhang, Xiaoshuai Sun, Jiayi Ji, Haowei Wang, Guannan Jiang, Weilin Zhuang, and Rongrong Ji. 2023b. X-Mesh: Towards Fast and Accurate Text-driven 3D Stylization via Dynamic Textual Guidance. In Proceedings of the IEEE/CVF International Conference on Computer Vision. 2749–2760.

- McAuley et al. (2012) Stephen McAuley, Stephen Hill, Naty Hoffman, Yoshiharu Gotanda, Brian Smits, Brent Burley, and Adam Martinez. 2012. Practical physically-based shading in film and game production. In ACM SIGGRAPH 2012 Courses. 1–7.

- Metzer et al. (2023) Gal Metzer, Elad Richardson, Or Patashnik, Raja Giryes, and Daniel Cohen-Or. 2023. Latent-nerf for shape-guided generation of 3d shapes and textures. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition. 12663–12673.

- Michel et al. (2022) Oscar Michel, Roi Bar-On, Richard Liu, Sagie Benaim, and Rana Hanocka. 2022. Text2mesh: Text-driven neural stylization for meshes. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition. 13492–13502.

- Mildenhall et al. (2021) Ben Mildenhall, Pratul P Srinivasan, Matthew Tancik, Jonathan T Barron, Ravi Ramamoorthi, and Ren Ng. 2021. Nerf: Representing scenes as neural radiance fields for view synthesis. Commun. ACM 65, 1 (2021), 99–106.

- Mohammad Khalid et al. (2022) Nasir Mohammad Khalid, Tianhao Xie, Eugene Belilovsky, and Tiberiu Popa. 2022. Clip-mesh: Generating textured meshes from text using pretrained image-text models. In SIGGRAPH Asia 2022 conference papers. 1–8.

- Müller et al. (2022) Thomas Müller, Alex Evans, Christoph Schied, and Alexander Keller. 2022. Instant neural graphics primitives with a multiresolution hash encoding. ACM Transactions on Graphics (ToG) 41, 4 (2022), 1–15.

- Paszke et al. (2019) Adam Paszke, Sam Gross, Francisco Massa, Adam Lerer, James Bradbury, Gregory Chanan, Trevor Killeen, Zeming Lin, Natalia Gimelshein, Luca Antiga, et al. 2019. Pytorch: An imperative style, high-performance deep learning library. Advances in neural information processing systems 32 (2019).

- Poole et al. (2022) Ben Poole, Ajay Jain, Jonathan T Barron, and Ben Mildenhall. 2022. Dreamfusion: Text-to-3d using 2d diffusion. arXiv preprint arXiv:2209.14988 (2022).

- Qu et al. (2023) Leigang Qu, Shengqiong Wu, Hao Fei, Liqiang Nie, and Tat-Seng Chua. 2023. Layoutllm-t2i: Eliciting layout guidance from llm for text-to-image generation. In Proceedings of the 31st ACM International Conference on Multimedia. 643–654.

- Radford et al. (2021) Alec Radford, Jong Wook Kim, Chris Hallacy, Aditya Ramesh, Gabriel Goh, Sandhini Agarwal, Girish Sastry, Amanda Askell, Pamela Mishkin, Jack Clark, et al. 2021. Learning transferable visual models from natural language supervision. In International conference on machine learning. PMLR, 8748–8763.

- Raj et al. (2023) Amit Raj, Srinivas Kaza, Ben Poole, Michael Niemeyer, Nataniel Ruiz, Ben Mildenhall, Shiran Zada, Kfir Aberman, Michael Rubinstein, Jonathan Barron, et al. 2023. Dreambooth3d: Subject-driven text-to-3d generation. In Proceedings of the IEEE/CVF International Conference on Computer Vision. 2349–2359.

- Ramesh et al. (2022) Aditya Ramesh, Prafulla Dhariwal, Alex Nichol, Casey Chu, and Mark Chen. 2022. Hierarchical text-conditional image generation with clip latents. arXiv preprint arXiv:2204.06125 1, 2 (2022), 3.

- Richardson et al. (2023) Elad Richardson, Gal Metzer, Yuval Alaluf, Raja Giryes, and Daniel Cohen-Or. 2023. Texture: Text-guided texturing of 3d shapes. In ACM SIGGRAPH 2023 Conference Proceedings. 1–11.

- Rombach et al. (2022) Robin Rombach, Andreas Blattmann, Dominik Lorenz, Patrick Esser, and Björn Ommer. 2022. High-resolution image synthesis with latent diffusion models. In Proceedings of the IEEE/CVF conference on computer vision and pattern recognition. 10684–10695.

- Ruan et al. (2023) Ludan Ruan, Yiyang Ma, Huan Yang, Huiguo He, Bei Liu, Jianlong Fu, Nicholas Jing Yuan, Qin Jin, and Baining Guo. 2023. Mm-diffusion: Learning multi-modal diffusion models for joint audio and video generation. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition. 10219–10228.

- Saharia et al. (2022) Chitwan Saharia, William Chan, Saurabh Saxena, Lala Li, Jay Whang, Emily L Denton, Kamyar Ghasemipour, Raphael Gontijo Lopes, Burcu Karagol Ayan, Tim Salimans, et al. 2022. Photorealistic text-to-image diffusion models with deep language understanding. Advances in Neural Information Processing Systems 35 (2022), 36479–36494.

- Schuhmann et al. (2022) Christoph Schuhmann, Romain Beaumont, Richard Vencu, Cade Gordon, Ross Wightman, Mehdi Cherti, Theo Coombes, Aarush Katta, Clayton Mullis, Mitchell Wortsman, et al. 2022. Laion-5b: An open large-scale dataset for training next generation image-text models. Advances in Neural Information Processing Systems 35 (2022), 25278–25294.

- Sella et al. (2023) Etai Sella, Gal Fiebelman, Peter Hedman, and Hadar Averbuch-Elor. 2023. Vox-e: Text-guided voxel editing of 3d objects. In Proceedings of the IEEE/CVF International Conference on Computer Vision. 430–440.

- Seo et al. (2023) Junyoung Seo, Wooseok Jang, Min-Seop Kwak, Jaehoon Ko, Hyeonsu Kim, Junho Kim, Jin-Hwa Kim, Jiyoung Lee, and Seungryong Kim. 2023. Let 2d diffusion model know 3d-consistency for robust text-to-3d generation. arXiv preprint arXiv:2303.07937 (2023).

- Shen et al. (2021) Tianchang Shen, Jun Gao, Kangxue Yin, Ming-Yu Liu, and Sanja Fidler. 2021. Deep marching tetrahedra: a hybrid representation for high-resolution 3d shape synthesis. Advances in Neural Information Processing Systems 34 (2021), 6087–6101.

- Sohl-Dickstein et al. (2015) Jascha Sohl-Dickstein, Eric Weiss, Niru Maheswaranathan, and Surya Ganguli. 2015. Deep unsupervised learning using nonequilibrium thermodynamics. In International conference on machine learning. PMLR, 2256–2265.

- Song et al. (2020) Yang Song, Jascha Sohl-Dickstein, Diederik P Kingma, Abhishek Kumar, Stefano Ermon, and Ben Poole. 2020. Score-based generative modeling through stochastic differential equations. arXiv preprint arXiv:2011.13456 (2020).

- Sun et al. (2024) Gan Sun, Wenqi Liang, Jiahua Dong, Jun Li, Zhengming Ding, and Yang Cong. 2024. Create your world: Lifelong text-to-image diffusion. IEEE Transactions on Pattern Analysis and Machine Intelligence (2024).