
Title: Lost in Translation: Latent Concept Misalignment in Text-to-Image Diffusion Models

URL Source: https://arxiv.org/html/2408.00230

Published Time: Tue, 06 Aug 2024 01:07:03 GMT

Junyu Deng (3; ORCID 0009-0004-6313-7378), Yixin Ye (1; ORCID 0009-0006-1882-2960), Chongxuan Li (4; ORCID 0000-0002-0912-9076), Zhijie Deng† (1; ORCID 0000-0002-0932-1631), Dequan Wang† (1, 2; ORCID 0000-0001-8270-8448)

Affiliations: 1 Shanghai Jiao Tong University; 2 Shanghai Artificial Intelligence Laboratory; 3 Fudan University; 4 Renmin University of China

Abstract

Advancements in text-to-image diffusion models have enabled a broad range of downstream applications, but such models often suffer from misalignment between text and image. Take the generation of a combination of two disentangled concepts as an example: given the prompt “a tea cup of iced coke”, existing models usually generate a glass cup of iced coke, because iced coke usually co-occurs with a glass cup rather than a tea cup during model training. The root of such misalignment is attributed to confusion in the latent semantic space of text-to-image diffusion models, and hence we refer to the “a tea cup of iced coke” phenomenon as Latent Concept Misalignment (LC-Mis). We leverage large language models (LLMs) to thoroughly investigate the scope of LC-Mis, and develop an automated pipeline for aligning the latent semantics of diffusion models to text prompts. Empirical assessments confirm the effectiveness of our approach, substantially reducing LC-Mis errors and enhancing the robustness and versatility of text-to-image diffusion models. Our code and dataset are available online for reference.

Keywords: Text-to-image diffusion models · Misalignment · Large Language Models

1 Introduction

Footnote: Equal contribution. † Corresponding authors.

Text-to-image synthesis[18, 27, 40, 38, 15, 26, 7, 36, 39, 21, 30, 28, 25, 33] via diffusion models has made remarkable progress, where high-quality images are generated given text prompts[28, 24]. However, a significant limitation of existing models is that they can easily face visual-textual misalignment in practice, where certain elements in the input text are overlooked in generated images. As shown in Figure 1, none of Midjourney[20], Dall·E 3[23], and SDXL[24] can craft an image containing “a tea cup of iced coke”. Instead, these models exhibit a preference for generating a glass cup due to inherent biases in concept combination during the training process of the models.

[Figure 1 panels, two rows of examples, left to right: Midjourney[20], Dall·E 3[23], SDXL[24], MoCE (ours)]

Figure 1: Teaser figures from three models and our approach, MoCE, showcase a classic example of Latent Concept Misalignment (LC-Mis) in this study: a tea cup of iced coke. Here, a glass cup, an unfamiliar object, substitutes for the anticipated tea cup. We denote the iced coke as Concept $\mathcal{A}$, the tea cup as Concept $\mathcal{B}$, and introduce a latent Concept $\mathcal{C}$, the glass. This combination of $\mathcal{A}$, $\mathcal{B}$, and $\mathcal{C}$ forms our investigative focus.

We refer to the two main concepts (e.g., “iced coke” and “tea cup”) as $\mathcal{A}$ and $\mathcal{B}$, and to a latent concept that inherently correlates with $\mathcal{A}$ or $\mathcal{B}$ as $\mathcal{C}$ (e.g., “glass cup”). In our experience, it is non-trivial to address the misalignment problem with naive prompt engineering. We refer to such an inherent issue as Latent Concept Misalignment (LC-Mis). Unlike prior works that only focus on the mutual encroachment of $\mathcal{A}$ and $\mathcal{B}$[35, 9, 17, 16, 3], our problem involves a latent concept $\mathcal{C}$ that is never mentioned in the text prompt. The emergence of this phenomenon can lead to the absence of the expected concept $\mathcal{B}$ (“tea cup”) in the generated output.

We devise an efficient pipeline with the aid of Large Language Models[2, 22, 1] (LLMs) to discover an extensive set of LC-Mis examples. Specifically, human researchers first patiently guide the LLM to gradually understand and delve into the logic behind LC-Mis. The LLM is then leveraged to generate additional LC-Mis concept pairs according to its own understanding. After acquiring a substantial number of LC-Mis concept pairs, state-of-the-art diffusion models, including Midjourney[20] and SDXL[24], are employed to synthesize high-quality image samples for evaluation. Subsequently, expert human researchers meticulously evaluate the generated images to identify and select concept pairs that accurately manifest LC-Mis. This rigorous evaluation process culminates in the formation of our dataset.

Figure 2: We display the issue of Latent Concept Misalignment (LC-Mis). In the first row of the images, even the most advanced text-to-image models (SDXL) fail to faithfully generate the specified concepts. We have developed an autonomous pipeline to explore this issue and proposed a hotfix, MoCE, to simply fix it.

We introduce Mixture of Concept Experts (MoCE) to enhance the alignment between images and text in text-to-image diffusion models and hence mitigate the LC-Mis issue. Specifically, inspired by the sequential rule of human drawing when faced with a concept pair ($\mathcal{A}$, $\mathcal{B}$), we divide the entire sampling process of diffusion models into two phases based on the drawing advice given by an LLM: only one of $\mathcal{A}$ and $\mathcal{B}$ is provided in the first phase, and the complete text prompt is used in the second phase. Once the image synthesis is complete, a quantitative metric based on proven benchmarks, such as Clipscore[11] and Image-Reward[37], is employed to measure the alignment between the generated images and the text prompt. This measurement is then used to iteratively adjust the lengths of the two phases via binary search until the generated images meet the expectation. Empirical case studies, grounded in thorough human evaluation, confirm that our approach significantly mitigates the LC-Mis issue. Furthermore, it also enhances both the applicability and flexibility of text-to-image diffusion techniques across diverse fields. This improvement is demonstrated in Figure 2. The models we used in this paper are those available online as of October 1, 2023. See Section 6.2 for the discussion on the latest models.

Here, we summarize our contributions in the following 2 aspects:

- We investigate the neglected Latent Concept Misalignment (LC-Mis) issue within existing text-to-image diffusion models and introduce an LLM-based pipeline to collect our LC-Mis dataset.

- We propose to split the concepts in the text prompt and input them into different phases of the diffusion model generation process, effectively mitigating the LC-Mis issue.

2 Related Work

Diffusion Models Diffusion models[13] predict noise in noisy images and produce high-quality outputs after training. Having gained considerable attention, they are now considered the state-of-the-art in image generation. These models find applications in various domains, such as label-to-image synthesis[6, 28], text-to-image generation[25, 28, 24], image editing[5, 19, 14], and video generation[12]. Specifically, text-to-image generation is of practical importance. Open-source and commercial solutions like Stable Diffusion[28, 24], Midjourney[20] and Dall·E 3[23] have achieved success, bolstered by advancements in neural networks[29, 34] and large datasets of image-text pairs[32, 31]. Nevertheless, the question of whether these models truly innovate or merely generate combinations encountered in their training data remains unresolved. In this study, we investigate this issue by examining text-to-image diffusion models in the context of unconventional concept pairings, namely LC-Mis.

Misalignment Issues While state-of-the-art generative models frequently produce high-quality, realistic images, they struggle with certain concept combinations. Such models usually mimic combinations seen in training data. Prior work has emphasized spatial conflicts where multiple entities coexist in close proximity[35, 9, 17, 16, 3]. Distinct from these investigations, our focus lies on Latent Concept Misalignment, illustrated by phrases such as “a tea cup of iced coke”. Through rigorous experimentation, we investigate this challenge and introduce a benchmark alongside a hotfix solution.

3 Benchmark: Collecting Data on Latent Concept Misalignment (LC-Mis)

In this section, we detail the process of collecting our LC-Mis dataset. In our dataset, each pair features Concept $\mathcal{A}$ and Concept $\mathcal{B}$, which cannot be simultaneously generated by text-to-image models due to the existence of the latent concept $\mathcal{C}$. The advantages of our collection system are as follows:

- Given that extensive human knowledge is required to generate data with the LC-Mis issue, we utilize LLMs (e.g., GPT-3.5 in this paper) to develop an efficient guidance system, inspired by LLM reasoning[8].

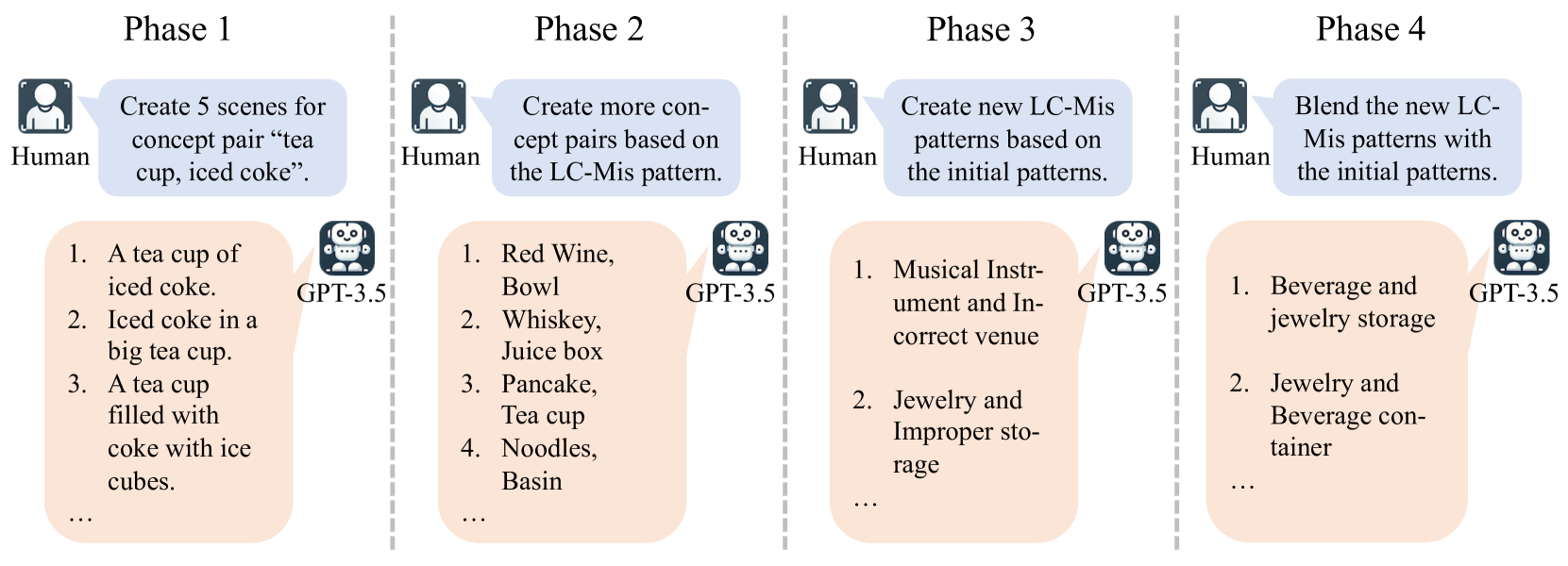

- These concept pairs often defy common human understanding, making them challenging to mine. We meticulously craft a generation loop containing 4 phases, allowing the iterative amplification of a small dataset into a substantially larger one. The process overview is shown in Figure 3, and the detailed prompts to guide GPT-3.5 can be found in our Appendix Section 0.A.

- Finally, concept pairs discovered by GPT-3.5 require comprehensive evaluation. However, advanced tools such as Clipscore[11] or Image-Reward[37] may be ineffective on the LC-Mis problem (Section 5.3). Therefore, evaluation by human experts is an important part of our system.

Figure 3: A single loop of our interactive LLM (i.e., GPT-3.5) guidance system, comprising 4 phases. Human researchers provide instructions for GPT-3.5, then GPT-3.5 creates new LC-Mis concept pairs and patterns for evaluation. We can iterate Phases 2 to 4 to rapidly expand our LC-Mis dataset.

3.1 Phase 1 - Identifying Initial Concept Pairs as Seeds

In Phase 1, human experts identify a small number of concept pairs and extract patterns from them. These patterns serve as seeds for subsequent dataset expansion. Human researchers begin by analyzing various visual scenes in renowned text-to-image datasets, such as Laion2B-en (https://huggingface.co/datasets/laion/laion2B-en) and MJ User Prompts & Images Dataset (https://huggingface.co/datasets/succinctly/midjourney-prompts), to detect concept pairs prone to LC-Mis. They select 50 concept pairs, like “iced coke” and “tea cup”, which produce inaccurate output images.

Upon analysis, we categorize these concept pairs into 8 distinct patterns. Among them, 4 patterns belong to the scope of LC-Mis (detailed LC-Mis patterns are shown in Appendix Table 1), mainly including the categories of “foreground and background” and “objects and containers”. These patterns serve as an effective starting point for identifying additional concept pairs by means of GPT-3.5.

3.2 Phase 2 - Generating and Verifying Additional Concept Pairs with GPT-3.5

In this phase, human researchers employ GPT-3.5 to generate additional concept pairs corresponding to each pattern from Section 3.1, using the initial 50 concept pairs for few-shot learning. Using this method, GPT-3.5 generates 499 valid concept pairs. None of these pairs can be generated correctly by text-to-image models with the simple prompt “concept $\mathcal{A}$, concept $\mathcal{B}$”. They then undergo rigorous verification, and most of them prove to be of high quality.

Then, a further verification step is designed to comprehensively assess the accuracy of the generated concept pairs. Specifically, GPT-3.5 generates 5 prompts for each pair, varying in length and richness. Subsequently, text-to-image models generate 4 images per prompt. After human verification of all 20 images, the concept pairs are scored on 5 levels: zero correct images correspond to Level 5, $1 \sim 5$ correct images to Level 4, $6 \sim 10$ to Level 3, $11 \sim 15$ to Level 2, and $16 \sim 20$ to Level 1.
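The level assignment described above can be written as a small function. This is a minimal sketch for illustration only: the names `lcmis_level` and `IMAGES_PER_PAIR` are assumptions introduced here and are not taken from the authors' code.

```python
# 5 prompts per concept pair, 4 images per prompt (as stated in the text).
IMAGES_PER_PAIR = 5 * 4


def lcmis_level(num_correct: int) -> int:
    """Map the number of correct images (out of 20) to a level from 1 to 5.

    Level 5 means no image was judged correct; Level 1 means 16 to 20 were.
    """
    if not 0 <= num_correct <= IMAGES_PER_PAIR:
        raise ValueError("num_correct must be between 0 and 20")
    if num_correct == 0:
        return 5
    if num_correct <= 5:
        return 4
    if num_correct <= 10:
        return 3
    if num_correct <= 15:
        return 2
    return 1
```

Under this mapping, a pair such as “iced coke” and “tea cup” with no correct image among its 20 samples is rated Level 5.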

In total, 2,495 sentence-based text prompts are fed into text-to-image models, which in turn produce 9,980 images. After rigorous screening, 272 concept pairs (55%) attain a Level 5 rating, representing the pinnacle of quality. Among them, 173 concept pairs belong to the scope of LC-Mis and will be used as the main data for our subsequent experiments. Utilizing GPT-3.5’s generalizing ability and reasoning skills, our initial dataset of 50 pairs is expanded quickly, maintaining high quality and diversity. This phase can be repeated on the basis of Phases 3 and 4 to generate more concept pairs.
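These counts follow from the protocol of this section (499 concept pairs, 5 prompts per pair, 4 images per prompt). The short check below only restates the numbers already given in the text:

```latex
\begin{align*}
499 \times 5 &= 2{,}495 \quad \text{text prompts},\\
2{,}495 \times 4 &= 9{,}980 \quad \text{images},\\
272 / 499 &\approx 0.545 \approx 55\% \quad \text{of pairs rated Level 5}.
\end{align*}
```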

3.3 Phase 3 - Discovering New Patterns for Concept Pair Generation

In this phase, we leverage GPT-3.5 to identify 9 new patterns and apply the same methodology as in Section 3.2 to these patterns.

During the previous phase, we observe that GPT-3.5 produces duplicates when tasked with generating additional concept pairs, suggesting that it may have reached the limits of its knowledge within the current patterns. Consequently, GPT-3.5 is encouraged to autonomously identify new patterns to enhance the semantic scope of LC-Mis. Building upon the 8 meticulously categorized patterns, human researchers instruct GPT-3.5 to identify 9 additional patterns. Subsequently, we replicate the procedures from Section 3.2, achieving a Level 5 accuracy rate of 70%, markedly exceeding the capabilities of human experts.

3.4 Phase 4 - Creating Novel Concept Pairs by Merging Patterns

In this phase, we merge concepts from different patterns to form new patterns and concept pairs, which will serve as seeds for the subsequent iteration.

Specifically, human researchers instruct GPT-3.5 to combine concepts from one pattern with ones from another pattern. For example, patterns labeled “Beverage and Incorrect Container” and “Jewelry and Inadequate Storage” are merged to create new patterns: “Beverage and Jewelry Storage” and “Jewelry and Beverage Container”. Following the process in Section 3.3, we observe that each newly created pattern achieves a Level 5 accuracy rate of at least 60%, demonstrating GPT-3.5’s capability in synthesizing orthogonal patterns into more patterns.

In summary, we collect our dataset leveraging the collaboration between LLMs (i.e., GPT-3.5) and text-to-image models. Based on our proposed system, we can further expand our dataset iteratively in the future.

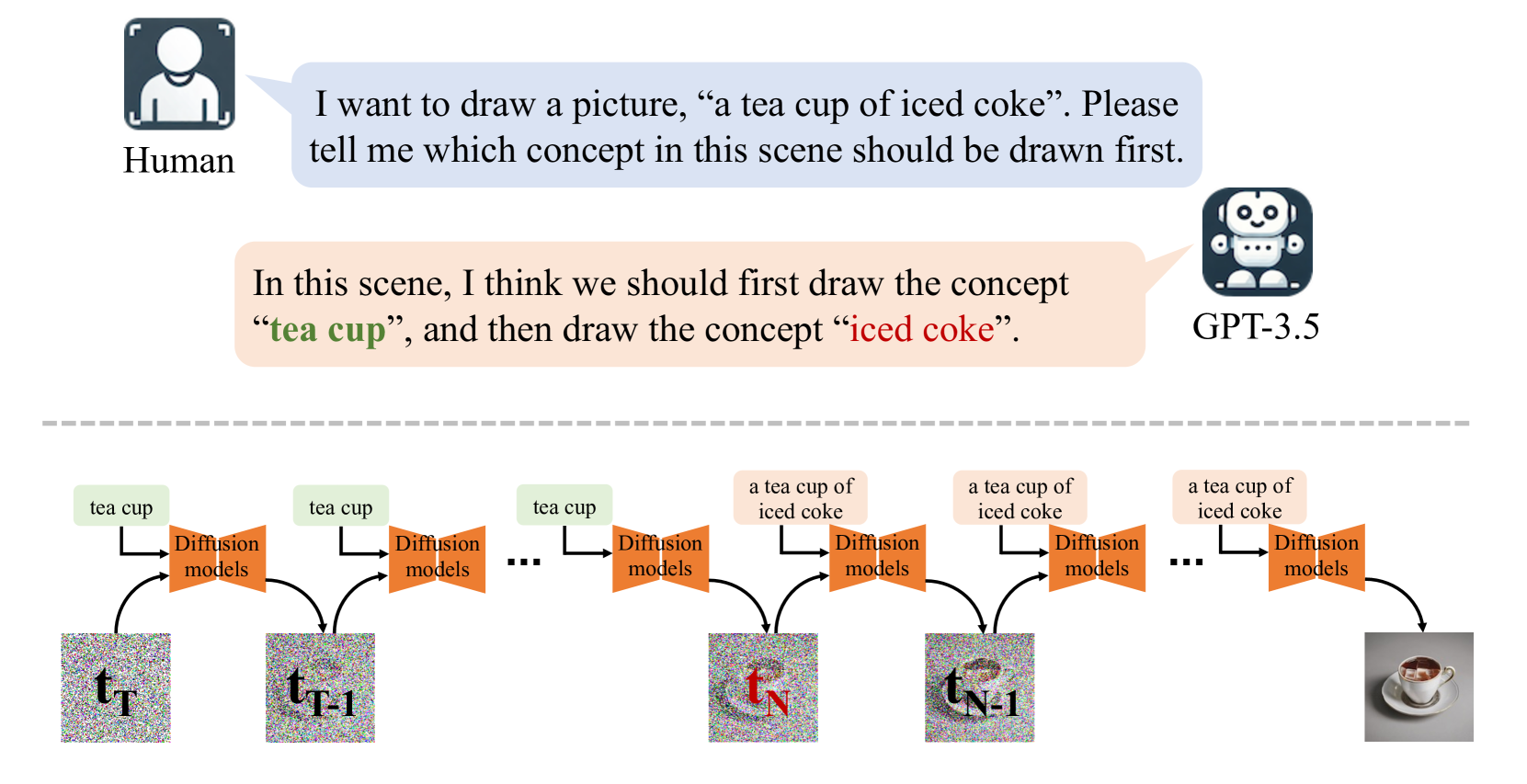

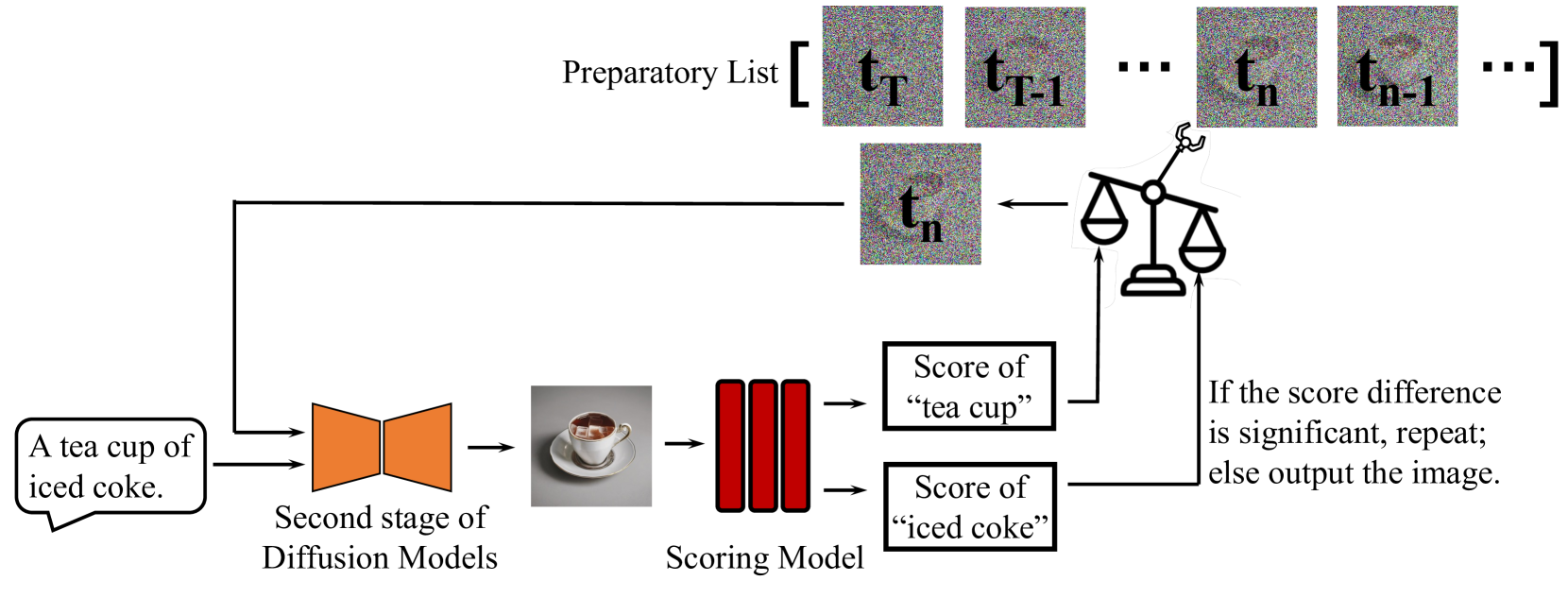

Figure 4: Overview of our method, MoCE. GPT-3.5 determines the drawing sequence of concepts. The initial concept is sampled for $N$ steps in diffusion models before inputting the full text prompt. We use binary search to find the optimal $N$; refer to the paragraph “Dynamic Binary Optimization” in Section 4 and Appendix Figures 12 and 13 for details.

4 Method: Mixture of Concept Experts (MoCE)

Motivation of MoCE Humans always follow a certain order when painting. Inspired by this aspect of human painting and motivated by dynamic models[10], we integrate LLMs (e.g., GPT-3.5 in this paper) into our method, Mixture of Concept Experts (MoCE), to alleviate the LC-Mis issue. As shown in Figure 4, we first input the easily overlooked concept so that the attention mechanism focuses on it during the early diffusion stages, enhancing its representation in the final image. We base MoCE on SDXL[24], one of the most reliable open-source diffusion models. The system includes the following components:

Sequential Concept Introduction Drawing on human artistic processes, we introduce concepts to diffusion models sequentially rather than simultaneously to prevent LC-Mis entanglement. This requires a reasonable and logical ordering of the concepts, a task for which LLMs are naturally suited. Therefore, we employ GPT-3.5 to ascertain the most logical sequence for two concepts based on its comprehension of human behavior. We provide interaction details with GPT-3.5 in our Appendix Section 0.A.

Denoising Process Partition In the denoising process of diffusion models across time steps $t_T, t_{T-1}, \dots, t_1, t_0$, the process is split into 2 phases:

- First Phase ($t_T, t_{T-1}, \dots, t_N$): We only input the easily lost concept into diffusion models, and save images at each time step as a preparatory list.

- Second Phase ($t_{N-1}, t_{N-2}, \dots, t_0$): $t_x$ will be selected from the preparatory list. Starting from time step $t_x$, we provide the complete text prompt to diffusion models to synthesize the final image.

However, which image $t_x$ from the preparatory list yields the optimal output remains unknown, motivating us to formulate a strategy for autonomously determining the optimal allocation of time steps.
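The two-phase partition can be sketched as follows. This is a toy illustration under stated assumptions, not the authors' implementation: `denoise_step` is a hypothetical callable standing in for one prompt-conditioned denoising step of a diffusion model, the function names are introduced here for illustration, and the "latent" in the usage example is just a list that records which prompt was used at each step.

```python
from typing import Any, Callable, Dict

# Hypothetical signature: (latent, time step, prompt) -> latent after that step.
DenoiseStep = Callable[[Any, int, str], Any]


def first_phase(x_T: Any, T: int, N: int, concept: str,
                denoise_step: DenoiseStep) -> Dict[int, Any]:
    """Run steps t_T, ..., t_N with a single concept as the prompt.

    Returns the preparatory list as a dict mapping a time step t to the
    latent that is about to be denoised at step t (entry T is the noise).
    """
    saved = {T: x_T}
    x = x_T
    for t in range(T, N - 1, -1):
        x = denoise_step(x, t, concept)
        saved[t - 1] = x
    return saved


def second_phase(saved: Dict[int, Any], t_x: int, full_prompt: str,
                 denoise_step: DenoiseStep) -> Any:
    """Resume from the saved latent at t_x and run t_x, ..., t_0 with the full prompt."""
    x = saved[t_x]
    for t in range(t_x, -1, -1):
        x = denoise_step(x, t, full_prompt)
    return x


def toy_step(x, t, prompt):
    """Toy stand-in for a denoiser: appends (t, prompt) to a log."""
    return x + [(t, prompt)]


# Illustrative choice of the first-phase concept; T=5 and N=3 are arbitrary.
saved = first_phase([], T=5, N=3, concept="tea cup", denoise_step=toy_step)
final = second_phase(saved, t_x=2, full_prompt="a tea cup of iced coke",
                     denoise_step=toy_step)
```

Because every intermediate latent of the first phase is kept, trying a different boundary $t_x$ only requires re-running the second phase, which is what makes a search over $t_x$ affordable.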

Automated Feedback Mechanism We iteratively select the most suitable $t_x$ based on the fidelity of the final image. In this case, Clipscore, $\mathcal{S}_c$, is used to assess the fidelity of the generated image $M$ to the desired concept, e.g., $\mathcal{A}$:

$$\mathcal{S}_c(M, \mathcal{A}) = \mathrm{ClipScore}(M, \mathcal{A}) \tag{1}$$

As the issue of misalignment persists, naively employing Clipscore does not truly reflect the correlation between entity $\mathcal{A}$ and the final image $M$, since the scores may not be distinguishable (see Section 5.3). Therefore, we calculate the Clipscore between images and both the concept itself and its corresponding description generated by GPT-3.5. Some details are not prominent in $M$, yet they may be elaborated in the descriptions. This can raise the Clipscore of the entity and reduce the influence of misalignment to some extent. The score function in MoCE, the Description-Concept Coordination Score, is as follows:

$$\mathcal{S}(M, \mathcal{A}) = \max\big(\mathcal{S}_c(M, \mathcal{A}),\ \mathcal{S}_c(M, \mathcal{A}_{Description})\big) \tag{2}$$

We also devise a new metric named Multi-Concept Disparity, denoted as $\mathcal{D}$:

$$\mathcal{D} = \mathcal{S}(M, \mathcal{A}) - \mathcal{S}(M, \mathcal{B}) \tag{3}$$

$\mathcal{D}$ denotes the difference in the scores of an image between two concepts. The larger $|\mathcal{D}|$ is, the higher the likelihood that at least one of the two concepts becomes blurred.
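Equations (2) and (3) translate directly into code. The sketch below assumes a hypothetical `clip_score(image, text)` callable that wraps whichever Clipscore implementation is in use; the function and parameter names are illustrative and are not taken from the authors' code.

```python
from typing import Any, Callable

# Hypothetical scorer: (image, text) -> alignment score (e.g. a Clipscore wrapper).
Scorer = Callable[[Any, str], float]


def coordination_score(image: Any, concept: str, description: str,
                       clip_score: Scorer) -> float:
    """Eq. (2): the larger of the scores against the concept and its description."""
    return max(clip_score(image, concept), clip_score(image, description))


def multi_concept_disparity(image: Any,
                            concept_a: str, description_a: str,
                            concept_b: str, description_b: str,
                            clip_score: Scorer) -> float:
    """Eq. (3): D = S(M, A) - S(M, B); positive when A is better represented."""
    score_a = coordination_score(image, concept_a, description_a, clip_score)
    score_b = coordination_score(image, concept_b, description_b, clip_score)
    return score_a - score_b
```

Passing the scorer as an argument keeps the sketch independent of any particular CLIP library, and makes it easy to substitute another metric such as Image-Reward.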

Dynamic Binary Optimization When a larger number of time steps is allocated to the first phase, the more dominant features of $\mathcal{A}$ possibly overshadow those of $\mathcal{B}$, so the absolute value of $\mathcal{D}$ becomes greater, and vice versa. Therefore, there exists a positive correlation between the time steps allocated in the first phase and the image quality, making the binary search method highly suitable for this scenario. When the score of concept $\mathcal{A}$ is significantly higher than that of $\mathcal{B}$, we choose a larger $t_x$ as the boundary point between the two phases, and vice versa. If the difference between their scores is below the threshold, the final image is output. Specific implementation details can be found in our Appendix Section 0.C.
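The search over the boundary $t_x$ can be sketched as a bounded binary search. This is a minimal sketch under assumptions, not the procedure from the appendix: `disparity_at` is a hypothetical callable that generates an image with boundary $t_x$ and returns its $\mathcal{D}$, the search assumes $\mathcal{D}$ decreases as $t_x$ grows, and the defaults (threshold 0.6, at most three attempts) mirror the values reported in Section 5.2.

```python
from typing import Callable, Tuple


def search_switch_step(disparity_at: Callable[[int], float], T: int,
                       threshold: float = 0.6,
                       max_iters: int = 3) -> Tuple[int, float]:
    """Binary-search the boundary step t_x in [0, T].

    Returns the (t_x, D) pair with the smallest |D| seen, stopping early
    once |D| drops below the threshold.
    """
    if T < 0 or max_iters < 1:
        raise ValueError("T must be >= 0 and max_iters must be >= 1")
    lo, hi = 0, T
    best_t, best_d = -1, float("inf")
    for _ in range(max_iters):
        if lo > hi:
            break
        t_x = (lo + hi) // 2
        d = disparity_at(t_x)
        if abs(d) < abs(best_d):
            best_t, best_d = t_x, d
        if abs(d) < threshold:
            break              # scores are balanced enough: accept this image
        if d > 0:
            lo = t_x + 1       # A scores much higher than B: choose a larger t_x
        else:
            hi = t_x - 1       # B scores much higher than A: choose a smaller t_x
    return best_t, best_d
```

If the threshold is never reached within the iteration budget, the sketch falls back to the most balanced candidate seen so far rather than failing.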

In summary, our methodology presents a robust solution for addressing entity misalignment in text-to-image diffusion models. It combines intuitive reasoning, automatic fine-tuning, and efficiency optimizations to produce more precise and contextually apt image outputs.

Figure 5: Visualizations of images at Level 5 generated by SDXL (baseline) and MoCE. Here, we present representative examples of the “Item and Container” pattern. Panels: (a) Iced coke, tea cup; (b) Noodles, basin; (c) Iced coffee, shot glass.

5 Experiments

We conduct extensive experiments centered around MoCE, revealing its ability to alleviate the LC-Mis issue in text-to-image diffusion models.

5.1 Setup

Dataset In Section 3.2, we obtain 272 concept pairs at Level 5. From this pool, human experts carefully select 173 concept pairs as LC-Mis cases for experiments, while the remaining concept pairs fall into the realm of traditional misalignment issues, discussed in Section 2.

Figure 6: Visualizations of images at Level 5 generated by SDXL (baseline) and MoCE. Here, we present representative examples of the “Foreground and Background” pattern. Panels: (a) Snub-nosed monkey, San Francisco; (b) Black-necked crane, London; (c) Watermelon, lemon tree.

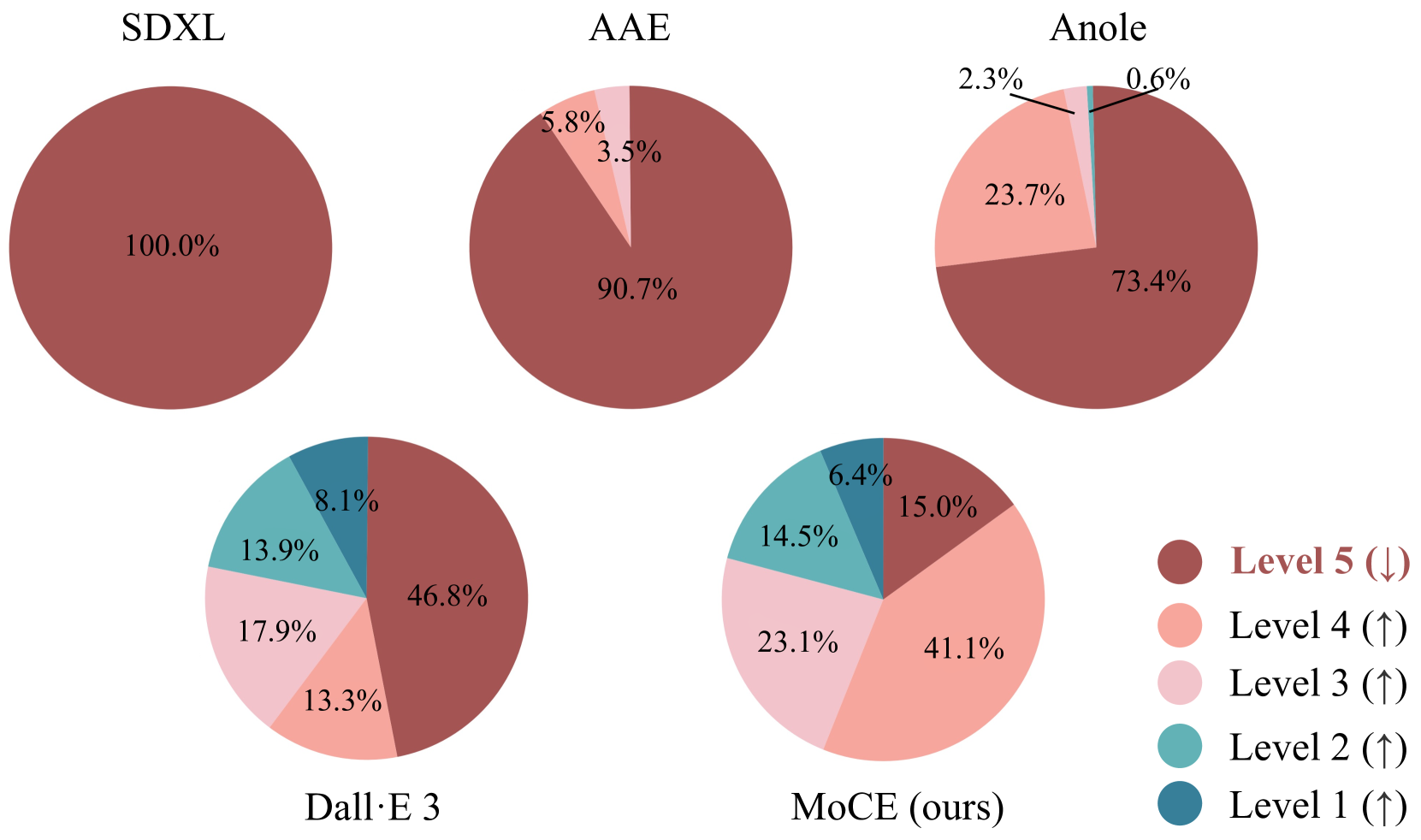

Model Our remediation, MoCE, is implemented using SDXL[24] due to its open-source nature and widespread use. We omit Midjourney and Dall·E 3 from consideration as base models because of their black-box architectures. Our baseline, SDXL, is evaluated on the 173 LC-Mis concept pairs from Section 3, all of which receive a rating of Level 5, indicating that none of the images generated in 20 attempts faithfully represents the expected concepts. We also incorporate 3 additional baseline models: Attend-and-Excite (AAE)[3], Dall·E 3[23], and Anole[4]. AAE aims to mitigate traditional misalignment issues via the attention map layer, and Dall·E 3 through fine-grained annotation. Anole is a novel model that employs autoregressive methods for interleaved image-text generation, offering fresh insights into resolving misalignment issues.

Our experiments use a single NVIDIA A100 GPU for image generation via SDXL; an RTX 4090 GPU is also sufficient.

Evaluation Metric Considering the instability of quantitative score evaluation, as well as the use of Clipscore and Image-Reward within our method, MoCE, we primarily rely on human evaluation in our experiments. We engage human experts for a more impartial evaluation. The results of quantitative score evaluation are also included in our Appendix Section 0.D for reference.

Figure 7: Human Evaluation for our MoCE. Human experts rate the 173 LC-Mis concept pairs on SDXL, AAE, Anole, Dall·E 3 and our MoCE. The brown color represents the proportion of Level 5 ratings among 173 pairs. A lower Level 5 proportion indicates that the model better alleviates the LC-Mis issue. Compared to baseline models, our MoCE exhibits a clear advantage when faced with LC-Mis issues, and it even surpasses Dall·E 3, which requires expensive training costs.

5.2 Result

Our method, MoCE, utilizes the 173 Level 5 LC-Mis concept pairs, providing their corresponding text prompts as input to the model. The second phase of MoCE is repeated up to three times to ensure that the Multi-Concept Disparity $\mathcal{D}$ converges below 0.6. After obtaining 20 images for each concept pair, human experts re-evaluate these images based on the criteria discussed in Section 3. We report the counts of concept pairs at each level after improvement by our MoCE and compare them to the baseline results in Figure 7. We also present several visualized images in Figures 5 and 6.

Figure 8: Human Evaluation for Dall·E 3 (baseline) and our MoCE on the different patterns mentioned in Section 3. The brown color represents the proportion of Level 5 ratings among 173 pairs. A lower Level 5 proportion indicates that the model better alleviates the LC-Mis issue. In Patterns (a), (b), and (c), MoCE outperforms Dall·E 3, as its output includes more concept pairs belonging to Levels 1 and 2. In Pattern (d), Dall·E 3’s performance is superior to MoCE.

In the original Level 5 LC-Mis concept pairs, the baseline model fails to produce any correct image, as mentioned in Section 3. Even sophisticated engineering strategies such as AAE and Anole, which may be effective for traditional misalignment problems, yield little improvement for Level 5 concept pairs when applied to LC-Mis problems. However, following the enhancement made by our MoCE, over half of the concept pairs are now correctly generated, and several concept pairs are even rated as Level 1. Additionally, Dall·E 3, with its expensive and fine-tuned data annotations, indeed helps with the LC-Mis problem, achieving improvements for Level 5 concept pairs comparable to MoCE. However, it is important to note that training Dall·E 3 requires additional data preprocessing, which can be a costly process, suggesting that our MoCE produces more correct images in an economical manner. We also include a comparison of the scores of images generated by MoCE and baselines in Appendix Table 4, demonstrating that our method is statistically meaningful.

In addition, we present the human evaluation results under the segmented patterns. As introduced in Section 3, we divide these 173 LC-Mis concept pairs into 4 patterns, and present the results of human evaluation in Figure 8. In Patterns (a), (b) and (c), MoCE outperforms Dall·E 3, as its output includes more concept pairs belonging to Levels 1 and 2. In Pattern (d), Dall·E 3’s performance is superior to MoCE.

5.3 Analysis

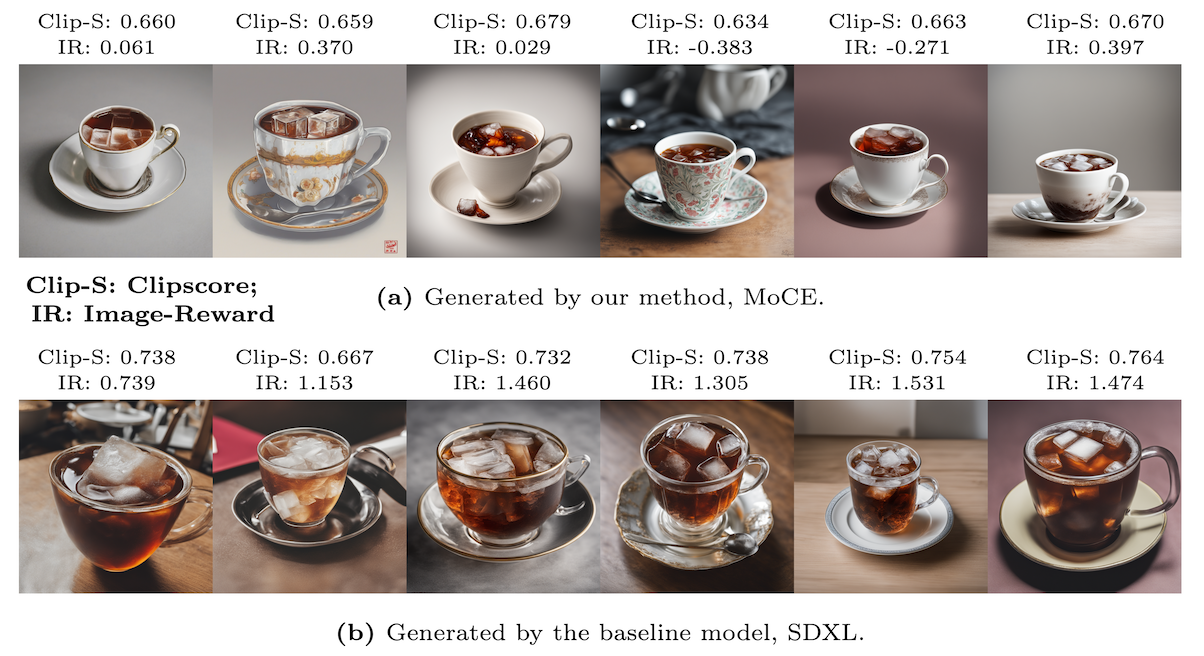

Figure 9: Visualization of images of “a tea cup of iced coke” generated by both our MoCE and the baseline model. We also report the Clipscore (Clip-S, ↑) and Image-Reward score (IR, ↑) between images and the concept “iced coke” to demonstrate the minor pitfalls of the existing evaluation metrics: even when MoCE correctly generates “iced coke” (a), the resulting score is still significantly lower.

We are deeply concerned about the impact of LC-Mis issues on the existing text-to-image model landscape. For the most representative and challenging example, “a tea cup of iced coke”, we present visual restoration results in Figure 9. Both Clipscore and Image-Reward effectively adjust the time step in our MoCE model with the help of the Description-Concept Coordination Score, but the existing evaluation metrics are not always accurate. Figure 9 displays both Clipscore and Image-Reward scores between images and the concept “iced coke”. We deliberately choose transparent glasses generated by baseline models, which resemble tea cups, to analyze the error in the scoring mechanism. The occurrence of “iced coke” in both sub-figures of Figure 9 is evident to human experts. However, both Clipscore and Image-Reward scores are markedly lower for images of “a tea cup of iced coke”, a gap attributed to the material of the cup. This highlights the limitations of current evaluation metrics in addressing misalignment issues and emphasizes the necessity of developing new metrics based on existing methods, which will become one of the key research directions for our future work.

Figure 10: The results of the example “a tea cup of iced coke” using the latest Dall·E 3 without GPT-4 (a), Dall·E 3 with complex prompt engineering from GPT-4 (b), Midjourney (c) and Stable Diffusion 3 (d). The latest Dall·E 3 with GPT-4 has indeed alleviated the LC-Mis issue to some degree, with the help of significant annotation costs.

6 Conclusions

6.1 Summary

In this paper, we introduce a new text-to-image misalignment issue called LC-Mis, involving a significant latent concept. We present a novel framework to explore LC-Mis examples, making it one of the earliest works to use LLMs in developing image generation systems. Our method, MoCE, innovatively splits the text prompts and inputs them into diffusion models, alleviating the LC-Mis problem. Human evaluation confirms MoCE’s effectiveness. We also highlight the impact of LC-Mis on existing text-to-image systems, discuss flaws in current evaluation metrics, and call for community attention to this matter.

6.2 Recent Advances in the Field

By the time of releasing our paper, text-to-image models have evolved further. We present results from the latest (available online as of July 7, 2024) Dall·E 3, Midjourney, and Stable Diffusion 3 in Figure 10. In the example “a tea cup of iced coke”, without complex prompt engineering, models still perform poorly on the LC-Mis issue, as shown in Figure 10(a), 10(c) and 10(d). Complex prompt engineering from GPT-4 (Figure 10(b)) does help alleviate the issue. However, it is important to note that this comes with significant annotation costs during Dall·E 3’s training, and is also accompanied by a certain degree of instability, highlighting the issue’s significance.

6.3 Future Work

In our future work, we will focus on exploring more complex LC-Mis scenarios and developing learnable search algorithms to reduce the iterations in our method. Additionally, we will expand the range of model types, model versions and sampler types used in our dataset and continuously iterate our dataset collection algorithms to enhance and enlarge the dataset.

Acknowledgement

This work was supported by Key R&D Program of Shandong Province, China (2023CXGC010112).

References

- [1] Achiam, J., Adler, S., Agarwal, S., Ahmad, L., Akkaya, I., Aleman, F.L., Almeida, D., Altenschmidt, J., Altman, S., Anadkat, S., et al.: Gpt-4 technical report. arXiv preprint arXiv:2303.08774 (2023)

- [2] Brown, T., Mann, B., Ryder, N., Subbiah, M., Kaplan, J.D., Dhariwal, P., Neelakantan, A., Shyam, P., Sastry, G., Askell, A., et al.: Language models are few-shot learners. In: NeurIPS (2020)

- [3] Chefer, H., Alaluf, Y., Vinker, Y., Wolf, L., Cohen-Or, D.: Attend-and-excite: Attention-based semantic guidance for text-to-image diffusion models. ACM Transactions on Graphics (TOG) 42(4), 1–10 (2023)

- [4] Chern, E., Su, J., Ma, Y., Liu, P.: Anole: An open, autoregressive and native multimodal models for interleaved image-text generation. GitHub repository (2024), https://github.com/GAIR-NLP/anole

- [5] Couairon, G., Verbeek, J., Schwenk, H., Cord, M.: Diffedit: Diffusion-based semantic image editing with mask guidance. arXiv preprint arXiv:2210.11427 (2022)

- [6] Dhariwal, P., Nichol, A.: Diffusion models beat gans on image synthesis. In: NeurIPS (2021)

- [7] Ding, M., Yang, Z., Hong, W., Zheng, W., Zhou, C., Yin, D., Lin, J., Zou, X., Shao, Z., Yang, H., et al.: Cogview: Mastering text-to-image generation via transformers. In: NeurIPS (2021)

- [8] Dong, Q., Dong, L., Xu, K., Zhou, G., Hao, Y., Sui, Z., Wei, F.: Large language model for science: A study on p vs. np. arXiv preprint arXiv:2309.05689 (2023)

- [9] Du, Y., Durkan, C., Strudel, R., Tenenbaum, J.B., Dieleman, S., Fergus, R., Sohl-Dickstein, J., Doucet, A., Grathwohl, W.S.: Reduce, reuse, recycle: Compositional generation with energy-based diffusion models and mcmc. In: ICML (2023)

- [10] Han, Y., Huang, G., Song, S., Yang, L., Wang, H., Wang, Y.: Dynamic neural networks: A survey. TPAMI (2021)

- [11] Hessel, J., Holtzman, A., Forbes, M., Bras, R.L., Choi, Y.: Clipscore: A reference-free evaluation metric for image captioning. arXiv preprint arXiv:2104.08718 (2021)

- [12] Ho, J., Chan, W., Saharia, C., Whang, J., Gao, R., Gritsenko, A., Kingma, D.P., Poole, B., Norouzi, M., Fleet, D.J., et al.: Imagen video: High definition video generation with diffusion models. arXiv preprint arXiv:2210.02303 (2022)

- [13] Ho, J., Jain, A., Abbeel, P.: Denoising diffusion probabilistic models. In: NeurIPS (2020)

- [14] Kawar, B., Zada, S., Lang, O., Tov, O., Chang, H., Dekel, T., Mosseri, I., Irani, M.: Imagic: Text-based real image editing with diffusion models. In: CVPR (2023)

- [15] Li, B., Qi, X., Lukasiewicz, T., Torr, P.: Controllable text-to-image generation. In: NeurIPS (2019)

- [16] Li, Y., Liu, H., Wu, Q., Mu, F., Yang, J., Gao, J., Li, C., Lee, Y.J.: Gligen: Open-set grounded text-to-image generation. In: CVPR (2023)

- [17] Liu, N., Li, S., Du, Y., Torralba, A., Tenenbaum, J.B.: Compositional visual generation with composable diffusion models. In: ECCV (2022)

- [18] Mansimov, E., Parisotto, E., Ba, J.L., Salakhutdinov, R.: Generating images from captions with attention. arXiv preprint arXiv:1511.02793 (2015)

- [19] Meng, C., He, Y., Song, Y., Song, J., Wu, J., Zhu, J.Y., Ermon, S.: Sdedit: Guided image synthesis and editing with stochastic differential equations. arXiv preprint arXiv:2108.01073 (2021)

- [20] Midjourney: Midjourney (V5.2) [Text-to-Image Model]. https://www.midjourney.com (2023)

- [21] Nichol, A., Dhariwal, P., Ramesh, A., Shyam, P., Mishkin, P., McGrew, B., Sutskever, I., Chen, M.: Glide: Towards photorealistic image generation and editing with text-guided diffusion models. arXiv preprint arXiv:2112.10741 (2021)

- [22] OpenAI: ChatGPT (Aug 3 Version) [Large Language Model]. https://chat.openai.com (2023)

- [23] OpenAI: Dall·e 3 system card. OpenAI technical report (2023)

- [24] Podell, D., English, Z., Lacey, K., Blattmann, A., Dockhorn, T., Müller, J., Penna, J., Rombach, R.: Sdxl: Improving latent diffusion models for high-resolution image synthesis. arXiv preprint arXiv:2307.01952 (2023)

- [25] Ramesh, A., Dhariwal, P., Nichol, A., Chu, C., Chen, M.: Hierarchical text-conditional image generation with clip latents. arXiv preprint arXiv:2204.06125 (2022)

- [26] Ramesh, A., Pavlov, M., Goh, G., Gray, S., Voss, C., Radford, A., Chen, M., Sutskever, I.: Zero-shot text-to-image generation. In: ICML (2021)

- [27] Reed, S., Akata, Z., Yan, X., Logeswaran, L., Schiele, B., Lee, H.: Generative adversarial text to image synthesis. In: ICML (2016)

- [28] Rombach, R., Blattmann, A., Lorenz, D., Esser, P., Ommer, B.: High-resolution image synthesis with latent diffusion models. In: CVPR (2022)

- [29] Ronneberger, O., Fischer, P., Brox, T.: U-net: Convolutional networks for biomedical image segmentation. In: MICCAI (2015)

- [30] Saharia, C., Chan, W., Saxena, S., Li, L., Whang, J., Denton, E.L., Ghasemipour, K., Gontijo Lopes, R., Karagol Ayan, B., Salimans, T., et al.: Photorealistic text-to-image diffusion models with deep language understanding. In: NeurIPS (2022)

- [31] Schuhmann, C., Beaumont, R., Vencu, R., Gordon, C., Wightman, R., Cherti, M., Coombes, T., Katta, A., Mullis, C., Wortsman, M., et al.: Laion-5b: An open large-scale dataset for training next generation image-text models. In: NeurIPS (2022)

- [32] Sharma, P., Ding, N., Goodman, S., Soricut, R.: Conceptual captions: A cleaned, hypernymed, image alt-text dataset for automatic image captioning. In: ACL (2018)

- [33] Song, Y., Dhariwal, P., Chen, M., Sutskever, I.: Consistency models. arXiv preprint arXiv:2303.01469 (2023)

- [34] Vaswani, A., Shazeer, N., Parmar, N., Uszkoreit, J., Jones, L., Gomez, A.N., Kaiser, Ł., Polosukhin, I.: Attention is all you need. In: NeurIPS (2017)

- [35] Wang, R., Chen, Z., Chen, C., Ma, J., Lu, H., Lin, X.: Compositional text-to-image synthesis with attention map control of diffusion models. arXiv preprint arXiv:2305.13921 (2023)

- [36] Wu, C., Liang, J., Hu, X., Gan, Z., Wang, J., Wang, L., Liu, Z., Fang, Y., Duan, N.: Nuwa-infinity: Autoregressive over autoregressive generation for infinite visual synthesis. arXiv preprint arXiv:2207.09814 (2022)

- [37] Xu, J., Liu, X., Wu, Y., Tong, Y., Li, Q., Ding, M., Tang, J., Dong, Y.: Imagereward: Learning and evaluating human preferences for text-to-image generation. arXiv preprint arXiv:2304.05977 (2023)

- [38] Xu, T., Zhang, P., Huang, Q., Zhang, H., Gan, Z., Huang, X., He, X.: Attngan: Fine-grained text to image generation with attentional generative adversarial networks. arXiv preprint arXiv:1711.10485 (2017)

- [39] Yu, J., Xu, Y., Koh, J.Y., Luong, T., Baid, G., Wang, Z., Vasudevan, V., Ku, A., Yang, Y., Ayan, B.K., et al.: Scaling autoregressive models for content-rich text-to-image generation. arXiv preprint arXiv:2206.10789 (2022)

- [40] Zhang, H., Xu, T., Li, H., Zhang, S., Wang, X., Huang, X., Metaxas, D.N.: Stackgan: Text to photo-realistic image synthesis with stacked generative adversarial networks. In: ICCV (2017)

Appendix

Appendix A Interaction Details in Interactive LLMs Guidance System

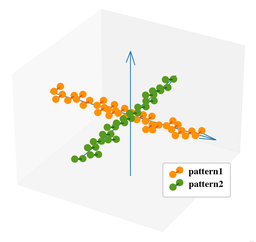

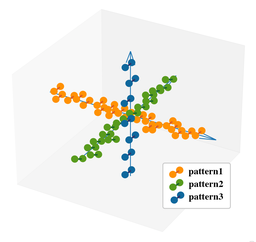

In this section, we describe the detailed process of interaction between human researchers and GPT-3.5 within our workflow. Utilizing block diagrams for clarity, we model the engagement between human experts and GPT-3.5. To illustrate the variation in data volume within the Interactive LLMs Guidance System, we present several diagrams in Figure 11. In these diagrams, pairs of spheres along the same axis denote concept pairs associated with a particular pattern, distinguishable by their color.

Panels: (a) Round 1: come up with concept pairs; (b) Rounds 2-3: guide GPT-3.5 to extend concept pairs; (c) Rounds 4-5: guide GPT-3.5 to create new patterns; (d) Round 6: guide GPT-3.5 to blend existing patterns.

Figure 11: Schematic diagrams of different rounds of Socratic Reasoning. The complete procedure consists of coming up with concept pairs (Figure a), guiding GPT-3.5 to extend concept pairs (Figure b), guiding GPT-3.5 to create new patterns (Figure c), and guiding GPT-3.5 to blend existing patterns (Figure d).

Phase 1 - Identifying Initial Concept Pairs as Seeds. After developing an initial set of 50 concept pairs and categorizing them into 8 unique patterns (among them, 4 patterns match our LC-Mis issue), human researchers direct GPT-3.5 to generate additional concept pairs. We specify both the researcher-provided prompt and the GPT-3.5 response. The format of the patterns from which concept pairs are derived is shown in Table 1.

Midjourney text-to-image generation, in conjunction with human researcher validation, then confirms a total of 50 concept pairs. During the evaluation, 5 impartial human experts are asked to identify the generated images. If their judgments differ only slightly, the majority opinion is adopted; otherwise, a senior expert re-identifies the image. Human expert evaluation proves to be accurate under certain circumstances, as discussed in Section 5.3 of our paper.
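The adjudication rule described above can be sketched as a small function. This is only an illustration under stated assumptions: the function name `resolve_label` and the threshold `min_agreement` are hypothetical, and reading "minor differences" as "at least 4 of the 5 experts agree" is an assumption, since the exact criterion is not specified here.

```python
from collections import Counter
from typing import Sequence


def resolve_label(votes: Sequence[str], senior_vote: str, min_agreement: int = 4) -> str:
    """Return the majority label if enough experts agree, else the senior expert's label.

    `min_agreement` is an assumed threshold for what counts as a minor disagreement.
    """
    label, count = Counter(votes).most_common(1)[0]
    if count >= min_agreement:
        return label          # disagreement is minor: adopt the majority opinion
    return senior_vote        # disagreement is large: the senior expert decides


# Example: four of five experts see a glass cup, so the majority opinion is adopted.
votes = ["glass cup", "glass cup", "glass cup", "glass cup", "tea cup"]
decision = resolve_label(votes, senior_vote="tea cup")
```

With a 3-to-2 split the same call would return the senior expert's label instead.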

Table 1: Patterns summarized manually by human researchers.

We leverage GPT-3.5 to generate 5 detailed prompts for each concept pair:

Phase 2 - Generating and Verifying Additional Concept Pairs. On the basis of known patterns, we encourage GPT-3.5 to explore more concept pairs. Our instructions for GPT-3.5 are as follows:

Phase 3 - Discovering New Patterns for Concept Pair Generation. The proliferation of repetitive concept pairs has prompted researchers to investigate innovative approaches. Engaging with GPT-3.5, scholars aim to automate the discovery of new patterns. Our instructions for GPT-3.5 are as follows:

Because GPT-3.5 lacks guidance from information that has been validated in visual space, the new concept pairs it proposes do not achieve the expected effect. Human researchers therefore shift to asking for individual concepts within the linguistic domain rather than demanding concept pairs directly. In this way, human researchers only need to select suitable conflicting concepts from two sets of concepts and combine them for verification, drawing on their accumulated experience with text-image interactions. This avoids both unguided exploration and excessive guidance; see the accompanying instruction prompt.

We also present the new patterns given by GPT-3.5 in Table 2:

Table 2: New patterns created by GPT-3.5 and the levels of their concept pairs.

Phase 4 - Creating Novel Concept Pairs by Merging Patterns. Two straight lines determine a plane. Once the points on the two lines are defined, it becomes possible to guide GPT-3.5 to expand the new plane. This means GPT-3.5 can blend newly generated patterns with previous patterns, thereby generating more LC-Mis concept pairs. Our instructions for GPT-3.5 are as follows:

Human researchers then repeat the verification process used in Phase 1 to verify these concept pairs. After thorough verification, we report the number of concept pairs rated as Level 5 for each blended pattern in Table 3. It is quite surprising to discover that the validation results closely align with the expectations of human researchers, indicating that GPT-3.5 successfully integrates two mutually orthogonal patterns.

Table 3: Blended patterns created by GPT-3.5 and the levels of their concept pairs.

Appendix B Interaction in Sequential Concept Introduction

Here, we present the detailed interaction to guide GPT-3.5 to provide the most logical sequence of two concepts and the description of them.

Appendix C Comprehensive Explanation of MoCE

The whole process of MoCE contains two phases. In the first phase, as depicted in Figure 12, the diffusion model is asked to generate the image for the selected substance, forming a preparatory list that includes images at different timestamps $t_T$. From there, the second phase of MoCE, as shown in Figure 13, takes effect: it takes an image at a specific timestamp $t_n$ from the preparatory list as the input to the diffusion model in the second stage, where the whole sentence containing both substances is used as the prompt. The specific candidate from the preparatory list is selected by our scoring model introduced in Section 4 of the paper.

Figure 12: First phase of MoCE. Provided with a prompt, GPT-3.5 determines drawing order. SDXL generates latent images at each step, storing them in a preparatory list.

Figure 13: Second phase of MoCE. The second stage model takes an image from the preparatory list and performs denoising. A specific selection process, utilizing the Multi-Concept Disparity and binary search, was used to select a better image from the list.
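The two phases can be illustrated with a toy sketch of the selection step. Everything here is an assumption made for illustration: no diffusion model is called, the "latents" are placeholder strings, the names `build_preparatory_list`, `binary_search_switch_step`, and `toy_disparity` are hypothetical, and the disparity between the two concepts is assumed to be non-decreasing in the switch step (more steps spent on the first concept favor that concept). The actual search criterion used by MoCE may differ.

```python
from typing import Callable, List, Tuple


def build_preparatory_list(first_concept: str, total_steps: int) -> List[Tuple[int, str]]:
    """Phase 1 (toy): record one placeholder latent per denoising step.

    A real system would store the model's intermediate latents instead of strings.
    """
    return [(n, f"latent[{first_concept}]@step{n}") for n in range(total_steps + 1)]


def binary_search_switch_step(
    disparity_at: Callable[[int], float],
    total_steps: int,
    tolerance: float = 0.05,
) -> int:
    """Phase 2 (toy): find the switch step whose disparity is closest to zero.

    Assumes disparity_at(n) is non-decreasing in n.
    """
    lo, hi = 0, total_steps
    best_n, best_abs = lo, abs(disparity_at(lo))
    while lo <= hi:
        mid = (lo + hi) // 2
        d = disparity_at(mid)
        if abs(d) < best_abs:
            best_n, best_abs = mid, abs(d)
        if abs(d) <= tolerance:
            break
        if d > 0:
            hi = mid - 1   # first concept dominates: switch prompts earlier
        else:
            lo = mid + 1   # second concept dominates: switch prompts later
    return best_n


def toy_disparity(n: int) -> float:
    """Hypothetical stand-in for the disparity score: zero at step 15 of 50."""
    return (n - 15) / 50


prep = build_preparatory_list("tea cup", total_steps=50)
n_star = binary_search_switch_step(toy_disparity, total_steps=50)
chosen_step, chosen_latent = prep[n_star]
```

In a real pipeline, `disparity_at(n)` would resume denoising from the stored latent at step `n` with the full prompt and score the resulting images, so each probe costs extra sampling; the binary search keeps the number of probes logarithmic in the number of steps.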

Appendix D Score Evaluation

By convention, evaluation metrics such as Clipscore and Image-Reward are usually used to quantitatively gauge the correspondence between the generations and the input entities. These metrics utilize the cosine similarity between embeddings produced by Deep Neural Networks (DNNs). Nonetheless, they often fail to discern numerical values, transparent objects, and other crucial elements readily identifiable by humans. In Section 4 of our paper, our proposed MoCE uses the Multi-Concept Disparity ($\mathcal{D}$) between the scores of 64 images ($M$) with respect to 2 concepts ($\mathcal{A}$ and $\mathcal{B}$) as the basis for performing a binary search:

$$\mathcal{D}=\mathcal{S}(M,\mathcal{A})-\mathcal{S}(M,\mathcal{B}) \tag{4}$$

We use this metric to help demonstrate MoCE’s performance. Specifically, we assess the generation performance of both the baseline model (SDXL) and our MoCE using the metric $\mathcal{D}$ in Equation 4, where $\mathcal{D}$ is calculated using Clipscore or Image-Reward, denoted as $\mathcal{D}$-Clipscore and $\mathcal{D}$-Image-Reward respectively. (To facilitate easier observation by human experts, we report $\mathcal{D}$-Clipscore at a magnification of $10\times$.) Experiments are conducted on both the set of concept pairs at Level 5 and the set at Levels 1 to 4. We report the experimental results in Table 4. In comparison to the baseline model, the $\mathcal{D}$-Clipscore of images generated by our MoCE is reduced by more than half, and the $\mathcal{D}$-Image-Reward is reduced by more than $\frac{1}{3}$, in either set of levels. This demonstrates MoCE’s ability to effectively restore the lost concepts in images.
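As a minimal illustration of Equation 4, the sketch below computes a disparity from precomputed embeddings. It rests on assumptions: the score $\mathcal{S}(M,\cdot)$ is taken to be the mean cosine similarity between each image embedding and the concept embedding (the aggregation over the image set is not specified here), the function names are hypothetical, and the two-dimensional vectors are toy values rather than outputs of Clipscore or Image-Reward.

```python
import math
from typing import Sequence


def cosine_similarity(u: Sequence[float], v: Sequence[float]) -> float:
    """Cosine similarity between two non-zero vectors of equal length."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


def mean_score(image_embs: Sequence[Sequence[float]], concept_emb: Sequence[float]) -> float:
    """Assumed form of S(M, concept): average similarity over the image set M."""
    return sum(cosine_similarity(m, concept_emb) for m in image_embs) / len(image_embs)


def multi_concept_disparity(
    image_embs: Sequence[Sequence[float]],
    emb_a: Sequence[float],
    emb_b: Sequence[float],
) -> float:
    """D = S(M, A) - S(M, B), following Equation 4."""
    return mean_score(image_embs, emb_a) - mean_score(image_embs, emb_b)


# Toy embeddings: concept A along the first axis, concept B along the second.
emb_a, emb_b = [1.0, 0.0], [0.0, 1.0]
biased_images = [[1.0, 0.0], [1.0, 0.0]]      # images showing only concept A
balanced_images = [[1.0, 1.0], [1.0, 1.0]]    # images showing both concepts equally

d_biased = multi_concept_disparity(biased_images, emb_a, emb_b)
d_balanced = multi_concept_disparity(balanced_images, emb_a, emb_b)
```

In this toy setting, images dominated by one concept give a disparity far from zero, while images reflecting both concepts equally give a disparity near zero, which matches the reading that a smaller disparity indicates fewer lost concepts.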

Table 4: Score evaluation for our MoCE using both $\mathcal{D}$-Clipscore ($\downarrow$) and $\mathcal{D}$-Image-Reward ($\downarrow$). We use concept pairs originally rated as Level 5 and Levels 1-4.

Appendix E Ablation Demo

Here, we first present the ablation test on computational time. Figure 14 shows that the prompt switching time can be set between 20% and 40% of the steps to optimize the time-performance tradeoff, thereby also reducing the cost to 1× or 2×.

Moreover, an ablation test on dataset size is also conducted. Here we compare 3 models trained on datasets of varying sizes, as shown in Figure 15. Since DALL·E 3 is not open-sourced, we cannot apply MoCE to it. DALL·E 3 does perform well. However, its detailed labeling process is labor-intensive, whereas our MoCE can be easily integrated into current models.

Figure 14: Analysis for different prompt switching times on “a tea cup of iced coke.”

Figure 15: Comparison between baseline models and MoCE on various datasets with different sizes.

Appendix F Restoration Visualizations of Level 5

Here, we show more visualizations of Level 5 images restored using our MoCE. Despite being given additional rich information, Midjourney fails to generate these images correctly, whereas our MoCE, using the same text prompts, successfully retrieves the lost concepts, as presented in Figures 16 and 17. We also note that in Figures 16 and 17, human experts judge “Hot Tea” based on the transparency of the liquid and “Shanghai” based on landmarks such as the Oriental Pearl TV Tower. These judgments are based on verified model tendencies.

Appendix G Restoration Visualizations of Level 1 - 4

For images that baseline models can restore when given additional rich information, our MoCE can also easily retrieve the lost concepts and increase the frequency of correct generation, as presented in Figures 18 and 19.

Columns: Baseline Models, MoCE. Panels: (a) Apple Cider, Cocktail Shaker; (b) Green Tea, Red Solo Cup; (c) Iced Coffee, Shot Glass; (d) Iced Latte, Soda Can; (e) Hot Tea, Coffee Mug.

Figure 16: Visualizations of images at Level 5 generated by baseline models and our MoCE.

Columns: Baseline Models, MoCE. Panels: (f) Stone Lion, Paris; (g) Watermelon, Lemon Tree; (h) Croquettes, Shanghai; (i) Gumdrops, Night; (j) Noodle, Basin.

Figure 17: Visualizations of images at Level 5 generated by baseline models and our MoCE.

Panels: (a) Cereal, Chopsticks; (b) Teaspoon, Sandwich; (c) Teaspoon, Curry; (d) Savory steak, Chopsticks; (e) Pancakes, Tea cup.

Figure 18: Visualizations of images at Level 1 - 4 generated by baseline models and our MoCE. With additional information, baseline models can indeed generate a small number of correct images, and our MoCE is able to increase the frequency of correct generation.

Panels: (f) California Condor, Petra; (g) Javan Rhino, Stonehenge; (h) Javan Hawk-Eagle, Sydney Opera House; (i) Asian Elephant, Angkor Wat; (j) Indus River Dolphin, Mount Fuji.

Figure 19: Visualizations of images at Level 1 - 4 generated by baseline models and our MoCE. With additional information, baseline models can indeed generate a small number of correct images, and our MoCE is able to increase the frequency of correct generation.
