Title: StimuVAR: Spatiotemporal Stimuli-aware Video Affective Reasoning with Multimodal Large Language Models

URL Source: https://arxiv.org/html/2409.00304

Published Time: Wed, 04 Jun 2025 00:27:52 GMT

Yuxiang Guo [1]\*, Rama Chellappa [1], Shao-Yuan Lo [2]

[1] Johns Hopkins University; [2] Honda Research Institute USA

\* This work was mostly done when Y. Guo was an intern at HRI-USA.

Abstract

Predicting and reasoning how a video would make a human feel is crucial for developing socially intelligent systems. Although Multimodal Large Language Models (MLLMs) have shown impressive video understanding capabilities, they tend to focus more on the semantic content of videos, often overlooking emotional stimuli. Hence, most existing MLLMs fall short in estimating viewers’ emotional reactions and providing plausible explanations. To address this issue, we propose StimuVAR, a spatiotemporal Stimuli-aware framework for Video Affective Reasoning (VAR) with MLLMs. StimuVAR incorporates a two-level stimuli-aware mechanism: frame-level awareness and token-level awareness. Frame-level awareness involves sampling video frames with events that are most likely to evoke viewers’ emotions. Token-level awareness performs tube selection in the token space to make the MLLM concentrate on emotion-triggered spatiotemporal regions. Furthermore, we create VAR instruction data to perform affective training, steering MLLMs’ reasoning strengths towards emotional focus and thereby enhancing their affective reasoning ability. To thoroughly assess the effectiveness of VAR, we provide a comprehensive evaluation protocol with extensive metrics. StimuVAR is the first MLLM-based method for viewer-centered VAR. Experiments demonstrate its superiority in understanding viewers’ emotional responses to videos and providing coherent and insightful explanations. Our code is available at https://github.com/EthanG97/StimuVAR.

keywords:

Video affective reasoning, emotion recognition, emotional stimuli, multimodal large language models

1 Introduction

Figure 1: Traditional methods uniformly sample video frames, which could easily miss rapid yet key events that are most likely to evoke viewers’ emotions. In contrast, StimuVAR proposes event-driven frame sampling, efficiently selecting the frames containing rapid key events, such as a rock falling onto the road. Next, emotion-triggered tube selection identifies the areas where these events occur, represented by color-boxed regions, guiding MLLM’s focus on these emotional stimuli. Additionally, StimuVAR performs affective reasoning, which can offer rationales behind its predictions; for example, it recognizes that the unexpected occurrence of a falling rock triggers the emotion of “surprise”. Relevant and irrelevant words are colored.

Understanding human emotional responses to videos is crucial for developing socially intelligent systems that enhance human-computer interaction[pantic2005affective, wang2020emotion], personalized services[bielozorov2019role, lee2017chatbot], and more. In recent years, user-generated videos on social media platforms have become an integral part of modern society. With increasing concerns about mental health, there is growing public attention on how videos affect viewers’ well-being. Unlike most existing Video Emotion Analysis (VEA) approaches that focus on analyzing the emotions of characters in a video[srivastava2023you, kosti2019context, lee2019context, yang2024robust, lian2023explainable, cheng2024emotion], predicting and reasoning about a video’s emotional impact on viewers is a more challenging task[achlioptas2021artemis, achlioptas2023affection, mazeika2022would, jiang2014predicting]. This challenge requires not only an understanding of video content but also commonsense knowledge of human reactions and emotions.

Traditional emotion models are trained to map visual embeddings to corresponding emotion labels[srivastava2023you, kosti2019context, lee2019context, yang2024robust, mazeika2022would, yang2021stimuli, pu2023going]. These models heavily rely on basic visual attributes, such as color, brightness, or object class[xie2024emovit, yang2023emoset], which are often insufficient for accurately estimating viewers’ emotional reactions. While recent advances in Multimodal Large Language Models (MLLMs)[Zhang2023VideoLLaMAAI, Lin2023VideoLLaVALU, luo2023valley, Maaz2023VideoChatGPTTD, li2024mvbench, Jin_2024_CVPR, Ye_2024_CVPR] have demonstrated superiority in various video understanding tasks[mittal2024can, Wang2023VamosVA, Yang2024FollowTR], they tend to focus more on the semantic content and factual analysis of videos. This lack of awareness of emotional knowledge often leads these MLLMs to fall short in viewer-centered VEA.

Psychologists have highlighted that emotions are often triggered by specific elements, referred to as emotional stimuli[mehrabian1974approach, frijda1986emotions, brosch2010perception, peng2016emotions]. Fig.1 illustrates an example. Consider a 20-second dashcam video in which a rock suddenly falls onto the road within 2 seconds, while the other 18 seconds depict regular driving scenes. Although most of the video depicts ordinary scenes, the unexpected rock fall is likely to evoke surprise and fear in viewers. In this case, the falling rock is a critical stimulus that predominantly shapes the viewers’ emotional responses. However, current MLLMs may overlook or not prioritize these key emotional stimuli. From a temporal perspective, most MLLMs use uniform temporal downsampling to sample input video frames. While uniform sampling may work well for general video understanding tasks, it could miss the unexpected moments or rapid events that could generate strong reactions and thus make a wrong prediction. From a spatial perspective, emotional stimuli like the falling rock may occupy only a small region of the frame. Identifying these stimulus regions is essential for reducing redundant information and achieving more precise affective understanding.

On the other hand, interpretability is crucial for earning public trust when deploying models in real-world applications. Still, traditional emotion models are not explainable, and most current MLLMs fail to provide plausible affective explanations due to their limited awareness of emotional stimuli, as previously discussed. Although a few recent efforts aim at explainable emotion analysis[lian2023explainable, cheng2024emotion, achlioptas2021artemis, achlioptas2023affection, xie2024emovit], they consider only image data or lack a comprehensive evaluation protocol to fully validate their reasoning ability. The task of reasoning human affective responses triggered by videos remains less explored.

To address these limitations, we propose StimuVAR, a spatiotemporal Stimuli-aware framework for Video Affective Reasoning (VAR) with MLLMs. StimuVAR incorporates a two-level stimuli-aware mechanism to identify spatiotemporal stimuli: frame-level awareness and token-level awareness. For frame-level awareness, we introduce event-driven frame sampling, using optical flow as a cue to capture the frames that contain unexpected events or unintentional accidents[epstein2020oops]. These frames are likely to be the stimuli that evoke viewers’ emotions. For token-level awareness, we design emotion-triggered tube selection that localizes the emotion-triggered spatiotemporal regions in the token space, which the MLLM can then emphasize. In addition, we construct VAR visual instruction data (based on the training set of the large-scale viewer-centered Video Cognitive Empathy (VCE) dataset[mazeika2022would]) via GPT[brown2020language, achiam2023gpt] to perform affective training. The VAR-specific instruction data steer the MLLM’s reasoning strengths and commonsense knowledge towards an emotional focus, enhancing the MLLM’s ability to provide insightful and contextually relevant explanations for its affective understanding. To the best of our knowledge, the proposed StimuVAR is the first MLLM-based method for predicting and reasoning viewers’ emotional reactions to videos.

To thoroughly assess the VAR problem, we provide a comprehensive evaluation protocol with extensive metrics, including prediction accuracy, emotional-alignment[achlioptas2021artemis], doubly-right[mao2023doubly], CLIPScore[hessel2021clipscore], and LLM-as-a-judge[zheng2024judging]. The proposed StimuVAR achieves state-of-the-art performance across multiple benchmarks, demonstrating its ability to predict how a video would make a human feel and to provide plausible explanations. In summary, the main contributions of this work are as follows:

1. We propose StimuVAR, a novel spatiotemporal stimuli-aware framework for the VAR problem. To the best of our knowledge, it is the first MLLM-based method for predicting and reasoning viewers’ emotional reactions to videos.

2. We propose the event-driven frame sampling and emotion-triggered tube selection strategies to achieve frame- and token-level spatiotemporal stimuli awareness. Furthermore, we create VAR instruction data to perform affective training, enhancing affective reasoning ability.

3. We provide a comprehensive evaluation protocol with extensive metrics for the VAR problem. StimuVAR demonstrates state-of-the-art performance in both prediction and reasoning abilities across multiple benchmarks.

2 Related Work

2.1 Video Emotion Analysis

Existing VEA studies can be categorized into two main themes: recognizing the emotions of characters in a video and predicting viewers’ emotional responses to a video. The former primarily relies on facial expressions and dialogue information[srivastava2023you, kosti2019context, lee2019context, yang2024robust]. We target the latter, which is more challenging as it requires not only video content understanding but also commonsense knowledge of human reactions. Such viewer-centered VEA is crucial for understanding how a video affects human mental health, gaining more attention in the social media era. Traditional supervised approaches learn to map visual features to corresponding emotion labels[mazeika2022would, yang2021stimuli, pu2023going]. They heavily rely on basic visual attributes, such as color, brightness, or object class[xie2024emovit], which are often inadequate for the complex task of viewer-centered VEA. Additionally, these approaches lack interpretability, failing to provide rationales behind their predictions for broader applications. ArtEmis[achlioptas2021artemis] and Affection[achlioptas2023affection] introduce benchmarks for viewer-centered affective explanations but only consider image data. Several recent studies explore utilizing MLLMs in emotion analysis. EmoVIT[xie2024emovit] performs emotion visual instruction tuning[liu2024visual, Dai2023InstructBLIPTG] to learn emotion-specific knowledge. Although EmoVIT claims to have affective reasoning ability, it does not provide an evaluation. EMER[lian2023explainable] and Emotion-LLaMA[cheng2024emotion] use MLLMs to offer explanations for their emotion predictions, but they focus on analyzing characters’ emotions rather than viewers’ emotional responses.

2.2 Multimodal Large Language Models

Recent advances in Large Language Models (LLMs), e.g., the GPT[brown2020language, achiam2023gpt] and LLaMA families[touvron2023llama1, touvron2023llama, dubey2024llama], have demonstrated outstanding capabilities in understanding and generating natural languages. These models have been extended to MLLMs[li2023blip, zhu2024minigpt, liu2024visual, Dai2023InstructBLIPTG] to tackle vision-language problems. For instance, LLaVA[liu2024visual] and InstructBLIP[Dai2023InstructBLIPTG] use a projector to integrate visual features into the LLM and conduct visual instruction tuning for instruction-aware vision-language understanding. Several video-oriented MLLMs have also been introduced specifically for video tasks, such as Video-LLaMA[Zhang2023VideoLLaMAAI], Video-ChatGPT[Maaz2023VideoChatGPTTD], Chat-UniVi[Jin_2024_CVPR], mPLUG-Owl[Ye_2024_CVPR], etc. However, these approaches typically employ uniform temporal downsampling to sample input video frames, which often results in missing key stimulus moments that are most likely to trigger viewers’ emotions. In contrast, the proposed StimuVAR incorporates spatiotemporal stimuli awareness, enabling it to achieve state-of-the-art VAR performance.

3 Method

The proposed StimuVAR, a spatiotemporal stimuli-aware framework for VAR, is built on an MLLM backbone. VAR is the task of predicting viewers’ emotional responses to a given video and providing reasoning for the prediction. It can be formulated as follows:

$$\{E, R\} = \mathcal{F}(V, P), \tag{1}$$

where $V$ is an input video, $P$ is an input text prompt, $E$ is the predicted emotion response, and $R$ is free-form textual reasoning for the emotion prediction $E$. We employ an MLLM as a backbone of the VAR model $\mathcal{F}$. A typical MLLM architecture consists of a visual encoder $\mathcal{F}_{v}$, a projector $\mathcal{F}_{proj}$, a tokenizer $\mathcal{F}_{t}$, and an LLM $\mathcal{F}_{llm}$, and thus Eq.(1) can be written as:

$$\{E, R\} = \mathcal{F}_{llm}(\mathcal{F}_{proj}(\mathcal{F}_{v}(V)), \mathcal{F}_{t}(P)). \tag{2}$$

The proposed StimuVAR addresses the lack of interpretability in traditional emotion models and the lack of emotional stimuli awareness in existing MLLMs. Fig.2 provides an overview of StimuVAR. The following subsections elaborate on the proposed spatiotemporal stimuli-aware mechanism and affective training.

Figure 2: The architecture of StimuVAR. Event-driven frame sampling employs optical flow to capture emotional stimuli at the frame level, while emotion-triggered tube selection identifies key spatiotemporal areas at the token level, achieving effective and efficient video representations for VAR. To enhance affective understanding, we perform a two-phase affective training to steer MLLM’s reasoning strengths and commonsense knowledge towards an emotional focus, enabling accurate emotion predictions and plausible explanations.

3.1 Spatiotemporal Stimuli Awareness

The proposed spatiotemporal stimuli-aware mechanism involves two levels of awareness: frame-level and token-level. Frame-level awareness is achieved through the event-driven frame sampling strategy, which samples video frames that contain events most likely to evoke viewers’ emotions. Token-level awareness is accomplished via the emotion-triggered tube selection strategy, which selects regions in the token space to guide the MLLM’s focus toward emotion-triggered spatiotemporal areas.

3.1.1 Event-driven Frame Sampling

In video tasks, uniformly sampling frames is a common way to represent a video because of temporal redundancy. However, uniform sampling often fails to represent videos containing rapid, unexpected actions or unintentional accidents[epstein2020oops], which are the events most likely to evoke viewers’ emotional reactions[peng2016emotions]: the sampled frames can easily skip over such rapid but key events. While processing all frames without sampling would preserve all temporal information, the computational burden is significant, especially for MLLMs. To achieve frame-level stimuli awareness, we aim to develop a sampling method that selects the most representative frames under the same frame budget as the uniform sampling baseline. For practical use, we suggest that such frame sampling should meet the following criteria:

- Capture key events: Identify frames that depict the key events for affective understanding.

- Constrained number of frames: Select the most representative frames within the allotted frame budget.

- Fast processing: Perform sampling efficiently to ensure timely analysis.

Epstein et al.[epstein2020oops] study a related task of localizing unintentional actions in videos, exploring cues such as speed and context. Still, their approach requires additional deep networks to extract these video features, making it less ideal for incorporation into the MLLM pipeline when considering processing speed.
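As a rough illustration of why uniform sampling can miss a rapid event, the sketch below revisits the dashcam example from Sec. 1 (a 20-second video with a 2-second rock fall). The frame rate (30 fps), the frame budget (6 frames), the position of the event, and the bin-center sampling rule are all assumptions chosen for illustration; they are not settings reported in this paper.

```python
def uniform_sample_indices(num_frames, n_samples):
    """Pick the center frame of each of n_samples equal-length bins (assumed rule)."""
    step = num_frames / n_samples
    return [int(step * j + step / 2) for j in range(n_samples)]


def frames_inside(indices, start, end):
    """Return the sampled indices that fall inside the half-open range [start, end)."""
    return [i for i in indices if start <= i < end]


# Assumed setup: 20 s at 30 fps = 600 frames, budget of 6 frames.
sampled = uniform_sample_indices(num_frames=600, n_samples=6)

# Assumed event position: a 2 s rock fall covering frames 270..329.
hits = frames_inside(sampled, start=270, end=330)
print("sampled frames:", sampled)
print("sampled frames inside the event:", hits)
```

Under these assumptions the samples are 100 frames apart, which is wider than the 60-frame event, so whether the event is seen at all depends on where it happens to fall relative to the sampling grid.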

Figure 3: The process of event-driven frame sampling. Optical flows $OF$ between adjacent frames are calculated and then filtered using a Gaussian filter $G_{\sigma}$. Peaks in the filtered optical flows $\tilde{OF}$ are identified as key events $e$. Frames surrounding these events are assigned a high-intensity sampling rate, while the rest of the frames are sampled with a low-intensity rate.

We propose our event-driven frame sampling strategy based on the observation that rapid key events often coincide with dramatic changes in a video’s appearance. These appearance changes can be modeled using optical flow estimation, a technique widely used to capture motion in video tasks[turaga2009unsupervised, turaga2007videos]. Consider a video $V$ consisting of frames $\{f_{1}, f_{2}, \ldots, f_{T}\}$; an optical flow estimator derives the pattern of apparent motion between each pair of adjacent frames $f_{t}$ and $f_{t+1}$ as follows:

$$OF_{t} = \text{OpticalFlow}(f_{t}, f_{t+1}) \quad \text{for} \quad t = 1, 2, \ldots, T-1, \tag{3}$$

where $OF_{t}$ is a frame-level optical flow value (i.e., the mean absolute value of the per-pixel optical flow), which gives a set of estimated optical flows $\{OF_{1}, OF_{2}, \ldots, OF_{T-1}\}$ for the video. Although there have been many deep network-based optical flow estimators in the literature[dosovitskiy2015flownet, ilg2017flownet], we find that the classic Lucas-Kanade method[lucas1981iterative] is sufficient for our purpose and offers much higher computational efficiency. As illustrated in Fig.3, we construct a curve $OF(t)$ that depicts the intensity of the optical flows over time. To mitigate noise-induced fluctuations, we smooth this curve with a Gaussian filter $G_{\sigma}$:

$$\tilde{OF}(t) = (OF * G_{\sigma})(t) = \sum_{\tau=-\infty}^{\infty} OF(t-\tau)\, G_{\sigma}(\tau). \tag{4}$$
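The smoothing step of Eq.(4) can be sketched in a few lines. This is a minimal illustration, not the authors’ implementation: it assumes the per-frame flow values $OF_t$ have already been computed by some optical flow estimator, truncates the infinite sum to about three standard deviations, and replicates edge values at the boundaries. The function names, the default `sigma`, and the boundary handling are all assumptions.

```python
import math


def gaussian_kernel(sigma, radius=None):
    """Discrete Gaussian weights for tau in [-radius, radius], normalized to sum to 1."""
    if radius is None:
        radius = max(1, int(3 * sigma + 0.5))  # truncate at roughly 3 sigma (assumption)
    weights = [math.exp(-(tau * tau) / (2.0 * sigma * sigma))
               for tau in range(-radius, radius + 1)]
    total = sum(weights)
    return [w / total for w in weights]


def smooth_flow_curve(of_values, sigma=2.0):
    """Convolve the per-frame flow curve with a truncated Gaussian (finite version of Eq. 4)."""
    kernel = gaussian_kernel(sigma)
    radius = len(kernel) // 2
    n = len(of_values)
    smoothed = []
    for t in range(n):
        acc = 0.0
        for k, w in enumerate(kernel):
            tau = k - radius
            idx = min(max(t - tau, 0), n - 1)  # replicate edge values (assumption)
            acc += of_values[idx] * w
        smoothed.append(acc)
    return smoothed


# Toy curve: a single spike of motion in an otherwise static video.
curve = [0.0] * 10 + [10.0] + [0.0] * 10
print([round(v, 3) for v in smooth_flow_curve(curve, sigma=1.0)])
```

Because the kernel is normalized, a constant curve is left unchanged, while an isolated spike is spread over neighboring frames with its maximum staying at the same index.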

We define the $p$ highest peaks in the smoothed curve $\tilde{OF}(t)$ as the centers of key events, i.e., $\{\tilde{OF}_{e_1}, \tilde{OF}_{e_2}, \ldots, \tilde{OF}_{e_p}\}$, where each $e$ denotes a key event in the video. Peaks are selected subject to a predefined prominence and a predefined minimum distance between neighboring peaks. We then locate the corresponding frames $\{f_{e_1}, f_{e_2}, \ldots, f_{e_p}\}$ of the $p$ peaks; each event is centered on its peak frame and spans $d$ frames on either side, i.e., $\{f_{e_i-d}, \ldots, f_{e_i}, \ldots, f_{e_i+d}\}$ for $i = 1, 2, \ldots, p$. We designate the $2d+1$ frames of each event as event-related frames, while the remaining $T - p \times (2d+1)$ non-event frames are collectively treated as a single “event”.
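A simplified sketch of this peak-picking step follows. It is an illustration under stated assumptions, not the paper’s code: the prominence definition is a basic one, the greedy tallest-first rule for enforcing the minimum distance is an assumption, and the index of a flow value is treated as the index of its frame. The thresholds in the example are hypothetical. In practice, a library routine such as `scipy.signal.find_peaks`, which accepts `distance` and `prominence` arguments, could serve the same role.

```python
def peak_prominence(curve, i):
    """Height of curve[i] above the higher of the two valley floors around it."""
    height = curve[i]
    left_min = height
    j = i - 1
    while j >= 0 and curve[j] <= height:      # walk left until higher terrain
        left_min = min(left_min, curve[j])
        j -= 1
    right_min = height
    j = i + 1
    while j < len(curve) and curve[j] <= height:  # walk right until higher terrain
        right_min = min(right_min, curve[j])
        j += 1
    return height - max(left_min, right_min)


def find_key_events(curve, p, min_distance, min_prominence):
    """Return up to p peak indices, tallest first, at least min_distance apart."""
    candidates = [i for i in range(1, len(curve) - 1)
                  if curve[i - 1] < curve[i] >= curve[i + 1]
                  and peak_prominence(curve, i) >= min_prominence]
    candidates.sort(key=lambda i: curve[i], reverse=True)
    peaks = []
    for i in candidates:
        if all(abs(i - q) >= min_distance for q in peaks):
            peaks.append(i)
        if len(peaks) == p:
            break
    return sorted(peaks)


def event_windows(peaks, d, num_frames):
    """Frames e-d..e+d around each peak e, clipped to the video boundaries."""
    return [list(range(max(0, e - d), min(num_frames - 1, e + d) + 1)) for e in peaks]


smoothed = [0, 1, 5, 1, 0, 0, 2, 9, 2, 0, 0, 1, 3, 1, 0]
peaks = find_key_events(smoothed, p=2, min_distance=3, min_prominence=1.0)
print(peaks, event_windows(peaks, d=1, num_frames=len(smoothed)))
```

Each window contains $2d+1$ frames unless it is clipped at the start or end of the video.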

Given the higher likelihood of important information within the event-related frames, we assign a high-intensity sampling rate to them, while the non-event frames are sampled with a low-intensity rate. Let $N$ be the predefined number of frames to sample. These $N$ frames are evenly distributed across all “events” $\mathbf{e} = \{e_{1}, e_{2}, \ldots, e_{p+1}\}$, where we regard the non-event frames as a single “event”. That is, we uniformly sample $\frac{N}{p+1}$ frames from each event set. Since each event set holds only $2d+1$ frames, whereas the non-event set holds the remaining $T - p \times (2d+1)$ frames, event-related frames are typically sampled more densely than non-event frames, thereby achieving varying sampling rates. This enables discriminative sampling based on event occurrence, ensuring an efficient allocation of resources to effectively capture the essential frames from the video.
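The allocation rule can be sketched as follows. This is a minimal illustration that assumes the event windows do not overlap, rounds $\frac{N}{p+1}$ down to an integer, and samples at bin centers within each group; these details, the helper names, and the toy numbers are assumptions rather than the paper’s settings.

```python
def uniform_pick(indices, k):
    """Pick k entries spread evenly over a list (all of them if the list is short)."""
    if k <= 0 or not indices:
        return []
    if len(indices) <= k:
        return list(indices)
    step = len(indices) / k
    return [indices[int(step * j + step / 2)] for j in range(k)]


def event_driven_sample(num_frames, windows, n_samples):
    """Split the budget evenly over the p event windows plus one non-event group."""
    in_event = set(i for w in windows for i in w)
    non_event = [i for i in range(num_frames) if i not in in_event]
    groups = list(windows) + [non_event]          # p + 1 "events"
    per_group = n_samples // len(groups)          # N / (p + 1), rounded down
    picked = []
    for group in groups:
        picked.extend(uniform_pick(group, per_group))
    return sorted(set(picked))


# Toy setup: 100 frames, two events with d = 2, and a budget of N = 6 frames.
windows = [list(range(10, 15)), list(range(50, 55))]
frames = event_driven_sample(num_frames=100, windows=windows, n_samples=6)
print(frames)
```

In this toy setup each 5-frame event window and the 90-frame non-event group receive two samples each, so the event windows are sampled at a rate of 2/5 against 2/90 for the rest of the video.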

3.1.2 Emotion-triggered Tube Selection

After sampling the informative frames that represent a video, we further select the essential regions of interest in the MLLM’s token space that are more likely to trigger human emotions, thereby achieving token-level stimuli awareness. This emotion-triggered tube selection module guides the MLLM’s focus on the stimulus regions, enhances interpretability, and reduces tokens, leading to a decrease in computational cost.

Figure 4: The process of emotion-triggered tube selection. Patch tokens from selected frames are reshaped according to the patch coordinates of the frames and are grouped into tubes of shape $t \times h \times w$. The correlation score estimation determines the importance of each tube for VAR. Then, we select the Top-K tubes with the highest scores as the final video representation.

Inspired by patch selection in visual recognition[cordonnier2021differentiable], we formulate token selection as a Top-K problem. As illustrated in Fig.2, to identify emotional stimuli areas in the token space, we operate on the visual tokens after the projector, denoted as $\mathbf{k} \in \mathbb{R}^{N \times L \times C}$, where $C$ is the embedding dimension and $L$ is the number of tokens for each frame. We use a two-layer perceptron $\mathcal{S}$ to estimate correlation scores between each token and the output response, given by $\mathbf{c} = \mathcal{S}(\mathbf{k}) \in \mathbb{R}^{N \times L}$. Next, we reshape the correlation scores $\mathbf{c}$ into a 3D volume $\mathbf{c}^{r} \in \mathbb{R}^{N \times \sqrt{L} \times \sqrt{L}}$ according to the patch coordinates of the original input frames. This volume is split into tubes of shape $t \times h \times w$, resulting in $\left(\frac{N-t}{d_{t}}+1\right) \times \left(\frac{\sqrt{L}-h}{d_{h}}+1\right) \times \left(\frac{\sqrt{L}-w}{d_{w}}+1\right)$ tubes, where $(d_{t}, d_{w}, d_{h})$ denotes the stride shape. The token scores within each tube are averaged to obtain each tube’s score, and then we select the Top-K tubes with the highest correlation scores as the final video representation sent to the following LLM.
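The tube scoring and selection can be sketched on a toy score volume. This is an illustrative simplification: in the method above the scores come from the learned perceptron $\mathcal{S}$ and the selection follows a Top-K formulation inspired by differentiable patch selection, whereas the sketch uses hand-set scores and a plain sort. The function names and the stride ordering `(dt, dh, dw)` are assumptions.

```python
def tube_scores(volume, tube, stride):
    """Average the token scores inside every t x h x w tube of an N x H x W volume."""
    n, height, width = len(volume), len(volume[0]), len(volume[0][0])
    t, h, w = tube
    dt, dh, dw = stride
    scores = {}
    for t0 in range(0, n - t + 1, dt):
        for y0 in range(0, height - h + 1, dh):
            for x0 in range(0, width - w + 1, dw):
                total = sum(volume[a][b][c]
                            for a in range(t0, t0 + t)
                            for b in range(y0, y0 + h)
                            for c in range(x0, x0 + w))
                scores[(t0, y0, x0)] = total / (t * h * w)
    return scores


def top_k_tubes(scores, k):
    """Return the origins (t0, y0, x0) of the k highest-scoring tubes (hard Top-K)."""
    return sorted(scores, key=scores.get, reverse=True)[:k]


# Toy volume: N = 4 frames of 4 x 4 token scores, with one "hot" region
# in frames 2-3, rows 0-1, columns 2-3.
volume = [[[0.0] * 4 for _ in range(4)] for _ in range(4)]
for a in (2, 3):
    for b in (0, 1):
        for c in (2, 3):
            volume[a][b][c] = 1.0

scores = tube_scores(volume, tube=(2, 2, 2), stride=(2, 2, 2))
print(len(scores), top_k_tubes(scores, k=1))
```

With $N = 4$, $\sqrt{L} = 4$, tubes of shape $2 \times 2 \times 2$, and stride 2 along every axis, the tube-count formula gives $(1+1) \times (1+1) \times (1+1) = 8$ tubes, which matches the number of entries the sketch produces.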

This design differs from existing token selection approaches[wang2022efficient] that consider the temporal and spatial domains sequentially. In these approaches, temporal selection highly influences the final performance, especially after the frame sampling step. Missing essential frames that trigger emotions during the token selection process significantly reduces the chance of making a correct prediction. We provide empirical evidence in Fig.6 to support this claim. In contrast, our tube selection strategy groups tokens into tubes, ensuring that the selection is driven by both temporal and spatial information. This design preserves each frame’s intrinsic spatial structure and accounts for the entire video’s consistency and continuity. Furthermore, our tube selection allows for efficient utilization of computational resources while identifying key emotional stimuli in a video.

3.2 Affective Training

To fully integrate the proposed stimuli-aware mechanism into the MLLM backbone and to enhance the MLLM’s affective reasoning ability, we introduce an affective training protocol to fine-tune the MLLM.

3.2.1 VAR Visual Instruction Data Construction

To prepare effective affective training, we construct VAR-specific visual instruction data based on the training set of the raw Video Cognitive Empathy (VCE) dataset[mazeika2022would], a large-scale viewer-centered video dataset with fine-grained annotations. Detailed dataset information is provided in Sec.4.1. Given the strong capabilities of GPT[brown2020language, achiam2023gpt] for constructing logical reasoning across concepts, we leverage it to generate instructions with causal relationships between videos and the emotions they evoke.

Due to GPT’s limitation in directly processing large-scale video data, we first employ a vision-language model[wang2023cogvlm] to generate captions for each sampled frame, structuring them sequentially in a format of “Frame 1 description: …; Frame 2 description: …”. GPT then processes these frame-level captions, incorporating temporal correlations to produce a coherent video-level caption. Compared to directly captioning videos in a single step using a video-oriented MLLM[Zhang2023VideoLLaMAAI, Lin2023VideoLLaVALU], this progressive summarization method captures video details and ensures frame-level temporal consistency, thereby mitigating hallucination.
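The frame-level caption format described above can be assembled with a small helper. This sketch only builds the text string; the captioning model and the GPT call are outside its scope, and the function name and example captions are hypothetical.

```python
def format_frame_captions(captions):
    """Join per-frame captions as 'Frame 1 description: ...; Frame 2 description: ...'."""
    return "; ".join(
        f"Frame {i} description: {caption}"
        for i, caption in enumerate(captions, start=1)
    )


# Hypothetical captions for two sampled frames.
print(format_frame_captions([
    "a car drives along a mountain road",
    "a rock falls onto the road ahead",
]))
```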

Next, inspired by[zhang2024mm], we prompt GPT to generate a reasoning process of deriving the label from an input. Specifically, given the pairs of the video caption

{"role": "system",

"content": Given the below (QUESTION, ANSWER) pair examples of emotion estimation, left fill-in the REASONING process which derives ANSWERS from QUESTIONS in three sentences.},

{"role": "user",

"content": QUESTION: These are frame descriptions from a video. After reading the descriptions, how people might emotionally feel about the content and why. Only provide the one most likely emotion.

ANSWER: The viewer feels.

REASONING: Let’s think of step-by-step

3.2.2 Two-phase Training

Our affective training consists of two phases (see Fig.2). In the initial phase, we use the original training set of the VCE dataset with videos and emotional response label pairs $\{V, Y_E\}$, where only the projector $\theta_{\mathcal{F}_{proj}}$ of the MLLM is trainable, while keeping the pre-trained visual encoder $\mathcal{F}_{v}$ and LLM $\mathcal{F}_{llm}$ frozen, as follows:

$$\min_{\theta_{\mathcal{F}_{proj}}} \mathcal{L}(\mathcal{F}(V, P; \theta_{\mathcal{F}_{v}}, \theta_{\mathcal{F}_{proj}}, \theta_{\mathcal{F}_{llm}}), Y_E), \tag{5}$$

where $\mathcal{L}$ is the cross-entropy loss. During this phase, the projector aligns the visual features extracted by the pre-trained visual encoder with the pre-trained LLM word embeddings and learns the correlation between videos and the emotions they trigger, supervised by the ground-truth emotion annotations $Y_E$.
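To make the loss in Eq. (5) concrete, the following is a minimal sketch of cross-entropy over supervised token positions. It assumes the usual token-level formulation for autoregressive LLMs; the function name and the toy inputs are illustrative and are not taken from the released code.

```python
import math

def cross_entropy(logits, targets):
    """Mean negative log-likelihood of the target ids under softmax(logits).

    logits:  one list of vocabulary scores per supervised token position
    targets: the ground-truth token id for each of those positions
    """
    total = 0.0
    for row, target in zip(logits, targets):
        peak = max(row)  # subtract the max for numerical stability
        log_norm = peak + math.log(sum(math.exp(x - peak) for x in row))
        total += log_norm - row[target]
    return total / len(targets)

# Toy check: uniform scores over a 4-word vocabulary give a loss of log(4).
loss = cross_entropy([[0.0, 0.0, 0.0, 0.0]], [2])
```

In phase I only the emotion label $Y_E$ would supply the target ids, so a confident, correct prediction drives this value towards zero.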

In the second phase, we enhance the MLLM’s ability to offer plausible reasoning for its emotional predictions through visual instruction tuning[xie2024emovit, liu2024visual, Dai2023InstructBLIPTG]. We use our VAR instruction data $\{V, Y_E, Y_R\}$ (based on the original training set of the VCE dataset $\{V, Y_E\}$) constructed in Sec.3.2.1 to train the proposed emotion-triggered tube selection module $\theta_{tube}$ and fine-tune the projector $\theta_{\mathcal{F}_{proj}}$ and the LLM (using LoRA[hu2022lora]) $\theta^{lora}_{\mathcal{F}_{llm}}$. The training objective is formulated as follows:

$$\min_{\theta_{tune}} \mathcal{L}(\mathcal{F}(V, P; \theta_{\mathcal{F}_{v}}, \theta_{\mathcal{F}_{proj}}, \theta_{tube}, \theta_{\mathcal{F}_{llm}}), \{Y_E, Y_R\}), \quad \theta_{tune} = \{\theta_{\mathcal{F}_{proj}}, \theta_{tube}, \theta^{lora}_{\mathcal{F}_{llm}}\}, \tag{6}$$

where $\mathcal{L}$ is the cross-entropy loss. This fine-tuning enhances the model’s affective reasoning capabilities for its emotional predictions. Furthermore, the emotion-triggered tube selection module strengthens the link between visual tokens of stimuli and the elements referenced in the reasoning, while also filtering out irrelevant visual tokens, reducing noise, improving decision-making, and enhancing the model’s efficiency.

Our two-phase affective training protocol directs the MLLM’s reasoning strengths and commonsense knowledge towards an emotional focus, enabling the MLLM to offer plausible explanations for its affective understanding.
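The freezing schedule of the two phases can be summarized as a small lookup. This is only a sketch: the group names are illustrative labels for the symbols in Eqs. (5) and (6), not identifiers from the released code, and the tube selection module is marked as not updated in phase I simply because it does not appear in Eq. (5).

```python
# Illustrative labels for the parameter groups named in Eqs. (5) and (6).
ALL_GROUPS = ("visual_encoder", "projector", "tube_selection", "llm_base", "llm_lora")

# Groups that receive gradient updates in each phase.
TRAINABLE = {
    1: {"projector"},                                # Eq. (5)
    2: {"projector", "tube_selection", "llm_lora"},  # Eq. (6), theta_tune
}

# Supervision used in each phase: emotion labels, then labels plus reasoning.
SUPERVISION = {1: ("Y_E",), 2: ("Y_E", "Y_R")}

def requires_grad(phase):
    """Map each parameter group to True (updated) or False (not updated)."""
    return {group: group in TRAINABLE[phase] for group in ALL_GROUPS}

phase_one = requires_grad(1)
phase_two = requires_grad(2)
```

In a PyTorch implementation, flags like these would typically be applied per parameter with `requires_grad_(flag)`; the visual encoder and the base LLM weights stay frozen in both phases.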

4 Experiments

4.1 Experimental Setup

Datasets. We evaluate our method on three viewer-centered VEA datasets. Video Cognitive Empathy (VCE)[mazeika2022would] contains 61,046 videos with 27 fine-grained emotional responses[cowen2017self] manually annotated. The dataset is divided into 50,000 videos for training and 11,046 for testing, with each video averaging 14.1 seconds in length. This dataset purposely removes audio cues to focus solely on visual information. VideoEmotion-8 (VE-8)[jiang2014predicting] comprises 1,101 user-generated videos sourced from YouTube and Flickr. Each video is categorized into one of eight emotions from Plutchik’s Wheel of Emotions[plutchik1980general] and has an average duration of around 107 seconds. YouTube/Flickr-EkmanSix (YF-6)[xu2016heterogeneous] shares the same video source as VE-8 but expands the size to 1,637, categorized into Ekman’s six basic emotions[ekman1971constants]. Since our VAR instruction data is constructed using the training set of VCE, we employ the VCE test set for in-domain evaluation while using VE-8 and YF-6 as out-of-domain datasets to assess our method’s effectiveness and generalization capabilities.

Evaluation metrics. We follow the official metrics used by the VCE, VE-8 and YF-6 datasets. VCE utilizes the Top-3 accuracy, while VE-8 and YF-6 evaluate the Top-1 accuracy. Additionally, we provide a comprehensive protocol with extensive metrics to evaluate the reasoning quality for the VAR problem. All the metrics are reference-free since existing viewer-centered VEA datasets[mazeika2022would, jiang2014predicting] do not contain ground-truth reasoning. These metrics are described as follows:

1. Emotional-alignment (Emo-align)[achlioptas2021artemis] uses GPT-3.5[brown2020language] as a text-to-emotion classifier $C_{E|R}$ that measures the accuracy (%) of the emotion predicted from reasoning text. This metric evaluates if the output reasoning $R$ evokes the same emotion $E$ as the ground truth $Y_E$.

2. Doubly-right {RR, RW, WR, WW}[mao2023doubly] jointly considers the accuracy of prediction and reasoning, where RR denotes the percentage (%) of Right emotional prediction with Right reasoning, RW denotes Right prediction with Wrong reasoning, WR denotes Wrong prediction with Right reasoning, and WW denotes Wrong prediction with Wrong reasoning. Here the correctness of reasoning is defined by Emo-align. We desire a high accuracy of RR (the best is 100%) and low percentages of RW, WR and WW (the best is 0%). The sum of {RR, RW, WR, WW} is 100%.

3. CLIPScore (CLIP-S)[hessel2021clipscore] uses CLIP[radford2021learning] to measure the compatibility between input videos and output reasoning in feature space. A higher score indicates better video-reasoning compatibility.

4. LLM-as-a-judge[zheng2024judging] uses GPT-3.5[brown2020language] and Claude 3.5[anthropic2024claude] as a judge respectively to rate the quality of reasoning. We set the score as an integer within a range of $[1, 4]$. In addition to average scores, we also report the 1-vs.-1 (StimuVAR vs. a baseline) comparison results showing the number of test data where StimuVAR wins, loses or ties.
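As a rough illustration of how the accuracy-style metrics above could be computed, the sketch below implements Top-k accuracy, Emo-align, and the doubly-right breakdown. It assumes the emotion inferred from each reasoning text is already available (in the paper this comes from a GPT-3.5 classifier); all function names and toy inputs are hypothetical.

```python
from collections import Counter

def top_k_accuracy(ranked_predictions, labels, k):
    """Percentage of samples whose label is among the first k ranked emotions."""
    hits = sum(label in ranked[:k] for ranked, label in zip(ranked_predictions, labels))
    return 100.0 * hits / len(labels)

def emo_align(reasoning_emotions, labels):
    """Percentage of reasoning texts classified as the ground-truth emotion."""
    hits = sum(e == y for e, y in zip(reasoning_emotions, labels))
    return 100.0 * hits / len(labels)

def doubly_right(pred_correct, reasoning_correct):
    """Split samples into RR, RW, WR, WW (prediction first, reasoning second)."""
    counts = Counter()
    for pred_ok, reason_ok in zip(pred_correct, reasoning_correct):
        counts[("R" if pred_ok else "W") + ("R" if reason_ok else "W")] += 1
    total = len(pred_correct)
    return {key: 100.0 * counts[key] / total for key in ("RR", "RW", "WR", "WW")}

# Toy example with four samples, one per outcome.
breakdown = doubly_right([True, True, False, False], [True, False, True, False])
```

Because reasoning correctness is defined by Emo-align, RR + WR equals the Emo-align accuracy, and RR + RW equals the prediction accuracy. The rows of Table 1 are consistent with both identities (for StimuVAR, 68.8 + 0.8 = 69.6 and 68.8 + 4.7 = 73.5).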

Baseline approaches. To our knowledge, StimuVAR may be the first method for the viewer-centered VAR problem. Hence, we broadly compare it with traditional zero-shot approaches[mazeika2022would, radford2021learning], traditional emotion models[tran2018closer, sharir2021image, tong2022videomae, pu2023going], state-of-the-art video-oriented MLLMs[Zhang2023VideoLLaMAAI, Lin2023VideoLLaVALU, luo2023valley, Maaz2023VideoChatGPTTD, li2024mvbench, Jin_2024_CVPR, Ye_2024_CVPR], and a long-video MLLM, LongVLM[weng2024longvlm]. The traditional emotion models are specifically trained for emotion tasks, but they cannot offer the rationales behind their predictions. We also consider EmoVIT[xie2024emovit], a state-of-the-art emotion MLLM for image data, and we extend it to videos for comparison.

Implementation details. The MLLM backbone uses CLIP ViT-L/14[radford2021learning, dosovitskiy2021image] as the visual encoder, Llama2-7b[touvron2023llama] as the LLM, and a linear layer as the projector. For VAR instruction data creation, we employ CogVLM-17B[wang2023cogvlm] to generate captions for each selected frame and GPT-4[achiam2023gpt] to aggregate these frame-level captions into a video caption. GPT-3.5[brown2020language] is then used to generate causal connections between the videos and the emotions they trigger. In event-driven frame sampling, we set $N=6$ as the default number of sampled frames. The emotion-triggered tube selection module refines the tokens after the projector, retaining the top $K=4$ tubes with shape $2\times 4\times 4$ as spatial tokens and as temporal tokens to represent a video. The model is trained using the AdamW optimizer[loshchilov2019decoupled] with a learning rate of $5\times 10^{-5}$ and a batch size of 1, for 2 and 3 epochs in phases I and II of affective training, respectively.
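The top-$K$ tube selection with these defaults can be sketched as follows. Several points are assumptions made for illustration only: the tube shape is read as (frames, height, width), the token grid is taken to be 16×16 per frame, a per-token relevance score is assumed to be given, and a tube is scored by the mean of its token scores. The actual scoring is defined by the tube selection module, not by this sketch.

```python
def select_top_tubes(scores, tube_shape=(2, 4, 4), k=4):
    """Return the origins (t, h, w) of the k highest-scoring tubes.

    scores[t][h][w] is an assumed per-token relevance score; tubes are
    non-overlapping blocks of size tube_shape, scored by their mean.
    """
    dt, dh, dw = tube_shape
    n_t, n_h, n_w = len(scores), len(scores[0]), len(scores[0][0])
    tubes = []
    for t0 in range(0, n_t - dt + 1, dt):
        for h0 in range(0, n_h - dh + 1, dh):
            for w0 in range(0, n_w - dw + 1, dw):
                total = sum(
                    scores[t][h][w]
                    for t in range(t0, t0 + dt)
                    for h in range(h0, h0 + dh)
                    for w in range(w0, w0 + dw)
                )
                tubes.append((total / (dt * dh * dw), (t0, h0, w0)))
    tubes.sort(key=lambda item: item[0], reverse=True)
    return [origin for _, origin in tubes[:k]]

# Toy grid: N = 6 frames of 16 x 16 tokens with one salient region.
grid = [[[0.0] * 16 for _ in range(16)] for _ in range(6)]
for t in range(2, 4):
    for h in range(4, 8):
        for w in range(8, 12):
            grid[t][h][w] = 1.0

picked = select_top_tubes(grid)
kept_tokens = len(picked) * 2 * 4 * 4  # tokens kept if every tube is retained whole
```

Under this reading, the toy grid is partitioned into 48 candidate tubes, and keeping $K=4$ of them retains 128 of the 1,536 grid tokens.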

Table 1: Quantitative comparison on the VCE dataset.

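| Type | Method | Venue | Top-3 | Emo-align | RR | RW | WR | WW | CLIP-S |
|---|---|---|---|---|---|---|---|---|---|
| Traditional | CLIP[radford2021learning] | ICML’21 | 28.4 | - | - | - | - | - | - |
| Traditional | Majority[mazeika2022would] | NeurIPS’22 | 35.7 | - | - | - | - | - | - |
| Traditional | R(2+1)D[tran2018closer] | CVPR’18 | 65.6 | - | - | - | - | - | - |
| Traditional | STAM[sharir2021image] | arXiv’21 | 66.4 | - | - | - | - | - | - |
| Traditional | VideoMAE[tong2022videomae] | NeurIPS’22 | 68.9 | - | - | - | - | - | - |
| Traditional | MM-VEMA[pu2023going] | PRCV’23 | 73.3 | - | - | - | - | - | - |
| MLLM | Video-LLaMA[Zhang2023VideoLLaMAAI] | EMNLP’23 | 26.4 | 25.5 | 16.2 | 10.2 | 9.3 | 64.3 | 63.9 |
| MLLM | Video-LLaVA[Lin2023VideoLLaVALU] | arXiv’23 | 25.0 | 31.2 | 17.5 | 7.5 | 13.7 | 61.3 | 70.6 |
| MLLM | Valley[luo2023valley] | arXiv’23 | 31.3 | 29.4 | 19.2 | 12.1 | 10.2 | 58.5 | 69.4 |
| MLLM | Video-ChatGPT[Maaz2023VideoChatGPTTD] | ACL’24 | 21.0 | 29.5 | 11.4 | 9.5 | 18.1 | 61.0 | 68.9 |
| MLLM | VideoChat2[li2024mvbench] | CVPR’24 | 31.1 | 36.4 | 24.0 | 7.1 | 12.4 | 56.5 | 68.6 |
| MLLM | Chat-UniVi[Jin_2024_CVPR] | CVPR’24 | 38.6 | 29.5 | 21.0 | 17.6 | 8.5 | 52.9 | 70.2 |
| MLLM | mPLUG-Owl[Ye_2024_CVPR] | CVPR’24 | 23.6 | 22.1 | 13.8 | 9.7 | 8.3 | 68.2 | 69.3 |
| MLLM | EmoVIT[xie2024emovit] | CVPR’24 | 10.5 | 5.2 | 4.8 | 5.7 | 0.4 | 89.1 | 48.9 |
| MLLM | LongVLM[weng2024longvlm] | ECCV’24 | 20.9 | 19.7 | 6.9 | 13.9 | 12.8 | 66.4 | 64.3 |
| MLLM | StimuVAR (Ours) | | 73.5 | 69.6 | 68.8 | 4.7 | 0.8 | 25.6 | 75.3 |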

Table 2: LLM-as-a-judge comparison on the VCE and VE-8 datasets. Due to GPT’s query limitation, 100 test videos are randomly selected for evaluation. “Win/Lose/Tie” denotes the number of samples that StimuVAR wins/loses/ties against each competitor in a 1-vs.-1 comparison. “Score” denotes the average score on the 100 test samples.
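The "Win/Lose/Tie" and "Score" columns can be tallied as in the sketch below. It assumes that each video receives one integer judge score in $[1, 4]$ per method and that the 1-vs.-1 outcome is decided by comparing those scores; the judge could equally be asked for a direct pairwise verdict, so this is one plausible reading rather than the confirmed protocol. Function names and toy scores are hypothetical.

```python
def one_vs_one(ours, baseline):
    """Count videos where `ours` scores higher, lower, or equal to `baseline`."""
    win = sum(a > b for a, b in zip(ours, baseline))
    lose = sum(a < b for a, b in zip(ours, baseline))
    tie = len(ours) - win - lose
    return {"win": win, "lose": lose, "tie": tie}

def mean_score(scores):
    """Average judge score over the evaluated videos."""
    return sum(scores) / len(scores)

# Toy example with four videos instead of the 100 used in the paper.
tally = one_vs_one([4, 3, 2, 4], [2, 3, 3, 1])
average = mean_score([4, 3, 2, 4])
```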

4.2 Main Results

Table 1 reports the results on the VCE dataset. Traditional emotion models generally outperform MLLMs in prediction accuracy since they are specifically trained for emotion tasks. However, these traditional models are not able to provide explanations for their predictions. In contrast, MLLMs have a significant reasoning capability. Compared to existing video-oriented MLLMs, the proposed StimuVAR demonstrates superiority in the VAR task, both in predicting viewers’ emotional responses and offering rationales behind its predictions. StimuVAR achieves 69.6% in Emo-align, nearly double the best MLLM baseline (VideoChat2, 36.4%) and more than double all the others. It also reaches 68.8% in RR, indicating that its emotional predictions and its reasoning are consistent. StimuVAR’s highest CLIP-S score indicates a better correspondence between input videos and output reasoning, suggesting that its reasoning closely adheres to the visual cues and covers details. Notably, while EmoVIT[xie2024emovit] is an MLLM specifically trained with emotion knowledge, it does not perform well on viewer-centered VEA datasets. The reason may be that EmoVIT prioritizes image attributes, whereas video data require temporal attention. This validates the importance of a spatiotemporal stimuli-aware mechanism. Table 2 presents the LLM-as-a-judge results using GPT. Due to GPT’s query limitation, we randomly select 100 test videos for this metric. StimuVAR achieves the highest GPT rating scores and better winning percentages against all competitors in 1-vs.-1 comparisons, demonstrating its coherent and insightful explanations. The results using Claude 3.5 as a judge are reported in the Supplementary Material.

Table 2 and Table 3 present the results on the VE-8 dataset. To test the generalizability of StimuVAR, we evaluate the model trained with VCE data without fine-tuning on VE-8. Although VE-8 has a different emotion categorization, lower video quality, and nearly eight times longer video duration, StimuVAR again outperforms all the MLLM baselines. Furthermore, this performance is achieved by sampling only six frames, making the computational efficiency more significant for these long videos. Table 4 shows a performance ranking in the YF-6 dataset similar to that of VE-8, where StimuVAR achieves the highest Top-1 accuracy of 46.2%. These results demonstrate that the proposed emotional stimuli awareness has high generalizability and can efficiently reduce computational costs. Overall, StimuVAR consistently achieves the best scores across all the metrics on both datasets.

Table 3: Quantitative comparison on the VE-8 dataset.

Table 4: Quantitative comparison on the YF-6 dataset.

Figure 5: Output responses of different MLLMs. Compared to the baselines, StimuVAR accurately captures the scenes, activities and emotional stimuli, and then connects these elements with viewers’ emotional reactions. Relevant and irrelevant words are colored.

Qualitative results. Fig.5 provides qualitative results of two examples. We can see that the responses generated by our StimuVAR successfully capture the key stimuli events (e.g., “being involved in an accident with a bicyclist” and “unexpected and thrilling motorcycle stunts and tricks”) and offer causal connections between the stimuli and viewers’ emotional reactions (e.g., “accident → injury → sense of sympathy” and “motorcycle tricks → sense of astonishment”). In contrast, other MLLM baselines fail to capture the accidents that have a strong emotional intensity, resulting in incorrect predictions. This shows the importance of the proposed stimuli-aware mechanisms.

4.3 Ablation Study

In this section, we look into how the proposed mechanisms affect StimuVAR. We conduct experiments on the VCE dataset[mazeika2022would].

Table 5: Left: Ablation on the proposed strategy design. Right: Ablation on temporal aggregation design. #Tokens denotes the number of spatial tokens. Results are on the VCE dataset. Both Top-1 and Top-3 accuracy are reported.

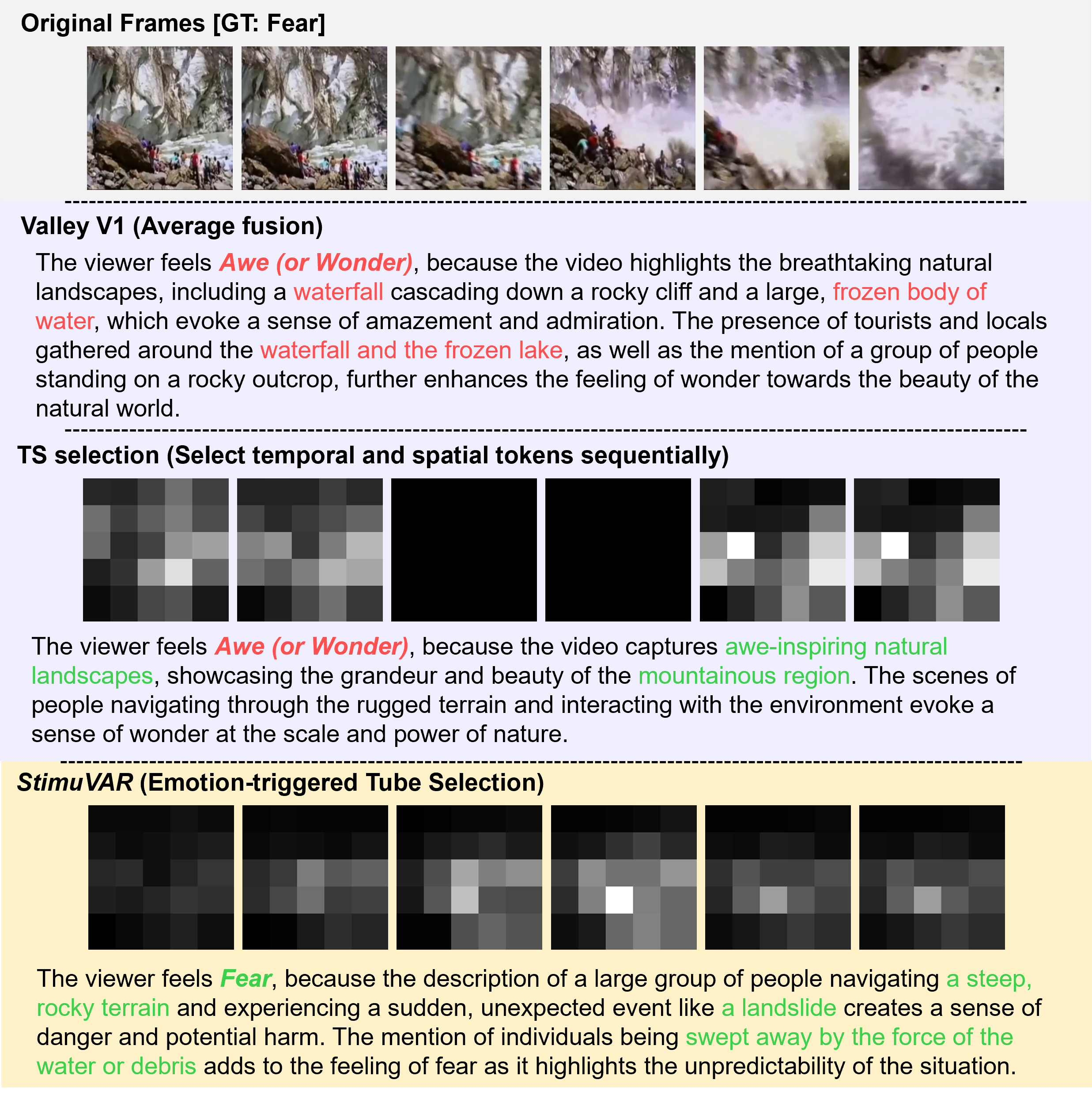

Figure 6: Comparison of tube score maps and output responses of different temporal aggregation designs. Valley[luo2023valley] often hallucinates objects that do not appear in a video. TS Selection[wang2022efficient] could be easily misled by its inaccurate temporal selection. In contrast, our emotion-triggered tube selection simultaneously considers both spatial and temporal information, resulting in the accurate selection of emotion-relevant tokens. Relevant and irrelevant words are colored.

Strategy design. In this work, we propose three key components: event-driven frame sampling, emotion-triggered tube selection, and affective training. The ablation results are shown in Table 5 (Left). We define a Vanilla model that utilizes the token along with averaged tokens to represent a video, and it is trained by only the initial phase of affective training. This baseline obtains 44.5% Top-1 and 68.4% Top-3 accuracy. Adding our event-driven frame sampling strategy improves Top-1/Top-3 accuracy by 1.5%/2.5%, demonstrating the effectiveness of localizing frame-level temporal stimuli. This positive trend continues with the inclusion of our VAR visual instruction tuning (i.e., the second phase of affective training), which increases Top-1/Top-3 accuracy by 1.3%/1.6%. The VAR visual instruction tuning enhances the connections between stimuli and viewers’ emotions through the generated reasoning, achieving deeper affective understanding. Finally, further integrating the emotion-triggered tube selection module (i.e., the final StimuVAR, integrating all three components) achieves the highest performance, reaching 48.3%/73.5% Top-1/Top-3 accuracy. This improvement is due to the identification of spatiotemporal stimuli in the token space. These results demonstrate the effectiveness of all three proposed strategies.

Temporal aggregation design. Because the computational cost of attention grows with the number of visual tokens, it is challenging for MLLMs to encode videos both effectively and efficiently. Valley[luo2023valley] introduces three variants of temporal aggregation to encode video representations: (V1) averages the tokens; (V2) computes the weighted sum of the tokens; and (V3) combines the averaged tokens with the dynamic information extracted by an additional transformer layer on the tokens. Wang et al.[wang2022efficient] design a token selection module for efficient temporal aggregation, which considers temporal and spatial dimensions sequentially to select tokens; we name it TS Selection. The proposed StimuVAR uses emotion-triggered tube selection for temporal aggregation, considering spatiotemporal information simultaneously. In this experiment, we replace StimuVAR’s tube selection with these baseline temporal aggregation designs to compare their effectiveness. As shown in Table 5 (Right), StimuVAR’s emotion-triggered tube selection outperforms Valley V1 - V3. This is because Valley V1 - V3 fuse spatial features along the temporal dimension, which can yield features that resemble objects never appearing in the video and thus cause visual hallucinations. StimuVAR also outperforms TS Selection, demonstrating the superiority of the spatiotemporal design of our tube selection. Fig.6 illustrates the hallucination issue of Valley V1 - V3. Specifically, the fused features incorrectly produce objects like ‘frozen body of water’, ‘frozen lake’ and ‘waterfall’, which are not present in the video. On the other hand, TS Selection relies heavily on temporal selection. As shown in the middle of Fig.6, if frames containing emotion-related events are mistakenly filtered out by temporal selection, the final output is misled. In contrast, StimuVAR uses tubes as the minimal unit of selection, mitigating such issues. Our method achieves higher performance while reducing the number of tokens to enhance efficiency.
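A small sketch can illustrate why fusing features over time may blend content from different frames. The two functions below are simplified stand-ins for the averaging (V1) and weighted-sum (V2) aggregations described above; in particular, the per-frame weights are passed in as plain numbers here, which is an assumption made for illustration rather than how Valley obtains them.

```python
import math

def average_pool(frames):
    """V1-style: average each token position over the frames.

    frames[t][i] is the feature vector of token i in frame t.
    """
    num_frames = len(frames)
    return [
        [sum(values) / num_frames for values in zip(*position)]
        for position in zip(*frames)
    ]

def weighted_pool(frames, frame_logits):
    """V2-style: weighted sum over frames; weights are softmax(frame_logits)."""
    peak = max(frame_logits)
    exps = [math.exp(x - peak) for x in frame_logits]
    weights = [e / sum(exps) for e in exps]
    return [
        [sum(w * v for w, v in zip(weights, values)) for values in zip(*position)]
        for position in zip(*frames)
    ]

# One token position that shows "object A" in frame 0 and "object B" in frame 1.
frames = [[[1.0, 0.0]], [[0.0, 1.0]]]
fused = average_pool(frames)
fused_weighted = weighted_pool(frames, [0.0, 0.0])
```

The fused vector is `[0.5, 0.5]`, which matches neither frame's feature. A selection-based design instead keeps tokens that were actually observed in the video.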

Fine-tune state-of-the-art video-oriented MLLMs using VAR instruction data. To exclusively assess the contributions of our proposed VAR instruction data, we use our VAR instruction data to fine-tune the three best-performing MLLMs in Table 1: Chat-UniVi[Jin_2024_CVPR], Valley[luo2023valley], and VideoChat2[li2024mvbench], where we follow their official fine-tuning guidelines for each model. We evaluate them on the VCE, VE-8 and YF-6 datasets, using the same evaluation metrics described in Sec.4.1. As can be seen in Table 6, fine-tuning using our VAR instruction data consistently improves the performance of all three MLLMs on all three datasets. This highlights the quality and effectiveness of the proposed VAR instruction data in enhancing MLLMs’ affective reasoning capabilities. On the other hand, compared to these fine-tuned MLLMs, our StimuVAR still consistently demonstrates clear superiority. This highlights the advantages of the proposed model design. Overall, these results demonstrate that both the proposed VAR instruction data and model design are effective, playing their roles in contributing to excellent performance.

Table 6: Quantitative comparison of state-of-the-art video-oriented MLLMs, with and without fine-tuning on our proposed VAR instruction data.

Number of selections in stimuli awareness. We explore varying numbers of frames to sample and tubes to select in our event-driven frame sampling and emotion-triggered tube selection strategies, respectively. As shown in Fig.7, initially increasing the numbers of selected frames $N$ and tubes $K$ improves performance. Specifically, the Top-3 accuracy increases from 69.9% to 73.5% with three more frames (from $N=3$ to $N=6$), and there is an improvement from 71.8% to 73.5% when we select the top $K=4$ tubes compared to $K=1$. These increases are attributed to the additional information available, which allows the model to gain a more comprehensive affective understanding of the videos. However, when we continue to increase the number of frames and tubes, the performance begins to decline. The Top-3 accuracy decreases to 72.9% when $N=12$ frames are sampled, and it also drops to 71.0% when we double the tubes to $K=8$. This decline is due to redundant information from excessive frames or tokens, which distracts the model from emotional stimuli. Still, StimuVAR consistently outperforms the MLLM baselines (as presented in Table 1) with these different $N$ and $K$, demonstrating that the proposed strategies are not very sensitive to hyperparameter choices or computational budgets. Regarding computational cost, as expected, increasing the number of selected frames $N$ and tubes $K$ lengthens inference time approximately linearly. This highlights the importance and effectiveness of the proposed event-driven frame sampling and emotion-triggered tube selection strategies, which can improve both accuracy and computational efficiency.

Figure 7: Ablation on the number of selected frames (left) and tubes (right) for accuracy and inference time on the VCE dataset.

Table 7: Ablation on the property of event-driven frame sampling on the VCE dataset. Top-3 accuracy is reported.

Test set Surprise subset
