Buckets:

Title: Fine-grained Spatial-Temporal Perception for Gas Leak Segmentation

URL Source: https://arxiv.org/html/2505.00295

Markdown Content:

Abstract

Gas leaks pose significant risks to human health and the environment. Despite long-standing concerns, there are limited methods that can efficiently and accurately detect and segment leaks due to their concealed appearance and random shapes. In this paper, we propose a Fine-grained Spatial-Temporal Perception (FGSTP) algorithm for gas leak segmentation. FGSTP captures critical motion clues across frames and integrates them with refined object features in an end-to-end network. Specifically, we first construct a correlation volume to capture motion information between consecutive frames. Then, the fine-grained perception progressively refines the object-level features using previous outputs. Finally, a decoder is employed to optimize boundary segmentation. Because there is no highly precise labeled dataset for gas leak segmentation, we manually label a gas leak video dataset, GasVid. Experimental results on GasVid demonstrate that our model excels in segmenting non-rigid objects such as gas leaks, generating the most accurate mask compared to other state-of-the-art (SOTA) models.

Index Terms— Gas leak segmentation, non-rigid and blurry object segmentation, video surveillance system

1 Introduction

Gas leaks are unintended events that can occur due to damaged or faulty connections, corrosion, or improper installation. Whether it is natural gas, methane, or other hazardous gases, their escape into the atmosphere can lead to explosions, fires, and long-term health complications. Detecting gas leaks is essential for early intervention and mitigation of potential disasters. Traditional leak detection methods involve inspectors manually using instruments near potential leak areas to assess the status of the pipeline. These approaches are inefficient and hazardous to inspectors due to the potentially harmful gas. In contrast, video surveillance systems can provide accurate and real-time monitoring without human labor. Thus, designing a video processing algorithm to recognize leaks by analyzing motion patterns and shape variations is a better option for gas leak segmentation. Such algorithms can process continuous video streams in real-time, providing faster and safer detection compared to manual inspections.

Gas leaks possess characteristics distinct from common objects. They are colorless, odorless, and invisible to human senses. Although infrared cameras can visualize leaks [1], distinguishing them often remains challenging because of their non-rigid shape and blurry texture. In many cases, segmentation is only possible when the leak is in motion. For example, Lu et al. [2] proposes Gaussian-based background modeling for adaptive gas leak segmentation. However, this method faces significant limitations in industrial scenarios due to the irregular morphology of gas and the variability of the background. Common motion extraction method based on background subtraction and optical flow [3] fails due to the variable shape of the object and severe noise disturbances.

Recently, deep-learning techniques have shown robustness in object segmentation [4]. Fan et al. [5] first located coarse concealed objects and then refined them in the decoder. Wang et al. [6], based on YOLOv7 [7], demonstrates the capabilities in detecting visible non-rigid objects like dust. For those objects in RGB images, methods relying solely on spatial information may be effective. However, segmenting blurry objects in infrared cameras becomes challenging if models neglect motion information. Yan et al. [8] incorporates optical flow to learn motion features, and Yang et al. [3] employs unsupervised learning to generate pixel-level motion labels in CNNs. However, both approaches are susceptible to noise interference. Memory-based segmentation methods [9, 10, 11] struggle with the variety of gas leak shapes. Moreover, redundant memory significantly impacts segmentation performance. Cheng et al. [12] integrates boundary optimization and optical flow for motion analysis, showing potential for gas leak segmentation. However, it struggles to distinguish objects which are similar to leaks in texture, like clouds. To the best of our knowledge, few methods can effectively segment blurry and non-rigid objects, like gas leaks, in infrared videos.

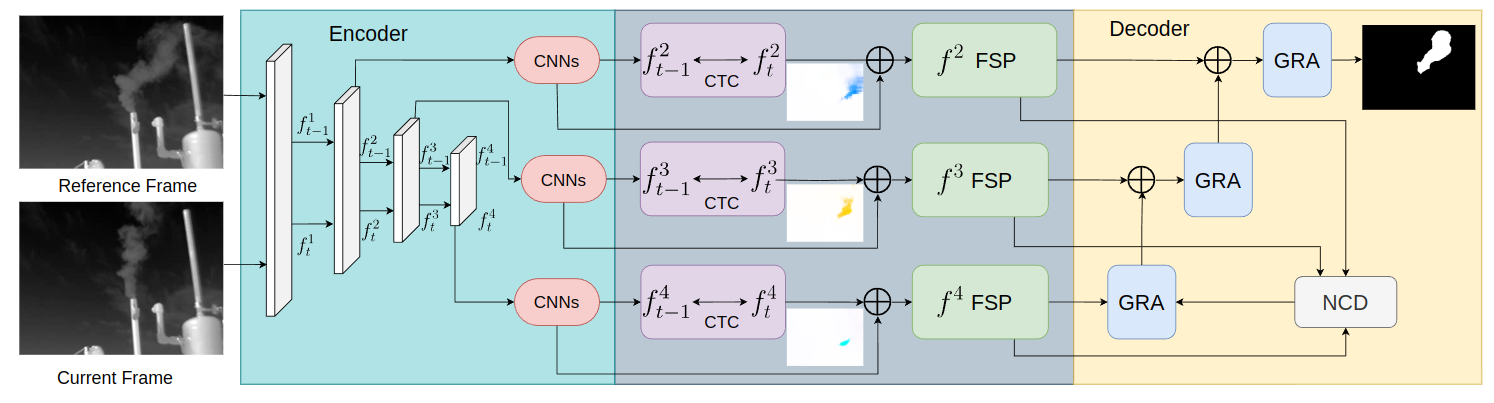

Fig.1: The architecture of FGSTP. The model processes every current frame along with an adjacent frame each time. Each current frame needs to be processed twice with two adjacent frames. The encoder denoises the input frame and extracts the multi-scale features, while the decoder optimizes boundaries through GRA and NCD modules. f i j superscript subscript 𝑓 𝑖 𝑗 f_{i}^{j}italic_f start_POSTSUBSCRIPT italic_i end_POSTSUBSCRIPT start_POSTSUPERSCRIPT italic_j end_POSTSUPERSCRIPT denotes the feature of the ith frame and jth scale. The Consecutive Temporal Correlation (CTC) block captures motion information. f j superscript 𝑓 𝑗 f^{j}italic_f start_POSTSUPERSCRIPT italic_j end_POSTSUPERSCRIPT denotes the CTC output in the jth scale. The Fine-Grained Spatial Perception (FSP) module refines spatial features.

In this paper, we address these challenges by proposing a fine-grained spatial-temporal network for non-rigid, blurry object segmentation in infrared video. We adopt a multi-scale transformer-based [13] encoder to mitigate video noise. To extract representative motion features of gas leaks, we refine relevant motion clues across multiple scales using a proposed Consecutive Temporal Correlation module (CTC). We also propose the Fine-grained Spatial Perception module (FSP) to capture spatial features by combining the motion features with encoder features and distilling informative semantic features of leaks. Besides, boundary determination is critical yet challenging for polymorphic objects, as their lack of clear boundaries makes them blend with the background. Our decoder can optimize boundaries from coarse prediction to accurate mask. We conduct experiments on the GasVid [1] dataset, for which we manually annotate gas leak regions frame by frame to ensure the accurate representation of leak area and effective model training. In summary, our contributions include: (1) We propose an end-to-end architecture that is specifically to segment non-rigid and blurry objects in infrared videos; (2) We design CTC and FSP modules to obtain key motion clues and perceive shapes or boundary features from encoder output.

The paper is organized as follows: Section 2 introduces our FGSTP framework. Section 3 presents experimental results on the GasVid dataset. Section 4 concludes our work.

2 Method

2.1 Framework Overview

Fig.1 shows the architecture of FGSTP. The model consists of four components: (1) Transformer-based encoder denoises and obtains multi-scale features; (2) Consecutive Temporal Correlation (CTC) captures key motion; (3) Fine-grained Spatial Perception (FSP) refines spatial features through the residual connection between encoder features and CTC outputs; (4) The decoder optimizes predicted masks boundaries. FGSTP takes the inference frame with two adjacent frames as input to identify the location and shape of the target object. The model output is a binary mask of the inference frame.

FGSTP is a one-stage model and requires no additional auxiliary inputs. It is designed for segmenting blurry non-rigid objects like gas leaks. The shape of leaks change significantly in the short term. Our research finds that the two adjacent frames contain sufficient information for the inference frame in this kind of video object segmentation. Therefore our model just calculates 3 frames (two adjacent and one inference frames) each time. This operation not only reduces redundant computations but also helps FGSTP capture inter-frame differences more prominently.

2.2 FGSTP Architecture

(1) Encoder. The Pyramid Vision Transformer (PVT) [13] is the model backbone to extract the global features from three input frames. The encoder has four layers, which generate global features at different-scales. The size of features in each layer is C×H/2 i+1×W/2 i+1 𝐶 𝐻 superscript 2 𝑖 1 𝑊 superscript 2 𝑖 1 C\times H/2^{i+1}\times W/2^{i+1}italic_C × italic_H / 2 start_POSTSUPERSCRIPT italic_i + 1 end_POSTSUPERSCRIPT × italic_W / 2 start_POSTSUPERSCRIPT italic_i + 1 end_POSTSUPERSCRIPT, where i∈{1,2,3,4}𝑖 1 2 3 4 i\in{1,2,3,4}italic_i ∈ { 1 , 2 , 3 , 4 }, H,W,C 𝐻 𝑊 𝐶 H,W,C italic_H , italic_W , italic_C are height, width and channels. Previous work finds that the last three layers reserve more high-level semantic features with reduced dimensions [12]. Therefore we put the 2nd,3rd,2 𝑛 𝑑 3 𝑟 𝑑 2nd,3rd,2 italic_n italic_d , 3 italic_r italic_d , and 4th 4 𝑡 ℎ 4th 4 italic_t italic_h layers features into the multi-scale network to extract local features f t i superscript subscript 𝑓 𝑡 𝑖 f_{t}^{i}italic_f start_POSTSUBSCRIPT italic_t end_POSTSUBSCRIPT start_POSTSUPERSCRIPT italic_i end_POSTSUPERSCRIPT (i∈{2,3,4}𝑖 2 3 4 i\in{2,3,4}italic_i ∈ { 2 , 3 , 4 }).

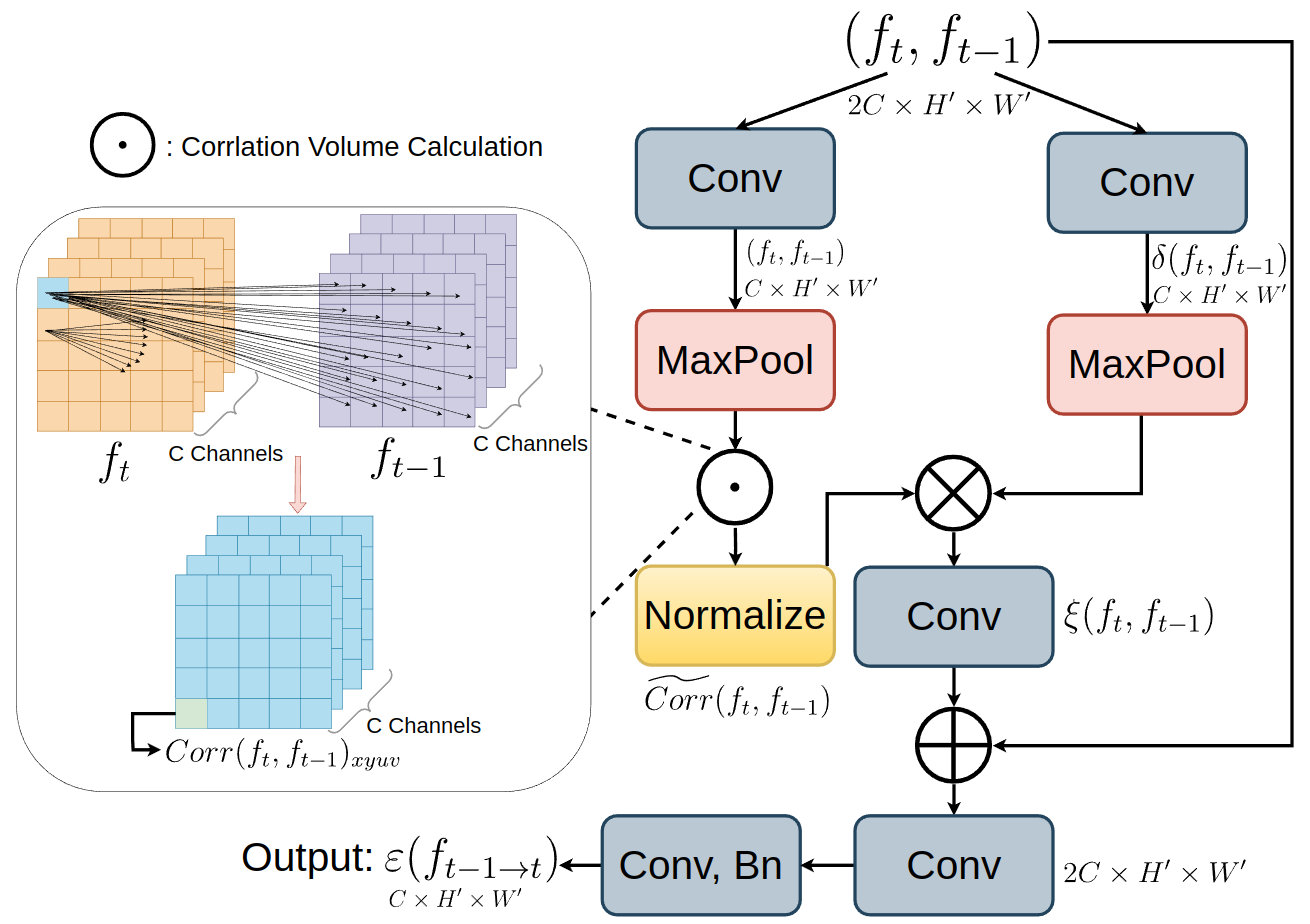

(2) Consecutive Temporal Correlation. The Consecutive Temporal Correlation (CTC) is to capture motion clues from consecutive frames. Different from reference [3] which needs the optical flow results as the network’s input, CTC is an embedded component in FGSTP. Inspired by [14], in each scales (i∈{2,3,4}𝑖 2 3 4 i\in{2,3,4}italic_i ∈ { 2 , 3 , 4 }), CTC computes temporal correspondence through the feature consistency between two consecutive frames. The structure of CTC is in Fig.2. Given the features of two frames from the encoder, (f t,f t−1)∈R 2C×H′×W′subscript 𝑓 𝑡 subscript 𝑓 𝑡 1 superscript 𝑅 2 𝐶 superscript 𝐻′superscript 𝑊′(f_{t},f_{t-1})\in R^{2C\times H^{\prime}\times W^{\prime}}( italic_f start_POSTSUBSCRIPT italic_t end_POSTSUBSCRIPT , italic_f start_POSTSUBSCRIPT italic_t - 1 end_POSTSUBSCRIPT ) ∈ italic_R start_POSTSUPERSCRIPT 2 italic_C × italic_H start_POSTSUPERSCRIPT ′ end_POSTSUPERSCRIPT × italic_W start_POSTSUPERSCRIPT ′ end_POSTSUPERSCRIPT end_POSTSUPERSCRIPT, the 4D correlation volume Corr(f t,f t−1)∈R H′×W′×H′×W′𝐶 𝑜 𝑟 𝑟 subscript 𝑓 𝑡 subscript 𝑓 𝑡 1 superscript 𝑅 superscript 𝐻′superscript 𝑊′superscript 𝐻′superscript 𝑊′Corr(f_{t},f_{t-1})\in R^{H^{\prime}\times W^{\prime}\times H^{\prime}\times W% ^{\prime}}italic_C italic_o italic_r italic_r ( italic_f start_POSTSUBSCRIPT italic_t end_POSTSUBSCRIPT , italic_f start_POSTSUBSCRIPT italic_t - 1 end_POSTSUBSCRIPT ) ∈ italic_R start_POSTSUPERSCRIPT italic_H start_POSTSUPERSCRIPT ′ end_POSTSUPERSCRIPT × italic_W start_POSTSUPERSCRIPT ′ end_POSTSUPERSCRIPT × italic_H start_POSTSUPERSCRIPT ′ end_POSTSUPERSCRIPT × italic_W start_POSTSUPERSCRIPT ′ end_POSTSUPERSCRIPT end_POSTSUPERSCRIPT can be calculated as follows:

Corr(f t,f t−1)xyuv=exp(∑c(f t)xyc⋅(f t−1)uvc)𝐶 𝑜 𝑟 𝑟 subscript subscript 𝑓 𝑡 subscript 𝑓 𝑡 1 𝑥 𝑦 𝑢 𝑣 subscript 𝑐⋅subscript subscript 𝑓 𝑡 𝑥 𝑦 𝑐 subscript subscript 𝑓 𝑡 1 𝑢 𝑣 𝑐\displaystyle Corr{(f_{t},f_{t-1})}{xyuv}=\exp\left(\sum\limits{c}{(f_{t})}% {xyc}\cdot{(f{t-1})}_{uvc}\right)italic_C italic_o italic_r italic_r ( italic_f start_POSTSUBSCRIPT italic_t end_POSTSUBSCRIPT , italic_f start_POSTSUBSCRIPT italic_t - 1 end_POSTSUBSCRIPT ) start_POSTSUBSCRIPT italic_x italic_y italic_u italic_v end_POSTSUBSCRIPT = roman_exp ( ∑ start_POSTSUBSCRIPT italic_c end_POSTSUBSCRIPT ( italic_f start_POSTSUBSCRIPT italic_t end_POSTSUBSCRIPT ) start_POSTSUBSCRIPT italic_x italic_y italic_c end_POSTSUBSCRIPT ⋅ ( italic_f start_POSTSUBSCRIPT italic_t - 1 end_POSTSUBSCRIPT ) start_POSTSUBSCRIPT italic_u italic_v italic_c end_POSTSUBSCRIPT )(1)

where c is the channel index. When all features similarities are calculated between consecutive frames, the most related part between two frames can be found. Then normalization is applied to correlation volume Corr(f t,f t−1)xyuv 𝐶 𝑜 𝑟 𝑟 subscript subscript 𝑓 𝑡 subscript 𝑓 𝑡 1 𝑥 𝑦 𝑢 𝑣 Corr{(f_{t},f_{t-1})}_{xyuv}italic_C italic_o italic_r italic_r ( italic_f start_POSTSUBSCRIPT italic_t end_POSTSUBSCRIPT , italic_f start_POSTSUBSCRIPT italic_t - 1 end_POSTSUBSCRIPT ) start_POSTSUBSCRIPT italic_x italic_y italic_u italic_v end_POSTSUBSCRIPT in the latter two dimensions (u,v)𝑢 𝑣(u,v)( italic_u , italic_v ) to obtain the corresponding part between reference frame and current frame. The normalization is as follows:

Corr(f t,f t−1)xyuv=Corr(f t,f t−1)xyuv∑u∑v Corr(f t,f t−1)xyuv𝐶 𝑜 𝑟 𝑟 subscript subscript 𝑓 𝑡 subscript 𝑓 𝑡 1 𝑥 𝑦 𝑢 𝑣 𝐶 𝑜 𝑟 𝑟 subscript subscript 𝑓 𝑡 subscript 𝑓 𝑡 1 𝑥 𝑦 𝑢 𝑣 subscript 𝑢 subscript 𝑣 𝐶 𝑜 𝑟 𝑟 subscript subscript 𝑓 𝑡 subscript 𝑓 𝑡 1 𝑥 𝑦 𝑢 𝑣\displaystyle\widetilde{Corr}(f_{t},f_{t-1}){xyuv}=\frac{Corr(f{t},f_{t-1})% {xyuv}}{\sum{u}\sum_{v}Corr(f_{t},f_{t-1})_{xyuv}}over~ start_ARG italic_C italic_o italic_r italic_r end_ARG ( italic_f start_POSTSUBSCRIPT italic_t end_POSTSUBSCRIPT , italic_f start_POSTSUBSCRIPT italic_t - 1 end_POSTSUBSCRIPT ) start_POSTSUBSCRIPT italic_x italic_y italic_u italic_v end_POSTSUBSCRIPT = divide start_ARG italic_C italic_o italic_r italic_r ( italic_f start_POSTSUBSCRIPT italic_t end_POSTSUBSCRIPT , italic_f start_POSTSUBSCRIPT italic_t - 1 end_POSTSUBSCRIPT ) start_POSTSUBSCRIPT italic_x italic_y italic_u italic_v end_POSTSUBSCRIPT end_ARG start_ARG ∑ start_POSTSUBSCRIPT italic_u end_POSTSUBSCRIPT ∑ start_POSTSUBSCRIPT italic_v end_POSTSUBSCRIPT italic_C italic_o italic_r italic_r ( italic_f start_POSTSUBSCRIPT italic_t end_POSTSUBSCRIPT , italic_f start_POSTSUBSCRIPT italic_t - 1 end_POSTSUBSCRIPT ) start_POSTSUBSCRIPT italic_x italic_y italic_u italic_v end_POSTSUBSCRIPT end_ARG(2)

The normalized correlation volume is calculated by each channel between features of adjacent frames. It should be merged with the channel features δ(f t,f t−1)𝛿 subscript 𝑓 𝑡 subscript 𝑓 𝑡 1\delta(f_{t},f_{t-1})italic_δ ( italic_f start_POSTSUBSCRIPT italic_t end_POSTSUBSCRIPT , italic_f start_POSTSUBSCRIPT italic_t - 1 end_POSTSUBSCRIPT ) to obtain the final temporal correlation. Therefore the channel features multiply normalized correlation and undergo series convolution layers and residual connections to get the final output ε(f t−1→t)𝜀 subscript 𝑓→𝑡 1 𝑡\varepsilon(f_{t-1\rightarrow t})italic_ε ( italic_f start_POSTSUBSCRIPT italic_t - 1 → italic_t end_POSTSUBSCRIPT ). The integration between channel-wise features and normalized correlation volume is as follows:

ξ(f t−1→t)=δ(f t,f t−1)⋅Corr(f t,f t−1)𝜉 subscript 𝑓→𝑡 1 𝑡⋅𝛿 subscript 𝑓 𝑡 subscript 𝑓 𝑡 1𝐶 𝑜 𝑟 𝑟 subscript 𝑓 𝑡 subscript 𝑓 𝑡 1\displaystyle\xi(f_{t-1\rightarrow t})=\delta(f_{t},f_{t-1})\cdot\widetilde{% Corr}(f_{t},f_{t-1})italic_ξ ( italic_f start_POSTSUBSCRIPT italic_t - 1 → italic_t end_POSTSUBSCRIPT ) = italic_δ ( italic_f start_POSTSUBSCRIPT italic_t end_POSTSUBSCRIPT , italic_f start_POSTSUBSCRIPT italic_t - 1 end_POSTSUBSCRIPT ) ⋅ over~ start_ARG italic_C italic_o italic_r italic_r end_ARG ( italic_f start_POSTSUBSCRIPT italic_t end_POSTSUBSCRIPT , italic_f start_POSTSUBSCRIPT italic_t - 1 end_POSTSUBSCRIPT )(3)

Fig.2: The structure of CTC. It computes 4D correlation volume Corr(f t,f t−1)𝐶 𝑜 𝑟 𝑟 subscript 𝑓 𝑡 subscript 𝑓 𝑡 1 Corr(f_{t},f_{t-1})italic_C italic_o italic_r italic_r ( italic_f start_POSTSUBSCRIPT italic_t end_POSTSUBSCRIPT , italic_f start_POSTSUBSCRIPT italic_t - 1 end_POSTSUBSCRIPT ) for motion information and integrates channel-wise semantics for precise motion alignment.

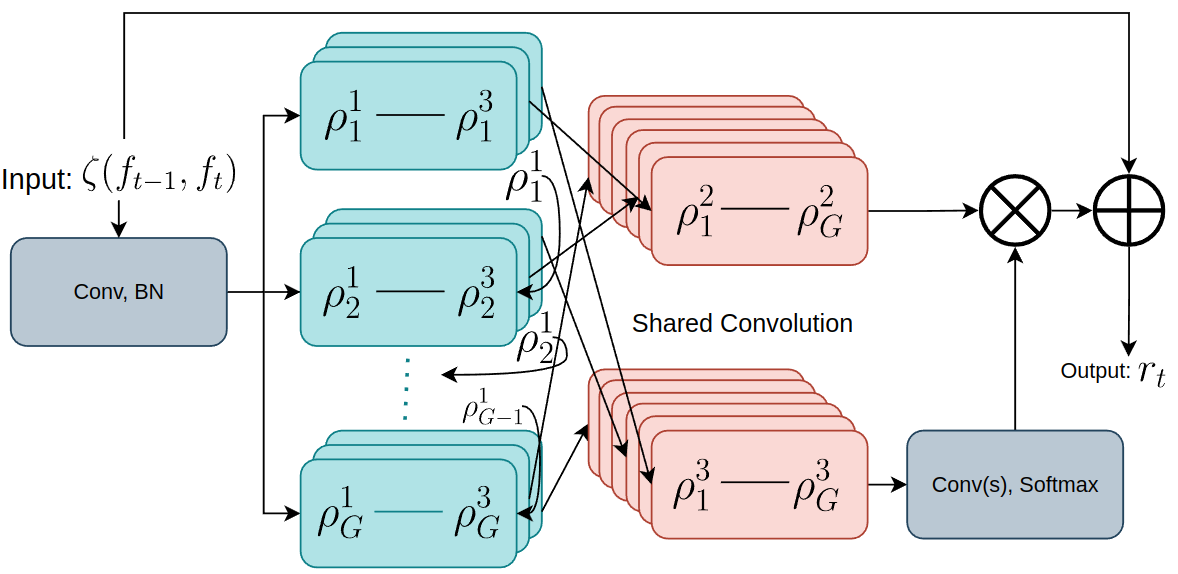

(3) Fine-grained Spatial Perception. The output of CTC contains only motion information. Relying solely on motion information is insufficient for gas leak segmentation, as it overlooks valuable details like shape and texture. To address this issue, inspired by [15], we propose Fine-grained Spatial Perception (FSP) to capture spatial features. The input of FSP ζ(f t−1,f t)𝜁 subscript 𝑓 𝑡 1 subscript 𝑓 𝑡\zeta(f_{t-1},f_{t})italic_ζ ( italic_f start_POSTSUBSCRIPT italic_t - 1 end_POSTSUBSCRIPT , italic_f start_POSTSUBSCRIPT italic_t end_POSTSUBSCRIPT ) combines the CTC (ε(f t−1→t i)∈R C×H′×W′𝜀 superscript subscript 𝑓→𝑡 1 𝑡 𝑖 superscript 𝑅 𝐶 superscript 𝐻′superscript 𝑊′\varepsilon(f_{t-1\rightarrow t}^{i})\in R^{C\times H^{\prime}\times W^{\prime}}italic_ε ( italic_f start_POSTSUBSCRIPT italic_t - 1 → italic_t end_POSTSUBSCRIPT start_POSTSUPERSCRIPT italic_i end_POSTSUPERSCRIPT ) ∈ italic_R start_POSTSUPERSCRIPT italic_C × italic_H start_POSTSUPERSCRIPT ′ end_POSTSUPERSCRIPT × italic_W start_POSTSUPERSCRIPT ′ end_POSTSUPERSCRIPT end_POSTSUPERSCRIPT) and multi-scale features f t i∈R C×H′×W′,i∈{2,3,4}formulae-sequence superscript subscript 𝑓 𝑡 𝑖 superscript 𝑅 𝐶 superscript 𝐻′superscript 𝑊′𝑖 2 3 4 f_{t}^{i}\in R^{C\times H^{\prime}\times W^{\prime}},i\in{2,3,4}italic_f start_POSTSUBSCRIPT italic_t end_POSTSUBSCRIPT start_POSTSUPERSCRIPT italic_i end_POSTSUPERSCRIPT ∈ italic_R start_POSTSUPERSCRIPT italic_C × italic_H start_POSTSUPERSCRIPT ′ end_POSTSUPERSCRIPT × italic_W start_POSTSUPERSCRIPT ′ end_POSTSUPERSCRIPT end_POSTSUPERSCRIPT , italic_i ∈ { 2 , 3 , 4 } in encoder through residual connection. Fig. 3 is the structure of FSP.

In FSP, firstly the channel number of ζ(f t−1,f t)𝜁 subscript 𝑓 𝑡 1 subscript 𝑓 𝑡\zeta(f_{t-1},f_{t})italic_ζ ( italic_f start_POSTSUBSCRIPT italic_t - 1 end_POSTSUBSCRIPT , italic_f start_POSTSUBSCRIPT italic_t end_POSTSUBSCRIPT ) is increased and then divided into G 𝐺 G italic_G groups along the channel dimension, where the features in each group are further split into three sets. The first set (ρ j 1,j∈(1…G)superscript subscript 𝜌 𝑗 1 𝑗 1…𝐺\rho_{j}^{1},j\in(1...G)italic_ρ start_POSTSUBSCRIPT italic_j end_POSTSUBSCRIPT start_POSTSUPERSCRIPT 1 end_POSTSUPERSCRIPT , italic_j ∈ ( 1 … italic_G )) in each group concatenates with the next group. Then the features of the latter two sets in each group are rearranged into two new groups (ρ 1 2→ρ G 2→superscript subscript 𝜌 1 2 superscript subscript 𝜌 𝐺 2\rho_{1}^{2}\rightarrow\rho_{G}^{2}italic_ρ start_POSTSUBSCRIPT 1 end_POSTSUBSCRIPT start_POSTSUPERSCRIPT 2 end_POSTSUPERSCRIPT → italic_ρ start_POSTSUBSCRIPT italic_G end_POSTSUBSCRIPT start_POSTSUPERSCRIPT 2 end_POSTSUPERSCRIPT and ρ 1 3→ρ G 3→superscript subscript 𝜌 1 3 superscript subscript 𝜌 𝐺 3\rho_{1}^{3}\rightarrow\rho_{G}^{3}italic_ρ start_POSTSUBSCRIPT 1 end_POSTSUBSCRIPT start_POSTSUPERSCRIPT 3 end_POSTSUPERSCRIPT → italic_ρ start_POSTSUBSCRIPT italic_G end_POSTSUBSCRIPT start_POSTSUPERSCRIPT 3 end_POSTSUPERSCRIPT) by convolution and split operations according to their position in the original groups. Finally, the two sets of group-mixed features (ρ 2 superscript 𝜌 2\rho^{2}italic_ρ start_POSTSUPERSCRIPT 2 end_POSTSUPERSCRIPT and ρ 3 superscript 𝜌 3\rho^{3}italic_ρ start_POSTSUPERSCRIPT 3 end_POSTSUPERSCRIPT) are integrated in a convolution block and produce the output r t subscript 𝑟 𝑡 r_{t}italic_r start_POSTSUBSCRIPT italic_t end_POSTSUBSCRIPT. The mixing operation extracts critical clues across channels for robust feature representation. The feature group iteration integrates different channel features with partial parameter sharing. These FSP processes improve the ability to capture rich clues, enhancing object perception and prediction refinement.

(4) Decoder. The decoder is designed to identify the boundaries of non-rigid objects. The Neighbor Connection Decoder (NCD) [5] extracts the coarse mask from different scales of the previous module, FSP. The coarse mask is the approximate location of the target object. Reversing the coarse mask means switching the value of foreground and background. Then the coarse mask is interpolated by reversed masks in the Group Reversal Attention (GRA) [5] module through concatenation and convolution.

q k=concat(p i k:r k)∗W GRA\displaystyle q^{k}=\text{concat}(p_{i}^{k}:r^{k})*W_{GRA}italic_q start_POSTSUPERSCRIPT italic_k end_POSTSUPERSCRIPT = concat ( italic_p start_POSTSUBSCRIPT italic_i end_POSTSUBSCRIPT start_POSTSUPERSCRIPT italic_k end_POSTSUPERSCRIPT : italic_r start_POSTSUPERSCRIPT italic_k end_POSTSUPERSCRIPT ) ∗ italic_W start_POSTSUBSCRIPT italic_G italic_R italic_A end_POSTSUBSCRIPT(4)

where q k superscript 𝑞 𝑘 q^{k}italic_q start_POSTSUPERSCRIPT italic_k end_POSTSUPERSCRIPT is the integration masks of each scale in frame k 𝑘 k italic_k, p i k superscript subscript 𝑝 𝑖 𝑘 p_{i}^{k}italic_p start_POSTSUBSCRIPT italic_i end_POSTSUBSCRIPT start_POSTSUPERSCRIPT italic_k end_POSTSUPERSCRIPT is one of the original coarse mask, r k superscript 𝑟 𝑘 r^{k}italic_r start_POSTSUPERSCRIPT italic_k end_POSTSUPERSCRIPT is the reversed mask, and W GRA subscript 𝑊 𝐺 𝑅 𝐴 W_{GRA}italic_W start_POSTSUBSCRIPT italic_G italic_R italic_A end_POSTSUBSCRIPT is the convolutional kernel of GRA. In this way, only the boundary area is emphasized in the coarse mask because the values of the foreground and background cancel each other out. More details can be seen in [5]. Finally we combine the output of GRA and FSP to get the results of gas leak segmentation.

2.3 Loss Function

Like [12], our loss function combines the weighted cross-entropy loss L ce w superscript subscript 𝐿 𝑐 𝑒 𝑤 L_{ce}^{w}italic_L start_POSTSUBSCRIPT italic_c italic_e end_POSTSUBSCRIPT start_POSTSUPERSCRIPT italic_w end_POSTSUPERSCRIPT and intersection-over-union (IoU) loss L iou w superscript subscript 𝐿 𝑖 𝑜 𝑢 𝑤 L_{iou}^{w}italic_L start_POSTSUBSCRIPT italic_i italic_o italic_u end_POSTSUBSCRIPT start_POSTSUPERSCRIPT italic_w end_POSTSUPERSCRIPT to achieve robust optimization. These components balance the accuracy and structural alignment of prediction. The loss function is below:

L hybrid=L ce w+L iou w subscript 𝐿 ℎ 𝑦 𝑏 𝑟 𝑖 𝑑 superscript subscript 𝐿 𝑐 𝑒 𝑤 superscript subscript 𝐿 𝑖 𝑜 𝑢 𝑤\displaystyle L_{hybrid}=L_{ce}^{w}+L_{iou}^{w}italic_L start_POSTSUBSCRIPT italic_h italic_y italic_b italic_r italic_i italic_d end_POSTSUBSCRIPT = italic_L start_POSTSUBSCRIPT italic_c italic_e end_POSTSUBSCRIPT start_POSTSUPERSCRIPT italic_w end_POSTSUPERSCRIPT + italic_L start_POSTSUBSCRIPT italic_i italic_o italic_u end_POSTSUBSCRIPT start_POSTSUPERSCRIPT italic_w end_POSTSUPERSCRIPT(5)

The weighted cross-entropy loss, L ce w superscript subscript 𝐿 𝑐 𝑒 𝑤 L_{ce}^{w}italic_L start_POSTSUBSCRIPT italic_c italic_e end_POSTSUBSCRIPT start_POSTSUPERSCRIPT italic_w end_POSTSUPERSCRIPT, ensures that the model focuses more on difficult regions by assigning weights to those regions. IoU loss, L iou w superscript subscript 𝐿 𝑖 𝑜 𝑢 𝑤 L_{iou}^{w}italic_L start_POSTSUBSCRIPT italic_i italic_o italic_u end_POSTSUBSCRIPT start_POSTSUPERSCRIPT italic_w end_POSTSUPERSCRIPT, complements the cross-entropy loss by emphasizing the overall predicted shape and size consistency with ground truth.

Fig.3: The structure of FSP. It enhances motion features from CTC by residual connection with encoder and calculates semantic representation.

3 Experiments

3.1 Dataset

Our experiments use the public dataset, GasVid [1]. This dataset is a gas leak video set containing 31 videos, each with a duration of 24 minutes. More details can be found in [1]. However, the dataset does not include annotations, so we manually label the videos. In this dataset, some videos are very similar to others, and some are too difficult for humans to distinguish gas leaks. We remove those unused videos and divided the remaining videos into the following categories: close (camera) distance with clear background (no cloud, easy to segment); medium distance with clear background (medium to segment); close distance with complex background (hard to segment); long camera distance with clear background (hard); long camera distance with complex background (hard). For each category, we assign one video for training and another one for testing. This setup allows the model to learn all video types during training and effectively evaluate leak segmentation performance across diverse scenarios. We totally select 14 videos. To accelerate training, each video is divided into many segments that each of them contains 150 frames. We also remove the repeated segments in each video to reduce imbalance. The training set consists of 9 videos with 120 segments, while the testing set has 5 videos with 67 segments totally. Our results on the GasVid dataset are presented in Section 3.3.

3.2 Metrics and Configurations

Our metrics include the following indexes: S-measure (S α subscript 𝑆 𝛼 S_{\alpha}italic_S start_POSTSUBSCRIPT italic_α end_POSTSUBSCRIPT): proposed in [16], combines region-aware and object-aware evaluations to measure structural similarity. Weighted F-measure (F β ω superscript subscript 𝐹 𝛽 𝜔 F_{\beta}^{\omega}italic_F start_POSTSUBSCRIPT italic_β end_POSTSUBSCRIPT start_POSTSUPERSCRIPT italic_ω end_POSTSUPERSCRIPT) [17]: combines precision and recall, weighted by a balancing factor, to provide a comprehensive evaluation. Mean Absolute Error (MAE): denoted as M 𝑀 M italic_M, quantifies the pixel-level accuracy by comparing the predicted masks with ground truth. Enhanced-Alignment Measure (E ϕ subscript 𝐸 italic-ϕ E_{\phi}italic_E start_POSTSUBSCRIPT italic_ϕ end_POSTSUBSCRIPT)[18]: assesses pixel-level correspondence alongside image-level statistics, making it well-suited for evaluating both global and localized accuracy in non-rigid object segmentation. Mean Intersection over Union (mIoU): calculates the average overlap between prediction and ground truth. Mean Dice: computes the average similarity coefficient, quantifying the overlap between prediction and ground truth, emphasizing segmentation accuracy.

Unlike normal object segmentation methods, we don’t use any pre-trained backbone or model due to the specific characteristics of our dataset. Pre-trained models on RGB datasets may negatively impact our experiments. For a fair comparison, we train our model and benchmark models from scratch. The input frames are resized to 352×\times×352. We train for 60 epochs with learning rate 1×10−4 1 superscript 10 4 1\times 10^{-4}1 × 10 start_POSTSUPERSCRIPT - 4 end_POSTSUPERSCRIPT. All models are trained on an NVIDIA RTX 4090 GPU.

3.3 Results

Our experimental results are presented in Table 1. The green values represent the best scores for each metric, while the red values indicate the second-highest scores. Our method outperforms all benchmark models. Even when compared with the second-best method, SLT-Net [12], our method achieves superior performance on most metrics. Moreover, other methods show significant disparities compared to our model.

Table 1: Quantitative comparison on various benchmarks in GasVid dataset.

1467

1476

2559

2563

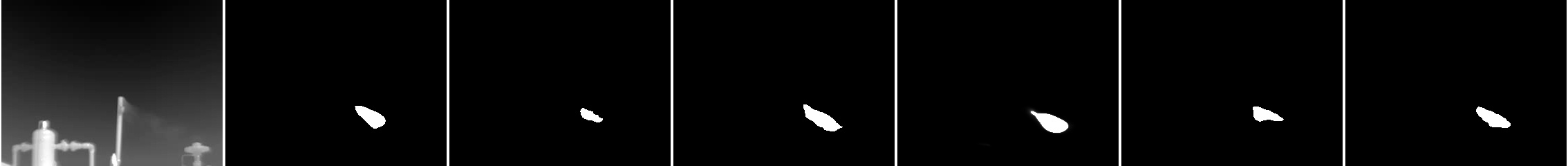

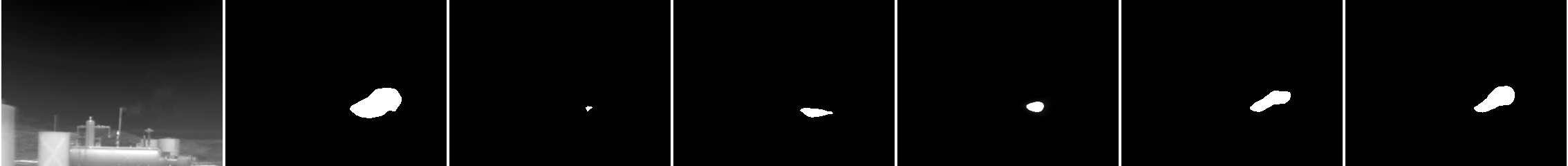

2566

Fig.4: Visualization results in GasVid Dataset. Our model prediction is the most accurate in different situations. i.e formulae-sequence 𝑖 𝑒 i.e italic_i . italic_e close (camera) distance with complex background (cloud or birds interference, 1467), long distance with complex background (1476), medium distance with clear background (2559), long distance with clear background (2563), close distance with clear background (2566)

Table 1 presents the performance comparison across different models. MG [3] fails on the GasVid dataset, producing either incomplete or missing masks due to its reliance solely on optical flow without supervised learning. This approach is ineffective for segmenting blurry and non-rigid objects, like gas leaks. SINet-V2 [5], an image-based method, processes each video frame independently and ignores motion information, which significantly affects its performance in segmenting leaks. As a result, it achieves the lowest mIoU (0.361). XMem++ and RMem [9, 10] are memory-based models that require the first-frame mask as input to guide segmentation. Although effective for regular objects, they struggle with non-rigid and blurry objects, leading to lower weighted F-measures (0.424 and 0.431) compared to SINet-V2 (0.472). ZoomNext [15] leverages strong spatial feature extraction to perform better than image-based and memory-based models, reaching 0.378 mIoU. However, its limited motion extraction capability hinders its performance for tracking leaks. SLT-Net [12] enhances SINet-V2 by incorporating motion information through optical flow, improving performance to 0.392 mIoU. However, it still lacks object-level feature representation, limiting its segmentation capability. In contrast, our model, FGSTP, integrates fine-grained spatial perception with precise motion details, allowing it to effectively find leaks, and achieves the highest performance, 0.399 mIoU.

We also provide visualized results in Fig.4. Due to MG’s failure and space limitations, we select the results of the best five models in all test videos. Our model consistently produces the most accurate masks across various scenarios. For Video-1467 (first row), the background contains clouds and birds, with the clouds having appearances similar to gas leaks. In these challenging cases, only our model correctly segments the leak. Video-1476 is the most difficult sample because of its long camera distance and cloud-covered background. All models, except ours, generate masks with incorrect shapes and locations. Video-2559 also involves a long camera distance but lacks clouds. SLT-Net correctly localizes the leak in this video, but unlike our model, it fails to accurately match its shape. Videos 2559 and 2566 are relatively easier and all models successfully segment leaks; however, our model’s predictions have more details and ours are the most similar to the ground truth (GT). These results demonstrate that our model not only captures more details in simpler scenarios but also shows more stable and accurate performance in challenging conditions.

3.4 Ablation Studies

We conduct ablation studies on the GasVid dataset to evaluate the effectiveness of the two core modules: CTC and FSP. The results are presented in Table 2. Without both CTC and FSP, the model lacks both spatial and temporal information, resulting in the worst performance with only 0.291 mIoU, even worse than many benchmark models. This is because the decoder struggles to generate accurate masks using only backbone features. Removing FSP while keeping CTC eliminates spatial perception, causing the performance to drop to 0.383 mIoU. Although worse than the full model, it still performs better than without both modules or without CTC. Removing CTC and remaining FSP leads to a more significant performance drop to 0.313 mIoU, indicating that motion features are very important. However, spatial perception also contributes to the model performance. The ablation results demonstrate that the superior performance of our model comes from the integration of CTC and FSP, which maximizes the benefits of both temporal and spatial dimensions.

Table 2: Ablation studies results on CTC and FSP modules of FGSTP in GasVid dataset

4 Conclusion

This paper presents an innovative method for gas leak segmentation that achieves better accuracy and adaptability across diverse scenarios. Our approach incorporates an encoder to reduce noise, a temporal module to refine motion clues, and a spatial perception to effectively distinguish target object. Experimental results demonstrate that our model outperforms SOTA benchmarks. Additionally, ablation studies confirm the critical role of our two core modules for robust performance. Finally, visualized results highlight that our model not only captures finer details in easier cases but also handles various challenging situations effectively.

References

- [1] Jingfan Wang, Jingwei Ji, Arvind P Ravikumar, Silvio Savarese, and Adam R Brandt, “Videogasnet: Deep learning for natural gas methane leak classification using an infrared camera,” Energy, vol. 238, pp. 121516, 2022.

- [2] Quan Lu, Qin Li, Likun Hu, and Lifeng Huang, “An effective low-contrast SF 6 gas leakage detection method for infrared imaging,” IEEE Transactions on Instrumentation and Measurement, vol. 70, pp. 1–9, 2021.

- [3] Charig Yang, Hala Lamdouar, Erika Lu, Andrew Zisserman, and Weidi Xie, “Self-supervised video object segmentation by motion grouping,” in Proceedings of the IEEE/CVF International Conference on Computer Vision (ICCV), October 2021, pp. 7177–7188.

- [4] Zhengxia Zou, Keyan Chen, Zhenwei Shi, Yuhong Guo, and Jieping Ye, “Object detection in 20 years: A survey,” Proceedings of the IEEE, vol. 111, no. 3, pp. 257–276, 2023.

- [5] Deng-Ping Fan, Ge-Peng Ji, Ming-Ming Cheng, and Ling Shao, “Concealed object detection,” IEEE Transactions on Pattern Analysis and Machine Intelligence, vol. 44, no. 10, pp. 6024–6042, 2021.

- [6] Mingpu Wang, Gang Yao, Yang Yang, Yujia Sun, Meng Yan, and Rui Deng, “Deep learning-based object detection for visible dust and prevention measures on construction sites,” Developments in the Built Environment, vol. 16, pp. 100245, 2023.

- [7] Chien-Yao Wang, Alexey Bochkovskiy, and Hong-Yuan Mark Liao, “Yolov7: Trainable bag-of-freebies sets new state-of-the-art for real-time object detectors,” in Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), June 2023, pp. 7464–7475.

- [8] Pengxiang Yan, Guanbin Li, Yuan Xie, Zhen Li, Chuan Wang, Tianshui Chen, and Liang Lin, “Semi-supervised video salient object detection using pseudo-labels,” in Proceedings of the IEEE International Conference on Computer Vision, 2019, pp. 7284–7293.

- [9] Maksym Bekuzarov, Ariana Bermudez, Joon-Young Lee, and Hao Li, “Xmem++: Production-level video segmentation from few annotated frames,” in Proceedings of the IEEE/CVF International Conference on Computer Vision (ICCV), October 2023, pp. 635–644.

- [10] Junbao Zhou, Ziqi Pang, and Yu-Xiong Wang, “Rmem: Restricted memory banks improve video object segmentation,” in Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), June 2024, pp. 18602–18611.

- [11] Ho Kei Cheng, Seoung Wug Oh, Brian Price, Joon-Young Lee, and Alexander Schwing, “Putting the object back into video object segmentation,” in Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), June 2024, pp. 3151–3161.

- [12] Xuelian Cheng, Huan Xiong, Deng-Ping Fan, Yiran Zhong, Mehrtash Harandi, Tom Drummond, and Zongyuan Ge, “Implicit motion handling for video camouflaged object detection,” in Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), June 2022, pp. 13864–13873.

- [13] Wenhai Wang, Enze Xie, Xiang Li, Deng-Ping Fan, Kaitao Song, Ding Liang, Tong Lu, Ping Luo, and Ling Shao, “Pvt v2: Improved baselines with pyramid vision transformer,” Computational Visual Media, vol. 8, no. 3, pp. 415–424, 2022.

- [14] Dongxu Li, Chenchen Xu, Kaihao Zhang, Xin Yu, Yiran Zhong, Wenqi Ren, Hanna Suominen, and Hongdong Li, “Arvo: Learning all-range volumetric correspondence for video deblurring,” in Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2021, pp. 7721–7731.

- [15] Youwei Pang, Xiaoqi Zhao, Tian-Zhu Xiang, Lihe Zhang, and Huchuan Lu, “Zoomnext: A unified collaborative pyramid network for camouflaged object detection,” IEEE Transactions on Pattern Analysis and Machine Intelligence, 2024.

- [16] Fan D, Cheng M, Liu Y, Li T, and Borji A, “A new way to evaluate foreground maps,” in Proceedings of the IEEE conference on computer vision and pattern recognition (CVPR), 2017, vol. 245484557.

- [17] Ran Margolin, Lihi Zelnik-Manor, and Ayellet Tal, “How to evaluate foreground maps?,” in Proceedings of the IEEE conference on computer vision and pattern recognition, 2014, pp. 248–255.

- [18] Deng-Ping Fan, Cheng Gong, Yang Cao, Bo Ren, Ming-Ming Cheng, and Ali Borji, “Enhanced-alignment measure for binary foreground map evaluation,” in Proceedings of the Twenty-Seventh International Joint Conference on Artificial Intelligence, IJCAI-18. 7 2018, pp. 698–704, International Joint Conferences on Artificial Intelligence Organization.

Xet Storage Details

- Size:

- 41.1 kB

- Xet hash:

- a175629aefff7e25bd4a90e539f47c371e8cf81478614231e5d8efa4a0006088

Xet efficiently stores files, intelligently splitting them into unique chunks and accelerating uploads and downloads. More info.