Instructions for using continuum-ai/qwen3.5-4b-code-forged with libraries, notebooks, and local apps.

- Libraries

- MLX

How to use continuum-ai/qwen3.5-4b-code-forged with MLX:

```python
# Make sure mlx-lm is installed
# pip install --upgrade mlx-lm
# if on a CUDA device, also pip install mlx[cuda]

# Generate text with mlx-lm
from mlx_lm import load, generate

model, tokenizer = load("continuum-ai/qwen3.5-4b-code-forged")

prompt = "Once upon a time in"
text = generate(model, tokenizer, prompt=prompt, verbose=True)
```

- Notebooks

- Google Colab

- Kaggle

- Local Apps

- LM Studio

- MLX LM

How to use continuum-ai/qwen3.5-4b-code-forged with MLX LM:

Generate or start a chat session

```bash
# Install MLX LM
uv tool install mlx-lm

# Generate some text
mlx_lm.generate --model "continuum-ai/qwen3.5-4b-code-forged" --prompt "Once upon a time"
```

22.7% Lower Perplexity on Code

Qwen3.5-4B forged for code through Experiential Plasticity.

3.04 → 2.35 perplexity · 3 cycles
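The headline percentage is the relative drop in code perplexity from the base model (3.04) to the forged model (2.35). A quick check of that arithmetic follows. Note that it measures lower perplexity on the evaluation text, not a 22.7% gain on a task benchmark such as HumanEval, whose result is still pending.

```python
def relative_reduction(base: float, forged: float) -> float:
    """Fraction by which `forged` is lower than `base` (positive means improvement for perplexity)."""
    return (base - forged) / base


reduction = relative_reduction(3.04, 2.35)
print(f"{reduction:.1%}")  # 22.7%
```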

Claims on this card are self-attested and backed by a verifiable chain of custody.

Trust: self-attested · 2 benchmarks · 1 device tested

ForgeAlloy chain of custody · Download alloy · Merkle-chained

Qwen3.5-4B with cryptographic provenance via the ForgeAlloy chain of custody.
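The card describes its custody record as Merkle-chained, but the alloy file format is not documented here. As a generic illustration only, this sketch builds a linear hash chain (each link's SHA-256 commits to the previous link's digest plus one stage record) and shows that editing any earlier record breaks verification. The JSON serialization, the all-zero genesis digest, and the record fields are assumptions, not the ForgeAlloy schema; a real Merkle structure may use a tree rather than a simple chain.

```python
import hashlib
import json


def link_digest(prev_digest: str, record: dict) -> str:
    """Hash one stage record together with the previous link's digest (hypothetical layout)."""
    payload = json.dumps(record, sort_keys=True).encode("utf-8")
    return hashlib.sha256(prev_digest.encode("utf-8") + payload).hexdigest()


def build_chain(records: list[dict]) -> list[str]:
    """Return one digest per record; each depends on every record before it."""
    digests, prev = [], "0" * 64  # all-zero genesis digest (assumption)
    for record in records:
        prev = link_digest(prev, record)
        digests.append(prev)
    return digests


def verify_chain(records: list[dict], digests: list[str]) -> bool:
    """Recompute the chain and compare it link by link."""
    return len(records) == len(digests) and build_chain(records) == digests


# Hypothetical stage records mirroring the train -> quant -> eval pipeline on this card.
stages = [{"stage": "train"}, {"stage": "quant"}, {"stage": "eval"}]
chain = build_chain(stages)
print(verify_chain(stages, chain))  # True

stages[0]["stage"] = "tampered"     # alter the first record after the fact
print(verify_chain(stages, chain))  # False
```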

Benchmarks

| Benchmark | Result | Verification |
|---|---|---|
| Perplexity (code) | 2.35 (22.7% lower than base 3.04) | Self-reported |
| HumanEval | Pending | Self-reported |
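Code perplexity is the exponential of the average negative log-likelihood per token, so lower is better. Here is a minimal sketch of that definition. It assumes you already have the natural-log probability the model assigned to each target token of a held-out code sample; the values below are illustrative and do not come from this model's evaluation.

```python
import math


def perplexity(token_logprobs: list[float]) -> float:
    """exp of the mean negative log-likelihood (natural log) over target tokens."""
    if not token_logprobs:
        raise ValueError("need at least one token")
    mean_nll = -sum(token_logprobs) / len(token_logprobs)
    return math.exp(mean_nll)


# Illustrative per-token log-probabilities only.
logprobs = [math.log(0.5), math.log(0.25), math.log(0.5), math.log(0.25)]
print(perplexity(logprobs))
```

Read in reverse, the card's 3.04 → 2.35 corresponds to a mean loss of roughly 1.11 → 0.85 nats per token. With Hugging Face `transformers`, passing `labels=input_ids` to a causal LM returns this mean NLL as `loss`, so `math.exp(loss.item())` gives the perplexity of that text.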

What Changed (Base → Forged)

| Metric | Base | Forged | Delta / Notes |
|---|---|---|---|
| Perplexity (code) | 3.04 | 2.35 | -22.7% ✅ |
| Training | General | code, 1000 steps | LR 2e-4, 3 cycles |
| Pipeline | | train → quant → eval | 3 cycles |
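The card reports a train → quant → eval pipeline repeated for 3 cycles, but does not describe what each stage does internally (including the Experiential Plasticity step). This sketch shows only that control flow. `run_cycles` and the stage callables are hypothetical placeholders, not Continuum or ForgeAlloy APIs, and whether training resumes from the full-precision or the quantized weights is an assumption.

```python
from typing import Any, Callable


def run_cycles(
    model: Any,
    train_step: Callable[[Any], Any],
    quantize: Callable[[Any], Any],
    evaluate: Callable[[Any], float],
    cycles: int = 3,
) -> list[float]:
    """Hypothetical driver: run train -> quant -> eval `cycles` times, returning each cycle's metric."""
    metrics = []
    for _ in range(cycles):
        model = train_step(model)    # assumption: training continues from the full-precision model
        quantized = quantize(model)  # evaluate the quantized artifact, matching the stage order
        metrics.append(evaluate(quantized))
    return metrics


# Toy stand-ins: the "model" is just a number that each training step shrinks.
history = run_cycles(
    model=3.04,
    train_step=lambda m: m * 0.9,
    quantize=lambda m: m + 0.01,
    evaluate=lambda q: round(q, 3),
)
print(history)
```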

Runs On

| Device | Format | Size | Status |
|---|---|---|---|
| NVIDIA GeForce RTX 5090 | fp16 | not reported | Verified |
| MacBook Pro 32GB | fp16 | 8.0GB | Expected |
| MacBook Air 16GB | Q8_0 | ~4.0GB | Expected |
| MacBook Air 8GB | Q4_K_M | ~2.5GB | Expected |
| iPhone / Android | Q4_K_M | ~2.5GB | Expected |
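The sizes in this table follow roughly from parameter count × bits per weight ÷ 8. Here is a back-of-the-envelope sketch. It assumes about 4.0B parameters (consistent with the 8.0GB fp16 figure) and approximate effective bits per weight. Q8_0 stores one 16-bit scale per 32 int8 weights, about 8.5 bits. The Q4_K_M value is a rough figure, since K-quant mixes vary by tensor. KV cache and runtime overhead are not included.

```python
# Approximate effective bits per weight (assumptions, not measured from this repository).
BITS_PER_WEIGHT = {"fp16": 16.0, "Q8_0": 8.5, "Q4_K_M": 4.85}


def weights_gb(n_params: float, fmt: str) -> float:
    """Estimated weight storage in GB (1 GB = 1e9 bytes); excludes KV cache and runtime overhead."""
    return n_params * BITS_PER_WEIGHT[fmt] / 8 / 1e9


for fmt in BITS_PER_WEIGHT:
    print(f"{fmt}: ~{weights_gb(4.0e9, fmt):.1f} GB")
```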

Quick Start

```python
from transformers import AutoModelForCausalLM, AutoTokenizer

model_id = "continuum-ai/qwen3.5-4b-code-forged"

# torch_dtype="auto" uses the checkpoint's stored dtype; device_map="auto" (requires accelerate) places layers on available devices
model = AutoModelForCausalLM.from_pretrained(model_id, torch_dtype="auto", device_map="auto")
tokenizer = AutoTokenizer.from_pretrained(model_id)

# Plain-text code-completion prompt
inputs = tokenizer("def merge_sort(arr):", return_tensors="pt").to(model.device)
output = model.generate(**inputs, max_new_tokens=200)
print(tokenizer.decode(output[0], skip_special_tokens=True))
```

Methodology

Produced by code-focused training (1000 steps, LR 2e-4) followed by GGUF quantization. Full methodology, ablations, and per-stage rationale are in the methodology paper and the companion MODEL_METHODOLOGY.md in this repository. The pipeline ran as train → quant → eval over 3 cycles on an NVIDIA GeForce RTX 5090.
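Among the GGUF formats listed under Runs On, Q8_0 is the simplest. As an illustration, here is a simplified pure-Python reimplementation of Q8_0-style block quantization: each block of 32 weights shares one scale (max |w| / 127) and stores signed 8-bit integers. This is written for explanation and is not llama.cpp code. llama.cpp stores the scale as fp16, and K-quants such as Q4_K_M use a more elaborate super-block layout.

```python
import random

BLOCK_SIZE = 32  # Q8_0 groups weights into blocks of 32


def quantize_q8_0(weights: list[float]) -> list[tuple[float, list[int]]]:
    """Symmetric per-block int8 quantization: scale = max|w| / 127, q = round(w / scale)."""
    blocks = []
    for start in range(0, len(weights), BLOCK_SIZE):
        chunk = weights[start:start + BLOCK_SIZE]
        amax = max(abs(w) for w in chunk)
        scale = amax / 127 if amax > 0 else 0.0
        inv_scale = 1.0 / scale if scale > 0 else 0.0
        blocks.append((scale, [round(w * inv_scale) for w in chunk]))
    return blocks


def dequantize_q8_0(blocks: list[tuple[float, list[int]]]) -> list[float]:
    """Reconstruct approximate weights as scale * q for every element."""
    return [scale * q for scale, qs in blocks for q in qs]


random.seed(0)
weights = [random.gauss(0.0, 0.02) for _ in range(64)]  # toy weights, two blocks
blocks = quantize_q8_0(weights)
restored = dequantize_q8_0(blocks)
max_err = max(abs(w - r) for w, r in zip(weights, restored))
print(f"{len(blocks)} blocks, max abs error {max_err:.2e}")
```

Rounding error per weight is at most half a block's scale, which is why blocks with one large outlier lose more precision than blocks of similar magnitudes.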

Chain of Custody

Scan the QR or verify online. Download the alloy file to verify independently.

| What | Proof |
|---|---|
| Model weights | sha256:f85726debfcad516f0addbefb5f709872... |
| Code that ran | sha256:4646801cd247660e8... |
| Forged on | NVIDIA GeForce RTX 5090, 2026-03-31T12:13:43-0500 |
| Published | huggingface, 2026-03-31T12:35:25-0500 |
| Trust level | self-attested |
| Spec | ForgeAlloy (Rust/Python/TypeScript) |
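Here is a minimal sketch for checking a downloaded file against a listed digest. It assumes the card's "Model weights" value is a SHA-256 of a single weights file; the card does not say which file or files it covers. Because the card truncates the digest, only the shown prefix can be compared, which is weaker than a full match. The full digest is presumably recorded in the alloy file. The helper names are hypothetical.

```python
import hashlib
import tempfile
from pathlib import Path
from typing import Union


def sha256_file(path: Union[str, Path], chunk_size: int = 1 << 20) -> str:
    """Hex SHA-256 of a file, read in 1 MiB chunks so multi-GB weights never sit fully in memory."""
    digest = hashlib.sha256()
    with open(path, "rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def matches_listed(path: Union[str, Path], listed: str) -> bool:
    """Hypothetical helper: compare a file to a listed digest such as 'sha256:ab12...'.

    A truncated digest (ending in '...') only checks its prefix.
    """
    expected = listed.removeprefix("sha256:").strip().rstrip(".…").lower()
    if not expected:
        raise ValueError("no digest to compare against")
    return sha256_file(path).startswith(expected)


# Demo on a throwaway file; for a real check, point this at the downloaded weights.
with tempfile.NamedTemporaryFile(delete=False) as tmp:
    tmp.write(b"abc")
demo_path = tmp.name
print(sha256_file(demo_path))
```

For example, `matches_listed("model.safetensors", "sha256:f85726debfcad516f0addbefb5f709872...")`, where the filename is hypothetical.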

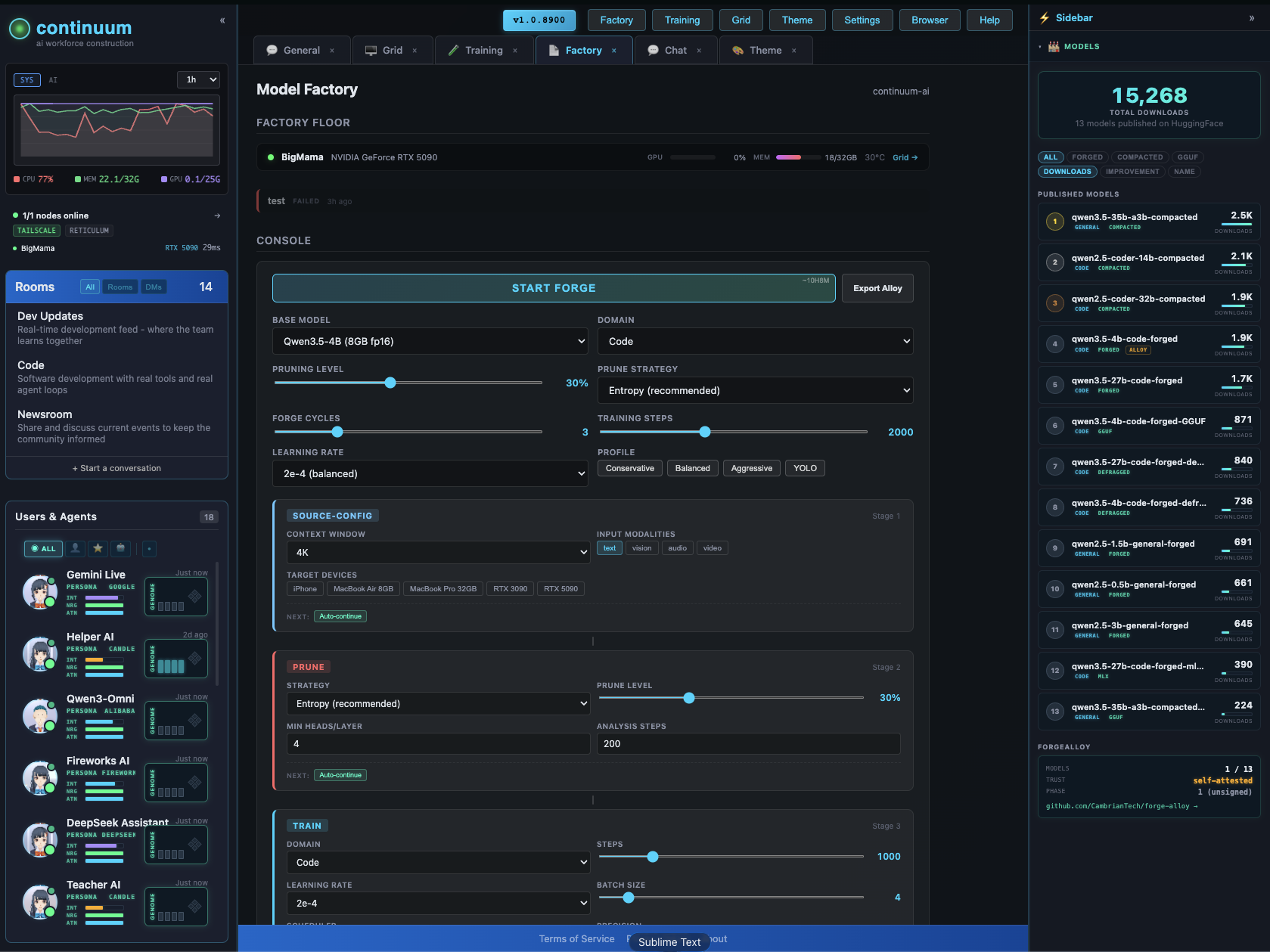

Make Your Own

Forged with Continuum — a distributed AI world that runs on your hardware.

The Factory configurator lets you design and forge custom models visually: context extension, pruning, LoRA, quantization, and vision/audio modalities. Pick your target devices and the system determines which configurations fit.
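The configurator's selection logic is not described on this card. As a toy illustration of matching formats to target devices, this sketch picks the highest-precision format from the Runs On table whose weights fit within a fixed fraction of device memory. The 40% weight budget (leaving room for the OS, KV cache, and other apps) and the `pick_format` helper are assumptions, not Continuum's logic.

```python
from typing import Optional

# Format sizes from the Runs On table, ordered from highest to lowest precision.
FORMATS = [("fp16", 8.0), ("Q8_0", 4.0), ("Q4_K_M", 2.5)]


def pick_format(device_memory_gb: float, weight_budget: float = 0.4) -> Optional[str]:
    """Hypothetical rule: highest-precision format whose weights fit in weight_budget * memory."""
    budget = device_memory_gb * weight_budget
    for name, size_gb in FORMATS:
        if size_gb <= budget:
            return name
    return None  # nothing fits on this device


for device, memory in [("MacBook Pro 32GB", 32), ("MacBook Air 16GB", 16), ("MacBook Air 8GB", 8)]:
    print(device, "->", pick_format(memory))
```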

GitHub · All Models · Forge-Alloy

License

apache-2.0
