id int64 1.9B 3.25B | title stringlengths 2 244 | state stringclasses 2

values | body stringlengths 3 58.6k ⌀ | created_at timestamp[s]date 2023-09-15 14:23:33 2025-07-22 09:33:54 | updated_at timestamp[s]date 2023-09-18 16:20:09 2025-07-22 10:44:03 | closed_at timestamp[s]date 2023-09-18 16:20:09 2025-07-19 22:45:08 ⌀ | html_url stringlengths 49 51 | pull_request dict | number int64 6.24k 7.7k | is_pull_request bool 2

classes | comments listlengths 0 24 |

|---|---|---|---|---|---|---|---|---|---|---|---|

2,843,188,499 | OSError: [Errno 22] Invalid argument forbidden character | closed | ### Describe the bug

I'm on Windows and i'm trying to load a datasets but i'm having title error because files in the repository are named with charactere like < >which can't be in a name file. Could it be possible to load this datasets but removing those charactere ?

### Steps to reproduce the bug

load_dataset("CAT... | 2025-02-10T17:46:31 | 2025-02-11T13:42:32 | 2025-02-11T13:42:30 | https://github.com/huggingface/datasets/issues/7388 | null | 7,388 | false | [

"You can probably copy the dataset in your HF account and rename the files (without having to download them to your disk). Or alternatively feel free to open a Pull Request to this dataset with the renamed file",

"Thank you, that will help me work around this problem"

] |

2,841,228,048 | Dynamic adjusting dataloader sampling weight | open | Hi,

Thanks for your wonderful work! I'm wondering is there a way to dynamically adjust the sampling weight of each data in the dataset during training? Looking forward to your reply, thanks again. | 2025-02-10T03:18:47 | 2025-03-07T14:06:54 | null | https://github.com/huggingface/datasets/issues/7387 | null | 7,387 | false | [

"You mean based on a condition that has to be checked on-the-fly during training ? Otherwise if you know in advance after how many samples you need to change the sampling you can simply concatenate the two mixes",

"Yes, like during training, if one data sample's prediction is consistently wrong, its sampling weig... |

2,840,032,524 | Add bookfolder Dataset Builder for Digital Book Formats | closed | ### Feature request

This feature proposes adding a new dataset builder called bookfolder to the datasets library. This builder would allow users to easily load datasets consisting of various digital book formats, including: AZW, AZW3, CB7, CBR, CBT, CBZ, EPUB, MOBI, and PDF.

### Motivation

Currently, loading dataset... | 2025-02-08T14:27:55 | 2025-02-08T14:30:10 | 2025-02-08T14:30:09 | https://github.com/huggingface/datasets/issues/7386 | null | 7,386 | false | [

"On second thought, probably not a good idea."

] |

2,830,664,522 | Make IterableDataset (optionally) resumable | open | ### What does this PR do?

This PR introduces a new `stateful` option to the `dataset.shuffle` method, which defaults to `False`.

When enabled, this option allows for resumable shuffling of `IterableDataset` instances, albeit with some additional memory overhead.

Key points:

* All tests have passed

* Docstrings ... | 2025-02-04T15:55:33 | 2025-03-03T17:31:40 | null | https://github.com/huggingface/datasets/pull/7385 | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/7385",

"html_url": "https://github.com/huggingface/datasets/pull/7385",

"diff_url": "https://github.com/huggingface/datasets/pull/7385.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/7385.patch",

"merged_at": null

} | 7,385 | true | [

"@lhoestq Hi again~ Just circling back on this\r\nWondering if there’s anything I can do to help move this forward. 🤗 \r\nThanks!",

"The docs for this PR live [here](https://moon-ci-docs.huggingface.co/docs/datasets/pr_7385). All of your documentation changes will be reflected on that endpoint. The docs are avai... |

2,828,208,828 | Support async functions in map() | closed | e.g. to download images or call an inference API like HF or vLLM

```python

import asyncio

import random

from datasets import Dataset

async def f(x):

await asyncio.sleep(random.random())

ds = Dataset.from_dict({"data": range(100)})

ds.map(f)

# Map: 100%|█████████████████████████████| 100/100 [00:0... | 2025-02-03T18:18:40 | 2025-02-13T14:01:13 | 2025-02-13T14:00:06 | https://github.com/huggingface/datasets/pull/7384 | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/7384",

"html_url": "https://github.com/huggingface/datasets/pull/7384",

"diff_url": "https://github.com/huggingface/datasets/pull/7384.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/7384.patch",

"merged_at": "2025-02-13T14:00... | 7,384 | true | [

"The docs for this PR live [here](https://moon-ci-docs.huggingface.co/docs/datasets/pr_7384). All of your documentation changes will be reflected on that endpoint. The docs are available until 30 days after the last update.",

"example of what you can do with it:\r\n\r\n```python\r\nimport aiohttp\r\nfrom huggingf... |

2,823,480,924 | Add Pandas, PyArrow and Polars docs | closed | (also added the missing numpy docs and fixed a small bug in pyarrow formatting) | 2025-01-31T13:22:59 | 2025-01-31T16:30:59 | 2025-01-31T16:30:57 | https://github.com/huggingface/datasets/pull/7382 | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/7382",

"html_url": "https://github.com/huggingface/datasets/pull/7382",

"diff_url": "https://github.com/huggingface/datasets/pull/7382.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/7382.patch",

"merged_at": "2025-01-31T16:30... | 7,382 | true | [

"The docs for this PR live [here](https://moon-ci-docs.huggingface.co/docs/datasets/pr_7382). All of your documentation changes will be reflected on that endpoint. The docs are available until 30 days after the last update."

] |

2,815,649,092 | Iterating over values of a column in the IterableDataset | closed | ### Feature request

I would like to be able to iterate (and re-iterate if needed) over a column of an `IterableDataset` instance. The following example shows the supposed API:

```python

def gen():

yield {"text": "Good", "label": 0}

yield {"text": "Bad", "label": 1}

ds = IterableDataset.from_generator(gen)

tex... | 2025-01-28T13:17:36 | 2025-05-22T18:00:04 | 2025-05-22T18:00:04 | https://github.com/huggingface/datasets/issues/7381 | null | 7,381 | false | [

"I'd be in favor of that ! I saw many people implementing their own iterables that wrap a dataset just to iterate on a single column, that would make things more practical.\n\nKinda related: https://github.com/huggingface/datasets/issues/5847",

"(For anyone's information, I'm going on vacation for the next 3 week... |

2,811,566,116 | fix: dill default for version bigger 0.3.8 | closed | Fixes def log for dill version >= 0.3.9

https://pypi.org/project/dill/

This project uses dill with the release of version 0.3.9 the datasets lib. | 2025-01-26T13:37:16 | 2025-03-13T20:40:19 | 2025-03-13T20:40:19 | https://github.com/huggingface/datasets/pull/7380 | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/7380",

"html_url": "https://github.com/huggingface/datasets/pull/7380",

"diff_url": "https://github.com/huggingface/datasets/pull/7380.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/7380.patch",

"merged_at": null

} | 7,380 | true | [

"`datasets` doesn't support `dill` 0.3.9 yet afaik since `dill` made some changes related to the determinism of dumps\r\n\r\nIt would be cool to investigate (maybe run the `datasets` test) with recent `dill` to see excactly what breaks and if we can make `dill` 0.3.9 work with `datasets`"

] |

2,802,957,388 | Allow pushing config version to hub | open | ### Feature request

Currently, when datasets are created, they can be versioned by passing the `version` argument to `load_dataset(...)`. For example creating `outcomes.csv` on the command line

```

echo "id,value\n1,0\n2,0\n3,1\n4,1\n" > outcomes.csv

```

and creating it

```

import datasets

dataset = datasets.load_dat... | 2025-01-21T22:35:07 | 2025-01-30T13:56:56 | null | https://github.com/huggingface/datasets/issues/7378 | null | 7,378 | false | [

"Hi ! This sounds reasonable to me, feel free to open a PR :)"

] |

2,802,723,285 | Support for sparse arrays with the Arrow Sparse Tensor format? | open | ### Feature request

AI in biology is becoming a big thing. One thing that would be a huge benefit to the field that Huggingface Datasets doesn't currently have is native support for **sparse arrays**.

Arrow has support for sparse tensors.

https://arrow.apache.org/docs/format/Other.html#sparse-tensor

It would be ... | 2025-01-21T20:14:35 | 2025-01-30T14:06:45 | null | https://github.com/huggingface/datasets/issues/7377 | null | 7,377 | false | [

"Hi ! Unfortunately the Sparse Tensor structure in Arrow is not part of the Arrow format (yes it's confusing...), so it's not possible to use it in `datasets`. It's a separate structure that doesn't correspond to any type or extension type in Arrow.\n\nThe Arrow community recently added an extension type for fixed ... |

2,802,621,104 | [docs] uv install | closed | Proposes adding uv to installation docs (see Slack thread [here](https://huggingface.slack.com/archives/C01N44FJDHT/p1737377177709279) for more context) if you're interested! | 2025-01-21T19:15:48 | 2025-03-14T20:16:35 | 2025-03-14T20:16:35 | https://github.com/huggingface/datasets/pull/7376 | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/7376",

"html_url": "https://github.com/huggingface/datasets/pull/7376",

"diff_url": "https://github.com/huggingface/datasets/pull/7376.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/7376.patch",

"merged_at": null

} | 7,376 | true | [] |

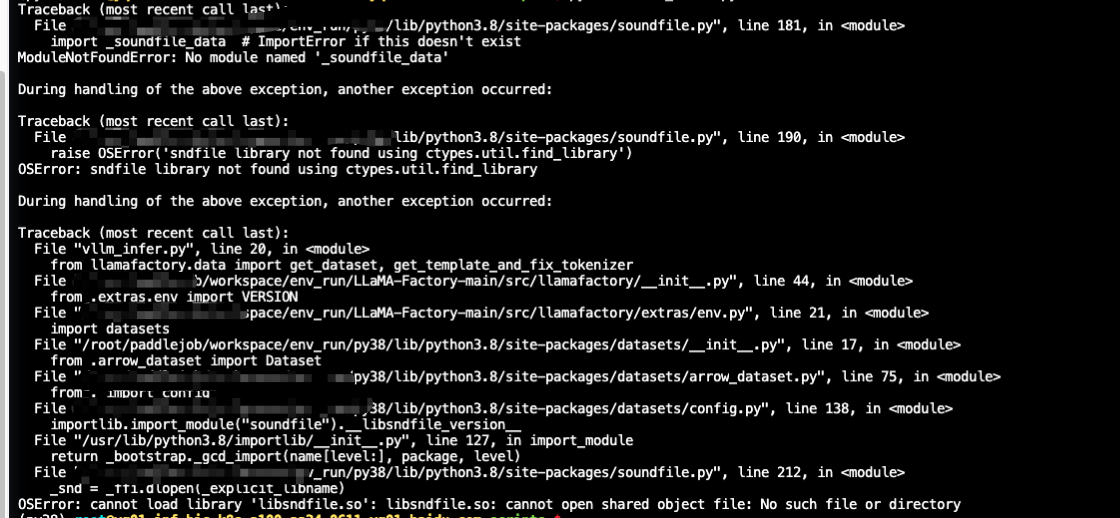

2,800,609,218 | vllm批量推理报错 | open | ### Describe the bug

### Steps to reproduce the bug

### Expected behavior

| 2025-01-16T18:17:24 | 2025-01-16T18:26:40 | 2025-01-16T18:26:38 | https://github.com/huggingface/datasets/pull/7374 | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/7374",

"html_url": "https://github.com/huggingface/datasets/pull/7374",

"diff_url": "https://github.com/huggingface/datasets/pull/7374.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/7374.patch",

"merged_at": "2025-01-16T18:26... | 7,374 | true | [] |

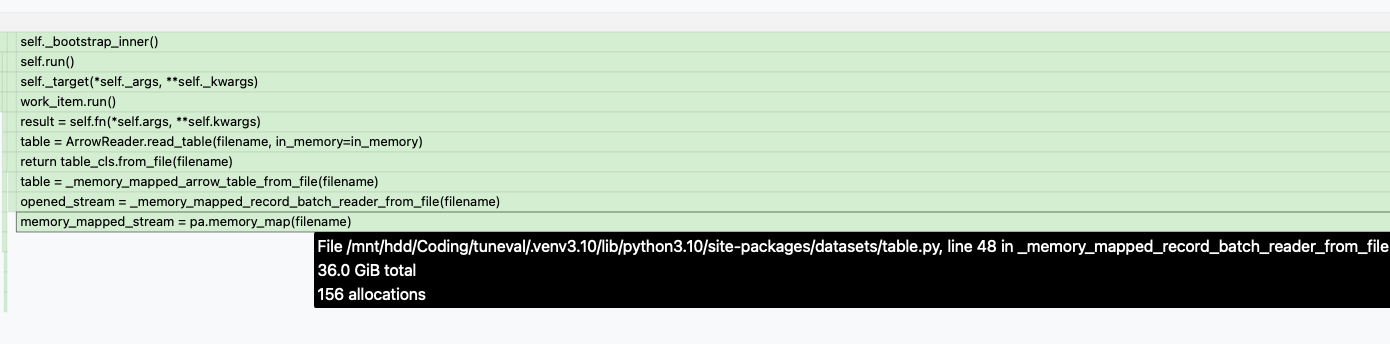

2,793,237,139 | Excessive RAM Usage After Dataset Concatenation concatenate_datasets | open | ### Describe the bug

When loading a dataset from disk, concatenating it, and starting the training process, the RAM usage progressively increases until the kernel terminates the process due to excessive memory consumption.

https://github.com/huggingface/datasets/issues/2276

### Steps to reproduce the bug

```python

... | 2025-01-16T16:33:10 | 2025-03-27T17:40:59 | null | https://github.com/huggingface/datasets/issues/7373 | null | 7,373 | false | [

"\n\n\n\nAdding a img from memray\nhttps://gist.github.com/sam-hey/00c958f13fb0f7b54d17197fe353002f",

"I'm having the same issue where c... |

2,791,760,968 | Inconsistent Behavior Between `load_dataset` and `load_from_disk` When Loading Sharded Datasets | open | ### Description

I encountered an inconsistency in behavior between `load_dataset` and `load_from_disk` when loading sharded datasets. Here is a minimal example to reproduce the issue:

#### Code 1: Using `load_dataset`

```python

from datasets import Dataset, load_dataset

# First save with max_shard_size=10

Dataset.fr... | 2025-01-16T05:47:20 | 2025-01-16T05:47:20 | null | https://github.com/huggingface/datasets/issues/7372 | null | 7,372 | false | [] |

2,790,549,889 | 500 Server error with pushing a dataset | open | ### Describe the bug

Suddenly, I started getting this error message saying it was an internal error.

`Error creating/pushing dataset: 500 Server Error: Internal Server Error for url: https://huggingface.co/api/datasets/ll4ma-lab/grasp-dataset/commit/main (Request ID: Root=1-6787f0b7-66d5bd45413e481c4c2fb22d;670d04ff-... | 2025-01-15T18:23:02 | 2025-01-15T20:06:05 | null | https://github.com/huggingface/datasets/issues/7371 | null | 7,371 | false | [

"EDIT: seems to be all good now. I'll add a comment if the error happens again within the next 48 hours. If it doesn't, I'll just close the topic."

] |

2,787,972,786 | Support faster processing using pandas or polars functions in `IterableDataset.map()` | closed | Following the polars integration :)

Allow super fast processing using pandas or polars functions in `IterableDataset.map()` by adding support to pandas and polars formatting in `IterableDataset`

```python

import polars as pl

from datasets import Dataset

ds = Dataset.from_dict({"i": range(10)}).to_iterable_da... | 2025-01-14T18:14:13 | 2025-01-31T11:08:15 | 2025-01-30T13:30:57 | https://github.com/huggingface/datasets/pull/7370 | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/7370",

"html_url": "https://github.com/huggingface/datasets/pull/7370",

"diff_url": "https://github.com/huggingface/datasets/pull/7370.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/7370.patch",

"merged_at": "2025-01-30T13:30... | 7,370 | true | [

"The docs for this PR live [here](https://moon-ci-docs.huggingface.co/docs/datasets/pr_7370). All of your documentation changes will be reflected on that endpoint. The docs are available until 30 days after the last update.",

"merging this and will make some docs and communications around using polars for optimiz... |

2,787,193,238 | Importing dataset gives unhelpful error message when filenames in metadata.csv are not found in the directory | open | ### Describe the bug

While importing an audiofolder dataset, where the names of the audiofiles don't correspond to the filenames in the metadata.csv, we get an unclear error message that is not helpful for the debugging, i.e.

```

ValueError: Instruction "train" corresponds to no data!

```

### Steps to reproduce the ... | 2025-01-14T13:53:21 | 2025-01-14T15:05:51 | null | https://github.com/huggingface/datasets/issues/7369 | null | 7,369 | false | [

"I'd prefer even more verbose errors; like `\"file123.mp3\" is referenced in metadata.csv, but not found in the data directory '/path/to/audiofolder' ! (and 100+ more missing files)` Or something along those lines."

] |

2,784,272,477 | Add with_split to DatasetDict.map | closed | #7356 | 2025-01-13T15:09:56 | 2025-03-08T05:45:02 | 2025-03-07T14:09:52 | https://github.com/huggingface/datasets/pull/7368 | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/7368",

"html_url": "https://github.com/huggingface/datasets/pull/7368",

"diff_url": "https://github.com/huggingface/datasets/pull/7368.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/7368.patch",

"merged_at": "2025-03-07T14:09... | 7,368 | true | [

"Can you check this out, @lhoestq?",

"cc @lhoestq @albertvillanova ",

"@lhoestq\r\n",

"@lhoestq\r\n",

"@lhoestq",

"The docs for this PR live [here](https://moon-ci-docs.huggingface.co/docs/datasets/pr_7368). All of your documentation changes will be reflected on that endpoint. The docs are available until... |

2,781,522,894 | Dataset.from_dict() can't handle large dict | open | ### Describe the bug

I have 26,000,000 3-tuples. When I use Dataset.from_dict() to load, neither. py nor Jupiter notebook can run successfully. This is my code:

```

# len(example_data) is 26,000,000, 'diff' is a text

diff1_list = [example_data[i].texts[0] for i in range(len(example_data))]

diff2_list =... | 2025-01-11T02:05:21 | 2025-01-11T02:05:21 | null | https://github.com/huggingface/datasets/issues/7366 | null | 7,366 | false | [] |

2,780,216,199 | A parameter is specified but not used in datasets.arrow_dataset.Dataset.from_pandas() | open | ### Describe the bug

I am interested in creating train, test and eval splits from a pandas Dataframe, therefore I was looking at the possibilities I can follow. I noticed the split parameter and was hopeful to use it in order to generate the 3 at once, however, while trying to understand the code, i noticed that it ha... | 2025-01-10T13:39:33 | 2025-01-10T13:39:33 | null | https://github.com/huggingface/datasets/issues/7365 | null | 7,365 | false | [] |

2,776,929,268 | API endpoints for gated dataset access requests | closed | ### Feature request

I would like a programatic way of requesting access to gated datasets. The current solution to gain access forces me to visit a website and physically click an "agreement" button (as per the [documentation](https://huggingface.co/docs/hub/en/datasets-gated#access-gated-datasets-as-a-user)).

An i... | 2025-01-09T06:21:20 | 2025-01-09T11:17:40 | 2025-01-09T11:17:20 | https://github.com/huggingface/datasets/issues/7364 | null | 7,364 | false | [

"Looks like a [similar feature request](https://github.com/huggingface/huggingface_hub/issues/1198) was made to the HF Hub team. Is handling this at the Hub level more appropriate?\r\n\r\n(As an aside, I've gotten the [HTTP-based solution](https://github.com/huggingface/huggingface_hub/issues/1198#issuecomment-1905... |

2,774,090,012 | ImportError: To support decoding images, please install 'Pillow'. | open | ### Describe the bug

Following this tutorial locally using a macboko and VSCode: https://huggingface.co/docs/diffusers/en/tutorials/basic_training

This line of code: for i, image in enumerate(dataset[:4]["image"]):

throws: ImportError: To support decoding images, please install 'Pillow'.

Pillow is installed.

###... | 2025-01-08T02:22:57 | 2025-05-28T14:56:53 | null | https://github.com/huggingface/datasets/issues/7363 | null | 7,363 | false | [

"what's your `pip show Pillow` output",

"same issue.. my pip show Pillow output as below:\n\n```\nName: pillow\nVersion: 11.1.0\nSummary: Python Imaging Library (Fork)\nHome-page: https://python-pillow.github.io/\nAuthor: \nAuthor-email: \"Jeffrey A. Clark\" <aclark@aclark.net>\nLicense: MIT-CMU\nLocation: [/opt/... |

2,773,731,829 | HuggingFace CLI dataset download raises error | closed | ### Describe the bug

Trying to download Hugging Face datasets using Hugging Face CLI raises error. This error only started after December 27th, 2024. For example:

```

huggingface-cli download --repo-type dataset gboleda/wikicorpus

Traceback (most recent call last):

File "/home/ubuntu/test_venv/bin/huggingface... | 2025-01-07T21:03:30 | 2025-01-08T15:00:37 | 2025-01-08T14:35:52 | https://github.com/huggingface/datasets/issues/7362 | null | 7,362 | false | [

"I got the same error and was able to resolve it by upgrading from 2.15.0 to 3.2.0.",

"> I got the same error and was able to resolve it by upgrading from 2.15.0 to 3.2.0.\r\n\r\nWhat is needed is upgrading `huggingface-hub==0.27.1`. `datasets` does not appear to have anything to do with the error. The upgrade is... |

2,771,859,244 | Fix lock permission | open | All files except lock file have proper permission obeying `ACL` property if it is set.

If the cache directory has `ACL` property, it should be respected instead of just using `umask` for permission.

To fix it, just create a lock file and pass the created `mode`.

By creating a lock file with `touch()` before `Fil... | 2025-01-07T04:15:53 | 2025-01-07T04:49:46 | null | https://github.com/huggingface/datasets/pull/7361 | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/7361",

"html_url": "https://github.com/huggingface/datasets/pull/7361",

"diff_url": "https://github.com/huggingface/datasets/pull/7361.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/7361.patch",

"merged_at": null

} | 7,361 | true | [] |

2,771,751,406 | error when loading dataset in Hugging Face: NoneType error is not callable | open | ### Describe the bug

I met an error when running a notebook provide by Hugging Face, and met the error.

```

---------------------------------------------------------------------------

TypeError Traceback (most recent call last)

Cell In[2], line 5

3 # Load the enhancers dat... | 2025-01-07T02:11:36 | 2025-02-24T13:32:52 | null | https://github.com/huggingface/datasets/issues/7360 | null | 7,360 | false | [

"Hi ! I couldn't reproduce on my side, can you try deleting your cache at `~/.cache/huggingface/modules/datasets_modules/datasets/InstaDeepAI--nucleotide_transformer_downstream_tasks_revised` and try again ? For some reason `datasets` wasn't able to find the DatasetBuilder class in the python script of this dataset... |

2,771,137,842 | There are multiple 'mteb/arguana' configurations in the cache: default, corpus, queries with HF_HUB_OFFLINE=1 | open | ### Describe the bug

Hey folks,

I am trying to run this code -

```python

from datasets import load_dataset, get_dataset_config_names

ds = load_dataset("mteb/arguana")

```

with HF_HUB_OFFLINE=1

But I get the following error -

```python

Using the latest cached version of the dataset since mteb/arguana... | 2025-01-06T17:42:49 | 2025-01-06T17:43:31 | null | https://github.com/huggingface/datasets/issues/7359 | null | 7,359 | false | [

"Related to https://github.com/embeddings-benchmark/mteb/issues/1714"

] |

2,770,927,769 | Fix remove_columns in the formatted case | open | `remove_columns` had no effect when running a function in `.map()` on dataset that is formatted

This aligns the logic of `map()` with the non formatted case and also with with https://github.com/huggingface/datasets/pull/7353 | 2025-01-06T15:44:23 | 2025-01-06T15:46:46 | null | https://github.com/huggingface/datasets/pull/7358 | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/7358",

"html_url": "https://github.com/huggingface/datasets/pull/7358",

"diff_url": "https://github.com/huggingface/datasets/pull/7358.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/7358.patch",

"merged_at": null

} | 7,358 | true | [

"The docs for this PR live [here](https://moon-ci-docs.huggingface.co/docs/datasets/pr_7358). All of your documentation changes will be reflected on that endpoint. The docs are available until 30 days after the last update."

] |

2,770,456,127 | Python process aborded with GIL issue when using image dataset | open | ### Describe the bug

The issue is visible only with the latest `datasets==3.2.0`.

When using image dataset the Python process gets aborted right before the exit with the following error:

```

Fatal Python error: PyGILState_Release: thread state 0x7fa1f409ade0 must be current when releasing

Python runtime state: f... | 2025-01-06T11:29:30 | 2025-03-08T15:59:36 | null | https://github.com/huggingface/datasets/issues/7357 | null | 7,357 | false | [

"The issue seems to come from `pyarrow`, I opened an issue on their side at https://github.com/apache/arrow/issues/45214"

] |

2,770,095,103 | How about adding a feature to pass the key when performing map on DatasetDict? | closed | ### Feature request

Add a feature to pass the key of the DatasetDict when performing map

### Motivation

I often preprocess using map on DatasetDict.

Sometimes, I need to preprocess train and valid data differently depending on the task.

So, I thought it would be nice to pass the key (like train, valid) when perf... | 2025-01-06T08:13:52 | 2025-03-24T10:57:47 | 2025-03-24T10:57:47 | https://github.com/huggingface/datasets/issues/7356 | null | 7,356 | false | [

"@lhoestq \r\nIf it's okay with you, can I work on this?",

"Hi ! Can you give an example of what it would look like to use this new feature ?\r\n\r\nNote that currently you can already do\r\n\r\n```python\r\nds[\"train\"] = ds[\"train\"].map(process_train)\r\nds[\"test\"] = ds[\"test\"].map(process_test)\r\n```",... |

2,768,958,211 | Not available datasets[audio] on python 3.13 | open | ### Describe the bug

This is the error I got, it seems numba package does not support python 3.13

PS C:\Users\sergi\Documents> pip install datasets[audio]

Defaulting to user installation because normal site-packages is not writeable

Collecting datasets[audio]

Using cached datasets-3.2.0-py3-none-any.whl.metada... | 2025-01-04T18:37:08 | 2025-06-28T00:26:19 | null | https://github.com/huggingface/datasets/issues/7355 | null | 7,355 | false | [

"It looks like an issue with `numba` which can't be installed on 3.13 ? `numba` is a dependency of `librosa`, used to decode audio files",

"There seems that `uv` cannot resolve \n\n```bhas\nuv add -n datasets[audio] huggingface-hub[hf-transfer] transformers\n```\n\nThe problem is again `librosa` which depends on ... |

2,768,955,917 | A module that was compiled using NumPy 1.x cannot be run in NumPy 2.0.2 as it may crash. To support both 1.x and 2.x versions of NumPy, modules must be compiled with NumPy 2.0. Some module may need to rebuild instead e.g. with 'pybind11>=2.12'. | closed | ### Describe the bug

Following this tutorial: https://huggingface.co/docs/diffusers/en/tutorials/basic_training and running it locally using VSCode on my MacBook. The first line in the tutorial fails: from datasets import load_dataset

dataset = load_dataset('huggan/smithsonian_butterflies_subset', split="train"). w... | 2025-01-04T18:30:17 | 2025-01-08T02:20:58 | 2025-01-08T02:20:58 | https://github.com/huggingface/datasets/issues/7354 | null | 7,354 | false | [

"recreated .venv and run this: pip install diffusers[training]==0.11.1"

] |

2,768,484,726 | changes to MappedExamplesIterable to resolve #7345 | closed | modified `MappedExamplesIterable` and `test_iterable_dataset.py::test_mapped_examples_iterable_with_indices`

fix #7345

@lhoestq | 2025-01-04T06:01:15 | 2025-01-07T11:56:41 | 2025-01-07T11:56:41 | https://github.com/huggingface/datasets/pull/7353 | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/7353",

"html_url": "https://github.com/huggingface/datasets/pull/7353",

"diff_url": "https://github.com/huggingface/datasets/pull/7353.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/7353.patch",

"merged_at": "2025-01-07T11:56... | 7,353 | true | [

"I noticed that `Dataset.map` has a more complex output depending on `remove_columns`. In particular [this](https://github.com/huggingface/datasets/blob/6457be66e2ef88411281eddc4e7698866a3977f1/src/datasets/arrow_dataset.py#L3371) line removes columns from output if the input is being modified in place (i.e. `input... |

2,767,763,850 | fsspec 2024.12.0 | closed | null | 2025-01-03T15:32:25 | 2025-01-03T15:34:54 | 2025-01-03T15:34:11 | https://github.com/huggingface/datasets/pull/7352 | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/7352",

"html_url": "https://github.com/huggingface/datasets/pull/7352",

"diff_url": "https://github.com/huggingface/datasets/pull/7352.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/7352.patch",

"merged_at": "2025-01-03T15:34... | 7,352 | true | [

"The docs for this PR live [here](https://moon-ci-docs.huggingface.co/docs/datasets/pr_7352). All of your documentation changes will be reflected on that endpoint. The docs are available until 30 days after the last update."

] |

2,767,731,707 | Bump hfh to 0.24 to fix ci | closed | null | 2025-01-03T15:09:40 | 2025-01-03T15:12:17 | 2025-01-03T15:10:27 | https://github.com/huggingface/datasets/pull/7350 | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/7350",

"html_url": "https://github.com/huggingface/datasets/pull/7350",

"diff_url": "https://github.com/huggingface/datasets/pull/7350.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/7350.patch",

"merged_at": "2025-01-03T15:10... | 7,350 | true | [

"The docs for this PR live [here](https://moon-ci-docs.huggingface.co/docs/datasets/pr_7350). All of your documentation changes will be reflected on that endpoint. The docs are available until 30 days after the last update."

] |

2,767,670,454 | Webdataset special columns in last position | closed | Place columns "__key__" and "__url__" in last position in the Dataset Viewer since they are not the main content

before:

<img width="1012" alt="image" src="https://github.com/user-attachments/assets/b556c1fe-2674-4ba0-9643-c074aa9716fd" />

| 2025-01-03T14:32:15 | 2025-01-03T14:34:39 | 2025-01-03T14:32:30 | https://github.com/huggingface/datasets/pull/7349 | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/7349",

"html_url": "https://github.com/huggingface/datasets/pull/7349",

"diff_url": "https://github.com/huggingface/datasets/pull/7349.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/7349.patch",

"merged_at": "2025-01-03T14:32... | 7,349 | true | [

"The docs for this PR live [here](https://moon-ci-docs.huggingface.co/docs/datasets/pr_7349). All of your documentation changes will be reflected on that endpoint. The docs are available until 30 days after the last update."

] |

2,766,128,230 | Catch OSError for arrow | closed | fixes https://github.com/huggingface/datasets/issues/7346

(also updated `ruff` and appleid style changes) | 2025-01-02T14:30:00 | 2025-01-09T14:25:06 | 2025-01-09T14:25:04 | https://github.com/huggingface/datasets/pull/7348 | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/7348",

"html_url": "https://github.com/huggingface/datasets/pull/7348",

"diff_url": "https://github.com/huggingface/datasets/pull/7348.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/7348.patch",

"merged_at": "2025-01-09T14:25... | 7,348 | true | [

"The docs for this PR live [here](https://moon-ci-docs.huggingface.co/docs/datasets/pr_7348). All of your documentation changes will be reflected on that endpoint. The docs are available until 30 days after the last update."

] |

2,760,282,339 | Converting Arrow to WebDataset TAR Format for Offline Use | closed | ### Feature request

Hi,

I've downloaded an Arrow-formatted dataset offline using the hugggingface's datasets library by:

```

import json

from datasets import load_dataset

dataset = load_dataset("pixparse/cc3m-wds")

dataset.save_to_disk("./cc3m_1")

```

now I need to convert it to WebDataset's TAR form... | 2024-12-27T01:40:44 | 2024-12-31T17:38:00 | 2024-12-28T15:38:03 | https://github.com/huggingface/datasets/issues/7347 | null | 7,347 | false | [

"Hi,\r\n\r\nI've downloaded an Arrow-formatted dataset offline using the hugggingface's datasets library by:\r\n\r\nimport json\r\nfrom datasets import load_dataset\r\n\r\ndataset = load_dataset(\"pixparse/cc3m-wds\")\r\ndataset.save_to_disk(\"./cc3m_1\")\r\n\r\n\r\nnow I need to convert it to WebDataset's TAR form... |

2,758,752,118 | OSError: Invalid flatbuffers message. | closed | ### Describe the bug

When loading a large 2D data (1000 × 1152) with a large number of (2,000 data in this case) in `load_dataset`, the error message `OSError: Invalid flatbuffers message` is reported.

When only 300 pieces of data of this size (1000 × 1152) are stored, they can be loaded correctly.

When 2,00... | 2024-12-25T11:38:52 | 2025-01-09T14:25:29 | 2025-01-09T14:25:05 | https://github.com/huggingface/datasets/issues/7346 | null | 7,346 | false | [

"Thanks for reporting, it looks like an issue with `pyarrow.ipc.open_stream`\r\n\r\nCan you try installing `datasets` from this pull request and see if it helps ? https://github.com/huggingface/datasets/pull/7348",

"> Thanks for reporting, it looks like an issue with `pyarrow.ipc.open_stream`\r\n> \r\n> Can you t... |

2,758,585,709 | Different behaviour of IterableDataset.map vs Dataset.map with remove_columns | closed | ### Describe the bug

The following code

```python

import datasets as hf

ds1 = hf.Dataset.from_list([{'i': i} for i in [0,1]])

#ds1 = ds1.to_iterable_dataset()

ds2 = ds1.map(

lambda i: {'i': i+1},

input_columns = ['i'],

remove_columns = ['i']

)

list(ds2)

```

produces

```python

[{'i': ... | 2024-12-25T07:36:48 | 2025-01-07T11:56:42 | 2025-01-07T11:56:42 | https://github.com/huggingface/datasets/issues/7345 | null | 7,345 | false | [

"Good catch ! Do you think you can open a PR to fix this issue ?"

] |

2,754,735,951 | HfHubHTTPError: 429 Client Error: Too Many Requests for URL when trying to access SlimPajama-627B or c4 on TPUs | closed | ### Describe the bug

I am trying to run some trainings on Google's TPUs using Huggingface's DataLoader on [SlimPajama-627B](https://huggingface.co/datasets/cerebras/SlimPajama-627B) and [c4](https://huggingface.co/datasets/allenai/c4), but I end up running into `429 Client Error: Too Many Requests for URL` error when ... | 2024-12-22T16:30:07 | 2025-01-15T05:32:00 | 2025-01-15T05:31:58 | https://github.com/huggingface/datasets/issues/7344 | null | 7,344 | false | [

"Hi ! This is due to your old version of `datasets` which calls HF with `expand=True`, an option that is strongly rate limited.\r\n\r\nRecent versions of `datasets` don't rely on this anymore, you can fix your issue by upgrading `datasets` :)\r\n\r\n```\r\npip install -U datasets\r\n```\r\n\r\nYou can also get maxi... |

2,750,525,823 | [Bug] Inconsistent behavior of data_files and data_dir in load_dataset method. | closed | ### Describe the bug

Inconsistent operation of data_files and data_dir in load_dataset method.

### Steps to reproduce the bug

# First

I have three files, named 'train.json', 'val.json', 'test.json'.

Each one has a simple dict `{text:'aaa'}`.

Their path are `/data/train.json`, `/data/val.json`, `/data/test.jso... | 2024-12-19T14:31:27 | 2025-01-03T15:54:09 | 2025-01-03T15:54:09 | https://github.com/huggingface/datasets/issues/7343 | null | 7,343 | false | [

"Hi ! `data_files` with a list is equivalent to `data_files={\"train\": data_files}` with a train test only.\r\n\r\nWhen no split are specified, they are inferred based on file names, and files with no apparent split are ignored",

"Thanks for your reply!\r\n`files with no apparent split are ignored`. Is there a o... |

2,749,572,310 | Update LICENSE | closed | null | 2024-12-19T08:17:50 | 2024-12-19T08:44:08 | 2024-12-19T08:44:08 | https://github.com/huggingface/datasets/pull/7342 | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/7342",

"html_url": "https://github.com/huggingface/datasets/pull/7342",

"diff_url": "https://github.com/huggingface/datasets/pull/7342.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/7342.patch",

"merged_at": null

} | 7,342 | true | [

"The docs for this PR live [here](https://moon-ci-docs.huggingface.co/docs/datasets/pr_7342). All of your documentation changes will be reflected on that endpoint. The docs are available until 30 days after the last update."

] |

2,745,658,561 | minor video docs on how to install | closed | null | 2024-12-17T18:06:17 | 2024-12-17T18:11:17 | 2024-12-17T18:11:15 | https://github.com/huggingface/datasets/pull/7341 | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/7341",

"html_url": "https://github.com/huggingface/datasets/pull/7341",

"diff_url": "https://github.com/huggingface/datasets/pull/7341.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/7341.patch",

"merged_at": "2024-12-17T18:11... | 7,341 | true | [

"The docs for this PR live [here](https://moon-ci-docs.huggingface.co/docs/datasets/pr_7341). All of your documentation changes will be reflected on that endpoint. The docs are available until 30 days after the last update."

] |

2,745,473,274 | don't import soundfile in tests | closed | null | 2024-12-17T16:49:55 | 2024-12-17T16:54:04 | 2024-12-17T16:50:24 | https://github.com/huggingface/datasets/pull/7340 | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/7340",

"html_url": "https://github.com/huggingface/datasets/pull/7340",

"diff_url": "https://github.com/huggingface/datasets/pull/7340.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/7340.patch",

"merged_at": "2024-12-17T16:50... | 7,340 | true | [

"The docs for this PR live [here](https://moon-ci-docs.huggingface.co/docs/datasets/pr_7340). All of your documentation changes will be reflected on that endpoint. The docs are available until 30 days after the last update."

] |

2,745,460,060 | Update CONTRIBUTING.md | closed | null | 2024-12-17T16:45:25 | 2024-12-17T16:51:36 | 2024-12-17T16:46:30 | https://github.com/huggingface/datasets/pull/7339 | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/7339",

"html_url": "https://github.com/huggingface/datasets/pull/7339",

"diff_url": "https://github.com/huggingface/datasets/pull/7339.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/7339.patch",

"merged_at": "2024-12-17T16:46... | 7,339 | true | [

"The docs for this PR live [here](https://moon-ci-docs.huggingface.co/docs/datasets/pr_7339). All of your documentation changes will be reflected on that endpoint. The docs are available until 30 days after the last update."

] |

2,744,877,569 | One or several metadata.jsonl were found, but not in the same directory or in a parent directory of | open | ### Describe the bug

ImageFolder with metadata.jsonl error. I downloaded liuhaotian/LLaVA-CC3M-Pretrain-595K locally from Hugging Face. According to the tutorial in https://huggingface.co/docs/datasets/image_dataset#image-captioning, only put images.zip and metadata.jsonl containing information in the same folder. How... | 2024-12-17T12:58:43 | 2025-01-03T15:28:13 | null | https://github.com/huggingface/datasets/issues/7337 | null | 7,337 | false | [

"Hmmm I double checked in the source code and I found a contradiction: in the current implementation the metadata file is ignored if it's not in the same archive as the zip image somehow:\r\n\r\nhttps://github.com/huggingface/datasets/blob/caa705e8bf4bedf1a956f48b545283b2ca14170a/src/datasets/packaged_modules/folde... |

2,744,746,456 | Clarify documentation or Create DatasetCard | open | ### Feature request

I noticed that you can use a Model Card instead of a Dataset Card when pushing a dataset to the Hub, but this isn’t clearly mentioned in [the docs.](https://huggingface.co/docs/datasets/dataset_card)

- Update the docs to clarify that a Model Card can work for datasets too.

- It might be worth c... | 2024-12-17T12:01:00 | 2024-12-17T12:01:00 | null | https://github.com/huggingface/datasets/issues/7336 | null | 7,336 | false | [] |

2,743,437,260 | Too many open files: '/root/.cache/huggingface/token' | open | ### Describe the bug

I ran this code:

```

from datasets import load_dataset

dataset = load_dataset("common-canvas/commoncatalog-cc-by", cache_dir="/datadrive/datasets/cc", num_proc=1000)

```

And got this error.

Before it was some other file though (lie something...incomplete)

runnting

```

ulimit -n 8192

... | 2024-12-16T21:30:24 | 2024-12-16T21:30:24 | null | https://github.com/huggingface/datasets/issues/7335 | null | 7,335 | false | [] |

2,740,266,503 | TypeError: Value.__init__() missing 1 required positional argument: 'dtype' | open | ### Describe the bug

ds = load_dataset(

"./xxx.py",

name="default",

split="train",

)

The datasets does not support debugging locally anymore...

### Steps to reproduce the bug

```

from datasets import load_dataset

ds = load_dataset(

"./repo.py",

name="default",

split="train",

)

... | 2024-12-15T04:08:46 | 2025-07-10T03:32:36 | null | https://github.com/huggingface/datasets/issues/7334 | null | 7,334 | false | [

"same error \n```\ndata = load_dataset('/opt/deepseek_R1_finetune/hf_datasets/openai/gsm8k', 'main')[split] \n```",

"> same error\n> \n> ```\n> data = load_dataset('/opt/deepseek_R1_finetune/hf_datasets/openai/gsm8k', 'main')[split] \n> ```\n\nhttps://github.com/huggingface/open-r1/issues/204 this help me",

"S... |

2,738,626,593 | Fix typo in arrow_dataset | closed | null | 2024-12-13T15:17:09 | 2024-12-19T17:10:27 | 2024-12-19T17:10:25 | https://github.com/huggingface/datasets/pull/7328 | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/7328",

"html_url": "https://github.com/huggingface/datasets/pull/7328",

"diff_url": "https://github.com/huggingface/datasets/pull/7328.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/7328.patch",

"merged_at": "2024-12-19T17:10... | 7,328 | true | [

"The docs for this PR live [here](https://moon-ci-docs.huggingface.co/docs/datasets/pr_7328). All of your documentation changes will be reflected on that endpoint. The docs are available until 30 days after the last update."

] |

2,738,514,909 | .map() is not caching and ram goes OOM | open | ### Describe the bug

Im trying to run a fairly simple map that is converting a dataset into numpy arrays. however, it just piles up on memory and doesnt write to disk. Ive tried multiple cache techniques such as specifying the cache dir, setting max mem, +++ but none seem to work. What am I missing here?

### Steps to... | 2024-12-13T14:22:56 | 2025-02-10T10:42:38 | null | https://github.com/huggingface/datasets/issues/7327 | null | 7,327 | false | [

"I have the same issue - any update on this?"

] |

2,738,188,902 | Remove upper bound for fsspec | open | ### Describe the bug

As also raised by @cyyever in https://github.com/huggingface/datasets/pull/7296 and @NeilGirdhar in https://github.com/huggingface/datasets/commit/d5468836fe94e8be1ae093397dd43d4a2503b926#commitcomment-140952162 , `datasets` has a problematic version constraint on `fsspec`.

In our case this c... | 2024-12-13T11:35:12 | 2025-01-03T15:34:37 | null | https://github.com/huggingface/datasets/issues/7326 | null | 7,326 | false | [

"Unfortunately `fsspec` versioning allows breaking changes across version and there is no way we can keep it without constrains at the moment. It already broke `datasets` once in the past. Maybe one day once `fsspec` decides on a stable and future proof API but I don't think this will happen anytime soon\r\n\r\nedi... |

2,736,618,054 | Introduce pdf support (#7318) | closed | First implementation of the Pdf feature to support pdfs (#7318) . Using [pdfplumber](https://github.com/jsvine/pdfplumber?tab=readme-ov-file#python-library) as the default library to work with pdfs.

@lhoestq and @AndreaFrancis | 2024-12-12T18:31:18 | 2025-03-18T14:00:36 | 2025-03-18T14:00:36 | https://github.com/huggingface/datasets/pull/7325 | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/7325",

"html_url": "https://github.com/huggingface/datasets/pull/7325",

"diff_url": "https://github.com/huggingface/datasets/pull/7325.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/7325.patch",

"merged_at": "2025-03-18T14:00... | 7,325 | true | [

"The docs for this PR live [here](https://moon-ci-docs.huggingface.co/docs/datasets/pr_7325). All of your documentation changes will be reflected on that endpoint. The docs are available until 30 days after the last update.",

"Hi @AndreaFrancis and @lhoestq ! Thanks for looking at the code and for all the changes... |

2,736,008,698 | Unexpected cache behaviour using load_dataset | closed | ### Describe the bug

Following the (Cache management)[https://huggingface.co/docs/datasets/en/cache] docu and previous behaviour from datasets version 2.18.0, one is able to change the cache directory. Previously, all downloaded/extracted/etc files were found in this folder. As i have recently update to the latest v... | 2024-12-12T14:03:00 | 2025-01-31T11:34:24 | 2025-01-31T11:34:24 | https://github.com/huggingface/datasets/issues/7323 | null | 7,323 | false | [

"Hi ! Since `datasets` 3.x, the `datasets` specific files are in `cache_dir=` and the HF files are cached using `huggingface_hub` and you can set its cache directory using the `HF_HOME` environment variable.\r\n\r\nThey are independent, for example you can delete the Hub cache (containing downloaded files) but sti... |

2,732,254,868 | ArrowInvalid: JSON parse error: Column() changed from object to array in row 0 | open | ### Describe the bug

Encountering an error while loading the ```liuhaotian/LLaVA-Instruct-150K dataset```.

### Steps to reproduce the bug

```

from datasets import load_dataset

fw =load_dataset("liuhaotian/LLaVA-Instruct-150K")

```

Error:

```

ArrowInvalid Traceback (most recen... | 2024-12-11T08:41:39 | 2025-07-15T13:06:55 | null | https://github.com/huggingface/datasets/issues/7322 | null | 7,322 | false | [

"Hi ! `datasets` uses Arrow under the hood which expects each column and array to have fixed types that don't change across rows of a dataset, which is why we get this error. This dataset in particular doesn't have a format compatible with Arrow unfortunately. Don't hesitate to open a discussion or PR on HF to fix ... |

2,731,626,760 | ImportError: cannot import name 'set_caching_enabled' from 'datasets' | open | ### Describe the bug

Traceback (most recent call last):

File "/usr/local/lib/python3.10/runpy.py", line 187, in _run_module_as_main

mod_name, mod_spec, code = _get_module_details(mod_name, _Error)

File "/usr/local/lib/python3.10/runpy.py", line 110, in _get_module_details

__import__(pkg_name)

File "... | 2024-12-11T01:58:46 | 2024-12-11T13:32:15 | null | https://github.com/huggingface/datasets/issues/7321 | null | 7,321 | false | [

"pip install datasets==2.18.0",

"Hi ! I think you need to update axolotl"

] |

2,731,112,100 | ValueError: You should supply an encoding or a list of encodings to this method that includes input_ids, but you provided ['label'] | closed | ### Describe the bug

I am trying to create a PEFT model from DISTILBERT model, and run a training loop. However, the trainer.train() is giving me this error: ValueError: You should supply an encoding or a list of encodings to this method that includes input_ids, but you provided ['label']

Here is my code:

### St... | 2024-12-10T20:23:11 | 2024-12-10T23:22:23 | 2024-12-10T23:22:23 | https://github.com/huggingface/datasets/issues/7320 | null | 7,320 | false | [

"Now i have other error"

] |

2,730,679,980 | set dev version | closed | null | 2024-12-10T17:01:34 | 2024-12-10T17:04:04 | 2024-12-10T17:01:45 | https://github.com/huggingface/datasets/pull/7319 | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/7319",

"html_url": "https://github.com/huggingface/datasets/pull/7319",

"diff_url": "https://github.com/huggingface/datasets/pull/7319.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/7319.patch",

"merged_at": "2024-12-10T17:01... | 7,319 | true | [

"The docs for this PR live [here](https://moon-ci-docs.huggingface.co/docs/datasets/pr_7319). All of your documentation changes will be reflected on that endpoint. The docs are available until 30 days after the last update."

] |

2,730,676,278 | Introduce support for PDFs | open | ### Feature request

The idea (discussed in the Discord server with @lhoestq ) is to have a Pdf type like Image/Audio/Video. For example [Video](https://github.com/huggingface/datasets/blob/main/src/datasets/features/video.py) was recently added and contains how to decode a video file encoded in a dictionary like {"pat... | 2024-12-10T16:59:48 | 2024-12-12T18:38:13 | null | https://github.com/huggingface/datasets/issues/7318 | null | 7,318 | false | [

"#self-assign",

"Awesome ! Let me know if you have any question or if I can help :)\r\n\r\ncc @AndreaFrancis as well for viz",

"Other candidates libraries for the Pdf type: PyMuPDF pypdf and pdfplumber\r\n\r\nEDIT: Pymupdf looks like a good choice when it comes to maturity + performance + versatility BUT the li... |

2,730,661,237 | Release: 3.2.0 | closed | null | 2024-12-10T16:53:20 | 2024-12-10T16:56:58 | 2024-12-10T16:56:56 | https://github.com/huggingface/datasets/pull/7317 | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/7317",

"html_url": "https://github.com/huggingface/datasets/pull/7317",

"diff_url": "https://github.com/huggingface/datasets/pull/7317.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/7317.patch",

"merged_at": "2024-12-10T16:56... | 7,317 | true | [

"The docs for this PR live [here](https://moon-ci-docs.huggingface.co/docs/datasets/pr_7317). All of your documentation changes will be reflected on that endpoint. The docs are available until 30 days after the last update."

] |

2,730,196,085 | More docs to from_dict to mention that the result lives in RAM | closed | following discussions at https://discuss.huggingface.co/t/how-to-load-this-simple-audio-data-set-and-use-dataset-map-without-memory-issues/17722/14 | 2024-12-10T13:56:01 | 2024-12-10T13:58:32 | 2024-12-10T13:57:02 | https://github.com/huggingface/datasets/pull/7316 | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/7316",

"html_url": "https://github.com/huggingface/datasets/pull/7316",

"diff_url": "https://github.com/huggingface/datasets/pull/7316.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/7316.patch",

"merged_at": "2024-12-10T13:57... | 7,316 | true | [

"The docs for this PR live [here](https://moon-ci-docs.huggingface.co/docs/datasets/pr_7316). All of your documentation changes will be reflected on that endpoint. The docs are available until 30 days after the last update."

] |

2,727,502,630 | Resolved for empty datafiles | open | Resolved for Issue#6152 | 2024-12-09T15:47:22 | 2024-12-27T18:20:21 | null | https://github.com/huggingface/datasets/pull/7314 | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/7314",

"html_url": "https://github.com/huggingface/datasets/pull/7314",

"diff_url": "https://github.com/huggingface/datasets/pull/7314.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/7314.patch",

"merged_at": null

} | 7,314 | true | [

"Closes #6152 ",

"@mariosasko I hope this resolves #6152 "

] |

2,726,240,634 | Cannot create a dataset with relative audio path | open | ### Describe the bug

Hello! I want to create a dataset of parquet files, with audios stored as separate .mp3 files. However, it says "No such file or directory" (see the reproducing code).

### Steps to reproduce the bug

Creating a dataset

```

from pathlib import Path

from datasets import Dataset, load_datas... | 2024-12-09T07:34:20 | 2025-04-19T07:13:08 | null | https://github.com/huggingface/datasets/issues/7313 | null | 7,313 | false | [

"Hello ! when you `cast_column` you need the paths to be absolute paths or relative paths to your working directory, not the original dataset directory.\r\n\r\nThough I'd recommend structuring your dataset as an AudioFolder which automatically links a metadata.jsonl or csv to the audio files via relative paths **wi... |

2,725,103,094 | [Audio Features - DO NOT MERGE] PoC for adding an offset+sliced reading to audio file. | open | This is a proof of concept for #7310 . The idea is to enable the access to others column of the dataset row when loading an audio file into a table. This is to allow sliced reading. As stated in the issue, many people have very long audio files and use start and stop slicing in this audio file.

Right now, this code ... | 2024-12-08T10:27:31 | 2024-12-08T10:27:31 | null | https://github.com/huggingface/datasets/pull/7312 | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/7312",

"html_url": "https://github.com/huggingface/datasets/pull/7312",

"diff_url": "https://github.com/huggingface/datasets/pull/7312.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/7312.patch",

"merged_at": null

} | 7,312 | true | [] |

2,725,002,630 | How to get the original dataset name with username? | open | ### Feature request

The issue is related to ray data https://github.com/ray-project/ray/issues/49008 which it requires to check if the dataset is the original one just after `load_dataset` and parquet files are already available on hf hub.

The solution used now is to get the dataset name, config and split, then `... | 2024-12-08T07:18:14 | 2025-01-09T10:48:02 | null | https://github.com/huggingface/datasets/issues/7311 | null | 7,311 | false | [

"Hi ! why not pass the dataset id to Ray and let it check the parquet files ? Or pass the parquet files lists directly ?",

"I'm not sure why ray design an API like this to accept a `Dataset` object, so they need to verify the `Dataset` is the original one and use the `DatasetInfo` to query the huggingface hub. I'... |

2,724,830,603 | Enable the Audio Feature to decode / read with an offset + duration | open | ### Feature request

For most large speech dataset, we do not wish to generate hundreds of millions of small audio samples. Instead, it is quite common to provide larger audio files with frame offset (soundfile start and stop arguments). We should be able to pass these arguments to Audio() (column ID corresponding in t... | 2024-12-07T22:01:44 | 2024-12-09T21:09:46 | null | https://github.com/huggingface/datasets/issues/7310 | null | 7,310 | false | [

"Hi ! What about having audio + start + duration columns and enable something like this ?\r\n\r\n```python\r\nfor example in ds:\r\n array = example[\"audio\"].read(start=example[\"start\"], frames=example[\"duration\"])\r\n```",

"Hi @lhoestq, this would work with a file-based dataset but would be terrible for... |

2,729,738,963 | Allow manual configuration of Dataset Viewer for datasets not created with the `datasets` library | open | #### **Problem Description**

Currently, the Hugging Face Dataset Viewer automatically interprets dataset fields for datasets created with the `datasets` library. However, for datasets pushed directly via `git`, the Viewer:

- Defaults to generic columns like `label` with `null` values if no explicit mapping is provide... | 2024-12-07T16:37:12 | 2024-12-11T11:05:22 | null | https://github.com/huggingface/datasets/issues/7315 | null | 7,315 | false | [

"Hi @diarray-hub , thanks for opening the issue :) Let me ping @lhoestq and @severo from the dataset viewer team :hugs: ",

"amazing :)",

"Hi ! why not modify the manifest.json file directly ? this way users see in the viewer the dataset as is instead which makes it easier to use using e.g. the `datasets` librar... |

2,723,636,931 | Faster parquet streaming + filters with predicate pushdown | closed | ParquetFragment.to_batches uses a buffered stream to read parquet data, which makes streaming faster (x2 on my laptop).

I also added the `filters` config parameter to support filtering with predicate pushdown, e.g.

```python

from datasets import load_dataset

filters = [('problem_source', '==', 'math')]

ds = ... | 2024-12-06T18:01:54 | 2024-12-07T23:32:30 | 2024-12-07T23:32:28 | https://github.com/huggingface/datasets/pull/7309 | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/7309",

"html_url": "https://github.com/huggingface/datasets/pull/7309",

"diff_url": "https://github.com/huggingface/datasets/pull/7309.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/7309.patch",

"merged_at": "2024-12-07T23:32... | 7,309 | true | [

"The docs for this PR live [here](https://moon-ci-docs.huggingface.co/docs/datasets/pr_7309). All of your documentation changes will be reflected on that endpoint. The docs are available until 30 days after the last update."

] |

2,720,244,889 | refactor: remove unnecessary else | open | null | 2024-12-05T12:11:09 | 2024-12-06T15:11:33 | null | https://github.com/huggingface/datasets/pull/7307 | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/7307",

"html_url": "https://github.com/huggingface/datasets/pull/7307",

"diff_url": "https://github.com/huggingface/datasets/pull/7307.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/7307.patch",

"merged_at": null

} | 7,307 | true | [] |

2,719,807,464 | Creating new dataset from list loses information. (Audio Information Lost - either Datatype or Values). | open | ### Describe the bug

When creating a dataset from a list of datapoints, information is lost of the individual items.

Specifically, when creating a dataset from a list of datapoints (from another dataset). Either the datatype is lost or the values are lost. See examples below.

-> What is the best way to create... | 2024-12-05T09:07:53 | 2024-12-05T09:09:38 | null | https://github.com/huggingface/datasets/issues/7306 | null | 7,306 | false | [] |

2,715,907,267 | Build Documentation Test Fails Due to "Bad Credentials" Error | open | ### Describe the bug

The `Build documentation / build / build_main_documentation (push)` job is consistently failing during the "Syncing repository" step. The error occurs when attempting to determine the default branch name, resulting in "Bad credentials" errors.

### Steps to reproduce the bug

1. Trigger the `build... | 2024-12-03T20:22:54 | 2025-01-08T22:38:14 | null | https://github.com/huggingface/datasets/issues/7305 | null | 7,305 | false | [

"how were you able to fix this please?",

"> how were you able to fix this please?\r\n\r\nI was not able to fix this."

] |

2,715,179,811 | Update iterable_dataset.py | closed | close https://github.com/huggingface/datasets/issues/7297 | 2024-12-03T14:25:42 | 2024-12-03T14:28:10 | 2024-12-03T14:27:02 | https://github.com/huggingface/datasets/pull/7304 | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/7304",

"html_url": "https://github.com/huggingface/datasets/pull/7304",

"diff_url": "https://github.com/huggingface/datasets/pull/7304.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/7304.patch",

"merged_at": "2024-12-03T14:27... | 7,304 | true | [

"The docs for this PR live [here](https://moon-ci-docs.huggingface.co/docs/datasets/pr_7304). All of your documentation changes will be reflected on that endpoint. The docs are available until 30 days after the last update."

] |

2,705,729,696 | DataFilesNotFoundError for datasets LM1B | closed | ### Describe the bug

Cannot load the dataset https://huggingface.co/datasets/billion-word-benchmark/lm1b

### Steps to reproduce the bug

`dataset = datasets.load_dataset('lm1b', split=split)`

### Expected behavior

`Traceback (most recent call last):

File "/home/hml/projects/DeepLearning/Generative_model/Diffusio... | 2024-11-29T17:27:45 | 2024-12-11T13:22:47 | 2024-12-11T13:22:47 | https://github.com/huggingface/datasets/issues/7303 | null | 7,303 | false | [

"Hi ! Can you try with a more recent version of `datasets` ? Also you might need to pass trust_remote_code=True since it's a script based dataset"

] |

2,702,626,386 | Let server decide default repo visibility | closed | Until now, all repos were public by default when created without passing the `private` argument. This meant that passing `private=False` or `private=None` was strictly the same. This is not the case anymore. Enterprise Hub offers organizations to set a default visibility setting for new repos. This is useful for organi... | 2024-11-28T16:01:13 | 2024-11-29T17:00:40 | 2024-11-29T17:00:38 | https://github.com/huggingface/datasets/pull/7302 | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/7302",

"html_url": "https://github.com/huggingface/datasets/pull/7302",

"diff_url": "https://github.com/huggingface/datasets/pull/7302.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/7302.patch",

"merged_at": "2024-11-29T17:00... | 7,302 | true | [

"The docs for this PR live [here](https://moon-ci-docs.huggingface.co/docs/datasets/pr_7302). All of your documentation changes will be reflected on that endpoint. The docs are available until 30 days after the last update.",

"No need for a specific version of huggingface_hub to avoid a breaking change no (it's a... |

2,701,813,922 | update load_dataset doctring | closed | - remove canonical dataset name

- remove dataset script logic

- add streaming info

- clearer download and prepare steps | 2024-11-28T11:19:20 | 2024-11-29T10:31:43 | 2024-11-29T10:31:40 | https://github.com/huggingface/datasets/pull/7301 | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/7301",

"html_url": "https://github.com/huggingface/datasets/pull/7301",

"diff_url": "https://github.com/huggingface/datasets/pull/7301.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/7301.patch",

"merged_at": "2024-11-29T10:31... | 7,301 | true | [

"The docs for this PR live [here](https://moon-ci-docs.huggingface.co/docs/datasets/pr_7301). All of your documentation changes will be reflected on that endpoint. The docs are available until 30 days after the last update."

] |

2,701,424,320 | fix: update elasticsearch version | closed | This should fix the `test_py311 (windows latest, deps-latest` errors.

```

=========================== short test summary info ===========================

ERROR tests/test_search.py - AttributeError: `np.float_` was removed in the NumPy 2.0 release. Use `np.float64` instead.

ERROR tests/test_search.py - AttributeE... | 2024-11-28T09:14:21 | 2024-12-03T14:36:56 | 2024-12-03T14:24:42 | https://github.com/huggingface/datasets/pull/7300 | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/7300",

"html_url": "https://github.com/huggingface/datasets/pull/7300",

"diff_url": "https://github.com/huggingface/datasets/pull/7300.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/7300.patch",

"merged_at": "2024-12-03T14:24... | 7,300 | true | [

"May I request a review @lhoestq",

"The docs for this PR live [here](https://moon-ci-docs.huggingface.co/docs/datasets/pr_7300). All of your documentation changes will be reflected on that endpoint. The docs are available until 30 days after the last update."

] |

2,695,378,251 | Efficient Image Augmentation in Hugging Face Datasets | open | ### Describe the bug

I'm using the Hugging Face datasets library to load images in batch and would like to apply a torchvision transform to solve the inconsistent image sizes in the dataset and apply some on the fly image augmentation. I can just think about using the collate_fn, but seems quite inefficient.

... | 2024-11-26T16:50:32 | 2024-11-26T16:53:53 | null | https://github.com/huggingface/datasets/issues/7299 | null | 7,299 | false | [] |

2,694,196,968 | loading dataset issue with load_dataset() when training controlnet | open | ### Describe the bug

i'm unable to load my dataset for [controlnet training](https://github.com/huggingface/diffusers/blob/074e12358bc17e7dbe111ea4f62f05dbae8a49d5/examples/controlnet/train_controlnet.py#L606) using load_dataset(). however, load_from_disk() seems to work?

would appreciate if someone can explain why ... | 2024-11-26T10:50:18 | 2024-11-26T10:50:18 | null | https://github.com/huggingface/datasets/issues/7298 | null | 7,298 | false | [] |

2,683,977,430 | wrong return type for `IterableDataset.shard()` | closed | ### Describe the bug

`IterableDataset.shard()` has the wrong typing for its return as `"Dataset"`. It should be `"IterableDataset"`. Makes my IDE unhappy.

### Steps to reproduce the bug

look at [the source code](https://github.com/huggingface/datasets/blob/main/src/datasets/iterable_dataset.py#L2668)?

### Expected ... | 2024-11-22T17:25:46 | 2024-12-03T14:27:27 | 2024-12-03T14:27:03 | https://github.com/huggingface/datasets/issues/7297 | null | 7,297 | false | [

"Oops my bad ! thanks for reporting"

] |

2,675,573,974 | Remove upper version limit of fsspec[http] | closed | null | 2024-11-20T11:29:16 | 2025-03-06T04:47:04 | 2025-03-06T04:47:01 | https://github.com/huggingface/datasets/pull/7296 | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/7296",

"html_url": "https://github.com/huggingface/datasets/pull/7296",

"diff_url": "https://github.com/huggingface/datasets/pull/7296.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/7296.patch",

"merged_at": null

} | 7,296 | true | [] |

2,672,003,384 | [BUG]: Streaming from S3 triggers `unexpected keyword argument 'requote_redirect_url'` | open | ### Describe the bug

Note that this bug is only triggered when `streaming=True`. #5459 introduced always calling fsspec with `client_kwargs={"requote_redirect_url": False}`, which seems to have incompatibility issues even in the newest versions.

Analysis of what's happening:

1. `datasets` passes the `client_kw... | 2024-11-19T12:23:36 | 2024-11-19T13:01:53 | null | https://github.com/huggingface/datasets/issues/7295 | null | 7,295 | false | [] |

2,668,663,130 | Remove `aiohttp` from direct dependencies | closed | The dependency is only used for catching an exception from other code. That can be done with an import guard.

| 2024-11-18T14:00:59 | 2025-05-07T14:27:18 | 2025-05-07T14:27:17 | https://github.com/huggingface/datasets/pull/7294 | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/7294",

"html_url": "https://github.com/huggingface/datasets/pull/7294",

"diff_url": "https://github.com/huggingface/datasets/pull/7294.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/7294.patch",

"merged_at": "2025-05-07T14:27... | 7,294 | true | [] |

2,664,592,054 | Updated inconsistent output in documentation examples for `ClassLabel` | closed | fix #7129

@stevhliu | 2024-11-16T16:20:57 | 2024-12-06T11:33:33 | 2024-12-06T11:32:01 | https://github.com/huggingface/datasets/pull/7293 | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/7293",

"html_url": "https://github.com/huggingface/datasets/pull/7293",

"diff_url": "https://github.com/huggingface/datasets/pull/7293.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/7293.patch",

"merged_at": "2024-12-06T11:32... | 7,293 | true | [

"Updated! 😄 ",

"The docs for this PR live [here](https://moon-ci-docs.huggingface.co/docs/datasets/pr_7293). All of your documentation changes will be reflected on that endpoint. The docs are available until 30 days after the last update.",

"@lhoestq, can you help with this failing test please? 🙏 "

] |

2,664,250,855 | DataFilesNotFoundError for datasets `OpenMol/PubChemSFT` | closed | ### Describe the bug

Cannot load the dataset https://huggingface.co/datasets/OpenMol/PubChemSFT

### Steps to reproduce the bug

```

from datasets import load_dataset

dataset = load_dataset('OpenMol/PubChemSFT')

```

### Expected behavior

```

-----------------------------------------------------------------------... | 2024-11-16T11:54:31 | 2024-11-19T00:53:00 | 2024-11-19T00:52:59 | https://github.com/huggingface/datasets/issues/7292 | null | 7,292 | false | [

"Hi ! If the dataset owner uses `push_to_hub()` instead of `save_to_disk()` and upload the local files it will fix the issue.\r\nRight now `datasets` sees the train/test/valid pickle files but they are not supported file formats.",

"Alternatively you can load the arrow file instead:\r\n\r\n```python\r\nfrom datas... |

2,662,244,643 | Why return_tensors='pt' doesn't work? | open | ### Describe the bug

I tried to add input_ids to dataset with map(), and I used the return_tensors='pt', but why I got the callback with the type of List?

### Steps to reproduce the bug

`",

"> Hi ! `datasets` uses Arrow as storage backend which is agnostic to deep learning frameworks like torch. If ... |

2,657,620,816 | `Dataset.save_to_disk` hangs when using num_proc > 1 | open | ### Describe the bug

Hi, I'm encountered a small issue when saving datasets that led to the saving taking up to multiple hours.

Specifically, [`Dataset.save_to_disk`](https://huggingface.co/docs/datasets/main/en/package_reference/main_classes#datasets.Dataset.save_to_disk) is a lot slower when using `num_proc>1` than... | 2024-11-14T05:25:13 | 2025-06-27T00:56:47 | null | https://github.com/huggingface/datasets/issues/7290 | null | 7,290 | false | [

"I've met the same situations.\r\n\r\nHere's my logs:\r\nnum_proc = 64, I stop it early as it cost **too** much time.\r\n```\r\nSaving the dataset (1540/4775 shards): 32%|███▏ | 47752224/147853764 [15:32:54<132:28:34, 209.89 examples/s]\r\nSaving the dataset (1540/4775 shards): 32%|███▏ | 47754224/14785... |

2,648,019,507 | Dataset viewer displays wrong statists | closed | ### Describe the bug

In [my dataset](https://huggingface.co/datasets/speedcell4/opus-unigram2), there is a column called `lang2`, and there are 94 different classes in total, but the viewer says there are 83 values only. This issue only arises in the `train` split. The total number of values is also 94 in the `test`... | 2024-11-11T03:29:27 | 2024-11-13T13:02:25 | 2024-11-13T13:02:25 | https://github.com/huggingface/datasets/issues/7289 | null | 7,289 | false | [

"i think this issue is more for https://github.com/huggingface/dataset-viewer"

] |

2,647,052,280 | Release v3.1.1 | closed | null | 2024-11-10T09:38:15 | 2024-11-10T09:38:48 | 2024-11-10T09:38:48 | https://github.com/huggingface/datasets/pull/7288 | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/7288",

"html_url": "https://github.com/huggingface/datasets/pull/7288",

"diff_url": "https://github.com/huggingface/datasets/pull/7288.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/7288.patch",

"merged_at": null

} | 7,288 | true | [] |

2,646,958,393 | Support for identifier-based automated split construction | open | ### Feature request

As far as I understand, automated construction of splits for hub datasets is currently based on either file names or directory structure ([as described here](https://huggingface.co/docs/datasets/en/repository_structure))

It would seem to be pretty useful to also allow splits to be based on ide... | 2024-11-10T07:45:19 | 2024-11-19T14:37:02 | null | https://github.com/huggingface/datasets/issues/7287 | null | 7,287 | false | [

"Hi ! You can already configure the README.md to have multiple sets of splits, e.g.\r\n\r\n```yaml\r\nconfigs:\r\n- config_name: my_first_set_of_split\r\n data_files:\r\n - split: train\r\n path: *.csv\r\n- config_name: my_second_set_of_split\r\n data_files:\r\n - split: train\r\n path: train-*.csv\r\n -... |

2,645,350,151 | Concurrent loading in `load_from_disk` - `num_proc` as a param | closed | ### Feature request

https://github.com/huggingface/datasets/pull/6464 mentions a `num_proc` param while loading dataset from disk, but can't find that in the documentation and code anywhere

### Motivation

Make loading large datasets from disk faster

### Your contribution

Happy to contribute if given pointers | 2024-11-08T23:21:40 | 2024-11-09T16:14:37 | 2024-11-09T16:14:37 | https://github.com/huggingface/datasets/issues/7286 | null | 7,286 | false | [] |

2,644,488,598 | Release v3.1.0 | closed | null | 2024-11-08T16:17:58 | 2024-11-08T16:18:05 | 2024-11-08T16:18:05 | https://github.com/huggingface/datasets/pull/7285 | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/7285",

"html_url": "https://github.com/huggingface/datasets/pull/7285",

"diff_url": "https://github.com/huggingface/datasets/pull/7285.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/7285.patch",

"merged_at": null

} | 7,285 | true | [] |

2,644,302,386 | support for custom feature encoding/decoding | closed | Fix for https://github.com/huggingface/datasets/issues/7220 as suggested in discussion, in preference to #7221

(only concern would be on effect on type checking with custom feature types that aren't covered by FeatureType?) | 2024-11-08T15:04:08 | 2024-11-21T16:09:47 | 2024-11-21T16:09:47 | https://github.com/huggingface/datasets/pull/7284 | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/7284",

"html_url": "https://github.com/huggingface/datasets/pull/7284",

"diff_url": "https://github.com/huggingface/datasets/pull/7284.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/7284.patch",

"merged_at": "2024-11-21T16:09... | 7,284 | true | [

"@lhoestq ",

"The docs for this PR live [here](https://moon-ci-docs.huggingface.co/docs/datasets/pr_7284). All of your documentation changes will be reflected on that endpoint. The docs are available until 30 days after the last update."

] |

2,642,537,708 | Allow for variation in metadata file names as per issue #7123 | open | Allow metadata files to have an identifying preface. Specifically, it will recognize files with `-metadata.csv` or `_metadata.csv` as metadata files for the purposes of the dataset viewer functionality.

Resolves #7123. | 2024-11-08T00:44:47 | 2024-11-08T00:44:47 | null | https://github.com/huggingface/datasets/pull/7283 | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/7283",

"html_url": "https://github.com/huggingface/datasets/pull/7283",

"diff_url": "https://github.com/huggingface/datasets/pull/7283.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/7283.patch",

"merged_at": null

} | 7,283 | true | [] |

2,642,075,491 | Faulty datasets.exceptions.ExpectedMoreSplitsError | open | ### Describe the bug

Trying to download only the 'validation' split of my dataset; instead hit the error `datasets.exceptions.ExpectedMoreSplitsError`.

Appears to be the same undesired behavior as reported in [#6939](https://github.com/huggingface/datasets/issues/6939), but with `data_files`, not `data_dir`.

Her... | 2024-11-07T20:15:01 | 2024-11-07T20:15:42 | null | https://github.com/huggingface/datasets/issues/7282 | null | 7,282 | false | [] |

2,640,346,339 | File not found error | open | ### Describe the bug

I get a FileNotFoundError:

<img width="944" alt="image" src="https://github.com/user-attachments/assets/1336bc08-06f6-4682-a3c0-071ff65efa87">

### Steps to reproduce the bug

See screenshot.

### Expected behavior

I want to load one audiofile from the dataset.

### Environmen... | 2024-11-07T09:04:49 | 2024-11-07T09:22:43 | null | https://github.com/huggingface/datasets/issues/7281 | null | 7,281 | false | [

"Link to the dataset: https://huggingface.co/datasets/MichielBontenbal/UrbanSounds "

] |

2,639,977,077 | Add filename in error message when ReadError or similar occur | open | Please update error messages to include relevant information for debugging when loading datasets with `load_dataset()` that may have a few corrupted files.

Whenever downloading a full dataset, some files might be corrupted (either at the source or from downloading corruption).

However the errors often only let me k... | 2024-11-07T06:00:53 | 2024-11-20T13:23:12 | null | https://github.com/huggingface/datasets/issues/7280 | null | 7,280 | false | [

"Hi Elisa, please share the error traceback here, and if you manage to find the location in the `datasets` code where the error occurs, feel free to open a PR to add the necessary logging / improve the error message.",

"> please share the error traceback\n\nI don't have access to it but it should be during [this ... |

2,635,813,932 | Feature proposal: Stacking, potentially heterogeneous, datasets | open | ### Introduction

Hello there,

I noticed that there are two ways to combine multiple datasets: Either through `datasets.concatenate_datasets` or `datasets.interleave_datasets`. However, to my knowledge (please correct me if I am wrong) both approaches require the datasets that are combined to have the same features.... | 2024-11-05T15:40:50 | 2024-11-05T15:40:50 | null | https://github.com/huggingface/datasets/pull/7279 | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/7279",

"html_url": "https://github.com/huggingface/datasets/pull/7279",

"diff_url": "https://github.com/huggingface/datasets/pull/7279.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/7279.patch",

"merged_at": null

} | 7,279 | true | [] |

2,633,436,151 | Let soundfile directly read local audio files | open | - [x] Fixes #7276 | 2024-11-04T17:41:13 | 2024-11-18T14:01:25 | null | https://github.com/huggingface/datasets/pull/7278 | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/7278",

"html_url": "https://github.com/huggingface/datasets/pull/7278",

"diff_url": "https://github.com/huggingface/datasets/pull/7278.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/7278.patch",

"merged_at": null

} | 7,278 | true | [] |

2,632,459,184 | Add link to video dataset | closed | This PR updates https://huggingface.co/docs/datasets/loading to also link to the new video loading docs.