dataset_name stringlengths 2 128 | description stringlengths 1 9.7k | prompt stringlengths 59 185 |

|---|---|---|

PropSegmEnt | **PropSegmEnt** is a corpus of over 35K propositions annotated by expert human raters. The dataset structure resembles the tasks of (1) segmenting sentences within a document to the set of propositions, and (2) classifying the entailment relation of each proposition with respect to a different yet topically-aligned doc... | Provide a detailed description of the following dataset: PropSegmEnt |

LiPC | **LiPC (LiDAR Point Cloud Clustering Benchmark Suite)** is a benchmark suite for point cloud clustering algorithms based on open-source software and open datasets. It aims to provide the community with a collection of methods and datasets that are easy to use, comparable, and that experimental results are traceable and... | Provide a detailed description of the following dataset: LiPC |

EU Long-term Dataset with Multiple Sensors for Autonomous Driving | **EU Long-term Dataset with Multiple Sensors for Autonomous Driving** was collected with a robocar, equipped with eleven heterogeneous sensors, in the downtown and suburban areas of Montbéliard in France. The vehicle speed was limited to 50 km/h following the French traffic rules. For the long-term data, the driving di... | Provide a detailed description of the following dataset: EU Long-term Dataset with Multiple Sensors for Autonomous Driving |

L-CAS 3D Point Cloud People Dataset | **L-CAS 3D Point Cloud People Dataset** contains 28,002 Velodyne scan frames acquired in one of the main buildings (Minerva Building) of the University of Lincoln, UK. Total length of the recorded data is about 49 minutes. Data were grouped into two classes according to whether the robot was stationary or moving. | Provide a detailed description of the following dataset: L-CAS 3D Point Cloud People Dataset |

Light field RGB Dataset | We created this robust and custom light field dataset in order to assist light field researchers in using SOTA machine learning algorithms for a variety of light field tasks such as depth estimation, synthetic aperture imaging, and more.

In this dataset contains five folders of 6 datasets with a specified number of ... | Provide a detailed description of the following dataset: Light field RGB Dataset |

DISE 2021 Dataset | Datasets are built upon three other datasets: DISEC 2013, RVL-CDIP, RDCL 2017. Please respect their LICENSE. | Provide a detailed description of the following dataset: DISE 2021 Dataset |

a test dataset for reuse | a | Provide a detailed description of the following dataset: a test dataset for reuse |

RoFT | RoFT is a dataset of 21,000 human annotations of generated text. The task is "Boundary detection" i.e. given a passage that starts off as human written, determine when the text transitions to being machine generated. The dataset also includes error annotations using the taxonomy introduced in the paper. The data can be... | Provide a detailed description of the following dataset: RoFT |

Skit-S2I | This dataset for Intent classification from human speech covers 14 coarse-grained intents from the Banking domain. This work is inspired by a similar release in the Minds-14 dataset - here, we restrict ourselves to Indian English but with a much larger training set. The data was generated by 11 (Indian English) speaker... | Provide a detailed description of the following dataset: Skit-S2I |

Minsk2019 ALS database | **Minsk2019 ALS database** is a dataset collected in Republican Research and Clinical Center of Neurology and Neurosurgery (Minsk, Belarus). A total of 54 speakers were recorded, with 39 healthy speakers (23 males, 16 females) and 15 ALS patients with signs of bulbar dysfunction (6 males, 9 females). It is designed for... | Provide a detailed description of the following dataset: Minsk2019 ALS database |

Distress Analysis Interview Corpus/Wizard-of-Oz set (DAIC-WOZ) | The Distress Analysis Interview Corpus/Wizard-of-Oz set (DAIC-WOZ) dataset [24, 25] comprises voice and text samples from 189 interviewed healthy and control persons and their PHQ-8 depression detection questionnaire. This dataset is commonly used in research works for text-based detection, voice-based detection, and i... | Provide a detailed description of the following dataset: Distress Analysis Interview Corpus/Wizard-of-Oz set (DAIC-WOZ) |

SUSY | This is a classification problem to distinguish between a signal process which produces supersymmetric particles and a background process which does not. | Provide a detailed description of the following dataset: SUSY |

ISLES 2017 | A medical image segmentation challenge at the International Conference on Medical Image Computing and Computer Assisted Intervention (MICCAI) 2017. On the SMIR, you can register for the challenge, download the test data and submit your results. For more information, visit the official ISLES homepage under www.isles-cha... | Provide a detailed description of the following dataset: ISLES 2017 |

Dusha | **Dusha** is a dataset for speech emotion recognition (SER) tasks. The corpus contains approximately 350 hours of data, more than 300 000 audio recordings with Russian speech and their transcripts. It is annotated using a crowd-sourcing platform and includes two subsets: acted and real-life. | Provide a detailed description of the following dataset: Dusha |

TextBox 2.0 | **TextBox 2.0** is a comprehensive and unified library for text generation, focusing on the use of pre-trained language models (PLMs). The library covers 13 common text generation tasks and their corresponding 83 datasets and further incorporates 45 PLMs covering general, translation, Chinese, dialogue, controllable, d... | Provide a detailed description of the following dataset: TextBox 2.0 |

XAlign | It consists of an extensive collection of a high quality cross-lingual fact-to-text dataset in 11 languages: Assamese (as), Bengali (bn), Gujarati (gu), Hindi (hi), Kannada (kn), Malayalam (ml), Marathi (mr), Oriya (or), Punjabi (pa), Tamil (ta), Telugu (te), and monolingual dataset in English (en). This is the Wikiped... | Provide a detailed description of the following dataset: XAlign |

MultiSpider | **MultiSpider** is a large multilingual text-to-SQL dataset which covers seven languages (English, German, French, Spanish, Japanese, Chinese, and Vietnamese). | Provide a detailed description of the following dataset: MultiSpider |

CORBEL | Dataset included measuring static tension under 2 kg load in different points

of the CB and measurements in dynamic conditions. The latter conditions

presumed the range of the linear belt speeds between

nu_1 = 0.5 and nu_max = 1.7 m/s. 400 Hz unified sampling frequency for

the experiments. It corresponded with 140... | Provide a detailed description of the following dataset: CORBEL |

MusicNetEM | New refined labels for the MusicNet dataset obtained by the EM process as described in the paper: Ben Maman and Amit Bermano, "Unaligned Supervision for Automatic Music Transcription in The Wild" | Provide a detailed description of the following dataset: MusicNetEM |

MENYO-20k | MENYO-20k is the first multi-domain parallel corpus with a special focus on clean orthography for Yorùbá--English with standardized train-test splits for benchmarking. | Provide a detailed description of the following dataset: MENYO-20k |

Harmonized US National Health and Nutrition Examination Survey (NHANES) 1988-2018 | The National Health and Nutrition Examination Survey (NHANES) provides data on the health and environmental exposure of the non-institutionalized US population. Such data have considerable potential to understand how the environment and behaviors impact human health. These data are also currently leveraged to answer pu... | Provide a detailed description of the following dataset: Harmonized US National Health and Nutrition Examination Survey (NHANES) 1988-2018 |

GNMC | We present the Gracenote Multi-Crop (GNMC) dataset, to further research in algorithms for aesthetic image cropping. The dataset consists of a diverse collection of 10K images, each cropped in five different aspect ratios by experienced editors. GNMC is larger than existing datasets commonly used to benchmark image crop... | Provide a detailed description of the following dataset: GNMC |

HBW | Human Bodies in the Wild (HBW) is a validation and test set for body shape estimation. It consists of images taken in the wild and ground truth 3D body scans in SMPL-X topology. To create HBW, we collect body scans of 35 participants and register the SMPL-X model to the scans. Further each participant is photographed i... | Provide a detailed description of the following dataset: HBW |

QoEVAVE | **Quality of Experience Evaluation of Interactive Virtual Environments with Audiovisual Scenes (QoEVAVE)** provides an initial audiovisual database consiting of 12 sequences capturing real-life nature and urban scenes. The maximum video resolution is 7680x3840 (8k) at 60 frames-per-second, with 4th-order Ambisonics spa... | Provide a detailed description of the following dataset: QoEVAVE |

HPointLoc | **HPointLoc** is a dataset designed for exploring capabilities of visual place recognition in indoor environment and loop detection in simultaneous localization and mapping. It is based on the popular Habitat simulator from 49 photorealistic indoor scenes from the Matterport3D dataset and contains 76,000 frames. | Provide a detailed description of the following dataset: HPointLoc |

FGVD | **Fine-Grained Vehicle Detection** (**FGVD**) is a dataset for fine-grained vehicle detection captured from a moving camera mounted on a car. The FGVD dataset is challenging as it has vehicles in complex traffic scenarios with intra-class and inter-class variations in types, scale, pose, occlusion, and lighting conditi... | Provide a detailed description of the following dataset: FGVD |

Merger Agreement Understanding Dataset (MAUD) | MAUD is an expert-annotated merger agreement reading comprehension dataset based on the American Bar Association's 2021 Public Target Deal Points study, where lawyers and law students answered 92 questions about 152 merger agreements.

With over 39,000 examples and 47,000 total annotations, it is the largest expert-a... | Provide a detailed description of the following dataset: Merger Agreement Understanding Dataset (MAUD) |

MTNeuro | **MTNeuro** is a multi-task neuroimaging benchmark built on volumetric, micrometer-resolution X-ray microtomography images spanning a large thalamocortical section of mouse brain, encompassing multiple cortical and subcortical regions.

This dataset provides some key features for the neuroinformatics processing commu... | Provide a detailed description of the following dataset: MTNeuro |

CamNuvem Dataset | This dataset focuses only on the robbery category, presenting a new weakly labelled dataset that contains 486 new real–world robbery surveillance videos acquired from public sources. | Provide a detailed description of the following dataset: CamNuvem Dataset |

Argoverse 2 Sensor | The **Argoverse 2 Sensor** Dataset is a collection of 1,000 scenarios with 3D object tracking annotations. Each sequence in our training and validation sets includes annotations for all objects within five meters of the “drivable area” — the area in which it is possible for a vehicle to drive. The HD map for each scena... | Provide a detailed description of the following dataset: Argoverse 2 Sensor |

Argoverse 2 Lidar | The **Argoverse 2 Lidar** Dataset is a collection of 20,000 scenarios with lidar sensor data, HD maps, and ego-vehicle pose. It does not include imagery or 3D annotations. The dataset is designed to support research into self-supervised learning in the lidar domain, as well as point cloud forecasting.

The dataset is... | Provide a detailed description of the following dataset: Argoverse 2 Lidar |

Argoverse 2 Map Change | The **Argoverse 2 Map Change** Dataset is a collection of 1,000 scenarios with ring camera imagery, lidar, and HD maps. Two hundred of the scenarios include changes in the real-world environment that are not yet reflected in the HD map, such as new crosswalks or repainted lanes. By sharing a map dataset that labels the... | Provide a detailed description of the following dataset: Argoverse 2 Map Change |

arXiv-10 | Benchmark dataset for abstracts and titles of 100,000 ArXiv scientific papers.

This dataset contains 10 classes and is balanced (exactly 10,000 per class).

The classes include subcategories of computer science, physics, and math.

• Direct link: [Download](https://github.com/ashfarhangi/Protoformer/raw/main/data/A... | Provide a detailed description of the following dataset: arXiv-10 |

HarveyNER | fine-grained location names extraction from disaster-related tweets | Provide a detailed description of the following dataset: HarveyNER |

JGLUE | JGLUE, Japanese General Language Understanding Evaluation, is built to measure the general NLU ability in Japanese. | Provide a detailed description of the following dataset: JGLUE |

MyStone: Preprocessed expelled kidney stones | The dataset of images is built upon a collection of 454 samples kindly provided by the urology department of the Hospital Universitary de Bellvitge (Barcelona, Spain) in the time span of several years. They cover all the main 9 classes but cystine, for which just 4 samples were available so we discarded this class as m... | Provide a detailed description of the following dataset: MyStone: Preprocessed expelled kidney stones |

USPTO-190 | A chemical synthesis route dataset constructed from the USPTO reaction dataset (1976-Sep2016) and a list of commercially available

building blocks from eMolecules (~23.1M molecules). After processing, the dataset has 299202 training routes, 65274 validation routes, 190 test routes, and the corresponding target molec... | Provide a detailed description of the following dataset: USPTO-190 |

BEAT | BEAT has i) 76 hours, high-quality, multi-modal data captured from 30 speakers talking with eight different emotions and in four different languages, ii) 32 millions frame-level emotion and semantic relevance annotations.

Our statistical analysis on BEAT demonstrates the correlation of conversational gestures with \te... | Provide a detailed description of the following dataset: BEAT |

Probability words NLI | This dataset tests the capabilities of language models to correctly capture the meaning of words denoting probabilities (WEP), e.g. words like "probably", "maybe", "surely", "impossible".

We used probabilitic soft logic to combine probabilistic statements expressed with WEP (WEP-Reasoning) and we also used the UNLI ... | Provide a detailed description of the following dataset: Probability words NLI |

PACO | **Parts and Attributes of Common Objects (PACO)** is a detection dataset that goes beyond traditional object boxes and masks and provides richer annotations such as part masks and attributes. It spans 75 object categories, 456 object-part categories and 55 attributes across image (LVIS) and video (Ego4D) datasets. The ... | Provide a detailed description of the following dataset: PACO |

GeoDE | **GeoDE** is a geographically diverse dataset with 61,940 images from 40 classes and 6 world regions, and no personally identifiable information, collected through crowd-sourcing. | Provide a detailed description of the following dataset: GeoDE |

Aachen Day-Night v1.1 Benchmark | Aachen Day-Night v1.1 dataset is an extended version of the original *[Aachen Day-Night dataset](https://paperswithcode.com/dataset/aachen-day-night)*. Besides the original query images, the Aachen Day-Night v1.1 dataset contains an additional 93 nighttime queries. In addition, it uses a larger 3D model containing addi... | Provide a detailed description of the following dataset: Aachen Day-Night v1.1 Benchmark |

iV2V and iV2I+ | This dataset provides wireless measurements from two industrial testbeds: iV2V (industrial Vehicle-to-Vehicle) and iV2I+ (industrial Vehicular-to-Infrastructure plus sensor).

iV2V covers 10h of sidelink communication scenarios between 3 Automated Guided Vehicles (AGVs), while iV2I+ was conducted for around 16h at an... | Provide a detailed description of the following dataset: iV2V and iV2I+ |

abc_cc | ## Dataset Summary

The dataset used to train and evaluate [TunesFormer](https://huggingface.co/sander-wood/tunesformer) is collected from two sources: [The Session](https://thesession.org) and [ABCnotation.com](https://abcnotation.com). The Session is a community website focused on Irish traditional music, while ABC... | Provide a detailed description of the following dataset: abc_cc |

AviationQA | AviationQA is introduced in the paper titled- There is No Big Brother or Small Brother: Knowledge Infusion in Language Models for Link Prediction and Question Answering

The paper is accepted in the main conference of ICON 2022.

We create a synthetic dataset, AviationQA, a set of 1 million factoid QA pairs from 12... | Provide a detailed description of the following dataset: AviationQA |

Binette's 2022 Inventors Benchmark | Hand-disambiguation of a sample of U.S. patents inventor mentions from PatentsView.org.

Inventors we selected indirectly by sampling inventor mentions uniformly at random. This results in inventor sampled with probability proportional to their number of granted patents.

The time period considered is from 1976 to ... | Provide a detailed description of the following dataset: Binette's 2022 Inventors Benchmark |

MN-DS | **Multilabeled News Dataset** (**MN-DS**) is a dataset for news classification. It consists of 10,917 articles in 17 first-level and 109 second-level categories from 215 media sources. | Provide a detailed description of the following dataset: MN-DS |

HaDes | **HaDes** is a token-level, reference-free hallucination detection dataset named HAllucination DEtection dataSet. To create this dataset, a large number of text segments extracted from English language Wikipedia are perturbed, and then verified these with crowd-sourced annotations. | Provide a detailed description of the following dataset: HaDes |

FaithDial | **FaithDial** is a new benchmark for hallucination-free dialogues, by editing hallucinated responses in the Wizard of Wikipedia (WoW) benchmark.

FaithDial contains around 50K turns across 5.5K conversations. If trained on FaithDial, state-of-the-art dialogue models are significantly more faithful while also enhancin... | Provide a detailed description of the following dataset: FaithDial |

MSI | Article: A novel hierarchical model based on different emotion induction modalities for EEG emotion recognition | Provide a detailed description of the following dataset: MSI |

Compositional Visual Reasoning (CVR) | A fundamental component of human vision is our ability to parse complex visual scenes and judge the relations between their constituent objects. AI benchmarks for visual reasoning have driven rapid progress in recent years with state-of-the-art systems now reaching human accuracy on some of these benchmarks. Yet, there... | Provide a detailed description of the following dataset: Compositional Visual Reasoning (CVR) |

Fake News Detection | TICNN dataset | Provide a detailed description of the following dataset: Fake News Detection |

KAIST multi-spectral Day/Night 2018 | We introduce the **KAIST multi-spectral** dataset, which covers a greater range of drivable regions, from urban to residential, for autonomous systems. Our dataset provides different perspectives of the world captured in coarse time slots (day and night) in addition to fine time slots (sunrise, morning, afternoon, suns... | Provide a detailed description of the following dataset: KAIST multi-spectral Day/Night 2018 |

Causal Triplet | **Causal Triplet** is a causal representation learning benchmark featuring not only visually more complex scenes, but also two crucial desiderata commonly overlooked in previous works:

1) An actionable counterfactual setting, where only certain object-level variables allow forcounterfactual observations whereas oth... | Provide a detailed description of the following dataset: Causal Triplet |

AstroVision | AstroVision is a large-scale dataset comprised of 115,970 densely annotated, real images of 16 different small bodies from both legacy and ongoing deep space missions to facilitate the study of deep learning for autonomous navigation in the vicinity of a small body. | Provide a detailed description of the following dataset: AstroVision |

SFDDD | We've all been there: a light turns green and the car in front of you doesn't budge. Or, a previously unremarkable vehicle suddenly slows and starts swerving from side-to-side.

When you pass the offending driver, what do you expect to see? You certainly aren't surprised when you spot a driver who is texting, seeming... | Provide a detailed description of the following dataset: SFDDD |

ParagraphOrdreing | We have prepared a dataset, ParagraphOrdreing, which consists of around 300,000 paragraph pairs. We collected our data from Project Gutenberg. We have written an API for gathering and pre-processing in order to have the appropriate format for the defined task. Each example contains two paragraphs and a label that deter... | Provide a detailed description of the following dataset: ParagraphOrdreing |

BA-2motifs | It's a synthetic dataset, which contains 1000 graphs divided into two classes according to the motif they contain: either a “house” or a five-node cycle. | Provide a detailed description of the following dataset: BA-2motifs |

File S1 | -Tab 1 (Carboxylase table):

This expanded table contains additional information for carboxylase classes and splits them into individual examples.

Notes:

Primary direction is carboxylating if we could find a single natural or even artificial example that runs primarily in that direction.

Thermodynamic data is miss... | Provide a detailed description of the following dataset: File S1 |

File S2 | -Tab 1 Rubisco forms:

This excel sheet contains one row for every rubisco form considered in this review (some forms like IAq and IAc from [31] are not considered separately because they are only phylogenetically separated in the small subunits). For each form we include the pathway in which it functions, the chemical... | Provide a detailed description of the following dataset: File S2 |

File S3 | This .csv file contains all of the sequences used in the phylogenetic analysis (see above). For form annotation sequences under 360aa were excluded and no upper limit was set. Sequences removed by trimAL (using a gap threshold of 0.1) are labeled as “Unannotated” - many of them may not be actual rubiscos. Sequences tha... | Provide a detailed description of the following dataset: File S3 |

File S4 | This tree was generated as indicated above in the methods. The model chosen by the algorithm was LG_F_R10. | Provide a detailed description of the following dataset: File S4 |

File S5 | This tree was generated as indicated above in the methods. The model chosen by the algorithm was LG+F+R8. | Provide a detailed description of the following dataset: File S5 |

File S6 | This tree was generated as indicated above in the text for file S3 using model LG+F+G. | Provide a detailed description of the following dataset: File S6 |

File S7 | This tree was generated as indicated above in the methods. The model chosen by the algorithm was LG+R8. | Provide a detailed description of the following dataset: File S7 |

EGFxSet | EGFxSet (Electric Guitar Effects dataset) features recordings for all clean tones in a 22-fret Stratocaster, recorded with 5 different pickup configurations, also processed through 12 popular guitar effects. Our dataset was recorded in real hardware, making it relevant for music information retrieval tasks on real mus... | Provide a detailed description of the following dataset: EGFxSet |

VTC | VTC is a large-scale multimodal dataset containing video-caption pairs (~300k) alongside comments that can be used for multimodal representation learning. | Provide a detailed description of the following dataset: VTC |

Duke Breast Cancer MRI | Breast MRI scans of 922 cancer patients from Duke University, with tumor bounding box annotations, clinical, imaging, and many other features, and more. | Provide a detailed description of the following dataset: Duke Breast Cancer MRI |

SymphonyNet | First large-scale symphony generation dataset. | Provide a detailed description of the following dataset: SymphonyNet |

RxRx1 | **RxRx1** is a biological dataset designed specifically for the systematic study of batch effect correction methods. The dataset consists of 125,510 high-resolution fluorescence microscopy images of human cells under 1,138 genetic perturbations in 51 experimental batches across 4 cell types. | Provide a detailed description of the following dataset: RxRx1 |

ValNov Subtask A | Binary labels for Validity and Novelty respectively are given for each Conclusion. | Provide a detailed description of the following dataset: ValNov Subtask A |

ValNov Subtask B | Validity and Novelty are determined in a comparative setting between two conclusions at a time. For Validity and Novelty possible labels are "Conclusion 1 is better", "tie" and "Conclusion 2 is better", for Validity and Novelty respectively. | Provide a detailed description of the following dataset: ValNov Subtask B |

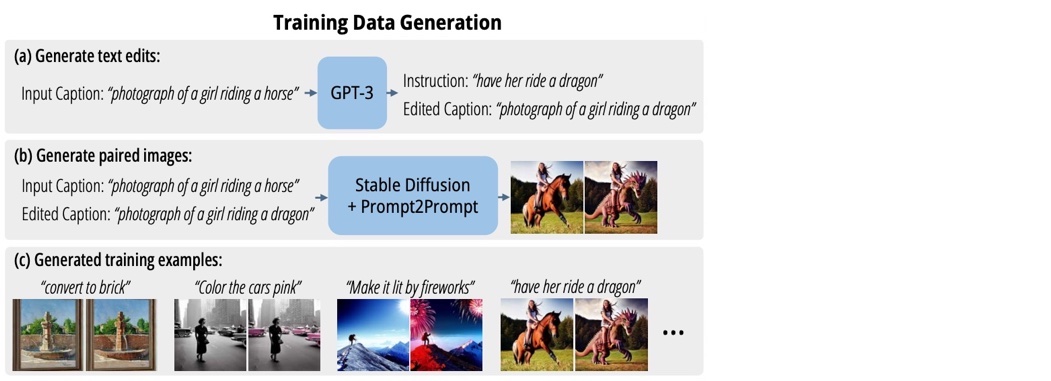

InstructPix2Pix Image Editing Dataset |

A dataset for image editing containing *>450k* samples of:

1. input image (with corresponding text caption describing the image)

2. text-based edit instruction

3. edited image (with corresponding text captio... | Provide a detailed description of the following dataset: InstructPix2Pix Image Editing Dataset |

AlexMI | # Alex Motor Imagery dataset.

## Dataset summary

Motor imagery dataset from the PhD dissertation of A. Barachant.

This Dataset contains EEG recordings from 8 subjects, performing 2

task of motor imagination (right hand, feet or rest). Data have been

recorded at 512Hz with 16 wet electrodes (Fpz, F7, F3... | Provide a detailed description of the following dataset: AlexMI |

BNCI 2014-001 Motor Imagery dataset. | ## BNCI 2014-001 Motor Imagery dataset

Dataset IIa from BCI Competition 4 [1].

### Dataset Description

This data set consists of EEG data from 9 subjects. The cue-based BCI paradigm consisted of four different motor imagery tasks, namely the imagination of movement of the left hand (class 1), right hand (class... | Provide a detailed description of the following dataset: BNCI 2014-001 Motor Imagery dataset. |

BNCI 2014-004 Motor Imagery dataset. | **Dataset description**

This data set consists of EEG data from 9 subjects of a study published in

[1]_. The subjects were right-handed, had normal or corrected-to-normal

vision and were paid for participating in the experiments.

All volunteers were sitting in an armchair, watching a flat screen m... | Provide a detailed description of the following dataset: BNCI 2014-004 Motor Imagery dataset. |

Motor Imagery dataset from Cho et al 2017. | **Dataset Description**

We conducted a BCI experiment for motor imagery movement (MI movement)

of the left and right hands with 52 subjects (19 females, mean age ±

SD age = 24.8 ± 3.86 years); Each subject took part in the same

experiment, and subject ID was denoted and indexed as s1, s2, ...,

s52. Subjects... | Provide a detailed description of the following dataset: Motor Imagery dataset from Cho et al 2017. |

Physionet Motor Imagery dataset. | ## Physionet MI dataset: https://physionet.org/pn4/eegmmidb/

This data set consists of over 1500 one- and two-minute EEG recordings,

obtained from 109 volunteers [2]_.

Subjects performed different motor/imagery tasks while 64-channel EEG were

recorded using the BCI2000 system (http://www.bci2000... | Provide a detailed description of the following dataset: Physionet Motor Imagery dataset. |

High-gamma dataset discribed in Schirrmeister et al. 2017 | High-gamma dataset discribed in Schirrmeister et al. 2017

Our “High-Gamma Dataset” is a 128-electrode dataset (of which we later only use

44 sensors covering the motor cortex, (see Section 2.7.1), obtained from 14

healthy subjects (6 female, 2 left-handed, age 27.2 ± 3.6 (mean ± std)) with

roughly 1000 (963.1 ± 1... | Provide a detailed description of the following dataset: High-gamma dataset discribed in Schirrmeister et al. 2017 |

Motor Imagey Dataset from Shin et al 2017 | ## Data Acquisition

EEG and NIRS data was collected in an ordinary bright room. EEG data was

recorded by a multichannel BrainAmp EEG amplifier with thirty active

electrodes (Brain Products GmbH, Gilching, Germany) with linked mastoids

reference at 1000 Hz sampling rate. The EEG amplifier was also used to

measure... | Provide a detailed description of the following dataset: Motor Imagey Dataset from Shin et al 2017 |

Mental Arithmetic Dataset from Shin et al 2017 | **Data Acquisition**

EEG and NIRS data was collected in an ordinary bright room. EEG data was

recorded by a multichannel BrainAmp EEG amplifier with thirty active

electrodes (Brain Products GmbH, Gilching, Germany) with linked mastoids

reference at 1000 Hz sampling rate. The EEG amplifier was also used to

measur... | Provide a detailed description of the following dataset: Mental Arithmetic Dataset from Shin et al 2017 |

Motor Imagery dataset from Weibo et al 2014. | Dataset from the article *Evaluation of EEG oscillatory patterns and

cognitive process during simple and compound limb motor imagery* [1]_.

It contains data recorded on 10 subjects, with 60 electrodes.

This dataset was used to investigate the differences of the EEG patterns

between simple limb motor imagery... | Provide a detailed description of the following dataset: Motor Imagery dataset from Weibo et al 2014. |

Motor Imagery dataset from Zhou et al 2016. | Dataset from the article *A Fully Automated Trial Selection Method for

Optimization of Motor Imagery Based Brain-Computer Interface* [1]_.

This dataset contains data recorded on 4 subjects performing 3 type of

motor imagery: left hand, right hand and feet.

Every subject went through three sessions, each of which ... | Provide a detailed description of the following dataset: Motor Imagery dataset from Zhou et al 2016. |

BNCI 2014-002 Motor Imagery dataset | **Dataset Description**

This data set consists of EEG data from 9 subjects. The cue-based BCI

paradigm consisted of four different motor imagery tasks, namely the imag-

ination of movement of the left hand (class 1), right hand (class 2), both

feet (class 3), and tongue (class 4). Two sessions o... | Provide a detailed description of the following dataset: BNCI 2014-002 Motor Imagery dataset |

BNCI 2015-001 Motor Imagery dataset | **Dataset description**

We acquired the EEG from three Laplacian derivations, 3.5 cm (center-to-

center) around the electrode positions (according to International 10-20

System of Electrode Placement) C3 (FC3, C5, CP3 and C1), Cz (FCz, C1, CPz

and C2) and C4 (FC4, C2, CP4 and C6). The acquisition hardware was a

... | Provide a detailed description of the following dataset: BNCI 2015-001 Motor Imagery dataset |

BNCI 2015-004 Motor Imagery dataset | **Dataset description**

We provide EEG data recorded from nine users with disability (spinal cord

injury and stroke) on two different days (sessions). Users performed,

follow- ing a cue-guided experimental paradigm, five distinct mental tasks

(MT). MTs include mental word association (condition WORD), mental

s... | Provide a detailed description of the following dataset: BNCI 2015-004 Motor Imagery dataset |

BMI/OpenBMI dataset for MI. | BMI/OpenBMI dataset for MI.

Dataset from Lee et al 2019 [1].

### Dataset Description

EEG signals were recorded with a sampling rate of 1,000 Hz and collected with 62 Ag/AgCl electrodes. The EEG amplifier used in the experiment was a BrainAmp (Brain Products; Munich, Germany). The channels were nasion-reference... | Provide a detailed description of the following dataset: BMI/OpenBMI dataset for MI. |

Munich Motor Imagery dataset | BMI/OpenBMI dataset for MI.

Dataset from Lee et al 2019 [1].

### Dataset Description

A trial started with the central display of a white fixation cross. After 3 s, a white arrow was superimposed on the fixation cross, either pointing to the left or the right. Subjects were instructed to perform haptic motor i... | Provide a detailed description of the following dataset: Munich Motor Imagery dataset |

Motor Imagery dataset from Ofner et al 2017 | ### Dataset description

We recruited 15 healthy subjects aged between 22 and 40 years with a mean

age of 27 years (standard deviation 5 years). Nine subjects were female,

and all the subjects except s1 were right-handed.

We measured each subject in two sessions on two different days, which were

... | Provide a detailed description of the following dataset: Motor Imagery dataset from Ofner et al 2017 |

OLKAVS | The dataset contains 1,150 hours of transcribed audio from 1,107 Korean speakers in a studio setup with nine different viewpoints and various noise situations. We also provide the pre-trained baseline models for two tasks, audio-visual speech recognition and lip reading. | Provide a detailed description of the following dataset: OLKAVS |

RGB Arabic Alphabet Sign Language (AASL) dataset | RGB Arabic Alphabet Sign Language (AASL) dataset | Provide a detailed description of the following dataset: RGB Arabic Alphabet Sign Language (AASL) dataset |

BB | The Bacteria Biotope (BB) Task is part of the [BioNLP Open Shared Tasks](http://2019.bionlp-ost.org) and meets the BioNLP-OST standards of quality, originality and data formats.

Manually annotated data is provided for training, development and evaluation of information extraction methods. Tools for the detailed evalua... | Provide a detailed description of the following dataset: BB |

BB-norm-habitat | In the BB-norm modality of this task, participant systems had to normalize textual entity mentions according to the OntoBiotope ontology for habitats.

See [BB-dataset](https://paperswithcode.com/dataset/bb) for more information. | Provide a detailed description of the following dataset: BB-norm-habitat |

BB-norm-phenotype | In the BB-norm modality of this task, participant systems had to normalize textual entity mentions according to the OntoBiotope ontology for phenotypes. See [BB-dataset](https://paperswithcode.com/dataset/bb) for more information. | Provide a detailed description of the following dataset: BB-norm-phenotype |

ChemDisGene | ChemDisGene, a new dataset for training and evaluating multi-class multi-label biomedical relation extraction models. | Provide a detailed description of the following dataset: ChemDisGene |

Traffic | **Abstract**: The task for this dataset is to forecast the spatio-temporal traffic volume based on the historical traffic volume and other features in neighboring locations.

| Data Set Characteristics | Number of Instances | Area | Attribute Characteristics | Number of Attributes | Date Donated | Associated Task... | Provide a detailed description of the following dataset: Traffic |

Sales | Forecast Sales using ARIMA and SARIMA | Provide a detailed description of the following dataset: Sales |

Bochrum movement data | The linked repository holds data from a controlled single-obstacle avoidance experiment recorded in a motion laboratory. If you are using this data, please cite the following sources for the data

B. Grimme, Analysis and identification of elementary invariants as building blocks of human arm movements. PhD thesis, In... | Provide a detailed description of the following dataset: Bochrum movement data |

The tourism forecasting competition | > The data we use include 366 monthly series, 427 quarterly series and 518 yearly series. They were supplied by both tourism bodies (such as Tourism Australia, the Hong Kong Tourism Board and Tourism New Zealand) and various academics, who had used them in previous tourism forecasting studies (please refer to the ackno... | Provide a detailed description of the following dataset: The tourism forecasting competition |

HOI-SDC | In order to avoid the training process of the model being influenced by a portion of HOI classes with a very small number of instances, we remove some of the HOI classes containing a very small number of instances and HOI classes with no interaction from the training \textbf{S}et for the \textbf{D}ouble \textbf{C}halle... | Provide a detailed description of the following dataset: HOI-SDC |

Subsets and Splits

No community queries yet

The top public SQL queries from the community will appear here once available.