
This article is based on Unit Testing in Java, to be published on:

Introduction

A broad assertion is one that is so scrupulous in nailing down every little detail about the behavior it is checking that it becomes brittle and hides its intent under its overwhelming breadth and depth. When you encounter a broad assertion, it’s hard to say what exactly it is supposed to check and, when you step back to observe, that test is probably breaking far more frequently than the average because it’s so picky that any change whatsoever will cause a difference in the expected output.

Let’s make this discussion a bit more concrete again by looking at an example test that suffers from this condition.

Example

The following example is my very own doing. I wrote it some years back as part of a sales presentation tracking system for a medical corporation. The corporation wanted to gather data on how the various sales presentations were carried out by the sales fleet that visited doctors to push their products. Essentially, they wanted a log of which salesman showed which slide of which presentation for how many seconds before moving on.

The solution involved a number of components. There was a little plug-in in the actual presentation file, triggering events when starting a new slide show, entering a slide, and so forth—each with a timestamp to signify when that particular event happened. Those events were pushed to a background application that appended them into a log file. Before synchronizing that log file with the centralized server, however, we transformed the log file into another format, preprocessing it a bit to make it easier for the centralized server to chomp the log file and dump the numbers into a central database. Essentially, we calculated the slide durations from timestamps.
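As a rough illustration of that last step, the duration calculation can be sketched as below. This is not the author's LogFileTransformer; the class name SlideDurations and its methods are hypothetical, and the sketch assumes every log line has the form "[yyyy-MM-dd HH:mm:ss] event" and that each event lasts until the next line's timestamp.

```java
import java.time.Duration;
import java.time.LocalDateTime;
import java.time.format.DateTimeFormatter;
import java.util.ArrayList;
import java.util.List;

// Hypothetical helper, not part of the original system.
class SlideDurations {
    private static final DateTimeFormatter FORMAT =
            DateTimeFormatter.ofPattern("yyyy-MM-dd HH:mm:ss");

    // Parses the timestamp between '[' and ']' at the start of a log line.
    static LocalDateTime timestampOf(String line) {
        return LocalDateTime.parse(line.substring(1, line.indexOf(']')), FORMAT);
    }

    // Returns the event name that follows "] ".
    static String eventOf(String line) {
        return line.substring(line.indexOf(']') + 2);
    }

    // Each event lasts until the timestamp of the next line;
    // the final line only closes the event before it.
    static List<String> durations(List<String> lines) {
        List<String> result = new ArrayList<>();
        for (int i = 0; i + 1 < lines.size(); i++) {
            long seconds = Duration.between(
                    timestampOf(lines.get(i)),
                    timestampOf(lines.get(i + 1))).getSeconds();
            result.add(eventOf(lines.get(i)) + "###" + seconds);
        }
        return result;
    }
}
```

Fed the screen lines from a log like the one in the test below, this yields entries such as screen1###1, in the same name###seconds shape the transformed file uses.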

The object responsible for this transformation was called a LogFileTransformer and, being test-infected as I was, I had written some tests for it. Listing 1 presents one of those tests—the one that suffered from broad assertion—along with the relevant setup. Have a look at it and see if you can detect the broad assertion.

Listing 1 Broad assertion makes a test brittle and opaque

    public class LogFileTransformerTest {
        private String expectedOutput;
        private String logFile;

        @Before
        public void setUpBuildLogFile() {
            StringBuilder lines = new StringBuilder();
            appendTo(lines, "[2005-05-23 21:20:33] LAUNCHED");
            appendTo(lines, "[2005-05-23 21:20:33] session-id###SID");
            appendTo(lines, "[2005-05-23 21:20:33] user-id###UID");
            appendTo(lines, "[2005-05-23 21:20:33] presentation-id###PID");
            appendTo(lines, "[2005-05-23 21:20:35] screen1");
            appendTo(lines, "[2005-05-23 21:20:36] screen2");
            appendTo(lines, "[2005-05-23 21:21:36] screen3");
            appendTo(lines, "[2005-05-23 21:21:36] screen4");
            appendTo(lines, "[2005-05-23 21:22:00] screen5");
            appendTo(lines, "[2005-05-23 21:22:48] STOPPED");
            logFile = lines.toString();
        }

        @Before
        public void setUpBuildTransformedFile() {
            StringBuilder file = new StringBuilder();
            appendTo(file, "session-id###SID");
            appendTo(file, "presentation-id###PID");
            appendTo(file, "user-id###UID");
            appendTo(file, "started###2005-05-23 21:20:33");
            appendTo(file, "screen1###1");
            appendTo(file, "screen2###60");
            appendTo(file, "screen3###0");
            appendTo(file, "screen4###24");
            appendTo(file, "screen5###48");
            appendTo(file, "finished###2005-05-23 21:22:48");
            expectedOutput = file.toString();
        }

        @Test
        public void transformationGeneratesRightStuffIntoTheRightFile() throws Exception {
            TempFile input = TempFile.withSuffix(".src.log").append(logFile);
            TempFile output = TempFile.withSuffix(".dest.log");
            new LogFileTransformer().transform(input.file(), output.file());
            assertTrue("Destination file was not created", output.exists());
            assertEquals(expectedOutput, output.content());
        }

        // rest omitted for clarity
    }

Did you see it? Did you see the broad assertion? You probably did—there are only two assertions in there. But, which of the two is the culprit here, and what makes it too broad?

The first assertion checks that the destination file was indeed created. The second assertion checks that the destination file’s content is what’s expected. Now, the value of the first assertion is questionable and it should probably be deleted. However, it’s the second assertion that’s our main concern—the broad assertion:

assertEquals(expectedOutput, output.content());

This is quite a relevant assertion in the sense that it verifies exactly what the name of the test implies—that the right stuff ended up in the right file. The problem is really that the test is too broad, resulting in the assertion’s being a wholesale comparison of the whole log file. It’s a thick safety net, that’s for sure, as even the tiniest of changes in the output will fail the assertion. And therein lies the problem.

A test that has never failed is of little value; it’s probably not testing anything. At the other end of the spectrum, a test that always fails is a mere nuisance. What we’re looking for is a test that has failed in the past, proving that it is able to catch a deviation from the desired behavior of the code it’s testing, and that will break again if we make such a change to that code.

The test in our example fails to fulfill this criterion by failing too easily, making it brittle and fragile. But that’s only a symptom of a more fundamental issue—the problem of being a broad assertion. The various small changes in the log file’s format or content that would break this test are valid reasons to fail the test. There’s nothing intrinsically wrong about the assertion. The problem lies in the test’s violation of a fundamental guiding principle for what constitutes a good test:

A test should have only one reason to fail.

If that principle seems familiar, it’s a variation of a well-known object-oriented design principle, the Single Responsibility Principle, which says, “A class should have one, and only one, reason to change.”1 Now let’s clarify why the principle of having only one reason to fail is so important.

Catching many kinds of changes in the generated output is good. However, when the test does fail, we want to know why. In our example it’s quite difficult to tell what happened if this test, transformationGeneratesRightStuffIntoTheRightFile, suddenly breaks. In practice, we’ll always have to look at the details to figure out what had changed and, consequently, broke the test. If the assertion is too broad, many of those details that break the test are in fact irrelevant.

How should we go about improving this test, then?

What to do about it?

The first order of action when encountering an overly broad assertion is to identify irrelevant details and remove them from the test. In our example, we might look at the log file being transformed and try to reduce the number of lines.

We want it to represent a valid log file and be elaborate enough for the purposes of the test. For example, our log file has timings for five screens. Maybe two or three would be enough? Could we get by with just one?

This question brings us to the next improvement to consider—splitting the test. Asking ourselves how few lines in the log file we could get by with quickly leads to concerns about the test no longer testing this and that. Listing 2 presents one possible solution where each aspect of the log file and its transformation are extracted into separate tests.

Listing 2 More relaxed, semantics-oriented assertions reduce brittleness and improve readability

    public class LogFileTransformerTest {
        private static final String END = "2005-05-23 21:21:37";
        private static final String START = "2005-05-23 21:20:33";
        private LogFile logFile;

        @Before
        public void setUp() {
            logFile = new LogFile(START, END);
        }

        // #1 Checks that common headers are placed correctly
        @Test
        public void overallFileStructureIsCorrect() throws Exception {
            StringBuilder expected = new StringBuilder();
            appendTo(expected, "session-id###SID");
            appendTo(expected, "presentation-id###PID");
            appendTo(expected, "user-id###UID");
            appendTo(expected, "started###2005-05-23 21:20:33");
            appendTo(expected, "finished###2005-05-23 21:21:37");
            assertEquals(expected.toString(), transform(logFile.toString()));
        }

        // #2 Checks screen durations' place in the log
        @Test
        public void screenDurationsGoBetweenStartedAndFinished() throws Exception {
            logFile.addContent("[2005-05-23 21:20:35] screen1");
            String out = transform(logFile.toString());
            assertTrue(out.indexOf("started") < out.indexOf("screen1"));
            assertTrue(out.indexOf("screen1") < out.indexOf("finished"));
        }

        // #3 Checks screen duration calculations
        @Test
        public void screenDurationsAreRenderedInSeconds() throws Exception {
            logFile.addContent("[2005-05-23 21:20:35] screen1");
            logFile.addContent("[2005-05-23 21:20:35] screen2");
            logFile.addContent("[2005-05-23 21:21:36] screen3");
            String output = transform(logFile.toString());
            assertTrue(output.contains("screen1###0"));
            assertTrue(output.contains("screen2###61"));
            assertTrue(output.contains("screen3###1"));
        }

        // rest omitted for brevity

        private String transform(String log) { ... }

        private void appendTo(StringBuilder buffer, String string) { ... }

        private class LogFile { ... }
    }

The solution above introduces a test helper class, LogFile, which establishes the standard “envelope”—the header and footer—for the log file being transformed based on the given starting and ending timestamps. This allows the second and the third test, screenDurationsGoBetweenStartedAndFinished and screenDurationsAreRenderedInSeconds, to append just the screen durations to the log, making the test more focused and easier to grasp. In other words, we delegate some of the responsibility for constructing the complete log file to LogFile. In order to ensure that that responsibility receives due diligence, the overall file structure is verified by the first test, overallFileStructureIsCorrect, in the context of the simplest possible scenario—an otherwise empty log file.
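The listing elides the body of LogFile, so the following is only a guess at what such an envelope helper might look like, not the author's implementation. It assumes the header consists of the LAUNCHED, session-id, user-id, and presentation-id lines seen in Listing 1, that the footer is the STOPPED line, and that lines are separated by "\n".

```java
import java.util.ArrayList;
import java.util.List;

// Hypothetical reconstruction of the test helper; the real one is not shown in the article.
class LogFile {
    private final String start;
    private final String end;
    private final List<String> content = new ArrayList<>();

    LogFile(String start, String end) {
        this.start = start;
        this.end = end;
    }

    // Adds one raw log line between the header and the footer.
    void addContent(String line) {
        content.add(line);
    }

    // Renders header, added content, and footer as one log file string.
    @Override
    public String toString() {
        StringBuilder log = new StringBuilder();
        appendTo(log, "[" + start + "] LAUNCHED");
        appendTo(log, "[" + start + "] session-id###SID");
        appendTo(log, "[" + start + "] user-id###UID");
        appendTo(log, "[" + start + "] presentation-id###PID");
        for (String line : content) {
            appendTo(log, line);
        }
        appendTo(log, "[" + end + "] STOPPED");
        return log.toString();
    }

    // Assumed line separator: a single newline.
    private static void appendTo(StringBuilder buffer, String line) {
        buffer.append(line).append('\n');
    }
}
```

Keeping the envelope in one place means a change to the header format touches this helper and the structure test, rather than every test that builds a log.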

This refactoring has given us more focus by hiding the details from each test that are irrelevant for that particular test. That is also the downside of this approach—some of the details are hidden. In applying this technique, we must ask ourselves what we value more—being able to see the whole in one place or being able to see the essence of a test quickly.

I suggest that, most of the time, when speaking of unit tests, the latter is more desirable as the fine-grained, focused tests point us quickly to the root of the problem in case of a test failure. With all tests making assertions against the whole transformed log file, for example, a small change in the file syntax could easily break all of our tests, making it more difficult to figure out what exactly is broken and where the problem is.

Summary

We can shoot ourselves in the proverbial foot by making assertions that are too broad.

A broad assertion also makes it difficult for the programmer to identify the intent and essence of the test. When you see a test that seems to bite off a lot, ask yourself what exactly you want to verify. Then, try to formulate your assertion in those terms.

| http://www.javabeat.net/what-is-broad-assertion-in-unit-testing-in-java/ | CC-MAIN-2014-42 | refinedweb | 1,792 | 52.7 |

This article is also available in Spanish.

Java Database Connectivity (JDBC) is a programming framework for Java developers writing programs that access information stored in databases, spreadsheets, and flat files. JDBC is commonly used to connect a user program to a "behind the scenes" database, regardless of what database management software is used to control the database. In this way, JDBC is cross-platform [1]. This article will provide an introduction and sample code that demonstrates database access from Java programs that use the classes of the JDBC API, which is available for free download from Sun's site [3].

A database that another program links to is called a data source. Many data sources, including products produced by Microsoft and Oracle, already use a standard called Open Database Connectivity (ODBC). Many legacy C and Perl programs use ODBC to connect to data sources. ODBC consolidated much of the commonality between database management systems. JDBC builds on this feature, and increases the level of abstraction. JDBC-ODBC bridges have been created to allow Java programs to connect to ODBC-enabled database software [1].

This article assumes that readers already have a data source established and are moderately familiar with the Structured Query Language (SQL), the command language for adding records, retrieving records, and other basic database manipulations. See Hoffman's tutorial on SQL if you are a beginner or need some refreshing [2].

Regardless of data source location, platform, or driver (Oracle, Microsoft, etc.), JDBC makes connecting to a data source less difficult by providing a collection of classes that abstract details of the database interaction. Software engineering with JDBC is also conducive to module reuse. Programs can easily be ported to a different infrastructure for which you have data stored (whatever platform you choose to use in the future) with only a driver substitution.

As long as you stick with the more popular database platforms (Oracle, Informix, Microsoft, MySQL, etc.), there is almost certainly a JDBC driver written to let your programs connect and manipulate data. You can download a specific JDBC driver from the manufacturer of your database management system (DBMS) or from a third party (in the case of less popular open source products) [5]. The JDBC driver for your database will come with specific instructions to make the class files of the driver available to the Java Virtual Machine, which your program is going to run. JDBC drivers use Java's built-in DriverManager to open and access a database from within your Java program.

To begin connecting to a data source, you first need to instantiate an object of your JDBC driver. This essentially requires only one line of code: a call to Class.forName, which tells the Java Virtual Machine to load the bytecode of your driver into memory, where the driver registers itself with the DriverManager and its methods become available to your program. The String parameter below is the fully qualified class name of the driver you are using for your platform combination:

Class.forName("org.gjt.mm.mysql.Driver").newInstance();

To actually manipulate your database, you need to get an object of the Connection class from your driver. At the very least, your driver will need a URL for the database and parameters for access control, which usually involves standard password authentication for a database account.

As you may already be aware, the Uniform Resource Locator (URL) standard is good for much more than telling your browser where to find a web page:

The URL for our example driver and database looks like this:

jdbc:mysql://db_server:3306/contacts/

Even though these two URLs look different, they are actually the same in form: the protocol for connection, machine host name and optional port number, and the relative path of the resource. Your JDBC driver will come with instructions detailing how to form the URL for your database. It will look similar to our example.
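To make the three parts concrete, here is a small sketch that takes a JDBC URL apart with java.net.URI. The class name JdbcUrlParts is hypothetical, and the sketch assumes the URL has the simple jdbc:protocol://host:port/path shape used in this article; real drivers define their own URL grammar, so treat your driver's instructions as authoritative.

```java
import java.net.URI;

// Hypothetical helper for illustration only.
class JdbcUrlParts {
    // Strips the leading "jdbc:" so that the remainder can be parsed as an ordinary URI.
    static URI parse(String jdbcUrl) {
        if (!jdbcUrl.startsWith("jdbc:")) {
            throw new IllegalArgumentException("Not a JDBC URL: " + jdbcUrl);
        }
        return URI.create(jdbcUrl.substring("jdbc:".length()));
    }
}
```

For jdbc:mysql://localhost:3306/contacts/, the parsed URI reports the scheme mysql, the host localhost, the port 3306, and the path /contacts/, which are the protocol, machine, and resource path described above.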

You will want to control access to your data, unless security is not an issue. The standard least common denominator for authentication to a database is a pair of strings, an account and a password. The account name and password you give the driver should have meaning within your DBMS, where permissions should have been established to govern access privileges.

Our example JDBC driver uses an object of the Properties class to pass information through the DriverManager, which yields a Connection object:

    Properties props = new Properties();
    props.setProperty("user", "contacts");
    props.setProperty("password", "blackbook");
    Connection con = DriverManager.getConnection(
        "jdbc:mysql://localhost:3306/contacts/", props);

Now that we have a Connection object, we can easily pass commands through it to the database, taking advantage of the abstraction layers provided by JDBC.

Databases are composed of tables, which in turn are composed of rows. Each database table has a set of columns that define what data types are in each record. Records are stored as rows of the database table, with one row per record. We use the data source connection created in the last section to execute a command to the database.

We write commands to be executed by the DBMS on a database using SQL. The syntax of a SQL statement, or query, usually consists of an action keyword, a target table name, and some parameters. For example:

    INSERT INTO songs VALUES ("Jesus Jones", "Right Here, Right Now");
    INSERT INTO songs VALUES ("Def Leppard", "Hysteria");

These SQL queries each added a row of data to table "songs" in the database. Naturally, the order of the values being inserted into the table must match the order of the corresponding columns of the table, and the data types of the new values must match the data types of the corresponding columns. For more information about the supported data types in your DBMS, consult your reference material.

To execute an SQL statement using a Connection object, you first need to create a Statement object, which will execute the query contained in a String.

    Statement stmt = con.createStatement();
    String query = ... // define query
    stmt.executeUpdate(query); // executeUpdate is the JDBC call for INSERT, UPDATE, and DELETE

In the course of modernizing a record keeping system, you encounter a flat file of data that was created long before the rise of the modern relational database. Rather than type all the data from the flat file into the DBMS, you may want to create a program that reads in the text file, inserting each row into a database table, which has been created to model the original flat file structure.

In this case, we examine a very simple text file. There are only a few rows and columns, but the principle here can be applied and scaled to larger problems. There are only a few steps:

Here is the code of the example program:

    import java.io.*;
    import java.sql.*;
    import java.util.*;

    public class TextToDatabaseTable {
        private static final String
            DB = "contacts",
            TABLE_NAME = "records",
            HOST = "jdbc:mysql://db_host:3306/",
            ACCOUNT = "account",
            PASSWORD = "nevermind",
            DRIVER = "org.gjt.mm.mysql.Driver",
            FILENAME = "records.txt";

        public static void main(String[] args) {
            try {
                // connect to db
                Properties props = new Properties();
                props.setProperty("user", ACCOUNT);
                props.setProperty("password", PASSWORD);
                Class.forName(DRIVER).newInstance();
                Connection con = DriverManager.getConnection(HOST + DB, props);
                Statement stmt = con.createStatement();

                // open text file
                BufferedReader in = new BufferedReader(new FileReader(FILENAME));

                // read and parse a line
                String line = in.readLine();
                while (line != null) {
                    StringTokenizer tk = new StringTokenizer(line);
                    String first = tk.nextToken(),
                           last = tk.nextToken(),
                           email = tk.nextToken(),
                           phone = tk.nextToken();

                    // execute SQL insert statement
                    String query = "INSERT INTO " + TABLE_NAME;
                    query += " VALUES(" + quote(first) + ", ";
                    query += quote(last) + ", ";
                    query += quote(email) + ", ";
                    query += quote(phone) + ");";
                    // executeUpdate is the JDBC call for statements that modify data
                    stmt.executeUpdate(query);

                    // prepare to process next line
                    line = in.readLine();
                }
                in.close();
            } catch (Exception e) {
                e.printStackTrace();
            }
        }

        // protect data with quotes
        private static String quote(String include) {
            return ("\"" + include + "\"");
        }
    }
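Wrapping each value in quotes by hand works for tidy input, but it breaks as soon as a value itself contains a quote character. One common alternative is a PreparedStatement with placeholders, sketched below. The class PreparedInsert and its method names are hypothetical, and the sketch assumes the table name comes from trusted code rather than from the file, since it is still concatenated into the SQL text.

```java
import java.sql.Connection;
import java.sql.PreparedStatement;
import java.sql.SQLException;
import java.util.StringTokenizer;

// Hypothetical helper for illustration only.
class PreparedInsert {
    // Builds "INSERT INTO <table> VALUES (?, ?, ...)" with one placeholder per column.
    static String insertSql(String table, int columns) {
        StringBuilder sql = new StringBuilder("INSERT INTO ").append(table).append(" VALUES (");
        for (int i = 0; i < columns; i++) {
            sql.append(i == 0 ? "?" : ", ?");
        }
        return sql.append(")").toString();
    }

    // Inserts one whitespace-separated line; the driver takes care of quoting each value.
    static void insertLine(Connection con, String table, String line) throws SQLException {
        StringTokenizer tk = new StringTokenizer(line);
        int columns = tk.countTokens();
        try (PreparedStatement ps = con.prepareStatement(insertSql(table, columns))) {
            for (int i = 1; i <= columns; i++) {
                ps.setString(i, tk.nextToken()); // JDBC parameters are numbered from 1
            }
            ps.executeUpdate();
        }
    }
}
```

Inside the read loop, a call such as PreparedInsert.insertLine(con, TABLE_NAME, line) would stand in for the string-building and the quote helper.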

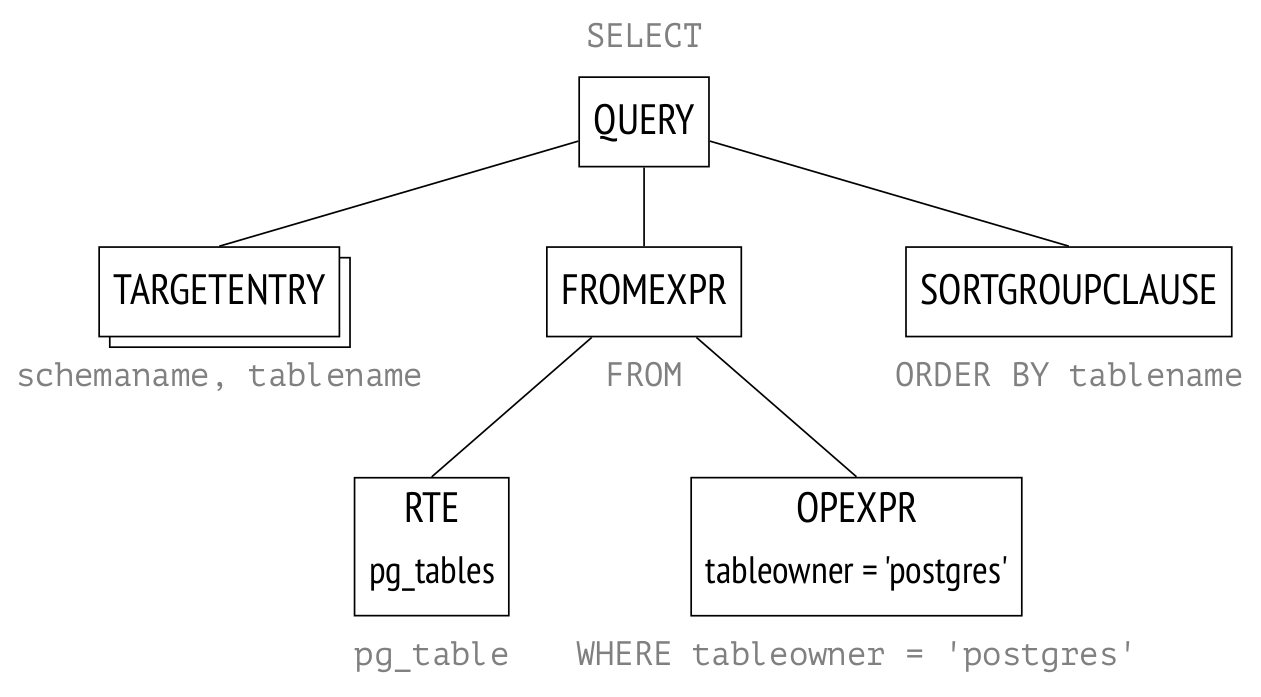

Perhaps even more often than inserting data, you will want to retrieve existing information from your database and use it in your Java program. The usual way to implement this is with another type of SQL query, which selects a set of rows and columns from your database and appears very much like a table. The rows and columns of your result set will be a subset of the tables you queried, where certain fields match your parameters. For example:

SELECT title FROM songs WHERE artist="Def Leppard";

This query returns:

The boxed portion above is a sample result set from a particular database program. In a Java program, this SQL statement can be executed in much the same way as in the insert example, but with executeQuery, and additionally we must capture the results in a ResultSet object.

    Statement stmt = con.createStatement();
    String query = "SELECT * FROM junk;"; // define query
    ResultSet answers = stmt.executeQuery(query);

The JDBC version of a query result set has a cursor that initially points to the row just before the first row. To advance the cursor, use the next() method. If you know the names of the columns from your result set, you can refer to them by name. You can also refer to the columns by number, starting with 1. Usually you will want to access all of the rows of your result set, using a loop as in the following code segment:

    while (answers.next()) {
        String name = answers.getString("name");
        int number = answers.getInt("number");
        // do something interesting
    }

All database tables have meta data that describe the names and data types of each column; result sets are the same way. You can use the ResultSetMetaData class to get the column count and the names of the columns, like so:

    ResultSetMetaData meta = answers.getMetaData();
    String[] colNames = new String[meta.getColumnCount()];
    for (int col = 0; col < colNames.length; col++)
        colNames[col] = meta.getColumnName(col + 1);

We choose to write a simple software tool to show the rows and columns of a database table.

In this case, we are going to query a database table for all its records, and display the result set to the command line. We could also have created a graphical front end made of Java Swing components.

Notice that we do not know anything except the URL and authentication information to the database table we are going to display. Everything else is determined from the ResultSet and its meta data.

Comments in the code explain the actions of the program. Here is the code of the example program:

    import java.sql.*;
    import java.util.*;

    public class DatabaseTableViewer {
        private static final String
            DB = "contacts",
            TABLE_NAME = "records",
            HOST = "jdbc:mysql://db_host:3306/",
            ACCOUNT = "account",
            PASSWORD = "nevermind",
            DRIVER = "org.gjt.mm.mysql.Driver";

        public static void main(String[] args) {
            try {
                // authentication properties
                Properties props = new Properties();
                props.setProperty("user", ACCOUNT);
                props.setProperty("password", PASSWORD);

                // load driver and prepare to access
                Class.forName(DRIVER).newInstance();
                Connection con = DriverManager.getConnection(HOST + DB, props);
                Statement stmt = con.createStatement();

                // execute select query
                String query = "SELECT * FROM " + TABLE_NAME + ";";
                ResultSet table = stmt.executeQuery(query);

                // determine properties of table
                ResultSetMetaData meta = table.getMetaData();
                String[] colNames = new String[meta.getColumnCount()];
                Vector[] cells = new Vector[colNames.length];
                for (int col = 0; col < colNames.length; col++) {
                    colNames[col] = meta.getColumnName(col + 1);
                    cells[col] = new Vector();
                }

                // hold data from result set
                while (table.next()) {
                    for (int col = 0; col < colNames.length; col++) {
                        Object cell = table.getObject(colNames[col]);
                        cells[col].add(cell);
                    }
                }

                // print column headings
                for (int col = 0; col < colNames.length; col++)
                    System.out.print(colNames[col].toUpperCase() + "\t");
                System.out.println();

                // print data row-wise
                while (!cells[0].isEmpty()) {
                    for (int col = 0; col < colNames.length; col++)
                        System.out.print(cells[col].remove(0).toString() + "\t");
                    System.out.println();
                }
            }
            // exit more gently
            catch (Exception e) {
                e.printStackTrace();
            }
        }
    }
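One detail worth watching in a viewer like this is SQL NULL: getObject returns a Java null for such cells, and calling toString() on it throws a NullPointerException. The sketch below shows one way to keep the printing step separate from the database access and tolerant of nulls. The class TableFormatter is hypothetical, and it assumes each row has already been copied out of the ResultSet into an Object[].

```java
import java.util.List;

// Hypothetical helper for illustration only.
class TableFormatter {
    // Renders upper-cased headings followed by one tab-separated line per row.
    static String format(String[] colNames, List<Object[]> rows) {
        StringBuilder out = new StringBuilder();
        for (String name : colNames) {
            out.append(name.toUpperCase()).append('\t');
        }
        out.append('\n');
        for (Object[] row : rows) {
            for (Object cell : row) {
                // String.valueOf prints "null" for a null cell instead of throwing
                out.append(String.valueOf(cell)).append('\t');
            }
            out.append('\n');
        }
        return out.toString();
    }
}
```

Because the formatter takes plain arrays and lists, it can be exercised without a database connection.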

In this article, you saw a quick introduction to manipulating databases with JDBC. More advanced features of JDBC require a greater knowledge of databases. See the references for more articles about JDBC and its applications [4]. For a Java programmer, JDBC is a good tool to have in your arsenal.

I encourage you to copy the code in this article to your own computer. With this article and documentation for another JDBC driver, you are on your way to creating data source-driven Java programs. Experiment with this code, and adapt it to connect to data sources available to you. | http://www.acm.org/crossroads/columns/ovp/march2001.html | crawl-001 | refinedweb | 2,034 | 56.45 |

Hi,

So I have a custom component with several source files (.h and .c). I would like to limit the inclusion of some of these files in the projects depending on one of the component parameters.

It's similar to how the SCB component works: depending on the chosen option (SPI, I2C, UART, etc.), the header and source files of the chosen option are included and the other header and source files are not.

I have read the custom component author documentation but could not find information on how to do this. Does anyone know if it's possible?

I'm using Creator 4.1 if that helps.

Thanks in advance

You will just need to enter some #if in your component API files to control the including of the required .h files.

Bob

No doubt the #if logic will work with the .h files, but can something similar be done to selectively make a .c file part of the project?

Hi, that's a good idea for including .h files; actually, it is how the header files for the different peripherals of the SCB block are included. From the SCB.h file:

#if (`$INSTANCE_NAME`_SCB_MODE_I2C_INC)

#include "`$INSTANCE_NAME`_I2C_PVT.h"

#endif /* (`$INSTANCE_NAME`_SCB_MODE_I2C_INC) */

#if (`$INSTANCE_NAME`_SCB_MODE_EZI2C_INC)

#include "`$INSTANCE_NAME`_EZI2C_PVT.h"

#endif /* (`$INSTANCE_NAME`_SCB_MODE_EZI2C_INC) */

#if (`$INSTANCE_NAME`_SCB_MODE_SPI_INC || `$INSTANCE_NAME`_SCB_MODE_UART_INC)

#include "`$INSTANCE_NAME`_SPI_UART_PVT.h"

#endif /* (`$INSTANCE_NAME`_SCB_MODE_SPI_INC || `$INSTANCE_NAME`_SCB_MODE_UART_INC) */

but as tonyL says, it doesn't seem to help for .c files. | https://community.cypress.com/thread/9880 | CC-MAIN-2017-47 | refinedweb | 240 | 59.7 |


09-14-2013 03:48 PM

I can't seem to be able to change the wifi state. I can read the state of the wifi though. I saw in a previous post that this was not possible.

Is it still impossible?

09-14-2013 04:59 PM - edited 09-14-2013 05:01 PM

This seems to imply that it will turn the wifi on

I'm not sure if it will work as it says it starts it in STA mode and I have no idea what that means but It's the only thing I noticed about turning the wifi on and off

here's other wifi related info

If this doesnt do the trick I would suggest submitting a Jira ticket

09-14-2013 11:18 PM

Actually that's an enum to know the status of the wifi radio. This

Will let you change the status of the wifi. My code is

    #include "WifiController.hpp"
    #include <wifi/wifi_service.h>
    #include "GeoNotification.hpp"

    WifiController::WifiController() {}

    WifiController::~WifiController() {}

    int WifiController::toggleWifiState(bool state) {
        getWifiState();
        int status = wifi_set_sta_power(state);
        if (status == WIFI_SUCCESS)
            qDebug() << "WIFI ENABLE SUCCESS CURRENT STATE: " << state;
        else
            qDebug() << "WIFI ENABLE FAILURE CURRENT STATE: " << state;
        return status;
    }

    int WifiController::getWifiState() {
        wifi_status_t status;
        wifi_get_status(&status);
        if (status == WIFI_STATUS_BUSY) {
            return 2;
        }
        if (status == WIFI_STATUS_RADIO_ON) {
            return 1;
        }
        if (status == WIFI_STATUS_RADIO_OFF) {
            return 0;
        }
        return 0;
    }

I call the toggleWifiState() function to change the wifi state. But it doesn't work

I am stuck on this problem for a long time.

09-15-2013 06:10 AM

That would be the code from the link I posted, appears that it does work

=)

09-15-2013 06:17 AM

09-15-2013 06:20 AM

That would likely mean that STA mode isn't regular wifi, and your best bet would be to file a feature request ticket on jira | https://supportforums.blackberry.com/t5/Native-Development/Is-it-currently-possible-to-toggle-wifi-state-programmatically/m-p/2589733 | CC-MAIN-2016-44 | refinedweb | 324 | 58.21 |

Python client wrapper library for WSDL API

This project received 11 bids from talented freelancers with an average bid price of $1134 USD.

Skills Required

Project Budget: $750 - $1500 USD

Total Bids: 11

Project Description

The output of this project should be a python 2.7 module that can be imported by any other python script or module that wraps a specific WSDL API from the below SaaS provider. The module should contain a class that is built to handle all interactions with the WSDL interface, including automatically taking care of authentication and session management. The class should have simple calls that match up to the WSDL API interface. Note that the below SaaS provider has broken up the WSDL interface into multiple WSDL definitions, and all must be accessible via the module/class. The class should also be easily extendable as new functions are defined or new WSDL interfaces are defined.

Data retrieved from the WSDL APIs must be made available via memory object using standard python 2.7 data structures to be used in the calling python script/module to be used for further data processing.

[url removed, login to view]

The final product should be constructed in such a way that the library could be used to talk to the hosted solution or a privately hosted copy of the code. (i.e. be able to define the namespace and URL to access the namespace)

The use of GPL/GNU or other open source licensing for WSDL or XML support is encouraged and allowed. All works done on this project must also carry a GPL license for redistribution.

All source code and working examples must be delivered for acceptance of the project.

Examples should include:

-setting authentication and namespace

-retrieving a list of Employees, Clients, Projects and Tasks

-retrieving a list of time entries by date range and by date range/employeeID

The developer should sign up for a trial account to the TimeLive hosted solution to test and build this. | https://www.freelancer.com/jobs/Python-Engineering/Python-client-wrapper-library-for/ | CC-MAIN-2015-22 | refinedweb | 339 | 55.98 |

React Redux: React is a JavaScript library created by Facebook. It is one of the most popular JavaScript libraries and is used for building user interfaces (UI), especially for single-page applications. React lets the developer break a complicated UI down into simpler pieces. We can change particular data in a web application without refreshing the page. React also allows creating reusable components.

Advantages of React js

Easy to learn and Easy to use:

React is easy to learn and simple to use, and comes with plenty of good documentation, tutorials, and training resources. You can use plain JavaScript to create a web application and then handle it using React. It is also known as the V in the MVC (Model View Controller) pattern.

Virtual DOM:

The virtual DOM is an in-memory representation of the real DOM (Document Object Model). It is a lightweight JavaScript object that starts out as a copy of the real DOM.

It helps improve performance, so the app renders quickly.

Code readability increases with JSX:

JSX stands for JavaScript XML. It is a syntax used by React that combines the expressiveness of JavaScript with an HTML-like template syntax. JSX makes your code simpler and easier to read.

Reusable Components:

Each component has its own logic and controls its own rendering, and can be reused wherever you need it. Component reusability helps make your application simpler and increases performance.

Need for React Redux:

1) The core problem with React is state management.

2) The same data may need to be displayed in multiple places. Redux takes a different approach: it stores all of your application state in one place, called the store. Components then dispatch state changes to the store instead of passing them directly to other components.

What is Redux?

Redux is a predictable state container for JavaScript applications. It helps you write applications that behave consistently, run in different environments, and are easy to test. Redux is mostly used for state management.

Advantages of Using Redux

Redux makes state Predictable:

In Redux the state is predictable: when the same state and action are passed to a reducer, it always produces the same result, because reducers are pure functions. The state is also immutable and is never changed in place. This makes otherwise arduous tasks, such as infinite undo and redo, possible.

Maintainability:

Redux is strict about how code should be organized, which makes understanding the structure of any redux application easier for someone with redux knowledge. This generally makes the maintenance easier.

Ease of testing:

Redux apps can be easily tested because the functions used to change the state are pure functions.

Redux-data flow (diagram)

Redux is composed of the following components:

Action

Reducer

Store

View

Action: Actions are payloads of information that send data from your application to your store. Actions describe the fact that something happened, but they do not specify how the application state changes in response.

An action must have a type property that indicates the type of action being performed.

Types should be defined as string constants.

Action-type:

export const ADD_ITEM = 'ADD_ITEM';

Action-creator:

import * as actionType from './action-types';

function addItem(item) {

return {

type: actionType.ADD_ITEM, payload:item

}

}

Reducer: A reducer is a pure function that specifies how the application state changes in response to an action. Reducers handle the actions dispatched by components. A reducer takes the previous state and an action and returns a new state. Reducers do not manipulate the original state passed to them; they make their own copies and update those.

function reducer(state = initialState, action) {

switch (action.type) {

case 'ADD_ITEM':

return Object.assign({}, state, { items: [...state.items, { item: action.payload }] });

default:

return state;

}

}

Things you should never do inside a reducer

Mutate its arguments

Perform side effects like API calls

Call non-pure functions like Math.random()

Store

A store is the object that brings all of the pieces together. It holds the state of your whole application, passes every dispatched action to the root reducer to calculate the new state, and notifies its subscribers when the state changes. It makes the development of large applications easier and faster. The store is accessible to each component.

import { createStore } from 'redux';

import todoApp from './reducers';

// pass the imported root reducer to createStore
let store = createStore(todoApp);

View:

The only purpose of the view is to display the data passed by the store.

Conclusion:-

In conclusion, we should use React with Redux because Redux solves the state management problem. Redux stores your whole application state in a single place, a central store that is accessible to each component.


Discussion (0) | https://dev.to/ashikacronj/react-redux-a-complete-guide-to-beginners-2a45 | CC-MAIN-2021-25 | refinedweb | 775 | 57.37 |


The Windows SDK provides the WideCharToMultiByte function to convert a Unicode, or UTF-16, string (WCHAR*) to a character string (CHAR*) using a particular code page. Windows also provides the MultiByteToWideChar function to convert a character string from a particular code page to a Unicode string. These functions can be a bit daunting at first, but unless you have a lot of legacy code or APIs to deal with you can just specify CP_UTF8 as the code page and these functions will convert between Unicode UTF-16 and UTF-8 formats. UTF-8 isn’t really a code page in the original sense, but the API functions live on and now provide support for UTF conversions.

ATL provides a set of class templates that wrap these functions to simplify conversions even further. It takes a fairly efficient and elegant approach to memory management (compared to previous versions of ATL) that should serve you well in most cases. CW2A is a typedef for the CW2AEX class template that wraps the WideCharToMultiByte function. Similarly, CA2W is a typedef for the CA2WEX class template that wraps the MultiByteToWideChar function.

In the example below I start with a Unicode string that includes the Greek capital letters for Alpha and Omega. The string is converted to UTF-8 with CW2A and then back to Unicode with CA2W. Be sure to specify CP_UTF8 as the second parameter in both cases otherwise ATL will use the current ANSI code page.

Keep in mind that although UTF-8 strings look like character strings, you cannot rely on pointer arithmetic to subscript them, as the characters may actually consume anywhere from one to four bytes. It’s also possible that characters in a UTF-16 string may require more than two bytes should they fall in a range above U+FFFF. In general you should treat user input as opaque buffers.

#include <atlconv.h>
#include <atlstr.h>

#define ASSERT ATLASSERT

int main()
{
    const CStringW unicode1 = L"\x0391 and \x03A9"; // 'Alpha' and 'Omega'

    const CStringA utf8 = CW2A(unicode1, CP_UTF8);

    ASSERT(utf8.GetLength() > unicode1.GetLength());

    const CStringW unicode2 = CA2W(utf8, CP_UTF8);

    ASSERT(unicode1 == unicode2);
}
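The size difference that the first assertion relies on can be checked outside of ATL as well. The following is only a sketch in Python rather than C++, and the helper names `utf16_units` and `utf8_bytes` are made up for this illustration. It counts UTF-16 code units and UTF-8 bytes for the same Alpha and Omega string, and for one character above U+FFFF.

```python
def utf16_units(text):
    """Number of 16-bit code units the text occupies in UTF-16."""
    return len(text.encode("utf-16-le")) // 2


def utf8_bytes(text):
    """Number of bytes the text occupies in UTF-8."""
    return len(text.encode("utf-8"))


sample = "\u0391 and \u03a9"   # 'Alpha' and 'Omega', as in the ATL example
emoji = "\U0001F600"           # a character above U+FFFF

print(utf16_units(sample), utf8_bytes(sample))
print(utf16_units(emoji), utf8_bytes(emoji))

# Converting to UTF-8 and back returns the original string.
round_trip = sample.encode("utf-8").decode("utf-8")
```

The two Greek letters take two bytes each in UTF-8 but a single code unit in UTF-16, while the character above U+FFFF needs a surrogate pair in UTF-16 and four bytes in UTF-8.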


its not like UTF16 is always 2bytes either

asdf: Thanks I forgot to mention that. I updated the text to point that out. | http://weblogs.asp.net/kennykerr/archive/2008/07/24/visual-c-in-short-converting-between-unicode-and-utf-8.aspx | crawl-002 | refinedweb | 408 | 60.24 |

FH: You walk over the AST with a function that has the signature "IronJS.Ast -> System.Linq.Expressions.Expression". It basically transforms the internal AST nodes into the equivalent DLR ones.

FH: It's a superset, or rather an evolution, of the 3.5 version.

FH: Yes

FH: If you run IronJS on a pre-4.0 version then you need to include the proper assemblies which can be downloaded and compiled from dlr.codeplex.com and all the same types will exist as in 4.0, just under a different namespace.

FH: IronJS itself doesn’t really come with a pre-defined use-case, I would say that anywhere you would like users of your application to be able to do their own scripting.

I know of a few people that have used it together with ASP.NET/MVC to both create view templates and some sort of ".net-nodejs-hybrid".

FH: Currently, no, there are a few low level things IronJS does which are not allowed in SL.

FH: It would not help against XSS, as this has nothing to do with the runtime itself, and with the quality of today's JavaScript runtimes I don't think there would be a huge benefit to run IronJS in the browser.

FH: IronJS uses the .NET GC.

FH: It cannot, sadly.

FH: There is nothing stopping you from exposing LINQ to IronJS manually, but it's not supported out of the box. Also, what would it mean to have LINQ in IronJS? Not that much, I think. Maybe I sound negative, but JavaScript as a language is not very concise when using lambdas, etc.

FH: Yes they are faster, no doubt.

FH: The parsing is still in F#; it's the core runtime classes that represent the JS environment that have been moved to C#. This was done by John Gietzen, who contributed a lot of code, and it was done because it's easier for other people to interoperate with them.

FH: Yes, I am.

FH: Honestly my contact with Microsoft and the trust in the quality of the code the DLR team put out. Also the DLR had been proven with IronRuby and IronPython. Nothing bad against Parrot though, as I have never even spoken to them.

IronJS on github

IronJS Blog

Parrot virtual machine

Homes found for Iron languages

Microsoft lets go of Iron languages

Microsoft's Dynamic languages are dying

To be informed about new articles on I Programmer, install the I Programmer Toolbar, subscribe to the RSS feed, follow us on, Twitter, Facebook, Google+ or Linkedin, or sign up for our weekly newsletter. | http://www.i-programmer.info/professional-programmer/i-programmer/4482.html?start=1 | CC-MAIN-2013-48 | refinedweb | 440 | 72.16 |


In this part of the post, I would like to document the steps needed to run an existing Django project on gunicorn as the backend server and nginx as the reverse proxy server. (Please refer to Part 1 of this post)

Disclaimer: this post is based on this site, but I added a lot of details that weren't explained there.

Setup nginx

Before starting the nginx server, we want to modify its config file. I installed nginx via brew on my machine, and the conf file is located here:

/usr/local/etc/nginx/nginx.conf

We could modify this file directly to add configuration for our site, but this is not a good idea, because you may have multiple web apps running behind this nginx server and each web app may need its own configuration. Instead, let's first create another folder for storing these site-specific configuration files:

cd /usr/local/etc/nginx
mkdir sites-enabled

We can store our site specific config here but wouldn't it be better if we store the config file along with our project files together? Now, let's navigate to our project folder. (I named my project testproject and stored it under /Users/webapp/Apps/testproject)

cd /Users/webapp/Apps/testproject
touch nginx.conf

Here is my config file:

server {
    listen 80;
    server_name your_server_ip;
    access_log /Users/webapp/logs/access.log;  # <- make sure to create the logs directory
    error_log /Users/webapp/logs/error.log;    # <- you will need this file for debugging

    location / {
        proxy_pass;  # <- let nginx pass traffic to the gunicorn server
    }

    location /static {
        root /Users/webapp/Apps/testproject/vis;  # <- let nginx serve the static contents
    }
}

Let me elaborate on the '/static' part. It means that any traffic to 'your_server_ip/static' will be served from '/Users/webapp/Apps/testproject/vis/static'. You might ask why it isn't served from '/Users/webapp/Apps/testproject/vis' (without '/static' at the end). The reason is that when you use 'root' in the config, nginx appends the requested path, including the '/static' part, to it. So be aware! If you prefer to spell out the full directory, use alias instead of root and append /static to the end of the path:

location /static {
    alias /Users/webapp/Apps/testproject/vis/static;
}
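To make the difference between root and alias concrete, here is a small sketch in Python that mimics how nginx maps a request path to a file path under each directive. The function names `resolve_with_root` and `resolve_with_alias` are hypothetical helpers written for this illustration; they are not part of nginx, and they ignore details such as regex locations.

```python
def resolve_with_root(root, uri):
    """With `root`, the full request URI is appended to the root directory."""
    return root.rstrip("/") + uri


def resolve_with_alias(location, alias, uri):
    """With `alias`, the matched location prefix is replaced by the alias path."""
    return alias.rstrip("/") + uri[len(location):]


uri = "/static/vis/css/general.css"

via_root = resolve_with_root("/Users/webapp/Apps/testproject/vis", uri)
via_alias = resolve_with_alias(
    "/static", "/Users/webapp/Apps/testproject/vis/static", uri
)

print(via_root)
print(via_alias)
```

Both settings end up pointing at the same file, which is why either form works for this project as long as the path matches the directive you picked.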

Here is the folder structure of my project :

/Users/webapp/Apps/testproject/
    manage.py            <- the manage.py file generated by Django
    nginx.conf           <- the nginx config file for your project
    gunicorn.conf.py     <- the gunicorn config file that we will create later, just keep on reading
    testproject/         <- automatically generated by Django
        settings.py
        urls.py
        wsgi.py          <- automatically generated by Django and used by gunicorn later
    vis/                 <- the webapp that I wrote
        admin.py
        models.py
        test.py
        urls.py
        template/
            vis/
                index.html
        static/          <- the place where I stored all of the static files for my project
            vis/
                css/
                images/
                js/

All of the static files are in the /testproject/vis/static folder, so that's where nginx should be looking. You might ask that the static files live in their own folders rather than right under the /static/ path. How does nginx know where to fetch them? Well, this is not nginx's problem to solve. It is your responsibility to code the right path in your template. This is what I wrote in my template/vis/index.html page:

href="{% static 'vis/css/general.css' %}"

It is likely that you won't get the path right the first time. But that's ok. Just open up Chrome's developer tools and look at the error messages in the console to see which part of the path is messed up. Then, either fix your nginx config file or your template.

To let nginx read our newly created config file:

cd /usr/local/etc/nginx/
nano nginx.conf

Find the ' http { ' header, add this line under it:

http {
    include /usr/local/etc/nginx/sites-enabled/*;

This line tells nginx to look for config files under the 'sites-enabled' folder. Instead of copying our project's nginx.conf into the 'sites-enabled' folder, we can simply create a soft link:

cd /usr/local/etc/nginx/sites-enabled
ln -s /full_path/to/your/django_project a_name
# in my case, this is what my link command looks like:
# ln -s /Users/webapp/Apps/testproject/nginx.conf testproject
# this would create a soft link named testproject which points to the real config file

Once this is done, you can finally start up the nginx server:

To start nginx, use

sudo nginx

To stop it, use

sudo nginx -s stop

To reload the config file without shutting down the server:

sudo nginx -s reload

Please refer to this page for a quick overview of the commands.

Setup gunicorn

Setting up gunicorn is more straightforward (without considering optimization). First, let's write a config file for gunicorn. Navigate to the directory which contains your manage.py file. For me, this is what I did:

cd /Users/webapp/Apps/testproject
touch gunicorn.conf.py  # yep, the config file is a python script

This is what I put in the config file:

bind = "127.0.0.1:9000"  # Don't use port 80 because nginx occupies it already.
errorlog = '/Users/webapp/logs/gunicorn-error.log'  # Make sure you have created the logs folder
accesslog = '/Users/webapp/logs/gunicorn-access.log'
loglevel = 'debug'
workers = 1  # the number of recommended workers is '2 * number of CPUs + 1'
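Because the gunicorn config file is a Python script, the rule of thumb from the comment can be computed instead of hard-coded. The following is a minimal sketch; the helper name `recommended_workers` is made up for this example and is not a gunicorn API.

```python
import multiprocessing


def recommended_workers(cpu_count=None):
    """Apply the '2 * number of CPUs + 1' rule of thumb."""
    if cpu_count is None:
        cpu_count = multiprocessing.cpu_count()
    return 2 * cpu_count + 1


# In gunicorn.conf.py this value could replace the hard-coded 1.
workers = recommended_workers()
```

On a machine with 4 CPUs this gives 9 workers. Treat it as a starting point and adjust it for your own workload.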

Save the file and to start gunicorn, make sure you are at the same directory level as where the manage.py file is and do:

gunicorn -c gunicorn.conf.py testproject.wsgi

The ' -c ' option means read from a config file. The testproject.wsgi part is actually referring to the wsgi.py file in a child folder. (Please refer to my directory structure above)

Just in case if you need to shutdown gunicorn, you can either use Ctrl + c at the console or if you lost connection to the console, use [1]:

kill -9 `ps aux | grep gunicorn | awk '{print $2}'`

Actually, a better way to run and shutdown gunicorn is to make it a daemon process so that the server will still run even if you log out of the machine. To do that, use the following command:

gunicorn -c gunicorn.conf.py testproject.wsgi --pid ~/logs/gunicorn.pid --daemon

This command will do three things:

- run gunicorn with the configuration file named gunicorn.conf.py

- save the process id of the gunicorn process to a specific file ('~/logs/gunicorn.pid' in this case)

- run gunicorn in daemon mode so that it won't die even if we log off

To shutdown this daemon process, open '~/logs/gunicorn.pid' to find the pid and use (assuming 12345 is what stored in '~/logs/gunicorn.pid'):

kill -9 12345

to kill the server.
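Looking up the pid by hand can also be scripted. The sketch below reads the pid file and sends a signal to that process. The function names are hypothetical, and it sends SIGTERM by default, which asks gunicorn to shut down gracefully, instead of the SIGKILL that kill -9 sends.

```python
import os
import signal


def read_pid(pid_file):
    """Return the process id stored in the given pid file."""
    with open(os.path.expanduser(pid_file)) as f:
        return int(f.read().strip())


def stop_gunicorn(pid_file="~/logs/gunicorn.pid", sig=signal.SIGTERM):
    """Send a signal to the gunicorn master process recorded in the pid file."""
    os.kill(read_pid(pid_file), sig)
```

Calling stop_gunicorn() with no arguments assumes the pid file lives at ~/logs/gunicorn.pid, the same path passed to --pid in the command above.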

This is it! Enter 127.0.0.1 in your browser and see if your page loads up. It is likely that it won't load up due to errors that you are not aware of. That's ok. Just look at the error logs:

tail -f error.log

Determine whether it is an nginx, gunicorn, or Django project issue. It is very likely that you don't have the proper access permissions or that there is a typo in one of the config files. Just go through the logs and you will find out which part is causing the issue. Depending on how you set up your Django logging config, you can either read debug messages in the same console in which you started gunicorn, or read them from a file. Here is what I have in my Django settings.py file:

LOGGING = {
    'version': 1,
    'disable_existing_loggers': False,
    'formatters': {
        'verbose': {
            'format': "[%(asctime)s] %(levelname)s [%(name)s:%(lineno)s] %(message)s",
            'datefmt': "%d/%b/%Y %H:%M:%S"
        },
        'simple': {
            'format': '%(levelname)s %(message)s'
        },
    },
    'handlers': {
        'null': {
            'level': 'DEBUG',
            'class': 'logging.NullHandler',
        },
        'console': {
            'level': 'DEBUG',
            'class': 'logging.StreamHandler',
            'formatter': 'verbose'
        },
        'logfile': {
            'level': 'DEBUG',
            'class': 'logging.handlers.WatchedFileHandler',
            'filename': "/Users/webapp/gunicorn_log/vis.log",
            'formatter': 'verbose',
        },
    },
    'loggers': {
        'django.request': {
            'handlers': ['logfile'],
            'level': 'DEBUG',
            'propagate': True,
        },
        'django': {
            'handlers': ['logfile'],
            'propagate': True,
            'level': 'DEBUG',
        },
        'vis': {
            'handlers': ['console', 'logfile'],
            'level': 'DEBUG',
            'propagate': False,
        },
    }
}

Bad Request 400

Just when you thought everything was ready, and you wanted the world to see what you had built... BAM! Bad Request 400. Why??? Because when you set DEBUG = False in the settings.py file, you have to specify the ALLOWED_HOSTS attribute:

DEBUG = False
ALLOWED_HOSTS = [
    '127.0.0.1',
]

Just don't put your port number here, only the ip part. Read more about this issue here:

Conclusion

Setting up nginx, gunicorn and your Django project can be a very tedious process if, like me, you are not familiar with any of them. I documented my approach here to hopefully help anybody who has encountered the same issue.

I just found a great tool for debugging Django applications: the Django debug toolbar adds a toolbar to your webpage when DEBUG=True. It looks like this:

The toolbar provides a ton of information, such as:

- The number of database queries made while loading the page

- The amount of time it took to load the page (similar to Chrome dev tools' Timeline feature)

- The content of your Settings file

- Content of the request and response headers

- The name and path of each static file loaded along with the current page

- Current page's template name and path

- If caching is used, it shows the content of the cached objects and the time it took to load them

- Signals

- Logging messages

- Redirects

To install it:

pip install django-debug-toolbar

Then, in your settings.py:

INSTALLED_APPS = (
    'django.contrib.admin',
    'django.contrib.auth',
    'django.contrib.contenttypes',
    'django.contrib.sessions',
    'django.contrib.messages',
    'django.contrib.staticfiles',  # <- automatically added by Django, make sure it is not missing
    'debug_toolbar',  # <- add this
    'myapp',  # <- your app
)

# static url has to be defined
STATIC_URL = '/static/'

# pick and choose the panels you want to see', ]

That's it. Start your server by

python manage.py runserver

Load up your page in the web browser, you should see a black vertical toolbar appearing on the right side of your page.

The difference between fetching data with select_related and fetching it without select_related.

I have a Model (users answer some questions every day, and this Model collects the answers they fill in each day):

class Answer(models.Model):
    day_id = models.IntegerField()
    set_id = models.IntegerField()
    question_id = models.IntegerField()
    answer = models.TextField(default='')
    user = models.ForeignKey(User)

    def __unicode__(self):
        result = u'{4}:{0}.{1}.{2}: {3}'.format(self.day_id, self.set_id, self.question_id, self.answer, self.user.id)
        return result

Besides its own fields, this Model has a foreign key, user, which references Django's built-in User table.

I need to write every user's daily answers to a file, so the first step is to fetch all of the answers:

answers = Answer.objects.all()
for answer in answers:
    print answer

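Running this code makes 981 database queries. There are 980 rows in the database, and Answer.objects.all() counts as one query. So what are the remaining 980 queries doing? The problem is here: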

result = u'{4}:{0}.{1}.{2}: {3}'.format(self.day_id, self.set_id, self.question_id, self.answer, self.user.id)

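When an answer object is printed, it prints user.id in addition to its own fields. Because user.id is accessed through the related User object rather than through a field on the Answer table, the User table has to be queried separately for the corresponding id. This is what causes the 980 extra queries: every time an answer is printed, the User table is queried once to get the matching user id.

Fetching data this way is clearly unreasonable. To get everything in a single query, use select_related:

answers = Answer.objects.all().select_related('user')
for answer in answers:
    print answer

This way there is only one database query. What select_related() does is effectively join the Answer and User tables: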

SELECT ... FROM "answer" INNER JOIN "auth_user" ON ( "answer"."user_id" = "auth_user"."id" )

This greatly reduces the page load time.
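The query counts can be reproduced without a real database. The sketch below is only a simulation: `FakeDatabase` and the other names are made up for this illustration and do not use Django at all. It counts one "query" for every round trip, first following the foreign key row by row and then fetching everything with a single joined query.

```python
class FakeDatabase:
    """A stand-in for the database that counts how many queries are issued."""

    def __init__(self, users, answers):
        self.users = users          # {user_id: user_name}
        self.answers = answers      # list of (answer_text, user_id)
        self.query_count = 0

    def all_answers(self):
        self.query_count += 1
        return list(self.answers)

    def get_user(self, user_id):
        self.query_count += 1
        return self.users[user_id]

    def all_answers_joined(self):
        # One query that returns each answer together with its user.
        self.query_count += 1
        return [(text, uid, self.users[uid]) for text, uid in self.answers]


def make_db(rows=980):
    users = {i: "user%d" % i for i in range(10)}
    answers = [("answer %d" % n, n % 10) for n in range(rows)]
    return FakeDatabase(users, answers)


def count_without_join(db):
    for _text, user_id in db.all_answers():
        db.get_user(user_id)        # one extra query per row
    return db.query_count


def count_with_join(db):
    for _text, _user_id, _user in db.all_answers_joined():
        pass                        # the user is already attached to the row
    return db.query_count
```

With 980 rows, the first function issues 981 queries and the second issues one, which mirrors the numbers reported above.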

Adding gray placeholder text to a text box to help users understand what they should enter is a common practice. In Django there are two ways to add placeholder text to an input field. Suppose we have a Model:

from django.db import models

class User(models.Model):
    user_name = models.CharField()

Method 1:

import django.forms as forms
from models import User

class Login(forms.ModelForm):
    user_name = forms.CharField(widget=forms.TextInput(attrs={'placeholder': u'Enter email address'}))

    class Meta:
        model = User

Method 2:

import django.forms as forms
from models import User

class Login(forms.ModelForm):
    class Meta:
        model = User
        widgets = {
            'user_name': forms.TextInput(attrs={'placeholder': u'Enter email address'}),
        }

When writing a user registration form in Django, you are likely to encounter this error message:

A user with that Username already exists.

This happens when a new user wants to register with a name that is already stored in the database. The message itself is self-explanatory, but I need to display this message in Chinese. According to Django's documentation, I should be able to do this:

class RegistrationForm(ModelForm):
    class Meta:
        model = User
        error_messages = {
            'unique': 'my custom error message',
        }

But this didn't work. It turns out that Django's CharField only accepts the following error message keys:

Error message keys: required, max_length, min_length

Thanks to this StackOverflow post, here is how Django developers solved this problem in the UserCreationForm, we can adopt their solution to this situation:

from django import forms
from django.contrib.auth.models import User
from django.forms import ModelForm

class RegistrationForm(ModelForm):
    # create your own error message key & value
    error_messages = {
        'duplicate_username': 'my custom error message'
    }

    class Meta:
        model = User

    # override the clean_<fieldname> method to validate the field yourself
    def clean_username(self):
        username = self.cleaned_data["username"]
        try:
            User._default_manager.get(username=username)
            # if the user exists, then let's raise an error message
            raise forms.ValidationError(
                self.error_messages['duplicate_username'],  # use my customized error message
                code='duplicate_username',  # set the error message key
            )
        except User.DoesNotExist:
            return username  # great, this user does not exist so we can continue the registration process

Now when you try to enter a duplicate username, you will see the custom error message being shown instead of the default one :)


I had the following code:

from django.core.urlresolvers import reverse

class UserProfileView(FormView):
    template_name = 'profile.html'
    form_class = UserProfileForm
    success_url = reverse('index')

When the code above runs, an error is thrown:

django.core.exceptions.ImproperlyConfigured: The included urlconf 'config.urls' does not appear to have any patterns in it. If you see valid patterns in the file then the issue is probably caused by a circular import.

There are two solutions to this problem, solution one:

from django.core.urlresolvers import reverse_lazy

class UserProfileView(FormView):
    template_name = 'profile.html'
    form_class = UserProfileForm
    success_url = reverse_lazy('index')  # use reverse_lazy instead of reverse

Solution 2:

from django.core.urlresolvers import reverse

class UserProfileView(FormView):
    template_name = 'profile.html'
    form_class = UserProfileForm

    def get_success_url(self):  # override this function if you want to use reverse
        return reverse('index')

According to Django's documentation, reverse_lazy should be used instead of reverse when your project's URLConf has not been loaded yet. The documentation specifically points out that reverse_lazy should be used in the following situations:

providing a reversed URL as the url attribute of a generic class-based view. (this is the situation I encountered)

providing a reversed URL to a decorator (such as the login_url argument for the django.contrib.auth.decorators.permission_required() decorator).

providing a reversed URL as a default value for a parameter in a function’s signature.

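It is unclear exactly when the URLConf is loaded; at least, I cannot find the documentation on this topic. What matters here is that a class attribute such as success_url is evaluated as soon as the module is imported, which can happen before the URLConf is ready. So if the above error occurs again, try reverse_lazy.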
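The idea behind reverse_lazy can be illustrated without Django. The sketch below is a simplified model only: `URL_PATTERNS`, this `reverse`, and `LazyURL` are stand-ins written for this example, not Django's implementation. It shows why a lookup that runs at import time fails while a deferred lookup succeeds.

```python
URL_PATTERNS = {}   # stands in for the URLconf; empty until it is "loaded"


def reverse(name):
    """Look the name up immediately; fails if the patterns are not loaded yet."""
    return URL_PATTERNS[name]


class LazyURL:
    """Remember the name and only look it up when the value is actually used."""

    def __init__(self, name):
        self.name = name

    def __str__(self):
        return reverse(self.name)


# Safe while the module is being imported: nothing is looked up yet.
success_url = LazyURL("index")

# Later, the URLconf finishes loading...
URL_PATTERNS["index"] = "/"

# ...and only now is the name resolved.
print(str(success_url))
```

Calling reverse("index") in place of LazyURL("index") on the first of those lines would raise a KeyError here, which corresponds to the ImproperlyConfigured error in the Django case.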

When a user requests a page from a website (powered by Django), a cookie is returned along with the requested page. Inside this cookie there is a key/value pair:

Cookie on the user's computer

Key          Value
---          -----
sessionid    gilg56nsdelont4740onjyto48sv2h7l

This id is used by the server to uniquely identify who's who. User A's id is different from User B's, and so on. The id is not only stored in the cookie on the user's computer, it is also stored in the database on the server (assuming you are using the default session engine). By default, after running ./manage.py migrate, a table named django_session is created in the database. It has three columns:

django_session table in database

session_key    session_data    expire_date
---------------------------------------------------
y5j0jy3l4v3    ZTJlMmZiMGYw    2015-05-08 15:13:28.226903

The value stored in the session_key column matches the value stored in the cookie received by the user.

Let's say this user decides to log in to the web service. Upon successfully logging in, a new sessionid is assigned to him/her and different session_data is stored in the database:

Before logging in:

session_key     session_data    expire_date
---------------------------------------------------
437383928373    anonymous       2015-05-08 15:13:28.226903

After logging in:

session_key     session_data    expire_date
---------------------------------------------------
218374758493    John            2015-05-08 15:13:28.226903

*I made up this example to use numbers and usernames instead of hash strings. For security reasons, these are all hash strings in reality.

As we can see here, a new session_key has been assigned to this user and we now know that this user is 'John'. From now on, John's session_key will not change even if he closes the browser and visits this server again. Thus, when John comes back the next day, he does not need to log in again.

Django provides a setting that lets developers control this behaviour. In settings.py, a variable named SESSION_EXPIRE_AT_BROWSER_CLOSE can be set:

# This is the default value. The session id will not expire until SESSION_COOKIE_AGE has been reached.
SESSION_EXPIRE_AT_BROWSER_CLOSE = False

If this is set to True, then John has to log in again every time he reopens his browser and visits this website.

Since saving and retrieving session data from the database can be slow, we can store session data in memory by:

# Assuming memcached is installed and set as the default cache engine
SESSION_ENGINE = 'django.contrib.sessions.backends.cache'

The advantage of this approach is that storing and retrieving sessions will be faster. The downside is that if the server crashes, all session data is lost.

A mix of cache & database storage is:

SESSION_ENGINE = 'django.contrib.sessions.backends.cached_db'

According to django's documentation:

every write to the cache will also be written to the database. Session reads only use the database if the data is not already in the cache.

This approach is slower than a pure cache solution but faster than a pure database solution.

Django's official documentation warns against using the local-memory cache for this, as it doesn't retain data long enough to be a good choice.

By default the session data for a logged in user lasts two weeks in Django, users have to log back in after the session expires. This time period can be adjusted by setting the SESSION_COOKIE_AGE variable.
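As a sketch of how that adjustment might look in settings.py, the fragment below shortens the session lifetime to one week. SESSION_COOKIE_AGE is expressed in seconds; the one-week value is just an example chosen for this illustration, and TWO_WEEKS_IN_SECONDS is a helper name that is not a Django setting.

```python
# settings.py fragment (illustrative values only)

# The two-week default described above, expressed in seconds.
TWO_WEEKS_IN_SECONDS = 60 * 60 * 24 * 14

# Example: expire sessions after one week instead.
SESSION_COOKIE_AGE = 60 * 60 * 24 * 7

# Keep sessions across browser restarts until the age above is reached.
SESSION_EXPIRE_AT_BROWSER_CLOSE = False
```

Writing the value as a product of minutes, hours and days keeps the intent readable compared with a bare number of seconds.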

There are times when you are not worried about user authentication but still want each user to see only his/her own stuff. Then you need a way to log a user in without a password. Here is the solution posted on StackOverflow.

Normally, when logging a user in with a password, authenticate() has to be called before login():

username = request.POST['username']
password = request.POST['password']
user = authenticate(username=username, password=password)
login(request, user)

What authenticate does is to add an attribute called backend with the value of the authentication backend, take a look at the line of the source code. The solution is to add this attribute yourself:

# assuming the user name is passed in via GET
username = self.request.GET.get('username')
user = User.objects.get(username=username)

# manually set the backend attribute
user.backend = 'django.contrib.auth.backends.ModelBackend'
login(request, user)

That's it!

I was running a Django server on Ubuntu 12.04 and saw a lot of errors logged in gunicorn's error log file:

error: [Errno 111] Connection refused

One line of the error messages caught my eye: send_mail. The server was trying to send me the error message via the send_mail() function but failed. I realized that I hadn't set up the email settings in settings.py.

I searched online for a solution and found two:

- send email via Gmail's smtp

- setup your mail server

Option 1 seems like a quick and dirty way to get things done, but it has a few drawbacks:

- Sending email via Gmail's smtp means the FROM field will be your Gmail address rather than your company or whatever email address you want it to be.

- To access Gmail, you need to provide your username and password. This means you have to store your gmail password either in the settings.py file or to be more discreet in an environment variable.

- You have to allow less secure apps to access your Gmail. This is a setting in your Gmail account.

I decided to setup my own mail server because I don't want to use my personal email for contacting clients. To do that, I googled and found that there are two mail servers that I can use:

- postfix

- sendmail

Since postfix is newer and easier to config, I decided to use it.

- Install postfix and Find main.cf

Note: main.cf is the config for postfix mail server

sudo apt-get install postfix

postfix is already the newest version. postfix set to manually installed.

Great, it is already installed. Then, I went to /etc/postfix/ to find main.cf and it is not there! Weird, so I tried to reinstall postfix:

sudo apt-get install --reinstall postfix

After installation, I saw a message:'.

I see. So, I followed the instruction and copied the main.cf file to /etc/postfix/:

cp /usr/share/postfix/main.cf.debian /etc/postfix/main.cf

Add the following lines to main.cf:

mynetworks = 127.0.0.0/8 [::ffff:127.0.0.0]/104 [::1]/128
mydestination = localhost

Then, reload this config file:

/etc/init.d/postfix reload

Now, let's test to see if we can send an email to our mailbox via telnet:

telnet localhost 25

Once connected, enter the following line by line:

mail from: whatever@whatever.com
rcpt to: your_real_email_addr@blah.com
data (press enter)
type whatever content you feel like to type
. (put an extra period on the last line and then press enter again)

If everything works out, you should see a confirmation message that resembles this:

250 2.0.0 Ok: queued as CC732427AE

This email will almost certainly end up in the spam folder if you use Gmail, so take a look there to see if you received the test mail (it may take a minute to show up).

If you received the test email, then postfix is working properly. Now, let's configure Django to send email via postfix.

First, I added the following lines to my settings.py file:

EMAIL_BACKEND = 'django.core.mail.backends.smtp.EmailBackend'
EMAIL_HOST = 'localhost'
EMAIL_PORT = 25
EMAIL_HOST_USER = ''
EMAIL_HOST_PASSWORD = ''
EMAIL_USE_TLS = False
DEFAULT_FROM_EMAIL = 'Server <server@whatever.com>'

Then, I opened up the Django shell to test it:

./manage.py shell
>>> from django.core.mail import send_mail
>>> send_mail('Subject here', 'Here is the message.', 'from@example.com', ['to@example.com'], fail_silently=False)

Again, check your spam inbox. If you received this mail, then it means Django can send email via postfix, DONE!
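If the Django test fails and you want to rule Django out, the same local postfix server can be exercised with Python's standard library alone. This is a sketch under the same assumptions as the settings above (postfix listening on localhost, port 25, no authentication and no TLS); the function names are made up for this example, and it is written for Python 3.

```python
import smtplib
from email.message import EmailMessage


def build_message(subject, body, sender, recipients):
    """Assemble a plain-text email message."""
    msg = EmailMessage()
    msg["Subject"] = subject
    msg["From"] = sender
    msg["To"] = ", ".join(recipients)
    msg.set_content(body)
    return msg


def send_via_local_postfix(msg, host="localhost", port=25):
    """Hand the message to the SMTP server, assumed to be the local postfix."""
    with smtplib.SMTP(host, port) as smtp:
        smtp.send_message(msg)


message = build_message(
    "Subject here", "Here is the message.", "from@example.com", ["to@example.com"]
)
# send_via_local_postfix(message)  # uncomment on the machine running postfix
```

If this script delivers mail but Django does not, the problem is most likely in the EMAIL_* settings and not in postfix.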

When you have a large number of models, it is easier to understand their relationships by looking at a graph. Django extensions has a handy command to convert these relationships into an image file:

1. Install Django extensions:

pip install django-extensions

2. Enable Django extensions in your settings.py:

INSTALLED_APPS = (
    ...
    'django_extensions',
)

3. Install graph packages that Django extensions relies on for drawing:

pip install pyparsing==1.5.7 pydot

4. Use this command to draw:

./manage.py graph_models -a -g -o my_project_visualized.png

For more drawing options, please refer to the official doc.

Use the --log-file=- option to send error messages to console:

gunicorn --log-file=-

After debugging is complete, remove this option and output the error message to a log file instead.

I was given a task to randomly generate usernames and passwords for 80 users in Django. Here is how I did it:

Thanks to this excellent StackOverflow post, generating random characters becomes very easy:

import string
import random

def generate(size=5, numbers_only=False):
    base = string.digits if numbers_only else string.ascii_lowercase + string.digits
    return ''.join(random.SystemRandom().choice(base) for _ in range(size))

I want to use lowercase characters and digits for usernames and digits only for passwords. Thus, the optional parameter numbers_only is used to specify which format I want.

Then, open up the Django shell:

./manage.py shell

and enter the following in the interactive shell to generate a user:

from django.contrib.auth.models import User
from note import utils
User.objects.create_user(utils.generate(), password=utils.generate(6, True))

I saved generate() in utils.py, which is located inside a project named note. Modify from note import utils to suit your needs.
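For all 80 users, the single call above can be wrapped in a loop. Here is a minimal sketch that only prepares the credentials, without touching Django; generate_credentials is a hypothetical helper, and the retry on duplicate usernames is my own assumption about what is wanted.

```python
import random
import string


def generate(size=5, numbers_only=False):
    """Same generator as above: lowercase letters plus digits, or digits only."""
    base = string.digits if numbers_only else string.ascii_lowercase + string.digits
    rng = random.SystemRandom()
    return "".join(rng.choice(base) for _ in range(size))


def generate_credentials(count=80):
    """Return a dict mapping unique usernames to 6-digit passwords."""
    credentials = {}
    while len(credentials) < count:
        username = generate()
        # A 5-character name can repeat by chance; only keep unseen names.
        if username not in credentials:
            credentials[username] = generate(6, numbers_only=True)
    return credentials


# In the Django shell, each pair could then be passed to create_user:
# for username, password in generate_credentials().items():
#     User.objects.create_user(username, password=password)
```

Keeping the dict around also gives you the clear-text passwords to hand out, since they cannot be read back from the database once hashed.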

Julien Phalip gave a great talk at DjangoCon 2015 in which he introduced three ways to hydrate a React app with data (i.e. load initial data into React components on first page load). I am going to quote him directly from the slides and add my own comments.

Conventional Method: Client fetches data via Ajax after initial page load.

Load the data via ajax after initial page load.

Less conventional method: Server serializes data as global JavaScript variable in the initial HTML payload (see Instagram).

Let the server load the data first, then put it into a global JavaScript variable which is embedded inside the returned HTML page.

In the linked GitHub project (which is a Django project), the server fetches all of the data from the database. Then, it converts the data from Python objects to JSON and embeds it in the returned HTML page.
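To make the second method concrete, here is a minimal sketch of the serialization step in plain Python. It is not the code from the linked project: to_script_tag and the INITIAL_DATA variable name are made up for this example, and escaping the angle brackets is my own precaution so that a string containing </script> cannot end the tag early.

```python
import json


def to_script_tag(data, var_name="INITIAL_DATA"):
    """Serialize data to JSON and wrap it in a script tag as a global variable."""
    payload = json.dumps(data)
    # Escape characters that could break out of the <script> element.
    # The \uXXXX forms are still valid JSON and valid JavaScript strings.
    payload = (
        payload.replace("<", "\\u003c")
        .replace(">", "\\u003e")
        .replace("&", "\\u0026")
    )
    return "<script>window.{} = {};</script>".format(var_name, payload)


# The returned string would be placed in the HTML template, and the React
# code would read window.INITIAL_DATA on first render.
print(to_script_tag({"journals": [{"id": 1231, "title": "Hello"}]}))
```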

Server side rendering: Let the server render the output HTML with data and send it to the client.

This one is a little bit involved. Django would pass the data from DB along with the React component to python-react for rendering.

python-react is a piece of code that runs on a simple Node HTTP server. It receives the React component along with the data via a POST request and returns the rendered HTML. (The python-react server runs on the same server as your Django project.)

So which method to use then?

We can use the number of round trips and the rendering speed as metrics for the judgement.

Method 1

Round trips: The initial page request is one round trip, and the following ajax request is another one. So two round trips.

Rendering time: Server rendering is usually faster than client rendering, but if the amount of data being rendered is small, the difference can be considered negligible. In this case, the rendering happens on the client side. Let's assume the amount of data that needs to be rendered is small and doesn't impact the user experience, so the rendering time is negligible.

Method 2

Round trips: Only one round trip

Rendering time: Negligible as aforementioned.

Method 3

Round trips: Only one round trip

Rendering time: If it is negligible on the client side then it is probably negligible on the server side.

It seems that Method 2 & 3 are equally fast. The differences between them are:

- Method 2 renders on the client side and Method 3 renders on the server side.

- Method 3 requires extra setup during development and deployment. Also, the more moving pieces there are, the more likely something breaks and the longer it takes to debug.

Conclusion

I have no hard data to back this up, so this is just my speculation: use Method 2 most of the time, and use Method 3 only if you think rendering on the client side is going to be slow and impact the user experience.

There are some pitfalls when you need to create and log in users manually in Django. Let's create a user first:

def view_handler(request):
    username = request.POST.get('username', None)
    password = request.POST.get('password', None)

Note that request.POST.get('username', None) should be used instead of request.POST['username']. If the latter is used and the key is missing from the submitted data, you will get this error: MultiValueDictKeyError
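The difference is the same one you see with a plain Python dict, which makes it easy to try outside Django. In the sketch below a dict stands in for request.POST, and both function names are made up; in Django the exception raised is MultiValueDictKeyError, which is a subclass of KeyError.

```python
def read_credentials(data):
    """Tolerant lookup: missing keys come back as None."""
    username = data.get("username", None)
    password = data.get("password", None)
    return username, password


def read_credentials_strict(data):
    """Strict lookup: a missing key raises KeyError."""
    return data["username"], data["password"]


post_data = {"username": "john"}  # the form was submitted without a password

print(read_credentials(post_data))
try:
    read_credentials_strict(post_data)
except KeyError as exc:
    print("missing key:", exc)
```

With .get() the view can decide for itself how to respond to incomplete input instead of letting the request end in an unhandled exception.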

Once the username and password are extracted, let's create the user:

User.objects.create(username=username, password=password, email=email) # DON'T DO THIS

The above code is wrong, because when create is used instead of create_user, the user's password is not hashed. The password ends up stored in clear text in the database, which is not the right thing to do.

So you should use the following instead:

User.objects.create_user(username=username, password=password, email=None)
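To illustrate what "hashed" means here, the following is a rough sketch using only the standard library. It is not Django's implementation (Django has its own hasher classes and storage format); it only shows the idea that the database keeps a salted digest from which the password cannot be read back. The function names and the iteration count are assumptions made for this example.

```python
import hashlib
import hmac
import os


def hash_password(password, salt=None, iterations=100_000):
    """Derive a salted PBKDF2-SHA256 digest; this is what would be stored."""
    if salt is None:
        salt = os.urandom(16)
    digest = hashlib.pbkdf2_hmac("sha256", password.encode("utf-8"), salt, iterations)
    return salt, iterations, digest


def verify_password(password, salt, iterations, expected_digest):
    """Re-derive the digest from the candidate password and compare."""
    candidate = hashlib.pbkdf2_hmac("sha256", password.encode("utf-8"), salt, iterations)
    return hmac.compare_digest(candidate, expected_digest)


salt, iterations, stored = hash_password("123456")
print(verify_password("123456", salt, iterations, stored))
print(verify_password("654321", salt, iterations, stored))
```

A password saved through create() skips this step entirely, so a later login check would compare a hash of the input against the raw stored text and never match.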

What if you want to check whether the user you are about to create already exists?

user, created = User.objects.get_or_create(username=username, email=None)
if created:
    user.set_password(password)  # This line will hash the password
    user.save()  # DO NOT FORGET THIS LINE

get_or_create will get the existing user or create a new one. Two values are returned: a user object and a boolean flag created, indicating whether the user is a new one (i.e. created = True) or an existing one (i.e. created = False).

It is important not to forget user.save() at the end, because set_password does NOT save the password to the database.

Now that a user has been created successfully, the next step is to log in.

user = authenticate(username=username, password=password)
login(request, user)

Besides checking the credentials, authenticate() sets user.backend to the authentication backend that accepted them. So for a user whose credentials are known to be valid, the code above is equivalent to:

user.backend = 'django.contrib.auth.backends.ModelBackend'
login(request, user)

Django's documentation recommends the first way of doing it. However, there is a use case for the second approach: when you want to log in a user without a password:

username = request.GET.get('username')
user = User.objects.get(username=username)
user.backend = 'django.contrib.auth.backends.ModelBackend'
login(request, user)

This is used when security isn't an issue but you still want to distinguish who's who on your site.

So to sum up the code above, here is the view_handler that manually creates and logs in a user:

from django.contrib.auth import authenticate, login
from django.contrib.auth.models import User
from django.http import HttpResponseRedirect

def view_handler(request, *args, **kwargs):
    email = request.POST.get('email', None)
    password = request.POST.get('password', None)
    if email and password:
        user, created = User.objects.get_or_create(username=email, email=email)
        if created:
            user.set_password(password)
            user.save()
        user = authenticate(username=email, password=password)
        login(request, user)
        return HttpResponseRedirect('where_ever_should_be_redirect_to')
    else:
        # return error or redirect to login page again
        pass  # placeholder so the snippet parses

When writing a Django project, it often happens that multiple apps are included. Let me use an example:

Project
- Account
- Journal

In this example, I created a Django project that contains two apps. The Account app handles user registration and login. The Journal app allows users to write journals and save them to the database. Here is what the urls look like:

# ROOT_URLCONF
urlpatterns = [
    url(r'^account/', include('Account.urls', namespace='account')),
    # This namespace name is used later, so just remember we have given everything under journal/ a name
    url(r'^journal/', include('Journal.urls', namespace='journal')),
]

The above file is what ROOT_URLCONF points to. Inside the Journal app, the urls look like this:

urlpatterns = [
    url(r'^(?P<id>[0-9]{4})/$', FormView.as_view(), name='detail'),
]

So each journal has a 4-digit id. When a journal is accessed, its URL path looks like /journal/1231/ (the journal/ prefix from the root urls followed by the id).

Let's say user John bookmarked a journal written by another person. He wants to comment on it. When John tries to access that journal, he is redirected to the login page. In the login page's view handler, a redirect should be made to Journal ID 1231 once authentication has passed:

def view_handler(request):
    # authentication passed
    return redirect(reverse('detail', kwargs={'id': '1231'}))

The reverse(...) statement is not going to work in this case, because the view_handler belongs to the Account app. It does not know about the urls inside the Journal app. To be able to redirect to the detail page of the Journal app, use:

reverse('journal:detail', kwargs={'id': '1231'})
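To see why the namespace prefix matters, here is a toy resolver in plain Python. It is only an illustration and not how Django implements reverse(): the URLS table, the account login path and toy_reverse are all made up, and the toy simply refuses names without a namespace.

```python
# Hypothetical registry: namespace -> url name -> path template.
URLS = {
    "account": {"login": "/account/login/"},
    "journal": {"detail": "/journal/{id}/"},
}


def toy_reverse(name, kwargs=None):
    """Resolve 'namespace:name' to a path, filling in keyword arguments."""
    namespace, sep, url_name = name.partition(":")
    if not sep:
        raise LookupError("no namespace given for {!r}".format(name))
    try:
        template = URLS[namespace][url_name]
    except KeyError:
        raise LookupError("cannot reverse {!r}".format(name))
    # kwargs must be a dict such as {'id': '1231'}, not a set like {'id', '1231'}.
    return template.format(**(kwargs or {}))


print(toy_reverse("journal:detail", kwargs={"id": "1231"}))
```

The lookup is keyed on both parts of the name, which is the effect that include(..., namespace='journal') has on the names defined inside the Journal app.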

So the format for reversing urls that belong to other apps is:

reverse('namespace:name', args, kwargs) | http://cheng.logdown.com/tags/django | CC-MAIN-2018-43 | refinedweb | 5,159 | 56.55 |

The following release notes cover the most recent changes over the last 30:

September 17, 2020

Anthos

Anthos 1.4.3 is now available.

Updated components:

Anthos 1.3.4 is now available.

Updated components:

GKE on AWS 1.4.3-gke.7 is now available. GKE on AWS 1.4.3-gke.7 clusters run on Kubernetes 1.16.13-gke.1402.

To Upgrade:

- Upgrade your Management service to 1.4.3-gke.7.

- Upgrade your user clusters to 1.16.13-gke.1402.

A vulnerability, described in CVE-2020-14386, was recently discovered in the Linux kernel. The vulnerability may allow container escape to obtain root privileges on the host node.

All GKE on AWS nodes are affected.

To fix this vulnerability, upgrade your management service and user clusters to this patched version. The following GKE on AWS version contains the fix for this vulnerability:

- GKE on AWS 1.4.3

For more information, see the Security Bulletin


The BigQuery Data Transfer Service is now available in the following regions: Los Angeles (us-west2), São Paulo (southamerica-east1), South Carolina (us-east1), Hong Kong (asia-east1) and Osaka (asia-northeast2).


You can now migrate a VM instance from one network to another. This feature is available in Beta.

The issue with undeleting service accounts has been resolved. You can now undelete most service accounts that meet the criteria for undeletion.

September 16, 2020

Compute Engine

Troubleshoot VMs by capturing a screenshot from the VM. This is Generally Available.

You can now use the

goog-firestoremanaged billing report label to view costs related to export and import operations.

You can now use the

goog-firestoremanaged billing report label to view costs related to import and export operations.

There is a known issue with the upgrade from GKE 1.16 to 1.17. Any custom resources you created in the

istio-system namespace are deleted during an upgrade to 1.17 (R30 or earlier). These resources must be manually recreated. We recommend that you do not upgrade to GKE 1.17 until a patch release fixes the issue. The fix will be rolled out in GKE release R31.

September 15, 2020

Cloud Load Balancing

Added total latency to external HTTP(S) load balancer Cloud Logging entries. Total latency measures from when the external HTTP(S) load balancer receives the first bytes of the incoming request headers until the external HTTP(S) load balancer finishes proxying the backend's response to the client. This feature is now available in General Availability.

Cloud SQL now offers serverless export. With serverless export, Cloud SQL performs the export from a temporary instance. Offloading the export operation allows databases on the primary instance to continue to serve queries and perform other operations at the usual performance rate.


The following PostgreSQL minor versions have been upgraded:

- PostgreSQL 9.6.16 is upgraded to 9.6.18.

- PostgreSQL 10.11 is upgraded to 10.13.

- PostgreSQL 11.6 is upgraded to 11.8.

- PostgreSQL 12.1 is upgraded to 12.3.

SSD persistent disks attached to certain VMs with at least 64 vCPUs can now reach 100,000 write IOPS. To learn more about the requirements to reach these limits, see Block storage performance.

September 14, 2020

Cloud CDN

Cache Modes, TTL overrides and custom response headers are now supported on backend buckets and backend services, and are available in beta.

Cache modes allow Cloud CDN to automatically cache static content types, including web assets like CSS, JavaScript and fonts, as well as image and video content.