
I've been given a task where I need to make an animal guessing game using if-else. I cannot figure out how to modify this code so that it handles more than four animals. The game basically goes like this:

Think of an animal.

Is it a bird? yes

Can it fly? no

Is it an emu? no

Oh. Well, thank you for playing.

These are all the animals I need to include:

TREE.JPG

And this is the code I have to modify to include the new animals:

import java.util.Scanner;

public class animalquiz {
    public static void main(String[] args) {
        Scanner keyboard = new Scanner(System.in);
        boolean answerIsCorrect;

        System.out.println("Think of an animal.\n");

        if (ask("Is it a bird? ", keyboard)) {
            if (ask("Can it fly? ", keyboard)) {
                answerIsCorrect = ask("Is it a kookaburra? ", keyboard);
            } else {
                answerIsCorrect = ask("Is it an emu? ", keyboard);
            }
        } else {
            if (ask("Does it lay eggs? ", keyboard)) {
                answerIsCorrect = ask("Is it a platypus? ", keyboard);
            } else {
                answerIsCorrect = ask("Is it a kangaroo? ", keyboard);
            }
        }

        if (answerIsCorrect) {
            System.out.println("Yes! I am invincible!");
        } else {
            System.out.println("Oh. Well, thank you for playing.");
        }
    }

    /**
     * A utility method to ask a yes/no question
     *
     * @param question the question to ask
     * @param keyboard a scanner for user input
     *
     * @return whether the user answered "yes" (actually, whether the user answered anything starting with Y or y)
     */
    private static boolean ask(String question, Scanner keyboard) {
        System.out.print(question);
        String answer = keyboard.nextLine().trim();
        // Guard against an empty answer, which would make charAt(0) throw
        return !answer.isEmpty() && (answer.charAt(0) == 'Y' || answer.charAt(0) == 'y');
    }
}

I hope someone can help and explain how I can do this.

http://www.javaprogrammingforums.com/loops-control-statements/26134-guessing-game-help.html
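In the if-else version, every extra question adds another level of nesting, so adding animals quickly becomes unwieldy. If the assignment allows it, one alternative is to store the questions in a small binary tree and walk it with a loop. Adding an animal then means adding a node, not rewriting the if-else block. This is a sketch, not the required if-else solution. The class name `AnimalTree` is hypothetical, and the tree holds only the four animals from the code above, because the animals in TREE.JPG are not available here.

```java
import java.util.Scanner;
import java.util.function.Predicate;

public class AnimalTree {
    // A node is either a yes/no question with two children, or a leaf naming an animal.
    static final class Node {
        final String text; // question text, or the animal's name for a leaf
        final Node yes;
        final Node no;

        Node(String text, Node yes, Node no) {
            this.text = text;
            this.yes = yes;
            this.no = no;
        }

        static Node animal(String name) {
            return new Node(name, null, null);
        }

        boolean isLeaf() {
            return yes == null && no == null;
        }
    }

    // The same four animals as the if-else version.
    static Node buildTree() {
        return new Node("Is it a bird? ",
                new Node("Can it fly? ", Node.animal("kookaburra"), Node.animal("emu")),
                new Node("Does it lay eggs? ", Node.animal("platypus"), Node.animal("kangaroo")));
    }

    // Follows yes/no answers from the root down to a leaf and returns the animal's name.
    static String guess(Node root, Predicate<String> answersYes) {
        Node current = root;
        while (!current.isLeaf()) {
            current = answersYes.test(current.text) ? current.yes : current.no;
        }
        return current.text;
    }

    public static void main(String[] args) {
        Scanner keyboard = new Scanner(System.in);
        System.out.println("Think of an animal.\n");
        String animal = guess(buildTree(), question -> ask(question, keyboard));
        String article = "aeiou".indexOf(animal.charAt(0)) >= 0 ? "an " : "a ";
        if (ask("Is it " + article + animal + "? ", keyboard)) {
            System.out.println("Yes! I am invincible!");
        } else {
            System.out.println("Oh. Well, thank you for playing.");
        }
    }

    // Same behaviour as the original ask(), plus a guard for empty input.
    private static boolean ask(String question, Scanner keyboard) {
        System.out.print(question);
        String answer = keyboard.nextLine().trim();
        return !answer.isEmpty() && Character.toLowerCase(answer.charAt(0)) == 'y';
    }
}
```

To add an animal, replace a leaf with a new question node. For example, `Node.animal("emu")` could become `new Node("Is it very tall? ", Node.animal("emu"), Node.animal("penguin"))`, which uses a hypothetical question and animal.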

The Java Specialists' Newsletter

Issue 045, 2002-04-11

Category: GUI


Welcome to the 45th edition of The Java(tm) Specialists' Newsletter, read in over 78 countries, with newest additions Thailand and Iceland. Both end with "land", but they couldn't be two more different countries. Why don't those drivers who insist on crawling along the German Autobahn at 140km/h stick to the slow lane? I drove almost 500km on Friday, and had the opportunity to meet Carl Smotricz (my archive keeper) and some other subscribers in Frankfurt. We had some very inspiring discussions regarding Java performance and enjoyed some laughs at Java's expense, and they listened to my tales of life in South Africa.

Unsubscription Fees: Some of my readers wrote to tell me what a fantastic idea unsubscription fees were to make some money. Others wrote angry notes asking how I had obtained their credit card details. All of them were wrong! Note the date of our last newsletter: 1st April! Yes, it was all part of the April Fool's craze that hits the world once a year. Apologies to those of you who found that joke in poor taste (my wife said I shouldn't put it in, but I didn't listen to her).

The rest of the newsletter was quite genuine. A friend, who was caught "hook, line & sinker", suggested that I should clear things up and tell you exactly what my purpose is in publishing "The Java(tm) Specialists' Newsletter":

#1. Publishing this newsletter is my hobby: No idealism here at all. A friend encouraged me a few years ago to write down all the things I had been telling him about Java, so one day I simply started, and I have carried on doing it. It's a great way to relax, put my feet up and think a while.

#2. There are no subscription / unsubscription fees: The day that I'm so broke that I need to charge you for reading the things I write will be the day that I immediately start looking for work as a permanent employee again. There are neither subscription nor unsubscription fees, nor will there ever be.

#3. How do I earn my living? Certainly not by writing newsletters! I spend about 75% of my time writing Java code on contract for customers situated in various parts of the world. 20% of my time is spent presenting Java and Design Patterns courses in interesting places such as Mauritius and South Africa, and the last 5% is spent advising companies about Java technology.

#4. Marketing for Maximum Solutions: Because people know my company and me through this newsletter, I have received many requests for courses, contract work and consulting, and this helps me to make a living. My hobby of writing the newsletter has turned out to have some nice side effects.

And now, without wasting any more time, let's look at a real-life Java problem...


The last slide of all my courses says that my students may send me questions any time they get stuck. A few weeks ago Robert Crida from Peralex in Bergvliet, South Africa, who came on my Java course last year, asked me how to display a JTextArea within a cell of a JTable. I sensed it would take more than 5 minutes to answer, and being in a rush to finish some work in between Mauritius and Germany, I told him it would take me a few days to get back to him. When I got to Germany, I promptly forgot about his problem, until one of his colleagues reminded me last week.

Robert was trying to embed a JTextArea object within a JTable. The behaviour he was getting was that when he resized the width of the table, he could see that the text in the text area was being wrapped onto multiple lines, but the cells did not become higher to show those lines. He wanted the table row height to be increased automatically to make the complete text area visible.

He implemented a JTextArea cell renderer as below:

import java.awt.Component;
import javax.swing.JTable;
import javax.swing.JTextArea;
import javax.swing.table.TableCellRenderer;

public class TextAreaRenderer extends JTextArea
    implements TableCellRenderer {
  public TextAreaRenderer() {
    setLineWrap(true);
    setWrapStyleWord(true);
  }

  public Component getTableCellRendererComponent(JTable jTable,
      Object obj, boolean isSelected, boolean hasFocus, int row,
      int column) {
    setText((String)obj);
    return this;
  }
}

I wrote some test code to try this out. Before I continue, I need to point out that I use the SUN JDK 1.3.1, whereas Robert uses the SUN JDK 1.4.0. The classic "write once, debug everywhere" is a topic for another newsletter ...

import javax.swing.*;
import java.awt.BorderLayout;

public class TextAreaRendererTest extends JFrame {
  // The table has 10 rows and 3 columns
  private final JTable table = new JTable(10, 3);

  public TextAreaRendererTest() {
    // We use our cell renderer for the third column
    table.getColumnModel().getColumn(2).setCellRenderer(
        new TextAreaRenderer());
    // We hard-code the height of rows 0 and 5 to be 100
    table.setRowHeight(0, 100);
    table.setRowHeight(5, 100);
    // We put the table into a scrollpane and into a frame
    getContentPane().add(new JScrollPane(table));
    // We then set a few of the cells to our long example text
    String test = "The lazy dog jumped over the quick brown fox";
    table.getModel().setValueAt(test, 0, 0);
    table.getModel().setValueAt(test, 0, 1);
    table.getModel().setValueAt(test, 0, 2);
    table.getModel().setValueAt(test, 4, 0);
    table.getModel().setValueAt(test, 4, 1);
    table.getModel().setValueAt(test, 4, 2);
  }

  public static void main(String[] args) {
    TextAreaRendererTest test = new TextAreaRendererTest();
    test.setSize(600, 600);
    test.setDefaultCloseOperation(JFrame.EXIT_ON_CLOSE);
    // setVisible(true) replaces the deprecated show()
    test.setVisible(true);
  }
}

You'll notice when you run this that when the row is high enough, the text wraps very nicely inside the JTextArea, as in cell (0, 2). However, the JTable does not increase the row height in cell (4, 2) just because you decide to put a tall component into the cell. It requires a bit of prodding to do that.


My first approach was to override getPreferredSize() in the TextAreaRenderer class. However, that didn't work because JTable doesn't take the renderer's preferred size into account when sizing the rows. I spent about an hour delving through the source code of JTable and JTextArea. After a lot of experimentation, I found out that JTextArea actually had the correct preferred size according to the width of the column in the JTable. I tried changing the getTableCellRendererComponent() method:


public Component getTableCellRendererComponent(JTable jTable,
    Object obj, boolean isSelected, boolean hasFocus, int row,
    int column) {
  setText((String)obj);
  // Use the jTable parameter; the renderer has no "table" field of its own
  jTable.setRowHeight(row, (int)getPreferredSize().getHeight());
  return this;
}

At first glance, the program seemed to work correctly now, except that my poor CPU was running at 100%. The problem was that when you set the row height, the table was invalidated, and that caused getTableCellRendererComponent() to be called in order to render all the cells again. This in turn set the row height again, which invalidated the table again. To break this cycle of invalidation, I needed to check whether the row already has the correct height before setting it:

public Component getTableCellRendererComponent(JTable jTable,
    Object obj, boolean isSelected, boolean hasFocus, int row,
    int column) {
  setText((String)obj);
  int height_wanted = (int)getPreferredSize().getHeight();
  // Only change the row height when it differs, to avoid endless re-rendering
  if (height_wanted != jTable.getRowHeight(row))
    jTable.setRowHeight(row, height_wanted);
  return this;
}

I tried it out (on SUN JDK 1.3.1) and it worked perfectly.

There are some restrictions with my solution:

Satisfied, I sent off the answer to Robert, with the words: "After spending an hour tearing out my hair, I found a solution for you, it's so simple you'll kick yourself, like I did myself ;-)"

A few hours later the answer came back: "Your solution does not solve my problem at all."

"What?" I thought. Upon questioning his configuration, we realised that I was using JDK 1.3.1 and Robert was using JDK 1.4.0. I tried it on JDK 1.4.0 on my machine, and truly, it did not render properly! What had they changed so that it didn't work anymore? After battling for another hour trying to figure out what the difference was and why it no longer worked, I gave up and carried on with my other work of tuning someone's application server. If you know how to do it in JDK 1.4.0, please tell me!
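Here is a minimal sketch for readers on later JDKs. It shows one approach that is widely used in later Swing code; it is not the author's solution and has not been verified on JDK 1.4.0 specifically. The idea is to give the text area the column's width with setSize() before asking for its preferred size. A wrapped JTextArea works out its height from its current width, and the renderer may not yet have the column's width when getTableCellRendererComponent() is called. The class name `WrappingTextAreaRenderer` is hypothetical.

```java
import java.awt.Component;
import javax.swing.JTable;
import javax.swing.JTextArea;
import javax.swing.table.TableCellRenderer;

public class WrappingTextAreaRenderer extends JTextArea implements TableCellRenderer {
    public WrappingTextAreaRenderer() {
        setLineWrap(true);
        setWrapStyleWord(true);
    }

    @Override
    public Component getTableCellRendererComponent(JTable table, Object value,
            boolean isSelected, boolean hasFocus, int row, int column) {
        setText(value == null ? "" : value.toString());
        // Size the text area to the column first: the wrapped height depends on the width.
        int columnWidth = table.getColumnModel().getColumn(column).getWidth();
        setSize(columnWidth, Short.MAX_VALUE);
        int heightWanted = getPreferredSize().height;
        // Same guard as in the newsletter: only touch the row height when it changes,
        // otherwise setRowHeight() triggers re-rendering that calls us again, forever.
        if (heightWanted > 0 && heightWanted != table.getRowHeight(row)) {
            table.setRowHeight(row, heightWanted);
        }
        return this;
    }
}
```

If several columns use this renderer, each one overwrites the row height. In that case you would need to track the tallest cell per row instead.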

I have avoided JDK 1.4 for real-life projects, because I prefer others to find the bugs first. Most of my work is spent programming on real-life projects, so JDK 1.3.1 is the version I'm stuck with. My suspicion of new JDK versions goes back to when I started using JDK 1.0.x, JDK 1.1.x and JDK 1.2.x. I found that for every bug that was fixed in a new major version, 3 more appeared, and I grew tired of being a guinea pig. I must admit that I'm very happy with JDK 1.3.1, as I was with JDK 1.2.2 and JDK 1.1.8. I think that once JDK 1.4.1 is released I'll start using it, and then you'll see more newsletters about that version of Java.

In a future newsletter I will demonstrate how you can implement "friends" at runtime in JDK 1.4.

Heinz

http://www.javaspecialists.eu/archive/Issue045.html

Measuring the coverage of unit tests is important because it shows how well the tests are designed and how much of the code they actually exercise.

Coverlet is an amazing tool to measure the coverage of unit tests in .NET projects.

Coverlet is completely open source and free. It supports .NET Framework and .NET Core, and you can add it as a NuGet package.

Coverlet

Coverlet is a cross platform code coverage framework for .NET, with support for line, branch and method coverage. It works with .NET Framework on Windows and .NET Core on all supported platforms.

Installation

VSTest Integration:

dotnet add package coverlet.collector

N.B. You MUST add package only to test projects

MSBuild Integration:

dotnet add package coverlet.msbuild

N.B. You MUST add package only to test projects

Global Tool:

dotnet tool install --global coverlet.console

Quick Start

VSTest Integration

Coverlet is integrated into the Visual Studio Test Platform as a data collector. To get coverage simply run the following command:

dotnet test --collect:"XPlat Code Coverage"

After the above command is run, a coverage.cobertura.xml file containing the results will be published to the TestResults directory as an attachment. A summary of the results will also be displayed in the terminal.

See documentation for advanced usage.

Requirements

- You need…

First, we have to add the NuGet package to an existing unit test project (MSTest, xUnit, etc.). Coverlet can be used in several ways, but I recommend the MSBuild integration.

dotnet add package coverlet.msbuild

After that, we can easily use the integration between MSBuild and Coverlet to run the tests and measure the coverage with the following command:

dotnet test /p:CollectCoverage=true

You will get the following result:

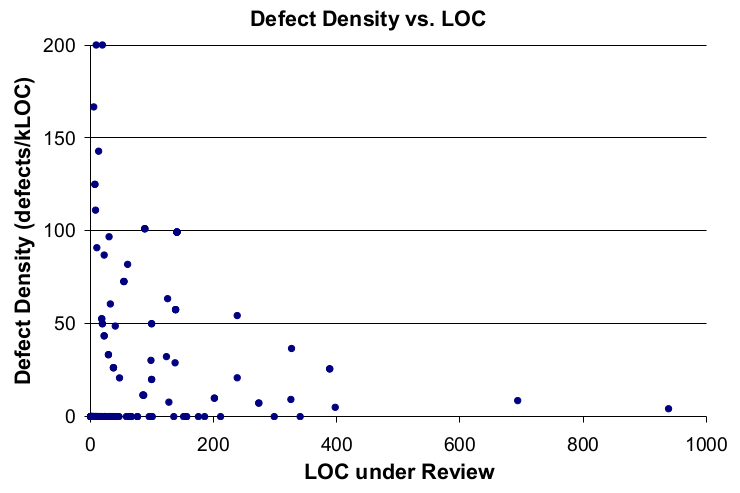

The first column on the left lists the modules covered. The 'Line' column gives the percentage of lines executed while running the tests. 'Branch' gives the same figure for branches (for example, both outcomes of an if statement), and 'Method' gives it for the methods inside the classes.

Coverlet generates a coverage.json file that contains all the information displayed in the console. You can consume this file with your own application.
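The percentages in the Line, Branch and Method columns are ratios of covered items to coverable items. The minimal Java sketch below uses made-up module names and counts. It shows why a combined figure for several test projects, as asked about in the discussion, is best computed from pooled counts: averaging per-module percentages gives a small module the same weight as a large one. It illustrates the arithmetic only and does not claim to reproduce Coverlet's internals.

```java
import java.util.List;
import java.util.Locale;

public class CoverageTotals {
    // Hypothetical per-module counts: lines executed by the tests vs. coverable lines.
    record ModuleCoverage(String name, int coveredLines, int coverableLines) {
        double percent() {
            // Convention assumed here: a module with nothing to cover counts as 100%.
            return coverableLines == 0 ? 100.0 : 100.0 * coveredLines / coverableLines;
        }
    }

    // Pooled total: add up the raw counts of every module, then divide once.
    static double totalPercent(List<ModuleCoverage> modules) {
        long covered = 0;
        long coverable = 0;
        for (ModuleCoverage m : modules) {
            covered += m.coveredLines();
            coverable += m.coverableLines();
        }
        return coverable == 0 ? 100.0 : 100.0 * covered / coverable;
    }

    // Simple average: every module's percentage counts equally, whatever its size.
    static double averagePercent(List<ModuleCoverage> modules) {
        return modules.stream().mapToDouble(ModuleCoverage::percent).average().orElse(100.0);
    }

    public static void main(String[] args) {
        List<ModuleCoverage> modules = List.of(
                new ModuleCoverage("Project1", 90, 100),
                new ModuleCoverage("Project2", 10, 100),
                new ModuleCoverage("Project3", 900, 1000));
        System.out.printf(Locale.ROOT, "Total:   %.2f%%%n", totalPercent(modules));   // 83.33%
        System.out.printf(Locale.ROOT, "Average: %.2f%%%n", averagePercent(modules)); // 63.33%
    }
}
```

Here the large, well-tested Project3 pulls the pooled total up to 83.33%. The small, poorly tested Project2 drags the simple average down to 63.33%.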

Try it out!

Mteheran/CoverletDemo: Coverlet demo for unit test coverage


Posted by: Miguel Teheran

Developer by passion, C#, animals, nature and travel lover.

Discussion

Thanks for this article. I'm trying to connect Coverlet test coverage to my CI pipeline now, but I couldn't work out how to collect the total test coverage. I have 3 test projects, like Project1.Tests.csproj, Project2.Tests.csproj and Project3.Tests.csproj, and Coverlet shows test coverage for each project separately. I'm wondering whether there is a way to collect the total test coverage across all projects.

Coverlet always shows each module separately, but you will also see a total table at the end. You have to put the test DLLs (binaries) in the same folder and run "dotnet test /p:CollectCoverage=true" there.

Thanks, it is what I need actually! Also, I have added a few more command line arguments. Here is the command that works for me perfectly (just in case if anyone needs it):

Sweet! Thanks for sharing it

https://dev.to/mteheran/coverlet-unit-tests-coverage-for-net-4954

As you know, there are many frameworks for mobile app development and a growing number of them are based on HTML5. These next-generation tools help developers create mobile apps for phones and tablets without the steep learning curve associated with native SDKs and other programming languages like Objective-C or Java. For countless developers throughout the world, HTML5 represents the future for cross-platform mobile app development.

But the question is why? Why has HTML5 become so popular?

The widespread adoption of HTML5 is closely tied to the emergence of the bring your own device (BYOD) movement. BYOD means that developers can no longer limit application usage to a single platform, because consumers want their apps to run on the devices they use every day. HTML5 allows developers to target multiple devices with a single codebase and deliver experiences that closely emulate native solutions, without writing the same application multiple times in multiple languages or with multiple SDKs. Thanks to the evolution of modern web browsers, HTML5 can deliver cross-platform, multi-device solutions that mirror the behaviors and experiences of “native” apps. It is often difficult to distinguish between an app written with native development tools and one written in HTML.

Multiple platform support, faster time to market and lower maintenance costs are just a few of the advantages inherent in HTML/JavaScript. Its advantages don’t stop there. HTML’s ability to mitigate long-term risks associated with emerging technologies such as WinRT, ChromeOS, FirefoxOS, and Tizen is unmatched. Simply put, the only code that will work on all these platforms is HTML/JavaScript.

Is there a price? Yes: a native app certainly consumes less memory and offers a faster, more responsive user experience. But wherever a web app would work, you can go a step further. From a single codebase, you can create a mobile web app for multiple platforms, or even a packaged application for the store. PhoneJS lets you get started fast.

PhoneJS is a cross-platform HTML5 mobile app development framework that was built to be versatile, flexible and efficient. PhoneJS is a single page application (SPA) framework, with view management and URL routing. Its layout engine allows you to abstract navigation away from views, so the same app can be rendered differently across different platforms or form factors. PhoneJS includes a rich collection of touch-optimized UI widgets with built-in styles for today’s most popular mobile platforms including iOS, Android, and Windows Phone 8.

To better understand the principles of PhoneJS development and how you can create and publish applications in platform stores, let’s take a look at a simple demo app called TipCalculator. This application calculates tip amounts due based on a restaurant bill. The complete source code for this app is available here.

The app can be found in the AppStore, Google Play, and Windows Store.

TipCalculator is a Single-Page Application (SPA) built with HTML5. The start page is index.html, with standard meta tags and links to CSS and JavaScript resources. It includes a script reference to the JavaScript file index.js, where you’ll find the code that configures PhoneJS app framework logic:

TipCalculator.app = new DevExpress.framework.html.HtmlApplication({
    namespace: TipCalculator,
    defaultLayout: "empty"
});

Within this section, we must specify the default layout for the app. In this example, we’ll use the simplest option, an empty layout. More advanced layouts are available with full support for interactive navigation styles described in the following images:


PhoneJS uses well-established layout methodologies supported by many server-side frameworks, including Ruby on Rails and ASP.NET MVC. Detailed information about Views and Layouts can be found in our online documentation.

To configure view routing in our SPA, we must add an additional line of code in index.js:

TipCalculator.app.router.register(":view", { view: "home" });

This registers a simple route that retrieves the view name from the URL (from the hash segment of the URL). The home view is used by default. Each view is defined in its own HTML file and is linked into the main application page index.html like this:

<link rel="dx-template" type="text/html" href="views/home.html" />
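To make the routing rule concrete, here is a simplified, hypothetical Java model of a single `:view` route with a default. It is not PhoneJS's router. It only mirrors the behaviour described above: the view name is taken from the hash segment of the URL, and home is used when the hash is empty. The class and method names are made up for illustration.

```java
import java.util.Map;
import java.util.Optional;

public class ViewRoute {
    // Simplified model of router.register(":view", { view: "home" }):
    // returns the view name for a URL, or empty if the hash does not fit the pattern.
    static Optional<String> match(String url, Map<String, String> defaults) {
        int hash = url.indexOf('#');
        String segment = hash < 0 ? "" : url.substring(hash + 1);
        if (segment.startsWith("/")) {
            segment = segment.substring(1);
        }
        if (segment.isEmpty()) {
            // No view in the hash: fall back to the default view.
            return Optional.ofNullable(defaults.get("view"));
        }
        if (segment.contains("/")) {
            // ":view" stands for exactly one segment, so "a/b" does not match this route.
            return Optional.empty();
        }
        return Optional.of(segment);
    }

    public static void main(String[] args) {
        Map<String, String> defaults = Map.of("view", "home");
        System.out.println(match("index.html", defaults));       // Optional[home]
        System.out.println(match("index.html#about", defaults)); // Optional[about]
        System.out.println(match("index.html#a/b", defaults));   // Optional.empty
    }
}
```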

A viewmodel is a representation of data and operations used by the view. Each view has a function with the same base name as the view itself and returns the viewmodel for the view. For the home view, the views/home.js script defines the function home which creates the corresponding viewmodel.

TipCalculator.home = function(params) {
    ...
};

Three input parameters are used for the tip calculation algorithm: bill total, the number of people sharing the bill, and a tip percentage. These variables are defined as observables, which will be bound to corresponding UI widgets.

Note: Observables functionality is supplied by Knockout.js, an important foundation for viewmodels used in PhoneJS. You can learn more about Knockout.js here.

This is the code used in the home function to initialize the variables:

var billTotal = ko.observable(),
    tipPercent = ko.observable(DEFAULT_TIP_PERCENT),
    splitNum = ko.observable(1);

The result of the tip calculation is represented by four values: totalToPay, totalPerPerson, totalTip and tipPerPerson. Each value is a dependent observable (a computed value), which is automatically recalculated when any of the observables used in its definition change. Again, this is standard Knockout.js functionality.


var totalTip = ko.computed(...);
var tipPerPerson = ko.computed(...);
var totalPerPerson = ko.computed(...);
var totalToPay = ko.computed(...);

For an example of business logic implementation in a viewmodel, let’s take a closer look at the observable totalToPay.

The total sum to pay is usually rounded. For this purpose, we have two functions roundUp and roundDown that change the value of roundMode (another observable). These changes cause recalculation of totalToPay, because roundMode is used in the code associated with the totalToPay observable.


var totalToPay = ko.computed(function() {
    var value = totalTip() + billTotalAsNumber();
    switch(roundMode()) {
        case ROUND_DOWN:
            if(Math.floor(value) >= billTotalAsNumber())
                return Math.floor(value);
            return value;
        case ROUND_UP:
            return Math.ceil(value);
        default:
            return value;
    }
});
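The same rounding rules can be written as plain functions, which makes them easy to check in isolation. This Java sketch ports the totalToPay logic above. The formulas for totalTip (bill * percentage / 100) and for the per-person share are assumptions, because the article elides those ko.computed bodies. The class and method names are hypothetical.

```java
public class TipMath {
    enum RoundMode { NONE, DOWN, UP }

    // Assumed formula: the article elides totalTip's body.
    static double totalTip(double billTotal, double tipPercent) {
        return billTotal * tipPercent / 100.0;
    }

    // Port of the totalToPay computed observable shown above.
    static double totalToPay(double billTotal, double tipPercent, RoundMode roundMode) {
        double value = totalTip(billTotal, tipPercent) + billTotal;
        switch (roundMode) {
            case DOWN:
                // Round down only if the result still covers the bill itself.
                if (Math.floor(value) >= billTotal) {
                    return Math.floor(value);
                }
                return value;
            case UP:
                return Math.ceil(value);
            default:
                return value;
        }
    }

    // Assumed: each diner pays an equal share of the (possibly rounded) total.
    static double totalPerPerson(double totalToPay, int splitNum) {
        return totalToPay / splitNum;
    }

    public static void main(String[] args) {
        System.out.println(totalToPay(42.5, 15, RoundMode.NONE)); // 48.875
        System.out.println(totalToPay(42.5, 15, RoundMode.DOWN)); // 48.0
        System.out.println(totalToPay(42.5, 15, RoundMode.UP));   // 49.0
    }
}
```

Note the guard in the DOWN case: with a bill of 10.5 and a 0% tip, rounding down would give 10, which is less than the bill, so the precise value 10.5 is kept.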

When any input parameter in the view changes, rounding should be disabled to allow the user to view precise values. We subscribe to the changes of the UI-bound observables to achieve this:

billTotal.subscribe(function() {
    roundMode(ROUND_NONE);
});
tipPercent.subscribe(function() {
    roundMode(ROUND_NONE);
});
splitNum.subscribe(function() {
    roundMode(ROUND_NONE);
});
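The subscription mechanism behind this can be modelled in a few lines. The Java sketch below uses a minimal, hypothetical stand-in for a Knockout observable (not Knockout's API): it holds a value and calls its subscribers when the value changes. Like Knockout with primitive values, it skips the notification when the new value equals the old one. The default tip of 15 is a made-up placeholder for DEFAULT_TIP_PERCENT.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Objects;
import java.util.function.Consumer;

public class TipViewModel {
    // Minimal observable: stores a value and notifies subscribers on real changes.
    static final class Observable<T> {
        private T value;
        private final List<Consumer<T>> subscribers = new ArrayList<>();

        Observable(T initial) {
            value = initial;
        }

        T get() {
            return value;
        }

        void set(T newValue) {
            if (Objects.equals(value, newValue)) {
                return; // unchanged value: no notification
            }
            value = newValue;
            for (Consumer<T> subscriber : subscribers) {
                subscriber.accept(newValue);
            }
        }

        void subscribe(Consumer<T> subscriber) {
            subscribers.add(subscriber);
        }
    }

    enum RoundMode { NONE, DOWN, UP }

    final Observable<Double> billTotal = new Observable<>(0.0);
    final Observable<Integer> tipPercent = new Observable<>(15); // hypothetical default
    final Observable<Integer> splitNum = new Observable<>(1);
    final Observable<RoundMode> roundMode = new Observable<>(RoundMode.NONE);

    TipViewModel() {
        // Any edit to a user input cancels rounding so the precise values are shown.
        billTotal.subscribe(v -> roundMode.set(RoundMode.NONE));
        tipPercent.subscribe(v -> roundMode.set(RoundMode.NONE));
        splitNum.subscribe(v -> roundMode.set(RoundMode.NONE));
    }

    public static void main(String[] args) {
        TipViewModel vm = new TipViewModel();
        vm.roundMode.set(RoundMode.UP); // the user taps "round up"
        vm.billTotal.set(60.0);         // the user edits the bill
        System.out.println(vm.roundMode.get()); // NONE
    }
}
```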

The complete viewmodel can be found in home.js. It represents a simple example of a typical viewmodel.

Note: In a more complex app, it may be useful to implement a structure that modularizes your viewmodels separate from view implementation files. In other words, a file like home.js need not contain the code to implement the viewmodel and instead call a helper function elsewhere for this purpose. In this walkthrough we’re trying to keep things structurally simple.

Let’s now turn to the markup of the view located in the views/home.html file. The root div element represents a view with the name ‘home’. Within it is a div containing markup for a placeholder called ‘content’.


<div data-

<div data-

...

</div>

</div>

A toolbar is located at the top of the view:

<div data-</div>

dxToolbar is a PhoneJS UI widget. It’s defined in the markup using Knockout.js binding.


A fieldset appears below the toolbar. To display a fieldset, we use two special CSS classes understood by PhoneJS: dx-fieldset and dx-field. The fieldset contains a text field for the bill total and two sliders for the tip percentage and the number of diners.


<div data-

</div>

<div data-</div>

<div data-</div>

Two buttons (dxButton) are displayed below the editors, allowing the user to round the total sum to pay. The remaining view displays fieldsets used for calculated results.


<div class="round-buttons">

<div data-</div>

<div data-</div>

</div>

<div id="results" class="dx-fieldset">

<div class="dx-field">

<span class="dx-field-label">Total to pay</span>

<span class="dx-field-value" style="font-weight: bold" data-</span>

</div>

<div class="dx-field">

<span class="dx-field-label">Total per person</span>

<span class="dx-field-value" data-</span>

</div>

<div class="dx-field">

<span class="dx-field-label">Total tip</span>

<span class="dx-field-value" data-</span>

</div>

<div class="dx-field">

<span class="dx-field-label">Tip per person</span>

<span class="dx-field-value" data-</span>

</div>

</div>

This completes the description of the files required to create a simple app using PhoneJS. As you’ve seen, the process is simple, straightforward and intuitive.

Starting and debugging a PhoneJS app works just like any other HTML5-based app. You must deploy the folder containing the HTML and JavaScript sources, along with any other required files, to your web server. Because the architectural model has no server-side component, it doesn’t matter which web server you use, as long as it can serve the files over HTTP. Once the app is deployed, you can open it on a device, in an emulator or in a desktop browser by simply navigating to the app’s start page URL.

If you want to view the app as it will appear in a phone or tablet within a desktop browser, you will have to override the UserAgent in the browser. Fortunately, this is easy to do with the developer tools that ship as part of today’s modern browsers:

If you prefer not to modify UserAgent settings, you can use the Ripple Emulator to emulate multiple device types.

At this point you have a web application that works in the browser on a mobile device and looks like a native app. Modern mobile browsers provide access to local storage, the location API and the camera, so chances are good that your app already has everything it needs.

But what if you need access to device features that the browser does not provide? What if you want an app in the app store, not just a web page? Then you’ll have to create a hybrid application, and the de facto standard for such apps is Apache Cordova, aka PhoneGap.

A PhoneGap project for each platform is a native app project that contains a WebView (browser control) and a “bridge” that lets your JavaScript code inside the WebView access native functions provided by PhoneGap libraries and plugins.

To use it, you need the SDK for each platform you are targeting, but you don’t need to know the details of native development. You just need to put your HTML, CSS and JS files into the right places and specify your app’s properties, like name, version, icons, splash screens and so on.

To be able to publish your app, you will need to register as a developer in the respective development portal. This process is well documented for each store and is beyond the scope of this article. After that you’ll be able to receive certificates to sign your app package.

Having to install an SDK for each platform sounds challenging, especially after the “write once, run everywhere” promise of the HTML5/JS approach. It is a small price to pay for building a hybrid application and keeping everything under control. Still, several services and products can solve this problem for you.

One is Adobe’s online service - PhoneGap Build which allows you to build one app for free (to build more, you’ll need a paid account). If you have all the required platform certificate files, the service can build your app for all supported platforms with a few mouse clicks. You only need to prepare app descriptions, promotional and informational content and icons in order to submit your app to an individual store.

For Visual Studio developers, DevExpress offers a product called DevExtreme (it includes PhoneJS), which can build applications for iOS, Android and Windows Phone 8 directly within the Microsoft Visual Studio IDE.

To summarize, if you need a web application that looks and feels native on a mobile device, you need PhoneJS: it contains everything required to build touch-enabled, native-looking web applications. If you want to go further and access device features, like the contact list or camera, from JavaScript code, you will need Cordova aka PhoneGap. PhoneGap also lets you compile your web app into a native app package. If you don’t want to install an SDK for each platform you are targeting, you can use the PhoneGap Build service to build your package. Finally, if you have DevExtreme, you can build packages right inside Visual Studio.

This article, along with any associated source code and files, is licensed under The Code Project Open License (CPOL)

http://www.codeproject.com/Articles/633706/PhoneJS-HTML5-JavaScript-Mobile-Development-Framew

Install packages with Python tools on SQL Server

Applies to:

SQL Server 2017 (14.x) only

This article describes how to use standard Python tools to install new Python packages on an instance of SQL Server Machine Learning Services. In general, the process for installing new packages is similar to that in a standard Python environment. However, some additional steps are required if the server does not have an Internet connection.

For more information about package location and installation paths, see Get Python package information.

Prerequisites

- You must have SQL Server Machine Learning Services installed with the Python language option.

Other considerations

Packages must be Python 3.5-compliant and run on Windows.

The Python package library is located in the Program Files folder of your SQL Server instance and, by default, installing in this folder requires administrator permissions. For more information, see Package library location.

Package installation is per instance. If you have multiple instances of Machine Learning Services, you must add the package to each one.

Database servers are frequently locked down. In many cases, Internet access is blocked entirely. For packages with a long list of dependencies, you will need to identify these dependencies in advance and be ready to install each one manually.

Before adding a package, consider whether the package is a good fit for the SQL Server environment.

We recommend that you use Python in-database for tasks that benefit from tight integration with the database engine, such as machine learning, rather than tasks that simply query the database.

If you add packages that put too much computational pressure on the server, performance will suffer.

On a hardened SQL Server environment, you might want to avoid the following:

- Packages that require network access

- Packages that require elevated file system access

- Packages used for web development or other tasks that don't benefit from running inside SQL Server

Add a Python package on SQL Server

To install a new Python package that can be used in a script on SQL Server, you install the package in the instance of Machine Learning Services. If you have multiple instances of Machine Learning Services, you must add the package to each one.

The package installed in the following examples is CNTK, a framework for deep learning from Microsoft that supports customization, training, and sharing of different types of neural networks.

For offline install, download the Python package

If you are installing Python packages on a server with no Internet access, you must download the WHL file from a computer with Internet access and then copy the file to the server.

For example, on an Internet-connected computer you can download a .whl file for CNTK and then copy the file to a local folder on the SQL Server computer. See Install CNTK from Wheel Files for a list of available .whl files for CNTK.

Important

Make sure that you get the Windows version of the package. If the file ends in .gz, it's probably not the right version.
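As a rough illustration of what "the Windows version" means: the tags at the end of a wheel filename (PEP 427) name the Python version, ABI and platform the wheel was built for. This Java sketch is a hypothetical pre-check, assuming a 64-bit Python 3.5 on Windows as used by this SQL Server release. It ignores the ABI tag, and pip itself performs the authoritative compatibility check at install time. Apart from the CNTK wheel name used later in this article, the filenames are made up.

```java
import java.util.Arrays;
import java.util.List;

public class WheelCheck {
    // Wheel filename (PEP 427): name-version[-build]-pythontag-abitag-platformtag.whl.
    // Each tag may be a '.'-separated set, e.g. "py2.py3".
    static List<String> tagSet(String tag) {
        return Arrays.asList(tag.split("\\."));
    }

    // Rough check for a 64-bit Python 3.5 on Windows (assumption); pip has the final say.
    static boolean looksCompatible(String fileName) {
        if (!fileName.endsWith(".whl")) {
            return false; // e.g. a .tar.gz source archive, not a prebuilt Windows wheel
        }
        String[] parts = fileName.substring(0, fileName.length() - ".whl".length()).split("-");
        if (parts.length != 5 && parts.length != 6) {
            return false; // not a well-formed wheel filename
        }
        int n = parts.length;
        List<String> python = tagSet(parts[n - 3]);
        List<String> platform = tagSet(parts[n - 1]);
        boolean pythonOk = python.contains("cp35") || python.contains("py35") || python.contains("py3");
        boolean platformOk = platform.contains("win_amd64") || platform.contains("any");
        return pythonOk && platformOk;
    }

    public static void main(String[] args) {
        System.out.println(looksCompatible("cntk-2.1-cp35-cp35m-win_amd64.whl"));    // true
        System.out.println(looksCompatible("cntk-2.1-cp35-cp35m-linux_x86_64.whl")); // false
        System.out.println(looksCompatible("example_pkg-1.0.tar.gz"));               // false
    }
}
```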

For more information about downloads of the CNTK framework for multiple platforms and for multiple versions of Python, see Setup CNTK on your machine.

Locate the Python library

Locate the default Python library location used by SQL Server. If you have installed multiple instances, locate the PYTHON_SERVICES folder for the instance where you want to add the package.

For example, if Machine Learning Services was installed using defaults, and machine learning was enabled on the default instance, the path is:

cd "C:\Program Files\Microsoft SQL Server\MSSQL14.MSSQLSERVER\PYTHON_SERVICES"

Tip

For future debugging and testing, you might want to set up a Python environment specific to the instance library.

Install the package using pip

Use the pip installer to install new packages. You can find pip.exe in the Scripts subfolder of the PYTHON_SERVICES folder. SQL Server Setup does not add the Scripts subfolder to the system path, so you must specify the full path, or you can add the Scripts folder to the PATH variable in Windows.

Note

If you're using Visual Studio 2017, or Visual Studio 2015 with the Python extensions, you can run pip install from the Python Environments window. Click Packages, and in the text box, provide the name or location of the package to install. You don't need to type pip install; it is filled in for you automatically.

If the computer has Internet access, provide the name of the package:

scripts\pip.exe install cntk

You can also specify the URL of a specific package and version, for example:

scripts\pip.exe install

If the computer does not have Internet access, specify the WHL file you downloaded earlier. For example:

scripts\pip.exe install C:\Downloads\cntk-2.1-cp35-cp35m-win_amd64.whl

You might be prompted to elevate permissions to complete the install. As the installation progresses, you can see status messages in the command prompt window.

Load the package or its functions as part of your script

When installation is complete, you can immediately begin using the package in Python scripts in SQL Server.
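Before calling the package from SQL Server, it can help to confirm that pip installed it into the instance's own library rather than into another Python on the machine. A minimal sketch, assuming you run it with the instance's python.exe (assumed to be in that instance's PYTHON_SERVICES folder) or paste the same lines into @script; package_available is a hypothetical helper:

```python
import importlib.util


def package_available(module_name):
    """Return True if this interpreter can locate module_name for import."""
    return importlib.util.find_spec(module_name) is not None


print(package_available("cntk"))  # True only in the environment where cntk was installed
```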

To use functions from the package in your script, insert the standard import <package_name> statement in the initial lines of the script:

EXECUTE sp_execute_external_script
    @language = N'Python',
    @script = N'
import cntk
# Python statements ...
'

Windows Containers are an isolated, resource-controlled, and portable operating environment. An application inside a container can run without affecting the rest of the system, and vice versa. This isolation makes SQL Server in a Windows Container ideal for rapid test deployment scenarios as well as continuous integration processes. This blog provides a step-by-step guide to setting up SQL Server Express 2014 in a Windows Container by using this community-contributed Dockerfile.

Prerequisites

• System running Windows Server Technical Preview 4 or later.

• 10GB available storage for container host image, OS Base Image and setup scripts.

• Administrator permissions on the system.

Set up a VM or a physical machine as a Windows Container Host

Step 1: Start a PowerShell session as administrator. This can be done by running the following command from the command line.

PS C:\> powershell.exe

Step 2: Use the following command to download the setup script. Note: The script can also be manually downloaded from this location – Configuration Script.

wget -uri -OutFile C:\Install-ContainerHost.ps1

Step 3: After the download completes, execute the script.

PS C:\> powershell.exe -NoProfile C:\Install-ContainerHost.ps1

With these steps completed your system should be ready for Windows Containers. You can follow this link to read the full article that describes more on setting up a VM or a physical machine as a Windows Container Host.

Installing SQL Server Express 2014 in a Windows Container

Step 1: Copy all files from this community-contributed Dockerfile locally into your c:\sqlexpress folder.

Note: All files were provided by brogersyh. The Dockerfile contains all the SQL Server configuration settings (ports, passwords, etc.) that you can customize if needed.

Step 2: Start a PowerShell session and run the following command:

docker build -t sqlexpress .

Starting SQL Server

Step 1: Start a PowerShell session and run the following command:

docker run -it -p 1433:1433 sqlexpress cmd

Step 2: Enter PowerShell in the Container Window by typing:

Powershell

Step 3: Start SQL Server by running the following PowerShell script (start.ps1, one of the files copied in Step 1 of the installation section):

./start

Step 4: Verify that SQL Server is running with the following command:

get-service *sql*
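get-service only tells you that the service is running inside the container. To check from outside that the port published with -p 1433:1433 actually accepts connections, a plain TCP probe is enough. This is a minimal sketch with the Python standard library (port_is_open is a hypothetical helper); as noted in the comments below, from the container host itself you may need the container's internal IP address instead of localhost:

```python
import socket


def port_is_open(host, port, timeout=3.0):
    """Return True if a TCP connection to host:port succeeds within timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:  # refused, unreachable, or timed out
        return False


# 1433 is the port published by "docker run -it -p 1433:1433 sqlexpress cmd"
print(port_is_open("localhost", 1433))
```

A successful probe only shows that something is listening; logging in still requires the SQL authentication credentials defined in the Dockerfile.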

Next Steps

We are currently working on testing and publishing SQL Server Container Images that could speed up the process of getting started with SQL Server in Windows Containers significantly. Stay tuned for an update!

Further Reading

Windows Containers Overview

Windows-based containers: Modern app development with enterprise-grade control.

Windows Containers: What, Why and How


Is there a way to access the DB with SQL Server Management Studio?

Absolutely! You can access the DB from SQL Server Management Studio by using the container's IP address along with the SQL authentication credentials stored in the Dockerfile (Installing SQL Server Express 2014 in a Windows Container: Step 1 in the tutorial). Use the container's internal IP address if you are accessing the DB from SSMS installed on the host, and the external IP address if you are accessing it from another machine. Note that a firewall rule might need to be created depending upon your setup.

Please refer to this article for more information regarding Container Networking:.

What is the advantage of a Docker container running SQL Server in isolation over a VM running SQL Server in isolation? With a VM, I only need one copy of the OS bits. With a Docker container, there appears to be multiple copies of the OS bits in C:\ProgramData\docker\windowsfilter. After following the instructions above I ended up with 21GB of bloat in those folders. What’s the point?

Thanks a lot for the feedback Paul.

You can think of containers as another resource sharing option and not necessarily as a VM replacement. Depending on the scenario one option might be more suitable than the other.

With that said, choosing between a container and a VM can be tricky, but I believe that this blog post: provides a nice overview on Containers and explains their key differences compared to VMs (Eg. namespace isolation, governing resources etc.).

Hope that helps.

Hi,

I was able to build the container image with your scripts:

PS C:\sqlexpress> docker images

REPOSITORY TAG IMAGE ID CREATED VIRTUAL SIZE

sqlexpress latest 707d46ea7004 16 minutes ago 3.239 GB

But when I try to start the container, I get a huge error:

PS C:\sqlexpress> docker run -it -p 1433:1433 sqlexpress cmd

docker : panic: runtime error: invalid memory address or nil pointer dereference

At line:1 char:1

+ docker run -it -p 1433:1433 sqlexpress cmd

+ ~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~

+ CategoryInfo : NotSpecified: (panic: runtime …ter dereference:String) [], RemoteException

+ FullyQualifiedErrorId : NativeCommandError

Any ideas?

Hi Pedro –

Could you please post this issue to the Windows container forum for further investigation?

Thanks,

Perry

Hi Perry,

Yes, I can. I’ve been digging a little bit more on the subject but I will post it to the forum.

Hello, I have followed your steps and everything is working like a charm.

Thank you

Great! Thanks for trying SQL in Windows Containers SoyUnEmilio!

How can one attach already present mdf and log file?

what exactly is happening in datatemp, start.ps1?

Hi Sam –

Please take a look at the following section that describes how you can attach existing .mdf and .ldf files.

Can you be a bit more specific on your datatemp, start.ps1 question?

Thanks, Perry

Given that SQL Server uses non-preemptive scheduling and, aside from two Windows DLLs, is virtually bare metal when it comes to scheduling, how does resource management work with SQL Server and Windows containers in terms of constraining the CPU resources an instance uses?

Hi Chris –

Please take a look at this "Part 4: Resource Management" video, which walks through how to manage resources, such as CPU, disk, memory and network, for Windows Server Containers.

Thanks, Perry

Is there an option to run full SQL Server i.e. not express, in a container?

Hi Andrew –

Although SQL Server Express is currently the only edition available as a Docker image, you could edit the existing Dockerfile and create your own image.

Thanks,

Perry

Hi I have one container with Web site up and running on the my development machine windows 2016(I did not commit yet), of course I need to port on to docker hub. Now I need to build another container to host db sql server express.

1. I have question how to do download it on to client(windows 10) as client, I am seeing the image ps images dockker images command. How do I add it to my client through vm hyper v app? or docker run command. Also just wondering how work on db stuff now, create another container sql server express and how does this web container will see db container. app web ui taking to db server on client machine.

Hi Narayan –

Please follow the tutorial below to get started with setting up a multi container application that includes SQL Server Express 2016.

Let me know if you run into any issues,

Thanks,

Perry

arup rakshit


Thanks for your suggestions!

Thanks again!

How can I refactor the view code, and extract out as much as Ruby code from that ? What is your suggestion regarding the searching as you mentioned in your earlier comment?

Thanks for your reply!

No, I am just trying to display users' names grouped by location/age/department, etc. Currently I'm not thinking about searching.

How can I write the below operation, being handled by group_by Ruby method, in terms of DB specific query?

def list_users
  @search_by_options = [:age, :location, :department, :designation]
  @users = User.all.group_by { |user| user.public_send(params[:search_by] || :location) }
end

Corresponding view code is as below :-

<%= form_tag list_users_users_path, :method => 'get' do %>
  <p>
    <%= select_tag "search_by", options_for_select(@search_by_options) %>
    <%= submit_tag "Submit" %>
  </p>
<% end %>
<% @users.each do |grouping_key, users| %>
  <p><%= grouping_key %> : <%= users.map(&:name).join("||") %></p>
<% end %>
<%= link_to "Back to Main page", root_path %>
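To push the grouping into the database, the group_by plus join("||") pair maps onto SQL's GROUP BY with a string-aggregation function. The sketch below uses Python's built-in sqlite3 only to demonstrate the query shape; the table layout is assumed from the option names, group_concat(name, '||') is SQLite syntax (MySQL has GROUP_CONCAT and PostgreSQL string_agg, with slightly different syntax), and the whitelist mirrors @search_by_options. A whitelist like that is worth having in the Rails version too, because public_send(params[:search_by]) lets a request name any public method:

```python
import sqlite3

ALLOWED_COLUMNS = {"age", "location", "department", "designation"}


def names_grouped_by(conn, column):
    """Group user names by column in SQL, joining each group's names with '||'."""
    if column not in ALLOWED_COLUMNS:
        raise ValueError("cannot group by " + repr(column))
    # column is whitelisted above, so interpolating it into the SQL is safe
    sql = (f"SELECT {column}, group_concat(name, '||') "
           f"FROM users GROUP BY {column} ORDER BY {column}")
    return dict(conn.execute(sql).fetchall())


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE users (name TEXT, age INTEGER, location TEXT, "
             "department TEXT, designation TEXT)")
conn.executemany("INSERT INTO users VALUES (?, ?, ?, ?, ?)", [
    ("Ann", 30, "Pune", "IT", "Dev"),
    ("Bob", 41, "Delhi", "HR", "Lead"),
    ("Cid", 30, "Pune", "HR", "Dev"),
])
print(names_grouped_by(conn, "location"))
```

The order of names inside each group is not guaranteed by group_concat, so sort in SQL or in the view if it matters.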

"Shall I compare thee to a summer's day?" - William Shakespeare, 'Sonnets'

Created: 24th October 1999

Last Modified: 27th November 1999

This page explains how to compare values and use conditional jumps.

The CP instruction stands for compare. It takes the general form cp ValueToCompareWith and compares the value of the a register with ValueToCompareWith, which can be any 8-bit register, an 8-bit number or (hl). So:

cp 7
cp b
cp e
cp (hl)
cp %11010111

and so on. What the CP instruction actually does is subtract ValueToCompareWith from the value of the a register, but it doesn't store the result anywhere or affect the value of either the a register or ValueToCompareWith. What it does do is affect the flags.

The f register is sometimes called the flag register. It can't be used in maths, logic or any other 'useful' instruction (it can be pushed and popped from the stack when paired with a to give the af register pair). However, many instructions affect the value of f. Most of the bits in f have a meaning, for example one of the bits is set if there has been a carry and reset if there hasn't.
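As a rough model of what cp does to the flags, here it is in Python (purely illustrative; the real instruction also updates the S, H, P/V and N flags, which are left out). cp computes a - value, throws the result away, sets the zero flag when the two values are equal, and sets the carry flag when a is smaller than the value, because the subtraction needs a borrow. The four conditions z, nz, c and nc, which jr accepts as well as jp, call and ret, simply test those two flags:

```python
def cp(a, value):
    """Model of Z80 'cp value': compute a - value but keep only the flags.

    Returns (a, flags). a comes back unchanged because cp discards the result.
    Only the Z (zero) and C (carry/borrow) flags are modelled.
    """
    a &= 0xFF
    value &= 0xFF
    result = (a - value) & 0xFF
    flags = {"z": result == 0, "c": a < value}
    return a, flags


def condition_met(cond, flags):
    """Evaluate one of the conditions z, nz, c, nc against the flags."""
    return {"z": flags["z"], "nz": not flags["z"],
            "c": flags["c"], "nc": not flags["c"]}[cond]


a, flags = cp(7, 7)
print(flags)  # {'z': True, 'c': False}
```

So "cp kExit" followed by "ret z" returns exactly when the key code in a equals kExit.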

The f register lets us use 'if..else' statements in assembly. There is another form of the CALL, JR and JP instructions, which is call condition,address (or jp condition,address or jr condition,address). The address part remains the same, and condition is one of the following (there are other possibilities, but they don't all work for JR):

Note that you can also do ret condition.

So you can now finally write a useful program (or at least you can once I've told you what call _getkey does). The _getkey ROM routine pauses the calculator until a key is pressed, and then returns the value of that key in the a register. It also trashes a few other registers, so if you need them don't forget to push them onto the stack. The number returned in the a register isn't the same as the one used in the built-in language; it's a bit more advanced. There are equates defined for the different keys, so rather than doing cp 7 you can do cp kExit (for some reason there's no underscore). If you need a list of the equates for the keys, they're in a file called "asm86.h", which you'll find in the "include" subdirectory of whatever directory you installed Assembly Studio in. They all start with a small k; the ones with a capital K are something else.

The program should clear the screen. It should then wait for a key to be pressed. If the key is [EXIT] then the program should quit. If the key is [ENTER] then the program should display a star (character 42) on the screen and go onto the next line. If it is any other key the program should do nothing. The program should then wait for another key and so on.

Try and work out your own code before copying the one below. Remember, your code will probably be different from mine because I've used a few shortcuts, but as long as it works that's fine.

Source: getkey1.asm

Compiled: getkey1.86p

#include "ti86asm.inc"
.org _asm_exec_ram

        call _clrScrn       ; Clears the screen (duuh!)
        call _homeup        ; Cursor to top left
LoopStart:
        call _getkey        ; Wait for a key to be pressed
        cp kExit            ; Compare it to kExit - [EXIT] key
        ret z               ; If they're equal return to the OS
        cp kEnter           ; Otherwise compare it to kEnter - [ENTER] key
        jr nz,LoopStart     ; If it's not enter go and get another key
                            ; If it is enter...
        ld a,42             ; a = 42 (ASCII character '*')
        call _putc          ; Display character in a
        call _newline       ; Go onto a new line
        jr LoopStart        ; Then go back to the start of the loop
.end                        ; Tell the assembler to stop

The title is not a mistake.

rx.Observable from RxJava 1.x is a completely different beast than io.reactivex.Observable from 2.x. Blindly upgrading the rx dependency and renaming all imports in your project will compile (with minor changes), but it does not guarantee the same behavior. In the very early days of the project, Observable in 1.x had no notion of backpressure, but later on backpressure was included. What does it actually mean? Let's imagine we have a stream that produces one event every 1 millisecond, but it takes 1 second to process one such item. You see it can't possibly work this way in the long run:

import rx.Observable; //RxJava 1.x
import rx.schedulers.Schedulers;

import java.time.Duration;
import static java.util.concurrent.TimeUnit.MILLISECONDS;

Observable
        .interval(1, MILLISECONDS)
        .observeOn(Schedulers.computation())
        .subscribe(
                x -> sleep(Duration.ofSeconds(1)));  // sleep(): helper that blocks for the given Duration (not shown)

MissingBackpressureException creeps in within a few hundred milliseconds. But what does this exception mean? Well, basically it's a safety net (or sanity check, if you will) that prevents you from hurting your application. RxJava automatically discovers that the producer is overflowing the consumer and proactively terminates the stream to avoid further damage. So what if we simply search and replace a few imports here and there?

import io.reactivex.Observable; //RxJava 2.x
import io.reactivex.schedulers.Schedulers;

Observable
        .interval(1, MILLISECONDS)
        .observeOn(Schedulers.computation())
        .subscribe(
                x -> sleep(Duration.ofSeconds(1)));

The exception is gone! So is our throughput... The application stalls after a while, staying in an endless GC loop. You see, Observable in RxJava 1.x has assertions (bounded queues, checks, etc.) all over the place, making sure you are not overflowing anywhere. For example, the observeOn() operator in 1.x has a queue limited to 128 elements by default. When backpressure is properly implemented across the whole stack, the observeOn() operator asks upstream to deliver no more than 128 elements to fill its internal buffer. Then separate threads (workers) from this scheduler pick up events from this queue. When the queue becomes almost empty, the observeOn() operator asks for more (via the request() method). This mechanism breaks apart when the producer does not respect backpressure requests and sends more data than it was allowed, effectively overflowing the consumer. The internal queue inside the observeOn() operator is full, yet the interval() operator keeps emitting new events, because that's what interval() is supposed to do.

Observable in 1.x discovers such an overflow and fails fast with MissingBackpressureException. It literally means: I tried so hard to keep the system in a healthy state, but my upstream is not respecting backpressure; the backpressure implementation is missing. However, Observable in 2.x has no such safety mechanism. It's a vanilla stream that hopes you will be a good citizen and either have slow producers or fast consumers. When the system is healthy, both Observables behave the same way. However, under load 1.x fails fast, while 2.x fails slowly and painfully.

Does it mean RxJava 2.x is a step back? Quite the contrary! In 2.x an important distinction was made:

- Observable doesn't care about backpressure, which greatly simplifies its design and implementation. It should be used to model streams that can't support backpressure by definition, e.g. user interface events.
- Flowable does support backpressure and has all the safety measures in place. In other words, all steps in the computation pipeline make sure you are not overflowing the consumer.

2.x makes an important distinction between streams that can support backpressure ("can slow down if needed", in simple words) and those that don't. From the type system perspective it becomes clear what kind of source we are dealing with and what its guarantees are. So how should we migrate our interval() example to RxJava 2.x? Easier than you think:

import io.reactivex.Flowable;

Flowable
        .interval(1, MILLISECONDS)
        .observeOn(Schedulers.computation())
        .subscribe(
                x -> sleep(Duration.ofSeconds(1)));

That simple. You may ask yourself: how come Flowable can have an interval() operator that, by definition, can't support backpressure? After all, interval() is supposed to deliver events at a constant rate; it can't slow down! Well, if you look at the declaration of interval() you'll notice:

@BackpressureSupport(BackpressureKind.ERROR)

Simply put, this tells us that whenever backpressure can no longer be guaranteed, RxJava will take care of it and throw MissingBackpressureException. That's precisely what happens when we run the Flowable.interval() program: it fails fast, as opposed to destabilizing the whole application.

So, to wrap up, whenever you see an Observable from 1.x, what you probably want is Flowable from 2.x, at least unless your stream by definition does not support backpressure. Despite the same name, Observables in these two major releases are quite different. But once you do a search and replace from Observable to Flowable, you'll notice that migration isn't that straightforward. It's not about API changes; the differences are more profound.

There is no simple Flowable.create() directly equivalent to Observable.create() in 2.x. I made the mistake of overusing the Observable.create() factory method myself in the past. create() allows you to emit events at an arbitrary rate, entirely ignoring backpressure. 2.x has some friendly facilities to deal with backpressure requests, but they require careful design of your streams. This will be covered in the next FAQ.

FYI, there is the Flowables.intervalBackpressure operator in the extensions project that doesn't signal MissingBackpressureException and emits values based on time and demand:

The thing to note is that the emission pattern may no longer be completely periodic and the signals may arrive in quick succession if there was a temporal lack of requests.
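To make the request()/bounded-queue mechanism from the article concrete, here is a toy Python model. It is not RxJava code, and the class and method names are invented for illustration: the consumer requests a fixed number of items up front (like the 128-element observeOn() queue), and a producer that ignores that demand, as interval() does, triggers a fail-fast error. Removing the check in on_next() gives the unbounded 2.x Observable behaviour, where the queue just keeps growing.

```python
from collections import deque


class MissingBackpressureError(Exception):
    """The producer emitted more items than the consumer requested."""


class BoundedConsumer:
    """Toy model of a request(n)-driven buffer, loosely like observeOn's queue."""

    def __init__(self, capacity=128):
        self.capacity = capacity
        self.queue = deque()
        self.requested = capacity  # initial request(capacity) sent upstream

    def on_next(self, item):
        if self.requested == 0:
            # Fail fast instead of letting the queue grow without bound
            raise MissingBackpressureError(
                "received item %r with no outstanding request" % (item,))
        self.requested -= 1
        self.queue.append(item)

    def drain(self):
        """The slow worker empties the queue, then requests more items."""
        processed = len(self.queue)
        self.queue.clear()
        self.requested = self.capacity - len(self.queue)
        return processed


consumer = BoundedConsumer()
try:
    for tick in range(1000):  # a producer that ignores demand, like interval()
        consumer.on_next(tick)
except MissingBackpressureError as e:
    print("failed fast:", e)
```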

I once asked you a related question on Twitter, but still unsure - does your RxJava book cover 2.x flavor? If not - do you consider it still relevant or you plan for 2.x edition?

Dear Igor, our RxJava book unfortunately covers 1.x. I have no plans so far to publish a 2nd edition, but we'll see :-).


Better Errors (4:20) with Alena Holligan

One of the most commonly overlooked parts of designing an API is proper error messaging. We all get excited to get our API out the door for its amazing functionality, and we forget to take care of informing callers of our API about errors that they might encounter. Because clients don’t have access to our error logs, we need to give them appropriate error messages.

Exception Constants

const COURSE_NOT_FOUND = 'Course Not Found';
const COURSE_INFO_REQUIRED = 'Required course data missing';
const COURSE_CREATION_FAILED = 'Unable to create course';
const COURSE_UPDATE_FAILED = 'Unable to update course';
const COURSE_DELETE_FAILED = 'Unable to delete course';
const REVIEW_NOT_FOUND = 'Review Not Found';
const REVIEW_INFO_REQUIRED = 'Required review data missing';
const REVIEW_CREATION_FAILED = 'Unable to create review';
const REVIEW_UPDATE_FAILED = 'Unable to update review';
const REVIEW_DELETE_FAILED = 'Unable to delete review';

Error Handler

To change how Slim handles exceptions, we have to add our own handler to the dependencies container as "errorHandler":

$container['errorHandler'] = function ($c) {
    return function ($request, $response, $exception) use ($c) {
        $data = [
            'status' => 'error',
            'code' => $exception->getCode(),
            'message' => $exception->getMessage(),
        ];
        // Use the exception code as the HTTP status (ApiException defaults to 400)
        return $response->withJson($data, $exception->getCode());
    };
};
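One design point the handler leaves open: an exception that is not an ApiException typically has a code of 0, which is not a valid HTTP status (valid codes run from 100 to 599), so passing it straight to withJson is likely to be rejected. The sketch below restates the handler's logic in Python, purely as an illustration (it is not Slim code, and error_response is a hypothetical name), with a fallback to 500 for codes outside the 4xx/5xx range:

```python
import json


class ApiException(Exception):
    """Python analogue of the lesson's ApiException: code defaults to 400."""

    def __init__(self, message="", code=400):
        super().__init__(message)
        self.code = code


def error_response(exc):
    """Build (http_status, json_body) in the shape the errorHandler returns."""
    code = getattr(exc, "code", 0)
    # A plain exception has no usable code, so fall back to 500 in that case
    status = code if isinstance(code, int) and 400 <= code <= 599 else 500
    body = {"status": "error", "code": code, "message": str(exc)}
    return status, json.dumps(body)


print(error_response(ApiException("Course Not Found", 404)))
```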

- 0:00

One of the most commonly overlooked parts of designing an API

- 0:05

is proper error messaging.

- 0:07

We all get excited to get our API out the door for its amazing functionality.

- 0:12

And we forget to take care of informing callers of our API

- 0:16

about errors that they might encounter.

- 0:18

Because clients don't have access to our error logs,

- 0:22

we need to give them appropriate error messages.

- 0:26

Let's see what we can do about better communicating when things don't go right.

- 0:32

We're going to start by setting up a custom exception for our API.

- 0:36

Add a new folder under source named Exception.

- 0:44

Then create a new file named APIException.php.

- 0:54

First, we need to add our namespace.

- 0:59

This will be App\Exception.

- 1:04

Then we can add our class.

- 1:06

ApiException extends the default Exception.

- 1:18

We're going to keep this class pretty close to the original,

- 1:24

so we'll start with our constructor, public function __construct.

- 1:31

Our message, Will have a default of an empty string.

- 1:38

Our code will default to 400 and

- 1:44

our Exception previous or null.

- 1:51

Then we're gonna call the parent __construct.

- 1:57

Pass the message, the code and previous.

- 2:03

Besides that, we're going to add some constants for writing our messages.

- 2:08

We'll be able to access these outside the class, just like we do for

- 2:12

the PDO constants.

- 2:14

This will help our verbiage stay consistent.

- 2:16

I don't wanna worry about typing all these out.

- 2:18

So I've included them in the teacher's notes.

- 2:21

You can copy and paste from there.

- 2:24

This is just an extra exception,

- 2:26

it's not gonna actually change how Slim handles the exceptions.

- 2:30

So we wanna set up a custom handler as well, so

- 2:33

we can return a JSON error response.

- 2:37

So in our dependencies, We're going to add another container.

- 2:46

Container, and this needs to be called errorHandler.

- 2:59

And let's close our semi colon.

- 3:02

Then we're going to return and set a new function.

- 3:07

We'll need the request, the response,

- 3:11

and the exception, and we'll want to use.

- 3:19

We'll set data equal to an array.

- 3:24

Let's close some semi colons.

- 3:29

Our status is going to equal error.

- 3:36

Our code is going to equal the exception getCode and

- 3:42

the message, Is going to equal the exception, getMessage.

- 3:53

Now, we can return a response withJson and

- 3:58

say data and the exception, getCode.

- 4:05

Let's check this out with Postman just to make sure that we didn't break anything.

- 4:10

We're gonna look for all our courses.

- 4:13

Great, it still works.

- 4:15

Now, we're ready to look at our methods and throw some exceptions.


Hi,

I am running into an issue trying to use enable_on_exec

in per-thread mode with an event group.

My understanding is that enable_on_exec allows activation

of an event on first exec. This is useful for tools monitoring

other tasks and which you invoke as: tool my_program. In

other words, the tool forks+execs my_program. This option

allows developers to setup the events after the fork (to get

the pid) but before the exec(). Only execution after the exec

is monitored. This alleviates the need to use the

ptrace(PTRACE_TRACEME) call.

My understanding is that an event group is scheduled only

if all events in the group are active (disabled=0). Thus, one

trick to activate a group with a single ioctl(PERF_IOC_ENABLE)

is to enable all events in the group except the leader. This works

well. But once you add enable_on_exec on the events,

things go wrong. The non-leader events start counting before

the exec. If the non-leader events are created in disabled state,

then they never activate on exec.

The attached test program demonstrates the problem.

Simply invoke it with a program that runs for a few seconds.

#include <sys/types.h>

#include <inttypes.h>

#include <stdio.h>

#include <stdlib.h>

#include <stdarg.h>

#include <unistd.h>

#include <string.h>

#include <sys/wait.h>

#include <syscall.h>

#include <err.h>

#include <perf_counter.h>

int

child(char **arg)

{

int i;

/* burn cycles to detect if monitoring start before exec */

for(i=0; i < 5000000; i++) syscall(__NR_getpid);

execvp(arg[0], arg);

errx(1, "cannot exec: %s\n", arg[0]);

/* not reached */

}

int

parent(char **arg)

{

struct perf_counter_attr hw[2];

char *name[2];

int fd[2];

int status, ret, i;

uint64_t values[3];

pid_t pid;

if ((pid=fork()) == -1)

err(1, "Cannot fork process");

memset(hw, 0, sizeof(hw));

name[0] = "PERF_COUNT_HW_CPU_CYCLES";

hw[0].type = PERF_TYPE_HARDWARE;

hw[0].config = PERF_COUNT_HW_CPU_CYCLES;

hw[0].read_format =

PERF_FORMAT_TOTAL_TIME_ENABLED|PERF_FORMAT_TOTAL_TIME_RUNNING;

hw[0].disabled = 1;

hw[0].enable_on_exec = 1;

name[1] = "PERF_COUNT_HW_INSTRUCTIONS";

hw[1].type = PERF_TYPE_HARDWARE;

hw[1].config = PERF_COUNT_HW_INSTRUCTIONS;

hw[1].read_format =

PERF_FORMAT_TOTAL_TIME_ENABLED|PERF_FORMAT_TOTAL_TIME_RUNNING;

hw[1].disabled = 0;

hw[1].enable_on_exec = 1;

fd[0] = perf_counter_open(&hw[0], pid, -1, -1, 0);

if (fd[0] == -1)

err(1, "cannot open event0");

fd[1] = perf_counter_open(&hw[1], pid, -1, fd[0], 0);

if (fd[1] == -1)

err(1, "cannot open event1");

if (pid == 0)

exit(child(arg));

waitpid(pid, &status, 0);

for(i=0; i < 2; i++) {

ret = read(fd[i], values, sizeof(values));

if (ret < sizeof(values))

err(1, "cannot read values event %s", name[i]);

if (values[2])

values[0] = (uint64_t)((double)values[0] * values[1]/values[2]);

printf("%20"PRIu64" %s %s\n",

values[0],

name[i],

values[1] != values[2] ? "(scaled)" : "");

close(fd[i]);

}

return 0;

}

int

main(int argc, char **argv)

{

if (!argv[1])

errx(1, "you must specify a command to execute\n");

return parent(argv+1);

}
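Because read_format includes PERF_FORMAT_TOTAL_TIME_ENABLED and PERF_FORMAT_TOTAL_TIME_RUNNING, each read() in the program returns three 64-bit values: the raw count, the time the event was enabled, and the time it was actually running on the PMU. When the event was multiplexed, the program extrapolates count * enabled / running, and it skips scaling when the running time is 0. The same arithmetic as a small Python sketch (scaled_count is a hypothetical name):

```python
def scaled_count(raw, time_enabled, time_running):
    """Estimate a multiplexed count as raw * time_enabled / time_running.

    Mirrors the C test program: no scaling when time_running is 0, and the
    result is truncated toward zero like the (uint64_t) cast.
    """
    if time_running == 0:
        return raw
    return int(raw * time_enabled / time_running)


print(scaled_count(1_000_000, 200, 100))  # 2000000
```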

Oleg,

On Tue, Aug 18, 2009 at 1:45 PM, Oleg Nesterov<oleg@...> wrote:

> On 08/18, stephane eranian wrote:

>> > In any case. We should not look at SA_SIGINFO at all. If sys_sigaction() was

>> > called without SA_SIGINFO, then it doesn't matter if we send SEND_SIG_PRIV or

>> > siginfo_t with the correct si_fd/etc.

>> >

>> What's the official role of SA_SIGINFO? Pass a siginfo struct?

>>

>> Does POSIX describe the rules governing the content of si_fd?

>> Or is si_fd a Linux-ony extension in which case it goes with F_SETSIG.

>

> Not sure I understand your concern...

>

> OK. You suggest to pass siginfo_t with .si_fd/etc when we detect SA_SIGINFO.

>

The reason I refer to SA_SIGINFO is simply because of the excerpt from the

sigaction man page:.

In other words, I use this to emphasize the fact that to get a siginfo

struct, you need to pass SA_SIGINFO and use sa_sigaction instead of

sa_handler. That's all I am saying.

> But, in that case we can _always_ pass siginfo_t, regardless of SA_SIGINFO.

> If the task has a signal handler and sigaction() was called without

> SA_SIGINFO, then the handler must not look into *info (the second arg of

> sigaction->sa_sigaction). And in fact, __setup_rt_frame() doesn't even

> copy the info to the user-space:

>

> if (ka->sa.sa_flags & SA_SIGINFO) {

> if (copy_siginfo_to_user(&frame->info, info))

> return -EFAULT;

> }

>

> OK? Or I missed something?

>

No, I think we are in agreement. To get meaningful siginfo use SA_SIGINFO.

> Or. Suppose that some app does:

>

> void io_handler(int sig, siginfo_t *info, void *u)

> {

> if ((info->si_code & __SI_MASK) != SI_POLL) {

> // RT signal failed! sig MUST be == SIGIO

> recover();

> } else {

> do_something(info->si_fd);

> }

> }

>

> int main(void)

> {

> sigaction(SIGRTMIN, { SA_SIGINFO, io_handler });

> sigaction(SIGIO, { SA_SIGINFO, io_handler });

> ...

> }

>

I don't think you can check si_code without first checking which

signal it is in si_signo. The values for si_code overlap between

the different struct in siginfo->_sifields.

>> It would seem natural that in the siginfo passed to the handler of SIGIO, the

>> file descriptor be passed by default. That is all I am trying to say here.

>

> Completely agreed! I was always puzzled by send_sigio_to_task(). I was never

> able to understand why it looks so strange.

>

> So, I think it should be

>

> static void send_sigio_to_task(struct task_struct *p,

> struct fown_struct *fown,

> int fd,

> int reason)

> {

> siginfo_t si;

> /*

> * F_SETSIG can change ->signum lockless in parallel, make

> * sure we read it once and use the same value throughout.

> */

> int signum = ACCESS_ONCE(fown->signum) ?: SIGIO;

>

> if (!sigio_perm(p, fown, signum))

> return;

>

> si.si_signo = signum;

> si.si_errno = 0;

> si.si_code = reason;

> si.si_fd = fd;

> /* Make sure we are called with one of the POLL_*

> reasons, otherwise we could leak kernel stack into

> userspace. */

> BUG_ON((reason & __SI_MASK) != __SI_POLL);

> if (reason - POLL_IN >= NSIGPOLL)

> si.si_band = ~0L;

> else

> si.si_band = band_table[reason - POLL_IN];

>

> /* Failure to queue an rt signal must be reported as SIGIO */

> if (!group_send_sig_info(signum, &si, p))

> group_send_sig_info(SIGIO, SEND_SIG_PRIV, p);

> }

>

> (except it should be on top of fcntl-add-f_etown_ex.patch).

> This way, at least we don't break the "detect RT signal failed" above.

>

> What do you think?

>

> But let me repeat: I just can't convince myself we have a good reason

> to change the strange, but carefully documented behaviour.

>

I agree with you. Given the existing documentation in the man page

of fcntl() and the various code examples. I think it is possible for

developers to figure out how to get the si_fd in the handler. This

problem is not specific to perf_counters nor perfmon. Any SIGIO-based

program may be interested in getting si_fd, therefore I am assuming the

solution is well understood at this point.

Automatic, accurate crop type maps can provide unprecedented information for understanding food systems, especially in developing countries where ground surveys are infrequent. However, little work has applied existing methods to these data scarce environments, which also have unique challenges of irregularly shaped fields, frequent cloud coverage, small plots, and a severe lack of training data. To address this gap in the literature, we provide the first crop type semantic segmentation dataset of small holder farms, specifically in Ghana and South Sudan. We are also the first to utilize high resolution, high frequency satellite data in segmenting small holder farms.

The dataset includes time series of satellite imagery from Sentinel-1, Sentinel-2, and PlanetScope satellites throughout 2016 and 2017. For each tile/chip in the dataset, there are time series of imagery from each of the satellites, as well as a corresponding label that defines the crop type at each pixel. The label has only one value at each pixel location, and assumes that the crop type remains the same across the full time span of the satellite image time series. In many cases where ground truth was not available, pixels have no label and are set to a value of 0.
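Because unlabeled pixels share the value 0, per-class statistics (and any training loss) have to skip them. A minimal pure-Python sketch, assuming a label chip has already been loaded as a 2-D list of integer crop codes; the codes 1, 2 and 3 below are illustrative only, not the dataset's actual class mapping, and the helper names are hypothetical:

```python
from collections import Counter

UNLABELED = 0  # per the dataset description: pixels without ground truth are 0

def labeled_pixel_counts(label_grid):
    """Count pixels per crop-type code, skipping unlabeled (0) pixels."""
    counts = Counter()
    for row in label_grid:
        for value in row:
            if value != UNLABELED:
                counts[value] += 1
    return counts

def labeled_fraction(label_grid):
    """Fraction of pixels in the chip that carry a crop-type label."""
    total = sum(len(row) for row in label_grid)
    labeled = sum(labeled_pixel_counts(label_grid).values())
    return labeled / total if total else 0.0

# Hypothetical 3x4 label chip.
chip = [
    [0, 0, 1, 1],
    [0, 2, 2, 1],
    [3, 3, 0, 0],
]
print(dict(labeled_pixel_counts(chip)))  # {1: 3, 2: 2, 3: 2}
print(labeled_fraction(chip))            # 7 of 12 pixels are labeled
```

In a deep-learning pipeline the same idea usually shows up as an "ignore" label in the loss, so 0-valued pixels contribute nothing to training.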

Crop type mapping of smallholder farms in Ghana and South Sudan

Semantic Segmentation of Crop Type in Africa: A Novel Dataset and Analysis of Deep Learning Methods, Rose Rustowicz

Rustowicz R., Cheong R., Wang L., Ermon S., Burke M., Lobell D. (2020) "Semantic Segmentation of Crop Type in South Sudan Dataset", Version 1.0, Radiant MLHub. [Date Accessed]

```python
from radiant_mlhub import Dataset

ds = Dataset.fetch('su_african_crops_south_sudan')
for c in ds.collections:
    print(c.id)
```

Python Client quick-start guide

ye gods,

I’m trying to learn my 1st OO lang and chose Ruby. I’ve already got a couple of simple batch processing programs written and functioning, but CommandLine is kicking my butt.

As I read the docs, ‘program -a -b’ can also be called as ‘program -ab’, setting flags a and b in either case. Instead, I get an Unknown option error.
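For comparison, the POSIX-style bundling expected here (`-dv` meaning the same as `-d -v`) is what many option parsers do by default. A small sketch using Python's standard `argparse`, not the Ruby CommandLine gem, just to show the expected behaviour; the `-d`/`-v` flags mirror the ones in the post, and `parse` is a hypothetical helper name:

```python
import argparse

def parse(argv):
    parser = argparse.ArgumentParser(prog="example")
    # Boolean flags take no value, so several can be bundled behind one dash.
    parser.add_argument("-d", "--debug", action="store_true")
    parser.add_argument("-v", "--verbose", action="store_true")
    return parser.parse_args(argv)

separate = parse(["-d", "-v"])
bundled = parse(["-dv"])
print(separate.debug, separate.verbose)  # True True
print(bundled.debug, bundled.verbose)    # True True
```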

I cut this example directly from:

/usr/lib/ruby/gems/1.8/gems/commandline-0.7.10/docs/posted-docs.index.html

```ruby
#!/usr/bin/env ruby
require 'rubygems'
require 'commandline'

class App < CommandLine::Application
  def initialize
    options :help, :debug
  end

  def main
    puts "call your library here"
  end
end #class App
```

Then added :verbose to options (options :help, :debug, :version).

My results:

```
% ruby example.rb -d -v
call your library here
% ruby example.rb -dv
Unknown option '-dv'.
Usage: example.rb
% _
```

I’ve read everything I can find and tried a dozen different permutations on the theme, including :posix instead of :flag. I’m sure I’m overlooking something basic due to newbie myopia.

Any advice?

pb


```
Thread 1 - Prepare to increase book stock
Thread 1 - Book stock increased by 5
Thread 1 - Sleeping
Thread 2 - Prepare to check book stock
Thread 1 - Wake up
Thread 1 - Book stock rolled back
Thread 2 - Book stock is 10
Thread 2 - Sleeping
Thread 2 - Wake up
```

To support the READ_COMMITTED isolation level, the underlying database may acquire an update lock on a row that was updated but not yet committed. Other transactions must then wait to read that row until the update lock is released, which happens when the locking transaction commits or rolls back.
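The blocking behaviour described above can be modelled in a few lines. This is a toy Python simulation, not Spring or a real database: a `threading.Lock` stands in for the row's update lock, and the names `Row`, `begin_update` and `read_committed` are invented for illustration. It replays the scenario from the log: one thread raises the stock from 10 by 5 without committing, another thread tries to read and must wait, then sees only the committed value.

```python
import threading
import time

class Row:
    """Toy row guarded by an update lock (hypothetical model, not a real DB)."""

    def __init__(self, value):
        self.committed = value
        self.pending = None
        self.update_lock = threading.Lock()

    def begin_update(self, delta):
        self.update_lock.acquire()  # writer takes the update lock
        self.pending = self.committed + delta

    def commit(self):
        self.committed = self.pending
        self.pending = None
        self.update_lock.release()

    def rollback(self):
        self.pending = None  # discard the uncommitted change
        self.update_lock.release()

    def read_committed(self):
        with self.update_lock:  # readers wait until the writer finishes
            return self.committed

def demo(commit):
    row = Row(10)
    seen = []
    row.begin_update(5)  # "Thread 1": stock 10 -> 15, not yet committed
    reader = threading.Thread(target=lambda: seen.append(row.read_committed()))
    reader.start()       # "Thread 2": tries to read the row
    time.sleep(0.1)
    blocked = reader.is_alive()  # still waiting on the update lock
    if commit:
        row.commit()
    else:
        row.rollback()
    reader.join()
    return blocked, seen[0]

print(demo(commit=False))  # (True, 10), like "Book stock is 10" in the log
print(demo(commit=True))   # (True, 15)
```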

The REPEATABLE_READ Isolation Level

Now let's restructure the threads to demonstrate another concurrency problem. Swap the tasks of the two threads so that thread 1 checks the book stock before thread 2 increases it.

```java
package com.apress.springenterpriserecipes.bookshop.spring;
...
public class Main {

    public static void main(String[] args) {
        ...
        final BookShop bookShop = (BookShop) context.getBean("bookShop");

        Thread thread1 = new Thread(new Runnable() {
            public void run() {
                bookShop.checkStock("0001");
            }
        }, "Thread 1");

        Thread thread2 = new Thread(new Runnable() {
            public void run() {
                try {
                    bookShop.increaseStock("0001", 5);
                } catch (RuntimeException e) {}
            }
        }, "Thread 2");
```



Today's Mobile Monday post is one that I thought could dispatch a number of birds with a single post...

What do you think of when you hear the word “Champ”? Someone who is the best? A sports champion? How about someone who fights for a cause? Here at Microsoft we have employees called “Developer Evangelists”. Part of their job is to help get the word out about Microsoft products and technologies. The other part of their job is to champion our developers.

Here in Windows Phone we call our evangelists “Phone Champs”. Champs ensure our developers get exactly the help & support they need and are the voice of the developer community. They are all experts on our platform and serve as local resources to answer questions from current or prospective developers. Champs can help you troubleshoot a problem in your app and can help you get your hands on a phone for testing. Oh, and some of our Champs are really funny and can tell you a good joke or two.

So how do you find one of our great Phone Champs? Today I’m happy to announce a new application to help you find them. We originally wanted to call the app “Champ Acquisition and Discovery 1.0 for Workgroups Windows Phone Edition.” Luckily cooler heads prevailed and we’re calling it “Find My Champ”.

...

Get the App

Go download the app’s source code. Compile the code in our dev tools and side-load Find My Champ onto your developer device or into the emulator. The app will also be in the Marketplace soon for you to download. Find your local Phone Champ and get in touch with them. Ask them for help with your app or to let you know about events or hackathons in your area.

We’d love to get your feedback on the Find My Champ application. Tell us what we can do to make it more useful. You can also come help us improve the app on Codeplex. Additionally, I suspect that many of you will have creative ideas for how to use the Champs OData feed in other applications, mashups, etc. Please let me know what you create!

The first thing I thought was cool was that this is backed by an OData feed. This means that if we, the development community, want to present our own view of the data in our own apps, we can do so very easily.

Using the OData feed is very easy. Here's a snap from the must-have LINQPad utility querying the feed.

LINQPad makes it almost too easy to play with this feed. Say I want all the Champs whose last name starts with E, ordered by LastName?

Or who have a MSDN blog and also have a Twitter account?

Okay, OData via LINQ is cool, but what if you want the actual OData URL for this query? Again, LINQPad makes that a single click. Just click "SQL" right above the results:

Then, to maybe finally blow your mind, did you know Notepad can open URLs?
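For reference, an OData URL for the query described above (last name starting with E, ordered by LastName) uses the standard `$filter` and `$orderby` query options. The sketch below builds one in Python; the base URL and the `Champs` entity-set name are placeholders, since the real feed address isn't given here, and the `LastName` property name is assumed from the query description.

```python
from urllib.parse import quote, urlencode

# Placeholder feed address: substitute the real Champs OData endpoint.
FEED_URL = "https://example.com/odata/Champs"

def build_query_url(base_url, filter_expr, order_by):
    """Compose an OData query URL from $filter and $orderby options."""
    params = {"$filter": filter_expr, "$orderby": order_by}
    # quote() encodes spaces as %20; keep OData punctuation readable.
    query = urlencode(params, quote_via=quote, safe="$,'()")
    return f"{base_url}?{query}"

url = build_query_url(FEED_URL, "startswith(LastName,'E') eq true", "LastName")
print(url)
# https://example.com/odata/Champs?$filter=startswith(LastName,'E')%20eq%20true&$orderby=LastName
```

The explicit `eq true` is there because some older (v2) OData services expect the boolean comparison spelled out; it is also valid in later versions.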

So we've got the data; let's check out the app source.

First, when you download the source, you'll need to add one reference, easily done via NuGet.

When you first open the solution you'll see this (if you expand the given items). Note the warning/missing icons:

Fire up NuGet and search for "SilverlightToolkitWP"

Click install.

That's it. You should now be good to go and able to run the app in the Emulator.

When first running the app in the Emulator, the Local list will be this default list. If you want to see the local people for a given location, set the location and then re-run the app (refresh doesn't yet seem to take a change in location into account).

So here I set the location:

Then exit and re-run the app in the Emulator.

Set another location, exit, and re-run the app. You don't need to kill the emulator or the debugging session; just re-run the app in the emulator (if that makes sense?)

The source is pretty readable and easy to spelunk:

Data binding is used as you would expect.

One of the things I thought was key was how "local" is determined. How are your location and the Champs' latitude/longitude values used to decide who is "local"?

```csharp
// Haversine great-circle distance, in kilometres
public static double GetDistance(double fromLatitude, double fromLongitude,
                                 double toLatitude, double toLongitude)
{
    int earthRadius = 6371; // earth's radius (mean) in km
    double chgLat = DegreeToRadians(toLatitude - fromLatitude);
    double chgLong = DegreeToRadians(toLongitude - fromLongitude);
    double a = Math.Sin(chgLat / 2) * Math.Sin(chgLat / 2) +
               Math.Cos(DegreeToRadians(fromLatitude)) * Math.Cos(DegreeToRadians(toLatitude)) *
               Math.Sin(chgLong / 2) * Math.Sin(chgLong / 2);
    double c = 2 * Math.Atan2(Math.Sqrt(a), Math.Sqrt(1 - a));
    double d = earthRadius * c;
    return d;
}

private static double DegreeToRadians(double value)
{
    return Math.PI * value / 180.0;
}
```

```csharp
private void UpdateDistance()
{
    if (GeoData.Status != GeoPositionStatus.Ready)
        return;

    // when position is updated, update distance
    var _FromLat = GeoData.Location.Latitude;
    var _FromLong = GeoData.Location.Longitude;
    var _ToLat = (double)Latitude;
    var _ToLong = (double)Longitude;
    this.DistanceAway = FMCLocation.GetDistance(_FromLat, _FromLong, _ToLat, _ToLong);
}

// this is the same as PropertyChanged("Distance"), just easier to use
public event EventHandler DistanceChanged;

private double m_DistanceAway;
public double DistanceAway
{
    get { return m_DistanceAway; }
    set
    {
        m_DistanceAway = value;
        OnPropertyChanged("DistanceAway");
        OnPropertyChanged("IsLocal");
        if (DistanceChanged != null)
            DistanceChanged(this, EventArgs.Empty);
    }
}

// "local" means strictly more than 0 and less than 250 km away
public bool IsLocal
{
    get { return (DistanceAway > 0 && DistanceAway < 250); }
}
```
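To sanity-check the "local" logic outside the phone app, here is a rough Python port of `GetDistance` and `IsLocal`. It assumes the same 6,371 km mean Earth radius and the same 250 km cutoff as the C# code; the function and constant names are my own, not part of the Find My Champ source.

```python
import math

EARTH_RADIUS_KM = 6371  # mean radius, as in the C# GetDistance
LOCAL_RADIUS_KM = 250   # cutoff used by the C# IsLocal property

def get_distance(from_lat, from_long, to_lat, to_long):
    """Great-circle distance in km via the haversine formula."""
    chg_lat = math.radians(to_lat - from_lat)
    chg_long = math.radians(to_long - from_long)
    a = (math.sin(chg_lat / 2) ** 2
         + math.cos(math.radians(from_lat)) * math.cos(math.radians(to_lat))
         * math.sin(chg_long / 2) ** 2)
    c = 2 * math.atan2(math.sqrt(a), math.sqrt(1 - a))
    return EARTH_RADIUS_KM * c

def is_local(distance_km):
    # Mirrors IsLocal: strictly greater than 0 and less than 250 km.
    return 0 < distance_km < LOCAL_RADIUS_KM

one_degree = get_distance(0.0, 0.0, 1.0, 0.0)
print(round(one_degree, 2))                        # 111.19
print(is_local(one_degree))                        # True
print(is_local(get_distance(0.0, 0.0, 3.0, 0.0)))  # False (about 333.6 km)
print(is_local(0.0))                               # False
```

One consequence worth noticing: because `IsLocal` requires a distance greater than 0, a Champ at exactly your coordinates, or one whose distance has not been computed yet and is still 0, is not counted as local.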

If you're looking for help building your Windows Phone 7 applications, think about contacting your local Champ. That's part of their job, and their desire: to be there to help you. Finding them is now super easy via this app (which, you have to agree, is kind of meta-cool).

Very cool

Great post. I love seeing the LINQPad stuff and I had no idea that Notepad could do that! I will also share that we got our first remix on the Find My Champ app. See the video here:

Excellent!!! Thank you for this.