
e/samples/radioclient/

This is a program for playing around with XML-RPC, SOAP, and the Blogger API using a Radio Userland 8 server. The sample used to be called bloggertest, but I renamed it.

The big readme.txt file is included at the end of this message.

I've spent a lot of time in the last two weeks playing with XML-RPC, Blogger, and SOAP, and I wanted to have a sample that was simpler to modify and experiment with than Simon's textRouter. You should not use radioclient without understanding that you are working on live data and could corrupt your blog, so user beware (see the readme). radioclient starts up the shell automatically and imports xmlrpclib and Mark Pilgrim's blogger module, which I included with the sample. This makes it relatively simple to run additional tests from the shell, so I also included a tests directory of various XML-RPC, SOAP, and Blogger tests I've run so far.

I expect that Dan Shafer, I, and possibly others will have more to say about this sample in the future. I hope we might do some tutorials introducing people to client web services using PythonCard, as well as modify the app so it can interact with other blog sites.

ka

---

readme.txt:

This app is a playground of sorts for testing XML-RPC, the Blogger API, and SOAP using a local Radio Userland 8 server. The interface is designed for easy testing and experimenting in Python, so it is not particularly user friendly. If you know the IP address, username, and password for a remote server, radioclient can also talk to that box if you change the following line in the on_openBackground method:

# username, password, server url
self.blog = RadioBloggerSite('', '', '')

*** A WARNING ***

This is not a commercial tool supported by Userland or connected in any way with the Radio Userland 8 product. It is a development app written by open source developers who use Radio, and it is part of the PythonCard samples designed to help stress-test and show off the PythonCard framework.

Since this app is talking to a live server, you are viewing, adding, editing, and deleting real data. If you are using the default Radio settings, your changes will be upstreamed to the public Radio server as they are made. You should keep backups of your data, templates, and generated pages.

*** YOU HAVE BEEN WARNED ***

WINDOWS ONLY

Radio 8 is only available for Windows and the Macintosh. PythonCard, which relies on wxPython, works on Windows and Linux/GTK, but isn't available on the Mac yet, except by using a Windows emulator or running wxGTK on Mac OS X. So, for the time being, this sample is effectively just for Windows users who also have Radio 8.

RADIO AND THE BLOGGER API

Radio implements the Blogger API as defined at

As of Radio 8.0.2:
  - it doesn't implement getUserInfo
  - getUsersBlogs returns info about the public Radio server, not the local server
  - getTemplate and setTemplate appear to only work with the 'main' template

This test app will be updated as changes are made to the Blogger API in Radio.

Over time, this app might be expanded to include additional tests against other XML-RPC, Blogger API, and SOAP web services. The tests folder contains interactive shell sessions using various web service libraries.

Note that wxPython has a great multi-column list control called wxListCtrl that will eventually be supported in PythonCard. Until then, the columns are being simulated with tabs and/or spaces in a plain List.

WEBLOGS PING

Mark is supposed to just wrap this one-liner into blogger.py, so I'm not going to add it to my own wrapper classes unless he doesn't do that soon.

"Actually, XML-RPC is easier in Python than almost any other language, because the XML-RPC library uses a very cool feature of Python called "dynamic binding" to create a kind of virtual proxy that lets you call remote functions with exactly the same syntax as calling local functions.

import xmlrpclib
remoteServer = xmlrpclib.Server("")
remoteServer.weblogUpdates.ping(SITE_NAME, SITE_URL)

This short example pings weblogs.com to tell it that your weblog has changed. I use this script to connect this Greymatter weblog to the weblogs.com community (since Greymatter has no weblogs.com support built in). But the point here is the syntax of that third line: it's the same syntax as calling a local function. Once the proxy (remoteServer) is set up, the XML-RPC library makes the object act as if it has a weblogUpdates object within it and a ping method within that. It's all a lie, of course; under the covers it's constructing an XML-RPC request and sending it off, and then receiving an XML-RPC response and parsing it and returning a native Python object. But I, as a Python developer, don't have to worry about all that if I don't want to, and I don't have to learn a new syntax for calling remote functions."
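Other dynamic languages can imitate the proxy trick described in the quote. Below is a minimal JavaScript sketch of the idea. It is not part of radioclient and not how xmlrpclib is implemented. A Proxy accumulates the dotted method name, so remoteServer.weblogUpdates.ping(...) resolves to the method weblogUpdates.ping. Calling it returns the XML-RPC methodCall body a client would send. The helper names makeProxy, buildMethodCall, and escapeXml are hypothetical. Only string parameters are encoded, and nothing is sent over the network.

```javascript
// Hypothetical helper: escape the characters that XML treats specially.
function escapeXml(value) {
  return String(value)
    .replace(/&/g, '&amp;')
    .replace(/</g, '&lt;')
    .replace(/>/g, '&gt;');
}

// Hypothetical helper: build an XML-RPC <methodCall> body.
// Only string parameters are encoded in this sketch.
function buildMethodCall(methodName, params) {
  const paramXml = params
    .map(p => `<param><value><string>${escapeXml(p)}</string></value></param>`)
    .join('');
  return '<?xml version="1.0"?>' +
    `<methodCall><methodName>${methodName}</methodName>` +
    `<params>${paramXml}</params></methodCall>`;
}

// Each property access returns a new proxy with a longer dotted name;
// calling the proxy builds the request body for that name.
function makeProxy(methodName = '') {
  const call = (...args) => buildMethodCall(methodName, args);
  return new Proxy(call, {
    get(_target, prop) {
      const part = String(prop);
      return makeProxy(methodName ? `${methodName}.${part}` : part);
    }
  });
}

const remoteServer = makeProxy();
const body = remoteServer.weblogUpdates.ping('My Weblog', 'http://example.com/');
console.log(body);
```

A real client would also POST this body with a Content-Type of text/xml and parse the methodResponse that comes back. That is the part the quote calls "all a lie".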

REFERENCES

Python

win32 extensions

wxPython

windows binaries at:

PythonCard

xmlrpclib (use 0.9.9 if you have Python 2.1.x; it is included as part of Python 2.2)

pyblogger

The Blogger API

Radio Userland 8

emulatingBloggerInRadio

emulatingBloggerInManila

ggerApi

textRouter (a manila/blogger app written in PythonCard by Simon Kittle)

Python.Scripting: Python, XML-RPC and SOAP

Python and XML: An Introduction

_______________________________________________
Pythoncard-users mailing list
Pythoncard-users@[...].net

http://aspn.activestate.com/ASPN/Mail/Message/PythonCard/1017624


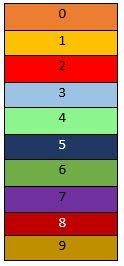

We will create a sample chat application to demonstrate this feature. However, because we are focusing on just user presence, we will not implement the actual chat feature.

If you are building an application that has a user base, you might need to show your users when their friends are currently online. This comes in handy especially in messenger applications where the current user would like to know which of their friends are available for instant messaging.

Here is a screen recording of how we want the application to work:

If you want a tutorial on how to create a messenger application on iOS, check out this article.

To follow along you need the following requirements:

Let’s get started.

Before creating the iOS application, let’s create the backend application in Node.js. This application will have the necessary endpoints the application will need to function properly. To get started, create a new directory for the project.

In the root of the project, create a new package.json file and paste the following contents into it:

{
  "name": "presensesample",
  "version": "1.0.0",
  "main": "index.js",
  "dependencies": {
    "body-parser": "^1.18.3",
    "express": "^4.16.4",
    "pusher": "^2.1.3"
  }
}

Above, we have defined some npm dependencies that the backend application will need to function. Among the dependencies is the pusher library, which is the Pusher JavaScript server SDK.

Next, open your terminal application, cd to the root of the project you just created, and run the following command:

$ npm install

This command will install all the dependencies we defined above in the package.json file.

Next, create a new file called index.js and paste the following code into the file:

// File: ./index.js
const express = require('express');
const bodyParser = require('body-parser');
const Pusher = require('pusher');

const app = express();

// Temporary in-memory store (no database in this sample)
let users = {};
let currentUser = {};

let pusher = new Pusher({
  appId: 'PUSHER_APP_ID',
  key: 'PUSHER_APP_KEY',
  secret: 'PUSHER_APP_SECRET',
  cluster: 'PUSHER_APP_CLUSTER'
});

app.use(bodyParser.json());
app.use(bodyParser.urlencoded({ extended: false }));

// TODO: Add routes here

app.listen(process.env.PORT || 5000);

In the code above, we imported the libraries we need for the backend application. We then declared two variables, users and currentUser, which we will use as a temporary in-memory store for the data, since we are not using a database.

Next, we instantiated the Pusher library using the credentials for our application. We will use this instance to communicate with the Pusher API. Finally, we call the listen method, which starts the Express server on the port given by the PORT environment variable, or on port 5000 if it is not set.

Next, let’s add some routes to the application. In the code above, we added a comment to signify where we will be adding the route definitions. Replace the comment with the following code:

// File: ./index.js

// [...]
app.post('/users', (req, res) => {
  const name = req.body.name;
  const matchedUsers = Object.keys(users).filter(id => users[id].name === name);

  if (matchedUsers.length === 0) {
    const id = generate_random_id();
    users[id] = currentUser = { id, name };
  } else {
    currentUser = users[matchedUsers[0]];
  }

  res.json({ currentUser, users });
});
// [...]

Above, we have the first route. It is a POST route that creates a new user. It first checks the users object to see whether a user with the specified name already exists. If not, it generates a new user ID using the generate_random_id function (we will create this later) and adds the new user to the users object. If a user with that name exists, it skips all of that logic.

Regardless of the outcome of the check, it sets currentUser to the user that was created or matched, and then returns currentUser and the users object as the response.
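The find-or-create logic of this route can be pulled out into a pure function, which makes it easy to exercise without starting Express. The sketch below is illustrative only. findOrCreateUser and the counter-based ID generator are hypothetical stand-ins for the route body and generate_random_id.

```javascript
// Hypothetical pure version of the POST /users logic: return the existing
// user with this name, or create and store a new one.
function findOrCreateUser(users, name, generateId) {
  const matchedIds = Object.keys(users).filter(id => users[id].name === name);
  if (matchedIds.length > 0) {
    return users[matchedIds[0]];
  }
  const id = generateId();
  users[id] = { id, name };
  return users[id];
}

// A deterministic ID generator, used here only so the output is predictable.
let counter = 0;
const nextId = () => `user-${++counter}`;

const store = {};
const alice = findOrCreateUser(store, 'alice', nextId);
const aliceAgain = findOrCreateUser(store, 'alice', nextId);
const bob = findOrCreateUser(store, 'bob', nextId);

console.log(alice.id, aliceAgain.id, bob.id); // user-1 user-1 user-2
```

Returning the user, instead of assigning a module-level currentUser, also highlights a limitation of the tutorial's design. Because there is a single shared currentUser, the auth route authenticates whichever user registered most recently. That works for a one-device demo but not for concurrent clients.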

Next, let’s define the second route. Because we are using presence channels, and presence channels are private channels, we need an endpoint that will authenticate the current user. Below the route above, add the following code:

// File: ./index.js

// [...]
app.post('/pusher/auth/presence', (req, res) => {
  let socketId = req.body.socket_id;
  let channel = req.body.channel_name;
  let presenceData = {
    user_id: currentUser.id,
    user_info: { name: currentUser.name }
  };
  let auth = pusher.authenticate(socketId, channel, presenceData);
  res.send(auth);
});
// [...]

Above, we have the Pusher authentication route. This route reads the socket_id and channel_name sent by the client and uses them to generate an authentication token. We also supply a presenceData object that contains the information about the user we are authenticating. We then return the token to the client as the response.
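pusher.authenticate does the signing for you. The sketch below shows roughly what it returns for a presence channel. It assumes Pusher's documented auth scheme: an HMAC-SHA256, keyed with the app secret, over socket_id:channel_name:channel_data, where channel_data is the JSON-encoded presenceData. presenceAuth is a hypothetical helper for illustration, not part of the pusher package. The socket ID and user values are made up.

```javascript
const crypto = require('crypto');

// Hypothetical helper: build a presence-channel auth payload, assuming
// Pusher's signing scheme of HMAC-SHA256 over
// "socket_id:channel_name:channel_data".
function presenceAuth(key, secret, socketId, channelName, presenceData) {
  const channelData = JSON.stringify(presenceData);
  const stringToSign = `${socketId}:${channelName}:${channelData}`;
  const signature = crypto
    .createHmac('sha256', secret)
    .update(stringToSign)
    .digest('hex');
  return { auth: `${key}:${signature}`, channel_data: channelData };
}

const result = presenceAuth(
  'PUSHER_APP_KEY',
  'PUSHER_APP_SECRET',
  '1234.5678',
  'presence-chat',
  { user_id: 'abcd', user_info: { name: 'alice' } }
);

// "PUSHER_APP_KEY:" followed by a 64-character hex signature
console.log(result.auth);
```

Because the signature covers channel_data, a client cannot change its user_id or user_info without invalidating the token. This is why the presence data is supplied by the server rather than the app.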

Finally, in the first route, we referenced a function called generate_random_id. Below the route we just defined, paste the following code:

// File: ./index.js

// [...]
function generate_random_id() {
  let s4 = () => (((1 + Math.random()) * 0x10000) | 0).toString(16).substring(1);
  return s4() + s4() + '-' + s4() + '-' + s4() + '-' + s4() + s4() + s4();
}
// [...]

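The function above generates a random, UUID-like ID (groups of 8, 4, 4, and 12 hex digits) that we use as the user ID when creating new users. It relies on Math.random, so it is fine for a demo but not where IDs must be unguessable.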
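A quick way to see the format generate_random_id produces is to check it against a pattern. The sketch below repeats the tutorial's function. It also shows crypto.randomUUID, an assumed alternative that is not part of the tutorial. randomUUID is available in Node.js 14.17 and later and returns a standard version 4 UUID from a cryptographically secure source.

```javascript
const crypto = require('crypto');

// The tutorial's generator. Each s4() call yields exactly four hex digits:
// (1 + r) * 0x10000 lies in [0x10000, 0x20000), so its hex form is "1xxxx",
// and substring(1) drops the leading "1".
function generate_random_id() {
  const s4 = () => (((1 + Math.random()) * 0x10000) | 0).toString(16).substring(1);
  return s4() + s4() + '-' + s4() + '-' + s4() + '-' + s4() + s4() + s4();
}

// Assumed alternative (Node.js 14.17+): a version 4 UUID built from a
// cryptographically secure random source.
function generateSecureId() {
  return crypto.randomUUID();
}

const legacyPattern = /^[0-9a-f]{8}-[0-9a-f]{4}-[0-9a-f]{4}-[0-9a-f]{12}$/;
const uuidV4Pattern = /^[0-9a-f]{8}-[0-9a-f]{4}-4[0-9a-f]{3}-[89ab][0-9a-f]{3}-[0-9a-f]{12}$/;

console.log(legacyPattern.test(generate_random_id())); // true
console.log(uuidV4Pattern.test(generateSecureId()));   // true
```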

Let’s add a final default route. This will catch visits to the backend home. In the same file, add the following:

// [...]
app.get('/', (req, res) => res.send('It works!'));
// [...]

With this, we are done with the Node.js backend. You can run your application using the command below:

$ node index.js

Your app will be available here:.

Launch Xcode and create a new Single View App project. We will call ours presensesample.

When you are done creating the application, close Xcode. Open your terminal application, cd to the root directory of the iOS application, and run the following command:

$ pod init

This will create a new Podfile in the root directory of your application. Open the file and replace its contents with the following:

# File: ./Podfile
target 'presensesample' do
  platform :ios, '12.0'
  use_frameworks!

  pod 'Alamofire', '~> 4.7.3'
  pod 'PusherSwift', '~> 5.0'
  pod 'NotificationBannerSwift', '~> 1.7.3'
end

Above, we have defined the application’s dependencies. To install the dependencies, run the following command:

$ pod install

The command above will install all the dependencies in the Podfile and also create a new .xcworkspace file in the root of the project. From now on, open this file in Xcode to launch the project, not the .xcodeproj file.

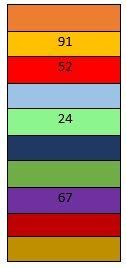

The first thing we will do is create the storyboard scenes we need for the application to work. We want the storyboard to look like this:

Open the main storyboard file and delete all the scenes in it so it is empty. Next, add a view controller to the storyboard.

TIP: You can use the Command + Shift + L shortcut to bring up the Object Library.

With the view controller selected, click on Editor > Embed In > Navigation Controller. This will embed the current view controller in a navigation controller. Next, with the navigation view controller selected, open the attributes inspector and select the Is Initial View Controller option to set the navigation view controller as the entry point for the storyboard.

Next, design the view controller as seen in the screenshot below. Later in the article, we will connect the text field and button to the code using an @IBOutlet and an @IBAction.

Next, add a tab bar controller and connect it to the view controller using a manual segue. Since tab bar controllers come with two regular view controllers, delete them and add two table view controllers instead, as seen below:

When you are done creating the scenes, let’s start adding the necessary code.

Create a new controller class called LoginViewController and set it as the custom class for the first view controller attached to the navigation controller. Paste the following code into the file:

// File: ./presensesample/LoginViewController.swift
import UIKit
import Alamofire
import PusherSwift
import NotificationBannerSwift

class LoginViewController: UIViewController {
    var user: User? = nil
    var users: [User] = []

    @IBOutlet weak var nameTextField: UITextField!

    override func viewWillAppear(_ animated: Bool) {
        super.viewWillAppear(animated)
        user = nil
        users = []
        navigationController?.isNavigationBarHidden = true
    }

    @IBAction func startChattingButtonPressed(_ sender: Any) {
        if nameTextField.text?.isEmpty == false, let name = nameTextField.text {
            registerUser(["name": name.lowercased()]) { successful in
                guard successful else {
                    return StatusBarNotificationBanner(title: "Failed to login.", style: .danger).show()
                }
                self.performSegue(withIdentifier: "showmain", sender: self)
            }
        }
    }

    func registerUser(_ params: [String : String], handler: @escaping(Bool) -> Void) {
        let url = ""
        Alamofire.request(url, method: .post, parameters: params)
            .validate()
            .responseJSON { resp in
                if resp.result.isSuccess,
                    let data = resp.result.value as? [String: Any],
                    let user = data["currentUser"] as? [String: String],
                    let users = data["users"] as? [String: [String: String]],
                    let id = user["id"], let name = user["name"] {
                    // Keep every user except the current one
                    for (uid, user) in users {
                        if let name = user["name"], id != uid {
                            self.users.append(User(id: uid, name: name))
                        }
                    }
                    self.user = User(id: id, name: name)
                    self.nameTextField.text = nil
                    return handler(true)
                }
                handler(false)
            }
    }

    override func prepare(for segue: UIStoryboardSegue, sender: Any?) {
        if let vc = segue.destination as? MainViewController {
            vc.viewControllers?.forEach {
                if let onlineVc = $0 as? OnlineTableViewController {
                    onlineVc.users = self.users
                    onlineVc.user = self.user
                }
            }
        }
    }
}

In the controller above, we have defined the users and user properties, which will hold the available users and the current user once the user is logged in. We also have nameTextField, an @IBOutlet to the text field in the storyboard view controller, so make sure you connect the outlet if you haven't already done so.

In the same controller, we have the startChattingButtonPressed method, which is an @IBAction, so make sure you connect it to the submit button in the storyboard view controller if you have not already done so. In this method, we call the registerUser method to register the user using the API. If the registration is successful, we perform the showmain segue to take the user to the main screen.

The segue between the login view controller and the tab bar controller should have the identifier showmain.

In the registerUser method, we send the name to the API and receive a JSON response. We parse it to see whether the registration was successful.
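For reference, the JSON returned by POST /users contains currentUser and a users object keyed by ID. The Swift code keeps every user except the current one. The JavaScript sketch below mirrors that transformation so it can be tried next to the backend. The sample response and the otherUsers helper are hypothetical.

```javascript
// Hypothetical sample of the JSON that POST /users returns.
const response = {
  currentUser: { id: 'id-2', name: 'bob' },
  users: {
    'id-1': { id: 'id-1', name: 'alice' },
    'id-2': { id: 'id-2', name: 'bob' },
    'id-3': { id: 'id-3', name: 'carol' }
  }
};

// Mirror of the Swift parsing: keep every user except the current one,
// and start everyone as offline until Pusher reports otherwise.
function otherUsers({ currentUser, users }) {
  return Object.entries(users)
    .filter(([uid, user]) => uid !== currentUser.id && typeof user.name === 'string')
    .map(([uid, user]) => ({ id: uid, name: user.name, online: false }));
}

console.log(otherUsers(response).map(u => u.name)); // [ 'alice', 'carol' ]
```

Swift's Dictionary iteration order is unspecified, so the order of users in the app may differ from the order shown here.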

The final method in the class is the prepare method. UIKit calls this method automatically just before a segue is performed. We use it to pass the current user and the list of users to the online users view controller we are about to show.

Next, create a new file called MainViewController and set it as the custom class for the tab bar controller. In the file, paste the following code:

// File: ./presensesample/MainViewController.swift
import UIKit

class MainViewController: UITabBarController {
    override func viewDidLoad() {
        super.viewDidLoad()

        navigationItem.title = "Who's Online"
        navigationItem.hidesBackButton = true
        navigationController?.isNavigationBarHidden = false

        // Logout button
        navigationItem.rightBarButtonItem = UIBarButtonItem(
            title: "Logout",
            style: .plain,
            target: self,
            action: #selector(logoutButtonPressed)
        )
    }

    override func tabBar(_ tabBar: UITabBar, didSelect item: UITabBarItem) {
        navigationItem.title = item.title
    }

    @objc fileprivate func logoutButtonPressed() {
        viewControllers?.forEach {
            if let vc = $0 as? OnlineTableViewController {
                vc.users = []
                vc.pusher.disconnect()
            }
        }

        navigationController?.popViewController(animated: true)
    }
}

In the controller above, we have a few methods defined. The viewDidLoad method sets the title of the controller and configures the navigation controller. We also define a Logout button in this method, which triggers the logoutButtonPressed method.

In the logoutButtonPressed method, we log the user out by clearing the users property of the online users view controller, disconnecting from Pusher, and popping back to the login screen.

Next, create a new controller class named ChatTableViewController. Set this class as the custom class for one of the tab bar controller's child view controllers. Paste the following code into the file:

// File: ./presensesample/ChatTableViewController.swift
import UIKit

class ChatTableViewController: UITableViewController {
    override func viewDidLoad() {
        super.viewDidLoad()
    }

    override func numberOfSections(in tableView: UITableView) -> Int {
        return 0
    }

    override func tableView(_ tableView: UITableView, numberOfRowsInSection section: Int) -> Int {
        return 0
    }
}

The controller above is just a base controller and we do not intend to add any chat logic to this controller.

Create a new controller class called OnlineTableViewController. Set this controller as the custom class for the tab bar controller's second child view controller. Paste the following code into the controller class:

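// File: ./presensesample/OnlineTableViewController.swift
import UIKit
import PusherSwift

struct User {
    let id: String
    var name: String
    var online: Bool = false

    init(id: String, name: String, online: Bool = false) {
        self.id = id
        self.name = name
        self.online = online
    }
}

class OnlineTableViewController: UITableViewController {
    var pusher: Pusher!
    var user: User? = nil
    var users: [User] = []

    override func numberOfSections(in tableView: UITableView) -> Int {
        return 1
    }

    override func tableView(_ tableView: UITableView, numberOfRowsInSection section: Int) -> Int {
        return users.count
    }

    override func tableView(_ tableView: UITableView, cellForRowAt indexPath: IndexPath) -> UITableViewCell {
        let cell = tableView.dequeueReusableCell(withIdentifier: "onlineuser", for: indexPath)
        let user = users[indexPath.row]
        cell.textLabel?.text = "\(user.name) \(user.online ? "[Online]" : "")"
        return cell
    }
}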

In the code above, we first defined a User struct. We will use this to represent the user resource. We already referenced this struct in the controllers we created earlier.

Next, we defined the OnlineTableViewController class, which extends the UITableViewController class. In this class, we override the usual table view data source methods to provide the table with data.

You have to set the cell reuse identifier of this table to onlineuser in the storyboard.

Above, we also defined some properties:

pusher - this will hold the Pusher SDK instance that we will use to subscribe to Pusher Channels.
users - this will hold an array of User structs.
user - this is the User struct of the current user.

Next, in the same class, add the following method:

// File: ./presensesample/OnlineTableViewController.swift

// [...]
override func viewDidLoad() {
    super.viewDidLoad()
    tableView.allowsSelection = false

    // Create the Pusher instance...
    pusher = Pusher(
        key: "PUSHER_APP_KEY",
        options: PusherClientOptions(
            authMethod: .endpoint(authEndpoint: ""),
            host: .cluster("PUSHER_APP_CLUSTER")
        )
    )

    // Subscribe to a presence channel...
    let channel = pusher.subscribeToPresenceChannel(
        channelName: "presence-chat",
        onMemberAdded: { member in
            if let info = member.userInfo as? [String: String], let name = info["name"] {
                if let index = self.users.firstIndex(where: { $0.id == member.userId }) {
                    let userModel = self.users[index]
                    self.users[index] = User(id: userModel.id, name: userModel.name, online: true)
                } else {
                    self.users.append(User(id: member.userId, name: name, online: true))
                }
                self.tableView.reloadData()
            }
        },
        onMemberRemoved: { member in
            if let index = self.users.firstIndex(where: { $0.id == member.userId }) {
                let userModel = self.users[index]
                self.users[index] = User(id: userModel.id, name: userModel.name, online: false)
                self.tableView.reloadData()
            }
        }
    )

    // Bind to the subscription succeeded event...
    channel.bind(eventName: "pusher:subscription_succeeded") { data in
        guard let deets = data as? [String: AnyObject],
            let presence = deets["presence"] as? [String: AnyObject],
            let ids = presence["ids"] as? NSArray else { return }

        for userid in ids {
            // Skip IDs that aren't strings instead of abandoning the whole update
            guard let uid = userid as? String else { continue }
            if let index = self.users.firstIndex(where: { $0.id == uid }) {
                let userModel = self.users[index]
                self.users[index] = User(id: uid, name: userModel.name, online: true)
            }
        }
        self.tableView.reloadData()
    }

    // Connect to Pusher
    pusher.connect()
}
// [...]

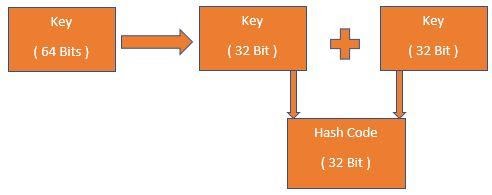

In the viewDidLoad method above, we do several things. First, we create the Pusher instance. In the options, we specify the authorization endpoint, which should point to the /pusher/auth/presence route of the backend we created earlier.

The next thing we do is subscribe to a presence channel called presence-chat. When working with presence channels, the channel name must be prefixed with presence-. The subscribeToPresenceChannel method has two callbacks that we can add logic to:

onMemberAdded - this callback is called when a new user joins the presence-chat channel. In it, we look up the user that was added and mark them as online in the users array, appending them if they are not already there.

onMemberRemoved - this callback is called when a user leaves the presence-chat channel. In it, we look up the user that left the channel and mark them as offline.

Next, we bind to the pusher:subscription_succeeded event. This event fires when the client has successfully subscribed to the channel, and its payload lists the IDs of all the members currently subscribed. In the callback, we use this list to mark those users as online in the application.

Finally, we use the connect method on the pusher instance to connect to Pusher.
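The three callbacks boil down to small state transitions on the users array. The JavaScript sketch below mirrors them as pure functions, so the behavior can be checked without a device. The function names and sample data are hypothetical. The subscription_succeeded payload is assumed to have the presence.ids shape that the Swift code reads.

```javascript
// Hypothetical mirror of onMemberAdded: mark a known user online, or append
// a new one. Like the Swift code, it does nothing if the member has no name.
function memberAdded(users, member) {
  const name = member.userInfo && member.userInfo.name;
  if (typeof name !== 'string') {
    return users;
  }
  const index = users.findIndex(u => u.id === member.userId);
  if (index === -1) {
    return [...users, { id: member.userId, name, online: true }];
  }
  return users.map((u, i) => (i === index ? { ...u, online: true } : u));
}

// Mirror of onMemberRemoved: mark the user offline if we know them.
function memberRemoved(users, member) {
  return users.map(u => (u.id === member.userId ? { ...u, online: false } : u));
}

// Mirror of the pusher:subscription_succeeded handler: every ID in
// presence.ids is already online; unknown IDs are ignored.
function subscriptionSucceeded(users, data) {
  const ids = new Set(data.presence.ids);
  return users.map(u => (ids.has(u.id) ? { ...u, online: true } : u));
}

let users = [
  { id: 'id-1', name: 'alice', online: false },
  { id: 'id-3', name: 'carol', online: false }
];
users = subscriptionSucceeded(users, { presence: { ids: ['id-1', 'id-2'] } });
users = memberAdded(users, { userId: 'id-4', userInfo: { name: 'dave' } });
users = memberRemoved(users, { userId: 'id-1' });

console.log(users.map(u => (u.online ? `${u.name} [Online]` : u.name)));
// [ 'alice', 'carol', 'dave [Online]' ]
```

Returning new arrays instead of mutating in place is a choice made for this sketch. It keeps each transition testable on its own, whereas the Swift code updates self.users directly and then reloads the table.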

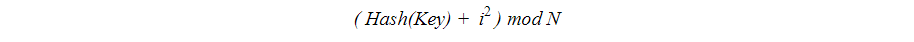

One last thing we need to do before we are done with the iOS application is to allow it to load data from arbitrary URLs. By default, App Transport Security in iOS blocks this, and rightly so. However, since we are going to be testing against a local server over plain HTTP, we need to turn it off temporarily. Open the info.plist file and update it as seen below:

Now, our app is ready. You can run the application and you should see the online presence status of other users when they log in.

In this tutorial, we learned how to use presence channels in your iOS application using Pusher Channels.

The source code for the application created in this tutorial is available on GitHub.


Originally published by Neo Ighodaro at

https://morioh.com/p/8f75c2412b81

Hi Christoph,

On 19 Sep 2007, at 04:36, Christoph Lameter wrote:
> Currently there is a strong tendency to avoid larger page allocations in the kernel because of past fragmentation issues and the current defragmentation methods are still evolving. It is not clear to what extend they can provide reliable allocations for higher order pages (plus the definition of "reliable" seems to be in the eye of the beholder).
>
> Currently we use vmalloc allocations in many locations to provide a safe way to allocate larger arrays. That is due to the danger of higher order allocations failing. Virtual Compound pages allow the use of regular page allocator allocations that will fall back only if there is an actual problem with acquiring a higher order page.

I like this a lot. It will get rid of all the silly games we have to play when needing both large allocations and efficient allocations where possible. In NTFS I can then just allocate higher order pages instead of having to mess about with the allocation size and allocating a single page if the requested size is <= PAGE_SIZE or using vmalloc() if the size is bigger. And it will make it faster because a lot of the time a higher order page allocation will succeed with your patchset without resorting to vmalloc() so that will be a lot faster.

So where I currently have in fs/ntfs/malloc.h the below mess, I could get rid of it completely and just use the normal page allocator/deallocator instead...

static inline void *__ntfs_malloc(unsigned long size, gfp_t gfp_mask)
{
	if (likely(size <= PAGE_SIZE)) {
		BUG_ON(!size);
		/* kmalloc() has per-CPU caches so is faster for now. */
		return kmalloc(PAGE_SIZE, gfp_mask & ~__GFP_HIGHMEM);
		/* return (void *)__get_free_page(gfp_mask); */
	}
	if (likely(size >> PAGE_SHIFT < num_physpages))
		return __vmalloc(size, gfp_mask, PAGE_KERNEL);
	return NULL;
}

And other places in the kernel can make use of the same. I think XFS does very similar things to NTFS in terms of larger allocations at least and there are probably more places I don't know about off the top of my head...

I am looking forward to your patchset going into mainline. (-:

Best regards,

Anton

> Advantages:
>
> - If higher order allocations are failing then virtual compound pages consisting of a series of order-0 pages can stand in for those allocations.
>
> - "Reliability" as long as the vmalloc layer can provide virtual mappings.
>
> - Ability to reduce the use of vmalloc layer significantly by using physically contiguous memory instead of virtual contiguous memory. Most uses of vmalloc() can be converted to page allocator calls.
>
> - The use of physically contiguous memory instead of vmalloc may allow the use larger TLB entries thus reducing TLB pressure. Also reduces the need for page table walks.
>
> Disadvantages:
>
> - In order to use fall back the logic accessing the memory must be aware that the memory could be backed by a virtual mapping and take precautions. virt_to_page() and page_address() may not work and vmalloc_to_page() and vmalloc_address() (introduced through this patch set) may have to be called.
>
> - Virtual mappings are less efficient than physical mappings. Performance will drop once virtual fall back occurs.
>
> - Virtual mappings have more memory overhead. vm_area control structures page tables, page arrays etc need to be allocated and managed to provide virtual mappings.
>
> The patchset provides this functionality in stages. Stage 1 introduces the basic fall back mechanism necessary to replace vmalloc allocations with
>
> alloc_page(GFP_VFALLBACK, order, ....)
>
> which signifies to the page allocator that a higher order is to be found but a virtual mapping may stand in if there is an issue with fragmentation.
>
> Stage 1 functionality does not allow allocation and freeing of virtual mappings from interrupt contexts.
>
> The stage 1 series ends with the conversion of a few key uses of vmalloc in the VM to alloc_pages() for the allocation of sparsemems memmap table and the wait table in each zone. Other uses of vmalloc could be converted in the same way.
>
>
> Stage 2 functionality enhances the fallback even more allowing allocation and frees in interrupt context.
>
> SLUB is then modified to use the virtual mappings for slab caches that are marked with SLAB_VFALLBACK. If a slab cache is marked this way then we drop all the restraints regarding page order and allocate good large memory areas that fit lots of objects so that we rarely have to use the slow paths.
>
> Two slab caches--the dentry cache and the buffer_heads--are then flagged that way. Others could be converted in the same way.
>
> The patch set also provides a debugging aid through setting
>
> CONFIG_VFALLBACK_ALWAYS
>
> If set then all GFP_VFALLBACK allocations fall back to the virtual mappings. This is useful for verification tests. The test of this patch set was done by enabling that options and compiling a kernel.
>
>
> Stage 3 functionality could be the adding of support for the large buffer size patchset. Not done yet and not sure if it would be useful to do.
>
> Much of this patchset may only be needed for special cases in which the existing defragmentation methods fail for some reason. It may be better to have the system operate without such a safety net and make sure that the page allocator can return large orders in a reliable way.
>
> The initial idea for this patchset came from Nick Piggin's fsblock and from his arguments about reliability and guarantees. Since his fsblock uses the virtual mappings I think it is legitimate to generalize the use of virtual mappings to support higher order allocations in this way. The application of these ideas to the large block size patchset etc are straightforward. If wanted I can base the next rev of the largebuffer patchset on this one and implement fallback.
>
> Contrary to Nick, I still doubt that any of this provides a "guarantee". Have said that I have to deal with various failure scenarios in the VM daily and I'd certainly like to see it work in a more reliable manner.
>
> IMHO getting rid of the various workarounds to deal with the small 4k pages and avoiding additional layers that group these pages in subsystem specific ways is something that can simplify the kernel and make the kernel more reliable overall.
>
> If people feel that a virtual fall back is needed then so be it. Maybe we can shed our security blanket later when the approaches to deal with fragmentation have matured.
>
> The patch set is also available via git from the largeblock git tree via
>
> git pull git://git.kernel.org/pub/scm/linux/kernel/git/christoph/largeblocksize.git vcompound
>
> --
> -
> To unsubscribe from this list: send the line "unsubscribe linux-kernel" in
> the body of a message to majordomo@vger.kernel.org
> More majordomo info at
> Please read the FAQ at

https://lkml.org/lkml/2007/9/19/31

I am using 2 sortable objects and 1 droppable object. The 2 sortables are connected using the "connectWith" property.

to see an image example.

The idea is that I will drag objects from the bottom sortable to the upper sortable or to the droppable object.

The problem is that when I am dragging an object from the bottom sortable to the draggable object

I have this in my controller:

def selectmodels
  @brand = Brand.find_by_name(params[:brand_name])
  @models = Model.where(:brand_id => @brand.id)
  render json: @models
end

The returned code is processed by:

$.ajax({
  url: '/selectmodels?brand_name=' + test,
  type: 'get',
  dataType: 'json',

synchronized void drop(Board board) {
    int[][] a = getArray();
    int[][] b = board.getArray();
    // I don't have currentObject here... what do I need to write?
    if (Board.goDown(currentX, currentY, b, a, board, currentObject)) {
        currentY++;
        updateXY();
    }
}

The method call is curren

I am trying to implement an autocomplete combobox using the jQuery UI plugin. With the code below I am able to get the autocomplete part working but not the dropdown part (because of an uncaught TypeError, the dropdown arrow is not visible).

$.widget( "ui.combobox", {
  _create: function() {
    var input,
      self = this,

I am working on a little learning project and have run into an issue that I can't work out. I get the following error message in Google Chrome's dev console:

Uncaught TypeError: Object [object Object] has no method 'match'
  lexer.next   handlebars-1.0.0.beta.6.js:364
  lex          handlebars-1.0.0.beta.6.js:392
  lex          handlebars-1.0.0.beta.6.js:214
  parse        handlebars-1.

I have this kind of JSON:

{
  "1":  {"id": 1,  "first": "mymethod", "second": [true, true, false]},
  "4":  {"id": 2,  "first": "foo",      "second": [true, true, false]},
  "67": {"id": 67, "first": "bar",      "second": [true, true, false]},
  "70": {"id": 70, "first": "foobar",   "second": [true, true, false]}
}

I am trying to parse it using Gson

I'm rewriting a JavaScript library that I wrote some time ago. Its purpose is to display an array of objects as a table that can be sorted, filtered and edited without server communication.

The current solution "pollutes" the objects with additional attributes that are needed to steer the display. The original object may look like this

{"name":"...","lastn

I am having big trouble fixing this error.

I have a view in Backbone.js and I want to bind some actions on it with keyboard events.

Here is my view:

window.PicturesView = Backbone.View.extend({
  initialize : function() {
    $(document).on('keydown', this.keyboard, this);
  },
  remove : function() {
    $(document

I'm writing code using ExtJS 4.0.1 with the MVC architecture, and while developing the main form I ran into a problem with a search extension for the web site.

When I tried to create a new widget in the controller, I needed to render the result in a subpanel, and when I wrote sample code I got the following error:

**Uncaught TypeError: Object [object Object] has no method 'setSortState'**

I have an object, and I am using PHP's session to persist the object's state. Here is basically what I'm doing:

I have "object A", which contains one "object B". In the constructor for A, I am simply grabbing B from the session and setting "object B" equal to its appropriate value in the session.

I then proceed to call some of object B's functions, but I have a feeling | http://bighow.org/tags/Object/1 | CC-MAIN-2017-04 | refinedweb | 543 | 64.41 |

SSHim is a library for testing and debugging SSH automation clients. The aim is to provide a scriptable SSH server which can be made to behave like any SSH-enabled device.

Currently SSHim does just enough to fire up an SSH server using Paramiko and read and write values. Eventually it could be expanded to support additional channel types, scripted tunneling and more.

To install from pypi:

pip install sshim

To install as an egg-link in development mode:

python setup.py develop -N

To run from the folder direct:

PYTHONPATH=. python examples/counter.py

Or to run the tests:

nosetests -x -s -w tests/

Simple Example:

import logging
logging.basicConfig(level='DEBUG')
logger = logging.getLogger()

import sshim, re

# define the callback function which will be triggered when a new SSH connection is made:
def hello_world(script):
    # ask the SSH client to enter a name
    script.write('Please enter your name: ')

    # match their input against a regular expression which will store the name in a capturing group called name
    groups = script.expect(re.compile('(?P<name>.*)')).groupdict()

    # log on the server-side that the user has connected
    # (a single dict argument is used for %(name)s-style formatting; logging does not accept arbitrary keyword arguments)
    logger.info('%(name)s just connected', groups)

    # send a message back to the SSH client greeting it by name
    script.writeline('Hello %(name)s!' % groups)

# create a server and pass in the callback method
# connect to it using `ssh localhost -p 3000`
server = sshim.Server(hello_world, port=3000)
try:
    server.run()
except KeyboardInterrupt:
    server.stop()

Because SSHim uses Python to script the SSH server, complicated emulated interfaces can be created using branching, stored state and looping, e.g.:

import logging
logging.basicConfig(level='DEBUG')

import sshim, time, re

# define the callback function which will be triggered when a new SSH connection is made:
def counter(script):
    while True:
        for n in range(0, 10):
            # send the numbers 0 to 9 to the client with a little pause between each one for dramatic effect:
            script.writeline(str(n))
            time.sleep(0.1)

        # ask them if they are interested in seeing the show again
        script.write('Again? (y/n): ')

        # parse their input with a regular expression and pull it into a named group
        groups = script.expect(re.compile('(?P<again>[yn])')).groupdict()

        # if they didn't say yes, break out of the loop and disconnect them
        if groups['again'] != 'y':
            break

# create a server and pass in the callback method
# connect to it using `ssh localhost -p 3000`
server = sshim.Server(counter, port=3000)
try:
    server.run()
except KeyboardInterrupt:
    server.stop()

Synchronously start the server in the current thread, blocking indefinitely.

Stop the server, waiting for the runloop to exit.

Expect a line of input from the user. If the argument has a match method, that method is called on the input and its result is returned; otherwise the input is compared using the equality operator, ==. Notably, if a compiled regular expression is passed in, its match method will be called and the match object returned. This allows you to use matching groups to pull out interesting data and operate on it.

If echo is set to False, the server will not echo the input back to the client.

Send raw encoded bytes to the client.

Send unicode to the client.

Send unicode to the client and append a carriage return and newline. | http://pythonhosted.org/sshim/ | CC-MAIN-2016-44 | refinedweb | 540 | 65.52 |

Sapling model updates.

#!/usr/bin/perl -w

package LoaderUtils;

use strict;
use Tracer;
use SeedUtils;
; }

=head3 RolesForLoading

    my ($roles, $errors) = RolesForLoading($function);

Split a functional assignment into roles. If the functional assignment seems suspicious, it will be flagged as invalid. A count will be returned of the number of roles that are rejected because they are too long.

=over 4

=item function

Functional assignment to parse.

=item RETURN

Returns a two-element list. The first is either a reference to a list of roles, or an undefined value (indicating a suspicious functional assignment). The second is the number of roles that are rejected for being too long.

=back

=cut

sub RolesForLoading {
    # Get the parameters.
    my ($function) = @_;
    # Declare the return variables.
    my ($roles, $errors) = (undef, 0);
    # Only proceed if there are no suspicious elements in the functional assignment.
    if (! ($function =~ /\b(?:similarit|blast\b|fasta|identity)|%|E=/i)) {
        # Initialize the return list.
        $roles = [];
        # Split the function into roles.
        my @roles = roles_of_function($function);
        # Keep only the good roles.
        for my $role (@roles) {
            if (length($role) > 250) {
                $errors++;
            } else {
                push @$roles, $role;
            }
        }
    }
    # Return the results.
    return ($roles, $errors);
}

1; | http://biocvs.mcs.anl.gov/viewcvs.cgi/Sprout/LoaderUtils.pm?revision=1.2&view=markup&pathrev=mgrast_release_3_1_0 | CC-MAIN-2019-43 | refinedweb | 191 | 60.21 |

NAME

getunwind - copy the unwind data to caller's buffer

SYNOPSIS

#include <syscall.h>
#include <linux/unwind.h>

long getunwind(void *buf, size_t buf_size);

Note: There is no glibc wrapper for this system call; see NOTES.

DESCRIPTION

Note: this function is obsolete..

RETURN VALUE

On success, getunwind() returns the size of the unwind data. On error, -1 is returned and errno is set to indicate the error.

ERRORS

getunwind() fails with the error EFAULT if the unwind info can't be stored in the space specified by buf.

VERSIONS

This system call is available since Linux 2.4.

CONFORMING TO

This system call is Linux-specific, and is available only on the IA-64 architecture.

NOTES

COLOPHON

This page is part of release 5.10 of the Linux man-pages project. A description of the project, information about reporting bugs, and the latest version of this page, can be found at. | https://manpages.debian.org/testing/manpages-dev/getunwind.2.en.html | CC-MAIN-2021-49 | refinedweb | 154 | 67.86 |

Interactive Child's Mobile

Introduction: Interactive Child's Mobile

- ... 3/8" diameter and 4 feet of 1/2" diameter)

- Fishing line (approximately 5 yards)

- Light-colored thread (approximately 5 yards)

- Hot glue gun (1) and hot glue sticks (1-2)

- Stuffed toy (1) (one which had circuitry in it and therefore has easy access to the insides is convenient)

- Electronics

- Arduino UNOs (2)

- LEDs** (3)

- FTDI Breakout board (1)***

- USB cables, A to B plugs (2)

- XBee radios (2)

- XBee breakout boards with headers (2) ****

- Mini Servo motors (2)

- A-B USB cable (1)

- 1-axis accelerometer (1)

- Three-terminal switches (3)

- Push buttons (2)

- Flex sensors (2)

- Resistors (2x10k and 4x2.2k)

- Blacklight flashlights with screw-off heads (2)

- Solid-core wire (a lot*****)

- Electrical tape (approximately 1 yard)

- Soldering iron (1) and solder (approximately 1 yard)

- Battery packs and batteries (we used one of three AAAs, one of three AAs, and two of two AAs, but some of this is flexible)

* We used 12" x 24" sheets from inventables.com. Depending on the sizes and shapes of your parts, you might be able to use 12"x8" sheets, which are the next-smallest size. Also, the blue fluorescent acrylic from Inventables doesn't fluoresce (as of March, 2012).

** We used lilypad LEDs because we had them in a color we liked (orange-yellow). We don't recommend using lilypad LEDs instead of regular ones, though.

*** One of these will make your life much easier if your XBee radios aren't already configured to talk to each other. Don't assume they will.

**** XBee radios have non-standard (2 mm) pin spacing, so you will want a workaround such as the breakout boards listed above. Alternatively, see digikey.com.

***** We used about a dozen wires of approximately 4 yards in length to suspend switches from the ceiling for easier access. Securing the blacklights and the servos to the dowels also required a few yards of wire. Other than that, most of the wiring could be done with a yard or two of wire.

Step 1: Design Parts

The acrylic for this project is cut using a laser cutter. We designed our parts in Solidworks, but feel free to use whatever CAD software you prefer. All of our parts were between 3 and 6 inches on a side, with most of them being approximately 4" square. For the theme of our baby mobile, we designed two flowers, two butterflies, and one rainbow.

The sides of the acrylic fluoresce more than the faces do. To show this off (and to mix up the colors) we cut holes and inset parts for all of the acrylic shapes. To facilitate mixing and matching of these inset parts, we cut one of each of the flowers and the butterflies out of each color of acrylic. We also cut the corresponding arc of the rainbow out of each color of acrylic (with pink being the largest and green being the smallest). This gave us 20 parts, enough for two mobiles with 10 parts each. We also cut small (1/16") holes where we wanted to attach the parts to each other to facilitate the assembly process later.

Assemble the parts onto cutsheets (according to the format for the laser cutter you use), putting the parts as close together as you can given the tolerance of your laser cutter. Be aware that parts placed too close to the edge of the sheet may get cut off. Then cut the parts (or get someone to cut them for you).

The images for this step show the Solidworks parts for all the components we designed.

Step 2: Assemble Parts

Decide what color combinations you like best and lay out how you would like to assemble your parts. We found that the orange and yellow acrylics looked similar, so we preferred to accent the pink and green with the orange and yellow, and vice versa. We initially intended to use only fishing line to secure parts to each other but found that thread was easier to work with and allowed spinning parts more movement. However, for parts requiring structure, fishing line worked very well to hold subparts in a particular configuration. For our mobile, we used thread to hold together the two butterflies and the flowers. We used fishing line to hold together the tulips and the rainbows, as these looked significantly better with more structure.

All subparts were secured to each other using square knots through corresponding holes. (See step 1 again and look at the alignment of the holes in the parts, if you're interested.) The spacing was intended to match that in the parts, though of course getting this exact in practice was difficult.

Step 3: Test Components

If you have not already, download the arduino software from. You will need this for programming and testing components. Plug one of the arduinos into your computer and run one of the demos (e.g. Blink) to confirm your arduino is working; do the same with the other arduino. Now you're ready to test the other components.

The bend sensors act as variable resistors, but the arduino's analog inputs read voltages, so each sensor needs to be wired as a voltage divider: solder a 10k resistor between one of its sides and ground. In fact, using the sensor will be a lot easier if you solder leads to each of the pins. Make sure to insulate the connections with heat shrink tubing or electrical tape. Note also that the joint of the bend sensor can wear through and break, so reinforcing it with tape is a good idea. The resistor, as mentioned previously, will go to ground; the same pin to which the resistor is soldered will go to the analog input; and the other pin will go to power (5V). Plug the bend sensor into the arduino in this configuration, with the input to pin A0. Then configure the arduino to print out the analog input values. If you aren't familiar with arduino software, use the code below. See what kinds of values you get when you bend your flex sensor versus when the sensor is straight.

int raw = 0; // Latest reading from the analog input

void setup()
{
  Serial.begin(9600);      // Set up serial
  pinMode(13, OUTPUT);     // Pin 13 drives the built-in LED
  digitalWrite(13, HIGH);  // Indicates that the program has initialized
}

void loop()
{
  raw = analogRead(A0);    // Read the input pin
  Serial.println(raw);     // Print the input value
  delay(10);               // Keep the output from scrolling too quickly
}
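To get a feel for the numbers this sketch prints, here is a small desk calculation in plain C++ (not Arduino code) of the voltage divider formed by the flex sensor and the 10k resistor to ground. It assumes the Uno's default 5V reference and 10-bit ADC, so readings run from 0 to 1023. The helper name expectedReading is hypothetical, and the sensor resistances in the usage note are made-up examples. Measure your own sensor with a multimeter.

```cpp
#include <cassert>
#include <cmath>

// Expected analogRead() value for the wiring described above:
// 5V -> flex sensor -> analog pin -> 10k resistor -> ground.
// The pin sees Vout = Vref * R_fixed / (R_fixed + R_flex), and the
// 10-bit ADC maps 0..Vref onto 0..1023.
int expectedReading(double rFlexOhms, double rFixedOhms = 10000.0) {
    assert(rFlexOhms >= 0.0 && rFixedOhms > 0.0);
    double fraction = rFixedOhms / (rFixedOhms + rFlexOhms);
    return static_cast<int>(std::lround(fraction * 1023.0));
}
```

Suppose a hypothetical sensor measures about 30k when straight and 60k when bent. The reading would then drop from roughly 256 to roughly 146. A sensor whose resistance rises as it bends therefore gives lower readings when bent. That is the direction the toy sketch later relies on when it treats a reading below three quarters of the resting value as "bent".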

In the next step, you will hack the servos for continuous motion. For now, though, it's a good idea to make sure they work in the first place. The orange wire is signal, red is power (5V), and brown is ground; hook a servo up to the arduino in this manner. Run one of the Servo library examples (such as Sweep) to make sure your servo behaves as expected. You will need long leads on the servos (at least 1 yard, depending on how long the fishing line suspending the mobile will be), so now would be a good time to attach those by sliding them into the corresponding pins on the servo. We found that braiding the wires together helped to keep them consolidated and ensured we knew which wires went to which component.

Solder leads to the power, ground, and signal pins of the accelerometer. Hook the accelerometer up to the arduino, with the input going to one of the analog pins (e.g. A0). Print the raw values as you did for the bend sensors. Try tapping the accelerometer and see what kinds of changes in the values you get. We found that giving the accelerometer a good shake tended to make our input numbers go from three digits to four digits.

Screw the heads off the two flashlights. The spring in the center of the head corresponds to power and the metal threads around the outside correspond to ground. Carefully solder a long power lead to the spring and a long ground lead to the threads. (You will need at least 1.5 yards of each wire to get from the end of the mobile to the arduino.) Plug the ground wire into a ground pin and the power wire into one of the digital pins. When you set the pin to high, the light should turn on, and when you set the pin to low, the light should turn off.

Solder long leads to each end of each of the LEDs, making note of which lead goes to ground and which carries the signal. Test each LED like you did each flashlight--insert the ground wire into a ground pin and the signal wire into a digital pin, then ensure the LED turns on when you set the pin high and off when you set the pin low.

All of your electrical components should now be working, and with the exception of hacking the servos and programming the XBees, they should be ready to use!

Step 4: Hack Servos

For this project the servos need to rotate continuously in one direction, which means hacking them. The first step is to remove the hard stop attached to one of the gears. Remove the screws from the casing and lift off the plastic top to expose the gears. Take careful note of the order in which the gears are placed so that you can put them back in the same order! The bottom pin has a raised plastic piece which prevents the servo from rotating more than 180 degrees in either direction. Carefully cut off this stop; small wire cutters with an angled tip work well for this. Then put all the gears back, screw the top back on, and attach a plastic horn. You should be able to twist the horn continuously in either direction. If you can't, take the horn and cover back off and make sure you removed the entire stop.

The potentiometer (pot) inside the servo tells the servo what position it is in. To allow continuous motion, we want the servo to think that the pot is in the middle (90 degrees) at all times, so that any commanded angle other than 90 degrees will cause it to rotate. The pot has a range of 5k, so you can replace the pot with two 2.2k resistors (don't ask us about the math--this was recommended to us). The pot is the cylindrical device inside the body which has a shaft attached to it. Snip the wires holding the pot so you can extract the pot from the casing. Solder a 2.2k resistor between the white wire (signal) and the black wire (ground) and another 2.2k resistor between the white wire and the red wire (power). Use electrical tape or heat shrink tubing to insulate the connections. Then (very carefully!) put the pot and shaft back in place and make sure all the components fit back into the body of the motor. Once everything is back in place, screw the casing back on.
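The resistor swap works because two equal resistors in series hold their junction at half the supply voltage, which is what the servo's electronics would see from a centered pot. The sketch below is a simplified C++ model, not servo firmware. It assumes that the feedback fraction maps linearly onto 0 to 180 degrees and that the servo simply turns toward the commanded angle. The function names are hypothetical, and which physical direction counts as "+1" depends on your servo.

```cpp
#include <cassert>

// Fraction of the supply voltage at the junction of two series resistors
// (rTop goes to power, rBottom goes to ground).
double dividerFraction(double rTopOhms, double rBottomOhms) {
    assert(rTopOhms > 0.0 && rBottomOhms > 0.0);
    return rBottomOhms / (rTopOhms + rBottomOhms);
}

// Simplified model of a servo whose pot has been replaced by a fixed divider.
// The feedback always reports the same angle, so the servo keeps turning
// toward the commanded angle and never gets there.
// Returns +1 or -1 for the turning direction, or 0 when it holds still.
int rotationDirection(int commandedDegrees, double feedbackFraction) {
    double measuredDegrees = feedbackFraction * 180.0;
    double error = commandedDegrees - measuredDegrees;
    if (error > 0.5) return 1;
    if (error < -0.5) return -1;
    return 0;
}
```

In this model, writing 90 to the hacked servo stops it, and writing 0 or 180 spins it in opposite directions. The mobile sketch writes 0.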

Step 5: Program XBees

If you know your XBee radios are properly configured to talk to each other, skip this step.

Configuring XBees is not exactly the most straightforward of processes. You will need two of them, and they should be the same model; we used two Series 1 (S1) radios. Go ahead and download the XCTU software from beneath the tab named Diagnostics, Utilities, & MIBs. While that is installing, take the FTDI breakout board and solder leads onto Power (3.3 V), Ground, TX & RX (possibly labeled DOUT & DIN).

General rules for XBee's:

1) Only power the radio with 3.3 V, or else you'll fry it. Your FTDI board and your Arduino UNOs should both have 3.3V rails.

2) A wire that goes to TX on one end goes to RX on the other.

3) Your XBees should only ever need 4 wires connected.

Hook up one of your XBee's to the FTDI board and plug it in to your computer. Then start the XCTU software (as a general rule, plug it in first, then start XCTU).

Hit the Test/Query button towards the right, and if any on-screen instructions pop up, follow them. You should receive verification that you have some kind of XBee. Head over to the Modem Configuration tab, click Read, check 'Always Update Firmware', then hit Write. Do this for both XBees and confirm that they are configured with identical firmware; they should also be the same model number, though their serial numbers will differ. Next, hit Read, uncheck 'Always Update Firmware', and make sure that you set the baud rate to 9600 (under Serial Interfacing; Interface Data Rate). If you want to avoid interference from your neighbor's XBee tinkering, set a PAN ID and channel other than the defaults. Give both radios the same PAN ID and the same channel, or they will not be able to talk to each other. To test that they can communicate, plug one radio into an Arduino, hook it up to the computer and run the Arduino software. Open the Serial Monitor (Ctrl+Shift+M) and go back to the Terminal tab in XCTU. Anything you type in the Terminal should appear magically in the Arduino's Serial Monitor.

At this point, if it doesn't work, check the three general rules for XBees above (especially #2: the wire from TX on the arduino goes to DIN on the XBee). If it still doesn't work, especially if you have a different model of XBee than we did, use the power of Google to find configuration tutorials. XCTU should have all the power you need to configure them. The trick is to read all the options carefully and make sure both radios are configured with the same channel, baud rate, and so on. One of them might need to be in 'command' mode while the other is in 'peer' mode, although not necessarily. There are many models of XBees...

Step 6: Assemble Toy Circuitry

The arduino which goes into the toy will send values depending on whether the flex sensors and the accelerometer are giving high or low values. The flex sensors read high if at least one of them is high. If both the flex sensors and the accelerometer are low, transmit 0; if the flex sensors only are high, transmit 1; if the accelerometer only is high, transmit 2; if both components are high, transmit 3. This significantly simplifies the processing which must be done on the receiving arduino.
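The four cases map neatly onto two bits: bit 0 for the flex sensors and bit 1 for the accelerometer. The toy sketch in this step spells the logic out with nested if statements. The helpers below are a hypothetical, equivalent way to compute the value and unpack it again, written as plain C++ so it can be checked off the board.

```cpp
#include <cassert>
#include <cstdint>

// Pack the two sensor states into one byte:
// 0 = nothing, 1 = flex sensors only, 2 = accelerometer only, 3 = both.
uint8_t encodeState(bool bendOn, bool accelOn) {
    return static_cast<uint8_t>((accelOn ? 2 : 0) | (bendOn ? 1 : 0));
}

// Unpack on the receiving side.
bool bendBit(uint8_t value)  { return (value & 1) != 0; }
bool accelBit(uint8_t value) { return (value & 2) != 0; }
```

On the mobile, "blacklights on for 1 or 3" then becomes bendBit(), and "motors on for 2 or 3" becomes accelBit().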

Now, there's a bit of a pickle here. Usually the arduino transmits the value you expect (e.g. a 0). Sometimes, however, the value arrives as an ASCII character 48 higher than the one you intended. This is most likely because 48 is the ASCII code for the character '0', so a value sent as text rather than as a raw byte arrives 48 higher. We got around this difficulty by having the receiving arduino test for both possible values. When you actually want to transmit data across the radios, use the Serial.write() command, not Serial.print() or Serial.println(). Also, as you start plugging things in and putting code on the Arduinos, avoid uploading code to an Arduino that has anything plugged into its TX or RX pins (pins 0 & 1).
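A receiver that accepts both forms could look like the plain C++ below. A raw byte from Serial.write() is 0 to 3, while an ASCII digit ('0' is 48) is 48 to 51. decodeValue is a hypothetical helper name, and returning -1 for anything else is an assumption of this sketch.

```cpp
#include <cassert>

// Map a received byte to the toy's state value 0..3.
// Accepts either the raw byte sent by Serial.write() or the ASCII digit
// produced by Serial.print(); anything else is rejected with -1.
int decodeValue(int received) {
    if (received >= '0' && received <= '3') {
        return received - '0';   // ASCII digit, e.g. '2' (50) -> 2
    }
    if (received >= 0 && received <= 3) {
        return received;         // raw byte
    }
    return -1;                   // noise or an unexpected character
}
```

On the Arduino, you can pass the result of Serial.read() straight in. Serial.read() returns -1 when no data is waiting, and this helper rejects that value as well.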

We used three 1.5V batteries (three AAs) in series to power the arduino. The Uno's recommended input on the Vin pin is 7-12V, but in our experience the arduino ran fine from 4.5V or 6V.

Solder the ground leads of the two flex sensors and the accelerometer together, leaving the end of one lead free to plug into the board. Do the same with the three power leads. Plug the ground leads into one of the ground pins on the arduino and the power leads into the 5V power pin. Plug the power lead of the XBee into the 3.3V pin and the ground lead of the XBee into another ground pin, then plug the RX/DIN lead into the TX pin of the arduino. (The XBee is receiving from the arduino, hence the input of the XBee being connected to the output of the arduino.) Finally, plug the ground lead of the battery pack into the final ground pin and the power lead of the battery pack into the Vin pin.

The toy circuitry is now assembled! Now all you need to do is program the arduino. We used the code below to control our toy.

// Values to be read
int accelVal = 0;
int bendVal1 = 0;
int bendVal2 = 0;
int accelRef;
int bendRef1;
int bendRef2;
boolean accelOn = false;
boolean bendOn = false;
int sendVal = 0;

void setup() {
  // This code runs once, at the beginning
  Serial.begin(9600); // Initialize serial (the XBee listens on the TX pin)

  // Get reference values: these allow us to calibrate the values we send for any variation in component behavior
  accelRef = analogRead(A3);
  bendRef1 = analogRead(A4);
  bendRef2 = analogRead(A5);
}

void loop() {
  // This code runs continuously
  delay(1);

  // Get values
  accelVal = analogRead(A3);
  bendVal1 = analogRead(A4);
  bendVal2 = analogRead(A5);

  // Check if accelerometer is on
  if ((accelVal - accelRef) > (accelRef / 3)) { // This is an arbitrary reference that we found worked well
    accelOn = true;
  } else {
    accelOn = false;
  }

  // Check if bend sensors are on
  if ((bendVal1 < (3 * bendRef1 / 4)) || (bendVal2 < (3 * bendRef2 / 4))) { // This is an arbitrary reference
    bendOn = true;
  } else {
    bendOn = false;
  }

  // Determine the correct value to transmit based on the sensors
  if (accelOn == false) {
    if (bendOn == false) {
      sendVal = 0;
    } else {
      sendVal = 1;
    }
  } else {
    if (bendOn == false) {
      sendVal = 2;
    } else {
      sendVal = 3;
    }
  }

  // Transmit the value as a raw byte
  Serial.write(sendVal);
}
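The trigger thresholds in this sketch are easy to check away from the hardware. The hypothetical functions below copy the sketch's integer arithmetic. C++ int division truncates the same way the Arduino's does, and 3 * 1023 still fits in the Uno's 16-bit int. The readings in the tests are made-up values for illustration, not measurements from our toy.

```cpp
#include <cassert>

// The accelerometer counts as "on" when its reading rises more than a third
// above its resting value (same test as the toy sketch).
bool accelTriggered(int reading, int reference) {
    return (reading - reference) > (reference / 3);
}

// The flex sensors count as "bent" when either reading falls below three
// quarters of that sensor's resting value.
bool bendTriggered(int reading1, int reference1, int reading2, int reference2) {
    return (reading1 < (3 * reference1 / 4)) || (reading2 < (3 * reference2 / 4));
}
```

For example, with a resting accelerometer reading of 500, the cutoff is 500 / 3 = 166. A reading of 667 triggers, but 666 does not.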

Step 7: Assemble Mobile

We assembled the parts of the mobile back in step 2, so now it's time to put all of them together. Lay out your mobile on the floor to make sure the two sides will not hit each other. We used the full 4' of the thick top dowel. The next layer consisted of medium-weight dowel of approximately 1.5' in length. From this hung a light-weight dowel of approximately 1' in length, off of which hung a butterfly and a flower, and another medium-weight dowel, again approximately 1.5' in length; this hung low enough so as not to interfere with the other branch. Off of that dowel we hung a rainbow and another light dowel of approximately 1' in length with another butterfly and another flower. Each side of the mobile had one of each of the five parts, and we tried to balance the colors used, as well.

We found it useful to lay out one side of the mobile and mark the lengths of the dowels we liked, then cut two of each of the lengths we wanted (to mirror the mobile on the other side). Once you have the dowels at the lengths you like, tie the requisite parts at the ends of the corresponding dowels using fishing line. Secure the fishing line to the dowel with hot glue. Once you have all the components set, balance the mobile, starting from the bottom. Tie fishing line to the center of the bottom dowel, find the balance point, and glue the fishing line in place. Then tie this branch to the end of the medium dowel with the rainbow on the other end and secure. Balance this branch, then tie to one end of the other medium dowel. Balance the final light dowel and secure to the other end of the medium dowel. Finally, balance this branch and attach a servo horn directly at the balance point with fishing line and hot glue. Repeat this process on the other branch. Attach a servo to each end of the heavy dowel, using hot glue. You may find it helpful to troubleshoot the mobile assembly before attaching the servos to the horns, but eventually you will hot glue the servo horns to the servo shafts; this is necessary because of the weight of the branches, although the branches are not very heavy. Braid the three wires for each servo together and secure them along the thick dowel rod, having them meet in the middle.
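Finding each balance point by trial and error works, but it can help to estimate it first. Ignore the string and treat each hanging piece as a point load at the end of its dowel. The balance point is then the center of mass: measured from the left end, x = (m_right * L + m_dowel * L / 2) / (m_left + m_right + m_dowel). The hypothetical helper below computes that. The weights in the usage note are invented, so weigh your own branches.

```cpp
#include <cassert>

// Balance point of a dowel of length L with loads hanging from both ends,
// measured from the left end. The dowel's own mass is assumed uniform, so it
// acts at L / 2. Units only need to be consistent (e.g. grams and inches).
double balancePoint(double leftMass, double rightMass, double dowelMass, double length) {
    double total = leftMass + rightMass + dowelMass;
    assert(total > 0.0 && length > 0.0);
    return (rightMass * length + dowelMass * length / 2.0) / total;
}
```

Consider a 12-inch dowel with a 10 g butterfly on the left and a 30 g branch on the right, ignoring the dowel's own weight. The fishing line would go 9 inches from the left end.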

Cut the remaining medium-weight dowel in half and attach one half to each side of the main heavy-weight dowel rod. Tape and/or glue the blacklights to the ends of the medium-weight dowel, facing towards the mobile. You will need to play with the angle of the blacklight heads somewhat to obtain a nice angle such that all the mobile pieces are hit by the blacklight. Twist the blacklight wires together and secure them to the rod, meeting at the balance point of the rod. Use fishing line to tie an LED to each end of the thick dowel and one in the middle, securing the fishing line with hot glue as necessary. Twist the wires for each LED together and make them meet in the middle. Twist all the wires from the LEDs, blacklights, and motors together, along with the fishing line that suspends the center of the mobile. These wires will all go to the arduino in the ceiling where the mobile is mounted. (Of course, if your ceiling does not have removable tiles like the one we used does, you will need some other place to store all your circuitry.)

Step 8: Assemble Mobile Circuitry

We assembled and tested all the components for the mobile circuitry in steps 3, 4, and 5, so now it's time to put them together. We found that a mini breadboard helped us to clean up our circuit so we could see all the components. You can, however, build the circuit entirely on the arduino if you prefer.

Connect the 3.3V pin of the arduino to the VDD pin of the XBee, the RX pin of the arduino to the DOUT (TX) pin of the XBee, and a ground pin of the arduino to the ground pin of the XBee. (Here the XBee is transmitting to the arduino, so the output of the XBee goes to the input of the arduino.) We found that we needed to power the two motors separately from the arduino, because the arduino could not source enough current to power the motors as well as all the other components. Connect the power wires of the servos to each other and to the power wire of the AAA battery pack. Connect the ground wires of the servos to each other and to the ground wire of the AAA battery pack. Solder the ground wires of the LEDs, the blacklights, and the servos all together, leaving one end free to plug into a ground pin. Connect all components to the requisite pins--we used pins 12 and 11 for blacklights, 10 and 9 for servos, and 8, 7, and 6 for LEDs.

Finally, power the arduino itself. We used two battery packs of two AAs each connected in series to give us a 6V input; you can also, as discussed previously, power arduinos off of 4.5V; we used whatever we had available. Connect the power lead to the VIN pin and the ground lead to a GND pin. Incidentally, we found that soldering leads onto the battery packs from the flashlights worked well--we used one of these to power the servos.

Now all you need to do is program the arduino. We used the code below on the mobile arduino to control the various components.

#include <Servo.h>

int serial_val = 0;
boolean motors_on = false;

// Counters for the LEDs flashing
int x1 = 0;
int x2 = 0;
int x3 = 0;

// Sample pins for mobile-mounted arduino
static int ledPin1 = 6;
static int ledPin2 = 7;
static int ledPin3 = 8;
static int motorPin1 = 9;
static int motorPin2 = 10;
static int flashlightPin1 = 11;
static int flashlightPin2 = 12;

// Servo information
Servo motor1;
Servo motor2;
int pos1 = 0; // position of motor1
int pos2 = 0; // position of motor2

void setup() {
  // Setup code runs once at the beginning
  Serial.begin(9600); // Starts the serial monitor

  // Tell the arduino which pins will be used for output; no pins will be used for input on the mobile
  pinMode(motorPin1, OUTPUT);
  pinMode(motorPin2, OUTPUT);
  pinMode(flashlightPin1, OUTPUT);
  pinMode(flashlightPin2, OUTPUT);
  pinMode(ledPin1, OUTPUT);
  pinMode(ledPin2, OUTPUT);
  pinMode(ledPin3, OUTPUT);

  // Pin 13 is the built-in LED, usually used to signify that the program wrote correctly
  pinMode(13, OUTPUT);
  digitalWrite(13, HIGH);
}

void loop() {
  // Main code, which runs repeatedly

  // Read a value from the radio. The toy sends raw bytes (0-3) with Serial.write(),
  // but values sometimes arrive as ASCII digits ('0'-'3', i.e. 48 higher), so accept both
  if (Serial.available()) {
    int incoming = Serial.read();
    if (incoming >= '0' && incoming <= '3') {
      serial_val = incoming - '0';
    } else if (incoming >= 0 && incoming <= 3) {
      serial_val = incoming;
    }
  }

  // Check to make sure the value read is reasonable
  Serial.println(serial_val);
  if (serial_val > 0) {
    digitalWrite(13, HIGH);
  } else {
    digitalWrite(13, LOW);
  }

  // Increment counters
  x1 = x1 + 1;
  x2 = x2 + 1;
  x3 = x3 + 1;

  // Check values of counters; random primes were selected as cutoffs so the cycles wouldn't line up.
  // The timings below assume each pass through loop() takes about 1 ms.
  if (x1 > 20483) {
    x1 = 0;
  } else {
    if (x1 < 1000) {
      digitalWrite(ledPin1, HIGH); // Turn on for about 1 second every 20.483 seconds
    } else {
      digitalWrite(ledPin1, LOW); // Turn off the rest of the time
    }
  }

  if (x2 > 29303) {
    x2 = 0;
  } else {
    if (x2 < 1200) {
      digitalWrite(ledPin2, HIGH); // Turn on for about 1.2 seconds every 29.303 seconds
    } else {
      digitalWrite(ledPin2, LOW); // Turn off the rest of the time
    }
  }

  if (x3 > 18397) {
    x3 = 0;
  } else {
    if (x3 < 900) {
      digitalWrite(ledPin3, HIGH); // Turn on for about 0.9 seconds every 18.397 seconds
    } else {
      digitalWrite(ledPin3, LOW); // Turn off the rest of the time
    }
  }

  // Delay just a bit so the program can respond
  delay(1);

  // Turn the blacklights on / off
  if (serial_val == 1 || serial_val == 3) {
    digitalWrite(flashlightPin1, HIGH);
    digitalWrite(flashlightPin2, HIGH);
  } else {
    digitalWrite(flashlightPin1, LOW);
    digitalWrite(flashlightPin2, LOW);
  }

  // Turn the motors on or off
  // This code actually turns them on and off for brief spurts by attaching and detaching the motors,
  // because otherwise they ran at too high a speed and the pieces hit each other
  if (serial_val == 2 || serial_val == 3) {
    if (motors_on) {
      motors_on = false;
      motor1.detach();
      motor2.detach();
    } else {
      motors_on = true;
      motor1.attach(motorPin1); // Attach motor1 to appropriate pin
      motor2.attach(motorPin2); // Attach motor2 to appropriate pin
      motor1.write(0);
      motor2.write(0);
      delay(300);
    }
  } else {
    if (motors_on) {
      motors_on = false;
      motor1.detach();
      motor2.detach();
    }
  }
}

Step 9: Final Assembly

If you are mounting your mobile inside the ceiling, like we did, stand on a table or other tall object and move aside a tile in the ceiling. The circuitry will rest on top of a neighboring tile. Tie the mobile to the ceiling and place the circuitry inside the ceiling. Test the mobile and toy interaction to make sure no wires were displaced in the hanging of the mobile. Then slide the ceiling tile back into place.

Open the toy's midsection and pull out most of the stuffing. Our toy had a very large head, so we put the boards inside the head. If you are afraid that your wires may slip out (ours did!), gently wrap the Arduino in tape to hold everything in place. (You could avoid this problem by making your own circuit board and soldering everything in place, but the tape method works all right.) Carefully slide the Arduino into the stuffed animal and pad it with a little of the extracted stuffing. Next, sew the bend sensors to the sides of the stuffed animal, hiding the stitching as much as possible in the animal's fur. Finally, slide in the battery pack. Pad with as much stuffing as will fit. Turn the battery pack on and test the interaction with the mobile.

Congratulations! You now have a working, glow-in-the-dark, interactive baby mobile.

~Molly and Josh.

Maybe I missed it, but where did you find the UV LED flashlights with twist-off heads?

This is really cool.

A word of caution: fluorescent colours sometimes induce vomiting in infants. In Sweden, baby toys with these colours were withdrawn in the 1990s because of fears that the vomit could suffocate the babies.

I would love to make this switch-activated for my handicapped child, but it's a bit over my head. Any way to simplify?

The hardest part is getting the two arduinos to work with each other; if you're just interested in the glowing, moving mobile, you could simplify a lot of the work by just working with the mobile and not using the toy. Feel free to message me offline if you'd like to talk more about this!

The Problem(s) With Solaris SVR4 Link-Editor Mapfiles

By Ali Bahrami-Oracle on Jan 06, 2010

Lately, I've been working on a new mapfile syntax to replace the original mapfile language, which Solaris inherited as part of its System V Release 4 origins. In the process, I've examined every line of the manual, and of the code, many times. I believe I understand it all the way down now, and I'd like to record some of what I've learned here. My main reason for doing this is to justify undertaking a replacement language. Oddly enough, though, I believe this information will also make it easier to decode, use, and write these older mapfiles: once you understand the quirks, you can work around them.

This discussion will not cover the new syntax, which will come in a subsequent installment. However, I do want to reassure you that full support for the original mapfile language will remain in place. We're not about to force anyone to rewrite 20+ years' worth of mapfiles. The goal is to freeze the old support in its current form, provide a better alternative, and gradually move the world to it over a period of years.

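Terse To A Fault / Not Extensible

The SVR4 syntax identifies each directive with a single "magic" character, and that choice has several problems: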

- There are only a limited number of magic characters available, mainly on the top row of the keyboard.

- Only a few of these characters have mnemonic meanings that make intuitive sense in the context of object linking. And those that do have such a meaning can easily imply more than one thing. For example, in the SVR4 syntax, '=' means segment creation, and ':' means section to segment assignment. The reverse would make just as much sense: ':' could have meant segment creation, and '=' could have meant assigning sections to segments. No one would have found this less intuitive. I used to get these backwards constantly, and would have to look at the manual or another mapfile to remember which character has which meaning. That's pretty sad, considering that '=' is probably the most mnemonic character in the language.

- After the first few good characters (=, :) are taken, the remaining assignments become rather arbitrary. For instance, the | character is used to specify section order, while @ specifies the creation of a "segment size symbol". Neither evokes a relevant meaning, and where a character does suggest something, the association misleads: '|' evokes shell pipelines, but means nothing like that in mapfiles.

- Some characters are overloaded, having different meanings in different contexts. For example, '=' introduces both segment definitions and hardware/software capability statements.

SVR4 Mapfile Syntax As Evolution

For me, the best way to understand the SVR4 mapfile syntax has been to start with its original form, and then consider how and where each subsequent feature was added.

In the late 1980s, starting around 1986 or so, Unix System V Release 4 (SVR4) was being developed at AT&T. Its developers created a new linking format (ELF) to resolve the inadequacies of the previous format (COFF) used in SVR3. SVR3 had a rather elaborate mapfile syntax. Rather than keep that syntax, the SVR4 developers designed a new, smaller, and simpler replacement. We don't know their reasons for this decision, and can only guess that they didn't think the SVR3 language was necessary, or a good fit with their new ELF-based link-editor. As an aside, while researching different mapfile languages during the design of the replacement syntax for Solaris, I discovered a notable similarity between the SVR3 mapfile language and GNU ld linker scripts. SVR3 lives on, as does much of Unix, in its influence on later systems.

The original SVR4 language was very small, consisting of four different possible statements. All of these have the form:

    name magic ... ;

where name is a segment name, and magic is a character that determines what the directive does:

- Segment Definition (=): Create segments and/or modify their attributes

- Section to Segment Assignment (:): Specify how sections are assigned to segments

- Section-Within-Segment Ordering (|): Specify the order of output sections within a segment

- Segment size symbols (@): Create absolute symbols containing final segment size in output object.

Solaris started with the original SVR4 code base. Since then, Sun has added three more top level statements:

- Symbol scope/version definition ({}): Assign symbols to versions

- File Control Directives (-): Specify which versions can be used from sharable object dependencies

- Hardware/Software capabilities (=): Augment/override the capabilities from objects.

The symbol scope/version definition statement has the form:

    [version-name] { scope: symbol [= ...]; *; } [inherited-version-name...];

Segment Definition (=)

Segment definition statements can be used to create a new segment, or to modify existing ones:

    segment_name = segment-attribute-value... ;

If a segment-attribute-value is one of LOAD, NOTE, NULL, or STACK, then it defines the type of segment being created:

- LOAD segments are regions of the object to be mapped into the process address space at runtime, represented by PT_LOAD program header entries. LOAD segments were part of the original AT&T language.

- NOTE segments are regions of the object to contain note sections, represented by PT_NOTE program header entries. NOTE segments were part of the original AT&T language.

- NULL segments are a concept added by Sun. As far as we can determine, they were created in order to have a type of segment that won't be eliminated from the output object if no sections are assigned to it. Their actual meaning depends on whether the ?E flag is set or not, as described below.

- STACK "segments" were added by Sun relatively recently in order to allow modifying the attributes of the process stack. This is not really a segment at all: It does not specify a memory mapping, and sections cannot be assigned to it. Rather, it allows access to the PT_SUNWSTACK program header, setting the header flags to specify stack permissions. Only the flags can be set on a stack "segment". The representation of this concept as a segment was done to simplify the underlying implementation of the feature.

If a segment-attribute-value starts with the '?' character, then it is a segment flag:

- ?R, ?W, ?X

- Set the Read (PF_R), Write (PF_W), and eXecute (PF_X) program header flags, respectively. This is a feature of the original SVR4 syntax, and is self-explanatory.

- ?E

- The Empty flag can be used with either a LOAD or a NULL segment:

- Applied to a LOAD segment, the ?E flag creates a "reservation". This is an obscure and little-used feature by which a program header is written to the output object, "reserving" a region of the address space for use by the program, which presumably knows how to locate it and do something useful. Sections cannot be assigned to such a segment.

- Applied to a NULL segment, the ?E flag adds extra PT_NULL program headers to the end of the program header array. This feature is useful for post-optimizers that rewrite objects to add segments, and need a place to create corresponding PT_LOAD program headers for them.

- The ?E flag is meaningless when applied to NOTE or STACK segments.

The Empty flag was added by Sun. It should be noted that ?E does not correspond to an actual program header flag. Its treatment as a flag in the mapfile syntax, rather than as a different sort of option (using a magic character other than '?' as a prefix), was primarily a matter of implementation convenience.

- ?N

- Normally, the link-editor makes the ELF and program headers part of the first loadable segment in the object. The ?N flag, if set on the first loadable segment, prevents this from occurring. The headers are still placed in the output object, but are not part of a segment, and therefore not available at runtime. It is meaningless to apply ?N to a non-LOAD segment.

This flag was added by Sun. As with ?E, it does not correspond to a real program header flag. Its representation as a flag is a matter of implementation convenience.

- ?O

- This is another flag, added by Sun, that does not correspond to a real program header flag. It controls the placement order of sections from input files within the output sections of the segment. Sections are assigned to segments via the ':' mapfile directive. Normally, sections are added in the order seen by the link-editor. When ?O is set, the order of the input sections matches the order in which these assignment directives are found in the mapfile.