text stringlengths 454 608k | url stringlengths 17 896 | dump stringclasses 91

values | source stringclasses 1

value | word_count int64 101 114k | flesch_reading_ease float64 50 104 |

|---|---|---|---|---|---|

One of you guys asked me to do a tutorial on Fixing Java Errors. I thought it was a good idea, so here I’ll show you over 30 common Java errors.

Sometimes it is hard to figure out what Cannot be Resolved, Syntax Error on Token, or Method is not Visible mean. But, after watching this video you should better understand.

Also, you’ll learn how to get Eclipse to help you fix Java errors.

If you like videos like this tell Google [googleplusone]

To make me extra happy, feel free to share it

Code from the Video

import java.lang.Math.*; public class Lesson35{ // ERROR 1: Cannot make a static reference to the non-static method // SOLVED: You can't call a non static method from a static method // private void printSomething(){ private static void printSomething(){ // ERROR 2: Unresolved compilation problem // SOLVED: Pay attention to Eclipse Errors // Int BigNumber = 100000; // ERROR 3: A string literal isn't properly closed // SOLVED: Pay attention to Eclipse //String something = "A string error //is occurring"; System.out.println("Something"); } // If this is missing you get ERROR 2 // ERROR 4: Exception in thread "main" java.lang.NoSuchMethodError: main // SOLVED: Make sure you type the main function correctly // public static void main(String args){ // This is Wrong public static void main(String[] args){ // ERROR 5: Can't be resolved to a variable // SOLVED: Pay attention to Eclipse // printsomething; // This is Wrong printSomething(); // ERROR 6: Type mismatch Can't convert from int to String // SOLVED: Convert the integer int number = 12; // String anotherNum = number; // This is Wrong String anotherNum = Integer.toString(number); // int number = Integer.parseInt(anotherNum); // Convert from string to int // ERROR 7: Can't be resolved to a type // SOLVED: import the dimension library // Dimension dim = new Dimension(); // ERROR 8: Method is undefined // SOLVED: Make sure methods are in the class double pi = 3.14; // long randLong = Lesson34.round(pi); // The wrong way // ERROR 9: Can't invoke method // SOLVED: Understand how methods work long randLong = Math.round(pi); // The right way // randLong = pi.round(); // Wrong way // ERROR 11: The method is not applicable for the arguments // SOLVED: Provide the right arguments in the right order // getStuff(1.234, 5); // Wrong Way getStuff(1,5.0); // Right Way // ERROR 12: Syntax error on token ",; expected // SOLVED: Understand how methods are called in Java vs. other languages // double sumNum = addThem(LessonFive,1,2); // Wrong Way double sumNum = LessonFive.addThem(1,2); // ERROR 13: Syntax error on token '==' // SOLVED: = is different from == // int value == 1; } // ERROR 10: Can't be resolved to a type // SOLVED: Always provide the type in methods /* public static void getStuff(number1, number2){ } */ // ERROR 14: Return type for method is missing // SOLVED: Provide a return type or void public static void getStuff(int number1, double number2){ // ERROR 15: Syntax error on token ",[ expected // SOLVED: Understand how arrays are defined in Java // int[] intArray = new [10,10]int; // Wrong Way int[][] intArray = new int[10][10]; // ERROR 16: The method is not visible // SOLVED: You can't private methods which are declared in // another class. private static void getFileInfo() // Lesson33.getFileInfo(); // Wrong Way // ERROR 17: Local variable may not have been initialized // SOLVED: Always give variables default values String howMany = "10"; // String howMany; // Wrong Way System.out.println(howMany); // ERROR 18: Cannot be Resolved // SOLVED: Understand that arrays and strings use a // different version of length System.out.println(howMany.length()); // System.out.println(howMany.length); // Wrong Way // System.out.println(intArray.length()); // Wrong Way System.out.println(intArray.length); // ERROR 19: Prefix Operator vs. Postfix Operator int xInt = 1, yInt = 1; xInt = yInt++; // Passes the original value of yInt before incrementing System.out.println("xInt: " + xInt); // ERROR 20: Not calling break at end of case int day = 1; switch (day){ case 1: System.out.println("Monday"); case 2: System.out.println("Tuesday"); case 3: System.out.println("Wednesday"); case 4: System.out.println("Thursday"); default: System.out.println("Friday"); } } }

thanks for the tutorial, will u pls also make a tutorial on IE errors and how to fix it?

I covered a great deal about making cross browser sites in How to Design a Website.

Thanks , i got it. i have got some new issues , pls help me to understand when you are free.

1. position property can be used to set the position of an element, so why float is important to learn ? is it true that using position property is a bad practice when we have chance to use float property ?

2. suppose there are two class named “something” and “nothing”,

wht is difference between .something+.nothing{property:value;} and .something, .nothing {property:value;}?

as far as i know this .something, .nothing {property:value;} is called Grouping Selectors.

3. display:inline-block; what does it mean ?

4. would u please give me a practical example of display:block property ?

i know u are a css geek but very helpful, so i have asked a lot , dont mind, take care

1. I like to use float to get all of the page elements to wrap around it. I don’t like to use absolute positioning. I just think a more liquid layout looks better. That is just an opinion.

2. #idName.className would target an element with both a class name and id name. When you put a space between these you are targeting elements under the first element. So, #idName .className means target all elements with the class name className that are decendents of idName

3. inline-block doesn’t force line breaks after the element. Since it is still a block item you can set width, height, top and bottom margins / paddings

4. block elements force a line break and allow you to define width, height, top and bottom margins / paddings

Thank you so much , u R the best and friendly tutor i have ever seen.

You’re very welcome. I do my best

pls i want tutorial on how to deploy a java application and a print method for printing screens in a printer

I’ll cover printing. It is very easy to set up. I’m not done with Java yet

Hello Derek,

Please let me know,

What is java-jni priority in your priority list?

You are jumping in every possible technology(SAMSUNG SDK), I want you to come back to our old C / C++ sooooooooooon!!

I was able to reach till java # 35 now, with all your programs written and compiled.

I’m going to finish Samsung this month. Then I’ll finish up C, Inkscape, Google Maps with Android and a few other Android technologies. At that point I’ll transition into Android graphics, interfaces and games. I’ll hold a vote on whether the tutorial that will come out along with the Android stuff will be C++, or J2EE. | http://www.newthinktank.com/2012/04/java-video-tutorial-35/?replytocom=8973 | CC-MAIN-2019-51 | refinedweb | 1,136 | 63.39 |

There are two different methods of transmitting USB data between your microcontroller board and your PC. They are called "USB Stacks". They are layers of code that handle all of the protocols for transmitting data whether you are using it to upload your program to the board, receiving data via the serial monitor or serial plotter, or talking back to your computer emulating a mouse, keyboard or other device.

Traditional Arduino 8-bit boards all use the original Arduino stack. Newer boards such as M0 and M4 based on the SAMD21 and SAMD51 have the option of using either the Arduino stack or a different version called the TinyUSB Stack. Still other boards such as the nRF52840 based boards use only TinyUSB and it is likely that upcoming boards such as the ESP32-S2 will continue to use only TinyUSB. This is primarily because TinyUSB is the underlying architecture for implementing CircuitPython on these boards.

If you are using an M0 or M4 board you select which stack you want to use in the Tools menu of the Arduino IDE as shown below.

The image shows the tools menu of the Arduino IDE and we have selected an Adafruit Feather M0 Express board. Here you have a choice between using the Arduino stack or the TinyUSB stack.

However in the image below, we have configured for an Adafruit Feather nRF52840 Express. As you can see there is no "USB Stack" option. What you cannot see is that this particular board only uses TinyUSB. If you try to #include it will not find the proper library because is not supported under TinyUSB.

For the M0 and M4 boards you can simply choose to select the Arduino stack and there's no problem. However the TinyUSB stack also has many other features that might be useful to you. Among them are the ability to use WebUSB and to use the onboard flash chip of your board as a mass storage device. This essentially turns your Feather board into a flash drive where you can drag-and-drop files. We will not be covering those capabilities in this tutorial. Of course if you're using the nRF52840 based systems don't have a choice. You have to use TinyUSB.

As mentioned previously, the traditional way to control mouse or keyboard is the following include files.

#include <HID.h> #include <Mouse.h> #include <Keyboard.h>

#include <HID.h> #include <Mouse.h> #include <Keyboard.h>

You should erase those lines and replace them with

#include <TinyUSB_Mouse_and_Keyboard.h>

#include <TinyUSB_Mouse_and_Keyboard.h>

This will automatically detect whether you are using the Arduino Stack or the TinyUSB Stack. If you are using the original Arduino Stack it will simply do the necessary include files for you. And if you're using TinyUSB Stack it will instead use its own code that works exactly like the originals. Note that there is no way to separate Mouse and Keyboard inclusion in our system. It was much easier to implement both at once rather than implementing them separately because of the way TinyUSB implements its HID functions. Theoretically when you compile your code if you did not make any reference to Keyboard and only to Mouse the linking loader will eliminate the Keyboard code. And vice versa if you use only Keyboard and not Mouse. Combining these into a single library saved us a lot of headaches.

If you have existing code that uses Mouse.h or Keyboard.h or both you should make the changes noted above and give it a try.

If you are using an M0 or M4 based board, you will have to set the Tools->USB Stack to "TinyUSB". In fact try switching back and forth between the two stacks and recompiling. You should see the same results using either stack. If you are using the nRF52840 processor, you do not need to select the TinyUSB option.

While developing and testing this library, we discovered that occasionally it makes a difference when you call the

Mouse.begin() or

Keyboard.begin() methods relative to the

Serial.begin(…) method. Sometimes your computer would get confused as to how your USB was operating. Was it a mouse? Was it a keyboard? Was it a serial device? We had inconsistent results. Our best results came if you did your

Mouse.begin() and/or

Keyboard.begin() before doing

Serial.begin(…). No such restriction is necessary when using the BLE52 version of the library. It only affects the TinyUSB version.

In the next section, we will describe the BLE52 library followed by a series of three demonstration examples. | https://learn.adafruit.com/mouse-and-keyboard-control-using-tinyusb-and-ble/tinyusb-mouse-and-keyboard-usage | CC-MAIN-2021-21 | refinedweb | 766 | 66.94 |

Debugger Views Application Sample

This sample demonstrates a feature introduced with Microsoft Visual Studio 2005, the capability to change the way you view a class in the debug windows. By applying new attributes such as the DebuggerDisplayAttribute to a class and its members, you can control how the value, type, and name columns are displayed for that type in the Locals debug window, and whether a value is shown, hidden, or expanded when viewed. This sample is currently available only in Microsoft Visual C# 2005.

For information about using the samples, see the following topics:

This sample includes source files for three classes that contain the debugger attributes and source files that do not, as shown in the following table.

The DebuggerViewsExample.cs file contains the entry point of the console application and is used by both sets of files. Uncomment the code in the debug versions (DebugAddress.cs, DebugCustomer.cs, and DebugCustomerName.cs) to run the sample.

To build the sample using the Command Prompt

Open a Command Prompt window and navigate to the \CS subdirectory under the DebuggerViewsCS directory. For information about required settings and the SDK Command Prompt, see How to: Set Sample Settings.

Type msbuild DebuggerViewsCS.sln at the command line.

To build the sample using Visual Studio

Open Windows Explorer and navigate to the \CS subdirectory under the DebuggerViewsCS directory.

Double-click DebuggerViewsCS.sln to open the file in Visual Studio 2005.

On the Build menu, click Build Solution.

To run the sample

On the View menu of Visual Studio, click Solution Explorer.

In the Solution Explorer window, double-click DebuggerViewsExample.cs to open the file in Visual Studio 2005.

On the Project menu, click Show all files.

Insert a breakpoint (press F9) on the line where the GetCustomers method instantiates a variable of type Customer, as shown here:

Customer c = new Customer(cn, Address.GetAddressById(customerAddresses, cn.Id));

Press F5 to start and run the sample application in the Visual Studio 2005 debugging environment.

When the application breaks at the breakpoint, press F10 to step to the next line of code.

Observe current values in the Locals window by expanding the hierarchy of the application's properties, collections, and other objects. To display the Locals window, click Windows from the Debug menu and then click Locals.

On the Debug menu, click Stop Debugging, press Shift+F5, or click the icon in the Debugging toolbar, to stop code execution.

Uncomment the code in the three files whose names start with Debug. Then comment out the code in the three files whose names start with NonDebug, or select those three files in the Solution Explorer window, right-click, and then click Exclude From Project.

On the Debug menu, choose Start with the same breakpoint set. When the application breaks, observe the details in the Locals window values column, which now has additional information provided by debugging attributes.

To provide an example of using a debugging attribute, the DebugCustomer.cs file contains the following DebuggerDisplay attribute for the CustomerName class:

[DebuggerDisplay("{(FirstName == null) ? \"\":FirstName} {LastName} ==> (Customer ID = {Id})")]

DebuggerTypeProxy(typeof(CustomerNameDebugView))]

public class CustomerName

When debugging, this attribute displays the following value in the Locals window. The name cn is an instance of a CustomerName object.

Without the attribute, the Locals window displays the following: | https://msdn.microsoft.com/en-us/library/k7deak79(v=vs.90).aspx | CC-MAIN-2018-13 | refinedweb | 548 | 55.74 |

/* * mutex.h * * Mutual exclusion thread synchon.14 2005/11/25 00:06:12 csoutheren * Applied patch #1364593 from Hannes Friederich * Also changed so PTimesMutex is no longer descended from PSemaphore on * non-Windows platforms * * Revision 1.13 2005/11/08 22:31:00 csoutheren * Moved declaration of PMutex * * Revision 1.12 2005/11/08 22:18:31 csoutheren * Changed PMutex to use PTimedMutex on non-Windows platforms because * sem_wait is not recursive. Very sad. * Thanks to Frederic Heem for finding this problem * * Revision 1.11 2005/11/04 06:34:20.10 2003/09/17 05:41:58 csoutheren * Removed recursive includes * * Revision 1.9 2003/09/17 01:18:02 csoutheren * Removed recursive include file system and removed all references * to deprecated coooperative threading support * * Revision 1.8 2002/09/16 01:08:59 robertj * Added #define so can select if #pragma interface/implementation is used on * platform basis (eg MacOS) rather than compiler, thanks Robert Monaghan. * * Revision 1.7 2002/01/23 04:26:36 craigs * Added copy constructors for PSemaphore, PMutex and PSyncPoint to allow * use of default copy constructors for objects containing instances of * these classes * * Revision 1.6 2001/05/22 12:49:32 robertj * Did some seriously wierd rewrite of platform headers to eliminate the * stupid GNU compiler warning about braces not matching. * * Revision 1.5 1999/03/09 02:59:50 robertj * Changed comments to doc++ compatible documentation. * * Revision 1.4 1999/02/16 08:12:22 robertj * MSVC 6.0 compatibility changes. * * Revision 1.3 1998/11/30 02:50:59 robertj * New directory structure * * Revision 1.2 1998/09/23 06:20:55 robertj * Added open source copyright license. * * Revision 1.1 1998/03/23 02:41:31 robertj * Initial revision * */ #ifndef _PMUTEX #define _PMUTEX #ifdef P_USE_PRAGMA #pragma interface #endif #include <ptlib/critsec.h> #include <ptlib/semaphor.h> /**This class defines a thread mutual exclusion object. A mutex is where a piece of code or data cannot be accessed by more than one thread at a time. To prevent this the PMutex is used in the following manner: \begin{verbatim} PMutex mutex; ... mutex.Wait(); ... critical section - only one thread at a time here. mutex.Signal(); ... \end{verbatim} The first thread will pass through the #Wait()# function, a second thread will block on that function until the first calls the #Signal()# function, releasing the second thread. */ /* * On Windows, It is convenient for PTimedMutex to be an ancestor of PSemaphore * But that is the only platform where it is - every other platform (i.e. Unix) * uses different constructs for these objects, so there is no need for a PTimedMute * to carry around all of the PSemaphore members */ #ifdef _WIN32 class PTimedMutex : public PSemaphore { PCLASSINFO(PTimedMutex, PSemaphore); #else 00131 class PTimedMutex : public PSync { PCLASSINFO(PTimedMutex, PSync) #endif public: /* Create a new mutex. Initially the mutex will not be "set", so the first call to Wait() will never wait. */ PTimedMutex(); PTimedMutex(const PTimedMutex & mutex); // Include platform dependent part of class #ifdef _WIN32 #include "msos/ptlib/mutex.h" #else #include "unix/ptlib/mutex.h" #endif }; // On Windows, critical sections are recursive and so we can use them for mutexes // The only Posix mutex that is recursive is pthread_mutex, so we have to use that #ifdef _WIN32 typedef PCriticalSection PMutex; #else typedef PTimedMutex PMutex; #endif #endif // End Of File /////////////////////////////////////////////////////////////// | http://pwlib.sourcearchive.com/documentation/1.10.0/mutex_8h-source.html | CC-MAIN-2018-13 | refinedweb | 552 | 55.44 |

Bokeh is a Python package that helps in data visualization. It is an open source project. Bokeh renders its plot using HTML and JavaScript. This indicates that it is useful while working with web-based dashboards.

Bokeh can be easily used in conjunction with NumPy, Pandas, and other Python packages. It can be used to produce interactive plots, dashboards, and so on. It helps in communicating the quantitative insights to the audience effectively.

from bokeh.plotting import figure, output_file, show xs = [[5, 6, 9], [2,3,6], [4, 3, 7, 1]] ys = [[6, 5, 8], [3,0,8], [2, 3, 6, 8]] fig = figure(plot_width = 400, plot_height = 200) fig.patches(xs, ys, fill_color = ['red', 'blue', 'green'], line_color = 'white') output_file('patchplot.html') show(fig)

The required packages are imported, and aliased.

The figure function is called.

The ‘output_file’ function is called to mention the name of the html file that will be generated.

The data is defined as a list of lists.

The width and height of the plot are defined within the ‘figure’ function.

The ‘patches’ function present in Bokeh is called.

The ‘show’ function is used to display the plot. | https://www.tutorialspoint.com/how-can-patch-plot-with-multiple-patches-be-visualized-in-bokeh | CC-MAIN-2021-31 | refinedweb | 191 | 68.57 |

Monday Motivation Hack: Use Your PTO

You think you’re locking in your career by never missing a day.

You’re not alone.

Research shows that more than a quarter of workers fear that taking time off will make them seem less dedicated. Others think that vacation-time martyrdom will boost their chances for a raise or a promotion (it doesn’t).

But, this (very American) cultural phenomenon of rarely taking time off and almost never using all of one’s vacation days is bad news for employers and employees alike. And managers know it.

According to Project: Time Off, managers agree that paid time off (PTO):

- improves health and well-being (82 percent)

- relieves burnout (81 percent)

- increases employees’ focus after return (78 percent)

- improves employees’ commitment to their jobs (70 percent)

So, if the boss is on board, why did Americans donate 658 million vacation days to their employers in 2015?

More than 66 percent of employees report getting mixed or negative messages about time off and just don’t want to take the risk, deal with the stress, or let the work build up.

However, there are important reasons to make PTO a priority.

Why You Absolutely Must Start Using Your PTO

Recently, we explored the benefits of self-care and treating yo’self for motivation and productivity. Using up your PTO takes these ideas to the next level, and the benefits are just as profound.

- Vacation can save your life—literally. Research shows that high-risk middle-aged men who took regular vacations were less likely to die of all causes and significantly less likely to die of heart attack during the course of a nine-year study. Vacation has also been shown to have an effect on your body and mind similar to that of meditation.

- Time off is critical to self-care, creativity, and motivation. Though research shows the halo-effect of vacation is short lived, it is a vital part of recharging your batteries and your willpower.

How to Get the Most from Your PTO

Put a little excitement in this Monday, and do these things today:

- Find out how many vacation days you currently have.(Check with HR if you have questions about how or when you need to use PTO.)

- Mark out paid holidays on your calendar.

- Pick three fun things you could do with the vacation time you have.

When you have an idea of what you’re working with, there are a few best practices that will set you up for success when you do take time off.

Plan ahead.

Planning is highly correlated with increased use of time off. Many people fear the amount of backed-up work they’ll come back to if they take time off. By wrangling your workload effectively, you will be able to build in adequate buffers to your vacation time and remove the stress that can accompany time off.

Plan around slow seasons.

Take advantage of lulls in your industry to minimize backlogged work before and after vacation.

Piggy-back on holidays.

A lot of people take vacation whenever they can squeeze it in. By planning, you are able to optimize not only workflows but also total time off, getting the maximum bang for your days-off buck.

Communicate with your team.

Advanced notice to your team—with regular reminders—works wonders. You can set early deadlines, and your colleagues will often cooperate to make project requests farther in advance so you’re not bottlenecked before or after your time off.

Make vacation planning a reward.

By planning far-ish in advance for PTO, you get to look forward to your coming vacation. It’s fun to see the details come together. Plus, on rough days, it can be highly motivating to have something concrete to look forward to/daydream about.

Now that you know how much time off you have, when some good times to go on vacation are, and how you’re going to plan for that time, let go of the worry that your boss will be angry or that you’ll fall behind, and plan a trip!

How much vacation do you take? How do you prepare for it and what tips would you share?

| https://www.grammarly.com/blog/monday-motivation-hack-use-pto/ | CC-MAIN-2018-26 | refinedweb | 707 | 70.73 |

Debugging QML Applications

Console API

Log

console.log, console.debug, console.info, console.warn and console.error can be used to print debugging information to the console. For example:

function f(a, b) { console.log("a is ", a, "b is ", b); }

The output is generated using the qDebug, qWarning, qCritical methods in C++ (see also Debugging Techniques).

Assert

console.assert tests that an expression is true. If not, it will write an optional message to the console and print the stack trace.

function f() { var x = 12 console.assert(x == 12, "This will pass"); console.assert(x > 12, "This will fail"); }

Timer

console.time and console.timeEnd log the time (in milliseconds) that was spent between the calls. Both take a string argument that identifies the measurement. For example:

function f() { console.time("wholeFunction"); console.time("firstPart"); // first part console.timeEnd("firstPart"); // second part console.timeEnd("wholeFunction"); }

Trace

console.trace prints the stack trace of the JavaScript execution at the point where it was called. The stack trace info contains the function name, file name, line number and column number. The stack trace is limited to last 10 stack frames.

Count

console.count prints the current number of times a particular piece of code has been executed, along with a message. That is,

function f() { console.count("f called"); }

will print

f called: 1,

f called: 2 ... whenever

f() is executed.

Profile

console.profile turns on the QML and JavaScript profilers. Nested calls are not supported and a warning will be printed to the console.

console.profileEnd turns off the QML and JavaScript profilers. Calling this function without a previous call to console.profile will print a warning to the console. A profiling client should have been attached before this call to receive and store the profiling data. For example:

function f() { console.profile(); //Call some function that needs to be profiled. //Ensure that a client is attached before ending //the profiling session. console.profileEnd(); }

Exception

console.exception prints an error message together with the stack trace of JavaScript execution at the point where it is called.

Debugging Module Imports

The

QML_IMPORT_TRACE environment variable can be set to enable debug output from QML's import loading mechanisms.

For example, for a simple QML file like this:

import QtQuick 2.3 Rectangle { width: 100; height: 100 }

If you set

QML_IMPORT_TRACE=1 before running the QML Scene (or your QML C++ application), you will see output similar to.

Enabling the Infrastructure

You have to explicitly enable the debugging infrastructure when compiling your application. If you use qmake, you can add the configuration parameters to the project .pro file:

- Qt Quick 1:

CONFIG+=declarative_debug

- Qt Quick 2:

CONFIG+=qml_debug

If you use some other build system, you can pass the following defines to the compiler:

- Qt Quick 1:

QT_DECLARATIVE_DEBUG

- Qt Quick 2:

QT_QML_DEBUG

Note: Enabling the debugging infrastructure might compromise the integrity of the application and system, and therefore, you should only enable it in a controlled environment. When the infrastructure is enabled, the application displays the following warning:

QML debugging is enabled. Only use this in a safe environment.

Starting Applications

Start the application with the following arguments:

-qmljsdebugger=port:<port_from>[,port_to][,host:<ip address>][,block]

Where

port_from (mandatory) specifies either the debugging port or the start port of a range of ports when

port_to is specified,

ip address (optional) specifies the IP address of the host where the application is running, and

block (optional) prevents the application from running until the debug client connects to the server. This enables debugging from the start.

After the application has successfully started, it displays the following message:

QML Debugger: Waiting for connection on port <port_number>

Connecting to Applications

When the application is running, an IDE or a tool that implements the binary protocol can connect to the open port.

Qt provides a

qmlprofiler command line tool to capture profiling data in a file. To run JS, inspecting the object tree, and profiling the activities of a QML engine. For more information, see Qt Creator: Debugging Qt Quick. | https://doc.qt.io/archives/qt-5.5/qtquick-debugging.html | CC-MAIN-2021-39 | refinedweb | 673 | 50.02 |

DNS configuration for single server LAN

Point your clients to your local dns server. Setup your dns server to "forward" to your isp's server.

DNS configuration for single server LAN

My DNS server is my one and only domain controller. As such, its start of authority begins at the root leve, which means it cannot be configured for forwarding. If there is a way to actually implement your idea, feel free to explain: I'll try itand award the points to you if it works.

DNS configuration for single server LAN

Hi Todd,

their are two Methods of configuring DNS for your office. The first Method is, like you set it up, the user has to know only one namespace for intra and internet use. This method is extremly heavy to administer, you must configure your firewall espically so no dns traffic from inside goes to outside, since your using the offical root-level Domain and your not registred.

The other method is much simpler to administer, but the user had to recognize two diffrent Namespaces. Create a new Zonefile naming like .INTR for your intranet and configure the forwarder, an DNS-Server from your ISP. Create a Domain you like MYOFFICE, so your DNS looks like MYoffice.intr .

regards

Frobo

DNS configuration for single server LAN

I am probably missing something, but, as I mentioned in response to the first suggestion, I am unable to set forwarding on the DNS service because is has root level authority. I think I need a little more detail to understand how to make use of yoursuggestion. Can you point me to any information on splitting up the zone so that my DNS server does not have root level authority, which it has by default, so I can try the rest of your idea? I know next to nothing about configuring zones of authority. Thanks.

DNS configuration for single server LAN

DHCP does not play well with NAT i would recommend you disable it on your router then follow te first post

DNS configuration for single server LAN

DHCP is important on a network with downlevel clients because it works with the DNS server to populate the WINS table automatically. It also makes management of the network much easier. I have it working just fine behind my firewall/router, which is performing NAT.

DNS configuration for single server LAN

I have setup primary domains on my NT-4 Server/Microsoft DNS Server system for my local network. On the first screen of DNS Manager add your server, create a new domain, then create a new reverse domain (333.222.111.in-addr.arpa). Go to the forwarddomain and enter your hosts making sure to update the PTR records (which are placed in the reverse domain). Go to the property sheet for your server and set your ISP's DNS server in the "forward to" field.

Your clients must point to your server for DNS. Your server forwards requests it can't answer to your ISP and returns the results to the client.

This method works here and you should be able to make it work for you.

DNS configuration for single server LAN

Do you have the DNS entry for the server's NIC set to the DSL router or to itself. It needs to point to itseld as it is a DNS server.

Try this quick test. Open the DNS MMC from another Windows 2000 client on your LAN. Connect to the Server (in the DNS MMC). Rt click the server name then go to properties. Select the Monitoring tab. Run both tests. This should not be done while on the server as it can return eroniuos results. Did they pass?

If you have your DNS set at root authority it will not be able to hit the internet. The following is straight from MS Study materials:

"To allow your DNS server to perform name lookups on the Internet, ensure that you have not configured a root zone on the server, and that the ROOT HINTS tab in the PROPERTIES dialog box of the server contains a list of servers that are authoritive for the root zone of the internet"

Please feel free to email me screen shots of every properties entry under that zone.

I would suggest deleting and recreating the zone.

Good Hunting!

DNS configuration for single server LAN

The Win2K Server itself is able to browse the internet because it has a DSL router, which is performing NAT, assigned as both a gateway and a DNS server.

I have no proxy server. I expect to install Exchange 2000 on the same machine that is running Windows 2000 Server soon. Eventually, I might host a web site on my server, butright now my web site is hosted by my ISP, which also hosts the authoratative name servers associated with my registered domain name.

I don't mind if the local DNS server gets confused when clients on the LAN try to hit portions of my registered namespace that lie outside my firewall, so long as the clients can get names resolved for the rest of the internet.

Ideally, I would like the DNS to recognize its start of authority at lan.registeredname.com, but by default it sees its start of authority at the root level. If it would just take responsibility for addresses on the LAN and pass everything else to the DSL/Ethernet router, which in turn queries my ISP's DNS servers, it seems like everything should work fine.

The other issue Iam unsure of is how to set up replication given that I only have one DNS server and it really doesn't control anything other than the LAN.

So to boil it down, my questions are:

1. How can I set up my DNS so that it will server Windows 2000 andWindows 9x clients on my LAN?

2. How should I configure replication so that my solo DNS server gets the information it needs but does not become a problem on the internet?

This conversation is currently closed to new comments. | http://www.techrepublic.com/forums/discussions/dns-configuration-for-single-server-lan/ | CC-MAIN-2017-09 | refinedweb | 1,017 | 69.01 |

Recently I was having a look at the online job and internship situation online. Though I started with React, soon Vue.js became my preferred frontend framework. So, when I discovered that most of the online jobs and internships look for React developer, rather than Vue.js developer, I found myself re-learning react from the ground up. Today, in this post I'm going to talk about the points I noticed as a vue.js developer learning react.

Note: All the comparisons are done here based on the "base code" provided by their individual CLIs. So, the simple component structure by importing scripts is not discussed.

0. The CLI:

Both React and Vue.js provide a CLI to set up the projects in an optimal way. In vue.js the CLI takes care of some extra setups like Typescript selection, CSS pre-processor selection, Vuex(state management) setup, Vue Router setup and all that. For doing all those setup in react you need to do it manually separately.

One more advantage of vue CLI over React is that vue CLI also provides a GUI to setup projects.

While the vue CLI being really big it is recommended to install it instead of using it with npx, you can use react CLI with npx directly.

For vue.js you need to install the CLI with this command:

npm i -g @vue/cli

Warning: The old vue CLI is known as

vue-cli. So, if you have that uninstall that and install this new CLI.

Once installed you can use this CLI to create a new Vue.js application:

vue create new-project

However, if you don't want to go through all this slow setup process you can use vite to create vue app in no time, but it is not for production-level apps yet.

npm init vite-app test

On the other hand, to set up a react project with CLI you can use this command:

npx create-react-app test

Or, you can first install this CLI globally and then use it:

npm install -g create-react-app create-react-app test

Did you know? you can use vite for creating react apps quickly as well? Well the command for that will be:

npm init vite-app react-app --template react

1. The component structure:

In vue.js we generally work with components with

.vue extension. These files have three parts:

a. The HTML layout in

<template></template> part.

b. The CSS part in

<style></style> tags.

c. The logical JavaScript part in

<script></script> tags.

Example Vue component:

<template> <h1>Hello World</h1> </template> <script> export default { name: "HelloWorld", props: {}, data() { return { //if any data is required then it is passed here } }, methods: { //all the required methods go here... } } </script> <style scoped> h1 { text-align: "center" } </style>

While in react we work with components delivered by plain Javascript files, sometimes

.jsx files. According to the modern react function based component structure, you will need a function returning HTML like markup(JSX) from a JS function. A typical react function based component looks like:

import react from 'react'; function HelloWorld(props){ //all the component based state/varibales/data and methods can go here return( <h1>Hello World</h1> ) } export default HelloWorld;

2. The HTML/Markup

For any webpage, the most important thing is the markup. In Vue.js you can write your component's markup directly in normal HTML inside the

<template></template> tags.

But in React as your component is returned by a JavaScript function via jsx, so there are some small changes:

a. You can't use

class and similar reserved words in JavaScipt. So you will need to use some alternatives provided in jsx like

className instead of

class

b. pretty much all the HTML element properties are to be written in "camelCase"

3. Dealing with style

In vue.js the style can be defined directly inside the component. It can be written in normal CSS syntax easily the

<style></style> tags. From here you can easily change the language used for styling(SCSS, LESS etc., if it is configured) or you can simply change the scope of the stylesheet.

In React.js there are two ways to define styles:

a. You can write a CSS in a separate

.css file and can easily import it in your react component like so:

import "./app.css"

However, this styling is globally applied.

b. You can write CSS as a JavaScript object using JSS

import React from "react"; import injectSheet from "react-jss"; const styles = { center: { textAlign: "center" } }; const HelloWorld = ({ classes }) => ( <h1 className={classes.center}> Hello World </h1> ); const StyledWorld = injectSheet(styles)(HelloWorld); export default StyledWorld

I avoided the inline CSS part as I don't prefer it as a good method to write CSS

4. Dynamic Variables:

In vue.js the data is normally managed using the data() method where we define a function which returns all of our required data. Then using the

methods we define the functions we can call later to modify the values of the defined data.

But in react you need to use hooks to define both the required data as well as the main method which need to be called to change that data.

To render the value of these dynamic variables directly in the website in Vue we use the

{{variable name}} template literal:

<template> <h1> {{requiredVariable}} </h1> </template> <script> export default{ data(){ return( requiredVariable: "Hello" ) }, methods: { //... } } </script>

While in React we can do the same by using

{variable_name} template literal:

import React, {useState} from 'react' function Home() { const [requiredVariable, setRequiredVariable] = useState("Hello"); //any function which might dynamically update the link by calling setRequiredVariable inside it return ( <h1> {requiredVariable} </h1> ); } export default Home;

4-a Getting and Passing props to elements

In both vue.js and react the props are generally passed directly to the element. Example (with an element named ChildComponent):

<ChildComponent message="Hello" />

Now you can also pass any dynamic data from the parent to the child component. For vue.js, that can be easily done by using the

v-bind or

: directive. Example:

<ChildComponent :

Note: Here a data needs to be predefined with the variable name count so that you can bind it.

If you want to do the same thing with react it would look like:

<ChildComponent count={count}/>

Note: Similar to the vue.js disclaimer, you need a count variable predefined in your component to be used here

Now, how to receive the props? For that, in vue.js in the export part of the

<script> we need to define the props key with an object with details of the props received by that element, with the prop type and the prop name. That will look somewhat like this for a component receiving "message" as a prop:

export default { name: "ChildComponent", props: { count: Number }, data() { return { //other data for this component }; }, methods: { //methods for this component } }; </script>

In react the same thing will be received in a functional component like this:

import React from "react"; export default function ChildComponent(props) { //this is extracting all the props from the general prop object passed. Here I have used object restructuring for that. This can also be done in the function arguments. const { message } = props; return( <div> <h1>{props.message}</h1> {/* The same thing done with the pre extracted variable */} <h1>{message}</h1> </div> ) }

Note: If you are using react route then you get extra information in the props. While using vue.js router you get similar router related information in the special

$route.paramsvariable. This is important info for doing routing in vue.js and react

5 Using Dynamic Variable for HTML Element properties:

If you want to set HTML element properties like href value of a link or src value of an image element (i.e where you need only one-way data binding, as they can't be directly changed in the frontend) you can use

v-bind or its shorthand

: to bind their values to the dynamic data we have in the element. Example:

<template> <div> <a v-bind:Dynamic link by v-bind</a> <a :Dynamic link by :</a> </div> </template> <script> export default { name: 'App', data() { return { // other data linkDynamicallySet: "" } }, methods: { //required methods } } </script>

If you want to do the same in the react, you can directly use the template syntax. Example:

import React, {useState, useEffect} from 'react' function Home() { const [linkDynamicallySet, setLinkDynamicallySet] = useState(""); //any function which might dynamically update the link by calling setLinkDynamicallySet inside it return ( <a href={linkDynamicallySet}>Dynamic link</a> ); } export default Home;

6. Calling the DOM events like "onclick":

React is different from vue.js in the aspect of calling DOM events like "onclick" and others. While in react you can call any DOM events using their "camelCased" version, in vue.js you can call these events using the

v-on directive or the shorthand of that i.e

@ to call the methods defined in script on DOM events.

In vue calling an onclick event on a button looks like:

<button @click me</button>

In react calling onclick event on a button looks like:

<button onClick={updateNum}>click me</button>

Here I have presumed all the other functions are defined properly and accordingly

Did you know? In vue.js

v-ondirective you can call .prevent to execute the

e.preventDefaultwithout dealing with the event object directly in your method

7. Handling form input:

In vue.js you can use the already available

v:model for a direct both way binding to update your predefined "data" easily.

While in react, due to the absence of such directive you need to define how to update your state according to the change of your input field and set the value of the input field according to the state variable.

A simple Vue based form will look like:

<template> <form v-on: <input type="email" v- <input type="submit" value="Subscribe" /> </form> </template> <script> export default{ data(){ return( email: "" ) }, methods: { sub(){ //all the required subtask } } } </script>

Same code in react will look like:

import React, { useState } from "react"; export default function App() { const [email, setEmail] = useState(""); const sub = (e) => { //all required task } return ( <div> <form onSubmit={(e) => sub(e)}> <input type="email" value={email} onChange={(e) => setEmail(e.target.value)} /> <input type="submit" value="Subscribe" /> </form> </div> ); }

Conclusion

Well, this is just the tip of the iceberg. There are a lot of things which I left untouched, most notable among them is state management. I have my very first blog post discussing redux vs context API. I would like to do a similar post about vuex and redux in a separate post. Until then stay home, and keep coding😉.

Posted on by:

Ayushman Bilas Thakur

Web development is my ❤. I love writing blog posts and experimenting with new things!

Discussion | https://practicaldev-herokuapp-com.global.ssl.fastly.net/ayushmanbthakur/react-for-vue-js-user-my-experience-3a2n | CC-MAIN-2020-40 | refinedweb | 1,801 | 61.87 |

MIB Smithy SDK version 3.0 and later include an optional package that provides interfaces emulating those of the widely used (but no longer actively maintained) Scotty/TNM package. The intent of this package is to aid migration from Scotty/TNM by allowing the SDK to be a drop-in replacement for most applications while allowing the use of the SDK's own APIs in parallel.

To enable the Scotty/TNM interfaces, you will need to edit the pkgIndex.tcl file in the lib/smithysdk directory and uncomment the appropriate line to make the package visible to Tcl. If you are currently using the 3.0 alpha version of Scotty, you will want to uncomment the line for Tnm version 3.0:

package ifneeded Tnm 3.0 [list source [file join $dir tnm3.0.tcl]]

Otherwise, you will want to uncomment the line providing version 2.0:

package ifneeded Tnm 2.0 [list source [file join $dir tnm2.0.tcl]]

The main difference between these two packages is that the 3.0 version places the "mib" and "snmp" commands in the Tnm namespace, whereas Scotty provided them in the global namespace in earlier versions. The interfaces provided by MIB Smithy SDK should otherwise support the interfaces of both versions, despite some incompatible changes made between Scotty versions.

The following features are not currently supported or have known caveats at this time:

The following sections may be helpful in migrating your scripts from Scotty to use MIB Smithy SDK's native APIs, which are generally faster and more flexible. | http://www.muonics.com/Docs/MIBSmithy/DevGuide/tnm.php | CC-MAIN-2014-52 | refinedweb | 258 | 56.35 |

Introduction

This clock uses the classic video game Pong to tell the time. The 2 players automatically win and lose so their scores show the hours and minutes. It’s not too hard to build and should cost less than £60 / $100. All the parts are easily available on eBay and the software code is free!

The clock has lots of different display modes to choose from:

- Pong Clock

- Large Digits

- Time written in words, e.g. “Ten Past Twelve”

- Time and date with seconds

- Time and date with seconds and a slide effect

- Time and date with seconds and a jumbled character effect

- Date display with printed retro flashing cursor effect

- 12/24 hour option

- Brightness option

- Random clock mode option (changes the display mode every few hours)

- Daylight saving option to add an extra hour

- Push button driven menus for setup & display selection.

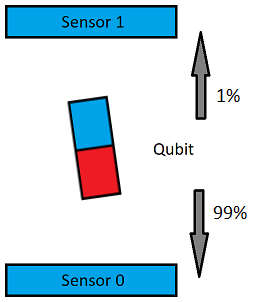

The project uses 2 bright LED matrix panels from Sure Electronics. You can choose between green or red panels with 3mm or 5mm LED’s . An Arduino runs the main code and a DS1307 clock chip keeps time, even when the power is off.

Parts List

- 2 x Sure Electronics 2416 LED Matrix panels (24×16 pixels) in Red or Green (Make sure you get the version based on the HT1632C chip and not the HT1632 – more detail below).

- 1 x Arduino Duemilanove / Uno with ATmega 328 CPU

- 1 x DS1307 real time clock chip and IC socket

- 1 x Crystal oscillator for the DS1307

- 1 x 3.3v coin cell battery and battery holder for the DS1307

- 1 x Arduino prototyping shield

- 1 x Breadboard and jumper wire for testing or if you don’t want to solder (optional)

- 1 x 16 Pin IDC Socket for the display connection

- 2 x Push to make buttons

- 1 x USB Lead

- 1 x Mains to USB power adapter

Plus wire, solder, tools, time, sweat, tears, etc.

Parts in Detail

2 x Sure 2416 LED Matrix You can get these from eBay for around $20 each. They do a red or green display, and with 3mm or 5mm LEDs. My clock in the video is made with the 3mm green displays. Make sure you get the newer version of the display which is based on the Holtek HT1632C chip. You can tell the newer displays as they have the controller chip and DIP switches on the back. The front is relatively empty of components as you can see: clock chip. places like Adafruit, Sparkfun Electronics, Cool Components, Seeed Studio or eBay.

Arduino ‘Diecimila’ with ATmega 328 CPU.

1 x Arduino Prototyping Shield and Header pins. The prototyping board or ‘shield’ plugs on the top of the Arduino making it easy to add other components. It brings the Arduino’s input and output pins onto a circuit board that you can solder things to. Get a board which is designed for a chip to go on, i.e. one that brings each pin of the chip out to a solder pad you can connect wires to. (You can see this area on the bottom left of the board in the picture below.)

Again places like Sparkfun or eBay are good sources for them. You should be able to pick one up for $15 – $20. Check you get the black header pins too as some boards are sold without them. These are the pins that you solder to the edges of the board so it will plug into the rows of sockets on the Arduino.

If you don’t like the idea of soldering, you could get a breadboard and jumper wire to build the circuit on temporarily instead. This is a good idea anyway to test it’s all working. solder it to the shield so we can plug the display ribbon cable in. They are a couple of dollars on eBay. If you aren’t planning to solder, you don’t need this.

2 x ‘Push to Make’ Buttons. These are used to set the time / change the display mode. You can get a pack on eBay for a dollar or two. Any buttons will do, I got these ones that mount on the shield.

1 x.

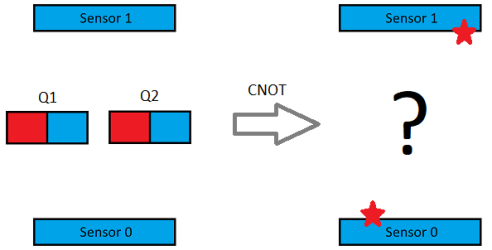

Circuit diagram

This is how it’s all connected…. not too difficult.

IMPORTANT!

Due to my crappy skils at diagrams the schematic above is a bit off.

- The 2 push buttons are not shown in the above diagram. You will need to connect one button between Arduino digital pin 2 and GND. The other button needs to go between Arduino digital pin 3 and GND.

- The pin layout on the DS1307 is not exact. Pin 8 should really be top right opposite pin 1. All the pin numbers are correct in terms of what they connect to however, so follow the pin numbering and you’ll be fine.

Connecting it up!

LED Displays

The displays use a serial protocol called SPI to receive data. There are only 4 wires needed between the Arduino and the first LED display. Two more wires are required for power.

You’ll notice each display has 2 connectors on the back. These are for daisy chaining multiple units together as we are doing. It’s what the little ribbon cable in the box is for. Connect the displays together using the ribbon cable. You can use either connector on the back – they are wired. If your displays light up the wrong way round, just swap their identities using these switches..

A display with dip switch set as number 1.

Clock Chip

The DS1307 clock chip keeps the time. It uses something called. I’ve had reports of people saying their clock doesn’t tick, only to find out they have omitted the battery, so don’t skip this!

Push Buttons

Connect one push button between Arduino digital input pin 2 and GND, the other between Arduino digital input pin 3 and GND.

Power

We’ll supply power in using the USB port on the Arduino and then connect everything to the Arduino’s 5v and GND pins. Connect the 5v pin from the Arduino to the 5v pins on the display and DS1307. Then connect the GND pin from the Arduino to GND pins on the display and DS1307.

A word of caution with the power: Don’t use a higher voltage supply into the Arduino’s round power jack. The Arduino’s onboard regulator will not be able to cope with the power demand the displays draw. If you try you’ll notice the Arduino will get very hot very quickly and probably not last too long!

Also be very careful if you are powering the clock from your computer’s USB port for testing. You could easily damage the port if you have a connection wrong or if the computer can’t supply enough juice. I powered my setup with my Macbook for testing and all was well, but be warned!

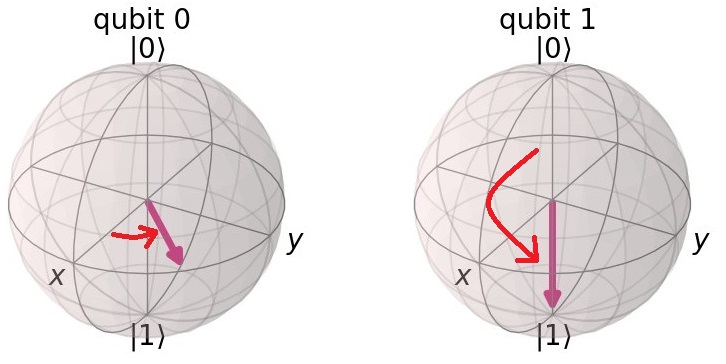

Prototyping shield

The prototyping shield plugs into the Arduino making it easy to add components. On a standard prototyping board you should have room to solder the DS1307 clock, crystal, battery, buttons and the IDC socket for the display ribbon cable. left for the display cable, in the middle is the DS1307 clock chip. The tiny silver capsule below the chip is the crystal. On the right is the coin cell. Below that are the 2 push buttons for setting the mode and time. The red button! Arduino’s site here:

Once the IDE is installed, download the clock code from my page on GitHub by clicking the “Download Zip” button bottom right.

Unzip the clock code zip file. Inside you will see the main clock sketch called pongclock5_1.ino and 4 library folders called ‘Button’, ‘RTClib’, ‘Font’ and ‘ht1632c’. These libraries are extra bits of code needed by the main clock sketch.

Next install the libraries: When you installed the Arduino software it should have created a folder somewhere for your sketches (projects) to go in. Find that folder and see if there is a folder called libraries inside. If there isn’t, create one. Then copy the 4 library folders from the zip file into the libraries folder. After you have done – the file ending in .ino. You should see the main clock code appear in the window. Now pick your Arduino Board Type in the Tools -> Board menu. Then hit the Verify – the check mark icon. This tests the code and should compile without errors. If you do get errors here, you’ve most likely not got the 4 libraries in the right place.

OK, and displays. Then plug the USB lead from the Arduino into the mains to USB adapter.

All being well the display should spring to life. libraries menu

- Make sure you have restarted the Arduino IDE after adding the libraries.

- Check you are using Arduino software version 1.6.5.

I get an error uploading to the Arduino:

- Check your board type and serial settings are correct in the Tools menu.

- Check your Arduino has 32K RAM or more.

The clock doesn’t change:

- Often a wiring issue. Check the LED on Pin 13 of the Arduino flashes. If not then the clock chip is not being read. Check your connections to the DS1307.

- Connect the clock to a computer running the Arduino IDE. Click the serial console button and set it to 57600 baud. You should see the time being printed to the console if the clock is being read. Check the wiring as above if it’s not.

- You must have the coin cell battery connected for the clock to work.

The displays don’t light up

- Check your wiring to the ribbon cables and check the display is getting enough power.

- Try another power supply.

- Remove one display and see if you get half the screen. If you do, then most likely not enough power is being supplied.

The 2 displays show the same half of the clock or the displays are the wrong way around.

- Check the DIP switch settings on the displays are as per the instructions – one set to CS1 and the other CS2. If the displays are the wrong way around, change the display set to CS2 to CS1 and the other from CS1 to CS2.

The text display is garbled after upgrading to 5.1

- Make sure you have replaced the font.h library file with the new one in the 5.1 download.

If you have the older Sure LED Displays

The older LED displays from Sure use a different type of LED driver chip called the Holtek HT1632. You can tell these displays as they have the chips on the front of the LED board. If you have these, you need to replace the ht1632c.h library file in the normal clock code download with this file. Quit and relaunch the Arduino IDE to pick up the new file, then load up the main pong clock .ino sketch file. Next look for this line near the top of the code:

#include <ht1632c.h>

and change it to…

#include <ht1632.h>

Then verify and upload the code as per the instructions above.

French version of Pong Clock with Space Invaders, Sleep Mode & more!

Thanks to Matock who has taken my original pong code and done some more awesome stuff with it, including extra modes like space invaders and a sleep timer. He’s also translated the text to French. If you want an English version you’ll need to ask him nicely in the comments to change bits back! See below for the full list of his changes and a download link:

*…)

Matock’s version is called 5.1FR. Here is his download link.

Thanks…

Thanks to everyone who contributed, including WestFW on the Arduino forum for providing the initial LED driver code, SuperTech-IT over at Instructibles, Alexandre Suter for help with the conversion to Arduino 1.0, Richard Shipman for the lower case font, Matock for the updates & Kirby Heintzelman for all the testing!

Hello, I came across your code for the Sure 2416 LED matrix. Could you send the font.h file?

Amazing work man! Thanks for sharing everything! I guess I will build my own!

Thanks, if you make one let me know!

Pingback: LED Pong clock - machine quotidienne

Hey, seemed to have taken away the .zip file and just uploaded the .pde. So i can’t find the font file

Hey, sorry about that – now replaced with the .zip.

Nice job. As for the enclosure, you can use a simple pair of plexiglass plates, as I do in Wise Clock 2 (video here:)

Hey Florin, your clocks look great.Did you cut your plastic enclosures yourself? I was thinking of trying some semi opaque plexiglass so you just see the diffused LEDs.

Nice work! I love the clock. Time to upgrade to DS3231! Set it and forget it.

J

Hey that’s cool. Saves the external crystal.

very cool, I may have to build one! Thanks for the detailed build notes.

Looked at it for approx. 30 secs, and decided I _MUST_ build one myself.

Just Ebay’ed the sure-elec led panels.

Happy days!

Hi

Just a quick question but why the 1632c chip spec and not the 1632? What is the difference please?

Mark

Hi,

The Sure display based on the 1632 suffers from getting very dim when a lot of the LED’s are on, whereas their board based on the 1632C doesn’t have this problem. As far as I know it’s because the 1632 needs additional driver circuitry which Sure didn’t implement. The newer 1632C has it built in.

Nick

Thanks Nick

Do you believe then that your code will work with the 1632 displays but the displays will perhaps exhibit just dimming issues?

Mark

Yes it will work, but you’ll need a different library for the 1632, and have to make a coupe of tweaks to the main code – I can email them to you if you are interested. (I had it working on the older 1632 boards before I upgraded them to the 1632C.)

Nick

Yes please send me the code to play with as I have accidentally ordered the 1632 based units and would like something to play with before I can afford the 1632C versions. I am templed by a couple of 32×16 dual colour units though with the 1632C controller.

I assume that you have my email address.

Mark

Hi !

Very nice job !

How do you control Pong ? From the PC ?

No, it’s all done on the Arduino, no PC needed.

Hi,

I’ve got mine up and running!

Atmega328 on breadboard and rtc on a small pcb that I made years ago

Looks awesome!

Hey great news! Glad it worked for you.

Thanks for this project, took a couple of hours to assemble this afternoon – I used a RTC module on a board from bluesmokelabs.com It also took a little while to work out where to connect the switches, your description in the post doesn’t match the photos, but I guess that’s one of deliberate mistakes to keep us on our toes.

It looks very nice alongside my nixie clocks, and I’ll have to build a case for it next week. Then on to try to program some more modes and maybe an alarm sounder…

Thanks again

Hey great to hear you made one. What confused you about the switches (apart from them missing from the schematic)? Maybe I can make it clearer in the instructions.

Love to see the case you come up with too.

Hi, I guess the confusion is in the connection of the switches.

You say connect them between A2/A3 and +5V. This should have been between A2/A3 and GND instead.

Clock is working great here. Etched a custom PCB for this. (so no arduino board needed)

Like Richard, I also have to workout a case for it. (acrylic probably)

Whoops, sorry if that lead you astray – should have checked before I wrote it all up. I’ve changed that now. Thanks for pointing it out.

Pingback: Wolf’s Spoor | Arduino Pong Clock

Hi

I was wondering if you heard of anyone getting this running using the 5mm version of the 2416 boards. I have been trying, following your instructions to the letter, and I get no where. I do have the 1632c.

Cheers

Hi Todd, I had a quick look at the data sheet and they look the same. (If you load up the data sheet from sure for the 3mm and 5mm models they are the same doc!).

So I’m not too sure why they aren’t working :(

BTW – You could ask on the Arduino forum if anyone has the same displays – there are a few threads about these displays.

Nick

It works perfectly with 5mm version

Thanks Denis

Good to know.

Thanks Nick

I figured I would ask you first just in case and the Arduino forums were doing their migration.

Thanks again for you time.

As proof of my earlier postings, see my version of the pong clock:

the button pins should be on the digital side not the analog side as per the program instructions

Good spot – now updated. Thanks.

I adapted your pong code to Wise Clock 3:

Pingback: Pong clocks using LED matrix modules - Hack a Day

Pingback: hackaholicballa - Pong clocks using LED matrix modules

Nick, Many thanks for the clock design. After waiting for a couple of weeks for the LEDs to arrive from Hong Kong I now have a red version up and running.

I did spot one thing on the circuit layout diagram which seems to differ from your photographs. The connections to the DS1307 seem to be the wrong way around. Using your numbering I have:

pin 5 to +5V

pin 6 unconnected

pin 7 to Arduino A5

pin 8 to Arduino A4

Many thanks again.

Hey Andy,

Really glad you liked the project and have built one. The red LED’s look great! I wish I had bought the dual colour displays now so I could have a menu option to switch colours.

As for the DS1307. The pinout I have is 5 and 6 to Arduino, 7 is N/C and 8 goes to +5v. Pin 8 is bottom right on my photo.

See the chip pinout here:.

Cheers

Nick

Hi Nick

Agree with the bi-colour LEDs they look great. I might just order one of those from sure and knock another clock together. :-)

I have the same data sheet and that’s how I connected the RTC chip. Perhaps I didn’t explain very well… The diagram that you have showing the connections between components has the pins on the right hand side of the RTC running from bottom to top, when they should go top to bottom (so +5v is opposite pin 1 for the crystal).

Hope that makes more sense.

Cheers

Andy

Ah I get you, diagrams were never my strong point! When I get time I might try and redo it.

Hi Nick,

I’ve made a few updates to the Arduino sketch, and was going to upload it to the google code project (but couldn’t work out how).

Have changed the normal_ clock to animate the digits, so when the time changes the changing digit scrolls down off the screen and the new digit scrolls in from above.

Have also added a couple of Binary clock modes.

If you’re interested then drop me a note and I’ll forward the updated sketch.

Cheers,

Andy

Hey, yes definitely – be great to see the new modes.

Nick

Here you go:

Hey Just had a look at your code. I like the new modes -although I’m not sure I’ll ever be able to figure out the time from the binary display!

Hi !

I just saw you pong clock and I must say that it really looks great… I´m using also the 2416 displays but with the older 1632 chip on it. In earlier post I read that you have offered Mark Pepper to send him the code for the old display version. So I would like to ask you if you please can send me the code for the old 2416 displays…. Thank you very much !!!

Hi, just sent it to you.

Nick

Hey Andy,

I have been running your modified code for a while.

Have you seen the new version that Nick has posted?

Any chance that you will incorporate your ideas into this?

I did find an odd bug, when setting up random mode. Sometimes the binary clock is off by ten hours after setting it that way, I can’t remember the exact sequence of vents right now, but I was able to repeat it several times.

Great work though on your additions!

I love seeing activity. It would be really cool if someone would add a seconds readout to the one mode that has time and date. That area of the panels looks like it has enough space for it, and the blank space looks out of balance somehow.

Cheers,

Kirby

Hi Kirby,

Good to hear that you have enjoyed my updates to the pong clock code. Unfortunately I managed to blow my matrices and dont currently have another set to work with, so I cant do any testing. I’ve got another set on the way from China so will take a look once they arrive.

Cheers,

Andy

Hey Kirby, I saw your post and yeah I see what you mean about the unused part of the display that could have seconds in it. I left them out initially as thought it might be too cluttered. Anyhow, I’ve put them in now. Will give you a shout when the new code is up.

Cheers

Nick

Nick,

That’s cool!

I think think it will look good.

Maybe Andy can add his special animation of the rolling digits to this “theme” too!

Good stuff! This really is a very cool project. Wish I knew more so that I could contribute more.

BTW, I will send you some pics of my proto board that plugs on the back, and if I can get motivated, the box I am putting the whole deal in.

Kirby

Hi Nick !

Thanks you for your mail !! and sorry for the late reply…

Sven

Hi,

I was having problems understanding how you brought power to the display boards. When I connected the +5V and GND pins from the display boards to the (I’m assuming) the GND and 5V pins on the Arduino. But I do so, the power light on the Arduino goes off (I guess the Arduino board was drawing too much power). Any suggestions?

Hey, Yes I connect the +5v from the LED boards to the Arduino’s +5v pin, and GND from the boards to the Arduino’s GND pin. Sounds like you might have a short or maybe your power supply isn’t up to it.

Make sure you use a good mains to USB power adapter and connect it to the USB port, or connect a 5v supply to the +5v and GND pins. Don’t use the round power jack on the Arduino.

Nick

Nick,

After retrieving a mains to USB power supply (+5V) there was still no change. So connecting the +5V and GND pins from the display board to the POWER +5V and GND pins should work? Once the +5V pins are connected, the Arduino (Uno) board’s “ON” light cuts off.

DJ

Yes that should work unless you have a short. Both the LED boards and the arduino need 5 volts, so it’s just a case of connecting them together. Have you got a circuit tester – maybe test to see what happens to the voltage when you connect the LED’s.

Nick

Hi i saw this thing and its awesome, now i want to build one my self, Can i connect 4 display modules to make the hole thing bigger? can i use the same code for that?

I am new with programing, but i want to lern how to do.

Sorry for my English, i am from Sweden.

Best regards Axel

Hey, glad you like it. 4 displays would need quite a bit of changes to the code, but you could get the bigger 5mm LED versions of the displays, I have the 3mm ones which are smaller.

Hi, great idea. Very classic and great for gamers! Im curious about turning your idea into a table. That way my coffee table would display the time and play pong as well. Would there be any changes necessary to make it work as effectively as it already does? Thanks

I guess it depends on what you wanted to do. You would maybe have to design new display boards so they could drive individual LED’s to mount in the table. That could be quite a bit of work.

Nick

Hi there, been struggling for a while to get this running before i realised I’m using the ht1632 boards rather than the 1632c. Could you possibly email me the code for the older boards?

Dave.

Sent. Nick

Cheers for that, got the code compiling fine and got the heartbeat on pin13 but no output to the matrix. I’m using the red ht1632 boards, any configuration tricks for them? had a few other demo’s working and I know my RTC works.

Dave.

Hmm. Heartbeat means the clock is ticking and being read OK.

You should see something on the display if all is OK. I have the green boards so not tried the red. Try uploading some of the demo’s in this thread (see the post from WestFW): That’s where I got my code from.

Nick

Nick,

Okay I figured out that some of the connections on my display board wasn’t set up correctly. I have the boards showing up now. Question, is there a way to actually have 2 players be able to play?

That would take some coding and figuring out how to attach 2 controllers to the analogue inputs, it’s possible with some research. There are probably some tutorials on the Arduino site that might help.

Glad you got it up and running though

Nick,

Do you think there is a way to be able to run the game independent of the clock? I don’t have the necessary parts for the clock. I’m doing research right now also trying to figure out how to possibly use 4 push buttons (2 for each player to move up and to move down).

It’s possible, but you would need to change the code significantly.

Nick

Hi DJ,

I have the exact same problem! can you tell me how did you fix it?

Thanks a million!

How can I program four push buttons two for each paddle for a pong game. One being used to move the bat up and the other bat downward? Any ideas would really help!

You’d need to wire them up to 4 spare input pins, then re work the code so it moves the correct paddle up or down, plus you would need to change the scoring, and either strip out all the other clock stuff or add another ‘Game’ mode. I.e. it’s quite a bit of work!

Ok I understand, now in terms of your project I have been able to get it up and running which is fantastic by the way. The only issue I am having is setting the clock. It keeps saying oo:00?

Hi, wow!, what an amazing clock, superb code. I just built one myself. Built a simple but effective case using a perspex sandwich design similar to dotklok casing. Keep up the good work, I just love arduino.

Hi Nick,

You sent me a copy of the code for the older ht1632 controller. I made the changes to the Arduino PDE pong code as per your instructions and replaced the HT1632 file. After uploading to the Arduino and making sure the board was wired up correctly to the display, we had lift off. (Or not). Nothing displayed.

There seems to be a number of versions of the HT1632 file floating around for the 2416 display on the WWW.

Did you get this code working with the older 2416 Green displays. I know the displays are OK as I tried them with a Amicus18 board on another project. Any additional help would be appreciated.

Thanks

Kevin

Hi Kevin,

Yes I had the older versions of the board initially and that was the code I used. I’ve also sent the email you have to a number of other people who have had success using the older displays.

Hard to say what is wrong with your setup. Do you get the flashing LED on the arduino to show the clock is ticking?

Nick

Nick,

The Led on the Arduino comes on but is not flashing.

Tried another arduino unit and same result.

sorry I forgot to say, it comes on, flashes for a few seconds and then remains on.

Hmm. OK just to make sure – this is the LED that is connected to pin 13 on my Arduino. It should flash on when powered up, then stay off for the “Clock version x.xx” message, then start flashing every second when the clock goes in to pong mode.

With the display not connected it will stay off (just tried it). Really difficult to say without looking at it. You might want to see if the demo code from westFW here (see reply 8) works. You may need to adapt the pin numbers. That’s the basics of the library. If not maybe the displays are wired wrong.

Hi Nick,

Yes we are talking about the LED that is connected to pin 13. It it comes on, flashes for a few seconds and then remains on. The same result is seen even with the display not connected.

In terms of the demo file you refer toy, loaded this up and no problems. Worked are described. So either Windows 7 64bit is having a spooky effect on the compiler or my wiring is going wrong somewhere.

Spent ages on the Arduino.cc forum for these displays, a lot of people have a lot of fun with the older 2416 Green units. A couple of the demos produced a mirror effect, seen this before withe Amicus18 , found a fix for the Amiucus and finally the Arduino.

I shall do a bit more work on it later this week, just started working on Nixie Tubes. Even more fun and some interesting high voltages. Still all good fun.

Will let you know if I have any luck, thanks for your help.

Made my own!

I didn’t use your code tho. But thanks for the inspiration !

Hey looks great with the frame!

Hi Nick !

You helped me some weeks ago already and I am really happy with your clock. I used the more exactly working DS3231 real-time clock, and added a DS18B20 for showing the temperature…. So far so good. My question is hopefully without sounding to impudent :

I have 4 of the old yellow Sure 0832 displays and I would like to use them instead of the two 2416 displays. Is there a chance that you can adapt your code for the 0832 displays ? ? Of course you wouldn´t do this for free !!

Regards

Sven

Sven, if I may: you can also read the temperature from DS3231.

As for the 0832 displays, there is also a mechanical challenge in making a bigger matrix. Would be nice to see how you solve that.

Hi Florin ! Yes I know about the possibilty reading the clock from DS3231. But I am afraid that if it is built in a frame the temperature inside will give wrong values and I am not able to measure anywhere outside…. Why is it a mechanical challenge ? I would like to use 4 0832 instead of 2 2416 displays… so the height/dots is the same but it is more width/dots. Like this one here but with the pong clock :

Regards

You are right about the temperature inaccuracy, especially if it’s inside of an enclosure, the values are skewed.

In the video you pointed to, there is a gap between the 2 0832 displays. That is because the displays have the PCB extended so they can be attached (with screws) to something. You would eventually need to cut those extensions, then somehow “glue” the 2 displays together. Not impossible, but challenging (and requires a bit of careful work).

Hi Florin !

I have already cut the extensions and attached the 4 displays together so that there is no gap :-) My problem is the rewriting of the code that splits the information to each of the 4 displays…. Therfore I need somebody :-)

Regards Sven

It’s nice to hear you managed to get rid of the gap. I would like to see your solution.

From what I imagine, the change in the code consists in talking to 4 different HT1632 chips (each controlling a 0832 display) rather than just one (controlling the 2416 display). You will have one CS line to each of the 0832 displays (whose address also needs to be set, with the switches).

A bit of work, but not “rocket science” as they say :)

Hi Florin !