text stringlengths 454 608k | url stringlengths 17 896 | dump stringclasses 91 values | source stringclasses 1 value | word_count int64 101 114k | flesch_reading_ease float64 50 104 |

|---|---|---|---|---|---|

Python for Unix/C Programmers Copyright 1993 Guido van Rossum 1

An Introduction to Python for UNIX/C Programmers

Guido van Rossum

CWI, P.O. Box 94079, 1090 GB Amsterdam

Abstract

Python is an interpreted, object-oriented language suitable for many purposes. It has a

clear, intuitive syntax, powerful high-level data structures, and a ßexible dynamic type

system. Python can be used interactively, in stand-alone scripts, for large programs, or

as an extension language for existing applications. The language runs on UNIX,

Macintosh, and DOS machines. Source and documentation are available by

anonymous ftp.

Python is easily extensible through modules written in C or C++, and can also be

embedded in applications as a library. Existing extensions provide access to most

standard UNIX library functions and system calls (including processes management,

socket and ioctl operations), as well as to large packages like X11/Motif. There are also

a number of system-speciÞc extensions, e.g. to interface to the Sun and SGI audio

hardware. A large library of standard modules written in Python also exists.

Compared to C, Python programs are much shorter, and consequently much faster to

write. In comparison with Perl, Python code is easier to read, write and maintain.

Relative to TCL, Python is better suited for larger or more complicated programs.

1. Introduction

Python is a new kind of scripting language, and as most scripting languages it is built around an inter-

preter. Many traditional scripting and interpreted languages have sacriÞced syntactic clarity to simplify

parser construction; consider e.g. the painful syntax needed to calculate the value of simple expressions

like a+b*c in Lisp, Smalltalk or the Bourne shell. Others, e.g. APL and Perl, support arithmetic expres-

sions and other conveniences, but have made cryptic one-liners into an art form, turning program main-

tenance into a nightmare. Python programs, on the other hand, are neither hard to write nor hard to read,

and its expressive power is comparable to the languages mentioned above. Yet Python is not big: the

entire interpreter Þts in 200 kilobytes on a Macintosh, and this even includes a windowing interface!

1

Python is used or proposed as an application development language and as an extension language for

non-expert programmers by several commercial software vendors. It has also been used successfully

for several large non-commercial software projects (one a continent away from the authorÕs institute). It

is also in use to teach programming concepts to computer science students.

The language comes with a source debugger (implemented entirely in Python), several windowing

interfaces (one portable between platforms), a sophisticated GNU Emacs editing mode, complete

source and documentation, and lots of examples and demos.

This paper does not claim to be a complete description of Python; rather, it tries to give a taste of

Python programming through examples, discusses the construction of extensions to Python, and com-

pares the language to some of its competitors.

1. Figures for modern UNIX systems are higher because RISC instruction sets and large libraries tend to cause

larger binaries. The Macintosh Þgure is actually comparable to that for a CISC processor like the VAX (remember

those? :-)

Python for Unix/C Programmers Copyright 1993 Guido van Rossum 2

2. Examples of Python code

LetÕs have a look at some simple examples of Python code. For clarity, reserved words of the language

are printed in bold.

Our Þrst example program lists the current UNIX directory:

import os # operating system interface

names = os.listdir(Õ.Õ) # get directory contents

names.sort() # sort the list alphabetically

for n in names: # loop over the list of names

if n[0] != Õ.Õ: # select non-hidden names

print n # and print them

The Bourne shell script for this is simply ÒlsÓ, but an equivalent C program would likely require sev-

eral pages, especially considering the necessity to build and sort an arbitrarily long list of strings of

arbitrary length. Some explanations:

¥ The import statement is PythonÕs Òmoral equivalentÓ of CÕs#include statement. Functions and

variables deÞned in an imported module can be referenced by preÞxing them with the module name.

¥ Variables in Python are not declared before they are used; instead, assignment to an undeÞned vari-

able initializes it. (Use of an undeÞned variable is a run-time error however.)

¥ PythonÕsfor loop iterates over an arbitrary list.

¥ Python uses indentation for statement grouping (see also the next example).

¥ The module os deÞnes many functions that are direct interfaces to UNIX system calls, such as

unlink() and stat().The function used here,os.listdir(), is a higher-level interface to

the UNIX calls opendir(), readdir() and closedir().

¥.sort() is a method of list objects. It sorts the list in place (here in dictionary order since the list

items are strings).

And here is a simple program to print the Þrst 100 prime numbers:

primes = [2] # make a list of prime numbers

print 2 # print the first prime

i = 3 # initialize candidate

while len(primes) < 100: # loop until 100 primes found

for p in primes: # loop over known primes

if i%p == 0 or p*p > i: # look for possible divisors

break # cancel rest of for loop

if i%p != 0: # is i indeed a prime?

primes.append(i) # add it to the list

print i # and print it

i = i + 2 # consider next candidate

Some clariÞcations again:

¥ PythonÕs expression syntax is similar to that of C; however there are no assignment or pointer oper-

ations, and Boolean operators (and/or/not) are spelled as keywords.

¥ The expression [2] is a list constructor; it creates a new variable-length list object initially contain-

ing a single item with value 2.

¥ len() is a built-in function returning the length of lists and similar objects (e.g. strings).

¥.append() is another list object method; it modiÞes the list in place by appending a new item to

the end.

HereÕs another example, involving some simple I/O. It is intended as a shell interface to a trivial data-

base of phone numbers maintained in the Þle $HOME/.telbase. Each line is a free format entry; the

Python for Unix/C Programmers Copyright 1993 Guido van Rossum 3

program simply prints the lines that match the command line argument(s). The program is thus more or

less equivalent to the Bourne shell script Ògrep -i "$@" $HOME/.telbaseÓ.

import os, sys, string, regex

def main():

pattern = string.join(sys.argv[1:])

filename = os.environ[ÕHOMEÕ] + Õ/.telbaseÕ

prog = regex.compile(pattern, regex.casefold)

f = open(filename, ÕrÕ)

while 1:

line = f.readline()

if not line:break # End of file

if prog.search(line) >= 0:

print string.strip(line)

main()

Some new Python features to be spotted in this example:

¥ Most code of the program is contained in a function deÞnition. This is a common way of structuring

larger Python programs, as it makes it possible to write code in a top-down fashion. The function

main() is run by the call on the last line of the program.

¥ The expression sys.argv[1:] slices the list sys.argv: it returns a new list with the Þrst ele-

ment stripped.

¥ The+ operator applied to strings concatenates its arguments.

¥ The built-in function open() returns an open Þle object; its arguments are the same as those for the

C standard I/O function fopen(). Open Þle objects have several methods for I/O operations, e.g.

the.readline() method reads the next line from the Þle (including the trailing Õ\nÕ character).

¥ Python has no Boolean data type. Extending CÕs use of integers for Booleans, any object can be used

as a truth value. For numbers, non-zero is true; for lists, strings and so on, values with a non-zero

length are true.

This program also begins to show some of the power of the standard and built-in modules provided by

Python:

¥ WeÕve already met theos module. The variable os.environ is a dictionary (an aggregate value

indexed by arbitrary values, in this case strings) providing access to UNIX environment variables.

¥ The module sys collects a number of useful variables and functions through which a Python pro-

gram can interact with the Python interpreter and its surroundings; e.g.sys.argv contains a

scriptÕs command line arguments.

¥ The string module deÞnes a number of additional string manipulation functions; e.g.

string.join() concatenates a list of strings with spaces between them;string.strip()

removes leading and trailing whitespace.

¥ The regex module deÞnes an interface to the GNU Emacs regular expression library. The function

regex.compile() takes a pattern and returns a compiled regular expression object; this object

has a method.search() returning the position where the Þrst match is found. The argument

regex.casefold passed to regex.compile() is a magic value which causes the search to

ignore case differences.

The next example is an introduction to using X11/Motif from Python; it uses two optional (and still

somewhat experimental) modules that together provide access to many features of the Motif GUI

toolkit. An equivalent C program would be several pages long...

Python for Unix/C Programmers Copyright 1993 Guido van Rossum 4

import sys # Python system interface

import Xt # X toolkit intrinsics

import Xm # Motif

def main():

toplevel = Xt.Initialize()

button = toplevel.CreateManagedWidget(ÕbuttonÕ,

Xm.PushButton, {})

button.labelString = ÕPush meÕ

button.AddCallback(ÕactivateCallbackÕ, button_pushed, 0)

toplevel.RealizeWidget()

Xt.MainLoop()

def button_pushed(widget, client_data, call_data):

sys.exit(0)

main() # Call the main function

The program displays a button and quits when it is pressed. Just a few things to note about it:

¥ X toolkit intrinsics functions that have a widget Þrst argument in C are mapped to methods of widget

objects (here,toplevel and button) in Python.

¥ Most calls to XtSetValues() and XtGetValues() in C can be replaced to assignments or ref-

erences to widget attributes (e.g.button.labelString) in Python.

Much Python code takes the form of modules containing one or more class deÞnitions. Here is a (triv-

ial) class deÞnition:

class Counter:

def __init__(self): # constructor

self.value = 0

def show(self):

print self.value

def incr(self, amount):

self.value = self.value + amount

Assuming this class deÞnition is contained in module Cnt, a simple test program for it could be:

import Cnt

my_counter = Cnt.Counter() # constructor

my_counter.show()

my_counter.incr(14)

my_counter.show()

3. Extending Python using C or C++

It is quite easy to add new built-in modules (a.k.a.extension modules) written in C to Python. If your

Python binary supports dynamic loading (a compile-time option for at least SGI and Sun systems), you

donÕt even need to build a new Python binary: you can simply drop the object Þle in a directory along

PythonÕs module search path and it will be loaded by the Þrstimport statement that references it. If

dynamic loading is not available, you will have to add some lines to the Python MakeÞle and build a

new Python binary incorporating the new module.

The example below is written in C, but C++ can also be used. This is most useful when the extension

must interface to existing code (e.g. a library) written in C++. For this to work, it must be possible to

call C functions from C++ and to generate C++ functions that can be called from C. Most C++ compil-

Python for Unix/C Programmers Copyright 1993 Guido van Rossum 5

ers nowadays support this through the extern"C" mechanism. (There may be restrictions on the use

of statically allocated objects with constructors or destructors.)

The simplest form of extension module just deÞnes a number of functions. This is also the most useful

kind, since most extensions are really ÒwrappersÓ around libraries written for use from C. Extensions

can also easily deÞne constants. DeÞning your own (opaque) data types is possible but requires more

knowledge about internals of the Python interpreter.

The following is a complete module which provides an interface to the UNIX C library function

system(3). To add another function, you add a deÞnition similar to that for demo_system(), and a

line for it to the initializer for demo_methods. The run-time support functions Py_ParseArgs()

and Py_BuildValue() can be instructed to convert a variety of C data types to and from Python

types.

1

/* File "demomodule.c" */

#include <stdlib.h>

#include <Py/Python.h>

static PyObject *demo_system(self, args)

PyObject *self, *args;

{

char *command;

int sts;

if (!Py_ParseArgs(args, "s", &command))

return NULL; /* Wrong argument list - not one string */

sts = system(command);

return Py_BuildValue("i", sts); /* Return an integer */

}

static PyMethodDef demo_methods[] = {

{"system", demo_system},

{NULL, NULL} /* Sentinel */

};

void PyInit_demo()

{

(void) Py_InitModule("demo", demo_methods);

}

4. Comparing Python to other languages

This section compares Python to some other languages that can be seen as its competitors or precursors.

Three of the most well-known languages in this category are reviewed at some length, and a list of

other inßuences is given as well.

4.1. C

One of the design rules for Python has always been to beg, borrow or steal whatever features I liked

from existing languages, and even though the design of C is far from ideal, its inßuence on Python is

considerable. For instance, CÕs deÞnitions of literals, identiÞers and operators and its expression syntax

have been copied almost verbatim. Other areas where C has inßuenced Python: the break,

1. In the current release, the run-time support functions and types have different names. In version 1.0, they will

be changed to those shown here in order to avoid namespace clashes with other libraries.

Python for Unix/C Programmers Copyright 1993 Guido van Rossum 6

continue and return statements; the semantics of mixed mode arithmetic (e.g. 1/3 is 0, but 1/3.0 is

0.333333333333); the use of zero as a base for all indexing operations; the use of zero and non-zero for

false and true. (A more Òhigh-levelÓ inßuence of C on Python is also distinguishable: the willingness to

compromise the ÒpurityÓ of the language in favor of pragmatic solutions for real-world problems. This

is a big difference with ABC [Geurts], in many ways PythonÕs direct predecessor.)

On the other hand, the average Python program looks nothing like the average C program: there are no

curly braces, fewer parentheses, and absolutely no declarations (but more colons :-). Python does not

have an equivalent of the C preprocessor; the import statement is a perfect replacement for

#include, and instead of#define one uses functions and variables.

Because Python has no pointer data types, many common C ÒidiomsÓ do not translate directly into

Python. In fact, much C code simply vanishes into a single operation on PythonÕs more powerful data

types, e.g. string operations, data copying, and table or list management.

Since all built-in operations are coded in C, well-written Python programs often suffer remarkably few

speed penalties compared to the same code written out in C. Sometimes Python even outperforms C

because all Python dictionary lookup uses a sophisticated hashing technique instead, where a simple-

minded C program might use an algorithm that is easier to code but less efÞcient.

Surely, there are also areas where Python code will be clumsier or much slower than equivalent C code.

This is especially the case with tight loops over the elements of a large array that canÕt be mapped to

built-in operations. Also, large numbers of calls to functions containing small amounts of code will suf-

fer a performance penalty. And of course, scripts that run for a very short time may suffer an initial

delay while the interpreter is loaded and the script is parsed.

Beginning Python programmers should be aware of the alternatives available in Python to code that

will run slow when translated literally from C; thereÕs nothing like a regular look at some demo or stan-

dard modules to get the right ÒfeelingÓ (unless you happen to be a co-worker of the author :-).

In summary, on modern-day machines, where a programmerÕs time is often more at a premium than

CPU cycles or memory space, it is often worth while to write an application (or at least large portions

thereof) in Python instead of in C.

4.2. Perl

A well-known scripting language is Perl [Wall]. It has some similarities with Python: powerful string,

list and dictionary data types, access to high- and low-level I/O and other system calls, syntax borrowed

from C as well as other sources. Like Python, Perl is suited for small scripts or one-time programming

tasks as well as for larger programs. It is also extensible with code written in C (use as an embedded

language is not envisioned though).

Unlike Python, Perl has support for regular expression matching and formatted output built right into

the core of the language. PerlÕs type system is less powerful than PythonÕs, e.g. lists and dictionaries can

only contain strings and integers as elements. Perl preÞxes variable names with special characters to

indicate their type, e.g. Ò$fooÓ is a simple (string or integer) variable, while Ò@fooÓ is an array. Combi-

nations of special characters are also used to invoke a host of built-in operations, e.g. Ò$.Ó is the current

input line number.

In Perl, references to undeÞned variables generally yield a default value, e.g. zero. This is often touted

as a convenience, however it means that misspellings can cause nasty bugs in larger programs (this is

ÒsolvedÓ by an interpreter option to complain about variables set only once and never used).

Experienced Perl programmers often frown at Python: there is no built-in syntax for the use of regular

expressions, the language seems verbose, there is no implied loop over standard input, and the lack of

operators with assignment side effects in Python may require several lines of code to replace a single

Perl expression.

However, it takes a lot of time to gain sufÞcient Perl experience to be able to write effective Perl code,

and considerably more to be able to read code written by someone else! On the contrary, getting started

Python for Unix/C Programmers Copyright 1993 Guido van Rossum 7

with Python is easy because there is a lot less ÒmagicÓ to learn, and its relative verbosity makes reading

Python code much easier.

There is lots of Perl code around using horrible ÒtricksÓ or ÒhacksÓ to work around missing features Ñ

e.g. Perl doesnÕt have general pointer variables, but it is possibly to create recursive or cyclical data

structures nevertheless; the code which does this looks contrived, and Perl programmers often try to

avoid using recursive data structures even when a problem naturally lends itself to their use (if they

were available).

In summary, Perl is probably more suitable for relatively small scripts, where a large fraction of the

code is concerned with regular expression matching and/or output formatting. Python wins for more

traditional programming tasks that require more structure for the program and its data.

4.3. TCL

TCL [Ousterhout] is another extensible, interpreted language. TCL (Tool Command Language) is espe-

cially designed to be easy to embed in applications, although a stand-alone interpreter (tcsh, the TCL

shell) also exists. An important feature of TCL is the availability of tk, an X11-based GUI toolkit whose

behavior can be controlled by TCL programs.

TCLÕs power is its extreme simplicity: all statements have the formkeyword argument argument ...

Quoting and substitution mechanisms similar to those used by the Bourne shell provide the necessary

expressiveness. However, this simplicity is also its weakness when it is necessary to write larger pieces

of code in TCL: since all data types are represented as strings, manipulating lists or even numeric data

can be cumbersome, and loops over long lists tend to be slow. Also the syntax, with its abundant use of

various quote characters and curly braces, becomes a nuisance when writing (or reading!) large pieces

of TCL code. On the other hand, when used as intended, as an embedded language used to send control

messages to applications (like tk), TCLÕs lack of speed and sophisticated data types are not much of a

problem.

Summarizing, TCLÕs application scope is more restricted than Python: TCL should be used only as

directed.

4.4. Other inßuences

Here is an (incomplete) list of Òlesser knownÓ languages that have inßuenced Python:

¥ ABC was one of the greatest inßuences on Python: it provided the use of indentation, the concept of

high-level data types, their implementation using reference counting, and the whole idea of an inter-

preted language with an elegant syntax. Python goes beyond ABC in its object-oriented nature, its

use of modules, its exception mechanism, and its extensibility, at the cost of only a little elegance

(Python was once summarized as ÒABC for hackersÓ :-).

¥ Modula-3 provided modules and exceptions (both the import and the try statement syntax were

borrowed almost unchanged), and suggested viewing method calls as syntactic sugar for function

calls.

¥ Icon provided the slicing operations.

¥ C++ provided the constructor and destructor functions and user-deÞned operators.

¥ Smalltalk provided the notions of classes as Þrst-class citizens and run-time type checking.

Python for Unix/C Programmers Copyright 1993 Guido van Rossum 8

5. Availability

The Python source and documentation are freely available by ftp. It compiles and runs on most UNIX

systems, Macintosh, and PCs and compatibles. For Macs and PCs, pre-built executables are available.

The following information should be sufÞcient to access the ftp archive:

Host: (IP number: 192.16.184.180)

Login: anonymous

Password: (your email address)

Transfer mode: binary

Directory: /pub/python

Files: python0.9.9.tar.Z (source, includes LaTeX docs)

pythondoc-ps0.9.9.tar.Z (docs in PostScript)

MacPython0.9.9.hqx (Mac binary)

python.exe.Z (DOS binary)

The Ò0.9.9Ó in the Þlenames may be replaced by a more recent version number by the time you are

reading this. Fetch the Þle INDEX for an up-to-date description of the directory contents.

The 0.9.9 release of Python is also available on the CD-ROM pressed by the NLUUG for its November

1993 conference. The next release will be labeled 1.0 and will be posted to a comp.sources news-

group before the end of 1993.

The source code for the STDWIN window interface is not included in the Python source tree. It can be

ftpÕed from the same host, directory/pub/stdwin, Þle stdwin0.9.8.tar.Z.

6. References

[Geurts] Leo Geurts, Lambert Meertens and Steven Pemberton, ÒABC ProgrammerÕs Hand-

bookÓ, Prentice-Hall, London, 1990.

[Wall] Larry Wall and Randall L. Schwartz, ÒProgramming perlÓ, OÕReilly & Associates, Inc.,

1990-1992.

[Ousterhout] John Ousterhout, ÒAn Introduction to Tcl and TkÓ, Addison Wesley, 1993.

Log in to post a comment | https://www.techylib.com/en/view/adventurescold/an_introduction_to_python_for_unixc_programmers | CC-MAIN-2017-51 | refinedweb | 3,799 | 54.52 |

Type: Posts; User: Joeman

this compiles

#include <utility>

#include <Windows.h>

int main()

{

RECT rc = {0};

std::pair <LONG,LONG> ControlSize;

You're welcome :).

Also my code made sure it didn't break the command:

EXENAME > echo.txt

To make sure your app doesn't break that command, you need to adapt IsAConsole at the least like:

...

This is what ~cConsoleInitializer did

~cConsoleInitializer()

{

if( File )

{

INPUT_RECORD InputRecord;

InputRecord.EventType = KEY_EVENT;

This seems like what you want:

#include <windows.h>

#include <iostream>

#include <cstdio>

bool IsAConsole( HANDLE Handle )

{

DWORD Mode;

return GetConsoleMode( Handle, &Mode ) != 0;...

To be more elaborate about trying &Class.myfunction from my previous post. The compiler will complain about trying to form a pointer by taking the address of a bound member function.

The correct...

Visual Studio 2010 c++ compiler:

Gcc 4.7.0:

This is incorrect since he only defined and declared a free standing function pointer, so you can assign any free standing function that...

I am confused about what you mean, but how about

class A{};

class B : public A{};

int main()

{

A* pA = new B;

B& pB1 = static_cast<B&>(*pA);

}

This is now possible with the new standard of c++ now called c++11 which was just finalized in August this year. c++11 is still being implemented in compilers. Gcc 4.7 and Clang 3.0 supports this...

#include <functional>

#include <algorithm>

#include <vector>

#include <string>

#include <locale>

#include <boost/algorithm/string/case_conv.hpp>

using namespace std;

using namespace...

I am sure you meant

template <class T>

Class1 < Class2 <T>>A problem that has now been fixed in the new c++11 standard. Any compiler that has been updated to conform will compile correctly.

You...

char n[29];Why not use std::string?

I think you quoted more than what you intended because you didn't fix the unsafe practice of calling delete by hand which can be fixed with raii design at the...

cout<<"Enter name :";

cin>>n;

cout<<"Enter shares :";

cin>>num;

cout<<"Enter share value :";

cin>>val;

s1[0].setName(n);

I want to point out the template way to determine the size which adds a little bit of safety as well.

template< typename TYPE, int Size >

size_t GetArrayLength( TYPE (&Array)[Size] )

{ ...

Why not learn from tutorials as well? Also read faqs. They can provide common pitfalls and how to avoid them. Googling your question first is even better if you can generalize it.

void...

Actually I was thinking of a user's point of view. I can see how you got confused, but as a programmer I haven't actually looked at windows 8 ... yet ...

So what if metro maybe the next big...

class UtilWindow

{

public:

Reshape(int width, int height);

~Reshape();

private:

};You should look at some tutorials about classes and see how they declare a constructor and...

I wouldn't inherit a shared_ptr since the destructor isn't virtual. Also shared_ptr only destroys the object it contains only after all shared_ptrs destruct, so if a user clicks the exit button, you...

I disagree. It is to make things easier by mapping one set of contexts to another and not to make things harder in general. I am fine with simplifying tools to make them more flexible and less ridged...

Well I am glad you revised your words because I really didn't read it that way the first time :). I won't argue that they are more prone to write horrible code, but at least it won't blow up so...

I disagree. A high level language should make it easier to write code since it abstracts away hardware dependency which abstracts away concepts related to hardware. For example, who aligns machine...

I agree knowing how things work and why is important. That is why I like computers in the first place :) They are quite complicated.

This is because of the c++ standard. The only type of polymorphic behavior that goes by its direct effect is class polymorphismThat is because c++ doesn't have another terminology for class...

example...

class cMainWindow

{

cWindow Window;

cButton SaveButton;

cButton CloseButton;

You could try CreateMDIWindow and if it fails, call GetLastError() and look at the system error codes.

Also don't forget to unregister your class since dlls do not unregister classes automatically.

Also are you sure there isn't a better way to pass HWND? Can you at least pass pointers? | http://forums.codeguru.com/search.php?s=37b34b0c058c3fc4e66f65b136ec37f0&searchid=967895 | CC-MAIN-2013-20 | refinedweb | 731 | 65.83 |

When you create a Google Cloud project, you are the only user on the project. By default, no other users have access to your project or its resources, including Google Kubernetes Engine resources. Google Kubernetes Engine supports multiple options for managing access to resources within your project and its clusters using role-based access control (RBAC).

These mechanisms have some functional overlap, but are targeted to different types of resources. Each is explained in a section below, but in brief:

Kubernetes RBAC is built into Kubernetes, and grants granular permissions to objects within Kubernetes clusters. Permissions exist as ClusterRole or Role objects within the cluster. RoleBinding objects grant Roles to Kubernetes users, Google Cloud users, Google Cloud service accounts, or Google Groups (beta).

If you primarily use GKE, and need fine-grained permissions for every object and operation within your cluster, Kubernetes RBAC is the best choice.

IAM manages Google Cloud resources, including clusters, and types of objects within clusters. Permissions are assigned to IAM members, which exist within Google Cloud, G Suite, or Cloud Identity.

There is no mechanism for granting permissions for specific Kubernetes objects within IAM. For instance, you can grant a user permission to create CustomResourceDefinitions (CRDs), but you can't grant the user permission to create only one specific CustomResourceDefinition, or limit creation to a specific Namespace or to a specific cluster in the project. An IAM role grants privileges across all clusters in the project, or all clusters in all child projects if the role is applied at the folder level.

If you use multiple Google Cloud components and you don't need to manage granular Kubernetes-specific permissions, IAM is a good choice.

Kubernetes RBAC

Kubernetes has built-in support for RBAC that allows you to create fine-grained Roles, which exist within the Kubernetes cluster. A Role can be scoped to a specific Kubernetes object or a type of Kubernetes object, and defines which actions (called verbs) the Role grants in relation to that object. A RoleBinding is also a Kubernetes object, and grants Roles to users. In a GKE user can be any of:

- Google Cloud user

- Google Cloud service account

- Kubernetes service account

- G Suite user

- G Suite Google Group (beta)

To learn more, refer to Role-Based Access Control.

IAM

IAM allows you to define roles and assign them to members. A role is a collection of permissions, and when assigned to a member, controls access to one or more Google Cloud resources. Roles fall into three broad categories:

- Basic roles provide coarse permissions limited to Owner, Editor, and Viewer.

- Pre-defined roles, such as the pre-defined roles for GKE, provide finer-grained access than basic roles and address many common use cases.

- Custom roles allow you to create unique combinations of permissions.

A member can be any of:

- Google account

- Service account

- Google group

- G Suite domain

- Cloud Identity domain

An IAM policy assigns a set of permissions to one or more Google Cloud members.

You can also use IAM to create and configure service accounts, which are Google Cloud accounts associated with your project that can perform tasks on your behalf. Service accounts are assigned roles and permissions in the same way as human users.

Service accounts provide other functionality as well. To learn more, refer to Creating IAM Policies.

IAM interaction with Kubernetes RBAC

IAM and Kubernetes RBAC work together to help manage access to your cluster. RBAC controls access on a cluster and namespace level, while IAM works on the project level. An entity must have sufficient permissions at either level to work with resources in your cluster.

What's next

- Read the GKE security overview.

- Learn how to use Kubernetes RBAC.

- Learn how to create IAM policies for GKE.

- Learn how to use IAM Conditions for load balancers. | https://cloud.google.com/kubernetes-engine/docs/concepts/access-control?hl=zh-Tw | CC-MAIN-2021-31 | refinedweb | 635 | 61.87 |

Hi, On Wed, 2006-07-19 at 00:27 +0100, Mukund wrote:

Advertising

#define GIMP_COPYRIGHT \ - _("Copyright © 1995-2006\n" \ - "Spencer Kimball, Peter Mattis and the GIMP Development Team") + _("Copyright © 1995-2006\n" \ + "Spencer Kimball, Peter Mattis\n" \ + "and the GIMP Development Team") Why is there a newline inserted here? I think it looked a lot better without it and there's no semantic reason why there should be a newline at this place. #define GIMP_LICENSE \ - _("GIMP is free software; you can redistribute it and/or modify it " \ + _("\n" \ + "GIMP is free software; you can redistribute it and/or modify it " \ "under the terms of the GNU General Public License as published by Please do not use newlines do adjust spacing. Especially not in translatable strings. The spacing in the about dialog is set to what the HIG suggests and should be fine without such hacks. If you really think it needs to be changed, please file a bug-report against GTK+. +#ifdef GIMP_UNSTABLE + gchar *comment = g_strconcat (GIMP_DESCRIPTION, "\n\n", + _("This is an unstable development release."), + "\n", NULL); +#endif Same comment applies here. Sven _______________________________________________ Gimp-developer mailing list Gimp-developer@lists.XCF.Berkeley.EDU | https://www.mail-archive.com/gimp-developer@lists.xcf.berkeley.edu/msg11437.html | CC-MAIN-2017-04 | refinedweb | 199 | 51.78 |

Hi,

I created an eps figure file with matplotlib. I can look at it via mac preview, but when I inserted it into a word document and printed it out, I got nothing except for the eps file information. So what's the problem? Here are all the packages I used in the python code. Does any of them impact the creation of the eps file? Thanks.

import numpy as np

import sys

import matplotlib.pyplot as plt

from mpl_toolkits.basemap import Basemap

from get_fbin import get_fbin

from matplotlib import mathtext

from matplotlib import rc

from matplotlib.font_manager import FontProperties

rc('font',**{'family':'sans-serif','sans-serif':['Helvetica']})

rc('text', usetex=True)

and I saved the code in this way:

fig.savefig('pfg1.eps') | https://discourse.matplotlib.org/t/cannot-print-out-eps-figures/15119 | CC-MAIN-2021-43 | refinedweb | 124 | 67.76 |

From: Jeremy Pack (rostovpack_at_[hidden])

Date: 2007-06-07 11:18:25

Johan,

>

> Yes, please don't make it a requirement to use UUIDs. I'll definitely have a

> need to use human-readable type names, which could also easily map to

> shared library names. This would allow for some automatic loading of shared

> libraries based upon the requested types.

>

One of the primary goals of the library is to keep the separate parts

as encapsulated as possible. As such, if the uuid specific header was

included, they could be used. Otherwise, there would be no

dependencies on uuid.

You can define any arbitrary mapping for your class's type

information. You could create a complicated struct to describe them,

as long as you provided a way to compare them (a less than operator).

So, I believe that what you propose should not be an issue. I or

Mariano will be writing an example soon showing the use of different

types of type info.

> [snip]

>

> >>

> >> 2. how else do you identify them if not with UUID ?

> >

> > RTTI. Some platforms (OS X) actually work fine just using the

> > type_info reference returned by typeid - other platforms (Windows, for

> > instance) only work with the full typeid(x).name(). But see above for

> > other options.

>

> Plain strings, e.g. equal to class names (including namespaces):

>

> "my::namespace::MyClass"

>

I'll make sure to detail that specific example in the tutorial.

>

> Why not provide a class loader with the library, which could be configured

> with e.g. :

>

> - Locations (i.e. directories) to use for loading, in prioritized order.

>

> - Type id => library name mappers, allowing the user to only provide the

> type id when loading classes.

>

> - Shared library extensions to use for the library loading (defaults to

> platform standard extension).

>

> - Explicit load functionality, where the user can specify the library

> name and the type id. As for cross-platform usage, the users should normally

> not include the file extensions or the paths to the libraries, only the

> canonical library names. It should still be _possible_ to provide the exact

> path and library extension.

>

Yes, this is planned - enough people have mentioned it, that we'll

definitely work it in. The basic design of it is done, and it will be

implemented "when we have time". Once again, it will be included in a

"convenience" header, and the code for it will only be included if its

header is included explicitly. Since not everyone will need this

functionality, it will be made optional.

> [snip]

>

> >>

> >> 7. can you explain shortly what Reflection is? Or some URL with

> >> explanation, please.

> >

> > I think of reflection as runtime type information about the methods of

> > a class for which you do not have access to any of its base classes.

> > As such, there are a lot of directions one could go with it - I'm not

> > sure which direction Mariano will choose. Some of these directions are

> > really hard in C++. I think we'll be discussing that a lot in about

> > three weeks - and will want lots of input from the community.

>

> I don't know what you intend to do, but please don't mix the two libraries

> into one. I'd even go as far as to suggest that the "dynamic (un-)loading of

> libraries/finding the entry points for specific methods" part of the

> Extension library should probably be a standalone library.

>

We will be posting the proposed reflection solution to this list

before implementing it. We'll do our best to avoid high coupling

between the plugin loading and reflection. I thought about this for a

while this morning, and I think that we can solve this in a way where

the reflection will work without including any of the plugin loading

headers, and vice versa. However, a "convenience" header will be

provided to make it easy to use them together. We'll definitely want

your input when we put forward the proposed design.

> I assume that it will always be possible to dynamic_cast the created

> instance to whatever types it actually implements, right? Example:

>

> boost::shared_ptr<base> pb = "load extension and create shared base

> instance";

> boost::shared_ptr<derived> pd = boost::dynamic_pointer_cast<derived>(pb);

>

Almost always - you of course can't cast it to a type that you don't

have defined in the current module. In addition, some compilers have

trouble doing dynamic casts across shared library boundaries. I do

know that an older version of the Borland C++ compiler had problems

with this, but am not sure about other compilers. I have done it

successfully with relatively recent versions of VS and gcc.

Thanks for the suggestions. We'll work on them!

Cheers,

Jeremy

Boost list run by bdawes at acm.org, gregod at cs.rpi.edu, cpdaniel at pacbell.net, john at johnmaddock.co.uk | https://lists.boost.org/Archives/boost/2007/06/123042.php | CC-MAIN-2020-29 | refinedweb | 801 | 64.61 |

This!

The Turing test has been the de facto standard for testing a machine for intelligence. It's the way we try to figure out if our models are capable of responding to general-purpose questions the way a human would. The most efficient method for building a machine that has human-like responses is using neural networks and deep learning. These are new algorithms that have been made feasible through the recent advancements in hardware performance.

One aspect of humanity that is restricted to evolved primates is humor. In all fairness, there is no clear cut definition of humor, however, there is one theory that has gained quite a bit of popularity in recent years. The argument is that what makes us classify something as being funny falls under the "incongruity theory". The underlying mechanism of this theory builds on top of the inconsistency between what we are expecting to happen and what actually happens.

As it turns out the cognitive mechanisms that allow us to identify something as "funny" are quite complex, and it is only a few evolved primates that can find something funny.

For example Koko, the western lowland gorilla who understands more than 1,000 American Sign Language signs and 2,000 spoken words has been observed making prank jokes (IE: she would tie the instructor's shoelaces and then innocently make the sign for "chase". That's one tricksy gorilla :D !).

As a rule of thumb, the primates that can make laughing like sounds and are ticklish can be categorized as having a sense of humor.

Computers, on the other hand, are not ticklish, vocal cords are not yet a justified upgrade on any of the latest generation laptops, so, by all means, training a machine to understand humor needs to be trained by other means. The best way to train a neural network is by using an exhaustive text that contains as many jokes as possible in a specific format. You will notice that we already restricted the domain of humor to jokes (no pranks, ironic comedy with complex situations etc).

"If you're willing to restrict the flexibility of your approach, you can almost always do something better."

– John Carmack"

In our situation, we want to restrict the domain of the jokes to one-liners, question style comedy which would make the training of our model more focused, essentially what we are doing is gently nudging the machine in the right direction.

The machine intelligence we are building is for text generation, a deep learning neural network that can make jokes.

We will use the language with the most evolved ecosystem in terms of libraries, examples, and documentation, which in this case, is Python. I am a big fan of Python for its readability and because it is very closely evolving alongside JavaScript so many things feel familiar, it's also the other serverless language, and this makes it an excellent candidate to

write an Azure Function that tells us jokes.

The most popular approach to writing text generation applications are based on Andrej Karpathy's char-rnn architecture and blog post,

the example teaches a recurrent neural network to predict the next character in a sequence based on the previous n characters.

Based on Andrejs work Max Woolf built a python package that makes building a text generation bot very easy. It allows you to configure your neural network depth/layers/behavior etc.

The main method for training your joke model is textgenrnn. This object has a train_from_file method that allows you to consume a file with training data to teach your bot to figure out what punch line would be best suited to answer the joke.

The usage is quite straightforward, training a simple model would look something like this:

from textgenrnn import textgenrnn textgen = textgenrnn(name="new_model") textgen.train_from_file('jokes.txt', num_epochs=5)

After we have trained the data from the training data set, let's take it for a spin and try generating a test joke. We can do this by calling textgen.generate(). This will output the joke, but this simple call will not return the joke from the serverless function, which means we want to use a version of the method call that returns the data.

joke = textgen.generate(return_as_list=True)[0] # Grab the first generated joke in the list, in this case the only one

The script will create the model that is a serialized version of the dataset that will be used by tensorflow to help our function generate jokes based on the training data. This is the file with .hdf5 extension in your project's root folder.

Now the last piece of the puzzle is using the new model as a trigger to generate jokes in an Azure Function. Assuming you created a storage account that has a container named jokes, here's how your function will look like:

import azure.functions as func from textgenrnn import textgenrnn import tempfile import urllib.request import os import logging def main(myblob: func.InputStream, context: func.Context): tempFilePath = tempfile.gettempdir() jokesfile = os.path.join(tempFilePath,"jokes.hdf5") urllib.request.urlretrieve(myblob.uri, jokesfile) textgen = textgenrnn(jokesfile) joke = textgen.generate(return_as_list=True)[0] logging.info(f"joke: {joke}")

{ "scriptFile": "__init__.py", "bindings": [ { "name": "myblob", "type": "blobTrigger", "direction": "in", "path": "jokes/{name}", "connection": "AzureWebJobsStorage" } ] }

To test your function, upload the generated .hdf5 file to the joke container and see the generated joke in the functions logs.

Need more inspiration? You can find the source code in my github repository here.

Congrats

You now have an API with a sense of humor :D

Conclusions

You can notice that the API is not particularly funny in the classical sense, but it will surprise you. I think that what you would be expecting and what the endpoint is returning will definitely fall under the "theory of incongruity".

To improve results to match more traditional patterns, there are a few ways to improve the training method like:

- restrict the type of jokes

- increase training data set size

Want to submit your solution to this challenge? Build a solution locally and then submit an issue. If your solution doesn't involve code you can record a short video and submit it as a link in the issue desccription.!

Discussion (1)

Haha! This is pure gold! 🥇

Is there a live version of this? I would like to fiddle with it! | https://dev.to/azure/build-your-jokes-generator-using-machine-learning-and-serverless-5g4a | CC-MAIN-2021-43 | refinedweb | 1,067 | 60.85 |

An integrated development environment (IDE), like NetBeans or IntelliJ for Java, is a tool that enhances a computer programmer’s productivity.

A tool as in something that enables us to do quickly and easily something that would take us too long to do without assistance.

After a white-boarding interview exercise, one of my mentors said that an IDE can be a crutch.

A crutch as in something that we rely on so much that it atrophies our ability to do something we should be able to do without machine help.

In this article I will mostly only use examples from Java IDEs, specifically NetBeans and IntelliJ. But a lot of the concepts carry over to IDEs for other programming languages, like XCode for Objective-C and Swift, or VS Code for JavaScript and just about everything else.

And of course both NetBeans and IntelliJ can be used for programming languages other than Java. I’ve used NetBeans for C++ and have thought about using it for FORTRAN (curiousity, mostly). And IntelliJ is now what I mostly use for Scala.

Some people think that if you’re not using an editor like Vim you’re not a real programmer, you’re a pretender, an impostor.

A very good example of how much an IDE helps with refactoring comes from my reading of Building Maintainable Software: Java Edition by Joost Visser et al. With an IDE, making changes to several files at once is quite easy.

One of the recommendations in the book is that constructors (and other “methods”) should not take more than four parameters. And even just four parameters can often be too many.

In one of my projects, I had a constructor with five parameters. In another article I’ll delve into the details of that. For now, suffice it to say I realized the constructor ought to be able to infer the fifth parameter, otherwise it should throw an exception.

So I rewrote the constructor to only take four parameters. But now my project won’t compile, because I haven’t yet changed all the 5-parameter constructor calls to be 4-parameter constructor calls.

In Vim or some other plaintext editor, I can do a search for the relevant constructor calls. I might run the search on classes that don’t have the pertinent constructor call.

Of course a “real” programmer would just immediately know the location of all the constructor calls that need to be changed. The truth is, though, that computers are just better at keeping track of state than humans are.

So, in an IDE, I can simply rely on all the now-invalid 5-parameter constructor calls being flagged as compilation errors. Once my IDE’s error/warning indicator goes from red to yellow or (better yet) green, I know the problem is taken care of.

As I was writing this article, it occurred to me that I can further whittle this down to three constructor parameters for at least a simple majority of the use cases, if not a super majority.

This is a change that I intend to accomplish this with a chained constructor, so that the 4-parameter option is still available through the primary constructor when I need it.

This means that I will have to look at each constructor call and decide one by one whether it should remain as it is or if it should be rewritten to take advantage of the 3-parameter auxiliary constructor.

But again, the IDE gives me an easy way to quickly find the relevant constructor calls and take care of them on a case-by-case basis.

So I like to think that for me, the IDE is a tool, not a crutch. Most of the time, anyway. I think it is still necessary to do a little introspection and determine whether this is still the case. Hence this quiz.

Auto-complete

Java has a reputation for being verbose. The long names for some of the standard classes make some programmers give their variables excessively short names, as the following fictional example demonstrates all too well:

ClassWithLongName obj = new ClassWithLongName(param1, param2);

It is rare to see an exception instantiated in one line and then thrown in a later line. Java programmers instead generally prefer to have exceptions thrown in the very same line they’re instantiated. For example:

if (index > maxIndex) {

String msg = index + " is beyond maximum of " + maxIndex;

throw new IndexOutOfBoundsException(msg);

}

rather than

if (index > maxIndex) {

String msg = index + " is beyond maximum of " + maxIndex;

IndexOutOfBoundsException e =

new IndexOutOfBoundsException(msg);

throw e;

}

There is generally no good reason to do it the second way (the stack trace could be misleading), but it certainly doesn’t help that you would have to type

IndexOutOfBoundsException twice.

If you did want to do it that second way, though, you would certainly appreciate it if auto-complete kicked in and you only needed to type the first word, or even just a couple of letters.

In my experience, NetBeans will only auto-complete after a dot, but IntelliJ will offer auto-complete suggestions even for a single letter, if it recognizes the relevant class from either

java.lang or from the imported packages (or from packages in the overall project scope).

For example, if you have

java.awt.* imported, you might only need to type “

Mu” or even just “

M”in IntelliJ to give

MultipleGradientPaint as an auto-complete suggestion that you can accept by pressing Enter.

Then, if you want to access the

OPAQUE field of

MultipleGradientPaint, you might as well just go ahead and type the period, press Caps Lock and write that short word in full.

Whereas in NetBeans, you would have to type

MultipleGradientPaint in full and then the period before the auto-complete suggests

BITMASK,

OPAQUE and

TRANSLUCENT as possible completions.

You can customize how auto-complete works in both NetBeans and IntelliJ. Probably in Eclipse, too, after hunting through several preferences menus.

IntelliJ also tries to auto-complete Java keywords like “

instanceof” and “

extends.”

Quiz question: Do you use auto-complete only for things that would be too slow or error-prone to type? If so, your IDE is a tool. Or do you rely on auto-complete for things like “

catch” and “

true”? If so, your IDE is a crutch.

Quiz question: How do you feel when auto-complete lags behind or fails to offer any suggestions at all? It’s okay to feel a little annoyed, but if you’re angry, your IDE might be a crutch.

However… when your computer is overburdened with too many different programs running besides your IDE, you might have to let the auto-complete kick in even for the shorter keywords like “

else” and even “

if”.

But then your IDE is worse than a crutch, it’s a genuine nuisance. In that case it would probably be better to close some of the other programs, so that auto-complete can work more like it’s intended to work.

And also, it can happen that you neglected to import a necessary package, or an object is of a different type than the type you think it is. An apparent auto-complete failure might indicate a mistake on your part of this sort.

And then your IDE is a tool, helping you remember an important little detail. More proof that computers are better at keeping track of state than humans.

Auto-generate

One of the most touted features of both Scala and Kotlin is that getters and setters are generated automatically and behind the scenes. In Java you have to write a lot of lines like these:

private SomeType propertyA = new SomeType(initParam); public SomeType getPropertyA() {

return (SomeType) this.propertyA.clone();

} public void setPropertyA(SomeType setting) {

if (SomeType.isCurrentlyValid(setting)) {

this.propertyA = (SomeType) this.propertyA.clone();

} else {

throw new IllegalArgumentException("Bad setting");

}

}

Even if you don’t need your setter to do any validation, you still need something like ten lines (with standard formatting). That’s boilerplate, or worse, bloat, according to Java critics.

You can obtain the Java-style getter and setter in Scala with a single line:

@BeanProperty var propertyA: SomeType = SomeType(initParam)

This assumes

BeanProperty has been imported, and presumably you’re going to need it for other fields in the given class, because you need the given class to interoperate with Java classes expecting Java Beans.

This also assumes

SomeType has

apply(), but that’s outside the scope of this article. I’m guessing something very similar for Kotlin.

Maybe they got the idea from Lombok, a plugin for Java IDEs that needs to be specifically installed on your development system before you can start using it. The one-liner would be something like this:

@Getter @Setter SomeType propertyA = new SomeType(initParam);

(you also need to have the relevant Lombok packages imported). Presumably this will take care of the nuances of getters and setters for mutable types.

It is my understanding that, given a proper Lombok installation, a line like the above would lead the compiler to generate pretty much the same bytecode as if you had typed it all out in “vanilla Java,” but without having to worry about typos too much.

However, your IDE can generate getters and setters right out the box, no special plugins needed. Though you might think they’re still boilerplate eyesores, cluttering up your otherwise beautiful code.

In NetBeans, auto-generate for getters and setters is available through a contextual menu. In IntelliJ, you can use the Generate menu.

A much more dramatic example of auto-generate is the generation of

equals() and

hashCode(). You often need the former for unit testing, and then when you write it, your IDE offers to write

hashCode() for you.

You don’t even have to write the least bit of either, you can have your IDE generate both of them for you at once.

In NetBeans, the generated

equals() usually has a redundant if statement at the end, which triggers a warning. I guess the idea is to make sure you review the generated

equals().

I remember a long time ago I had NetBeans auto-generate

hashCode() for

ImaginaryQuadraticRing in my Algebraic Integer Calculator project (source and tests available from GitHub).

That was before the project grew to also include

RealQuadraticRing, and the abstract class

QuadraticRing. I don’t remember the exact form of the generated

hashCode(), but in shortening it to four lines, I did not change the logic of what NetBeans generated:

@Override

public int hashCode() {

return (219 + this.negRad);

}

I still don’t know where NetBeans got that number 219 from, but I’ve wondered about it since. And maybe I could have kept it without any problem when I expanded the project to include

RealQuadraticRing.

But I decided to come up with my own

hashCode() function, which now resides in

QuadraticRing:

@Override

public int hashCode() {

int hash = this.radicand;

if (this.d1mod4) {

hash--;

} else {

hash *= -1;

}

return hash;

}

It’s a tiny bit more complicated, but at least I can fully explain the thought process behind it.

And also I can more easily explain that it is guaranteed to give unique hash codes for any possible

ImaginaryQuadraticRing or

RealQuadraticRing object, (

radicand is of primitive type

int).

The logical extreme would be for me to say that you also need to examine the Java Virtual Machine (JVM) bytecode your IDE generates. But that’s not necessary, unless it interests you.

Java compiler optimization is something programmers like Scala inventor Martin Odersky have worked very hard to improve. As long as you understand your Java source and its efficiency or inefficiency, there’s probably no extra performance to be gained from scrutinizing and tweaking the byte code.

Quiz question: When you have your IDE auto-generate something, do you at least skim what was generated? If you make light edits to what was generated, your IDE is a tool.

But if you don’t even glance at what was generated, if you just assume it’s all good even when you haven’t had your IDE generate something like it before, your IDE is a crutch.

In particular, Java programmers should understand the nuances of getters and setters, and of

equals() and

hashCode(), even if they prefer to let the IDE, or a plugin like Lombok, write those for them.

Stylistic warnings

Remember the example above in which the

IndexOutOfBoundsException is instantiated in one line and thrown in the next? IntelliJ would certainly offer to “inline the variable.” Probably NetBeans, too.

The more you deviate from standard programming practices the more your IDE will offer to change your code to the more usual patterns. In my experience, IntelliJ is more opinionated about this than NetBeans.

It can be very helpful when you’re starting out programming Java, and in my case, IntelliJ has been very helpful as I learn Scala. Passing up easy opportunities for functional programming in Scala is one example of a deviation from common Scala practices.

In Java, constructing an exception in one line and throwing it in a later line is a deviation from common Java practices, and most of the time there is no good reason for it (this might indicate a need to wrap an already thrown exception in a new exception, but that’s a topic for another day).

There needs to be an understanding of when these deviations are ill-advised and when there is a good reason for them.

In some cases it may even be necessary to customize your IDE’s errors and warnings (though I would be very hesitant to demote errors to warnings, not to mention disabling them altogether).

Quiz question: Do you strive to make your IDE’s error/warning indicator be green most of the time? You should. But in so doing, do you question the reasons for the warnings? If you at least understand the reasons for the warnings, even if you don’t customize the warnings, your IDE is a tool.

Quiz question: Have you ever accepted a suggestion from your IDE without fully understanding it, but then followed that up with a question about it to a coworker, mentor, or on StackOverflow, Quora or some website like that? Then your IDE is a tool for learning.

Quiz question: Have you ever customized errors, warnings and/or inspections in your IDE? If you have, your IDE is a tool. But if you have not, and have not felt a need to do so, that’s okay, too, provided you’re aware than you can customize these if you need to.

In conclusion

It boils down to this: is your IDE taking care of tasks that would be too tedious for you to tackle one at a time? Then your IDE is a tool. Or are you letting it make decisions that you should be making? Then it’s a crutch.

I only barely mentioned the Eclipse IDE. I would appreciate comments about how Eclipse is helpful and when it gets in the way. I would also appreciate comments about IDEs for other programming languages. | https://alonso-delarte.medium.com/your-ide-tool-or-crutch-take-the-quiz-a6512e1af74c | CC-MAIN-2021-21 | refinedweb | 2,520 | 60.14 |

Creating a Face Swapping Model in 10 Minutes

January 13, 2021 | 4 min read | 313 views

Face swapping is now one of the most popular features of Snapchat and SNOW. In this post, I’d like to share a naive solution for face swapping, using a pre-trained face parsing model and some OpenCV functions.

I’m going to implement face swapping as follows:

- Parse the face into 19 classes.

- Swap every part of the face with the counterpart.

- Fill holes with local average colors.

The code is available at:.

Face Parsing

For face parsing, I used the pre-trained BiSeNet because it’s accurate and easy to use. This model parses a given face into 19 classes such as left eyebrow, upper lip, and neck.

For this case, I selected 8 classes: left eyebrow, right eyebrow, left eye, right eye, nose, upper lip, mouth, and lower lip. These were used to generate 6 types of masks:

- left eyebrow mask

- right eyebrow mask

- left eye mask

- right eye mask

- nose mask

- mouth mask (including lips)

Face swapping

Next, I swapped for each pair of parts. Since each part is in a different position in each picture, they are needed to be aligned. For example, the two right eyebrow masks below have slightly different positions.

This difference should be adjusted for the fine result, so I computed the shift in centroids with OpenCV’s

moments function.

def calc_shift(m0, m1): mu = cv2.moments(m0, True) x0, y0 = int(mu["m01"]/mu["m00"]), int(mu["m10"]/mu["m00"]) mu = cv2.moments(m1, True) x1, y1 = int(mu["m01"]/mu["m00"]), int(mu["m10"]/mu["m00"]) return (x0-x1, y0-y1)

Then I implemented the swapping part like:

def swap_parts(i0, i1, m0, m1, labels): m0 = create_mask(m0, labels) m1 = create_mask(m1, labels) i,j = calc_shift(m0, m1) l = i0.shape[0] h0 = i0 * m0.reshape((l,l,1)) # forground y = np.zeros((l,l,3)) # cancel the shift in centroids if i>=0 and j>= 0: y[:l-i, :l-j] += h0[i:, j:] elif i>=0 and j<0: y[:l-i, -j:] += h0[i:, :l+j] elif i<0 and j>=0: y[-i:, :l-j] += h0[:l+i, j:] else: y[-i:, -j:] += h0[:l+i, :l+j] h1 = i1 * (1-m1).reshape((l,l,1)) # background y += h1 * (y==0) return y

However, this left some holes (specifically,

m1 \ m0, using the notation of set operation) in the generated image.

Note: Throughout this experiment, I used randomly-sampled photos from.

Fill in the Holes

As a naive approach, I used the average color of the boundary region to fill in the holes. In computer vision, this boundary region is called morphological gradient, and it is computed by OpenCV’s

morphologyEx function.

def swap_parts(i0, i1, m0, m1, labels): ... h1 = i1 * (1-m1).reshape((l,l,1)) # background b = cv2.morphologyEx(m1.astype('uint8'), cv2.MORPH_GRADIENT, np.ones((5,5)).astype('uint8') ) # boundary region c = (np.sum(i1*b.reshape((l,l,1)), axis=(0,1)) / np.sum(b)).astype(int) # average color of the coundary # fill color in the hole h1 += c * m1.reshape((l,l,1)) y += h1 * (y==0) return y

Finally, I got the following result.

I have to admit that the result looks weird, but it means that this app is safe as it can’t be used for DeepFake😇

References

[1] OpenCV: Image Moments

[2] モルフォロジー変換 — OpenCV-Python Tutorials 1 documentation

[3] Changqian Yu, Jingbo Wang, Chao Peng, Changxin Gao, Gang Yu, Nong Sang. ”BiSeNet: Bilateral Segmentation Network for Real-time Semantic Segmentation“. ECCV. 2018.

Written by Shion Honda. If you like this, please share! | https://hippocampus-garden.com/face_swap/ | CC-MAIN-2022-27 | refinedweb | 612 | 64.91 |

# Memoization Forget-Me-Bomb

Have you heard about `memoization`? It's a super simple thing, by the way,– just memoize which result you have got from a first function call, and use it instead of calling it the second time - don't call real stuff without reason, don't waste your time.

Skipping some intensive operations is a very common optimization technique. Every time you might not do something — don’t do it. Try to use cache — `memcache`, `file cache`, `local cache` — any cache! A must-have for backend systems and a crucial part of any backend system of past and present.

Memoization vs Caching

======================

> Memoization is like caching. Just a bit different. Not cache, let's call it kashe.

Long story short, but memoization is not a cache, not a persistent cache. It might be it on a server side, but can not, and should not be a cache on a client side. It's more about available resources, usage patterns, and the reasons to use.

Problem - Cache need a" cache key"

----------------------------------

Cache is storing and fetching data using a **string** cache `key`. It's already a problem to construct a unique, and usable key, but then you have to serialise and de-serialise data to store in yet again string based medium… in short - cache might be not as fast, as you might think. Especially distributed cache.

Memoization does not need any cache key

---------------------------------------

In the same time - no key is needed for memoization. *Usually\** it uses arguments as-is, not trying to create a single key from them, and does not use some globally available shared object to store results, as cache usually does.

> The difference between memoization and cache is in **API INTERFACE**!

*Usually\** does not mean always. [Lodash.memoize](https://github.com/lodash/lodash/blob/4.17.11/lodash.js#L10547), by default, uses `JSON.stringify` to convert passed arguments into a string cache(is there any other way? No!). Just because they are going to use this key to access an internal object, holding a cached value. [fast-memoize](https://community.risingstack.com/the-worlds-fastest-javascript-memoization-library/), "the fastest possible memoization library", does the same. Both named libraries are not memoization libraries, but cache libraries.

> It worth to mention – JSON.stringify might be 10 times slower than a function, you gonna memoize.

Obviously - the simples solution to the problem is NOT to use a cache key, and NOT access some internal cache using that key. So - remember the last arguments you were called with. Like [memoizerific](https://github.com/thinkloop/memoizerific) or [reselect](https://github.com/reduxjs/reselect) do.

> Memoizerific is probably the only general caching library you would like to use.

The cache size

==============

The second big difference between all libraries is about the cache size and the cache structure.

Have you ever thought – why `reselect` or `memoize-one` holds only one, last result? Not to *"not to use cache key to be able to store more than one result"*, but because there are **no reasons to store more than just a last result**.

…It's more about:

* available resources - a single cache line is very resource friendly

* usage patterns - remembering something "in place" is a good pattern. "In place" you usually need only one, last, result.

* the reason to use -modularity, isolation and memory safety are good reasons. Not sharing cache with the rest of your application is just more safe in terms of cache collisions.

A Single Result?!

=================

Yes - the only one result. With one result memoized some **classical things**, like memoized fibonacci number generation(*you may find as an example in every article about memoization*) would be **not possible**. But, usually, you are doing something else - who needs a fibonacci on Frontend? On Backend? A real world examples are quite far from abstract *IT quizzes*.

But still, there are two **BIG** problems about a single-value memoization kind.

Problem 1 - it's "fragile"

--------------------------

By defaults - all arguments should match, exactly be the "===" same. If one argument does not match - the game is over. Even if this comes from the idea of memoization - that might not be something you want nowadays. I mean – you want to memoize as much, as possible, and as often, as possible.

> Even cache miss is a cache wiping headshot.

There is a little difference between "nowadays" from "yesterdays" - immutable data structures, used for example in Redux.

```

const getSomeDataFromState = memoize(state => compute(state.tasks));

```

Looking good? Looking right? However thestate might change when tasks did not, and you need only tasks to match.

**Structural Selectors** are here to save the day with their strongest warrior - **Reselect** – at your beck and call. Reselect is not only memoization library, but it's power comes from memoization **cascades**, or lenses(which they are not, but think about selectors as optical lenses).

```

// every time `state` changes, cached value would be rejected

const getTasksFromState = createSelector(state => state.tasks);

const getSomeDataFromState = createSelector(

// `tasks` "without" `state`

getTasksFromState, // <----------

// and this operation would be memoized "more often"

tasks => compute(state.tasks)

);

```

As a result, in case of immutable data - you always have to first **"focus"** into the data piece you really need, and then - perform calculations, or else cache would be rejected, and all the idea behind memoization would vanish.

This is actually a big problem, especially for newcomers, but it, as The Idea behind immutable data structures, has a significant benefit - **if something is not changed - it is not changed. If something is changed - probably it is changed**. That's giving us a super fast comparison, but with some false negatives, like in the first example.

> The Idea is about "focusing" into the data you depend on

There are two moments I should have - mentioned:

* `lodash.memoize` and `fast-memoize` are converting your data to a string to be used as a key. That means that they are 1) not fast 2) not safe 3) could produce false positives - some **different data** could have the **same string representation**. This might improve "cache hot rate", but actually is a VERY BAD thing.

* there is an ES6 Proxy approach, about tracking all the used pieces of variables given, and checking only keys which matter. While I personally would like to create myriads of data selectors - you might not like or understand the process, but might want to have proper memoization out of the box - then use [memoize-state](https://github.com/theKashey/memoize-state).

Problem 2- it's "one cache line"

--------------------------------

Infinite cache size is a killer. Any uncontrolled cache is a killer, as long as memory is quite finite. So - all the best libraries are "one-cache-line-long". That's a feature and strong design decision. I just written how right it is, and, believe me - it's a **really right thing**, but it's still a problem. A Big Problem.

```

const tasks = getTasks(state);

// let's get some data from state1 (function was defined above)

getDataFromTask(tasks[0]);

// Yep!

equal(getDataFromTask(tasks[0]), getDataFromTask(tasks[0]))

// Ok!

getDataFromTask(tasks[1]);

// a different task? What the heck?

// oh! That's another argument? How dare you!?

// TLDR -> task[0] in the cache got replaced by task[1]

you cannot use getDataFromTask to get data from different tasks

```

Once the same selector has to work with different source data, with more that one - everything is broken. And it is easy to run into the problem:

* As long as we were using selectors to get tasks from a state - we could use the same selectors to get something from a task. Intense comes from API itself. But it does not work then you can memoize only last call, but have to work with multiple data sources.

* The same problem is with multiple React Components - they all are the same, and all a bit different, fetching different tasks, wiping results of each other.

There are 3 possible solutions:

* in case of redux - use mapStateToProps factory. It would create per-instance memoization.

```

const mapStateToProps = () => {

const selector = createSelector(...);

// ^ you have to define per-instance selectors here

// usually that's not possible :)

return state => ({

data: selector(data), // a usual mapStateToProps

});

}

```

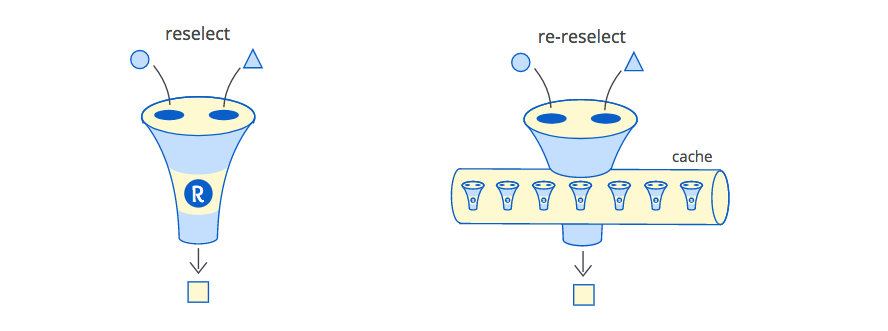

* the second variant is almost the same (and also for redux) - it's about using [re-reselect](https://github.com/toomuchdesign/re-reselect). It's a complex library, which could save the day by distinguishing components. It just could understand, that the new call was made for "another" component, and it might keep the cache for the "previous" one.

This library would help you "keep" the memoization cache, but not delete it. Especially because it is implementing 5(FIVE!) different cache strategies to fit any case. That's a bad smell. What if you choose the wrong one?

All the data you have memoized - you have to forget it, sooner or later. The point is not to remember last function invocation - the point is to FORGET IT at the right time. Not too soon, and ruin memoization, and not too late.

> Got the idea? Now forget it! And where is 3rd variant??

Let take a pause

================

Stop. Relax. Make a deep breath. And answer one simple question - What's the goal? What we have to do to reach the goal? What would save the day?

> TIP: Where is that f\*\*\* "cache" LOCATED!

Where is that "cache" LOCATED? Yes - that's the right question. Thanks for asking it. And the answer is simple - it is located in a closure. In a hidden spot inside\* a memoized function. For example - here is `memoize-one` code:

```

function(fn) {

let lastArgs; // the last arguments

let lastResult;// the last result <--- THIS IS THE CACHE

// the memoized function

const memoizedCall = function(...newArgs) {

if (isEqual(newArgs, lastArgs)) {

return lastResult;

}

lastResult = resultFn.apply(this, newArgs);

lastArgs = newArgs;

return lastResult;

};

return memoizedCall;

}

```

You will be given a `memoizedCall`, and it will hold the last result nearby, inside its local closure, not accessible by anyone, except memoizedCall. A safe place. "this" is a safe place.

`Reselect` does the same, and the only way to create a "fork", with another cache - create a new memoization closure.

But the (another) main question - when it(cache) would be "gone"?

> TLDR: It would be "gone" with a function, when the function instance would be eaten by Garbage Collector.

Instance? Instance! So - what's about per instance memoization? There is a whole article about it at [React documentation](https://reactjs.org/blog/2018/06/07/you-probably-dont-need-derived-state.html#what-about-memoization)

In short — if you are using Class-based React Components you might do:

```

import memoize from "memoize-one";