text stringlengths 454 608k | url stringlengths 17 896 | dump stringclasses 91 values | source stringclasses 1 value | word_count int64 101 114k | flesch_reading_ease float64 50 104 |

|---|---|---|---|---|---|

As you may already be familiar with SELinux or perhaps not but I won't go in to discussion what exactly it is, know though that the NSA from the USA created it years back and I am an avid user. I recently got my Raspberry PI and using Raspbian but had an abundant amount of time trying to figure out why every reboot and SSH/HDMI Output would cause a large X [cursor], like the old school GUI did when XFree86 GUI didn't work and this is when attempting to start selinux with no success. In short, I have a tutorial I made to help those who may want to try it out and notice it will NOT work out of the box, even if you use apt-get for all 3 required selinux packages.

GET SELINUX

Open up a terminal and launch:

ACTIVATE SELINUX

Code: Select all

sudo apt-get install selinux-basics selinux-policy-default

Now, you will normally have an issue here but go ahead and run the command:

The output may vary but it should tell you to reboot, so go forth and type

Code: Select all

selinux-activate

now and should start to reboot.now and should start to reboot.sudo reboot

CHECK SELINUX

Now, you may have to remotely SSH to your machine as you probably don't see anything but a grey background and big black X for a mouse cursor. If you don't have this problem, SELinux probably was a successful install (lucky you but doubtful). Either way, open a terminal and run the command:

Now, after that command, you probably see something scary like:

Code: Select all

sudo check-selinux-installation

This obviously means SELinux was NOT successful. So, let's fix that!This obviously means SELinux was NOT successful. So, let's fix that!/usr/sbin/check-selinux-installation:19: DeprecationWarning: os.popen3 is deprecated. Use the subprocess module.

@staticmethod

/usr/sbin/check-selinux-installation:23: DeprecationWarning: os.popen2 is deprecated. Use the subprocess module.

def fix():

/etc/pam.d/login is not SELinux enabled

FSCKFIX is not enabled – not serious, but could prevent system from booting…

PERMISSIVE OR ENFORCING?

Now, I would tell you to try and enable permissive mode but it is extremely likely it won't work. So I want you to enable Enforcing Mode by typing:

CONFIGURE PAM

Code: Select all

sudo selinux-config-enforcing

Now we need to manually configure PAM and you could use vi or pico (sorry, I like pico!) so you can use your favorite text editor for the below command:

1). Edit PAM Login (/etc/pam.d/login)

Now add the following in the file:

Code: Select all

sudo pico /etc/pam.d/login

Save the file (Pico users press Ctrl+X, Y to Overwrite, Enter to Save/Exit).

Code: Select all

session required pam_selinux.so multiple

Now let's re-activate by typing:

It will recommend you reboot, don't, not yet as we have a couple more tasks...

Code: Select all

sudo selinux-activate

2). EDIT INITSCRIPTS (/etc/default/rcS)

Go forth and type:

In this file, I want you to add 2 lines of code (any order):

Code: Select all

sudo pico /etc/default/rcS

and

Code: Select all

FSCKFIX=yes

Now save the file (see #1 for PICO Saving).

Code: Select all

EDITMOTD=no

CHECK DEVPTS

Please run the code:

If it comes back with:

Code: Select all

sudo mount | grep devpts

We've successfully allowed SELinux to install and has been activated where prior it could not be. You could always setup a Cron job and other things as well but you should be OK now.We've successfully allowed SELinux to install and has been activated where prior it could not be. You could always setup a Cron job and other things as well but you should be OK now.[devpts on /dev/pts type devpts (rw,nosuid,noexec,relatime,gid=5,mode=620)/quote] or similar, you're good to go.

Now, remove the static nodes by typing:3). Go, Go Activate (selinux-activate)3). Go, Go Activate (selinux-activate)

Code: Select all

sudo rm -f /dev/[tp]ty[abcdepqrstuvwxyz][0-9a-f]

Now that we added and saved everything, let's see if we did it successfully by typing:If you now see:If you now see:

Code: Select all

sudo selinux-activateActivating SE Linux

SE Linux is activated. You may need to reboot now. | https://www.raspberrypi.org/forums/viewtopic.php?f=66&t=16738 | CC-MAIN-2017-51 | refinedweb | 745 | 62.27 |

Ter. Python has "anonymous blocks" all over the place, since every control structure controls one or more of them. It simply requires that they be forgotten at the next DEDENT. Surely you don't advocate that each of them should get a name! I think this is a difference of cognition. Specifically, people who don't want to name blocks as functions may not abstract processes to signatures as easily, and reify whole processes (including all free identifiers!) as objects more easily, as those who don't think naming is a problem. > Since I routinely use standard names 'f' and 'g' (from math) to name > functions whose name I do not care about, I am baffled (and annoyed) by If the cognition hypothesis is correct, of course you're baffled. You "just don't" think that way, while he really does. The annoyance can probably be relieved by s/a developer/some developers/ here: > (repeated) claims such as "Having to name a one-off function adds > additional cognitive overload to a developer." (Tav). I suspect that "overload" is a pun, here. Your rhetorical question > Golly gee, if one cannot decide on standard one-char name, how can > he manage the rest of Python? has an unexpected answer: in the rest of Python name overloading is carefully controlled and scoped into namespaces. If my cognition hypothesis is correct, then a standard one-character name really does bother/confuse his cognition, where maintaining the whole structure of the block from one use to the next somehow does not. (This baffles me, too!) The question then becomes "can Python become more usable to developers unlike you and me without losing some Pythonicity?" Guido seems to think not (I read him as pessimistic on both grounds: the proposed syntax is neither as useful nor as Pythonic as Tav thinks it is). | https://mail.python.org/pipermail/python-ideas/2009-March/003283.html | CC-MAIN-2016-30 | refinedweb | 307 | 61.16 |

It is the job of an EJB container to automatically load and store Entity Data between each remote interface call. However, it is the job of the EJB developer to make sure that the data loaded in an Entity Bean is consistent with the backend data store at all times. If this is not the case, an Entity Bean can create data integrity problems. This situation may arise when throughout the life of an Entity Bean data, owned by the Bean, is being changed and these changes are not being reflected in the Bean's state.

For example, an Entity Bean representing a parent record and a set of child records may have a remote interface method that adds a new child record. If the method's implementation immediately persists this record to the database, the Entity Bean automatically becomes out of synch with its data store until a fresh load is performed. However, if similar data operations continue without subsequent loads, the Bean can no longer be referenced for the most up-to-date information.

This pattern offers a solution for these kinds of problems.

This pattern primarily applies to the situation described in the example given above. The assumption is that an Entity Bean represents a parent record that has at least one set of related child records. Think about Order and Line Items relationship where Order is the parent record and it may have one or more Line Items associated with it. The relationships may be a lot more complicated and nest many levels, however this pattern would still apply once each individual relationship is considered. Thus, the discussion will focus on a single instance of parent/children relationship but it can be generalized for any situation where this type of relationship is involved.

This pattern assumes that EJB developers correctly update parent record data during data setting operations targeted towards parent record data. If this is not the case, the Entity Bean code must be modified to update or set all the data after each data modification operation. It is also a good practice to follow the Aggregate Details Pattern to store the details of the Entity Bean. The examples in this discussion rely on the use of this pattern.

In order to correctly capture the changes made to the child records, the EJB developers need to follow these steps:

1. Update the Bean's child data

2. Store the operation that was performed on the data

3. Correctly apply the operation to the child data during ejbStore

Steps 2 and 3 are by far the trickiest and therefore require a closer inspection.

Let’s assume that we have a parent class named Shipment that can contain one or more schedules.

public class ShipmentAccessor extends AccessorBase {

// Class Data Members

...

protected ChangeArrayList schedule = new ChangeArrayList();

...

}

ChangeArrayList is a special class derived from ArrayList whose sole responsibility is to keep track of data changes made to a piece of data stored in the list. It is a fully functioning class that has been tested and implemented in a large-scale project. If you would like to obtain a complete listing, drop me an e-mail at leo at stratos dot net.

Following the Aggregate Details Pattern, the Shipment Entity Bean will be derived from the ShipmentAccessor class, thus allowing the complete graph to be returned by executing a single getAllDetails call from the Entity Bean and also enabling set/get code reuse inside the Bean.

If we need to add a new schedule (represented by the ShipmentSchedule class) to the shipment currently stored in the ShipmentAccessor, all we need to do is to add it to the schedule list and do not immediately commit the changes to the data store.

public void addShipmentSchedule(ShipmentSchedule shipmentSchedule) {

schedule.add(shipmentSchedule);

}

The same is true for updating and removing schedules:

public void updateShipmentSchedule(ShipmentSchedule shipmentSchedule) {

schedule.update(shipmentSchedule);

}

public void deleteShipmentSchedule(ShipmentSchedule shipmentSchedule) {

schedule.remove(shipmentSchedule);

}

By capturing the data and all of the operations performed on it, we keep the Entity Bean valid at all times. This, however, places more responsibility on the Bean's ejbStore method that now needs to apply all the data changes that were made after the last store. Therefore, in addition to the current code, extra functionality needs to be added to handle storing all the data modifications. Since all the changes are known, it is relatively straightforward to apply them. The example below uses ChangeArrayList to show how this can be accomplished.

public void ejbStore()

{

...

// Store all the schedule records

deleteShipmentSchedules( schedule.getDeleted() );

insertShipmentSchedules( schedule.getInserted() );

updateShipmentSchedules( schedule.getUpdated() );

}

Each of the methods that takes care of an individual data store operation (insert, update, delete) receives an iterator that iterates over objects that only have the specified operation applied against them. This way, each method needs to handle only a specific operation thus making the code very simple to write, understand and reuse. A consequent load should completely refresh the set of data (schedules in this case) and remove all of the operations previously performed against the data because this pattern only works for load-store lifecycle of the Entity Bean.

Notice that a general usage pattern of the details object under the Aggregate Details Pattern as well as performance considerations (since each remote interface call invokes load/store operations) dictate the following use of the details objects:

1. Find/create the bean

2. Extract the details object (getAllDetails)

3. Perform various data operations on the object

4. Store the details in the Entity Bean (setAllDetails)

Under this scenario, this pattern makes even more sense because of its ability to capture data changes made between each store operation. Let's imagine a situation where an EJB developer follows the general steps outlined above. However, in Step 3, s/he performs several data operations that involve updating existing data and inserting a new record (all this, of course, is done for a set of child records rather than the parent record). If special precautions are not taken, ejbStore will not be able to determine which child records were updated and which were inserted. This pattern allows EJB developers to seamlessly manage this information and to easily manipulate data no matter what operations have been performed against it.

Discussions

J2EE patterns: Keeping Track of Entity Data Changes Between Loads and Stores

Keeping Track of Entity Data Changes Between Loads and Stores (6 messages)

- Posted by: Leo Shuster

- Posted on: December 12 2000 17:21 EST

Threaded Messages (6)

- Keeping Track of Entity Data Changes Between Loads and Stores by Uday Natra on December 15 2000 18:59 EST

- Keeping Track of Entity Data Changes Between Loads and Stores by Leo Shuster on December 27 2000 16:41 EST

- Keeping Track of Entity Data Changes Between Loads and Stores by mike finegan on April 15 2001 10:15 EDT

- Keeping Track of Entity Data Changes Between Loads and Stores by Tarek Hammoud on December 17 2000 21:57 EST

- Keeping Track of Entity Data Changes Between Loads and Stores by Benedict Chng on December 19 2000 11:05 EST

- Keeping Track of Entity Data Changes Between Loads and Stores by Somu Balasundar on January 21 2002 02:05 EST

Keeping Track of Entity Data Changes Between Loads and Stores[ Go to top ]

Leo, The pattern U described, looks really good.

- Posted by: Uday Natra

- Posted on: December 15 2000 18:59 EST

- in response to Leo Shuster

I have a concern about the pattern. You said about implementing the EJBStore method properly to store all the changes properly. But when ever a business method(addShipmentSchedule) is invoked on the EntityBean, The EJBLoad and EJBStore are automatically called if a new a transaction is started. If the Transaction attribute on the EntityBean Methods is set to TRANS_REQUIRED, Then all the business methods on the entity Bean should be called from a single Session Bean Method.

But calling a single Session Bean Method to Update all the info will not suit a situation where I have Multiple UI screens that encapsulates a single Entity Bean. Here I need to write all the changes to the Entity Bean after the user has gone through all the screens Deleting, Inserting and Updating various elements of the entity Bean.

My basic question is that EjbLoad and Store all called on every method if they are called individually.

Keeping Track of Entity Data Changes Between Loads and Stores[ Go to top ]

I will try to answer some of the questions raised in the replies posted above.

- Posted by: Leo Shuster

- Posted on: December 27 2000 16:41 EST

- in response to Uday Natra

There are a couple of ways that this pattern can be implemented in real life:

1. As stated in the pattern, all the changes are made to the accessor object that represents the complete data vector of the Entity Bean. The accessor is obtained at the beginning of the transaction/lifecycle by calling getAllDetails() method and is modified throughout its existence. At the end, the accessor is persisted to the backend data store by calling setAllDetails() method of the Bean, thus forcing ejbStore to be called. Between getAllDetails() and setAllDetails() calls, the Bean itself is not modified -- just the accessor. Keep in mind that it is very costly to commit small changes to the Entity Bean one by one, which trigers store/load operations every time. It is much more efficient to modify the accessor's state and commit all of the changes at once.

2. As suggested by Uday, a Session Bean can be created to wrap an Entity Bean. In this situation, the approach described above can be utilized but on a slightly smaller scale since the changes will be accumulated for one Session Bean method call only. Here, the pattern still proves useful since it eliminates the need to develop extra functionality to commit Bean's state changes (data updates, deletes, inserts) that would otherwise have to be implemented.

3. Some EJB containers may provide special tags in their ejb-jar.xml files that enable developers to limit the amount of loads and stores. In WebLogic, for example, two tags -- <is-modified-method-name> and <is-db-shared> -- can be added inside the <weblogic-enterprise-bean> tag in weblogic-ejb-jar.xml to describe the situations when stores and loads should and should not happen. It is often a good idea to keep track of Bean's changes and signal to the container when the bean is changed and should be saved (this is actually what Toplink does for you, but for those of us without it, we have to reinvent the wheel). In this situation, since stores and loads may not happen on every remote interface call, this pattern enforces data integrity and consistency.

Toplink is a great tool. Unfortunately, not all of us have the benefit of working with it. Thus, this pattern offers a solution for the situations when such advanced middleware tools are not available for the developers. Also, the cost of implementing this pattern is minimal if the framework for data tracking is already implemented. This is exactly what ChangeArrayList does. As you can see from the pattern description, keeping track of data using it becomes trivial.

This pattern describes a solution for keeping the data consistent as a part of normal EJB operation. It relies on the assumption that a number of data operations is performed on the Bean's accessor between getAllDetails() and setAllDetails() calls. It is inefficient to use this pattern for keeping track of a single change that immediately gets persisted to the data store.

This pattern has no bearing on the data modified by external processes since the Bean will not be aware of them at the ejbStore time. In fact, this is a very delicate problem that may require a separate dicussion.

Keeping Track of Entity Data Changes Between Loads and Stores[ Go to top ]

Has anyone compared the performance of TopLink to these methods? TopLink ultimately uses CMP? How are the issues about fine-grained entity beans avoided? Or are they just cached efficiently?

- Posted by: mike finegan

- Posted on: April 15 2001 22:15 EDT

- in response to Leo Shuster

I have heard that using TopLink adds up to 20% degradation in performance over BMP. Does that sound right?

Keeping Track of Entity Data Changes Between Loads and Stores[ Go to top ]

It will be much cheaper to by a product like toplink that can detect deletions, insertions and modifications. Your alternative of coding this stuff in pretty much every 1 to many bean if way more expensive that a license cost.

- Posted by: Tarek Hammoud

- Posted on: December 17 2000 21:57 EST

- in response to Leo Shuster

Not affliated with toplink. Just think that it makes coding persistent stuff trivial.

Keeping Track of Entity Data Changes Between Loads and Stores[ Go to top ]

Hi Leo,

- Posted by: Benedict Chng

- Posted on: December 19 2000 11:05 EST

- in response to Tarek Hammoud

I thought your pattern is describing how you would overcome the problem of data inconsistencies caused by an external process modifying the information on the database tables directly without going through the EJB container. Eg a nightly batch process.

Do you encounter such problems? How do you resolve it?

Ben

Keeping Track of Entity Data Changes Between Loads and Stores[ Go to top ]

Hi

- Posted by: Somu Balasundar

- Posted on: January 21 2002 02:05 EST

- in response to Benedict Chng

Keeping track of Entity Data changes is the responsibility of Transaction manager. Remember that transaction manager controls one Thread at a time. if suppose any batch load/update the Tx tell's the container that load the data again. By Context object this is getting acheived.

Correct me if i am wrong. | http://www.theserverside.com/discussions/thread.tss?thread_id=2636 | CC-MAIN-2014-35 | refinedweb | 2,303 | 50.46 |

The PiFace common functions module.

Project Description

pifacecommon

Common functions for interacting with PiFace products.

Documentation

[]()

You can also find the documentation installed at:

/usr/share/doc/python3-pifacecommon/

Install

Make. Change Log ==========

v4.1.2

- Fixed bug with new Device Tree (Pi2) by changing GPIO_INTERRUPT_DEVICE from /sys/devices/virtual/gpio/ to /sys/class/gpio/ and changing udev rule.

v4.1.1

- Support varying listeners.

v4.1.0

- Added deregister to interrupts.

v4.0.1

- Fixed SPI file descriptor bug when closing.

- Fixed issue #14.

v4.0.0

- Ignored “Interrupted system call” error in watch_port_events.

- Rewrite main functions into chip specific (MCP23S17) class.

- GPIOInterruptDevice class replacing core GPIO enable/disable functions.

- SPIDevice class replacing spisend function. Can now add in spi_callback function which is called before each SPI write.

- Updated installation instructions.

v3.1.1

Added IODIR_FALLING_EDGE and IODIR_RISING_EDGE to replace IODIR_ON and IODIR_OFF respectively. IODIR_ON and IODIR_OFF can still be used in the same way as before. Falling/Rising Edge are for physical level 1/0, On/Off are for logical (programmer) level 1/0.

- Physical Level (pifacecommon.read_bit):

IODIR_FALLING_EDGE: 1 -> 0 IODIR_RISING_EDGE: 0 -> 1

- Logical Level (pifacedigitalio.PiFaceDigital().input_pins[0].value):

IODIR_ON: 0 -> 1 IODIR_OFF: 1 -> 0

- Remember that PiFace Digital Inputs are active low:

>>> pifacecommon.read_bit(0, INPUT_PORT) >>> 1 # physical >>> pifacedigitalio.PiFaceDigital().input_pins[0].value >>> 0 # logical

Fixed Debian package bug where setup script would not be executed.

v3.1.0

- Added debouncing with adjustable settle time.

v3.0.0

- Added timeout class (fixing Issue #2) in interrupts.

- Added support for interrupts on multiple boards.

- Interrupts must be enabled/disabled manually. Automatic handling of this broke interrupts from multiple boards.

v2.0.2

- Moved version number to pifacecommon/version.py so that it can be read from setup.py and bin/uninstall.py.

- Updated SPI help link to point to the new docs.

- Moved installation scripts into single file for Debian packaging.

v2.0.1

- Added version number in source.

- Added uninstall script.

v2.0.0

- Improved interrupts (different API, check the docs).

- Reduced scope of global variables from package to individual modules. (Hiding namespaces from the end user is an attempt to simplify the interface for children. However this package is not intended for that audience and so messing with the namespaces only confuses things.)

v1.2.1

- Supports Python 2.

- Started using semantic versioning.

v1.2

- Started using a change log!

- Removed errors submodule, custom exceptions now go in their respective modules. This might change back in a future release.

- Fixed DigitalInput value bugs

- Fixed SPI transfer bug.. Function spisend now takes bytes as an argument instead of a list. This makes more sense, since it returns bytes.

- Removed install.sh, everything is now handled by setup.py.

- Updated docs.

Download Files

Download the file for your platform. If you're not sure which to choose, learn more about installing packages. | https://pypi.org/project/pifacecommon/ | CC-MAIN-2018-13 | refinedweb | 476 | 54.59 |

The design of ductwork is as important to a home heating and air conditioning system as the unit that powers it. Ducts that are improperly sized, installed with crimps or tight bends, have leaks or are not balanced between supply (conditioned air) and return ("used" air) will cause the system to operate inefficiently. Over time, such small problems may damage the unit. Ductwork is installed at the same time as the heating/air conditioning unit. If done properly, ductwork should last a lifetime.

Things You'll Need

Flexible duct

Metal hanger straps

Hammer

Hanger nails

Tin snips

Heat-resistant tape

Step 1

Determine the size of ductwork needed to match the air flow from the unit, measured in cubic feet per second. The unit should have that marked on it or it will be in the owner's manual with the unit. The size of the pipes coming in from the unit also should indicate the size duct needed to handle output from the unit (supply) and necessary return of air from the house. Main ducts will be larger; branches to individual vents will be smaller.

Step 2

Map routes for ducts through the house using a central duct with branches off to individual areas. Run the main duct through the center of the house, if possible, with forks to vents on outside walls. House design will affect this routing; in some houses, it may be necessary to split supply systems at the unit and run parallel ducts along walls, through an attic. Locate return ducts in areas where air will flow naturally, such as hallways; put return openings on inside walls.

Step 3

Install ducts in a basement between or around floor joists; in a ceiling, place ducts between ceiling joists; in an attic, lay ducts over joists. Fasten ducts with metal strap hangers nailed to joists, about every 6 feet in basements and ceilings and less often in attics. Don't stretch ducts; keep them secure but flexible. Avoid any obstructions that would require sharp bends or would crimp the ducts.

Step 4

Avoid any areas subject to heat, such as water heaters and hot water lines, and don't run ducts over electrical boxes. Add extra insulation to ducts in areas such as attics, where cold wind might blow in. Use metal connectors to join duct sections; slide connectors inside the duct on each end. Secure all connections and seams air-tight with heat-resistant tape recommended by the duct manufacturer.

Step 5

Connect supply and return ducts to the unit once all of the ductwork is laid throughout the house. Test the system by forcing the blower to turn on and look for leaks, indicated by blowing insulation or whistling air around a seam. Force the blower on with a switch if the unit has a separate blower switch, or by adjusting the thermostat until it comes on.

Warning

Don't use duct tape to seal ductwork seams; despite its name, the adhesive will deteriorate over time and cause leaks. | https://www.ehow.com/how_8116922_install-ductwork-central-heating-air.html | CC-MAIN-2020-29 | refinedweb | 504 | 68.3 |

Welcome to the Core Java Technologies Tech Tips for December 14, 2004. Here you'll get tips on using core Java technologies and APIs, such as those in Java 2 Platform, Standard Edition (J2SE).

This issue covers:

Resource Bundle Loading

Hiding ListResourceBundles from javadoc

ListResourceBundles

javadoc Java technology source for developers. Get the latest Java platform releases, tutorials, newsletters and more.

java.net - A web forum where enthusiasts of Java technology can collaborate and build solutions together.

java.com - The ultimate marketplace promoting Java technology,

applications and services.

A resource bundle is a way of embedding text strings in a language-specific (or more precisely, locale-specific) manner. An earlier Tech Tip discussed the use of resource bundles. What follows is a short refresher. If you have a program that needs a string such as "Hello, World", one approach is to code it in the program. However with resource bundles, you don't hardcode the string. Instead, you put the string in a lookup table, and then your program looks up the string at runtime. If the program runs with a different locale, the lookup finds a different string, if translated, or finds the original string if not translated. This doesn't affect the code in your program -- it runs with the same code, irrespective of locale. The only thing you need to do is create and translate the lookup table of values.

As stated previously, resource bundles work with locales. You can say, "I want the 'greet' string for English," where English is the locale. Or, you can say you want 'color' for U.S. English, and 'colour' for U.K. English. Locales also support regionality. In other words, you can specify a phrase for one dialect of U.S. English (perhaps a phrase used in Southern California), and a different phrase for another U.S. region, say New York City.

You can define a resource bundle in a .class file that extends ListResourceBundle, or you can use a PopertyResourceBundle that is backed by a .properties file. When combining resource bundles and locales, there are two searches involved. The first finds the nearest resource bundle requested, the second finds the string for the requested key. Why the differentiation? When searching for resource bundles, the system stops as soon as it finds and loads the requested resource bundle. If the system doesn't find the key in the requested bundle, it then hunts in other resource bundles until it finds the key. Ultimately, if it doesn't find the key, the system throws a MissingResourceException.

.class

ListResourceBundle

PopertyResourceBundle

.properties

MissingResourceException

To demonstrate, suppose you want to find a string for a New York locale in a bundle named Greeting. Suppose too that your Locale was created as follows:

Locale newYork = new Locale("en", "US", "NewYork")

and you asked for a resource bundle like this:

ResourceBundle bundle =

ResourceBundle.getBundle("Greeting", newYork);

The system first looks for the .class file for the bundle. With a region/variant level of locale, such as New York, the file would be Greeting_en_US_NewYork.class. If the system can't find the .class file in the classpath, it then searches for the file Greeting_en_US_NewYork.properties. And if it can't find that file, the system subsequently searches for Greeting_en_US.class, followed by Greeting_en_US.properties, Greeting_en.class, Greeting_en.properties, Greeting.class, and Greeting.properties. The searching stops when the system finds the resource bundle. Thankfully, there is caching involved, so the system doesn't always search everywhere, but that's still potentially a lot of different places that have to be searched.

Greeting_en_US_NewYork.class

Greeting_en_US_NewYork.properties

Greeting_en_US.class

Greeting_en_US.properties

Greeting_en.class

Greeting_en.properties

Greeting.class

Greeting.properties

The system then performs a second round of lookups -- this time for the requested key. If the key isn't in the bundle it found, the system looks for more resource bundles, beyond the language, country, and variant level of the current bundle. This could load more bundles, whether they are .class files or .properties files.

One question you might have is which approach is better, using .class files or using .properties files? Notice that .class files are searched for first, then .properties files. Also note that .class files are loaded directly by the class loader, but .properties files have to be parsed each time the bundle needs to be loaded. Parsing is a two-pass process. To deal with Unicode strings such as \uXXX, the system must scan each key=value line twice, and then split the key from the value.

\uXXX

key=value

Let's investigate both approaches further by comparing load times. Start with the following test program:

import java.util.*;

public class Test1 {

public static void main(String args[]) {

Locale locale = Locale.ENGLISH;

long start = System.nanoTime();

ResourceBundle myResources =

ResourceBundle.getBundle("MyResources", locale);

long end1 = System.nanoTime();

String string = myResources.getString("HelpKey");

long end2 = System.nanoTime();

System.out.println("Load: " + (end1 - start));

System.out.println("Fetch: " + (end2 - end1));

System.out.println("HelpKey: " + string);

}

}

If you are running on a 1.4 Java platform, you need to change the test program so that it calls currentTimeMillis instead of nanoTime. The nanoTime method works with nanosecond precision. The currentTimeMillis works only in milliseconds. Also, see the note about microbenchmarks at the end of this tip.

currentTimeMillis

nanoTime

Next, create a ListResourceBundle class in the same directory as the test program:

import java.util.*;

public class MyResources extends ListResourceBundle {

public Object[][] getContents() {

return contents;

}

private static final Object[][] contents = {

{"OkKey", "OK"},

{"CancelKey", "Cancel"},

{"HelpKey", "Help"},

{"YesKey", "Yes"},

{"NoKey", "No"},

};

}

Compile the test program and the MyResources class. Then run the test program.

MyResources

Your results will depends on your operating environment, your RAM size, and the speed of your processor. Here's a result produced in a 800 MHz machine running Windows XP with 768 MB RAM:

Load: 25937415

Fetch: 62994

Now create a properties file, MyResources.properties, with the following elements:

MyResources.properties

OkKey=OK

CancelKey=Cancel

HelpKey=Help

YesKey=Yes

NoKey=No

Run the test program again, but first remove the MyResources class. This will run the program using the .properties files. Here's the result produced in the same machine as before:

Load: 101469357

Fetch: 35450

The load times show the ListResourceBundle approach is faster than the PropertyResourceBundle approach. But, surprisingly, the fetch times show that the PropertyResourceBundle approach is almost twice as fast as ListResourceBundle approach. With roughly a five times difference in loading and a two times difference in fetching, you'd have to do a lot of fetches to catch up. Keep in mind that a nanosecond is a billionth of a second and a millisecond is a thousandth of a second.

PropertyResourceBundle

Now run the tests again, but this time use 100 elements in the .class and .properties files. To create the file, you can simply copy the five elements in the previous files 20 times, and change the entries slightly with each copy. For example, change OkKey to OkKey1, CancelKey to CancelKey1, and so on. Your results should follows the earlier results. Loading should be faster with the ListResourceBundle, but fetching should be faster with the PropertyResourceBundle. Actually, you should find that the load time of 100 resources for a PropertyResourceBundle is close to that of five elements.

OkKey

OkKey1

CancelKey

CancelKey1

ListResourceBundle

Load: 12782686

Fetch: 262788

PropertyResourceBundle

Load: 12600795

Fetch: 35175

Changing the Locale from language (Locale.ENGLISH) to language

and country (Locale.US) produces even more interesting results:

Locale.ENGLISH

Locale.US

ListResourceBundle

Load: 13152117

Fetch: 32921

PropertyResourceBundle

Load: 14592024

Fetch: 261060

ListResourceBundle:

Load: 12837863

Fetch: 264264

PropertyResourceBundle

Load: 14468366

Fetch: 33166

In all cases, while loading the initial bundle is always faster for the ListResourceBundle, fetching is sometimes slower. So which way do you go? For smaller resource bundles, the ListResourceBundle does seem to be the faster of the two. For larger ones, it seems best to stay away from ListResourceBundle. The ListResourceBundle needs to convert the two-dimensional array into a lookup map, that's the reason for the slower time.

Looking at these results, you might think that a ListResourceBundle should never be used. For instance, for a server-based program, it is easier to maintain a .properties file than a .class file, and the load time is negligible. But, a ListResourceBundle is not just a two-dimensional array of strings. The getContents method returns an Object array:

getContents

Object

public Object[][] getContents()

What does this mean? If you want to localize content beyond simply strings, you must use ListResourceBundle objects. This allows you to localize content such as images, colors, and dimensions. You can't have any object in a PropertyResourceBundle, only strings.

Note that the timing test in the sample program can be considered a microbenchmark. It can certainly be improved. However, with the caching of resource bundle loading, it's hard to get accurate load times when looping multiple times in the same run. Multiple runs should be used to validate results. For information on techniques for writing microbenchmarks, see the JavaOne 2002 presentation How NOT To Write A Microbenchmark. In addition, a lot of performance work in this area has been done for JDK 5.0. Your numbers may differ substantially using Java 2 SDK, Standard Edition, v 1.4.x.

For additional information about working with resource bundles,

see the javadoc for the ResourceBundle class,

the internationalization trail in the Java Tutorial, and the Core Java Internationalization page.

The first Tech Tip in this issue, Resource Bundle Loading, made some performance comparisons between the ListResourceBundle approach and the PropertyResourceBundle approach. If you decide to take the ListResourceBundles approach instead of the alternative PropertyResourceBundle route, there is one more thing to consider.. How to you address this issue? In fact, is there a way to hide ListResourceBundles from javadoc? This tip shows you a way to do that.

By default, the javadoc tool supports two options for suppressing classes from the output. You can specify a list of all the classes in a file and direct the tool to run javadoc on this fixed set. Or you can place all the resource bundles in a package and then direct the tool to run on a set of packages that ignores the package in which the resource bundle is located. The first technique is cumbersome -- maintaining the list is difficult. The second technique prevents you from keeping the resource bundles in the same directories as the source that uses them.

So how can you customize javadoc to ignore specific classes when generating its output? The answer is that instead of generating a complete list of classes (with the resource bundle classes missing), you simply provide a list of resource bundle classes.

This solution works for both the 1.4 and 5.0 releases of J2SE. To do this you run a doclet that accepts an option, -excludefile, which excludes a set of classes that you specify. Here's how you run the doclet (note that the command should go on one line):

-excludefile

java -classpath <path to doclet and path to tools.jar>

ExcludeDoclet -excludefile <path to exclude file>

<javadoc options>

In response to the command, the validOptions method of the Doclet class looks for the -excludefile option. If it finds it, the method reads the contents of the exclude file -- these are the set of classes and packages to ignore. Then the start method is called. As each class or package is processed, the method throws away the classes and packages in the exclude set. The doclet includes the optionLength method, this allows the doclet to run under both J2SE 1.4 and 5.0. Here is the doclet, ExcludeDoclet:

validOptions

Doclet

start

optionLength

ExcludeDoclet

import java.io.*;

import java.util.*;

import com.sun.tools.javadoc.Main;

import com.sun.javadoc.*;

/**

* A wrapper for Javadoc. Accepts an additional option

* called "-excludefile", which specifies which classes

* and packages should be excluded from the output.

*

* @author Jamie Ho

*/

public class ExcludeDoclet extends Doclet {

private static List m_args = new ArrayList();

private static Set m_excludeSet = new HashSet();

/**

* Iterate through the documented classes and remove the

* ones that should be excluded.

*

* @param root the initial RootDoc (before filtering).

*/

public static boolean start(RootDoc root) {

root.printNotice

("\n\nRemoving excluded source files.......\n\n");

ClassDoc[] classes = root.classes();

for (int i = 0; i < classes.length; i++) {

if (m_excludeSet.contains(classes[i].qualifiedName()) ||

m_excludeSet.contains

(classes[i].containingPackage().name())) {

root.printNotice

("Excluding " + classes[i].qualifiedName());

continue;

}

m_args.add(classes[i].position().file().getPath());

}

root.printNotice("\n\n");

return true;

}

/**

* Let every option be valid. The real validation happens

* in the standard doclet, not here. Remove the "-excludefile"

* and "-subpackages" options because they are not needed by

* the standard doclet.

*

* @param options the options from the command line.

* @param reporter the error reporter.

*/

public static boolean validOptions(String[][] options,

DocErrorReporter reporter) {

for (int i = 0; i < options.length; i++) {

if (options[i][0].equalsIgnoreCase("-excludefile")) {

try {

readExcludeFile(options[i][1]);

} catch (Exception e) {

e.printStackTrace();

}

continue;

}

if (options[i][0].equals("-subpackages")) {

continue;

}

for (int j = 0; j < options[i].length; j++) {

m_args.add(options[i][j]);

}

}

return true;

}

/**

* Parse the file that specifies which classes and packages

* to exclude from the output. You can write comments in this

* file by starting the line with a '#' character.

*

* @param filePath the path to the exclude file.

*/

private static void readExcludeFile(String filePath)

throws Exception {

LineNumberReader reader =

new LineNumberReader(new FileReader(filePath));

String line;

while ((line = reader.readLine()) != null) {

if (line.trim().startsWith("#"))

continue;

m_excludeSet.add(line.trim());

}

}

/**

* Method required to validate the length of the given option.

* This is a bit ugly but the options must be hard coded here.

* Otherwise, Javadoc will throw errors when parsing options.

* We could delegate to the Standard doclet when computing

* option lengths, but then this doclet would be dependent on

* the version of J2SE used. I'd rather hard code so that

* this doclet can be used with 1.4.x or 1.5.x.

*

* @param option the option to compute the length for.

*/

public static int optionLength(String option) {

if (option.equalsIgnoreCase("-excludefile")) {

return 2;

}

//General options

if (option.equals("-author") ||

option.equals("-docfilessubdirs") ||

option.equals("-keywords") ||

option.equals("-linksource") ||

option.equals("-nocomment") ||

option.equals("-nodeprecated") ||

option.equals("-nosince") ||

option.equals("-notimestamp") ||

option.equals("-quiet") ||

option.equals("-xnodate") ||

option.equals("-version")) {

return 1;

} else if (option.equals("-d") ||

option.equals("-docencoding") ||

option.equals("-encoding") ||

option.equals("-excludedocfilessubdir") ||

option.equals("-link") ||

option.equals("-sourcetab") ||

option.equals("-noqualifier") ||

option.equals("-output") ||

option.equals("-sourcepath") ||

option.equals("-tag") ||

option.equals("-taglet") ||

option.equals("-tagletpath")) {

return 2;

} else if (option.equals("-group") ||

option.equals("-linkoffline")) {

return 3;

}

//Standard doclet options

option = option.toLowerCase();

if (option.equals("-nodeprecatedlist") ||

option.equals("-noindex") ||

option.equals("-notree") ||

option.equals("-nohelp") ||

option.equals("-splitindex") ||

option.equals("-serialwarn") ||

option.equals("-use") ||

option.equals("-nonavbar") ||

option.equals("-nooverview")) {

return 1;

} else if (option.equals("-footer") ||

option.equals("-header") ||

option.equals("-packagesheader") ||

option.equals("-doctitle") ||

option.equals("-windowtitle") ||

option.equals("-bottom") ||

option.equals("-helpfile") ||

option.equals("-stylesheetfile") ||

option.equals("-charset") ||

option.equals("-overview")) {

return 2;

} else {

return 0;

}

}

/**

* Execute this doclet to filter out the unwanted classes

* and packages. Then execute the standard doclet.

*

* @param args The Javadoc arguments from the command line.

*/

public static void main(String[] args) {

String name = ExcludeDoclet.class.getName();

Main.execute(name, name, args);

Main.execute((String[]) m_args.toArray(new String[] {}));

}

}

Compile the doclet as follows:

javac -classpath tools.jar ExcludeDoclet.java

Replace tools.jar with the appropriate location of your JDK installation. For example, if you're running in the Windows environment and your JDK is installed in the c:\jdk1.5.0 directory, specify c:\jdk1.5.0\lib\tools.jar.

tools.jar

c:\jdk1.5.0 directory, specify c:\jdk1.5.0\lib\tools.jar

Next, create a file such as skip.txt to identify which classes to skip. Normally, this would be your set of ListResourceBundle subclasses. For this example, run ExcludeDoclet with the standard JDK classes, and ignore a set in the java.lang package:

skip.txt

java.lang

java.lang.Math

java.lang.Long

java.lang.InternalError

java.lang.InterruptedException

java.lang.Iterable

java.lang.LinkageError

Then run the following command (on one line):

java -classpath .;c:\jdk1.5.0\lib\tools.jar ExcludeDoclet

-d docs -excludefile skip.txt -sourcepath c:\jdk1.5.0\src

-source 1.5 java.lang

The command will generate the javadoc for the java.lang package, excluding the six classes and interfaces identified in skip.txt.

Here is part of the generated javadoc showing the interfaces in the java.lang package. Notice that the Iterable interface is excluded.

Iterable

For additional information about creating custom doclets, see the

tip Generating Custom Doclets. | http://java.sun.com/developer/JDCTechTips/2004/tt1214.html | crawl-001 | refinedweb | 2,773 | 50.94 |

Yesterday I created trying to understand why I was getting a different value

casting an address and just casting a float ..

anyway the reason for this is this code here

My teacher wrote this in class because wanted us to understand how floating point numbers are stored.My teacher wrote this in class because wanted us to understand how floating point numbers are stored.Code:#include<iostream> using namespace std; void binaryPrint(unsigned char * buff, int size) { for (int i = (size -1) ; i >= 0 ; i --) { unsigned char mask = 128; for (int j = 0 ; j < 8 ; j ++) { cout << ((buff[i] & mask) ? "1" : "0"); mask = mask >> 1; } } cout << endl; } void main() { float i; i = -100.75f; binaryPrint( (unsigned char * ) & i, sizeof(float)); }

But I find it really hard to understand his code.

firstly how is it possible to store an integer into a char type?

is it because all numbers in computer base form is in binary? and because 128 is not greater than 255 the highest number an 8bit can take, I was able to store in a char?

second:

when i declare a type and a location is made for it in memory is it contiguous, so it like an array ?

so for an int, because memory is usually stored in bytes

it will divide and int of size 4bytes into each byte

so it is a 4 by 8 array?

1111 1111

1111 1111

1111 1111

1111 1111

so if i just declared a simple char , because a char size is already a byte it doesn't have divide into up into separate byte..so it is basically

1111 1111

if that is the case

this piece of code:

If bytes are located as arrays, 1d array, 2d array..If bytes are located as arrays, 1d array, 2d array..Code:void binaryPrint(unsigned char * buff, int size) { for (int i = (size -1) ; i >= 0 ; i --) { unsigned char mask = 128; for (int j = 0 ; j < 8 ; j ++) { cout << ((buff[i] & mask) ? "1" : "0"); mask = mask >> 1; } } cout << endl; }

were 2 loops used because char *buff is string pointer to an address that holds one byte of data

I don't really understand why i will be initialised to the size of a type..why not start from 0?

when he did buff[i]

so if i have 32 bits in memory:

1000 1111

1100 0001

1001 1011

0011 0101

if i did buff[i]

since i is 4-1 and represents row 4

i am assuming

it just takes

0011 0101 & 1000 0000

0011 0101 & 0100 0000

0011 0101 & 0010 0000

and just ands them together to see which bit is set.after the inner loop is done

it goes to row 3

and does the process again. So it does this 32 times.

so let's say instead of converting a float or int - since they are 32bits

i wanted to convert a char

now a char is 8 bits long

1111 0000

if i had a string pointer to an address in memory that holds the binary digits

so buff points to 1111 0000

1111 0000 is essentially a 1d array ..

so why is that when I use just one loop, to check which bits are set it doesn't work ?

... Unless... UnlessCode:#include <iostream> using namespace std ; void main() { char let = 9 ; unsigned char* buff = (unsigned char*)&let; unsigned char mask = 128 ; int i= 0; for(; i<8; i++) { if(buff[i] & mask) { cout << "1" ; } else { cout << "0" ; } mask >>=1 ; } cout << endl ; system("pause") ; }

because I am incrementing i..

i am moving to the next row of byte in memory...

so that wouldn't work..

i would have to do buff[0] or *buff for it to work...

please help explain.. | https://cboard.cprogramming.com/cplusplus-programming/130964-binary-converter-bit-level.html | CC-MAIN-2017-04 | refinedweb | 624 | 74.53 |

18 December 2018 0 comments Python, Web development

Last week, I landed concurrent downloads in

hashin. The example was that you do something like...

$ time hashin -r some/requirements.txt --update-all

...and the whole thing takes ~2 seconds even though it that

some/requirements.txt file might contain 50 different packages, and thus 50 different PyPI.org lookups.

Just wanted to point out, this is not unique to use with

--update-all. It's for any list of packages. And I want to put some better numbers on that so here goes...

Suppose you want to create a requirements file for every package in the current virtualenv you might do it like this:

# the -e filtering removes locally installed packages from git URLs $ pip freeze | grep -v '-e ' | xargs hashin -r /tmp/reqs.txt

Before running that I injected a little timer on each pypi.org download. It looked like this:

def get_package_data(package, verbose=False): url = "" % package if verbose: print(url) + t0 = time.time() content = json.loads(_download(url)) if "releases" not in content: raise PackageError("package JSON is not sane") + t1 = time.time() + print(t1 - t0)

I also put a print around the call to

pre_download_packages(lookup_memory, specs, verbose=verbose) to see what the "total time" was.

The output looked like this:

▶ pip freeze | grep -v '-e ' | xargs python hashin.py -r /tmp/reqs.txt 0.22896194458007812 0.2900810241699219 0.2814369201660156 0.22658205032348633 0.24882292747497559 0.268247127532959 0.29332590103149414 0.23981380462646484 0.2930259704589844 0.29442572593688965 0.25312376022338867 0.34232664108276367 0.49491214752197266 0.23823285102844238 0.3221290111541748 0.28302812576293945 0.567702054977417 0.3089122772216797 0.5273139476776123 0.31477880477905273 0.6202089786529541 0.28571176528930664 0.24558186531066895 0.5810830593109131 0.5219211578369141 0.23252081871032715 0.4650228023529053 0.6127192974090576 0.6000659465789795 0.30976200103759766 0.44440698623657227 0.3135409355163574 0.638585090637207 0.297544002532959 0.6462509632110596 0.45389699935913086 0.34597206115722656 0.3462028503417969 0.6250648498535156 0.44159507751464844 0.5733060836791992 0.6739277839660645 0.6560370922088623 SUM TOTAL TOOK 0.8481268882751465

If you sum up all the individual times it would have become 17.3 seconds. It's 43 individual packages and 8 CPUs multiplied by 5 means it had to wait with some before downloading the rest.

Clearly, this works nicely.

Follow @peterbe on Twitter | https://api.minimalcss.app/plog/concurrent-download-with-hashin | CC-MAIN-2020-24 | refinedweb | 364 | 74.29 |

This is your resource to discuss support topics with your peers, and learn from each other.

01-16-2011 06:30 AM

I am attempting to create a label from the information given in a TextInput box and need it to autoSize to the length of the text. The api describes the property for this that I am following but get the following error:

1120: Access of undefined property TextFieldAutoSize. MyIdeasBoard.as /MyIdeasBoard/s

The code for this is as follows:

var tempString:String = newTextInput.text; var thisTextField:Label = new Label(); thisTextField.text = tempString; thisTextField.autoSize = TextFieldAutoSize.RIGHT;

Grateful for any assistance

Solved! Go to Solution.

01-16-2011 07:03 AM

My experience is that you don't need to specify the width of a Label, it'll make it fit automatically. Just set the text, remove the autoSize line and add it.

01-16-2011 07:28 AM

Its not making it fit, that is why I went down the route of the autoSize, its currently cutting it off after about 10 characters and the labels need to be of various sizes.

01-16-2011 08:50 AM

hey dave,

from the looks of it everything looks fine. i am able to use the autoSize properly on my machine. what it sounds like is you are importing the wrong Label class. make sure you are importing the qnx.ui.text.Label class. hope that helps. good luck!

01-16-2011 10:17 AM

Make sure you have imported TextFieldAutoSize at the top:

import flash.text.TextFieldAutoSize;

01-16-2011 11:07 AM

Thats great thanks, I hadn't imported flash.text.TextFieldAutoSize as I had assumed that it was covered by the qnx.ui.text.Label. Its working as expected now. | https://supportforums.blackberry.com/t5/Adobe-AIR-Development/Label-autoSize-error/m-p/737781 | CC-MAIN-2017-13 | refinedweb | 293 | 67.04 |

»

Programming Diversions

Author

Abbott's Revenge

Garrett Rowe

Ranch Hand

Joined: Jan 17, 2006

Posts: 1296

posted

Aug 26, 2007 17:09:00

0

One cool resource I've found for interesting programming questions is

The online-judge problem set archive

. Although

Java

support for the online submission portion of the site leaves a lot to be desired, (currently only Java 1.2 is supported with

very

limited support for java.io.* operations), there are plenty of problems that span a whole range of difficulties. The problem I'm currently pounding my head against is problem #816

Abbott's Revenge

. This problem combines a slew of programming goodies, from parsing a domain-specific language, to finding a good abstraction of the problem (my current headache), to discovering a good algorithm to solve the maze. I figured it might be fun to discuss one or several of the interesting facets of this problem here.

To the mods: I'm not sure if its OK to post problems from this site here, but I figured since these weren't active contest problems, but more like individual programming brain teasers it might not be a problem. There are no prizes associated with solving the problems as far as I'm aware, just the general feeling of accomplishment. If it is an issue, just let me know and I'll cease and desist.

Some problems are so complex that you have to be highly intelligent and well informed just to be undecided about them. - Laurence J. Peter

Garrett Rowe

Ranch Hand

Joined: Jan 17, 2006

Posts: 1296

posted

Aug 26, 2007 17:17:00

0

More fun interactive mazes designed by

Robert Abbott

can be found

here

.

Garrett Rowe

Ranch Hand

Joined: Jan 17, 2006

Posts: 1296

posted

Aug 26, 2007 17:45:00

0

As far as the problem abstraction goes, this is what I'm currently thinking.

interface Cell { boolean isGoal(); CellCoordinates getCoordinates(); List<Cell> listCellsAvailableFrom(Direction d); Direction directionTo(Cell c) throws CellNotAdjacentException; } enum Direction {NORTH, SOUTH, EAST, WEST;} interface CellCoordinates { int getX(); int getY(); }

Maybe I'll use this as a jumping-off point and see where it leads me, although I'm sure the TDD guys will say I'm getting way ahead of myself. I just not as comfortable with their style yet.

Steve Fahlbusch

Bartender

Joined: Sep 18, 2000

Posts: 582

7

I like...

posted

Aug 29, 2007 06:02:00

0

Garrett,

On monday saw your post at work and as soon as i got home had to spend a few minutes banging this out in python - it's a great classic problem with a twist.

How goes it for you?

Just for my own interest. Did you use a forwards or backwards evaluation approach to solving the path (did backwards myself)? And as to enumerating the solution domain did you use a depth first search or breadth first search (since it seems to want a minimal solution, i did the BFS and punted at the first solution).

thanks for the link

Gabriel Claramunt

Ranch Hand

Joined: May 26, 2007

Posts: 375

I like...

posted

Sep 15, 2007 22:48:00

0

Very interesting... It hook me up immediately!

Seems "easy" to solve

: create a directed graph where the nodes represents the "hallways" and the arcs the "turns" connecting them (Yes, is somehow the "complement" of the maze's draw). Then, with Dijktra's algorithm should be a piece of cake

(and is DFS)

Too lazy to code it right now, later I'll do it as an excuse to practice Ruby.

Gabriel

Software Surgeon

Piet Verdriet

Ranch Hand

Joined: Feb 25, 2006

Posts: 266

posted

Sep 29, 2007 06:13:00

0

Thanks for posting this fun puzzle! For those interested, here's how I solved it:

import java.io.*; import java.util.*; // AbbottsRevenge class AbbottsRevenge { public AbbottsRevenge(String fileName) throws IOException { Scanner data = new Scanner(new File(fileName)); while(data.hasNextLine()) { String name = data.nextLine().trim(); if(name.equals("END")) break; MazeSolver solver = new MazeSolver(data.nextLine().trim()); Maze maze = new Maze(name, data); solver.solve(maze); System.out.println(solver); } } public static void main(String[] args) throws IOException { new AbbottsRevenge("data.txt"); // contents of data.txt: /* AtlantaMaze 4 2 N 4 3 1 1 WL NR * 1 2 WF EFR NLR * 1 3 WFL EFR NL * 1 4 ER NL * 2 1 NFR SFL WL * 2 2 NFL SFLR WFRL EL * 2 3 NF SF WFLR EFLR * 2 4 ELR NF SR * 3 1 SL WR * 3 2 NF EFL WR SLR * 3 3 EFL WL SLR * 3 4 EL SR * 0 END */ } } // Maze class Maze { String name; Map<Point, Crossing> crossings; public Maze(String n, Scanner data) { name = n; crossings = new HashMap<Point, Crossing>(); process(data); } public Direction getNextDirection(Point current, Direction last) { Crossing c = crossings.get(current); return c.popDirection(last); } private void process(Scanner data) { while(data.hasNextLine()) { String line = data.nextLine().trim(); if(line.equals("0")) break; String[] array = line.split("\\s+"); Crossing c = new Crossing(new Point(array[0], array[1])); for(int i = 2; i < array.length-1; i++) { for(int j = 1; j < array[i].length(); j++) { c.addConnection(array[i].charAt(0)+""+array[i].charAt(j)); } } crossings.put(c.point, c); } } } // Crossing class Crossing { Point point; Map<Direction, Stack<Direction>> connections; public Crossing(Point p) { point = p; connections = new HashMap<Direction, Stack<Direction>>(); } public void addConnection(String s) { Direction bearing = Direction.getDiretion(String.valueOf(s.charAt(0))); Direction heading = Direction.getDiretion(s); Stack<Direction> allHeadings = connections.remove(bearing); if(allHeadings == null) allHeadings = new Stack<Direction>(); allHeadings.push(heading); connections.put(bearing, allHeadings); } public Direction popDirection(Direction d) { Stack<Direction> stack = connections.get(d); return stack == null || stack.isEmpty() ? null : stack.pop(); } } // Point class Point { int row; int column; public Point(String r, String c) { this(Integer.parseInt(r), Integer.parseInt(c)); } public Point(int r, int c) { row = r; column = c; } public boolean equals(Object o) { Point that = (Point)o; return this.row == that.row && this.column == that.column; } public int hashCode() { return row*37 ^ column*43; } public Point move(Direction d) { switch(d) { case NORTH : return new Point(row-1, column); case SOUTH : return new Point(row+1, column); case EAST : return new Point(row, column+1); default : return new Point(row, column-1); } } public String toString() { return "("+row+","+column+")"; } } // MazeSolver class MazeSolver { Point finish; Maze maze; Stack<Decision> stack; List<Decision> path; public MazeSolver(String s) { String[] array = s.split("\\s+"); Point start = new Point(array[0], array[1]); Direction bearing = Direction.getDiretion(array[2]); finish = new Point(array[3], array[4]); stack = new Stack<Decision>(); stack.push(new Decision(start, bearing)); } private void move(Direction d) { Point next = stack.peek().point.move(d); stack.push(new Decision(next, d)); } private Direction lastDirection() { return stack.peek().direction; } private Point currentPoint() { return stack.peek().point; } private void removeCycles() { if(stack.size() == 1) return; path = new ArrayList<Decision>(stack); for(int i = 0; i < path.size(); i++) { Decision temp = path.get(i); int start = path.indexOf(temp); int end = path.lastIndexOf(temp); while(end > start) { path.remove(end--); } } } public void solve(Maze m) { maze = m; move(lastDirection()); while(true) { Direction next = maze.getNextDirection( currentPoint(), lastDirection()); if(next != null) { move(next); } else { stack.pop(); } if(currentPoint().equals(finish) || stack.size() == 1) break; } removeCycles(); } public String toString() { StringBuilder b = new StringBuilder(maze.name).append('\n').append(' '); if(path == null) return b.append(" No Solution Possible").toString(); for(int i = 0; i < path.size(); i++) { if(i > 0 && i%10 == 0) b.append('\n').append(' '); b.append(' ').append(path.get(i).point); } return b.toString(); } } // Decision class Decision { Point point; Direction direction; public Decision(Point p, Direction d) { point = p; direction = d; } public boolean equals(Object o) { Decision that = (Decision)o; return this.point.equals(that.point) && this.direction == that.direction; } public int hashCode() { return point.hashCode()*13 ^ direction.hashCode()*47; } } // Direction enum Direction { NORTH, EAST, SOUTH, WEST; public static Direction getDiretion(String s) { if(s.equals("WR") || s.equals("EL") || s.equals("NF") || s.equals("N")) { return NORTH; } else if(s.equals("NR") || s.equals("SL") || s.equals("EF") || s.equals("E")) { return EAST; } else if(s.equals("ER") || s.equals("WL") || s.equals("SF") || s.equals("S")) { return SOUTH; } else { return WEST; } } }

I agree. Here's the link:

subject: Abbott's Revenge

Similar Threads

Need excellent Java Programming Resource

Math problem with WAS 4.1

I can't run my Servlet

Binary Notes

Finding the largest Prime Factor

All times are in JavaRanch time: GMT-6 in summer, GMT-7 in winter

JForum

|

Paul Wheaton | http://www.coderanch.com/t/35482/Programming/Abbott-Revenge | CC-MAIN-2014-52 | refinedweb | 1,429 | 56.76 |

This is another post about ASP.NET Core and Angular 2. This time I use a cleaner and more light weight way to host an Angular 2 App inside an ASP.NET Core Web. I'm going to use dotnet CLI and Visual Studio Code.

A few days ago there was an update for ASP.NET Core announced. This is not a big one, but a important run-time update. You should install it, if you already use ASP.NET Core 1.0. If you install it the first time (loaded from), the update is already included. Also since a few days, the final version of Angular2 was announced. So, we will use Angular 2.0.0 and ASP.NET Core 1.0.1.

This post is structured into nine steps:

#1 Create the ASP.NET Core Web

The first step is to create the ASP.NET Core web application this is the easiest thing using the dotnet CLI. After downloading it from and installing it, you are directly able to use it. Choose any console you like and g to your working folder.

Type the following line to create a new web application inside that working folder:

> dotnet new -t web

If you used the dotnet CLI for the first time it will take a few seconds. After the first time it is pretty fast.

Now you have a complete ASP.NET Core quick-start application. Almost the same thing you get, if you create a new application in Visual Studio 2015.

Now we need to restore the NuGet packages, which contains all the .NET Core and ASP.NET dependencies

> dotnet restore

This takes a few seconds, depending in the amount of packages or on the internet connection.

If this is done, type

dotnet run to start the app. You will see an URL in the console. Copy this URL and paste it into the browsers address bar. As you can see, you just need three console commands to create a working ASP.NET application.

#2 Setup the ASP.NET Core Web

To support a Angular2 single page application we need to prepare the

Startup.cs a little bit. Because we don't want to use MVC, but just the web API, we need to remove the configured default route.

To support Angular routing, we need to handle 404 errors: In case a requested resource was not found on the server, it could be a Angular route. This means we should redirect request, which results in a error 404, to the

index.html. We need to create this file in the

wwwroot folder later on.

The

Configure method in the

Startup.cs now looks(); }

#3 The Front-End Dependencies.

NPM is used to get all that stuff, including Angular itself, to the development machine. We need to configure the

package.json a little bit. The easiest way is to use the same configuration as in the ANgular2 quick-start tutorial on angular.io

You need to have Node.JS installed on your machine, To get all the tools working.

{ " } }

You should also install Webpack, Typings and TypeScript globaly on your machine:

> npm install -g typescript > npm install -g typings > npm install -g webpack

The TypeScript build needs a configuration, to know how to build that code. This is why we need a

tsconfig.json:

{ "compilerOptions": { "target": "es5", "module": "commonjs", "moduleResolution": "node", "sourceMap": true, "emitDecoratorMetadata": true, "experimentalDecorators": true, "removeComments": false, "noImplicitAny": false } }

And TypeScript needs type defintions for all the used libraries, which are not written in TypeScript. This is where Typings is used. Typings is a kind of a package manager for TypeScript type definitions, which also needs a configuration:

{ " } }

Now we can use

npm install in the console to load all that stuff. This command automatically calls

typings install as a NPM post install event.

#4 Setup the Single Page

The Angular2 app is hosted on a single HTML page inside the

wwwroot folder of the ASP.NET Core web. Add a new

index.html and add it to the

wwwroot folder:

>

Currently we don't have the JavaSript dependencies configured. This is what we will do in the next step

#5 Configure Webpack

Webpack has two tasks in this simple tutorial. The first thing is to copy some dependencies out of the

node_modules folder into the

wwwroot folder, because static files will only be provided out of this special folder. We need Core.JS, Zone.JS, Reflect-Metadata and System.JS. The second task is to build and bundle the Angular2 application (which is not yet written) and all it's dependencies.

Let's see how this simple Webpack configuration (webpack.config.js) looks like:' } ] } }];

We have two separate configurations for the mentioned tasks. This is not the best way how to configure Webpack. E.g. the Angular2 Webpack Starter or the latest Angular CLI, do the whole stuff with a more complex Webpack configuration.

To run this configuration, just type

webpack in the console. The first configuration writes out a few warnings, but will work anyway. The second config should fail, because we don't have the Angular2 app yet.

#6 Configure the App

We now need to load the Angular2 app and it's dependencies. This is done with System.JS which also needs a ocnfiguration. We need a systemjs.config.js:

/** *);

This file is almost equal to the file from the angular.io quick-start tutorial. We just need to change a few things:

The first thing is the path to the node_modules which is not on the same level as usual. So we need to change the path to

../node_modules/, we also need to tell System.js that the bundle is not a commonjs module. this is doen with the

meta property. I also changed the app main path to

./bundle.js, instead of

main.js

#7 Create the App

Inside the

wwwroot folder, create a new folder called

app. Inside this new folder we need to create a first TypeScript file called main.ts:

import { platformBrowserDynamic } from '@angular/platform-browser-dynamic'; import { AppModule } from './app.module'; const platform = platformBrowserDynamic(); platform.bootstrapModule(AppModule);

This script calls the app.module.ts, which is the entry point to the app: { }

The module collects all the parts of our app and puts all the components and services together.

This is a small component with a small inline template:[] = []; }

At least, we need to create a service which calls a ASP.NET Core web api. We need to create the API later on.; }

#8 The Web API

The web api is pretty simple in this demo, just to show how it works:; } } }

If you start the app using

dotnet run you can call the API using that URL:, you'll see the three persons in the browser as a JSON result.

#9 That's It. Run the App.

Type

webpack and

dotnet run in the console to compile and pack the client app and to start the application. After that call the URL in a browser:

Conclusion

As you can see, hosting an Angular2 app inside ASP.NET Core web using this way is pretty much easier and much more light weight than using Visual Studio 2015..

I pushed the demo code to GitHub. Try it out, play around with it and give me some feedback about it :)

{{ parent.title || parent.header.title}}

{{ parent.tldr }}

{{ parent.linkDescription }}{{ parent.urlSource.name }} | https://dzone.com/articles/aspnet-core-and-angular-2-using-cli-and-visual-stu | CC-MAIN-2017-04 | refinedweb | 1,233 | 68.06 |

Steps to reproduce:

# create a large (>4gb) file

f = open('foo.txt', 'wb')

text = 'a' * 1024**2

for i in xrange(5 * 1024):

f.write(text)

f.close()

# now zip the file

import zipfile

z = zipfile.ZipFile('foo.zip', mode='w', allowZip64=True)

z.write('foo.txt')

z.close()

Now inspect the file headers using a hex editor. The written headers are incorrect. The filesize and compressed size should be written as 0xffffffff and the 'extra field' should contain the actual sizes.

Tested on Python 2.5 but looking at the latest code in 3.2 it still looks broken.

The problem is that the ZipInfo.FileHeader() is written before the filesize is populated, so Zip64 extensions are not written. Later, the sizes in the header are written, but Zip64 extensions are not taken into account and the filesize is just wrapped (7gb becomes 3gb, for instance).

My patch fixes the problem on Python 2.5, it might need minor porting to fix trunk. It works by assigning the uncompressed filesize to the ZipInfo header initially, then writing the header. Then later on, I re-write the header (this is okay since the header size will not have increased.) | https://bugs.python.org/msg115250 | CC-MAIN-2018-51 | refinedweb | 199 | 77.74 |

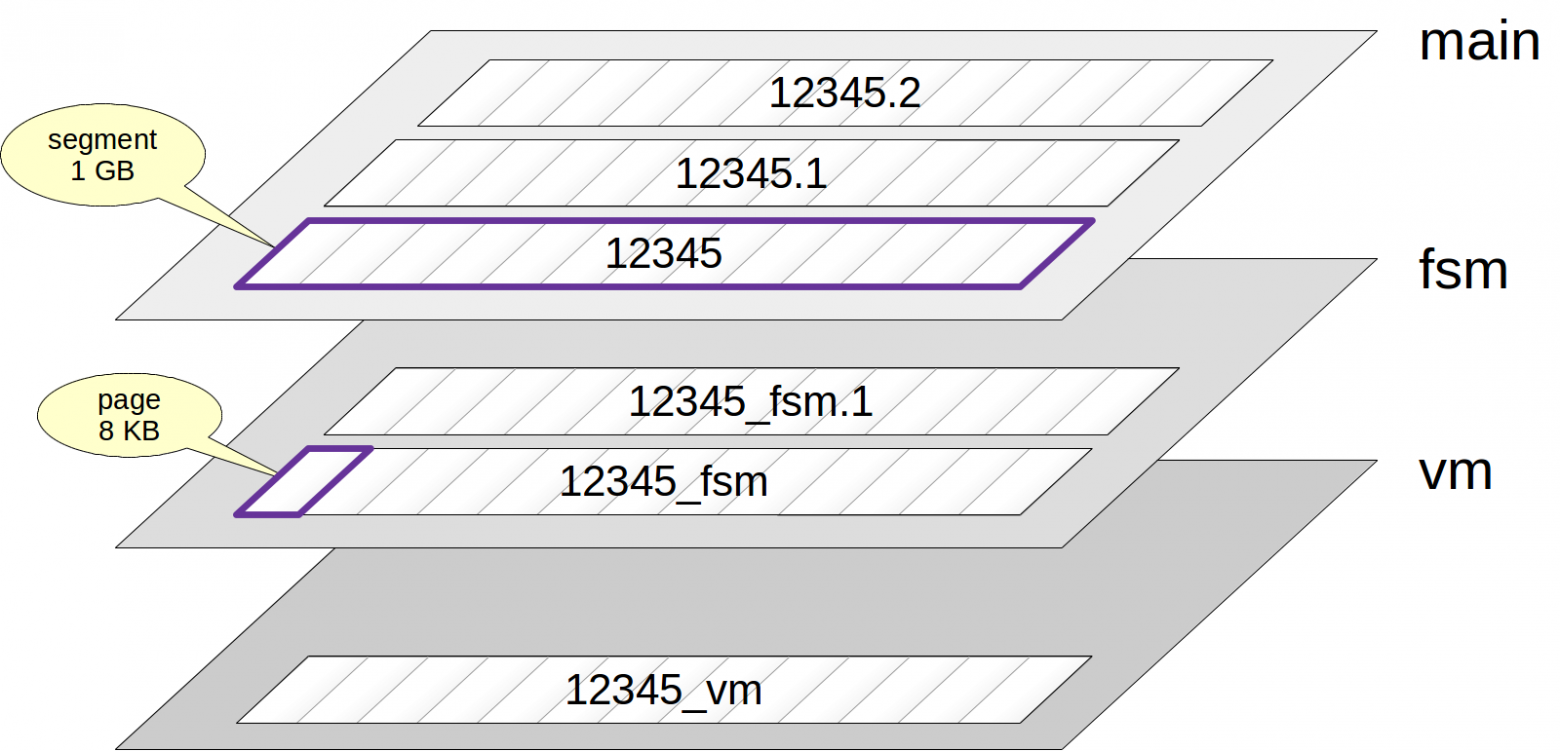

A layout manager which arranges widgets horizontally or vertically. More...

#include <Wt/WBoxLayout>

A layout manager which arranges widgets horizontally or vertically.

This layout manager arranges widgets horizontally or vertically inside the parent container.

The space is divided so that each widget is given its preferred size, and remaining space is divided according to stretch factors among widgets. If not all widgets can be given their preferred size (there is not enough room), then widgets are given a smaller size (down to their minimum size). If necessary, the container (or parent layout) of this layout is resized to meet minimum size requirements.

The preferred width or height of a widget is based on its natural size, where it presents its contents without overflowing. WWidget::resize() or (CSS

width,

height properties) can be used to adjust the preferred size of a widget.

The minimum width or height of a widget is based on the minimum dimensions of the widget or the nested layout. The default minimum height or width for a widget is 0. It can be specified using WWidget::setMinimumSize() or using CSS

min-width or

min-height properties.

You should use WContainerWidget::setOverflow(OverflowAuto) or use a WScrollArea to automatically show scrollbars for widgets inserted in the layout to cope with a size set by the layout manager that is smaller than the preferred size.

When the container of a layout manager does not have a defined size (by having an explicit size, or by being inside a layout manager), or has has only a maximum size set using WWidget::setMaximumSize(), then the size of the container will be based on the preferred size of the contents, up to this maximum size, instead of the default behaviour of constraining the size of the children based on the size of the container. Note that because of the CSS defaults, a WContainer has by default no height, but inherits the width of its parent widget. The width is thus by default defined.

A layout manager may provide resize handles between items which allow the user to change the automatic layout provided by the layout manager (see setResizable()).

Each item is separated using a constant spacing, which defaults to 6 pixels, and can be changed using setSpacing(). In addition, when this layout is a top-level layout (i.e. is not nested inside another layout), a margin is set around the contents. This margin defaults to 9 pixels, and can be changed using setContentsMargins(). You can add more space between two widgets using addSpacing().

For each item a stretch factor may be defined, which controls how remaining space is used. Each item is stretched using the stretch factor to fill the remaining space.

Usage example:

Enumeration of the direction in which widgets are layed out.

Creates a new box layout.

This constructor is rarely used. Instead, use the convenient constructors of the specialized WHBoxLayout or WVBoxLayout classes.

Use

parent =

0 to created a layout manager that can be nested inside other layout managers.

Adds a layout item.

The item may be a widget or nested layout.

How the item is layed out with respect to siblings is implementation specific to the layout manager. In some cases, a layout manager will overload this method with extra arguments that specify layout options.

Implements Wt::WLayout.

Adds a nested layout to the layout.

Adds a nested layout, with given

stretch factor.

Adds extra spacing.

Adds extra spacing to the layout.

Adds a stretch element.

Adds a stretch element to the layout. This adds an empty space that stretches as needed.

Adds a widget to the layout.

Adds a widget to the layout, with given

stretch factor. When the stretch factor is 0, the widget will not be resized by the layout manager (stretched to take excess space).

The

alignment parameter is a combination of a horizontal and/or a vertical AlignmentFlag OR'ed together.).

Removes and deletes all child widgets and nested layouts.

This is similar to WContainerWidget::clear(), with the exception that the layout itself is not deleted.

Implements Wt::WLayout.

Returns the number of items in this layout.

This may be a theoretical number, which is greater than the actual number of items. It can be used to iterate over the items in the layout, in conjunction with itemAt().

Implements Wt::WLayout.

Returns the layout direction.

Inserts a nested layout in the layout.

Inserts a nested layout in the layout at position

index, with given

stretch factor.

Inserts extra spacing in the layout.