
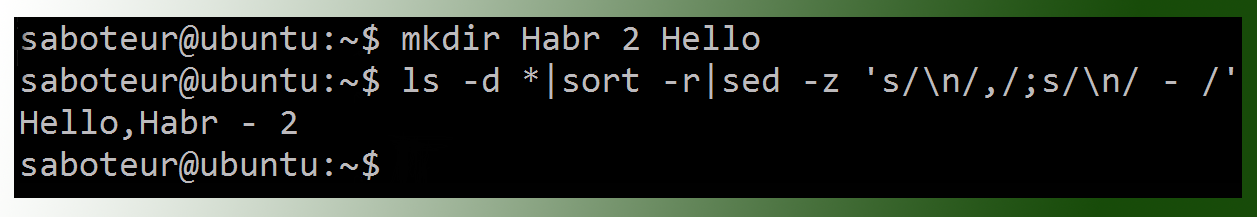


Checking Program Style with style61b

On the instructional machines, you can run our version of the open-source `checkstyle` program with the command

    style61b FILE.java ...

where `FILE.java ...` denotes one or more names of Java source files. The program will exit normally if there are no style violations, and otherwise will exit with a non-zero exit code.

`style61b` is a simple script that invokes `checkstyle` configured with our official style parameters. For home use, it is included in our `cs61b-software` package.

The rest of this document details the style rules that `style61b` enforces.

Whitespace

- W1. Each file must end with a newline sequence.

- W2. Files may not contain horizontal tab characters. Use blanks only for indentation.

- W3. No line may contain trailing blanks.

W4. Do NOT put whitespace:

- Around the "<" and ">" within a generic type designation ("List<Integer>", not "List <Integer>", or "List< Integer >").

- After the prefix operators "!", "--", "++", unary "-", or unary "+".

- Before the tokens ";" or the suffix operators "--" and "++".

- After "(" or before ")".

- After "."

W5.".

W6. In general, break (insert newlines in) lines before an operator, as in

... + 20 * X + Y

- W7. Do not separate a method name from the "(" in a method call with blanks. However, you may separate them with a newline followed by blanks (for indentation) on long lines.

Indentation

- I1. The basic indentation step is 4 spaces.

I2. Indent code by the basic indentation step for each block level (blocks are generally enclosed in "{" and "}"), as in

    if (x > 0) {
        r = -x;
    } else {
        r = x;
    }

I3. Indent 'case' labels at the same level as their enclosing 'switch', as in

    switch (op) {
    case '+':
        addOpnds(x, y);
        break;
    default:
        ERROR();
    }

- I4. Indent continued lines by the basic indentation step.

Braces

- BR1. Use { } braces around the statements of all 'if', 'while', 'do', and 'for' statements.

BR2. Place a "}" brace on the same line as a following "else", "finally", or "catch", as in

    if (x > 0) {
        y = -x;
    } else {
        y = x;
    }

- BR3..

Comments

- C1. Every class and field must have a Javadoc (`/** ... */`) comment. Every method must either have a Javadoc comment, or an `@Override` annotation.

- C2. Each parameter of a method must be mentioned in its Javadoc comment, using either a `@param` tag, or spelled out in all capital letters in running text. Parameters that begin with "dummy", "ignored", or "unused" do not need to be commented.

- C3. Methods that return non-void values must describe them in their Javadoc comment either with a "@return" tag or in a phrase in running text that contains the word "return", "returning", or "returns".

- C4. The Javadoc comment on every class must contain an @author tag.

- C5. Each Javadoc comment must start with a properly formed sentence, starting with a capital letter and ending with a period.

- C6. Do not use C++-style (`//`) comments. If they appear in a skeleton file provided by the staff, they are intended to be removed.

- C7. In method bodies, use `/* ... */`-style comments only at the beginning of the method. That is, avoid internal comments.

- C8. Comments must appear alone on their line(s).

Names

- N1. Names of static final constants must be in all capitals (e.g., `RED`, `DEFAULT_NAME`).

- N2. Names of parameters, local variables, and methods must start with a lower-case letter, or consist of a single, upper-case letter.

- N3. Names of types (classes), including type parameters, must start with a capital letter.

- N4. Names of packages must start with a lower-case letter.

- N5. Names of instance variables and non-final class (static) variables must start with either a lower-case letter or "_".

Imports

- IM1. Do not use 'import PACKAGE.*', unless the package is `java.lang.Math`, `java.lang.Double`, or `org.junit.Assert`. `import static CLASS.*` is OK.

- IM2. Do not import the same class or static member twice.

- IM3. Do not import classes or members that you do not use.

Assorted Java Style Conventions

- S1. Write array types with the "[]" after the element-type name, not after the declarator. For example, write "`String[] names`", not "`String names[]`".

S2. Write any modifiers for methods, classes, or fields in the following order:

- public, protected, or private.

- abstract or static.

- final, transient, or volatile.

- synchronized.

- native.

- strictfp.

S3.".

S4. Do not use empty blocks ('{ }' with only whitespace or comments inside) for control statements. There is one exception: a catch block may consist solely of comments having the form

/* Ignore EXCEPTIONNAME. */

S5. Avoid "magic numbers" in code by giving them symbolic names, as in

    public static final int MAX_SIZE = 100;

Exceptions are the numerals -16 through 16, 100, 1000, 0.5, -0.5, 0.25, -0.25.

- S6. Do not try to catch the exceptions Exception, RuntimeException, or Error.

- S7. Write "`b`" rather than "`b == true`" and "`!b`" rather than "`b == false`".

S8. Replace

    if (condition) {
        return true;
    } else {
        return false;
    }

with just

    return condition;

- S9. Only static final fields of classes may be public. Other fields must be private or protected.

- S10. Classes that have only static methods and fields must not have a public (or defaulted) constructor.

- S11. Classes that have only private constructors must be declared "final".
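
Rules S7 and S8 can be illustrated with a small sketch. The class and method names here (`BooleanStyle`, `isPositiveVerbose`, `isPositive`) are made up for illustration and are not part of the course software; the sketch only shows that the short form computes the same result as the long form.

```java
/** Illustrates rules S7 and S8 (hypothetical example class). */
final class BooleanStyle {

    /** Private constructor: this class has only static members (S10). */
    private BooleanStyle() {
    }

    /** Returns true iff X is positive, written in the discouraged style. */
    static boolean isPositiveVerbose(int x) {
        if ((x > 0) == true) {
            return true;
        } else {
            return false;
        }
    }

    /** Returns true iff X is positive, written as S7 and S8 recommend. */
    static boolean isPositive(int x) {
        return x > 0;
    }

    /** Prints the results of both versions for one sample value. */
    public static void main(String[] ignored) {
        System.out.println(isPositiveVerbose(3) + " " + isPositive(3));
    }
}
```

Both methods agree on every input; the second simply returns the condition itself.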

Avoiding Error-Prone Constructs

- E1. If a class overrides `.equals`, it must also override `.hashCode`.

E2. Local variables and parameters must not shadow field names. The preferred way to handle, e.g., getter/setter methods that simply control a field is to prefix the field name with "_", as in

    public double getWidth() {
        return _width;
    }

    public void setWidth(double width) {
        _width = width;
    }

- E3. Do not use nested assignments, such as "`if ((x = next()) != null) ...`". Although this can be useful in C, it is almost never necessary in Java.

- E4. Include a `default` case in every `switch` statement.

E5. End every arm of a "switch" statement either with a `break` statement or a comment of the form

    /* fall through */

E6. Do not compare String literals with `==`. Write

    if (x.equals("something"))

and not

    if (x == "something")

There are cases where you really want to use `==`, but you are unlikely to encounter them in this class.
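
As a rough sketch of rules E1, E2, and E6 working together, consider the small class below. `LabeledPoint` and its members are hypothetical names chosen for this example only; the use of `java.util.Objects.hash` is one common way to write `hashCode`, not something the rules require.

```java
import java.util.Objects;

/** A labeled coordinate illustrating E1, E2, and E6 (hypothetical). */
final class LabeledPoint {
    /** Label of this point. */
    private final String _label;
    /** X coordinate of this point. */
    private final int _x;

    /** A point labeled LABEL at coordinate X. */
    LabeledPoint(String label, int x) {
        _label = label;
        _x = x;
    }

    /** Returns true iff this point's label has the same text as NAME. */
    boolean hasLabel(String name) {
        /* E6: compare string contents with .equals, not with ==. */
        return _label.equals(name);
    }

    @Override
    public boolean equals(Object other) {
        if (!(other instanceof LabeledPoint)) {
            return false;
        }
        LabeledPoint p = (LabeledPoint) other;
        return _x == p._x && _label.equals(p._label);
    }

    /* E1: equals is overridden above, so hashCode is overridden too. */
    @Override
    public int hashCode() {
        return Objects.hash(_label, _x);
    }

    /** Prints whether two equal-looking points compare as equal. */
    public static void main(String[] ignored) {
        LabeledPoint a = new LabeledPoint(new String("origin"), 0);
        LabeledPoint b = new LabeledPoint("origin", 0);
        System.out.println(a.equals(b));
    }
}
```

The fields carry a leading "_" so that the constructor parameters `label` and `x` do not shadow them (E2).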

Limits

- L1. No file may be longer than 2000 lines.

- L2. No line may be longer than 80 characters.

- L3. No method may be longer than 60 lines.

- L4. No method may have more than 8 parameters.

- L5. Every file must contain exactly one outer class (nested classes are OK).

PGX supports referring to entities (e.g., graphs, properties) by name. Since multiple sessions can refer to entities by name, there could be cases in which different sessions want to use the same name for different entities. Since version 19.4.0, PGX supports separate namespaces: the name of an entity lives in a certain namespace where it must be unique, but different namespaces can contain entities with the same name.

For example, for graph names each session has its own session-private namespace: it can choose any name without affecting other sessions, and it cannot see the contents of other sessions' private namespaces.

In addition to the session-private namespace there is a public namespace for published graphs (e.g., published via the `publishWithSnapshots()` or the `publish()` methods).

Similarly, each published graph defines a public namespace for published properties as well as per-session, private namespaces, so that different sessions can create properties with the same name on a published graph. Private graphs only have a private namespace.

We illustrate more details focusing on graphs and then provide some additional details regarding properties; the same behavior applies to named entities as well.

Graphs that are created in a session, whether through loading (e.g., `readGraphWithProperties()`), with the graph builder, or through mutations, take up a name in the session-private namespace.

A graph will be placed in the public namespace only through publishing (i.e., when calling the `publishWithSnapshots()` or the `publish()` methods).

Publishing a graph will move its name from the session-private namespace to the public namespace.

There can only be one graph with a given name in a given namespace, but a name can be used in different namespaces to refer to different graphs.

An operation that creates a new graph (e.g., `readGraphWithProperties()`) will fail if the chosen name of the new graph already exists in the session-private namespace.

Publishing a graph fails if there is already a graph in the public namespace with the same name.

There are two ways to retrieve a graph by name: with or without explicitly mentioning the namespace.

With `getGraph(Namespace, String)`, you need to provide the namespace (either session private or public); the graph then will be looked up in the given namespace only.

With `getGraph(String)`, the provided name will be first looked up in the private namespace and, if no graph with the given name is found there, in the public one.

In other words, if a graph with the same name is defined in both the public and the private namespaces, `getGraph(String)` will return the private graph and you need to use `getGraph(Namespace, String)` to get hold of the public graph with that name.
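
The lookup order described above can be modeled with two plain maps. This is a toy model only: `NamespaceLookupSketch` and its methods are hypothetical, graphs are represented as strings, and none of this code calls or reproduces the PGX API. It is meant to show the private-first, public-second rule and the uniqueness checks, under the assumption that one object stands for one session.

```java
import java.util.HashMap;
import java.util.Map;

/** Toy model of name lookup across namespaces; NOT the PGX API. */
final class NamespaceLookupSketch {
    /** Names visible only to this modeled session. */
    private final Map<String, String> _privateGraphs = new HashMap<>();
    /** Names visible to every modeled session. */
    private final Map<String, String> _publicGraphs;

    /** A modeled session sharing the public namespace PUBLICGRAPHS. */
    NamespaceLookupSketch(Map<String, String> publicGraphs) {
        _publicGraphs = publicGraphs;
    }

    /** Adds GRAPH as NAME privately; fails if NAME is taken there. */
    void create(String name, String graph) {
        if (_privateGraphs.putIfAbsent(name, graph) != null) {
            throw new IllegalStateException("name in use: " + name);
        }
    }

    /** Moves NAME from the private to the public namespace. */
    void publish(String name) {
        String graph = _privateGraphs.get(name);
        if (graph == null || _publicGraphs.containsKey(name)) {
            throw new IllegalStateException("cannot publish: " + name);
        }
        _privateGraphs.remove(name);
        _publicGraphs.put(name, graph);
    }

    /** Returns the graph NAME, private first, then public, or null. */
    String lookup(String name) {
        String found = _privateGraphs.get(name);
        return found != null ? found : _publicGraphs.get(name);
    }

    /** Returns the graph NAME from the public namespace, or null. */
    String lookupPublic(String name) {
        return _publicGraphs.get(name);
    }

    /** Shows that a private name hides a public one for plain lookup. */
    public static void main(String[] ignored) {
        Map<String, String> shared = new HashMap<>();
        NamespaceLookupSketch s1 = new NamespaceLookupSketch(shared);
        NamespaceLookupSketch s2 = new NamespaceLookupSketch(shared);
        s1.create("g", "graph-from-s1");
        s1.publish("g");
        s2.create("g", "graph-from-s2");
        System.out.println(s2.lookup("g"));
        System.out.println(s2.lookupPublic("g"));
    }
}
```

In this model the second session sees its own private graph through the plain lookup and has to name the public namespace explicitly to reach the published one, which mirrors the behavior the text describes for `getGraph(String)` and `getGraph(Namespace, String)`.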

To see the currently used names in a namespace you can use the `PgxSession.getGraphs(Namespace)` method, which will list all the names in the given namespace.

The names in the returned collection can be used in a `getGraph(Namespace, String)` call to retrieve the corresponding `PgxGraph`.

Property names behave in a similar way as graph names.

All property names of a non-published graph are in the session-private namespace.

Once a graph is published (with the `PgxGraph.publishWithSnapshots()` or the `PgxGraph.publish()` methods), its properties are published as well and their names move into the public namespace.

Once a graph is published, newly created properties will still be private to the session and their names will be in the private namespace.

Those properties can be published individually with the `Property.publish()` method, as long as no other property with the same name is already published for that graph.

Additionally, new private properties can be created with the same name as an already-published property (since the names are part of separate namespaces).

To handle such situations and retrieve the correct property, the PGX API offers the `getVertexProperty(Namespace, String)` and the `getEdgeProperty(Namespace, String)` methods, which allow specifying the namespace where the property name should be looked up.

Similarly to graphs, if you search for a property without specifying the namespace, the private namespace is searched first and the search proceeds to the public namespace only if the former fails; this is the case, for example, for the `getVertexProperty(String)` and `getEdgeProperty(String)` methods and for PGQL queries.

Likewise, when a mutation on a graph reads or writes a property referred to by name and two properties exist with the same name, the property in the private namespace is selected.

To override the default selection, some mutation mechanisms accept a collection of specific `Property` objects to be copied into the mutated graph.

For example, such a mechanism is supported for filter expressions; see the Creating Subgraphs page for more details.

Guido van Rossum Unleashed

Ruby

by Luke

Thoughts on Ruby?

Guido:

I just looked it up -- I've never used it. Like Parrot, it looks like a mixture of Python and Perl to me. That was fun as an April Fool's joke, but doesn't tickle my language sensibilities the right way.

That said, I'm sure it's cool. I hear it's very popular in Japan. I'm not worried.

Data Structures Library

by GrEp

I love python for making quick hacks, but the one thing that I haven't seen is a comprehensive data structures library. Is there one in development that you would like to comment about or point us to?

Guido:

One of Python's qualities is that you don't need a large data structures library. Rather than providing the equivalent of a 256-part wrench set, with a data type highly tuned for each different use, Python has a few super-tools that can be used efficiently almost everywhere, and without much training in tool selection. Sure, for the trained professional it may be a pain not to have singly- and doubly-linked lists, binary trees, and so on, but for most folks, dicts and lists just about cover it, and even inexperienced programmers rarely make the wrong choice between those two.

Since this is of course a simplification, I expect that we will gradually migrate towards a richer set of data types. For example, there's a proposal for a set type (initially to be added as a module, later as a built-in type) floating. See and.

[j | c]Python

by seanw .NET initiative)?

Guido:

Note that the new name is Jython, by the way. Check out -- they're already working on a 2.1 compatible release.

We used to work really close -- originally, when JPython was developed at CNRI by Jim Hugunin, Jim & I would have long discussions about how to implement the correct language semantics in Java. When Barry Warsaw took over, it was pretty much the same. Now that it's Finn Bock and Samuele Pedroni in Europe, we don't have the convenience of a shared whiteboard any more, but they are on the Python developers mailing list and we both aim to make it possible for Jython to be as close to Python in language semantics as possible. For example, one of my reasons against adding Scheme-style continuations to the language (this has seriously been proposed by the Stackless folks) is that it can't be implemented in a JVM. I find the existence of Jython very useful because it reminds me to think in terms of more abstract language semantics, not just implementation details.

IMO the portability of C Python is better than that of Jython, by the way. True, you have to compile C Python for each architecture, but there are fewer platforms without a C compiler than platforms without a decent JVM.

Jython is mostly useful for people who have already chosen the Java platform (or who have no choice because of company policy or simply what the competition does). In that world, it is the scripting and extension language of choice.

does Python need a CPAN?

by po_boy

One of the reasons I still write some things in PERL is because I know that I can find and install about a zillion modules quickly and easily through the CPAN repository and CPAN module. I'm pretty sure that if Python had something similar, like the Vaults of Parnassus but more evolved that I would abandon PERL almost entirely.

Do you see things in a similar way? If so, why has Python not evolved something similar or better, and what can I do to help it along in this realm?

Guido:

It's coming! Check out the action in the catalog-sig. You can help by joining.

One reason why it hasn't happened already is that first we needed to have a good package installation story. With the widespread adoption of distutils, this is taken care of, and I foresee a bright future for the catalog activities.

Favourite Python sketch?

by abischof

Considering that you named the language after the comedy troupe, what's your favourite Monty Python sketch? Personally, my favourite is the lecture on sheep aircraft, but I suppose that's a discussion for another time ;).

Guido:

I'm a bit tired of them actually. I guess I've been overexposed. :-)

Conflict with GPL

by MAXOMENOS

The Free Software foundation mentions the license that comes with Python versions 1.6b1 and later as being incompatible with the GPL. In particular they have this to say about it:

This is a free software license but is incompatible with the GNU GPL. The primary incompatibility is that this Python license is governed by the laws of the "State" of Virginia in the USA, and the GPL does not permit this.

So, my question is a two parter:

1. What was your motivation for saying that Python's license is governed by the laws of Virginia?

2. Is it possible that a future Python license could be GPL-compatible again?

Guido:

Let me answer the second part first. I asked the FSF to make a clear statement about the GPL compatibility of the Python 2.1, and their lawyer gave me a very longwinded hairsplitting answer that said neither yes nor no. You can read for yourself at. I find this is very disappointing; I had thought that with the 1.6.1 release we had most of this behind us, but apparently they change their position at each step in the negotiations.

I don't personally care any more whether Python will ever be GPL-compatible -- I'm just trying to do the FSF a favor because they like to use Python. With all the grief they're giving me, I wonder why I should be bothered any more.

As for the first part: most of you should probably skip right to the next question -- this answer is full of legal technicalities. I've spent waaaaaaaaay too much time talking and listening to lawyers in the past year! :-(

Anyway. The Python 1.6 license was written by CNRI, my employer until May 2000, where I did a lot of work on Python. (Before that, of course, I worked at CWI in Amsterdam, whom I have to thank for making my early work on Python possible.) CNRI own the rights to Python versions 1.3 through 1.6, so they have every right to pick the license.

CNRI's lawyers designed the license with two goals in mind:(1) maximal protection of CNRI, (2) open source. (If (2) hadn't been a prerequisite for my employment at CNRI, they would have preferred not to release Python at all. :-)

Almost every feature of the license works towards protecting CNRI against possible lawsuits from disappointed Python users (as if there would be any :-), and the state of Virginia clause is no exception. CNRI's lawyers believe that sections 4 and 5 of the license (the all caps warnings disclaiming all warranties) only provide adequate protection against lawsuits when a specific state is mentioned whose laws and courts honor general disclaimers. There are some states where consumer protection laws make general disclaimers illegal, so without the state of Virginia clause, they fear that CNRI could still be sued in such a state. (Being a consumer myself, I'm generally in favor of such consumer protection laws, but for open source software that is downloadable for free, I agree with CNRI that without a general disclaimer the author of the software is at risk. I'm happy that Maryland, for example, is considering to pass a law that makes a special exception for open source software here.)

Python 1.6.1, the second "contractual obligation release" (1.6 was the first), was released especially to change CNRI's license in a way that resolved all but one of the GPL incompatibilities in the 1.6 license. I'm not going to explain what those incompatibilities were, or how they were resolved. Just look for yourself by following the "accept license" link at. The relevant changes are all in section 7 of the license, which now contains several excruciating sentences crafted to disable certain other clauses of the license under certain conditions involving the GPL. Read it and weep.

The remaining incompatibility, according to the FSF, is the "click-to-accept" feature of the license. This is another feature to protect CNRI -- their lawyers believe that this is necessary to make the license a binding agreement between the user and CNRI. The FSF is dead against this, and their current position is that because the GPL does not require such an "acceptance ceremony" (their words), any license that does is incompatible with the GPL. It's like the old story of the irresistible force meeting the immovable object: CNRI's lawyers have carefully read the GPL and claim that CNRI's license is fully compatible with the GPL, so you can take your pick as to which lawyer you believe.

Anyway, I removed the acceptance ceremony from the 2.1 license, in the hope that this would satisfy the FSF. Unfortunately, the FSF's response to the 2.1 license (see above) seems to suggest that they have changed their position once again, and are now requesting other changes in the license. I'm very, very tired of this, so on to the next question!

Structured Design.

by Xerithane

First off, as a disclaimer I have never actually written anything in Python. But, I have read up on virtually all the introduction articles and tutorials so I have a grasp on syntax and structure.?

Guido:

What's wrong with the legibility answer? I think that's an *excellent* reason! Don't care if your code is legible?

Don't you hate code that's not properly indented? Making it part of the syntax guarantees that all code is properly indented!

When you use braces, there are several different styles of brace placement (e.g. whether the open brace sits on the same line as the "if" or on the next, and if on the next, whether it is indented or not; ditto for the close brace). If you're used to code written in one style, it can be difficult to read code written in another. Most people, when skimming code, look for the indentation anyway. This leads to sometimes easily overlooked bugs like this one:

    if (x 10)
        x = 10;
        y = 0;

Still not convinced? In 1974, Don Knuth predicted that indentation would eventually become a viable means of structuring code, once program units were small enough. (Full quotation:)

Still not convinced? You admit that you haven't tried it yet. Almost everybody who tries it gets used to it very quickly and end up loving the indentation feature, even those who hated it at first. There's still hope for you!

So, no, I'm not worried about Python holding out 20 more years.

What is *your* idea of Python and its future?

by Scarblac

There are a lot of "golden Python rules" or whatever you would call them, like "explicit is better than implicit", "there should be only one way to do it", that sort of thing. As far as I know, those are from old posts to the mailing list, often by Tim Peters, and they've become The Law afterwards. In the great tradition of Usenet advocacy, people who suggest things that go against these rules are criticized. But looking at Python, I see a lot more pragmatism, not rigid rules. What do you think of those "golden rules" as they're written down?.

Guido:

You're referring to the "Zen of Python", by Tim Peters:

It's no coincidence that these rules are posted on the Python Humor page!

Those rules are useful when they work, but several of the rules warn against zealous application (e.g. "practicality beats purity" and "now is better than never").

While we put "There's only one way to do it" on a T-shirt, mostly to poke fun at Larry Wall's TMTOWTDI, the actual Python Zen rule reads: "There should be one-- and preferably only one -- obvious way to do it." That has several nuances!

Regarding the future, I doubt that any piece of software ever stops evolving until it dies. It's like your brain: you never stop learning. Good software has the ability to evolve built in from the start, and evolves in a way that keeps the complexity manageable.

Python started out pretty well equipped for evolution: it was extensible at two levels (C extension modules and Python modules) that didn't require changing the language itself. We've occasionally added features to support evolution better, e.g. package namespaces make it possible to have a much larger number of modules in the library, and distutils makes it easier to add third party packages.

I hear the complaints from the community about the rate of change in Python, and I'm going to be careful not to change the language too fast. The next batch of changes may well be aimed at *reducing* complexity. For example, there are PEPs proposing a simplification of Python's numeric system (like eradicating the distinction between 32/64-bit ints and bignums), and I've started to think seriously about removing the distinction between types and classes -- another simplification of the language's semantics.

Strangest use of Python

by Salamander

What use of Python have you found that surprised you the most, that gave you the strongest "I can't believe they did that" reaction?

Guido:

I find few things strange.

For the most obfuscated code I've ever come across, see the Mandelbrot set as a lambda,.

Digital Creations has written a high-performance fully transactional replicated object database in Python. That's definitely *way* beyond what I thought Python would be good for when I started.

Some people at national physics labs like LANL and LLNL have a version of Python running on parallel supercomputers with many hundreds of processors. That's pretty awesome.

But my *favorite* use of Python is as a teaching language, to teach the principles of programming, without fuss. Think about it -- it's the next generation!

--Guido van Rossum (home page:)


Creating a Slide Show Using the History API and jQuery

Introduction

During Ajax communication, page content is often modified in some way or another. Since Ajax requests are sent through a client side script, the browser address bar remains unchanged even if the page content is being changed. Although this behavior doesn't create any problem for an application's functionality, it has pitfalls of its own. That's where History API comes to your rescue. History API allows you to programmatically change the URL being shown in the browser's address bar. This article demonstrates how History API can be used with an example of a slide show.

Overview of History API

Browsers keep a track of the URLs you visit in the history object. The history object tracks only those URLs that you actually visited, usually by entering them in the browser's address bar. While this serves its purpose in most cases, Ajax driven web pages face a problem of their own. Such pages make Ajax calls to the server and often change the content of the page dynamically. Let's understand this with an example. Suppose you wish to develop an Ajax driven slide show. This slide show consists of previous and next buttons and an image element to display the slide. In order to navigate between two or more slides the slide show needs to make an Ajax call to the server to fetch the slide information to be displayed. Irrespective of the slide being displayed, the browser address bar always shows the URL of the web page that houses the slide show. Now imagine that a user is on the third slide and bookmarks that page with the intention to revisit later. If the user visits the bookmarked URL later, the URL won't fetch the third slide but will fetch the initial slide. This is because the browser's address bar was always pointing to the main page and the history object tracked only the URL of the main page.

- pushState() : The pushState() method is used to add an entry to the browser's history and thus changes the browser's address bar.

- replaceState() : The replaceState() method is used to update an existing entry in the browser's history with new details.

- popstate : The popstate event is raised by the window object when a user visits an entry from the history object.

Ajax Driven Slide Show

To illustrate the use of History API, let's develop an Ajax driven slide show. The slide show exhibits only the basic functionality of showing slides as you click on next and previous buttons. For the sake of simplicity we won't add any fancy effects, configuration or error handling to the slide show. Of course, you can add these features once you finish this basic example. A slide show sample developed in this example is shown below:

Slide Show

As you can see each slide has a title, image and description. Clicking on the Previous or Next button causes an Ajax request to be sent to the server and details of the next or previous slide are fetched accordingly. Also, the browser's address bar reflects a unique URL for each slide being displayed even though the slide is being displayed using Ajax. For example, the above figure shows the URL for the second slide as /slides/index/2.

The slide show shown above can be developed in seven easy steps. Let's see what they are...

1. Create a Database Table to Store Slide Information

The slide show stores all the information about slides in a database. This example uses a SQL Server database but you can use any other RDBMS for storing this information. The following figure shows the Slides table used for this purpose:

Slides Table

As you can see the slides table consists of four columns - Id, Title, Description and ImageUrl. These columns store slide ID, slide title, description of a slide and URL of the slide's image respectively.

2. Create HTML Page and Style Sheet

Next, you need to create an HTML page that houses the slide show. The slide show application needs some server side processing (fetch data from database when Ajax calls are made) and hence you need to use some server side processing engine to display this HTML page. This example assumes ASP.NET MVC as the server side technology but that's not mandatory. You can use any other server side framework (such as PHP) that allows you to execute the required server side logic. The following markup shows the HTML needed to display the slide information. In this example this markup goes inside a Razor view (Index.cshtml).

    @model HistoryAPISlideShow.Models.Slide
    ...
    <body>
      <form>
        <input id="slideId" type="hidden" value="@Model.Id" />
        <h1 id="slideTitle" class="Title">@Model.Title</h1>
        <div><img id="slideImage" src="@Model.ImageUrl" /></div>
        <div id="slideDescription">@Model.Description</div>
        <div>
          <input id="prevButton" type="button" value="Previous" />
          <input id="nextButton" type="button" value="Next" />
        </div>
      </form>
    </body>
    </html>

Let's examine this markup. The markup consists of a <form> that houses all the DOM elements making the slide show. The hidden field - slideId - is used to store the slide ID and is used in the client script (you will learn about it later). The <h1> element displays a slide's title. The slideImage <img> element displays the slide's image and the slideDescription <div> displays the slide's description. Two buttons - prevButton and nextButton - represent the Previous and Next buttons respectively.

Notice that a Slide object acts as a model to this view. The Id, Title, ImageUrl and Description properties of the Slide object are rendered inside the slideId, slideTitle, slideImage and slideDescription elements respectively. You will create the Slide model class later in this article.

The DOM elements discussed above also have some CSS styles attached with them. These CSS rules are defined in a style sheet and are shown below:

    #slideTitle {
      font-family: Arial;
      font-size: 21px;
      padding: 5px;
    }

    #slideDescription {
      font-family: Arial;
      font-size: 15px;
      padding: 10px;
      text-align: justify;
    }

    #slideImage {
      padding: 10px;
      border: 2px solid #808080;
    }

    #prevButton, #nextButton {
      width: 100px;
      padding: 5px;
    }

Make sure to add these CSS rules to a style sheet file and link that style sheet from the HTML page you just developed.

3. Write Server Side Code to Display the Initial Slide

When a user visits the web page that houses the slide show, the slide show must display the first slide. The user can then use Previous and Next navigation buttons to navigate between the slides. To fetch and supply this initial slide to the HTML markup you need to write some server side code that retrieves the slide information from the Slides table you created earlier. This example uses ASP.NET MVC and Entity Framework for this purpose but again that's not mandatory. You can easily port this logic on whatever server side framework you are using. The code that serves the initial slide is shown below:

public class SlidesController : Controller
{
    public ActionResult Index(int id = 0)
    {
        SlideDbEntities db = new SlideDbEntities();
        IQueryable<Slide> data = null;
        if (id == 0)
        {
            // No id supplied: pick the slide with the lowest Id.
            // Take(1) keeps the result an IQueryable<Slide>; First() would return a Slide.
            data = (from item in db.Slides
                    orderby item.Id ascending
                    select item).Take(1);
        }
        else
        {
            data = from item in db.Slides
                   where item.Id == id
                   select item;
        }
        return View(data.SingleOrDefault());
    }
}

The above code shows the Index() action method from the Slides controller. The Index() method takes the id parameter, whose default value is 0. The Index() method serves two purposes. Firstly, it serves the first slide when the slide show page is visited. In this case no id is passed to it. Secondly, it serves a slide with a specific ID. This variation is used when a user opens a slide URL that was added to the history object through the History API.

We won't go into too much detail of the Index() method. Suffice it to say that if the id parameter is 0, the Index() method fetches the first slide from the Slides table; otherwise it fetches the slide with the specified id. In both cases the slide information is stored in a Slide object (Slide is an entity class for the Slides table). The Slide object is then passed to the Index view. Recall that the Index view contains the HTML markup that displays the slide information.

4. Write Server Side Code to Serve a Specific Slide to an Ajax Call

As discussed earlier, clicking on the Previous and Next buttons causes an Ajax call to be made to the server in an attempt to fetch the slide details. To handle this Ajax call you need some server side code that serves a specified slide to the client side script. The following code shows another action method of the Slides controller class that does this job:

public JsonResult GetSlide(int slideid, string direction)
{
    SlideDbEntities db = new SlideDbEntities();
    IQueryable<Slide> data = null;
    if (string.IsNullOrEmpty(direction))
    {
        data = from item in db.Slides
               where item.Id == slideid
               select item;
    }
    if (direction == "N")
    {
        data = (from item in db.Slides
                where item.Id > slideid
                orderby item.Id ascending
                select item).Take(1);
    }
    if (direction == "P")
    {
        data = (from item in db.Slides
                where item.Id < slideid
                orderby item.Id descending
                select item).Take(1);
    }
    Slide slide = data.SingleOrDefault();
    return Json(slide);
}

The GetSlide() action method accepts two parameters: slideid and direction. The slideid parameter indicates the ID of the slide that is currently displayed in the browser. The direction parameter indicates whether the Previous or Next button was clicked. Its value can be P or N accordingly. If a user clicks on the Next button you need to fetch the slide whose ID comes next after the current slide. The slide IDs may not be in sequence, and hence the code fetches the slide with the lowest ID that is higher than the current slide ID. Similar logic is executed if the user clicks on the Previous button. This time, however, you need to fetch the slide that comes immediately before the current slide.

Once the slide is retrieved, it is passed to the client using the Json() method. The Json() method converts the Slide object into the equivalent JSON format. Notice that the return value of GetSlide() is JsonResult because it will be accessed by an Ajax call.

5. Load and Display Slides Using jQuery $.ajax()

Now comes the important part - displaying slides using Ajax. To display slides when a user clicks the Previous or Next button we will use jQuery $.ajax() method. So, add a <script> reference to the jQuery library in the head section of the HTML page you created earlier. Also add a <script> block in the head section. The following code shows how the <script> reference and the <script> block look:

<script src="~/Scripts/jquery-2.0.0.js"></script>
<script type="text/javascript">
    $(document).ready(function () {
        $("#prevButton").click(function () {
            GetSlide("P");
        });
        $("#nextButton").click(function () {
            GetSlide("N");
        });
    });

The <script> block consists of the jQuery ready() handler function. The ready() handler wires click event handlers of the prevButton and the nextButton using the click() method. Both the click event handlers call a custom function - GetSlide(). The GetSlide() function fetches a slide from the server by making an Ajax request and accepts either P or N depending on the button pressed. The following code shows the GetSlide() function:

function GetSlide(direction) {
    var data = {};
    data.slideid = $("#slideId").val();
    if (direction !== undefined) {
        data.direction = direction;
    }
    $.ajax({
        url: "/Slides/GetSlide",
        type: "POST",
        data: JSON.stringify(data),
        dataType: "json",
        contentType: "application/json",
        success: function (slide) {
            $("#slideId").val(slide.Id);
            $("#slideTitle").html(slide.Title);
            $("#slideDescription").html(slide.Description);
            $("#slideImage").attr("src", slide.ImageUrl);
        },
        // jQuery passes the request object as the first argument of the error callback
        error: function (err) {
            alert(err.status + " - " + err.statusText);
        }
    });
}

The GetSlide() function creates a JavaScript object (data) and stores in it the current slide ID, read from the slideId hidden field, and the direction. It then calls the $.ajax() method to make an Ajax call to the GetSlide() server side method (see step 4). Various configuration options are passed to the $.ajax() method. The url setting points to the URL of the GetSlide() action method. The type setting specifies the request type to be POST. The data setting holds the JSON stringified version of the data JavaScript object. The dataType setting specifies the data type of the response and is set to json. The contentType setting specifies the content type of the request and is set to application/json. The success function is called when the Ajax call succeeds and receives the Slide object as its parameter (recall that the GetSlide() action method returns a Slide object). The success function then sets the values of slideId, slideTitle, slideDescription and slideImage according to the slide data returned from the server. Finally, the error function displays an error message if the Ajax call fails.

6. Add an Entry in the Browser's History Using pushState()

In its current form the slide show will be displayed as expected. That means clicking on Previous and Next buttons will fetch the slides accordingly and display them in the page. However, the browser address bar remains unchanged throughout this navigation. That's where the History API comes into the picture. To change the browser address bar when a user navigates to the new slide, add the following line to the success handler function you created in step 5.

...
success: function (slide) {
    $("#slideId").val(slide.Id);
    $("#slideTitle").html(slide.Title);
    $("#slideDescription").html(slide.Description);
    $("#slideImage").attr("src", slide.ImageUrl);
    history.pushState(slide, slide.Title, "/slides/index/" + slide.Id);
}
...

Notice the history.pushState() call on the last line of the success handler. It calls the pushState() method of the history object. The pushState() method accepts three parameters. These parameters are described below:

- statedata : The first parameter indicates the state associated with the new entry being added. You can access this state inside the popstate event handler (discussed later). In this case you store the entire slide object into the state.

- title : The second parameter is a title that you wish to assign to the new entry. This title can be displayed by the browser in the History menu. Here we add slide's Title as the title.

- url : The third parameter is a URL that you wish to add to the history object. In this example you add URLs of the form /slides/index/<slide_id> to the history. Notice that these URLs point to the Index() action method of the Slides controller you created in step 3. This is also the URL that will be displayed in the browser's address bar as soon as the pushState() call is made.

After adding this code if you run the web page again, you will observe that as soon as the pushState() call is made the browser's address bar reflects the URL as mentioned in the third parameter. This URL can be bookmarked by the end user or navigated to later during the same session using the browser's back button.

7. Handle Popstate Event

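The popstate event is raised on the window object when the active history entry changes as the user moves through the session history, for example with the browser's back and forward buttons. Calling pushState() does not itself raise popstate. The popstate event object has a state property containing the statedata value you passed to pushState(). In our example you use this value as follows: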

$(document).ready(function () {
    window.addEventListener("popstate", function (evt) {
        $("#slideId").val(evt.state.Id);
        GetSlide();
    }, false);
    ...

As you can see the above code has been added to the ready() handler. The addEventListener() method wires an event handler for the popstate event. The popstate event handler sets the value of slideId hidden field to the ID of the slide that is being displayed from the history. Notice how the evt.state.Id is used to read a slide ID from the state object. GetSlide() method is then called so as to make an Ajax call to the server in an attempt to retrieve the latest slide information.

That's it! You can run the slide show and test whether it functions as expected by clicking on the navigation buttons and browser's back and forward buttons.

Summary

Modern web applications use Ajax heavily. While using Ajax, the page URL remains the same even though its content might change dynamically. The History API allows you to programmatically add entries to the browser's history. Ajax calls can take advantage of the History API and add entries that uniquely identify an Ajax call. The browser address bar also reflects these URLs. The History API provides the pushState() method to add an entry to the browser's history. When any item from the browser's history is accessed, the popstate event is raised on the window so that the page content can be synchronized (if required) with the URL being displayed in the browser's address bar. | https://www.developer.com/lang/jscript/creating-a-slide-show-using-the-history-api-and-jquery.html | CC-MAIN-2019-04 | refinedweb | 2,784 | 64.91 |

Introduction to JavaFX Script



if (place_your_condition_here) {
    //do something
} else if (place_your_condition_here) {
    //do something
} else {
    //do something
}

The while statement is similar to while in Java. Curly braces are always required with this statement.

while (place_your_condition_here)
{
    //do something
}

The for statement can be used to loop over an interval (intervals are represented using brackets [] and the .. symbol).

//i will take the values: 0, 1, 2, 3, 4, 5
for (i in [0..5])
{
    //do something with i
}

JavaFX procedures are marked by the operation keyword. Here is a simple example:


The inverse clause is optional and it shows a bidirectional relationship to another attribute in the class of the attributes' type. In this case, JavaFX will automatically perform updates (insert, replace, and delete).


//expressions within quoted text
import java.lang.System;
var mynumber:Number = 10;
System.out.println("Number is: {mynumber}");

Result: Number is: 10

JavaFX supports a useful facility known as the cardinality of the variable. This facility is implemented with the next three operators:

- ? : optional (the value may be null)

- + : one or more

- * : zero or more

The remaining operators and keywords covered on this page apply to arrays: sizeof, indexof, and insert (with as first, as last, before, and after).


© 2017, O’Reilly Media, Inc.


All trademarks and registered trademarks appearing on oreilly.com are the property of their respective owners. | http://archive.oreilly.com/pub/a/onjava/2007/07/27/introduction-to-javafx-script.html?page=2 | CC-MAIN-2017-13 | refinedweb | 227 | 56.96 |

Timer in Java is a utility class used to schedule tasks for both one-time and repeated execution. Timer is similar to the alarm facility many people use on a mobile phone. Just as you can set a one-time or a repeating alarm, you can use java.util.Timer to schedule a one-time or a repeating task. In fact, we can implement a Reminder utility using Timer in Java, and that's what we are going to see in this example. Two classes, java.util.Timer and java.util.TimerTask, are used to schedule jobs in Java and together form the Timer API. TimerTask is the actual task executed by Timer. Like Thread, TimerTask implements the Runnable interface; it is an abstract class, and you override its run() method to specify what the task does. This Java tutorial also highlights the difference between the Timer and TimerTask classes and explains how Timer works in Java. By the way, the difference between Timer and Thread is also a popular Java question in fresher-level interviews.

What is Timer and TimerTask in Java

How Timer works in Java

The Timer class in Java maintains a background thread (either a daemon thread or a user thread, depending on how you created the Timer object), also called the timer's task execution thread. Each Timer has exactly one such thread, which runs its scheduled tasks one after another at their specified times. If the timer thread is not a daemon, it can keep your application from exiting, so call cancel() on the Timer once you no longer need it. It's recommended that a TimerTask should not run for very long; otherwise it keeps this thread busy and delays the execution of other scheduled tasks, which may queue up and execute in quick succession once the offending task completes.
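To make the single-thread point concrete, here is a minimal sketch. The class name TimerThreadDemo, the method runTasks() and the thread name "demo-timer" are made up for this illustration; only Timer, TimerTask and the java.util.concurrent helpers come from the JDK. It schedules a few tasks on one Timer and records which thread ran each of them.

```java
import java.util.List;
import java.util.Timer;
import java.util.TimerTask;
import java.util.concurrent.CopyOnWriteArrayList;
import java.util.concurrent.CountDownLatch;
import java.util.concurrent.TimeUnit;

// Hypothetical demo class: shows that one Timer runs all of its tasks on one thread.
class TimerThreadDemo {

    // Schedules taskCount tasks on a single Timer and returns the name of the
    // thread that executed each task, in execution order.
    static List<String> runTasks(int taskCount, boolean daemon) {
        List<String> threadNames = new CopyOnWriteArrayList<>();
        CountDownLatch done = new CountDownLatch(taskCount);
        // First argument names the timer thread, second chooses daemon vs user thread.
        Timer timer = new Timer("demo-timer", daemon);
        for (int i = 0; i < taskCount; i++) {
            timer.schedule(new TimerTask() {
                @Override
                public void run() {
                    threadNames.add(Thread.currentThread().getName());
                    done.countDown();
                }
            }, 10L * (i + 1)); // small, increasing delays in milliseconds
        }
        try {
            done.await(5, TimeUnit.SECONDS); // wait until every task has run
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
        } finally {
            timer.cancel(); // lets a non-daemon timer thread terminate
        }
        return threadNames;
    }

    public static void main(String[] args) {
        System.out.println(runTasks(3, true));
    }
}
```

Every recorded name should be the same timer thread, which is why one slow task holds up all the others scheduled on that Timer.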

Difference between Timer and TimerTask in Java

I have seen programmers getting confused between Timer and TimerTask, which is quite unnecessary because the two are altogether different. You just need to remember:

1) Timer in Java schedules and executes a TimerTask, which is an implementation of the Runnable interface whose run() method defines the actual work performed by that TimerTask.

2) Both Timer and TimerTask provide a cancel() method. Timer's cancel() method cancels the whole timer, while TimerTask's cancel() cancels only that particular task. I think this is the most noteworthy difference between Timer and TimerTask in Java.

Canceling Timer in Java

You can cancel a Java Timer by calling the cancel() method of the java.util.Timer class, which results in the following:

1) The Timer will not cancel any currently executing task.

2) The Timer will discard all other scheduled tasks and will not execute them.

3) Once the currently executing task has finished, the timer thread will terminate gracefully.

4) Calling Timer.cancel() more than once has no further effect; the second call is ignored.

In addition to cancelling the Timer, you can also cancel an individual TimerTask by using the cancel() method of TimerTask itself.
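The difference between the two cancel() methods can be sketched as follows. CancelDemo, CountingTask and cancelOneTask() are names invented for this example, and the delays are arbitrary. One task is cancelled on its own while a second task on the same Timer still runs; afterwards the Timer itself is cancelled twice to show that the extra call is harmless.

```java
import java.util.Timer;
import java.util.TimerTask;
import java.util.concurrent.CountDownLatch;
import java.util.concurrent.TimeUnit;
import java.util.concurrent.atomic.AtomicInteger;

// Hypothetical demo class: TimerTask.cancel() versus Timer.cancel().
class CancelDemo {

    // Illustrative task that counts how many times it has been executed.
    static class CountingTask extends TimerTask {
        final AtomicInteger runs = new AtomicInteger();
        final CountDownLatch ran = new CountDownLatch(1);

        @Override
        public void run() {
            runs.incrementAndGet();
            ran.countDown();
        }
    }

    // Returns { runs of the cancelled task, runs of the task that was kept }.
    static int[] cancelOneTask() {
        Timer timer = new Timer(true); // daemon timer thread
        CountingTask cancelled = new CountingTask();
        CountingTask kept = new CountingTask();
        timer.schedule(cancelled, 200);
        timer.schedule(kept, 300);

        cancelled.cancel(); // cancels only this task; the timer keeps running

        try {
            kept.ran.await(5, TimeUnit.SECONDS); // wait for the surviving task
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
        }
        timer.cancel(); // discards anything still scheduled on this timer
        timer.cancel(); // calling it again has no additional effect
        return new int[] { cancelled.runs.get(), kept.runs.get() };
    }

    public static void main(String[] args) {
        int[] runs = cancelOneTask();
        System.out.println("cancelled task ran " + runs[0] + " time(s), kept task ran " + runs[1] + " time(s)");
    }
}
```

TimerTask.cancel() also returns a boolean telling you whether the call prevented at least one scheduled execution, which can be handy for logging.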

Timer and TimerTask example to schedule Tasks

Here is one example of Timer and TimerTask in Java to implement Reminder utility.

import java.util.Timer;
import java.util.TimerTask;

public class JavaReminder {
    Timer timer;

    public JavaReminder(int seconds) {
        timer = new Timer(); //At this line a new Thread will be created
        timer.schedule(new RemindTask(), seconds * 1000); //delay in milliseconds
    }

    class RemindTask extends TimerTask {
        @Override
        public void run() {
            System.out.println("ReminderTask is completed by Java timer");
            timer.cancel(); //Stops the timer thread so that the JVM can exit
            //System.exit(0); //Alternative: stops the AWT thread (and everything else)
        }
    }

    public static void main(String args[]) {
        System.out.println("Java timer is about to start");
        JavaReminder reminderBeep = new JavaReminder(5);
        System.out.println("Remindertask is scheduled with Java timer.");
    }
}

Output

Java timer is about to start
Remindertask is scheduled with Java timer.
ReminderTask is completed by Java timer //this will print after 5 seconds

Important points on Timer and TimerTask in Java

Now we know what Timer and TimerTask are in Java, how to use them, how to cancel them, and how Timer works. It's a good time to revise Timer and TimerTask.

1. One thread is created for each Timer in Java, and it can be either a daemon or a user thread.

2. You can schedule multiple TimerTasks with one Timer.

3. You can schedule a task for either one-time execution or recurring execution.

4. TimerTask.cancel() cancels only that particular task, while Timer.cancel() cancels all tasks scheduled in the Timer.

5. Timer in Java will throw IllegalStateException if you try to schedule a task on a Timer that has been cancelled or whose task execution thread has terminated.
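The last point is easy to check for yourself. The following sketch (the class and method names are invented for illustration) cancels a Timer and then tries to schedule a task on it.

```java
import java.util.Timer;
import java.util.TimerTask;

// Hypothetical demo class: scheduling on a cancelled Timer is rejected.
class CancelledTimerDemo {

    // Returns true if schedule() threw IllegalStateException after cancel().
    static boolean schedulingAfterCancelFails() {
        Timer timer = new Timer(true);
        timer.cancel(); // from now on the timer accepts no new tasks
        try {
            timer.schedule(new TimerTask() {
                @Override
                public void run() {
                    // never reached: the task is rejected before it is queued
                }
            }, 1000);
            return false;
        } catch (IllegalStateException e) {
            return true;
        }
    }

    public static void main(String[] args) {
        System.out.println("Scheduling after cancel failed: " + schedulingAfterCancelFails());
    }
}
```

If you need to schedule again after cancelling, create a new Timer instance rather than reusing the cancelled one.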

That's all on what Timer and TimerTask are in Java and the difference between them. A good understanding of the Timer API helps a Java programmer take maximum advantage of the scheduling features provided by Timer. Timers can be used in a variety of ways, e.g. to periodically clean a cache or to run a job at a fixed time.


6 comments :

Hi Javin,

The program you have provided here is for one-time execution.

What changes need to be made to the program for recurring execution?

Timer is great. I used it a lot until I found ScheduledThreadPoolExecutor:

From API: "This class is preferable to Timer when multiple worker threads are needed, or when the additional flexibility or capabilities of ThreadPoolExecutor (which this class extends) are required."

@garima, you just need to use a different schedule() method from the Timer class. For recurring execution you can use the following schedule() method:

public void schedule(TimerTask task, long delay, long period)

This schedules the task for repeated execution: the first execution happens after the delay given by the second argument, and subsequent executions are separated by the period given by the third argument (both in milliseconds).
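As a rough sketch of that three-argument schedule() call, here is a repeating reminder that stops itself after a fixed number of runs. RepeatingReminder and runRepeated() are hypothetical names, and the delay and period values are arbitrary.

```java
import java.util.Timer;
import java.util.TimerTask;
import java.util.concurrent.CountDownLatch;
import java.util.concurrent.TimeUnit;
import java.util.concurrent.atomic.AtomicInteger;

// Hypothetical demo class: recurring execution with schedule(task, delay, period).
class RepeatingReminder {

    // Runs a reminder `times` times and returns how often it actually ran.
    static int runRepeated(int times, long delayMs, long periodMs) {
        AtomicInteger count = new AtomicInteger();
        CountDownLatch done = new CountDownLatch(times);
        Timer timer = new Timer(true);
        timer.schedule(new TimerTask() {
            @Override
            public void run() {
                int n = count.incrementAndGet();
                System.out.println("Reminder #" + n);
                if (n >= times) {
                    cancel(); // TimerTask.cancel(): stop repeating after the last run
                }
                done.countDown();
            }
        }, delayMs, periodMs); // first run after delayMs, then every periodMs
        try {
            done.await(10, TimeUnit.SECONDS);
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
        }
        timer.cancel();
        return count.get();
    }

    public static void main(String[] args) {
        runRepeated(3, 100, 200);
    }
}
```

Calling cancel() from inside run() is the documented way for a repeating task to guarantee that it will not run again.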

@Jiri Pinkas, indeed ScheduledThreadPoolExecutor is good to know and, as you said, has a clear advantage when you need multiple worker threads.
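For comparison, here is a minimal sketch of the same kind of one-time reminder using ScheduledThreadPoolExecutor. ExecutorReminder, remindAfter() and the pool size of 2 are choices made for this example only. A side benefit is that schedule() returns a ScheduledFuture, so the caller can wait for the task and find out whether it completed.

```java
import java.util.concurrent.Callable;
import java.util.concurrent.ExecutionException;
import java.util.concurrent.ScheduledExecutorService;
import java.util.concurrent.ScheduledFuture;
import java.util.concurrent.ScheduledThreadPoolExecutor;
import java.util.concurrent.TimeUnit;
import java.util.concurrent.TimeoutException;

// Hypothetical demo class: a one-time reminder on a scheduled thread pool.
class ExecutorReminder {

    // Schedules a reminder after delayMs and returns its result, or null on failure.
    static String remindAfter(long delayMs) {
        ScheduledExecutorService scheduler = new ScheduledThreadPoolExecutor(2);
        try {
            Callable<String> task = () -> "reminder fired";
            ScheduledFuture<String> future =
                    scheduler.schedule(task, delayMs, TimeUnit.MILLISECONDS);
            // get() blocks until the task has completed (or the timeout expires)
            return future.get(5, TimeUnit.SECONDS);
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
            return null;
        } catch (ExecutionException | TimeoutException e) {
            return null;
        } finally {
            scheduler.shutdown(); // release the worker threads
        }
    }

    public static void main(String[] args) {
        System.out.println(remindAfter(500));
    }
}
```

With more than one worker thread, a slow task no longer delays every other scheduled task the way it does on a single Timer thread.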

Hi, I am facing a problem while using timers. I have scheduled a job like the one below, but it is not triggering when the time comes.

for (i = 0; i <= 3; i++)
{
    Timer timer = new Timer();
    timer.schedule(new TimerTask() {
        public void run()
        {
            System.out.println("triggered");
        }
    }, timevalue);
}

It gets triggered the first time, but nothing is printed for the next two times, and no error is thrown.

Is there a way to check the list of scheduled jobs using the Timer instance, or any other way we could debug this?

Please help me

Hi Javin,

Nice explanation, but I have a question: how can it know whether the task is complete or not? | http://javarevisited.blogspot.co.uk/2013/02/what-is-timer-and-timertask-in-java-example-tutorial.html | CC-MAIN-2014-15 | refinedweb | 1,087 | 55.84 |

Note: This post was originally written for and posted at the Xamarin blog.

Are you ready to speed up the process of writing your cross-platform mobile app with Xamarin.Forms? If you’re new to Xamarin app development, or even if you’ve developed a few mobile apps, getting started on a new project can be a challenging task.

While Xamarin provides tons of resources, when you create a new project and stare at the default templates, it can take hours before you’re ready to start writing your business logic. With Infragistics Ultimate UI for Xamarin, those hours turn into minutes.

Infragistics Ultimate UI for Xamarin (which you can download and try for free) enables you to write fast and run fast with a brand new set of feature-rich and high-performing controls, as well as a revolutionary new Productivity Pack that will change the way you create Xamarin applications.

While I’d love to write about all the great controls you get in Ultimate UI for Xamarin, in this post I want to focus on all the productivity tools Infragistics has just released that could change the way you write Xamarin.Forms applications:

Creating Xamarin.Forms applications one page at a time can be a daunting and time-consuming task. How convenient would it be to simply whiteboard out your next app, then have an entire MVVM based Xamarin.Forms app generated with a click of a button? That’s exactly what the Infragistics AppMap does. From File -> New, select the Infragistics AppMap Project (Xamarin.Forms):

Next, you can use the Infragistics Xamarin.Forms Project Wizard to choose the platforms you want to target. This is one of my favorite features, because I don’t always want to target every single available platform.

Pay special attention to the checkbox that says “Show AppMap” — this is what you should really care about. Once you have selected your desired platforms and chosen your container (we’ll come back to this later), click the “Create Project” button to get to the brand-new AppMap dialog.

As soon as the dialog appears, you’ll feel right at home with an intuitive UI that is modeled after a mixture of Microsoft Visio and Microsoft Visual Studio. As you can see, the Infragistics AppMap gives you the ability to drag various types of Xamarin.Forms pages from the toolbox onto the design surface and arrange them as you see fit. You can also create connections between the pages that represent either child relationships (such as a TabbedPage with Tabs), or how each page will navigate to others.

Once you’re done designing the application in the AppMap designer, simply click the “Generate AppMap” button and watch the magic happen. Visual Studio will generate all the Views, ViewModels, and all the navigation code for you. You don’t have to do anything but press F5 to run the app. What used to take hours to do can now be done in a few minutes.

There are a lot more features available in the AppMap; you can take a deeper dive into it by watching this introduction video:

To make a long story short, the AppMap generates a Prism application.

For the AppMap to generate a well architected MVVM application based on best patterns and practices, it must have some type of application framework to enable the generation of reliable MVVM-friendly code. Infragistics decided that the Prism Library was the best Xamarin.Forms application framework available, and based all generated code off the features available in Prism.

Most notably, what makes AppMap even possible is the very powerful URI-based navigation framework that Prism provides. Also, remember when you had to choose a container in the Infragistics Xamarin.Forms New Project Dialog? Well, this is because Prism leverages dependency injection (DI) and requires the use of a container. Prism provides just about everything you need to be successful writing an MVVM-friendly Xamarin.Forms application.

For more information on Prism, or to get the source, you can find the Prism Library GitHub at .

After you’ve generated the entire application using the Infragistics AppMap, you can start adding controls and other elements to your pages. If you’re coming from other platforms like WPF, ASP.NET WebForms, or WinForms, you’ll notice a large gaping hole in the Xamarin.Forms development experience. There is no designer, and there is no toolbox! This creates a major barrier to your productivity.

…Unless, of course, you’re using the world’s first Xamarin.Forms Toolbox:

As you can see, the Infragistics Xamarin.Forms Toolbox provides all the standard Xamarin.Forms Layouts, Views, and Cells that are available out of the box from Xamarin. This makes it super simple to drag an element from the toolbox and drop it onto your XAML file, which will insert the XAML snippet at the drop point:

It gets better. Not only does the toolbox provide standard drag and drop behavior, it also provides extended XAML snippets. When you hold the CTRL key and drop a control onto the XAML file, a full XAML snippet is created that provides much more generated code. For example, when you want to create a Grid, chances are you want to have a few rows and columns created. Instead of writing that XAML by hand, though, just hold the CTRL key and let the toolbox do the work for you.

Not only is the Infragistics Xamarin.Forms Toolbox the world’s first toolbox for Xamarin.Forms, it’s also powered by NuGet packages. Yes, you read that right: Powered. By. NuGet. Since Infragistics also ships a ton of great Xamarin controls, it makes sense that they would show up in the toolbox whenever they are added to your project.

To try this, simply add one of Infragistics Xamarin.Forms controls to your project via the NuGet Manager, and keep an eye on the toolbox. For every Infragistics NuGet package you add to your solution, an entry for that control will be added to the Infragistics Xamarin.Forms Toolbox.

Now, drag an Infragistics control from the toolbox and drop it onto your XAML file. Two things happen. First — and the most obvious — is that the control is added to the XAML.

Second, which is even more amazing, is that if you look at the top of your XAML file, the XMLNS declaration for the control has been added automatically. This is a huge timesaver. No more looking at docs or trying to use intellisense to try to find the namespace of the control you want to use. Heck, in VS2015 you don’t even have intellisense, so this new toolbox saves a ton of time and headaches. What’s even better is if you already have an existing XMLNS defined, the toolbox recognizes and uses it, rather than creating another one.

I want to point out that when you first install Infragistics Ultimate UI for Xamarin, the toolbox is not shown in VS. You must manually display the toolbox by going to the View -> Other Windows -> Infragistics Toolbox menu from Visual Studio.

To see the Infragistics Toolbox in action, check out this video:

After you have designed and generated your entire application using the AppMap and then laid out your page’s structure by dragging and dropping controls from the Infragistics Xamarin.Forms toolbox, the last step is to start styling your controls, binding your controls to data, and configuring your controls to meet your application requirements.

There is only one problem with that last step: Xamarin.Forms does not have a designer! This makes it extremely difficult to configure any control and know how it will work or what it will look like until you press F5 and run your app on a device or an emulator. Not only is this a pain, but it is very time-consuming. Make a change, run the emulator. Make another change, run the emulator again. Repeat until you’re happy with the result. This takes forever!

Well, Infragistics has a solution to that problem too. Introducing the game-changing Infragistics Control Configurators! The Control Configurator is a dialog you launch from the XAML editor within Visual Studio that allows you to visually configure the Infragistics Xamarin.Forms controls.

How do you launch it? After you drag an Infragistics Xamarin.Forms control into your XAML editor, click the control name to place your mouse cursor in the control XAML. When you do this, you will see the Visual Studio Suggestion Light Bulb appear. Open the suggestion light bulb and you will see a menu option called “Configure [ControlName]”. Since I am using the XamRadialGauge, the menu item says “Configure XamRadialGauge”. I personally like to use the CTRL+. shortcut to launch the suggestion lightbulb.

Once you see the menu item, click it. You’ll see a dialog appear that has a design surface containing the control and then a ton of options.

All the Control Configurators have a common layout: ribbon at the top, a property grid on the right, and on the left you’ll get several other options, depending on the configurator you are using.

This example is using the XamRadialGauge Control Configurator, so the ribbon contains options to easily modify the ranges, the scale, and the needle. It even has QuickSets that allow you to use a predefined template to get you close to the gauge you have in mind.

If you want more control than what the ribbon menus can give you, jump into the property grid and start modifying every little property until you’re happy. No matter what you do, you’ll see the changes visually reflected on the design surface.

This example uses one of the many Quick Sets to create a gauge:

The next step is to data bind the Value property of the XamRadialGauge to a property in the ViewModel. You may be thinking you have to do this in XAML…but you don’t. Infragistics has provided an awesome data binding editor to use on any bindable property. Just find the property you want in the property grid, and click on the little square to the right of the property value.

Next, click the “Create Data Binding” menu option. You’ll see a dialog appear you can use to create your binding exactly as you see fit. Set the binding path, mode, converter (yes, it will automatically find all your converters), and provide a string format.

Once the dialog launches, notice it automatically finds the BindingContext (ViewModel) for you using a convention of [PageName]ViewModel. If the dialog guessed wrong (or couldn’t find it), you can set the BindingContext yourself by picking it from a list of available classes.

Once you have configured the control exactly as you want it, hit the “Apply & Close” button.

BAM! Check out all the XAML code that was automatically generated for you:

Now, I know your brains are oozing out of your head right about now, but there is more. After you generate all your XAML, make a change to the background color or some other property (in this example, I’m changing the background to LightBlue), then select the XamRadialGauge and show the Control Configurator again.

Yep, it not only allows you to generate XAML, but it will also read it in and the Control Configurator will show you exactly what your XAML does.

Infragistics ships configurators for the following controls:

For a more detailed look at using the Control Configurators, you can check out this video:

The best part about all these new productivity tools is that they are hosted on the Visual Studio Marketplace. This means that Infragistics can (and will) be pushing updates on a continuous delivery schedule to keep your installed extensions automatically updated. You don’t have to do anything. Let Visual Studio manage all the updating for you. You just concentrate on writing apps and being productive.

I don’t know about you, but I am really excited about all these new productivity tools for Xamarin.Forms development. Don’t get me wrong, the Infragistics Xamarin.Forms controls are just as awesome, but these new productivity tools are going to change the way you write Xamarin.Forms applications going forward. From File -> New to production, Infragistics has set the bar for Xamarin.Forms productivity.

The best part is that you can try these out for yourself right now. Head on over to Infragistics and download a trial of Infragistics Ultimate UI for Xamarin today, and you’ll be able to design, lay out, and build cross-platform mobile apps using Xamarin.Forms in a matter of minutes…instead of hours.

Brian Lagunas is a Microsoft MVP, a Xamarin MVP, a Microsoft Patterns & Practices Champion, and co-leader of the Boise .Net Developers User Group (NETDUG). Brian works at Infragistics as a Product Manager for all things XAML, which includes the award-winning WPF, Silverlight, Windows Phone, Windows Store, and Xamarin.Forms control suites. | https://www.infragistics.com/community/blogs/infragistics/archive/2017/05/03/write-fast-with-ultimate-ui-controls-for-xamarin.aspx | CC-MAIN-2017-39 | refinedweb | 2,172 | 63.19 |

ROX-Filer is in Debian/stable. To install:

# apt-get install rox-filer

You can get all the other ROX software using AddApp or ROX-All.

Dennis Tomas writes that he has

"put together a package "rox-desktop" containing nothing but some (IMHO) good defaults, 0install-wrappers for some core software and a login-script. [...] I've also made a menu-method for debian, which places app-wrappers for on-rox-apps in /usr/share/rox/Apps/Debian.

It's all available from.

You can also add it to your /etc/apt/sources.list:

deb binary/

Then update APT's cache with:

# apt-get update

There is also a (separate and less active) ROX-in-Debian Project at.

After installing, you might like to read the Getting Started Guide.

Existing (non-ROX) applications are available from /usr/share/applications.

You can install GTK themes with apt too.

apt-cache search gtk-engines

apt-cache search gtk2-engines

Then just select a theme from the GTK theme switcher or by running ROX-session>SessionSetting>Display

I tried this in a chroot and found a few missing deps with the default login. Probably they should be added to the rox-desktop package:

sh: line 1: xmodmap: command not found

/usr/share/rox-desktop/Apps/Terminal/AppRun: line 3: exec: x-terminal-emulator: not found

/home/fred/.cache/0install.net/implementations/sha1=9a3adb6a6f19591db2e2d21a7fcee4643d293403/Linux-ix86/OroboROX: error while loading shared libraries: libXpm.so.4: cannot open shared object file: No such file or directory

/home/fred/.cache/0install.net/implementations/sha1=a90ceaa0905d8143813f9c74bdb488c2457829af/SystemTrayN/Linux-ix86/SystemTray: error while loading shared libraries: libglitz.so.1: cannot open shared object file: No such file or directory

Seriously need more information here!

I installed rox-filer using apt-get, but it did not appear in the KDE menu. I guessed that $ rox-filer would be worth a try. After some error messages, it did launch. I downloaded ROX-All.tgz but have very little idea what to do with it; I think there's just enough documentation to get me started.

My login page (KDM) does not offer to choose a window manager, KDE is the only one installed so far. Is KDM smart enough to add that after I install rox-all? Does rox-all set that?

If not, then what?

Thanks,

Lance

This worked for me (Debian/unstable):

$ cp /etc/dm/Sessions/rox.desktop /usr/share/xsessions/rox.desktop

but there's no "rox.desktop" in etc/dm/Sessions. In fact, there is no "etc/dm/", and no mention of "rox.desktop" anywhere.

Also, running the rox script brings up several errors:

metalgear:/home/vicky/Download/ROX-All-0.6# ./rox

Xlib: connection to ":0.0" refused by server

Xlib: Invalid MIT-MAGIC-COOKIE-1 key

Traceback (most recent call last):

File "/usr/lib/python2.3/site-packages/zeroinstall/0launch-gui/0launch-gui", line 5, in ?

import gui

File "/usr/lib/python2.3/site-packages/zeroinstall/0launch-gui/gui.py", line 1, in ?

import gtk, os, gobject, sys

File "/usr/lib/python2.3/site-packages/gtk-2.0/gtk/__init__.py", line 37, in ?

from _gtk import *

RuntimeError: could not open display

Actually, it looks like the rox-desktop package contains a /usr/share/xsessions/rox-desktop.desktop file already, so I'm not sure why it doesn't work for you. What version of kdm are you using? I have:

$ dpkg --status kdm

Version: 4:3.5.2-2+b1

A mailing list post from 2004 suggests that this command used to work:

$ kdesu kcmshell System/kdm

Select Sessions, "Add new" and enter "ROX".

However, it doesn't show a session tab for me.

Unable to fetch file, server said '/pub/rox4debian/dists/etch/main/binary-i386/Packages.gz: No such file or directory '

You need to use the correct settings, that is copy the above line exactly. That means that inside the rox4debian/ there's a folder binary/, but not the dists/etch/main/ you apparently are using in your sources list. | http://roscidus.com/desktop/node/86 | crawl-002 | refinedweb | 670 | 60.21 |

Let σ(n) be the sum of the positive divisors of n and let gcd(a, b) be the greatest common divisor of a and b.

Form an n by n matrix M whose (i, j) entry is σ(gcd(i, j)). Then the determinant of M is n!.

The following code shows that the theorem is true for a few values of n and shows how to do some common number theory calculations in SymPy.

    from sympy import gcd, divisors, Matrix, factorial

    def f(i, j):
        # sigma(gcd(i, j)): the sum of the positive divisors of gcd(i, j)
        return sum(divisors(gcd(i, j)))

    def test(n):
        r = range(1, n + 1)
        M = Matrix([[f(i, j) for j in r] for i in r])
        # zero exactly when det(M) equals n!
        return M.det() - factorial(n)

    for n in range(1, 11):
        print(test(n))

As expected, the test function returns zeros.

If we replace the function σ above by τ where τ(n) is the number of positive divisors of n, the corresponding determinant is 1. To test this, replace sum by len in the definition of f and replace factorial(n) by 1.

In case you’re curious, both results are special cases of the following more general theorem. I don’t know whose theorem it is. I found it here.

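For any arithmetic function f(m), let g(m) be defined for all positive integers m by

\[ g(m) = \sum_{d \mid m} f(d)\, \mu\!\left(\frac{m}{d}\right). \]

Let M be the square matrix of order n with ij element f(gcd(i, j)). Then

\[ \det M = \prod_{j=1}^{n} g(j). \]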

Here μ is the Möbius function. The two special cases above correspond to g(m) = m and g(m) = 1. | http://www.johndcook.com/blog/tag/sympy/ | CC-MAIN-2014-41 | refinedweb | 260 | 71.24 |

# A dozen Linux shell tricks that could save you time

[](https://habrahabr.ru/post/444890/)

* *First of all, you can read this article in Russian [here](https://habr.com/ru/post/340544/).*

One evening, while reading [Mastering regular expressions by Jeffrey Friedl](https://scanlibs.com/regulyarnyie-vyirazheniya-3-e-izdanie/), I realized that even if you have all the documentation and a lot of experience, there are still plenty of tricks that different people have developed and kept to themselves. People are different: a technique that is obvious to one person may not be obvious to another, and may look like some kind of weird magic to a third. By the way, I have already described several such cases [here (in Russian)](https://habrahabr.ru/post/339246/).

For an administrator or a user, the command line is not only a tool that can do everything, but also a highly customizable tool that can be refined forever. Recently there was a translated article about some useful CLI tricks. But I feel that the translator did not have enough experience with the CLI and did not try the tricks described, so many important things may have been missed or misunderstood.

Below are a dozen Linux shell tricks from my personal experience.

Note: all scripts and examples in the article were deliberately simplified as much as possible, so if some of the tricks look completely useless, that is probably the reason. In any case, share your thoughts in the comments!

#### 1. Split string with variable expansions

People often use **cut** or even **awk** just to extract part of a string by a pattern or by separators.

Many people also use the bash substring operation ${VARIABLE:start\_position:length}, which works very fast.

But bash also provides a powerful way to manipulate text strings using #, ##, % and %%. It is called *bash variable expansion* (the Bash manual lists it under parameter expansion).

With this syntax you can cut out what you need by a pattern without executing external commands, so it works really fast.

The example below shows how to get the third column (shell) from the colon-separated string «username:homedir:shell», first with **cut** and then with variable expansion (we use the \*: mask and the ## operator, which removes the longest match of the mask from the left, that is, everything up to and including the last colon):

```

$ STRING="username:homedir:shell"

$ echo "$STRING"|cut -d ":" -f 3

shell

$ echo "${STRING##*:}"

shell

```

The second option does not start a child process (**cut**) and does not use pipes at all, so it should work much faster. And if you are using the bash subsystem on Windows, where pipes are very slow, the speed difference will be significant.

Let's see an example on Ubuntu: we execute each command in a loop 1000 times.

```

$ cat test.sh

#!/usr/bin/env bash

STRING="Name:Date:Shell"

echo "using cut"

time for A in {1..1000}

do

cut -d ":" -f 3 > /dev/null <<<"$STRING"

done

echo "using ##"

time for A in {1..1000}

do

echo "${STRING##*:}" > /dev/null

done

```

**Results**

```

$ ./test.sh

using cut

real 0m0.950s

user 0m0.012s

sys 0m0.232s

using ##

real 0m0.011s

user 0m0.008s

sys 0m0.004s

```

The difference is almost two orders of magnitude!

Of course, the example above is too artificial. In a real case we would not work with a static string; we would read a real file. For the '**cut**' command, we simply redirect /etc/passwd to it. With ##, we have to write a loop and read the file using the built-in '**read**' command. So who wins in this case?

```

$ cat test.sh

#!/usr/bin/env bash

echo "using cut"

time for count in {1..1000}

do

cut -d ":" -f 7 < /etc/passwd > /dev/null

done

echo "using ##"

time for count in {1..1000}

do

while read -r

do

echo "${REPLY##*:}" > /dev/null

done < /etc/passwd

done

```

**Result**

```

$ ./test.sh

using cut

real 0m0.827s

user 0m0.004s

sys 0m0.208s

using ##

real 0m0.613s

user 0m0.436s

sys 0m0.172s

```

No comments =)
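To make the loop variant concrete, here is a minimal self-contained sketch of the same read-plus-## technique. It is only an illustration: instead of /etc/passwd it reads a small temporary file with made-up passwd-style lines, and the names `sample`, `shells` and `count` are my own assumptions, not part of the benchmark above.

```bash
#!/usr/bin/env bash
# Hypothetical sample data in passwd format (not a real system file)
sample=$(mktemp)
printf '%s\n' \
    'root:x:0:0:root:/root:/bin/bash' \
    'daemon:x:1:1:daemon:/usr/sbin:/usr/sbin/nologin' > "$sample"

shells=""
count=0
while IFS= read -r line; do
    # ${line##*:} keeps only the text after the last colon (the login shell)
    shells="${shells:+$shells }${line##*:}"
    count=$((count + 1))
done < "$sample"
rm -f "$sample"

echo "read $count lines, shells: $shells"
```

Everything inside the loop is done by the shell itself, with no child process per line, which is the whole point of the comparison.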

A couple more examples:

Extract the value after the equals sign:

```

$ VAR="myClassName = helloClass"

$ echo ${VAR##*= }

helloClass

```

Extract text in round brackets:

```

$ VAR="Hello my friend (enemy)"

$ TEMP="${VAR##*\(}"

$ echo "${TEMP%\)}"

enemy

```
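For reference, the four operators mentioned at the start of this section can be compared side by side. This is a minimal sketch: the value of `path` and the variable names are made up for illustration.

```bash
#!/usr/bin/env bash
# Hypothetical sample value, used only for illustration
path="/var/log/nginx/access.log.gz"

rest="${path#*/}"      # '#'  removes the shortest prefix matching */
base="${path##*/}"     # '##' removes the longest prefix matching */
stem="${path%.*}"      # '%'  removes the shortest suffix matching .*
first="${path%%.*}"    # '%%' removes the longest suffix matching .*

printf '%s\n' "$rest" "$base" "$stem" "$first"
```

In short: # and ## cut from the left, % and %% cut from the right, and the doubled form is the greedy one.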

#### 2. Bash autocompletion with tab

The bash-completion package is part of almost every Linux distribution. You can enable it in /etc/bash.bashrc or /etc/profile.d/bash\_completion.sh, but usually it is already enabled by default. Autocompletion is one of the first conveniences a newcomer meets in the Linux shell.

But not everyone uses all of the bash-completion features, which in my opinion is a pity. For example, not everybody knows that autocompletion works not only with file names, but also with aliases, variable names, function names and, for some commands, even with arguments. If you dig into the completion scripts, which are ordinary shell scripts, you can even [add autocomplete](https://habrahabr.ru/post/115886/) for your own application or script.

But let’s get back to aliases.

You don't need to edit the PATH variable or create files in a particular directory to run an alias. You just add aliases to your profile or startup script and run them from anywhere.

We usually use lowercase letters for files and directories in \*nix, so it can be very convenient to create aliases that start with an uppercase letter: in that case bash-completion will ~~guess~~ your command from almost a single letter:

```

$ alias TAsteriskLog="tail -f /var/log/asteriks.log"

$ alias TMailLog="tail -f /var/log/mail.log"

$ TA[tab]steriskLog

$ TM[tab]ailLog

```

#### 3. Bash autocompletion with tab — part 2

For more complicated cases, you would probably put your personal scripts in $HOME/bin.

But bash also has functions.

Functions don't require a path or separate files. And (attention) bash-completion works with functions too.

Let’s create the function LastLogin in **.profile** (don't forget to reload .profile):

```

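function LastLogin {

# local, lowercase names, so the function does not overwrite the shell's own $USER and $SHELL

local line user ip shell

line=$(last | head -n 1 | tr -s " ")

user=$(echo "$line" | cut -d " " -f 1)

ip=$(echo "$line" | cut -d " " -f 3)

# anchor the match to the user name field of /etc/passwd

shell=$(grep "^$user:" /etc/passwd | cut -d ":" -f 7)

echo "User: $user, IP: $ip, SHELL=$shell"

}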

```

*(What this function does is not important; it is just an example that could live in a separate script or even in an alias, but a function is a better fit.)*

In the console (note that the function name starts with an uppercase letter to speed up bash-completion):

```

$ L[tab]astLogin

User: saboteur, IP: 10.0.2.2, SHELL=/bin/bash

```
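If you want to see which names bash can offer for a given prefix without pressing Tab, the `compgen` builtin generates the candidates. The sketch below is only an illustration: the alias and the stub function are hypothetical stand-ins for the examples above, and it assumes a bash built with programmable completion (the usual case).

```bash
#!/usr/bin/env bash
# Hypothetical names that mirror the examples above
alias TMailLog='tail -f /var/log/mail.log'
LastLogin() { echo "stub"; }

# compgen -A <type> <prefix> prints the names of that type starting with the prefix
alias_matches=$(compgen -A alias TM)
function_matches=$(compgen -A function LastL)

echo "aliases starting with TM:      $alias_matches"
echo "functions starting with LastL: $function_matches"
```

A prefix that yields exactly one candidate is what lets Tab finish the name for you in an interactive shell.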

#### 4.1. Sensitive data

If you put a space before a command in the console, it will not appear in the command history. So if you need to type a plain-text password in a command, this feature is a good way to do it. In the example below, the *echo* command with the password does not appear in the history:

```

$ echo "hello"

hello

$ history 2

2011 echo "hello"

2012 history 2

$  echo "my password secretmegakey" # there are two spaces before 'echo'

my password secretmegakey

$ history 2

2011 echo "hello"

2012 history 2

```

**It is optional.** It is usually enabled by default, but you can configure this behavior with the following variable:

export HISTCONTROL=ignoreboth
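For orientation, here is a sketch of the values bash accepts for this variable, written as a fragment you might keep in ~/.bashrc. The helper variable `space_hidden` is my own addition for illustration; it only reports whether the current setting hides space-prefixed commands.

```bash
# Sketch of a ~/.bashrc fragment. Values bash understands for HISTCONTROL:
#   ignorespace  do not save commands that start with a space
#   ignoredups   do not save a command identical to the previous one
#   ignoreboth   shorthand for ignorespace plus ignoredups
#   erasedups    remove older copies of a command before saving it
HISTCONTROL=ignoreboth

# Report whether space-prefixed commands are hidden under the current setting
case ":$HISTCONTROL:" in
    *:ignorespace:*|*:ignoreboth:*) space_hidden=yes ;;
    *) space_hidden=no ;;
esac
echo "space-prefixed commands hidden: $space_hidden"
```

Several values can be combined with colons, for example ignorespace:erasedups.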

#### 4.2. Sensitive data in command line arguments