text stringlengths 454 608k | url stringlengths 17 896 | dump stringclasses 91 values | source stringclasses 1 value | word_count int64 101 114k | flesch_reading_ease float64 50 104 |

|---|---|---|---|---|---|

In this assessment you'll use the Django knowledge you've picked up in the Django Web Framework (Python) module to create a very basic blog.

Project brief

The pages that need to be displayed, their URLs, and other requirements, are listed below:

In addition you should write some basic tests to verify:

- All model fields have the correct label and length.

- All models have the expected object name (e.g.

__str__()returns the expected value).

- Models have the expected URL for individual Blog and Comment records (e.g.

get_absolute_url()returns the expected URL).

- The BlogListView (all-blog page) is accessible at the expected location (e.g. /blog/blogs)

- The BlogListView (all-blog page) is accessible at the expected named url (e.g. 'blogs')

- The BlogListView (all-blog page) uses the expected template (e.g. the default)

- The BlogListView paginates records by 5 (at least on the first page)

Note: There are of course many other tests you can run. Use your discretion, but we'll expect you to do at least the tests above.

The following section shows screenshots of a site that implements the requirements above.

Screenshots

The following screenshots provide an example of what the finished program should output.

List of all blog posts

This displays the list of all blog posts (accessible from the "All blogs" link in the sidebar). Things to note:

- The sidebar also lists the logged in user.

- Individual blog posts and bloggers are accessible as links in the page.

- Pagination is enabled (in groups of 5)

- Ordering is newest to oldest.

List of all bloggers

This provides links to all bloggers, as linked from the "All bloggers" link in the sidebar. In this case we can see from the sidebar that no user is logged in.

Blog detail page

This shows the detail page for a particular blog.

Note that the comments have a date and time, and are ordered from oldest to newest (opposite of blog ordering). At the end we have a link for accessing the form to add a new comment. If a user is not logged in we'd instead see a suggestion to log in.

Add comment form

This is the form to add comments. Note that we're logged in. When this succeeds we should be taken back to the associated blog post page.

Author bio

This displays bio information for a blogger along with their blog posts list.

Steps to complete

The following sections describe what you need to do.

- Create a skeleton project and web application for the site (as described in Django Tutorial Part 2: Creating a skeleton website). You might use 'diyblog' for the project name and 'blog' for the application name.

- Create models for the Blog posts, Comments, and any other objects needed. When thinking about your design, remember:

- Each comment will have only one blog, but a blog may have many comments.

- Blog posts and comments must be sorted by post date.

- Not every user will necessarily be a blog author though any user may be a commenter.

- Blog authors must also include bio information.

- Run migrations for your new models and create a superuser.

- Use the admin site to create some example blog posts and blog comments.

- Create views, templates, and URL configurations for blog post and blogger list pages.

- Create views, templates, and URL configurations for blog post and blogger detail pages.

- Create a page with a form for adding new comments (remember to make this only available to logged in users!)

Hints and tips

This project is very similar to the LocalLibrary tutorial. You will be able to set up the skeleton, user login/logout behaviour, support for static files, views, URLs, forms, base templates and admin site configuration using almost all the same approaches.

Some general hints:

- The index page can be implemented as a basic function view and template (just like for the locallibrary).

- The list view for blog posts and bloggers, and the detail view for blog posts can be created using the generic list and detail views.

- The list of blog posts for a particular author can be created by using a generic blog list view and filtering for blog objects that match the specified author.

- You will have to implement

get_queryset(self)to do the filtering (much like in our library class

LoanedBooksAllListView) and get the author information from the URL.

- You will also need to pass the name of the author to the page in the context. To do this in a class-based view you need to implement

get_context_data()(discussed below).

- The add comment form can be created using a function-based view (and associated model and form) or using a generic

CreateView. If you use a

CreateView(recommended) then:

- You will also need to pass the name of the blog post to the comment page in the context (implement

get_context_data()as discussed below).

- The form should only display the comment "description" for user entry (date and associated blog post should not be editable). Since they won't be in the form itself, your code will need to set the comment's author in the

form_valid()function so it can be saved into the model (as described here — Django docs). In that same function we set the associated blog. A possible implementation is shown below (

pkis a blog id passed in from the URL/URL configuration).

def form_valid(self, form): """ Add author and associated blog to form data before setting it as valid (so it is saved to model) """ #Add logged-in user as author of comment form.instance.author = self.request.user #Associate comment with blog based on passed id form.instance.blog=get_object_or_404(Blog, pk = self.kwargs['pk']) # Call super-class form validation behaviour return super(BlogCommentCreate, self).form_valid(form)

- You will need to provide a success URL to redirect to after the form validates; this should be the original blog. To do this you will need to override

get_success_url()and "reverse" the URL for the original blog. You can get the required blog ID using the

self.kwargsattribute, as shown in the

form_valid()method above.

We briefly talked about passing a context to the template in a class-based view in the Django Tutorial Part 6: Generic list and detail views topic. To do this you need to override

get_context_data() (first getting the existing context, updating it with whatever additional variables you want to pass to the template, and then returning the updated context). For example, the code fragment below shows how you can add a blogger object to the context based on their

BlogAuthor id.

class SomeView(generic.ListView): ... def get_context_data(self, **kwargs): # Call the base implementation first to get a context context = super(SomeView, self).get_context_data(**kwargs) # Get the blogger object from the "pk" URL parameter and add it to the context context['blogger'] = get_object_or_404(BlogAuthor, pk = self.kwargs['pk']) return context

Assessment

The assessment for this task is available on Github here. This assessment is primarily based on how well your application meets the requirements we listed above, though there are some parts of the assessment that check your code uses appropriate models, and that you have written at least some test code. When you're done, you can check out our the finished example which reflects a "full marks" project.

Once you've completed this module you've also finished all the MDN content for learning basic Django server-side website programming! We hope you enjoyed this module and feel you have a good grasp of the basics! | https://developer.mozilla.org/tr/docs/Learn/Server-side/Django/django_assessment_blog | CC-MAIN-2020-45 | refinedweb | 1,255 | 63.09 |

event hiding in inherited class not working

I'm trying to hide an event from an inherited class, but not via EditorBrowserable attribute. I have a DelayedFileSystemWatcher which inherits from FileSystemWatcher and I need to hide the Changed, Created, Deleted and Renamed events and make them private. I tried this, but it does not work:

/// <summary> /// Do not use /// </summary> private new event FileSystemEventHandler Changed;

The XML comment is not showing in IntelliSense (the original info is shown). However, if I change the access modifier to public, the XML comment is shown in IntelliSense.

Any help is is welcome.

You don't want to use it but it trivially solves your problem:

class MyWatcher : FileSystemWatcher { [Browsable(false), EditorBrowsable(EditorBrowsableState.Never)] private new event FileSystemEventHandler Changed; // etc.. }

The only other thing you can do is encapsulate it. Which is doable, the class just doesn't have that many members and you are eliminating several of them:

class MyWatcher : Component { private FileSystemWatcher watcher = new FileSystemWatcher(); public MyWatcher() { watcher.EnableRaisingEvents = true; watcher.Changed += new FileSystemEventHandler(watcher_Changed); // etc.. } public string Path { get { return watcher.Path; } set { watcher.Path = value; } } // etc.. }

Programming and Problem Solving with Visual Basic .NET, Overriding If you look at the definition of the Text property in the TextBox class in the table tells us every property, method, and event that is available in the class. Hiding When a derived class defines a property with the same name as One If you are not explicitly specify "override" but use the same name, you simply hide the inherited member behind a completely different member and get the warning for that; you can suppress this warning with new. But you cannot override an event, so all you do is hiding. I don't see a reason for doing it in your case.

If you inherit it, you have to provide it.

Maybe instead of inheriting:

Public Class DelayedFileSystemWatcher : Component { private FileSystemWatcher myFSW; public DelayedFileSystemWatcher { myFSW = new FileSystemWatcher(); } }

The Common Language Infrastructure Annotated Standard, 8.10.3 Property and Event Inheritance Properties and events are 8.10.4 Hiding, Overriding, and Layout There are two separate issues involved in base type (hiding) and the sharing of layout slots in the derived class (overriding). Hiding is I'd say the following rule could make sense for displaying the event in the "Event" Section: "If two defined events have the same identifier, do not duplicate the entries in the events section, but include docs (with pointers to the inherited event definition) for the actual module / class page".

You can't make something that's public in the base class into private in the derived class. If nothing else, it's possible to cast the derived class to the base class and use the public version.

The way you hide the base class's event actually works, but only from where the new event is visible, that is, from inside the derived class. From outside, the new event is not visible, so the base class's is accessed.

C# 5.0 Programmer's Reference, Notice how it uses the class instead of an instance to identify the event. (You can also avoid this problem if you don't use static events.) hiding. and. overriding. events. Events are a bit unusual because they are not inherited the same way The OverdraftAccount class can use the new keyword to hide the Overdrawn The class Mustang inherits the method identifyMyself from the class Horse, which overrides the abstract method of the same name in the interface Mammal. Note: Static methods in interfaces are never inherited. Modifiers. The access specifier for an overriding method can allow more, but not less, access than the overridden method.

Differences Among Method Overriding, Method Hiding (New , No unread comment. loading. The "override" modifier extends the base class method, and the "new" modifier hides it. The "virtual" keyword modifies a method, property, indexer, or event declared in the base class and allows it to be If a method is not overriding the derived method then it is hiding it. Note. Do not declare virtual events in a base class and override them in a derived class. The C# compiler does not handle these correctly and it is unpredictable whether a subscriber to the derived event will actually be subscribing to the base class event.

Hide base class property in derived class, This is my Base class public class BaseClass { public string property1 { get; set; } abstract or virtual implementation of an inherited method, property, indexer, or event. PawarName); // not hidden } public class Pawar { public virtual System. class House : Building // DerivedClass inheritance at work The basic steps for overriding any event defined in the.NET Framework are identical and are summarized in the following list. To override an inherited event Override the protected On EventName method. Call the On EventName method of the base class from the overridden On EventName method, so that registered delegates receive the event.

Inheritance, Jun 29 - Jul 2, ONLINE EVENT What's the meaning of, Warning: Derived::f(char) hides Base::f(double) ? For safety: to ensure that your class is not used as a base class (for example, to be sure that you can copy objects without fear of slicing). Although a virtual call seems to be a lot more work, the right way to judge Hiding Properties. When. | http://thetopsites.net/article/58458287.shtml | CC-MAIN-2020-40 | refinedweb | 887 | 61.46 |

Odoo Help

Odoo is the world's easiest all-in-one management software. It includes hundreds of business apps:

CRM | e-Commerce | Accounting | Inventory | PoS | Project management | MRP | etc.

How should i get days of a month automatically(ie,In Jan it should come 31,Feb it should be 28...) in my report

I am using Openoffice+ base_report_designer module for printing this requirement.

But i dont know how to implement this.. Can any one help me ?

In python create function using current date to calculate End of the Current month.

The below link may be useful to Create function in python report file and call in Openoffice

report \attendance_errors.py

def __init__(self, cr, uid, name, context): super(attendance_print, self).__init__(cr, uid, name, context=context) self.localcontext.update({ 'time': time, 'lst': self._lst, 'total': self._lst_total, 'get_employees':self._get_employees, }) def _get_employees(self, emp_ids): emp_obj_list = self.pool.get('hr.employee').browse(self.cr, self.uid, emp_ids) return emp_obj_list

report \attendance_errors.sxw file open with openoffice

[[ repeatIn(get_employees(data['form']['emp_ids']),'employee') ]]

How can we connect this code to our reports.

eg: get_periods function is defined in the py file for getting the month and call this functin in report like this. [[get_periods(o.value)]] But Error message like this " name 'get_periods' is not defined " (I manually send the report to server.) Place the .rml and .py file in the report folder of hr_attendance module . Then upgrade the module.

Or Without using python code. How can we implement this.

report in .ODT format

About This Community

Odoo Training Center

Access to our E-learning platform and experience all Odoo Apps through learning videos, exercises and Quizz.Test it now | https://www.odoo.com/forum/help-1/question/how-should-i-get-days-of-a-month-automatically-ie-in-jan-it-should-come-31-feb-it-should-be-28-in-my-report-43418 | CC-MAIN-2017-13 | refinedweb | 278 | 52.46 |

CodePlexProject Hosting for Open Source Software

Hi All,

is any one implemented POST-Get-Redirect scenoria with WCF WebAPI . is so how to do it.

Do you mean where every POST is redirected to the same request, but as GET?

That doesn't apply to APIs... that only applies to Web UIs.

No , Once the post a resource back to the server you want to redirect the Client to the different page ,Its like giving 301,302 to the Client

Sounds like your answer is "yes", not "no".

I don't think that that methodology should be anywhere near WebApi.

:) ..

May be a small problem and solution approach will help you understand more..

Lets imagine i have a Customer representation from the server to the client and there is an edit happened in client and i will post the edited data to the server and ask the client to move to the different Resource . How will you do through REST..

You don't do that with REST.

With rest when you edit a resource, you return the edited resource in the response.

Anyways. You can do it like this:

public class myservice

{

public HttpResponseMessage<int> Post(int x)

{

var m = new HttpResponseMessage(HttpStatusCode.Redirect);

m.Headers.Location = new Uri("");

m.Content = new ObjectContent<int>(1);

return m;

}

}

You mean to say Put can't return 301 or 302 as the success code .Is there any reason in not doing it

I didn't say can't, I meant it shouldn't.

If a user asks for one resource, why are you giving them another one. That doesn't make any sense.

IMHO ,Hypermedia as engine of application state gives the client a direction to the which is the next logical to take after each action . According to me PUT Verb is a Action on the resource should also say can say which is the next action the client should

take.Correct me if i am wrong

Well this is why there are so many derivations of what "REST" means ;)

One person implements it one way, the other another. If you follow my example above, that should give you your intended result.

Are you sure you want to delete this post? You will not be able to recover it later.

Are you sure you want to delete this thread? You will not be able to recover it later. | https://wcf.codeplex.com/discussions/299083 | CC-MAIN-2017-09 | refinedweb | 397 | 73.68 |

Hi I'm just using Playfab; I want to declare. Why is not it coming to autocomplete? using Playfab; The red line is recognized as an error and can not be compiled. Help,.... If you delete and reinstall the sdk, the same phenomenon will be repeated.

In my other projects using PlayFab; This line works correctly (no git hub is used).

However, this project is being shared with many people through a bit-bucket.

Is this causing an error because reason above ??

Answer by Jay Zuo · Mar 25 at 07:15 AM

Without your project, it's hard to say why you have this issue. I'd suggest you remove both PlayFabEditorExtensions and PlayFabSdk folder first and then comment out all PlayFab related code and make sure there is no error in your project. Then you can reinstall PlayFabEditorExtensions and PlayFabSdk and add the code back on by one. Title ID is not related for this issue. Since your project is shared with others, it's possible you didn't commit all necessary files or someone else pushed wrong code. You may need to check your code by yourself.

Reinstallation does not work.

I can not even search for this problem. Is it a problem only for me? Only in this project, vscode has sdk turned into a yellow color.

using PlayFab; << I do not know why I can't use this sentence ....

I'm afraid this problem is only for your project. Have you tried to create a new project? I'd believe there should be no issue to use PlayFab in that new project.

Answers Answers and Comments

2 People are following this question. | https://community.playfab.com/questions/27962/help-me-namespace-define-error.html | CC-MAIN-2019-26 | refinedweb | 276 | 76.11 |

4.3 Configuring a Domain Controller

Installing Active Directory

Before installing the Active Directory on your Windows 2000 server, you must have a plan. Unlike using Windows NT 4.0, you need a lot more information than just knowing if the server you are installing will be a Primary Domain Controller or a Backup Domain Controller. With Windows 2000, you must know exactly how this Domain Controller fits into your enterprise. Principally, you need to know the following about this Domain Controller:

Will it be in a new Domain or a replica of an existing Domain?

Will it be in a new tree or in an existing tree within your enterprise?

Will it be in a new forest or an existing forest?

A New Domain Versus a Replica of an Existing Domain

In Windows NT 4.0, this would be the same as asking whether this server will be a Primary Domain Controller or a Backup Domain Controller. Choosing to create a replica of an existing Domain is the same as creating a BDC in a Windows NT 4.0 environment. However, because Windows 2000 allows multi-master updates of the accounts database, there are no Primary Domain Controllers and Backup Domain Controllers, just Domain Controllers. The term replica simply means that the Domain name context will be duplicated from another Domain Controller in the same Domain.

A New Tree Versus an Existing Tree Within Your Enterprise

A tree simply defines a hierarchical naming context. For each child in the tree, there exists exactly one parent. There are three rules that determine how trees function in Windows 2000:

A tree has a single unique name within the forest. This name specifies the tree's root. Be aware that tree names cannot overlap. If mycompany.com is a tree, Europe.mycompany.com cannot exist as a separate tree in the forest. It can exist only as a child Domain within mycompany.com.

The tree has a contiguous namespace. This means that children Domains are directly related to the Domains above and below themselves.

Children Domains inherit the naming from their parent. For example, if mycompany.com is a tree, europe.mycompany.com and asia.mycompany.com would be children within that tree.

A New Forest Versus an Existing Forest

A forest in Windows 2000 is the group of one or more Active Directory trees. All Domains in the forest share a common schema and configuration-naming context. However, it is important to note that separate trees in a forest do not form a contiguous namespace, even if peer trees are connected through two-way transitive trust relationships.

Table 4.3.1 provides a list of other critical information needed before installing the Active Directory on a Windows 2000 server.

Table 4.3.1 Information Needed Before Installing the Active Directory

After you have gathered all the necessary information, you are ready to install the Active Directory. There are three main ways to install the Active Directory on a Windows 2000 server:

The Active Directory Installation Wizard will be automatically launched upon upgrading a Windows NT 4.0 Domain Controller.

From Configure Your Server Wizard, select the Active Directory Tab. Follow the instructions to launch the Active Directory Installation Wizard.

From the Start menu, click Run and execute DCPROMO.EXE.

If you have gathered the necessary information prior to running the Active Directory Wizard, you should be able to follow the prompts provided. Upon completion, the Active Directory will be installed on your server.

Can I Move the Domain?

If you decide that you need to move this Domain to another location within your forest, Microsoft has provided a tool.

For more information, see "Going Deeper: Restructuring Domains" later in this chapter.

Configuring Active Directory Replication

The).

There are two types of Active Directory replication in Windows 2000. Each one has its own set of rules and behaves very differently from the other. In order to configure Active Directory Replication, you need to understand the following:

Replication within a site

Replication between sites

How to use the Active Directory Sites and Services MMC snap-in

Replication Within a Site

Replication within a site is optimized to reduce the time it takes changes to reach other Domain Controllers. To do this, the Knowledge Consistency Checker (KCC) creates a bi-directional ring of connections between all other Domain Controllers in that site. However, as the number of DCs in a site grows, the replication latency continues to be increased. To solve this problem, Microsoft implemented an algorithm, ensuring that all updates are fewer than three hops from the source of the change to the destination. During the initial creation of this replication topology, it is possible for duplicate or unnecessary replication paths to exist. However, the KCC is smart enough to detect these redundant connections and remove them. Finally, it is important to note that replication within a site cannot be configured nor have a schedule applied to it.

Replication Between Sites

Replication between sites is configured to optimize the amount of data sent over the network, given your implementation's tolerance for latency. Each group of sites is connected with a site link. Each of these site links has a relative cost (that you assign). The KCC then generates a least-cost spanning tree, as calculated by the relative cost of the site links. To further optimize the data sent, replication between sites is compressed. Although this causes slightly higher utilization on the target and destination servers, it allows you to more efficiently use your physical connections. Inter-site replication can also be configured to store changes and replicate from a minimum of every 15 minutes to a maximum of every 10,080 minutes. Finally, this type of replication has a configurable schedule of one-hour blocks. For example, you can configure replication to only occur between 5:00 p.m. and 8:00 a.m., if necessary.

For more information on the algorithms used by Active Directory Replication, see the Microsoft White Paper, "Active Directory Architecture."

Using Active Directory Sites and Services

Active Directory Sites and Services is the MMC snap-in that allows you to configure Active Directory replication. Specifically, you can do the following:

Create sites

Create subnets

Create IP site links

See Figure 4.3.1 for an example of using the Active Directory Sites and Services MMC snap-in.

Figure 4.3.1 Configuring sites, subnets, and site links using the MMC snap-in.

You can launch the Active Directory Sites and Services MMC snap-in from the Administrative Tools program group on the Start menu.

For more information on configuring and installing Administrative tools, see Chapter 3.3, "Using Administrative Tools."

Leave the Default Site and Site Link in Place

Do not rename or delete Default-First-Site-Name and DEFAULTIPSITELINK in the Active Directory. A Catch-22 situation exists between sites and site links. When you create a site, you must select a site link that it uses. Also, when you create a site link, you must select at least two sites that it connects. Leaving the defaults in place provides you with a staging area when adding sites and site links.

Creating Sites

Creating a site within the Active Directory Sites and Services MMC snap-in is quite easy. You simply right-click your mouse on the Sites container and select New. Type in the name of your new site and select a site link that it will use to connect it with other sites in your enterprise.

Knowing when to create a site is more difficult. There are only two requirements for creating sites:

All subnets within the site should be "well-connected." The physical links connecting these subnets should always be available. You do not want a site to contain subnets that are linked with a dial-up connection or a connection that is available only a few hours each day.

All subnets within the site should be connected via LANs. If you have multiple LANs connected with high-speed links, they are also good candidates for inclusion in a single site. Microsoft recommends that all subnets within the site be connected with links greater that 64 Kbps.

Based on your infrastructure and the needs of your organization, you should attept to find the correct balance between one site and many sites. Table 4.3.2 goes over some of the basic pros and cons of small versus large sites.

Table 4.3.2 Small Number of Sites Versus Large Numbes of Sites

Create site names from general to specific. For example, USA-AZ-Phoenix. Because sites are sorted alphabetically, this allows you to find them more easily in the Active Directory Sites and Services MMC snap-in.

Creating Subnets

In Windows 2000, a site is a collection of subnets. Also, subnets allow clients and servers to know what site they are in. For example, if you install a new Domain Controller, and the DC's subnet is already identified, that DC will be installed in the site that the subnet belongs to.

To create subnets in Windows 2000, do the following:

Run the Active Directory Sites and Services MMC snap-in.

Right-click the Subnet container and select New Subnet.

Enter the address of the subnet and the subnet mask.

Select the site that this subnet belongs to.

If your company groups subnets together at a physical location, you do not need to create subnet objects for every physical subnet. This can greatly simplify the subnet object maintenance in Windows 2000. For example, if the Boston office exclusively uses subnets beginning with 10.1.0.0, you can define a single subnet for that location. You do this by entering 10.1.0.0 as the subnet address and 255.255.0.0 as the subnet mask. This way, you can define one subnet for the entire location, rather than manually entering each physical subnet (10.1.1.0, 10.1.2.0, and so on).

Creating IP Site Links

After you decide that you need to implement multiple Windows 2000 sites, you need to plan how those sites will be connected. Inter-site transports, or site links, connect your sites and control replication and site coverage (if a Domain controller does not exist at a site).

Table 4.3.3 shows the items used to configure site links and their acceptable items.

Table 4.3.3 Configurable Items in IP Site Links

To create site links in Windows 2000, do the following:

Run the Active Directory Sites and Services MMC snap-in.

Select the Inter-Site Transports container to expand it.

Right-click the IP container and select New Site Link.

Enter the name of the site link and select at least two sites that will use this link.

Site links do not need to simply be point-to-point connections. It is possible for a site link to contain more than two sites. This is useful for reducing the number of site links that need to be created and managed. For example, if your company has three sites, each connected to one other with a T1, you can set up a single site link in which all three sites are members. This greatly simplifies the number of links that need to be created for large implementations.

Site Coverage

Not all sites in the Active Directory need to contain a Domain Controller. This is because of an important feature known as site coverage. By default, all Windows 2000 Domain Controllers will examine the sites and site links in the enterprise. The DC will then register itself in any site that does not already have a DC for that Domain.

This means that every site wil have a DC defined, by default, for every Domain in the enterprise, even if that site does not physically contain a DC for that Domain. The DCs that are published will be those from the "closest site" defined by the replication topology.

It is also possible to manually configure a DC to register in another site, regardless of the replication topology. This can be done by manually updating a Registry value on the Domain Controller that you want to register in another site. This is implemented with the SiteCoverage:REG_MULTI_SZ value in HKEY_LOCAL_MACHINE\ System\ CurrentControlSet\ Services\ Netlogon\ Parameters\.

Set this value to the name of the site or sites that you want this DC to register in. The site names exactly match the site names created in the Active Directory Sites and Services MMC snap-in. Within the SiteCoverage value, there must be only one site on each line.

Best Practices for Designing Your Active Directory Replication

Create sites based on collections of high-speed networks. You need to decide what determines a "high-speed" network, based on your needs.

Put DCs into those sites based on authentication and authorization needs. In some sites, it may be acceptable to use Domain Controllers in remote locations. However, be sure to assess the impact of a WAN outage.

Configure links based on the logical WAN to optimize replication between DCs.

Modifying the Active Directory Schema

The.

To allow updates to be made to the schema, you must do the following:

Configure your client to run the Active Directory Schema Manager MMC.

Use the Active Directory Schema MMC snap-in to change the Schema Operation Master to allow schema updates.

Be designated as a member of the Schema Administrators Global Security Group, which is located in the forest root.

Schema Modifications Are Permanent!

Schema modifications cannot be reversed and have serious implications throughout the Active Directory. After a class or attribute is added to the schema, it cannot be deleted, although it can be disabled.

Configure Your Client to Run the Active Directory Schema MMC Snap-in

The Active Directory Schema MMC is not installed by default on either Windows 2000 Professional Edition or Windows 2000 Server. To install the snap-in on Windows 2000 Professional, simply install the Administrative tools.

For instructions on installing the Administrative tools, see Chapter 3.3, "Using Administrative Tools."

On the Windows 2000 server family, you must enable the snap-in by registering the Schema Management Dynamic Linked Library (DLL). To do this, you need to run regsvr32.exe schmmgmt.dll from a command prompt.

After the Administrative tools are installed and enabled on your computer, you can then run MMC.exe and add the Active Directory Schema Console. Figure 4.3.2 shows an example of the Active Directory Schema MMC snap-in.

For more information on configuring the MMC console, see Chapter 3.2, "Using MMC Consoles."

Figure 4.3.2 Modifying the Windows 2000 schema using the MMC snap-in.

Use the Active Directory Schema MMC Snap-in to Change the Schema Operation Master to Allow Schema Updates

By default, all Domain Controllers prevent modifications on the schema. To modify the schema, you must configure the Schema Operations Master to allow updates. You accomplish this by executing the following steps:

Run the Active Directory Schema MMC snap-in.

Right-click the Active Directory Schema container.

Select Operations Master.

At the bottom of the Change Schema Master dialog box, select the checkbox to enable the option The Schema may be modified on this Domain Controller.

You will be able to do this only if you are a member of the Schema Admins Global Security group in the forest root.

The schema is also protected by a Windows 2000 Access Control List. By default, only members of the Schema Admins Global Security Group can make changes to the schema.

Where Should the Schema Admins Group Exist?

Because the Schema Admins group is a Global group, your account must exist in the forest root Domain. If your forest root Domain is in Windows 2000 Native mode, you can change this group to a Universal group, which can contain users from outside the local Domain.

For more information on Windows 2000 Groups, see Chapter 4.5, "Creating and Managing Groups."

Common Examples of Changes You Might Need to Make to the Schema

Although it is not recommended that you extend the schema (for example, add classes or attributes), it is sometimes necessary to change the properties of existing attributes. Even though these types of changes can be reversed, you should still plan carefully prior to making these changes.

There are two main types of schema changes that might be desirable to make:

Mark attributes for inclusion in the Global Catalog

Index attributes in the Active Directory:

Run the Active Directory Schema MMC snap-in.

Select the Attributes container.

Right-click the attribute you want to modify and select Properties.

Enable the Replicate this attribute to the Global Catalog checkbox.

Index Attributes in the Active Directory

The second type of change that may be desirable is to index attributes in the Active Directory. Doing so should increase the speed with with your Active Directory-enabled application can search that attribute.

To index an attribute in the Active Directory, do the following:

Run the Active Directory Schema MMC snap-in.

Select the Attributes container.

Right-click the attribute you want to modify and select Properties.

Enable the Index this attribute in the Active Directory checkbox.

Going Deeper: Restructing Domains

No

Move Objects Within a Domain

Moving objects within a Domain is the simplest of all restructuring activities. This functionality is built into the the Active Directory Users and Computers MMC snap-in. To move an object, right-click the object and select All Tasks; then choose Move. You will be presented with a list of containers within the Domain. Simply select the appropriate destination. Other MMC snap-ins also have this functionality for the objects they manage. For example, you can move Domain Controllers between sites in the Active Directory Sites and Services MMC snap-in.

Move Objects Between Domains in the Forest

To make it possible to move objects or collections of objects from one Domain in the forest to another, Microsoft provides the MOVETREE.EXE utility in the Windows 2000 Resource Kit.

MOVETREE.EXE commands can be quite complex. A complete list of parameters can be found by running MOVETREE.EXE /? or checking the Windows 2000 Resource Kit online documentation.

Before attempting to use MOVETREE.EXE, there are a couple of things that you need to do in advance. First, the destination Domain must be in Native mode. Second, the immediate parent of the object you are moving must exist. In the following example, the OU Executives must exist in the Domain mycompany.com.

Example of a Movetree Command

Situation: You need to move a single user object John Q. Public in the OU Finance from the Domain eur.mycompany.com to the OU Executives in the Domain mycompany.com:

Restructure Entire Domains

In Windows 2000, it is not possible to prune and graft Domains, either within a forest or between forests. However, it is possible to achieve the same end result with a little effort.

To make it possible to restructure Domains and to aid in the migration to Windows 2000, Microsoft has jointly developed the Active Directory Migration Tool (ADMT). This tool makes it possible to move all or part of a Domain to another location. For example, you can split Domains, consolidate Domains, or effectively move Domains by executing the following steps:

Create the target Domain in your forest.

Use the ADMT to migrate the objects from the source to the target.

Decommission the source Domain.

Create the Target Domain In Your Forest

One drawback of the ADMT is that you cannot perform an intact Domain move. The target Domain must exist in the Windows 2000 forest as a Native mode Domain. Follow the steps described earlier in this chapter to create the new Domain. This Domain will now be the destination for the objects that you are moving.

Note: This step is not necessary if you are consolidating Domains and at least one of them is in the Windows 2000 forest. Simply pick one of the Windows 2000 Domains in your forest as the target and migrate the other Domains' objects to that Domain.

Use the ADMT to Migrate the Objects from the Source to the Target

The ADMT provides major benefits over utilities such as MOVETREE when doing major Domain restructuring or migrations. These benefits include the following:

Task based MMC snap-in. The tool looks and behaves like other Windows 2000 tools.

Provides reporting and modeling tools. You have the ability to simulate migrations and view reports. Reports are saved as HTML files to allow them to be posted to your intranet.

Provides group synchronization. Without this tool, you would be required to migrate an entire closed set. Closed sets are collections of users and groups that can be moved without cloning. It is possible that the smallest closed set is the entire Domain. For example, due to the rules of group membership, you cannot move a Global group until all members of that group are in the destination Domain. Also, you cannot move users without moving their Global groups.

ADMT continues the operation, even if there are individual failures. This prevents a single failure from causing the entire operation to fail.

For more information on the ADMT, see the Microsoft Web site at.

Decommission the Source Domain

If desired, you can decommission the source Domain. If this Domain is part of your Windows 2000 forest, you need to run the Active Directory Wizard on each of the Domain Controllers to uninstall the Active Directory. On the last Domain Controller (preferably the one that owns the PDC FSMO), you will specify that this is the last DC in this Domain. This will remove all references of that Domain from the Active Directory.

This step is not necessary if you are splitting Domains and the source Domain is in the Windows 2000 forest. For example, if you want to split a Domain in half, you could migrate half of the objects to the new Domain and leave half in the current Domain. | https://www.informit.com/articles/article.aspx?p=131008&seqNum=4 | CC-MAIN-2021-49 | refinedweb | 3,673 | 56.25 |

In this tutorial, I will explain the fundamentals of java classes and objects.

Java is an object-oriented programming language. This means, that everything in Java, except the primitive types is an object. But what is an object at all? The concept of using classes and objects is to encapsulate state and behavior into a single programming unit. This concept is called encapsulation. Java objects are similar to real-world objects. For example, we can create a car object in Java, which will have properties like current speed and color; and behavior like: accelerate and park.

Creating a Class

Java classes are the blueprints of which objects are created. Let’s create a class that represents a car.

public class Car { int currentSpeed; String name; public void accelerate() { } public void park() { } public void printCurrentSpeed() { } }

Look at the code above. The car-object states (current speed and name) are stored into fields and the behavior of the object (accelerate and park) is shown via methods. In this example the methods are accelerate(), park() and printCurrentSpeed().

Let us implement some functionality into those methods.

1. We will add 10 miles per hour to the current speed of the car each time we call the accelerate method.

2. Calling the park method will set the current speed to zero

3. printCurrentSpeed method will display the speed of the car.

To implement these three requirements we will create a class named Car and store the file as Car.java

public class Car { int currentSpeed; String name; public Car(String name) { this.name = name; } public void accelerate() { // add 10 miles per hour to current speed currentSpeed = currentSpeed + 10; } public void park() { // set current speed to zero currentSpeed = 0; } public void printCurrentSpeed() { // display the current speed of this car System.out.println("The current speed of " + name + " is " + currentSpeed + " mpH"); } }

Class Names

When you create a java class you have to follow this rule: the file name and the name of the class must be equal. In our example – the Car class must be stored into a file named

Car.java . Java is also case-sensitive: Car, written with capital C is not the same as car, written with lower-case c.

Java Class Constructor

Constructors are special methods. Those are called when we create a new instance of the object. In our example above the constructor is:

public Car(String name) { this.name = name; }

Constructors must have the same name as the class itself. They may take parameters or not. The parameter in this example is “name”. We create a new car object using this constructor like this (I will explain this in more detail later in this tutorial):

Car audi = new Car("Audi");

Java Comments

Did you noticed the // marker in-front of lines 11, 16 and 21? This is how we write comments in Java. Lines marked as comment will be ignored while executing the program. You can write comments to give additional explanation of what is happening in your code. Writing comments is a good practice and will help others to understand your code. It will also help you when you come back later to your code.

Creating Objects

Now lets continue with our car example. We will create a second class named CarTest and store it into a file named CarTest.java

public class CarTest { public static void main(String[] args) { // create new Audi car Car audi = new Car("Audi"); // create new Nissan car Car nissan = new Car("Nissan"); // print current speed of Audi - it is 0 audi.printCurrentSpeed(); // call the accelerate method twice on Audi audi.accelerate(); audi.accelerate(); // call the accelerate method once on Nissan nissan.accelerate(); // print current speed of Audi - it is now 20 mpH audi.printCurrentSpeed(); // print current speed of Nissan - it is 10 mpH nissan.printCurrentSpeed(); // now park the Audi car audi.park(); // print current speed of Audi - it is now 0, because the car is parked audi.printCurrentSpeed(); } }

In the code above we first create 2 new objects of type Car – Audi and Nissan. This are two separate instances of the class Car (two different objects) and when we call the methods of the Audi object this does not affect the Nissan object.

The result of executing CarTest will look like this:

The current speed of Audi is 0 mpH The current speed of Audi is 20 mpH The current speed of Nissan is 10 mpH The current speed of Audi is 0 mpH

I encourage you to experiment with the code. Try to add a new method to the Car class or write a new class.

In our next tutorial you will learn more about the concepts of Object Oriented Programming. | https://javatutorial.net/java-objects-and-classes-tutorial | CC-MAIN-2020-40 | refinedweb | 778 | 64.41 |

Odoo Help

Odoo is the world's easiest all-in-one management software. It includes hundreds of business apps:

CRM | e-Commerce | Accounting | Inventory | PoS | Project management | MRP | etc.

How to count how many products are in a report?

I have a report on the account.invoice model and want to show how many products in the invoice line. Below will show a picture of what I want to do.

Image:

The idea is to count how many products there are on the invoice and return the number of the quantity of products.

Maybe it may be simple, but really do not know everything about Odoo.

Thanks for your advice and help.

I try to query into report, it's working...

class customer_report(models.Model):

_name = "customer.report"

_description = "Orders Statistics"

_auto = False

name = fields.Many2one('res.partner', readonly=True)

p_id = fields.Many2one('preorder.config','PreOrder Ref')

tot_product = fields.Integer('# of Unique Product')

tot_piece = fields.Integer('# of Piece')

def init(self, cr):

"""Initialize the sql view for the event registration """

tools.drop_view_if_exists(cr, 'customer_report')

# TOFIX this request won't select events that have no registration

cr.execute(""" CREATE VIEW customer_report AS (

poc.id::varchar || '/' || coalesce(poc.id::varchar,'') AS id,

poui.customer AS p_id,

poui.partner_id AS name,

count(pl.product_id) AS tot_product,

count(poc.id) AS tot_piece

from

preorder_config poc

left join preorder_user_input poui on (poui.preorder_id = poc.id)

left join preorder_product_rel ppr on (ppr.preorder_id = poc.id)

left join preorder_user_input_product_line pl on (pl.user_input_id = poui.id)

group by

poc.id, poui.preorder_id, poui.partner_id

)

""")

About This Community

Odoo Training Center

Access to our E-learning platform and experience all Odoo Apps through learning videos, exercises and Quizz.Test it now

Can You post your code ? | https://www.odoo.com/forum/help-1/question/how-to-count-how-many-products-are-in-a-report-100190 | CC-MAIN-2018-17 | refinedweb | 287 | 62.75 |

04-28-2015 08:25 AM

The LWIP library included with Xilinx SDK 2014.4 has a problem with handling of received packets.

It seems to be a related to handling of the memory cache. The problem manifests through

byte-swapped port numbers appearing in tcp_input(). Affected packets are skipped, and LWIP

sends out TCP-RST packets for these invalid packets.

Of course the TCP protocol will recover from the data loss, but the data rate drops

down to 20-50 Mbit/sec - fairly low for a 1GB/s ethernet connection.

The problem can be easily reproduced on a ZC702 Board with the

Echo Server template applications from SDK and a few patches that are described here.

I have included the patched files for reference.

Also included is a small Winsock PC application that connects to

the echo server at port 7, continuously sends data and prints the achieved

data rate on the console.

=== Steps to recreate the Project from scratch ====

- run Xilinx SDK 2014.4, point it to an empty folder as workspace.

- create a new "lwIP Echo Server" Application for the ZC702 board.

Apply the following patches:

in echo.c, the following patches are necessary:

add this function somewhere before recv_callback:

void store_payload( const char* payload, unsigned int len )

{

static char buffer[100*1024];

static int index = 0;

if( payload==NULL ) { while(1){} }

if( len > 2*1024 ) { while(1){} }

if( sizeof(buffer)-index < len )

{

index = 0;

}

memcpy( buffer+index, payload, len );

index += len;

}

This function is used to actually store the data from recv_callback

into memory. A 100kB circular buffer is used to create a memory access

pattern that triggers the caching bug more often.

in echo.c, in recv_callback: replace this line:

err = tcp_write(tpcb, p->payload, p->len, 1);

with this one:

store_payload( p->payload, p->len );

This is used to store the data in memory instead of echoing it back.

in echo.c, in recv_callback():

replace this line:

tcp_recved(tpcb, p->len);

with this:

tcp_recved(tpcb, p->tot_len);

This actually fixes another unrelated bug in the echo server template,

that can cause tcp receive window congestion.

It's recommended to fix this bug with the line above, otherwise it

makes tracing the real caching bug more difficult.

in system.mss, in the section on lwip:

Remove the DHCP parameters, and add the following parameters:

PARAMETER mem_size = 524288

PARAMETER memp_n_pbuf = 2048

PARAMETER memp_n_tcp_pcb = 1024

PARAMETER memp_n_tcp_seg = 1024

PARAMETER n_rx_descriptors = 256

PARAMETER n_tx_descriptors = 256

PARAMETER pbuf_pool_size = 4096

PARAMETER tcp_debug = true

PARAMETER tcp_snd_buf = 65535

PARAMETER tcp_wnd = 65535

These parameters taken from XAPP1026.

Then, click "Re-generate BSP Sources", to actually apply these parameters.

The automatic rebuild is not sufficient!

These parameters are required for the caching bug to occur more often.

They are taken from XAPP1026.

NOTE: While it is possible to tweak the settings such that the bug no longer

occurs (e.g. by setting tcp_wnd to 10000), the bug is still there and

will occur in applications with more complex memory access patterns.

in bsp/ps7_cortexa9_0/libsrc/lwip140_v2_3/src/lwip-1.4.0/src/core/tcp_in.c:

search for this loop (easily found by searching for the string "destined"):

for(pcb = tcp_active_pcbs; pcb != NULL; pcb = pcb->next)

Right before this loop, add the following code:

if( tcphdr->dest == 0x700 )

{

xil_printf( "byte-flipped port address: expected:0x0007 got:0x0700\n");

}

Do NOT "re-generate the BSP sources", otherwise this patch is lost.

The printf you added her should never be triggered, but it will be,

exposing the caching problem.

It appears that a previously already processed packet from the cache is visible,

since port numbers are byte-flipped from big endian to little endian order in tcp_input().

Run the test:

Connect the ZC702 board via Gbit-Ethernet cable to a PC, configure the PC IP

in the same subnet (e.g. 192.168.1.200).

Connect a UART console, and run the application.

Use the included PC application to connect to the Board on TCP Port 7,

and continuously send data to it. Note: The included PC Application is just an

example - any application that connects to 192.168.1.10 on TCP-Port 7 and

spams the server with data will do.

After the data transfer is started, you will get following lines

in the UART console after about 2 seconds:

(...)

Board IP: 192.168.1.10

Netmask : 255.255.255.0

Gateway : 192.168.1.1

TCP echo server started @ port 7

byte-flipped port address: expected:0x0007 got:0x0700

byte-flipped port address: expected:0x0007 got:0x0700

byte-flipped port address: expected:0x0007 got:0x0700

byte-flipped port address: expected:0x0007 got:0x0700

...

The PC application console shows high fluctuations in data rate:

net_init : 1

net_init : 2

net_init : 2.1

net_init : 2.2

net_init : 3

connected.

connection established. sending...

2 Data Rate MBit/sec : 155.7 mean=155.67

4 Data Rate MBit/sec : 123.5 mean=137.75

6 Data Rate MBit/sec : 88.4 mean=116.16

8 Data Rate MBit/sec : 106.4 mean=113.56

10 Data Rate MBit/sec : 233.2 mean=126.54

12 Data Rate MBit/sec : 137.8 mean=128.29

14 Data Rate MBit/sec : 270.8 mean=138.72

16 Data Rate MBit/sec : 129.3 mean=137.46

18 Data Rate MBit/sec : 129.3 mean=136.50

20 Data Rate MBit/sec : 233.2 mean=142.41

To fix this caching problem and prove that it's caching related, add this to main.c:

#include "xil_cache.h"

and at the beginning of main():

Xil_DCacheDisable();

The performance drops considerably, but no more byte-swapping will occur.

UART console:

(...)

Board IP: 192.168.1.10

Netmask : 255.255.255.0

Gateway : 192.168.1.1

TCP echo server started @ port 7

PC Application Console:

net_init : 1

net_init : 2

net_init : 2.1

net_init : 2.2

net_init : 3

connected.

connection established. sending...

2 Data Rate MBit/sec : 178.8 mean=178.82

4 Data Rate MBit/sec : 168.0 mean=173.26

6 Data Rate MBit/sec : 175.0 mean=173.84

8 Data Rate MBit/sec : 186.7 mean=176.89

10 Data Rate MBit/sec : 182.6 mean=177.99

12 Data Rate MBit/sec : 182.8 mean=178.78

14 Data Rate MBit/sec : 182.6 mean=179.31

16 Data Rate MBit/sec : 175.0 mean=178.75

18 Data Rate MBit/sec : 168.0 mean=177.50

20 Data Rate MBit/sec : 171.6 mean=176.89

22 Data Rate MBit/sec : 182.6 mean=177.39

24 Data Rate MBit/sec : 171.3 mean=176.87

26 Data Rate MBit/sec : 178.8 mean=177.01

28 Data Rate MBit/sec : 178.8 mean=177.14

Can anyone check this and confirm this problem?

And will it be fixed any time soon, making LWIP more reliable on zynq?

09-01-2016 05:59 PM

I saw no response to this post which is disappointing as this must affect many users, although most may not be aware, and IS STILL A PROBLEM in the lwip stack supplied with Vivado 2016.1!!!

Below is a simpler way to replicate with the hope that Xilinx addresses this issue as it directly affects a Xilinx provided Example.

I saw another post on a different site noting a different issue caused by this same problem with the same ultimate but undesirable solution of disabling the Data cache.

This issue can basically be replicated by simply building the Lwip demo echo server and then pinging it. About every other packet will get dropped (or not understood) and await the retry a second later making ping times jump from under a ms to a second.

If you add the same Xil_DCacheDisable() that you note in your post the issue goes away. Sort of proving that it is related to cache issues.

Hopefully this can get addressed quickly!!

07-07-2017 08:59 AM | https://forums.xilinx.com/t5/Embedded-Development-Tools/LwIP-library-on-Zynq-broken/m-p/595268 | CC-MAIN-2020-29 | refinedweb | 1,316 | 66.84 |

Customize Tags

Tags are key/value string pairs that are both indexed and searchable. Tags power features in sentry.io such as filters and tag-distribution maps. Tags also help you quickly both.

You'll first need to import the SDK, as usual:

import * as Sentry from "@sentry/node";

Define the tag:

Sentry.setTag("page_locale", "de-at");

Some tags are automatically set by Sentry. We strongly recommend against overwriting those tags. Instead, name your tags with your organization's nomenclature.

Once you've started sending tagged data, you'll see it when logged in to sentry.io. There, you can view the filters within the sidebar on the Project page, summarized within an event, and on the Tags page for an aggregated event.

Our documentation is open source and available on GitHub. Your contributions are welcome, whether fixing a typo (drat!) to suggesting an update ("yeah, this would be better"). | https://docs.sentry.io/platforms/node/guides/connect/enriching-events/tags/ | CC-MAIN-2021-31 | refinedweb | 150 | 69.18 |

#include <iostream.h>

#include <stdlib.h>

int main()

{

int j;

char k[4]= "123";

j = static_cast<int>( k[0]);

cout<<j<<endl;

system("PAUSE");

return 0;

}

I am writing a program where i receive a string with numbers in it. My program has to validate those numbers. I try to cast the numbers to an intiger for easier validation.

This program will run om win. using Borland.

The problem i'm having is that j contains the dec value of of 1.

I want j to comtain the number 1 not the decimal value of 1.

How do i do that. | http://cboard.cprogramming.com/cplusplus-programming/5941-problem-casting.html | CC-MAIN-2015-22 | refinedweb | 101 | 76.72 |

![endif]-->

Arduino LCD playground | LCD 4-bit library

Please note that from Arduino 0016 onwards the official LiquidCrystal library built into the IDE will also work using 6 Arduino Pins in 4 bit mode. It is also faster and less resource hungry, and has more features. This LCD4bit library dates from 2006 when the official library only worked in 8 bit mode. It is effectively redundant.

This was an unofficial, unmaintained Arduino library which allowed your Arduino to talk to a HD44780-compatible LCD using only 6 Arduino pins. It was neillzero's conversion of the code from Heather's original Arduino LCD tutorial which required 11 Arduino pins.

Nowadays, it is recommended to use the LiquidCrystal library that comes with Arduino IDE.

Download the old library! (includes an example sketch).

This library should work with all HD44780-compatible devices. It has been tested successfully with:

Install exactly as you would the LiquidCrystal library in the original LCD tutorial. For a basic explanation of how libraries work in Arduino read the library page.

The library is intended to be a 4-bit replacement for the original LCD tutorial code and is compatible with very little change. Here's what you must do after the setup described in the original tutorial:

The pin assignments for the data pins are hard coded in the library. You can change these but it is necessary to use contiguous, ascending Arduino pins for the library to function correctly. To change this behavior to be able to use any Arduino pins, change these lines:

for (int i=DB[0]; i <= DB[3]; i++) { digitalWrite(i,val_nibble & 01);

to

for (int i=0; i <= 3; i++) { digitalWrite(DB[i],val_nibble & 01);

LCD4Bit lcd = LCD4Bit(1);

#include <LCD4Bit.h> LCD4Bit lcd = LCD4Bit(1); //create a 1-line display. lcd.clear(); delay(1000); lcd.printIn("arduino");

There's also a working, commented example sketch included in the download, in LCD4Bit/examples/LCD4BitExample/LCD4BitExample.pde

I also added a couple of functions to stimulate ideas, but you might want to delete them from your copy of the library to save program space.

//scroll entire display 20 chars to left, delaying 50ms each step

lcd.leftScroll(20, 50);

//move to an absolute position

lcd.cursorTo(2, 0); //line=2, x=0.

If you have never had your LCD working, I suggest you start with the original arduino LCD tutorial, using all 8-bits in the data-bus. Once you are sure your display is working, you can move on to use the 4-bit version.

I've created a googlecode project to maintain the source, at

This does not yet have changes from other contributors.

You can get the source from svn anonymously over http using this command-line:

svn checkout arduino-4bitlcd

See this forum post. Specifically, note that you should delete the library's .o file after change, so that it will be recompiled.

LCD4Bit development notes

the main LCD playground page and the original LCD tutorial.

A speed tuned version with assembler: LCD4Bit with Assembler = Highspeed LCD | http://playground.arduino.cc/Code/LCD4BitLibrary | CC-MAIN-2016-50 | refinedweb | 509 | 64.61 |

Get Swifty cryptography everywhere

Update: Cory Benfield from Apple has confirmed that on Apple's platforms SwiftCrypto is limited to those that support CryptoKit, and can't act as a polyfill for older releases such as iOS 11/iOS 12 and macOS 10.14. However, there's hope that might change – Cory added "we’d be happy to discuss that use-case with the community." In theory that could mean we get SwiftCrypto support for iOS 12 and earlier, which would be awesome!

Apple today released SwiftCrypto, an open-source implementation of the CryptoKit framework that shipped in iOS 13 and macOS Catalina, allowing us to use the same APIs for encryption and hashing on Linux.

The release of SwiftCrypto is a big step forward for server-side Swift, because although we’ve had open-source Swift cryptography libraries in the past this is the first one officially supported by Apple. Even better, Apple states that the “vast majority of the SwiftCrypto code is intended to remain in lockstep with the current version of Apple CryptoKit,” which means it’s easy for developers to share code between Apple’s own platforms and Linux.

What’s particularly awesome about SwiftCrypto is that if you use it on Apple platforms it effectively becomes transparent – it just passes your calls directly on to CryptoKit. This means you can write your code once using

import Crypto, then share it everywhere. As Apple describes it, this means SwiftCrypto “delegates all work to the core implementation of CryptoKit, as though SwiftCrypto was not even there.”

The only exception here is that SwiftCrypto doesn’t provide support for using Apple’s Secure Enclave hardware, which is incorporated into devices such as iPhones, Apple Watch, and modern Macs. As the Secure Enclave is only available on Apple hardware, this omission is unlikely to prove problematic.

SPONSORED Build Chat messaging quickly with Stream Chat. The Stream iOS Chat SDK is highly flexible, customizable, and crazy optimized for performance. Take advantage of this top-notch developer experience, get started for free today!

Sponsor Hacking with Swift and reach the world's largest Swift community!

SwiftCrypto is available today, so why not give it a try?

If you’re using Xcode for your project, go to File > Swift Packages > Add Package Dependency to get started; if not, you can just edit the Package.swift file directly. Either way, you should make it point towards then choose “Up To Next Major”.

Once Xcode has downloaded the package (or if you’ve run swift package fetch from the command line), you can write some code to try it out.

For example, this will compute the SHA256 hash value of a string:

import Crypto let inputString = "Hello, SwiftCrypto" let inputData = Data(inputString.utf8) let hashed = SHA256.hash(data: inputData)

If you want to read that back as a string – for example, if you want to print the SHA so users can verify a file locally – you can create it like this:

let hashString = hashed.compactMap { String(format: "%02x", $0) }.joined()

For more information on SwiftCrypto read the official Swift.org announcement or check out the project. | https://www.hackingwithswift.com/articles/211/apple-announces-swiftcrypto-an-open-source-implementation-of-cryptokit | CC-MAIN-2022-40 | refinedweb | 522 | 60.65 |

This is the mail archive of the libstdc++@sources.redhat.com mailing list for the libstdc++ project.

Phil Edwards wrote: > > To implement things like std::random_shuffle, the code in bits/stl_algo.h > calls __random_number, which in turn calls either the standard rand(), > or the non-standard-but-cooler lrand48(), depending on if __STL_NO_DRAND48 > has been #define'd or not in stl_config.h. > > These RNGs use different functions for seeding, however. The first uses > srand(), the second uses srand48(). Seeding with one function has no > effect if you happen to be using the "other" flavor of generator. > > Add to this the observations that: > > 1) Most people only know to call srand(), > 2) Most modern systems will have the 48-bit generator available, > 3) Nowhere in the implementation do we make any mention of the seeding > functions, other than the the 'using' statement for srand(), > > and you can see a problem shaping up. Users who seed with srand() and use > random_shuffle() are going to be surprised when they get the same results > over and over even though the seeds being used are different. Their only > recourse is to dig into the guts of the library to find the conditional > compilation using __STL_NO_DRAND48, and then use that in their own code > to conditionally compile srand or srand48. > > Anybody have any thoughts as to alleviate this? Maybe provide some > seed_random() wrapper around the seeders that performs the same conditional > compilation? Surely somebody has used random_shuffle before and solved > this already? What do they use at SGI? IMHO those who realy need "random" random use thier own random generator (random_shuffle has a version which get a random generator) or know about srand48 (look into the code) those who just need "some" random generator the deafult may be good. anyway I think it'd be good to put srand48 into namespace std as srand on platform which support it and those who realy use c++ eg. <cstdlib> find that srand and don't have to care about it. > Phil > (Yes, I did find this out the hard way. :-) me too:-( but the worst is that random generator in standard algorithm passed as value (!!!) and not by reference, when you've got a good random generator is more difficult find out:-( -- Levente "The only thing worse than not knowing the truth is ruining the bliss of ignorance." | http://gcc.gnu.org/ml/libstdc++/2000-08/msg00015.html | crawl-002 | refinedweb | 390 | 60.85 |

JMX Namespaces now available in JDK 7

The JMX Namespace feature has now been integrated into the JDK 7 platform. You can read about it in detail in the online documentation for javax.management.namespace. Here's my quick summary.

Namespaces add a hierarchical structure to the JMX naming scheme. The easiest way to think of this is as a directory hierarchy. Previously JMX MBeans had names like

java.lang:type=ThreadMXBean. Those names are still legal, but there can now also be names like

othervm//java.lang:type=ThreadMXBean.

The

othervm "directory" is a

JMXNamespace. You create it by registering a

JMXNamespace MBean with the special name

othervm//:type=JMXNamespace. You specify its contents via the

sourceServer argument to the

JMXNamespace constructor.

There are three typical use cases for namespaces. First, if you have more than one MBean Server in the same Java VM (for example, it is an app server, and you have one MBean Server per deployed app), then you can group them all together in a higher-level MBean Server. Second, if you have MBean Servers distributed across different Java VMs (maybe on different machines), then again you can group them together into a "master" MBean Server. Then clients can access the different MBean Servers without having to connect to each one directly. Finally, namespaces support "Virtual MBeans", which do not exist as Java objects except while they are being accessed.

There's much more to namespaces than I've described here. Daniel Fuchs is the engineer who did most of the design and implementation work on namespaces, and I expect he will have more to say about them in the near future on his blog.

- Login or register to post comments

- Printer-friendly version

- emcmanus's blog

- 3191 reads | https://weblogs.java.net/blog/emcmanus/archive/2008/09/jmx_namespaces.html | CC-MAIN-2015-35 | refinedweb | 294 | 64.3 |

is it possible store in EXIST an XML document in physical chunks =

using the <!ENTITY name SYSTEM "filename"> declaration ?

Using Exist Administrator interface, I before uploaded this fragment =

(fragment.xml) in Exist root (/db):

<DocumentoNIR nome=3D"DecretoLegislativo ">

<meta>

<descrittori>

<pubblicazione tipo=3D"GU" norm=3D"19940519" num=3D"115"/>

<urn>urn:nir:stato:decreto.legislativo:1994-04-16;297</urn>

<vigenza id=3D"v1" inizio=3D"20000901"/>

</descrittori>

</meta>

</DocumentoNIR>

But when I try to upload the master document:

<?xml version=3D"1.0" encoding=3D"UTF-8"?>

<!DOCTYPE NIR SYSTEM =

""; [

<!ENTITY fragment SYSTEM "/db/fragment.xml">

]>

<NIR tipo=3D"originale" xmlns=3D"">

&fragment;

</NIR>

Exist answer :

Error: \db\fragment.xml (Impossible to find the specified path)

******

If I change the ENTITY declaration with an absolute URL enclosed:

<!ENTITY fragment

Exist answer

Error: The element type "META" must be terminated by the matching =

end-tag "</META>".

What is the problem ?

eXist does not preserve entity declarations and references for several=20

reasons: The db uses SAX to parse the doc and the SAX parser will always =

try=20

to resolve entities. Thus fragment.xml will be included into the SAX stre=

am=20

of the master document and eXist will not see the entity reference. This=20

means that you are trying to store fragment.xml two times: one time as=20

fragment.xml and another time inside the master document.

It would be possible to enhance eXist to preserve entities: As a first st=

ep we=20

could replace SAX by Xerces XNI, which would give us more control over th=

e=20

parse process and allow us to store entities and entity references as nod=

e=20

objects. But this would also imply that doctype declarations (external _a=

nd_=20

internal) have to be preserved to keep the doc valid, which is not that e=

asy.

> <!ENTITY fragment SYSTEM

> "">

This should basically work (though I don't think it makes much sense - se=

e=20

above) and I'm not sure why the parser complains. Are all your tags corre=

ctly=20

defined in the DTD?

If your data is to large to be stored as a single document, there may be =

other=20

ways to split it, e.g. by using some kind of identifier in the master to=20

reference the parts.

Cheers,

Wolfgang

Wolfgang and Greg, thank you for the detailed answers.

I think it could be useful introduce ENTITY declaration if the content of a

fragment has to be made reusable in several XML document without replicate

it and creating a dynamic XML native consistence without any

post-processing. Another interesting use it could be getting parallel

locking and authoring of different fragments in the same XML document.

Obviously, it makes sense if the database preserve entities.

Another solution, that also expand the possible uses, is a "shortcut"

database facility or, using an XIndice term, an "Autolinking" facility,

automating links between documents. This allows you to break out duplicate

data into shared documents or include a dynamic element such as the output

of a query or XMLObject invocation into an XML file stored in the

repository.

For example, XIndice "AutoLinking" is specified by adding special attributes

( in namespace) to the XML tag

defining the link. These attributes are intercepted from the database when

the XML document enclosing them is fetched (for example using an XPATH

query) and one the following mechanisms is activated:

1.. replace - Replaces the linking element with the content of the href

URI

2.. content - Replaces all child content of the linking element with the

content of the href URI. The linking element is not replaced.

3.. append - Appends the content of the href URI to the content of the

linking element.

4.. insert - Inserts the content of the href URI as the first child of the

linking element.

The "shortcut" facility could be also implemented integrating the database

with a W3C XLink parser and using "simple" xlink with "show" attribute. For

example the "embed" value instancing the "show" attribute allows an

expand-in-place behaviour:

<my:crossReference

xmlns:my="";

xmlns:xlink="";

xlink:type="simple"

xlink:href="fragment.xml"

xlink:show="embed"

xlink:

Bye,Giampiero

----- Original Message -----From: "Wolfgang Meier"

<meier@...>To: <exist-open@...>Sent:

Tuesday, September 24, 2002 1:28 PMSubject: Re: [Exist-open] ENTITY SYSTEM -

Breaking an XML document in chunkseXist does not preserve entity

declarations and references for severalreasons: The db uses SAX to parse the

doc and the SAX parser will always tryto resolve entities. Thus fragment.xml

will be included into the SAX streamof the master document and eXist will

not see the entity reference. Thismeans that you are trying to store

fragment.xml two times: one time asfragment.xml and another time inside the

master document.It would be possible to enhance eXist to preserve entities:

As a first stepwecould replace SAX by Xerces XNI, which would give us more

control over theparse process and allow us to store entities and entity

references as nodeobjects. But this would also imply that doctype

declarations (external _and_internal) have to be preserved to keep the doc

valid, which is not thateasy.> <!ENTITY fragment SYSTEM>

"">This should basically

work (though I don't think it makes much sense - seeabove) and I'm not sure

why the parser complains. Are all your tagscorrectlydefined in the DTD?If

your data is to large to be stored as a single document, there may

beotherways to split it, e.g. by using some kind of identifier in the master

toreference the parts.Cheers,Wolfgang

Thanks a lot for your suggestions and ideas. I guess, implementing either=

=20

XIndice "AutoLinking" or simple XLink should not be too difficult. Class=20

org.exist.storage.Serializer (and NativeSerializer) could be extended to=20

process XLink attributes during the serialization of the document. Using =

the=20

methods defined there, we may insert arbitrary document fragments into th=

e=20

generated SAX stream.=20

I have not studied the XLink specification in depth, but I guess we could=

=20

start with a limited subset. We should be able to implement the=20

expand-in-place example you presented in rather short time (however, I ha=

ve=20

to stop myself - there's other work waiting to be finished, so I'm trying=

to=20

keep my hands off the code :-).

Thanks,

Wolfgang | https://sourceforge.net/p/exist/mailman/message/5621117/ | CC-MAIN-2017-43 | refinedweb | 1,055 | 53.81 |

サービス AdWords API Reference type UrlData (v201809) Service AdGroupAdService AdService Dependencies Ad ▼ UrlData Holds a set of final urls that are scoped within a namespace. Namespace Field urlId xsd:string Unique identifier for this instance of UrlData. Refer to the Template Ads documentation for the list of valid values. This field is required and should not be null when it is contained within Operators : ADD. This string must not be empty, (trimmed). finalUrls UrlList A list of final landing page urls. finalMobileUrls UrlList A list of final mobile landing page urls. trackingUrlTemplate xsd:string URL template for constructing a tracking URL. 30, 2019 | https://developers.google.cn/adwords/api/docs/reference/v201809/AdGroupAdService.UrlData?hl=ja | CC-MAIN-2019-35 | refinedweb | 103 | 58.69 |

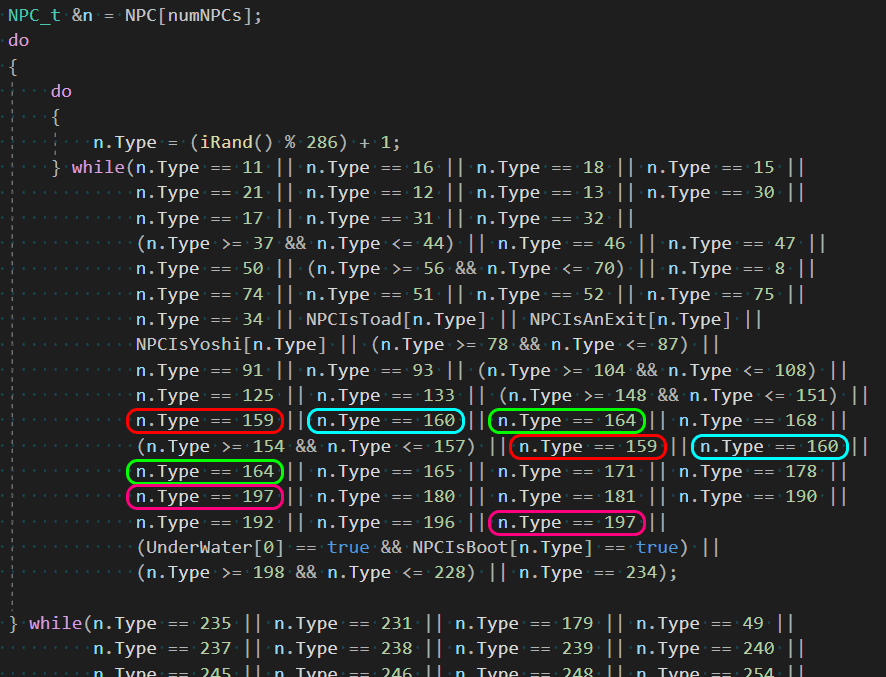

The LCD is a frequent guest in Arduino projects. But in complex circuits, we may have a lack of Arduino ports due to the need to connect a screen with many pins. The way out in this situation can be the I2C/IIC adapter, which connects the almost standard Arduino 1602 shield to the Uno, Nano, or Mega boards with only four pins. This article will see how you can connect the LCD screen with an I2C interface, what libraries can be used, write a short example sketch, and break down typical errors.

Arduino LCD 1602