text stringlengths 454 608k | url stringlengths 17 896 | dump stringclasses 91 values | source stringclasses 1 value | word_count int64 101 114k | flesch_reading_ease float64 50 104 |

|---|---|---|---|---|---|

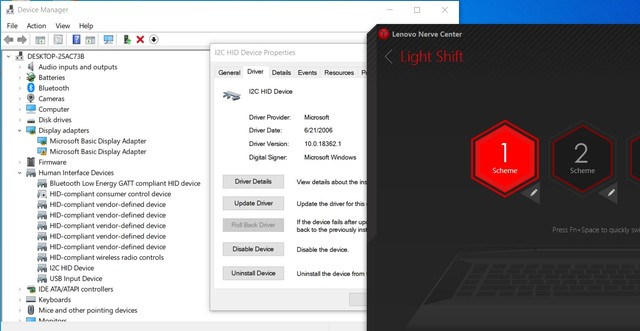

Introduction

The Scalable Vector Graphics (SVG) specification 1.1 has been a W3C Recommendation for over three years now. Firefox 1.5 introduced a built-in SVG rendering engine, and Adobe has an SVG plug-in available for Internet Explorer. This article explains how you can use the SVG support contained in GWT Widget Library o.o.5 to render SVG elements, and how you can make your page compatible with both Firefox and Internet Explorer 6.

SVGPanel Widget

The first widget you always need to create to render SVG graphics is the SVGPanel widget. When you create this widget you need to specify the width and height or the drawing area.

SVGPanel sp = new SVGPanel(500, 300);

This panel, once created, becomes a factory for other components. This works similar to the XML DOM. In this initial release you can create circles, rectangles, ellipses, and paths.

SVGRectangle rect = p.createRectangle(x, y, width, height);

SVGCircle circle = p.createCircle(cx, cy, radius);

SVGEllipse ellipse = p.createEllipse(cx, cy, rx, ry);

SVGPath path = p.createPath(x, y);

Creating a widget this was does not add it to the drawing. You still need to add the SVGPanel via the add() method.

SVGRectangle, SVGCircle, and SVGEllipse

Once created you can set additional attributes to these objects. For all of these you may set the fill color, stroke color, fill opacity, stroke opacity, and stroke width. Each of these objects will also have attributes specific to the object type. For example, you can change the radius of a circle after it's creation, and you may extend a path by adding additional points.

Each method that sets the attribute of an object will return the object itself. This allows you to chain the setting of attributes. The only trick is that some methods belong to the super class SVGBasicShape, which will return an instance of SVGBasicShape, and not the specific subclass. So you just need to be sure to set your widget specific attributes first, followed by the attributes that belong to the super class.

Below are some concrete examples, which show off the various attributes.

p.add(p.createRectangle(0, 0, 500, 300)

.setStroke(Color.DARK_GRAY)

.setStrokeWidth(2)

.setFill(Color.LIGHT_GRAY));

p.add(p.createEllipse(250, 225, 150, 70)

.setStroke(Color.RED)

.setStrokeWidth(1)

.setFill(Color.BLUE));

p.add(p.createCircle(420, 225, 60)

.setFill(Color.BLACK)

.setStrokeWidth(15)

.setStroke(Color.WHITE));

SVGPath Widget

The path widget deserves special attention. You create a path widget by specifying the initial point on the path. From this initial point you can add additional points by drawing either straight lines or curves. You may also move the current point to include multiple lines, for example the inner and outter circles of a doughnut. You may leave the path open, or close it to create a shape. The curves may be cubic Bezier, quadratic Bezier, or elliptical arcs. Each line or curve may be specified using either absolute coordinates, or coordinates relative to the current point. I suggest reading through the path portion of the SVG specification for details on using each of the curves.

The example below draws a hexagon using relative lineTo commands, coloring the shape with red translucent fill, and an orange border.

p.add(p.createPath(150, 190)

.relLineTo(10, 0)

.relLineTo(4, 8)

.relLineTo(-4, 8)

.relLineTo(-10, 0)

.relLineTo(-4, -8)

.closePath()

.setFill(Color.RED)

.setFillOpacity(50)

.setStroke(Color.ORANGE)

.setStrokeWidth(1));

Here is another path that creates an upside-down tear-drop shape, with a translucent yellow fill.

p.add(p.createPath(250, 225)

.relMoveTo(-25, -25)

.relCurveToC(0, -25, 50, -25, 50, 0)

.relLineTo(-25, 50)

.closePath()

.setFill(Color.YELLOW)

.setFillOpacity(50)

.setStroke(Color.BLACK)

.setStrokeWidth(1));

Gradients

You may also create linear gradients that can be used to fill a widget, or in place of a stroke color. Gradients consist of one or more "stops". Each stop contains a color, and optionally an opacity . For each stop you specify the color value, and the SVG engine will transition the color/opacity between stops.

The following gradient will color a widget from red to blue. The first value of each stop is the percentage across the widget. 0% being the left-most point on the widget, to 100% being the right-most point.

SVGLinearGradient grad = p.createLinearGradient()

.addStop(0, Color.RED)

.addStop(100, Color.BLUE);

You may also change the vector of the gradient to something other then left ro right. For example, you might want to color a widget from the top to the bottom. Here is the same gradient which will color from top to bottom instead of left to right. The values are percentages of the objects width and height. Specifically this vector definition starts at 0%,0%(x,y) to 0%,100%(x.y).

SVGLinearGradient grad = p.createLinearGradient()

.addStop(0, Color.RED)

.addStop(100, Color.BLUE)

.setVector(0, 0, 0, 100);

Gradients are not widgets, so you do not add them to the SVGPanel, you just use them. Also notice that each method returns the object instance allowing you to chain method calls.

Working in the Google Hosted Browser

Good luck, it doesn't seem to work. If you are able to get this to work, I would be very interested in the solution. For development I have been compiling the Java code to JavaScript, then testing in Firefox and IE.

Setting up your HTML and Deployment

One of the trickest parts I found was trying to get the SVG content to render in both Firefox 1.5 and Internet Explorer 6. If you are using Iinternet Explorer you will need to first download and install the SVG Viewer plug-in from Adobe. I also strongly suggest reading the Inline SVG page on the SVG Wiki, it will explain in detail how to get everything working across browsers.

Below is the HTML template that I have been using.

<html xmlns=""

xmlns:

<head>

<title>SVG Component Example</title>

<meta name="'gwt:module'"

content="'org.gwtwidgets.examples.svg.Project'">

<object id="AdobeSVG"

classid="clsid:78156a80-c6a1-4bbf-8e6a-3cd390eeb4e2">

</object>

<?import namespace="svg" implementation="#AdobeSVG"?>

</head>

<body>

<script type="text/javascript" src="gwt.js">

</script>

</body>

</html>

Firefox requires that the page be either XML or XHTML, requiring the XHTML namespace definition. This is because Firefox uses a different parser for XML and HTML, and the XML parser is the one that understands SVG.

Internet Explorer requires the svg namespace declaration, as well as the

75 comments:

SCALABLE Vector Graphics, not SCALAR

Oops, thanks for the catch. Fixed.

Great article about SVG and GWT. Do you know when the API will be enhanced to also provide methods to create text using SVG?

Thanks.

Stefan

That is definately on my to-do list, but I don't have a timeframe for doing it. The biggest problem is that there is too much to do, and not enough time to do it.

I am already working with a developer who is expanding the Scriptaculous support, so perhaps someone will volunteer to round out the SVG support.

Hi Robert

Is it possible add an image in the shapes of the SVG package (SVGRectangle, SVGCircle...)?

Is it possible to use EventListener in the SVG Components??

Thanks for your help..

Adriana

Hi Robert

Is it possible add an image in the shapes of the SVG package (SVGRectangle, SVGCircle...)?

Is it possible to use EventListener in the SVG Components??

Thanks for your help..

Adriana

Adriana,

> possible add an image

Not yet.

> possible to use EventListener

Not yet.

I hope do all of those things eventually, or maybe someone will volunteer to do it. The SVG spec is huge, so it may take a while to get everything done.

If all you need is image support and (mouse?) listeners, I'll see what I can do to push them up in the priority list.

It is good to get feedback like this as it gives me an idea of what is needed the most.

Hi Rober Hanson

I want to write a program with SVG panel. when I try to use SVG component

the it makes error. for example when i create a new SVGPanel it makes Exception:

[ERROR] Unable to load module entry point class com.me.client.MyApplication

com.google.gwt.core.client.JavaScriptException: JavaScript Error exception: Unexpected call to method or property access.

at com.google.gwt.dev.shell.ie.ModuleSpaceIE6.invokeNative(ModuleSpaceIE6.java:396)

at com.google.gwt.dev.shell.ie.ModuleSpaceIE6.invokeNativeVoid(ModuleSpaceIE6.java:283)

at com.google.gwt.dev.shell.JavaScriptHost.invokeNativeVoid(JavaScriptHost.java:127)

at com.google.gwt.user.client.impl.DOMImpl.appendChild(DOMImpl.java:27)

at com.google.gwt.user.client.DOM.appendChild(DOM.java:64)

at org.gwtwidgets.client.svg.SVGContainerBase.add(SVGContainerBase.java:43)

at org.gwtwidgets.client.svg.SVGPanelBase.add(SVGPanelBase.java:26)

at org.gwtwidgets.client.svg.SVGPanel.(/init)(SVGPanel.java:60)

at org.gwtwidgets.client.svg.SVGPanel.(/init)(SVGPanel.java:39)

at com.me.client.MyApplication.onModuleLoad(MyApplication.java:21)

I use eclipse IDE. please help me!

It looks like you are trying to use the hosted-mode browser. Right?

I mention that in the article, it just won't work. You need to compile the code to JavaScript, then open in your browser. The problem is that IE requires a plug-in, and this doesn't seem to work at all with the hosted-mode browser.

To Stefan, and others who were

wondering: I was wondering about

text too. But for now, perhaps it's

easier to do using PopupPanel

on top of the canvas... This is

what I came up with, and so far,

so good...

Robert,

Do you know of any way to create a simple vertical (or dotted vertical) line above a panel? I'm trying to recreate what Jack Slocum did here:

using YUI

move the column boundaries and you see a dotted vertical line floating above the table.

Since both are using javascript...there must be a way. (without using SVG)

- Bob

Bob,

If I had to do this, I think that I would probably use a div with the

height of the panel area, and a width of 2px... then use a background

image in the dix with the dotted line. The background would be about

7px high (one dash + space) and repeated top to bottom.

In GWT you could probably do this with a Popup with maybe a FlowPanel

inside, with a style on the FlowPanel to display the line.

I think that would work.

Hi Robert,

Is there anything new about the svg-events ?

I'm trying to work on that, can you give me some advice ?

> Is there anything new about the svg-events ?

SVG support is definately something that I get asked about often, but right now there are too many constraints on my time. I am hoping that things will clear up by mid-January so that I can start writing more code again.

So no, I unfortunately don't have ant tips on how to do it, but I am pretty sure that it can be done. Part of the problem is that GWT doesn't support XML namespaces, but SVG requires it. So you may have some trouble getting it to work the first time.

hi

i've found an example to give event handling to svg widget. but i can't understand why it functions with firefox, opera, and not with explorer and gwt hosted mode.

so, all you have to do is to declare a custom SVGPanel class in which you add methos for adding listeners, for example

public void addClickListener(ClickListener listener) {

if (clickListeners == null)

clickListeners = new ClickListenerCollection();

clickListeners.add(listener);

}

public void addMouseListener(MouseListener listener) {

if (mouseListeners == null)

mouseListeners = new MouseListenerCollection();

mouseListeners.add(listener);

}

and calling it

CustomSVGPanel sp = new CustomSVGPanel(800, 400);

CustomSVGCircle suca= new CustomSVGCircle(new Namespace("svg", ""),200, 225, 25);

sp.add(suca.setFill(Color.BLUE).setColor(Color.GREEN));

sp.addMouseListener(new MouseListener(){

public void onMouseDown(Widget sender, int x, int y) {

}

public void onMouseEnter(Widget sender) {}

public void onMouseLeave(Widget sender) {}

public void onMouseUp(Widget sender, int x, int y){}

public void onMouseMove(Widget sender, int x, int y){

CustomSVGPanel senderPanel = (CustomSVGPanel)sender;

((CustomSVGCircle)senderPanel.getWidget(1)).setFill(Color.RED);

}

});

if someone knows why it not functions with explorer 6 (with adobe SVG plugin istalled), please tell me!!!!

> i've found an example to give

> event handling to svg widget.

Awesome, glad to see that you got something working. The book is finishing up, so hopefully in another month or so I can get back to doing some coding.

Did you notice that the GWT roadmap included SVG support as a nice to have? It would be nice to see them get involved and throw some manpower into this.

Robert: When I load the following markup into my FireFox 1.5xx browser locally, the SVG code is rendered and the image is displayed. However, when I post it on my website and then download it into the same browser, I get a blank screen. Any suggestions as to what I am doing wrong will be greatly appreciated.

By the way, I replaced all angle brackets by entities so that I could send them via your editor.

Thanks,

Dick Baldwin

<?xml version="1.0" encoding="UTF-8"?>

<!DOCTYPE html PUBLIC "-//W3C//DTD XHTML 1.0 Transitional//EN" "">

<html xmlns="" xml:

<head>

<meta http-

<title>Generated XHTML file</title>

</head>

<body id="body" style="position:absolute; z-index:0;border:1px solid black; left:0%; top:0%;width:90%; height:90%;">

<svg xmlns="" version="1.1" style="width:100%;height:100%;position:absolute;top:0;left:0;z-index:-1;">

<defs>

<linearGradient id="gradientA">

<stop offset="0%" style="stop-color:yellow;"/>

<stop offset="100%" style="stop-color:red;"/>

</linearGradient>

<linearGradient id="gradientB">

<stop offset="0%" style="stop-color:green;"/>

<stop offset="100%" style="stop-color:blue;"/>

</linearGradient>

</defs>

<g>

<ellipse cx="110" cy="100" rx="100" ry="40" style="fill:url(#gradientA);stroke:rgb(0,0,100);stroke-width:2"/>

<circle cx="110" cy="100" r="30" style="fill:url(#gradientB)"/>

</g>

</svg>

</body></html>

PS: I forgot to mention that the file name and extension containing the markup from my previous comment is junk.xhtml

Thanks,

Dick Baldwin

Robert,

Never mind, I found my problem - at least part of it. My real objective was to generate inline SVG code with a servlet to have it rendered on the client. It always seems to happen that as soon as I ask for help, I find the solution. In this case, the solution was to put the following statement in my servlet in place of the one that was there:

res.setContentType("image/svg+xml");

This replaced the following statement:

res.setContentType("text/html");

Thanks again.

Dick Baldwin

Glad to hear it all worked out. The whole extension/mime type issue can be problematic.

If anyone else runs into the same issue you should also check out the Inline SVG page of the SVG Wiki. Some useful tips there,.

Hi Robert

A problem that the attribute "xlink:href" cannot use a relative path of a Image. is found when I use Image tag in SVG.

Hi Robert

It looks like adobe viewer (IE 7 with adobe SVG viewer 3.0 installed) doesn't refresh plugin window if I add and remove SVG element (with Javascript produced by GWT) during runtime.

In contrary firefox mozilla 2.0.0.2 works fine (refreshes SVG ok), but doesn't allow to set attribute (example transform attribute for some SVG element) - this is reported as GWT bug ().

Does anybody experiences similar behaviour?

> In contrary firefox mozilla 2.0.0.2

> works fine (refreshes SVG ok), but

> doesn't allow to set attribute

Take a look at org.gwtwidgets.client.ext.ExtDOM. You might be able to use ExtDom.setAttributeNS(element, "prop", "value"). This is what all of the SVG widgets use internally to set the element attributes.

As for IE... well, I have this feeling that it just isn;t going to pan out. The worst part is that Adobe doesn't even support the plug-in any longer. There is talk about a cross-browser vector API that will use SVG on FF, and VML on IE. I figure it will take a while before we see that though.

Hi Robert!

The W3C has defined the SVG specification and provided some interface. Wyh do not you implement the iterface.

Yonghe, that is indeed a very good question. I believe that when I wrote the SVG code that I did notice the W3C API... and if memory serves (it was 8 months ago), I _think_ that I just didn't like their API. I would need to look at the API again to be sure, but I think that was my thinking at the time.

...And for the record, I really dislike the DOM API as well. It is too verbose for every day use. When O coded Perl I published a module XML::EasyOBJ to make using the DOOM easier, and now that I have moved to Java I tend to use the programmer-friendly JDom.

If you are a standards purist, it is likely that you won't agree with that decision.

Is there any methods to load external SVG file to SVGPanel to display it's content??

Grzegorz, no, not currently.

Hi Robert Hanson!

The SVG standard is really too verbose, but it defines all features for SVG, if you write the SVG implementation without SVG standard, you have to refer to large numbers of document about SVG standard, it is troublesome.

By the way,I am not a standards purist, I am developping an application that depends on the SVG in web and I would like to join your project. Could you accept my application?.'

Yonghe, I am hesitant to accept an application out of the blue. If you want to submit widgets/patches, then I am more than happy to accept, review, add them.

As far as sticking to the SVG spec... if you feel that is the way to go, then maybe you should start your own project and feel free to use whatever parts of my code are useful.

As far as long-term SVG support goes, there is an expectation that the GWT core API will include a generic vector graphics API that will use SVG on FF and VML on IE. I expect though that it will be some time before we see that, and because it is generic, it will likly be missing some of what SVG has to offer.

Scott, regarding...

> svgElement.animationsPaused()

The SVG support in the GWT-WL is far from complete, and I am not sure that it ever will be.

If this is possible at all, and it might not even be supported in IE (I really don't know), then you would need to use JSNI in order to do this..'

Hi Robert!

Could you give me a email that can help me to contact with you frequently.

Sure, IamRobertHanson at gmail.com.

I can't guarantee me responsiveness though. I tend to only respond to my personal email in the mornings, and not during work hours.

did work for me in the hosted browser. I use IE7.

Hi, I was wondering if support had been added for event listeners. Please let me know. Thanks.

No, there isn't support for listeners yet. I have had almost no time to work on the library for a long time due to work, the book, and now a new baby.

But... if someone has already figure out how to accomplish this, code patches are welcome.

It seems that Adobe plugin cannot show svg elements that have been added after initWidget() call.

Does somebody have idea how to overcome this ?

I got an svg file which has the following attributes:

xml:space="preserve" width="271mm" height="195mm" style="shape-rendering:geometricPrecision; text-rendering:geometricPrecision; image-rendering:optimizeQuality; fill-rule:evenodd"

viewBox="0 0 271000 194640"

This means that the paths that are made have such coordinates as: 121628. But the final "image" scales ok so that I don't loose precision. Is there a way, using your API, to set this scale so that I can use the same coordinates (there are *many* of them, and since they relate to a map, I can't simply loose precision)?

Thanks and congrats for your great work.

> Is there a way, using your API,

> to set this scale

No, not currently, but it should be easy to add. All of the source is in the jar file, so I encourage you to try it out for yourself.

As far as seeing this feature in a new release... it could be some time.

<long-story>

Now that I finally have time to work on the GWT-WL again it seems that the next release (1.4) of GWT will break SVG support.

So as of GWT-WL 0.1.4 I have removed SVG support since it won't work anyway. At a later date it is expected that GWT will be able to support SVG again, which is when I will add it back to the GWT-WL.

</long-story>

So... as long as you stick with GWT 1.3 and GWT-WL 0.1.3 you can still use the SVG support as-is. But until SVG works with new versions of GWT I don't plan on extending the SVG support.

Hi Robert, looking at Google Groups it seems it is possible to use svg with gwt 1.4. I still have to give it a try, but I was wondering if you tried to adapt your library..

Cheers,

Vito.

Vito, thanks for pointing out this post. It has been awhile since I looked at the SVG code in the GWT-WL, but I expect that it could work.

I recently upgraded my code to GWT 1.4 and very happily realized that SVG widgets are indeed working with this new GWT version!! So, I was wondering:

"But until SVG works with new versions of GWT I don't plan on extending the SVG support."

Will you now think about extending the SVG widgets to support event listening? I am working on this myself but unfortunately not getting any far..

Any ideas or details on how to accomplish this would be greatly appreciated.

Thanks!

I've started working on adding support to GWT for non-HTML document types, in particular SVG. There are basically two parts to this:

* Adapting GWT bootstrap to work in XML

* Adding a "wrapper" implementation for the org.w3c.dom APIs

The GWT-DOM Google code project has been created for this purpose.

To see a demo, point Firefox 2.x at at this example.

See also this thread in the GWT-contributors forum.

Archie,

I would like to check out your library, especially the svg part. But I haven't really seen any javadoc or example code on how to start with it. Do you think you can provide the code of your demo (or any other sample code) and possibly a javadoc of your library?

Thank you very much for your help..

Sure, just check out the code, it's all there at:

I notice that for SVG to work, or at least inline SVG, I need to send my HTML page with content type "application/xhtml+xml", so that Firefox's XML parser (and SVG parser) picks it up. However, for GWT it seems to be necessary to send content type "text/html", otherwise I get a warning in hosted mode, and no rendering at all in web mode. Does this mean the GWT and (inline) SVG are incompatible? It seems like some you people get it working anyway. Why does GWT not allow for content type "application/xhtml+xml"?

Jeroen,

I have had a falling out with SVG + GWT, so I really haven't been following it, but I *think* that GWT 1.5 (when it comes out) will work ok with XHTML. Don't hold me to that though.

hi

i want to start using svg with gwt. please tell me from where will i get the package for svg support in gwt.

expecting immediate help

Tanzeem

The GWT-WL () had support in 1.3... but then it broke, but some have said that they could get it to work.

You should probably ask on the dev list () to see if anyone is actively using GWT + SVG, and ask them what they are using.

I think SVG+GWT would be quite an interesting subject to the people at the SVG world conference SVG Open. Can you or someone else make it there?

Paper abstract deadline is at the end of this month.

Hope to see you in Nuremberg

Stelt, an interesting idea, but unless they paid my way I wouldn't be able to afford to make the trip. Nuremberg is a bit of a drive from New Jersey, and with gas prices being what they are...

Do you know another good candidate?

Someone that doesn't have to drive across an ocean to get to Nuremberg maybe?

Stelt, sorry, I can't say that I do.

i have to get a vector graphics drawn in the panel and i am getting lot of errors.I also tried JSGraphics from the latest GWT-WL.No effect. Can anyone give me a complete sample program(instead of code snippets) that uses SVGPanel or JSGraphics.

Hi,

I'm using widgets-1.3 with gwt 1.4 to draw svg objects and it seems to work fine in gwt hosted mode.

I added eventlisteners to the SWGWidget object(thanks guido) and it seems to work fine in hosted mode as well.

This was really helpful. Thank you Robert !

I have your Draw Sample example running in GWT-shell if you're still interested.

I would like to see the draw sample. But i got a solution using a gwt dojo wrapper called tatami.I found it much efficient than the SVG option . Thanks

Thanks cheapwhyskey (nice nick), but I don't think that I have the time to properly maintain it. I would very much like to see someone else take up the torch.

BTW - It may be of interest that there is a new Canvas widget in GWT-WL 0.2.0. It uses the <canvas> element in non-IE browsers and VML in IE. Using <canvas> is a *lot* faster than SVG (or VML for that matter).

Hi,

I am using GWT and want to use SVG. Please guide the example code posted above on the page, how can i use it step by step.

Please guide, i need solution urgently!

/Danish

AA, this post is over two years old, and GWT has changed in significant ways.

I had been experimenting with GWT + SVG but abandoned it for several reasons. One being that there are no supported plugins for IE, and second because Canvas is a LOT faster if you use FF or Safari.

You should post your question on the dev list to see if anyone has any success stories to share.

Thanks. Actually, I want to use some simplest way to use SVG in the GWT. I hope you would be having a lots of information in this regard. I can not find much on it. Please share some links etc. I will be thankful to you!

/Danish

Search for SVG on the dev list, that is your best bet.

Hi, Its hard to read the code as I have problems with identifying colors.

I have a question:

To do this tutorial of Robert Hanson it is necessary a jar? here I did not find the jar to include thank you for help.

BOUKHARY.

Hi

I look for the jar that allows doing this tutoriel (SVG + GWT) for I do not find the link thank you.

Kazebliz

Baukhary, you can get all of the older versions of GWT-WL here.

Note that the early versions only worked with GWT 1.3 (or older). SVG support was removed in version 0.1.4, so use 0.1.3 or earlier.

Is it possible to run GWT-Widgets 0.1.3 with GWT 1.7?

I'm getting some errors when compiling althougth it finish compilation by removing some unnecessary items.

Is the svg picture displayed in hostmode debugging?

Thanks in advance... i've been battling serveral days ;)

Jlanza, nope, it won't work.

There is a project I just found out about that may be of interest,. It is JS, not GWT, but supposedly has cross-browser SVG support. Maybe it would be possible to create a quick wrapper around it so that it can be used in GWT.

Hi robert,

I will take a look at it.

BTW I have taken your code from 1.3 a modified it a little to fit the group, and other svg items. Now I'm working with the image, but I don't manage to get it displayed.

I get the rectangles,... and the image in the xml code of the page, but it is not displayed.

jlanza, glad to hear it is being used. I wish I could help with the images, but I don't have any ideas, it has been a very long time since I looked at that code.

Hi Robert! What do you think about using GWT generated javascript for manipulating not HTML DOM, but SVG DOM? I would like to animate existing HTML-embedded SVG images by javascript generated from GWT. Just something "like Flash". SVG can be manipulated by java-jar, but this is not fully supported in contrast with javascript SVG manipulation, which is widely supported. I don't know the resulting speed of this solution, but it may be depends on browser SVG DOM and javascript implemetation?

Well, IE doesn't support SVG, so that will be an issue, and the speed is generally slow.

If you were to go through with this, I suggest leveraging the work of others. You should be able to take, and wrap it in GWT classes. The SVGWeb team has been wanting to do this anyway, so maybe you could help them.

Hi,

I am trying to run the gwt-examples-draw-1.0 for the first time and after seeing some text with a broken image box, I found my way to the README.txt file.

The major issue I am having is that the domain and there for web site;

is no longer up. Does anyone have any clue where I can get the required javascript library?

TIA,

Scott (adligo.com)

Huh, too bad that site is down. I searched for Walter Zorn on Google, and didn't find a site for his stuff anywhere.

Anyway, I have a demo using JSGraphics at, which uses the source file from.

Unfortunately I don't have any of the JSGraphics docs, but they were likely archived by the Way Back Machine (). If you search for there, you should be able to find the documentation. (I would send a direct link, but archive.org is excessively slow this morning) | http://blog.mental.ninja/2006/06/coding-svg-with-gwt.html?showComment=1152154620000 | CC-MAIN-2019-18 | refinedweb | 5,207 | 74.69 |

vikramca06

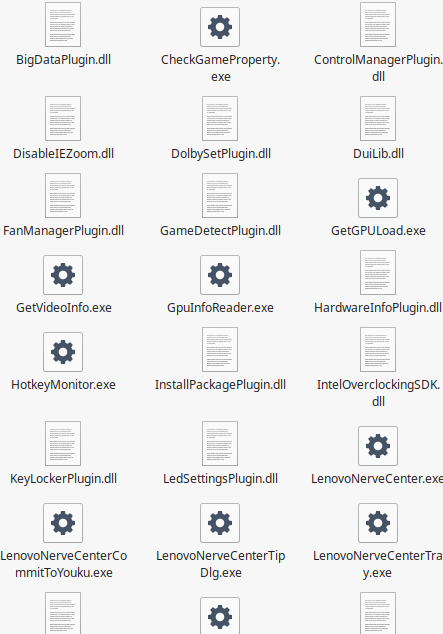

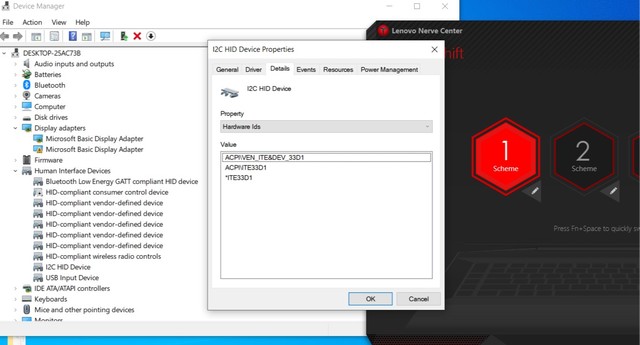

10-05-2017

Hi,

I want to know how "prism:expirationDate" property works for Asset deactivation.

What is the difference between asset "offTime" and "prism:expirationDate"?

Regards,

Vikram.

MC_Stuff

Hi Vikram,

PRISM Namespace is consolidate all rights related elements within a single namespace. AFAIK oob does not use it & may be as part of meta data extraction it might exist.

Offtime just adds attribute as properties to the content & when you activate content will be available in publish. When Off time matches or greater than current time , AEM OSGI Services consider that content as dead and if you try to access using HTTP, AEM would return 404 error but content will still leave in publish instance. if you don;t want content to stay then need to deactivate.

Thanks,

sneh1

Expiry date is used for rights management purpose. If an asset reaches expiry date, only restricted action actions can be performed on it by non admin users.

Complete documentation available @... | https://experienceleaguecommunities.adobe.com/t5/adobe-experience-manager-assets/what-is-the-use-of-quot-prism-expirationdate-quot-and-how-does/qaq-p/208578 | CC-MAIN-2021-17 | refinedweb | 161 | 55.64 |

How to search contents of RTF files using Smart Search Štěpán Kozák — Jul 10, 2014 smart search As I mentioned in my Kentico 8 preview article about Smart Search quite a while ago, Kentico 8 comes with full support for indexing the content of attachment files. The list of support files is quite extensive, however there are still some common files missing. In this article I’ll add support for RTF files which is not among files supported out of the box. To be able to add support for indexing the content of RTF files, first we need to know how attachment content indexing works behind the scenes. The indexing is provided by so called “search text extractors”. The default ones are all in the CMS.Search.TextExtractors namespace. If you configure your Smart Search index to also index attachment content and rebuild the index, then the indexer goes through each attachment it finds attached to the document it’s indexing and the following occurs: The indexer looks at the extension of the file—if the extension is not among those supported it does nothing. If the extension is among the supported files, it gets the binary data of the file and passes it on to the corresponding text extractor registered in the system for this file type. The text extractor takes the file binary data, interprets it, and returns a pure text representation of the file back to the indexer. The indexer caches the extracted text to the AttachmentSearchContent column of attachment in the DB so next time the content is needed it does not have to be extracted (as this can be quite a resource-demanding process). If the attachment is updated, Kentico makes sure the cached value in AttachmentSearchContent is cleared so the search process can call the extractor again to get fresh data. Now understanding how indexing files for content works, we can change our goal from “Adding support for RTF files to Smart Search” to the more technical and more specific “Creating RTF text search extractors”. In this article you’ll learn two things: How to create a custom text extractor to Smart Search How to create a custom module recognized by Kentico just by copying its dll to bin folder. Let’s start with the extractor class itself. The development process is very easy; you just create your custom class (let’s name it RtfTextExtractor) and implement the interface IsearchTextExtractor. This forces you to implement the method ExtractContent, which turns the binary data of the given physical file to its text representation. Just one note about why this method does not return string but an XmlData object: it’s because you may want to index parts of your physical file to different fields of your smart search index document. For example, we may want to extract the metadata of the RTF file, such as author, and store it to the field “Author”, while the rest (the actual contents of the RTF file) might go to the default content field. In this example we will ignore the metadata of the RTF file and just index its contents. To implement the extraction, we’ll use the RichTextBox control as recommended on MSDN, so our extractor will look like this: The next thing we need to ensure is to let Kentico know about the extractor so it can use it for extracting content from the RTF files. My goal is to create a reusable library that I can quickly copy to any of my Kentico instances bin folder whenever I need this instance to be able to index RTF files. To do that, I will create a Kentico module library. This is done by creating a standard c# class library project, referencing required dlls from the Kentico 8 instance, and then adding a special Module class and one assembly attribute to the AssemblyInfo.cs class. The module class can have an arbitrary name. The important thing is that you inherit from a CMS.DataEngine.Module class and provide a parameter-less constructor by inheriting from the default constructor and specifying the module name. The inheritance from Module class provides you with a OnPreInit method where you can register your new extractor. This method is guaranteed to be called at application start, so that’s what we need. The AssemblyDiscoverable attribute in AssembylInfo.cs class then ensures Kentico will load this assembly on application init, look for all the modules in this assembly, and call their OnPreInit methods on application start. It all should look like this: Now we are ready with development. It’s enough to rebuild the project to get the dll and copy it to bin folder of your Kentico instance. Or if you develop in the solution of your Kentico instance, just add a reference to the RTF extractor project to your web project. This will cause an application restart and if you look in Event log application at an Application_Start event, you should see our module among the modules initialized. Henceforth, your Kentico instance supports the indexing of RTF files! Wasn't that easy? :-) You can download the complete code of this library along with compiled dlls from our Marketplace. PS: Just a quick tip at the end. If you would like to have search functionality over the contents of your media library files, there is nothing easier than creating a custom search index. This will call the extractors API to get the contents of the media files, as this API is public. So to get the text contents of a Word file you’d just call this line of Kentico API: var xmlData = new CMS.Search.TextExtractors.DocxSearchTextExtractor(...); Did you know there was such an API in Kentico 8? :-) Share this article on Twitter Facebook LinkedIn Google+ Štěpán Kozák Comments | https://devnet.kentico.com/articles/how-to-search-contents-of-rtf-files-using-smart-search | CC-MAIN-2018-13 | refinedweb | 968 | 57.61 |

The Jupyter Notebook has a feature known as widgets. If you have ever created a desktop user interface, you may already know and understand the concept of widgets. They are basically the controls that make up the user interface. In your Jupyter Notebook you can create sliders, buttons, text boxes and much more.

We will learn the basics of creating widgets in this chapter. If you would like to see some pre-made widgets, you can go to the following URL:

These widgets are Notebook extensions that can be installed in the same way that we learned about in my Jupyter extensions article. They are really interesting and well worth your time if you’d like to study how more complex widgets work by looking at their source code.

Getting Started

To create your own widgets, you will need to install the ipywidgets extension.

Installing with pip

Here is how you would install the widget extension with pip:

pip install ipywidgets jupyter nbextension enable --py widgetsnbextension

If you are using virtualenv, you may need to use the

--sys-prefix option to keep your environment isolated.

Installing with conda

Here is how you would install the widgets extension with conda:

conda install -c conda-forge ipywidgets

Note that when installing with conda, the extension will be automatically enabled.

Learning How to Interact

There are a number of methods for creating widgets in Jupyter Notebook. The first and easiest method is by using the interact function from ipywidgets.interact which will automatically generate user interface controls (or widgets) that you can then use to explore your code and interact with data.

Let's start out by creating a simple slider. Start up a new Jupyter Notebook and enter the following code into the first cell:

from ipywidgets import interact def my_function(x): return x # create a slider interact(my_function, x=20)

Here we import the interact class from ipywidgets. Then we create a simple function called my_function that accepts a single argument and then returns it. Finally we instantiate interact by passing it a function along with the value that we want interact to pass to it. Since we passed in an integer (i.e. 20), the interact class will automatically create a slider.

Try running the cell that contains the code above and you should end up with something that looks like this:

That was pretty neat! Try moving the slider around with your mouse. If you do, you will see that the slider updates interactively and the output from the function is also automatically updated.

You can also create a FloatSlider by passing in floating point numbers instead of integers. Give that a try to see how it changes the slider.

Checkboxes

Once you are done playing with the slider, let's find out what else we can do with interact. Add a new cell in the same Jupyter Notebook with the following code:

interact(my_function, x=True)

When you run this code you will discover that interact has created a checkbox for you. Since you set "x" to **True**, the checkbox is checked. This is what it looked like on my machine:

You can play around with this widget as well by just checking and un-checking the checkbox. You will see its state change and the output from the function call will also get printed on-screen.

Textboxes

Let's change things up a bit and try passing a string to our function. Create a new cell and enter the following code:

interact(my_function, x='Jupyter Notebook!')

When you run this code, you will find that interact generates a textbox with the string we passed in as its value:

Try editing the textbox's value. When I tried doing that, I saw that the output text also changed.

Comboboxes / Drop-downs

You can also create a combobox or drop-down widget by passing a list or a dictionary to your function in interact. Let's try passing in a list of tuples and see how that behaves. Go back to your Notebook and enter the following code into a new cell:

languages = [('Python', 'Rocks!'), ('C++', 'is hard!')] interact(my_function, x=languages)

When you run this code, you should see "Python" and "C++" as items in the combobox. If you select one, the Notebook will display the second element of the tuple to the screen. Here is how my mine rendered when I ran this example:

If you'd like to try out a dictionary instead of a list, here is an example:

languages = {'Python': 'Rocks!', 'C++': 'is hard!'} interact(my_function, x=languages)

The output of running this cell is very similar to the previous example.

More About Sliders

Let's back up a minute so we can talk a bit more about sliders. We can actually do a bit more with them than I had originally let on. When you first created a slider, all you needed to do was pass our function an integer. Here's the code again for a refresher:

from ipywidgets import interact def my_function(x): return x # create a slider interact(my_function, x=20)

The value, 20, here is technically an abbreviation for creating an integer-valued slider. The code:

interact(my_function, x=20)

is actually the equivalent of the following:

interact(my_function, x=widgets.IntSlider(value=20))

Technically, Bools are an abbreviation for Checkboxes, lists / dicts are abbreviations for Comboboxes, etc.

Anyway, back to sliders again. There are actually two other ways to create integer-valued sliders. You can also pass in a tuple of two or three items:

- (min, max)

- (min, max, step)

This allows us to make the slider more useful as now we get to control the min and max values of the slider as well as set the step. The step is the amount of change to the slider when we change it. If you want to set an initial value, then you need to change your code to be like this:

def my_function(x=5): return x interact(my_function, x=(0, 20, 5))

The x=5 in the function is what sets the initial value. I personally found that a little counter-intuitive as the IntSlider itself appears to be defined to work like this:

IntSlider(min, max, step, value)

The interact class does not instantiate IntSlider the same way that you would if you were creating one yourself.

Note that if you want to create a FloatSlider, all you need to do is pass in a float to any of the three arguments: min, max or step. Setting the function's argument to a float will not change the slider to a FloatSlider.

Using interact as a decorator

The interact class can also be used as a Python decorator. For this example, we will also add a second argument to our function. Go back to your running Notebook and add a new cell with the following code:

from ipywidgets import interact @interact(x=5, y='Python') def my_function(x, y): return (x, y)

You will note that in this example, we do not need to pass in the function name explicitly. In fact, if you did so, you would see an error raised. Decorators call functions implicitly. The other item of note here is that we are passing in two arguments instead of one: an integer and a string. As you might have guessed, this will create a slider and a text box respectively:

As with all the previous examples, you can interact with these widgets in the browser and see their outputs.

Fixed Arguments

There are many times where you will want to set one of the arguments to a set or fixed value rather than allowing it to be manipulated through a widget. The Jupyter Notebook ipywidgets package supports this via the fixed function. Let's take a look at how you can use it:

from ipywidgets import interact, fixed @interact(x=5, y=fixed('Python')) def my_function(x, y): return (x, y)

Here we import the fixed function from ipywidgets. Then in our interact decorator, we set the second argument as "fixed". When you run this code, you will find that it only creates a single widget: a slider. That is because we don't want or need a widget to manipulate the second argument.

In this screenshot, you can see that we have just the one slider and the output is a tuple. If you change the slider's value, you will see just the first value in the tuple change.

The interactive function

There is also a second function that is worth covering in this chapter that is called interactive. This function is useful during those times when you want to reuse widgets or access the data that is bound to said widgets. The biggest difference between interactive and interact is that with interactive, the widgets are not displayed on-screen automatically. If you want the widget to be shown, then you need to do so explicitly.

Let's take a look at a simple example. Open up a new cell in your Jupyter Notebook and enter the following code:

from ipywidgets import interactive def my_function(x): return x widget = interactive(my_function, x=5) type(widget)

When you run this code, you should see the following output:

ipywidgets.widgets.interaction.interactive

But you won't see a slider widget like you did had you used the interact function. Just to demonstrate, here is a screenshot of what I got when I ran the cell:

If you'd like the widget to be shown, you need to import the display function. Let's update the code in the cell to be the following:

from ipywidgets import interactive from IPython.display import display def my_function(x): return x widget = interactive(my_function, x=5) display(widget)

Here we import the display function from IPython.display and then we call it at the end of the code. When I ran this cell, I got the slider widget:

Why is this helpful? Why wouldn't you just use interact instead of jumping through extra hoops? Well the answer is that the interactive function gives you additional information that interact does not. You can access the widget's keyword arguments and its result. Add the following two lines to the end of the cell that you just edited:

print(widget.kwargs) print(widget.result)

Now when you run the cell, it will print out the arguments that were passed to the function and the return value (i.e. result) of calling the function.

Wrapping Up

We learned a lot about Jupyter Notebook widgets in this chapter. We covered the basics of using the `interact` function as well as the `interactive` function. However there is more that you can about these functions by checking out the documentation.

Even this is just scratching the surface of what you can do with widgets in Jupyter. In the next chapter we will dig into creating widgets by hand outside of using the interact / interactive functions we learned about this in this chapter. We will learn much more about how widgets work and how you can use them to make your Notebooks much more interesting and potentially much more powerful.

Related Reading

- Jupyter Notebook Extension Basics

- Creating Presentations with Jupyter Notebook | https://www.blog.pythonlibrary.org/2018/10/23/creating-jupyter-notebook-widgets-with-interact/ | CC-MAIN-2020-10 | refinedweb | 1,879 | 60.95 |

).

De-randomization of the data

One of the missing things on the last chapter was the frame data de-randomization. The data inside the frame (excluding the sync word) is randomized by a generator polynomial. This is done because of few things:

- Pseudo-random Symbols distribute better the energy in the spectrum

- Avoids “line-polarization” effect when sending a continuous stream of 1’s

- Better Clock Recovery due more changes in symbol polarity

CCSDS has a standard Polynomial as well, and the image below shows how to generate the pseudo-random bistream:

CCSDS Pseudo-random Bitstream Generator

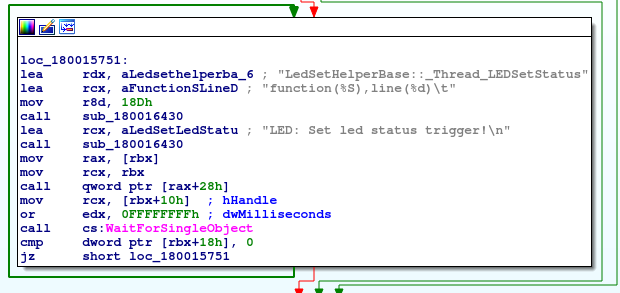

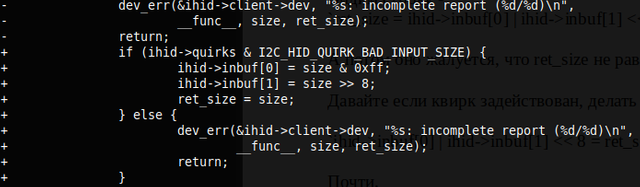

The PN Generator polynomial (as shown in the LRIT spec) is x^8 + x^7 + x^5 + x^3 + 1. You can check several PN Sequence Generators on the internet, but since the repeating period of this PN is 255 bytes and we’re xor’ing with our bytestream I prefer to make a lookup table with all the 255 byte sequence and then just xor (instead generating and xor). Here is the 255 byte PN:

char pn[255] = { 0xff, 0x48, 0x0e, 0xc0, 0x9a, 0x0d, 0x70, 0xbc, 0x8e, 0x2c, 0x93, 0xad, 0xa7, 0xb7, 0x46, 0xce, 0x5a, 0x97, 0x7d, 0xcc, 0x32, 0xa2, 0xbf, 0x3e, 0x0a, 0x10, 0xf1, 0x88, 0x94, 0xcd, 0xea, 0xb1, 0xfe, 0x90, 0x1d, 0x81, 0x34, 0x1a, 0xe1, 0x79, 0x1c, 0x59, 0x27, 0x5b, 0x4f, 0x6e, 0x8d, 0x9c, 0xb5, 0x2e, 0xfb, 0x98, 0x65, 0x45, 0x7e, 0x7c, 0x14, 0x21, 0xe3, 0x11, 0x29, 0x9b, 0xd5, 0x63, 0xfd, 0x20, 0x3b, 0x02, 0x68, 0x35, 0xc2, 0xf2, 0x38, 0xb2, 0x4e, 0xb6, 0x9e, 0xdd, 0x1b, 0x39, 0x6a, 0x5d, 0xf7, 0x30, 0xca, 0x8a, 0xfc, 0xf8, 0x28, 0x43, 0xc6, 0x22, 0x53, 0x37, 0xaa, 0xc7, 0xfa, 0x40, 0x76, 0x04, 0xd0, 0x6b, 0x85, 0xe4, 0x71, 0x64, 0x9d, 0x6d, 0x3d, 0xba, 0x36, 0x72, 0xd4, 0xbb, 0xee, 0x61, 0x95, 0x15, 0xf9, 0xf0, 0x50, 0x87, 0x8c, 0x44, 0xa6, 0x6f, 0x55, 0x8f, 0xf4, 0x80, 0xec, 0x09, 0xa0, 0xd7, 0x0b, 0xc8, 0xe2, 0xc9, 0x3a, 0xda, 0x7b, 0x74, 0x6c, 0xe5, 0xa9, 0x77, 0xdc, 0xc3, 0x2a, 0x2b, 0xf3, 0xe0, 0xa1, 0x0f, 0x18, 0x89, 0x4c, 0xde, 0xab, 0x1f, 0xe9, 0x01, 0xd8, 0x13, 0x41, 0xae, 0x17, 0x91, 0xc5, 0x92, 0x75, 0xb4, 0xf6, 0xe8, 0xd9, 0xcb, 0x52, 0xef, 0xb9, 0x86, 0x54, 0x57, 0xe7, 0xc1, 0x42, 0x1e, 0x31, 0x12, 0x99, 0xbd, 0x56, 0x3f, 0xd2, 0x03, 0xb0, 0x26, 0x83, 0x5c, 0x2f, 0x23, 0x8b, 0x24, 0xeb, 0x69, 0xed, 0xd1, 0xb3, 0x96, 0xa5, 0xdf, 0x73, 0x0c, 0xa8, 0xaf, 0xcf, 0x82, 0x84, 0x3c, 0x62, 0x25, 0x33, 0x7a, 0xac, 0x7f, 0xa4, 0x07, 0x60, 0x4d, 0x06, 0xb8, 0x5e, 0x47, 0x16, 0x49, 0xd6, 0xd3, 0xdb, 0xa3, 0x67, 0x2d, 0x4b, 0xbe, 0xe6, 0x19, 0x51, 0x5f, 0x9f, 0x05, 0x08, 0x78, 0xc4, 0x4a, 0x66, 0xf5, 0x58 };

And for de-randomization just xor’it with the frame (excluding the 4 byte sync word):

for (int i=0; i<1020; i++) { decodedData[i] ^= pn[i%255]; }

Now you should have the de-randomized frame.

Reed Solomon Error Correction

Other of the things that were missing on the last part is the Data Error Correction. We already did the Foward Error Correction (FEC, the viterbi), but we also can do Reed Solomon. Notice that Reed Solomon is completely optional if you have good SNR (that is better than 9dB and viterbi less than 50 BER) since ReedSolomon doesn’t alter the data. I prefer to use RS because I don’t have a perfect signal (although my average RS corrections are 0) and I want my packet data to be consistent. The RS doesn’t usually add to much overhead, so its not big deal to use. Also the libfec provides a RS algorithm for the CCSDS standard.

I will assume you have a uint8_t buffer with a frame data of 1020 bytes (that is, the data we got in the last chapter with the sync word excluded). The CCSDS standard RS uses 255,223 as the parameters. That means that each RS Frame has 255 bytes which 223 bytes are data and 32 bytes are parity. With this specs, we can correct any 16 bytes in our 223 byte of data. In our LRIT Frame we have 4 RS Frames, but the structure are not linear. Since the Viterbi uses a Trellis diagram, the error in Trellis path can generate a sequence of bad bytes in the stream. So if we had a linear sequence of RS Frames, we could corrupt a lot of bytes from one frame and lose one of the RS Frames (that means that we lose the entire LRIT frame). So the data is interleaved by byte. The image below shows how the data is spread over the lrit frame.

For correcting the data, we need to de-interleave to generate the four RS Frames, run the RS algorithm and then interleave again to have the frame data. The [de]interleaving process are very simple. You can use these functions to do that:

#define PARITY_OFFSET 892 void deinterleaveRS(uint8_t *data, uint8_t *rsbuff, uint8_t pos, uint8_t I) { // Copy data for (int i=0; i<223; i++) { rsbuff[i] = data[i*I + pos]; } // Copy parity for (int i=0; i<32; i++) { rsbuff[i+223] = data[PARITY_OFFSET + i*I + pos]; } } void interleaveRS(uint8_t *idata, uint8_t *outbuff, uint8_t pos, uint8_t I) { // Copy data for (int i=0; i<223; i++) { outbuff[i*I + pos] = idata[i]; } // Copy parity - Not needed here, but I do. for (int i=0; i<32; i++) { outbuff[PARITY_OFFSET + i*I + pos] = idata[i+223]; } }

For using it on LRIT frame we can do:

#define RSBLOCKS 4 int derrors[4] = { 0, 0, 0, 0 }; uint8_t rsWorkBuffer[255]; uint8_t rsCorrectedData[1020]; for (int i=0; i<RSBLOCKS; i++) { deinterleaveRS(decodedData, rsWorkBuffer, i, RSBLOCKS); derrors[i] = decode_rs_ccsds(rsWorkBuffer, NULL, 0, 0); interleaveRS(rsWorkBuffer, rsCorrectedData, i, RSBLOCKS); }

In the variable derrors we will have how many bytes it was corrected for each RS Frames. In rsCorrectedData we will have the error corrected output. The value -1 in derrors it means the data is corrupted beyond correction (or the parity is corrupted beyond correction). I usually drop the entire frame if all derrors are -1, but keep in mind that the corruption can happen in the parity only (we can have corrupted bytes in parity that will lead to -1 in error correction) so it would be wise to not do like I did. After that we will have the corrected LRIT Frame that is 892 bytes wide.

Virtual Channel Demuxer

Now we will demux the Virtual Channels. I current save all virtual channel payload (the 892 bytes) to a file called channel_ID.bin then I post process with a python script to separate the channel packets. Parsing the virtual channel header has also some advantages now that we can see if for some reason we skipped a frame of the channel, and also to discard the empty frames (I will talk about it later).

VCDU Header

Fields:

- Version Number – The Version of the Frame Data

- S/C ID – Satellite ID

- VC ID – Virtual Channel ID

- Counter – Packet Counter (relative to the channel)

- Replay Flag – Is 1 if the frame is being sent again.

- Spare – Not used.

Basically we will only use 2 values from the header: VCID and Counter.

uint32_t swapEndianess(uint32_t num) { return ((num>>24)&0xff) | ((num<<8)&0xff0000) | ((num>>8)&0xff00) | ((num<<24)&0xff000000); } (...) uint8_t vcid = (*(rsCorrectedData+1)) & 0x3F; // Packet Counter from Packet uint32_t counter = *((uint32_t *) (rsCorrectedData+2)); counter = swapEndianess(counter); counter &= 0xFFFFFF00; counter = counter >> 8;

I usually save the last counter value and compare with the current one to see if I lost any frame. Just be carefull that the counter value is per channel ID (VCID). I actually never got any VCID higher than 63, so I store the counter in a 256 int32_t array.

One last thing I do in the C code is to discard any frame that has 63 as VCID. The VCID 63 only contains Fill Packets, that is used for keeping the satellite signal continuous, even when not sending anything. The payload of the frame will always contain the same sequence (that can be sequence of 0, 1 or 01).

Packet Demuxer

Having our virtual channels demuxed for files channel_ID.bin, we can do the packet demuxer. I did the packet demuxer in python because of the easy of use. I plan to rewrite in C as well, but I will explain using python code.

Channel Data

Each channel Data can contain one or more packets. If the Channel contains and packet end and another start, the First Header Pointer (the 11 bits from the header) will contain the address for the first header inside the packet zone.

First thing we need to do is read one frame from a channel_ID.bin file, that is, 892 bytes (6 bytes header + 886 bytes data). We can safely ignore the 6 bytes header from VCDU now since we won’t have any usefulness for this part of the program. The spare 5 bits in the start we can ignore, and we should get the FHP value to know if we have a packet start in the current frame. If we don’t, and there is no pending packet to append data, we just ignore this frame and go to the next one. The FHP value will be 2047 (all 1’s) when the current frame only contains data related to a previous packet (no header). If the value is different than 2047 then we have a header. So let’s handle this:

data = data[6:] # Strip channel header fhp = struct.unpack(">H", data[:2])[0] & 0x7FF data = data[2:] # Strip M_PDU Header #data is now TP_PDU if not fhp == 2047: # Frame Contains a new Packet # handle new header

So let’s talk first about handling a new packet. Here is the structure of a packet:

Packet Structure (CP_PDU)

We have a 6 byte header containing some useful info, and a user data that can vary from 1 byte to 8192 bytes. So this packet can span across several frames and we need to handle it. Also there is another tricky thing here: Even the packet header can be split across two frames (the 6 first bytes can be at two frames) so we need to handle that we might not have enough data to even check the packet header. I created a function called CreatePacket that receives a buffer parameter that can or not have enough data for creating a packet. It will return a tuple that contains the APID for the packet (or -1 if buffer doesn’t have at least 6 bytes) and a buffer that contains any unused data for the packet (for example if there was more than one packet in the buffer). We also have a function called ParseMSDU that will receive a buffer that contains at least 6 bytes and return a tuple with the MSDU (packet) header decomposed. There is also a SavePacket function that will receive the channelId (VCID) and a object to save the data to a packet file. I will talk about the SavePacket later.

import struct SEQUENCE_FLAG_MAP = { 0: "Continued Segment", 1: "First Segment", 2: "Last Segment", 3: "Single Data" } pendingpackets = {} def ParseMSDU(data): o = struct.unpack(">H", data[:2])[0] version = (o & 0xE000) >> 13 type = (o & 0x1000) >> 12 shf = (o & 0x800) >> 11 apid = (o & 0x7FF) o = struct.unpack(">H", data[2:4])[0] sequenceflag = (o & 0xC000) >> 14 packetnumber = (o & 0x3FFF) packetlength = struct.unpack(">H", data[4:6])[0] -1 data = data[6:] return version, type, shf, apid, sequenceflag, packetnumber, packetlength, data def CreatePacket(data): while True: if len(data) < 6: return -1, data version, type, shf, apid, sequenceflag, packetnumber, packetlength, data = ParseMSDU(data) pdata = data[:packetlength+2] if apid != 2047: pendingpackets[apid] = { "data": pdata, "version": version, "type": type, "apid": apid, "sequenceflag": SEQUENCE_FLAG_MAP[sequenceflag], "sequenceflag_int": sequenceflag, "packetnumber": packetnumber, "framesdropped": False, "size": packetlength } print "- Creating packet %s Size: %s - %s" % (apid, packetlength, SEQUENCE_FLAG_MAP[sequenceflag]) else: apid = -1 if not packetlength+2 == len(data) and packetlength+2 < len(data): # Multiple packets in buffer SavePacket(sys.argv[1], pendingpackets[apid]) del pendingpackets[apid] data = data[packetlength+2:] apid = -1 print " Multiple packets in same buffer. Repeating." else: break return apid, ""

With that we create a dictionary called pendingpackets that will store APID as the key, and another dictionary with the packet data, including a field called data that we will append data from other frames until we fill the whole packet. Back to our read function, we will have something like this:

... if not fhp == 2047: # Frame Contains a new Packet # Data was incomplete on last FHP and another packet starts here. # basically we have a buffer with data, but without an active packet # this can happen if the header was split between two frames if lastAPID == -1 and len(buff) > 0: print " Data was incomplete from last FHP. Parsing packet now" if fhp > 0: # If our First Header Pointer is bigger than 0, we still have # some data to add. buff += data[:fhp] lastAPID, data = CreatePacket(buff) if lastAPID == -1: buff = data else: buff = "" if not lastAPID == -1: # We are finishing another packet if fhp > 0: # Append the data to the last packet pendingpackets[lastAPID]["data"] += data[:fhp] # Since we have a FHP here, the packet has ended. SavePacket(sys.argv[1], pendingpackets[lastAPID]) del pendingpackets[lastAPID] # Erase the last packet data lastAPID = -1 # Try to create a new packet buff += data[fhp:] lastAPID, data = CreatePacket(buff) if lastAPID == -1: buff = data else: buff = ""

This should handle all frames that has a new header. But maybe the packet is so big that we got frames without any header (continuation packets). In this case the FHP will be 2047, and basically we have three things that can lead to that:

- The header was split between last frame end, and the current frame. FHP will be 2047 and after we append to our buffer we will have a full header to start a packet

- We just need to append the data to last packet.

- We lost some frame (or we just started) and we got a continuation packet. So we drop it.

... else: if len(buff) > 0 and lastAPID == -1: # Split Header print " Data was incomplete from last FHP. Parsing packet now" buff += data lastAPID, data = CreatePacket(buff) if lastAPID == -1: buff = data else: buff = "" elif len(buff)> 0: # This shouldn't happen, but I put a warn here if it does print " PROBLEM!" elif lastAPID == -1: # We don't have any pending packets, and we received # a continuation packet, so we drop. pass else: # We have a last packet, so we append the data. print " Appending %s bytes to %s" % (lastAPID, len(data)) pendingpackets[lastAPID]["data"] += data

Now let’s talk about the SavePacket function. I will describe some of the stuff here, but there will be also something described on the next chapter. Since the packet data can be compressed, we will need to check if the data is compressed, and if it is, we need to decompress. In this part we will not handle the decompression or the file assembler (that will need decompression).

Saving the Raw Packet

Now that we have the handler for the demuxing, we will implement the function SavePacket. It will receive two arguments, the channel id and a packetdict. The channel id will be used for saving the packets in the correct folder (separating them from other channel packets). We may have also a Fill Packet here, that has an APID of 2047. We should drop the data if the apid is 2047. Usually the fill packets are only used to increase the likely hood of the header of packet starts on the start of channel data. So it “fills” the channel data to get the header in the next packet. It does not happen very often though.

In the last step we assembled a packet dict with this structure:

{ "data": pdata, "version": version, "type": type, "apid": apid, "sequenceflag": SEQUENCE_FLAG_MAP[sequenceflag], "sequenceflag_int": sequenceflag, "packetnumber": packetnumber, "framesdropped": False, "size": packetlength }

The data field have the data we need to save, the type says the type of packet (and also if its compressed), the sequenceflag says if the packet is:

- 0 => Continued Segment, if this packet belongs to a file that has been already started.

- 1 => First Segment, if this packet contains the start of the file

- 2 => Last Segment, if this packet contains the end of the file

- 3 => Single Data, if this packet contains the whole file

It also contains a packetnumber that we can use to check if we skip any packet (or lose).

The size parameter is the length of data field – 2 bytes. The two last bytes is the CRC of the packet. The CCSDS only specify the polynomial for the CRC, CRC-CCITT standard. I made a very small function based on a few C functions I found over the internet:

def CalcCRC(data): lsb = 0xFF msb = 0xFF for c in data: x = ord(c) ^ msb x ^= (x >> 4) msb = (lsb ^ (x >> 3) ^ (x << 4)) & 255 lsb = (x ^ (x << 5)) & 255 return (msb << 8) + lsb def CheckCRC(data, crc): c = CalcCRC(data) if not c == crc: print " Expected: %s Found %s" %(hex(crc), hex(c)) return c == crc

On SavePacket function we should check the CRC to see if any data was corrupted or if we did any mistake. So we just check the CRC and then save the packet to a file (at least for now):

EXPORTCORRUPT = False def SavePacket(channelid, packet): global totalCRCErrors global totalSavedPackets global tsize global isCompressed global pixels global startnum global endnum try: os.mkdir("channels/%s" %channelid) except: pass if packet["apid"] == 2047: print " Fill Packet. Skipping" return datasize = len(packet["data"]) if not datasize - 2 == packet["size"]: # CRC is the latest 2 bytes of the payload print " WARNING: Packet Size does not match! Expected %s Found: %s" %(packet["size"], len(packet["data"])) if datasize - 2 > packet["size"]: datasize = packet["size"] + 2 print " WARNING: Trimming data to %s" % datasize data = packet["data"][:datasize-2] if packet["sequenceflag_int"] == 1: print "Starting packet %s_%s_%s.lrit" % (packet["apid"], packet["version"], packet["packetnumber"]) startnum = packet["packetnumber"] if packet["framesdropped"]: print " WARNING: Some frames has been droped for this packet." filename = "channels/%s/%s_%s_%s.lrit" % (channelid, packet["apid"], packet["version"], packet["packetnumber"]) print "- Saving packet to %s" %filename crc = packet["data"][datasize-2:datasize] if len(crc) == 2: crc = struct.unpack(">H", crc)[0] crc = CheckCRC(data, crc) else: crc = False if not crc: print " WARNING: CRC does not match!" totalCRCErrors += 1 if crc or (EXPORTCORRUPT and not crc): f = open(filename, "wb") f.write(data) f.close() totalSavedPackets += 1 else: print " Corrupted frame, skipping..."

With that you should be able to see a lot of files being out of your channel, each one being a packet. If you get the first packet (with the sequenceflag = 1), you will also have the Transport Layer header that contains the decompressed file size, and file number. We will handle the decompression and lrit file composition in next chapter. You can check the final code here: | https://www.teske.net.br/lucas/2016/11/goes-satellite-hunt-part-4-packet-demuxer/ | CC-MAIN-2019-18 | refinedweb | 3,158 | 62.92 |

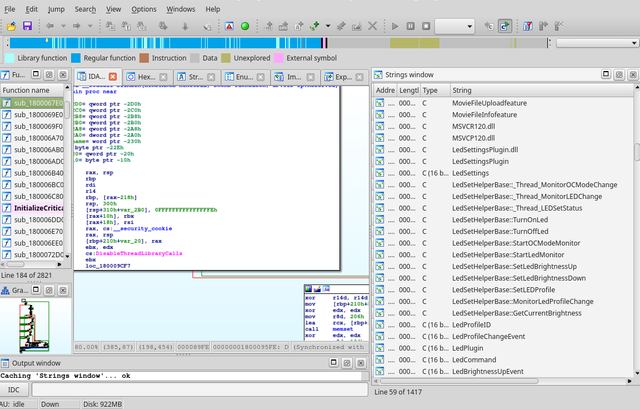

GAVO DaCHS Tutorial¶

Contents

- GAVO DaCHS Tutorial

- Invoking DaCHS

- Building a Catalog Service

- Introduction to DaCHS Publishing

- More on Grammars

- More on Tables

- More on Services

- More on Cores

- More on Metadata

- Active Tags

- Some Words on Times

- Publishing DAL Services

- Writing Examples

- Services Over Views

- The Registry Interface

- Restricting Access

This tutorial intends to guide you through ingestion of data and setting up of services. Even if you plan to only publish images or spectra, you should work through the first part; it explains a lot about DaCHS’ central concept, the resource descriptor (RD), recommended directory layouts, the basics of metadata and services, and debugging.

Invoking DaCHS¶

Note: Before version 1.0 (planned release July 2017), dachs had to

be called as

gavo. While this will still be possible during the 1.0

series for DaCHS, we recommend using

dachs as the entry point. This

tutorial and other examples are migrating towards the new command name,

so: If you’re running DaCHS <1.0, you’ll have to write

gavo when

there’s either “gavo” or “dachs” in DaCHS documentation, newer

installations should just use

dachs.

All DaCHS functionality is invoked through a

program called

dachs. Multiple functions are integrated and selected

through the first argument; run

dachs help to see what’s available;

realistically, the functions most operators will be confronted with are

start,

import,

serve,

publish, and

test. Also make sure you at

least once skim the man page.

DaCHS has some global options (that go in front of the subcommand name). Most subcommands take options and/or arguments, which then have to be after the subcommand name.

Most of DaCHS’s global options have to do with debugging; it is sometimes useful go say:

dachs --ui stingy ...

to reduce the program’s chattiness.

For a brief overview what the individual functions are, see the man page or use the built-in help, as in:

$ dachs limits --help usage: dachs limits [-h] itemId Updates existing values min/max items in a referenced table or RD. positional arguments: itemId Cross-RD reference of a table or RD to update, as in ds/q or ds/q#mytable; only RDs in inputsDir can be updated. optional arguments: -h, --help show this help message and exit

Building a Catalog Service¶

Quick start¶

To do anything useful with DaCHS, you will have to write a resource descriptor (RD), and you’ll probably have to have some data. Both must reside within some subdirectory of DaCHS’ input directory (unless you configured otherwise, that’s /var/gavo/inputs; we assume that in the following).

Since both astronomical data and VO services tend to be complex, you can do a lot of things in an RD. However, the publication of a certain data type (e.g., catalogue, spectra or image collection) often follows a pattern. DaCHS comes with a library of such patterns, and when starting a service, you will usually do something like:

cd /var/gavo/inputs mkdir <collection-name> cd <collection-name> dachs start <data-type-tag>

To see something quickly, however, we will look at an existing data set first, a catalog called ARIHIP catalog – this is a re-reduction of the Hipparcos result catalog with particularly careful solutions for proper motion. It has a column-based input and probably a few more columns than your average catalog these days. It hence is simple in principle but let us demonstrate a few advanced concepts, too.

You can run the following under any user id, as long as

you wisely manage the permissions. For testing, however, we recommend

doing it as the data center administrative account (for the Debian

package, that’s gavoadmin, and short. The directory created here is ususally called the resource directory in DaCHS lingo.

The resource descriptor name also appears in URLs. At GAVO’s data

center, we usually call it

q.rd as that looks nicely query-ish (to

our tastes).

Next, get the raw data. We recommend keeping data in a subdirectory of

resource directory (and suggest to call that subdirectory

data). At

the GAVO DC we usually keep everything in the resource directory under

version control except for that data directory (which tends to be large,

full of binary files, and either versioned by upstream or not at all):

mkdir data cd data curl -O

At this point, we’re ready for ingestion. All commands to DaCHS go

through the

dachs program that has several sub-commands; in this

case, we need the

import sub-command. The sub-commands can be

abbreviated as long as the abbreviation is unambiguous:

cd .. dachs imp q

This should run for a while, reporting the number of ingested rows now and then, and finally say something like “Rows affected: XY”. With this, the data is in the database and is ready for querying.

Let us mention in passing that

dachs imp tries to interpret its first

argument first as a file system path. If that fails, it tries to

interpret it as an RD identifier, i.e., the inputsDir-relative path of

the RD with the extension stripped. Our example RD thus has the RD id

arihip/q, and you could have said:

dachs imp arihip/q

from anywhere in the file system.

After you have imported a table, it is a good idea to run

dachs info

with the DaCHS identifier of the freshly imported table, e.g.,:

dachs info arihip/q#main

The DaCHS identifier of the table consists of the RD id as introduced above, the hash (inspired by the URL fragment identifier), and the id of the table.

This will output several properties (min, max, avg) of numeric columns that may help spot import errors. Also note that for each column, the presence of NULLs is given. When you import data, it is a good idea to check whether these correspond to your expectations, and to consider declaring columns as required when they do not indeed contain NULLs.).

The RD sets up a form-based service you can operate from a web browser; open the URL [1] and play around a bit. Note the small links behind some query fields – DaCHS supports VizieR-like expressions in those fields.

Briefly have a look at the URL; apart from the host name and port (see

the operator’s guide on how to change those), there is the path to the

RD (without the file extension), then the id of the service element (see

below) and a “renderer name”. That essentially defines the physical

interface of the service, i.e., which protocol it is accessed through.

In this case, it’s

form for an HTML form.

Another renderer supported by this service is

scs.xml, which

implements the IVOA Simple Cone Search (SCS) protocol. A client that supports

this is TOPCAT; to try it out, in TOPCAT select VO/Cone Search and fill

out the Cone URL field in the lower part of the window to be. Enter some object name

and a sufficiently large search radius (e.g., Aldebaran and 0.5

degrees), and you’ll see the results coming in.

Incidentally, cone search does not (yet) have a usable interface to

discover additional parameters, and hence TOPCAT restricts you to those

mandatory for every SCS service. For instance, as delivered, arihip

admits an

mv parameter. DaCHS supports a special syntax for

“free” parameters of cone searches as defined by the spectral access

protocol SSAP; to say ”everything brighter than 6th magnitude”, the

parameter setting would be

/6; to use this constraint within TOPCAT,

the access URL needs to be amended like this:.

Finally, the RD opens the arihip table for the IVOA Table Access

Protocol TAP, which

The anatomy of the RD¶

Now have a look at the RD by bringing up q.rd in your favourite editor. It starts out with:

<resource schema="arihip">

RDs are normal XML files (meaning that you could, e.g., add an XML declaration if you want an encoding other than utf-8), and thus they need a root element. Hopefully unsurprisingly, this is called resource for RDs. DaCHS typically does not distinguish attributes and elements with atomic content, which means that you could also have written the fragment above as:

<resource> <schema>arihip</schema>

This notational freedom sometimes allows clearer notation, and it helps with defining active tags. Multiple specifications of the same property make up multiple values where the property is sequence-like (in the reference documentation this is indicated by phrases like “zero or more” or “list of” in the properties descriptions). For atomic properties, later specifications overwrite earlier ones.

The

schema attribute on resource gives the (database) schema that

tables for this resource will turn up in. You should, in general, use

the name of the resource directory here. If you don’t, you have to give

the subdirectory name in the resource element’s

resdir attribute –

either way, this is then used to build absolute paths within the RD,

e.g., for the sources element discussed below.

In general, you should have exactly one RD per database schema. This is not enforced, but sharing schemata between RDs will cause many undesirable behaviours. (which could, in this case, fixed by adding untrusted to B’s readRoles manually, but you get the idea).

Another hint: There’s a fairly large body of RDs at, and most of them are free for inspection and blatant stealing (if you need a license on any of this, let us know). These RDs can be seen live on. To locate examples for concrete elements, meta items, and such, have a look at our RD element reference for these."> <meta name="profile">AllSky ICRS</meta> <meta name="waveband">Optical</meta> </meta> <STREAM source="//procs#license-cc-by" what="ARIHIP"/> <meta name="_longdoc" format="rst"> The ARIHIP Catalogue is a suitable combination of the results of the HIPPARCOS astrometry satellite with ground-based data. (abridged) </meta> <meta name="source"> Veröff. Astron. Rechen-Inst. No. 40 (2001); </meta> <meta name="_intro" format="rst"> <![CDATA[ For advanced queries on this catalogue use ADQL_ possibly via TAP_ .. _ADQL: /adql .. _TAP: /tap ]]> </meta>

This metadata is cruicial for later registration of the service, and some of it turns up in service responses. If you have a look at the HTML form you opened above, you will find quite a bit of it in the sidebar.

Metadata elements have a

name attribute that gives the “kind” of

metadata contained, and sometimes also determine a specific type.

Metadata can be hierarchical, where hierarchy elements are separated by

dots, and metadata can come in various formats as determined by the

format attribute. If you give nothing here, DaCHS will apply some

whitespace normalization, and it will interpret empty lines as

paragraphs if the target format supports it. With

format="rst", the

content will be interpreted as reStructuredText. Be careful to use

consistent indenation in this case. There are some other, more obscure,

formats, too, that you do not need to worry about right now.