text (string, lengths 454 to 608k) | url (string, lengths 17 to 896) | dump (string, 91 classes) | source (string, 1 class) | word_count (int64, 101 to 114k) | flesch_reading_ease (float64, 50 to 104) |
|---|---|---|---|---|---|


- I have a paid membership of brilliant.org. Not sure if you can view this content. Let me know if yo... | https://greensock.com/profile/106617-jxy/ | CC-MAIN-2022-40 | refinedweb | 693 | 73.98 |

SQL from Java

From SQLZOO

You can connect to an SQL engine using JDBC. You will need to obtain a JDBC driver from the appropriate database.

- The example given is based on the MySQL JDBC Connector/J which may be found at MySQL

- Extract the MySQL Connector/J into a directory C:\thingies. (If you know what you are doing... | http://sqlzoo.net/wiki/SQL_from_Java | CC-MAIN-2014-15 | refinedweb | 222 | 51.75 |

9.21.5. Free Response - CookieOrder A¶

The following is a free response question from 2010. It was question 1 on the exam. You can see all the free response questions from past exams at.

Question 1. An organization raises money by selling boxes of cookies. A cookie order specifies the variety of cookie and the number o... | https://runestone.academy/runestone/static/JavaReview/ListBasics/cookieOrderA.html | CC-MAIN-2019-26 | refinedweb | 349 | 56.96 |


Practice Projects and Code Fights (119:06) with Kenneth Love

Kenneth answers student questions while playing around with Python by doing a few practice projects and trying out some Code Fights.

- 0:00

[MUSIC]

- 0:05

I t... | https://teamtreehouse.com/library/practice-projects-and-code-fights | CC-MAIN-2018-13 | refinedweb | 17,100 | 92.42 |

cd hw/xfree86/os-support/linux

1)

It seems an error slipped into the patch I submitted for bug #4511. It results

in Makefile parsing errors. Sorry about that :( The patch adding the necessary

'\' is included here:

--- Makefile.am 2005-10-17 09:18:58 +0200

+++

/var/tmp/portage/xorg-server-0.99.2-r1/work/xorg-server-0.99... | https://bugs.freedesktop.org/show_bug.cgi?id=4928 | CC-MAIN-2016-30 | refinedweb | 382 | 55.1 |

Developer's Corner

From pdl

This is meant to be a collection of ideas currently floating around the PDL mailing list for current and future development.

Survey Results

Read the Results from the Fall 2009 Usage and Installation Survey.

PDL Way Forward

All, I've consolidated the topics and discussion from perldl and pdl-... | http://sourceforge.net/apps/mediawiki/pdl/index.php?title=Developer's_Corner&oldid=223 | CC-MAIN-2013-48 | refinedweb | 528 | 51.11 |

Details

- Type: Bug
- Status: Closed
- Priority: Major
- Resolution: Fixed
- Affects Version/s: 0.92.0
- Labels: None
- Hadoop Flags: Reviewed

Description.

Issue Links

- is related to

HADOOP-7646 Make hadoop-common use same version of avro as HBase

- Closed

Activity

- All

- Work Log

- Hist... | https://issues.apache.org/jira/browse/HBASE-4327 | CC-MAIN-2017-09 | refinedweb | 1,026 | 63.05 |

I am new to SublimeText as well as Python programming so forgive me if I am missing something that should be obvious. I am trying to write a plugin for Sublime Text 1 that inserts a timestamp using this as a reference.

My point of confusion is: how do I bind my newly created plugin to a key command? The sample plugin r... | http://www.sublimetext.com/forum/viewtopic.php?f=3&t=2104&start=0 | CC-MAIN-2015-11 | refinedweb | 172 | 58.38 |


Introduction

Running Couchbase as a Docker container is fairly easy. Simply inherit from the latest, official Couchbase image and add your customized behavior according to your requirement. In this post, I am going to show how you can fire up a web application using Spring Boot, Vaadin, and of course Couchbase (as ba... | https://blog.couchbase.com/docker-vaadin-meet-couchbase-part1/ | CC-MAIN-2022-33 | refinedweb | 1,379 | 55.84 |


Module create new model - no table create

I'm trying to learn OpenERP code logic... I would like to write a new module, and need ... | https://www.odoo.com/forum/help-1/question/module-create-new-model-no-table-create-2421 | CC-MAIN-2017-51 | refinedweb | 326 | 53.88 |

compile and run java program

compile and run java program Hello, everyone!!!

I just want to ask....

For example, I have a java file named Hello.java and saved at my desktop.

how... using the java built-in compiler of UBUNTU..

I hope you could help me

compile error

compile error Hello All

for example

public class...");

... | http://www.roseindia.net/tutorialhelp/comment/395 | CC-MAIN-2015-11 | refinedweb | 2,870 | 65.83 |

This document identifies the status of Last Call issues on XQuery 1.0 and XPath 2.0 Formal Semantics as of February 11, 2005.

The formal semantics “[FS]”.

Last call issues list for xquery-semantics (up to message 2004Mar/0246).

There are 90 issue(s).

90 raised (90 substantive), 0 proposed, 0 decided, 0 announced and 0 ... | http://www.w3.org/2005/02/formal-semantics-issues.html | crawl-002 | refinedweb | 19,066 | 56.96 |

Details

Description

Notes:

* running test method "testMultipleEvals" (single threaded case) always succeeds

* running test method "testMultiThreadMultipleEvals" always fails

* commenting out the allow.inline.local.scope line makes the multithread test pass (but of course has other side-effects)

Interestingly, for the m... | https://issues.apache.org/jira/browse/VELOCITY-776?focusedCommentId=12905340&page=com.atlassian.jira.plugin.system.issuetabpanels:comment-tabpanel | CC-MAIN-2014-10 | refinedweb | 786 | 61.46 |

28

The avr-g++ C++ compiler that Arduino uses does need forward declarations.

To make it easier for beginners, the IDE auto-generates the forward declarations and puts them into your C++ code before sending it to the avr-g++ C++ compiler.

Dr.Smart

Thanks for that insight Dr.Smart – I did not think about that. Good to ... | https://www.tweaking4all.com/hardware/arduino/arduino-programming-course/arduino-programming-part-6/ | CC-MAIN-2021-31 | refinedweb | 1,104 | 69.41 |

This is the second expose data from your server using the MVC 6 Web API. We’ll retrieve a list of movies from the Web API and display the list of movies in our AngularJS app by taking advantage of an AngularJS template.

Enabling ASP.NET MVC

The first step is to enable MVC for our application. There are two files that w... | https://stephenwalther.com/archive/2015/01/13/asp-net-5-and-angularjs-part-2-using-the-mvc-6-web-api | CC-MAIN-2021-31 | refinedweb | 1,431 | 58.79 |

System.Taffybar

Description

The main module of Taffybar

Synopsis

- data TaffybarConfig = TaffybarConfig {

- screenNumber :: Int

- monitorNumber :: Int

- barHeight :: Int

- errorMsg :: Maybe String

- startWidgets :: [IO Widget]

- endWidgets :: [IO Widget]

- defaultTaffybar :: TaffybarConfig -> IO ()

- defaultTaffybarCon... | http://pages.cs.wisc.edu/~travitch/taffybar/System-Taffybar.html | CC-MAIN-2016-30 | refinedweb | 730 | 57.27 |

Created on 2010-12-28 06:57 by cooyeah, last changed 2013-06-28 15:46 by sylvain.corlay. This issue is now closed.

The constructor of TextTestRunner class looks like:

def __init__(self, stream=sys.stderr, descriptions=1, verbosity=1)

Since the default parameter is evaluated only once, if sys.stderr is redirected later,... | http://bugs.python.org/issue10786 | CC-MAIN-2015-40 | refinedweb | 457 | 68.47 |
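The "evaluated only once" behaviour described in this report can be reproduced without unittest. The following is a minimal sketch, not the actual TextTestRunner code: `write_early_bound` and `write_late_bound` are hypothetical helper names chosen for illustration. It contrasts a default bound at definition time with one looked up at call time.

```python
import io
import sys


def write_early_bound(message, stream=sys.stderr):
    """The default is evaluated once, when the function is defined."""
    stream.write(message)


def write_late_bound(message, stream=None):
    """The default is looked up at call time, so later redirection is seen."""
    if stream is None:
        stream = sys.stderr
    stream.write(message)


# Redirect sys.stderr after both functions have been defined.
original_stderr = sys.stderr
redirected = io.StringIO()
sys.stderr = redirected
try:
    write_early_bound("early\n")  # still writes to the original stderr object
    write_late_bound("late\n")    # writes to the redirected stream
finally:
    sys.stderr = original_stderr

captured = redirected.getvalue()
```

Only the late-bound call ends up in `captured`, which is why a `None` sentinel is the usual idiom when a default should track a value that may change later.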

Subresultant algorithm taking a lot of time for higher degree univariate polynomials with coefficients from fraction fields

I have to compute the gcd of univariate polynomials over the fraction field of $\mathbb{Z}[x,y]$. I wanted to use the subresultant algorithm already implemented for UFDs. I copied the same functio... | https://ask.sagemath.org/question/37672/subresultant-algorithm-taking-a-lot-of-time-for-higher-degree-univariate-polynomials-with-coefficients-from-fraction-fields/ | CC-MAIN-2018-17 | refinedweb | 573 | 63.05 |

For design reasons the OpenGL specification was isolated from any window

system dependencies. The resulting interface is a portable, streamlined

and efficient 2D and 3D rendering library. It is up to the native window

system to open and render windows. The OpenGL library communicates with

the native system through addi... | http://www.linuxfocus.org/Portugues/January1998/article16.html | CC-MAIN-2016-44 | refinedweb | 1,615 | 52.9 |

On Sat, 2 May 2009, twisted-web-request at twistedmatrix.com wrote: > Hi Dave! Thanks for taking the time to contribute. > > Unfortunately, this contribution will almost certainly be lost if you > don't open a ticket for it at <>. > Once you've done that, you'll need to mark it for review by adding the > "review" keywo... | http://twistedmatrix.com/pipermail/twisted-web/2009-May/004171.html | CC-MAIN-2014-42 | refinedweb | 330 | 58.99 |

Can you give any example where it may fail?

What I thought: if S = p2 + p3 and there is any way to split S into p1 + p4, then it will validate the conditions.


It is correct. Y... | https://discuss.codechef.com/t/chefship-editorial/66255/119 | CC-MAIN-2020-45 | refinedweb | 1,160 | 68.91 |

Subject: Re: [boost] [tweener] Doxygen documentation issues

From: Steven Watanabe (watanabesj_at_[hidden])

Date: 2013-11-05 13:22:55

AMDG

On 11/05/2013 10:10 AM, Paul A. Bristow wrote:

>

>> Doxygen XML is a bit annoying about that. Last time I tried, it seemed to ignore the option

> that's

>> supposed to hide private m... | https://lists.boost.org/Archives/boost/2013/11/208290.php | CC-MAIN-2019-30 | refinedweb | 201 | 67.45 |


I have a dialog with a Treeview.

In the Treeview I can detect when a o... | https://plugincafe.maxon.net/topic/12242/reacting-on-treeview-user-action | CC-MAIN-2022-05 | refinedweb | 121 | 66.74 |

through static type checking

- Providing code completion and refactoring support in many of our favorite editors, like VS Code, WebStorm, and even Vim

- Allowing us to use modern language features of JavaScript before they are available in all browsers

We think TypeScript is great, and we think many of our Ionic Angula... | https://blog.ionicframework.com/how-to-use-typescript-in-react/ | CC-MAIN-2019-18 | refinedweb | 652 | 61.06 |

XForms is gaining momentum rapidly, with support available for common browsers using extensions or plugins, and through products like the IBM® Workplace Forms technology (see the Resources section at the end of this article to find out more). use actions and events with XForms, and how to control the format of the form... | http://www.ibm.com/developerworks/xml/library/x-xformsintro3/ | crawl-003 | refinedweb | 2,003 | 59.03 |

Before considering class and interface declarations in Java, it is

essential that you understand the object-oriented model used by the

language. No useful programs can be written in Java without using

objects. Java deliberately omits certain C++ features that promote a

less object-oriented style of programming. Thus, a... | https://docstore.mik.ua/orelly/java/langref/ch05_03.htm | CC-MAIN-2019-26 | refinedweb | 4,803 | 51.89 |

Intermediate-level techniques improve security and reliability


Level: Intermediate

Cameron Laird (claird@phaseit.net), Consultant, Phaseit, Inc.

04 May 2007

Are you tired of spending countless hours devoted to fixing memory faults? Do... | http://www.ibm.com/developerworks/aix/library/au-correctmem/ | crawl-001 | refinedweb | 2,262 | 52.29 |

Hello, I have a scrolling background on a Quad. The background scrolling speed will eventually speed up overtime, as the player lives longer.

The game is essentially an endless runner, but the player never actually moves, so only the background will scroll to make it look like the player is moving. But because the play... | https://answers.unity.com/questions/1195756/get-objects-to-move-relative-to-background-scrolli.html | CC-MAIN-2019-43 | refinedweb | 452 | 63.9 |

JavaScript library and REST reference for Project Server 2013

The JavaScript library and REST reference for Project Server 2013 contains information about the JavaScript object model and the REST interface that you use to access Project Server functionality. You can use these APIs to develop cross-browser web apps, Pro... | https://docs.microsoft.com/en-us/previous-versions/office/project-javascript-api/jj712612(v=office.15)?redirectedfrom=MSDN | CC-MAIN-2019-43 | refinedweb | 346 | 55.24 |

What is CORS?

CORS stands for Cross-Origin Resource Sharing. This is essentially a mechanism that allows restricted resources (e.g. web services) on a web page to be requested from another domain outside the domain from which the resource originated. The default security model used by JavaScript running in browsers is... | https://blog.medhat.ca/2016/10/azure-deployment-of-aspnet-web-api-cors.html | CC-MAIN-2021-43 | refinedweb | 2,701 | 60.01 |

Identical binary trees

Given two binary trees, check whether they are identical. The first question that arises: when do we call two binary trees identical? If the roots of the two trees are equal and their left and right subtrees are also identical, then the two trees are called identical binary trees. For example, two... | https://algorithmsandme.com/category/data-structures/binary-search-tree/page/2/ | CC-MAIN-2019-18 | refinedweb | 831 | 60.95 |
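The recursive definition above (equal roots, identical left subtrees, identical right subtrees) translates almost directly into code. This is a minimal sketch in Python, assuming a simple `Node` class with `value`, `left`, and `right` fields; those names are illustrative and not taken from the original article.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Node:
    value: int
    left: Optional["Node"] = None
    right: Optional["Node"] = None


def are_identical(a: Optional[Node], b: Optional[Node]) -> bool:
    """Return True when both trees have the same shape and the same values."""
    if a is None and b is None:
        return True   # two empty trees are identical
    if a is None or b is None:
        return False  # one tree ended before the other
    return (
        a.value == b.value
        and are_identical(a.left, b.left)
        and are_identical(a.right, b.right)
    )


tree_one = Node(1, Node(2), Node(3))
tree_two = Node(1, Node(2), Node(3))
tree_three = Node(1, Node(2, Node(4)), Node(3))
```

Each node is visited at most once, so the check runs in time proportional to the size of the smaller tree.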

Many C++ Windows programmers get confused over what bizarre identifiers like TCHAR, LPCTSTR are.

In this article, I will do my best to clear the fog.

TCHAR

LPCTSTR

In general, a character can be represented in 1 byte or 2 bytes. Let's say 1-byte character is ANSI character - all English characters are represe... | http://www.codeproject.com/Articles/76252/What-are-TCHAR-WCHAR-LPSTR-LPWSTR-LPCTSTR-etc?msg=4456447 | CC-MAIN-2015-40 | refinedweb | 2,900 | 52.39 |

Happy New Year!

2008-12-31

Changes of this week:

- Added access to collision contacts of Players.

- Fixed minor bug in UnigineScript with namespaces and user-defined classes.

- Refactored Actor system.

- LightSpot performance optimization.

- User classes in UnigineScript can be stored as keys in maps.

- Character class... | https://developer.unigine.com/devlog/20081231-happy-new-year | CC-MAIN-2020-45 | refinedweb | 163 | 65.32 |

Law of Large Numbers and the Central Limit Theorem (With Python)


Statistics does not usually go with the word “famous,” but a few theorems and concepts of statistics find such universal application, that they deserve that tag. The Central Limit Theorem and the ... | https://python-bloggers.com/2020/11/law-of-large-numbers-and-the-central-limit-theorem-with-python/ | CC-MAIN-2022-40 | refinedweb | 2,294 | 56.76 |
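Both results named in the title can be observed with a few lines of standard-library Python. This is a minimal sketch, not code from the original post: it draws from a uniform distribution on [0, 1) (mean 0.5, variance 1/12), and the helper name `sample_mean` and the sample sizes are assumptions chosen for illustration.

```python
import random
import statistics


def sample_mean(n, rng):
    """Mean of n independent draws from the uniform distribution on [0, 1)."""
    return statistics.fmean(rng.random() for _ in range(n))


rng = random.Random(0)  # fixed seed so the run is repeatable

# Law of large numbers: the mean of a large sample settles near 0.5.
small = sample_mean(10, rng)
large = sample_mean(100_000, rng)

# Central limit theorem: means of many samples of size n cluster around 0.5
# with a standard deviation close to sigma / sqrt(n).
n = 50
means = [sample_mean(n, rng) for _ in range(2000)]
observed_spread = statistics.stdev(means)
predicted_spread = (1 / 12) ** 0.5 / n ** 0.5
```

Comparing `observed_spread` with `predicted_spread` shows the sigma / sqrt(n) scaling; a histogram of `means` would show the familiar bell shape.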


Do you find yourself wanting to store more than just strings, numbers and dates in your tables? P... | https://anvil.works/blog/page-5.html | CC-MAIN-2019-22 | refinedweb | 286 | 72.36 |

I have a question in C++. Can you help me and tell me where the problem is in my solution?

The question is:

Assume that the maximum number of students in a class is 50. Write a program that reads students' names followed by their test score from a file and outputs the following:

a. class average

b. Names of all the ... | https://www.daniweb.com/programming/software-development/threads/104091/please-help-me-i-want-it-for-tomorrow | CC-MAIN-2018-43 | refinedweb | 194 | 76.93 |


by Chris Adamson

This blog comes from two different places. The first is my decade-long general indifference to really using the clipping rectangle, which in Java2D is a shape that indicates what part of a Graphics object is to be painted in. It offers the potential for graphics optimizations because the r... | http://archive.oreilly.com/pub/post/a_scrolly_clippy_swing_optimiz.html | CC-MAIN-2014-41 | refinedweb | 1,683 | 54.83 |

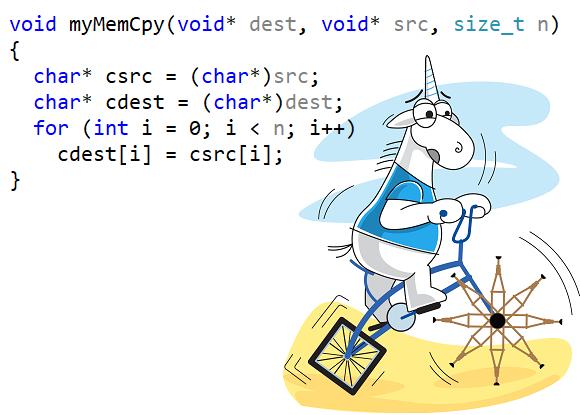

# Starting My Collection of Bugs Found in Copy Functions

I've already noticed a few times before that programmers seem to tend to make mistakes in simple copy functions. Writing a profound article on... | https://habr.com/ru/post/495640/ | null | null | 1,082 | 54.52 |


A question I get asked a lot is:

How can I make money with machine learning?

You can get a job with your machine learning skills as a machine learning engineer, data analyst or data scientist. That is the goal of a great many people that contact me.

There are also other options.

In this post, I want to ... | https://machinelearningmastery.com/machine-learning-for-money/ | CC-MAIN-2019-47 | refinedweb | 1,883 | 63.09 |

For many years there has been only one way to write client-side logic for the web: JavaScript. WebAssembly provides another way, as a low-level language similar to assembly, with a compact binary format. Go, a popular open source programming language focused on simplicity, readability and efficiency, recently gained th... | https://www.sitepen.com/blog/compiling-go-to-webassembly/ | CC-MAIN-2019-09 | refinedweb | 1,883 | 64.51 |

So I can't figure out how to change my result into an exact decimal.

My problem is that the tan function returns 1 instead of something like 1.37, etc. Code: #include <cmath> #include <iostream> #include <cstdlib> #include <iomanip> #define Pi double(3.14159) ... | https://cboard.cprogramming.com/cplusplus-programming/135376-loss-data-trig-function.html | CC-MAIN-2017-30 | refinedweb | 195 | 72.97 |

I am stuck on trying to figure out how to insert a vector for a value in a map. For example:

    #include <iostream>
    #include <vector>
    #include <map>

    using namespace std;

    int main()
    {
        map <int, vector<int> > mymap;
        mymap.insert(pair<int, vector<int> > (10, #put something here#));
        return 0;
    }

{1,2}

Basically your question i... | https://codedump.io/share/AVKhG4h5ihOB/1/insert-vector-for-value-in-map-in-c | CC-MAIN-2017-47 | refinedweb | 176 | 52.09 |

std::cos(std::complex)

Computes complex cosine of a complex value z.

Parameters

Return value

If no errors occur, the complex cosine of z is returned.

Errors and special cases are handled as if the operation is implemented by std::cosh(i*z), where i is the imaginary unit.

Notes... | https://en.cppreference.com/w/cpp/numeric/complex/cos | CC-MAIN-2019-13 | refinedweb | 177 | 54.83 |
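The relationship quoted above, cos(z) = cosh(i*z), can be checked numerically. The sketch below uses Python's standard `cmath` module rather than C++ `std::complex`, purely as an illustration of the identity; the helper name `cos_via_cosh` and the sample value of `z` are assumptions, and nothing here reflects how any C++ standard library implements `std::cos`.

```python
import cmath


def cos_via_cosh(z):
    """Complex cosine expressed through the identity cos(z) = cosh(i*z)."""
    return cmath.cosh(1j * z)


z = complex(1.0, 2.0)
direct = cmath.cos(z)
via_cosh = cos_via_cosh(z)
difference = abs(direct - via_cosh)  # expected to be at rounding-error level
```

The two results agree up to floating-point rounding for ordinary finite inputs; the special cases (infinities and NaNs) are exactly where the referenced page says the `cosh` formulation defines the behaviour.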


Konstantin Shvachko updated HDFS-2280:

--------------------------------------

Attachment: checksumException.patch

In the patch I

# set {{imageDigest}} to null in {{BackupStorage.reset()}} to make sure the old digest is

not interfering with newly loaded images.

# and populate {{imageDigest}} obtained from NN to BN's... | http://mail-archives.apache.org/mod_mbox/hadoop-hdfs-issues/201108.mbox/%3C757452121.11836.1314233009054.JavaMail.tomcat@hel.zones.apache.org%3E | CC-MAIN-2014-23 | refinedweb | 165 | 50.02 |

Bug #15121 (closed)

Memory Leak on rb_id2name(SYM2ID(sym))

Description

@ohler55 mentioned in that calling

rb_id2name(SYM2ID(sym)) seems to lock symbols in memory but I couldn't find any issue open for that. So I'm just opening one just in case, but pls close if this is a dupe.

I created a sample C extension to reproduce t... | https://bugs.ruby-lang.org/issues/15121 | CC-MAIN-2022-27 | refinedweb | 356 | 51.14 |

I know how to write some 100 lines C, but I do not know how to read/organize larger source like Rebol. Somewhere was a tutorial with hostkit and dll, but it seems R3 is now statically linked. So I do not know where to look.

How would I write a native which gets a value and returns another? Where to put it in the source... | http://ebanshi.cc/questions/1774504/for-rebol3-want-to-get-started-with-native-extensions-on-linux-how-do-i-write | CC-MAIN-2017-04 | refinedweb | 563 | 76.22 |

Hello friends,

I would like to calculate the area and volume of a cylinder, where the radius and height may be integer, float, or double. How can I do that?

Can you help me?

First, do you know the formula for calculating the surface area and volume in terms of radius and height? Why do you want to do it for integer, float and double... | http://forums.codeguru.com/showthread.php?537169-In-order-to-use-the-functionality-of-the-base-class&goto=nextnewest | CC-MAIN-2015-14 | refinedweb | 1,111 | 67.25 |
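For reference, the formulas the reply asks about are V = πr²h for the volume and A = 2πr(r + h) for the total surface area. The following is a minimal sketch in Python rather than the thread's C++; the function names are hypothetical. Python's numeric types let one function accept both integers and floating-point values, which sidesteps the overloading question the original poster raises.

```python
import math


def cylinder_volume(radius, height):
    """Volume of a cylinder: pi * r^2 * h."""
    return math.pi * radius ** 2 * height


def cylinder_surface_area(radius, height):
    """Total surface area (side plus both ends): 2 * pi * r * (r + h)."""
    return 2 * math.pi * radius * (radius + height)


# The same functions work for integer and floating-point arguments.
volume_int = cylinder_volume(2, 5)
volume_float = cylinder_volume(2.0, 5.0)
area_unit = cylinder_surface_area(1, 1)
```

In a statically typed language the same effect would need overloads or a generic function, which is presumably what the question about integer, float, and double is driving at.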

When I saw MSBuild for the first time I thought - "Yeah, good improvement over the standard makefile - we now have XML (surprise!!) and it is extensible". The real import of word “extensible” struck home when I started writing custom tasks. The concept of being able to call an army of reusable objects from a makefile –... | http://blogs.msdn.com/b/srivatsn/archive/2005/09/20/471709.aspx | CC-MAIN-2014-41 | refinedweb | 541 | 56.96 |

Introduction

If you work with large datasets in JSON inside your Python code, then you might want to try third-party libraries like ujson and orjson, which are replacements for Python’s json library.

As per their documentation

ujson (UltraJSON) is an ultra fast JSON encoder and decoder written in pure C with binding... | https://practicaldev-herokuapp-com.global.ssl.fastly.net/dollardhingra/benchmarking-python-json-serializers-json-vs-ujson-vs-orjson-1o16 | CC-MAIN-2022-33 | refinedweb | 297 | 57.98 |
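A comparison like the one this post describes can be sketched with `timeit`. This is not the author's benchmark: the payload, the repeat count, and the helper names `load_serializers` and `benchmark` are assumptions. The sketch falls back to the standard library alone when ujson or orjson is not installed, and note that `orjson.dumps` returns `bytes` rather than `str`.

```python
import json
import timeit


def load_serializers():
    """Collect whichever dumps functions are importable in this environment."""
    serializers = {"json": json.dumps}
    try:
        import ujson
        serializers["ujson"] = ujson.dumps
    except ImportError:
        pass
    try:
        import orjson
        serializers["orjson"] = orjson.dumps  # returns bytes, not str
    except ImportError:
        pass
    return serializers


def benchmark(payload, repeat=20):
    """Time `repeat` serializations of payload for each available library."""
    results = {}
    for name, dumps in load_serializers().items():
        results[name] = timeit.timeit(lambda: dumps(payload), number=repeat)
    return results


payload = [{"id": i, "name": f"user{i}", "tags": ["a", "b"]} for i in range(1000)]
timings = benchmark(payload)
```

Absolute numbers depend heavily on the machine and the shape of the payload, so any such measurement is best read as a relative comparison on one's own data.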

XQuery/DOJO data

Motivation

You want to use XQuery with your DOJO JavaScript library which uses a variation of JSON syntax.

Method

DOJO is a framework for developing rich client side applets in javascript: from the nice to have to the core webapp. Some day you may want to deliver your data in a way, that yo... | https://en.wikibooks.org/wiki/XQuery/DOJO_data | CC-MAIN-2018-30 | refinedweb | 344 | 51.99 |

Greetings! New to this forum, first time posting. =)

I'm currently trying to make a log-in or check-in system, it's meant to be able to allow users to log in, log out, and for there to be logs of the date and time the users did this. Issue being that I have never made anything like it before so it's a nice little proje... | http://www.javaprogrammingforums.com/%20whats-wrong-my-code/32957-multiple-instances-class-printingthethread.html | CC-MAIN-2016-18 | refinedweb | 519 | 64.85 |

22 January 2008 18:44 [Source: ICIS news]

By Stephen Burns

HOUSTON (ICIS news)--Some key US chemical spot values were heading lower on Tuesday amid the turmoil in global financial markets, but most traders were content to wait and see how the situation would pan out.

US financial markets reopened on Tuesday after a thr... | http://www.icis.com/Articles/2008/01/22/9094907/some-us-spot-chemicals-slip-as-buyers-stand-pat.html | CC-MAIN-2013-48 | refinedweb | 389 | 66.33 |

Sorting listview

ListView (and the default GridView view) is a great bare bones control for us to add extra functionality – though sorting out of the box would have been handy.

On the face of it, sorting is easy – you handle the click of the header and call Sort on the collection being bound to using the property of th... | http://itknowledgeexchange.techtarget.com/wpf/sorting-listview/ | CC-MAIN-2017-09 | refinedweb | 313 | 60.08 |

How to supercharge Swift enum-based states with Sourcery

I really love enums with associated values in Swift, and they are my main tool for designing state. Why are enums a much more powerful and beautiful option? Mostly because they allow me to keep strong invariants about the data in my type system.

For example, if I hav... | https://medium.com/flawless-app-stories/enums-and-sourcery-5da57cda473b?utm_campaign=Revue%20newsletter&utm_medium=Swift%20Weekly%20Newsletter%20Issue%20103&utm_source=Swift%20Weekly | CC-MAIN-2018-26 | refinedweb | 1,127 | 67.96 |

This is the mail archive of the gdb@sourceware.org mailing list for the GDB project.

We have a large source tree with many directories. When the system is built that tree appears in one place in the namespace; then the build results are saved in "good builds" directories, one per good build up to whatever we can save. ... | http://sourceware.org/ml/gdb/2006-03/msg00189.html | crawl-002 | refinedweb | 179 | 65.12 |

On Thu, Jun 19, 2008 at 12:05 AM, Brad Schick <schickb@gmail.com> wrote:

>

>.

>

An argument for #1 is that in use cases like mine (millions of docs)

the presence of "extra" views can be a real headache. Users can just

provide the views necessary for the plugins to function. I like #1

also because it is "generative" as... | https://mail-archives.apache.org/mod_mbox/couchdb-user/200806.mbox/%3Ce282921e0806190119i7fb2b271n43d931691914a94a@mail.gmail.com%3E | CC-MAIN-2017-04 | refinedweb | 314 | 57.71 |

Today Widget - Just some comments

Sorry if this has been covered before.

But I tried to use the Today Widget, today (I have tried before, but a long time ago). Anyway, I was surprised by what I could do.

I was able to load a CustomView using @JonB PYUILoader (loading a pyui file into a Custom Class) , I was able to use... | https://forum.omz-software.com/topic/4628/today-widget-just-some-comments | CC-MAIN-2021-39 | refinedweb | 895 | 69.68 |


    #include <stdio.h>

    int vsprintf (
      char *buffer,        /* pointer to storage buffer */
      const char *fmtstr,  /* pointer to format string */
      char *argptr);       /* pointer to argument list */

The vsprintf function formats a series of strings and

numeric values and store... | http://www.keil.com/support/man/docs/ca/ca_vsprintf.htm | CC-MAIN-2019-30 | refinedweb | 132 | 64.1 |

I have this question to deal with.....

Here is the question:

Create a class called Date that includes three pieces of information as instance variables—a

month (type int), a day (type int) and a year (type int). Your class should have a constructor that

initializes the three instance variables and assumes that the valu... | http://www.javaprogrammingforums.com/%20whats-wrong-my-code/5112-what-wrong-here-please-printingthethread.html | CC-MAIN-2015-27 | refinedweb | 256 | 69.82 |

I use a lot of images in my project (about 100-120, 10-30 kB each). Images are on the server and loading (source = "mediayes.png") and display on the window by clicking on the button. There is a delay due to the large number of images (7-10 s). I created a class to connect images to the project:

package

{

import spark.... | https://forums.adobe.com/thread/769185 | CC-MAIN-2017-30 | refinedweb | 320 | 75.61 |

In this article, you'll learn how to build apps using Firevel - a serverless Laravel framework.

In recent decades the PHP community has gone through some major changes. First, we saw billion-dollar platforms like Facebook adopting PHP as a primary language. Then tools like the Laravel framework quickly rose in populari... | https://morioh.com/p/fd192a497274 | CC-MAIN-2020-16 | refinedweb | 1,002 | 51.07 |


Running the IPython console

If IPython has been installed correctly, you should be able to run it from a system shell with the ipython command. You can use this prompt like a regular Python interpreter as shown in the following screenshot:

Command-line shell on Wind... | https://hub.packtpub.com/ten-ipython-essentials/ | CC-MAIN-2018-22 | refinedweb | 1,861 | 61.46 |

.5 Appendix C: Git Commands - Sharing and Updating Projects

Sharing and Updating Projects

There are not very many commands in Git that access the network, nearly all of the commands operate on the local database. When you are ready to share your work or pull changes from elsewhere, there are a handful of commands that ... | https://git-scm.com/book/be/v2/Appendix-C%3A-Git-Commands-Sharing-and-Updating-Projects | CC-MAIN-2019-13 | refinedweb | 807 | 65.86 |

The REST zealotry needs to end.

First, let me establish my bonafides here. I work on the ROME project. I built the first module for Google Base. I have used the Propono project to build an APP service. I use REST. REST is a friend of mine.

Here is the thing:

People need to own up to the fact that SOAP has its place. Ye... | http://www.oreillynet.com/onjava/blog/2007/10/ | crawl-002 | refinedweb | 309 | 82.14 |

I recently bought an Arduino pH sensor kit for measuring the pH value of my hydroponic setup. It is cheap but comes with very little information or documentation on how to use it, so I decided to figure out for myself how it works and how to use it.

Popular pH measurement kits for Arduino

If you search for pH sensor with Arduino on Intern... | https://www.e-tinkers.com/2019/11/measure-ph-with-a-low-cost-arduino-ph-sensor-board/ | CC-MAIN-2021-49 | refinedweb | 4,763 | 62.58 |

On 8/5/12 5:30 PM, Gilles Sadowski wrote:

>>>> [...]

>>>>.

>> Why? RandomData is pretty descriptive and exactly what these

>> methods do. They generate random data.

> Fine...

> But can we plan to merge "RandomData" and "RandomDataImpl"?

Definitely want to do this. Unfortunately, it is incompatible

change. I guess we co... | http://mail-archives.apache.org/mod_mbox/commons-dev/201208.mbox/%3C501F1ECE.1060903@gmail.com%3E | CC-MAIN-2014-23 | refinedweb | 313 | 52.26 |

Posts from Mark Ng 2009-04-19T11:46:28Z Mark Ng Startups - a competition ? 2009-04-19T11:46:28Z Mark 80 Image cc-licensed from flickr So, on Thursday, I attended Bournemouth Startup Meetup, organised b[...] <img src="" alt="Boot Strap" /> <p style="font-size: 0.5em;">Image cc-licensed from <a href="">flickr</a></p> <p>... | http://feeds.feedburner.com/MarkNg | crawl-002 | refinedweb | 4,499 | 59.53 |

by James Polanco

Now that Adobe has released the public betas of Flash Catalyst and Flash Builder, you are probably hearing and seeing more about the new graphics file format, FXG.

So what is FXG and why is it important to Flash Catalyst, Flash Builder, Flex 4, Flash Professional and Creative Suite users? In this artic... | http://www.adobe.com/inspire-archive/august2009/articles/article1/index.html?trackingid=EVHEZ | CC-MAIN-2014-52 | refinedweb | 1,264 | 59.23 |

In this article we'll see how to use DropDownList and ListBox Web controls to display data in various formats.

Introduction This article is based on queries in the newsgroup:

Query 1 :

So data in the database along with the desired output is as given below

Note: The dropDownList has a bug which prevent us from assignin... | https://www.c-sharpcorner.com/article/working-with-dropdownlist-and-listbox-controls-in-Asp-Net/ | CC-MAIN-2019-30 | refinedweb | 217 | 53.14 |

24 November 2021

Alex Miller

Welcome to the Clojure Deref! This is a weekly link/news roundup for the Clojure ecosystem. (@ClojureDeref RSS)

Welcome to a special mid-week Deref as we will be out in the US this week! But on that note, big thanks to the Clojure community for always being interesting, inventive, and caring. ... | https://clojure.org/news/2021/11/24/deref | CC-MAIN-2021-49 | refinedweb | 555 | 56.05 |

#include <UIOP_Acceptor.h>


Inheritance diagram for TAO_UIOP_Acceptor:

0

Create Acceptor object using addr.

[virtual]

Destructor.

Implements TAO_Acceptor.

[private]

Create a UIOP profile representing this acceptor.

Add the endpoints on this acceptor to a shared profile.

Obtains uiop properties... | http://www.theaceorb.com/1.4a/doxygen/tao/strategies/classTAO__UIOP__Acceptor.html | CC-MAIN-2017-51 | refinedweb | 130 | 62.44 |

17 April 2012 11:43 [Source: ICIS news]

SINGAPORE (ICIS)--A small fire erupted at Formosa Petrochemical Corp’s (FPCC) residue desulphuriser (RDS) unit in [...]

The fire broke out at about 04:10 hours Taiwan time (20:10 GMT) on Tuesday while FPCC is testing its 80,000 bbl/day No 2 RDS unit, which is due to res... | http://www.icis.com/Articles/2012/04/17/9550946/fire-breaks-out-at-formosas-mailiao-site-during-rds-unit-restart.html | CC-MAIN-2014-35 | refinedweb | 270 | 72.9 |

XmlDocument.PreserveWhitespace Property

Gets or sets a value indicating whether to preserve white space in element content.

Assembly: System.Xml (in System.Xml.dll)

This property determines how white space is handled during the load and save process.

This method is a Microsoft extension to the Document Object Model (D... | https://msdn.microsoft.com/en-us/library/system.xml.xmldocument.preservewhitespace(v=vs.100).aspx | CC-MAIN-2017-47 | refinedweb | 131 | 54.79 |


C# Translator [using GOOGLE API]

Started by Professor Green, Jul 14 2011 09:05 AM (tag: combobox)

13 replies to this topic

#13

Posted 19 December 2012 - 01:03 PM

Man that kinda stinks I was looking forward to making one of these.

- 0


#14

Posted 25 Jan... | http://forum.codecall.net/topic/64790-c-translator-using-google-api/page-2 | CC-MAIN-2018-30 | refinedweb | 662 | 56.15 |

And then I am using the IntelliJ IDE from JetBrains.

Download here

I wrote my first Java program 2 days ago. I am doing this with #100DaysOfCode on Twitter to track my progress.

What I have learnt so far:

- Every Java program must have at least one function.

- The functions don’t exist on their own. It must always bel... | https://practicaldev-herokuapp-com.global.ssl.fastly.net/buzzedison/i-started-learning-java-3hme | CC-MAIN-2021-39 | refinedweb | 194 | 70.5 |


Typescript vs Javascript

Table of Contents

If you have ever worked on a web development project, you must have seen what JavaScript ... | https://hackr.io/blog/typescript-vs-javascript | CC-MAIN-2020-40 | refinedweb | 1,007 | 64 |

#include <cyg/io/framebuf.h>

typedef struct cyg_fb {

cyg_ucount16 fb_depth;

cyg_ucount16 fb_format;

cyg_uint32 fb_flags0;

…

} cyg_fb;

extern const cyg_uint8 cyg_fb_palette_ega[16 * 3];

extern const cyg_uint8 cyg_fb_palette_vga[256 * 3];

#define CYG_FB_DEFAULT_PALETTE_BLACK 0x00

#define CYG_FB_DEFAULT_PALETTE_BLUE 0x01

... | http://www.ecoscentric.com/ecospro/doc/html/ref/framebuf-colour.html | crawl-003 | refinedweb | 1,806 | 50.16 |

[HELP] Update View During Button Action

    def changeBackground(sender):
        z = sender.superview
        x = 1
        while x <= 10:
            button = 'button' + str(x)
            z[button].background_color = (0, 0, 0)
            x += 1
            time.sleep(1)

In my mind, this code (assuming that this gets called by pressing a button) should change the background color of 'button1', 'button2', a... | https://forum.omz-software.com/topic/1437/help-update-view-during-button-action | CC-MAIN-2021-31 | refinedweb | 970 | 67.55 |

nathan.f77 (Nathan Broadbent)

- Registered on: 10/28/2012

- Last connection: 07/15/2014

Issues

Activity

07/16/2014

- 08:54 PM Ruby trunk Feature #10040: `%d` and `%t` shorthands for `Date.new(*args)` and `Time.new(*args)`

- > Nathan, Date is only available in stdlib (maybe it should be moved to core) so I don't think t... | https://bugs.ruby-lang.org/users/6274 | CC-MAIN-2018-13 | refinedweb | 375 | 65.32 |

I recently got to work outside my comfort zone: rather than scripting in D, I was to use Python. It has been a long time since I programmed in Python; I've developed a style, and Python does not follow C's syntactic choices. Thus I had to search for how to solve problems I already know how to solve in D. I thought it would make for a... | https://he-the-great.livejournal.com/62199.html | CC-MAIN-2020-05 | refinedweb | 226 | 73.88 |

I am using circle detection. The system works for a bit, then disconnects itself, and ends by opening its internal flash drive with the blue LED flashing.

Please advise.

Tom

Find Circles Example

This example shows off how to find circles in the image using the Hough

Transform. Circle Hough Transform - Wikipedia

Note that the find_... | https://forums.openmv.io/t/openmv-camera-works-then-quits/1791 | CC-MAIN-2021-17 | refinedweb | 246 | 51.65 |

C; implementations of C must conform to the standard. An “implementation” is basically the combination of the compiler, and the hardware platform. As you will see, the C standard usually does not specify exact sizes for the native types, preferring to set a basic minimum that all conforming implementations of C must me... | http://www.exforsys.com/tutorials/c-language/c-programming-language-data-types.html | CC-MAIN-2019-35 | refinedweb | 3,463 | 59.84 |

- Part 1 - A Quirk With Implicit vs Explicit Interfaces

- Part 2 - What is an OpCode? (this post)

- Part 3 - Conditionals and Loops

In my last post we saw the differences between interface implementations in the IL that is generated, but as a refresher, it looks like this:

And the code that created it looked like this... | https://www.aaron-powell.com/posts/2019-09-17-what-is-an-opcode/ | CC-MAIN-2019-43 | refinedweb | 1,235 | 65.46 |

Coroutines¶

Coroutines are the recommended way to write asynchronous code in Tornado. Coroutines use the Python yield keyword [...] (functions using the async and await keywords are also called “native coroutines”). Starting in Tornado 4.3, you can use them in place of most yield-based coroutines (see the following paragraphs for limitations).

... | http://www.tornadoweb.org/en/branch4.5/guide/coroutines.html | CC-MAIN-2018-13 | refinedweb | 392 | 59.5 |
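The Tornado row above mentions "native coroutines". As a rough illustration only, the sketch below shows that style with the standard-library asyncio module rather than Tornado itself (a deliberate substitution so the example needs no third-party package). The names `fetch_length`, `total_length`, and `run_example` are hypothetical and do not come from the Tornado documentation.

```python
import asyncio


async def fetch_length(text: str) -> int:
    # Hypothetical stand-in for real I/O: hand control back to the event loop once.
    await asyncio.sleep(0)
    return len(text)


async def total_length(items):
    # Each `await` suspends this coroutine until the awaited coroutine finishes.
    total = 0
    for item in items:
        total += await fetch_length(item)
    return total


def run_example():
    # asyncio.run() creates an event loop, runs the coroutine, and closes the loop.
    return asyncio.run(total_length(["tornado", "yield", "await"]))
```

Calling `run_example()` drives the whole chain from ordinary synchronous code; inside a Tornado application the event loop would presumably already be running, so the coroutine would be awaited instead.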

#include <wx/sysopt.h>:

The compile-time option to include or exclude this functionality is wxUSE_SYSTEM_OPTIONS.

Default constructor.

You don't need to create an instance of wxSystemOptions since all of its functions are static.

Gets an option.

The function is case-insensitive to name. Returns empty string if the opti... | https://docs.wxwidgets.org/3.1.5/classwx_system_options.html | CC-MAIN-2021-31 | refinedweb | 160 | 70.7 |

Although JavaScript supports a data type we call an object, it does not have a formal notion of a class. This makes it quite different from classic object-oriented languages such as C++ and Java. The common conception about object-oriented programming languages is that they are strongly typed and support class-based in... | https://docstore.mik.ua/orelly/webprog/jscript/ch08_05.htm | CC-MAIN-2019-18 | refinedweb | 2,277 | 56.05 |


This is the homepage for EARL, the Evaluation And Report Language being developed by the W3C WAI ER Group.

We expect that EARL will be used more widely as part of the new QA effort at W3C, as the base format for storing results of test runs. EARL is also related to the W3C's Semantic Web Activity.

Thi... | http://www.w3.org/2001/03/earl/ | crawl-002 | refinedweb | 1,029 | 64.2 |

Festival and special event management


IMPACT OF DLF IPL ON THE SOUTH AFRICAN COMMUNITY AND INDIAN ECONOMY

INTRODUCTION

There exists no such event whose effects can be annulle... | https://www.ukessays.com/essays/business/festival-and-special-event-management.php | CC-MAIN-2017-17 | refinedweb | 2,819 | 62.88 |

This is a playground to test code. It runs a full Node.js environment and already has all of npm’s 400,000 packages pre-installed, including seethru with all npm packages installed. Try it out:

require() any package directly from npm

await any promise instead of using callbacks (example)

This service is provided by RunKi... | https://npm.runkit.com/seethru | CC-MAIN-2020-10 | refinedweb | 1,836 | 62.38 |

56903/how-to-assign-a-groovy-variable-to-a-shell-variable

I have the following code snippet in my Declarative Jenkins pipeline wherein I am trying to assign a groovy array variable 'test_id' to a shell array variable 't_id'.

script{

def test_id

sh """

t_id=($(bash -c \" source ./get_test_ids.sh ${BITBUCKET_COMMON_CREDS... | https://www.edureka.co/community/56903/how-to-assign-a-groovy-variable-to-a-shell-variable | CC-MAIN-2020-29 | refinedweb | 438 | 64.71 |

JQuery - Multiple versions

Chetanya Jain, Apr 25, 2012 10:21 PM

Hi,

CQ5.4 uses jQuery version 1.4.4, and the latest version available is 1.7.2. For some reasons we would like to use version 1.7.2 of jQuery, but it's clashing with the OOB jQuery version.

I would like to know:

- How do we use jQuery latest pack with CQ5... | https://forums.adobe.com/message/4794538 | CC-MAIN-2019-04 | refinedweb | 1,790 | 74.9 |

Extras/SteeringCommittee/Meeting-20061130

From FedoraProject

(10:00:05 AM) thl has changed the topic to: FESCo meeting -- Meeting rules at [WWW] -- Init process

(10:00:11 AM) thl: FESCo meeting ping -- abadger1999, awjb, bpepple, c4chris, dgilmore, jeremy, jwb, rdieter, spot, scop, thl, tibbs, warren

(10:00:14 AM) thl:... | http://fedoraproject.org/wiki/Extras/SteeringCommittee/Meeting-20061130 | CC-MAIN-2014-35 | refinedweb | 7,125 | 77.16 |

Class System relative to Non-Component Creation


Is the new class system only for UI type Components or should it be used anytime the "new" keyword would be used; for example in creating a new Model, Store, etc. would it be advisable to use "Ext.define" instead of the "new... | http://www.sencha.com/forum/showthread.php?124542-Class-System-relative-to-Non-Component-Creation | CC-MAIN-2013-48 | refinedweb | 219 | 57.98 |

The CLR has two different techniques for implementing interfaces. These two techniques are exposed with distinct syntax in C#:

interface I { void m(); }

class C : I {

public virtual void m() {} // implicit contract matching

}

class D : I {

void I.m() {} // explicit contract matching

}

At first glance, it may seem like ... | https://blogs.msdn.microsoft.com/cbrumme/2003/05/03/interface-layout/ | CC-MAIN-2018-05 | refinedweb | 1,583 | 65.01 |

Variables are not automatically given an initial value by the system, and start with whatever garbage is left in memory when they are allocated. Pointers have the potential to cause substantial damage when they are used without a valid address. For this reason it's important to initialize them.

NULL.

The standard initia... | http://www.fredosaurus.com/notes-cpp/pointer-ref/50nullpointer.html | CC-MAIN-2016-22 | refinedweb | 136 | 57.98 |

You can configure Shield to use Public Key Infrastructure (PKI) certificates to authenticate users. This requires clients to present X.509 certificates. To use PKI, you configure a PKI realm, enable client authentication on the desired network layers (transport or http), and map the DNs from the user certificates to Sh... | https://www.elastic.co/guide/en/shield/current/pki-realm.html | CC-MAIN-2020-45 | refinedweb | 371 | 57.16 |

With a GLib implementation of the Python asyncio event loop, I can easily mix asyncio code with GLib/GTK code in the same thread. The next step is to see whether we can use this to make any APIs more convenient to use. A good candidate is APIs that make use of GAsyncResult.

These APIs generally consist of one function ... | https://blogs.gnome.org/jamesh/2019/10/07/gasyncresult-with-python-asyncio/ | CC-MAIN-2021-17 | refinedweb | 453 | 56.86 |
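The row above talks about making callback-based asynchronous APIs easier to use from asyncio. A minimal sketch of the general idea, using only the standard library and no GLib at all: a callback-style call is bridged into a Future that a coroutine can await. `start_operation` is a hypothetical stand-in for a `*_async`-style function, not a real GLib or PyGObject API, and the doubling it performs is arbitrary.

```python
import asyncio


def start_operation(value, callback):
    # Hypothetical callback-style API: arranges for `callback(result)` to be
    # invoked later by the running event loop.
    loop = asyncio.get_running_loop()
    loop.call_soon(callback, value * 2)


async def operation(value):
    # Bridge the callback into a Future so that callers can simply await it.
    loop = asyncio.get_running_loop()
    future = loop.create_future()
    start_operation(value, future.set_result)
    return await future


def run_example():
    return asyncio.run(operation(21))
```

A real wrapper would presumably also need to forward errors (for example through `future.set_exception`) and handle cancellation, which this sketch leaves out.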


The typical web form consists of controls (like labels, buttons, and data grids), and programming logic. In ASP.NET 2.0, there are two approaches to managing these control and code pieces: the single-file page model and the code-behind page model. Regardless of which model you choose, it’s important t... | http://www.odetocode.com/Articles/406.aspx | crawl-002 | refinedweb | 1,466 | 65.52 |

# Unicorns break into RTS: analyzing the OpenRA source code

This article is about the check of the OpenRA project using the static PVS-Studio analyzer. What is OpenRA? It is an open source game e... | https://habr.com/ru/post/514964/ | null | null | 4,384 | 52.36 |

As a web developer, you will encounter situations that call for an effective token replacement scheme. Token replacement is really just a technologically-savvy way of saying string replacement, and involves substituting a "real" value for a "token" value in a string. This article presents a powerful approach to token r... | https://www.simple-talk.com/dotnet/asp.net/token-replacement-in-asp.net/ | CC-MAIN-2014-15 | refinedweb | 2,667 | 54.63 |
