text stringlengths 454 608k | url stringlengths 17 896 | dump stringclasses 91

values | source stringclasses 1

value | word_count int64 101 114k | flesch_reading_ease float64 50 104 |

|---|---|---|---|---|---|

I am writing a command line interface to a Ruby gem and I have this method exit_error, which acts as an exit error point to all validations performed while processing.


def self.exit_error(code,possibilities=[])
  puts @errormsgs[code].colorize(:light_red)
  if not possibilities.empty? then
    puts "It should be:"
    possibilities.each{ |p| puts " #{p}".colorize(:light_green) }
  end
  exit code
end

where @errormsgs is a hash whose keys are the error codes and whose values are the corresponding error messages.


This way I may give users customized error messages writing validations like:

exit_error(101,@commands) if not valid_command? command

where:

@errormsgs[101] => "Invalid command."

@commands = [ :create, :remove, :list ]

and the user typing a wrong command would receive an error message like:

Invalid command.

It should be:

create

remove

list

At the same time, this way my bash scripts can detect exactly which error code caused the exit condition, and this is very important to my gem.

Everything is working fine with this method and this strategy as a whole. But I must confess that I wrote all this without writing tests first. I know, I know... Shame on me!

Now that I am done with the gem, I want to improve my code coverage rate. Everything else was done by the book, writing tests first and code after tests. So, it would be great having tests for these error conditions too.

It happens that I really don't know how to write RSpec tests for this particular situation, where I use exit to interrupt processing. Any suggestions?


Update => This gem is part of a "programming environment" full of bash scripts. Some of these scripts need to know exactly the error condition which interrupted the execution of a command to act accordingly.

For example:

class MyClass
  def self.exit_error(code,possibilities=[])
    puts @errormsgs[code].colorize(:light_red)
    if not possibilities.empty? then
      puts "It should be:"
      possibilities.each{ |p| puts " #{p}".colorize(:light_green) }
    end
    exit code
  end
end

You could write its rspec to be something like this:

describe 'exit_error' do
  let(:errormsgs) { { 101 => "Invalid command." } }
  let(:commands) { [ :create, :remove, :list ] }

  context 'exit with success' do
    before(:each) do
      MyClass.errormsgs = errormsgs # example, assuming that you can set @errormsgs on the class
      # stub exit so that the spec process itself is not terminated
      allow(MyClass).to receive(:exit).with(101).and_return(true)
    end

    it 'should print commands of failures' do
      # exit_error colorizes its output, so the expected strings are colorized too
      expect(MyClass).to receive(:puts).with(errormsgs[101].colorize(:light_red))
      expect(MyClass).to receive(:puts).with("It should be:")
      expect(MyClass).to receive(:puts).with(" create".colorize(:light_green))
      expect(MyClass).to receive(:puts).with(" remove".colorize(:light_green))
      expect(MyClass).to receive(:puts).with(" list".colorize(:light_green))
      MyClass.exit_error(101, commands)
    end
  end

  context 'exit with failure' do
    before(:each) do
      MyClass.errormsgs = {} # example, assuming that you can set @errormsgs on the class
      allow(MyClass).to receive(:exit).with(:some_code).and_return(false)
    end

    # follow the same approach as above for a failure
  end
end

Of course this is an initial premise for your specs and might not just work if you copy and paste the code. You will have to do a bit of a reading and refactoring in order to get green signals from rspec. | http://jakzaprogramowac.pl/pytanie/59268,writing-a-rspec-test-for-an-error-condition-finished-with-exit | CC-MAIN-2017-43 | refinedweb | 515 | 51.24 |

In this section we swap two variables without using a third variable. We use the nextInt() method of the Scanner class to read the integer values from the command prompt. Instead of a temporary variable, we use arithmetic. First we add both numbers and store the sum in num1. Then we subtract num2 from num1 and store the result in num2, which now holds the original value of num1. Finally we subtract num2 from num1 again and store the result in num1, which now holds the original value of num2. The values have now been interchanged, and the println() method displays the swapped values on the command prompt.

Here is the code:

import java.util.*;

class Swapping {
    public static void main(String[] args) {
        Scanner input = new Scanner(System.in);
        System.out.println("Enter Number 1: ");
        int num1 = input.nextInt();
        System.out.println("Enter Number 2: ");
        int num2 = input.nextInt();
        num1 = num1 + num2;
        num2 = num1 - num2;
        num1 = num1 - num2;
        System.out.println("After swapping, num1= " + num1 + " and num2= " + num2);
    }
}

Output: | http://www.roseindia.net/tutorial/java/core/swapnumbers.html | CC-MAIN-2014-10 | refinedweb | 172 | 67.76 |

Code style and conventions

Be consistent!

Look at the surrounding code, or a similar part of the project, and try to do the same thing. If you think the other code has actively bad style, fix it (in a separate commit).

When in doubt, ask in chat.zulip.org.

Lint tools

You can run them all at once with

./tools/lint

You can set this up as a local Git commit hook with

tools/setup-git-repo

The Vagrant setup process runs this for you.

lint runs many lint checks in parallel, including

Secrets

Please don’t put any passwords, secret access keys, etc. inline in the code. Instead, use the get_secret function in zproject/settings.py to read secrets from /etc/zulip/secrets.conf.

Dangerous constructs

Misuse of database queries

Look out for Django code like this:

[Foo.objects.get(id=bar.x.id) for bar in Bar.objects.filter(...) if bar.baz < 7]

This will make one database query for each Bar, which is slow in production (but not in local testing!). Instead of a list comprehension, write a single query using Django’s QuerySet API.

If you can’t rewrite it as a single query, that’s a sign that something is wrong with the database schema. So don’t defer this optimization when performing schema changes, or else you may later find that it’s impossible.

UserProfile.objects.get() / Client.objects.get / etc.

In our Django code, never do direct UserProfile.objects.get(email=foo) database queries. Instead always use get_user_profile_by_{email,id}.

There are 3 reasons for this:

- It’s guaranteed to correctly do a case-insensitive lookup

- It fetches the user object from remote cache, which is faster

- It always fetches a UserProfile object which has been queried using .select_related(), and thus will perform well when one later accesses related models like the Realm.

Similarly we have get_client and get_stream functions to fetch those commonly accessed objects via remote cache.

Using Django model objects as keys in sets/dicts

Don’t use Django model objects as keys in sets/dictionaries – you will get unexpected behavior when dealing with objects obtained from different database queries:

For example, UserProfile.objects.only("id").get(id=17) in set([UserProfile.objects.get(id=17)]) is False.

You should work with the IDs instead.

user_profile.save()

You should always pass the update_fields keyword argument to .save() when modifying an existing Django model object. By default, .save() will overwrite every column of the row, which results in lots of race conditions where unrelated changes made by one thread can be accidentally overwritten by another thread that fetched its UserProfile object before the first thread wrote out its change.

Using raw saves to update important model objects

In most cases, we already have a function in zerver/lib/actions.py with a name like do_activate_user that will correctly handle lookups, caching, and notifying running browsers via the event system about your change. So please check whether such a function exists before writing new code to modify a model object, since your new code has a good chance of getting at least one of these things wrong.

Naive datetime objects

Python allows datetime objects to not have an associated timezone, which can cause time-related bugs that are hard to catch with a test suite, or bugs that only show up during daylight saving time.

Good ways to make timezone-aware datetimes are below. We import the timezone functions as from django.utils.timezone import now as timezone_now and from django.utils.timezone import utc as timezone_utc. When Django is not available, timezone_utc should be replaced with pytz.utc below.

- timezone_now() when Django is available, such as in zerver/.

- datetime.now(tz=pytz.utc) when Django is not available, such as for bots and scripts.

- datetime.fromtimestamp(timestamp, tz=timezone_utc) if creating a datetime from a timestamp. This is also available as zerver.lib.timestamp.timestamp_to_datetime.

- datetime.strptime(date_string, format).replace(tzinfo=timezone_utc) if creating a datetime from a formatted string that is in UTC.

Idioms that result in timezone-naive datetimes, and should be avoided, are datetime.now() and datetime.fromtimestamp(timestamp) without a tz parameter, datetime.utcnow() and datetime.utcfromtimestamp(), and datetime.strptime(date_string, format) without replacing the tzinfo at the end.

Additional notes:

- Especially in scripts and puppet configuration where Django is not available, using time.time() to get timestamps can be cleaner than dealing with datetimes.

- All datetimes on the backend should be in UTC, unless there is a good reason to do otherwise.
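The difference between naive and aware datetimes can be seen in a small sketch. It is only an illustration: it uses the standard library's datetime.timezone.utc in place of timezone_utc or pytz.utc so that it runs without Django or pytz, and the helper names aware_now, aware_from_timestamp and aware_from_string are made up for this example.

```python
from datetime import datetime, timezone


def aware_now():
    """Return the current time as a timezone-aware UTC datetime."""
    return datetime.now(tz=timezone.utc)


def aware_from_timestamp(timestamp):
    """Build a timezone-aware UTC datetime from a Unix timestamp."""
    return datetime.fromtimestamp(timestamp, tz=timezone.utc)


def aware_from_string(date_string, fmt):
    """Parse a string that is known to be in UTC and attach the UTC tzinfo."""
    return datetime.strptime(date_string, fmt).replace(tzinfo=timezone.utc)


# strptime alone yields a naive datetime: no tzinfo is attached.
naive = datetime.strptime("2017-01-01 12:00", "%Y-%m-%d %H:%M")
aware = aware_from_string("2017-01-01 12:00", "%Y-%m-%d %H:%M")

print(naive.tzinfo is None)
print(aware.tzinfo is timezone.utc)

# Ordering a naive datetime against an aware one raises TypeError,
# which is one way such bugs surface at runtime.
try:
    naive < aware
except TypeError as error:
    print("cannot compare:", error)
```

Mixing the two kinds fails loudly only for ordering comparisons and arithmetic; a naive value that is silently interpreted in the wrong timezone raises nothing, which is why the guideline asks for aware datetimes everywhere.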

x.attr('zid') vs. rows.id(x)

Our message row DOM elements have a custom attribute zid which contains the numerical message ID. Don’t access this directly as x.attr('zid')! The result will be a string and comparisons (e.g. with <=) will give the wrong result, occasionally, just enough to make a bug that’s impossible to track down.

You should instead use the id function from the rows module, as in rows.id(x). This returns a number. Even in cases where you do want a string, use the id function, as it will simplify future code changes. In most contexts in JavaScript where a string is needed, you can pass a number without any explicit conversion.

JavaScript var

Always declare JavaScript variables using var. JavaScript has function scope only, not block scope. This means that a var declaration inside a for or if acts the same as a var declaration at the beginning of the surrounding function. To avoid confusion, declare all variables at the top of a function.

JS array/object manipulation

For generic functions that operate on arrays or JavaScript objects, you should generally use Underscore. We used to use jQuery’s utility functions, but the Underscore equivalents are more consistent, better-behaved and offer more choices.

A quick conversion table:

$.each → _.each (parameters to the callback reversed)
$.inArray → _.indexOf (parameters reversed)
$.grep → _.filter
$.map → _.map
$.extend → _.extend

There’s a subtle difference in the case of _.extend; it will replace attributes with undefined, whereas jQuery won’t:

$.extend({foo: 2}, {foo: undefined}); // yields {foo: 2}, BUT...
_.extend({foo: 2}, {foo: undefined}); // yields {foo: undefined}!

Also, _.each does not let you break out of the iteration early by returning false, the way jQuery’s version does. If you’re doing this, you probably want _.find, _.every, or _.any, rather than ‘each’.

Some Underscore functions have multiple names. You should always use the canonical name (given in large print in the Underscore documentation), with the exception of _.any, which we prefer over the less clear ‘some’.

More arbitrary style things

Line length

We have an absolute hard limit on line length only for some files, but we should still avoid extremely long lines. A general guideline is: refactor stuff to get it under 85 characters, unless that makes the code a lot uglier, in which case it’s fine to go up to 120 or so.

JavaScript

When calling a function with an anonymous function as an argument, use this style:

my_function('foo', function (data) {
    var x = ...;
    // ...
});

The inner function body is indented one level from the outer function call. The closing brace for the inner function and the closing parenthesis for the outer call are together on the same line. This style isn’t necessarily appropriate for calls with multiple anonymous functions or other arguments following them.

Combine adjacent on-ready functions, if they are logically related.

The best way to build complicated DOM elements is a Mustache template like static/templates/message_reactions.handlebars. For simpler things you can use jQuery DOM building APIs like so:

var new_tr = $('<tr />').attr('id', object.id);

Passing a HTML string to jQuery is fine for simple hardcoded things that don’t need internationalization:

foo.append('<p id="selected">/</p>');

but avoid programmatically building complicated strings.

We used to favor attaching behaviors in templates like so:

<p onclick="select_zerver({{id}})">

but there are some reasons to prefer attaching events using jQuery code:

- Potential huge performance gains by using delegated events where possible

- When calling a function from an onclick attribute, this is not bound to the element like you might think

- jQuery does event normalization

Either way, avoid complicated JavaScript code inside HTML attributes; call a helper function instead.

HTML / CSS

Avoid using the style= attribute unless the styling is actually dynamic. Instead, define logical classes and put your styles in external CSS files such as zulip.css.

Don’t use the tag name in a selector unless you have to. In other words, use .foo instead of span.foo. We shouldn’t have to care if the tag type changes in the future.

Python

Don’t put a shebang line on a Python file unless it’s meaningful to run it as a script. (Some libraries can also be run as scripts, e.g. to run a test suite.)

Scripts should be executed directly (./script.py), so that the interpreter is implicitly found from the shebang line, rather than explicitly overridden (python script.py).

Put all imports together at the top of the file, absent a compelling reason to do otherwise.

Unpacking sequences doesn’t require list brackets:

[x, y] = xs # unnecessary
x, y = xs # better

For string formatting, use x % (y,) rather than x % y, to avoid ambiguity if y happens to be a tuple.
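The ambiguity in the string formatting rule is easy to reproduce. The sketch below is illustrative only; the function names format_value_unsafe and format_value_safe are invented for the example and are not part of the codebase.

```python
def format_value_unsafe(y):
    # If y is a tuple, % treats it as the full argument list,
    # not as a single value to be formatted.
    return "value: %s" % y


def format_value_safe(y):
    # Wrapping y in a one-element tuple makes it exactly one argument,
    # whatever its type.
    return "value: %s" % (y,)


print(format_value_safe("a"))
print(format_value_safe((1, 2)))

# A one-element tuple is silently unpacked by the unsafe form...
print(format_value_unsafe((1,)))
print(format_value_safe((1,)))

# ...and a longer tuple makes it fail outright.
try:
    format_value_unsafe((1, 2))
except TypeError as error:
    print("unsafe form failed:", error)
```

The silent case is the more dangerous one: a one-element tuple formats without error but produces different text from what the safe form gives.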

Third party code

See our docs on dependencies for discussion of rules about integrating third-party projects. | https://zulip.readthedocs.io/en/stable/contributing/code-style.html | CC-MAIN-2018-51 | refinedweb | 1,580 | 57.67 |

PHP 5.4 and Zend Framework 2.0 Gearing up for Release. In PHP 5.4, developers will also be able to turn the

MB string on and off, so the multibyte support will be available without having to recompile PHP. According to Gutmans, that will provide a significant advantage to companies that want to have a common.

"With PHP 5.3, if you want to take advantage of the namespace support, it essentially mandated a rewrite, or at least substantial changes," Zeev Suraski, CTO of Zend told InternetNews.com. "PHP 5.4 will be more evolutionary than PHP 5.3, so it won't mandate a rewrite of code."

Zend Framework 2.0

The Zend Framework, which currently is in beta, is also set for a 2.0 release in 2012. Gutmans noted that his team has worked to make the Zend Framework (ZF) 2.0 release faster and more extensible.

According to Suraski, the main focus of Zend Framework 2.0 is to take full advantage of PHP 5.3 as well as making it easier to use.

"Developing applications in ZF 2.0 will be significantly easier than ZF 1.0," Suraski said. "You can create an application with just a few lines of code in a really elegant way."

Suraski added that Zend Framework in general is something that will help PHP developers to build more secure applications as well.

"Generally speaking, if you take advantage of Zend Framework, then a lot of the common issues that you get in applications just go away," Suraski said. "For example, if you use the database component instead of creating your own queries, then SQL injection goes away."

Sean Michael Kerner is a senior editor at InternetNews.com, the news service of Internet.com, the network for technology professionals.


| http://www.developer.com/lang/php/php-5-4-and-zend-framework-2-0-gearing-up-for-release.html | CC-MAIN-2014-42 | refinedweb | 303 | 69.58 |

Don’t forget to support the lib by giving a ⭐️

How to install

CocoaPods

SwifCron is available through CocoaPods

To install it, simply add the following line in your Podfile:

pod 'SwifCron', '~> 1.3.0'

Swift Package Manager

.package(url: "", from:"1.3.0")

In your target’s dependencies add

"SwifCron" e.g. like this:

.target(name: "App", dependencies: ["SwifCron"]),

Usage

import Foundation
import SwifCron

do {
    let cron = try SwifCron("* * * * *")

    // for getting next date related to current date
    let nextDate = try cron.next()

    // for getting next date related to custom date
    let nextDateFromCustomDate = try cron.next(from: Date())
} catch {
    print(error)
}

Limitations

I use CrontabGuru as a reference

So you could parse any expression which consists of digits and the symbols * , / and -

Contributing

Please feel free to contribute!

ToDo

- write more tests

- support literal names of months and days of week in expression

- support non-standard digits like 7 for Sunday in day of week part of expression

Latest podspec

{
  "name": "SwifCron",
  "version": "1.3.0",
  "summary": "⏱ An awesome and simple pure swift cron expressions parser and scheduler",
  "description": "With this lib you will be able to easily parse cron strings and get next launch dates",
  "homepage": "",
  "license": { "type": "MIT", "file": "LICENSE" },
  "authors": { "MihaelIsaev": "[email protected]" },
  "source": { "git": "", "tag": "1.3.0" },
  "platforms": { "ios": "8.0" },
  "source_files": "Sources/SwifCron/**/*",
  "swift_version": "4.2"
}

Thu, 07 Mar 2019 11:02:06 +0000 | https://tryexcept.com/articles/cocoapod/swifcron | CC-MAIN-2019-13 | refinedweb | 227 | 61.16 |

On Mon, 2016-11-07 at 18:06 +0100, Petr Vobornik wrote:
> On 11/07/2016 05:49 PM, Martin Babinsky wrote:
> > On 11/07/2016 05:43 PM, Justin Mitchell wrote:
> >> I have been working on a python script to setup secure NFS exports using
> >> kerberos that relies heavily on FreeIPA, and is in many ways the server
> >> side compliment to ipa-client-automount. It attempts to automatically
> >> discover the setup, and falls back to asking simple questions, in the
> >> same way as ipa-server-install et al do.
> >>
> >> I'm not sure quite where it would fit best in the freeipa source tree,
> >> perhaps under 'client' ?
> >> Also, whats would be the best way to submit the script, as a patch or a
> >> github pull request ?
> >>
> >> thanks
> >>
> >
> > If it is a server-side code then it should go into ipaserver/ namespace.
> > Could you describe the use case in more details?
>
> IIUIC it's about configuring NFS server against IPA and not IPA server
> itself as NFS server. In that case it should be IMO in client package
> because NFS server is also a client from IPA's perspective.


Yes, it is to configure the NFS server, which is already an IPA client, to provide exports to other IPA clients which may like to use ipa-client-automount

> >
> > We now prefer contributions in form of Github pull-requests.
> Right

Okay thanks, i will set that up. | https://www.mail-archive.com/freeipa-devel@redhat.com/msg36902.html | CC-MAIN-2018-26 | refinedweb | 249 | 67.38 |

Opened 8 years ago

Closed 5 years ago

#15587 closed Bug (duplicate)

django.forms.BaseModelForm._post_clean updates instance even when form validation fails

Description

This method will update the model instance with self.cleaned_data.

self.instance = construct_instance(self, self.instance, opts.fields, opts.exclude)

however, the cleaned_data might not be good. It is probably good to have a single line inserted before the first line of code of this method:

def _post_clean(self):
    if self._errors:
        return
    ...

Change History (7)


comment:3 Changed 7 years ago by

I have the same problem with model forms:

>>> from django.contrib.auth.models import User
>>> from django.contrib.auth.forms import UserChangeForm
>>> user = User.objects.all()[0]
>>> form = UserChangeForm({'first_name': 'Vladimir', 'email': 'invalid'}, instance=user)
>>> user.first_name
u''
>>> form.is_valid()
False
>>> user.first_name
u'Vladimir'

As you can see, this form changes provided instance after validation. This behavior is not expected and I'm considering this as a bug.

Or is this not a bug? Maybe this is a design decision to make it possible to validate form data in the BaseModel.clean_fields method? That seems odd to me.

The single-line patch provided in the ticket description doesn't take into account that a validation error can be raised later by the model validation, so it wouldn't fix this problem.


comment:5 Changed 7 years ago by

The question here is at which point the form is allowed to update the instance. Other tickets are also having problems here for their own reasons (#15995, #16423).

There is a strong argument for doing this while validating since ModelForm actually promises to fully validate the data (form and model). For this the instance needs to be updated.

However, there is case for reminding people that in current workflow validation implies updating the model to independently validate the model; so if anybody can create a patch for the docs explaining this.

Otherwise this will become a DDN and we need to discuss whether a form validation error means the field should not be updated on the model.

I'm afraid this bug report is very unclear - please give an example that shows an actual bug and re-open. Thanks! | https://code.djangoproject.com/ticket/15587 | CC-MAIN-2018-47 | refinedweb | 385 | 56.76 |


Hi,

I know this info may not be helpful.

I met a similar problem, and got stuck on it for 2 days.

Then I just tried another Linux host B, and the problem disappeared.

After that, I reinstalled my Linux image on host A and updated it, and then the problem get solved.

Jun Jun_Zhang:

Could you please share the bootargs with us?

Huang,

Are you able to run 'dhcp' at the u-boot prompt? Does it give you the correct ip address of your target & host?

Are you using a direct connection between your EVM & PC?

Make sure your host's "/etc/exports" has the correct NFS mount listed.

Be sure to disable your host firewall.

Thank You,

Michael Risley

Texas Instruments

Jun Zhang73904

Could you please share the bootargs with us?

hi, Jun

setenv bootargs 'mem=128M console=ttyO0,115200n8 root=/dev/nfs nfsroot=10.2.1.10:/BoardNFS,nolock rw ip=10.2.1.9:10.2.1.10:10.2.1.254:255.255.255.0:dm812x_ipnc:eth0:off'

In reply to Michael Risley:

hi, Michael

i didn't run 'dhcp' at the u-boot prompt, i use static ip only

yes, EVM & PC is direct connection

if i boot board use filesystem in flash, after boot successfully, i can mount host folder to board.

must i boot under 'dhcp' when booting use filesystem by NFS ?

In reply to huang jack:

Hi huang huang

Please ensure your file system is under nfsroot=10.2.1.10:/BoardNFS and was set under nfs server.

I use DM8168, and my EZSDK is 5_02_xx_xx,

My bootcmd is like this "bootcmd=dhcp;setenv serverip 172.16.1.62;tftpboot;bootm", and my board works well.

Good luck.

regards,

lei

In reply to Lei Wong:

hi, Lei

the nfs filesystem is ok, since i can mount it after board boot successfully.

i use static ip not 'dhcp'.

would you try static ip for a test ?

How did you populate the BoardNFS directory? That directory may not be "bootable"...as it may not have all the special files and permissions. It may also not be owned by root. Usually you would untar a rootfs image onto that directory. Since you can mount the NFS directory, you could tar up your target rootfs and untar onto your mounted NFS directory. A plain recursive copy usually doesn't copy special file properly.

Hi huang huang,

Sorry for the late reply. Have you fixed your problem?

I tested my board using static ip, and it failed.

I set it just like this "setenv bootargs 'console=ttyO2,115200n8 rootwait rw mem=256M earlyprink notifyk.vpssm3_sva=0xBF900000 vram=50M ti816xfb.vram=0:16M,1:16M,2:6M root=/dev/nfs nfsroot=172.16.1.62:/home/xiaoxiao/targetfs2 ip=172.16.1.61:172.16.1.62:172.16.1.1:255.255.255.0:dm816x-evm:eth0:off'"

The booting message shows as below.

--------------------------------------------------------------------------------------------------------------------

net eth0: attached PHY driver [Generic PHY] (mii_bus:phy_addr=0:01, id=282f013)

IP-Config: Complete:

device=eth0, addr=172.16.1.61, mask=255.255.255.0, gw=172.16.1.1,

host=dm816x-evm, domain=, nis-domain=(none),

bootserver=172.16.1.62, rootserver=172.16.1.62, rootpath=

PHY: 0:01 - Link is Up - 1000/Full

VFS: Unable to mount root fs via NFS, trying floppy.

VFS: Cannot open root device "nfs")

Backtrace:

[<c0046b44>] (dump_backtrace+0x0/0x110) from [<c0364b48>] (dump_stack+0x18/0x1c)

r7:cc813000 r6:00000000 r5:c002c014 r4:c04d9110

[<c0364b30>] (dump_stack+0x0/0x1c) from [<c0364bac>] (panic+0x60/0x17c)

[<c0364b4c>] (panic+0x0/0x17c) from [<c0009254>] (mount_block_root+0x1e0/0x220)

r3:00000000 r2:00000000 r1:cc82bf58 r0:c041a9dc

[<c0009074>] (mount_block_root+0x0/0x220) from [<c0009340>] (mount_root+0xac/0xc

c)

[<c0009294>] (mount_root+0x0/0xcc) from [<c00094d0>] (prepare_namespace+0x170/0x

1d4)

r4:c04d8a24

[<c0009360>] (prepare_namespace+0x0/0x1d4) from [<c0008784>] (kernel_init+0x114/

0x154)

r5:c0008670 r4:c04d89c0

[<c0008670>] (kernel_init+0x0/0x154) from [<c006aeec>] (do_exit+0x0/0x5e4)

r5:c0008670 r4:00000000

Hi, Lei

Sorry for the late reply, and thanks for your try. board still boot fail when using static IP. I'm waiting for the corresponding release SDK for my board.. | https://e2e.ti.com/support/processors/f/791/t/132915 | CC-MAIN-2019-13 | refinedweb | 685 | 75.81 |

# Lua in Moscow 2019: Interview with Roberto Ierusalimschy

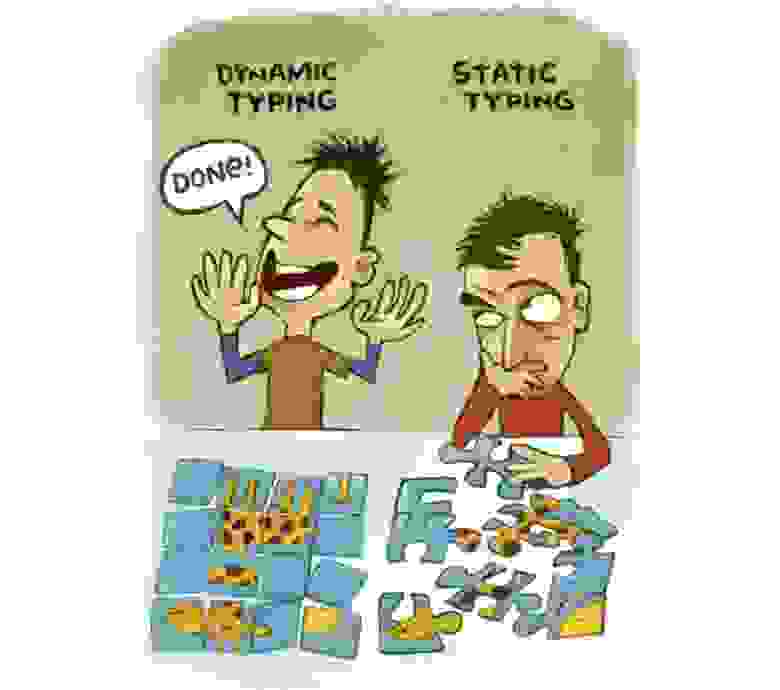

Some time ago, the creator of the Lua programming language, Roberto Ierusalimschy, visited our Moscow office. We asked him some questions, which we had prepared together with Habr.com users. Here, finally, is the full text of that interview.

**— Let’s start with some philosophical matters. Imagine, if you recreated Lua from scratch, which three things would you change in Lua?**

— Wow! That’s a difficult question. There’s so much history embedded in the creation and development of the language. It was not like a big decision at once. There are some regrets, several of which I had a chance to correct over the years. People complain about that all the time because of compatibility. We did it several times. I’m only thinking of small things.

**— Global-by-default? Do you think this is the way?**

— Maybe. But it’s very difficult for dynamic languages. Maybe the solution will be to have no defaults at all, but would be hard to use variables then.

For instance, you would have to somehow declare all the standard libraries. You want a one-liner, `print(sin(x))`, and then you’ll have to declare ‘print’ and also declare ‘sin’. So it’s kinda strange to have declarations for that kind of very short scripts.

Anything larger should have no defaults, I think. Local-by-default is not the solution, it does not exist. It’s only for assignments, not for usage. Something we assign, and then we use and then assign, and there’s some error — completely mystifying.

Maybe global-by-default is not perfect, but for sure local-by-default is not a solution. I think some kind of declaration, maybe optional declaration… We had this proposal a lot of times — some kind of global declaration. But in the end, I think the problem is that people start asking for more and more and we give up.

(sarcastically) Yes, we are going to put some global declaration — add that and that and that, put that out, and in the end we understand the final conclusion will not satisfy most people and we will not put all the options everybody wants, so we don’t put anything. In the end, strict mode is a reasonable compromise.

There is this problem: more often than not we’re using fields inside the modules for instance, then you have the same problems again. It’s just one very specific case of mistakes the general solution should probably include. So I think if you really want that, you should use a statically typed language.

**— Global-by-default is also nice for small configuration files.**

— Yes, exactly, for small scripts and so on.

**— No tradeoffs here?**

— No, there are always tradeoffs. There’s a tradeoff between small scripts and real programs or something like that.

**— So, we’re getting back to the first big question: three things you would change if you had the chance. As I see it, you are quite happy with what we have now, is that right?**

— Well, it’s not a big change, but still… Our bad debt that became a big change is nils in tables. It’s something I really regret. I did that kind of implementation, a kind of hack… Did you see what I did? I sent a version of Lua about six months or a year ago that had nils in tables.

**— Nil values?**

— Exactly. I think it was called nils in tables — what’s called null. We did some hack in the grammar to make it somewhat compatible.

**— Why is it needed?**

— I’m really convinced that this is a whole problem of holes… I think that most problems of nils in arrays would disappear, if we could have [nils in tables]… Because the exact problem is not nils in arrays. People say we can’t have nils in arrays, so we should have arrays separated from tables. But the real problem is that we can’t have nils in tables! So the problem is with the tables, not the way we represent arrays. If we could have nils in tables, then we would have nils in arrays without anything else. So this is something I really regret, and many people don’t understand how things would change if Lua allowed nils in tables.

**— May I tell you a story about Tarantool? We actually have our own implementation of null, which is a CDATA to a null-pointer. We use it where gaps in memory are required. To fill positional arguments when we make remote calls and so on. But we usually suffer from it because CDATA is always converted to ‘true’. So nils in arrays would solve a lot of our problems.**

— Yeah, I know. That’s exactly my point — this would solve a lot of problems for a lot of people, but there’s a big problem of compatibility. We don’t have the energy to release a version that is so incompatible and then break the community and have different documentation for Lua 5 and Lua 6 etc. But maybe one day we’ll release it. But it’s a really big change. I think it should have been like that since the beginning — if it was, it would be a trivial change in the language, except for compatibility. It breaks a lot of programs, in very subtle ways.

**— What are the downsides except for compatibility?**

— Besides compatibility, the downside is that we would need two new operations, two new functions. Like ‘delete key’, because assigning nil would not delete the key, so we would have a kind of primitive operation to delete the key and really remove it from the table. And ‘test’ to check where exactly to distinguish between nil and absent. So we need two primitive functions.

**— Have you analyzed the impact of this on real implementations?**

— Yes, we released a version of Lua with that. And as I said, it breaks code in many subtle ways. There are people who do table.insert(f(x)) — a call to a function. And it’s on purpose, it’s by design that when a function doesn’t want to insert anything, it returns nil. So instead of a separate check «do I want to insert?», then I call a table.insert, and knowing that if it’s nil, it won’t be inserted. As everything in every language, a bug becomes a feature, and people use the feature — but if you change it, you break the code.

**— What about a new void type? Like nil, but void?**

— Oh no, this is a nightmare. You just postpone the problem, if you put another, then you need another and another and another. That’s not the solution. The main problem — well, not main, but one of the problems — is that nil is already ingrained in a lot of places in the language. For instance, a very typical example. We say: you should avoid nils in arrays, holes. But then we have functions that return nil and something after nil, so we get an error code. So that construction itself assumes what nil represents… For instance, if I want to make a list of returns of that function, just to capture all of these returns.

**— That’s why you have a hack for that. :)**

— Exactly, but you shouldn’t have to use hacks for such a primitive and obvious [issue]. But the way the libraries are built… I once thought of that — maybe the libraries should return false instead of nil — but it’s a half-cooked solution, it solves only a small part of the problem. The real problem, as I said, is that we should have nils in tables. If not, maybe we should not use nils as frequently as we do now. It’s all kinda messy. So if you create a void, these functions would still return a nil, and we’d still have this problem unless we create a new type and the functions would return void instead of nil.

**— Void could be used to explicitly tell that the key should be kept in a table — key with a void value. And nil can act as before.**

— Yes, that’s what I mean. All the functions in the libraries should return void or nil.

**— They can still return nil, why not?**

— Because we’d still have the problem that you cannot capture some functions.

**— But there won’t be a first key, only a second key.**

— No, there won’t be a second key, because the counting will be wrong and you’ll have a hole in the array.

**— Yes, so are you saying that you need a false metamethod?**

— Yes. My dream is something like that:

`{f(x)}`

You should capture all returns of the function `f(x)`. And then I can do `%x` or `#x`, and that will give me the number of returns of the function. That’s what a reasonable language should do. So creating a void will not solve that, unless we had a very strong rule that functions should never return nil, but then why do we need nil? Maybe we should avoid it.

**— Roberto, will there be a much stronger static analysis support for Lua? Like «Lua Check on steroids». I know it won’t solve all the problems, of course. You’re saying this is a feature for 6.0, if ever, right? So if in a 5.x there will be a strong static analysis tool — if man-hours and man-years were invested — would it really help?**

— No, I think a really strong static analysis tool is called… type system! If you want a really strong tool you should use a statically typed language, something like Haskell or even something with dependent types. Then you’ll have really strong analysis tools.

**— But then you don’t have Lua.**

— Exactly, Lua is for…

**— Imprecise? I really enjoyed your giraffe picture on static and dynamic types.**

— Yes, my last slide.

*The final slide from Roberto Ierusalimschy's talk «Why (and why not) Lua?» at Lua in Moscow 2019 conference*

**— For our next prepared question, let’s return to that picture. If I got it right, your position is that Lua is a small nice handy tool for solving not very large tasks.**

— No, I think you can do some large tasks, but not with static analysis. I strongly believe in tests. By the way, I disagree with you on coverage, your opinion is we should not chase coverage… I mean, I fully agree that coverage does not imply full test, but non-coverage implies a zero percent test. So I gave a talk about a testing room — you were there in Stockholm. So I started my test with [a few] bugs — that’s the strangest thing — one of them was famous, the other was completely non-famous. It’s something completely broken in a header file from Microsoft, C and C++. So I search the web and nobody cares about it or even noticed it.

For instance, there’s a mathematical function, [modf()](https://en.cppreference.com/w/cpp/numeric/math/modf), where you have to pass a pointer to a double because it returns two doubles. We translate the integer part of the number or the fractional part. So this has been a part of the standard library for a long time now. Then came C99, and you need this function for floats. And the header file from Microsoft simply kept this function and declared another one as a macro. So it got this one into type casts. So it cast the double to float, ok, and then it cast the pointer to float to a pointer to double!

**— Something is wrong in this picture.**

— This is a header file from Visual C++ and Visual C 2007. I mean, if you called this function once, with any parameters, and checked the results — it would be wrong unless it’s zero. Otherwise, any other value will be wrong. You would never ever use this function. Zero coverage. And then there are a lot of discussions about testing… I mean, just call a function once, check the results! So it’s there, it’s been there for a long time, for many years nobody cared. One very famous bug was in Apple. [Something like](https://habr.com/ru/post/213525/) "`if… what… goto… ok`", it was something like that. Someone put another statement here. And then everything was going to ok. And there were a lot of discussions that you should have the rules, the brackets should be mandatory in your style, etc., etc. Nobody mentioned that there are a lot of other ifs here. That has never been executed…
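The "call it once and check the results" test for the float variant of modf can be sketched in a few lines of C. This is only an illustration: the function names `modff_single_call_ok` and `test_modff_once` are made up for this sketch, and the input 3.25 was chosen because both of its parts are exactly representable in binary floating point.

```c
#include <assert.h>
#include <math.h>

/* Returns 1 when a single call to modff() splits 3.25 correctly. */
int modff_single_call_ok(void) {
    float int_part = -1.0f;               /* sentinel that modff must overwrite */
    float frac = modff(3.25f, &int_part); /* C99 float variant of modf */

    /* 3.25 = 3 + 0.25, and both parts are exact in binary floating point,
       so exact comparisons are safe here. */
    return int_part == 3.0f && frac == 0.25f;
}

/* The whole test: one call, one check. */
void test_modff_once(void) {
    assert(modff_single_call_ok());
}
```

Calling `test_modff_once()` from `main` is enough to exercise the function once. On some toolchains the math library has to be linked explicitly, for example with `-lm` when using GCC.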

**— There’s also a security problem as far as I remember.**

— Yes, exactly. Because they were only testing approved cases. They were not testing anything, because everything would be approved. It means there is not a single test case in the security application that checks whether it refuses some connection or whatever it is that it should refuse. So everyone discusses it and says they should have brackets… They should have tests, minimum tests! Because nobody has ever tested that, that’s what I mean by coverage. It’s unbelievable how people don’t do basic tests. Because if they were doing all basic tests, then of course, it’s a nightmare to do all the coverage and execute all the lines, etc. People neglect even basic tests, so coverage is at least about the minimum. It is a way to call the attention to some parts of the program that you forgot about. It is a kind of guide on how to improve your tests a little.
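A minimal sketch of the kind of test being asked for, assuming a hypothetical `check_handshake` function. The name, the error codes and the string comparison are invented for illustration and are not Apple's code. The important part is the test that covers the cases which must be refused.

```c
#include <assert.h>
#include <stddef.h>
#include <string.h>

/* Hypothetical verifier: returns 0 when the signature is accepted and a
   negative error code when it must be refused. */
int check_handshake(const char *expected_sig, const char *received_sig) {
    int err = 0;

    if (expected_sig == NULL || received_sig == NULL) {
        err = -1;
        goto done;
    }
    if (strcmp(expected_sig, received_sig) != 0) {
        err = -2;
        goto done;
    }

done:
    return err;
}

/* Minimal tests: one approved case and, crucially, the refused cases. */
void test_check_handshake(void) {
    assert(check_handshake("abc", "abc") == 0); /* approved */
    assert(check_handshake("abc", "abd") != 0); /* must be refused */
    assert(check_handshake("abc", NULL) != 0);  /* must be refused */
}
```

If a stray extra `goto done;` skipped the comparison, the second assertion would fail immediately, which is exactly the signal that was missing.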

**— What’s test coverage in Tarantool? 83%! Roberto, what’s Lua test coverage?**

— About 99.6. How many lines of code do you have? A million, hundreds of thousands? These are huge numbers. One percent of hundred thousand is a thousand lines of code that were never tested. You did not execute it at all. Your users don’t test anything.

**— So there are like 17 percent of Tarantool features that are not currently used?**

— I’m not sure if you want to unstack everything back to where we were… I think one of the problems with dynamic languages (and static languages for that matter) is that people don’t test stuff. Even if you have a static language, unless you have something — not even like Haskell, but Coq, — some proof system, you change that for that or that. No static analysis tool can catch these errors, so you do need tests. And if you have the tests, you detect global problems, rename misspellings, etc. All these kinds of errors. You should have these tests anyway, maybe sometimes it’s a little bit more difficult to debug, sometimes it’s not — depends on the language and the kind of bug. But the problem is that no static analysis tool can allow you to avoid tests. The tests, on the other hand… well, they never prove the absence of error, but I feel much more secure after all the tests.

**— We have a question about testing Lua modules. As a developer, I want to test some local functions which may be used later. The question is: we want to have a coverage of about 99 percent, but for the API this module produces, the number of functional cases it should produce is much lower than the functionality it supports internally.**

— Why is that, sorry?

**— There is some functionality which is not reachable by the public interface.**

— If there is functionality that is not reachable by the public interface, it shouldn’t be there, just erase it. Erase that code.

**— Just kill it?**

— Yes, sometimes I do that in Lua. There was some code coverage, I couldn’t get there or there or there, so I thought it was impossible and just removed the code. It’s not that common, but happened more than once. Those cases were impossible to happen, you just put an assertion to comment on why it cannot happen. If you cannot get inside your functions from the public API, it shouldn’t be there. We should code the public API with incorrect input, that’s essential for the tests.

**— Remove code, removal is good, it reduces complexity. Reduced complexity increases maintainability and stability. Keep it simple.**

— Yes, extreme programming had this rule. If it’s not in a test, then it doesn’t exist.

**— What languages inspired you when you created Lua? Which paradigms or functional specialties or parts of these languages did you like?**

— I designed Lua for a very specific purpose, it was not an academic project. That’s why when you ask me if I’d create it again, I say there’s lots of historical stuff in the language. I did not start with ‘Let me create the language I want or want to use or everybody needs’, etc. My problem was ‘This program here needs a configuration language for geologists and engineers, and I need to create some small language they could use with an easy interface’. That’s why the API was always an integral part of the language, because it’s easier to be integrated. That was the goal. What I had in my background, it’s a lot of different languages at that time… about ten. If you want all of the background…

**— I was interested in languages that you wanted to include in Lua.**

— I was getting things from many different languages, whatever fitted the problem I had. The single biggest inspiration was the Modula language for syntax, but otherwise, it’s difficult to say because there are so many languages. Some stuff came from AWK, it was another small inspiration. Of course, Scheme and Lisp… I was always fascinated with Lisp since I started programming.

**— And still no macros in Lua!**

— Yes, there is much difference in syntax. Fortran, I think, was the first language… no, the first language I learned was Assembly, then came Fortran. I studied, but never used CLU. I did a lot of programming with Smalltalk, SNOBOL. I also studied, but never used Icon, it’s also very interesting. A lot came from Pascal and C. At the time I created Lua, C++ was already too complex for me — and that was before the templates, etc. It was 1991, and in 1993 Lua was started.

**— The Soviet Union fell and you started creating Lua. :) Were you bored with semicolons and objects when you started working on Lua? I would expect that Lua would have a similar syntax to C, because it is integrated to C. But…**

— Yes, I think it’s a good reason not to have similar syntax — so you don’t mix them, these are two different languages.

It’s something really funny and it’s connected to the answer you didn’t allow me [at the conference] to give on arrays starting at 1. My answer was too long.

When we started Lua, the world was different, not everything was C-like. Java and JavaScript did not exist, Python was in its infancy and had a lower than 1.0 version. So there was not this thing when all the languages are supposed to be C-like. C was just one of many syntaxes around.

And the arrays were exactly the same. It’s very funny that most people don’t realize that. There are good things about zero-based arrays as well as one-based arrays.

The fact is that most popular languages today are zero-based because of C. They were kind of inspired by C. And the funny thing is that C doesn’t have indexing. So you can’t say that C indexes arrays from zero, because there is no indexing operation. C has pointer arithmetic, so zero in C is not an index, it’s an offset. And as an offset, it must be a zero — not because it has better mathematical properties or because it’s more natural, whatever.
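The offset reading can be shown directly in C, where the standard defines `a[i]` as `*(a + i)`. The sketch below uses two illustrative helper names, `element_at_offset` and `offset_of`, which are not from any library.

```c
#include <assert.h>
#include <stddef.h>

/* a[i] is defined as *(a + i): start at the base address, move i elements. */
int element_at_offset(const int *base, size_t offset) {
    assert(base != NULL);
    return *(base + offset);
}

/* Distance, in elements, from the start of the array to p. */
ptrdiff_t offset_of(const int *base, const int *p) {
    return p - base;
}
```

For `int a[3] = {10, 20, 30};`, `element_at_offset(a, 0)` is the same object as `a[0]`, and `offset_of(a, &a[0])` is 0 because the first element sits at no distance from the start of the array.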

And all those languages that copied C, they do have indexes and don’t have pointer arithmetic. Java, JavaScript, etc., etc. — none of them have pointer arithmetic. So they just copied the zero, but it’s a completely different operation. They put zero for no reason at all — it’s like a cargo cult.

**— You’re saying it’s logical if you have a language embedded in C to make it with C-like syntax. But if you have a C-embedded language, I assume you have C programmers who want the code to be in C and not some other language, which looks like C, but isn’t C. So Lua users were never supposed to use C daily? Why?**

— Who uses C every day?

**— System programmers.**

— Exactly. That’s the problem, too many people use C, but should not be allowed to use it. Programmers ought to be certified to use C. Why is software so broken? All those hacks invading the world, all those security problems. At least half of them are because of C. It is really hard to program in C.

**— But Lua is in C.**

— Yes, and that’s how we learned how hard it is to program in C. You have buffer overflows, you have integer overflows that cause buffer overflows… Just get a single C program that you can be sure that no arithmetic goes wrong if people put any number anywhere and everything is checked. Then again, real portability issues — maybe sometimes in one CPU it works, but then it gets to the other CPU… It’s crazy.

For instance, very recently we had a problem. How do you know your C program does not do stack overflow? I mean stack depth, not stack overflow because you invaded… How many calls you have a right to do in a C program?

**— Depends on a stack size.**

— Exactly. What does the standard say about that? If you code in C and then you do this function that calls this function that calls this function… how many calls can you do?

**— 16 thousand?**

— I may be wrong, but I think the standard says nothing about that.

**— I think there is nothing in the standard because it’s too dependent on the size.**

— Of course, it depends on the size of each function. It may be huge, automatic arrays in the function frame… So the standard says nothing and there is no way to check whether a call will be valid. So you may have a single problem if you have three step calls, it can crash and still be a valid C program. Correct according to the standard — though it’s not correct because it crashes. So it’s very hard to program in C, because there are so many… Another good example: what is the result when you subtract two pointers? No one here works with C?

**— No, so don’t grill them. But C++ supports different types.**

— No, C++ has the same problem.

**— What’s the type of declaration? `ptrdiff_t`?**

— Exactly, `ptrdiff_t` is a signed type. So typically, if you have a standard memory the size of your word and you subtract two pointers in this space, you cannot represent all the sizes in the signed type. So, what does the standard say about that?

When you subtract two pointers, if the answer fits in a pointer diff, then that is the answer. Otherwise, you have undefined behavior. And how do you know if it fits? You don’t. So whenever you subtract two pointers, usually you know that’s out of standard, that if you’re pointing to anything larger than at least 2 bytes, then the larger size would be half the size of the memory, so everything is ok.

So you’re only having a problem if you’re pointing to bytes or characters. But when you do that, you have a real problem, you can’t do pointer arithmetic without worrying that you have a string larger than half of the memory. And then I can’t just compute the size and store in a pointer diff type because it’s wrong.
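One defensive pattern, sketched here under the assumption that the length of the buffer is already known as a `size_t`, is to check that the length fits in `ptrdiff_t` before relying on any pointer difference. The helper names `fits_in_ptrdiff` and `checked_byte_distance` are hypothetical. The sketch also assumes a typical platform where `size_t` and `ptrdiff_t` have the same width, so that `SIZE_MAX` is larger than `PTRDIFF_MAX`.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

/* Returns 1 when a byte count can be stored in ptrdiff_t without overflow. */
int fits_in_ptrdiff(size_t nbytes) {
    return nbytes <= (size_t)PTRDIFF_MAX;
}

/* Converts a known buffer length to a pointer difference.
   Returns 1 and stores the value in *out when it fits; returns 0 and
   leaves *out untouched when it does not. */
int checked_byte_distance(size_t nbytes, ptrdiff_t *out) {
    assert(out != NULL);
    if (!fits_in_ptrdiff(nbytes))
        return 0;
    *out = (ptrdiff_t)nbytes;
    return 1;
}
```

The check has to happen on the `size_t` length, because once `end - begin` has been evaluated for a span that is too large, the behavior is already undefined.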

That’s what I mean about having a secure C or C++ program that’s really safe.

**— Have you considered implementing Lua in a different language? Change it from C to something else?**

— When we started, I considered C++, but as I said I gave up using it because of complexity — I cannot learn the whole language. It should be useful to have some stuff from C++ but… even today I don’t see any language that would do.

**— Can you explain why?**

— Because I have no alternatives. I can only explain why against other languages. I’m not saying C is perfect or even good, but it’s the best. To explain why, I need to compare it with other languages.

**— What about JVM?**

— Oh, JVM. Come on, it doesn’t fit in half the hardware… Portability is the main reason, but performance too. In JVM it’s a little better than .NET, but it’s not that different. A lot of things that Lua does we can’t do with JVM. You cannot control the garbage collector for instance. You have to use JVM garbage collector because you can’t have a different garbage collector implemented on top of JVM. JVM is also a huge consumer of memory. When any Java program starts to say hello, it’s like 10 MB or so. Portability is an issue not because it wasn’t ported, but because it cannot be ported.

**— What about JVM modifications like Mobile JVM?**

— That’s not JVM, that’s a joke. It’s like a micro edition of Java, not Java.

**— How about other static languages like Go or Oberon? Could they be the basis for Lua if you created it today?**

— Oberon… might be, it depends… Go, again, has a garbage collector and has a runtime too big for Lua. Oberon would be an option, but Oberon has some very strange things, like you almost don’t have constants, if I recall correctly. Yeah, I think they removed const from Pascal to Oberon. I had a book on Oberon and loved Oberon. Its [system](https://en.wikipedia.org/wiki/Oberon_(operating_system)) was unbelievable, it’s really something.

I remember that in 1994 I saw a demonstration of Oberon and Self. You know Self? It’s a very interesting dynamic language with jit-compilers etc… I saw these demos a week apart, and Self was very smart, they used some techniques from cartoons to disguise the slowness of the operations. Because when you opened something, it was like ‘woop!’ — first it reduces a little, then expands with some effects. It was implemented very well, these techniques they used to simulate movement…

Then a week later we saw a demo of Oberon, it was running on like 1/10th of hardware for Self — there was this very old small machine. In Oberon you click and then just boom, everything works immediately, the whole system was so light.

But for me it’s too minimalistic, they removed constants and variant types.

**— Haskell?**

— I don’t know Haskell or how to implement Lua in Haskell.

**— And what’s your attitude to languages like Python or R or Julia as a basis for future implementations of Lua?**

— I think every one of these has its uses.

R seems to be good for statistics. It’s very domain specific, done by people in the area, so this is a strength.

Python is nice, but I had personal problems with it. I thought I mentioned it in my talk or the interview. That thing about not knowing the whole language or not using it, the subset fallacy.

We use Python in our courses, teaching basic programming — just a small part, loops and integers. Everybody was happy, and then they said it would be nice to have some graphical applications, so we needed some graphical library. And almost all graphical libraries, you get the API… But I don’t know Python enough, this is much-advanced stuff. It has the illusion it’s easy and I have all these libraries for everything, but it’s either easy or you have everything.

So when you start using the language, then you start: oh, I have to learn OOP, inheritance, whatever else. Every single library. It looks like authors take pride in using more advanced language features in their API to show I don’t know what. Function calls, standard types, etc. You have this object, and then if you want another thing then you have to create another object…

Even the pattern matching, you can do some simple stuff, but usually the standard pattern matching is not something you do. You do a matching, an object returns a result and then you call methods on that object result to get the real result of the match. Sometimes there is a simpler way to use but it’s not obvious, it’s not the way most people use.

Another example: I was teaching a course on pattern matching and wanted to use Perl-like syntax, and I couldn’t use Lua because of a completely different syntax. So I thought Python would be the perfect example. But in Python there are some direct functions for some basic stuff but for anything more complex you’d have to know objects and methods etc. I just wanted to do something and have the result.

**— What did you end up using?**

— I used Python and explained to them. But even Perl is much simpler, you do the match and the results are $1, $2, $3, it’s much easier, but I don’t have the courage to use Perl, so…

**— I was using Python for two years before I noticed there were decorators. (question by Yaroslav Dynnikov from Tarantool team)**

— Yes, and when you want to use a library, then you have to learn this stuff and you don’t understand API etc. Python gives an illusion that it’s easy but it’s quite complex.

...And Julia, I don’t know much about Julia, but it reminded me of LuaJIT in the sense that sometimes it looks like user’s pride. You can have very good results but you have to really understand what’s going on. It’s not like you write code and get good results. No, you write code and sometimes the results are good, sometimes they are horrible. And when the results are horrible, you have a lot of good tools that show you the intermediate language that was once generated, you check it and then you go through all this almost assembly code. Then you realize: oh, it’s not optimizing that because of that. That’s the problem of programmers, they like games and sometimes they like stuff because it’s difficult, not because it’s easy.

I don’t know much about Julia, but I once saw a talk about it. And the guy talking, he was the one to have this point of view: see how nice it is, we wrote this program and it’s perfect. I don’t remember much, something about matrix multiplication I guess. And then the floats are perfect, then the doubles are perfect, and then they put complex [numbers]… and it was a tragedy. Like a hundred times slower.

(sarcastically) ‘See how nice it is, we have this tool, we can see the whole assembly [listing], and then you go and change that and that and that. See how efficient this is’. Yes, I see, I can program in assembly directly.

But that was just one talk. I studied a little R and have some user experience with Python for small stuff.

**— What do you think of Erlang?**

— Erlang is a funny language. It has some really good uses, fault tolerance is really interesting. But they claim it’s a functional language and the whole idea of the functional language is that you don’t have a state.

And Erlang has a huge hidden state in the messages that are sent and not yet received. So each little process is completely functional but the program itself is completely non-functional.

It’s a mess of hidden data that is much worse than global variables because if it were global variables, you would print them. Messages that are the real state of your system. Every single moment, what’s the state of the system? There are all these messages sent here and there. It’s completely non-functional, at all.

**— So Erlang lies about being functional and Python lies about being simple. What does Lua lie about?**

— Lua lies a bit about being small. It’s still smaller than most other languages, but if you want a really small language then Lua is larger than you want it to be.

**— What’s a small language then?**

— Forth is, I love Forth.

**— Is there a room for a smaller version of Lua?**

— Maybe, but it’s difficult. I love tables but tables are not very small. If you want to represent small stuff, the whole idea behind tables will not suit you. It would be syntax of Lua, we’d call it Lua but it’s not Lua.

It would be just like Java micro edition. You call it Java but does it have multi-threading? No, it doesn’t. Does it have reflection? No, it doesn’t. So why use it? It has a syntax of Java, the same type system but it’s not Java at all. It’s a different language that is easier to learn if you know Java but it’s not Java.

If you want to make a small language that looks like Lua but Lua without tables is not… Probably you should have to declare tables, something like FFI to be able to be small.

**— Are there any smaller adaptations of Lua?**

— Maybe, I don’t know.

**— Is Lua ready for pure functional programming? Can you do it with Lua?**

— Of course, you can. It’s not particularly efficient but only Haskell is really efficient for that. If you start using monads and stuff like that, create new functions, compose functions etc… You can do [that] with Lua, it runs quite reasonably, but you need implementation techniques different from normal imperative languages to do something really efficient.

**— Actually, there is a library for functional programming in Lua.**

— Yes, it’s reasonable and usable, if you don’t really need performance; you can do a lot of stuff with it. I love functional stuff and I do it all the time.

**— My question is more about the garbage collector, because we have only mutable objects and we have to use them efficiently. Will Lua be good for that?**

— I think a new incarnation of garbage collector will help a lot, but again…

**— Young die young? The one that seems to work with young objects?**

— Exactly, yes. But as I said even with the standard garbage collector we don’t have optimal performance but it can be reasonable. More often you don’t even need that performance for most actions unless you are writing servers and having big operations.

**— What functional programming tasks do you perform in Lua?**

— A simple example. My book, I’m writing my own format and I have a formatter that transforms that into LaTeX or DocBook. It’s completely functional, it has a big pattern matching… It’s slightly inspired by LaTeX but much more uniform. There’s @ symbol instead of backslash, a name of a macro and one single argument in curly brackets. So I have gsub that recognizes this kind of stuff and then it calls a function, the function does something and returns something. It’s all functional, just functions on top of functions on top of functions, and the final function gives a big result.

**— Why don’t you program with LaTeX?**

— Plain LaTeX? First, it’s too tricky for a lot of stuff and so difficult. I have several things that I don’t know how to do in LaTeX. For example, I want to put a piece of inline code inside a text. Then there is a slash verb, standard stuff. But slash verb gives fixed space. And the space between stuff is never right. All real spaces are variable, it depends on how the line is adjusted, so it expands in some spaces and compacts in others depending on a lot of stuff. And those spaces are fixed, so sometimes they look too large, sometimes too small. It also depends on what you put in code.

**— But you still render your own format to LaTeX?**

— Yes, but with a lot of preprocessing. I write my own verb but then it changes and becomes not a verb but a lot of stuff. For example, when I write 3+1 I write a very small space here. In verb, if I don’t put any space here, it shrinks, and if I do, it’s too large. So I do the preprocessing, inserting a variable space. It’s very small but can be a little larger if it needs to adjust. But if I put ‘and’ after 1 then I put a larger space. This function here does all that. This is a small example but there are other things…

**— Do you have a source?**

— I do have the source, it is in the [git](https://github.com/lua/lua/blob/master/manual/manual.of). The program’s called [2html](https://github.com/lua/lua/blob/master/manual/2html). The current version only generates HTML… Sorry, that’s a kind of a mess. I created it for a book but also another one for the manual. The one in the git is for the manual. But the other one is more complicated and not public, I can’t make it public. But the main problem is that TeX is not there. It’s almost impossible to process TeX files without TeX itself.

**— Is it not machine-readable?**

— Yes, it’s not machine-readable. I mean, it is readable because TeX reads it. It’s so hard to test, so many strange rules etc. So this is much more uniform and as I said I generate DocBook format, sometimes I need it. That started when I had this contract for a book.

**— So you use 2html to generate DocBook?**

— Yes, it generates DocBook directly.

**— Ok, thank you very much for the interview!**

---

If you have any more questions, you can ask them in the [Lua Mailing List](https://www.lua.org/lua-l.html). See you soon! | https://habr.com/ru/post/459466/ | null | null | 6,527 | 74.9 |

Hello everyone,

I've recently been introduced to arrays in my Java module and I'm stuck on a question:

Write a program which reads a sequence of words, and prints a count of the number of distinct words. An example of input/output is

should I stay or should I go

5 distinct words

Could anybody explain to me how I would go about checking the array to see if each word has occurred already? Is linear searching the best route to take? Thanks for any help given. Oh and class Console is just a more convenient version of ConsoleReader()

Even though this is probably all wrong, here is my attempted code so far:

Code Java:

    public class DistinctWords
    {
        public static void main(String[] args)
        {
            String[] words = new String[10]; // Allow up to 10 words
            int count=0;
            int distinctWord=0;
            System.out.println("Enter sentence:");
            while(!Console.endOfFile())
            {
                words[count]=Console.readToken();
                count++;
            }
            int i=1;
            while(i<count && !words[i].equals(words[i-1]))
            {
                i++;
            }
            if(i<count) // found
                System.out.println("Found");
        }//end main
    }//end class | http://www.javaprogrammingforums.com/%20collections-generics/8024-help-array-strings-linear-search-printingthethread.html | CC-MAIN-2018-09 | refinedweb | 180 | 62.78 |

The openide was designed around TopManager. That was not a bad decision then, but as seen from the current point of view the TopManager connects too much functionality together and makes separation of openide into smaller pieces hard. In present days it is obsolete because of Lookup and some parts have already been modified to use it, but still there is a bunch of other libraries that need to be changed not to depend on TopManager.

ErrorManager.getDefault() should simplify life of all module writers: issue 16854

Lookup should be enhanced to allow "standard" registration of entries - issue 14722

Probably all libraries which are not yet fixed should be listed here, and separate tasks filed.

Also we need a task for someone to write some kind of test suite that can be automated and which checks that library separation is not violated: perhaps that things are separately compilable (will not work for e.g. TMUtil classes); or that all classes reachable (at compile time) from a given JAR's entry points can be loaded with no NoClassDefFoundError's. The latter might be possible using a bytecode analyzer, say. Such a test will keep us honest. See issue #17431.

So is this task FIXED or what?

Well, I am not sure. We can continue to work on additional stuff - move of org.openide.src.* to java modules, etc. - in the next versions. I'll change the target milestone and assign it to myself to specify the subtasks.

A branch separation_19443 has been created for modules nbbuild,

core and openide. The goal is to remove compat.jar and cleanup

interdependencies between different parts of openide while still

maintaining compatibility.

Work finished, updated with main trunk and available in xtest_19433

branch. The xtest has yet to be updated. Use:

cvs co -r xtest_19433 nbbuild core openide xtest

to get that branch.

The issue is now blocked by issue 25807: the xtest framework does not

work. That has to be solved before we can integrate it.

Jesse, I have merged the xtest_19433 into the trunk and then removed

the changes. Trung made me realize that you are the person who

should check the changes in, because they mostly affect the module

system and you are its responsible owner.

So please do the review, make whatever changes you want, and then apply the

xtest_19433 branch. Please keep in mind that these changes require

work by release engineering (sigtest) and xtest (use of lib instead of

lib/ext), which should be done in sync on the same day.

I'd appreciate it if the changes were made early in the release cycle, because

of possible hidden problems and also because this is necessary for

cleaning up the APIs.

Created attachment 6933

Revised patch

I have attached a complete patch (nb_all, nbextra) against the current

trunk. It compiles and passes unit tests OK.

Unfortunately the goal of maintaining backward compatibility does not

work. I tried it with AbstractNode: I made a class:

package org;

import org.openide.nodes.*;

public class Foo {
    public static void main(String[] x) {
        AbstractNode n = new AbstractNode(Children.LEAF);
        System.out.println(n.getCookieSet());
    }
}

and compiled against a copy of AbstractNode.java that had getCookieSet

marked as public. I confirmed that the compiled class would run

against the patched AbstractNode.class but not regular openide.jar.

I then tried to run this class (precompiled) using internal execution

in an IDE built with this patch. It threw a VerifyError, indicating

that to the VM, getCookieSet is still protected.

Which is what I would expect: essentially the VM now sees:

public class AbstractNodePatch extends Node {
    // ...
    public abstract CookieSet getCookieSet();
}

public class AbstractNode extends AbstractNodePatch {
    // ...
    protected CookieSet getCookieSet() {...}
}

so classes compiled against AbstractNode see the method as protected,

not public.

So I have a number of problems with this patch.

1. Backward compatibility does not, in fact, work.

2. Some of the progress we previously made using openide-compat.jar

for patching is apparently gone in this system. For example,

CompilerGroup's add/removeCompilerListener is no longer final. And

Toolbar's bogus fields are now once again available for modification

to someone compiling from trunk source. So we actually have a

regression in the list of things which we successfully made clean

while keeping compatibility: even assuming we fix #1, we will have

reverted some of the APIs to their older, pre-cleanup state.

3. The stated goals of this issue report are not covered by the

current patch: it says "The goal is to remove compat.jar and cleanup

interdependencies between different parts of openide while still

maintaining compatibility.", of which we did not do the first (it is

only moved and changed format, not removed) and did not do the second.

So really this patch should be a sub-issue and keep #19443 as a

tracking issue.

4. There is not a clear explanation anywhere here of what is solved by

using the superclass patching that did not work using simple bytecode

overriding and the compatkit. If we are going to change how we handle

backwards compatibility, to a more complicated system, there must be

some justification of what we will get from it. Maybe you told me at

some point, but I cannot remember now, and it is not written down here.

5. For Martin specifically: I know I have asked before, but again

please consider removing Module.java from xtest ASAP; the patching

required to make a org.netbeans.core.modules.Module is getting really

ugly, and I have lost track of what changes there are in core.jar not

in xtest-ext.jar. I tried to apply some changes here, but I'm not at

all sure I got it right. The tests do seem to run as expected.

Concerning XTest, the Module.java patch is no longer present in XTest

(xtest_19433 branch); all the required functionality was moved directly

to Module.java in core (see lines 803 to 826).

Well, if "1. Backward compatibility does not, in fact, work." then I

am pretty stupid. I tried that using the scripting console and it worked

well, but if that does not work in Java the whole thing is

meaningless. I will check it out.

"Module.java patch is not available in XTest anymore (xtest_19433

branch)": here is another very good reason why a branch base tag is

nice! Module.java has no xtest_19433 tag at all, but it also has no

build tags, so there is no indication whatsoever in this file that it

does not exist on the branch (such as a dead rev). If it is supposed

to be deleted on a branch, you actually have to delete it... I spent

some time trying to patch it. Is this file

(xtest/src/org/netbeans/core/modules/Module.java) in fact supposed to

be deleted as part of the patch? (Don't worry, I'm not going to commit

anything in nb_all/xtest/ nor nbextra/xtest/, just asking so I can

test the patch in full first.)

Please switch to issue #26126 for any further comments re. the

superclass replacement patch.

Sorry Jesse, I forgot to create a branch tag; I just created a simple

tag, so Module.java and org.netbeans.Main.java are supposed to be

deleted. Now the problem should be corrected (I created the xtest_19433 branch).

OK, well I am creating a new branch as part of #26126 anyway. I will

make sure Main.java and Module.java are deleted in this branch in

xtest sources.

Things which are proposed:

- do #20898, i.e. move all of org.openide.src plus SourceCookie to

java.netbeans.org

- make a new autoload module for org.openide.execution plus

ExecCookie; some kind of interaction with core/execution, i.e.

core/execution serves as an impl companion module

- make a new deprecated autoload module for org.openide.compiler plus

CompilerCookie plus CompilerSupport; core/compiler serves as (again

deprecated) impl companion module

- make a new deprecated autoload module for general unwanted stuff

from openide; initially: all of org.openide.debugger and

DebuggerCookie; ExecSupport; TopManager, perhaps, if its remaining

useful methods can be placed elsewhere; maybe other stuff?

I will create a branch off the CVS trunk to hold such changes. Petr Z.

and I will work on it. Changes will be merged back to trunk if and

when they are "compatible", i.e. existing binary modules run

unchanged. Note that modules might still not run *usefully* due to

project changes - e.g. an old module with a CompilerType will not do

anything useful if 4.0 projects no longer use CompilerType at all -

but at least (1) they should not throw linkage errors, (2) until 4.0

projects are merged, the trunk will continue to run with old-style

execution, compilation, and debugging using the 3.4 UI.

Created branch on a few modules:

cvs rtag -b -r separation_19443_a separation_19443_a_branch

core_nowww_1 openide_nowww_1 java_nowww_1

Interesting summary from Yarda:

Adding nbbuild and classfile to the set of branched modules under

separation_19443_a_branch.

Jesse, I have the branch now.

Please divide the separation into subtasks and assign some of them to me.

Well, I am starting to break stuff up. The problem is that at this

early stage, I am still not entirely sure what kinds of problems we

will run into. So it is hard to assign subtasks: I don't yet know what

will need to be done. I will try to commit a snapshot of what I am

working on rather soon. So far I am creating submodules

openide/deprecated and java/srcmodel.

OK.

Do you want to move the <stuff> to openide/deprecated first and only

then try to make those core/<compiler stuff>, core/<execution stuff>

and <rest stuff> submodules, or have you changed the plan?

Well, for now I'm moving a lot of classes to openide/deprecated and

java/srcmodel. If I have to create openide/compiler now, I will, but

it might be easier to wait on that. Creating proper core submodules is

a lower priority, I guess. For now I'm still trying to figure out

which classes are not going to compile and which should be moved around.

First commit on the branch, take a look. First priority right now is

to get rid of usage of TopManager. Two categories here:

1. TopManager methods that have a proper replacement already. E.g.

systemClassLoader -> Lookup.lookup(ClassLoader).

2. Methods that have no equivalent yet. E.g. getIO, createDialog,

setStatusText, exit. Need new APIs somewhere. I am thinking about

using WindowManager for some of these, as it is logical enough... not

yet sure whether it is acceptable to have a bunch of other APIs

depending on WindowManager, though.

#1 you could start working on right away if you wanted - just try

compiling openide/build.xml and look at the breakage. For #2, I will

try to start writing such APIs.
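
As a rough illustration of what splitting a category 2 method into its own API could look like, here is a self-contained sketch. It does not use the real openide classes: StatusDisplayer, MiniLookup, RecordingStatusDisplayer and SaveAction are all hypothetical names, and MiniLookup is only a toy stand-in for Lookup. The point is that client code asks for one narrow interface instead of calling a static method on a manager that knows about everything.

```java
import java.util.ArrayList;
import java.util.HashMap;
import java.util.List;
import java.util.Map;

/** Hypothetical narrow service split off from a monolithic manager. */
interface StatusDisplayer {
    void setStatusText(String text);
}

/** Hypothetical stand-in for a lookup: maps a service type to one instance. */
final class MiniLookup {
    private final Map<Class<?>, Object> services = new HashMap<>();

    <T> void register(Class<T> type, T impl) {
        services.put(type, impl);
    }

    /** Returns the registered instance, or null if none was registered. */
    <T> T lookup(Class<T> type) {
        return type.cast(services.get(type));
    }
}

/** Trivial implementation that records messages instead of touching any UI. */
final class RecordingStatusDisplayer implements StatusDisplayer {
    final List<String> messages = new ArrayList<>();

    @Override
    public void setStatusText(String text) {
        messages.add(text);
    }
}

/** Client code that depends only on the narrow interface. */
final class SaveAction {
    static void run(MiniLookup lookup) {
        StatusDisplayer status = lookup.lookup(StatusDisplayer.class);
        if (status != null) { // the service is optional in this sketch
            status.setStatusText("Saved.");
        }
    }
}
```

With this shape, a unit test can register RecordingStatusDisplayer, call SaveAction.run and inspect the recorded messages without starting anything else.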

I see. If it can wait until tomorrow, I will start working on #1 in the

morning (I'm leaving right now :-( ).

Current status: org.openide.src is moved to java/srcmodel (except for

documentation etc.), and lib/openide-deprecated.jar contains dead

classes such as TopManager. IDE w/ just java module seems to run fine.

The main task now is to make core*.jar not depend on

openide-deprecated.jar, permitting that JAR to be moved to the

autoload area for eventual retirement. This is important: if core.jar

depends on TopManager, then it depends on Debugger, which depends on

ConstructorElement, which is in

netbeans/modules/autoload/java-src-model.jar. That means that if you

ran the debugger and tried to set breakpoints it is possible there

would be NoClassDefFoundError's. This needs to get cleaned up.

I'm working on making core*.jar not depend on openide/deprecated,

i.e. removing the dependencies on TopManager.

Checked just the first part, will continue.

But there seem to be some problems, especially with Project dependencies.

While things are being moved, it would be nice to clean up the *.util.* package. Issue

27910 and issue 27911 describe the task. I've added them here, but if

you think they should not be here, remove them.

#27911 maybe. #27910 we could do any time, I don't think it's

important now.

BTW comments requested on newly introduced interfaces (found via

lookup) which replace some TopManager functionality:

void org.openide.awt.HtmlBrowser.URLDisplayer.showURL(URL)

[Radim said maybe this is unnecessary, you can make a new

BrowserComponent and call setURL, and that handles internal vs.

external browsers and browser selection choice correctly - pending]

Object org.openide.DialogDisplayer.notify(NotifyDescriptor)

Dialog org.openide.DialogDisplayer.createDialog(DialogDescriptor)

void org.openide.LifecycleManager.saveAll()

void org.openide.LifecycleManager.exit()

OutputWriter org.openide.windows.IOProvider.getStdout()

InputOutput org.openide.windows.IOProvider.getIO(String, boolean)

Basically these are just the result of splitting a few methods off

into their own interfaces. It should now be easier to e.g. write unit

tests exercising some but not all former TM functionality - for

example, dump a trivial impl of DialogDisplayer into

META-INF/services/ and you can test code that involves displaying

dialogs (as a lot of openide code does, I am afraid). No need to have