url stringlengths 58 61 | repository_url stringclasses 1

value | labels_url stringlengths 72 75 | comments_url stringlengths 67 70 | events_url stringlengths 65 68 | html_url stringlengths 46 51 | id int64 600M 2.05B | node_id stringlengths 18 32 | number int64 2 6.51k | title stringlengths 1 290 | user dict | labels listlengths 0 4 | state stringclasses 2

values | locked bool 1

class | assignee dict | assignees listlengths 0 4 | milestone dict | comments listlengths 0 30 | created_at timestamp[ns, tz=UTC] | updated_at timestamp[ns, tz=UTC] | closed_at timestamp[ns, tz=UTC] | author_association stringclasses 3

values | active_lock_reason float64 | draft float64 0 1 ⌀ | pull_request dict | body stringlengths 0 228k ⌀ | reactions dict | timeline_url stringlengths 67 70 | performed_via_github_app float64 | state_reason stringclasses 3

values | is_pull_request bool 2

classes |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

https://api.github.com/repos/huggingface/datasets/issues/4963 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/4963/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/4963/comments | https://api.github.com/repos/huggingface/datasets/issues/4963/events | https://github.com/huggingface/datasets/issues/4963 | 1,368,201,188 | I_kwDODunzps5RjRfk | 4,963 | Dataset without script does not support regular JSON data file | {

"avatar_url": "https://avatars.githubusercontent.com/u/326577?v=4",

"events_url": "https://api.github.com/users/julien-c/events{/privacy}",

"followers_url": "https://api.github.com/users/julien-c/followers",

"following_url": "https://api.github.com/users/julien-c/following{/other_user}",

"gists_url": "https... | [] | closed | false | null | [] | null | [

"Hi @julien-c,\r\n\r\nOut of the box, we only support JSON lines (NDJSON) data files, but your data file is a regular JSON file. The reason is we use `pyarrow.json.read_json` and this only supports line-delimited JSON. "

] | 2022-09-09T18:45:33Z | 2022-09-20T15:40:07Z | 2022-09-20T15:40:07Z | MEMBER | null | null | null | ### Link

https://huggingface.co/datasets/julien-c/label-studio-my-dogs

### Description

<img width="1115" alt="image" src="https://user-images.githubusercontent.com/326577/189422048-7e9c390f-bea7-4521-a232-43f049ccbd1f.png">

### Owner

Yes | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/4963/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/4963/timeline | null | completed | false |

https://api.github.com/repos/huggingface/datasets/issues/5492 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/5492/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/5492/comments | https://api.github.com/repos/huggingface/datasets/issues/5492/events | https://github.com/huggingface/datasets/issues/5492 | 1,566,604,216 | I_kwDODunzps5dYHu4 | 5,492 | Push_to_hub in a pull request | {

"avatar_url": "https://avatars.githubusercontent.com/u/42851186?v=4",

"events_url": "https://api.github.com/users/lhoestq/events{/privacy}",

"followers_url": "https://api.github.com/users/lhoestq/followers",

"following_url": "https://api.github.com/users/lhoestq/following{/other_user}",

"gists_url": "https:... | [

{

"color": "a2eeef",

"default": true,

"description": "New feature or request",

"id": 1935892871,

"name": "enhancement",

"node_id": "MDU6TGFiZWwxOTM1ODkyODcx",

"url": "https://api.github.com/repos/huggingface/datasets/labels/enhancement"

},

{

"color": "7057ff",

"default": true... | closed | false | {

"avatar_url": "https://avatars.githubusercontent.com/u/32437151?v=4",

"events_url": "https://api.github.com/users/nateraw/events{/privacy}",

"followers_url": "https://api.github.com/users/nateraw/followers",

"following_url": "https://api.github.com/users/nateraw/following{/other_user}",

"gists_url": "https:... | [

{

"avatar_url": "https://avatars.githubusercontent.com/u/32437151?v=4",

"events_url": "https://api.github.com/users/nateraw/events{/privacy}",

"followers_url": "https://api.github.com/users/nateraw/followers",

"following_url": "https://api.github.com/users/nateraw/following{/other_user}",

"gists... | null | [

"Assigned to myself and will get to it in the next week, but if someone finds this issue annoying and wants to submit a PR before I do, just ping me here and I'll reassign :). ",

"I would like to be assigned to this issue, @nateraw . #self-assign"

] | 2023-02-01T18:32:14Z | 2023-10-16T13:30:48Z | 2023-10-16T13:30:48Z | MEMBER | null | null | null | Right now `ds.push_to_hub()` can push a dataset on `main` or on a new branch with `branch=`, but there is no way to open a pull request. Even passing `branch=refs/pr/x` doesn't seem to work: it tries to create a branch with that name

cc @nateraw

It should be possible to tweak the use of `huggingface_hub` in `pus... | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/5492/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/5492/timeline | null | completed | false |

https://api.github.com/repos/huggingface/datasets/issues/5577 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/5577/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/5577/comments | https://api.github.com/repos/huggingface/datasets/issues/5577/events | https://github.com/huggingface/datasets/issues/5577 | 1,598,587,665 | I_kwDODunzps5fSIMR | 5,577 | Cannot load `the_pile_openwebtext2` | {

"avatar_url": "https://avatars.githubusercontent.com/u/5126316?v=4",

"events_url": "https://api.github.com/users/wjfwzzc/events{/privacy}",

"followers_url": "https://api.github.com/users/wjfwzzc/followers",

"following_url": "https://api.github.com/users/wjfwzzc/following{/other_user}",

"gists_url": "https:/... | [] | closed | false | null | [] | null | [

"Hi! I've merged a PR to use `int32` instead of `int8` for `reddit_scores`, so it should work now.\r\n\r\n"

] | 2023-02-24T13:01:48Z | 2023-02-24T14:01:09Z | 2023-02-24T14:01:09Z | NONE | null | null | null | ### Describe the bug

I met the same bug mentioned in #3053 which is never fixed. Because several `reddit_scores` are larger than `int8` even `int16`. https://huggingface.co/datasets/the_pile_openwebtext2/blob/main/the_pile_openwebtext2.py#L62

### Steps to reproduce the bug

```python3

from datasets import load... | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/5577/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/5577/timeline | null | completed | false |

https://api.github.com/repos/huggingface/datasets/issues/4625 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/4625/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/4625/comments | https://api.github.com/repos/huggingface/datasets/issues/4625/events | https://github.com/huggingface/datasets/pull/4625 | 1,293,163,744 | PR_kwDODunzps46zELz | 4,625 | Unpack `dl_manager.iter_files` to allow parallization | {

"avatar_url": "https://avatars.githubusercontent.com/u/47462742?v=4",

"events_url": "https://api.github.com/users/mariosasko/events{/privacy}",

"followers_url": "https://api.github.com/users/mariosasko/followers",

"following_url": "https://api.github.com/users/mariosasko/following{/other_user}",

"gists_url"... | [] | closed | false | null | [] | null | [

"_The documentation is not available anymore as the PR was closed or merged._",

"Cool thanks ! Yup it sounds like the right solution.\r\n\r\nIt looks like `_generate_tables` needs to be updated as well to fix the CI"

] | 2022-07-04T13:16:58Z | 2022-07-05T11:11:54Z | 2022-07-05T11:00:48Z | CONTRIBUTOR | null | 0 | {

"diff_url": "https://github.com/huggingface/datasets/pull/4625.diff",

"html_url": "https://github.com/huggingface/datasets/pull/4625",

"merged_at": "2022-07-05T11:00:48Z",

"patch_url": "https://github.com/huggingface/datasets/pull/4625.patch",

"url": "https://api.github.com/repos/huggingface/datasets/pulls/... | Iterate over data files outside `dl_manager.iter_files` to allow parallelization in streaming mode.

(The issue reported [here](https://discuss.huggingface.co/t/dataset-only-have-n-shard-1-when-has-multiple-shards-in-repo/19887))

PS: Another option would be to override `FilesIterable.__getitem__` to make it indexa... | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/4625/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/4625/timeline | null | null | true |

https://api.github.com/repos/huggingface/datasets/issues/6016 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/6016/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/6016/comments | https://api.github.com/repos/huggingface/datasets/issues/6016/events | https://github.com/huggingface/datasets/pull/6016 | 1,798,968,033 | PR_kwDODunzps5VNEvn | 6,016 | Dataset string representation enhancement | {

"avatar_url": "https://avatars.githubusercontent.com/u/63643948?v=4",

"events_url": "https://api.github.com/users/Ganryuu/events{/privacy}",

"followers_url": "https://api.github.com/users/Ganryuu/followers",

"following_url": "https://api.github.com/users/Ganryuu/following{/other_user}",

"gists_url": "https:... | [] | open | false | null | [] | null | [

"The docs for this PR live [here](https://moon-ci-docs.huggingface.co/docs/datasets/pr_6016). All of your documentation changes will be reflected on that endpoint.",

"It we could have something similar to Polars, that would be great.\r\n\r\nThis is what Polars outputs: \r\n* `__repr__`/`__str__` :\r\n```\r\nshape... | 2023-07-11T13:38:25Z | 2023-07-16T10:26:18Z | null | NONE | null | 0 | {

"diff_url": "https://github.com/huggingface/datasets/pull/6016.diff",

"html_url": "https://github.com/huggingface/datasets/pull/6016",

"merged_at": null,

"patch_url": "https://github.com/huggingface/datasets/pull/6016.patch",

"url": "https://api.github.com/repos/huggingface/datasets/pulls/6016"

} | my attempt at #6010

not sure if this is the right way to go about it, I will wait for your feedback | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/6016/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/6016/timeline | null | null | true |

https://api.github.com/repos/huggingface/datasets/issues/2734 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/2734/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/2734/comments | https://api.github.com/repos/huggingface/datasets/issues/2734/events | https://github.com/huggingface/datasets/pull/2734 | 956,844,874 | MDExOlB1bGxSZXF1ZXN0NzAwMzc4NjI4 | 2,734 | Update BibTeX entry | {

"avatar_url": "https://avatars.githubusercontent.com/u/8515462?v=4",

"events_url": "https://api.github.com/users/albertvillanova/events{/privacy}",

"followers_url": "https://api.github.com/users/albertvillanova/followers",

"following_url": "https://api.github.com/users/albertvillanova/following{/other_user}",... | [] | closed | false | null | [] | null | [] | 2021-07-30T15:22:51Z | 2021-07-30T15:47:58Z | 2021-07-30T15:47:58Z | MEMBER | null | 0 | {

"diff_url": "https://github.com/huggingface/datasets/pull/2734.diff",

"html_url": "https://github.com/huggingface/datasets/pull/2734",

"merged_at": "2021-07-30T15:47:58Z",

"patch_url": "https://github.com/huggingface/datasets/pull/2734.patch",

"url": "https://api.github.com/repos/huggingface/datasets/pulls/... | Update BibTeX entry. | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/2734/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/2734/timeline | null | null | true |

https://api.github.com/repos/huggingface/datasets/issues/1506 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/1506/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/1506/comments | https://api.github.com/repos/huggingface/datasets/issues/1506/events | https://github.com/huggingface/datasets/pull/1506 | 763,846,074 | MDExOlB1bGxSZXF1ZXN0NTM4MTc1ODEz | 1,506 | Add nq_open question answering dataset | {

"avatar_url": "https://avatars.githubusercontent.com/u/28673745?v=4",

"events_url": "https://api.github.com/users/Nilanshrajput/events{/privacy}",

"followers_url": "https://api.github.com/users/Nilanshrajput/followers",

"following_url": "https://api.github.com/users/Nilanshrajput/following{/other_user}",

"g... | [] | closed | false | null | [] | null | [

"@SBrandeis thanks for the review, I applied your suggested changes, but CI is failing now not sure about the error.",

"Many thanks @Nilanshrajput !\r\nThe failing tests on CI are not related to your changes, merging master on your branch should fix them :)\r\nIf you're interested in what causes the CI to fail,... | 2020-12-12T13:46:48Z | 2020-12-17T15:34:50Z | 2020-12-17T15:34:50Z | CONTRIBUTOR | null | 0 | {

"diff_url": "https://github.com/huggingface/datasets/pull/1506.diff",

"html_url": "https://github.com/huggingface/datasets/pull/1506",

"merged_at": null,

"patch_url": "https://github.com/huggingface/datasets/pull/1506.patch",

"url": "https://api.github.com/repos/huggingface/datasets/pulls/1506"

} | Added nq_open Open-domain question answering dataset.

The NQ-Open task is currently being used to evaluate submissions to the EfficientQA competition, which is part of the NeurIPS 2020 competition track. | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/1506/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/1506/timeline | null | null | true |

https://api.github.com/repos/huggingface/datasets/issues/469 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/469/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/469/comments | https://api.github.com/repos/huggingface/datasets/issues/469/events | https://github.com/huggingface/datasets/issues/469 | 671,876,963 | MDU6SXNzdWU2NzE4NzY5NjM= | 469 | invalid data type 'str' at _convert_outputs in arrow_dataset.py | {

"avatar_url": "https://avatars.githubusercontent.com/u/30617486?v=4",

"events_url": "https://api.github.com/users/Murgates/events{/privacy}",

"followers_url": "https://api.github.com/users/Murgates/followers",

"following_url": "https://api.github.com/users/Murgates/following{/other_user}",

"gists_url": "htt... | [] | closed | false | null | [] | null | [

"Hi ! Did you try to set the output format to pytorch ? (or tensorflow if you're using tensorflow)\r\nIt can be done with `dataset.set_format(\"torch\", columns=columns)` (or \"tensorflow\").\r\n\r\nNote that for pytorch, string columns can't be converted to `torch.Tensor`, so you have to specify in `columns=` the... | 2020-08-03T07:48:29Z | 2023-07-20T15:54:17Z | 2023-07-20T15:54:17Z | NONE | null | null | null | I trying to build multi label text classifier model using Transformers lib.

I'm using Transformers NLP to load the data set, while calling trainer.train() method. It throws the following error

File "C:\***\arrow_dataset.py", line 343, in _convert_outputs

v = command(v)

TypeError: new(): invalid data type ... | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/469/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/469/timeline | null | completed | false |

https://api.github.com/repos/huggingface/datasets/issues/48 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/48/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/48/comments | https://api.github.com/repos/huggingface/datasets/issues/48/events | https://github.com/huggingface/datasets/pull/48 | 612,504,687 | MDExOlB1bGxSZXF1ZXN0NDEzNDM2MTgz | 48 | [Command Convert] remove tensorflow import | {

"avatar_url": "https://avatars.githubusercontent.com/u/23423619?v=4",

"events_url": "https://api.github.com/users/patrickvonplaten/events{/privacy}",

"followers_url": "https://api.github.com/users/patrickvonplaten/followers",

"following_url": "https://api.github.com/users/patrickvonplaten/following{/other_use... | [] | closed | false | null | [] | null | [] | 2020-05-05T10:41:00Z | 2020-05-05T11:13:58Z | 2020-05-05T11:13:56Z | MEMBER | null | 0 | {

"diff_url": "https://github.com/huggingface/datasets/pull/48.diff",

"html_url": "https://github.com/huggingface/datasets/pull/48",

"merged_at": "2020-05-05T11:13:56Z",

"patch_url": "https://github.com/huggingface/datasets/pull/48.patch",

"url": "https://api.github.com/repos/huggingface/datasets/pulls/48"

} | Remove all tensorflow import statements. | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/48/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/48/timeline | null | null | true |

https://api.github.com/repos/huggingface/datasets/issues/350 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/350/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/350/comments | https://api.github.com/repos/huggingface/datasets/issues/350/events | https://github.com/huggingface/datasets/pull/350 | 652,398,691 | MDExOlB1bGxSZXF1ZXN0NDQ1NDczODYz | 350 | add from_pandas and from_dict | {

"avatar_url": "https://avatars.githubusercontent.com/u/42851186?v=4",

"events_url": "https://api.github.com/users/lhoestq/events{/privacy}",

"followers_url": "https://api.github.com/users/lhoestq/followers",

"following_url": "https://api.github.com/users/lhoestq/following{/other_user}",

"gists_url": "https:... | [] | closed | false | null | [] | null | [] | 2020-07-07T15:03:53Z | 2020-07-08T14:14:33Z | 2020-07-08T14:14:32Z | MEMBER | null | 0 | {

"diff_url": "https://github.com/huggingface/datasets/pull/350.diff",

"html_url": "https://github.com/huggingface/datasets/pull/350",

"merged_at": "2020-07-08T14:14:32Z",

"patch_url": "https://github.com/huggingface/datasets/pull/350.patch",

"url": "https://api.github.com/repos/huggingface/datasets/pulls/350... | I added two new methods to the `Dataset` class:

- `from_pandas()` to create a dataset from a pandas dataframe

- `from_dict()` to create a dataset from a dictionary (keys = columns)

It uses the `pa.Table.from_pandas` and `pa.Table.from_pydict` funcitons to do so.

It is also possible to specify the features types v... | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/350/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/350/timeline | null | null | true |

https://api.github.com/repos/huggingface/datasets/issues/4417 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/4417/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/4417/comments | https://api.github.com/repos/huggingface/datasets/issues/4417/events | https://github.com/huggingface/datasets/issues/4417 | 1,251,933,091 | I_kwDODunzps5Knvuj | 4,417 | how to convert a dict generator into a huggingface dataset. | {

"avatar_url": "https://avatars.githubusercontent.com/u/32235549?v=4",

"events_url": "https://api.github.com/users/StephennFernandes/events{/privacy}",

"followers_url": "https://api.github.com/users/StephennFernandes/followers",

"following_url": "https://api.github.com/users/StephennFernandes/following{/other_... | [

{

"color": "d876e3",

"default": true,

"description": "Further information is requested",

"id": 1935892912,

"name": "question",

"node_id": "MDU6TGFiZWwxOTM1ODkyOTEy",

"url": "https://api.github.com/repos/huggingface/datasets/labels/question"

}

] | closed | false | {

"avatar_url": "https://avatars.githubusercontent.com/u/47462742?v=4",

"events_url": "https://api.github.com/users/mariosasko/events{/privacy}",

"followers_url": "https://api.github.com/users/mariosasko/followers",

"following_url": "https://api.github.com/users/mariosasko/following{/other_user}",

"gists_url"... | [

{

"avatar_url": "https://avatars.githubusercontent.com/u/47462742?v=4",

"events_url": "https://api.github.com/users/mariosasko/events{/privacy}",

"followers_url": "https://api.github.com/users/mariosasko/followers",

"following_url": "https://api.github.com/users/mariosasko/following{/other_user}",

... | null | [

"@albertvillanova @lhoestq , could you please help me on this issue. ",

"Hi ! As mentioned on the [forum](https://discuss.huggingface.co/t/how-to-wrap-a-generator-with-hf-dataset/18464), the simplest for now would be to define a [dataset script](https://huggingface.co/docs/datasets/dataset_script) which can conta... | 2022-05-29T16:28:27Z | 2022-09-16T14:44:19Z | 2022-09-16T14:44:19Z | NONE | null | null | null | ### Link

_No response_

### Description

Hey there, I have used seqio to get a well distributed mixture of samples from multiple dataset. However the resultant output from seqio is a python generator dict, which I cannot produce back into huggingface dataset.

The generator contains all the samples needed for ... | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/4417/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/4417/timeline | null | completed | false |

https://api.github.com/repos/huggingface/datasets/issues/2580 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/2580/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/2580/comments | https://api.github.com/repos/huggingface/datasets/issues/2580/events | https://github.com/huggingface/datasets/pull/2580 | 935,767,421 | MDExOlB1bGxSZXF1ZXN0NjgyNjI2MTkz | 2,580 | Fix Counter import | {

"avatar_url": "https://avatars.githubusercontent.com/u/8515462?v=4",

"events_url": "https://api.github.com/users/albertvillanova/events{/privacy}",

"followers_url": "https://api.github.com/users/albertvillanova/followers",

"following_url": "https://api.github.com/users/albertvillanova/following{/other_user}",... | [] | closed | false | null | [] | null | [] | 2021-07-02T13:21:48Z | 2021-07-02T14:37:47Z | 2021-07-02T14:37:46Z | MEMBER | null | 0 | {

"diff_url": "https://github.com/huggingface/datasets/pull/2580.diff",

"html_url": "https://github.com/huggingface/datasets/pull/2580",

"merged_at": "2021-07-02T14:37:46Z",

"patch_url": "https://github.com/huggingface/datasets/pull/2580.patch",

"url": "https://api.github.com/repos/huggingface/datasets/pulls/... | Import from `collections` instead of `typing`. | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/2580/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/2580/timeline | null | null | true |

https://api.github.com/repos/huggingface/datasets/issues/6464 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/6464/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/6464/comments | https://api.github.com/repos/huggingface/datasets/issues/6464/events | https://github.com/huggingface/datasets/pull/6464 | 2,020,860,462 | PR_kwDODunzps5g5djo | 6,464 | Add concurrent loading of shards to datasets.load_from_disk | {

"avatar_url": "https://avatars.githubusercontent.com/u/51880718?v=4",

"events_url": "https://api.github.com/users/kkoutini/events{/privacy}",

"followers_url": "https://api.github.com/users/kkoutini/followers",

"following_url": "https://api.github.com/users/kkoutini/following{/other_user}",

"gists_url": "htt... | [] | open | false | null | [] | null | [

"If we use multithreading no need to ask for `num_proc`. And maybe we the same numbers of threads as tqdm by default (IIRC it's `max(32, cpu_count() + 4)`) - you can even use `tqdm.contrib.concurrent.thread_map` directly to simplify the code\r\n\r\nAlso you can ignore the `IN_MEMORY_MAX_SIZE` config for this. This ... | 2023-12-01T13:13:53Z | 2023-12-07T12:47:02Z | null | NONE | null | 0 | {

"diff_url": "https://github.com/huggingface/datasets/pull/6464.diff",

"html_url": "https://github.com/huggingface/datasets/pull/6464",

"merged_at": null,

"patch_url": "https://github.com/huggingface/datasets/pull/6464.patch",

"url": "https://api.github.com/repos/huggingface/datasets/pulls/6464"

} | In some file systems (like luster), memory mapping arrow files takes time. This can be accelerated by performing the mmap in parallel on processes or threads.

- Threads seem to be faster than processes when gathering the list of tables from the workers (see https://github.com/huggingface/datasets/issues/2252).

- I'... | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/6464/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/6464/timeline | null | null | true |

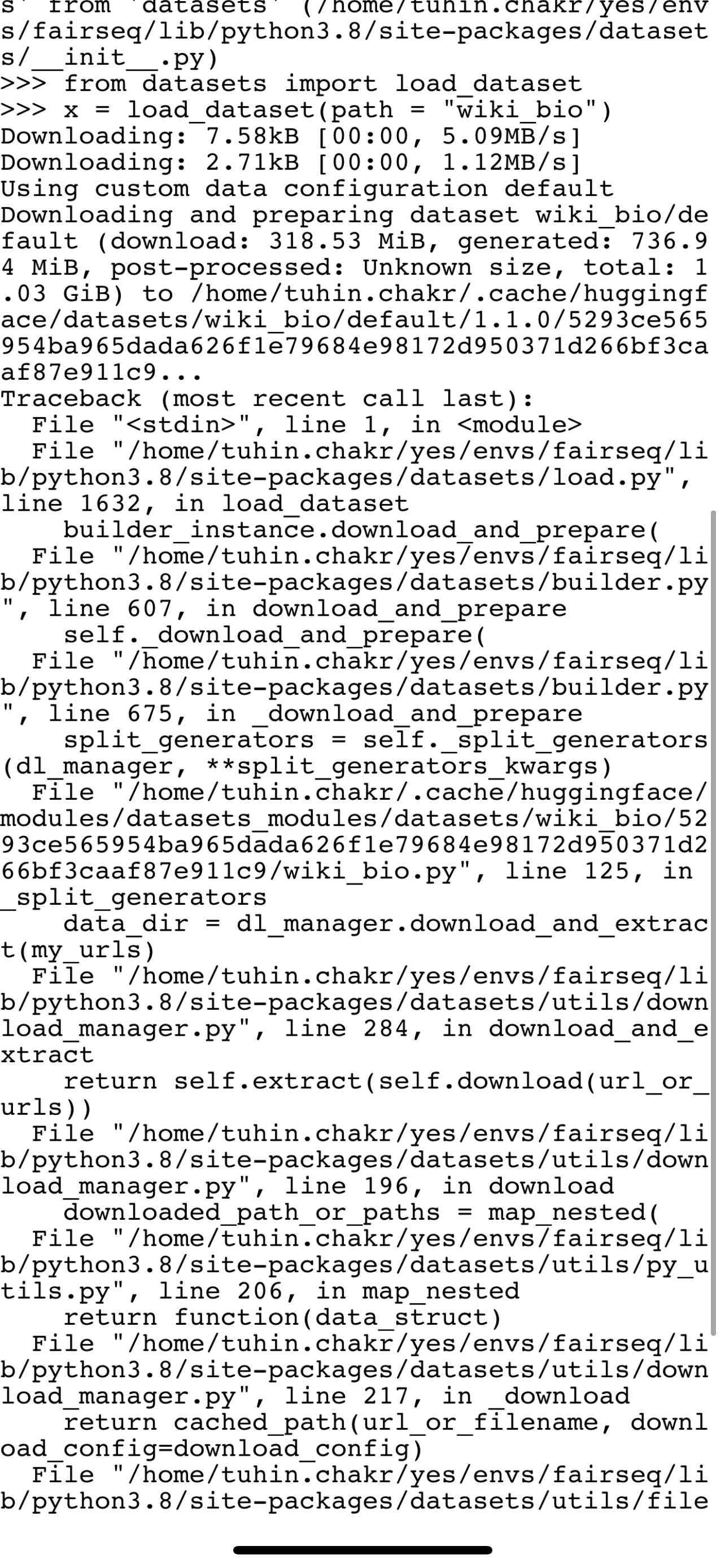

https://api.github.com/repos/huggingface/datasets/issues/3580 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/3580/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/3580/comments | https://api.github.com/repos/huggingface/datasets/issues/3580/events | https://github.com/huggingface/datasets/issues/3580 | 1,104,663,242 | I_kwDODunzps5B19LK | 3,580 | Bug in wiki bio load | {

"avatar_url": "https://avatars.githubusercontent.com/u/3104771?v=4",

"events_url": "https://api.github.com/users/tuhinjubcse/events{/privacy}",

"followers_url": "https://api.github.com/users/tuhinjubcse/followers",

"following_url": "https://api.github.com/users/tuhinjubcse/following{/other_user}",

"gists_ur... | [

{

"color": "2edb81",

"default": false,

"description": "A bug in a dataset script provided in the library",

"id": 2067388877,

"name": "dataset bug",

"node_id": "MDU6TGFiZWwyMDY3Mzg4ODc3",

"url": "https://api.github.com/repos/huggingface/datasets/labels/dataset%20bug"

}

] | closed | false | null | [] | null | [

"+1, here's the error I got: \r\n\r\n```\r\n>>> from datasets import load_dataset\r\n>>>\r\n>>> load_dataset(\"wiki_bio\")\r\nDownloading: 7.58kB [00:00, 4.42MB/s]\r\nDownloading: 2.71kB [00:00, 1.30MB/s]\r\nUsing custom data configuration default\r\nDownloading and preparing dataset wiki_bio/default (download: 318... | 2022-01-15T10:04:33Z | 2022-01-31T08:38:09Z | 2022-01-31T08:38:09Z | NONE | null | null | null |

wiki_bio is failing to load because of a failing drive link . Can someone fix this ?

: add initial loading script | {

"avatar_url": "https://avatars.githubusercontent.com/u/5757359?v=4",

"events_url": "https://api.github.com/users/AmitMY/events{/privacy}",

"followers_url": "https://api.github.com/users/AmitMY/followers",

"following_url": "https://api.github.com/users/AmitMY/following{/other_user}",

"gists_url": "https://ap... | [] | closed | false | null | [] | null | [

"Hi @AmitMY, sorry for leaving you hanging for a minute :) \r\n\r\nWe've developed a new pipeline for adding datasets with a few extra steps, including adding a dataset card. You can find the full process [here](https://github.com/huggingface/datasets/blob/master/ADD_NEW_DATASET.md)\r\n\r\nWould you be up for addin... | 2020-11-02T06:50:10Z | 2020-12-01T13:41:37Z | 2020-12-01T13:41:36Z | CONTRIBUTOR | null | 0 | {

"diff_url": "https://github.com/huggingface/datasets/pull/789.diff",

"html_url": "https://github.com/huggingface/datasets/pull/789",

"merged_at": null,

"patch_url": "https://github.com/huggingface/datasets/pull/789.patch",

"url": "https://api.github.com/repos/huggingface/datasets/pulls/789"

} | Its a small dataset, but its heavily annotated

https://www.bu.edu/asllrp/ncslgr.html

| {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/789/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/789/timeline | null | null | true |

https://api.github.com/repos/huggingface/datasets/issues/4363 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/4363/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/4363/comments | https://api.github.com/repos/huggingface/datasets/issues/4363/events | https://github.com/huggingface/datasets/issues/4363 | 1,238,897,652 | I_kwDODunzps5J2BP0 | 4,363 | The dataset preview is not available for this split. | {

"avatar_url": "https://avatars.githubusercontent.com/u/7584674?v=4",

"events_url": "https://api.github.com/users/roholazandie/events{/privacy}",

"followers_url": "https://api.github.com/users/roholazandie/followers",

"following_url": "https://api.github.com/users/roholazandie/following{/other_user}",

"gists... | [

{

"color": "E5583E",

"default": false,

"description": "Related to the dataset viewer on huggingface.co",

"id": 3470211881,

"name": "dataset-viewer",

"node_id": "LA_kwDODunzps7O1zsp",

"url": "https://api.github.com/repos/huggingface/datasets/labels/dataset-viewer"

}

] | closed | false | {

"avatar_url": "https://avatars.githubusercontent.com/u/1676121?v=4",

"events_url": "https://api.github.com/users/severo/events{/privacy}",

"followers_url": "https://api.github.com/users/severo/followers",

"following_url": "https://api.github.com/users/severo/following{/other_user}",

"gists_url": "https://ap... | [

{

"avatar_url": "https://avatars.githubusercontent.com/u/1676121?v=4",

"events_url": "https://api.github.com/users/severo/events{/privacy}",

"followers_url": "https://api.github.com/users/severo/followers",

"following_url": "https://api.github.com/users/severo/following{/other_user}",

"gists_url... | null | [

"Hi! A dataset has to be streamable to work with the viewer. I did a quick test, and yours is, so this might be a bug in the viewer. cc @severo \r\n",

"Looking at it. The message is now:\r\n\r\n```\r\nMessage: cannot cache function '__shear_dense': no locator available for file '/src/services/worker/.venv/... | 2022-05-17T16:34:43Z | 2022-06-08T12:32:10Z | 2022-06-08T09:26:56Z | NONE | null | null | null | I have uploaded the corpus developed by our lab in the speech domain to huggingface [datasets](https://huggingface.co/datasets/Roh/ryanspeech). You can read about the companion paper accepted in interspeech 2021 [here](https://arxiv.org/abs/2106.08468). The dataset works fine but I can't make the dataset preview work. ... | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/4363/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/4363/timeline | null | completed | false |

https://api.github.com/repos/huggingface/datasets/issues/4306 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/4306/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/4306/comments | https://api.github.com/repos/huggingface/datasets/issues/4306/events | https://github.com/huggingface/datasets/issues/4306 | 1,231,137,204 | I_kwDODunzps5JYam0 | 4,306 | `load_dataset` does not work with certain filename. | {

"avatar_url": "https://avatars.githubusercontent.com/u/57242693?v=4",

"events_url": "https://api.github.com/users/whatever60/events{/privacy}",

"followers_url": "https://api.github.com/users/whatever60/followers",

"following_url": "https://api.github.com/users/whatever60/following{/other_user}",

"gists_url"... | [

{

"color": "d73a4a",

"default": true,

"description": "Something isn't working",

"id": 1935892857,

"name": "bug",

"node_id": "MDU6TGFiZWwxOTM1ODkyODU3",

"url": "https://api.github.com/repos/huggingface/datasets/labels/bug"

}

] | closed | false | null | [] | null | [

"Never mind. It is because of the caching of datasets..."

] | 2022-05-10T13:14:04Z | 2022-05-10T18:58:36Z | 2022-05-10T18:58:09Z | NONE | null | null | null | ## Describe the bug

This is a weird bug that took me some time to find out.

I have a JSON dataset that I want to load with `load_dataset` like this:

```

data_files = dict(train="train.json.zip", val="val.json.zip")

dataset = load_dataset("json", data_files=data_files, field="data")

```

## Expected results

... | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/4306/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/4306/timeline | null | completed | false |

https://api.github.com/repos/huggingface/datasets/issues/2163 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/2163/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/2163/comments | https://api.github.com/repos/huggingface/datasets/issues/2163/events | https://github.com/huggingface/datasets/pull/2163 | 849,669,366 | MDExOlB1bGxSZXF1ZXN0NjA4Mzk0NDMz | 2,163 | Concat only unique fields in DatasetInfo.from_merge | {

"avatar_url": "https://avatars.githubusercontent.com/u/47462742?v=4",

"events_url": "https://api.github.com/users/mariosasko/events{/privacy}",

"followers_url": "https://api.github.com/users/mariosasko/followers",

"following_url": "https://api.github.com/users/mariosasko/following{/other_user}",

"gists_url"... | [] | closed | false | null | [] | null | [

"Hi @mariosasko,\r\nJust came across this PR and I was wondering if we can use\r\n`description = \"\\n\\n\".join(OrderedDict.fromkeys([info.description for info in dataset_infos]))`\r\n\r\nThis will obviate the need for `unique` and is almost as fast as `set`. We could have used `dict` inplace of `OrderedDict` but ... | 2021-04-03T14:31:30Z | 2021-04-06T14:40:00Z | 2021-04-06T14:39:59Z | CONTRIBUTOR | null | 0 | {

"diff_url": "https://github.com/huggingface/datasets/pull/2163.diff",

"html_url": "https://github.com/huggingface/datasets/pull/2163",

"merged_at": "2021-04-06T14:39:59Z",

"patch_url": "https://github.com/huggingface/datasets/pull/2163.patch",

"url": "https://api.github.com/repos/huggingface/datasets/pulls/... | I thought someone from the community with less experience would be interested in fixing this issue, but that wasn't the case.

Fixes #2103 | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/2163/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/2163/timeline | null | null | true |

https://api.github.com/repos/huggingface/datasets/issues/2185 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/2185/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/2185/comments | https://api.github.com/repos/huggingface/datasets/issues/2185/events | https://github.com/huggingface/datasets/issues/2185 | 852,684,395 | MDU6SXNzdWU4NTI2ODQzOTU= | 2,185 | .map() and distributed training | {

"avatar_url": "https://avatars.githubusercontent.com/u/16107619?v=4",

"events_url": "https://api.github.com/users/VictorSanh/events{/privacy}",

"followers_url": "https://api.github.com/users/VictorSanh/followers",

"following_url": "https://api.github.com/users/VictorSanh/following{/other_user}",

"gists_url"... | [] | closed | false | null | [] | null | [

"Hi, one workaround would be to save the mapped(tokenized in your case) file using `save_to_disk`, and having each process load this file using `load_from_disk`. This is what I am doing, and in this case, I turn off the ability to automatically load from the cache.\r\n\r\nAlso, multiprocessing the map function seem... | 2021-04-07T18:22:14Z | 2021-10-23T07:11:15Z | 2021-04-09T15:38:31Z | MEMBER | null | null | null | Hi,

I have a question regarding distributed training and the `.map` call on a dataset.

I have a local dataset "my_custom_dataset" that I am loading with `datasets = load_from_disk(dataset_path=my_path)`.

`dataset` is then tokenized:

```python

datasets = load_from_disk(dataset_path=my_path)

[...]

def tokeni... | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/2185/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/2185/timeline | null | completed | false |

https://api.github.com/repos/huggingface/datasets/issues/1514 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/1514/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/1514/comments | https://api.github.com/repos/huggingface/datasets/issues/1514/events | https://github.com/huggingface/datasets/issues/1514 | 764,017,148 | MDU6SXNzdWU3NjQwMTcxNDg= | 1,514 | how to get all the options of a property in datasets | {

"avatar_url": "https://avatars.githubusercontent.com/u/6278280?v=4",

"events_url": "https://api.github.com/users/rabeehk/events{/privacy}",

"followers_url": "https://api.github.com/users/rabeehk/followers",

"following_url": "https://api.github.com/users/rabeehk/following{/other_user}",

"gists_url": "https:/... | [

{

"color": "d876e3",

"default": true,

"description": "Further information is requested",

"id": 1935892912,

"name": "question",

"node_id": "MDU6TGFiZWwxOTM1ODkyOTEy",

"url": "https://api.github.com/repos/huggingface/datasets/labels/question"

}

] | closed | false | null | [] | null | [

"In a dataset, labels correspond to the `ClassLabel` feature that has the `names` property that returns string represenation of the integer classes (or `num_classes` to get the number of different classes).",

"I think the `features` attribute of the dataset object is what you are looking for:\r\n```\r\n>>> datase... | 2020-12-12T16:24:08Z | 2022-05-25T16:27:29Z | 2022-05-25T16:27:29Z | CONTRIBUTOR | null | null | null | Hi

could you tell me how I can get all unique options of a property of dataset?

for instance in case of boolq, if the user wants to know which unique labels it has, is there a way to access unique labels without getting all training data lables and then forming a set i mean? thanks | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/1514/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/1514/timeline | null | completed | false |

https://api.github.com/repos/huggingface/datasets/issues/5574 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/5574/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/5574/comments | https://api.github.com/repos/huggingface/datasets/issues/5574/events | https://github.com/huggingface/datasets/issues/5574 | 1,598,104,691 | I_kwDODunzps5fQSRz | 5,574 | c4 dataset streaming fails with `FileNotFoundError` | {

"avatar_url": "https://avatars.githubusercontent.com/u/202907?v=4",

"events_url": "https://api.github.com/users/krasserm/events{/privacy}",

"followers_url": "https://api.github.com/users/krasserm/followers",

"following_url": "https://api.github.com/users/krasserm/following{/other_user}",

"gists_url": "https... | [] | closed | false | null | [] | null | [

"Also encountering this issue for every dataset I try to stream! Installed datasets from main:\r\n```\r\n- `datasets` version: 2.10.1.dev0\r\n- Platform: macOS-13.1-arm64-arm-64bit\r\n- Python version: 3.9.13\r\n- PyArrow version: 10.0.1\r\n- Pandas version: 1.5.2\r\n```\r\n\r\nRepro:\r\n```python\r\nfrom datasets ... | 2023-02-24T07:57:32Z | 2023-12-18T07:32:32Z | 2023-02-27T04:03:38Z | NONE | null | null | null | ### Describe the bug

Loading the `c4` dataset in streaming mode with `load_dataset("c4", "en", split="validation", streaming=True)` and then using it fails with a `FileNotFoundException`.

### Steps to reproduce the bug

```python

from datasets import load_dataset

dataset = load_dataset("c4", "en", split="train", ... | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/5574/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/5574/timeline | null | completed | false |

https://api.github.com/repos/huggingface/datasets/issues/1718 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/1718/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/1718/comments | https://api.github.com/repos/huggingface/datasets/issues/1718/events | https://github.com/huggingface/datasets/issues/1718 | 783,474,753 | MDU6SXNzdWU3ODM0NzQ3NTM= | 1,718 | Possible cache miss in datasets | {

"avatar_url": "https://avatars.githubusercontent.com/u/18296312?v=4",

"events_url": "https://api.github.com/users/ofirzaf/events{/privacy}",

"followers_url": "https://api.github.com/users/ofirzaf/followers",

"following_url": "https://api.github.com/users/ofirzaf/following{/other_user}",

"gists_url": "https:... | [] | closed | false | null | [] | null | [

"Thanks for reporting !\r\nI was able to reproduce thanks to your code and find the origin of the bug.\r\nThe cache was not reusing the same file because one object was not deterministic. It comes from a conversion from `set` to `list` in the `datasets.arrrow_dataset.transmit_format` function, where the resulting l... | 2021-01-11T15:37:31Z | 2022-06-29T14:54:42Z | 2021-01-26T02:47:59Z | NONE | null | null | null | Hi,

I am using the datasets package and even though I run the same data processing functions, datasets always recomputes the function instead of using cache.

I have attached an example script that for me reproduces the problem.

In the attached example the second map function always recomputes instead of loading fr... | {

"+1": 2,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 2,

"url": "https://api.github.com/repos/huggingface/datasets/issues/1718/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/1718/timeline | null | completed | false |

https://api.github.com/repos/huggingface/datasets/issues/738 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/738/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/738/comments | https://api.github.com/repos/huggingface/datasets/issues/738/events | https://github.com/huggingface/datasets/pull/738 | 723,033,923 | MDExOlB1bGxSZXF1ZXN0NTA0NjkxNjM4 | 738 | Replace seqeval code with original classification_report for simplicity | {

"avatar_url": "https://avatars.githubusercontent.com/u/6737785?v=4",

"events_url": "https://api.github.com/users/Hironsan/events{/privacy}",

"followers_url": "https://api.github.com/users/Hironsan/followers",

"following_url": "https://api.github.com/users/Hironsan/following{/other_user}",

"gists_url": "http... | [] | closed | false | null | [] | null | [

"Hello,\r\n\r\nI ran https://github.com/huggingface/transformers/blob/master/examples/token-classification/run.sh\r\n\r\nAnd received this error:\r\n```\r\n100%|██████████| 407/407 [21:37<00:00, 3.44s/it]Traceback (most recent call last):\r\n File \"run_ner.py\", line 445, in <module>\r\n main()\r\n File \"ru... | 2020-10-16T08:51:45Z | 2021-01-21T16:07:15Z | 2020-10-19T10:31:12Z | CONTRIBUTOR | null | 0 | {

"diff_url": "https://github.com/huggingface/datasets/pull/738.diff",

"html_url": "https://github.com/huggingface/datasets/pull/738",

"merged_at": "2020-10-19T10:31:11Z",

"patch_url": "https://github.com/huggingface/datasets/pull/738.patch",

"url": "https://api.github.com/repos/huggingface/datasets/pulls/738... | Recently, the original seqeval has enabled us to get per type scores and overall scores as a dictionary.

This PR replaces the current code with the original function(`classification_report`) to simplify it.

Also, the original code has been updated to fix #352.

- Related issue: https://github.com/chakki-works/seq... | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/738/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/738/timeline | null | null | true |

https://api.github.com/repos/huggingface/datasets/issues/1636 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/1636/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/1636/comments | https://api.github.com/repos/huggingface/datasets/issues/1636/events | https://github.com/huggingface/datasets/issues/1636 | 774,574,378 | MDU6SXNzdWU3NzQ1NzQzNzg= | 1,636 | winogrande cannot be dowloaded | {

"avatar_url": "https://avatars.githubusercontent.com/u/10137?v=4",

"events_url": "https://api.github.com/users/ghost/events{/privacy}",

"followers_url": "https://api.github.com/users/ghost/followers",

"following_url": "https://api.github.com/users/ghost/following{/other_user}",

"gists_url": "https://api.git... | [] | closed | false | null | [] | null | [

"I have same issue for other datasets (`myanmar_news` in my case).\r\n\r\nA version of `datasets` runs correctly on my local machine (**without GPU**) which looking for the dataset at \r\n```\r\nhttps://raw.githubusercontent.com/huggingface/datasets/master/datasets/myanmar_news/myanmar_news.py\r\n```\r\n\r\nMeanwhi... | 2020-12-24T22:28:22Z | 2022-10-05T12:35:44Z | 2022-10-05T12:35:44Z | NONE | null | null | null | Hi,

I am getting this error when trying to run the codes on the cloud. Thank you for any suggestion and help on this @lhoestq

```

File "./finetune_trainer.py", line 318, in <module>

main()

File "./finetune_trainer.py", line 148, in main

for task in data_args.tasks]

File "./finetune_trainer.py", ... | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/1636/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/1636/timeline | null | completed | false |

https://api.github.com/repos/huggingface/datasets/issues/4501 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/4501/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/4501/comments | https://api.github.com/repos/huggingface/datasets/issues/4501/events | https://github.com/huggingface/datasets/pull/4501 | 1,272,300,646 | PR_kwDODunzps45th2M | 4,501 | Corrected broken links in doc | {

"avatar_url": "https://avatars.githubusercontent.com/u/22726840?v=4",

"events_url": "https://api.github.com/users/clefourrier/events{/privacy}",

"followers_url": "https://api.github.com/users/clefourrier/followers",

"following_url": "https://api.github.com/users/clefourrier/following{/other_user}",

"gists_u... | [] | closed | false | null | [] | null | [

"_The documentation is not available anymore as the PR was closed or merged._"

] | 2022-06-15T14:12:17Z | 2022-06-15T15:11:05Z | 2022-06-15T15:00:56Z | MEMBER | null | 0 | {

"diff_url": "https://github.com/huggingface/datasets/pull/4501.diff",

"html_url": "https://github.com/huggingface/datasets/pull/4501",

"merged_at": "2022-06-15T15:00:56Z",

"patch_url": "https://github.com/huggingface/datasets/pull/4501.patch",

"url": "https://api.github.com/repos/huggingface/datasets/pulls/... | null | {

"+1": 1,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 1,

"url": "https://api.github.com/repos/huggingface/datasets/issues/4501/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/4501/timeline | null | null | true |

https://api.github.com/repos/huggingface/datasets/issues/2697 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/2697/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/2697/comments | https://api.github.com/repos/huggingface/datasets/issues/2697/events | https://github.com/huggingface/datasets/pull/2697 | 950,021,623 | MDExOlB1bGxSZXF1ZXN0Njk0NjMyODg0 | 2,697 | Fix import on Colab | {

"avatar_url": "https://avatars.githubusercontent.com/u/32437151?v=4",

"events_url": "https://api.github.com/users/nateraw/events{/privacy}",

"followers_url": "https://api.github.com/users/nateraw/followers",

"following_url": "https://api.github.com/users/nateraw/following{/other_user}",

"gists_url": "https:... | [] | closed | false | null | [] | null | [

"@lhoestq @albertvillanova - It might be a good idea to have a patch release after this gets merged (presumably tomorrow morning when you're around). The Colab issue linked to this PR is a pretty big blocker. "

] | 2021-07-21T19:03:38Z | 2021-07-22T07:09:08Z | 2021-07-22T07:09:07Z | CONTRIBUTOR | null | 0 | {

"diff_url": "https://github.com/huggingface/datasets/pull/2697.diff",

"html_url": "https://github.com/huggingface/datasets/pull/2697",

"merged_at": "2021-07-22T07:09:06Z",

"patch_url": "https://github.com/huggingface/datasets/pull/2697.patch",

"url": "https://api.github.com/repos/huggingface/datasets/pulls/... | Fix #2695, fix #2700. | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 1,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 1,

"url": "https://api.github.com/repos/huggingface/datasets/issues/2697/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/2697/timeline | null | null | true |

https://api.github.com/repos/huggingface/datasets/issues/2431 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/2431/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/2431/comments | https://api.github.com/repos/huggingface/datasets/issues/2431/events | https://github.com/huggingface/datasets/issues/2431 | 907,413,691 | MDU6SXNzdWU5MDc0MTM2OTE= | 2,431 | DuplicatedKeysError when trying to load adversarial_qa | {

"avatar_url": "https://avatars.githubusercontent.com/u/21348833?v=4",

"events_url": "https://api.github.com/users/hanss0n/events{/privacy}",

"followers_url": "https://api.github.com/users/hanss0n/followers",

"following_url": "https://api.github.com/users/hanss0n/following{/other_user}",

"gists_url": "https:... | [

{

"color": "d73a4a",

"default": true,

"description": "Something isn't working",

"id": 1935892857,

"name": "bug",

"node_id": "MDU6TGFiZWwxOTM1ODkyODU3",

"url": "https://api.github.com/repos/huggingface/datasets/labels/bug"

}

] | closed | false | null | [] | null | [

"Thanks for reporting !\r\n#2433 fixed the issue, thanks @mariosasko :)\r\n\r\nWe'll do a patch release soon of the library.\r\nIn the meantime, you can use the fixed version of adversarial_qa by adding `script_version=\"master\"` in `load_dataset`"

] | 2021-05-31T12:11:19Z | 2021-06-01T08:54:03Z | 2021-06-01T08:52:11Z | NONE | null | null | null | ## Describe the bug

A clear and concise description of what the bug is.

## Steps to reproduce the bug

```python

dataset = load_dataset('adversarial_qa', 'adversarialQA')

```

## Expected results

The dataset should be loaded into memory

## Actual results

>DuplicatedKeysError: FAILURE TO GENERATE DATASET ... | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/2431/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/2431/timeline | null | completed | false |

https://api.github.com/repos/huggingface/datasets/issues/2600 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/2600/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/2600/comments | https://api.github.com/repos/huggingface/datasets/issues/2600/events | https://github.com/huggingface/datasets/issues/2600 | 938,086,745 | MDU6SXNzdWU5MzgwODY3NDU= | 2,600 | Crash when using multiprocessing (`num_proc` > 1) on `filter` and all samples are discarded | {

"avatar_url": "https://avatars.githubusercontent.com/u/4904985?v=4",

"events_url": "https://api.github.com/users/mxschmdt/events{/privacy}",

"followers_url": "https://api.github.com/users/mxschmdt/followers",

"following_url": "https://api.github.com/users/mxschmdt/following{/other_user}",

"gists_url": "http... | [

{

"color": "d73a4a",

"default": true,

"description": "Something isn't working",

"id": 1935892857,

"name": "bug",

"node_id": "MDU6TGFiZWwxOTM1ODkyODU3",

"url": "https://api.github.com/repos/huggingface/datasets/labels/bug"

}

] | closed | false | null | [] | null | [] | 2021-07-06T16:53:25Z | 2021-07-07T12:50:31Z | 2021-07-07T12:50:31Z | CONTRIBUTOR | null | null | null | ## Describe the bug

If `filter` is applied to a dataset using multiprocessing (`num_proc` > 1) and all sharded datasets are empty afterwards (due to all samples being discarded), the program crashes.

## Steps to reproduce the bug

```python

from datasets import Dataset

data = Dataset.from_dict({'id': [0,1]})

dat... | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/2600/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/2600/timeline | null | completed | false |

https://api.github.com/repos/huggingface/datasets/issues/6484 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/6484/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/6484/comments | https://api.github.com/repos/huggingface/datasets/issues/6484/events | https://github.com/huggingface/datasets/issues/6484 | 2,033,333,294 | I_kwDODunzps55MjQu | 6,484 | [Feature Request] Dataset versioning | {

"avatar_url": "https://avatars.githubusercontent.com/u/47979198?v=4",

"events_url": "https://api.github.com/users/kenfus/events{/privacy}",

"followers_url": "https://api.github.com/users/kenfus/followers",

"following_url": "https://api.github.com/users/kenfus/following{/other_user}",

"gists_url": "https://a... | [] | open | false | null | [] | null | [

"Hello @kenfus, this is meant to be possible to do yes. Let me ping @lhoestq or @mariosasko from the `datasets` team (`huggingface_hub` is only the underlying library to download files from the Hub but here it looks more like a `datasets` problem). ",

"Hi! https://github.com/huggingface/datasets/pull/6459 will fi... | 2023-12-08T16:01:35Z | 2023-12-11T19:13:46Z | null | NONE | null | null | null | **Is your feature request related to a problem? Please describe.**

I am working on a project, where I would like to test different preprocessing methods for my ML-data. Thus, I would like to work a lot with revisions and compare them. Currently, I was not able to make it work with the revision keyword because it was n... | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/6484/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/6484/timeline | null | null | false |

https://api.github.com/repos/huggingface/datasets/issues/4152 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/4152/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/4152/comments | https://api.github.com/repos/huggingface/datasets/issues/4152/events | https://github.com/huggingface/datasets/issues/4152 | 1,202,034,115 | I_kwDODunzps5HpZXD | 4,152 | ArrayND error in pyarrow 5 | {

"avatar_url": "https://avatars.githubusercontent.com/u/42851186?v=4",

"events_url": "https://api.github.com/users/lhoestq/events{/privacy}",

"followers_url": "https://api.github.com/users/lhoestq/followers",

"following_url": "https://api.github.com/users/lhoestq/following{/other_user}",

"gists_url": "https:... | [] | closed | false | null | [] | null | [

"Where do we bump the required pyarrow version? Any inputs on how I fix this issue? ",

"We need to bump it in `setup.py` as well as update some CI job to use pyarrow 6 instead of 5 in `.circleci/config.yaml` and `.github/workflows/benchmarks.yaml`"

] | 2022-04-12T15:41:40Z | 2022-05-04T09:29:46Z | 2022-05-04T09:29:46Z | MEMBER | null | null | null | As found in https://github.com/huggingface/datasets/pull/3903, The ArrayND features fail on pyarrow 5:

```python

import pyarrow as pa

from datasets import Array2D

from datasets.table import cast_array_to_feature

arr = pa.array([[[0]]])

feature_type = Array2D(shape=(1, 1), dtype="int64")

cast_array_to_feature(a... | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/4152/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/4152/timeline | null | completed | false |

https://api.github.com/repos/huggingface/datasets/issues/2355 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/2355/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/2355/comments | https://api.github.com/repos/huggingface/datasets/issues/2355/events | https://github.com/huggingface/datasets/pull/2355 | 890,484,408 | MDExOlB1bGxSZXF1ZXN0NjQzNDk5NTIz | 2,355 | normalized TOCs and titles in data cards | {

"avatar_url": "https://avatars.githubusercontent.com/u/10469459?v=4",

"events_url": "https://api.github.com/users/yjernite/events{/privacy}",

"followers_url": "https://api.github.com/users/yjernite/followers",

"following_url": "https://api.github.com/users/yjernite/following{/other_user}",

"gists_url": "htt... | [] | closed | false | null | [] | null | [

"Oh right! I'd be in favor of still having the same TOC across the board, we can either leave it as is or add a `[More Info Needed]` `Contributions` Section wherever it's currently missing, wdyt?",

"(I thought those were programmatically updated based on git history :D )",

"Merging for now to avoid conflict sin... | 2021-05-12T20:59:59Z | 2021-05-14T13:23:12Z | 2021-05-14T13:23:12Z | MEMBER | null | 0 | {

"diff_url": "https://github.com/huggingface/datasets/pull/2355.diff",

"html_url": "https://github.com/huggingface/datasets/pull/2355",

"merged_at": "2021-05-14T13:23:12Z",

"patch_url": "https://github.com/huggingface/datasets/pull/2355.patch",

"url": "https://api.github.com/repos/huggingface/datasets/pulls/... | I started fixing some of the READMEs that were failing the tests introduced by @gchhablani but then realized that there were some consistent differences between earlier and newer versions of some of the titles (e.g. Data Splits vs Data Splits Sample Size, Supported Tasks vs Supported Tasks and Leaderboards). We also ha... | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 1,

"total_count": 1,

"url": "https://api.github.com/repos/huggingface/datasets/issues/2355/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/2355/timeline | null | null | true |

https://api.github.com/repos/huggingface/datasets/issues/1733 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/1733/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/1733/comments | https://api.github.com/repos/huggingface/datasets/issues/1733/events | https://github.com/huggingface/datasets/issues/1733 | 784,903,002 | MDU6SXNzdWU3ODQ5MDMwMDI= | 1,733 | connection issue with glue, what is the data url for glue? | {

"avatar_url": "https://avatars.githubusercontent.com/u/10137?v=4",

"events_url": "https://api.github.com/users/ghost/events{/privacy}",

"followers_url": "https://api.github.com/users/ghost/followers",

"following_url": "https://api.github.com/users/ghost/following{/other_user}",

"gists_url": "https://api.git... | [] | closed | false | null | [] | null | [

"Hello @juliahane, which config of GLUE causes you trouble?\r\nThe URLs are defined in the dataset script source code: https://github.com/huggingface/datasets/blob/master/datasets/glue/glue.py"

] | 2021-01-13T08:37:40Z | 2021-08-04T18:13:55Z | 2021-08-04T18:13:55Z | NONE | null | null | null | Hi

my codes sometimes fails due to connection issue with glue, could you tell me how I can have the URL datasets library is trying to read GLUE from to test the machines I am working on if there is an issue on my side or not

thanks | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/1733/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/1733/timeline | null | completed | false |

https://api.github.com/repos/huggingface/datasets/issues/407 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/407/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/407/comments | https://api.github.com/repos/huggingface/datasets/issues/407/events | https://github.com/huggingface/datasets/issues/407 | 658,672,736 | MDU6SXNzdWU2NTg2NzI3MzY= | 407 | MissingBeamOptions for Wikipedia 20200501.en | {

"avatar_url": "https://avatars.githubusercontent.com/u/7490438?v=4",

"events_url": "https://api.github.com/users/mitchellgordon95/events{/privacy}",

"followers_url": "https://api.github.com/users/mitchellgordon95/followers",

"following_url": "https://api.github.com/users/mitchellgordon95/following{/other_user... | [] | closed | false | {

"avatar_url": "https://avatars.githubusercontent.com/u/42851186?v=4",

"events_url": "https://api.github.com/users/lhoestq/events{/privacy}",

"followers_url": "https://api.github.com/users/lhoestq/followers",

"following_url": "https://api.github.com/users/lhoestq/following{/other_user}",

"gists_url": "https:... | [

{

"avatar_url": "https://avatars.githubusercontent.com/u/42851186?v=4",

"events_url": "https://api.github.com/users/lhoestq/events{/privacy}",

"followers_url": "https://api.github.com/users/lhoestq/followers",

"following_url": "https://api.github.com/users/lhoestq/following{/other_user}",

"gists... | null | [

"Fixed. Could you try again @mitchellgordon95 ?\r\nIt was due a file not being updated on S3.\r\n\r\nWe need to make sure all the datasets scripts get updated properly @julien-c ",

"Works for me! Thanks.",

"I found the same issue with almost any language other than English. (For English, it works). Will someone... | 2020-07-16T23:48:03Z | 2021-01-12T11:41:16Z | 2020-07-17T14:24:28Z | CONTRIBUTOR | null | null | null | There may or may not be a regression for the pre-processed Wikipedia dataset. This was working fine 10 commits ago (without having Apache Beam available):

```

nlp.load_dataset('wikipedia', "20200501.en", split='train')

```

And now, having pulled master, I get:

```

Downloading and preparing dataset wikipedia... | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/407/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/407/timeline | null | completed | false |

https://api.github.com/repos/huggingface/datasets/issues/4733 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/4733/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/4733/comments | https://api.github.com/repos/huggingface/datasets/issues/4733/events | https://github.com/huggingface/datasets/issues/4733 | 1,314,479,616 | I_kwDODunzps5OWV4A | 4,733 | rouge metric | {

"avatar_url": "https://avatars.githubusercontent.com/u/29248466?v=4",

"events_url": "https://api.github.com/users/asking28/events{/privacy}",

"followers_url": "https://api.github.com/users/asking28/followers",

"following_url": "https://api.github.com/users/asking28/following{/other_user}",

"gists_url": "htt... | [

{

"color": "d73a4a",

"default": true,

"description": "Something isn't working",

"id": 1935892857,

"name": "bug",

"node_id": "MDU6TGFiZWwxOTM1ODkyODU3",

"url": "https://api.github.com/repos/huggingface/datasets/labels/bug"

}

] | closed | false | {

"avatar_url": "https://avatars.githubusercontent.com/u/8515462?v=4",

"events_url": "https://api.github.com/users/albertvillanova/events{/privacy}",

"followers_url": "https://api.github.com/users/albertvillanova/followers",

"following_url": "https://api.github.com/users/albertvillanova/following{/other_user}",... | [

{

"avatar_url": "https://avatars.githubusercontent.com/u/8515462?v=4",

"events_url": "https://api.github.com/users/albertvillanova/events{/privacy}",

"followers_url": "https://api.github.com/users/albertvillanova/followers",

"following_url": "https://api.github.com/users/albertvillanova/following{/o... | null | [

"Fixed by:\r\n- #4735"

] | 2022-07-22T07:06:51Z | 2022-07-22T09:08:02Z | 2022-07-22T09:05:35Z | NONE | null | null | null | ## Describe the bug

A clear and concise description of what the bug is.

Loading Rouge metric gives error after latest rouge-score==0.0.7 release.

Downgrading rougemetric==0.0.4 works fine.

## Steps to reproduce the bug

```python

# Sample code to reproduce the bug

```

## Expected results

A clear and concis... | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/4733/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/4733/timeline | null | completed | false |

https://api.github.com/repos/huggingface/datasets/issues/4569 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/4569/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/4569/comments | https://api.github.com/repos/huggingface/datasets/issues/4569/events | https://github.com/huggingface/datasets/issues/4569 | 1,284,833,694 | I_kwDODunzps5MlQGe | 4,569 | Dataset Viewer issue for sst2 | {

"avatar_url": "https://avatars.githubusercontent.com/u/26859204?v=4",

"events_url": "https://api.github.com/users/lewtun/events{/privacy}",

"followers_url": "https://api.github.com/users/lewtun/followers",

"following_url": "https://api.github.com/users/lewtun/following{/other_user}",

"gists_url": "https://a... | [

{

"color": "E5583E",

"default": false,

"description": "Related to the dataset viewer on huggingface.co",

"id": 3470211881,

"name": "dataset-viewer",

"node_id": "LA_kwDODunzps7O1zsp",

"url": "https://api.github.com/repos/huggingface/datasets/labels/dataset-viewer"

}

] | closed | false | {

"avatar_url": "https://avatars.githubusercontent.com/u/8515462?v=4",

"events_url": "https://api.github.com/users/albertvillanova/events{/privacy}",

"followers_url": "https://api.github.com/users/albertvillanova/followers",

"following_url": "https://api.github.com/users/albertvillanova/following{/other_user}",... | [

{

"avatar_url": "https://avatars.githubusercontent.com/u/8515462?v=4",

"events_url": "https://api.github.com/users/albertvillanova/events{/privacy}",

"followers_url": "https://api.github.com/users/albertvillanova/followers",

"following_url": "https://api.github.com/users/albertvillanova/following{/o... | null | [

"Hi @lewtun, thanks for reporting.\r\n\r\nI have checked locally and refreshed the preview and it seems working smooth now:\r\n```python\r\nIn [8]: ds\r\nOut[8]: \r\nDatasetDict({\r\n train: Dataset({\r\n features: ['idx', 'sentence', 'label'],\r\n num_rows: 67349\r\n })\r\n validation: Datas... | 2022-06-26T07:32:54Z | 2022-06-27T06:37:48Z | 2022-06-27T06:37:48Z | MEMBER | null | null | null | ### Link

https://huggingface.co/datasets/sst2

### Description

Not sure what is causing this, however it seems that `load_dataset("sst2")` also hangs (even though it downloads the files without problem):

```

Status code: 400

Exception: Exception

Message: Give up after 5 attempts with Connectio... | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/4569/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/4569/timeline | null | completed | false |

https://api.github.com/repos/huggingface/datasets/issues/4664 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/4664/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/4664/comments | https://api.github.com/repos/huggingface/datasets/issues/4664/events | https://github.com/huggingface/datasets/pull/4664 | 1,299,571,212 | PR_kwDODunzps47IvfG | 4,664 | Add stanford dog dataset | {

"avatar_url": "https://avatars.githubusercontent.com/u/8711912?v=4",

"events_url": "https://api.github.com/users/khushmeeet/events{/privacy}",

"followers_url": "https://api.github.com/users/khushmeeet/followers",

"following_url": "https://api.github.com/users/khushmeeet/following{/other_user}",

"gists_url":... | [] | closed | false | null | [] | null | [

"_The documentation is not available anymore as the PR was closed or merged._",

"Hi @khushmeeet, thanks for your contribution.\r\n\r\nBut wouldn't it be better to add this dataset to the Hub? \r\n- https://huggingface.co/docs/datasets/share\r\n- https://huggingface.co/docs/datasets/dataset_script",

"Hi @albertv... | 2022-07-09T04:46:07Z | 2022-07-15T13:30:32Z | 2022-07-15T13:15:42Z | CONTRIBUTOR | null | 0 | {

"diff_url": "https://github.com/huggingface/datasets/pull/4664.diff",

"html_url": "https://github.com/huggingface/datasets/pull/4664",

"merged_at": null,

"patch_url": "https://github.com/huggingface/datasets/pull/4664.patch",

"url": "https://api.github.com/repos/huggingface/datasets/pulls/4664"

} | This PR is for adding dataset, related to issue #4504.

We are adding Stanford dog breed dataset. It is a multi class image classification dataset.

Details can be found here - http://vision.stanford.edu/aditya86/ImageNetDogs/

Tests on dummy data is failing currently, which I am looking into. | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/4664/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/4664/timeline | null | null | true |

https://api.github.com/repos/huggingface/datasets/issues/3995 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/3995/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/3995/comments | https://api.github.com/repos/huggingface/datasets/issues/3995/events | https://github.com/huggingface/datasets/pull/3995 | 1,178,232,623 | PR_kwDODunzps404054 | 3,995 | Close `PIL.Image` file handler in `Image.decode_example` | {

"avatar_url": "https://avatars.githubusercontent.com/u/47462742?v=4",

"events_url": "https://api.github.com/users/mariosasko/events{/privacy}",

"followers_url": "https://api.github.com/users/mariosasko/followers",

"following_url": "https://api.github.com/users/mariosasko/following{/other_user}",

"gists_url"... | [] | closed | false | null | [] | null | [

"_The documentation is not available anymore as the PR was closed or merged._"

] | 2022-03-23T14:51:48Z | 2022-03-23T18:24:52Z | 2022-03-23T18:19:27Z | CONTRIBUTOR | null | 0 | {

"diff_url": "https://github.com/huggingface/datasets/pull/3995.diff",

"html_url": "https://github.com/huggingface/datasets/pull/3995",

"merged_at": "2022-03-23T18:19:26Z",

"patch_url": "https://github.com/huggingface/datasets/pull/3995.patch",