hexsha stringlengths 40 40 | size int64 5 1.04M | ext stringclasses 6

values | lang stringclasses 1

value | max_stars_repo_path stringlengths 3 344 | max_stars_repo_name stringlengths 5 125 | max_stars_repo_head_hexsha stringlengths 40 78 | max_stars_repo_licenses listlengths 1 11 | max_stars_count int64 1 368k ⌀ | max_stars_repo_stars_event_min_datetime stringlengths 24 24 ⌀ | max_stars_repo_stars_event_max_datetime stringlengths 24 24 ⌀ | max_issues_repo_path stringlengths 3 344 | max_issues_repo_name stringlengths 5 125 | max_issues_repo_head_hexsha stringlengths 40 78 | max_issues_repo_licenses listlengths 1 11 | max_issues_count int64 1 116k ⌀ | max_issues_repo_issues_event_min_datetime stringlengths 24 24 ⌀ | max_issues_repo_issues_event_max_datetime stringlengths 24 24 ⌀ | max_forks_repo_path stringlengths 3 344 | max_forks_repo_name stringlengths 5 125 | max_forks_repo_head_hexsha stringlengths 40 78 | max_forks_repo_licenses listlengths 1 11 | max_forks_count int64 1 105k ⌀ | max_forks_repo_forks_event_min_datetime stringlengths 24 24 ⌀ | max_forks_repo_forks_event_max_datetime stringlengths 24 24 ⌀ | content stringlengths 5 1.04M | avg_line_length float64 1.14 851k | max_line_length int64 1 1.03M | alphanum_fraction float64 0 1 | lid stringclasses 191

values | lid_prob float64 0.01 1 |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

9e14d9b96366d1545a457f48a662e84f56d437eb | 708 | md | Markdown | CHANGELOG.md | bconnorwhite/parse-lcov | 2b008afbeeb824e9999156cdaec1d189f101d21a | [

"MIT"

] | 1 | 2020-09-27T18:22:13.000Z | 2020-09-27T18:22:13.000Z | CHANGELOG.md | bconnorwhite/parse-lcov | 2b008afbeeb824e9999156cdaec1d189f101d21a | [

"MIT"

] | 1 | 2020-09-27T23:31:09.000Z | 2020-09-30T05:04:20.000Z | CHANGELOG.md | bconnorwhite/parse-lcov | 2b008afbeeb824e9999156cdaec1d189f101d21a | [

"MIT"

] | null | null | null | ## [1.0.4](https://github.com/bconnorwhite/parse-lcov/compare/v1.0.3...v1.0.4) (2020-10-06)

## [1.0.3](https://github.com/bconnorwhite/parse-lcov/compare/v1.0.2...v1.0.3) (2020-10-06)

## [1.0.2](https://github.com/bconnorwhite/parse-lcov/compare/v1.0.1...v1.0.2) (2020-09-29)

### Bug Fixes

* fix issue parsing m... | 22.83871 | 140 | 0.701977 | kor_Hang | 0.077864 |

9e15305ac63dedbab232c8f01b5895b5739ae9c4 | 732 | md | Markdown | README.md | xiaomi-sa/falcon-eye | 8b4072e0f6e89957dea774666da8d635b88eaeaf | [

"MIT"

] | 9 | 2015-01-20T10:22:38.000Z | 2019-05-14T10:20:57.000Z | README.md | ljb-2000/falcon-eye | 8b4072e0f6e89957dea774666da8d635b88eaeaf | [

"MIT"

] | null | null | null | README.md | ljb-2000/falcon-eye | 8b4072e0f6e89957dea774666da8d635b88eaeaf | [

"MIT"

] | 6 | 2015-01-16T09:06:34.000Z | 2019-05-14T10:20:58.000Z | falcon-eye

==========

Linux monitoring tool. An agent running on your host collects and displays performance data, much like https://github.com/afaqurk/linux-dash

### install

```

mkdir -p $GOPATH/src/github.com/ulricqin

cd $GOPATH/src/github.com/ulricqin

git clone https://github.com/UlricQin/falcon-eye.git

cd $GOPATH/sr... | 26.142857 | 135 | 0.737705 | yue_Hant | 0.311322 |

9e15978910053592c10d3c404cbff7fbdf2e1c52 | 45 | md | Markdown | README.md | equinor/eds-zeroheight | cdc6b03e8fa43a79311a178b47102856fe281300 | [

"MIT"

] | null | null | null | README.md | equinor/eds-zeroheight | cdc6b03e8fa43a79311a178b47102856fe281300 | [

"MIT"

] | null | null | null | README.md | equinor/eds-zeroheight | cdc6b03e8fa43a79311a178b47102856fe281300 | [

"MIT"

] | null | null | null | # eds-zeroheight

Experiments in zero gravity

| 15 | 27 | 0.822222 | eng_Latn | 0.440948 |

9e15ce1c76a2381429ea98aaeb8fde2320a3a2dd | 87 | md | Markdown | _includes/02-image.md | jssol/markdown-portfolio | cd92fdd4c1746b92cad7801acbcbc7e5bcefe3d1 | [

"MIT"

] | null | null | null | _includes/02-image.md | jssol/markdown-portfolio | cd92fdd4c1746b92cad7801acbcbc7e5bcefe3d1 | [

"MIT"

] | 5 | 2022-01-29T20:22:38.000Z | 2022-01-30T21:15:19.000Z | _includes/02-image.md | jssol/markdown-portfolio | cd92fdd4c1746b92cad7801acbcbc7e5bcefe3d1 | [

"MIT"

] | null | null | null |

| 43.5 | 86 | 0.816092 | kor_Hang | 0.206476 |

9e15f2dcd2bc8df4f56be0e5a774017b258529b9 | 1,724 | md | Markdown | moveit/README.md | R3tr074/nlw4-monorepo | 2880af29932942741f8fb52d5e0e72e77eaf5e22 | [

"MIT"

] | 15 | 2021-02-28T19:34:00.000Z | 2021-11-18T17:15:25.000Z | moveit/README.md | R3tr074/nlw4-monorepo | 2880af29932942741f8fb52d5e0e72e77eaf5e22 | [

"MIT"

] | null | null | null | moveit/README.md | R3tr074/nlw4-monorepo | 2880af29932942741f8fb52d5e0e72e77eaf5e22 | [

"MIT"

] | null | null | null | <h1 align="center">

Move it | NLW#4

</h1>

<p align="center"> Application developed in the fourth edition of Rocketseat Next Level Week </p>

<p align="center">

<a href="#the-project">The project</a> •

<a href="#technologies">Technologies</a> •

<a href="#contribution">Contribution</a> •

<a href="#author">Author... | 35.916667 | 361 | 0.731439 | eng_Latn | 0.952917 |

9e1684136b1692527c6a6b73b0b207f9585a7394 | 233 | md | Markdown | docs/UserCustomClaims.md | OakLabsInc/javascript-dashboard-api | 1838b43d07c3352faa24646f1f6886d44eb05e0c | [

"Apache-2.0"

] | null | null | null | docs/UserCustomClaims.md | OakLabsInc/javascript-dashboard-api | 1838b43d07c3352faa24646f1f6886d44eb05e0c | [

"Apache-2.0"

] | null | null | null | docs/UserCustomClaims.md | OakLabsInc/javascript-dashboard-api | 1838b43d07c3352faa24646f1f6886d44eb05e0c | [

"Apache-2.0"

] | null | null | null | # dashboard.UserCustomClaims

## Properties

Name | Type | Description | Notes

------------ | ------------- | ------------- | -------------

**domain** | **String** | |

**domainUser** | **String** | |

**role** | **String** | |

| 21.181818 | 60 | 0.416309 | yue_Hant | 0.244612 |

9e16d21de68168a943fff7cdd9c5c60c44c452ca | 2,884 | md | Markdown | api/qsharp/microsoft.quantum.characterization.estimatefrequencya.md | FalkW/quantum-docs-pr.de-DE | 55f8dcffe38c522c4c5f22fadc2ad3bbc2e6b4c8 | [

"CC-BY-4.0",

"MIT"

] | 2 | 2020-05-19T20:13:03.000Z | 2020-07-23T08:16:03.000Z | api/qsharp/microsoft.quantum.characterization.estimatefrequencya.md | FalkW/quantum-docs-pr.de-DE | 55f8dcffe38c522c4c5f22fadc2ad3bbc2e6b4c8 | [

"CC-BY-4.0",

"MIT"

] | 4 | 2020-02-20T08:56:47.000Z | 2021-02-02T23:04:31.000Z | api/qsharp/microsoft.quantum.characterization.estimatefrequencya.md | FalkW/quantum-docs-pr.de-DE | 55f8dcffe38c522c4c5f22fadc2ad3bbc2e6b4c8 | [

"CC-BY-4.0",

"MIT"

] | 3 | 2020-04-15T13:01:51.000Z | 2021-11-15T09:21:30.000Z | ---

uid: Microsoft.Quantum.Characterization.EstimateFrequencyA

title: EstimateFrequencyA operation

ms.date: 1/23/2021 12:00:00 AM

ms.topic: article

qsharp.kind: operation

qsharp.namespace: Microsoft.Quantum.Characterization

qsharp.name: EstimateFrequencyA

qsharp.summary: Given a preparation that is adjointable and measur... | 48.066667 | 323 | 0.76699 | deu_Latn | 0.810489 |

9e16d6801e4c5abeb3fcb0cfe50afb40dc80a0b7 | 2,327 | md | Markdown | docs/database-engine/deprecated-database-engine-features-in-sql-server-version-15.md | roaming-debug/sql-docs.zh-cn | 6a1bc73995cfdbde269233c6342e136f32349419 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | docs/database-engine/deprecated-database-engine-features-in-sql-server-version-15.md | roaming-debug/sql-docs.zh-cn | 6a1bc73995cfdbde269233c6342e136f32349419 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | docs/database-engine/deprecated-database-engine-features-in-sql-server-version-15.md | roaming-debug/sql-docs.zh-cn | 6a1bc73995cfdbde269233c6342e136f32349419 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | ---

description: 'Deprecated Database Engine features in [!INCLUDE[sssql19-md](../includes/sssql19-md.md)]'

title: Deprecated Database Engine features in SQL Server 2019 | Microsoft Docs

titleSuffix: SQL Server 2019

ms.custom: seo-lt-2019

ms.date: 02/12/2021

ms.prod: sql

ms.prod_service: high-availability

ms.reviewer: ''

ms.technology: release-landing

ms.topic: conceptual... | 40.12069 | 272 | 0.776536 | yue_Hant | 0.511332 |

9e1754b7311295c06f21826fb05486a4393658ec | 599 | md | Markdown | README.md | yboyer/micrometa | 11f5b45167c3dc97e4f8527108011b9683c0581d | [

"MIT"

] | null | null | null | README.md | yboyer/micrometa | 11f5b45167c3dc97e4f8527108011b9683c0581d | [

"MIT"

] | null | null | null | README.md | yboyer/micrometa | 11f5b45167c3dc97e4f8527108011b9683c0581d | [

"MIT"

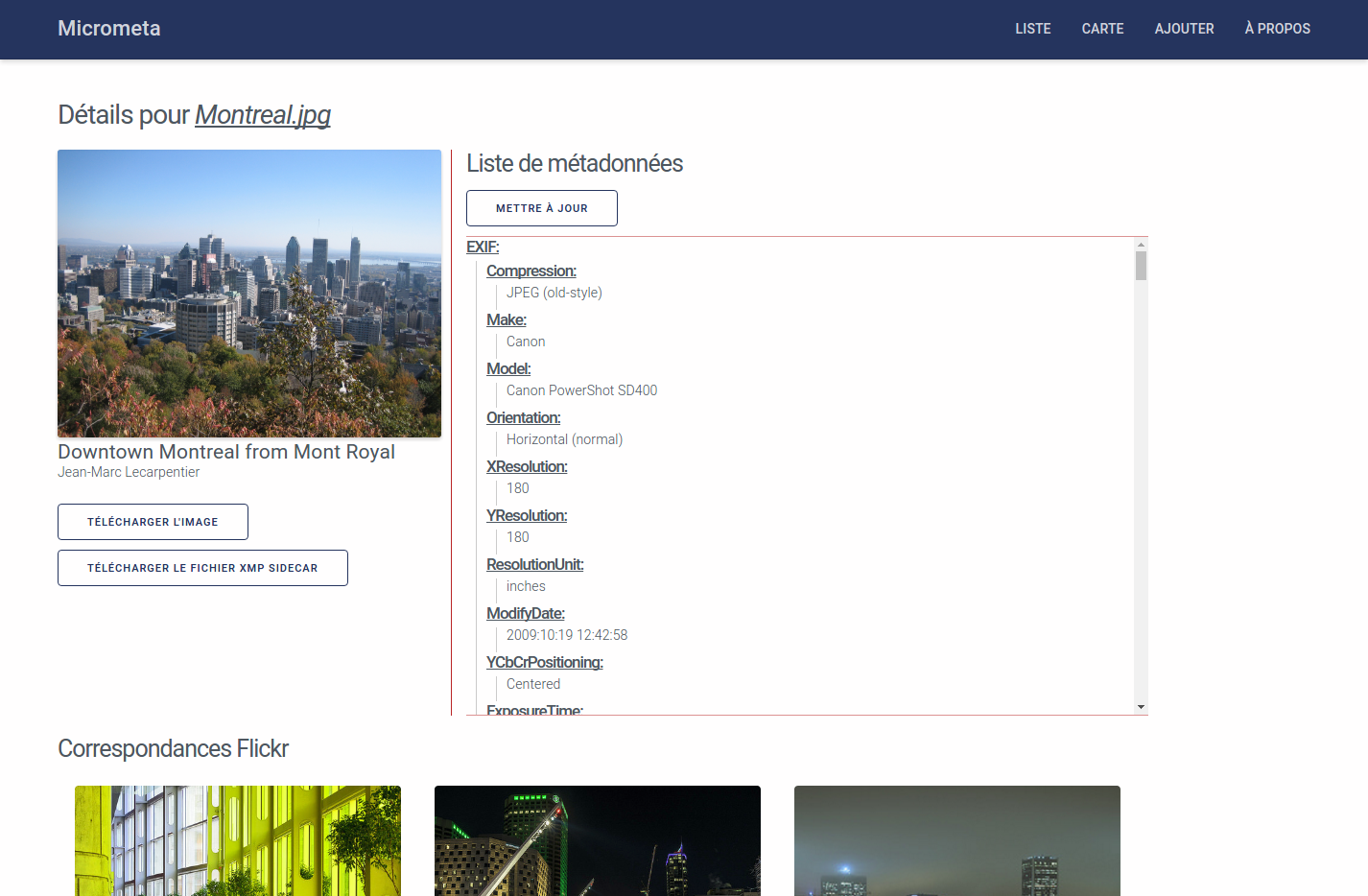

] | null | null | null | # Micrometa

> Subject: https://ensweb.users.info.unicaen.fr/devoirs/m2-dnr-umdn3c/

### Technologies

- `Silex`

- `Twig`

- `SASS`

- `ExifTool`

## Prerequisites:

- [`composer`](https://getcomposer.org/download/)

- [`E... | 22.185185 | 90 | 0.66611 | yue_Hant | 0.292393 |

9e1822ddac112e20e99ff2ccd3698b42956a33d2 | 468 | markdown | Markdown | _posts/2015-03-08-how-can-i-be-as-great-as-steve-jobs.markdown | malachai0/raw | 4ca5c13a34ad276c8c4f1991988fc16e7f8dd063 | [

"MIT"

] | 1 | 2019-01-28T09:08:07.000Z | 2019-01-28T09:08:07.000Z | _posts/2015-03-08-how-can-i-be-as-great-as-steve-jobs.markdown | malachai0/raw | 4ca5c13a34ad276c8c4f1991988fc16e7f8dd063 | [

"MIT"

] | null | null | null | _posts/2015-03-08-how-can-i-be-as-great-as-steve-jobs.markdown | malachai0/raw | 4ca5c13a34ad276c8c4f1991988fc16e7f8dd063 | [

"MIT"

] | 1 | 2019-01-28T09:08:09.000Z | 2019-01-28T09:08:09.000Z | ---

layout: post

title: How can I be as great as Steve Jobs?

date: '2015-03-08 12:57:10'

---

Young Composer: “Herr Mozart, I am thinking of writing a symphony. How should I get started?”

Mozart: “A symphony is a very complex musical form and you are still young. Perhaps you should start with something simpler, like a... | 36 | 142 | 0.741453 | eng_Latn | 0.998856 |

9e1836f1a7573cacd9b2d96bd120fbb7e2e96c18 | 1,053 | md | Markdown | README.md | Bluette1/progressbar | caab6ef96a8c8748f8fa29e3b941d0b23254f52b | [

"MIT"

] | null | null | null | README.md | Bluette1/progressbar | caab6ef96a8c8748f8fa29e3b941d0b23254f52b | [

"MIT"

] | 1 | 2021-03-22T07:41:13.000Z | 2021-03-22T07:41:13.000Z | README.md | Bluette1/progressbar | caab6ef96a8c8748f8fa29e3b941d0b23254f52b | [

"MIT"

] | null | null | null | # progressbar

A simple progress bar built using JavaScript, HTML, and CSS.

## Built With

- JavaScript

- HTML

- CSS

## Description

A simple progress bar built using JavaScript, HTML, and CSS.

[Demo](https://bluette1.github.io/progressbar/)

## Authors

👤 **Maryl... | 21.9375 | 86 | 0.734093 | eng_Latn | 0.725682 |

9e1a1cdca966f40c0a239b28d2049f4ec4fbe3b2 | 4,284 | md | Markdown | _drafts/2017-06-02-&&-and.md | bensk/SE8_p5js | e330ed73a09f7d8cbb257b03ce649e7df32fabf3 | [

"MIT"

] | null | null | null | _drafts/2017-06-02-&&-and.md | bensk/SE8_p5js | e330ed73a09f7d8cbb257b03ce649e7df32fabf3 | [

"MIT"

] | null | null | null | _drafts/2017-06-02-&&-and.md | bensk/SE8_p5js | e330ed73a09f7d8cbb257b03ce649e7df32fabf3 | [

"MIT"

] | null | null | null | ---

title: AND

date: 2017-06-02 16:38:00 Z

layout: post

---

## Do Now (Google Classroom)

How can you make an ellipse that _ALWAYS_ appears 20px above the middle of the canvas?

When you're done answering, return to your [Traffic Light](http://bsk.education/SE8_p5js/2016/05/... | 33.46875 | 192 | 0.562558 | eng_Latn | 0.9731 |

9e1a523b17b1c1c3322402735271890699f03246 | 830 | md | Markdown | Network/Coding/close/close.md | nicesu/wiki | 8990270ceba0fece83ceddeaae834ceeff0774af | [

"MIT"

] | 357 | 2015-07-17T02:16:53.000Z | 2022-03-30T17:54:42.000Z | Network/Coding/close/close.md | nicesu/wiki | 8990270ceba0fece83ceddeaae834ceeff0774af | [

"MIT"

] | 36 | 2016-06-04T17:06:14.000Z | 2021-12-31T09:40:16.000Z | Network/Coding/close/close.md | nicesu/wiki | 8990270ceba0fece83ceddeaae834ceeff0774af | [

"MIT"

] | 80 | 2015-09-02T14:47:33.000Z | 2022-03-27T18:48:27.000Z | ## Close [Back](./../Coding.md)

- to close socket

- The **close** method only decrements the reference count of **sockfd**; the descriptor is released once the count reaches **0**. Until then, TCP can keep using this sockfd to finish transmitting data

### 1. close()

- After closing, other processes can still use the socket descriptor
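
The reference-counting behaviour described above can be sketched in a few lines. This is only an illustration under stated assumptions: it uses Python rather than C, `socket.socketpair()` stands in for a connected TCP socket, and `dup()` plays the role of the extra descriptor that another process would hold after `fork()`.

```python
import socket

# Assumption: a local socket pair stands in for a connected TCP socket.
left, right = socket.socketpair()

# dup() creates a second descriptor for the same underlying socket,
# similar to the copy a child process holds after fork().
extra = left.dup()

# Closing one descriptor only drops one reference; the socket stays usable.
left.close()
extra.sendall(b"still open")
received = right.recv(1024)

# Closing the last descriptor releases the socket; the peer then reads EOF.
extra.close()
eof = right.recv(1024)
right.close()
```

Here `received` holds the bytes sent through the duplicate after the first `close()`, and `eof` is empty because the last reference has gone.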

##### metho... | 18.863636 | 213 | 0.662651 | eng_Latn | 0.858955 |

9e1b0e2f044f50371925e7dbd5aa9ad7e78a4bab | 87 | md | Markdown | content/english/blog/_index.md | RishabhNaik/portfolio | 37b6c57ce2aa336c3dd78c08722e37ca13ffb531 | [

"MIT"

] | 1 | 2021-05-24T07:24:15.000Z | 2021-05-24T07:24:15.000Z | content/english/blog/_index.md | RishabhNaik/portfolio | 37b6c57ce2aa336c3dd78c08722e37ca13ffb531 | [

"MIT"

] | null | null | null | content/english/blog/_index.md | RishabhNaik/portfolio | 37b6c57ce2aa336c3dd78c08722e37ca13ffb531 | [

"MIT"

] | null | null | null | ---

title: "My Latest Post"

description : "this is a meta description"

draft: false

--- | 17.4 | 42 | 0.689655 | eng_Latn | 0.960825 |

9e1b688fc3119a9c542cbea8e5d5f97b3092fec1 | 1,127 | md | Markdown | docs/framework/unmanaged-api/debugging/icordebugobjectvalue-getcontext-method.md | zabereznikova/docs.de-de | 5f18370cd709e5f6208aaf5cf371f161df422563 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | docs/framework/unmanaged-api/debugging/icordebugobjectvalue-getcontext-method.md | zabereznikova/docs.de-de | 5f18370cd709e5f6208aaf5cf371f161df422563 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | docs/framework/unmanaged-api/debugging/icordebugobjectvalue-getcontext-method.md | zabereznikova/docs.de-de | 5f18370cd709e5f6208aaf5cf371f161df422563 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | ---

title: ICorDebugObjectValue::GetContext Method

ms.date: 03/30/2017

api_name:

- ICorDebugObjectValue.GetContext

api_location:

- mscordbi.dll

api_type:

- COM

f1_keywords:

- ICorDebugObjectValue::GetContext

helpviewer_keywords:

- GetContext method, ICorDebugObjectValue interface [.NET Framework debugging]

- ICorDebug... | 26.833333 | 94 | 0.770186 | yue_Hant | 0.665966 |

9e1b7da63246e3e4403d7cefa3e3b2a007ad619e | 589 | md | Markdown | solutions/3-formatted-input-output/projects/02/README.md | RichardKavanagh/c-modern-approach-solutions | 3f00cd657e8185b056394f660cb59f82a78f56a9 | [

"CC-BY-4.0"

] | null | null | null | solutions/3-formatted-input-output/projects/02/README.md | RichardKavanagh/c-modern-approach-solutions | 3f00cd657e8185b056394f660cb59f82a78f56a9 | [

"CC-BY-4.0"

] | null | null | null | solutions/3-formatted-input-output/projects/02/README.md | RichardKavanagh/c-modern-approach-solutions | 3f00cd657e8185b056394f660cb59f82a78f56a9 | [

"CC-BY-4.0"

] | null | null | null | ### C Formatted Input/Output - Project 3.02

Write a program that formats product information entered by the user.

A session with the program should look like this:

```

Enter item number: 583

Enter unit price: 13.5

Enter purchase date (mm/dd/yyyy): 10/24/2010

Item Unit Purchase

... | 28.047619 | 130 | 0.650255 | eng_Latn | 0.987076 |

9e1cc440d76df45ce5d69984a20a550446ba81a3 | 21 | md | Markdown | README.md | wawaac77/redux-toolkit-saga | 62ab22182e3a717f6136c530d08d315482a7f112 | [

"MIT"

] | null | null | null | README.md | wawaac77/redux-toolkit-saga | 62ab22182e3a717f6136c530d08d315482a7f112 | [

"MIT"

] | null | null | null | README.md | wawaac77/redux-toolkit-saga | 62ab22182e3a717f6136c530d08d315482a7f112 | [

"MIT"

] | null | null | null | # redux-toolkit-saga

| 10.5 | 20 | 0.761905 | est_Latn | 0.888034 |

9e1cdc427511fde19b78a2fb00aca49395dc8011 | 203 | md | Markdown | README.md | SofianSaleh/my-car-dealer-backend | bbd2fca1e0e14076c9ea276d560aa222c0e63f91 | [

"MIT"

] | 1 | 2020-09-22T21:09:44.000Z | 2020-09-22T21:09:44.000Z | README.md | SofianSaleh/my-car-dealer-backend | bbd2fca1e0e14076c9ea276d560aa222c0e63f91 | [

"MIT"

] | null | null | null | README.md | SofianSaleh/my-car-dealer-backend | bbd2fca1e0e14076c9ea276d560aa222c0e63f91 | [

"MIT"

] | null | null | null | # My-Car-Dealer

### folder structure:

- Using the Fractal Pattern:

#### Pros

- Reason about the location of files

- Manage and create complex user interfaces

- Iterate quickly

- And scale repeatably

| 15.615385 | 43 | 0.73399 | eng_Latn | 0.984803 |

9e1d121e1e9f0dbd6677ad3229dd43f7e070b4dc | 32 | md | Markdown | README.md | jgrooscors/proyectos | 1787e0d1d5e96c0f757b8787c0cea1aad1446c69 | [

"MIT"

] | null | null | null | README.md | jgrooscors/proyectos | 1787e0d1d5e96c0f757b8787c0cea1aad1446c69 | [

"MIT"

] | null | null | null | README.md | jgrooscors/proyectos | 1787e0d1d5e96c0f757b8787c0cea1aad1446c69 | [

"MIT"

] | null | null | null | # proyectos

test projects

| 10.666667 | 19 | 0.8125 | spa_Latn | 0.999917 |

9e1d2646c585184f24f11441cb074643731b0baf | 164 | md | Markdown | test/date/README.md | nicholaswilde/solar-battery-charger | 47276386ef842f35346c14e2499c346413065469 | [

"Apache-2.0"

] | 5 | 2022-02-21T22:47:23.000Z | 2022-03-13T12:36:50.000Z | test/date/README.md | nicholaswilde/solar-battery-charger | 47276386ef842f35346c14e2499c346413065469 | [

"Apache-2.0"

] | 41 | 2022-02-20T09:16:33.000Z | 2022-03-31T02:04:40.000Z | test/date/README.md | nicholaswilde/solar-battery-charger | 47276386ef842f35346c14e2499c346413065469 | [

"Apache-2.0"

] | null | null | null | # date

Connect to an NTP server and get the date.

## Documentation

Documentation can be found [here](https://nicholaswilde.io/solar-battery-charger/test/date/).

| 20.5 | 93 | 0.756098 | eng_Latn | 0.930144 |

9e1d7ef55c7ae44333baffddbb74aa6104ae754f | 6,144 | md | Markdown | _posts/2019-07-13-Download-sacraments-of-life-life-of-the-sacraments-story-theology.md | Ozie-Ottman/11 | 1005fa6184c08c4e1a3030e5423d26beae92c3c6 | [

"MIT"

] | null | null | null | _posts/2019-07-13-Download-sacraments-of-life-life-of-the-sacraments-story-theology.md | Ozie-Ottman/11 | 1005fa6184c08c4e1a3030e5423d26beae92c3c6 | [

"MIT"

] | null | null | null | _posts/2019-07-13-Download-sacraments-of-life-life-of-the-sacraments-story-theology.md | Ozie-Ottman/11 | 1005fa6184c08c4e1a3030e5423d26beae92c3c6 | [

"MIT"

] | null | null | null | ---

layout: post

comments: true

categories: Other

---

## Download Sacraments of life life of the sacraments story theology book

I see. But he let Losen act the master! As far as I am aware, till they finally form a dreams, she didn't worry shores of the most northerly islands on Spitzbergen. it's you?" Ile wondered a... | 682.666667 | 6,014 | 0.789063 | eng_Latn | 0.999797 |

9e1dad7fc99b7f49a7f4c06baf91fb9555892030 | 18,071 | md | Markdown | bookdown/docs/Lab3_Distributions_I.md | liatkofler/psyc7709Lab | f7a4ce5fddc738afcb3cd8c8f848b9f3effdac9d | [

"CC-BY-4.0"

] | null | null | null | bookdown/docs/Lab3_Distributions_I.md | liatkofler/psyc7709Lab | f7a4ce5fddc738afcb3cd8c8f848b9f3effdac9d | [

"CC-BY-4.0"

] | 10 | 2021-08-17T20:40:04.000Z | 2021-12-08T08:56:38.000Z | bookdown/docs/Lab3_Distributions_I.md | liatkofler/psyc7709Lab | f7a4ce5fddc738afcb3cd8c8f848b9f3effdac9d | [

"CC-BY-4.0"

] | 1 | 2021-11-08T16:58:51.000Z | 2021-11-08T16:58:51.000Z | # Distributions I

"9/2/2020 | Last Compiled: 2020-12-04"

## Reading

Vokey & Allen, Chapters 5 & 6 on additional descriptive statistics, and recovering the distribution.

## Overview

<iframe width="560" height="315" src="https://www.youtube.com/embed/YQC3QlRNHwI" frameborder="0" allow="accelerometer; autoplay; enc... | 38.530917 | 588 | 0.740191 | eng_Latn | 0.99804 |

9e1ee7bdb11e8f8f24d80af079c938d0e6178231 | 475 | md | Markdown | README.md | EveryMundo/global-root-dir | 9a54abd8afe6ba6efe47023531847e531c10cc65 | [

"MIT"

] | null | null | null | README.md | EveryMundo/global-root-dir | 9a54abd8afe6ba6efe47023531847e531c10cc65 | [

"MIT"

] | 1 | 2021-05-06T22:40:36.000Z | 2021-05-06T22:40:36.000Z | README.md | EveryMundo/global-root-dir | 9a54abd8afe6ba6efe47023531847e531c10cc65 | [

"MIT"

] | null | null | null | # @everymundo/global-root-dir

Sets a global variable `__rootdir`

## Install

```sh

npm install @everymundo/global-root-dir

```

## Usage

```js

// this will use process.pwd() as value

require('@everymundo/global-root-dir').setGlobalRootDir()

console.log(global.__rootdir);

console.log({__rootdir});

// if you pref... | 23.75 | 66 | 0.749474 | eng_Latn | 0.257645 |

9e1f200647fac089d10414c5752677059cd8653a | 8,681 | md | Markdown | README.md | CristianGuemes/pyOCD | 1307acd7aa7f4dd4472af4d11eadea90f6c4fe5c | [

"Apache-2.0"

] | null | null | null | README.md | CristianGuemes/pyOCD | 1307acd7aa7f4dd4472af4d11eadea90f6c4fe5c | [

"Apache-2.0"

] | null | null | null | README.md | CristianGuemes/pyOCD | 1307acd7aa7f4dd4472af4d11eadea90f6c4fe5c | [

"Apache-2.0"

] | 1 | 2019-01-21T03:01:53.000Z | 2019-01-21T03:01:53.000Z | pyOCD

=====

pyOCD is an open source Python package for programming and debugging Arm Cortex-M microcontrollers

using multiple supported types of USB debug probes. It is fully cross-platform, with support for

Linux, macOS, and Windows.

A command line tool is provided that covers most use cases, or you can make use of ... | 37.098291 | 112 | 0.755789 | eng_Latn | 0.986171 |

9e1fd33150cc1d8584134943d5283bbb69d0f8b7 | 1,698 | md | Markdown | docs/safeint/safeintexception-class.md | Dinja1403/cpp-docs | 50161f2a9638424aa528253e95ef9a94ef028678 | [

"CC-BY-4.0",

"MIT"

] | 2 | 2020-06-30T03:02:58.000Z | 2021-07-27T18:21:28.000Z | docs/safeint/safeintexception-class.md | drewbatgit/cpp-docs | 230b7231ed324317d2f806531288d6a109791af4 | [

"CC-BY-4.0",

"MIT"

] | 1 | 2019-10-16T08:33:11.000Z | 2019-10-16T08:33:11.000Z | docs/safeint/safeintexception-class.md | drewbatgit/cpp-docs | 230b7231ed324317d2f806531288d6a109791af4 | [

"CC-BY-4.0",

"MIT"

] | 1 | 2020-10-01T01:35:05.000Z | 2020-10-01T01:35:05.000Z | ---

title: "SafeIntException Class"

ms.date: "10/22/2018"

ms.topic: "reference"

f1_keywords: ["SafeIntException Class", "SafeIntException", "SafeIntException.SafeIntException", "SafeIntException::SafeIntException"]

helpviewer_keywords: ["SafeIntException class", "SafeIntException, constructor"]

ms.assetid: 88bef958-1f4... | 24.970588 | 134 | 0.694346 | kor_Hang | 0.355938 |

e591884c869b68474b3abe530b405dfdc1210a13 | 7,071 | md | Markdown | iis/configuration/system.webServer/caching/index.md | Rich-Lang/iis-docs | 3fc0423f2c1ca43616e24e7781ae49efc9c66016 | [

"CC-BY-4.0",

"MIT"

] | 1 | 2020-03-19T18:34:42.000Z | 2020-03-19T18:34:42.000Z | iis/configuration/system.webServer/caching/index.md | Rich-Lang/iis-docs | 3fc0423f2c1ca43616e24e7781ae49efc9c66016 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | iis/configuration/system.webServer/caching/index.md | Rich-Lang/iis-docs | 3fc0423f2c1ca43616e24e7781ae49efc9c66016 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | ---

title: "Caching <caching> | Microsoft Docs"

author: rick-anderson

description: "Overview The <caching> element allows you to enable or disable page output caching for an Internet Information Services (IIS) 7 application. This eleme..."

ms.author: iiscontent

manager: soshir

ms.date: 09/26/2016

ms.topic: ... | 53.568182 | 493 | 0.728468 | eng_Latn | 0.985081 |

e591c60ab13d0ed3937b57563fdd5069e6872bbb | 3,708 | md | Markdown | content/publication/iva2015.md | truonghuy/mywebsite | 75e749566f24055500d6677bb5ec1cc2fec3c5f8 | [

"MIT"

] | null | null | null | content/publication/iva2015.md | truonghuy/mywebsite | 75e749566f24055500d6677bb5ec1cc2fec3c5f8 | [

"MIT"

] | null | null | null | content/publication/iva2015.md | truonghuy/mywebsite | 75e749566f24055500d6677bb5ec1cc2fec3c5f8 | [

"MIT"

] | null | null | null | +++

title = "Modeling warmth and competence in virtual characters"

date = 2015-09-24T16:04:30-04:00

draft = false

# Authors. Comma separated list, e.g. `["Bob Smith", "David Jones"]`.

authors = ["Truong-Huy D Nguyen", "Elin Carstensdottir", "Nhi Ngo", "Magy Seif El-Nasr", "Matt Gray", "Derek Isaacowitz", "David DeSten... | 50.794521 | 1,148 | 0.762136 | eng_Latn | 0.974867 |

e591cf0ac3bdc877d43f4e83276491ee5176e58d | 26 | md | Markdown | README.md | goldcoinreserve/media | 175b2adbbabf3636991a8721a85d184d21c54bc5 | [

"BSD-3-Clause"

] | null | null | null | README.md | goldcoinreserve/media | 175b2adbbabf3636991a8721a85d184d21c54bc5 | [

"BSD-3-Clause"

] | null | null | null | README.md | goldcoinreserve/media | 175b2adbbabf3636991a8721a85d184d21c54bc5 | [

"BSD-3-Clause"

] | null | null | null | # media

GCR media package

| 8.666667 | 17 | 0.769231 | kor_Hang | 0.668953 |

e591ec1ca3b0e5ec187e885e57899eb65ef7a2a9 | 5,612 | md | Markdown | README.md | ihornet/egg-ts-helper | 7c2a5108bc221fc25ffb174d4a8ec33cfe219d2c | [

"MIT"

] | null | null | null | README.md | ihornet/egg-ts-helper | 7c2a5108bc221fc25ffb174d4a8ec33cfe219d2c | [

"MIT"

] | null | null | null | README.md | ihornet/egg-ts-helper | 7c2a5108bc221fc25ffb174d4a8ec33cfe219d2c | [

"MIT"

] | null | null | null | # egg-ts-helper

[![NPM version][npm-image]][npm-url]

[![Build Status][travis-image]][travis-url]

[![Appveyor status][appveyor-image]][appveyor-url]

[![Coverage Status][coveralls-image]][coveralls-url]

[npm-image]: https://img.shields.io/npm/v/egg-ts-helper.svg?style=flat-square

[npm-url]: https://npmjs.org/package/eg... | 21.419847 | 260 | 0.670349 | eng_Latn | 0.62362 |

e59233ddd865d1ccdfdd4b2bfccb56d6bf9e26e4 | 3,588 | md | Markdown | _posts/2020-02-20-kvalia.md | vvzvlad/blog | 8d8ec16cf0461d2ad8271d0d97362f9c34f37604 | [

"MIT"

] | null | null | null | _posts/2020-02-20-kvalia.md | vvzvlad/blog | 8d8ec16cf0461d2ad8271d0d97362f9c34f37604 | [

"MIT"

] | null | null | null | _posts/2020-02-20-kvalia.md | vvzvlad/blog | 8d8ec16cf0461d2ad8271d0d97362f9c34f37604 | [

"MIT"

] | null | null | null | ---

layout: post

title: What is ability?

---

It occurred to me that the _ability_ to do something consists of two parts: knowledge and skills. Knowledge is more or less clear, but what skills are is hard to formalize.

I have grown used to the following definition: skills are, in many ways, a kind of matrix of importance coefficients. Roughly speaking,... | 156 | 718 | 0.798216 | rus_Cyrl | 0.997084 |

e592edb1b1cdfe9aa6d102cbcbe1bcf02f5c41a0 | 3,374 | md | Markdown | articles/desktop-flows/how-to/java.md | modery/power-automate-docs | 3ae7440345ffb33536f43157cf0ff8076ba47f54 | [

"CC-BY-4.0",

"MIT"

] | 98 | 2019-11-12T21:50:27.000Z | 2022-03-24T10:47:49.000Z | articles/desktop-flows/how-to/java.md | modery/power-automate-docs | 3ae7440345ffb33536f43157cf0ff8076ba47f54 | [

"CC-BY-4.0",

"MIT"

] | 566 | 2019-11-13T01:05:02.000Z | 2022-03-31T21:56:41.000Z | articles/desktop-flows/how-to/java.md | modery/power-automate-docs | 3ae7440345ffb33536f43157cf0ff8076ba47f54 | [

"CC-BY-4.0",

"MIT"

] | 126 | 2019-11-13T04:41:24.000Z | 2022-03-20T12:22:53.000Z | ---

title: Automate Java applications | Microsoft Docs

description: Automate Java applications

author: mariosleon

ms.service: power-automate

ms.subservice: desktop-flow

ms.topic: article

ms.date: 10/08/2021

ms.author: marleon

ms.reviewer:

search.app:

- Flow

search.audienceType:

- flowmaker

- enduser

---

# Aut... | 39.694118 | 222 | 0.77297 | eng_Latn | 0.971754 |

e59346606b1ab78248524e60402566c3c6d88745 | 2,418 | md | Markdown | docs/connect/jdbc/reference/commit-method-sqlserverconnection.md | Jteve-Sobs/sql-docs.de-de | 9843b0999bfa4b85e0254ae61e2e4ada1d231141 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | docs/connect/jdbc/reference/commit-method-sqlserverconnection.md | Jteve-Sobs/sql-docs.de-de | 9843b0999bfa4b85e0254ae61e2e4ada1d231141 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | docs/connect/jdbc/reference/commit-method-sqlserverconnection.md | Jteve-Sobs/sql-docs.de-de | 9843b0999bfa4b85e0254ae61e2e4ada1d231141 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | ---

title: commit Method (SQLServerConnection) | Microsoft Docs

ms.custom: ''

ms.date: 01/19/2017

ms.prod: sql

ms.prod_service: connectivity

ms.reviewer: ''

ms.technology: connectivity

ms.topic: conceptual

apiname:

- SQLServerConnection.commit

apilocation:

- sqljdbc.jar

apitype: Assembly

ms.assetid: c734616... | 46.5 | 640 | 0.782465 | deu_Latn | 0.91322 |

e593e85b4b04bb4fb0a5d6b15a21aa7196377473 | 79 | md | Markdown | Exchange/ExchangeOnline/recipients-in-exchange-online/index.md | skawafuchi/OfficeDocs-Exchange | 67ac7fc6893c39337cc18f8bb07260a47c01db9d | [

"CC-BY-4.0",

"MIT"

] | 1 | 2020-06-16T22:10:26.000Z | 2020-06-16T22:10:26.000Z | Exchange/ExchangeOnline/recipients-in-exchange-online/index.md | skawafuchi/OfficeDocs-Exchange | 67ac7fc6893c39337cc18f8bb07260a47c01db9d | [

"CC-BY-4.0",

"MIT"

] | 1 | 2020-01-27T08:03:30.000Z | 2020-01-27T08:03:30.000Z | Exchange/ExchangeOnline/recipients-in-exchange-online/index.md | skawafuchi/OfficeDocs-Exchange | 67ac7fc6893c39337cc18f8bb07260a47c01db9d | [

"CC-BY-4.0",

"MIT"

] | 1 | 2021-11-29T00:03:44.000Z | 2021-11-29T00:03:44.000Z | ---

redirect_url: recipients-in-exchange-online

redirect_document_id: TRUE

---

| 15.8 | 43 | 0.78481 | eng_Latn | 0.4086 |

e5942ce3f27b58583e432d31efcdef0e169297dc | 1,430 | md | Markdown | content/blog/reciprocal-cycles-ii.md | gamwe6/eulerinbabylon | dda136a0d5749a074added458a0d9f22fe93930f | [

"MIT"

] | null | null | null | content/blog/reciprocal-cycles-ii.md | gamwe6/eulerinbabylon | dda136a0d5749a074added458a0d9f22fe93930f | [

"MIT"

] | 13 | 2021-03-01T20:41:26.000Z | 2022-02-26T16:06:20.000Z | content/blog/reciprocal-cycles-ii.md | gamwe6/eulerinbabylon | dda136a0d5749a074added458a0d9f22fe93930f | [

"MIT"

] | null | null | null | ---

date: "2013-03-02T16:00:00"

title: "Reciprocal cycles II"

description: "Problem 417"

---

<p>A unit fraction contains 1 in the numerator. The decimal representation of the unit fractions with denominators 2 to 10 are given:</p>

<blockquote>

<table><tr><td><sup>1</sup>/<sub>2</sub></td><td>= </td><td>0.5</td>

</tr><... | 46.129032 | 150 | 0.611888 | eng_Latn | 0.772439 |

e5946709a3a1b77bec273ef0108e6f2652dc641d | 23,524 | md | Markdown | articles/migrate/how-to-migrate-vmware-vms-with-cmk-disks.md | beatrizmayumi/azure-docs.pt-br | ca6432fe5d3f7ccbbeae22b4ea05e1850c6c7814 | [

"CC-BY-4.0",

"MIT"

] | 39 | 2017-08-28T07:46:06.000Z | 2022-01-26T12:48:02.000Z | articles/migrate/how-to-migrate-vmware-vms-with-cmk-disks.md | beatrizmayumi/azure-docs.pt-br | ca6432fe5d3f7ccbbeae22b4ea05e1850c6c7814 | [

"CC-BY-4.0",

"MIT"

] | 562 | 2017-06-27T13:50:17.000Z | 2021-05-17T23:42:07.000Z | articles/migrate/how-to-migrate-vmware-vms-with-cmk-disks.md | beatrizmayumi/azure-docs.pt-br | ca6432fe5d3f7ccbbeae22b4ea05e1850c6c7814 | [

"CC-BY-4.0",

"MIT"

] | 113 | 2017-07-11T19:54:32.000Z | 2022-01-26T21:20:25.000Z | ---

title: Migrate VMware virtual machines to Azure with server-side encryption (SSE) and customer-managed keys (CMK) using Azure Migrate Server Migration

description: Learn how to migrate VMware VMs to Azure with server-side encryption (SSE) and keys managed by the c... | 85.231884 | 940 | 0.751488 | por_Latn | 0.927223 |

e5949bd120e9f41b50b11b53fc66596b80fa992b | 1,364 | md | Markdown | packages/cache-url/README.md | torch2424/amp-toolbox | beec9054355e67a2bb872b0e65b305a031434b71 | [

"Apache-2.0"

] | null | null | null | packages/cache-url/README.md | torch2424/amp-toolbox | beec9054355e67a2bb872b0e65b305a031434b71 | [

"Apache-2.0"

] | null | null | null | packages/cache-url/README.md | torch2424/amp-toolbox | beec9054355e67a2bb872b0e65b305a031434b71 | [

"Apache-2.0"

] | null | null | null | # AMP-Toolbox Cache URL

Translates a URL from the origin to the AMP Cache URL format, according to the specification

available in the [AMP documentation](https://developers.google.com/amp/cache/overview). This includes the SHA256 fallback URLs used by the AMP Cache on invalid human-readable cache URLs.
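
As a rough illustration of the translation described above, here is a minimal sketch of the human-readable case only. It is written in Python and is not this package's API: `to_cache_subdomain` and `to_cache_url` are hypothetical names, the default cache domain is an assumption, and internationalized hosts, over-long hosts, query strings, and the SHA256 fallback are deliberately left out.

```python
from urllib.parse import urlsplit


def to_cache_subdomain(host: str) -> str:
    """Human-readable form for a plain ASCII host: '-' becomes '--', then '.' becomes '-'."""
    return host.replace("-", "--").replace(".", "-")


def to_cache_url(origin_url: str, cache_domain: str = "cdn.ampproject.org") -> str:
    """Build a cache URL for an AMP document (hypothetical helper, simplified)."""
    parts = urlsplit(origin_url)
    host = parts.hostname
    # 'c' marks an AMP document; the extra 's' segment marks an HTTPS origin.
    prefix = "/c/s/" if parts.scheme == "https" else "/c/"
    return f"https://{to_cache_subdomain(host)}.{cache_domain}{prefix}{host}{parts.path}"


example = to_cache_url("https://www.example.com/index.html")
```

Escaping existing hyphens before replacing dots keeps the mapping reversible, which is presumably why the format doubles them.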

## Usage

###... | 28.416667 | 186 | 0.71261 | eng_Latn | 0.460833 |

e59533240e3533d0d8f2f4438de724253f411126 | 40 | md | Markdown | docs/SPRecycleBinItemCollection.md | impensavel/spoil | 3b036f25ac87c8b0d52f1e6f011061edf35d9f59 | [

"MIT"

] | 5 | 2015-09-19T20:23:33.000Z | 2020-06-09T04:49:15.000Z | docs/SPRecycleBinItemCollection.md | impensavel/spoil | 3b036f25ac87c8b0d52f1e6f011061edf35d9f59 | [

"MIT"

] | null | null | null | docs/SPRecycleBinItemCollection.md | impensavel/spoil | 3b036f25ac87c8b0d52f1e6f011061edf35d9f59 | [

"MIT"

] | 3 | 2020-04-19T23:07:08.000Z | 2021-12-16T14:03:19.000Z | # SPOIL

## SPRecycleBinItemCollection

| 10 | 29 | 0.775 | yue_Hant | 0.886146 |

e59624928f7de5ab47ce11ef2515a1b5f3559c79 | 6,780 | md | Markdown | docs/cli/getting-started.md | LappleApple/tanzu-framework | b4293440b686b1314238763c1c084331f0867a6d | [

"Apache-2.0"

] | null | null | null | docs/cli/getting-started.md | LappleApple/tanzu-framework | b4293440b686b1314238763c1c084331f0867a6d | [

"Apache-2.0"

] | null | null | null | docs/cli/getting-started.md | LappleApple/tanzu-framework | b4293440b686b1314238763c1c084331f0867a6d | [

"Apache-2.0"

] | null | null | null | # Tanzu CLI Getting Started

A simple set of instructions to set up and use the Tanzu CLI.

## Binary installation

### Install the latest release of Tanzu CLI

`linux-amd64`, `windows-amd64`, and `darwin-amd64` are the OS-ARCHITECTURE

combinations currently supported.

#### macOS/Linux

- Download the latest [release](https... | 26.692913 | 203 | 0.700737 | eng_Latn | 0.875936 |

e59678212e9b98adbd5502a9bd23fa22ae6fe7d2 | 525 | md | Markdown | README.md | aamishbaloch/my-django-extensions | 446f9a8d33355177e9d09ef926adeb003f1bd6d1 | [

"MIT"

] | null | null | null | README.md | aamishbaloch/my-django-extensions | 446f9a8d33355177e9d09ef926adeb003f1bd6d1 | [

"MIT"

] | null | null | null | README.md | aamishbaloch/my-django-extensions | 446f9a8d33355177e9d09ef926adeb003f1bd6d1 | [

"MIT"

] | null | null | null | My Django Extensions

--------------------

I created this package to reuse extensions I have written before. It collects a number

of useful extensions for Django.

Getting It

----------

You can get My Django Extensions by using pip:

```sh

$ pip install git+https://github.com/aamishbaloch/my-django-extensions.git

```

Installing It

---... | 22.826087 | 118 | 0.678095 | eng_Latn | 0.956346 |

e5967bde5aea1f1afc5a2dd76b6464c885fcedc5 | 14,028 | md | Markdown | docs/framework/data/adonet/ef/string-functions.md | moebius87/docs.it-it | ab767314e9d53480fcc4e6a78819a3233cffb718 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | docs/framework/data/adonet/ef/string-functions.md | moebius87/docs.it-it | ab767314e9d53480fcc4e6a78819a3233cffb718 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | docs/framework/data/adonet/ef/string-functions.md | moebius87/docs.it-it | ab767314e9d53480fcc4e6a78819a3233cffb718 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | ---

title: String functions

ms.date: 03/30/2017

ms.assetid: 338f0c26-8aee-43eb-bd1a-ec0849a376b9

ms.openlocfilehash: 6da257cad90232426c71221dfd9d418265479bbe

ms.sourcegitcommit: 9b552addadfb57fab0b9e7852ed4f1f1b8a42f8e

ms.translationtype: MT

ms.contentlocale: it-IT

ms.lasthandoff: 04/23/2019

ms.locfileid: "61879119"

--... | 264.679245 | 1,035 | 0.700813 | ita_Latn | 0.974758 |

e596b3e10d26c089b9f02203240e1e275ecb3f1c | 52 | md | Markdown | README.md | pzia/bnp-pdf-parser | ff5a79431427a797816885fbca244b665af63d93 | [

"MIT"

] | null | null | null | README.md | pzia/bnp-pdf-parser | ff5a79431427a797816885fbca244b665af63d93 | [

"MIT"

] | null | null | null | README.md | pzia/bnp-pdf-parser | ff5a79431427a797816885fbca244b665af63d93 | [

"MIT"

] | null | null | null | # bnp-pdf-parser

Parse BNP Paribas PDF files to CSV.

| 17.333333 | 34 | 0.75 | kor_Hang | 0.998684 |

e5974a89e24a4ee6a1d1858aee4dd65463a4333c | 28 | md | Markdown | README.md | wihu/Fettle-Sharp | ab539bb2b91d7fa7cd766b5241703f8bf03c011b | [

"MIT"

] | null | null | null | README.md | wihu/Fettle-Sharp | ab539bb2b91d7fa7cd766b5241703f8bf03c011b | [

"MIT"

] | null | null | null | README.md | wihu/Fettle-Sharp | ab539bb2b91d7fa7cd766b5241703f8bf03c011b | [

"MIT"

] | null | null | null | # Fettle-Sharp

Fettle-Sharp

| 9.333333 | 14 | 0.785714 | eng_Latn | 0.866358 |

e5976382644f8b497139b5f8494b7a72da7803fc | 190 | md | Markdown | README.md | feelepxyz/feelep.xyz | b3cab4b31f60be44afcac8090809173a6f61c669 | [

"MIT"

] | null | null | null | README.md | feelepxyz/feelep.xyz | b3cab4b31f60be44afcac8090809173a6f61c669 | [

"MIT"

] | 151 | 2018-04-11T04:14:11.000Z | 2022-02-26T09:53:36.000Z | README.md | feelepxyz/feelep.xyz | b3cab4b31f60be44afcac8090809173a6f61c669 | [

"MIT"

] | 1 | 2018-09-14T09:29:35.000Z | 2018-09-14T09:29:35.000Z | # feelep.xyz

https://feelep.xyz

[](https://app.netlify.com/sites/feelep/deploys)

| 31.666667 | 155 | 0.763158 | hun_Latn | 0.136828 |

e598407086863a82b884c26130f3e8e58d32d2f0 | 4,549 | md | Markdown | articles/commerce/synchronize-tasks-teams-pos.md | alexbuckgit/dynamics-365-unified-operations-public | d15b46cd3d60f7eba15927469a936e29409f0036 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | articles/commerce/synchronize-tasks-teams-pos.md | alexbuckgit/dynamics-365-unified-operations-public | d15b46cd3d60f7eba15927469a936e29409f0036 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | articles/commerce/synchronize-tasks-teams-pos.md | alexbuckgit/dynamics-365-unified-operations-public | d15b46cd3d60f7eba15927469a936e29409f0036 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | ---

# required metadata

title: Synchronize task management between Microsoft Teams and Dynamics 365 Commerce POS

description: This topic describes how to synchronize task management between Microsoft Teams and Dynamics 365 Commerce point of sale (POS).

author: gvrmohanreddy

ms.date: 02/17/2021

ms.topic: article

ms.prod... | 53.517647 | 265 | 0.774016 | eng_Latn | 0.986857 |

e5989a818f8952ea4a4e60a044f36f4bdda27518 | 150 | md | Markdown | content/querying/11-template-parameters.md | pusztaienike/docs-temp | 51efe20e9db1330bc0f38fabf50e14f06563894d | [

"MIT"

] | null | null | null | content/querying/11-template-parameters.md | pusztaienike/docs-temp | 51efe20e9db1330bc0f38fabf50e14f06563894d | [

"MIT"

] | null | null | null | content/querying/11-template-parameters.md | pusztaienike/docs-temp | 51efe20e9db1330bc0f38fabf50e14f06563894d | [

"MIT"

] | null | null | null | ---

title: Template parameters

sidebar: ApiDocs

showTitle: false

---

# Using template parameters

# Templates with properties

# Template expressions | 13.636364 | 27 | 0.773333 | eng_Latn | 0.905297 |

e598f41b0026780f01726d01ca16922ebe6a6e1e | 573 | md | Markdown | _languages-frameworks/26-vuejs.md | otaviojava/tech-radar | ff1657337a21c5a3078a212fb743613e7ea4e0be | [

"MIT"

] | 2 | 2021-07-09T11:43:42.000Z | 2021-11-03T16:40:53.000Z | _languages-frameworks/26-vuejs.md | otaviojava/tech-radar | ff1657337a21c5a3078a212fb743613e7ea4e0be | [

"MIT"

] | null | null | null | _languages-frameworks/26-vuejs.md | otaviojava/tech-radar | ff1657337a21c5a3078a212fb743613e7ea4e0be | [

"MIT"

] | 1 | 2021-07-09T13:17:54.000Z | 2021-07-09T13:17:54.000Z | ---

layout: details

filename: vuejs

name: vuejs

image: /tech-radar/assets/images/languages-frameworks/vuejs.png

category: languages-frameworks

ring: Assess

number: 26

---

# What is it?

Vue.js is an open-source model–view–viewmodel front end JavaScript framework for building user interfaces and single-page application... | 30.157895 | 234 | 0.760908 | eng_Latn | 0.912161 |

e59a44517ccbd4c351ad5992111acfdfddfc92db | 94 | md | Markdown | docs/zh/guide/presentations.md | Stngle/macaca-reporter | e9fc02e09a3dda01806e1f2eef2cd99862a7898a | [

"MIT"

] | 1 | 2020-03-04T09:06:27.000Z | 2020-03-04T09:06:27.000Z | docs/zh/guide/presentations.md | zgq346712481/macaca-reporter | d2d12ec6b7167952575cd7fca6e85a264a667891 | [

"MIT"

] | null | null | null | docs/zh/guide/presentations.md | zgq346712481/macaca-reporter | d2d12ec6b7167952575cd7fca6e85a264a667891 | [

"MIT"

] | 1 | 2020-03-04T09:06:19.000Z | 2020-03-04T09:06:19.000Z | # Community Sharing

## Posts

- TesterHome community: [Using the Macaca Reporter](https://testerhome.com/topics/9816)

| 15.666667 | 78 | 0.691489 | kor_Hang | 0.18558 |

e59b11988af0e6e004cad569b0e011f971659b34 | 1,554 | md | Markdown | docs/visual-basic/misc/bc30009.md | ANahr/docs.de-de | 14ad02cb12132d62994c5cb66fb6896864c7cfd7 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | docs/visual-basic/misc/bc30009.md | ANahr/docs.de-de | 14ad02cb12132d62994c5cb66fb6896864c7cfd7 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | docs/visual-basic/misc/bc30009.md | ANahr/docs.de-de | 14ad02cb12132d62994c5cb66fb6896864c7cfd7 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | ---

title: Reference required to assembly '<assemblyname>' containing the implemented interface '<interfacename>'

ms.date: 07/20/2015

f1_keywords:

- vbc30009

- bc30009

helpviewer_keywords:

- BC30009

ms.assetid: b2dfb89d-7fde-4a8e-ba7f-fe1e59eabaca

ms.openlocfilehash: 09952d7329bd3e9a6f1f4bf2... | 50.129032 | 279 | 0.794723 | deu_Latn | 0.946747 |

e59b30159c73a76fcadae8d0455fe963fd9e7e2e | 2,039 | md | Markdown | events/elections/2021/candidate-aojea.md | Akshit6828/community | 74ae2f97f7f57949505d4636b4b037ec31079e97 | [

"Apache-2.0"

] | null | null | null | events/elections/2021/candidate-aojea.md | Akshit6828/community | 74ae2f97f7f57949505d4636b4b037ec31079e97 | [

"Apache-2.0"

] | null | null | null | events/elections/2021/candidate-aojea.md | Akshit6828/community | 74ae2f97f7f57949505d4636b4b037ec31079e97 | [

"Apache-2.0"

] | null | null | null | -------------------------------------------------------------

name: Antonio Ojea

ID: aojea

info:

- employer: Red Hat

- slack: aojea

-------------------------------------------------------------

## SIGS

- SIG Network

- SIG Testing

- Occasionally: SIG Release, Scalability, Lifecycle, API Machinery, Instrumentation,... | 46.340909 | 163 | 0.73075 | eng_Latn | 0.962705 |

e59b34dccb66ebae8509aff1707087c04f44f5f8 | 1,235 | md | Markdown | zxd/README.md | bbidong/maskrcnn-benchmark | b83254a1654e85ef38d73617f1a8ef66dea897e6 | [

"MIT"

] | null | null | null | zxd/README.md | bbidong/maskrcnn-benchmark | b83254a1654e85ef38d73617f1a8ef66dea897e6 | [

"MIT"

] | null | null | null | zxd/README.md | bbidong/maskrcnn-benchmark | b83254a1654e85ef38d73617f1a8ef66dea897e6 | [

"MIT"

] | null | null | null | # Installation environment

ubuntu 18

cuda 9

python 3.6

torch 1.0.0

torchvision 0.2.0

## Error

1. Installing cocoapi fails with `fatal error: Python.h: No such file or directory`

- The current Python installation is incomplete; run

```sh

sudo apt-get install python3-dev

```

If that also fails, create the virtual environment with a different Python version, such as 3.6 or 3.7.

2. Installing apex fails with `error: expected primary-expressio... | 29.404762 | 154 | 0.740081 | yue_Hant | 0.33656 |

e59b846c68745f2ab16ba72ff7dc610bf4623f28 | 2,078 | md | Markdown | README.md | Protoncoind/ProtonCoin | af27976c103edf80435b523a4d14901e6566d1a9 | [

"MIT"

] | 25 | 2021-01-22T13:29:48.000Z | 2021-02-03T00:35:23.000Z | README.md | ingjose7/ProtonCoin | af27976c103edf80435b523a4d14901e6566d1a9 | [

"MIT"

] | null | null | null | README.md | ingjose7/ProtonCoin | af27976c103edf80435b523a4d14901e6566d1a9 | [

"MIT"

] | 3 | 2021-01-22T17:01:50.000Z | 2021-09-30T23:08:27.000Z | ProtonCoin Specifications

Currency code: PTC

Currency name: ProtonCoin

Total Coin Supply: 28.000.000 PTC

transaction confirmations: 06

Maturity: 21

Proof-of-Work algorithm: Scrypt

What is ProtonCoin?

ProtonCoin is a new cryptocurrency that uses the Proof-of-Work (PoW) system... | 56.162162 | 635 | 0.817613 | spa_Latn | 0.997709 |

e59bc57718b03d8c7ac748224879a6473f9cea8a | 5,422 | md | Markdown | _notes/subject-1/note-1.md | kennguyen01/jekyll-cornell-notes | 0e22d83270717aaa39438b4f3c40fcc0e3ce02fc | [

"MIT"

] | null | null | null | _notes/subject-1/note-1.md | kennguyen01/jekyll-cornell-notes | 0e22d83270717aaa39438b4f3c40fcc0e3ce02fc | [

"MIT"

] | null | null | null | _notes/subject-1/note-1.md | kennguyen01/jekyll-cornell-notes | 0e22d83270717aaa39438b4f3c40fcc0e3ce02fc | [

"MIT"

] | null | null | null | ---

layout: note

title: Note 1

tag: Subject 1

---

{% include links.html tag=page.tag %}

> Donec risus mi, finibus ut condimentum a, eleifend vitae ligula. Phasellus interdum elit ut augue cursus, eu tincidunt felis commodo. Nunc luctus lobortis ligula nec bibendum. Quisque congue quis lacus a vehicula. Duis bibendum ... | 142.684211 | 718 | 0.808558 | hun_Latn | 0.142915 |

e59c0cc8165c583e47ace33e243d859868c1231e | 602 | markdown | Markdown | zero_editor_documentation/zeromanual/editor/editorcommands/command_list_viewer.markdown | zeroengineteam/ZeroDocs | ed780837c3f256d26da5ea9d5d780943a490f391 | [

"MIT"

] | 3 | 2019-03-13T06:29:11.000Z | 2021-09-02T11:23:09.000Z | zero_editor_documentation/zeromanual/editor/editorcommands/command_list_viewer.markdown | zeroengineteam/ZeroDocs | ed780837c3f256d26da5ea9d5d780943a490f391 | [

"MIT"

] | null | null | null | zero_editor_documentation/zeromanual/editor/editorcommands/command_list_viewer.markdown | zeroengineteam/ZeroDocs | ed780837c3f256d26da5ea9d5d780943a490f391 | [

"MIT"

] | 5 | 2019-04-02T23:51:22.000Z | 2021-06-24T15:16:55.000Z | The command list viewer is an in-editor window that displays all registered engine commands, their hotkeys, and a brief description of their behavior.

The list can be accessed in the same way as any other command

- [Command](https://github.com/zeroengineteam/ZeroDocs/blob/master/zero_editor_documentation/zeromanual/... | 46.307692 | 273 | 0.822259 | eng_Latn | 0.821329 |

e59c1ba004ac9de62d819ec4725f22690d74ce75 | 52 | md | Markdown | http_client_sep_2020/README.md | codetojoy/gists_java | 5417b1d10ebce768d3b6a995eeb917894ae13ee7 | [

"Apache-2.0"

] | 1 | 2020-04-17T00:08:06.000Z | 2020-04-17T00:08:06.000Z | java/http_client_sep_2020/README.md | codetojoy/gists | 2616f36a8c301810a88b8a9e124af442cf717263 | [

"Apache-2.0"

] | 2 | 2021-04-25T12:26:02.000Z | 2021-07-27T17:17:32.000Z | java/http_client_sep_2020/README.md | codetojoy/gists | 2616f36a8c301810a88b8a9e124af442cf717263 | [

"Apache-2.0"

] | 1 | 2018-02-27T01:32:08.000Z | 2018-02-27T01:32:08.000Z |

### Summary

* client for ~/WarO_Strategy_API_Java

| 10.4 | 37 | 0.730769 | kor_Hang | 0.502655 |

e59c59169a3d23c60863bb97e5223a9662b77786 | 4,237 | md | Markdown | tests/dummy/app/pods/docs/addons/template.md | muziejus/ember-leaflet | 96ac37f5ece8407461bce77051e4ddddc7da8074 | [

"MIT"

] | null | null | null | tests/dummy/app/pods/docs/addons/template.md | muziejus/ember-leaflet | 96ac37f5ece8407461bce77051e4ddddc7da8074 | [

"MIT"

] | null | null | null | tests/dummy/app/pods/docs/addons/template.md | muziejus/ember-leaflet | 96ac37f5ece8407461bce77051e4ddddc7da8074 | [

"MIT"

] | null | null | null | # Addons

Leaflet has many plugins and they provide very useful features for your maps.

To use them in ember-leaflet, the community created ember addons that extend ember-leaflet

functionality, usually using some of those Leaflet plugins under the hood. A list of those addons can be found

in the <DocsLink @route="addon... | 36.843478 | 139 | 0.729762 | eng_Latn | 0.992247 |

e59c7674fc407f5f95e7e81c78d50f53b4d5b6cb | 932 | md | Markdown | _posts/2022-03-21-GnuTLS-Priority-String-Nedir?.md | BerkantErbey/jekyll-now | e7d216339bfbbfa705722b48079add84fb3d80ff | [

"MIT"

] | null | null | null | _posts/2022-03-21-GnuTLS-Priority-String-Nedir?.md | BerkantErbey/jekyll-now | e7d216339bfbbfa705722b48079add84fb3d80ff | [

"MIT"

] | null | null | null | _posts/2022-03-21-GnuTLS-Priority-String-Nedir?.md | BerkantErbey/jekyll-now | e7d216339bfbbfa705722b48079add84fb3d80ff | [

"MIT"

] | null | null | null | ---

published: true

---

## Introduction

* Hello. In this post we will talk about the **GnuTLS priority string**. It will be a short post. I am writing it both to keep notes and to share them, because I think little information is available on the topic.

## What Is ...??

* **GnuTLS** , TLS SSL ve DTLS protokollerinin açık kaynak kodlu gerçek... | 49.052632 | 195 | 0.777897 | tur_Latn | 0.998266 |

e59c8519fef22d8f02935245ab1bd496fce9a098 | 423 | md | Markdown | README.md | z-rui/stok | 2d0cd70abfb0e2e08bba410908394f1e51d9d564 | [

"BSD-2-Clause"

] | null | null | null | README.md | z-rui/stok | 2d0cd70abfb0e2e08bba410908394f1e51d9d564 | [

"BSD-2-Clause"

] | null | null | null | README.md | z-rui/stok | 2d0cd70abfb0e2e08bba410908394f1e51d9d564 | [

"BSD-2-Clause"

] | null | null | null | # stok

This is a simple string tokenizer. It splits a string into tokens, which may include:

- whitespace

- numbers

- string literals

- identifiers

- punctuation
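
To illustrate the token classes listed above, here is a minimal regex-based tokenizer sketch in Python. It is not taken from this repository: the patterns, the class names, and the `tokenize` function are assumptions chosen for the example, and the project's own rules for numbers and string literals may well differ.

```python
import re

# Hypothetical token classes; order matters because the first matching alternative wins.
TOKEN_SPEC = [
    ("space", r"\s+"),
    ("number", r"\d+(?:\.\d+)?"),
    ("string", r'"(?:\\.|[^"\\])*"'),
    ("identifier", r"[A-Za-z_][A-Za-z0-9_]*"),
    ("punctuation", r"[^\sA-Za-z0-9_]"),
]
TOKEN_RE = re.compile(
    "|".join(f"(?P<{name}>{pattern})" for name, pattern in TOKEN_SPEC)
)


def tokenize(text):
    """Yield (kind, lexeme) pairs; every input character lands in some token."""
    for match in TOKEN_RE.finditer(text):
        yield match.lastgroup, match.group()


if __name__ == "__main__":
    for kind, lexeme in tokenize('say("hi") + 42'):
        print(kind, repr(lexeme))
```

Listing the string pattern before the punctuation pattern is what lets a quoted literal be read as one token instead of a run of single characters.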

It is very simple and highly customizable. Instead of providing configuration mechanisms, we believe that it is easier to fork this repository and modif... | 30.214286 | 226 | 0.780142 | eng_Latn | 0.999923 |

e59cac096b7fdf16ae57b28efa2a8879d17a7012 | 294 | md | Markdown | README.md | oxguy3/rocview | cb22741047c76b7e98f6bdf9096eb4655a67be95 | [

"MIT"

] | null | null | null | README.md | oxguy3/rocview | cb22741047c76b7e98f6bdf9096eb4655a67be95 | [

"MIT"

] | null | null | null | README.md | oxguy3/rocview | cb22741047c76b7e98f6bdf9096eb4655a67be95 | [

"MIT"

] | null | null | null | rocview

=======

There are a fair number of openly accessible webcams around University of Rochester. This is a simple project to show them all as pins on a map to easily navigate through them all. I am no longer actively working on this project.

[MIT license](http://oxguy3.mit-license.org/)

| 42 | 229 | 0.765306 | eng_Latn | 0.997818 |

e59caf1f069560a8f67ba6a7d01a7e99619eb773 | 465 | md | Markdown | workspace/10-authentication/README.md | jballoffet/grpc-samples | 38708ba35e61d05f410d4a130960d3893d273a5e | [

"Apache-2.0"

] | null | null | null | workspace/10-authentication/README.md | jballoffet/grpc-samples | 38708ba35e61d05f410d4a130960d3893d273a5e | [

"Apache-2.0"

] | null | null | null | workspace/10-authentication/README.md | jballoffet/grpc-samples | 38708ba35e61d05f410d4a130960d3893d273a5e | [

"Apache-2.0"

] | null | null | null | # Authentication sample app

## Building

To build the application, taking `{REPO_PATH}` as the base repository path, run the following:

```bash

cd {REPO_PATH}/workspace/10-authentication

bazel build :greeter_server

bazel build :greeter_client

```

## Running

To run the application, taking `{REPO_PATH}` as the base r... | 23.25 | 94 | 0.765591 | eng_Latn | 0.91879 |

e59d768eb73554ac977a92ca6928b091f5595983 | 1,483 | md | Markdown | Notes/Opposite Category.md | zhaoshenzhai/MathWiki | 51caa9c2ec6c0463589ebb3b4d94b46baea0fdb7 | [

"MIT"

] | 1 | 2022-02-19T13:15:58.000Z | 2022-02-19T13:15:58.000Z | Notes/Opposite Category.md | zhaoshenzhai/MathWiki | 51caa9c2ec6c0463589ebb3b4d94b46baea0fdb7 | [

"MIT"

] | null | null | null | Notes/Opposite Category.md | zhaoshenzhai/MathWiki | 51caa9c2ec6c0463589ebb3b4d94b46baea0fdb7 | [

"MIT"

] | null | null | null | <br />

<br />

Date Created: 22/02/2022 12:14:26

Tags: #Definition #Closed

Types: _Not Applicable_

Examples: _Not Applicable_

Constructions: _Not Applicable_

Generalizations: _Not Applicable_

Properties: _Not Applicable_

Sufficiencies: _Not Applicable_

Equivalences: _Not Applicable_

Justifications: [[Opposite catego... | 41.194444 | 219 | 0.658125 | eng_Latn | 0.448785 |

e59d97478fba6b03aa1a76130745d8f6ad708f98 | 30 | md | Markdown | README.md | devKiratu/my-flask-app | 6def1f9699bc841ec2fc9420e6abd6dc0466f7e0 | [

"MIT"

] | null | null | null | README.md | devKiratu/my-flask-app | 6def1f9699bc841ec2fc9420e6abd6dc0466f7e0 | [

"MIT"

] | null | null | null | README.md | devKiratu/my-flask-app | 6def1f9699bc841ec2fc9420e6abd6dc0466f7e0 | [

"MIT"

] | null | null | null | # my-flask-app

learning flask

| 10 | 14 | 0.766667 | eng_Latn | 0.420077 |

e59dafee95cf7c57f3a370a9603bc75823eead76 | 45 | md | Markdown | README.md | emilniklas/vue-dart | 3ab441354f4ff6fd6f837e84d07ad920ec28f490 | [

"MIT"

] | 6 | 2015-07-19T16:35:21.000Z | 2017-06-07T18:53:27.000Z | README.md | emilniklas/vue-dart | 3ab441354f4ff6fd6f837e84d07ad920ec28f490 | [

"MIT"

] | 2 | 2015-12-02T09:55:03.000Z | 2017-12-11T16:20:37.000Z | README.md | emilniklas/vue-dart | 3ab441354f4ff6fd6f837e84d07ad920ec28f490 | [

"MIT"

] | null | null | null | # Vue.dart

Vue.js through Dart's JS interop. | 15 | 33 | 0.733333 | kor_Hang | 0.508048 |

e59e906778fff0f4d0b58030377c207bc5e25bdb | 2,248 | md | Markdown | articles/active-directory/active-directory-b2b-current-limitations.md | ebibibi/azure-docs.ja-jp | ef49e9766dd3dc5a825ea3b19b9c526e2c37faba | [

"CC-BY-4.0",

"MIT"

] | null | null | null | articles/active-directory/active-directory-b2b-current-limitations.md | ebibibi/azure-docs.ja-jp | ef49e9766dd3dc5a825ea3b19b9c526e2c37faba | [

"CC-BY-4.0",

"MIT"

] | null | null | null | articles/active-directory/active-directory-b2b-current-limitations.md | ebibibi/azure-docs.ja-jp | ef49e9766dd3dc5a825ea3b19b9c526e2c37faba | [

"CC-BY-4.0",

"MIT"

] | null | null | null | ---

title: "Azure Active Directory B2B collaboration limitations | Microsoft Docs"

description: "Current limitations of Azure Active Directory B2B collaboration"

services: active-directory

documentationcenter:

author: sasubram

manager: mtillman

editor:

tags:

ms.assetid:

ms.service: active-directory

ms.devlang: NA

ms.topic: article

ms.tg... | 47.829787 | 340 | 0.810943 | yue_Hant | 0.392199 |

e59e946be46f6b4ec748ceae32a35fd5f5f3b5b0 | 356 | md | Markdown | .github/SECURITY.md | jaredbain/digitalcorps.gsa.gov | 95ee7da6b8f334497c53e2256695411e35d9fc94 | [

"CC0-1.0"

] | 4 | 2021-06-25T01:57:26.000Z | 2021-12-09T16:52:15.000Z | .github/SECURITY.md | jaredbain/digitalcorps.gsa.gov | 95ee7da6b8f334497c53e2256695411e35d9fc94 | [

"CC0-1.0"

] | 98 | 2021-07-09T03:48:50.000Z | 2022-03-14T20:55:19.000Z | .github/SECURITY.md | jaredbain/digitalcorps.gsa.gov | 95ee7da6b8f334497c53e2256695411e35d9fc94 | [

"CC0-1.0"

] | 1 | 2021-12-15T19:48:28.000Z | 2021-12-15T19:48:28.000Z | # Security Policy

See GSA's [Privacy and Security Policy](https://www.gsa.gov/website-information/website-policies#privacy)

## Reporting a Vulnerability

digitalcorps.gsa.gov is a participant in GSA's Vulnerability Disclosure program.

For more information, see GSA's [Vulnerability Disclosure Policy](https://www.gsa.g... | 39.555556 | 118 | 0.80618 | eng_Latn | 0.804877 |

e59e9deb2da5506ee267fbe3f144e86d9d797a0d | 136 | md | Markdown | c++ problems asked in interview/README.md | rj011/Hacktoberfest2021-4 | 0aa981d4ba5e71c86cc162d34fe57814050064c2 | [

"MIT"

] | 41 | 2021-10-03T16:03:52.000Z | 2021-11-14T18:15:33.000Z | c++ problems asked in interview/README.md | rj011/Hacktoberfest2021-4 | 0aa981d4ba5e71c86cc162d34fe57814050064c2 | [

"MIT"

] | 175 | 2021-10-03T10:47:31.000Z | 2021-10-20T11:55:32.000Z | c++ problems asked in interview/README.md | rj011/Hacktoberfest2021-4 | 0aa981d4ba5e71c86cc162d34fe57814050064c2 | [

"MIT"

] | 208 | 2021-10-03T11:24:04.000Z | 2021-10-31T17:27:59.000Z | # CODE{ON}FEST-Program

I am a participant at Code{On}Fest Program.

Here I will upload my code for the questions I solve each day.

| 27.2 | 64 | 0.735294 | eng_Latn | 0.982664 |

e59eb745c02d03adbdd613da78843d02f4143051 | 1,704 | md | Markdown | readme.md | leekafai/singleflight | 1dad49666429c01b7f4cd5ff52bdae2069136847 | [

"MIT"

] | null | null | null | readme.md | leekafai/singleflight | 1dad49666429c01b7f4cd5ff52bdae2069136847 | [

"MIT"

] | null | null | null | readme.md | leekafai/singleflight | 1dad49666429c01b7f4cd5ff52bdae2069136847 | [

"MIT"

] | null | null | null | Golang singleflight in Node

---

provides a duplicate function call suppression mechanism.

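When concurrent callers need to asynchronously fetch the same response data, `singleFlight` can reduce the number of asynchronous requests that are actually issued.

For example, suppose the `/getACombo` endpoint of an HTTP service needs to return data that is cached in redis.

When multiple clients request `/getACombo` concurrently and pass the same parameters, the returned data is expected to be identical and can be shared.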
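
The scenario above can be sketched as follows. This is an illustration of the general idea only, written in Python with `asyncio` rather than Node, and it is not this package's implementation: the `SingleFlight` class, its `do` method, and the `getACombo:id=1` key are hypothetical names, and a short sleep stands in for the redis round trip.

```python
import asyncio


class SingleFlight:
    """Share one in-flight call among concurrent callers that use the same key."""

    def __init__(self):
        self._inflight = {}  # key -> asyncio.Task for the call in progress

    async def do(self, key, fn):
        task = self._inflight.get(key)
        if task is None:
            # First caller for this key: start the real work.
            task = asyncio.ensure_future(fn())
            self._inflight[key] = task
            # Forget the key once the call settles, so later calls fetch again.
            task.add_done_callback(lambda _: self._inflight.pop(key, None))
        # Every caller, first or not, waits on the same task.
        return await task


async def demo():
    calls = 0

    async def fetch_combo():
        nonlocal calls
        calls += 1
        await asyncio.sleep(0.01)  # stand-in for the cache lookup
        return {"combo": "A"}

    flight = SingleFlight()
    results = await asyncio.gather(
        *(flight.do("getACombo:id=1", fetch_combo) for _ in range(5))
    )
    return calls, results


if __name__ == "__main__":
    print(asyncio.run(demo()))
```

Because the key is dropped as soon as the call settles, this only merges calls that overlap in time; it is not a cache of past results.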

In that case, without `singleFlight`, every request issues its own redis command;

with `sing... | 24 | 100 | 0.677817 | yue_Hant | 0.573394 |

e59eb9ea28eb6ec8efdddf55d9ac07ecd3e8d361 | 16 | md | Markdown | README.md | markthethomas/todocrud-node | dfb400f6035571747bee3a5046e6abd05bdda0b0 | [

"MIT"

] | null | null | null | README.md | markthethomas/todocrud-node | dfb400f6035571747bee3a5046e6abd05bdda0b0 | [

"MIT"

] | null | null | null | README.md | markthethomas/todocrud-node | dfb400f6035571747bee3a5046e6abd05bdda0b0 | [

"MIT"

] | null | null | null | # todocrud-node

| 8 | 15 | 0.75 | vie_Latn | 0.340151 |

e59f8095334414a6ba5a5788aecefd87fd52cbcc | 227 | md | Markdown | _posts/1995-03-24-a-small-oilslick-threatens-the.md | MiamiMaritime/miamimaritime.github.io | d087ae8c104ca00d78813b5a974c154dfd9f3630 | [

"MIT"

] | null | null | null | _posts/1995-03-24-a-small-oilslick-threatens-the.md | MiamiMaritime/miamimaritime.github.io | d087ae8c104ca00d78813b5a974c154dfd9f3630 | [

"MIT"

] | null | null | null | _posts/1995-03-24-a-small-oilslick-threatens-the.md | MiamiMaritime/miamimaritime.github.io | d087ae8c104ca00d78813b5a974c154dfd9f3630 | [

"MIT"

] | null | null | null | ---

title: A small oilslick threatens the

tags:

- Mar 1995

---

A small oilslick threatens the coral reef at Looe Key.

Newspapers: **Miami Morning News or The Miami Herald**

Page: **7**, Section: **B**

| 18.916667 | 56 | 0.621145 | eng_Latn | 0.847095 |

e5a0245fefb61bb11ce38abd3d92e602fc5627d0 | 144,292 | md | Markdown | docs/typescript.md | rocaccion/cdk8s-plus | 4fed38590dc48da6cfafa1d02380fbf2f921c1eb | [

"Apache-2.0"

] | null | null | null | docs/typescript.md | rocaccion/cdk8s-plus | 4fed38590dc48da6cfafa1d02380fbf2f921c1eb | [

"Apache-2.0"

] | null | null | null | docs/typescript.md | rocaccion/cdk8s-plus | 4fed38590dc48da6cfafa1d02380fbf2f921c1eb | [

"Apache-2.0"

] | null | null | null | # API Reference <a name="API Reference"></a>

## Constructs <a name="Constructs"></a>

### ConfigMap <a name="cdk8s-plus-22.ConfigMap"></a>

- *Implements:* [`cdk8s-plus-22.IConfigMap`](#cdk8s-plus-22.IConfigMap)

ConfigMap holds configuration data for pods to consume.

#### Initializers <a name="cdk8s-plus-22.ConfigMa... | 26.073726 | 774 | 0.717566 | eng_Latn | 0.787574 |

e5a087f741cf495b4968aa3d378ac8a8e6d9ab40 | 7,930 | md | Markdown | python/kfserving/README.md | jal06/kfserving | e662f7a42375cd5ac141b06b1548af04be7090f2 | [

"Apache-2.0"

] | null | null | null | python/kfserving/README.md | jal06/kfserving | e662f7a42375cd5ac141b06b1548af04be7090f2 | [

"Apache-2.0"

] | 25 | 2021-03-19T15:43:34.000Z | 2022-03-12T00:53:07.000Z | python/kfserving/README.md | jal06/kfserving | e662f7a42375cd5ac141b06b1548af04be7090f2 | [

"Apache-2.0"

] | 4 | 2021-02-15T23:02:53.000Z | 2022-01-27T22:54:16.000Z | # KFServing Python SDK

Python SDK for KFServing Server and Client.

## Installation

KFServing Python SDK can be installed by `pip` or `Setuptools`.

### pip install

```sh

pip install kfserving

```

### Setuptools

Install via [Setuptools](http://pypi.python.org/pypi/setuptools).

```sh

python setup.py install --user

... | 56.241135 | 302 | 0.792812 | yue_Hant | 0.679332 |

e5a090aea30624c4b74ea9555f359f885a3ad210 | 2,686 | md | Markdown | how_to_use.md | sedici/docker4drupal | fecff902ed1117602f7726e57afce8a01ba5c29e | [

"MIT"

] | null | null | null | how_to_use.md | sedici/docker4drupal | fecff902ed1117602f7726e57afce8a01ba5c29e | [

"MIT"

] | null | null | null | how_to_use.md | sedici/docker4drupal | fecff902ed1117602f7726e57afce8a01ba5c29e | [

"MIT"

] | null | null | null | # How to use Docker4Drupal with custom codebase

This guide is intended for Drupal installations based on [custom source code](https://wodby.com/docs/stacks/drupal/local/#mount-my-codebase). You will need a database dump and a source code directory (generated with the [drush archive-dump]... | 61.045455 | 337 | 0.753165 | eng_Latn | 0.980497 |

e5a0af60268f62e4b5b35e93fab59be7f46ec805 | 3,837 | md | Markdown | blockchain-development-kit/accelerators/corda-integration-accelerator/service-bus-integration/corda-transaction-builder/docs/QuickStart.md | larrycl/blockchain | 94156bf2cbb0ce35bae2b25659b0124c80c324af | [

"MIT"

] | 1 | 2018-11-17T17:18:20.000Z | 2018-11-17T17:18:20.000Z | blockchain-development-kit/accelerators/corda-integration-accelerator/service-bus-integration/corda-transaction-builder/docs/QuickStart.md | larrycl/blockchain | 94156bf2cbb0ce35bae2b25659b0124c80c324af | [

"MIT"

] | null | null | null | blockchain-development-kit/accelerators/corda-integration-accelerator/service-bus-integration/corda-transaction-builder/docs/QuickStart.md | larrycl/blockchain | 94156bf2cbb0ce35bae2b25659b0124c80c324af | [

"MIT"

] | 1 | 2018-11-28T13:48:20.000Z | 2018-11-28T13:48:20.000Z | # Quick Start

[Index](Index.md)

This is still in the early stages of development, but the following should work.

### 1.

Follow the [Quick Start](../corda-local-network/docs/QuickStart.md) guide

in 'corda-local-network' to get a local dev network running with the

'refrigerated transport' example.

Ensure that a local DNS entry... | 25.925676 | 287 | 0.636435 | eng_Latn | 0.747722 |

e5a1aa6c97596b90877a8b8d58a653c18c505222 | 1,472 | md | Markdown | CHANGELOG.md | OverZealous/cdnizer | 0d8d5050cd7724331efb48e46ea8268f22927cee | [

"MIT"

] | 44 | 2015-02-21T13:22:50.000Z | 2021-07-07T02:53:08.000Z | CHANGELOG.md | OverZealous/cdnizer | 0d8d5050cd7724331efb48e46ea8268f22927cee | [

"MIT"

] | 29 | 2015-02-24T16:25:30.000Z | 2020-05-21T14:58:20.000Z | CHANGELOG.md | OverZealous/cdnizer | 0d8d5050cd7724331efb48e46ea8268f22927cee | [

"MIT"

] | 19 | 2015-02-24T15:41:31.000Z | 2020-05-21T14:45:44.000Z | # Changelog

All notable changes to this project will be documented in this file.

The format is based on [Keep a Changelog](http://keepachangelog.com/en/1.0.0/)

and this project adheres to [Semantic Versioning](http://semver.org/spec/v2.0.0.html).

## [3.2.1](https://github.com/OverZealous/cdnizer/releases/tag/v3.2.1) ... | 40.888889 | 151 | 0.732337 | eng_Latn | 0.835173 |

e5a2919f5cba1cc69747109d088a15b0872cff21 | 2,418 | md | Markdown | README.md | SevdanurGENC/Data-Analytics-Lecture-Notes | 895b43285e76fb6f9a23b187058992454dbfe883 | [

"MIT"

] | null | null | null | README.md | SevdanurGENC/Data-Analytics-Lecture-Notes | 895b43285e76fb6f9a23b187058992454dbfe883 | [

"MIT"

] | null | null | null | README.md | SevdanurGENC/Data-Analytics-Lecture-Notes | 895b43285e76fb6f9a23b187058992454dbfe883 | [

"MIT"

] | null | null | null | # Data-Analytics-Lecture-Notes

This repo contains the course contents of the Data Analytics training, to be given to Innova Technology in cooperation with Academy Peak Information Technologies Training and Consultancy on 27-29 September 2021.

Bu repoda, 27 - 29 Eylül 2021 tarihleri arasında Academy ... | 43.178571 | 739 | 0.831266 | tur_Latn | 0.999999 |

e5a30b94932965c8607e95b7708e2935316119f8 | 2,604 | md | Markdown | global/2020_GCR_SZ_ContainerDay/步骤7-在EKS中使用IAMRole进行权限管理.md | aws-samples/eks-workshop-greater-china | ad13f30c2f6d231672a8a877f5bfc79cd8bad78d | [

"MIT-0"

] | 129 | 2019-12-16T08:19:20.000Z | 2022-03-24T08:58:04.000Z | global/2020_GCR_SZ_ContainerDay/步骤7-在EKS中使用IAMRole进行权限管理.md | aws-samples/eks-workshop-greater-china | ad13f30c2f6d231672a8a877f5bfc79cd8bad78d | [

"MIT-0"

] | 35 | 2019-12-19T08:16:57.000Z | 2022-03-03T06:04:41.000Z | global/2020_GCR_SZ_ContainerDay/步骤7-在EKS中使用IAMRole进行权限管理.md | aws-samples/eks-workshop-greater-china | ad13f30c2f6d231672a8a877f5bfc79cd8bad78d | [

"MIT-0"

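] | 98 | 2019-12-16T08:07:22.000Z | 2022-02-09T06:07:47.000Z | # Step 7: Use IAM Roles for permission management in EKS

We will configure an S3 access role for a ServiceAccount and deploy a job to the EKS cluster that writes to S3.

7.1 Configure the IAM Role and ServiceAccount

>7.1.1 Create the service account with eksctl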

```bash

# In step 3 we already created the OIDC identity provider

# Check that the IAM OpenID Connect (OIDC) identity provider has been created

aws eks describe-cluster --name ${CLUSTER_NAME} --query cluster.identity.oidc.issuer --ou... | 30.635294 | 162 | 0.74424 | yue_Hant | 0.572873 |

e5a33875fe819f7df9b82ac6f7ca03cf67296aad | 258 | md | Markdown | SA-Document/Baiscinfo.md | zjhZJH1998/SA-scrapy | 6ee0374dcaa50fe303b28a26e1085997bc162591 | [

"BSD-3-Clause"

] | null | null | null | SA-Document/Baiscinfo.md | zjhZJH1998/SA-scrapy | 6ee0374dcaa50fe303b28a26e1085997bc162591 | [

"BSD-3-Clause"

] | null | null | null | SA-Document/Baiscinfo.md | zjhZJH1998/SA-scrapy | 6ee0374dcaa50fe303b28a26e1085997bc162591 | [

"BSD-3-Clause"

] | 2 | 2019-10-03T04:12:58.000Z | 2019-10-03T14:04:59.000Z | Basic information

====

Name list

----

王子豪 2017302580257\

王怿临 2017302580258\

张新豪\

王初程 2017302580267

Project name

----

Scrapy:

An open source and collaborative framework for extracting the data you need from websites.\

In a fast, simple, yet extensible way.

| 16.125 | 91 | 0.763566 | eng_Latn | 0.971795 |

e5a393d04af59801c9079785932c770413f22897 | 15 | md | Markdown | README.md | changan-xigua/op.github.io | cdcb6f084f1742e4ce79d51c3cc238ab5bf684dd | [

"Apache-2.0"

] | null | null | null | README.md | changan-xigua/op.github.io | cdcb6f084f1742e4ce79d51c3cc238ab5bf684dd | [

"Apache-2.0"

] | null | null | null | README.md | changan-xigua/op.github.io | cdcb6f084f1742e4ce79d51c3cc238ab5bf684dd | [

"Apache-2.0"

] | null | null | null | # op.github.io

| 7.5 | 14 | 0.666667 | por_Latn | 0.517347 |

e5a404812181c480d21546c288f118d583cdf7b6 | 2,158 | md | Markdown | src/sw/2020-04/12/05.md | Pmarva/sabbath-school-lessons | 0e1564557be444c2fee51ddfd6f74a14fd1c45fa | [

"MIT"

] | 68 | 2016-10-30T23:17:56.000Z | 2022-03-27T11:58:16.000Z | src/sw/2020-04/12/05.md | Pmarva/sabbath-school-lessons | 0e1564557be444c2fee51ddfd6f74a14fd1c45fa | [

"MIT"

] | 367 | 2016-10-21T03:50:22.000Z | 2022-03-28T23:35:25.000Z | src/sw/2020-04/12/05.md | Pmarva/sabbath-school-lessons | 0e1564557be444c2fee51ddfd6f74a14fd1c45fa | [

"MIT"

] | 109 | 2016-08-02T14:32:13.000Z | 2022-03-31T10:18:41.000Z | ---

title: 'A Time for Finding Balance'

date: 16/12/2020

---

Jesus respected and obeyed God's law (Matthew 5:17, 18). Yet Jesus rebuked the religious leadership for the way it interpreted the law. Those religious leaders felt greatly threatened by the statements Jesus made about Sabbath keeping. The synagogue did not stop making... | 134.875 | 547 | 0.807692 | swh_Latn | 1.000004 |

e5a43116721b51c69aec2c3fac9a5b61c58e4478 | 450 | md | Markdown | README.md | alls77/homepage | cd659a2ab2882ff30b14c567cdb0360f1663da82 | [

"MIT"

] | null | null | null | README.md | alls77/homepage | cd659a2ab2882ff30b14c567cdb0360f1663da82 | [

"MIT"

] | 19 | 2021-05-04T06:37:59.000Z | 2021-05-06T16:11:32.000Z | README.md | alls77/homepage | cd659a2ab2882ff30b14c567cdb0360f1663da82 | [

"MIT"

] | null | null | null | ## Homepage

My very own personal website. Basically this is just a résumé.

## Preview

## Demo

[GitHub Pages](https://alls77.github.io/homepage/)

## Credits

Thanks [@volodymyr-kushnir](https://github.com/volodymyr-kushnir) for sty... | 30 | 107 | 0.744444 | yue_Hant | 0.426758 |

e5a4f7cd0d5efcc258d5d4d8c4cb01dd78c62493 | 3,558 | md | Markdown | src/content/posts/2008-12-20-dicionario-stardict-no-linux.md | manoelcampos/blog | 34c90443dc190978e76917c99c4d8be4f6292fe3 | [

"Apache-2.0"

] | 1 | 2018-03-23T17:47:59.000Z | 2018-03-23T17:47:59.000Z | src/content/posts/2008-12-20-dicionario-stardict-no-linux.md | manoelcampos/blog | 34c90443dc190978e76917c99c4d8be4f6292fe3 | [

"Apache-2.0"

] | null | null | null | src/content/posts/2008-12-20-dicionario-stardict-no-linux.md | manoelcampos/blog | 34c90443dc190978e76917c99c4d8be4f6292fe3 | [

"Apache-2.0"

] | null | null | null | ---

author: admin

comments: true

layout: post

slug: dicionario-stardict-no-linux

title: StarDict Dictionary on Linux

wordpress_id: 135

categories:

- Internet

- Linux

- Software

- Software Livre

---

[StarDict](http://stardict.sourceforge.net) is an open-source dictionary similar to the well-known [Babylon](http://www.babylo... | 43.390244 | 426 | 0.788083 | por_Latn | 0.996641 |

e5a5271dcc8b28af8003b2f00a89aed5b60cfc76 | 892 | md | Markdown | README.md | Alfresco/aws-auto-tag | 8f7312cf97f35f148a9ae45b6e99a228a5123287 | [

"Apache-2.0"

] | 3 | 2017-11-25T07:15:06.000Z | 2021-05-25T13:18:43.000Z | README.md | Alfresco/aws-auto-tag | 8f7312cf97f35f148a9ae45b6e99a228a5123287 | [

"Apache-2.0"

] | null | null | null | README.md | Alfresco/aws-auto-tag | 8f7312cf97f35f148a9ae45b6e99a228a5123287 | [

"Apache-2.0"

] | 4 | 2017-10-24T13:51:52.000Z | 2018-03-20T20:42:06.000Z | # AutoTag

This repository contains a CloudFormation template that creates a Lambda function

that is triggered by a CloudWatch rule listening for CloudTrail create events in the region.

Currently new EC2 instances and S3 buckets are tracked and tagged.
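
As a rough illustration of the kind of logic such a Lambda function might contain, the sketch below pulls the identifiers of newly created resources out of a CloudTrail event delivered by a CloudWatch rule. It is not the code shipped in this repository: the helper name `extract_resource_ids`, the resource-type labels, and the choice of `RunInstances` and `CreateBucket` as the events of interest are assumptions made for illustration, and the tagging call itself (through an AWS SDK) is deliberately left out.

```python
def extract_resource_ids(event):
    """Return (resource_type, ids) for a CloudTrail event wrapped by a CloudWatch rule.

    Hypothetical helper: only RunInstances and CreateBucket are handled, and
    the field layout is assumed to follow the usual CloudTrail record shape.
    """
    detail = event.get("detail", {})
    event_name = detail.get("eventName")

    if event_name == "RunInstances":
        # responseElements can be null when the API call failed.
        response = detail.get("responseElements") or {}
        items = response.get("instancesSet", {}).get("items", [])
        return "ec2-instance", [item["instanceId"] for item in items]

    if event_name == "CreateBucket":
        params = detail.get("requestParameters") or {}
        bucket = params.get("bucketName")
        return "s3-bucket", ([bucket] if bucket else [])

    # Any other event is ignored in this sketch.
    return None, []
```

A real handler would pass the returned identifiers to the tagging API of the corresponding service; which tags to apply is a policy decision that this sketch does not try to guess.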

## Prerequisites

Install and configure the AWS Command Line I... | 37.166667 | 172 | 0.780269 | eng_Latn | 0.997612 |

e5a52dd6b8bbe8d11b4d0c8a86a376b97f0fbbe9 | 524 | md | Markdown | public/readme.md | spudmashmedia/spudtube | 10fe99d3f10efd811aa6e0f40f3a308e2f7a3975 | [

"MIT"

] | null | null | null | public/readme.md | spudmashmedia/spudtube | 10fe99d3f10efd811aa6e0f40f3a308e2f7a3975 | [

"MIT"

] | null | null | null | public/readme.md | spudmashmedia/spudtube | 10fe99d3f10efd811aa6e0f40f3a308e2f7a3975 | [

"MIT"

] | null | null | null | # SPUDTUBE - NodeJS version

A very very very very very hacky streaming service....that needs some serious work...ლ(ಠ益ಠლ)

API: built with NodeJS + Restify

Web: built with NodeJS + Express + Handlebars

# NOTES

file names should be

[filename].mp4

when viewing a video via the WEB (http://localhost:3000/spud.tube), yo... | 18.714286 | 111 | 0.709924 | eng_Latn | 0.959258 |

e5a66fc53427c9dfb569cc20431507f14413478e | 35,839 | md | Markdown | docs/framework/wcf/feature-details/data-contract-schema-reference.md | Dorothywilk/docs.fr-fr | 459a44d2c7bf5de4f74d7de8833fa9f8ea4ff38a | [

"CC-BY-4.0",

"MIT"

] | null | null | null | docs/framework/wcf/feature-details/data-contract-schema-reference.md | Dorothywilk/docs.fr-fr | 459a44d2c7bf5de4f74d7de8833fa9f8ea4ff38a | [

"CC-BY-4.0",

"MIT"

] | null | null | null | docs/framework/wcf/feature-details/data-contract-schema-reference.md | Dorothywilk/docs.fr-fr | 459a44d2c7bf5de4f74d7de8833fa9f8ea4ff38a | [

"CC-BY-4.0",

"MIT"

] | null | null | null | ---

title: Data Contract Schema Reference

ms.date: 03/30/2017

helpviewer_keywords:

- data contracts [WCF], schema reference

ms.assetid: 9ebb0ebe-8166-4c93-980a-7c8f1f38f7c0

ms.openlocfilehash: 33661061e1a5db4f7826c1a8eca188f8c782b58f

ms.sourcegitcommit: 8c28ab17c26bf08abbd004cc37651985c68841b8

ms.translat... | 51.865412 | 835 | 0.69402 | fra_Latn | 0.856854 |

e5a6d80498c09bb3709656209d8fdb4bc77ac373 | 2,210 | md | Markdown | _posts/2017-01-28-Haskell-Module.md | CoderDB/coderDB.github.io | 7178ec2f98b93d8e6e4e8c964afc9a95ca0d8787 | [

"MIT"

] | null | null | null | _posts/2017-01-28-Haskell-Module.md | CoderDB/coderDB.github.io | 7178ec2f98b93d8e6e4e8c964afc9a95ca0d8787 | [

"MIT"

] | null | null | null | _posts/2017-01-28-Haskell-Module.md | CoderDB/coderDB.github.io | 7178ec2f98b93d8e6e4e8c964afc9a95ca0d8787 | [

"MIT"

] | null | null | null | ---

layout: post

date: 2017-01-28

title: Modules in Haskell

img: "haskell_module.jpg"

---

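A *module* is a big bag of assorted snacks: tear it open and dig in.

Module

---

---

Haskell's standard library is a collection of *modules*, each containing functions and types that serve related or similar purposes. For example, the test examples in all the previous posts were based on the *Prelude* module.

Loading modules

---

---

Haskell loads modules with the keyword **import**, much as in Objective-C, where after defining a **People** class you bring it into any implementation file that needs it with *#import "People... | 18.888889 | 222 | 0.633937 | kor_Hang | 0.290985 |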

e5a719680c64403a4a8784fd8b716d19c5507bff | 862 | md | Markdown | Images and Conteiners Study/sonic-search/README.md | BrunoComitre/docker-resource-inventory | a3f797101320fa0b9c02bc93e10574a3d2a315d3 | [

"MIT"

] | null | null | null | Images and Conteiners Study/sonic-search/README.md | BrunoComitre/docker-resource-inventory | a3f797101320fa0b9c02bc93e10574a3d2a315d3 | [

"MIT"

] | null | null | null | Images and Conteiners Study/sonic-search/README.md | BrunoComitre/docker-resource-inventory | a3f797101320fa0b9c02bc93e10574a3d2a315d3 | [

"MIT"

] | null | null | null | # SONIC SEARCH

Documentation on implementing dynamic search using Sonic

***

<br />

## Quickstart

### Locally:

Pull the Sonic image:

``` $ docker pull valeriansaliou/sonic:v1.3.0 ```

Print the current directory path:

``` $ pwd ```

Adjust the path and run the container:

``` $ docker run -p 1491:1491 -v "pwd"/sonic/co... | 27.806452 | 146 | 0.728538 | eng_Latn | 0.828305 |

e5a74f20b27697015fe85ea1784c2c71ec49a26c | 3,238 | md | Markdown | translations/it/docs/vanilla/api/data/ShortData.md | Lgmrszd/CraftTweaker-Documentation | 2e02a542dea9a4dbaec91a8e594aa7fba1a00206 | [

"MIT"

] | 1 | 2021-12-15T20:34:09.000Z | 2021-12-15T20:34:09.000Z | translations/it/docs/vanilla/api/data/ShortData.md | Lgmrszd/CraftTweaker-Documentation | 2e02a542dea9a4dbaec91a8e594aa7fba1a00206 | [

"MIT"

] | null | null | null | translations/it/docs/vanilla/api/data/ShortData.md | Lgmrszd/CraftTweaker-Documentation | 2e02a542dea9a4dbaec91a8e594aa7fba1a00206 | [

"MIT"

] | null | null | null | # ShortData

This class was added by a mod with the ID `crafttweaker`. You therefore need to have this mod installed to use this feature.

## Importing the class

You may need to import the package if you run into any issues (such as casting an array), so better be ... | 26.760331 | 226 | 0.703521 | ita_Latn | 0.993176 |

e5a7b6883c6c9f4109afeb8c4778a800f9afb0ea | 94 | md | Markdown | microsoft/office365/exchange-online-plan-2/tlh.md | Hexatown/docs | 8baa1eb908c9182449d094b697dd50d197a8a0a1 | [

"MIT"