hexsha stringlengths 40 40 | size int64 5 1.04M | ext stringclasses 6 values | lang stringclasses 1 value | max_stars_repo_path stringlengths 3 344 | max_stars_repo_name stringlengths 5 125 | max_stars_repo_head_hexsha stringlengths 40 78 | max_stars_repo_licenses listlengths 1 11 | max_stars_count int64 1 368k ⌀ | max_stars_repo_stars_event_min_datetime stringlengths 24 24 ⌀ | max_stars_repo_stars_event_max_datetime stringlengths 24 24 ⌀ | max_issues_repo_path stringlengths 3 344 | max_issues_repo_name stringlengths 5 125 | max_issues_repo_head_hexsha stringlengths 40 78 | max_issues_repo_licenses listlengths 1 11 | max_issues_count int64 1 116k ⌀ | max_issues_repo_issues_event_min_datetime stringlengths 24 24 ⌀ | max_issues_repo_issues_event_max_datetime stringlengths 24 24 ⌀ | max_forks_repo_path stringlengths 3 344 | max_forks_repo_name stringlengths 5 125 | max_forks_repo_head_hexsha stringlengths 40 78 | max_forks_repo_licenses listlengths 1 11 | max_forks_count int64 1 105k ⌀ | max_forks_repo_forks_event_min_datetime stringlengths 24 24 ⌀ | max_forks_repo_forks_event_max_datetime stringlengths 24 24 ⌀ | content stringlengths 5 1.04M | avg_line_length float64 1.14 851k | max_line_length int64 1 1.03M | alphanum_fraction float64 0 1 | lid stringclasses 191 values | lid_prob float64 0.01 1 |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

6fa076f7565dcac0e9cd65c5423d38c761347418 | 2,080 | md | Markdown | mip-cehome-forumlist/README.md | wupengFEX/mip-extensions-platform | 6bd9e52b44bf62875383b71cb36411c09b86f884 | [

"MIT"

] | 35 | 2017-07-07T01:15:46.000Z | 2020-06-28T06:26:57.000Z | mip-cehome-forumlist/README.md | izzhip/mip-extensions-platform | d84c2297d6b3ced1d4cd4415ba6df03dad251609 | [

"MIT"

] | 48 | 2017-02-15T11:01:58.000Z | 2019-05-22T03:05:38.000Z | mip-cehome-forumlist/README.md | izzhip/mip-extensions-platform | d84c2297d6b3ced1d4cd4415ba6df03dad251609 | [

"MIT"

] | 86 | 2017-03-02T06:39:22.000Z | 2020-11-02T06:49:31.000Z | # mip-cehome-forumlist

mip-cehome-forumlist forum board list

Title|Content
----|----
Type|Not general-purpose
Supported layouts|responsive,fixed-height,fill,container,fixed
Required script|https://c.mipcdn.com/static/v1/mip-cehome-forumlist/mip-cehome-forumlist.js

## Examples

### Basic usage

```html

<mip-cehome-forumlist>

<div id="banner">

<div class="swiper-container swiper-container-... | 30.144928 | 172 | 0.645673 | yue_Hant | 0.121519 |

6fa0957a98f77dc4a103569e1d230cc27642cb05 | 2,267 | md | Markdown | README.md | chenfengxu714/open_source_projects | 21b79c84a23352c9fc5471edc2a4c5bde64a279c | [

"MIT"

] | 1 | 2022-02-16T03:00:27.000Z | 2022-02-16T03:00:27.000Z | README.md | chenfengxu714/open_source_projects | 21b79c84a23352c9fc5471edc2a4c5bde64a279c | [

"MIT"

] | null | null | null | README.md | chenfengxu714/open_source_projects | 21b79c84a23352c9fc5471edc2a4c5bde64a279c | [

"MIT"

] | null | null | null | # Open Source Projects from PALLAS Lab

Below are links to different open source projects from [Prof. Keutzer](https://people.eecs.berkeley.edu/~keutzer)'s lab at UC Berkeley.

# Core Optimization Algorithms

* [AdaHessian: A Second-order Optimization Algorithm](https://github.com/amirgholami/adahessian)

* [HessianFlow:... | 64.771429 | 140 | 0.786502 | yue_Hant | 0.492816 |

6fa10329f2bc8145659dff329edc21c1b070c9ce | 550 | md | Markdown | knowledge/memory_table/README.md | pudongping/swoole-learn-demo | c8ed84212211c9a5d2be7679a6847d5821328099 | [

"MIT"

] | null | null | null | knowledge/memory_table/README.md | pudongping/swoole-learn-demo | c8ed84212211c9a5d2be7679a6847d5821328099 | [

"MIT"

] | null | null | null | knowledge/memory_table/README.md | pudongping/swoole-learn-demo | c8ed84212211c9a5d2be7679a6847d5821328099 | [

"MIT"

] | null | null | null | # swoole memory table

> Sharing data between multiple processes

## Example

```shell

root@dc705af7d5da:/var/www/swoole-learn-demo/memory_table# php table.php

表格的最大行数 ====> 1024

实际占用内存的尺寸 ====> 194880

表格中的数据总数 ====> 3

array(3) {

["name"]=>

string(4) "Jack"

["age"]=>

int(25)

["height"]=>

float(1.88)

}

现在表格中的数据总数 ====> 2

string(1) "1"

=====... | 12.222222 | 72 | 0.516364 | yue_Hant | 0.256477 |

6fa29828d50d96bcf9375ff333f93f8e43cbc0bb | 32 | md | Markdown | README.md | nathanssantos/node-express-mongo-boilerplate | 64685702a1e35b000c2889abe077a46407501f68 | [

"MIT"

] | null | null | null | README.md | nathanssantos/node-express-mongo-boilerplate | 64685702a1e35b000c2889abe077a46407501f68 | [

"MIT"

] | null | null | null | README.md | nathanssantos/node-express-mongo-boilerplate | 64685702a1e35b000c2889abe077a46407501f68 | [

"MIT"

] | null | null | null | # node-express-mongo-boilerplate | 32 | 32 | 0.84375 | eng_Latn | 0.493685 |

6fa2c6d202d466bff9bb0ff14154fbdfa1e4a77b | 35 | md | Markdown | README.md | RainMark/cops | 9b2906f9ff440d9b7c31479f9c9629b5aa47cc5a | [

"Apache-2.0"

] | 1 | 2022-01-22T13:11:35.000Z | 2022-01-22T13:11:35.000Z | README.md | RainMark/cops | 9b2906f9ff440d9b7c31479f9c9629b5aa47cc5a | [

"Apache-2.0"

] | null | null | null | README.md | RainMark/cops | 9b2906f9ff440d9b7c31479f9c9629b5aa47cc5a | [

"Apache-2.0"

] | null | null | null | # cops

Code Oops Coroutine Example

| 11.666667 | 27 | 0.8 | eng_Latn | 0.928344 |

6fa2d102f8f7055f7d3eb8572f428f6725cc6cf3 | 12,415 | md | Markdown | fabric-sdk-go/16177-17631/17021.md | hyperledger-gerrit-archive/fabric-gerrit | 188c6e69ccb2e4c4d609ae749a467fa7e289b262 | [

"Apache-2.0"

] | 2 | 2021-01-08T04:06:04.000Z | 2021-02-09T08:28:54.000Z | fabric-sdk-go/16177-17631/17021.md | cendhu/fabric-gerrit | 188c6e69ccb2e4c4d609ae749a467fa7e289b262 | [

"Apache-2.0"

] | null | null | null | fabric-sdk-go/16177-17631/17021.md | cendhu/fabric-gerrit | 188c6e69ccb2e4c4d609ae749a467fa7e289b262 | [

"Apache-2.0"

] | 4 | 2019-12-07T05:54:26.000Z | 2020-06-04T02:29:43.000Z | <strong>Project</strong>: fabric-sdk-go<br><strong>Branch</strong>: master<br><strong>ID</strong>: 17021<br><strong>Subject</strong>: [FAB-7830] Refactor client: delay error propagation<br><strong>Status</strong>: MERGED<br><strong>Owner</strong>: Troy Ronda - troy@troyronda.com<br><strong>Assignee</strong>:<br><strong... | 137.944444 | 3,652 | 0.763754 | kor_Hang | 0.314546 |

6fa34341d688828d594b0bcaefa9cb673b4379d9 | 642 | md | Markdown | _posts/archive/33-20171114/2017-11-13-IBM-pitched-its-Watson-supercomputer-as-a-revolution-in-cancer-care-Its-nowhere-close.md | polgarp/alg-exp | 07822f0707d09dd12c1d76dd5e438e866b865470 | [

"MIT"

] | null | null | null | _posts/archive/33-20171114/2017-11-13-IBM-pitched-its-Watson-supercomputer-as-a-revolution-in-cancer-care-Its-nowhere-close.md | polgarp/alg-exp | 07822f0707d09dd12c1d76dd5e438e866b865470 | [

"MIT"

] | null | null | null | _posts/archive/33-20171114/2017-11-13-IBM-pitched-its-Watson-supercomputer-as-a-revolution-in-cancer-care-Its-nowhere-close.md | polgarp/alg-exp | 07822f0707d09dd12c1d76dd5e438e866b865470 | [

"MIT"

] | null | null | null | ---

layout: post

title: "IBM pitched its Watson supercomputer as a revolution in cancer care. It’s nowhere close"

posturl: https://www.statnews.com/2017/09/05/watson-ibm-cancer/

tags:

- IBM

- Watson

- Healthcare

---

{% include post_info_header.md %}

The Wizard of Oz method is one of the suggested ways of prototyping ... | 37.764706 | 342 | 0.761682 | eng_Latn | 0.998335 |

6fa3a6b0faf3a8c741e91e1c865ba4cae8d17880 | 1,005 | md | Markdown | README.md | Conduitry/degit | 782f2b39443b411d5bbed058e0ca2bcc8219231c | [

"MIT"

] | null | null | null | README.md | Conduitry/degit | 782f2b39443b411d5bbed058e0ca2bcc8219231c | [

"MIT"

] | null | null | null | README.md | Conduitry/degit | 782f2b39443b411d5bbed058e0ca2bcc8219231c | [

"MIT"

] | null | null | null | # degit — straightforward project scaffolding

**degit** makes copies of git repositories. When you run `degit some-user/some-repo`, it will find the latest commit on https://github.com/some-user/some-repo and download the associated tar file to `~/.degit/some-user/some-repo/commithash.tar.gz` if it doesn't already exi... | 25.125 | 386 | 0.727363 | eng_Latn | 0.971906 |

6fa482f57b8e5fb3c9806ea4ed3582679c2045be | 66 | md | Markdown | README.md | calport/CodeLab0-Week5-GitDemo | e5e1879709d327ac33dc0293ad20853cf5b4bd39 | [

"Unlicense"

] | null | null | null | README.md | calport/CodeLab0-Week5-GitDemo | e5e1879709d327ac33dc0293ad20853cf5b4bd39 | [

"Unlicense"

] | null | null | null | README.md | calport/CodeLab0-Week5-GitDemo | e5e1879709d327ac33dc0293ad20853cf5b4bd39 | [

"Unlicense"

] | null | null | null | # CodeLab0-Week5-GitDemo

This is a temporary project for Code Lab

| 22 | 40 | 0.80303 | eng_Latn | 0.988451 |

6fa4fc6701b05cb6ab59f477b3b225247288b170 | 315 | md | Markdown | _definitions/textStage_1997_Plachta.md | WoutDLN/lexicon-scholarly-editing | c9b11e32dd786ade453a616bf60fb4f1b6417bbd | [

"CC-BY-4.0"

] | 2 | 2021-04-26T12:28:47.000Z | 2021-12-21T13:30:58.000Z | _definitions/textStage_1997_Plachta.md | WoutDLN/lexicon-scholarly-editing | c9b11e32dd786ade453a616bf60fb4f1b6417bbd | [

"CC-BY-4.0"

] | 45 | 2020-04-04T19:51:35.000Z | 2022-03-24T16:56:19.000Z | _definitions/textStage_1997_Plachta.md | WoutDLN/lexicon-scholarly-editing | c9b11e32dd786ade453a616bf60fb4f1b6417bbd | [

"CC-BY-4.0"

] | 3 | 2020-04-19T14:17:32.000Z | 2021-04-08T12:13:06.000Z | ---

lemma: text (stage)

source: plachta_editionswissenschaft_1997

page: 139

language: German

contributor: Caroline

updated_by: Caroline

---

**Textstufe** Texteinheit innerhalb der [Textentstehung](genesis.html), die auch chronologisch von der vorhergehenden und der folgenden Texteinheit geschieden werden kann.

| 24.230769 | 171 | 0.806349 | deu_Latn | 0.968458 |

6fa620e4a4861b3f094c470153c4544b04154812 | 3,611 | md | Markdown | wdk-ddi-src/content/fltkernel/nf-fltkernel-fltretainswappedbuffermdladdress.md | xiaoyinl/windows-driver-docs-ddi | 2442baf424975cfeec65190615ed8638a01791b5 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | wdk-ddi-src/content/fltkernel/nf-fltkernel-fltretainswappedbuffermdladdress.md | xiaoyinl/windows-driver-docs-ddi | 2442baf424975cfeec65190615ed8638a01791b5 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | wdk-ddi-src/content/fltkernel/nf-fltkernel-fltretainswappedbuffermdladdress.md | xiaoyinl/windows-driver-docs-ddi | 2442baf424975cfeec65190615ed8638a01791b5 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | ---

UID: NF:fltkernel.FltRetainSwappedBufferMdlAddress

title: FltRetainSwappedBufferMdlAddress function (fltkernel.h)

description: FltRetainSwappedBufferMdlAddress prevents the Filter Manager from freeing the memory descriptor list (MDL) for a buffer that was swapped in by a minifilter driver.

old-location: ifsk\fl... | 31.675439 | 523 | 0.783439 | eng_Latn | 0.49066 |

6fa77b33c3c9761828c15339c585ebc6e0ae282d | 1,618 | md | Markdown | README.md | EuleMitKeule/geoip_monitor | ee21e1dc9bf6fc8c9149ee5ee8a25f51dc0d6ff6 | [

"Unlicense"

] | null | null | null | README.md | EuleMitKeule/geoip_monitor | ee21e1dc9bf6fc8c9149ee5ee8a25f51dc0d6ff6 | [

"Unlicense"

] | null | null | null | README.md | EuleMitKeule/geoip_monitor | ee21e1dc9bf6fc8c9149ee5ee8a25f51dc0d6ff6 | [

"Unlicense"

] | null | null | null | # geoip_monitor

Basic IP Monitoring for Ubuntu with Grafana World Map Plugin Integration

INSTRUCTIONS:

1. Put geoip_monitor.py, geoip_monitor.log, and countries.csv in the same folder

2. Run apt install geoiplookup

3. Have python3 and the following Python packages installed:

-datetime

-time

-subprocess

-mysql... | 24.515152 | 105 | 0.710136 | eng_Latn | 0.619141 |

6fa7af8ebf83559aabed96a71796cae6ef45a036 | 6,848 | md | Markdown | gpdb-doc/markdown/ref_guide/datatype-pseudo.html.md | haolinw/gpdb | 16a9465747a54f0c61bac8b676fe7611b4f030d8 | [

"PostgreSQL",

"Apache-2.0"

] | null | null | null | gpdb-doc/markdown/ref_guide/datatype-pseudo.html.md | haolinw/gpdb | 16a9465747a54f0c61bac8b676fe7611b4f030d8 | [

"PostgreSQL",

"Apache-2.0"

] | null | null | null | gpdb-doc/markdown/ref_guide/datatype-pseudo.html.md | haolinw/gpdb | 16a9465747a54f0c61bac8b676fe7611b4f030d8 | [

"PostgreSQL",

"Apache-2.0"

] | null | null | null | # Pseudo-Types

Greenplum Database supports special-purpose data type entries that are collectively called *pseudo-types*. A pseudo-type cannot be used as a column data type, but it can be used to declare a function's argument or result type. Each of the available pseudo-types is useful in situations where a function'... | 108.698413 | 946 | 0.789136 | eng_Latn | 0.998054 |

6fa7b697369feb478ad943e1f486fedf9da823e7 | 4,338 | md | Markdown | docs/vs-2015/python/getting-started-with-ptvs-start-coding-projects.md | Birgos/visualstudio-docs.de-de | 64595418a3cea245bd45cd3a39645f6e90cfacc9 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | docs/vs-2015/python/getting-started-with-ptvs-start-coding-projects.md | Birgos/visualstudio-docs.de-de | 64595418a3cea245bd45cd3a39645f6e90cfacc9 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | docs/vs-2015/python/getting-started-with-ptvs-start-coding-projects.md | Birgos/visualstudio-docs.de-de | 64595418a3cea245bd45cd3a39645f6e90cfacc9 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | ---

title: 'Getting started with PTVS: Start coding (projects) | Microsoft Docs'

ms.date: 11/15/2016

ms.prod: visual-studio-dev14

ms.technology: vs-python

ms.topic: conceptual

ms.assetid: 14b85e70-b9a8-415c-a307-8c8c316a0495

caps.latest.revision: 7

author: kraigb

ms.author: kraigb

manager: jillfra

ms.openlo... | 98.590909 | 662 | 0.818119 | deu_Latn | 0.99644 |

6fa88cdba464a07498ae49d44967d045a2aa34b7 | 2,304 | md | Markdown | frontend/Convention.md | collinahn/cafe_kiosk_solution | c6810f15c6f2681079e294f6238d24b8df108e34 | [

"MIT"

] | 1 | 2021-11-30T03:31:53.000Z | 2021-11-30T03:31:53.000Z | frontend/Convention.md | collinahn/cafe_kiosk_solution | c6810f15c6f2681079e294f6238d24b8df108e34 | [

"MIT"

] | 3 | 2021-11-26T18:14:14.000Z | 2021-12-11T05:33:24.000Z | frontend/Convention.md | collinahn/cafe_kiosk_solution | c6810f15c6f2681079e294f6238d24b8df108e34 | [

"MIT"

] | null | null | null | # React

## Defining components

### FunctionComponent.tsx

```ts

type Props = {

...

}

function FunctionComponent({ ... }: Props) {

...

}

export default FunctionComponent

```

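Define components as shown above, in the style used by the CRA template.

Avoid class components. Use a class component only when you need one of the few component lifecycle methods that function components do not support.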

React 17에선 번들 사이즈 최적화를 위해 컴포넌트를 정의... | 23.272727 | 219 | 0.688368 | kor_Hang | 1.00001 |

6fa8b8ef59db51d33287603dbee47b9b6e519475 | 754 | md | Markdown | docs/examples.md | Preta-Crowz/deno | 2d865f7f3f4608231862610b7375ddc2e9294903 | [

"MIT"

] | 2 | 2020-11-14T20:59:50.000Z | 2021-06-16T22:25:54.000Z | docs/examples.md | Preta-Crowz/deno | 2d865f7f3f4608231862610b7375ddc2e9294903 | [

"MIT"

] | 26 | 2021-11-22T04:24:30.000Z | 2022-03-13T01:30:44.000Z | docs/examples.md | Preta-Crowz/deno | 2d865f7f3f4608231862610b7375ddc2e9294903 | [

"MIT"

] | 1 | 2020-07-25T18:54:10.000Z | 2020-07-25T18:54:10.000Z | # Examples

In this chapter you can find some example programs that you can use to learn

more about the runtime.

## Basic

- [Hello World](./examples/hello_world.md)

- [Import and Export Modules](./examples/import_export.md)

- [How to Manage Dependencies](./examples/manage_dependencies.md)

- [Fetch Data](./examples/fe... | 31.416667 | 76 | 0.737401 | eng_Latn | 0.757779 |

6fa96945bd42d23923f1ae51e2651ffc7143509a | 2,282 | md | Markdown | src/lt/2019-02/09/05.md | PrJared/sabbath-school-lessons | 94a27f5bcba987a11a698e5e0d4279b81a68bc9a | [

"MIT"

] | 68 | 2016-10-30T23:17:56.000Z | 2022-03-27T11:58:16.000Z | src/lt/2019-02/09/05.md | PrJared/sabbath-school-lessons | 94a27f5bcba987a11a698e5e0d4279b81a68bc9a | [

"MIT"

] | 367 | 2016-10-21T03:50:22.000Z | 2022-03-28T23:35:25.000Z | src/lt/2019-02/09/05.md | PrJared/sabbath-school-lessons | 94a27f5bcba987a11a698e5e0d4279b81a68bc9a | [

"MIT"

] | 109 | 2016-08-02T14:32:13.000Z | 2022-03-31T10:18:41.000Z | ---

title: Loss of Freedom

date: 29/05/2019

---

Only God knows how many millions or even billions of people struggle with some kind of addiction. To this day scientists still do not understand what causes it, although in some cases they really can see the part of the brain where wants and desires reside.

... | 120.105263 | 745 | 0.80894 | lit_Latn | 1.00001 |

6fa9c329bdc637071b9905eee0cc913dfe665694 | 317 | md | Markdown | content/blog/eight.md | Andyctct/Hugo-Personal-Site | 921108f6e93b785202e527c99754d0d1cfae94e7 | [

"CC0-1.0"

] | null | null | null | content/blog/eight.md | Andyctct/Hugo-Personal-Site | 921108f6e93b785202e527c99754d0d1cfae94e7 | [

"CC0-1.0"

] | null | null | null | content/blog/eight.md | Andyctct/Hugo-Personal-Site | 921108f6e93b785202e527c99754d0d1cfae94e7 | [

"CC0-1.0"

] | null | null | null | +++

categories = []

comments = false

date = 2021-10-28T17:27:06-04:00

draft = false

showpagemeta = true

slug = ""

tags = []

title = "The subjective nature of experience"

description = "An analysis of why we may never \"put ourselves in another person's shoes\" and how this should affect the way we treat people"

+++ | 26.416667 | 142 | 0.712934 | eng_Latn | 0.994297 |

6fab6b9b3f7242dee9300fc8533d4ea17569084f | 145 | md | Markdown | docs/algo-overview.md | mpolson64/Ax-1 | cf9e12cc1253efe0fc893f2620e99337e0927a26 | [

"MIT"

] | 1,803 | 2019-05-01T16:04:15.000Z | 2022-03-31T16:01:29.000Z | docs/algo-overview.md | mpolson64/Ax-1 | cf9e12cc1253efe0fc893f2620e99337e0927a26 | [

"MIT"

] | 810 | 2019-05-01T07:17:47.000Z | 2022-03-31T23:58:46.000Z | docs/algo-overview.md | mpolson64/Ax-1 | cf9e12cc1253efe0fc893f2620e99337e0927a26 | [

"MIT"

] | 220 | 2019-05-01T05:37:22.000Z | 2022-03-29T04:30:45.000Z | ---

id: algo-overview

title: Overview

---

Ax supports:

* Bandit optimization

* Empirical Bayes with Thompson sampling

* Bayesian optimization
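
As a rough illustration of the Thompson sampling idea named in the list above, here is a minimal Python sketch of plain Beta-Bernoulli Thompson sampling. It is a toy under stated assumptions, not Ax's API and not its empirical Bayes variant; the function names `thompson_sample` and `run_bandit` and the success rates are hypothetical.

```python
import random


def thompson_sample(successes, failures, rng):
    """Sample each arm's Beta posterior and return the index of the largest draw."""
    # Beta(s + 1, f + 1) is the posterior under a uniform Beta(1, 1) prior.
    draws = [rng.betavariate(s + 1, f + 1) for s, f in zip(successes, failures)]
    return max(range(len(draws)), key=draws.__getitem__)


def run_bandit(true_rates, rounds, seed=0):
    """Simulate a Bernoulli bandit; true_rates are hypothetical arm success rates."""
    rng = random.Random(seed)
    successes = [0] * len(true_rates)
    failures = [0] * len(true_rates)
    for _ in range(rounds):
        arm = thompson_sample(successes, failures, rng)
        # Pull the chosen arm and record the simulated outcome.
        if rng.random() < true_rates[arm]:
            successes[arm] += 1
        else:
            failures[arm] += 1
    return successes, failures


if __name__ == "__main__":
    wins, losses = run_bandit([0.2, 0.8], rounds=200)
    print("pulls per arm:", [w + l for w, l in zip(wins, losses)])
```

Over many rounds the arm with the higher success rate tends to receive most of the pulls, because its posterior draws are usually larger.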

| 14.5 | 42 | 0.751724 | eng_Latn | 0.839076 |

6face77b6936e389f0e615d6877e3046e11b3f6d | 809 | md | Markdown | catalog/silent-blue/en-US_silent-blue.md | htron-dev/baka-db | cb6e907a5c53113275da271631698cd3b35c9589 | [

"MIT"

] | 3 | 2021-08-12T20:02:29.000Z | 2021-09-05T05:03:32.000Z | catalog/silent-blue/en-US_silent-blue.md | zzhenryquezz/baka-db | da8f54a87191a53a7fca54b0775b3c00f99d2531 | [

"MIT"

] | 8 | 2021-07-20T00:44:48.000Z | 2021-09-22T18:44:04.000Z | catalog/silent-blue/en-US_silent-blue.md | zzhenryquezz/baka-db | da8f54a87191a53a7fca54b0775b3c00f99d2531 | [

"MIT"

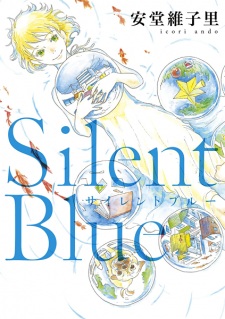

] | 2 | 2021-07-19T01:38:25.000Z | 2021-07-29T08:10:29.000Z | # Silent Blue

- **type**: manga

- **volumes**: 1

- **chapters**: 6

- **original-name**: Silent Blue

- **start-date**: 2011-10-08

- **end-date**: 2011-10-08

## Tags

- mystery

- drama

- josei

## Authors

- Andou

- Ikori (Stor... | 25.28125 | 371 | 0.682324 | eng_Latn | 0.969918 |

6fad4a9ffe0613ff8d4f24012f2237d125635c73 | 23,302 | md | Markdown | reference/docs-conceptual/whats-new/What-s-New-in-PowerShell-Core-61.md | jwmoss/PowerShell-Docs | 25ae434ae90eaa2b64f16a721d557d790972c331 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | reference/docs-conceptual/whats-new/What-s-New-in-PowerShell-Core-61.md | jwmoss/PowerShell-Docs | 25ae434ae90eaa2b64f16a721d557d790972c331 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | reference/docs-conceptual/whats-new/What-s-New-in-PowerShell-Core-61.md | jwmoss/PowerShell-Docs | 25ae434ae90eaa2b64f16a721d557d790972c331 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | ---

title: What's New in PowerShell Core 6.1

description: New features and changes released in PowerShell Core 6.1

ms.date: 09/13/2018

---

# What's New in PowerShell Core 6.1

Below is a selection of some of the major new features and changes that have been introduced

in PowerShell Core 6.1.

There's also **tons** of ... | 42.137432 | 236 | 0.700326 | eng_Latn | 0.699729 |

6fadb537f23b1b8abdec45a04fb87ab41929f8b2 | 7,780 | md | Markdown | source/develop/release-management/features/vdsm/hostconfiguration.html.md | didib/ovirt-site | c80907faf68651ee931ae7de3f478fc0cab8c2ef | [

"MIT"

] | null | null | null | source/develop/release-management/features/vdsm/hostconfiguration.html.md | didib/ovirt-site | c80907faf68651ee931ae7de3f478fc0cab8c2ef | [

"MIT"

] | null | null | null | source/develop/release-management/features/vdsm/hostconfiguration.html.md | didib/ovirt-site | c80907faf68651ee931ae7de3f478fc0cab8c2ef | [

"MIT"

] | null | null | null | ---

title: HostConfiguration

category: feature

authors: ovedo, ybronhei

wiki_category: Feature

wiki_title: Features/HostConfiguration

wiki_revision_count: 18

wiki_last_updated: 2015-01-28

---

# Host Configuration Management

## Summary

oVirt 3.6 lets admin users set the host configuration through the UI and... | 60.78125 | 529 | 0.764653 | eng_Latn | 0.995757 |

6fade9c3822b8f08f14fff88760b3817fb0cfdf7 | 134 | md | Markdown | README.md | nikolausmayer/cpp-fps | e9b044e456738e8770265dda92f695adff0b726d | [

"MIT"

] | null | null | null | README.md | nikolausmayer/cpp-fps | e9b044e456738e8770265dda92f695adff0b726d | [

"MIT"

] | null | null | null | README.md | nikolausmayer/cpp-fps | e9b044e456738e8770265dda92f695adff0b726d | [

"MIT"

] | null | null | null | # cpp-fps

Tiny C++ tool: Monitor frequencies of things

| 13.4 | 72 | 0.701493 | eng_Latn | 0.256642 |

6fae344c59cda679b0a2c0fd14af5f8350df3677 | 21,522 | md | Markdown | articles/cosmos-db/sql/sql-api-sdk-python.md | KreizIT/azure-docs.fr-fr | dfe0cb93ebc98e9ca8eb2f3030127b4970911a06 | [

"CC-BY-4.0",

"MIT"

] | 43 | 2017-08-28T07:44:17.000Z | 2022-02-20T20:53:01.000Z | articles/cosmos-db/sql/sql-api-sdk-python.md | KreizIT/azure-docs.fr-fr | dfe0cb93ebc98e9ca8eb2f3030127b4970911a06 | [

"CC-BY-4.0",

"MIT"

] | 676 | 2017-07-14T20:21:38.000Z | 2021-12-03T05:49:24.000Z | articles/cosmos-db/sql/sql-api-sdk-python.md | KreizIT/azure-docs.fr-fr | dfe0cb93ebc98e9ca8eb2f3030127b4970911a06 | [

"CC-BY-4.0",

"MIT"

] | 153 | 2017-07-11T00:08:42.000Z | 2022-01-05T05:39:03.000Z | ---

title: Azure Cosmos DB SQL Python API, SDK, and resources

description: Learn about the SQL Python API and SDK, including release dates, retirement dates, and the changes made between each version of the Azure Cosmos DB Python SDK.

author: Rodrigossz

ms.se... | 57.239362 | 417 | 0.75467 | fra_Latn | 0.961446 |

6fae63178608c0bf2c72cb0c5fa1d0062fdcd5f5 | 5,987 | md | Markdown | 201-sqlmi-new-vnet-w-point-to-site-vpn/README.md | josephkiran/azure-quickstart-templates | 07e7e1e87e5bee4bdc44d7510d1701c0574426aa | [

"MIT"

] | null | null | null | 201-sqlmi-new-vnet-w-point-to-site-vpn/README.md | josephkiran/azure-quickstart-templates | 07e7e1e87e5bee4bdc44d7510d1701c0574426aa | [

"MIT"

] | 2 | 2022-03-08T21:13:01.000Z | 2022-03-08T21:13:12.000Z | 201-sqlmi-new-vnet-w-point-to-site-vpn/README.md | josephkiran/azure-quickstart-templates | 07e7e1e87e5bee4bdc44d7510d1701c0574426aa | [

"MIT"

] | 1 | 2020-02-04T09:25:14.000Z | 2020-02-04T09:25:14.000Z | # Azure SQL Database Managed Instance (SQL MI) with Virtual network gateway configured for point-to-site connection inside the new virtual network

<IMG SRC="https://azbotstorage.blob.core.windows.net/badges/201-sqlmi-new-vnet-w-point-to-site-vpn/PublicLastTestDate.svg" />

<IMG SRC="https://azbotstorage.blob.core... | 77.753247 | 365 | 0.78303 | eng_Latn | 0.865113 |

6faee8937c4b545a056a2a1e581be114aa3ac3d2 | 1,191 | md | Markdown | _events/2020-05-16-covid5.md | Slugger70/website | a54acbebd871ee297c921d77c1331132593727b1 | [

"MIT"

] | null | null | null | _events/2020-05-16-covid5.md | Slugger70/website | a54acbebd871ee297c921d77c1331132593727b1 | [

"MIT"

] | 23 | 2019-08-20T02:32:42.000Z | 2022-03-30T04:08:40.000Z | _events/2020-05-16-covid5.md | Slugger70/website | a54acbebd871ee297c921d77c1331132593727b1 | [

"MIT"

] | 7 | 2019-08-15T22:16:24.000Z | 2021-09-02T03:48:00.000Z | ---

site: freiburg

tags: [training]

title: Galaxy-ELIXIR Webinar 5 - Behind the scenes - Global Open Infrastructures at work

starts: 2020-05-28

ends: 2020-05-29

organiser:

name: Galaxy-Elixir

---

**Galaxy-ELIXIR Webinar Series: FAIR data and Open Infrastructures to tackle the COVID-19 pandemic**

The Galaxy Community... | 49.625 | 370 | 0.780856 | eng_Latn | 0.946807 |

6faef7be71772cf147d8a0b1ca6145c9490f2c49 | 3,158 | md | Markdown | README.md | japrescott/inline-critical | e762c46f07d8a63a6d50f7335a78710561cb6f59 | [

"BSD-3-Clause"

] | null | null | null | README.md | japrescott/inline-critical | e762c46f07d8a63a6d50f7335a78710561cb6f59 | [

"BSD-3-Clause"

] | null | null | null | README.md | japrescott/inline-critical | e762c46f07d8a63a6d50f7335a78710561cb6f59 | [

"BSD-3-Clause"

] | null | null | null | # inline-critical

Inline critical-path CSS and load the existing stylesheets asynchronously.

Existing link tags will also be wrapped in ```<noscript>``` so that users with JavaScript disabled will see the site rendered normally.
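
The behaviour described above can be sketched in a few lines of Python. This is only an illustration of the idea under stated assumptions, not the library's implementation: the function name `inline_critical`, the regex-based matching, and the `rel="preload"` swap used for asynchronous loading are all choices made for this sketch.

```python
import re

# Simplified matchers; real HTML should be handled with a proper parser.
LINK_RE = re.compile(r'<link\b[^>]*\brel=["\']stylesheet["\'][^>]*>', re.IGNORECASE)
HREF_RE = re.compile(r'\bhref=["\']([^"\']+)["\']', re.IGNORECASE)


def inline_critical(html: str, critical_css: str) -> str:
    """Inline critical CSS and make existing stylesheet links load asynchronously."""

    def rewrite(match):
        tag = match.group(0)
        href = HREF_RE.search(tag)
        if href is None:
            return tag  # leave link tags without an href untouched
        # Assumed async pattern: preload the file, then switch rel on load.
        preload = (
            f'<link rel="preload" href="{href.group(1)}" as="style" '
            "onload=\"this.onload=null;this.rel='stylesheet'\">"
        )
        # Keep the original tag in <noscript> for users without JavaScript.
        return f"{preload}<noscript>{tag}</noscript>"

    html = LINK_RE.sub(rewrite, html)
    style = f"<style>{critical_css}</style>"
    # Insert the critical CSS right after the opening <head> tag.
    return re.sub(
        r"<head\b[^>]*>",
        lambda m: m.group(0) + style,
        html,
        count=1,
        flags=re.IGNORECASE,
    )


if __name__ == "__main__":
    page = '<html><head><link rel="stylesheet" href="main.css"></head><body></body></html>'
    print(inline_critical(page, "body{margin:0}"))
```

The `<noscript>` copy is what keeps the page styled when scripts are disabled, since the preload link only becomes a stylesheet through its `onload` handler.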

[![NPM version][npm-image]][npm-url] [![Build Status][travis-image]][travis-url] [![Build ... | 34.326087 | 278 | 0.748575 | eng_Latn | 0.589711 |

6fafac5d53b813b007b42e7698fa3d977b91f929 | 7,170 | md | Markdown | articles/active-directory-b2c/tutorial-create-tenant.md | Caigie/azure-docs.de-de | 788350a050087ee84cd8c5a5a2d32b8d02b62da4 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | articles/active-directory-b2c/tutorial-create-tenant.md | Caigie/azure-docs.de-de | 788350a050087ee84cd8c5a5a2d32b8d02b62da4 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | articles/active-directory-b2c/tutorial-create-tenant.md | Caigie/azure-docs.de-de | 788350a050087ee84cd8c5a5a2d32b8d02b62da4 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | ---

title: 'Tutorial: Create an Azure Active Directory B2C tenant'

description: In this tutorial, you learn how to prepare to register your applications by creating an Azure Active Directory B2C tenant in the Azure portal.

services: B2C

author: msmimart

manager: celest... | 66.388889 | 465 | 0.787448 | deu_Latn | 0.993392 |

6fb04398f9950476c2768f8ec4ae4db929bdc69e | 35 | md | Markdown | _includes/about/zh.md | lgd3608/lgd3608.github.io | 84e270a5cd989bfad1d0480008d9fd1be2598928 | [

"Apache-2.0"

] | 1 | 2019-09-07T05:29:10.000Z | 2019-09-07T05:29:10.000Z | _includes/about/zh.md | lgd3608/lgd3608.github.io | 84e270a5cd989bfad1d0480008d9fd1be2598928 | [

"Apache-2.0"

] | null | null | null | _includes/about/zh.md | lgd3608/lgd3608.github.io | 84e270a5cd989bfad1d0480008d9fd1be2598928 | [

"Apache-2.0"

] | null | null | null | > Before leaving this world, everything is a process.

Hi, I'm lgd3608.

| 5 | 16 | 0.657143 | zho_Hans | 0.202389 |

6fb0d7b974cffec071ccaa457f1ec850bd1bb7b3 | 541 | md | Markdown | content/talks/aurelia-rediscover-your-choice-in-front-end-frameworks.md | tbrunia/site | 5998c52a2c53dc0241ffd4c8a39794837da8fafb | [

"MIT"

] | null | null | null | content/talks/aurelia-rediscover-your-choice-in-front-end-frameworks.md | tbrunia/site | 5998c52a2c53dc0241ffd4c8a39794837da8fafb | [

"MIT"

] | null | null | null | content/talks/aurelia-rediscover-your-choice-in-front-end-frameworks.md | tbrunia/site | 5998c52a2c53dc0241ffd4c8a39794837da8fafb | [

"MIT"

] | null | null | null | ---

title: Aurelia - rediscover your choice in front-end frameworks

date: 'April 11, 2017'

tags: Aurelia

speaker: Jeff Shinrock

---

It's 2017 and the choice of front-end frameworks seems endless, and yet somehow

not a choice at all. React and Angular dominate every conversation surrounding

mature, robust, and accessib... | 38.642857 | 79 | 0.798521 | eng_Latn | 0.991749 |

6fb0e934b4f7025f88bf8912c507b718cad8537e | 693 | md | Markdown | README.md | Andrea94c/IoT-MBA-CDP-Luiss | ea31834c73d3fcbb10e2d5b6d32a8ff94eabf6f3 | [

"MIT"

] | null | null | null | README.md | Andrea94c/IoT-MBA-CDP-Luiss | ea31834c73d3fcbb10e2d5b6d32a8ff94eabf6f3 | [

"MIT"

] | null | null | null | README.md | Andrea94c/IoT-MBA-CDP-Luiss | ea31834c73d3fcbb10e2d5b6d32a8ff94eabf6f3 | [

"MIT"

] | null | null | null | # IoT Demo - MBA CDP Luiss 2022

Introduction to IoT - a mini IoT system with temperature sensors and an online dashboard

### What you need

2x Raspberry Pico

1x Raspberry Pi4 Model B

2x USB-A male to Micro-USB B male cable

1x USB-A male to USB-C male cable

1x Micro HDMI to HDMI cable

1x micro sd 32gb... | 18.72973 | 85 | 0.746032 | ita_Latn | 0.838333 |

6fb171c458a311cd912c8751e9cb87c092e764c2 | 973 | md | Markdown | README.md | aidoop/node-urx | 890b672f8516648feb9167ac98ee3278fc393032 | [

"MIT"

] | null | null | null | README.md | aidoop/node-urx | 890b672f8516648feb9167ac98ee3278fc393032 | [

"MIT"

] | null | null | null | README.md | aidoop/node-urx | 890b672f8516648feb9167ac98ee3278fc393032 | [

"MIT"

] | null | null | null | # node-urx

Universal Robot client module for nodejs

## Base code

python-urx: https://github.com/SintefManufacturing/python-urx

## Universal Robots

- Homepage: https://www.universal-robots.com/

- URSim(Simulator): https://www.universal-robots.com/download/software-e-series/simulator-linux/offline-simulator-e-series-u... | 19.46 | 145 | 0.688592 | yue_Hant | 0.159268 |

6fb178ce92b6b1c8b779404e3dd34ebc6f52aa35 | 19,518 | md | Markdown | articles/azure-cache-for-redis/cache-private-link.md | beatrizmayumi/azure-docs.pt-br | ca6432fe5d3f7ccbbeae22b4ea05e1850c6c7814 | [

"CC-BY-4.0",

"MIT"

] | 39 | 2017-08-28T07:46:06.000Z | 2022-01-26T12:48:02.000Z | articles/azure-cache-for-redis/cache-private-link.md | beatrizmayumi/azure-docs.pt-br | ca6432fe5d3f7ccbbeae22b4ea05e1850c6c7814 | [

"CC-BY-4.0",

"MIT"

] | 562 | 2017-06-27T13:50:17.000Z | 2021-05-17T23:42:07.000Z | articles/azure-cache-for-redis/cache-private-link.md | beatrizmayumi/azure-docs.pt-br | ca6432fe5d3f7ccbbeae22b4ea05e1850c6c7814 | [

"CC-BY-4.0",

"MIT"

] | 113 | 2017-07-11T19:54:32.000Z | 2022-01-26T21:20:25.000Z | ---

title: Azure Cache for Redis with Azure Private Link (preview)

description: An Azure private endpoint is a network interface that connects you privately and securely to Azure Cache for Redis powered by Azure Private Link. In this article, you learn how to create an Azure cache, ... | 79.341463 | 656 | 0.743416 | por_Latn | 0.999646 |

6fb18d7db61365972e420feb6635c7016c79689c | 2,000 | md | Markdown | docs/pages/about.md | malvikarao/website | 556f14105964c1956f167a2fa4df9d5fabf90970 | [

"MIT"

] | null | null | null | docs/pages/about.md | malvikarao/website | 556f14105964c1956f167a2fa4df9d5fabf90970 | [

"MIT"

] | null | null | null | docs/pages/about.md | malvikarao/website | 556f14105964c1956f167a2fa4df9d5fabf90970 | [

"MIT"

] | null | null | null | ---

hdrnav: true

layout: page

title: About

permalink: /about/

navigation_weight: 0

---

### Our Value

Bugmark is a futures market for software issues.

Bugmark pioneers an approach using a two-sided

market, allowing both funders and workers to make

offers that are resolved auction style. We

believe the market signals ... | 28.985507 | 144 | 0.7905 | eng_Latn | 0.993198 |

6fb1bc963d0b24f7d9c310958de02e53c26ca4d4 | 1,265 | md | Markdown | api/Word.Index.md | kibitzerCZ/VBA-Docs | 046664c5f09c17707e8ee92fd1505ddd0f6c9a91 | [

"CC-BY-4.0",

"MIT"

] | 2 | 2020-03-09T13:24:12.000Z | 2020-03-09T16:19:11.000Z | api/Word.Index.md | kibitzerCZ/VBA-Docs | 046664c5f09c17707e8ee92fd1505ddd0f6c9a91 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | api/Word.Index.md | kibitzerCZ/VBA-Docs | 046664c5f09c17707e8ee92fd1505ddd0f6c9a91 | [

"CC-BY-4.0",

"MIT"

] | 1 | 2019-11-28T06:51:45.000Z | 2019-11-28T06:51:45.000Z | ---

title: Index object (Word)

keywords: vbawd10.chm2429

f1_keywords:

- vbawd10.chm2429

ms.prod: word

api_name:

- Word.Index

ms.assetid: 6a2aab98-485b-01c3-8d9b-9e108b455e22

ms.date: 06/08/2017

localization_priority: Normal

---

# Index object (Word)

Represents a single index. The **Index** object is a member of the... | 26.354167 | 247 | 0.750988 | eng_Latn | 0.838224 |

6fb1d1ddf1154de08a8f754eb823388a965bb206 | 1,917 | md | Markdown | README.md | psforever/gcapy | 1d88102ced17d2649537849bac03b7abe0a78f2a | [

"MIT"

] | 3 | 2016-02-29T06:36:26.000Z | 2020-05-27T01:02:17.000Z | README.md | psforever/gcapy | 1d88102ced17d2649537849bac03b7abe0a78f2a | [

"MIT"

] | 4 | 2016-06-19T16:10:15.000Z | 2020-05-27T08:20:14.000Z | README.md | psforever/gcapy | 1d88102ced17d2649537849bac03b7abe0a78f2a | [

"MIT"

] | 5 | 2016-02-29T13:17:31.000Z | 2020-05-27T01:06:34.000Z | # GCAPy

A Python library and script to parse GCAP files. GCAP stands for Game CAPture, a file format created by the PSForever project to store game records captured from PlanetSide.

The library currently only reads, not writes, GCAP files.

GCAPy supports three actions: metadata display, record extraction, and... | 41.673913 | 267 | 0.763693 | eng_Latn | 0.986463 |

6fb2d394c6b59637f03feeafc36eda6dc5603b25 | 148 | md | Markdown | README.md | lucasbueno/eventif-android | 04e8fb05c85d046409092098947d94a112d03dc4 | [

"MIT"

] | null | null | null | README.md | lucasbueno/eventif-android | 04e8fb05c85d046409092098947d94a112d03dc4 | [

"MIT"

] | null | null | null | README.md | lucasbueno/eventif-android | 04e8fb05c85d046409092098947d94a112d03dc4 | [

"MIT"

] | null | null | null | # eventif-android

Android app for browsing event schedules (talks, courses, etc.).

Built in Android Studio using Kotlin.

| 29.6 | 85 | 0.804054 | por_Latn | 0.901269 |

6fb3341a3c1f3120a8f9ee6530abf18ae0dd14d6 | 512 | md | Markdown | docs/error-messages/compiler-errors-2/compiler-error-c2873.md | jmittert/cpp-docs | cea5a8ee2b4764b2bac4afe5d386362ffd64e55a | [

"CC-BY-4.0",

"MIT"

] | 2 | 2020-06-30T03:02:58.000Z | 2021-07-27T18:21:28.000Z | docs/error-messages/compiler-errors-2/compiler-error-c2873.md | jmittert/cpp-docs | cea5a8ee2b4764b2bac4afe5d386362ffd64e55a | [

"CC-BY-4.0",

"MIT"

] | 1 | 2019-10-16T08:33:11.000Z | 2019-10-16T08:33:11.000Z | docs/error-messages/compiler-errors-2/compiler-error-c2873.md | jmittert/cpp-docs | cea5a8ee2b4764b2bac4afe5d386362ffd64e55a | [

"CC-BY-4.0",

"MIT"

] | 1 | 2020-10-01T01:35:05.000Z | 2020-10-01T01:35:05.000Z | ---

title: "Compiler Error C2873"

ms.date: "11/04/2016"

f1_keywords: ["C2873"]

helpviewer_keywords: ["C2873"]

ms.assetid: 7a10036b-400e-4364-bd2f-dcd7370c5e28

---

# Compiler Error C2873

'symbol' : symbol cannot be used in a using-declaration

A `using` directive is missing a [namespace](../../cpp/namespaces-cpp.md) ke... | 42.666667 | 268 | 0.740234 | eng_Latn | 0.692773 |

6fb511bba74f4a12d0607e71b87c93a4ea56e314 | 359 | md | Markdown | split1/_posts/2014-08-05-Om devasura pathaye namaha 11 times.md | gbuk21/HinduGodsEnglish | b883907ef10e8beaa6030070fc2b8181152def47 | [

"MIT"

] | null | null | null | split1/_posts/2014-08-05-Om devasura pathaye namaha 11 times.md | gbuk21/HinduGodsEnglish | b883907ef10e8beaa6030070fc2b8181152def47 | [

"MIT"

] | null | null | null | split1/_posts/2014-08-05-Om devasura pathaye namaha 11 times.md | gbuk21/HinduGodsEnglish | b883907ef10e8beaa6030070fc2b8181152def47 | [

"MIT"

] | null | null | null | ---

layout: post

last_modified_at: 2021-03-30

title: Om Devasura pathaye namaha 11 times

youtubeId: DONj0lhCaS0

---

Om Devasura pathaye nama

- Who is the lord of Asuras and Devas

{% include youtubePlayer.html id=page.youtubeId %}

[Next]({{ site.baseurl }}{% link split1/_posts/2014-0... | 12.821429 | 90 | 0.665738 | eng_Latn | 0.518717 |

6fb5c7f855f0b28c82eceebe3fdeea6026c58b49 | 184 | md | Markdown | src/main/resources/docs/description/Generic_Debug_CSSLint.md | ruiteix/codacy-codesniffer | 4633bf6cf096bbba341a63f86e32c7b7637b8b4d | [

"Apache-2.0"

] | 6 | 2016-11-02T18:31:40.000Z | 2021-09-12T10:28:08.000Z | src/main/resources/docs/description/Generic_Debug_CSSLint.md | ruiteix/codacy-codesniffer | 4633bf6cf096bbba341a63f86e32c7b7637b8b4d | [

"Apache-2.0"

] | 57 | 2017-06-13T09:48:12.000Z | 2022-03-29T21:19:06.000Z | src/main/resources/docs/description/Generic_Debug_CSSLint.md | ruiteix/codacy-codesniffer | 4633bf6cf096bbba341a63f86e32c7b7637b8b4d | [

"Apache-2.0"

] | 18 | 2016-09-30T16:27:21.000Z | 2020-06-25T08:09:56.000Z | All css files should pass the basic csslint tests.

Valid: Valid CSS Syntax is used.

```

.foo { width: 100%; }

```

Invalid: The CSS has a typo in it.

```

.foo { width: 100 %; }

```

| 15.333333 | 50 | 0.61413 | eng_Latn | 0.895011 |

6fb5e21ebee026f891a5a119f2fc212defc8e405 | 3,645 | md | Markdown | README.md | tmandry/async-fundamentals-initiative | d3e9e0ccf0281a76603b779e9f9ef5a8f2136063 | [

"Apache-2.0",

"MIT"

] | 67 | 2021-09-02T16:16:42.000Z | 2022-03-13T05:07:51.000Z | README.md | tmandry/async-fundamentals-initiative | d3e9e0ccf0281a76603b779e9f9ef5a8f2136063 | [

"Apache-2.0",

"MIT"

] | 3 | 2021-09-09T01:10:37.000Z | 2022-02-01T17:44:06.000Z | README.md | tmandry/async-fundamentals-initiative | d3e9e0ccf0281a76603b779e9f9ef5a8f2136063 | [

"Apache-2.0",

"MIT"

] | 6 | 2021-09-09T01:10:23.000Z | 2022-03-16T00:08:18.000Z | # async fundamentals initiative

## What is this?

This page tracks the work of the async fundamentals [initiative], part of the wg-async-foundations [vision process]! To learn more about what we are trying to do, and to find out ... | 50.625 | 237 | 0.687243 | eng_Latn | 0.876965 |

6fb657c143b1257c8f67c466b77acd774d480463 | 74 | md | Markdown | README.md | liaoerdong/springboot-samples | 0703966e05a1c61dc335f96f3da56f1757a5af22 | [

"Apache-2.0"

] | null | null | null | README.md | liaoerdong/springboot-samples | 0703966e05a1c61dc335f96f3da56f1757a5af22 | [

"Apache-2.0"

] | null | null | null | README.md | liaoerdong/springboot-samples | 0703966e05a1c61dc335f96f3da56f1757a5af22 | [

"Apache-2.0"

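] | null | null | null | Author: 廖尔东

Project: Spring Boot integrated with the full Spring Cloud suite, Dubbo, permissions, Seata, and more

This project is intended only for personal study and for internal study within the author's organization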

| 14.8 | 46 | 0.878378 | afr_Latn | 0.274495 |

6fb659e5cb981bed16feed00b6d36ebb10041e5f | 988 | markdown | Markdown | source/_posts/2015-07-01-dump-pe-with-windbg-script.markdown | 0cch/0CChBlog | 232ec67da5bf2207c0d89e445e5d310a946659dc | [

"MIT"

] | 4 | 2015-10-21T09:15:35.000Z | 2021-12-04T08:44:58.000Z | source/_posts/2015-07-01-dump-pe-with-windbg-script.markdown | 0cch/0CChBlog | 232ec67da5bf2207c0d89e445e5d310a946659dc | [

"MIT"

] | 9 | 2020-07-20T02:02:49.000Z | 2021-11-30T02:30:48.000Z | source/_posts/2015-07-01-dump-pe-with-windbg-script.markdown | 0cch/0CChBlog | 232ec67da5bf2207c0d89e445e5d310a946659dc | [

"MIT"

] | null | null | null | ---

author: admin

comments: true

date: 2015-07-01 00:11:15+00:00

layout: post

slug: 'dump-pe-with-windbg-script'

title: Dumping a PE file from memory with a WinDbg script

categories:

- Tips

---

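Recently I came across some malware that downloads a PE file from the network and then relocates and initializes it directly in memory. I wrote this WinDbg script so that such files can be dumped.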

{% codeblock lang:windbg %}

.foreach( place { !address /f:VAR,MEM_PRIVATE,MEM_... | 22.976744 | 88 | 0.597166 | yue_Hant | 0.398655 |

6fb6f0cca3c2241a999a5963f590f8361e9065bc | 1,510 | md | Markdown | src/php/gustav/doc/Dev-API.md | futape/gustav.futape.de | ea9f264a4efd5b5a2a2ad02b1797e2f0037f7638 | [

"CC0-1.0"

] | 1 | 2019-05-13T07:28:47.000Z | 2019-05-13T07:28:47.000Z | src/php/gustav/doc/Dev-API.md | futape/gustav.futape.de | ea9f264a4efd5b5a2a2ad02b1797e2f0037f7638 | [

"CC0-1.0"

] | null | null | null | src/php/gustav/doc/Dev-API.md | futape/gustav.futape.de | ea9f264a4efd5b5a2a2ad02b1797e2f0037f7638 | [

"CC0-1.0"

] | null | null | null | The Dev API contains class members that are not defined as `private`, or those that should be but aren't due to technical restrictions, for example. Moreover, this section doesn't describe the publicly available members; rather, it describes the *real* members used to implement the public functionality. For example, the De... | 71.904762 | 447 | 0.771523 | eng_Latn | 0.861433 |

6fb823e5a0d704363d7f89ce9ef51c75c2e0497f | 1,509 | md | Markdown | docs/media/diagrams/README.md | donaldrich80/function-as-a-container | 098c53c3300d5c323e70762234b112f6cdde4f08 | [

"MIT"

] | 2 | 2021-02-07T05:33:02.000Z | 2021-04-01T03:01:05.000Z | docs/media/diagrams/README.md | donaldrich80/function-as-a-container | 098c53c3300d5c323e70762234b112f6cdde4f08 | [

"MIT"

] | null | null | null | docs/media/diagrams/README.md | donaldrich80/function-as-a-container | 098c53c3300d5c323e70762234b112f6cdde4f08 | [

"MIT"

] | 2 | 2020-11-04T05:55:13.000Z | 2021-02-07T05:33:05.000Z | ---

path: tree/master

source: media/diagrams/Dockerfile

---

# diagrams

[](https://hub.docker.com/r/donaldrich/function/diagrams)

## Documentatio... | 20.391892 | 230 | 0.66004 | yue_Hant | 0.574189 |

6fb91738fb1f02da8186aeaca0e8451f22674d0a | 4,938 | md | Markdown | docs/420-ESP8266-diverse-projects.md | NelisW/myOpenHab | f140112fcbc5798d7e2d19200d96bcd91318a98d | [

"CC0-1.0"

] | 31 | 2015-08-15T16:29:03.000Z | 2020-05-26T14:16:41.000Z | docs/420-ESP8266-diverse-projects.md | NelisW/myOpenHab | f140112fcbc5798d7e2d19200d96bcd91318a98d | [

"CC0-1.0"

] | 3 | 2016-08-13T19:20:48.000Z | 2017-07-14T09:04:56.000Z | docs/420-ESP8266-diverse-projects.md | NelisW/myOpenHab | f140112fcbc5798d7e2d19200d96bcd91318a98d | [

"CC0-1.0"

] | 8 | 2016-02-29T22:59:06.000Z | 2018-06-06T00:51:22.000Z | # ESP8266 Diverse Projects

## Web-based ESP environments

A somewhat confusing [post](http://www.instructables.com/id/ESP8266-based-web-configurable-wifi-general-purpos-1/)

The [ESPEasy project](http://www.esp8266.nu/index.php/Main_Page)

## Miscellaneous projects

<http://horaciobouzas.com/> has a number of project ... | 37.984615 | 180 | 0.782503 | eng_Latn | 0.740552 |

6fb939d40a25bbc4cccdf9594f862af230953acf | 16,268 | md | Markdown | wdk-ddi-src/content/wdfio/nf-wdfio-wdfioqueuefindrequest.md | tianye606/windows-driver-docs-ddi | 23fec97f3ed3a0c99b117543982d34ee592501e7 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | wdk-ddi-src/content/wdfio/nf-wdfio-wdfioqueuefindrequest.md | tianye606/windows-driver-docs-ddi | 23fec97f3ed3a0c99b117543982d34ee592501e7 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | wdk-ddi-src/content/wdfio/nf-wdfio-wdfioqueuefindrequest.md | tianye606/windows-driver-docs-ddi | 23fec97f3ed3a0c99b117543982d34ee592501e7 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | ---

UID: NF:wdfio.WdfIoQueueFindRequest

title: WdfIoQueueFindRequest function (wdfio.h)

description: The WdfIoQueueFindRequest method locates the next request in an I/O queue, or the next request that matches specified criteria, but does not grant ownership of the request to the driver.

old-location: wdf\wdfioqueue... | 35.136069 | 557 | 0.629026 | eng_Latn | 0.7019 |

6fb9ae2f3f741638c2adda6dd777c48f5e136fff | 84 | md | Markdown | README.md | smorey2/KFrame | 972eb29e3469c328db77f1819b03fcadcceeb096 | [

"MIT"

] | null | null | null | README.md | smorey2/KFrame | 972eb29e3469c328db77f1819b03fcadcceeb096 | [

"MIT"

] | null | null | null | README.md | smorey2/KFrame | 972eb29e3469c328db77f1819b03fcadcceeb096 | [

"MIT"

] | null | null | null | KFrame -

=====================================================

##DESCRIPTION

XXX

| 12 | 53 | 0.238095 | oci_Latn | 0.998641 |

6fbb4c8a1964b457a9ec8fea8ac5fa83c486b7ae | 5,098 | md | Markdown | README.md | ChicoState/PantryDjango | e075f699f29a3d02ee2c9c2b9a80cae6fce789f4 | [

"MIT"

] | null | null | null | README.md | ChicoState/PantryDjango | e075f699f29a3d02ee2c9c2b9a80cae6fce789f4 | [

"MIT"

] | 10 | 2020-05-13T00:56:16.000Z | 2022-03-12T00:52:29.000Z | README.md | ChicoState/PantryDjango | e075f699f29a3d02ee2c9c2b9a80cae6fce789f4 | [

"MIT"

] | 5 | 2020-04-27T04:17:25.000Z | 2020-08-18T05:01:29.000Z | # Food Pantry [](https://travis-ci.org/ChicoState/PantryDjango)

Welcome to the Food Pantry open source project!

Chico State, like many other universities, has a food pantry for students who do not have access to enough to eat. The pantry ... | 45.115044 | 402 | 0.792468 | eng_Latn | 0.987593 |

6fbb57439239a7225f95afadfbf8d138c4313616 | 463 | md | Markdown | docs/devops/_sidebar.md | Vunovati/titus | 775e6836d5386a60c18d45ee225cbd07c3901892 | [

"Apache-2.0"

] | null | null | null | docs/devops/_sidebar.md | Vunovati/titus | 775e6836d5386a60c18d45ee225cbd07c3901892 | [

"Apache-2.0"

] | null | null | null | docs/devops/_sidebar.md | Vunovati/titus | 775e6836d5386a60c18d45ee225cbd07c3901892 | [

"Apache-2.0"

] | null | null | null | - [Home](/)

- [Quick Start Guide](/quick-start/)

- [Developers](/developers/)

- [DevOps](/devops/?id=devops)

- [Overview](/devops/?id=overview)

- [Deploy Titus](/devops/?id=deploy-titus)

- [Deploy on GCP](/devops/gcp/)

- [Deploy on AWS with Mira](https://github.com/nearform/titus/tree/master/pac... | 42.090909 | 112 | 0.652268 | yue_Hant | 0.22652 |

6fbc3619fd2c49c04f0c61551e3af782b26ee735 | 205 | md | Markdown | content/influxdb/cloud/reference/glossary.md | clwluvw/docs-v2 | dc0c00fb59edd1580198242cb15109a01dfe40cc | [

"MIT"

] | 42 | 2019-10-14T18:38:17.000Z | 2022-03-29T15:34:49.000Z | content/influxdb/cloud/reference/glossary.md | clwluvw/docs-v2 | dc0c00fb59edd1580198242cb15109a01dfe40cc | [

"MIT"

] | 1,870 | 2019-10-14T17:03:50.000Z | 2022-03-30T22:23:24.000Z | content/influxdb/cloud/reference/glossary.md | clwluvw/docs-v2 | dc0c00fb59edd1580198242cb15109a01dfe40cc | [

"MIT"

] | 181 | 2019-11-08T19:40:05.000Z | 2022-03-25T10:01:02.000Z | ---

title: Glossary

description: >

Terms related to InfluxData products and platforms.

weight: 8

menu:

influxdb_cloud_ref:

name: Glossary

influxdb/cloud/tags: [glossary]

---

{{< duplicate-oss >}}

| 15.769231 | 53 | 0.712195 | eng_Latn | 0.75029 |

6fbc4c03aefc1ebf8cffb3668600383a15f600bc | 5,550 | md | Markdown | docs/sparkr-migration-guide.md | etspaceman/spark | 155a67d00cb2f12aad179f6df2d992feca8e003e | [

"Apache-2.0"

] | 4 | 2015-04-27T13:21:39.000Z | 2016-09-28T06:03:00.000Z | docs/sparkr-migration-guide.md | etspaceman/spark | 155a67d00cb2f12aad179f6df2d992feca8e003e | [

"Apache-2.0"

] | 33 | 2015-03-11T05:06:27.000Z | 2016-05-31T09:41:35.000Z | docs/sparkr-migration-guide.md | etspaceman/spark | 155a67d00cb2f12aad179f6df2d992feca8e003e | [

"Apache-2.0"

] | 3 | 2015-09-07T09:02:02.000Z | 2017-01-25T22:52:08.000Z | ---

layout: global

title: "Migration Guide: SparkR (R on Spark)"

displayTitle: "Migration Guide: SparkR (R on Spark)"

license: |

Licensed to the Apache Software Foundation (ASF) under one or more

contributor license agreements. See the NOTICE file distributed with

this work for additional information regarding c... | 71.153846 | 478 | 0.757477 | eng_Latn | 0.994139 |

6fbcc96ef36ad2469ea30348e32a24d781f41a40 | 7,774 | md | Markdown | docs/howto/how_to_add_a_new_fusion_system.md | jzjonah/apollo | bc534789dc0548bf2d27f8d72fe255d5c5e4f951 | [

"Apache-2.0"

] | 22,688 | 2017-07-04T23:17:19.000Z | 2022-03-31T18:56:48.000Z | docs/howto/how_to_add_a_new_fusion_system.md | WJY-Mark/apollo | 463fb82f9e979d02dcb25044e60931293ab2dba0 | [

"Apache-2.0"

] | 4,804 | 2017-07-04T22:30:12.000Z | 2022-03-31T12:58:21.000Z | docs/howto/how_to_add_a_new_fusion_system.md | WJY-Mark/apollo | 463fb82f9e979d02dcb25044e60931293ab2dba0 | [

"Apache-2.0"

] | 9,985 | 2017-07-04T22:01:17.000Z | 2022-03-31T14:18:16.000Z | # How to add a new fusion system

The detailed processing flow of fusion is shown below:

The fusion system introduced in this document is located in the Fusion Component listed below. The current architecture of the Fusion Component is shown:

As we can se... | 33.947598 | 400 | 0.738616 | eng_Latn | 0.743614 |

6fbce1dbc4e5771349467c68eec3bc94ee6760ba | 86 | md | Markdown | README.md | Tita-Navarro/Learning-Angular | 695e3b03753b9edf4fd2362f75a8aca89eb97abf | [

"MIT"

] | null | null | null | README.md | Tita-Navarro/Learning-Angular | 695e3b03753b9edf4fd2362f75a8aca89eb97abf | [

"MIT"

] | null | null | null | README.md | Tita-Navarro/Learning-Angular | 695e3b03753b9edf4fd2362f75a8aca89eb97abf | [

"MIT"

] | null | null | null | # Learning-Angular

My first app with Angular, exercises, and what is needed to create it

| 28.666667 | 66 | 0.813953 | spa_Latn | 0.997346 |

6fbd179369442d48607939216c541d088a7cd778 | 220 | md | Markdown | README.md | papaiatis/wpf-stopwatch-control | 075cbdab973778f715d848b0a0c32692956673ea | [

"MIT"

] | 2 | 2020-05-28T12:21:40.000Z | 2022-03-26T08:30:36.000Z | README.md | papaiatis/wpf-stopwatch-control | 075cbdab973778f715d848b0a0c32692956673ea | [

"MIT"

] | null | null | null | README.md | papaiatis/wpf-stopwatch-control | 075cbdab973778f715d848b0a0c32692956673ea | [

"MIT"

] | null | null | null | # wpf-stopwatch-control

WPF Stopwatch Control

This is a simple WPF stopwatch control. It wraps a single Label control where the time is shown.

You can control the stopwatch with the Start(), Stop() and Pause() methods.

| 36.666667 | 96 | 0.777273 | eng_Latn | 0.998792 |

6fbe3f2f4ba3376da2170b86ac8d96926b3a5155 | 4,010 | md | Markdown | windows-driver-docs-pr/image/properties-for-wia-camera-minidrivers.md | i35010u/windows-driver-docs.zh-cn | e97bfd9ab066a578d9178313f802653570e21e7d | [

"CC-BY-4.0",

"MIT"

] | 1 | 2021-02-04T01:49:58.000Z | 2021-02-04T01:49:58.000Z | windows-driver-docs-pr/image/properties-for-wia-camera-minidrivers.md | i35010u/windows-driver-docs.zh-cn | e97bfd9ab066a578d9178313f802653570e21e7d | [

"CC-BY-4.0",

"MIT"

] | null | null | null | windows-driver-docs-pr/image/properties-for-wia-camera-minidrivers.md | i35010u/windows-driver-docs.zh-cn | e97bfd9ab066a578d9178313f802653570e21e7d | [

"CC-BY-4.0",

"MIT"

] | null | null | null | ---

title: Properties for WIA Camera Minidrivers

description: Properties for WIA Camera Minidrivers

ms.date: 04/20/2017

ms.localizationpriority: medium

ms.openlocfilehash: 9defc4784feb4aa727598f23429245a7bde9a32b

ms.sourcegitcommit: 418e6617e2a695c9cb4b37b5b60e264760858acd

ms.translationtype: MT

ms.contentlocale: zh-CN

ms.lasthandoff: 12/07/2020

ms.locfileid: "9... | 28.041958 | 141 | 0.623691 | yue_Hant | 0.694958 |

6fbec5e2e615f3519dc92a32185f3b770d3b2da1 | 5,839 | md | Markdown | docs/big-data-cluster/hdfs-tiering.md | gmilani/sql-docs.pt-br | 02f07ca69eae8435cefd74616a8b00f09c4d4f99 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | docs/big-data-cluster/hdfs-tiering.md | gmilani/sql-docs.pt-br | 02f07ca69eae8435cefd74616a8b00f09c4d4f99 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | docs/big-data-cluster/hdfs-tiering.md | gmilani/sql-docs.pt-br | 02f07ca69eae8435cefd74616a8b00f09c4d4f99 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | ---

title: Configure HDFS tiering

titleSuffix: SQL Server big data clusters

description: This article explains how to configure HDFS tiering to mount an external Azure Data Lake Storage file system into HDFS in a [!INCLUDE[big-data-clusters-2019](../includes/ssbigdataclusters-ver15.md)].

author: nelgs... | 72.9875 | 814 | 0.788149 | por_Latn | 0.999786 |

6fbee27b57f15374ed613b446599c144569f5915 | 4,711 | md | Markdown | Office365-ServiceDescriptions/onedrive-for-business-service-description.md | MicrosoftDocs/OfficeDocs-O365ServiceDescriptions-pr.ru-RU | b3b02dab69d8ba828f2aa831c8db8a9b2003d421 | [

"CC-BY-4.0",

"MIT"

] | 1 | 2020-05-19T19:22:27.000Z | 2020-05-19T19:22:27.000Z | Office365-ServiceDescriptions/onedrive-for-business-service-description.md | MicrosoftDocs/OfficeDocs-O365ServiceDescriptions-pr.ru-RU | b3b02dab69d8ba828f2aa831c8db8a9b2003d421 | [

"CC-BY-4.0",

"MIT"

] | 2 | 2022-01-26T05:02:47.000Z | 2022-01-26T05:02:47.000Z | Office365-ServiceDescriptions/onedrive-for-business-service-description.md | MicrosoftDocs/OfficeDocs-O365ServiceDescriptions-pr.ru-RU | b3b02dab69d8ba828f2aa831c8db8a9b2003d421 | [

"CC-BY-4.0",

"MIT"

] | 1 | 2019-10-11T20:04:07.000Z | 2019-10-11T20:04:07.000Z | ---

title: OneDrive service description

ms.author: office365servicedesc

author: pamelaar

manager: gailw

audience: ITPro

ms.topic: reference

f1_keywords:

- onedrive-for-business-service-description

ms.service: o365-administration

localization_priority: Critical

ms.custom:

- Adm_ServiceDesc

- Adm_ServiceDesc_top

ms.assetid: ... | 69.279412 | 500 | 0.782424 | rus_Cyrl | 0.804424 |

6fbf2a9144698c8f109c4d94109eca2d674ca4f8 | 74 | md | Markdown | vue/get-double/README.md | fossabot/100DaysOfCode-1 | 0d5c4e832e795ed63f0e846f8662aea2fcaeb774 | [

"MIT"

] | 13 | 2019-05-21T20:36:28.000Z | 2021-11-09T16:21:22.000Z | vue/get-double/README.md | GDSC-Algoma-University/21DaysOfWebdev | e33b30192e0bd9c76659225f122aa030008b076c | [

"MIT"

] | 3 | 2019-09-02T17:39:51.000Z | 2022-03-15T17:33:56.000Z | vue/get-double/README.md | GDSC-Algoma-University/21DaysOfWebdev | e33b30192e0bd9c76659225f122aa030008b076c | [

"MIT"

] | 6 | 2020-09-20T10:42:08.000Z | 2022-01-30T17:53:06.000Z | ## Creating *get-double* project

### Understanding `computed properties`

| 18.5 | 39 | 0.743243 | eng_Latn | 0.939772 |

6fbfb35404c135cf73cc4351aab9595bc31bfcf5 | 4,704 | md | Markdown | themes/gatsby-theme-flex/README.md | mjaydenkim/data-analysis | dc0aeaed3d005cba69bc6be488a3ce53ebe6d35f | [

"MIT"

] | 39 | 2019-11-19T10:57:24.000Z | 2019-11-28T02:34:21.000Z | themes/gatsby-theme-flex/README.md | mjaydenkim/data-analysis | dc0aeaed3d005cba69bc6be488a3ce53ebe6d35f | [

"MIT"

] | 7 | 2021-09-19T00:22:55.000Z | 2022-03-15T02:02:53.000Z | themes/gatsby-theme-flex/README.md | mjaydenkim/data-analysis | dc0aeaed3d005cba69bc6be488a3ce53ebe6d35f | [

"MIT"

] | 3 | 2019-11-21T18:36:23.000Z | 2019-11-24T02:20:26.000Z | <div align="center">

<h1>Page Builder Blocks for Gatsby</h1>

</div>

<p align="center">

Combine the power of <strong>Gatsby</strong>, <strong>MDX</strong> and <strong>Theme UI</strong> to build blazing fast websites.

</p>

<p align="center">

<a href="https://github.com/arshad/gatsby-themes/blob/master/LICENSE"><img s... | 26.27933 | 216 | 0.698767 | eng_Latn | 0.856917 |

6fbfc4a4500b851f9d1a2b388a7962ac8ca5bcb2 | 2,943 | md | Markdown | _success-stories/robbie_ouzts.md | JoshDoody/fsn | f06550c7213e92297ba7c6c68fea624e51278dff | [

"MIT"

] | null | null | null | _success-stories/robbie_ouzts.md | JoshDoody/fsn | f06550c7213e92297ba7c6c68fea624e51278dff | [

"MIT"

] | 11 | 2021-08-31T14:25:06.000Z | 2021-11-10T16:44:56.000Z | _success-stories/robbie_ouzts.md | JoshDoody/fsn | f06550c7213e92297ba7c6c68fea624e51278dff | [

"MIT"

] | 2 | 2021-08-13T20:05:05.000Z | 2021-08-13T22:05:00.000Z | ---

name: Robbie Ouzts

job_title: Georgia Institute of Technology Graduate Career & Co-op Advisor

company:

industry:

headshot: robbie_ouzts.jpg

short_version: |

**Josh's talk is one of the best I have heard on salary negotiation, and our students gave him rave reviews! He is a dynamic speaker, with clear idea... | 113.192308 | 547 | 0.799524 | eng_Latn | 0.999912 |

6fbfe5e6c274d283411779543365db1f83c2a96b | 8,735 | md | Markdown | articles/supply-chain/global-inventory-accounting/get-started-with-gia.md | MicrosoftDocs/Dynamics-365-Operations.pt-br | ad7f7b44fef487c4fcf846ab4b105d945f3b6554 | [

"CC-BY-4.0",

"MIT"

] | 4 | 2020-04-02T09:49:16.000Z | 2021-06-01T17:08:54.000Z | articles/supply-chain/global-inventory-accounting/get-started-with-gia.md | MicrosoftDocs/Dynamics-365-Operations.pt-br | ad7f7b44fef487c4fcf846ab4b105d945f3b6554 | [

"CC-BY-4.0",

"MIT"

] | 7 | 2017-12-12T13:23:02.000Z | 2021-07-15T11:10:45.000Z | articles/supply-chain/global-inventory-accounting/get-started-with-gia.md | MicrosoftDocs/Dynamics-365-Operations.pt-br | ad7f7b44fef487c4fcf846ab4b105d945f3b6554 | [

"CC-BY-4.0",

"MIT"

] | 1 | 2021-10-13T09:28:14.000Z | 2021-10-13T09:28:14.000Z | ---

title: Get started with Global Inventory Accounting

description: This topic describes how to get started with Global Inventory Accounting.

author: AndersGirke

ms.date: 06/18/2021

ms.topic: article

audience: Application User

ms.reviewer: kamaybac

ms.custom: intro-internal

ms.search.region: Global

ms.author: aevengir

... | 67.713178 | 481 | 0.785003 | por_Latn | 0.998109 |

6fc0bec70b60b4535cba11aa4eb6631733ab502f | 377 | md | Markdown | README.md | phk422/- | 2b207b4de211319df5aeb525e1058e8a2e61d550 | [

"MIT"

] | 2 | 2020-01-18T08:41:53.000Z | 2020-06-10T02:57:30.000Z | README.md | phk422/- | 2b207b4de211319df5aeb525e1058e8a2e61d550 | [

"MIT"

] | null | null | null | README.md | phk422/- | 2b207b4de211319df5aeb525e1058e8a2e61d550 | [

"MIT"

] | null | null | null | # Mini program imitating the WeChat client UI; Moments and friend-list data are fetched through an API implemented in Java

... | 29 | 95 | 0.814324 | yue_Hant | 0.063409 |

6fc12036138c7e218278be9b429630598eddf8e1 | 1,839 | md | Markdown | developer-guides/rest-api/users/info/README.md | Rodriq/GSoC-2019-Interactive-APIs-Docs | 79bea2c987fc6a790762ed3efef918c5d409792d | [

"MIT"

] | null | null | null | developer-guides/rest-api/users/info/README.md | Rodriq/GSoC-2019-Interactive-APIs-Docs | 79bea2c987fc6a790762ed3efef918c5d409792d | [

"MIT"

] | 15 | 2019-06-13T18:28:51.000Z | 2021-04-16T11:47:20.000Z | developer-guides/rest-api/users/info/README.md | Rodriq/GSoC-2019-Interactive-APIs-Docs | 79bea2c987fc6a790762ed3efef918c5d409792d | [

"MIT"

] | null | null | null | ---

method: get

parameters: true

endpoint: users.info

authentication: true

category: users

permalink: /developer-guides/rest-api/users/info/

---

{% capture fullPath %}{{ "/api/v1/" | append: page.endpoint }}{% endcapture %}

# Info

{% include api/specific_endpoint.html category=page.category endpoint=page.endpoint me... | 28.292308 | 181 | 0.713431 | eng_Latn | 0.52626 |

6fc19d1449be1b94f5776316506352eb6d0836ef | 7,070 | md | Markdown | docs/mfc/mfc-activex-controls-advanced-topics.md | sunbohong/cpp-docs.zh-cn | 493f8d9a3d1ad73e056941fde491e76329f9c5ec | [

"CC-BY-4.0",

"MIT"

] | null | null | null | docs/mfc/mfc-activex-controls-advanced-topics.md | sunbohong/cpp-docs.zh-cn | 493f8d9a3d1ad73e056941fde491e76329f9c5ec | [

"CC-BY-4.0",

"MIT"

] | null | null | null | docs/mfc/mfc-activex-controls-advanced-topics.md | sunbohong/cpp-docs.zh-cn | 493f8d9a3d1ad73e056941fde491e76329f9c5ec | [

"CC-BY-4.0",

"MIT"

] | null | null | null | ---

description: "Learn more about: MFC ActiveX Controls: Advanced Topics"

title: "MFC ActiveX Controls: Advanced Topics"

ms.date: 09/12/2018

helpviewer_keywords:

- MFC ActiveX controls [MFC], error codes

- MFC ActiveX controls [MFC], accessing invisible dialog controls

- MFC ActiveX controls [MFC], advanced topics

- FireError method [MFC]

- MFC ActiveX controls [MFC], ... | 37.807487 | 298 | 0.78628 | yue_Hant | 0.809301 |

6fc1eaa916744183cbadcfdbb68a11b5f5fee260 | 8,560 | md | Markdown | anim/anim.md | dannycx/Demo | 204fca655db00cf41fcefa187d6acfc10a073370 | [

"Apache-2.0"

] | null | null | null | anim/anim.md | dannycx/Demo | 204fca655db00cf41fcefa187d6acfc10a073370 | [

"Apache-2.0"

] | null | null | null | anim/anim.md | dannycx/Demo | 204fca655db00cf41fcefa187d6acfc10a073370 | [

"Apache-2.0"

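] | null | null | null | # Android Animation

- Frame animation (AnimationDrawable): made up of a sequence of images, similar to playing a film; requires a large number of image resources

- Tween animation (does not change the view's actual position): rotation (RotateAnimation), transparency (AlphaAnimation), scaling (ScaleAnimation), translation (TranslateAnimation)

- Property animation (changes the view's actual position): ValueAnimator, ObjectAnimator

- Layout animation: add the attribute android:layoutAnimation="@anim/layout_animation", android:animateLayoutChanges, LayoutTransitio... | 30.035088 | 214 | 0.744509 | yue_Hant | 0.439775 |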

6fc2a543e15df0654ff3a92e6a58458cda746d0d | 39 | md | Markdown | README.md | HuangQiii/React_Echarts | 0377157902384600e10c2e9880adbb1f6b8aca08 | [

"MIT"

] | null | null | null | README.md | HuangQiii/React_Echarts | 0377157902384600e10c2e9880adbb1f6b8aca08 | [

"MIT"

] | null | null | null | README.md | HuangQiii/React_Echarts | 0377157902384600e10c2e9880adbb1f6b8aca08 | [

"MIT"

] | null | null | null | # React_Echarts

A project for testing ECharts against a variety of requirements

| 13 | 22 | 0.897436 | kor_Hang | 0.629155 |

6fc3362c5e06da9c108b4629a4ab4b67b001fb1b | 3,211 | md | Markdown | MD/README_FR.md | PierreBoisleve/Projet_Comparateur_de_Vols_FullStack | 3e0ec31264703ec1036a6f7e3fbbdd7dab4673ac | [

"MIT"

] | null | null | null | MD/README_FR.md | PierreBoisleve/Projet_Comparateur_de_Vols_FullStack | 3e0ec31264703ec1036a6f7e3fbbdd7dab4673ac | [

"MIT"

] | null | null | null | MD/README_FR.md | PierreBoisleve/Projet_Comparateur_de_Vols_FullStack | 3e0ec31264703ec1036a6f7e3fbbdd7dab4673ac | [

"MIT"

] | null | null | null | # CIR2 Project Group 10

Group members: Eloi ANSELMET, Pierre BOISLEVE, Hugo MERLE, Tristan ROUX

# Project context:

- 2019/2020 end-of-year project for the CIR program, second cohort of ISEN Yncrea Ouest - Nantes campus - Carquefou 44470

- Project goal: create / simulate a booking website... | 68.319149 | 187 | 0.749922 | fra_Latn | 0.996861 |

6fc3890e78d8f9c236d985a15ae6bf761e883702 | 943 | md | Markdown | README.md | mariohernandezk10/Homework_-4 | 149350f1e21a4cdcb4a783ae621f231d99899256 | [

"Apache-2.0"

] | null | null | null | README.md | mariohernandezk10/Homework_-4 | 149350f1e21a4cdcb4a783ae621f231d99899256 | [

"Apache-2.0"

] | null | null | null | README.md | mariohernandezk10/Homework_-4 | 149350f1e21a4cdcb4a783ae621f231d99899256 | [

"Apache-2.0"

] | null | null | null | # Not Your Average Quiz

## Description

Take a challenge quiz built with simple HTML, JS, and CSS. I used local storage to store the high scores.

Screenshot:

## Table of Contents

* [Installation](#installation)

* [U... | 18.86 | 209 | 0.71474 | eng_Latn | 0.904062 |

6fc39ba3e01f9a99f205bfc252b31bcda2e483f0 | 2,986 | md | Markdown | README.md | xtreme-jason-smith/datadog-agent-boshrelease | cfbd267b92d9145af313ce878e74243819ceba7a | [

"Apache-2.0"

] | 3 | 2019-03-28T01:38:25.000Z | 2021-05-13T03:19:31.000Z | README.md | xtreme-jason-smith/datadog-agent-boshrelease | cfbd267b92d9145af313ce878e74243819ceba7a | [

"Apache-2.0"

] | 25 | 2017-05-30T20:31:04.000Z | 2020-10-30T21:43:11.000Z | README.md | xtreme-jason-smith/datadog-agent-boshrelease | cfbd267b92d9145af313ce878e74243819ceba7a | [

"Apache-2.0"

] | 18 | 2017-03-28T23:04:19.000Z | 2021-01-07T10:02:22.000Z | # Datadog Agent release for BOSH

* For Debian and RHEL/CentOS based stemcells

* Automatically defines tags based on deployments, names and jobs

* Process, network, ntp and disk integrations by default

* Monit processes are added automatically to process integration

* You can define additional integrations

# What thi... | 30.783505 | 172 | 0.761889 | eng_Latn | 0.993162 |

6fc3bb5c3d392a7cf97b7e4cf624e4fbbf45b7dc | 9,121 | md | Markdown | README.md | write-the-docs-quorum/quorum-meetups | 03994014e20ce467efbfdaa4ff6d779c119b28bb | [

"MIT"

] | 6 | 2020-12-11T20:03:31.000Z | 2021-11-12T00:02:41.000Z | README.md | write-the-docs-quorum/quorum-meetups | 03994014e20ce467efbfdaa4ff6d779c119b28bb | [

"MIT"

] | 17 | 2020-11-01T02:59:16.000Z | 2021-05-16T23:19:29.000Z | README.md | write-the-docs-quorum/quorum-meetups | 03994014e20ce467efbfdaa4ff6d779c119b28bb | [

"MIT"

] | 2 | 2021-03-18T13:47:15.000Z | 2021-06-16T04:18:50.000Z | # Write the Docs Quorum

This is the place to learn about the Write the Docs Quorum pilot program.

## :sparkles: What is Write the Docs Quorum?

The Quorum program brings together various local [Write the Docs](https://www.writethedocs.org/) meetup chapters that are in a common time zone to provide quarterly s... | 63.783217 | 280 | 0.769872 | eng_Latn | 0.997602 |

6fc439fb6bcd8be419fab16e925059f9c30cb85f | 9,097 | md | Markdown | doc/chaos.md | adriannovegil/spring-petclinic-microservices-sre | 2170f46d9a381e6e7c1f1e3d72c131a6fc0bf805 | [

"Apache-2.0"

] | null | null | null | doc/chaos.md | adriannovegil/spring-petclinic-microservices-sre | 2170f46d9a381e6e7c1f1e3d72c131a6fc0bf805 | [

"Apache-2.0"

] | null | null | null | doc/chaos.md | adriannovegil/spring-petclinic-microservices-sre | 2170f46d9a381e6e7c1f1e3d72c131a6fc0bf805 | [

"Apache-2.0"

] | null | null | null | # Chaos experiments

Below you can find all the information about the chaos experiments defined in the project.

You can execute:

- Chaos using Spring Boot Chaos Monkey interacting directly with the framework

- Chaos using Chaos Toolkit and Spring Boot Chaos Monkey

- Chaos using shell scripts
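As a rough illustration of the first option in the list above, the sketch below builds HTTP requests against the actuator endpoints that Chaos Monkey for Spring Boot provides (`enable`, `disable`, `status`, `assaults`). The base URL, the port, and the assumption that the actuator endpoint is exposed on the target service are placeholders, not taken from this project's configuration; the payload keys are example assault properties.

```python
import json
import urllib.request

# Assumption: the target service exposes the Chaos Monkey actuator endpoint
# at this address. Host and port are placeholders.
BASE_URL = "http://localhost:8080/actuator/chaosmonkey"


def build_chaos_request(action, payload=None, base_url=BASE_URL):
    """Build (but do not send) a request for a Chaos Monkey actuator action."""
    # Reading the status is a GET; the other actions used here are POSTs.
    method = "GET" if action == "status" else "POST"
    data = json.dumps(payload).encode("utf-8") if payload is not None else None
    request = urllib.request.Request(f"{base_url}/{action}", data=data, method=method)
    if data is not None:
        request.add_header("Content-Type", "application/json")
    return request


# Turn Chaos Monkey on, then configure a latency assault (example values).
enable = build_chaos_request("enable")
assaults = build_chaos_request("assaults", {"latencyActive": True, "level": 5})
status = build_chaos_request("status")
```

Passing one of these objects to `urllib.request.urlopen` would send it; the requests are only constructed here so the sketch runs without a live service.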

## 1. Define steady ... | 35.25969 | 368 | 0.73112 | eng_Latn | 0.913204 |

6fc45f5b46ea92eb4f28977d9b2f95fe1df13015 | 1,998 | md | Markdown | paas/sw-frontend/docs/documents/mzz07m.md | alibaba/SREWorks | 913bee3410fd5f3c1ccf62f9b20465f162be4cfb | [

"Apache-2.0"

] | 407 | 2022-03-16T08:09:38.000Z | 2022-03-31T12:27:10.000Z | paas/sw-frontend/docs/documents/mzz07m.md | alibaba/SREWorks | 913bee3410fd5f3c1ccf62f9b20465f162be4cfb | [

"Apache-2.0"

] | 25 | 2022-03-22T04:27:31.000Z | 2022-03-30T08:47:28.000Z | paas/sw-frontend/docs/documents/mzz07m.md | alibaba/SREWorks | 913bee3410fd5f3c1ccf62f9b20465f162be4cfb | [

"Apache-2.0"

] | 109 | 2022-03-21T17:30:44.000Z | 2022-03-31T09:36:28.000Z | # 2.2 Build and install from source

<a name="kliWz"></a>

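# 1. Building SREWorks from source

<a name="xPY76"></a>

## Preparing the build environment

- The Kubernetes version must be **1.20** or later

- A machine with the `git` and `docker` commands installed

- A container image registry to which the built images can be uploaded (pushed with `docker push`)

- The source build includes a step that builds images inside Pods, so it needs more server resources than the quick-install option (3 machines with 4 cores and 16 GB each)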
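The prerequisites above can be checked before starting a build. The following is a minimal sketch, not part of SREWorks itself: the function names are hypothetical, and the Kubernetes version string is assumed to look like `v1.22.3` (for example, as reported by the cluster).

```python
import re
import shutil

# Minimum Kubernetes version required by the build, as (major, minor).
MIN_KUBERNETES = (1, 20)


def parse_version(text):
    """Extract (major, minor) from a version string such as 'v1.22.3'."""
    match = re.search(r"(\d+)\.(\d+)", text)
    if match is None:
        raise ValueError(f"no version found in {text!r}")
    return int(match.group(1)), int(match.group(2))


def kubernetes_ok(version_text, minimum=MIN_KUBERNETES):
    """Return True if the reported version meets the minimum."""
    return parse_version(version_text) >= minimum


def missing_tools(tools=("git", "docker")):
    """Return the required command-line tools that are not on PATH."""
    return [tool for tool in tools if shutil.which(tool) is None]
```

Calling `missing_tools()` on the build machine returns an empty list when both commands are available; the registry and resource requirements still need to be verified by hand.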

<a name="naB3... | 24.975 | 80 | 0.717718 | yue_Hant | 0.299315 |

6fc4840b6443e18b4557341a4d84b6b7768a2db0 | 2,829 | md | Markdown | documents/aws-javascript-developer-guide-v2/doc_source/setting-credentials-node.md | siagholami/aws-documentation | 2d06ee9011f3192b2ff38c09f04e01f1ea9e0191 | [

"CC-BY-4.0"

] | 5 | 2021-08-13T09:20:58.000Z | 2021-12-16T22:13:54.000Z | documents/aws-javascript-developer-guide-v2/doc_source/setting-credentials-node.md | siagholami/aws-documentation | 2d06ee9011f3192b2ff38c09f04e01f1ea9e0191 | [

"CC-BY-4.0"

] | null | null | null | documents/aws-javascript-developer-guide-v2/doc_source/setting-credentials-node.md | siagholami/aws-documentation | 2d06ee9011f3192b2ff38c09f04e01f1ea9e0191 | [

"CC-BY-4.0"

] | null | null | null | # Setting Credentials in Node\.js<a name="setting-credentials-node"></a>

There are several ways in Node\.js to supply your credentials to the SDK\. Some of these are more secure and others afford greater convenience while developing an application\. When obtaining credentials in Node\.js, be careful about relying on m... | 64.295455 | 426 | 0.794981 | eng_Latn | 0.992924 |

6fc4ffea1175a81cf019855260b9bb3d53156dba | 406 | md | Markdown | _notes/Clases_Aprendizaje_Profundo_Mariano/GradCAM.md | jRicciL/my-obsidian-digital-garden | 9ab25911a24076922edc5ea687d50073fce49af1 | [

"MIT"

] | 4 | 2022-03-11T20:13:27.000Z | 2022-03-30T19:16:54.000Z | _notes/Clases_Aprendizaje_Profundo_Mariano/GradCAM.md | jRicciL/my-obsidian-digital-garden | 9ab25911a24076922edc5ea687d50073fce49af1 | [

"MIT"

] | null | null | null | _notes/Clases_Aprendizaje_Profundo_Mariano/GradCAM.md | jRicciL/my-obsidian-digital-garden | 9ab25911a24076922edc5ea687d50073fce49af1 | [

"MIT"

] | null | null | null | ---

---

# Gradient Class Activation Mapping

#GradCAM

#SirajRaval

***

<iframe width="560" height="315" src="https://www.youtube.com/embed/Y8mSngdQb9Q" title="YouTube video player" frameborder="0" allow="accelerometer; autoplay; clipboard-write; encrypted-media; gyroscope; picture-in-picture" allowfullscreen></iframe>

... | 33.833333 | 248 | 0.746305 | eng_Latn | 0.172754 |

6fc57cf5cc494534a52fb860db523ad2cdbf6585 | 359 | md | Markdown | docs/aron7awol/56994748.md | 3ll3d00d/beqcatalogue | ce38c769c437de382b511e14e60e131944f2ca7d | [

"MIT"

] | 1 | 2021-01-30T20:28:22.000Z | 2021-01-30T20:28:22.000Z | docs/aron7awol/56994748.md | 3ll3d00d/beqcatalogue | ce38c769c437de382b511e14e60e131944f2ca7d | [

"MIT"

] | 7 | 2020-09-14T21:51:16.000Z | 2021-04-03T14:48:01.000Z | docs/aron7awol/56994748.md | 3ll3d00d/beqcatalogue | ce38c769c437de382b511e14e60e131944f2ca7d | [

"MIT"

] | 1 | 2021-03-08T20:09:01.000Z | 2021-03-08T20:09:01.000Z | # Free Fire

## DTS-HD MA 5.1

**2016 • R • 1h 30m • Action, Crime, Mystery • aron7awol**

A crime drama set in 1970s Boston, about a gun sale which goes wrong.

[Discuss](https://www.avsforum.com/threads/bass-eq-for-filtered-movies.2995212/post-56994748) [TMDB](334521)

![im... | 23.933333 | 109 | 0.693593 | yue_Hant | 0.625668 |

6fc5db2b96fe1853100070c2c5f056fe150d2246 | 781 | md | Markdown | AlchemyInsights/fix-0x8004de40-error-in-onedrive.md | isabella232/OfficeDocs-AlchemyInsights-pr.cs-CZ | 5ed88ef27055481eb0b053d1b3704fa2c5f67b4b | [

"CC-BY-4.0",

"MIT"

] | 1 | 2020-05-19T19:05:56.000Z | 2020-05-19T19:05:56.000Z | AlchemyInsights/fix-0x8004de40-error-in-onedrive.md | MicrosoftDocs/OfficeDocs-AlchemyInsights-pr.cs-CZ | 5de78c659954b926467b06b68b46812f72d379d5 | [

"CC-BY-4.0",

"MIT"

] | 3 | 2020-06-02T23:24:47.000Z | 2022-02-09T06:56:38.000Z | AlchemyInsights/fix-0x8004de40-error-in-onedrive.md | isabella232/OfficeDocs-AlchemyInsights-pr.cs-CZ | 5ed88ef27055481eb0b053d1b3704fa2c5f67b4b | [

"CC-BY-4.0",

"MIT"

] | 4 | 2019-10-09T20:27:51.000Z | 2021-10-09T10:51:00.000Z | ---

title: Fix the 0x8004de40 error in OneDrive

ms.author: pebaum

author: pebaum

ms.date: 04/21/2020

ms.audience: ITPro

ms.topic: article

ms.service: o365-administration

ROBOTS: NOINDEX, NOFOLLOW

localization_priority: Normal

ms.openlocfilehash: bedb20c830f47e71ac3aa6efd87b9b280d8ef55f

ms.sourcegitcommit: ab75f66355116e9... | 35.5 | 180 | 0.820743 | ces_Latn | 0.395447 |

6fc65a0b46dabf1a1297102d8129d055fbe52fbe | 557 | md | Markdown | trust/chad-johnston.md | alexnguyennz/vsct | 12a9a219115b1b35d6f067c6b46493b3b2ec1aa1 | [

"MIT"

] | 1 | 2022-01-18T01:45:49.000Z | 2022-01-18T01:45:49.000Z | trust/chad-johnston.md | alexnguyennz/vsct | 12a9a219115b1b35d6f067c6b46493b3b2ec1aa1 | [

"MIT"

] | null | null | null | trust/chad-johnston.md | alexnguyennz/vsct | 12a9a219115b1b35d6f067c6b46493b3b2ec1aa1 | [

"MIT"

] | null | null | null | ---

name: Chad Johnston

position: Trustee

image: /_public/img/trust/chad-johnston.webp

order: 5

---

A professional builder by trade, Chad is now the General Manager of Rydges Wellington Airport and has been in hospitality now for over 10 years. He discovered an unexpected passion for this dynamic industry and is motiv... | 55.7 | 301 | 0.797127 | eng_Latn | 0.999891 |

6fc6969b4f15f8347ac9aad8b928e9d0eb22882c | 48 | md | Markdown | README.md | fcoeverardo/Esteometria | 5a0199f55bc8e208aff92a707b46e3da72b88159 | [

"MIT"

] | null | null | null | README.md | fcoeverardo/Esteometria | 5a0199f55bc8e208aff92a707b46e3da72b88159 | [

"MIT"

] | null | null | null | README.md | fcoeverardo/Esteometria | 5a0199f55bc8e208aff92a707b46e3da72b88159 | [

"MIT"

] | null | null | null | # Estequiometria

Educational Android application

| 16 | 30 | 0.875 | por_Latn | 0.733368 |

6fc6a87c0ed35506d721bb339350dea16202d980 | 2,957 | md | Markdown | add/metadata/System.Windows.Documents/TableColumnCollection.meta.md | kcpr10/dotnet-api-docs | b73418e9a84245edde38474bdd600bf06d047f5e | [

"CC-BY-4.0",

"MIT"

] | 1 | 2020-06-16T22:24:36.000Z | 2020-06-16T22:24:36.000Z | add/metadata/System.Windows.Documents/TableColumnCollection.meta.md | kcpr10/dotnet-api-docs | b73418e9a84245edde38474bdd600bf06d047f5e | [

"CC-BY-4.0",

"MIT"

] | null | null | null | add/metadata/System.Windows.Documents/TableColumnCollection.meta.md | kcpr10/dotnet-api-docs | b73418e9a84245edde38474bdd600bf06d047f5e | [

"CC-BY-4.0",

"MIT"

] | 1 | 2019-04-08T14:42:27.000Z | 2019-04-08T14:42:27.000Z | ---

uid: System.Windows.Documents.TableColumnCollection

---

---

uid: System.Windows.Documents.TableColumnCollection.System#Collections#IList#IndexOf(System.Object)

---

---

uid: System.Windows.Documents.TableColumnCollection.CopyTo(System.Array,System.Int32)

---

---

uid: System.Windows.Documents.TableColumnCollection... | 23.846774 | 142 | 0.793372 | yue_Hant | 0.997115 |

6fc6d2bdc80d317e3305feeccf3dc8d6895367d9 | 2,452 | md | Markdown | ru/datasphere/api-ref/Project/get.md | OlesyaAkimova28/docs | 08b8e09d3346ec669daa886a8eda836c3f14a0b0 | [

"CC-BY-4.0"

] | 1 | 2022-03-03T01:02:33.000Z | 2022-03-03T01:02:33.000Z | ru/datasphere/api-ref/Project/get.md | OlesyaAkimova28/docs | 08b8e09d3346ec669daa886a8eda836c3f14a0b0 | [

"CC-BY-4.0"

] | null | null | null | ru/datasphere/api-ref/Project/get.md | OlesyaAkimova28/docs | 08b8e09d3346ec669daa886a8eda836c3f14a0b0 | [

"CC-BY-4.0"

] | null | null | null | ---

editable: false

---

# Method get

Returns the specified project.

## HTTP request {#https-request}

```

GET https://datasphere.api.cloud.yandex.net/datasphere/v1/projects/{projectId}

```
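A minimal sketch of how the request above might be built from Python. The `Authorization: Bearer` header carrying an IAM token is an assumption about how the caller authenticates, and the project ID and token values are placeholders.

```python
import urllib.parse
import urllib.request

API_ROOT = "https://datasphere.api.cloud.yandex.net/datasphere/v1"


def build_get_project_request(project_id, iam_token):
    """Build (but do not send) the GET request for a single project."""
    # Escape the ID so it is safe to place in the URL path.
    safe_id = urllib.parse.quote(project_id, safe="")
    request = urllib.request.Request(f"{API_ROOT}/projects/{safe_id}", method="GET")
    # Assumption: the API accepts an IAM token as a Bearer credential.
    request.add_header("Authorization", f"Bearer {iam_token}")
    return request


# Placeholder values for illustration only.
request = build_get_project_request("example-project-id", "example-iam-token")
```

Sending the request with `urllib.request.urlopen(request)` would return the project resource as JSON, provided the credentials are valid.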

## Path parameters {#path_params}

Parameter | Description

--- | ---

projectId | Required. ID of the Project resource to re... | 41.559322 | 357 | 0.690457 | eng_Latn | 0.82124 |

6fc724192a5dc0aaecaad4e8a08cac6211857351 | 11,467 | md | Markdown | docs/xamarin-forms/platform/sign-in-with-apple/android-ios-sign-in.md | DamianSuess/xamarin-docs | 3bc1dea069f4e646c762efbd41b99f038284f01d | [

"CC-BY-4.0",

"MIT"

] | 1 | 2021-12-09T05:19:03.000Z | 2021-12-09T05:19:03.000Z | docs/xamarin-forms/platform/sign-in-with-apple/android-ios-sign-in.md | DamianSuess/xamarin-docs | 3bc1dea069f4e646c762efbd41b99f038284f01d | [

"CC-BY-4.0",

"MIT"

] | null | null | null | docs/xamarin-forms/platform/sign-in-with-apple/android-ios-sign-in.md | DamianSuess/xamarin-docs | 3bc1dea069f4e646c762efbd41b99f038284f01d | [

"CC-BY-4.0",

"MIT"

] | null | null | null | ---

title: "Use Sign In with Apple for Xamarin.Forms"

description: "Learn how to implement Sign In with Apple in your Xamarin.Forms mobile applications."

ms.prod: xamarin

ms.assetid: 2E47E7F2-93D4-4CA3-9E66-247466D25E4D

ms.technology: xamarin-forms

author: davidortinau

ms.author: daortin

ms.date: 09/10/2019

no-loc: [Xa... | 46.425101 | 496 | 0.742217 | eng_Latn | 0.8999 |

6fc72dcc420c80b6d90cd1302ee0333a9e756724 | 29 | md | Markdown | README.md | samuilll/BeginnerExams | 6eec7b295e684399254b44c60b1fd96509004803 | [

"MIT"

] | null | null | null | README.md | samuilll/BeginnerExams | 6eec7b295e684399254b44c60b1fd96509004803 | [

"MIT"