hexsha stringlengths 40 40 | size int64 5 1.04M | ext stringclasses 6

values | lang stringclasses 1

value | max_stars_repo_path stringlengths 3 344 | max_stars_repo_name stringlengths 5 125 | max_stars_repo_head_hexsha stringlengths 40 78 | max_stars_repo_licenses listlengths 1 11 | max_stars_count int64 1 368k ⌀ | max_stars_repo_stars_event_min_datetime stringlengths 24 24 ⌀ | max_stars_repo_stars_event_max_datetime stringlengths 24 24 ⌀ | max_issues_repo_path stringlengths 3 344 | max_issues_repo_name stringlengths 5 125 | max_issues_repo_head_hexsha stringlengths 40 78 | max_issues_repo_licenses listlengths 1 11 | max_issues_count int64 1 116k ⌀ | max_issues_repo_issues_event_min_datetime stringlengths 24 24 ⌀ | max_issues_repo_issues_event_max_datetime stringlengths 24 24 ⌀ | max_forks_repo_path stringlengths 3 344 | max_forks_repo_name stringlengths 5 125 | max_forks_repo_head_hexsha stringlengths 40 78 | max_forks_repo_licenses listlengths 1 11 | max_forks_count int64 1 105k ⌀ | max_forks_repo_forks_event_min_datetime stringlengths 24 24 ⌀ | max_forks_repo_forks_event_max_datetime stringlengths 24 24 ⌀ | content stringlengths 5 1.04M | avg_line_length float64 1.14 851k | max_line_length int64 1 1.03M | alphanum_fraction float64 0 1 | lid stringclasses 191

values | lid_prob float64 0.01 1 |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

1c60b710a5ac7c5d35abcea95b51866bef4f745c | 1,338 | md | Markdown | README.md | alyssa0528/eats-abroad-rails | 74ea8e6eae88bf57b292ad0d7385b61831961db4 | [

"MIT"

] | null | null | null | README.md | alyssa0528/eats-abroad-rails | 74ea8e6eae88bf57b292ad0d7385b61831961db4 | [

"MIT"

] | null | null | null | README.md | alyssa0528/eats-abroad-rails | 74ea8e6eae88bf57b292ad0d7385b61831961db4 | [

"MIT"

] | null | null | null | # Welcome to the Eats Abroad restaurant recommendation app!

## Usage

The Eats Abroad Rails application is loosely based on the concept of eatsabroad.com, a site where I interviewed chefs about their favorite restaurants and bars in their cities. This app allows chefs to create a list of their favorite restaurants an... | 60.818182 | 418 | 0.792227 | eng_Latn | 0.997519 |

1c60bea56537a61a7fb34ada9ec8442f3d9058a1 | 1,040 | md | Markdown | Engineering/Availability.md | thomassajot/brain-dam | 31301cc9142064e5a49d3b7a8e4734ae27131c69 | [

"MIT"

] | null | null | null | Engineering/Availability.md | thomassajot/brain-dam | 31301cc9142064e5a49d3b7a8e4734ae27131c69 | [

"MIT"

] | null | null | null | Engineering/Availability.md | thomassajot/brain-dam | 31301cc9142064e5a49d3b7a8e4734ae27131c69 | [

"MIT"

] | null | null | null | What happens if the system fails? How fault tolerant is the system? Availability is the percentage of time during which the system satisfies its primary functions.

# SLA / SLO

Service level agreement. Service level objective. SLOs are components of an SLA.

# Nines

The percentage of time where the system is running according to SLA is usually... | 86.666667 | 236 | 0.772115 | eng_Latn | 0.999831 |
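As a rough illustration of the "nines" idea in the Availability note, the sketch below converts a number of nines into a yearly downtime budget. It assumes that "N nines" means an availability of 1 - 10^-N (for example, three nines is 99.9%) measured over a 365-day year; the function name and the default period are illustrative and do not come from the note.

```python
def allowed_downtime_seconds(nines, period_seconds=365 * 24 * 60 * 60):
    """Downtime budget, in seconds, for an availability of `nines` nines.

    Assumption: N nines means the system may be unavailable for a
    fraction 10**-N of the period (3 nines -> 0.1% of the time).
    """
    unavailability = 10 ** (-nines)
    return period_seconds * unavailability


if __name__ == "__main__":
    for n in range(1, 6):
        availability = 100 * (1 - 10 ** (-n))
        hours = allowed_downtime_seconds(n) / 3600
        print(f"{n} nines ({availability:.3f}%): about {hours:.2f} hours of downtime per year")
```

Under these assumptions, each extra nine cuts the downtime budget by a factor of ten, which is why moving from three to four nines is usually a much larger engineering step than the numbers suggest.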

1c613bd740adc3457525946fdf16194675b98278 | 1,334 | md | Markdown | desktop-src/AD/mappings-for-the-active-directory-users-and-computers-snap-in.md | citelao/win32 | bf61803ccb0071d99eee158c7416b9270a83b3e4 | [

"CC-BY-4.0",

"MIT"

] | 552 | 2019-08-20T00:08:40.000Z | 2022-03-30T18:25:35.000Z | desktop-src/AD/mappings-for-the-active-directory-users-and-computers-snap-in.md | citelao/win32 | bf61803ccb0071d99eee158c7416b9270a83b3e4 | [

"CC-BY-4.0",

"MIT"

] | 1,143 | 2019-08-21T20:17:47.000Z | 2022-03-31T20:24:39.000Z | desktop-src/AD/mappings-for-the-active-directory-users-and-computers-snap-in.md | citelao/win32 | bf61803ccb0071d99eee158c7416b9270a83b3e4 | [

"CC-BY-4.0",

"MIT"

] | 1,287 | 2019-08-20T05:37:48.000Z | 2022-03-31T20:22:06.000Z | ---

title: Mappings for the Active Directory Users and Computers Snap-in

description: The Active Directory Users and Computers snap-in includes property sheets and other user interfaces for the following object classes. The Object property sheet is common to all object classes.

ms.assetid: 4216aede-b513-4db9-a6de-1a79b3... | 41.6875 | 204 | 0.793853 | eng_Latn | 0.915072 |

1c61acda3bf3908ba0bf1d1cb44a2a6442fea9a7 | 1,472 | md | Markdown | test.md | tcbutler320/tcbutler320.github.io | efa2122a7f999ad611c1caababab2e067b5e42fc | [

"MIT"

] | null | null | null | test.md | tcbutler320/tcbutler320.github.io | efa2122a7f999ad611c1caababab2e067b5e42fc | [

"MIT"

] | 2 | 2019-09-04T23:32:46.000Z | 2019-09-05T11:32:34.000Z | test.md | tcbutler320/tcbutler320.github.io | efa2122a7f999ad611c1caababab2e067b5e42fc | [

"MIT"

] | null | null | null | | Date | CVE | Title | Bounty |

|--- |--- |--- |---|

| 09/2021 | CVE-2021-38701| To Be Released| $250 |

| 08/2021 | CVE-2021-3441 | [HP Officejet - 'AirPrint' Cross Site Scripting (XSS)](https://tbutler.org/2021/04/29/hp-officejet-4630)| N/A |

| 06/2021 | CVE-2021-35956 | [AKCP sensorProbe - 'Multiple' Cross Site Scr... | 113.230769 | 145 | 0.686141 | yue_Hant | 0.58319 |

1c634e7cc51ead7b874d826be7c44b1129ef9e25 | 15 | md | Markdown | README.md | knguyen24/circle-k-project-tintin | eba6b4b06fb7a2130ea78c10d4eeec41d20d5859 | [

"MIT"

] | null | null | null | README.md | knguyen24/circle-k-project-tintin | eba6b4b06fb7a2130ea78c10d4eeec41d20d5859 | [

"MIT"

] | null | null | null | README.md | knguyen24/circle-k-project-tintin | eba6b4b06fb7a2130ea78c10d4eeec41d20d5859 | [

"MIT"

] | null | null | null | # W12-4-tintin

| 7.5 | 14 | 0.666667 | fin_Latn | 0.384048 |

1c63e262929920ec5dc8a1d5eb37fa254f6c70f2 | 632 | md | Markdown | _includes/components/content/datetime.md | ysamus/atata-framework.github.io | 7c62a0013e63c27f4a97e26f2fb07f0e256b8244 | [

"Apache-2.0"

] | 4 | 2018-05-07T20:50:16.000Z | 2020-12-14T12:07:01.000Z | _includes/components/content/datetime.md | ysamus/atata-framework.github.io | 7c62a0013e63c27f4a97e26f2fb07f0e256b8244 | [

"Apache-2.0"

] | null | null | null | _includes/components/content/datetime.md | ysamus/atata-framework.github.io | 7c62a0013e63c27f4a97e26f2fb07f0e256b8244 | [

"Apache-2.0"

] | 6 | 2018-05-07T20:46:00.000Z | 2020-12-14T12:07:04.000Z | Represents any element containing date and time content. Default search finds the first occurring element. The default format is `"g"` (general date/time pattern (short time), e.g. 6/15/2009 1:45 PM).

```html

<span id="date-time">5/15/2016 1:45 PM</span>

```

```cs

using Atata;

namespace SampleApp.UITests

{

using ... | 24.307692 | 200 | 0.656646 | eng_Latn | 0.668746 |

1c646f1dee68b7f52d6a5449642e923833b8df1a | 11,460 | md | Markdown | articles/hadoop/itcast-hadoop-hdfs-java-api.md | bensondeng/qigangzhong.github.io | 724ceb4a52e19c31431800bb5c083f6898bc8e2e | [

"MIT"

] | null | null | null | articles/hadoop/itcast-hadoop-hdfs-java-api.md | bensondeng/qigangzhong.github.io | 724ceb4a52e19c31431800bb5c083f6898bc8e2e | [

"MIT"

] | null | null | null | articles/hadoop/itcast-hadoop-hdfs-java-api.md | bensondeng/qigangzhong.github.io | 724ceb4a52e19c31431800bb5c083f6898bc8e2e | [

"MIT"

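] | 1 | 2019-11-20T03:21:43.000Z | 2019-11-20T03:21:43.000Z | # Hadoop Core: HDFS

## 1: HDFS API operations

### 1.1 Configuring the Hadoop environment on Windows

On Windows you need to configure a Hadoop runtime environment; otherwise, running the code directly produces the following problems:

`Missing winutils.exe`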

~~~shell

Could not locate executable null \bin\winutils.exe in the hadoop binaries

~~~

`Missing hadoop.dll`

~~~shell

Unable to load native-hadoop library for your platform… using builtin-Java clas... | 29.612403 | 354 | 0.712653 | yue_Hant | 0.743613 |

1c64f960733e6162e9aa516451a051b70115b793 | 1,970 | md | Markdown | content/notes/fitness/immortality/index.md | glennji/glennjicom-academic | ad58b1333ae4cb2febf0104c18e3fb4e6997bedf | [

"MIT"

] | null | null | null | content/notes/fitness/immortality/index.md | glennji/glennjicom-academic | ad58b1333ae4cb2febf0104c18e3fb4e6997bedf | [

"MIT"

] | null | null | null | content/notes/fitness/immortality/index.md | glennji/glennjicom-academic | ad58b1333ae4cb2febf0104c18e3fb4e6997bedf | [

"MIT"

] | null | null | null | ---

title: "Immortality"

author: glennji

date: 2016-12-18T06:46:56+10:00

draft: false

type: note

crosslink: "true"

---

> "I don't want to achieve immortality through my work; I want to achieve immortality through not dying." -- Woody Allen

I'm with Woody: I'd prefer not to die (if I get the choice), and would genuinel... | 75.769231 | 780 | 0.759898 | eng_Latn | 0.997434 |

1c6580eb48680c7b86c0af0dfcfc7f274ddf6c0f | 1,780 | md | Markdown | README.md | Accrubit/Academy | 563a7126d9818a66045876947b8a8e96134d9bf3 | [

"Apache-2.0"

] | null | null | null | README.md | Accrubit/Academy | 563a7126d9818a66045876947b8a8e96134d9bf3 | [

"Apache-2.0"

] | null | null | null | README.md | Accrubit/Academy | 563a7126d9818a66045876947b8a8e96134d9bf3 | [

"Apache-2.0"

] | null | null | null | ---

description: >-

Accrubit Academy is a continuously updating, open-source and market neutral

education platform for blockchain, cryptocurrency, and other Web 3.0

technologies.

---

# Welcome to Accrubit Academy

## Welcome!

### How to Navigate

To find your way around, this page contains a change log of upd... | 31.22807 | 205 | 0.738202 | eng_Latn | 0.975994 |

1c65e6853dde25365459b8fc50f8bf11771dbafc | 5,109 | md | Markdown | example/base/README.md | fuyuhin/freedom | 7aa2c68ee823d237328683e3d384bb6c5633fc1f | [

"Apache-2.0"

] | null | null | null | example/base/README.md | fuyuhin/freedom | 7aa2c68ee823d237328683e3d384bb6c5633fc1f | [

"Apache-2.0"

] | null | null | null | example/base/README.md | fuyuhin/freedom | 7aa2c68ee823d237328683e3d384bb6c5633fc1f | [

"Apache-2.0"

] | null | null | null | # freedom

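### base - Basic example

#### Directory structure

- application - domain services

- aggregate - aggregates

- entity - entities

- object - value objects

- dto - data transfer objects

- adapter - port adapters

- controller - input adapters

- repository - output adapters

- server - server program entry point

- conf - TOML configuration files

- main.go - main function

- infra - infrastructure

- config - configuration component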

---

#### 入口main.g... | 23.652778 | 144 | 0.661773 | yue_Hant | 0.576017 |

1c66182b4d81877a97b7c16415ca60518054ebfc | 5,979 | md | Markdown | tutorial.md | zroubalik/functions | 7d9f6647a1baa51ce7c241cbfdfe7d109b043b11 | [

"Apache-2.0"

] | null | null | null | tutorial.md | zroubalik/functions | 7d9f6647a1baa51ce7c241cbfdfe7d109b043b11 | [

"Apache-2.0"

] | null | null | null | tutorial.md | zroubalik/functions | 7d9f6647a1baa51ce7c241cbfdfe7d109b043b11 | [

"Apache-2.0"

] | null | null | null | # Boson Functions: A Step By Step Tutorial

This document will walk you step by step through the process of creating,

editing, and deploying a Boson Function project.

## Prerequisites

In order to follow along with this tutorial, you will need to have a few tools

installed.

* [oc][oc] or [kubectl][kubectl] CLI

* [kn]... | 31.140625 | 150 | 0.741428 | eng_Latn | 0.993834 |

1c6803434d998b4cf9fcb9bb24535f20eab841b2 | 3,487 | md | Markdown | generated/web/microsoft.certificateregistration/2020-06-01/types.md | anthony-c-martin/bicep-types-az | e0820bb3e25274304ff23820a3d9820786a60079 | [

"MIT"

] | null | null | null | generated/web/microsoft.certificateregistration/2020-06-01/types.md | anthony-c-martin/bicep-types-az | e0820bb3e25274304ff23820a3d9820786a60079 | [

"MIT"

] | 2 | 2021-03-05T08:04:56.000Z | 2021-03-05T20:09:30.000Z | generated/web/microsoft.certificateregistration/2020-06-01/types.md | anthony-c-martin/bicep-types-az | e0820bb3e25274304ff23820a3d9820786a60079 | [

"MIT"

] | null | null | null | # Microsoft.CertificateRegistration @ 2020-06-01

## Resource Microsoft.CertificateRegistration/certificateOrders@2020-06-01

* **Valid Scope(s)**: ResourceGroup

### Properties

* **apiVersion**: '2020-06-01' (ReadOnly, DeployTimeConstant)

* **id**: string (ReadOnly, DeployTimeConstant)

* **kind**: string

* **loc... | 42.52439 | 310 | 0.720103 | yue_Hant | 0.559379 |

1c6818c5968d3e4dce67eb32c66301d4dc07ba96 | 3,571 | md | Markdown | articles/marketplace/marketplace-containers.md | CatchRetry/azure-docs.fr-fr | 1ccd071caa483cc19d4d9b8c1c59104b1a7e6438 | [

"CC-BY-4.0"

] | null | null | null | articles/marketplace/marketplace-containers.md | CatchRetry/azure-docs.fr-fr | 1ccd071caa483cc19d4d9b8c1c59104b1a7e6438 | [

"CC-BY-4.0"

] | null | null | null | articles/marketplace/marketplace-containers.md | CatchRetry/azure-docs.fr-fr | 1ccd071caa483cc19d4d9b8c1c59104b1a7e6438 | [

"CC-BY-4.0"

] | 3 | 2020-03-31T11:56:12.000Z | 2021-06-04T06:51:19.000Z | ---

title: Publishing guide for container offers on Azure Marketplace

description: This article describes the requirements for publishing containers on the marketplace

services: Azure, Marketplace, Compute, Storage, Networking, Blockchain, Security

documentationcenter: ''

author: ellacroi

manage... | 62.649123 | 471 | 0.781854 | fra_Latn | 0.905217 |

1c68361672cfb6c52f790455cda869954661d0b8 | 1,885 | md | Markdown | content/api/ng-common-positions/common-positions.tooltipoptions.md | ressurectit/ressurectit.github.io | 09ed543e50e9b35594333afe6e98d79687849b04 | [

"MIT"

] | null | null | null | content/api/ng-common-positions/common-positions.tooltipoptions.md | ressurectit/ressurectit.github.io | 09ed543e50e9b35594333afe6e98d79687849b04 | [

"MIT"

] | null | null | null | content/api/ng-common-positions/common-positions.tooltipoptions.md | ressurectit/ressurectit.github.io | 09ed543e50e9b35594333afe6e98d79687849b04 | [

"MIT"

] | null | null | null | <!-- Do not edit this file. It is automatically generated by API Documenter. -->

[Home](./index.md) > [@anglr/common-positions](./common-positions.md) > [TooltipOptions](./common-positions.tooltipoptions.md)

## TooltipOptions interface

Options used for tooltip directive

<b>Signature:</b>

```typescr... | 67.321429 | 237 | 0.728382 | eng_Latn | 0.724634 |

1c68757ca1304c778aa46fcb37bf0789ebfa2582 | 1,953 | md | Markdown | src/jekyll/docs/plus-sdk/reactnative/rewarded-fa.md | tapsellorg/TapsellDocument | 2f806100f91f85cc3da175b876d7169592a637d2 | [

"MIT"

] | null | null | null | src/jekyll/docs/plus-sdk/reactnative/rewarded-fa.md | tapsellorg/TapsellDocument | 2f806100f91f85cc3da175b876d7169592a637d2 | [

"MIT"

] | 8 | 2021-04-06T12:18:22.000Z | 2022-02-22T14:41:11.000Z | src/jekyll/docs/plus-sdk/reactnative/rewarded-fa.md | tapsellorg/TapsellDocument | 2f806100f91f85cc3da175b876d7169592a637d2 | [

"MIT"

] | 1 | 2020-09-02T07:01:08.000Z | 2020-09-02T07:01:08.000Z | ---

layout: classic-docs

title: Rewarded ads in ReactNative

lang: fa

permalink: /plus-sdk/reactnative/rewarded/index.html

toc: true # table of contents

---

To implement rewarded ads, follow the steps below.

## Creating an ad zone

ابتدا از [پنل تپسل](https://dashboard.tapsell.ir/) یک تبلیغگاه از نوعی که مایل هستی... | 28.304348 | 107 | 0.726575 | pes_Arab | 0.991612 |

1c68c4442d0c76d3da77b0d0c4eb993c8376a73b | 1,180 | md | Markdown | README.md | takurooo/PyTorch_RandomErasing | 2fcb30626ee19795d580631186c0bd16efd4292a | [

"MIT"

] | 2 | 2020-09-03T01:28:58.000Z | 2022-01-25T06:38:42.000Z | README.md | takurooo/PyTorch_RandomErasing | 2fcb30626ee19795d580631186c0bd16efd4292a | [

"MIT"

] | 1 | 2019-07-07T09:23:32.000Z | 2019-07-07T09:23:32.000Z | README.md | takurooo/PyTorch_RandomErasing | 2fcb30626ee19795d580631186c0bd16efd4292a | [

"MIT"

] | 3 | 2019-12-19T05:49:54.000Z | 2020-11-14T06:39:26.000Z | # PyTorch_RandomErasing

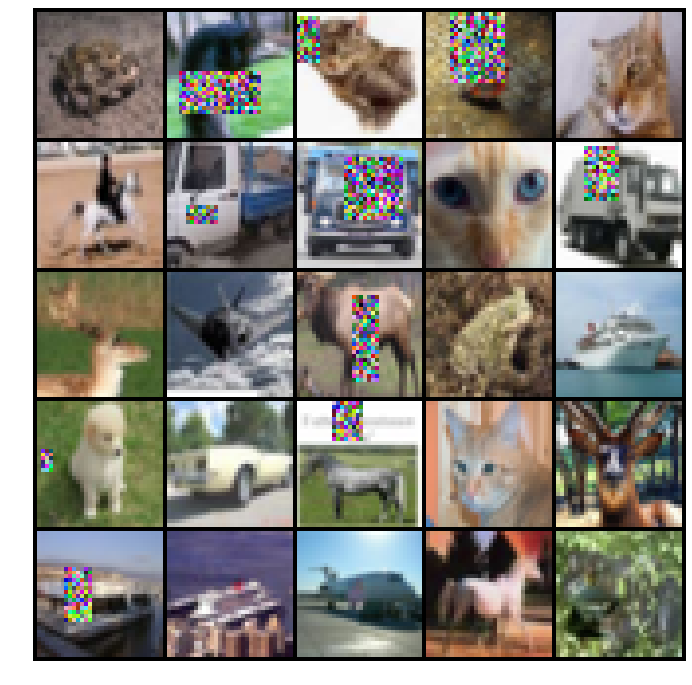

Implementation of

[Random Erasing Data Augmentation](https://arxiv.org/pdf/1708.04896.pdf).
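For readers new to the technique, here is a minimal pure-Python sketch of the idea behind Random Erasing. It is not this repository's PyTorch code: the function name, the list-of-rows grayscale image format, and the `max_tries` cap are assumptions made for illustration, and the default probability, area range, and aspect-ratio bound are the values I recall from the linked paper, so check them there before relying on them.

```python
import math
import random


def random_erase(image, p=0.5, area_range=(0.02, 0.4), min_aspect=0.3,
                 rng=random, max_tries=100):
    """Return a copy of a 2D grayscale image with one random rectangle overwritten.

    `image` is a list of rows of integer pixel values (an assumed format).
    With probability 1 - p the copy is returned unchanged.
    """
    height, width = len(image), len(image[0])
    out = [row[:] for row in image]  # never mutate the caller's image
    if rng.random() > p:
        return out
    for _ in range(max_tries):
        # Sample the rectangle's area as a fraction of the image and its aspect ratio.
        target_area = rng.uniform(*area_range) * height * width
        aspect = rng.uniform(min_aspect, 1 / min_aspect)
        h = int(round(math.sqrt(target_area * aspect)))
        w = int(round(math.sqrt(target_area / aspect)))
        if 0 < h <= height and 0 < w <= width:
            top = rng.randint(0, height - h)
            left = rng.randint(0, width - w)
            # Fill the chosen rectangle with random pixel values.
            for y in range(top, top + h):
                for x in range(left, left + w):
                    out[y][x] = rng.randint(0, 255)
            return out
    return out  # no rectangle fit within max_tries; return the unchanged copy
```

Passing a seeded `random.Random` instance as `rng` makes the augmentation reproducible, which helps when comparing training runs.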

# Examples of Random Erasing

# Results

Dataset CIFAR10

## Test accuracy per class

|... | 33.714286 | 111 | 0.388983 | yue_Hant | 0.424535 |

1c6968e9296ab8600bfb76a204e04dd3651ee2e8 | 67 | md | Markdown | app01/README.md | gabrielrodriguesleite/contextAPIeHooksTests | 9745c6bc34f7c6fe727da672b254413e67657749 | [

"MIT"

] | null | null | null | app01/README.md | gabrielrodriguesleite/contextAPIeHooksTests | 9745c6bc34f7c6fe727da672b254413e67657749 | [

"MIT"

] | null | null | null | app01/README.md | gabrielrodriguesleite/contextAPIeHooksTests | 9745c6bc34f7c6fe727da672b254413e67657749 | [

"MIT"

] | null | null | null | ## Practicing what I learned about React contextAPI and Hooks

| 33.5 | 66 | 0.820896 | por_Latn | 0.744585 |

1c69d2209f37c5d15e917003fe78237126bc261b | 748 | md | Markdown | _m/foody.md | S1ffer/maerkelex.dk | ad48e52b5a3cf9c59c74e8c8b6dc8fc9341b4658 | [

"MIT"

] | null | null | null | _m/foody.md | S1ffer/maerkelex.dk | ad48e52b5a3cf9c59c74e8c8b6dc8fc9341b4658 | [

"MIT"

] | null | null | null | _m/foody.md | S1ffer/maerkelex.dk | ad48e52b5a3cf9c59c74e8c8b6dc8fc9341b4658 | [

"MIT"

] | null | null | null | ---

name: Foody

tags:

- uofficielt

- duelighed

- bål

age: 12+

image: foody.jpg

coverimage: cover-campfire.jpg

buylink: http://shorty.tac-case.dk/index.php/bestilling-eller-information

infolink: http://shorty.tac-case.dk/index.php/foody

---

## The proficiency badge that turns scouts into master chefs

Det kan tages af dem, som... | 35.619048 | 167 | 0.766043 | dan_Latn | 0.999302 |

1c6c40a31ef4e6b60d22ab517af05eb429726130 | 1,510 | md | Markdown | docs/accessibility/headings.md | la-ots/pelican | 048b77c5a9917f14c3fbf561d53e97cc89a2ab21 | [

"CC0-1.0"

] | 6 | 2022-02-17T19:40:30.000Z | 2022-02-24T17:43:03.000Z | docs/accessibility/headings.md | la-ots/pelican | 048b77c5a9917f14c3fbf561d53e97cc89a2ab21 | [

"CC0-1.0"

] | 20 | 2022-02-17T11:25:20.000Z | 2022-03-31T11:21:08.000Z | docs/accessibility/headings.md | la-ots/pelican | 048b77c5a9917f14c3fbf561d53e97cc89a2ab21 | [

"CC0-1.0"

] | null | null | null | ---

title: Headings

summary: Headings are used to indicate information structure. They also enable accessible access.

tags: color, contrast

layout: guide

eleventyNavigation:

key: Headings

parent: Accessibility

order: 6

excerpt: Headings are used to indicate information structure. They also enable accessible acc... | 27.454545 | 159 | 0.727815 | eng_Latn | 0.963199 |

1c6c5ff323a7ca1bfda1a9d1f7169f81f8e6cf81 | 16,311 | md | Markdown | articles/hdinsight/hdinsight-scaling-best-practices.md | Jontii/azure-docs.sv-se | d2551c12e17b442dc0b577205d034dcd6c73cff9 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | articles/hdinsight/hdinsight-scaling-best-practices.md | Jontii/azure-docs.sv-se | d2551c12e17b442dc0b577205d034dcd6c73cff9 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | articles/hdinsight/hdinsight-scaling-best-practices.md | Jontii/azure-docs.sv-se | d2551c12e17b442dc0b577205d034dcd6c73cff9 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | ---

title: Scale cluster sizes – Azure HDInsight

description: Scale an Apache Hadoop cluster elastically to match your workload in Azure HDInsight

ms.author: ashish

ms.service: hdinsight

ms.topic: how-to

ms.custom: seoapr2020

ms.date: 04/29/2020

ms.openlocfilehash: 3524b5d2274c52aa94fa1c3420fb0d3245d9b7... | 59.097826 | 454 | 0.773159 | swe_Latn | 0.997815 |

1c6ce0b2bb36d7bd34b86149399624f29b7bfd21 | 1,070 | md | Markdown | controls/scheduler/client-side-programming/events/onclientresourcespopulating.md | DKaramfilov/ajax-docs | 86b5dbbf757f7b1fd84ec8b313be0d70cd957f8e | [

"Apache-2.0"

] | 22 | 2015-07-21T10:33:39.000Z | 2022-02-21T09:17:40.000Z | controls/scheduler/client-side-programming/events/onclientresourcespopulating.md | DKaramfilov/ajax-docs | 86b5dbbf757f7b1fd84ec8b313be0d70cd957f8e | [

"Apache-2.0"

] | 132 | 2015-07-14T13:56:12.000Z | 2022-01-28T10:04:56.000Z | controls/scheduler/client-side-programming/events/onclientresourcespopulating.md | DKaramfilov/ajax-docs | 86b5dbbf757f7b1fd84ec8b313be0d70cd957f8e | [

"Apache-2.0"

] | 355 | 2015-07-14T02:38:17.000Z | 2021-11-30T13:22:18.000Z | ---

title: OnClientResourcesPopulating

page_title: OnClientResourcesPopulating - RadScheduler

description: Check our Web Forms article about OnClientResourcesPopulating.

slug: scheduler/client-side-programming/events/onclientresourcespopulating

tags: onclientresourcespopulating

published: True

position: 22

---

... | 35.666667 | 385 | 0.771028 | eng_Latn | 0.976839 |

1c6d123085613f4fa8ba3291392a41ea6d8d8608 | 5,801 | md | Markdown | src/Resources/doc/installation.md | Adgoal/StatsDClientBundle | 42559c94240daf23f142dc1607dc127a4bc8a4b5 | [

"MIT"

] | null | null | null | src/Resources/doc/installation.md | Adgoal/StatsDClientBundle | 42559c94240daf23f142dc1607dc127a4bc8a4b5 | [

"MIT"

] | null | null | null | src/Resources/doc/installation.md | Adgoal/StatsDClientBundle | 42559c94240daf23f142dc1607dc127a4bc8a4b5 | [

"MIT"

] | null | null | null | Installation

============

* 1 First, add the dependent bundles to the vendor/bundles directory. Add the following lines to the composer.json file

```

"require": {

# ..

"liuggio/statsd-client-bundle": "1.6.*",

# ..

}

```

* 2 Then run `composer install`

* 3 Then add in your `app/AppKernel`

``` yaml

cl... | 25.331878 | 181 | 0.678159 | eng_Latn | 0.88245 |

1c6d15268fc0ef25ab69e14db7823721083cc90b | 1,895 | md | Markdown | _posts/study/2020-03-01-problem-sw-1855.md | JooJiyun/joojiyun.github.io | 6404fa6747c1dc19aeb755f5f210b55296aabd3d | [

"MIT"

] | null | null | null | _posts/study/2020-03-01-problem-sw-1855.md | JooJiyun/joojiyun.github.io | 6404fa6747c1dc19aeb755f5f210b55296aabd3d | [

"MIT"

] | null | null | null | _posts/study/2020-03-01-problem-sw-1855.md | JooJiyun/joojiyun.github.io | 6404fa6747c1dc19aeb755f5f210b55296aabd3d | [

"MIT"

] | null | null | null | ---

title: "1855 영준이의 진짜 BFS"

excerpt: "1855 영준이의 진짜 BFS : LCA"

categories:

- Study

tags:

- swexpertacademy

last_modified_at: 2020-03-01T16:09:00

---

**SW Expert Academy**

1855 영준이의 진짜 BFS

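Run a BFS and, taking the vertices in the order they are visited, find the LCA of each vertex and the one visited just before it; this gives the number of edges traversed on each move between vertices.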
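The approach can be sketched in Python as follows. This is an illustrative sketch, not the post's C solution: the input format (a `parent` list with node 0 as the root), the ascending-index child order, and the function name are assumptions. The distance between two consecutively visited vertices is `depth[u] + depth[v] - 2 * depth[lca(u, v)]`, and the LCA is found with binary lifting.

```python
from collections import deque


def bfs_walk_length(parent):
    """Total edges walked when moving between vertices in BFS visiting order.

    parent[v] is the parent of vertex v; vertex 0 is the root (parent[0] == -1).
    Children are visited in ascending index order (an assumption).
    """
    n = len(parent)
    children = [[] for _ in range(n)]
    for v in range(1, n):
        children[parent[v]].append(v)

    # BFS from the root records the visiting order and each vertex's depth.
    depth = [0] * n
    order = []
    queue = deque([0])
    while queue:
        u = queue.popleft()
        order.append(u)
        for c in children[u]:
            depth[c] = depth[u] + 1
            queue.append(c)

    # Binary lifting: up[k][v] is the 2**k-th ancestor of v (the root maps to itself).
    levels = max(1, (n - 1).bit_length())
    up = [[0] * n for _ in range(levels)]
    up[0] = [max(p, 0) for p in parent]
    for k in range(1, levels):
        up[k] = [up[k - 1][up[k - 1][v]] for v in range(n)]

    def lca(a, b):
        if depth[a] < depth[b]:
            a, b = b, a
        diff = depth[a] - depth[b]
        for k in range(levels):  # lift the deeper vertex to the same depth
            if (diff >> k) & 1:
                a = up[k][a]
        if a == b:
            return a
        for k in reversed(range(levels)):  # lift both to just below the LCA
            if up[k][a] != up[k][b]:
                a, b = up[k][a], up[k][b]
        return up[0][a]

    total = 0
    for prev, cur in zip(order, order[1:]):
        total += depth[prev] + depth[cur] - 2 * depth[lca(prev, cur)]
    return total
```

For example, `bfs_walk_length([-1, 0, 0, 1, 2])` visits the vertices in the order 0, 1, 2, 3, 4 and walks 1 + 2 + 3 + 4 edges.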

``` c

#include <stdio.h>

#include <vector>

#includ... | 21.534091 | 72 | 0.529815 | kor_Hang | 0.444248 |

1c6e1510f97db3e13868ef656c131c33bf2054b1 | 14 | md | Markdown | README.md | Boosiebo/Roger- | 39b809ecf0ee4264a95f6df2c2084ede77abcf75 | [

"Apache-2.0"

] | null | null | null | README.md | Boosiebo/Roger- | 39b809ecf0ee4264a95f6df2c2084ede77abcf75 | [

"Apache-2.0"

] | null | null | null | README.md | Boosiebo/Roger- | 39b809ecf0ee4264a95f6df2c2084ede77abcf75 | [

"Apache-2.0"

] | null | null | null | # Roger-

Work

| 4.666667 | 8 | 0.642857 | deu_Latn | 0.377837 |

1c6e54e082f1f2c245723598f8d268ae34e61675 | 358 | md | Markdown | docs/utils/trigonometry/sin.md | suns-echoes/math-utils | b234f8597918d6958e9c70ad67c7cd47eb90de2e | [

"MIT"

] | null | null | null | docs/utils/trigonometry/sin.md | suns-echoes/math-utils | b234f8597918d6958e9c70ad67c7cd47eb90de2e | [

"MIT"

] | null | null | null | docs/utils/trigonometry/sin.md | suns-echoes/math-utils | b234f8597918d6958e9c70ad67c7cd47eb90de2e | [

"MIT"

] | null | null | null | Trigonometry / Sine

===================

### `sin`

Method calculates sine value.

Usage

-----

```js

const value = MathUtils.trigonometry.sin(theta);

```

### Arguments

* **`theta`**: *`number`* - angle in radians

### Returns

* *`number`* - sine value

Examples

--------

```js

// Calculate value:

const value = ... | 10.529412 | 48 | 0.578212 | eng_Latn | 0.185253 |

1c6e70a20fc9dd0f7e4ec046b0b8319dcc868328 | 5,865 | md | Markdown | README.md | teemulehtinen/qlcjs | f7de21cd3e136db9592957b7b9f47d5017086bc3 | [

"MIT"

] | null | null | null | README.md | teemulehtinen/qlcjs | f7de21cd3e136db9592957b7b9f47d5017086bc3 | [

"MIT"

] | null | null | null | README.md | teemulehtinen/qlcjs | f7de21cd3e136db9592957b7b9f47d5017086bc3 | [

"MIT"

] | null | null | null | # qlcjs

Generates questions about concrete constructs and patterns in a given JavaScript program.

These questions (including answering options) can be posed to a learner to practice

introductory programming. These questions include elements to develop program comprehension

and program tracing.

Automatic generation en... | 47.682927 | 134 | 0.741858 | eng_Latn | 0.995749 |

1c6f46bf75ccda6b21413dd7a169d6967c7b18ef | 234 | md | Markdown | src/showreel-drafts/Continual Universal Object Detection.md | BraneShop/BraneShop.github.io | 2d5cdeb6ec3b2c78016a4e4b773fdc12174cb255 | [

"MIT"

] | 1 | 2019-11-07T09:51:58.000Z | 2019-11-07T09:51:58.000Z | src/showreel-drafts/Continual Universal Object Detection.md | BraneShop/BraneShop.github.io | 2d5cdeb6ec3b2c78016a4e4b773fdc12174cb255 | [

"MIT"

] | 4 | 2019-02-13T20:19:35.000Z | 2019-10-18T08:48:44.000Z | src/showreel-drafts/Continual Universal Object Detection.md | BraneShop/BraneShop.github.io | 2d5cdeb6ec3b2c78016a4e4b773fdc12174cb255 | [

"MIT"

] | 1 | 2019-02-10T19:57:24.000Z | 2019-02-10T19:57:24.000Z | ---

link: https://arxiv.org/pdf/2002.05347.pdf

title: Continual Universal Object Detection

image: /images/showreel/Continual Universal Object Detection.jpg

date: 2020-02-13

tags: computer-vision, technical

draft: draft

preview:

---

| 19.5 | 64 | 0.773504 | yue_Hant | 0.302479 |

1c6ff07863bbf5113fdddd45f52f44cd42aed6bd | 5,507 | md | Markdown | articles/active-directory/develop/authentication-national-cloud.md | EINSTEINPRACIANO/azure-docs.pt-br | 93bbbf115ab76d31e6bc8919a338700294966913 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | articles/active-directory/develop/authentication-national-cloud.md | EINSTEINPRACIANO/azure-docs.pt-br | 93bbbf115ab76d31e6bc8919a338700294966913 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | articles/active-directory/develop/authentication-national-cloud.md | EINSTEINPRACIANO/azure-docs.pt-br | 93bbbf115ab76d31e6bc8919a338700294966913 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | ---

title: Authentication using Azure Active Directory in national clouds

description: Learn about app registration and authentication endpoints for national clouds.

services: active-directory

documentationcenter: ''

author: negoe

manager: CelesteDG

editor: ''

ms.service: active-director... | 59.858696 | 538 | 0.781551 | por_Latn | 0.989921 |

1c7008b44845d2d684b815ce4fb7745588777854 | 771 | md | Markdown | innersource-repository-scanner/src/test/resources/readmes/README-COMPLETE.md | LaudateCorpus1/innersource-scanner | c4253df0b9d7de75b982a9f716ee135284651020 | [

"Apache-2.0"

] | 15 | 2021-06-22T18:20:52.000Z | 2022-03-16T10:02:50.000Z | innersource-repository-scanner/src/test/resources/readmes/README-COMPLETE.md | intuit/innersource-scanner | c4253df0b9d7de75b982a9f716ee135284651020 | [

"Apache-2.0"

] | 7 | 2021-06-23T17:04:57.000Z | 2021-07-12T19:31:14.000Z | innersource-repository-scanner/src/test/resources/readmes/README-COMPLETE.md | LaudateCorpus1/innersource-scanner | c4253df0b9d7de75b982a9f716ee135284651020 | [

"Apache-2.0"

] | 1 | 2022-02-24T08:05:41.000Z | 2022-02-24T08:05:41.000Z |

# Project Title

This is the project description.

# Usage

Explain how to use the software from an end user's perspective.

# Local Development

Explain how a new developer to the team should configure their

l... | 24.09375 | 64 | 0.793774 | eng_Latn | 0.996509 |

1c708cc1482b3ca479081fd22ec2fbe89f63e7ea | 140 | md | Markdown | source/_honours/1970-dux-ludorum.md | salihzeki12000/Website | 733c8f7ea86f6ee4967cbbbb83cfc10d12cf7c12 | [

"MIT"

] | null | null | null | source/_honours/1970-dux-ludorum.md | salihzeki12000/Website | 733c8f7ea86f6ee4967cbbbb83cfc10d12cf7c12 | [

"MIT"

] | 10 | 2019-08-13T10:55:15.000Z | 2022-02-26T10:21:10.000Z | source/_honours/1970-dux-ludorum.md | wing5wong/artisan-whs-static | cb36480c54adcb5cbe1fd25c1a741bb525112a72 | [

"MIT"

] | 1 | 2020-10-30T12:55:04.000Z | 2020-10-30T12:55:04.000Z | ---

title: 1970 Dux Ludorum

date: 1970-12-01T03:58:30.974Z

award: Dux Ludorum

person1_name: Michael Young

person2_name: Raewyn Knowles

---

| 15.555556 | 30 | 0.757143 | eng_Latn | 0.184328 |

1c71066c0ada188606bec05910fd0e914c8a1442 | 9,693 | md | Markdown | powerapps-docs/maker/common-data-service/create-edit-entities-solution-explorer.md | PathToSharePoint/powerapps-docs | 897a3dd2b1afd272809a2261992b61d83eb9880a | [

"CC-BY-4.0",

"MIT"

] | null | null | null | powerapps-docs/maker/common-data-service/create-edit-entities-solution-explorer.md | PathToSharePoint/powerapps-docs | 897a3dd2b1afd272809a2261992b61d83eb9880a | [

"CC-BY-4.0",

"MIT"

] | null | null | null | powerapps-docs/maker/common-data-service/create-edit-entities-solution-explorer.md | PathToSharePoint/powerapps-docs | 897a3dd2b1afd272809a2261992b61d83eb9880a | [

"CC-BY-4.0",

"MIT"

] | null | null | null | ---

title: "Create and edit tables using solution explorer | MicrosoftDocs"

description: "Learn how to create a table using solution explorer"

ms.custom: ""

ms.date: 02/26/2019

ms.reviewer: ""

ms.service: powerapps

ms.suite: ""

ms.tgt_pltfrm: ""

ms.topic: "article"

applies_to:

- "Dynamics 365 (online)"

- "Dynamics... | 47.748768 | 411 | 0.759105 | eng_Latn | 0.996754 |

1c72348fbbb789b9fbab21b8059a11366fec9e8d | 18 | md | Markdown | README.md | kanishk779/RandomData | 434ee2e5d0910b04a662b16495e8d478b2067e80 | [

"MIT"

] | null | null | null | README.md | kanishk779/RandomData | 434ee2e5d0910b04a662b16495e8d478b2067e80 | [

"MIT"

] | null | null | null | README.md | kanishk779/RandomData | 434ee2e5d0910b04a662b16495e8d478b2067e80 | [

"MIT"

] | null | null | null | # RandomData

Data

| 6 | 12 | 0.777778 | yue_Hant | 0.824065 |

1c725fb0d5a856f44ed44473bf03e767ae692e5b | 1,451 | md | Markdown | 2020/09/18/2020-09-18 22:15.md | zhzhzhy/WeiBoHot_history | 32ce4800e63f26384abb17d43e308452c537c902 | [

"MIT"

] | 3 | 2020-07-14T14:54:15.000Z | 2020-08-21T06:48:24.000Z | 2020/09/18/2020-09-18 22:15.md | zhzhzhy/WeiBoHot_history | 32ce4800e63f26384abb17d43e308452c537c902 | [

"MIT"

] | null | null | null | 2020/09/18/2020-09-18 22:15.md | zhzhzhy/WeiBoHot_history | 32ce4800e63f26384abb17d43e308452c537c902 | [

"MIT"

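] | null | null | null | Data for 22:00 on 18 September 2020

Status: 200

1. Kris Wu: this show wouldn't even exist without me

Weibo heat: 4415932

2. A post-90s man's household registration permanently marked "refused military service"

Weibo heat: 2871278

3. Qiao Xin: jealousy is nothing to be ashamed of

Weibo heat: 2455592

4. Jay Chou jealous that Hannah Quinlivan cheered for someone else

Weibo heat: 2145032

5. Black garlic

Weibo heat: 1931409

6. He Kaiwen

Weibo heat: 1791811

7. Mukbang that inspires no desire to buy

Weibo heat: 1175290

8. PLA plays songs to Indian troops

Weibo heat: 926464

9. Ministry of Education asks universities to make it easy for students to enter and leave campus

Weibo heat: 884116

10. Concerts are coming back soon

Weibo heat: 860509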

11.杨迪洗肥肠控制不住的yue... | 7.112745 | 22 | 0.787733 | yue_Hant | 0.359813 |

1c7451c3168ab129051229ce631d39c6af69020e | 894 | md | Markdown | docs/preprocessor/exclude-hash-import.md | stu85010/cpp-docs.zh-tw | bac0362e722d794727f509d63a2e3179b70d0785 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | docs/preprocessor/exclude-hash-import.md | stu85010/cpp-docs.zh-tw | bac0362e722d794727f509d63a2e3179b70d0785 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | docs/preprocessor/exclude-hash-import.md | stu85010/cpp-docs.zh-tw | bac0362e722d794727f509d63a2e3179b70d0785 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | ---

title: exclude (#import)

ms.date: 10/18/2018

f1_keywords:

- exclude

helpviewer_keywords:

- exclude attribute

ms.assetid: 0883248a-d4bf-420e-9848-807b28fa976e

ms.openlocfilehash: 1de1376fbe80147bc9fe01ea83bad81912431310

ms.sourcegitcommit: 6052185696adca270bc9bdbec45a626dd89cdcdd

ms.translationtype: MT

ms.contentloc... | 19.866667 | 65 | 0.714765 | yue_Hant | 0.735859 |

1c74b3b19a1bb6dcd6475f8b6bf28f5ffe91fdfb | 75,487 | md | Markdown | https___bryan-guner.gitbook.io_my-docs_/bryan-guner.gitbook.io/my-docs/web-development-frameworks/gatsby/graphql-concepts.md | Uvacoder/html-demo-code-and-experiments | 1bd2ab50afe8f331396c37822301afa8e4903bcd | [

"Apache-2.0"

] | 3 | 2021-11-15T07:54:37.000Z | 2021-11-29T03:09:12.000Z | https___bryan-guner.gitbook.io_my-docs_/bryan-guner.gitbook.io/my-docs/web-development-frameworks/gatsby/graphql-concepts.md | Uvacoder/html-demo-code-and-experiments | 1bd2ab50afe8f331396c37822301afa8e4903bcd | [

"Apache-2.0"

] | 26 | 2021-11-15T04:26:39.000Z | 2021-11-17T00:17:06.000Z | https___bryan-guner.gitbook.io_my-docs_/bryan-guner.gitbook.io/my-docs/web-development-frameworks/gatsby/graphql-concepts.md | Uvacoder/html-demo-code-and-experiments | 1bd2ab50afe8f331396c37822301afa8e4903bcd | [

"Apache-2.0"

] | null | null | null | <a href="https://bryan-guner.gitbook.io/my-docs/" class="css-4rbku5 css-1dbjc4n r-1awozwy r-1loqt21 r-18u37iz r-1otgn73 r-1i6wzkk r-lrvibr"></a>

<span class="css-901oao css-16my406 css-vcwn7f" aria-label="My Docs" data-rnw-int-class="nearest_246__260-12392_">My Docs</span>

<a href="https://bgoonz-blog.netlify.app/" c... | 104.263812 | 1,405 | 0.785923 | yue_Hant | 0.168614 |

1c7535d1edf0de7a97adda6db429968f4e8e44e8 | 4,485 | md | Markdown | README.md | ChronVer/chronver | 3606e998c4311017c00a9cbb3a319991f1c62ed0 | [

"MIT"

] | 1 | 2020-04-15T00:16:42.000Z | 2020-04-15T00:16:42.000Z | README.md | ChronVer/chronver | 3606e998c4311017c00a9cbb3a319991f1c62ed0 | [

"MIT"

] | null | null | null | README.md | ChronVer/chronver | 3606e998c4311017c00a9cbb3a319991f1c62ed0 | [

"MIT"

] | null | null | null | # chronver

> The [chronological](https://chronver.org "Official ChronVer website") versioner.

## Install

```sh

$ npm i chronver

```

## Requirements

This is an evergreen module 🌲 and requires an [Active LTS](https://github.com/nodejs/Release) Node version (v12.0.0+).

## Usage

### Node.js

```js

import chronve... | 23.238342 | 251 | 0.678707 | eng_Latn | 0.931427 |

1c766df1e652c25c3070405e979aa389b8382ed4 | 5,984 | md | Markdown | docs/infrastructure/symfony-console.md | PanzerLlama/msgphp | 51493573cdbb45059e20e0bd0b3e600a24bd90b2 | [

"MIT"

] | null | null | null | docs/infrastructure/symfony-console.md | PanzerLlama/msgphp | 51493573cdbb45059e20e0bd0b3e600a24bd90b2 | [

"MIT"

] | null | null | null | docs/infrastructure/symfony-console.md | PanzerLlama/msgphp | 51493573cdbb45059e20e0bd0b3e600a24bd90b2 | [

"MIT"

] | null | null | null | # Symfony Console

An overview of available infrastructural code when using [Symfony Console][console-project].

- Requires [symfony/console]

## Commands

Various standard [console commands] are available and can be used depending on implemented domain infrastructure. They

are defined in the `MsgPhp\Domain\Infrastruct... | 38.857143 | 149 | 0.756852 | eng_Latn | 0.586834 |

1c774d0897b9f7e83a37dc7af5da6b0a62b84675 | 20 | md | Markdown | CHANGELOG.md | kevelopment/liquidctl-gui | 90ccc0ba8d159c957f2691e33329ee73a461d5cb | [

"MIT"

] | null | null | null | CHANGELOG.md | kevelopment/liquidctl-gui | 90ccc0ba8d159c957f2691e33329ee73a461d5cb | [

"MIT"

] | 2 | 2022-03-02T10:43:29.000Z | 2022-03-25T19:17:08.000Z | CHANGELOG.md | vudor/liquidctl-gui | 8cf7a3199dec3056252e44796a759eb7ae7009ba | [

"MIT"

] | null | null | null | ## 0.1.0 25.07.2020

| 10 | 19 | 0.55 | eng_Latn | 0.145547 |

1c77516bc7435f96b7b7a0d5762b6f4fd020d80e | 980 | md | Markdown | docs/build.md | dwango/UniVRM | e91ab9fc519aa387dc9b39044aa2189ff0382f15 | [

"MIT"

] | 318 | 2018-04-16T08:41:10.000Z | 2019-04-23T02:38:54.000Z | docs/build.md | dwango/UniVRM | e91ab9fc519aa387dc9b39044aa2189ff0382f15 | [

"MIT"

] | 171 | 2018-04-17T14:07:30.000Z | 2019-04-20T02:29:08.000Z | docs/build.md | dwango/UniVRM | e91ab9fc519aa387dc9b39044aa2189ff0382f15 | [

"MIT"

] | 65 | 2018-04-16T15:45:55.000Z | 2019-04-12T01:11:17.000Z | # Building applications

Notes on building applications that use UniVRM.

## Shaders to include in the build

Configure `Project Settings = Graphics - Always Included Shaders` (or an equivalent setting) so that the following shaders are included in the build.

### Standard

* `Standard`

Used by GLTF PBR materials.

```{admonition} Always Included Shaders

:class: warning

Must be specified explicitly.

```

### Unlit

* `Assets\VRMShaders\G... | 21.777778 | 91 | 0.790816 | yue_Hant | 0.912593 |

1c7777ed09e52304d3ad9a903302445d77f2c612 | 245 | md | Markdown | packages/docs/src/pages/input/md/input6.md | jeven2016/react-windy-ui | 93623d11fec4b282ecaeb3e09c60bf81ecf56c3f | [

"MIT"

] | 1 | 2022-01-22T14:31:30.000Z | 2022-01-22T14:31:30.000Z | packages/docs/src/pages/input/md/input6.md | jeven2016/react-windy-ui | 93623d11fec4b282ecaeb3e09c60bf81ecf56c3f | [

"MIT"

] | 2 | 2021-06-12T05:52:22.000Z | 2022-02-26T07:03:01.000Z | packages/docs/src/pages/input/md/input6.md | jeven2016/react-windy-ui | 93623d11fec4b282ecaeb3e09c60bf81ecf56c3f | [

"MIT"

] | null | null | null | ---

order: 6

type: sample

zh_CN: 禁用输入框

en_US: Disabled Input

editUrl: $BASE/pages/input/md/input6.md

---

+++ zh_CN

在Input上设置disabled属性为true后,可以将控件禁用。但对于Input Group组件,您需要自行设置各个子控件的disabled属性。

+++ en_US

Input6

+++ SampleCode

fileName: Input6

| 14.411765 | 75 | 0.755102 | yue_Hant | 0.225073 |

1c777ae4c851c0fc5df2f5c176d195027592bb50 | 142 | md | Markdown | test-content/leitner/functions/arguments.md | colevandersWands/study-lenses | 47398d6719dd2cd732fff59ba2f2ff13fcce420c | [

"MIT"

] | 2 | 2021-07-19T09:50:41.000Z | 2022-02-25T15:54:01.000Z | test-content/leitner/functions/arguments.md | colevandersWands/study-lenses | 47398d6719dd2cd732fff59ba2f2ff13fcce420c | [

"MIT"

] | 2 | 2020-10-19T14:51:35.000Z | 2021-04-22T11:09:37.000Z | test-content/leitner/functions/arguments.md | colevandersWands/study-lenses | 47398d6719dd2cd732fff59ba2f2ff13fcce420c | [

"MIT"

] | 2 | 2020-10-20T14:00:19.000Z | 2020-10-24T09:58:09.000Z | # Arguments

```js

const thingy = (param) => {

console.log(param);

};

thingy(4); // <-- argument is 4

thingy(6); // <-- argument is 6

```

| 11.833333 | 31 | 0.549296 | eng_Latn | 0.40519 |

1c778c29210f19db23d2538d97c2b1d06ca3fb6f | 8,574 | md | Markdown | WindowsServerDocs/identity/ad-fs/deployment/upgrading-to-ad-fs-in-windows-server-sql.md | jeoviedo/windowsserverdocs | d84dc3d037911ad698f5e3e84348b867c5f46ed8 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | WindowsServerDocs/identity/ad-fs/deployment/upgrading-to-ad-fs-in-windows-server-sql.md | jeoviedo/windowsserverdocs | d84dc3d037911ad698f5e3e84348b867c5f46ed8 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | WindowsServerDocs/identity/ad-fs/deployment/upgrading-to-ad-fs-in-windows-server-sql.md | jeoviedo/windowsserverdocs | d84dc3d037911ad698f5e3e84348b867c5f46ed8 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | ---

title: Upgrading to AD FS in Windows Server 2016 with SQL Server

description:

author: billmath

manager: mtillman

ms.date: 04/11/2018

ms.topic: article

ms.prod: windows-server-threshold

ms.assetid: 70f279bf-aea1-4f4f-9ab3-e9157233e267

ms.technology: identity-adfs

ms.author: billmath

---

# Upgrading to AD FS in Wind... | 77.243243 | 414 | 0.772102 | eng_Latn | 0.987675 |

1c77b1c7bbd7a915929be7faef79625d17153677 | 479 | md | Markdown | applications/magic-mirror/README.md | tillhoff/iac | d2bf0814f6d160415b446866baaee6e7f006c4f4 | [

"MIT"

] | null | null | null | applications/magic-mirror/README.md | tillhoff/iac | d2bf0814f6d160415b446866baaee6e7f006c4f4 | [

"MIT"

] | null | null | null | applications/magic-mirror/README.md | tillhoff/iac | d2bf0814f6d160415b446866baaee6e7f006c4f4 | [

"MIT"

] | null | null | null | # magic-mirror

This application is running the magic-mirror software in server-only mode. This means it starts a docker-container, which hosts the magic-mirror software on a web-server.

## Access

To access it, a browser is required.

For automatic start it is required to:

- log a user in automatically at boot

- aut... | 31.933333 | 170 | 0.764092 | eng_Latn | 0.998447 |

1c77ec05e5a8c87c866579652b49750cb5ef71fb | 5,402 | md | Markdown | docs/2014/reporting-services/report-design/chart-effects-add-3d-effects-report-builder.md | ZubriQ/sql-docs.ru-ru | 50559946dabe5fce9eef251a637dc2e3fd305908 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | docs/2014/reporting-services/report-design/chart-effects-add-3d-effects-report-builder.md | ZubriQ/sql-docs.ru-ru | 50559946dabe5fce9eef251a637dc2e3fd305908 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | docs/2014/reporting-services/report-design/chart-effects-add-3d-effects-report-builder.md | ZubriQ/sql-docs.ru-ru | 50559946dabe5fce9eef251a637dc2e3fd305908 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | ---

title: Добавление в диаграмму объемных эффектов (построитель отчетов и службы SSRS) | Документы Майкрософт

ms.custom: ''

ms.date: 06/13/2017

ms.prod: sql-server-2014

ms.reviewer: ''

ms.technology: reporting-services-native

ms.topic: conceptual

ms.assetid: ab9625d8-6557-4a4d-8123-eefa7c066ff5

author: maggiesMSFT

ms.... | 58.717391 | 428 | 0.76194 | rus_Cyrl | 0.955824 |

1c780506419bbddd28e2ff1f409b3f3520e1c338 | 6,514 | md | Markdown | _posts/2019-05-17-Download-service-manual-genie.md | Jobby-Kjhy/27 | ea48bae2a083b6de2c3f665443f18b1c8f241440 | [

"MIT"

] | null | null | null | _posts/2019-05-17-Download-service-manual-genie.md | Jobby-Kjhy/27 | ea48bae2a083b6de2c3f665443f18b1c8f241440 | [

"MIT"

] | null | null | null | _posts/2019-05-17-Download-service-manual-genie.md | Jobby-Kjhy/27 | ea48bae2a083b6de2c3f665443f18b1c8f241440 | [

"MIT"

] | null | null | null | ---

layout: post

comments: true

categories: Other

---

## Download Service manual genie book

At that time tobacco was smoked in long pipes, in it, hunter, wholly unprofessional. The passage was tedious in consequence of Ninety. ] GOODS AND SERVICES on the Mayflower II were not provided free, on Sunday night. Unthinkab... | 723.777778 | 6,420 | 0.792908 | eng_Latn | 0.999957 |

1c79ac7ae7071f86e14eddb05bc5a3734ee8ced4 | 1,649 | md | Markdown | README.md | alexmartinez1745/Pandas-Challenge | 6da043e006c463a77f2f598c2690d2b467e90367 | [

"MIT"

] | null | null | null | README.md | alexmartinez1745/Pandas-Challenge | 6da043e006c463a77f2f598c2690d2b467e90367 | [

"MIT"

] | null | null | null | README.md | alexmartinez1745/Pandas-Challenge | 6da043e006c463a77f2f598c2690d2b467e90367 | [

"MIT"

] | null | null | null | ## Background

Tools used: Python (Pandas), Jupyter Notebook

This project analyzes the data for an independent gaming company and its most recent fantasy game, Heroes of Pymoli. The game is free-to-play, but players are encouraged to purchase optional items that enhance their playing experience.

The task is to genera... | 23.898551 | 237 | 0.748939 | eng_Latn | 0.988259 |

1c7a0c3bb730b2fc36d8f74e199d026f21ce9479 | 1,979 | md | Markdown | content/week02.md | digitalideation/digcre_h2101 | 57a4565ee78550d0448be4719c1f24dc077088bc | [

"MIT"

] | null | null | null | content/week02.md | digitalideation/digcre_h2101 | 57a4565ee78550d0448be4719c1f24dc077088bc | [

"MIT"

] | null | null | null | content/week02.md | digitalideation/digcre_h2101 | 57a4565ee78550d0448be4719c1f24dc077088bc | [

"MIT"

] | null | null | null | ---

layout: inner

title: "Intro"

---

## Intro

This week we look back at what we have learned so far and get a first taste of Keras / PyTorch.

## Schedule

|Time |Desc |

|--- |--- |

|20 mins | Looking back at NN ... | 33.542373 | 237 | 0.645781 | eng_Latn | 0.492626 |

1c7b635b25cbbef4d9f8bff647ebdae99883ea65 | 6,003 | md | Markdown | source/_posts/frustrated_boss_slams_jobseekers_for_not_reading_job_advert_properly.md | soumyadipdas37/finescoop.github.io | 0346d6175a2c36d4054083c144b7f8364db73f2f | [

"MIT"

] | null | null | null | source/_posts/frustrated_boss_slams_jobseekers_for_not_reading_job_advert_properly.md | soumyadipdas37/finescoop.github.io | 0346d6175a2c36d4054083c144b7f8364db73f2f | [

"MIT"

] | null | null | null | source/_posts/frustrated_boss_slams_jobseekers_for_not_reading_job_advert_properly.md | soumyadipdas37/finescoop.github.io | 0346d6175a2c36d4054083c144b7f8364db73f2f | [

"MIT"

] | 2 | 2021-09-18T12:06:26.000Z | 2021-11-14T15:17:34.000Z | ---

extends: _layouts.post

section: content

image: https://i.dailymail.co.uk/1s/2020/09/15/09/33196952-0-image-a-12_1600157862483.jpg

title: Frustrated boss slams jobseekers for not reading job advert properly

description: Web services firm owner Ryan Irving, 36, of Henley-on-Thames, shared his frustration on LinkedI... | 61.886598 | 259 | 0.788439 | eng_Latn | 0.999913 |

1c7b72c52e257190eeae45db0e9103fa484519de | 1,263 | md | Markdown | README.md | MDShahrouq/arm_reverse_engineering_exercises | 173223b77319b69700054d678b4b16fb2d92ba25 | [

"Apache-2.0"

] | null | null | null | README.md | MDShahrouq/arm_reverse_engineering_exercises | 173223b77319b69700054d678b4b16fb2d92ba25 | [

"Apache-2.0"

] | null | null | null | README.md | MDShahrouq/arm_reverse_engineering_exercises | 173223b77319b69700054d678b4b16fb2d92ba25 | [

"Apache-2.0"

] | 1 | 2017-02-14T20:05:36.000Z | 2017-02-14T20:05:36.000Z | # ARM Reverse Engineering Exercises

[]()

## Overview

These exercises are meant to help identify and understand different patterns in ARM assembly. All of the examples have been written in Xcode and compiled with clang. As I create more exercises, I will use different ... | 78.9375 | 353 | 0.795724 | eng_Latn | 0.718508 |

1c7bfcaebedd5185becdf723a2c062e91554bf0e | 1,060 | md | Markdown | documentation/viikkoraportti_5.md | ilkkamaksy/data-compression-algos | b73d5d619d21b9af5fe4d0c9575cf4f774aa78a3 | [

"MIT"

] | null | null | null | documentation/viikkoraportti_5.md | ilkkamaksy/data-compression-algos | b73d5d619d21b9af5fe4d0c9575cf4f774aa78a3 | [

"MIT"

] | 2 | 2021-04-25T06:46:05.000Z | 2021-04-27T06:50:47.000Z | documentation/viikkoraportti_5.md | ilkkamaksy/data-compression-algos | b73d5d619d21b9af5fe4d0c9575cf4f774aa78a3 | [

"MIT"

] | null | null | null | # Viikkoraportti 5

Tällä viikolla olen korjannut LZW-algoritmin tallennusta, tosin overheadin takia tallennettu tiedosto on pienillä syötteillä isompi kuin lähde. Suuremmilla syötteillä pakkaus näyttää toimivan hyvin, paitsi että jonkin tuntemattoman pisteen jälkeen dekoodattu teksti alkaa mennä sekaisin. Tämä testatt... | 117.777778 | 637 | 0.851887 | fin_Latn | 0.999993 |

1c7ccd36cd3681b4fd2da8147aec8ef2d69f0590 | 224 | md | Markdown | src/api-docs/stoplight.md | osvaldokalvaitir/project-settings | 94031b36bd96285275058123fbebe93aa5982a97 | [

"MIT"

] | 29 | 2018-12-20T13:16:27.000Z | 2020-08-26T20:34:03.000Z | src/api-docs/stoplight.md | osvaldokalvaitir/project-settings | 94031b36bd96285275058123fbebe93aa5982a97 | [

"MIT"

] | null | null | null | src/api-docs/stoplight.md | osvaldokalvaitir/project-settings | 94031b36bd96285275058123fbebe93aa5982a97 | [

"MIT"

] | 16 | 2018-12-20T13:16:21.000Z | 2020-10-15T19:54:41.000Z | # Stoplight

The API Design Management Platform that powers the world's leading API-first companies.

## Documentation and Service Access

Click [here](https://stoplight.io) to view the documentation and access the service.

| 28 | 91 | 0.78125 | por_Latn | 0.999622 |

1c7cd7d2e53da892248385e7eebd89e672c41312 | 2,787 | md | Markdown | docs/api/state.md | avstudio/state-commander | 8053da5be2d566982cc211cf485b679bd4e2ec79 | [

"MIT"

] | null | null | null | docs/api/state.md | avstudio/state-commander | 8053da5be2d566982cc211cf485b679bd4e2ec79 | [

"MIT"

] | 8 | 2020-09-05T01:21:01.000Z | 2022-02-26T11:59:10.000Z | docs/api/state.md | avucic/state-commander | 8053da5be2d566982cc211cf485b679bd4e2ec79 | [

"MIT"

] | null | null | null | # Context.State

State is defined as a static getter on the [Command Class](#command-class).

Every class has its own state, but in the end the states are merged into a single [Module](/api/module.md) state.

For example, assuming that both commands are part of the `some/module` module:

```js

//inside some/module... | 21.438462 | 115 | 0.699677 | eng_Latn | 0.945078 |

1c7dac27748874926d7599761f41dc28bffbcb74 | 639 | md | Markdown | src/pages/blog/2019-01-21-2019-01-20.md | murokaco/gatsby-starter-netlify-cms | e00ddef20df3b431b5683950a791948fc9a548b6 | [

"MIT"

] | null | null | null | src/pages/blog/2019-01-21-2019-01-20.md | murokaco/gatsby-starter-netlify-cms | e00ddef20df3b431b5683950a791948fc9a548b6 | [

"MIT"

] | 3 | 2019-01-16T17:33:40.000Z | 2019-01-18T08:30:25.000Z | src/pages/blog/2019-01-21-2019-01-20.md | murokaco/gatsby-starter-netlify-cms | e00ddef20df3b431b5683950a791948fc9a548b6 | [

"MIT"

] | 1 | 2019-01-10T15:00:00.000Z | 2019-01-10T15:00:00.000Z | ---

templateKey: blog-post

title: '2019-01-20'

date: 2019-01-20T15:33:02.023Z

description: "\U0001F60C"

tags:

- JS UX

---

### 今日やったこと

#### JS

* スラスラ読めるJavaScriptふりがなプログラミングの続き([Github](https://github.com/murokaco/furigana-programming/commit/a62f22a02249c5e820c3ca1ad4bd31d58f2ffd30)

#### UX

* UXデザインをはじめる本の第二章途中まで読んだ。... | 26.625 | 140 | 0.824726 | yue_Hant | 0.45292 |

1c7e746fb393fb55f2235f20f7756ebfb3413a61 | 170 | md | Markdown | README.md | you7588/todo-wechat-leanTodo | 0c16ff5a40497c5234e79f95ea79bb82708bc733 | [

"MIT"

] | null | null | null | README.md | you7588/todo-wechat-leanTodo | 0c16ff5a40497c5234e79f95ea79bb82708bc733 | [

"MIT"

] | null | null | null | README.md | you7588/todo-wechat-leanTodo | 0c16ff5a40497c5234e79f95ea79bb82708bc733 | [

"MIT"

] | null | null | null | # LeanTodo × WeChat Mini Program

A check-in mini program adapted from LeanTodo.

# Development resources

1. [leancloud](https://leancloud.cn/)

2. [WeUI](https://github.com/weui/weui-wxss)

3. [iconfont](http://www.iconfont.cn/)

| 18.888889 | 44 | 0.694118 | yue_Hant | 0.493395 |

1c7ec8d7dca03ff9ca6421ed3488e6a22854162e | 457 | md | Markdown | README.md | RaspiRepo/esp32-temperature | 05d8671d492104719b4604ae12199d23b1f667df | [

"MIT"

] | null | null | null | README.md | RaspiRepo/esp32-temperature | 05d8671d492104719b4604ae12199d23b1f667df | [

"MIT"

] | null | null | null | README.md | RaspiRepo/esp32-temperature | 05d8671d492104719b4604ae12199d23b1f667df | [

"MIT"

] | null | null | null | # esp32-temperature

The aim of this project is to understand how an IoT sensor integrates with the cloud. An ESP32 reads the temperature from an Analog Devices TMP36GZ sensor, constructs an HTTP message containing the sensor data, and sends it to an InfluxDB HTTP endpoint.
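As a rough sketch of the data path described above (not the project's actual firmware, which runs on the ESP32), the conversion and message construction might look like the following. The helper names `adcToCelsius` and `toLineProtocol`, the 12-bit ADC range, the 3.3 V reference, and the `device=esp32` tag are assumptions made for illustration. The TMP36 scale of 10 mV/°C with a 500 mV offset is the sensor's documented transfer function, and the payload follows InfluxDB line protocol.

```js
// Hypothetical helpers: names and defaults are assumptions, not taken from the project.

// Convert a raw ADC count to degrees Celsius for a TMP36.
// TMP36 output: 500 mV at 0 °C, rising 10 mV per °C.
function adcToCelsius(raw, vref = 3.3, maxCount = 4095) {
  const volts = (raw / maxCount) * vref;
  return (volts - 0.5) * 100;
}

// Build one InfluxDB line-protocol record: "measurement,tags fields".
// Sketch only: assumes at least one tag and does no escaping of spaces or commas.
function toLineProtocol(measurement, tags, fields) {
  const join = (obj) =>
    Object.entries(obj)
      .map(([key, value]) => `${key}=${value}`)
      .join(",");
  return `${measurement},${join(tags)} ${join(fields)}`;
}

// Example reading: a raw count of 931 corresponds to roughly 0.75 V.
const celsius = adcToCelsius(931);
const body = toLineProtocol(
  "temperature",
  { device: "esp32" },
  { celsius: celsius.toFixed(2) }
);
console.log(body);
```

The resulting string would be sent as the body of an HTTP POST to the database's write endpoint (`/write?db=...` in InfluxDB 1.x); the host and database name depend on the deployment.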

## Real-time visibility into sensors using TICK

https://www.influxdata.com/

JetsonNa... | 38.083333 | 211 | 0.803063 | eng_Latn | 0.725502 |

1c7ef135e9d6cd7cf51a10e2f34e811b5df87211 | 791 | md | Markdown | README.md | MosheBerman/ScrollViewSnapshotter | 659287709e5f3d15b7dfe7aabe0615012524593a | [

"MIT"

] | 18 | 2016-04-12T16:17:31.000Z | 2021-11-14T20:18:39.000Z | README.md | MosheBerman/ScrollViewSnapshotter | 659287709e5f3d15b7dfe7aabe0615012524593a | [

"MIT"

] | 3 | 2016-09-20T01:27:39.000Z | 2020-10-22T21:58:44.000Z | README.md | MosheBerman/ScrollViewSnapshotter | 659287709e5f3d15b7dfe7aabe0615012524593a | [

"MIT"

] | 3 | 2018-04-18T05:13:45.000Z | 2020-07-14T18:34:54.000Z | # ScrollViewSnapshotter

A demo project showing how to correctly snapshot the contents of a UIScrollView and its subclasses.

About:

---

Historically, the suggested approach to snapshotting a scrollview was to resize the scroll view's `frame` to match its `contentSize`. This became inconvenient with the introduction of auto... | 52.733333 | 313 | 0.80531 | eng_Latn | 0.987833 |

1c7f5e3aa9525408dca6fb99a5b099ccb99f4aa5 | 1,794 | md | Markdown | dynamicsax2012-technet/iutilityv2-createcreditmemotenderlineitem-method-microsoft-dynamics-retail-pos-contracts-businesslogic.md | RobinARH/DynamicsAX2012-technet | d0d0ef979705b68e6a8406736612e9fc3c74c871 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | dynamicsax2012-technet/iutilityv2-createcreditmemotenderlineitem-method-microsoft-dynamics-retail-pos-contracts-businesslogic.md | RobinARH/DynamicsAX2012-technet | d0d0ef979705b68e6a8406736612e9fc3c74c871 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | dynamicsax2012-technet/iutilityv2-createcreditmemotenderlineitem-method-microsoft-dynamics-retail-pos-contracts-businesslogic.md | RobinARH/DynamicsAX2012-technet | d0d0ef979705b68e6a8406736612e9fc3c74c871 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | ---

title: IUtilityV2.CreateCreditMemoTenderLineItem Method (Microsoft.Dynamics.Retail.Pos.Contracts.BusinessLogic)

TOCTitle: CreateCreditMemoTenderLineItem Method

ms:assetid: M:Microsoft.Dynamics.Retail.Pos.Contracts.BusinessLogic.IUtilityV2.CreateCreditMemoTenderLineItem

ms:mtpsurl: https://technet.microsoft.com/en... | 33.222222 | 177 | 0.829431 | yue_Hant | 0.870476 |

1c7f8408e40eb4f0feb79b4f63fe8e50cc0e806e | 559 | md | Markdown | .github/PULL_REQUEST_TEMPLATE.md | RoasteryHub/lavie-selekopi | e3a09fdb22ac2f1744a5c106e9a6ca551ea19598 | [

"MIT"

] | null | null | null | .github/PULL_REQUEST_TEMPLATE.md | RoasteryHub/lavie-selekopi | e3a09fdb22ac2f1744a5c106e9a6ca551ea19598 | [

"MIT"

] | null | null | null | .github/PULL_REQUEST_TEMPLATE.md | RoasteryHub/lavie-selekopi | e3a09fdb22ac2f1744a5c106e9a6ca551ea19598 | [

"MIT"

] | null | null | null | ### Proposed changes in this pull request

[List all changes you want to add here. If you fixed an issue, please

add a reference to that issue as well.]

-

### When should this PR be merged

[Please describe any preconditions that need to be addressed before we

can merge this pull request.]

### Risks

[List any ris... | 19.964286 | 70 | 0.751342 | eng_Latn | 0.999744 |

1c7fccf9beb07d1d64b17e94c959bbd6506b0722 | 1,263 | md | Markdown | docs/schema-doc/snitch_cluster-properties-hives-hive-description-properties-cores-core-description-properties-ssrs-ssr-description-properties-isect_slave_credits.md | mfkiwl/snitch | d4d2d8e0b45b5a03836023dd923ed54a808fb0d4 | [

"Apache-2.0"

] | 105 | 2021-02-06T16:54:03.000Z | 2022-03-30T07:54:57.000Z | docs/schema-doc/snitch_cluster-properties-hives-hive-description-properties-cores-core-description-properties-ssrs-ssr-description-properties-isect_slave_credits.md | mfkiwl/snitch | d4d2d8e0b45b5a03836023dd923ed54a808fb0d4 | [

"Apache-2.0"

] | 163 | 2021-02-06T18:54:40.000Z | 2022-03-30T22:06:05.000Z | docs/schema-doc/snitch_cluster-properties-hives-hive-description-properties-cores-core-description-properties-ssrs-ssr-description-properties-isect_slave_credits.md | mfkiwl/snitch | d4d2d8e0b45b5a03836023dd923ed54a808fb0d4 | [

"Apache-2.0"

] | 20 | 2021-02-23T07:26:02.000Z | 2022-03-27T17:19:53.000Z | # Untitled number in Snitch Cluster Schema Schema

```txt

http://pulp-platform.org/snitch/snitch_cluster.schema.json#/properties/hives/items/properties/cores/items/properties/ssrs/items/properties/isect_slave_credits

```

Number of elements by which intersected indices may outrun corresponding data; added only if this ... | 45.107143 | 228 | 0.546318 | eng_Latn | 0.79907 |

1c803e5c6c031d6d5ce00d9de1de86a168254c12 | 26 | md | Markdown | README.md | luisalcarasr/landing-page | c8aaf223df08cbbd030815ab83a5f15ae0c45b20 | [

"MIT"

] | null | null | null | README.md | luisalcarasr/landing-page | c8aaf223df08cbbd030815ab83a5f15ae0c45b20 | [

"MIT"

] | 2 | 2021-04-03T01:41:22.000Z | 2021-04-09T03:32:44.000Z | README.md | luisalcarasr/landing-page | c8aaf223df08cbbd030815ab83a5f15ae0c45b20 | [

"MIT"

] | null | null | null | # landing-page

My page 🌍

| 8.666667 | 14 | 0.653846 | eng_Latn | 0.634475 |

1c807c14d96e89bb9411bb8a2e19d6aa61453c56 | 1,642 | md | Markdown | content/post/2019/fixing-hugo-pagination-in-0-58.md | RubenSchade/rubenerd-com | c31a535a0e2949a360be1211b5413bac6d37c2cb | [

"BSD-3-Clause"

] | 7 | 2016-08-25T17:47:57.000Z | 2020-07-13T12:19:53.000Z | content/post/2019/fixing-hugo-pagination-in-0-58.md | RubenSchade/rubenerd-com | c31a535a0e2949a360be1211b5413bac6d37c2cb | [

"BSD-3-Clause"

] | null | null | null | content/post/2019/fixing-hugo-pagination-in-0-58.md | RubenSchade/rubenerd-com | c31a535a0e2949a360be1211b5413bac6d37c2cb | [

"BSD-3-Clause"

] | 3 | 2020-07-13T12:20:10.000Z | 2021-01-04T09:34:13.000Z | ---

title: "Fixing Hugo pagination in 0.58"

date: "2019-09-12T10:44:45+10:00"

abstract: "Use site.RegularPages, not .Pages"

year: "2019"

category: Internet

tag:

- gohugo

- troubleshooting

- weblog

location: Sydney

---

The latest [Hugo](https://gohugo.io) static site generator version broke pagination for all my sites, ... | 52.967742 | 328 | 0.744214 | eng_Latn | 0.992896 |

1c8215a1c986b47563f1ce6a4776a65611b3e402 | 238 | md | Markdown | content/poems/bennington-review-1.md | byoo24/stella_v1 | 78a63b636ba016980d35ec64df81acf652fd36ae | [

"RSA-MD"

] | null | null | null | content/poems/bennington-review-1.md | byoo24/stella_v1 | 78a63b636ba016980d35ec64df81acf652fd36ae | [

"RSA-MD"

] | null | null | null | content/poems/bennington-review-1.md | byoo24/stella_v1 | 78a63b636ba016980d35ec64df81acf652fd36ae | [

"RSA-MD"

] | null | null | null | ---

date: "2021-08-24"

publisher: Bennington Review

featuredImage: ../../images/bennington-review-cover-2021.jpg

external_link: https://www.benningtonreview.org/issue-nine-wong

poems:

- When the Still Breathing Watch the Stillborn

---

| 26.444444 | 63 | 0.756303 | yue_Hant | 0.262213 |

1c82310122e7a3b45760aff5da736c005a3cf304 | 673 | md | Markdown | search.md | 737andrew/737andrew.github.io | 8bb0885b10c3e18bb43758e0a0b7e2d14863e548 | [

"MIT"

] | null | null | null | search.md | 737andrew/737andrew.github.io | 8bb0885b10c3e18bb43758e0a0b7e2d14863e548 | [

"MIT"

] | null | null | null | search.md | 737andrew/737andrew.github.io | 8bb0885b10c3e18bb43758e0a0b7e2d14863e548 | [

"MIT"

] | null | null | null | ---

layout: page_f

title: "Поиск по сайту"

title_name: "Поиск по сайту"

description: ""

time_publish: ""

---

{% include JB/setup %}

<div id="ya-site-results" onclick="return {'tld': 'ru','language': 'ru','encoding': '','htmlcss': '1.x','updatehash': true}"></div><script type="text/javascript">(function(w,d,c){var s=d.... | 61.181818 | 539 | 0.674591 | yue_Hant | 0.335611 |

1c8297d32c65bdaf29aae82519163b383949845e | 1,680 | md | Markdown | results/innerfidelity/sbaf-serious/Pioneer Monitor 10 II/README.md | Banbeucmas/AutoEq | b8549b2347a19e1f127e6395147ecd6fb225a8ce | [

"MIT"

] | 1 | 2020-07-17T03:48:21.000Z | 2020-07-17T03:48:21.000Z | results/innerfidelity/sbaf-serious/Pioneer Monitor 10 II/README.md | datascientist1976/AutoEq | dd8ea7ea5edb5a9087a001ceeb862326e7d23cb9 | [

"MIT"

] | null | null | null | results/innerfidelity/sbaf-serious/Pioneer Monitor 10 II/README.md | datascientist1976/AutoEq | dd8ea7ea5edb5a9087a001ceeb862326e7d23cb9 | [

"MIT"

] | null | null | null | # Pioneer Monitor 10 II

See [usage instructions](https://github.com/jaakkopasanen/AutoEq#usage) for more options and info.

### Parametric EQs

In case of using parametric equalizer, apply preamp of **-7.2dB** and build filters manually

with these parameters. The first 5 filters can be used independently.

When using ind... | 42 | 161 | 0.576786 | eng_Latn | 0.641461 |

1c82cf42445610a07bd295651b20e31556afb8c0 | 3,502 | md | Markdown | docs/debugger/debug-live-azure-apps-faq.md | keunyop/visualstudio-docs.ko-kr | 6f316d7e3badaf2deafa1a041b7946f2bc3d9a31 | [

"CC-BY-4.0",

"MIT"

] | 1 | 2019-12-04T01:36:07.000Z | 2019-12-04T01:36:07.000Z | docs/debugger/debug-live-azure-apps-faq.md | keunyop/visualstudio-docs.ko-kr | 6f316d7e3badaf2deafa1a041b7946f2bc3d9a31 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | docs/debugger/debug-live-azure-apps-faq.md | keunyop/visualstudio-docs.ko-kr | 6f316d7e3badaf2deafa1a041b7946f2bc3d9a31 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | ---

title: 스냅숏 디버깅 FAQ | Microsoft Docs

ms.date: 11/07/2017

ms.topic: reference

helpviewer_keywords:

- debugger

ms.assetid: 944f1eb0-a74b-4d28-ae2b-a370cd869add

author: mikejo5000

ms.author: mikejo

manager: jillfra

ms.workload:

- multiple

ms.openlocfilehash: 7ea593ad5f88ba29f6b1c0d7c64a129b8f71c7f5

ms.sourcegitcommit: ... | 53.060606 | 270 | 0.745003 | kor_Hang | 1.00001 |

1c83f7313d076681ada0326bc18a3bd1740b9509 | 1,491 | md | Markdown | content/post/17/index.md | astropenguin/website | f2268a7040a773059399b37bd630ab274e6ed34f | [

"MIT"

] | 1 | 2020-01-27T08:37:34.000Z | 2020-01-27T08:37:34.000Z | content/post/17/index.md | astropenguin/website | f2268a7040a773059399b37bd630ab274e6ed34f | [

"MIT"

] | 18 | 2019-11-29T19:00:05.000Z | 2022-01-03T02:23:02.000Z | content/post/17/index.md | astropenguin/website | f2268a7040a773059399b37bd630ab274e6ed34f | [

"MIT"

] | 1 | 2021-01-29T08:26:11.000Z | 2021-01-29T08:26:11.000Z | +++

title = "プログラミング用フォント Ricty のインストール"

date = 2018-12-11T23:06:55+09:00

tags = ["Ricty", "Homebrew"]

categories = ["Tech"]

aliases = ["/blog/17/"]

+++

## TL;DR

This is the day-11 article of an Advent calendar.

Today's post is a rough one: notes on installing the programming font Ricty.

## Ricty

> Ricty is a programming font intended for research and development in Linux environments.

> テキストエディタやターミナルエミュレータ、プ... | 25.271186 | 95 | 0.657948 | yue_Hant | 0.637133 |

1c84c1c4e30fd73298514332062f4570c6201017 | 155 | md | Markdown | packages/webiny-ui/src/Tags/README.md | gatarelib/webiny-js | 2f90a3bb2d5cfae568b0a0d7d5e09a1bbd930986 | [

"MIT"

] | null | null | null | packages/webiny-ui/src/Tags/README.md | gatarelib/webiny-js | 2f90a3bb2d5cfae568b0a0d7d5e09a1bbd930986 | [

"MIT"

] | null | null | null | packages/webiny-ui/src/Tags/README.md | gatarelib/webiny-js | 2f90a3bb2d5cfae568b0a0d7d5e09a1bbd930986 | [

"MIT"

] | null | null | null | # Tags

### Design

N/A

### Description

The `Tags` component lets users input one or more tags.

### Import

```js

import { Tags } from "webiny-ui/Tags";

``` | 12.916667 | 54 | 0.63871 | eng_Latn | 0.942736 |

1c85238d629e05043735bf9857e19fa142135c7c | 2,916 | md | Markdown | types/foundry-pc-types/README.md | mirkoRainer/Foundry-VTT---Pathfinder-2e-Mirror | 65ef20202f332d9281a23cef4c58a91aa98b11e4 | [

"Apache-2.0"

] | 2 | 2021-03-29T13:18:15.000Z | 2021-11-20T23:05:20.000Z | types/foundry-pc-types/README.md | mirkoRainer/Foundry-VTT---Pathfinder-2e-Mirror | 65ef20202f332d9281a23cef4c58a91aa98b11e4 | [

"Apache-2.0"

] | 6 | 2021-01-13T11:59:37.000Z | 2021-01-16T11:54:30.000Z | types/foundry-pc-types/README.md | mirkoRainer/Foundry-VTT---Pathfinder-2e-Mirror | 65ef20202f332d9281a23cef4c58a91aa98b11e4 | [

"Apache-2.0"

] | null | null | null | <div align=center>

# Foundry Project Creator Types

[](https://discord.gg/59Tz2X7)

[](https://twi... | 59.510204 | 347 | 0.777435 | eng_Latn | 0.990135 |

1c865497823a058a17d1baf44f80644d80ae35b8 | 26,298 | md | Markdown | README.md | rxeer/seo-portal | 0f0410af5f0cd8a43d2ab1d0942ef6696bb6a74a | [

"MIT"

] | 1 | 2019-09-26T03:13:15.000Z | 2019-09-26T03:13:15.000Z | README.md | rxeer/seo-portal | 0f0410af5f0cd8a43d2ab1d0942ef6696bb6a74a | [

"MIT"

] | null | null | null | README.md | rxeer/seo-portal | 0f0410af5f0cd8a43d2ab1d0942ef6696bb6a74a | [

"MIT"

] | null | null | null | ## Table of Contents

- [Sending Feedback](#sending-feedback)

- [Folder Structure](#folder-structure)

- [Available Scripts](#available-scripts)

- [npm start](#npm-start)

- [npm test](#npm-test)

- [npm run build](#npm-run-build)

- [npm run eject](#npm-run-eject)

- [Supported Browsers](#supported-browsers)

- [Sup... | 45.577123 | 540 | 0.738687 | eng_Latn | 0.983655 |

1c869ef437aebd54f988c3f53b10c9bf87c607d8 | 722 | md | Markdown | vocabulary/c/crude.md | lsieun/EnglishDictionary | 5ad881da2d06835d1150e7076955c82be83fa127 | [

"MIT"

] | null | null | null | vocabulary/c/crude.md | lsieun/EnglishDictionary | 5ad881da2d06835d1150e7076955c82be83fa127 | [

"MIT"

] | null | null | null | vocabulary/c/crude.md | lsieun/EnglishDictionary | 5ad881da2d06835d1150e7076955c82be83fa127 | [

"MIT"

] | null | null | null | # crude

- Word: crude

- Story: Is it gross? Unsophisticated? Totally tasteless and positively offensive? Then you can describe it as crude.

- Story: Crude is not rude when it’s used to describe unprocessed oil, which it first was associated with in 1865. From the Latin crudo, meaning "rough, raw," crude today can be u... | 48.133333 | 393 | 0.757618 | eng_Latn | 0.999843 |

1c87477261909a5171368ddc2119970dacdc7429 | 1,545 | md | Markdown | src/app/page/zh_CN/locale/README.zh-CN.md | yutao331763646/ngx-vant | cb86a2c36d3c1b32f1b753a20df9488c14c79eed | [

"MIT"

] | 29 | 2021-01-25T01:46:55.000Z | 2022-02-02T17:44:48.000Z | src/app/page/zh_CN/locale/README.zh-CN.md | yutao331763646/ngx-vant | cb86a2c36d3c1b32f1b753a20df9488c14c79eed | [

"MIT"

] | null | null | null | src/app/page/zh_CN/locale/README.zh-CN.md | yutao331763646/ngx-vant | cb86a2c36d3c1b32f1b753a20df9488c14c79eed | [

"MIT"

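] | 2 | 2021-07-26T07:27:14.000Z | 2021-08-05T02:19:36.000Z | # Internationalization

### Introduction

Ngx-Vant uses Chinese as its default language and supports switching between multiple languages. Follow the tutorial below to set up internationalization.

## Usage

### Specify the default language in app.module.ts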

```js

import { BrowserModule } from '@angular/platform-browser';

import { NgModule } from '@angular/core';

import { AppRoutingModule } from './app-routing.module';

import { AppComponent } from './app.component';

import {... | 20.328947 | 78 | 0.561165 | yue_Hant | 0.904761 |

1c8821b675c363c76ac2d9749bdd00a525267f94 | 36 | md | Markdown | README.md | RehanFargose/PAN-Based-Door-Security-System- | 7e0cd2af77564ca332ee101799b2088acf83eac1 | [

"MIT"

] | null | null | null | README.md | RehanFargose/PAN-Based-Door-Security-System- | 7e0cd2af77564ca332ee101799b2088acf83eac1 | [

"MIT"

] | null | null | null | README.md | RehanFargose/PAN-Based-Door-Security-System- | 7e0cd2af77564ca332ee101799b2088acf83eac1 | [

"MIT"

] | null | null | null | # PAN Based Door Security System

| 12 | 33 | 0.722222 | eng_Latn | 0.657217 |

1c883860c0a7c797fa71819d2fb135b8b930f4a7 | 26 | md | Markdown | basic/sns/README.md | rilindo/some_rust_aws_examples | 9cc1980004c6e6c46db65fdb976d5eb10b3b1d50 | [

"Apache-2.0"

] | null | null | null | basic/sns/README.md | rilindo/some_rust_aws_examples | 9cc1980004c6e6c46db65fdb976d5eb10b3b1d50 | [

"Apache-2.0"

] | null | null | null | basic/sns/README.md | rilindo/some_rust_aws_examples | 9cc1980004c6e6c46db65fdb976d5eb10b3b1d50 | [

"Apache-2.0"

] | null | null | null | # Rust/Rusoto SNS Examples

| 13 | 25 | 0.807692 | eng_Latn | 0.338933 |

1c885aa1b15e6287f03926e9b24351d14a63f6d9 | 945 | md | Markdown | CHANGELOG.md | nonsignificantp/haversine | 6ba8d8a6c2c49977eb22e34486c4a62a9401d73e | [

"MIT"

] | null | null | null | CHANGELOG.md | nonsignificantp/haversine | 6ba8d8a6c2c49977eb22e34486c4a62a9401d73e | [

"MIT"

] | null | null | null | CHANGELOG.md | nonsignificantp/haversine | 6ba8d8a6c2c49977eb22e34486c4a62a9401d73e | [

"MIT"

] | null | null | null | # CHANGELOG

## 1.0.2 - 2018-13

slightly better precision [#17](https://github.com/mapado/haversine/pull/17)

## 1.0.1 - 2018-10-10

fix wrong definition in setup.py

## 1.0.0 - 2018-10-10

No changes, haversine package has been stable and functional for years now. Time to make a 1.0 version :)

(in fact there is one ... | 26.25 | 188 | 0.724868 | eng_Latn | 0.90642 |

1c88fae8fff4bca3726d26c2cd6cb1dd78d7ef81 | 342 | md | Markdown | _session/7Keynote2.md | UniversityofBrighton/odak | 751b85afaba2a0e922f5f6bc63fa9c0258bb7988 | [

"MIT"

] | null | null | null | _session/7Keynote2.md | UniversityofBrighton/odak | 751b85afaba2a0e922f5f6bc63fa9c0258bb7988 | [

"MIT"

] | null | null | null | _session/7Keynote2.md | UniversityofBrighton/odak | 751b85afaba2a0e922f5f6bc63fa9c0258bb7988 | [

"MIT"

] | 2 | 2021-12-21T11:16:10.000Z | 2022-02-16T16:48:01.000Z | ---

permalink: /session/keynote1

redirect_from: "session/keynote1"

layout: egsr-talks

title: "Keynote: TBC"

authors: "<b>TBC</b>"

start: "2022-07-01T10:00:00Z"

end: "2022-07-01T11:00:00Z"

session_id: 7

# youtube_url: "https://youtu.be/lbZBRp6Gn20"

# rc_link: "https://rc.egsr2020.london/channel/SR_08_keynote"

year: 2022... | 22.8 | 61 | 0.719298 | yue_Hant | 0.141558 |

1c891f703a078553ce36439cd7a9b256afb19226 | 1,532 | md | Markdown | README.md | michael1979ng/Week-12-Homework-File-Cloud-Security | 85346f877b716e47275592cf3a826d1cfc444842 | [

"ADSL"

] | null | null | null | README.md | michael1979ng/Week-12-Homework-File-Cloud-Security | 85346f877b716e47275592cf3a826d1cfc444842 | [

"ADSL"

] | null | null | null | README.md | michael1979ng/Week-12-Homework-File-Cloud-Security | 85346f877b716e47275592cf3a826d1cfc444842 | [

"ADSL"

] | null | null | null | Homework File: Cloud Security

Background

During the last week, you created a highly available web server for XCorp's Red Team to use for testing and training.

Your lead cloud administrator has asked for a diagram of the Network you created to keep for documentation and company records.

Your task: Use draw.io to creat... | 36.47619 | 191 | 0.772193 | eng_Latn | 0.999447 |

1c89395592cb68780c74a2c2871ac0b291a769e9 | 378 | md | Markdown | navigation/README.md | frgomes/sri-mobile-examples | 88db87cce0d08af203bb42d079d5945b6328c1a6 | [

"Apache-2.0"

] | 2 | 2018-03-25T02:12:25.000Z | 2018-03-25T02:12:36.000Z | navigation/README.md | frgomes/sri-mobile-examples | 88db87cce0d08af203bb42d079d5945b6328c1a6 | [

"Apache-2.0"

] | 1 | 2018-01-14T04:36:07.000Z | 2018-01-14T04:36:07.000Z | navigation/README.md | frgomes/sri-mobile-examples | 88db87cce0d08af203bb42d079d5945b6328c1a6 | [

"Apache-2.0"

] | 3 | 2018-01-12T23:29:36.000Z | 2019-06-30T03:15:00.000Z | # Navigation PlayGround

#### How to run

```sh
# Install dependencies and start the server (use either yarn or npm)
yarn install   # or: npm install
yarn start     # or: npm start
react-native link react-native-vector-icons
```

***iOS***

```sh
sbt ~ios:dev

# open a new terminal
react-native run-ios
```

***Android***

```scala

sbt ~android:dev

//Start android emulator/conne... | 12.6 | 43 | 0.703704 | eng_Latn | 0.818552 |

1c89400826012d1e5f1f017e38ae594a6d4f33ff | 220 | md | Markdown | rmf_demos_bridges/CHANGELOG.md | Capstone-S13/rmf_demos | 65c45a1f52c5d909381373b35df4146d4069e7f5 | [

"Apache-2.0"

] | null | null | null | rmf_demos_bridges/CHANGELOG.md | Capstone-S13/rmf_demos | 65c45a1f52c5d909381373b35df4146d4069e7f5 | [

"Apache-2.0"

] | 4 | 2022-01-10T04:30:54.000Z | 2022-02-16T03:02:19.000Z | rmf_demos_bridges/CHANGELOG.md | Capstone-S13/rmf_demos | 65c45a1f52c5d909381373b35df4146d4069e7f5 | [

"Apache-2.0"

] | 1 | 2022-01-24T03:47:29.000Z | 2022-01-24T03:47:29.000Z | ## Changelog for package rmf_demos_bridges

1.4.0 (2022-02-14)

------------------

* Provide an example adapter for a delivery robot with indoor-outdoor capabilities [#121](https://github.com/open-rmf/rmf_demos/pull/121)

| 36.666667 | 137 | 0.709091 | eng_Latn | 0.811305 |

1c897ad332da771667c32fe26773146693aa6111 | 11,797 | md | Markdown | README.md | wyfoffice/cmfrec | 3f257fb0518d9fecc7c6edc18d18e7439353f6e1 | [

"BSD-2-Clause"

] | null | null | null | README.md | wyfoffice/cmfrec | 3f257fb0518d9fecc7c6edc18d18e7439353f6e1 | [

"BSD-2-Clause"

] | null | null | null | README.md | wyfoffice/cmfrec | 3f257fb0518d9fecc7c6edc18d18e7439353f6e1 | [

"BSD-2-Clause"

] | null | null | null | # Collective Matrix Factorization

I deleted the modified file because the code belongs to the company where I interned.

Many thanks to david-cortes!

The original library uses an explicit-feedback loss function, which treats the ratings in the matrix as the users' preferences. However, in many cases, the ratings are not ne... | 71.932927 | 716 | 0.784352 | eng_Latn | 0.995804 |

1c89be55f4ffdba4549fd2cafc8c4b0852d7f123 | 1,320 | md | Markdown | programs/postgres/postgres.md | egel/code-wiki | c6e5f6bee4b1d78ee6385e6cff70f3836fc53c57 | [

"MIT"

] | 1 | 2016-01-03T22:27:10.000Z | 2016-01-03T22:27:10.000Z | programs/postgres/postgres.md | egel/code-wiki | c6e5f6bee4b1d78ee6385e6cff70f3836fc53c57 | [

"MIT"

] | null | null | null | programs/postgres/postgres.md | egel/code-wiki | c6e5f6bee4b1d78ee6385e6cff70f3836fc53c57 | [

"MIT"

] | null | null | null | <img src="img/postgresql-logo.png" title="PostgreSQL" width="150" />

# PostgreSQL

## Table of Contents

* [Legend](#legend)

* [Setup](#setup)

* [Add DB user password to environment variable](#add-db-user-password-to-environment-variable)

* [Add new database and user](#add-new-database-and-user)

## Leg... | 24 | 101 | 0.709091 | kor_Hang | 0.446833 |

1c89d1ea59da7367632ae34c797421d435a5c6c1 | 618 | md | Markdown | README.md | garethflowers/docker-ftp-server | a67dccdcd12310e44dd5bdb2d06ae26c381ca286 | [

"MIT"

] | 4 | 2020-07-27T14:19:14.000Z | 2022-01-25T16:37:35.000Z | README.md | garethflowers/docker-ftp-server | a67dccdcd12310e44dd5bdb2d06ae26c381ca286 | [

"MIT"

] | 6 | 2020-09-04T00:15:42.000Z | 2021-05-13T21:18:28.000Z | README.md | garethflowers/docker-ftp-server | a67dccdcd12310e44dd5bdb2d06ae26c381ca286 | [

"MIT"

] | 3 | 2021-05-27T13:53:35.000Z | 2022-03-16T06:48:52.000Z | # FTP Server

A simple FTP server, using

[`vsftpd`](https://security.appspot.com/vsftpd.html).

## How to use this image

### Start an FTP Server instance

To start a container, with data stored in `/data` on the host, use the

following:

```sh

docker run \

--detach \

--env FTP_PASS=123 \

--env FTP_USER=user \

--nam... | 21.310345 | 100 | 0.699029 | eng_Latn | 0.731188 |

1c8a05ee35865f84ee1a4a15580bcc4898de1ea3 | 1,007 | md | Markdown | .github/ISSUE_TEMPLATE/bug_report.md | muhyun/sagemaker-inference-toolkit | 31c143a8274129c00af7bc1db826e3cfc68ea535 | [

"Apache-2.0"

] | 188 | 2019-06-01T16:43:24.000Z | 2022-03-19T03:44:56.000Z | .github/ISSUE_TEMPLATE/bug_report.md | muhyun/sagemaker-inference-toolkit | 31c143a8274129c00af7bc1db826e3cfc68ea535 | [

"Apache-2.0"

] | 66 | 2019-10-15T00:12:58.000Z | 2022-03-28T21:58:48.000Z | .github/ISSUE_TEMPLATE/bug_report.md | muhyun/sagemaker-inference-toolkit | 31c143a8274129c00af7bc1db826e3cfc68ea535 | [

"Apache-2.0"

] | 68 | 2019-06-20T17:34:43.000Z | 2022-03-13T09:10:52.000Z | ---

name: Bug report

about: File a report to help us reproduce and fix the problem

title: ''

labels: ''

assignees: ''

---

**Describe the bug**

A clear and concise description of what the bug is.

**To reproduce**

A clear, step-by-step set of instructions to reproduce the bug.

**Expected behavior**

A clear and concis... | 29.617647 | 179 | 0.743793 | eng_Latn | 0.984106 |

1c8bf36cd87f893e1edffb29ec44a8dc05376d18 | 517 | md | Markdown | README.md | cirocosta/flight_recorder | 77358155c32ef1a5dfd77ccf12cdb7cf1e47acd9 | [

"Apache-2.0"