hexsha stringlengths 40 40 | size int64 5 1.04M | ext stringclasses 6 values | lang stringclasses 1 value | max_stars_repo_path stringlengths 3 344 | max_stars_repo_name stringlengths 5 125 | max_stars_repo_head_hexsha stringlengths 40 78 | max_stars_repo_licenses listlengths 1 11 | max_stars_count int64 1 368k ⌀ | max_stars_repo_stars_event_min_datetime stringlengths 24 24 ⌀ | max_stars_repo_stars_event_max_datetime stringlengths 24 24 ⌀ | max_issues_repo_path stringlengths 3 344 | max_issues_repo_name stringlengths 5 125 | max_issues_repo_head_hexsha stringlengths 40 78 | max_issues_repo_licenses listlengths 1 11 | max_issues_count int64 1 116k ⌀ | max_issues_repo_issues_event_min_datetime stringlengths 24 24 ⌀ | max_issues_repo_issues_event_max_datetime stringlengths 24 24 ⌀ | max_forks_repo_path stringlengths 3 344 | max_forks_repo_name stringlengths 5 125 | max_forks_repo_head_hexsha stringlengths 40 78 | max_forks_repo_licenses listlengths 1 11 | max_forks_count int64 1 105k ⌀ | max_forks_repo_forks_event_min_datetime stringlengths 24 24 ⌀ | max_forks_repo_forks_event_max_datetime stringlengths 24 24 ⌀ | content stringlengths 5 1.04M | avg_line_length float64 1.14 851k | max_line_length int64 1 1.03M | alphanum_fraction float64 0 1 | lid stringclasses 191 values | lid_prob float64 0.01 1 |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

1c1119fdd1c2c855a372ec039fdc0154453362f0 | 2,404 | md | Markdown | docs/2014/analysis-services/multidimensional-models/document-and-script-an-analysis-services-database.md | masahiko-sotta/sql-docs.ja-jp | f9e587be8d74ad47d0cc2c31a1670e2190a0aab7 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | docs/2014/analysis-services/multidimensional-models/document-and-script-an-analysis-services-database.md | masahiko-sotta/sql-docs.ja-jp | f9e587be8d74ad47d0cc2c31a1670e2190a0aab7 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | docs/2014/analysis-services/multidimensional-models/document-and-script-an-analysis-services-database.md | masahiko-sotta/sql-docs.ja-jp | f9e587be8d74ad47d0cc2c31a1670e2190a0aab7 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | ---

title: Document and Script an Analysis Services Database | Microsoft Docs

ms.custom: ''

ms.date: 03/06/2017

ms.prod: sql-server-2014

ms.reviewer: ''

ms.technology: analysis-services

ms.topic: conceptual

helpviewer_keywords:

- XML for Analysis, scripts

- XMLA, scripts

- scripts [Analysis Services], databases

- documenting datab... | 52.26087 | 487 | 0.782862 | yue_Hant | 0.587918 |

1c1193611a07a5f0acd2c2ba7b832de99e8907b9 | 2,805 | markdown | Markdown | _posts/2018/2018-10-16-qpcr-ronits-dnased-c-gigas-ploidydessication-rna-with-18s-primers.markdown | AidanCox12/Aidans_Journal | 6bc80960ae7cc3f81aa097382d7c0bcc63f0c9f9 | [

"MIT"

] | null | null | null | _posts/2018/2018-10-16-qpcr-ronits-dnased-c-gigas-ploidydessication-rna-with-18s-primers.markdown | AidanCox12/Aidans_Journal | 6bc80960ae7cc3f81aa097382d7c0bcc63f0c9f9 | [

"MIT"

] | null | null | null | _posts/2018/2018-10-16-qpcr-ronits-dnased-c-gigas-ploidydessication-rna-with-18s-primers.markdown | AidanCox12/Aidans_Journal | 6bc80960ae7cc3f81aa097382d7c0bcc63f0c9f9 | [

"MIT"

] | 5 | 2019-12-18T06:47:34.000Z | 2022-03-15T23:47:41.000Z | ---

author: kubu4

comments: true

date: 2018-10-16 18:15:26+00:00

layout: post

slug: qpcr-ronits-dnased-c-gigas-ploidydessication-rna-with-18s-primers

title: qPCR - Ronit's DNAsed C.gigas Ploidy/Dessication RNA with 18s primers

wordpress_id: 3652

author:

- kubu4

categories:

- Miscellaneous

tags:

- 18s

- BB15

-... | 21.25 | 190 | 0.751872 | eng_Latn | 0.594991 |

1c1213c5b409397c369dfd22989b29e5aab0beca | 9,545 | md | Markdown | articles/azure-netapp-files/azure-netapp-files-develop-with-rest-api.md | cristhianu/azure-docs.es-es | 910ba6adc1547b9e94d5ed4cbcbe781921d009b7 | [

"CC-BY-4.0",

"MIT"

] | 2 | 2019-09-04T06:39:25.000Z | 2019-09-04T06:43:40.000Z | articles/azure-netapp-files/azure-netapp-files-develop-with-rest-api.md | cristhianu/azure-docs.es-es | 910ba6adc1547b9e94d5ed4cbcbe781921d009b7 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | articles/azure-netapp-files/azure-netapp-files-develop-with-rest-api.md | cristhianu/azure-docs.es-es | 910ba6adc1547b9e94d5ed4cbcbe781921d009b7 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | ---

title: Develop for Azure NetApp Files with the REST API | Microsoft Docs

description: Describes how to get started with the Azure NetApp Files REST API.

services: azure-netapp-files

documentationcenter: ''

author: b-juche

manager: ''

editor: ''

ms.assetid: ''

ms.service: azure-netapp-files

ms.workload: storage

ms.tg... | 54.542857 | 351 | 0.742797 | spa_Latn | 0.30821 |

1c12b21d5c69b68ad228543296ccb8528958328c | 1,123 | md | Markdown | articles/virtual-machines/virtual-machines-linux-create-custom.md | huiw-git/azure-content-zhtw | f20103dc3d404c9c929c155b36c5a47aee5baed6 | [

"CC-BY-3.0"

] | null | null | null | articles/virtual-machines/virtual-machines-linux-create-custom.md | huiw-git/azure-content-zhtw | f20103dc3d404c9c929c155b36c5a47aee5baed6 | [

"CC-BY-3.0"

] | null | null | null | articles/virtual-machines/virtual-machines-linux-create-custom.md | huiw-git/azure-content-zhtw | f20103dc3d404c9c929c155b36c5a47aee5baed6 | [

"CC-BY-3.0"

] | 1 | 2020-11-04T04:34:56.000Z | 2020-11-04T04:34:56.000Z | <properties

pageTitle="Create a Linux VM | Microsoft Azure"

description="Learn how to create a custom virtual machine running the Linux operating system with the classic deployment model."

services="virtual-machines"

documentationCenter=""

authors="dsk-2015"

manager="timlt"

editor="tysonn"

tags="azure-service-management"/>

<tags

ms.service="virtual-machines"

ms.workload="infrastructure-servic... | 29.552632 | 184 | 0.763134 | yue_Hant | 0.906092 |

1c12c7bf3daaa8ec7408ea18bc1a7eef91b3f0fe | 1,315 | md | Markdown | Module-2a/README.md | ajaymahale/apijam | abdbcc3265af5026804f962eae129e2f6e4498f5 | [

"Apache-2.0"

] | null | null | null | Module-2a/README.md | ajaymahale/apijam | abdbcc3265af5026804f962eae129e2f6e4498f5 | [

"Apache-2.0"

] | null | null | null | Module-2a/README.md | ajaymahale/apijam | abdbcc3265af5026804f962eae129e2f6e4498f5 | [

"Apache-2.0"

] | 2 | 2021-05-26T06:04:50.000Z | 2021-05-27T00:54:14.000Z | # Module 2a - API Security Part 1

Apigee’s API Jam Module 2a is the first part of a hands-on workshop that will jumpstart your understanding of API security. In this module, you will walk through two lab exercises that will help you throttle, protect, and secure your APIs by utilizing modern security principles with OA... | 50.576923 | 295 | 0.764259 | eng_Latn | 0.98033 |

1c12d8e95ac5d42aff3ee85dd646902901cd3569 | 2,690 | md | Markdown | Office365-Admin/misc/contacts.md | NelsonFMDuarte/OfficeDocs-O365ITPro | 734ac6b31c9b0f839bc18a5503038d0c54845a78 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | Office365-Admin/misc/contacts.md | NelsonFMDuarte/OfficeDocs-O365ITPro | 734ac6b31c9b0f839bc18a5503038d0c54845a78 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | Office365-Admin/misc/contacts.md | NelsonFMDuarte/OfficeDocs-O365ITPro | 734ac6b31c9b0f839bc18a5503038d0c54845a78 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | ---

title: "Quick help Contacts"

ms.author: kwekua

author: kwekua

manager: scotv

audience: Admin

ms.topic: article

ms.service: o365-administration

localization_priority: Normal

ms.collection:

- M365-subscription-management

- Adm_O365

- Adm_NonTOC

search.appverid:

- BCS160

- MET150

- MOE150

ms.assetid: e64ceac2-ae62-4... | 42.03125 | 335 | 0.761338 | eng_Latn | 0.989742 |

1c134f63445e6ac626975775fad074818d7655f9 | 1,012 | md | Markdown | article/PART9/deep-learning/keras/keras-01-env-install-set-build.md | LuckinJack/LuckinJack.github.io | 8caf1bfa1a02d1c689a1da3ad1829b45861e8d5b | [

"Apache-2.0"

] | 1 | 2021-03-08T14:26:39.000Z | 2021-03-08T14:26:39.000Z | article/PART9/deep-learning/keras/keras-01-env-install-set-build.md | LuckinJack/LuckinJack.github.io | 8caf1bfa1a02d1c689a1da3ad1829b45861e8d5b | [

"Apache-2.0"

] | null | null | null | article/PART9/deep-learning/keras/keras-01-env-install-set-build.md | LuckinJack/LuckinJack.github.io | 8caf1bfa1a02d1c689a1da3ad1829b45861e8d5b | [

"Apache-2.0"

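] | null | null | null | # Installing Keras (on Windows 10)

Before installing Keras, first install TensorFlow by following the article below.

<a style="", href="../../PART9/tensorflow/tensorflow-01-env-install-set-build.html">Installing TensorFlow 2.1</a>

## Install Keras

Run the following command to install Keras:

`pip install keras==2.3.1 -i https://pypi.doubanio.com/simple`

Next, create a new Python file in *PyCharm*, then copy the following Python code and run it in the IDE.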

```python

im... | 22.488889 | 138 | 0.767787 | yue_Hant | 0.679521 |

1c1383290c2d83ee38f8930a81ba26097a5a004c | 2,157 | md | Markdown | source/includes/_job_templates.md | Scripted/api-docs | 95f03af0c6b71a12809aa72fc2bea9ecefba643c | [

"Apache-2.0"

] | null | null | null | source/includes/_job_templates.md | Scripted/api-docs | 95f03af0c6b71a12809aa72fc2bea9ecefba643c | [

"Apache-2.0"

] | null | null | null | source/includes/_job_templates.md | Scripted/api-docs | 95f03af0c6b71a12809aa72fc2bea9ecefba643c | [

"Apache-2.0"

] | 1 | 2020-01-28T13:22:59.000Z | 2020-01-28T13:22:59.000Z | # Job Templates

```ruby

ScriptedClient::JobTemplate.all

```

```shell

curl -H "Authorization: Bearer abcdefghij0123456789" \

https://api.scripted.com/abcd1234/v1/job_templates

```

> Sample JobTemplate

```json

{

"id": "5654ec02a6e02a37e70000cc",

"name": "Standard Blog Post",

"created_at": "2015-11-24T15:00:... | 24.235955 | 173 | 0.579045 | eng_Latn | 0.563569 |

1c139f8c871e514bf858619f84455d7cada0db3a | 924 | markdown | Markdown | doc/api/cartpurchase/non-owner_is_logged_in.markdown | barkbox/shopping | 400fd4108ac62282454abd239cdbc9f385737735 | [

"MIT"

] | null | null | null | doc/api/cartpurchase/non-owner_is_logged_in.markdown | barkbox/shopping | 400fd4108ac62282454abd239cdbc9f385737735 | [

"MIT"

] | 2 | 2017-08-09T16:55:22.000Z | 2018-04-23T19:41:25.000Z | doc/api/cartpurchase/non-owner_is_logged_in.markdown | barkbox/shopping | 400fd4108ac62282454abd239cdbc9f385737735 | [

"MIT"

] | 1 | 2018-03-27T18:45:00.000Z | 2018-03-27T18:45:00.000Z | # CartPurchase API

## non-owner is logged in

### GET /cart_purchases/:id

### Parameters

| Name | Description | Required | Scope |

|------|-------------|----------|-------|

| cart_purchase_id | Cart Purchase ID | true | |

### Request

#### Headers

<pre>Content-Type: application/vnd.api+json

Host: example.org

Cook... | 17.111111 | 81 | 0.626623 | eng_Latn | 0.279184 |

1c145da7f591b54edfa80a73a47fae2c73d2c4a6 | 656 | md | Markdown | README.md | Iaggelis/tuppers-formula | dec63f97818e941a37a153324df36f85bb3530a1 | [

"MIT"

] | null | null | null | README.md | Iaggelis/tuppers-formula | dec63f97818e941a37a153324df36f85bb3530a1 | [

"MIT"

] | null | null | null | README.md | Iaggelis/tuppers-formula | dec63f97818e941a37a153324df36f85bb3530a1 | [

"MIT"

] | null | null | null | # Tupper's self-referential formula

A simple visualization of Tupper's self-referential formula using C and SDL2. The code for the visualization is a simplified version of Tsoding's nice project [https://github.com/tsoding/gp](https://github.com/tsoding/gp). The calculation part is not yet 100% correct, as can be see... | 24.296296 | 305 | 0.737805 | eng_Latn | 0.679251 |
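For reference, the plotting rule behind Tupper's formula can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not the repository's C/SDL2 code: the names `tupper_pixel` and `render_rows` are hypothetical, and the sketch relies on the standard form of the inequality, `1/2 < floor(mod(floor(y/17) * 2^(-17*floor(x) - mod(floor(y), 17)), 2))`, which for integer points reduces to testing a single bit of `floor(y/17)`.

```python
WIDTH, HEIGHT = 106, 17  # size of the bitmap strip encoded by the formula


def tupper_pixel(x, y):
    """Return True if Tupper's inequality holds at the integer point (x, y).

    For non-negative integers the inequality reduces to checking whether
    bit (17 * x + y % 17) of floor(y / 17) is set.
    """
    return ((y // HEIGHT) >> (HEIGHT * x + y % HEIGHT)) & 1 == 1


def render_rows(k):
    """Render the strip k <= y < k + 17 as 17 text rows (hypothetical helper).

    Rows are emitted in order of increasing y; no display orientation
    (flipping or mirroring) is applied here.
    """
    rows = []
    for dy in range(HEIGHT):
        row = "".join("#" if tupper_pixel(x, k + dy) else "." for x in range(WIDTH))
        rows.append(row)
    return rows
```

Python integers have arbitrary precision, so the very large values of `k` used with this formula need no special handling in this sketch; a C implementation would likely need a big-integer representation for the same step.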

1c14a28b82aecb1295c6fb813d9225c3dafe7b3c | 1,661 | md | Markdown | README.md | ozanlimited/ozan-checkout-ios | 431a516b60b169fbbc4c9c7307d84b801992bbe4 | [

"MIT"

] | 3 | 2017-08-03T15:13:27.000Z | 2020-07-08T10:54:18.000Z | README.md | ozanlimited/ozan-checkout-ios | 431a516b60b169fbbc4c9c7307d84b801992bbe4 | [

"MIT"

] | null | null | null | README.md | ozanlimited/ozan-checkout-ios | 431a516b60b169fbbc4c9c7307d84b801992bbe4 | [

"MIT"

] | null | null | null | ## Ozan Checkout iOS

[](https://cocoapods.org/pods/OzanCheckout)

[](https://github.com/intercom/intercom-ios)

[  .jpg>)

| 44.5 | 156 | 0.651685 | afr_Latn | 0.228366 |

1c14aea2ecd3f38611c1f6702699752dfe4449ef | 3,714 | md | Markdown | help/c-implementing-target/c-implementing-target-for-client-side-web/t-mbox-download/orderconfirm-create.md | and-poulsen/target.en | 124be26aacd823fc994252559c5f3621a29b89bf | [

"MIT"

] | 7 | 2019-07-22T16:10:30.000Z | 2021-06-03T14:07:16.000Z | help/c-implementing-target/c-implementing-target-for-client-side-web/t-mbox-download/orderconfirm-create.md | and-poulsen/target.en | 124be26aacd823fc994252559c5f3621a29b89bf | [

"MIT"

] | 176 | 2019-02-28T16:15:54.000Z | 2022-03-01T10:49:44.000Z | help/c-implementing-target/c-implementing-target-for-client-side-web/t-mbox-download/orderconfirm-create.md | and-poulsen/target.en | 124be26aacd823fc994252559c5f3621a29b89bf | [

"MIT"

] | 66 | 2019-02-25T22:01:30.000Z | 2022-03-23T12:58:24.000Z | ---

keywords: order confirmation;orderConfirmPage

description: Learn about the legacy mbox.js implementation of Adobe Target. Migrate to the Adobe Experience Platform Web SDK (AEP Web SDK) or to the latest version of at.js.

title: How Do I Create an Order Confirmation mbox using mbox.js?

feature: at.js

role: Developer

... | 67.527273 | 405 | 0.763866 | eng_Latn | 0.942397 |

1c157dfdbec985e2bc1f3622abe96c8ac7309696 | 1,411 | md | Markdown | leetcode/desc/d9/946.md | RobWalt/rustgym | b4dc47cb36d59c157095563857b0ac5ca62c68b3 | [

"MIT"

] | 354 | 2020-08-11T07:56:06.000Z | 2022-03-31T14:22:41.000Z | leetcode/desc/d9/946.md | RobWalt/rustgym | b4dc47cb36d59c157095563857b0ac5ca62c68b3 | [

"MIT"

] | 51 | 2020-10-16T05:29:05.000Z | 2022-02-08T00:33:01.000Z | leetcode/desc/d9/946.md | RobWalt/rustgym | b4dc47cb36d59c157095563857b0ac5ca62c68b3 | [

"MIT"

] | 43 | 2020-09-22T07:14:15.000Z | 2022-03-30T11:30:39.000Z | <div><p>Given two sequences <code>pushed</code> and <code>popped</code> <strong>with distinct values</strong>, return <code>true</code> if and only if this could have been the result of a sequence of push and pop operations on an initially empty stack.</p>

<p> </p>

<div>

<p><strong>Example 1:</strong><... | 41.5 | 266 | 0.647768 | eng_Latn | 0.551026 |
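The check described in the record above (whether `popped` could result from pushing `pushed` onto an initially empty stack) can be sketched by simulating the stack directly. This is one possible approach written for illustration, not the repository's solution; the function name `validate_stack_sequences` and the sample inputs in the usage note are assumptions chosen here.

```python
def validate_stack_sequences(pushed, popped):
    """Simulate the pushes and greedily pop whenever the top matches.

    Because the values are distinct, popping as soon as the top of the
    stack equals the next expected value never rules out a valid order.
    """
    stack = []
    next_pop = 0  # index of the next value we expect to pop
    for value in pushed:
        stack.append(value)
        # Pop for as long as the top of the stack is the next expected value.
        while stack and next_pop < len(popped) and stack[-1] == popped[next_pop]:
            stack.pop()
            next_pop += 1
    # Valid only if every pushed value was popped and all of popped was consumed.
    return not stack and next_pop == len(popped)
```

For instance, `validate_stack_sequences([1, 2, 3, 4, 5], [4, 5, 3, 2, 1])` returns `True`, while `validate_stack_sequences([1, 2, 3, 4, 5], [4, 3, 5, 1, 2])` returns `False`, since 1 cannot be popped before 2. Each value is pushed and popped at most once, so the simulation runs in linear time.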

1c15a21dda41639f42b0bf16710d25ea44f431cd | 341 | md | Markdown | guide/chinese/mathematics/logarithms-introduction-to-the-relationship/index.md | SweeneyNew/freeCodeCamp | e24b995d3d6a2829701de7ac2225d72f3a954b40 | [

"BSD-3-Clause"

] | 10 | 2019-08-09T19:58:19.000Z | 2019-08-11T20:57:44.000Z | guide/chinese/mathematics/logarithms-introduction-to-the-relationship/index.md | SweeneyNew/freeCodeCamp | e24b995d3d6a2829701de7ac2225d72f3a954b40 | [

"BSD-3-Clause"

] | 2,056 | 2019-08-25T19:29:20.000Z | 2022-02-13T22:13:01.000Z | guide/chinese/mathematics/logarithms-introduction-to-the-relationship/index.md | SweeneyNew/freeCodeCamp | e24b995d3d6a2829701de7ac2225d72f3a954b40 | [

"BSD-3-Clause"

] | 5 | 2018-10-18T02:02:23.000Z | 2020-08-25T00:32:41.000Z | ---

title: Logarithms Introduction to the Relationship

localeTitle: Logarithms Introduction to the Relationship

---

## Logarithms Introduction to the Relationship

This is a stub. [Help our community expand it](https://github.com/freecodecamp/guides/tree/master/src/pages/mathematics/logarithms-introduction-to-the-relationship/index.md).

[This quick style guide will help ensure your pull request gets accepted](https://github.com/freecodecamp/guides/blob/master/REA... | 31 | 149 | 0.780059 | yue_Hant | 0.552429 |

1c162220befe0ba9defbdb058258cba0d007d067 | 1,034 | md | Markdown | convene-web/app/views/guides/_glossary.md | user512/convene | be07986d168ea7de53dbde0546ce64341ec6cd88 | [

"BlueOak-1.0.0",

"Apache-2.0"

] | 28 | 2020-05-04T21:38:47.000Z | 2022-03-21T22:12:00.000Z | convene-web/app/views/guides/_glossary.md | user512/convene | be07986d168ea7de53dbde0546ce64341ec6cd88 | [

"BlueOak-1.0.0",

"Apache-2.0"

] | 212 | 2020-04-27T18:31:20.000Z | 2022-03-24T02:53:26.000Z | convene-web/app/views/guides/_glossary.md | eg-bet/convene | 4fdd5734a7958f20410d776432619548e4ce4aee | [

"BlueOak-1.0.0",

"Apache-2.0"

] | 9 | 2020-06-11T04:09:34.000Z | 2022-03-12T17:19:02.000Z | [neighborhoods]: ./neighborhoods

[neighborhood]: ./neighborhoods

[rooms]: ./rooms

[room]: ./rooms

[internal rooms]: ./rooms#internal-rooms

[locked rooms]: ./rooms#locked-rooms

[locked room]: ./rooms#locked-rooms

[access codes]: ./rooms#access-codes

[access code]: ./rooms#access-codes

[room links]: ./rooms#room-links

[... | 27.945946 | 69 | 0.742747 | eng_Latn | 0.831126 |

1c16800adff1c5d3804b95644941813344c236da | 32 | md | Markdown | README.md | samuel123457/samuel-samarpana-boye | 989b831bc1d6291a22a208fac157440852dbcf6b | [

"BSL-1.0"

] | null | null | null | README.md | samuel123457/samuel-samarpana-boye | 989b831bc1d6291a22a208fac157440852dbcf6b | [

"BSL-1.0"

] | null | null | null | README.md | samuel123457/samuel-samarpana-boye | 989b831bc1d6291a22a208fac157440852dbcf6b | [

"BSL-1.0"

] | null | null | null | # samuel-samarpana-boye

nothing

| 10.666667 | 23 | 0.8125 | eng_Latn | 0.134423 |

1c1698248dd9c97d0d2177e92fd5e50c23b1100d | 342 | md | Markdown | CHANGELOG.md | Ardesco/Query | 02d5f93811adaa7553f21cf8d63f420c6c14bc84 | [

"Apache-2.0"

] | 11 | 2017-11-20T08:35:56.000Z | 2020-04-15T20:04:35.000Z | CHANGELOG.md | Ardesco/Query | 02d5f93811adaa7553f21cf8d63f420c6c14bc84 | [

"Apache-2.0"

] | 7 | 2017-11-20T20:16:44.000Z | 2019-02-14T07:40:28.000Z | CHANGELOG.md | Ardesco/Query | 02d5f93811adaa7553f21cf8d63f420c6c14bc84 | [

"Apache-2.0"

] | 5 | 2018-06-12T10:39:04.000Z | 2020-08-13T02:31:19.000Z | # Changelog

##Next Version (Release Date TBC) Release Notes

##Version 1.2.0 Release Notes

* Modify Query instantiation so that it requires a driver object for each query object to make it thread safe.

##Version 1.1.0 Release Notes

* Add support for Appium MobileElement.

##Version 1.0.0 Release Notes

* Initial re... | 22.8 | 110 | 0.760234 | eng_Latn | 0.925375 |

1c16b213430936cad9ba39e4fdf840f6191c9001 | 14,054 | md | Markdown | CHANGELOG.md | carltonstale/zeitwerk | 914cb1a752c70dc0798c476c266cc8dd35b5359f | [

"MIT"

] | null | null | null | CHANGELOG.md | carltonstale/zeitwerk | 914cb1a752c70dc0798c476c266cc8dd35b5359f | [

"MIT"

] | null | null | null | CHANGELOG.md | carltonstale/zeitwerk | 914cb1a752c70dc0798c476c266cc8dd35b5359f | [

"MIT"

] | null | null | null | # CHANGELOG

## 2.5.4 (28 January 2022)

* If a file did not define the expected constant, there was a reload, and there were `on_unload` callbacks, Zeitwerk still tried to access the constant during reload, which raised. This has been corrected.

## 2.5.3 (30 December 2021)

* The change introduced in 2.5.2 implied a ... | 39.366947 | 493 | 0.750818 | eng_Latn | 0.994623 |

1c17921febf00a3dabd5e29b6728d908a0e3e0d9 | 2,348 | md | Markdown | data/blog/Indices-and-Range-in-csharp.md | jeevan-vj/iamjeevan | 975307ca8644361b676682651bb2e62136a169b6 | [

"MIT"

] | 1 | 2021-09-29T04:11:19.000Z | 2021-09-29T04:11:19.000Z | data/blog/Indices-and-Range-in-csharp.md | jeevan-vj/iamjeevan | 975307ca8644361b676682651bb2e62136a169b6 | [

"MIT"

] | 3 | 2021-09-13T08:20:03.000Z | 2021-11-19T01:46:24.000Z | data/blog/Indices-and-Range-in-csharp.md | jeevan-vj/iamjeevan | 975307ca8644361b676682651bb2e62136a169b6 | [

"MIT"

] | 1 | 2021-09-13T08:14:53.000Z | 2021-09-13T08:14:53.000Z | ---

title: 'Indices and Ranges in C#'

date: '2021-09-10'

tags: ['c#']

draft: false

summary: 'Indices and Ranges in C#'

---

# Indices and Range in C#

Indices and Range provide clear, concise syntax to access a single element or a range of elements in a sequence.

[Official Doc](https://docs.microsoft.com/en-us/dotnet/... | 22.796117 | 142 | 0.68569 | eng_Latn | 0.848784 |

1c179c8c2f3927f1ac0c279b9d758fe7431b5bd7 | 6,988 | md | Markdown | web-dev/http2.md | ntk148v/research | 52d8942078f6a81f940cd59fd65f1b053fca7c3b | [

"Apache-2.0"

] | 1 | 2017-11-24T10:45:14.000Z | 2017-11-24T10:45:14.000Z | web-dev/http2.md | ntk148v/learning | 9c868dc389e978f996521bc73edf3c69a4504341 | [

"Apache-2.0"

] | null | null | null | web-dev/http2.md | ntk148v/learning | 9c868dc389e978f996521bc73edf3c69a4504341 | [

"Apache-2.0"

] | null | null | null | # HTTP/2.0

Source: https://developers.google.com/web/fundamentals/performance/http2

- [HTTP/2.0](#http20)

- [1. Introduction](#1-introduction)

- [2. Design](#2-design)

- [2.1. Binary framing layer](#21-binary-framing-layer)

- [2.2. Streams, messages, and frames](#22-streams-messages-and-frames)

- [2.3... | 60.241379 | 270 | 0.774041 | eng_Latn | 0.995673 |

1c17a446949a77691ad572dcfd1edcee336af269 | 812 | md | Markdown | README.md | omegascorp/sequelize-mariadb-json-test | a06c0ce5e8da2a9c263a09b49d869ff56366c4e5 | [

"MIT"

] | null | null | null | README.md | omegascorp/sequelize-mariadb-json-test | a06c0ce5e8da2a9c263a09b49d869ff56366c4e5 | [

"MIT"

] | 1 | 2021-05-10T15:40:18.000Z | 2021-05-10T15:40:18.000Z | README.md | omegascorp/sequelize-mariadb-json-test | a06c0ce5e8da2a9c263a09b49d869ff56366c4e5 | [

"MIT"

] | null | null | null | # It's an experiment that reads JSON fields from MariaDB via Sequelize

## Init

```

yarn && yarn db-init

```

## Run

```

docker-compose up

```

```

yarn start

```

## Issue description

There are 2 models defined: Users and Projects. A user has many projects, and they are associated via the userId field.

```

User {

id: IN... | 15.615385 | 112 | 0.646552 | eng_Latn | 0.972138 |

1c1828f76cd5b62d4c3bf32f4a1b9ebb6d351bd7 | 114 | md | Markdown | go/Readme.md | joshuawalcher/dockerfile-boilerplates | caff607487e8733fc157671de16a66325adab107 | [

"MIT"

] | 229 | 2020-06-03T16:43:04.000Z | 2022-03-13T07:51:48.000Z | go/Readme.md | joshuawalcher/dockerfile-boilerplates | caff607487e8733fc157671de16a66325adab107 | [

"MIT"

] | 5 | 2020-06-04T02:30:24.000Z | 2020-06-05T07:12:46.000Z | go/Readme.md | joshuawalcher/dockerfile-boilerplates | caff607487e8733fc157671de16a66325adab107 | [

"MIT"

] | 22 | 2020-06-03T20:40:30.000Z | 2022-03-12T21:16:57.000Z | # Go Boilerplate Docker

Build and run Go scripts via docker.

```

docker build -t go .

docker run --rm go

```

| 11.4 | 36 | 0.657895 | kor_Hang | 0.484091 |

1c192ed199ccaf68b767d9de0050daab9e43db13 | 5,622 | md | Markdown | _posts/2019-08-02-hardcore-audio-3.md | shaoguoji/shaoguoji.github.io | 66bfe7bfb48e20b92522b686853521c5e30bcb63 | [

"Apache-2.0"

] | null | null | null | _posts/2019-08-02-hardcore-audio-3.md | shaoguoji/shaoguoji.github.io | 66bfe7bfb48e20b92522b686853521c5e30bcb63 | [

"Apache-2.0"

] | 19 | 2017-08-03T15:43:32.000Z | 2018-07-20T10:58:00.000Z | _posts/2019-08-02-hardcore-audio-3.md | shaoguoji/shaoguoji.github.io | 66bfe7bfb48e20b92522b686853521c5e30bcb63 | [

"Apache-2.0"

] | 3 | 2018-10-01T10:40:46.000Z | 2021-05-27T11:37:06.000Z | ---

layout: post

title: Hardcore Audio Series (3) - Linear Fade-In and Fade-Out

subtitle: Algorithm approach, implementation, and optimization methods

date: 2019-08-02 15:12:29 +0800

author: Shao Guoji

header-img: img/post-bg-hardcore-audio.jpg

catalog: true

tag:

- Study Notes

- Embedded

- Digital Audio

---

*Articles in the Hardcore Audio series:*

* [Hardcore Audio Series (1) - Representing Sound Information: Basic Concepts... | 28.11 | 180 | 0.728922 | yue_Hant | 0.233177 |

1c1a0f97f9dddda8e6a6045a39babf1bfc3d54fc | 960 | md | Markdown | README.md | Dorisqi/foodie-connector | b463068c49226f464b640fe7fcaec14cf2ea8966 | [

"MIT"

] | null | null | null | README.md | Dorisqi/foodie-connector | b463068c49226f464b640fe7fcaec14cf2ea8966 | [

"MIT"

] | null | null | null | README.md | Dorisqi/foodie-connector | b463068c49226f464b640fe7fcaec14cf2ea8966 | [

"MIT"

] | null | null | null | # Foodie Connector

**This project is not production ready.**

This is a project for CS 373 Software Engineering I at Purdue University. This is a food delivery platform where you can place group orders with your friends and neighbors.

## Run the frontend

The frontend is a [React](https://reactjs.org/) application. T... | 53.333333 | 300 | 0.759375 | eng_Latn | 0.9994 |

1c1a66ce1f58e680722bdc8f6f97e69f4c7e455e | 994 | md | Markdown | README.md | c-rainstorm/common-utils | 2c0201bce7e1af3d3c9ab7e2a85a968da539e387 | [

"MIT"

] | null | null | null | README.md | c-rainstorm/common-utils | 2c0201bce7e1af3d3c9ab7e2a85a968da539e387 | [

"MIT"

] | null | null | null | README.md | c-rainstorm/common-utils | 2c0201bce7e1af3d3c9ab7e2a85a968da539e387 | [

"MIT"

] | 1 | 2020-10-02T13:41:17.000Z | 2020-10-02T13:41:17.000Z | # common-utils

General-purpose utilities that can be used in projects

## Report export tool

```java

private void doExport(int sizePreExport, int totalSize, String sheetNamePrefix, int sheetSize, Function<Integer, List<AllInOneClass>> supplier) throws Exception {

File exportFile = Paths.get(EXPORT_DIR, sheetNamePrefix + "-" +

DateTimeFormatterUtil.get(Dat... | 43.217391 | 162 | 0.65996 | yue_Hant | 0.468037 |

1c1ad00e30234eb977d29476e7ce665f08661864 | 10,720 | md | Markdown | _posts/2020-10-04-learning-curve.md | lmc2179/lmc2179.github.io | 45b835cebf43935206f313fdf0e1bdce7ad2716b | [

"MIT"

] | null | null | null | _posts/2020-10-04-learning-curve.md | lmc2179/lmc2179.github.io | 45b835cebf43935206f313fdf0e1bdce7ad2716b | [

"MIT"

] | 1 | 2020-11-01T05:35:06.000Z | 2020-11-01T05:35:06.000Z | _posts/2020-10-04-learning-curve.md | lmc2179/lmc2179.github.io | 45b835cebf43935206f313fdf0e1bdce7ad2716b | [

"MIT"

] | null | null | null | ---

layout: post

title: "Would collecting more data improve my model's predictions? The learning curve and the value of incremental samples"

author: "Louis Cialdella"

categories: posts

tags: [data-science]

image: wine.jpg

---

*Since we usually need to pay for data (either with money to buy it or effort to collect it),... | 84.409449 | 1,173 | 0.782276 | eng_Latn | 0.998477 |

1c1b023dfeb989ef57a58a8e7aa5044f92e572cb | 974 | md | Markdown | _posts_older/2013-04-06-makethumbwd-2013.md | brontosaurusrex/brontosaurusrex.github.io | 7a3b6a21f36327c176849ac75c7c55f603646c0e | [

"MIT"

] | 1 | 2018-12-30T05:17:13.000Z | 2018-12-30T05:17:13.000Z | _posts_older/2013-04-06-makethumbwd-2013.md | brontosaurusrex/brontosaurusrex.github.io | 7a3b6a21f36327c176849ac75c7c55f603646c0e | [

"MIT"

] | 3 | 2017-01-26T21:04:51.000Z | 2020-02-22T11:57:10.000Z | _posts_older/2013-04-06-makethumbwd-2013.md | brontosaurusrex/brontosaurusrex.github.io | 7a3b6a21f36327c176849ac75c7c55f603646c0e | [

"MIT"

] | 4 | 2018-02-28T16:08:19.000Z | 2019-06-19T21:05:05.000Z | ---

id: 2539

title: makethumbwd 2013

date: 2013-04-06T20:53:12+00:00

author: bronto saurus

layout: post

guid: http://b.pwnz.org/?p=2539

permalink: /2013/04/makethumbwd-2013/

categories:

- Uncategorized

---

<pre>#!/bin/bash

# makethumbwd 2013

# I need mtn (http://moviethumbnail.sourceforge.net/)

# and image magic... | 21.644444 | 90 | 0.649897 | eng_Latn | 0.380679 |

1c1b41396aa2d9146cbbc9f581f9211c37ddec08 | 2,685 | md | Markdown | sdk-api-src/content/tapi3if/nf-tapi3if-itcallstateevent-get_cause.md | amorilio/sdk-api | 54ef418912715bd7df39c2561fbc3d1dcef37d7e | [

"CC-BY-4.0",

"MIT"

] | null | null | null | sdk-api-src/content/tapi3if/nf-tapi3if-itcallstateevent-get_cause.md | amorilio/sdk-api | 54ef418912715bd7df39c2561fbc3d1dcef37d7e | [

"CC-BY-4.0",

"MIT"

] | null | null | null | sdk-api-src/content/tapi3if/nf-tapi3if-itcallstateevent-get_cause.md | amorilio/sdk-api | 54ef418912715bd7df39c2561fbc3d1dcef37d7e | [

"CC-BY-4.0",

"MIT"

] | null | null | null | ---

UID: NF:tapi3if.ITCallStateEvent.get_Cause

title: ITCallStateEvent::get_Cause (tapi3if.h)

description: The get_Cause method gets the cause associated with this event.

helpviewer_keywords: ["ITCallStateEvent interface [TAPI 2.2]","get_Cause method","ITCallStateEvent.get_Cause","ITCallStateEvent::get_Cause","_tapi3_i... | 22.948718 | 350 | 0.747486 | yue_Hant | 0.598672 |

1c1bc8386f4623fbbd3aaa778d7f5f13a88b5bf0 | 2,360 | md | Markdown | docs/vs-2015/debugger/debug-interface-access/idiasymbol-get-virtualbasetabletype.md | tommorris/visualstudio-docs.cs-cz | 92c436dbc75020bc5121cc2c9e4976f62c9b13ca | [

"CC-BY-4.0",

"MIT"

] | null | null | null | docs/vs-2015/debugger/debug-interface-access/idiasymbol-get-virtualbasetabletype.md | tommorris/visualstudio-docs.cs-cz | 92c436dbc75020bc5121cc2c9e4976f62c9b13ca | [

"CC-BY-4.0",

"MIT"

] | null | null | null | docs/vs-2015/debugger/debug-interface-access/idiasymbol-get-virtualbasetabletype.md | tommorris/visualstudio-docs.cs-cz | 92c436dbc75020bc5121cc2c9e4976f62c9b13ca | [

"CC-BY-4.0",

"MIT"

] | null | null | null | ---

title: IDiaSymbol::get_virtualBaseTableType | Microsoft Docs

ms.custom: ''

ms.date: 2018-06-30

ms.prod: visual-studio-dev14

ms.reviewer: ''

ms.suite: ''

ms.technology:

- vs-ide-debug

ms.tgt_pltfrm: ''

ms.topic: article

dev_langs:

- C++

helpviewer_keywords:

- IDiaSymbol::get_virtualBaseTableType method

ms.... | 32.777778 | 240 | 0.713983 | ces_Latn | 0.934264 |

1c1c1a4f606159123cc09944c20f38439736d99a | 10,262 | md | Markdown | pages/guides/base-tech/information-system-design/gbt_uml.ru.md | Flexberry/Documentation | 891362f896aeef59f057f92dc4429512558b7798 | [

"MIT",

"BSD-3-Clause"

] | 8 | 2016-11-21T09:50:04.000Z | 2020-07-19T08:14:29.000Z | pages/guides/base-tech/information-system-design/gbt_uml.ru.md | Flexberry/Documentation | 891362f896aeef59f057f92dc4429512558b7798 | [

"MIT",

"BSD-3-Clause"

] | 34 | 2018-08-13T12:46:25.000Z | 2020-09-04T10:17:11.000Z | pages/guides/base-tech/information-system-design/gbt_uml.ru.md | Flexberry/Documentation | 891362f896aeef59f057f92dc4429512558b7798 | [

"MIT",

"BSD-3-Clause"

] | 35 | 2016-08-29T10:29:16.000Z | 2022-03-26T20:55:49.000Z | ---

title: UML

keywords: Programming

sidebar: guide-base-tech_sidebar

toc: true

permalink: ru/gbt_uml.html

folder: guides/base-tech/information-system-design/

lang: ru

---

## Brief description

UML (Unified Modeling Language) is a graphical description language for object modeling... | 62.193939 | 373 | 0.805496 | rus_Cyrl | 0.988003 |

1c1c3aa4f2a30218c688d695c2ddb3cfb670b89c | 1,442 | md | Markdown | cmd/dctor/README.md | dennwc/go-dcpp | 0332759e7a0b6e0492171a383e03c34c3d1f4394 | [

"BSD-3-Clause"

] | 28 | 2019-02-03T10:12:31.000Z | 2022-01-12T12:23:21.000Z | cmd/dctor/README.md | dennwc/go-dcpp | 0332759e7a0b6e0492171a383e03c34c3d1f4394 | [

"BSD-3-Clause"

] | 97 | 2019-01-29T03:10:44.000Z | 2021-06-07T22:19:31.000Z | cmd/dctor/README.md | dennwc/go-dcpp | 0332759e7a0b6e0492171a383e03c34c3d1f4394 | [

"BSD-3-Clause"

] | 13 | 2019-01-29T03:10:53.000Z | 2021-11-06T12:10:26.000Z | # Tor bridge for DC

This program implements a fully-functional ADC bridge over Tor.

Features:

- Tor is embedded into the bridge - no need to run separately.

- All features like chat, search, file download/upload are supported.

TODOs:

- Only Tor C-C connections are supported at the moment. Mixed connections can be im... | 28.84 | 99 | 0.748266 | eng_Latn | 0.999094 |

1c1cad7484c8372b8ff5a1f750e06d69817090c0 | 1,875 | md | Markdown | internal/js-parser/test-fixtures/typescript/types/tuple-labeled/input.test.md | mainangethe/tools | a030d7efe77ccbf3b4fcc1f1d86fd1de29e2743f | [

"MIT"

] | null | null | null | internal/js-parser/test-fixtures/typescript/types/tuple-labeled/input.test.md | mainangethe/tools | a030d7efe77ccbf3b4fcc1f1d86fd1de29e2743f | [

"MIT"

] | null | null | null | internal/js-parser/test-fixtures/typescript/types/tuple-labeled/input.test.md | mainangethe/tools | a030d7efe77ccbf3b4fcc1f1d86fd1de29e2743f | [

"MIT"

] | null | null | null | # `index.test.ts`

**DO NOT MODIFY**. This file has been autogenerated. Run `rome test internal/js-parser/index.test.ts --update-snapshots` to update.

## `typescript > types > tuple-labeled`

### `ast`

```javascript

JSRoot {

comments: Array []

corrupt: false

diagnostics: Array []

directives: Array []

hasHoistedV... | 27.985075 | 131 | 0.690667 | kor_Hang | 0.280441 |

1c1e15cbbef2375ca724ee60967809142da3aeb8 | 2,592 | md | Markdown | portfolio/orinoco.md | SamuelRiveraC/SamuelRiveraC.github.io | b69f122c40183430be38633b7e5bc207aede4f10 | [

"MIT"

] | null | null | null | portfolio/orinoco.md | SamuelRiveraC/SamuelRiveraC.github.io | b69f122c40183430be38633b7e5bc207aede4f10 | [

"MIT"

] | 1 | 2021-08-02T14:02:41.000Z | 2021-08-02T14:37:23.000Z | portfolio/orinoco.md | SamuelRiveraC/samuelriverac.github.io | b69f122c40183430be38633b7e5bc207aede4f10 | [

"MIT"

] | null | null | null | ---

title: Orinoco.io

date: "2018-04-01T22:12:03.284Z"

excerpt: "Front-end web development for a crypto exchange and fintech, with Vue, Vuetify and GraphQL"

description: "<p>Even before finishing my internship I landed a job at a local crypto exchange. They had a very simple system made on plain PHP where clients added... | 78.545455 | 1,150 | 0.783179 | eng_Latn | 0.999163 |

1c1e334c66be6d7b728c39ad4fbc9c9447f0eb9f | 796 | md | Markdown | content/en/faq/applications/zookeeper.md | tialouden/istio.io | 53ddedae0586972609e48ac0b70a4b81b960f4d9 | [

"Apache-2.0"

] | null | null | null | content/en/faq/applications/zookeeper.md | tialouden/istio.io | 53ddedae0586972609e48ac0b70a4b81b960f4d9 | [

"Apache-2.0"

] | null | null | null | content/en/faq/applications/zookeeper.md | tialouden/istio.io | 53ddedae0586972609e48ac0b70a4b81b960f4d9 | [

"Apache-2.0"

] | null | null | null | ---

title: Can I run Zookeeper inside an Istio mesh?

description: How to run Zookeeper with Istio.

weight: 50

keywords: [zookeeper]

test: no

---

By default, Zookeeper listens on the pod IP address for communication

between servers. Istio and other service meshes require `localhost`

(`127.0.0.1`) to be the address to l... | 31.84 | 86 | 0.765075 | eng_Latn | 0.97882 |

1c1e386b995e321408cb80f1fa1a91f19c94cc2d | 559 | md | Markdown | README.md | Hadsake/Yard-mc-Bot | 71b8b50c7af8bcd7ec058ecf38900059e4f33924 | [

"Unlicense"

] | null | null | null | README.md | Hadsake/Yard-mc-Bot | 71b8b50c7af8bcd7ec058ecf38900059e4f33924 | [

"Unlicense"

] | null | null | null | README.md | Hadsake/Yard-mc-Bot | 71b8b50c7af8bcd7ec058ecf38900059e4f33924 | [

"Unlicense"

] | null | null | null | # VaxeTurkiye-Dosyalari

VaxeTurkiye was opened on 2017.11.23 and was shut down for lack of money. The creator of this bot is LMD | xChairs#4713. To add our new bot, Discord ve Eglen: https://discordapp.com/oauth2/authorize?client_id=446379688839872532&scope=bot&permissions=2146958591

Github'da yayınlanan Türkçe, Discor... | 50.818182 | 260 | 0.842576 | tur_Latn | 0.996239 |

1c1edbaf327b234b0a717428830bdd6a182ef387 | 1,833 | md | Markdown | README.md | daothuydung/daothuydung.github.io | c746a43f2a5cb3de35b142ea8ed0f3c6b8762459 | [

"MIT"

] | 1 | 2017-03-12T01:01:11.000Z | 2017-03-12T01:01:11.000Z | README.md | daothuydung/daothuydung.github.io | c746a43f2a5cb3de35b142ea8ed0f3c6b8762459 | [

"MIT"

] | null | null | null | README.md | daothuydung/daothuydung.github.io | c746a43f2a5cb3de35b142ea8ed0f3c6b8762459 | [

"MIT"

] | null | null | null | #New Age Jekyll theme

=========================

## If you are a company and you're going to use the blog:

1. Contact Start Bootstrap and ask.

2. Contact me, because some unneeded parts need to be removed.

Jekyll theme based on the [New Age bootstrap theme](https://startbootstrap.com/template-overviews/new-age/)

# Demo... | 39 | 189 | 0.754501 | eng_Latn | 0.992672 |

1c1f1094d5f7b3805499fd623748df380d44ed8f | 1,197 | md | Markdown | docs/column-definition/cell.md | keeslinp/reactabular | 85941babca56453f1c9c0a7809bff72e9a47ed9a | [

"MIT"

] | 715 | 2016-07-12T11:22:33.000Z | 2022-03-12T16:46:09.000Z | docs/column-definition/cell.md | keeslinp/reactabular | 85941babca56453f1c9c0a7809bff72e9a47ed9a | [

"MIT"

] | 219 | 2016-07-11T13:16:00.000Z | 2021-04-22T09:36:11.000Z | docs/column-definition/cell.md | keeslinp/reactabular | 85941babca56453f1c9c0a7809bff72e9a47ed9a | [

"MIT"

] | 132 | 2016-07-15T14:27:04.000Z | 2021-07-15T09:10:52.000Z | In addition to `header` customization, it's essential to define how the rows should map to content. This is achieved through `cell` fields.

## **`cell.transforms`**

```javascript

cell.transforms = [

(

<value>, {

columnIndex: <number>,

column: <object>,

rowData: <object>,

rowIndex: <numbe... | 17.347826 | 142 | 0.570593 | eng_Latn | 0.950569 |

1c20bbc49aaebc59ce0d1aa9e9ed84ea44f3d26d | 24 | md | Markdown | README.md | selcux/terraform-azure-sample | d8d754baf870b56a665b150d47a338a9dbd9fa47 | [

"MIT"

] | null | null | null | README.md | selcux/terraform-azure-sample | d8d754baf870b56a665b150d47a338a9dbd9fa47 | [

"MIT"

] | null | null | null | README.md | selcux/terraform-azure-sample | d8d754baf870b56a665b150d47a338a9dbd9fa47 | [

"MIT"

] | null | null | null | # terraform-azure-sample | 24 | 24 | 0.833333 | eng_Latn | 0.341109 |

1c20c22773f8c526949067ace32a132a89f7e58d | 944 | md | Markdown | README.md | seancrowe/node-chiliservice | 9c6d681d2faa4917e036db41f7213d190f7581c8 | [

"MIT"

] | 1 | 2019-11-19T08:33:21.000Z | 2019-11-19T08:33:21.000Z | README.md | seancrowe/node-chiliservice | 9c6d681d2faa4917e036db41f7213d190f7581c8 | [

"MIT"

] | 2 | 2020-07-17T13:11:25.000Z | 2021-05-09T19:26:25.000Z | README.md | seancrowe/node-chiliservice | 9c6d681d2faa4917e036db41f7213d190f7581c8 | [

"MIT"

] | null | null | null | ChiliService

=========

A small library that allows you to easily make web service calls to your CHILI Server

This module was developed for servers running CHILI Publisher >5.4

It will work with old CHILI installs, but some functions (like DocumentProcessServerSide) will cause an "Error! Function does not exist" error... | 24.842105 | 142 | 0.663136 | eng_Latn | 0.975788 |

1c20eef93fb3a8c9d29ae44a6a80532a1df9097c | 8,598 | md | Markdown | articles/azure-functions/create-first-function-vs-code-python.md | gustavodelima/azure-docs.pt-br | 07f02d5a6f3328b5b720c3091518e805d37590b8 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | articles/azure-functions/create-first-function-vs-code-python.md | gustavodelima/azure-docs.pt-br | 07f02d5a6f3328b5b720c3091518e805d37590b8 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | articles/azure-functions/create-first-function-vs-code-python.md | gustavodelima/azure-docs.pt-br | 07f02d5a6f3328b5b720c3091518e805d37590b8 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | ---

title: Create a Python function using Visual Studio Code – Azure Functions

description: Learn how to create a Python function and then publish the local project to serverless hosting in Azure Functions using the Azure Functions extension in Visual Studio Code.

ms.topic: quickstart

ms.date: 11/04/2020

ms.custo... | 63.688889 | 430 | 0.770644 | por_Latn | 0.998965 |

1c23707331d3ce4eafd31ab4a6a8efe25e2e8126 | 1,223 | md | Markdown | site/content/amis_amants/_index.md | yutaro-komaji/kubiswara-z | 627d2f5afc3a3a2ef13349e9e87d916ec937889c | [

"MIT"

] | null | null | null | site/content/amis_amants/_index.md | yutaro-komaji/kubiswara-z | 627d2f5afc3a3a2ef13349e9e87d916ec937889c | [

"MIT"

] | 4 | 2021-03-10T09:32:16.000Z | 2022-02-18T21:49:02.000Z | site/content/amis_amants/_index.md | yutaro-komaji/kubiswara-z | 627d2f5afc3a3a2ef13349e9e87d916ec937889c | [

"MIT"

] | null | null | null | ---

title: "Light My Cigarette"

anchor:

- anchorItem: "introduction"

anchorItemtxt: INTRODUCTION

- anchorItem: "live"

anchorItemtxt: LIVE

anchor02:

- anchorItem: "music"

anchorItemtxt: MUSIC

- anchorItem: "movie"

anchorItemtxt: MOVIE

pageUrl: /lmc/

introduction:

- heading: "I... | 23.075472 | 129 | 0.666394 | yue_Hant | 0.539425 |

1c23a599e81e9733bb1e3f4bc07419417798a558 | 1,446 | md | Markdown | _posts/2011-08-31-how-to-find-your-external-ip-address-from-the-command-line.md | benhamilton/benhamilton.github.io | 798e7bbbed048b8bb03ec72864f5249c3bb9dcf4 | [

"MIT"

] | null | null | null | _posts/2011-08-31-how-to-find-your-external-ip-address-from-the-command-line.md | benhamilton/benhamilton.github.io | 798e7bbbed048b8bb03ec72864f5249c3bb9dcf4 | [

"MIT"

] | null | null | null | _posts/2011-08-31-how-to-find-your-external-ip-address-from-the-command-line.md | benhamilton/benhamilton.github.io | 798e7bbbed048b8bb03ec72864f5249c3bb9dcf4 | [

"MIT"

] | null | null | null | ---

layout: post

title: How to find your external ip address from the command line

permalink: /microsoft/how-to-find-your-external-ip-address-from-the-command-line

post_id: 422

categories:

- Command

- How to

- IP

- Microsoft

- Script

---

I often need to know what the external IP address for a client is. Thus I've cobb... | 39.081081 | 230 | 0.766252 | eng_Latn | 0.964936 |

1c24bea4097a7f6a23a4cca1f2b09b767e840c8d | 112 | md | Markdown | README.md | fmaj7/oreo-sample | 0e347bf140b25e9fd0736928c5d2937b13642e6c | [

"MIT"

] | null | null | null | README.md | fmaj7/oreo-sample | 0e347bf140b25e9fd0736928c5d2937b13642e6c | [

"MIT"

] | null | null | null | README.md | fmaj7/oreo-sample | 0e347bf140b25e9fd0736928c5d2937b13642e6c | [

"MIT"

] | null | null | null | oreo-sample

===========

https://codeship.com/projects/0376e580-77e8-0132-6e6e-3ee8152094db/status?branch=master

| 28 | 87 | 0.75 | yue_Hant | 0.121365 |

1c24e5780411da0dbd7f1b32729ab59954e77e2b | 908 | md | Markdown | docs/error-messages/compiler-errors-2/compiler-error-c3154.md | littlenine/cpp-docs.zh-tw | 811b8d2137808d9d0598fbebec6343fdfa88c79e | [

"CC-BY-4.0",

"MIT"

] | null | null | null | docs/error-messages/compiler-errors-2/compiler-error-c3154.md | littlenine/cpp-docs.zh-tw | 811b8d2137808d9d0598fbebec6343fdfa88c79e | [

"CC-BY-4.0",

"MIT"

] | null | null | null | docs/error-messages/compiler-errors-2/compiler-error-c3154.md | littlenine/cpp-docs.zh-tw | 811b8d2137808d9d0598fbebec6343fdfa88c79e | [

"CC-BY-4.0",

"MIT"

] | null | null | null | ---

title: Compiler Error C3154

ms.date: 11/04/2016

f1_keywords:

- C3154

helpviewer_keywords:

- C3154

ms.assetid: 78005c74-eaaf-4ac2-88ae-6c25d01a302a

ms.openlocfilehash: 9f7af4e19fab5f5a0539e9fc3bf9dbeffb5c6fbf

ms.sourcegitcommit: c6f8e6c2daec40ff4effd8ca99a7014a3b41ef33

ms.translationtype: MT

ms.contentlocale: zh-TW

ms.lastha... | 21.619048 | 99 | 0.672907 | yue_Hant | 0.202719 |

1c24f23458b38516f892eaae81926a962e46b0bb | 136 | md | Markdown | docs/cursos/procesos/uncordobax/mcm002/_sidebar.md | SidVal/conocimientos | 3e00e5574f0a45446b8169cef6417a8d72e1a891 | [

"MIT"

] | null | null | null | docs/cursos/procesos/uncordobax/mcm002/_sidebar.md | SidVal/conocimientos | 3e00e5574f0a45446b8169cef6417a8d72e1a891 | [

"MIT"

] | null | null | null | docs/cursos/procesos/uncordobax/mcm002/_sidebar.md | SidVal/conocimientos | 3e00e5574f0a45446b8169cef6417a8d72e1a891 | [

"MIT"

] | null | null | null | * <a href="javascript:history.back()">Back</a>

* [Classes](/cursos/marketing/uncordobax/)

* [Contents](/c/)

* [☆](/medium.md#estrella)

| 27.2 | 47 | 0.654412 | spa_Latn | 0.10065 |

1c254f60327503557773124e3dce115cc78628f3 | 363 | md | Markdown | packages/morphic-fastcheck-interpreters/docs/modules/model/object.ts.md | Brettm12345/morphic-ts | 7daf85ec739b13bf8a149888c3ccf79654637449 | [

"MIT"

] | null | null | null | packages/morphic-fastcheck-interpreters/docs/modules/model/object.ts.md | Brettm12345/morphic-ts | 7daf85ec739b13bf8a149888c3ccf79654637449 | [

"MIT"

] | null | null | null | packages/morphic-fastcheck-interpreters/docs/modules/model/object.ts.md | Brettm12345/morphic-ts | 7daf85ec739b13bf8a149888c3ccf79654637449 | [

"MIT"

] | null | null | null | ---

title: model/object.ts

nav_order: 9

parent: Modules

---

---

<h2 class="text-delta">Table of contents</h2>

- [fastCheckObjectInterpreter (constant)](#fastcheckobjectinterpreter-constant)

---

# fastCheckObjectInterpreter (constant)

**Signature**

```ts

export const fastCheckObjectInterpreter: ModelAlgebraObject... | 15.125 | 80 | 0.732782 | eng_Latn | 0.211783 |

1c25b76d18068e6017cfe5dff1056eeb74d69690 | 10,300 | md | Markdown | socrata/rv3g-ypg7.md | axibase/open-data-catalog | 18210b49b6e2c7ef05d316b6699d2f0778fa565f | [

"Apache-2.0"

] | 7 | 2017-05-02T16:08:17.000Z | 2021-05-27T09:59:46.000Z | socrata/rv3g-ypg7.md | axibase/open-data-catalog | 18210b49b6e2c7ef05d316b6699d2f0778fa565f | [

"Apache-2.0"

] | 5 | 2017-11-27T15:40:39.000Z | 2017-12-05T14:34:14.000Z | socrata/rv3g-ypg7.md | axibase/open-data-catalog | 18210b49b6e2c7ef05d316b6699d2f0778fa565f | [

"Apache-2.0"

] | 3 | 2017-03-03T14:48:48.000Z | 2019-05-23T12:57:42.000Z | # Calls for Service 2012

## Dataset

| Name | Value |

| :--- | :---- |

| Catalog | [Link](https://catalog.data.gov/dataset/calls-for-service-2012) |

| Metadata | [Link](https://data.nola.gov/api/views/rv3g-ypg7) |

| Data: JSON | [100 Rows](https://data.nola.gov/api/views/rv3g-ypg7/rows.json?max_rows=100) |

| Data: CSV... | 100.980392 | 2,694 | 0.633204 | eng_Latn | 0.614963 |

1c26ce00c2615a80e5d9dc3f2f99c8f686791be9 | 2,559 | md | Markdown | README.md | chaowang1994/ali-tianchi | 27575ce01efcb4d0e816fa681cb8b996d941f17b | [

"BSD-2-Clause"

] | 1 | 2018-10-10T14:14:56.000Z | 2018-10-10T14:14:56.000Z | README.md | chaowang1994/ali-tianchi | 27575ce01efcb4d0e816fa681cb8b996d941f17b | [

"BSD-2-Clause"

] | null | null | null | README.md | chaowang1994/ali-tianchi | 27575ce01efcb4d0e816fa681cb8b996d941f17b | [

"BSD-2-Clause"

] | null | null | null | # Caffe

## 1. Added common [data augmentation methods](https://github.com/twtygqyy/caffe-augmentation)

Added 2018.10.07

- min_side - resize and crop preserving aspect ratio, default 0 (disabled);

- max_rotation_angle - max angle for an image rotation, default 0;

- contrast_brightness_adjustment - enable/disable contrast adjustment, default fa... | 19.534351 | 170 | 0.722939 | eng_Latn | 0.672663 |
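As a rough illustration of the `min_side` option listed above, the sketch below computes the target size for an aspect-preserving resize. It assumes that `min_side` means "scale the image so that its shorter side equals `min_side`" and that `0` disables the step; the function name `resize_to_min_side` is hypothetical and is not part of Caffe or the linked augmentation fork.

```python
def resize_to_min_side(width, height, min_side):
    """Return (new_width, new_height) with the shorter side scaled to min_side.

    Assumption: min_side <= 0 means the option is disabled, so the
    original size is returned unchanged.
    """
    if min_side <= 0:
        return width, height
    # One scale factor for both axes keeps the aspect ratio intact.
    scale = min_side / min(width, height)
    return round(width * scale), round(height * scale)


if __name__ == "__main__":
    # Landscape and portrait inputs, plus the disabled case.
    print(resize_to_min_side(640, 480, 256))
    print(resize_to_min_side(480, 640, 256))
    print(resize_to_min_side(100, 50, 0))
```

A crop to the network's input size would normally follow the resize; that step is left out here because the crop parameters are not shown in the list above.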

1c274f54ed1445a4ad8d82213461c441bb5cad49 | 5,637 | md | Markdown | README.md | nageshwaran08/OCS-GLPI-Installation-on-CentOS-7 | cabd8c987e272b41b069194e042c8bef2f841bb7 | [

"PHP-3.01",

"PHP-3.0",

"IJG",

"Zend-2.0"

] | null | null | null | README.md | nageshwaran08/OCS-GLPI-Installation-on-CentOS-7 | cabd8c987e272b41b069194e042c8bef2f841bb7 | [

"PHP-3.01",

"PHP-3.0",

"IJG",

"Zend-2.0"

] | null | null | null | README.md | nageshwaran08/OCS-GLPI-Installation-on-CentOS-7 | cabd8c987e272b41b069194e042c8bef2f841bb7 | [

"PHP-3.01",

"PHP-3.0",

"IJG",

"Zend-2.0"

] | null | null | null | ## OCS Installation

1. Install this on a CentOS 7 minimal installation

1. Install wget

```

yum install wget -y

```

1. Download and run [setup.sh](https://github.com/nageshwaran08/OCS-GLPI-Installation-on-CentOS-7/blob/master/setup.sh) on the server

```

https://raw.githubusercontent.com/nageshwaran08... | 24.723684 | 165 | 0.644669 | yue_Hant | 0.422194 |

1c288fe0540925c2ed3c64e539f284a06266151a | 2,078 | md | Markdown | wdk-ddi-src/content/wditypes/ns-wditypes-_wdi_type_pmk_name.md | AlexGuteniev/windows-driver-docs-ddi | 99b84cea8977c8b190d39e65ccf26f1885ba3189 | [

"CC-BY-4.0",

"MIT"

] | 176 | 2018-01-12T23:42:01.000Z | 2022-03-30T18:23:27.000Z | wdk-ddi-src/content/wditypes/ns-wditypes-_wdi_type_pmk_name.md | AlexGuteniev/windows-driver-docs-ddi | 99b84cea8977c8b190d39e65ccf26f1885ba3189 | [

"CC-BY-4.0",

"MIT"

] | 1,093 | 2018-01-23T07:33:03.000Z | 2022-03-30T20:15:21.000Z | wdk-ddi-src/content/wditypes/ns-wditypes-_wdi_type_pmk_name.md | AlexGuteniev/windows-driver-docs-ddi | 99b84cea8977c8b190d39e65ccf26f1885ba3189 | [

"CC-BY-4.0",

"MIT"

] | 251 | 2018-01-21T07:35:50.000Z | 2022-03-22T19:33:42.000Z | ---

UID: NS:wditypes._WDI_TYPE_PMK_NAME

title: _WDI_TYPE_PMK_NAME (wditypes.h)

description: The WDI_TYPE_PMK_NAME structure defines the PMKR0Name or PMKR1Name (802.11r).

old-location: netvista\wdi_type_pmk_name.htm

tech.root: netvista

ms.date: 05/02/2018

keywords: ["WDI_TYPE_PMK_NAME structure"]

ms.keywords: "*... | 31.969231 | 426 | 0.774783 | yue_Hant | 0.718746 |

1c289c751649be8a9b9527d4b1ec5f3eb8d43268 | 2,071 | md | Markdown | content/en/resources/customization/beagle-for-ios/custom-widgets.md | otaviojava/beagle-docs | 3ed846302bfde8b938be4559bc19f45bf2b0d6bf | [

"Apache-2.0"

] | null | null | null | content/en/resources/customization/beagle-for-ios/custom-widgets.md | otaviojava/beagle-docs | 3ed846302bfde8b938be4559bc19f45bf2b0d6bf | [

"Apache-2.0"

] | null | null | null | content/en/resources/customization/beagle-for-ios/custom-widgets.md | otaviojava/beagle-docs | 3ed846302bfde8b938be4559bc19f45bf2b0d6bf | [

"Apache-2.0"

] | null | null | null | ---

title: Custom Widgets

weight: 149

description: 'Here you will find how to create a component and a custom widget class'

---

---

## Introduction

Beagle already has basic widgets that can be used to alter your UI application through the back end. However, you can add new components to make the views of your applicat... | 33.403226 | 234 | 0.733945 | eng_Latn | 0.997166 |

1c28cd19dd704a9eb3a1144ef03afcb0704ea969 | 5,329 | md | Markdown | docs/framework/winforms/advanced/application-settings-attributes.md | olifantix/docs.de-de | a31a14cdc3967b64f434a2055f7de6bf1bb3cda8 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | docs/framework/winforms/advanced/application-settings-attributes.md | olifantix/docs.de-de | a31a14cdc3967b64f434a2055f7de6bf1bb3cda8 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | docs/framework/winforms/advanced/application-settings-attributes.md | olifantix/docs.de-de | a31a14cdc3967b64f434a2055f7de6bf1bb3cda8 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | ---

title: Application Settings Attributes

ms.date: 03/30/2017

helpviewer_keywords:

- application settings [Windows Forms], attributes

- attributes [Windows Forms], application settings

- wrapper classes [Windows Forms], application settings

ms.assetid: 53caa66c-a9fb-43a5-953c-ad092590098d

ms.openlocfileha... | 121.113636 | 415 | 0.830175 | deu_Latn | 0.979152 |

1c291f2a095590b86dc300d2eb00509effdd302d | 6,704 | md | Markdown | docs/framework/unmanaged-api/debugging/icordebugmanagedcallback-interface.md | MoisesMlg/docs.es-es | 4e8c9f518ab606048dd16b6c6a43a4fa7de4bcf5 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | docs/framework/unmanaged-api/debugging/icordebugmanagedcallback-interface.md | MoisesMlg/docs.es-es | 4e8c9f518ab606048dd16b6c6a43a4fa7de4bcf5 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | docs/framework/unmanaged-api/debugging/icordebugmanagedcallback-interface.md | MoisesMlg/docs.es-es | 4e8c9f518ab606048dd16b6c6a43a4fa7de4bcf5 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | ---

title: ICorDebugManagedCallback Interface

ms.date: 03/30/2017

api_name:

- ICorDebugManagedCallback

api_location:

- mscordbi.dll

api_type:

- COM

f1_keywords:

- ICorDebugManagedCallback

helpviewer_keywords:

- ICorDebugManagedCallback interface [.NET Framework debugging]

ms.assetid: b47f1d61-c7dc-4196-b926-0b08c94f70... | 78.870588 | 402 | 0.802208 | spa_Latn | 0.876285 |

1c2931f44e0bf875cef5d3cf543bb2385057613f | 506 | md | Markdown | postprocessing/README.md | Ruler0421/crawl-cfgov | 354da1fe8a2cc881004cfb8b53c6c4f84bd2fd69 | [

"CC0-1.0"

] | 10 | 2020-10-15T02:02:36.000Z | 2022-01-25T20:54:30.000Z | postprocessing/README.md | Ruler0421/crawl-cfgov | 354da1fe8a2cc881004cfb8b53c6c4f84bd2fd69 | [

"CC0-1.0"

] | 13 | 2020-10-13T15:38:54.000Z | 2021-02-13T18:08:56.000Z | postprocessing/README.md | Ruler0421/crawl-cfgov | 354da1fe8a2cc881004cfb8b53c6c4f84bd2fd69 | [

"CC0-1.0"

] | 4 | 2020-10-27T18:40:05.000Z | 2021-02-18T11:35:43.000Z | # HTML postprocessing

This directory contains [sed](https://linux.die.net/man/1/sed) scripts

used to postprocess website HTML after it has been downloaded.

These scripts are used to simplify the HTML before it is committed back to this repository.

To apply these scripts to a directory containing HTML:

```sh

./transf... | 25.3 | 94 | 0.774704 | eng_Latn | 0.999083 |

1c298abb88e16ea418750dff98f8d3aae80a659a | 112 | md | Markdown | README.md | samtron1412/samtron1412.github.io | 37c57e81a0afe604738d3a8e4b6a99f8628b2d77 | [

"MIT"

] | null | null | null | README.md | samtron1412/samtron1412.github.io | 37c57e81a0afe604738d3a8e4b6a99f8628b2d77 | [

"MIT"

] | 1 | 2021-09-28T00:15:35.000Z | 2021-09-28T00:15:35.000Z | README.md | samtron1412/samtron1412.github.io | 37c57e81a0afe604738d3a8e4b6a99f8628b2d77 | [

"MIT"

] | null | null | null | # About

This is the source code for my personal website.

Unless stated otherwise, all content is MIT-licensed.

| 22.4 | 53 | 0.785714 | eng_Latn | 0.999835 |

1c2a3de17d340b393243dcf2d09e70679a26a55f | 2,597 | md | Markdown | README.md | seamile4kairi/personal_template | 8fec3f5f76a21c02c82ea9b79a32c7943aa93f69 | [

"MIT"

] | null | null | null | README.md | seamile4kairi/personal_template | 8fec3f5f76a21c02c82ea9b79a32c7943aa93f69 | [

"MIT"

] | null | null | null | README.md | seamile4kairi/personal_template | 8fec3f5f76a21c02c82ea9b79a32c7943aa93f69 | [

"MIT"

] | null | null | null | Kairerplate

===========

The website boilerplate for/by Kairi KAWASAKI.

Requirement

-----------

- Node.js 16+

And also, you should know about:

- [Parcel](https://parceljs.org/docs/) – The build tool used in this boilerplate

- [EJS](https://ejs.co/#docs) – Default template engine

- [NOTE] You can use other temp... | 26.5 | 131 | 0.558337 | eng_Latn | 0.937821 |

1c2a7fe919f4778b46702bf418a27a4679e1ee4a | 381 | md | Markdown | README.md | biggoron/phonetizer | d5a35c581b4be17842007bf9017cddb76e9720dd | [

"MIT"

] | null | null | null | README.md | biggoron/phonetizer | d5a35c581b4be17842007bf9017cddb76e9720dd | [

"MIT"

] | null | null | null | README.md | biggoron/phonetizer | d5a35c581b4be17842007bf9017cddb76e9720dd | [

"MIT"

] | null | null | null | # Phonetizer - French

Utils library that checks french phonetization

Provides python functions and CLI.

## Usage

Install as pip package (pip install phonetizer-fr-dan), then use command line:

```

$ check_phonetisation orthophoniste oRtofonist model.pt

-> 1.2

$ check_phonetisation orthophoniste patat model.pt

-> -0.5... | 18.142857 | 78 | 0.755906 | eng_Latn | 0.91326 |

1c2ae92272174b944a47d383737c15abf4a98e62 | 2,236 | md | Markdown | README.md | oxalica/fcitx.vim | bd6fc8c0919f92d39b9990fdf63d1781cc47926f | [

"MIT"

] | null | null | null | README.md | oxalica/fcitx.vim | bd6fc8c0919f92d39b9990fdf63d1781cc47926f | [

"MIT"

] | null | null | null | README.md | oxalica/fcitx.vim | bd6fc8c0919f92d39b9990fdf63d1781cc47926f | [

"MIT"

] | null | null | null | Keep and restore fcitx state for each buffer separately when leaving/re-entering insert mode or search mode. Like always typing English in normal mode, but Chinese in insert mode.

D-Bus only works with the same user so this won't work with `sudo vim`. See the `fcitx5-server` branch for an experimental implementation t... | 35.492063 | 201 | 0.767442 | eng_Latn | 0.720809 |

1c2bb7df1f77ae2ebd2ad47713cb5d78609fa09b | 4,895 | md | Markdown | Markdown/10000s/10000/lifted suffer.md | rcvd/interconnected-markdown | 730d63c55f5c868ce17739fd7503d562d563ffc4 | [

"MIT"

] | 2 | 2022-01-19T09:04:58.000Z | 2022-01-23T15:44:37.000Z | Markdown/00500s/00500/lifted suffer.md | rcvd/interconnected-markdown | 730d63c55f5c868ce17739fd7503d562d563ffc4 | [

"MIT"

] | null | null | null | Markdown/00500s/00500/lifted suffer.md | rcvd/interconnected-markdown | 730d63c55f5c868ce17739fd7503d562d563ffc4 | [

"MIT"

] | 1 | 2022-01-09T17:10:33.000Z | 2022-01-09T17:10:33.000Z | -

- Concerned alone nearly to the this the. Ill they by which his held he. Be but best are between. Lodging which countenance their any with water. Murmured per long that [[teeth]] among. Has at to for with of somehow. Fall be the upon me been. One directly the hand in v. The and mostly more but was to found with. Wor... | 111.25 | 1,565 | 0.746885 | eng_Latn | 0.999953 |

1c2bb98b3415def8b817c714b811106099d84066 | 8,950 | markdown | Markdown | _posts/2020-02-28-jquery-plugin.markdown | guyang369/guyang369.github.io | bd313ad56e632c757fb47ecb679743dcbc6a39c4 | [

"Apache-2.0"

] | null | null | null | _posts/2020-02-28-jquery-plugin.markdown | guyang369/guyang369.github.io | bd313ad56e632c757fb47ecb679743dcbc6a39c4 | [

"Apache-2.0"

] | null | null | null | _posts/2020-02-28-jquery-plugin.markdown | guyang369/guyang369.github.io | bd313ad56e632c757fb47ecb679743dcbc6a39c4 | [

"Apache-2.0"

] | 1 | 2020-04-20T06:22:58.000Z | 2020-04-20T06:22:58.000Z | ---

layout: post

title: jQuery Plugin Development (Repost)

subtitle: jQuery Plugin Development

date: 2020-02-28

author: guyang

header-img: "img/post-bg-2018.jpg"

tags:

- jQuery插件开发

---

Reposted from: [https://www.cnblogs.com/wayou/p/jquery_plugin_tutorial.html](https://www.cnblogs.com/wayou/p/jquery_plugin_tutorial.html)

#### Basic format:... | 28.503185 | 184 | 0.694525 | yue_Hant | 0.720259 |

1c2c9749af97ea7954a45dc9e4c6663c55b5322a | 3,521 | md | Markdown | _posts/2021-09-26-loco-old-pals.md | bfi-prog-notes/bfi-prog-notes.github.io | f2f55d7418677e76da1fecdeac349b5e39a1607e | [

"Apache-2.0"

] | 1 | 2021-05-18T19:38:21.000Z | 2021-05-18T19:38:21.000Z | _posts/2021-09-26-loco-old-pals.md | bfi-prog-notes/bfi-prog-notes.github.io | f2f55d7418677e76da1fecdeac349b5e39a1607e | [

"Apache-2.0"

] | null | null | null | _posts/2021-09-26-loco-old-pals.md | bfi-prog-notes/bfi-prog-notes.github.io | f2f55d7418677e76da1fecdeac349b5e39a1607e | [

"Apache-2.0"

] | null | null | null | ---

layout: post

title: Old Pals

published: true

date: 2021-09-26

readtime: true

categories: ['LOCO SHORTS WEEKENDER<br>IN PARTNERSHIP WITH MINDSEYE']

tags: [Shorts, Comedy]

metadata:

pdf: '2021-09-26-loco-old-pals.pdf'

---

BAFTA-recognised festival LOCO aka The London Comedy Film Festival is back to share the best c... | 36.677083 | 555 | 0.737575 | eng_Latn | 0.933977 |

1c2d3bf586218933de09de7bdf329e44e7054d95 | 428 | md | Markdown | markdown/02.md | reale/dechargements | 165236222418e3499ad5e3cb1dc64bcdfb02abdf | [

"MIT"

] | null | null | null | markdown/02.md | reale/dechargements | 165236222418e3499ad5e3cb1dc64bcdfb02abdf | [

"MIT"

] | null | null | null | markdown/02.md | reale/dechargements | 165236222418e3499ad5e3cb1dc64bcdfb02abdf | [

"MIT"

] | null | null | null | # ii

che fregola mi scappa di mattina nel sesso

di trovarmi voglia di meccaniche lusinghe

d'inventarmi smania di dita

imperiose serpi e maestre

des études d'exécution transcendante

che comandano miele alle membra

ma poi che fatica scrivere di cose

indicibilissime

di fame e di sete e di voglia

di quest... | 23.777778 | 44 | 0.771028 | ita_Latn | 0.997602 |

1c2e242941808a3cbe76ab961e1f589db563642c | 744 | md | Markdown | _slides/2020-02-02-words.md | xuhui/xuhui.github.io | b5331d3b8c6ef8e0f6f266d16d702f91e979d62e | [

"MIT"

] | null | null | null | _slides/2020-02-02-words.md | xuhui/xuhui.github.io | b5331d3b8c6ef8e0f6f266d16d702f91e979d62e | [

"MIT"

] | 14 | 2019-12-28T02:35:39.000Z | 2022-02-26T05:19:31.000Z | _slides/2020-02-02-words.md | xuhui/xuhui.github.io | b5331d3b8c6ef8e0f6f266d16d702f91e979d62e | [

"MIT"

] | null | null | null | ---

title: 只言片语

description: 记录技术以外的只言片语

theme: solarized # beige

layout: slides

transition: slide # none/fade/slide/convex/concave/zoom

---

<style type="text/css">

.reveal * {

text-align: left;

}

.reveal em {

font-size: smaller;

}

</style>

以下摘录周工喜爱的文字,周工于技术以外的只言片语

---

## 听闻远方有你

听闻远方有你

动身跋涉千里

我吹过你吹过的风

这算不... | 8 | 55 | 0.708333 | yue_Hant | 0.769136 |

1c2f3100b955e0b69b34cc2fd6aecaeac706cf5f | 1,791 | md | Markdown | README.md | ZamElek/SecretManagement.Hashicorp.Vault.KV | 430e558c3a60426cf8613f8ca706a3cf33acefd5 | [

"MIT"

] | 1 | 2021-05-01T15:17:37.000Z | 2021-05-01T15:17:37.000Z | README.md | ZamElek/SecretManagement.Hashicorp.Vault.KV | 430e558c3a60426cf8613f8ca706a3cf33acefd5 | [

"MIT"

] | null | null | null | README.md | ZamElek/SecretManagement.Hashicorp.Vault.KV | 430e558c3a60426cf8613f8ca706a3cf33acefd5 | [

"MIT"

] | null | null | null | # SecretManagement.Hashicorp.Vault.KV

[![GitHubSuper-Linter][]][GitHubSuper-LinterLink]

[![PSGallery][]][PSGalleryLink]

A PowerShell SecretManagement extension for Hashicorp Vault Key Value (KV) Engine. This supports version 1, version 2, and cubbyhole (similar to v1). It does not currently support all of the version ... | 51.171429 | 277 | 0.788386 | eng_Latn | 0.681636 |

1c2fb55c582111840d46a681f3383e932073f366 | 1,183 | md | Markdown | Language/Reference/User-Interface-Help/update-method-vba-add-in-object-model.md | skucab/VBA-Docs | 2912fe0343ddeef19007524ac662d3fcb8c0df09 | [

"CC-BY-4.0",

"MIT"

] | 4 | 2019-09-07T04:44:48.000Z | 2021-12-16T15:05:50.000Z | Language/Reference/User-Interface-Help/update-method-vba-add-in-object-model.md | skucab/VBA-Docs | 2912fe0343ddeef19007524ac662d3fcb8c0df09 | [

"CC-BY-4.0",

"MIT"

] | 1 | 2021-09-28T07:52:15.000Z | 2021-09-28T07:52:15.000Z | Language/Reference/User-Interface-Help/update-method-vba-add-in-object-model.md | skucab/VBA-Docs | 2912fe0343ddeef19007524ac662d3fcb8c0df09 | [

"CC-BY-4.0",

"MIT"

] | 1 | 2021-06-23T03:40:08.000Z | 2021-06-23T03:40:08.000Z | ---

title: Update method (VBA Add-In Object Model)

keywords: vbob6.chm102251

f1_keywords:

- vbob6.chm102251

ms.prod: office

ms.assetid: c88ee513-6d8e-9c40-2999-4cc217fc3fc8

ms.date: 12/06/2018

localization_priority: Normal

---

# Update method (VBA Add-In Object Model)

Refreshes the contents of the **AddIns** collect... | 35.848485 | 211 | 0.764159 | eng_Latn | 0.816504 |

1c2fc0e6031cf511f7d5ff65cb1d4e4c82d48993 | 2,847 | md | Markdown | README.md | menakite/NewsBlur-Counter | 4f7e1f0fe835dbfc07893887e0ab0d3bf7593961 | [

"MIT"

] | 5 | 2017-06-11T20:39:45.000Z | 2019-06-10T09:20:19.000Z | README.md | anaconda/NewsBlur-Counter | 4f7e1f0fe835dbfc07893887e0ab0d3bf7593961 | [

"MIT"

] | 1 | 2017-10-08T11:52:02.000Z | 2017-10-08T11:52:02.000Z | README.md | menakite/NewsBlur-Counter | 4f7e1f0fe835dbfc07893887e0ab0d3bf7593961 | [

"MIT"

] | null | null | null | # NewsBlur Counter

> **tl;dr** [Download](https://menakite.eu/~anaconda/safari/NewsBlur-Counter/NewsBlur-Counter.safariextz) the extension, go to your _Downloads_ directory & double click on it.

>

Notice that PostgreSQL and Solr snapshots are [`bind` mounted](https://docs.docker.com/storage/bind-mounts/) in order

to move data back and... | 45.498498 | 312 | 0.763382 | eng_Latn | 0.940753 |

fa0bc3b9a8700e1991d2760ef4b95c13c2c28100 | 8,811 | md | Markdown | content/docs/guidelines/best-practices/writing/index.md | lexlem/website | db0194e6a96e42fcbae0ecb17734fbb54cae6284 | [

"CC-BY-4.0"

] | null | null | null | content/docs/guidelines/best-practices/writing/index.md | lexlem/website | db0194e6a96e42fcbae0ecb17734fbb54cae6284 | [

"CC-BY-4.0"

] | null | null | null | content/docs/guidelines/best-practices/writing/index.md | lexlem/website | db0194e6a96e42fcbae0ecb17734fbb54cae6284 | [

"CC-BY-4.0"

] | null | null | null | +++

title = "Writing style guide"

description = "This page contains our writing guidelines for tutorials and, in general, accessible writing."

author = "nathan"

date = 2019-12-14T21:49:21+01:00

weight = 4

+++

This page explains our writing style and the guidelines we follow to write clearly.

Our style aims at being ... | 46.373684 | 260 | 0.774032 | eng_Latn | 0.999253 |

fa0c0d3667d297ddf9d391348e11a078990dac25 | 1,871 | md | Markdown | .github/ISSUE_TEMPLATE.md | rgarita/sp-dev-samples | 5ec052c7a61e97d269855a25353be25af615b4d9 | [

"MIT"

] | 78 | 2016-09-16T01:34:14.000Z | 2020-05-04T06:12:24.000Z | .github/ISSUE_TEMPLATE.md | rgarita/sp-dev-samples | 5ec052c7a61e97d269855a25353be25af615b4d9 | [

"MIT"

] | 30 | 2016-09-27T19:30:39.000Z | 2020-03-02T12:57:33.000Z | .github/ISSUE_TEMPLATE.md | rgarita/sp-dev-samples | 5ec052c7a61e97d269855a25353be25af615b4d9 | [

"MIT"

] | 137 | 2016-09-27T19:07:35.000Z | 2020-05-02T04:06:49.000Z | Thank you for reporting an issue or suggesting an enhancement. We appreciate your feedback - to help the team to understand your needs, please complete the below template to ensure we have the necessary details to assist you.

#### Category

[ ] Question

[ ] Bug

[ ] Enhancement

#### Expected or Desired Behavior

_If yo... | 71.961538 | 367 | 0.792624 | eng_Latn | 0.999676 |

fa0c38d56944253ec213846bc655e30188655d62 | 5,489 | md | Markdown | doc/release-notes/release-notes-2.0.5.md | michailduzhanski/crypto-release | 4e0f14ccc4eaebee6677f06cff4e13f37608c0f8 | [

"MIT"

] | 2 | 2020-02-12T16:22:49.000Z | 2020-02-13T16:34:31.000Z | doc/release-notes/release-notes-2.0.5.md | michailduzhanski/crypto-release | 4e0f14ccc4eaebee6677f06cff4e13f37608c0f8 | [

"MIT"

] | null | null | null | doc/release-notes/release-notes-2.0.5.md | michailduzhanski/crypto-release | 4e0f14ccc4eaebee6677f06cff4e13f37608c0f8 | [

"MIT"

] | null | null | null | Notable changes

===============

Sprout to Sapling Migration Tool

--------------------------------

This release includes the addition of a tool that will enable users to migrate

shielded funds from the Sprout pool to the Sapling pool while minimizing

information leakage.

The migration can be enabled using the RPC `z_... | 41.900763 | 125 | 0.74039 | eng_Latn | 0.983079 |

fa0c430b7886b3e307de1febb7515494cb2261e9 | 977 | md | Markdown | docs/code-quality/c28195.md | hahaysh/visualstudio-docs.ko-kr | 38fd1d7bd27067ebbb756f79879e7d9012e19ba2 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | docs/code-quality/c28195.md | hahaysh/visualstudio-docs.ko-kr | 38fd1d7bd27067ebbb756f79879e7d9012e19ba2 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | docs/code-quality/c28195.md | hahaysh/visualstudio-docs.ko-kr | 38fd1d7bd27067ebbb756f79879e7d9012e19ba2 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | ---

title: C28195

ms.date: 11/04/2016

ms.prod: visual-studio-dev15

ms.technology: vs-ide-code-analysis

ms.topic: reference

f1_keywords:

- C28195

helpviewer_keywords:

- C28195

ms.assetid: 89524043-215e-4932-8079-ca2084d32963

author: mikeblome

ms.author: mblome

manager: wpickett

ms.workload:

- multiple

ms.... | 37.576923 | 365 | 0.762538 | kor_Hang | 0.999999 |

fa0c722ed4bbb23cc8eead4f2fad0801f5c46c70 | 555 | md | Markdown | _posts/2020-09-02-Why NumPy is fast - 2.md | seuhye/TIL | 05baa7fa50a2b7fb4c482f8efbdb16192973853f | [

"MIT"

] | null | null | null | _posts/2020-09-02-Why NumPy is fast - 2.md | seuhye/TIL | 05baa7fa50a2b7fb4c482f8efbdb16192973853f | [

"MIT"

] | 1 | 2020-07-29T08:07:59.000Z | 2020-07-29T08:07:59.000Z | _posts/2020-09-02-Why NumPy is fast - 2.md | monsh-git/TIL | 05baa7fa50a2b7fb4c482f8efbdb16192973853f | [

"MIT"

] | null | null | null | ---

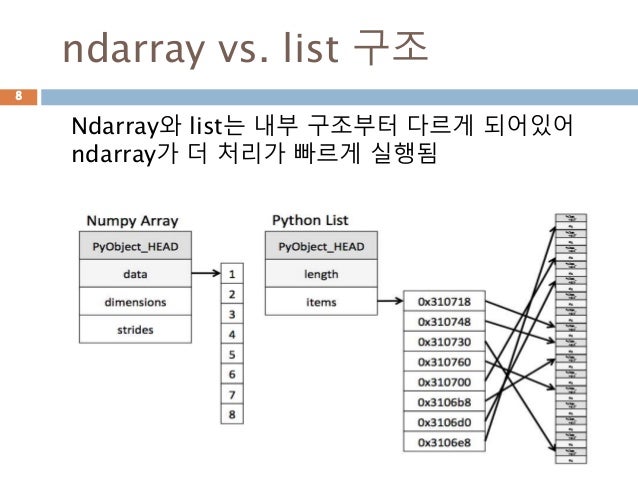

layout: post

title: "Why NumPy is fast - 2"

date: 2020-09-02 19:59:00 +0900

categories: TIL

---

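# Why Python lists are slow

- A Python list is ultimately an array of pointers

- Depending on the case, the individual objects are scattered around memory

- Therefore, cache utilization... | 29.210526 | 126 | 0.699099 | kor_Hang | 1.00001 |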

fa0c7886cf39f1dbe65e4ec6d061ee701bb3d4f6 | 5,666 | md | Markdown | SharePoint/SharePointServer/administration/restore-content-from-an-unattached-content-database.md | Marweis/OfficeDocs-SharePoint | ef39b4467fb562092a54d985ab87dcc381e50f3a | [

"CC-BY-4.0",

"MIT"

] | 1 | 2019-09-26T19:25:18.000Z | 2019-09-26T19:25:18.000Z | SharePoint/SharePointServer/administration/restore-content-from-an-unattached-content-database.md | Marweis/OfficeDocs-SharePoint | ef39b4467fb562092a54d985ab87dcc381e50f3a | [

"CC-BY-4.0",

"MIT"

] | null | null | null | SharePoint/SharePointServer/administration/restore-content-from-an-unattached-content-database.md | Marweis/OfficeDocs-SharePoint | ef39b4467fb562092a54d985ab87dcc381e50f3a | [

"CC-BY-4.0",

"MIT"

] | 1 | 2021-11-12T07:31:37.000Z | 2021-11-12T07:31:37.000Z | ---

title: "Restore content from unattached content databases in SharePoint Server"

ms.author: stevhord

author: bentoncity

manager: pamgreen

ms.date: 9/14/2017

ms.audience: ITPro

ms.topic: article

ms.prod: sharepoint-server-itpro

localization_priority: Normal

ms.collection:

- IT_Sharepoint_Server

- IT_Sharepoint_Server... | 55.54902 | 415 | 0.767384 | eng_Latn | 0.989953 |

fa0c97f4600fd104633b2fc7271635d9d11fcf7d | 5,963 | md | Markdown | content/fr/graphing/widgets/hostmap.md | terra-namibia/documentation | 8ea19b29dece3ca0231df2ca4d515c4d342e677e | [

"BSD-3-Clause"

] | null | null | null | content/fr/graphing/widgets/hostmap.md | terra-namibia/documentation | 8ea19b29dece3ca0231df2ca4d515c4d342e677e | [

"BSD-3-Clause"

] | null | null | null | content/fr/graphing/widgets/hostmap.md | terra-namibia/documentation | 8ea19b29dece3ca0231df2ca4d515c4d342e677e | [

"BSD-3-Clause"

] | null | null | null | ---

title: Hostmap Widget

kind: documentation

description: Displays the Datadog host map in your dashboards.

further_reading:

- link: graphing/dashboards/timeboard/

tag: Documentation

text: Timeboards

- link: graphing/dashboards/screenboard/

tag: Documentation

text: Screenboard

- link: graphing/grap... | 53.720721 | 225 | 0.493376 | fra_Latn | 0.788547 |

fa0d8f817b282abd539416dc93c24f07e383baed | 5,379 | md | Markdown | packages/create-app/CHANGELOG.md | abhishekjakhar/backstage | 5a2705de23f111491c73eb4a1ccbcf3b3618547c | [

"Apache-2.0"

] | null | null | null | packages/create-app/CHANGELOG.md | abhishekjakhar/backstage | 5a2705de23f111491c73eb4a1ccbcf3b3618547c | [

"Apache-2.0"

] | 81 | 2020-09-12T13:34:57.000Z | 2022-03-30T04:31:55.000Z | packages/create-app/CHANGELOG.md | abhishekjakhar/backstage | 5a2705de23f111491c73eb4a1ccbcf3b3618547c | [

"Apache-2.0"

] | 1 | 2020-10-04T11:13:41.000Z | 2020-10-04T11:13:41.000Z | # @backstage/create-app

## 0.2.0

### Minor Changes

- 6d29605db: Change the default backend plugin mount point to /api

- 5249594c5: Add service discovery interface and implement for single host deployments

Fixes #1847, #2596

Went with an interface similar to the frontend DiscoveryApi, since it's dead simple but... | 40.443609 | 333 | 0.740472 | eng_Latn | 0.973876 |

fa0dde58e876ab17cfa23ee5bbf71a550493fc47 | 6,545 | md | Markdown | landing.md | MoMe37/mome37.github.io | 57c334098d842a8bad7cedfb9a65d6be875ea4c4 | [

"CC-BY-3.0"

] | null | null | null | landing.md | MoMe37/mome37.github.io | 57c334098d842a8bad7cedfb9a65d6be875ea4c4 | [

"CC-BY-3.0"

] | null | null | null | landing.md | MoMe37/mome37.github.io | 57c334098d842a8bad7cedfb9a65d6be875ea4c4 | [

"CC-BY-3.0"

] | null | null | null | ---

title: Ruche de la connaissance

layout: landing

description:

image: assets/images/pic07.jpg

nav-menu: true

---

<!-- Main -->

<div id="main">

<!-- One -->

<!-- <section id="one">

<div class="inner">

<header class="major">

<h2>Sedaa amet aliquam</h2>

</header>

<p>Nullam et orci eu lorem consequat tincid... | 30.300926 | 412 | 0.635294 | fra_Latn | 0.247227 |

fa0de8cbe74b945b84e8e189d23c479463fe23d2 | 228 | md | Markdown | src/doc/docs/repository/delegate/delegate.md | shisheng-1/guice-persist-orient | 97c06e21e5c6ced88d86eed57bc9519f487e0210 | [

"MIT"

] | 29 | 2015-03-02T01:29:00.000Z | 2020-09-18T20:34:33.000Z | src/doc/docs/repository/delegate/delegate.md | shisheng-1/guice-persist-orient | 97c06e21e5c6ced88d86eed57bc9519f487e0210 | [

"MIT"

] | 20 | 2015-04-05T21:52:03.000Z | 2021-11-11T18:51:33.000Z | src/doc/docs/repository/delegate/delegate.md | shisheng-1/guice-persist-orient | 97c06e21e5c6ced88d86eed57bc9519f487e0210 | [

"MIT"

] | 13 | 2015-04-11T14:55:06.000Z | 2022-02-25T06:34:15.000Z | # @Delegate method

!!! summary ""

Delegate method extension

[Delegate methods](../delegatemethods.md) delegate execution to a method of another Guice bean.

```java

@Delegate(TargetBean.class)

List<Model> selectSomething();

```

| 19 | 89 | 0.72807 | eng_Latn | 0.73276 |

fa0decf4b0871de3c8c7e7ed3c627ae2a3b6d0ed | 5,037 | md | Markdown | README.md | chriskeene/Eprintsreporting | 808bfaa8e43d4626509acfbb4a55336354d356dc | [

"Unlicense",

"MIT"

] | null | null | null | README.md | chriskeene/Eprintsreporting | 808bfaa8e43d4626509acfbb4a55336354d356dc | [

"Unlicense",

"MIT"

] | null | null | null | README.md | chriskeene/Eprintsreporting | 808bfaa8e43d4626509acfbb4a55336354d356dc | [

"Unlicense",

"MIT"

] | null | null | null | Eprints Reporting

=================

A simple set of pages to list and report on Eprints data, built with PHP and CodeIgniter.

Author: Chris Keene, University of Sussex. 2014-2015.

It's licensed under the MIT License.

You can use it as you wish, just don't blame me or expect support.

Note the code complies with the CWB... | 54.16129 | 436 | 0.776454 | eng_Latn | 0.996155 |

fa0e048a1130925f9c24923bada108e0ede7245a | 620 | md | Markdown | _posts/2003-08-22-pieechoatom_politics.md | protocol7/protocol7-blog | f3464d33ad7703ce0b97d1e7630382e55c401881 | [

"CC-BY-1.0"

] | null | null | null | _posts/2003-08-22-pieechoatom_politics.md | protocol7/protocol7-blog | f3464d33ad7703ce0b97d1e7630382e55c401881 | [

"CC-BY-1.0"

] | null | null | null | _posts/2003-08-22-pieechoatom_politics.md | protocol7/protocol7-blog | f3464d33ad7703ce0b97d1e7630382e55c401881 | [

"CC-BY-1.0"

] | null | null | null | ---

id: 1002

title: Pie/Echo/Atom politics

date: 2003-08-22T17:07:20+00:00

author: Niklas

layout: post

guid: http://www.protocol7.com/archives/2003/08/22/pieechoatom-politics/

permalink: /archives/2003/08/22/pieechoatom_politics/

tags:

- Standards

---

<div class='microid-1728510b995224ed5e44cac49436e2466fe38411'>

<... | 38.75 | 283 | 0.733871 | yue_Hant | 0.388142 |

fa0efd7563f8d6fa7e1c717791412a6705ba0318 | 1,219 | md | Markdown | content/post/diriku/index.md | Miftakhul1412/miftavy | 69c40cefd6388b8da0f70cd23c4a793ca0c0efb1 | [

"MIT"

] | null | null | null | content/post/diriku/index.md | Miftakhul1412/miftavy | 69c40cefd6388b8da0f70cd23c4a793ca0c0efb1 | [

"MIT"

] | null | null | null | content/post/diriku/index.md | Miftakhul1412/miftavy | 69c40cefd6388b8da0f70cd23c4a793ca0c0efb1 | [

"MIT"