hexsha stringlengths 40 40 | size int64 5 1.04M | ext stringclasses 6 values | lang stringclasses 1 value | max_stars_repo_path stringlengths 3 344 | max_stars_repo_name stringlengths 5 125 | max_stars_repo_head_hexsha stringlengths 40 78 | max_stars_repo_licenses listlengths 1 11 | max_stars_count int64 1 368k ⌀ | max_stars_repo_stars_event_min_datetime stringlengths 24 24 ⌀ | max_stars_repo_stars_event_max_datetime stringlengths 24 24 ⌀ | max_issues_repo_path stringlengths 3 344 | max_issues_repo_name stringlengths 5 125 | max_issues_repo_head_hexsha stringlengths 40 78 | max_issues_repo_licenses listlengths 1 11 | max_issues_count int64 1 116k ⌀ | max_issues_repo_issues_event_min_datetime stringlengths 24 24 ⌀ | max_issues_repo_issues_event_max_datetime stringlengths 24 24 ⌀ | max_forks_repo_path stringlengths 3 344 | max_forks_repo_name stringlengths 5 125 | max_forks_repo_head_hexsha stringlengths 40 78 | max_forks_repo_licenses listlengths 1 11 | max_forks_count int64 1 105k ⌀ | max_forks_repo_forks_event_min_datetime stringlengths 24 24 ⌀ | max_forks_repo_forks_event_max_datetime stringlengths 24 24 ⌀ | content stringlengths 5 1.04M | avg_line_length float64 1.14 851k | max_line_length int64 1 1.03M | alphanum_fraction float64 0 1 | lid stringclasses 191 values | lid_prob float64 0.01 1 |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

e90e27df38bea2bd32b36b86af61b5372d00ca41 | 64 | md | Markdown | NEWS.md | validmeasures/somalia | fe0d920a53ea05f5cdf31c859d3f3c2f71998018 | [

"CC0-1.0"

] | 1 | 2018-05-02T02:15:02.000Z | 2018-05-02T02:15:02.000Z | NEWS.md | validmeasures/somalia | fe0d920a53ea05f5cdf31c859d3f3c2f71998018 | [

"CC0-1.0"

] | null | null | null | NEWS.md | validmeasures/somalia | fe0d920a53ea05f5cdf31c859d3f3c2f71998018 | [

"CC0-1.0"

] | null | null | null | # somalia v0.1.0

* First release of `somalia` dataset package.

| 16 | 45 | 0.71875 | eng_Latn | 0.660702 |

e90e33b205f68bec1c3f5e412be4aa5939b35a4a | 4,248 | md | Markdown | README.md | FroggieFrog/SettingsPlugin | d19211711b9cba068e76e1775eb4006469b52065 | [

"MIT"

] | 375 | 2016-06-28T20:06:52.000Z | 2022-03-20T14:45:12.000Z | README.md | FroggieFrog/SettingsPlugin | d19211711b9cba068e76e1775eb4006469b52065 | [

"MIT"

] | 139 | 2016-06-29T04:49:58.000Z | 2021-03-23T22:01:15.000Z | README.md | FroggieFrog/SettingsPlugin | d19211711b9cba068e76e1775eb4006469b52065 | [

"MIT"

] | 121 | 2016-08-14T06:13:57.000Z | 2022-02-27T20:58:58.000Z | # Settings Plugin for Xamarin And Windows

Create and access settings from shared code across all of your apps!

## Documentation

Get started by reading through the [Settings Plugin documentation](https://jamesmontemagno.github.io/SettingsPlugin/).

Looking to store credentials and sensitive information? Use Xamarin.Essentials' [Secure Storage](https://docs.microsoft.com/xamarin/essentials/secure-storage?WT.mc_id=friends-0000-jamont)

## NuGet

* [Xam.Plugins.Settings](http://www.nuget.org/packages/Xam.Plugins.Settings)

### The Future: [Xamarin.Essentials](https://docs.microsoft.com/xamarin/essentials/index?WT.mc_id=friends-0000-jamont)

I have been working on Plugins for Xamarin for a long time now. Through the years I have always wanted to create a single, optimized, and official package from the Xamarin team at Microsoft that could easily be consumed by any application. The time is now with [Xamarin.Essentials](https://docs.microsoft.com/xamarin/essentials/index?WT.mc_id=friends-0000-jamont), which offers over 50 cross-platform native APIs in a single optimized package. I worked on this new library with an amazing team of developers and I highly highly highly recommend you check it out.

I will continue to work on and maintain my Plugins, but I do recommend you check out Xamarin.Essentials to see if it is a great fit for your app, as it has been for all of mine!

### Xamarin.Essentials Migration

This plugin and Xamarin.Essentials store information in the same exact location :). This means you can seamlessly swap out this plugin for Xamarin.Essentials and not lose any data. Check out my blog for more info: https://montemagno.com/upgrading-from-plugins-to-xamarin-essentials/

## Build:

* CI NuGet Feed: http://myget.org/F/xamarin-plugins

**Platform Support**

|Platform|Version|

| ------------------- | :-----------: |

|Xamarin.iOS|iOS 7+|

|Xamarin.Android|API 15+|

|Windows 10 UWP|10+|

|Xamarin.Mac|All|

|Xamarin.tvOS|All|

|Xamarin.watchOS|All|

|.NET|4.5+|

|.NET Core|2.0+|

|Tizen|4.0+|

#### Settings Plugin or Xamarin.Forms App.Properties

I get this question a lot, so here is the answer from a recently opened issue. This plugin saves specific properties directly to each platform's native settings APIs (NSUserDefaults, SharedPreferences, etc.). This ensures the fastest, most secure, and most reliable creation and editing of settings per application. Additionally, it works with **any Xamarin application**, not just Xamarin.Forms.

App.Current.Properties actually serializes and deserializes items to disk as you can see in the [implementation](https://github.com/xamarin/Xamarin.Forms/blob/e6d5186c8acbf37b877c7ca3c77a378352a3743d/Xamarin.Forms.Platform.iOS/Deserializer.cs).

To me that isn't as reliable as saving directly to the native platform's settings.

# Contribution

Thank you for your interest in contributing to the Settings plugin! In this section we'll outline what you need to know about contributing and how to get started.

### Bug Fixes

Please browse the open issues first: if you're looking to fix something, it's possible that someone has already reported it. Additionally, you can pick up any `up-for-grabs` items.

### Pull requests

Please fill out the pull request template when you send one.

Run the tests to make sure your changes don't break any unit tests. Follow these instructions to run them:

**iOS**

- Navigate to _tests/Plugin.Settings.NUnitTest.iOS_

- Execute `make run-simulator-tests`

**Android**

Execute `./build.sh --target RunDroidTests` from the project root

## License

The MIT License (MIT) see [License file](LICENSE)

### Want To Support This Project?

All I have ever asked is to be active by submitting bugs, features, and sending those pull requests down! Want to go further? Make sure to subscribe to my weekly development podcast [Merge Conflict](http://mergeconflict.fm), where I talk all about awesome Xamarin goodies and you can optionally support the show by becoming a [supporter on Patreon](https://www.patreon.com/mergeconflictfm).

| 57.405405 | 562 | 0.778719 | eng_Latn | 0.9735 |

e90ec513f8eddccf9be24a98988058266bb8c181 | 66 | md | Markdown | compiler/mio-circle/README.md | bogus-sudo/ONE-1 | 7052a817eff661ec2854ed2e7ee0de5e8ba82b55 | [

"Apache-2.0"

] | 255 | 2020-05-22T07:45:29.000Z | 2022-03-29T23:58:22.000Z | compiler/mio-circle/README.md | bogus-sudo/ONE-1 | 7052a817eff661ec2854ed2e7ee0de5e8ba82b55 | [

"Apache-2.0"

] | 5,102 | 2020-05-22T07:48:33.000Z | 2022-03-31T23:43:39.000Z | compiler/mio-circle/README.md | bogus-sudo/ONE-1 | 7052a817eff661ec2854ed2e7ee0de5e8ba82b55 | [

"Apache-2.0"

] | 120 | 2020-05-22T07:51:08.000Z | 2022-02-16T19:08:05.000Z | # mio-circle

Let's make it easy to read and write Circle models.

| 16.5 | 51 | 0.742424 | eng_Latn | 0.999687 |

e9107218281edb54ea0fc0fa5592470fa79e5660 | 1,081 | md | Markdown | README.md | mochilor/bplate | cb678670ce9e3738f2f0596eeb52a384402a2f67 | [

"MIT"

] | null | null | null | README.md | mochilor/bplate | cb678670ce9e3738f2f0596eeb52a384402a2f67 | [

"MIT"

] | null | null | null | README.md | mochilor/bplate | cb678670ce9e3738f2f0596eeb52a384402a2f67 | [

"MIT"

] | null | null | null | # Simple boilerplate for small PHP web development.

Based on the great [HTML5 Boilerplate](https://html5boilerplate.com/) and intended for small PHP apps. It uses a small custom router (URL rewriting must be enabled on the server) and has no classes or autoloading. Public assets are in the webroot folder. Page files (views) are in /app/pages. If you need a new URL, simply add a new file there named after the desired URL. Each section after the first slash will be treated as a parameter and passed to the page file.
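
To make the routing convention above concrete, here is a rough sketch of how a request path could map to a page file and its parameters. It is written in Python purely for illustration and is not the project's actual PHP router; the `resolve_route` name, the `home` default page, and the `.php` extension are assumptions.

```python
from pathlib import PurePosixPath

# Page files (views) live in /app/pages according to the README.
PAGES_DIR = PurePosixPath("app/pages")


def resolve_route(url_path, default_page="home"):
    """Split a request path into (page file, parameters).

    Assumption: the first path segment names the page file and every
    later segment is handed to that page as a positional parameter.
    """
    # Drop any query string, then keep only non-empty path segments.
    segments = [s for s in url_path.split("?")[0].split("/") if s]
    page = segments[0] if segments else default_page
    params = segments[1:]
    return PAGES_DIR / f"{page}.php", params


if __name__ == "__main__":
    print(resolve_route("/products/12/edit"))
```

Under these assumptions, `/products/12/edit` would be served by `app/pages/products.php` with the parameters `12` and `edit`.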

## Gulp

Includes a gulpfile that processes the resource files and puts the concatenated and minified output under the webroot folder. If you plan not to use gulp, you must replace main.min.css and main.min.js with your own files.

### Included frontend libraries via bower:

- Bootstrap

- Fontawesome

- jQuery

- jQuery validation

Install libraries with:

```

npm install

gulp bower

```

The default `bower` task will watch for changes in the resources folders.

### Missing features (also known as TO DO!)

- Javascript and server side simple validation.

- Database management (basic CRUD).

- ... | 40.037037 | 455 | 0.769658 | eng_Latn | 0.995918 |

e9108907744532a5f5a319626410047466cb33a6 | 1,570 | md | Markdown | README.md | hsz-devops/timescaledb--timescaledb-docker | ba0cbe086b17852ca6f2eb712d3aa406731f81dd | [

"Apache-2.0"

] | 1 | 2018-11-14T23:50:11.000Z | 2018-11-14T23:50:11.000Z | README.md | hsz-devops/timescaledb--timescaledb-docker | ba0cbe086b17852ca6f2eb712d3aa406731f81dd | [

"Apache-2.0"

] | null | null | null | README.md | hsz-devops/timescaledb--timescaledb-docker | ba0cbe086b17852ca6f2eb712d3aa406731f81dd | [

"Apache-2.0"

] | 1 | 2018-11-19T00:10:51.000Z | 2018-11-19T00:10:51.000Z | <img src="http://www.timescale.com/img/timescale-wordmark-blue.svg" alt="Timescale" width="300"/>

## What is TimescaleDB?

TimescaleDB is an open-source database designed to make SQL scalable

for time-series data. For more information, see

the [Timescale website](https://www.timescale.com).

## How to use this image

This image is based on the

official

[Postgres docker image](https://store.docker.com/images/postgres) so

the documentation for that image also applies here, including the

environment variables one can set, extensibility, etc.

### Starting a TimescaleDB instance

```

$ docker run -d --name some-timescaledb -p 5432:5432 timescale/timescaledb

```

Then connect with an app or the `psql` client:

```

$ docker run -it --net=host --rm timescale/timescaledb psql -h localhost -U postgres

```

You can also connect your app via port `5432` on the host machine.

If you are running your docker image for the first time, you can also set an environment variable, `TIMESCALEDB_TELEMETRY`, to set the level of [telemetry](https://docs.timescale.com/using-timescaledb/telemetry) in the Timescale docker instance. For example, to turn off telemetry, run:

```

$ docker run -d --name some-timescaledb -p 5432:5432 --env TIMESCALEDB_TELEMETRY=off timescale/timescaledb

```

Note that if the cluster has previously been initialized, you should not use this environment variable to set the level of telemetry. Instead, follow the [instructions](https://docs.timescale.com/using-timescaledb/telemetry) in our docs to disable telemetry once a cluster is running.
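
If you start containers from a script, the commands above can be assembled programmatically. The following is a minimal Python sketch, assuming Docker is installed and on the `PATH`; the `timescaledb_run_command` helper is hypothetical and only builds the same `docker run` arguments shown in this README.

```python
import shlex


def timescaledb_run_command(name="some-timescaledb", host_port=5432, telemetry=None):
    """Build the `docker run` argument list used in the examples above.

    TIMESCALEDB_TELEMETRY is added only when `telemetry` is given, because
    the variable is meant for the first initialization of a cluster.
    """
    args = ["docker", "run", "-d", "--name", name, "-p", f"{host_port}:5432"]
    if telemetry is not None:
        args += ["--env", f"TIMESCALEDB_TELEMETRY={telemetry}"]
    args.append("timescale/timescaledb")
    return args


if __name__ == "__main__":
    # Print the command instead of running it; pass the list to
    # subprocess.run(args, check=True) to actually start the container.
    print(shlex.join(timescaledb_run_command(telemetry="off")))
```

Keeping the telemetry flag optional mirrors the note above: leave it out when the cluster has already been initialized.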

| 41.315789 | 288 | 0.76879 | eng_Latn | 0.972663 |

e911a116828c4a6590ab616566ab2360e1355251 | 2,063 | md | Markdown | source/Modules/ActiveDirectoryDsc.Common/docs/Get-DomainControllerObject.md | nanderh/ActiveDirectoryDsc | 8a305922b5af060bf621ae89f41f8a0ec0c287b4 | [

"MIT"

] | 157 | 2015-04-16T19:55:00.000Z | 2019-07-13T23:10:26.000Z | source/Modules/ActiveDirectoryDsc.Common/docs/Get-DomainControllerObject.md | nanderh/ActiveDirectoryDsc | 8a305922b5af060bf621ae89f41f8a0ec0c287b4 | [

"MIT"

] | 425 | 2015-04-16T04:45:37.000Z | 2019-07-25T09:29:39.000Z | source/Modules/ActiveDirectoryDsc.Common/docs/Get-DomainControllerObject.md | nanderh/ActiveDirectoryDsc | 8a305922b5af060bf621ae89f41f8a0ec0c287b4 | [

"MIT"

] | 142 | 2015-04-16T04:16:31.000Z | 2019-07-23T18:15:59.000Z |

# Get-DomainControllerObject

## SYNOPSIS

Gets the domain controller object if the node is a domain controller.

## SYNTAX

```

Get-DomainControllerObject [-DomainName] <String> [[-ComputerName] <String>] [[-Credential] <PSCredential>]

[<CommonParameters>]

```

## DESCRIPTION

The Get-DomainControllerObject function is used to get the domain controller object if the node is a domain

controller, otherwise it returns $null.

## EXAMPLES

### EXAMPLE 1

```

Get-DomainControllerObject -DomainName contoso.com

```

## PARAMETERS

### -ComputerName

Specifies the name of the node to return the domain controller object for.

```yaml

Type: System.String

Parameter Sets: (All)

Aliases:

Required: False

Position: 2

Default value: $env:COMPUTERNAME

Accept pipeline input: False

Accept wildcard characters: False

```

### -Credential

Specifies the credentials to use when accessing the domain, or use the current user if not specified.

```yaml

Type: System.Management.Automation.PSCredential

Parameter Sets: (All)

Aliases:

Required: False

Position: 3

Default value: None

Accept pipeline input: False

Accept wildcard characters: False

```

### -DomainName

Specifies the name of the domain that should contain the domain controller.

```yaml

Type: System.String

Parameter Sets: (All)

Aliases:

Required: True

Position: 1

Default value: None

Accept pipeline input: False

Accept wildcard characters: False

```

### CommonParameters

This cmdlet supports the common parameters: -Debug, -ErrorAction, -ErrorVariable, -InformationAction, -InformationVariable, -OutVariable, -OutBuffer, -PipelineVariable, -Verbose, -WarningAction, and -WarningVariable. For more information, see [about_CommonParameters](http://go.microsoft.com/fwlink/?LinkID=113216).

## INPUTS

### None

## OUTPUTS

### Microsoft.ActiveDirectory.Management.ADDomainController

## NOTES

Throws an exception of Microsoft.ActiveDirectory.Management.ADServerDownException if the domain cannot be

contacted.

## RELATED LINKS

| 23.988372 | 316 | 0.743093 | eng_Latn | 0.595452 |

e911e8a5bc57ece25e9e9b2ee6ea43c6752b1d40 | 393 | md | Markdown | README.md | nathaliabruno/serasse-extension | d0c19d80a3d5d0e6b9999a5811aaf267c520f1b9 | [

"MIT"

] | null | null | null | README.md | nathaliabruno/serasse-extension | d0c19d80a3d5d0e6b9999a5811aaf267c520f1b9 | [

"MIT"

] | null | null | null | README.md | nathaliabruno/serasse-extension | d0c19d80a3d5d0e6b9999a5811aaf267c520f1b9 | [

"MIT"

] | null | null | null | # SERASSE??

## A funny extension born from a joke with my co-workers, in which we keep repeating a popular but incorrect Brazilian expression.

- Work in progress

-------------

Boilerplate: [chrome-extension-webpack-boilerplate](https://github.com/samuelsimoes/chrome-extension-webpack-boilerplate) by Samuel Simões ~ [@samuelsimoes](https://twitter.com/samuelsimoes) ~ [Blog](http://blog.samuelsimoes.com/)

| 49.125 | 230 | 0.750636 | yue_Hant | 0.316161 |

e91286596ee938e6157f4f2c87de96b5c1e93364 | 109 | md | Markdown | README.md | quovixi/jekyll-blog | 00351ae4d3bcd0d71872132872510a0c6605cdb4 | [

"Apache-2.0"

] | null | null | null | README.md | quovixi/jekyll-blog | 00351ae4d3bcd0d71872132872510a0c6605cdb4 | [

"Apache-2.0"

] | null | null | null | README.md | quovixi/jekyll-blog | 00351ae4d3bcd0d71872132872510a0c6605cdb4 | [

"Apache-2.0"

] | null | null | null | # jekyll-blog

An attempt at setting up a Jekyll blog by following a tutorial.

http://quovixi.github.io/jekyll-blog/

| 27.25 | 56 | 0.788991 | eng_Latn | 0.435856 |

e9128b7f395d6575491a45be71ae7568e64dd557 | 2,512 | md | Markdown | docs/integration-services/change-data-capture/use-the-new-instance-wizard.md | L3onard80/sql-docs.it-it | f73e3d20b5b2f15f839ff784096254478c045bbb | [

"CC-BY-4.0",

"MIT"

] | null | null | null | docs/integration-services/change-data-capture/use-the-new-instance-wizard.md | L3onard80/sql-docs.it-it | f73e3d20b5b2f15f839ff784096254478c045bbb | [

"CC-BY-4.0",

"MIT"

] | null | null | null | docs/integration-services/change-data-capture/use-the-new-instance-wizard.md | L3onard80/sql-docs.it-it | f73e3d20b5b2f15f839ff784096254478c045bbb | [

"CC-BY-4.0",

"MIT"

] | null | null | null | ---

title: Use the New Instance Wizard | Microsoft Docs

ms.custom: ''

ms.date: 03/01/2017

ms.prod: sql

ms.prod_service: integration-services

ms.reviewer: ''

ms.technology: integration-services

ms.topic: conceptual

ms.assetid: dfc09f71-7037-4cd5-a3cd-c79f8c714e22

author: chugugrace

ms.author: chugu

ms.openlocfilehash: d964639bbcf7679c71191d4b3a8e2a455a4635e8

ms.sourcegitcommit: 58158eda0aa0d7f87f9d958ae349a14c0ba8a209

ms.translationtype: HT

ms.contentlocale: it-IT

ms.lasthandoff: 03/30/2020

ms.locfileid: "71298557"

---

# <a name="use-the-new-instance-wizard"></a>Use the New Instance Wizard

[!INCLUDE[ssis-appliesto](../../includes/ssis-appliesto-ssvrpluslinux-asdb-asdw-xxx.md)]

The New Instance Wizard lets you create a new instance for a CDC service. You open the Create an Oracle CDC Instance wizard from the CDC Designer Console. In the New Instance Wizard, you can perform the following tasks.

- [Create the SQL Server Change Database](../../integration-services/change-data-capture/create-the-sql-server-change-database.md)

- [Connect to an Oracle Source Database](../../integration-services/change-data-capture/connect-to-an-oracle-source-database.md)

- [Connect to Oracle](../../integration-services/change-data-capture/connect-to-oracle.md)

- [Select Oracle Tables and Columns](../../integration-services/change-data-capture/select-oracle-tables-and-columns.md)

- [Select Oracle Tables for Capturing Changes](../../integration-services/change-data-capture/select-oracle-tables-for-capturing-changes.md)

- [Make Changes to the Tables Selected for Capturing Changes](../../integration-services/change-data-capture/make-changes-to-the-tables-selected-for-capturing-changes.md)

- [Generate and Run the Supplemental Logging Script](../../integration-services/change-data-capture/generate-and-run-the-supplemental-logging-script.md)

- [Generate Mirror Tables and CDC Capture Instances](../../integration-services/change-data-capture/generate-mirror-tables-and-cdc-capture-instances.md)

- [Finish](../../integration-services/change-data-capture/finish.md)

## <a name="see-also"></a>See also

[How to Create the SQL Server Change Database Instance](../../integration-services/change-data-capture/how-to-create-the-sql-server-change-database-instance.md)

| 51.265306 | 258 | 0.768312 | ita_Latn | 0.398617 |

e912c8c6c110c921d93606a3606d4fa73e48cf05 | 17 | md | Markdown | docs/README.zh-CN.md | karthikchilamkurthy/karthikchilamkurthy.github.io | 4032345ea4e888108a5f5fee96a32b6da27bf031 | [

"MIT"

] | null | null | null | docs/README.zh-CN.md | karthikchilamkurthy/karthikchilamkurthy.github.io | 4032345ea4e888108a5f5fee96a32b6da27bf031 | [

"MIT"

] | null | null | null | docs/README.zh-CN.md | karthikchilamkurthy/karthikchilamkurthy.github.io | 4032345ea4e888108a5f5fee96a32b6da27bf031 | [

"MIT"

] | null | null | null | # Karthik's BLOG

| 8.5 | 16 | 0.705882 | deu_Latn | 0.533885 |

e9134c739e8ae55fe06a42b8d588e112663abdb4 | 1,576 | md | Markdown | docs/api_docs/python/hub/load.md | rovinyu/hub | 25d378b24f233651b8a8027321e762d04607a8dd | [

"Apache-2.0"

] | 1 | 2019-10-10T06:23:16.000Z | 2019-10-10T06:23:16.000Z | docs/api_docs/python/hub/load.md | Jabrils/hub | 84ac11ac756050a186cc8bddb54e104323fb9dff | [

"Apache-2.0"

] | null | null | null | docs/api_docs/python/hub/load.md | Jabrils/hub | 84ac11ac756050a186cc8bddb54e104323fb9dff | [

"Apache-2.0"

] | 1 | 2019-10-10T06:23:22.000Z | 2019-10-10T06:23:22.000Z | <div itemscope itemtype="http://developers.google.com/ReferenceObject">

<meta itemprop="name" content="hub.load" />

<meta itemprop="path" content="Stable" />

</div>

# hub.load

``` python

hub.load(

handle,

tags=None

)

```

Loads a module from a handle.

Currently this method is fully supported only with TensorFlow 2.x and with

modules created by calling tensorflow.saved_model.save(). The method works in

both eager and graph modes.

Depending on the type of handle used, the call may involve downloading a

TensorFlow Hub module to a local cache location specified by the

TFHUB_CACHE_DIR environment variable. If a copy of the module is already

present in the TFHUB_CACHE_DIR, the download step is skipped.

Currently, three types of module handles are supported:

1) Smart URL resolvers such as tfhub.dev, e.g.:

https://tfhub.dev/google/nnlm-en-dim128/1.

2) A directory on a file system supported by TensorFlow containing module

files. This may include a local directory (e.g. /usr/local/mymodule) or a

Google Cloud Storage bucket (gs://mymodule).

3) A URL pointing to a TGZ archive of a module, e.g.

https://example.com/mymodule.tar.gz.
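
The three handle types can be illustrated with a small sketch. The `describe_handle` helper below is hypothetical and only mirrors the list above; it is not how `tensorflow_hub` resolves handles internally. The `load_module` wrapper assumes TensorFlow 2.x and `tensorflow_hub` are installed, and imports the library lazily so the rest of the sketch runs without them.

```python
import os


def describe_handle(handle):
    """Classify a handle string into one of the three kinds listed above.

    Illustrative only: the real resolution logic lives inside tensorflow_hub.
    """
    if handle.startswith(("http://", "https://")):
        if handle.endswith((".tar.gz", ".tgz")):
            return "tgz-archive-url"
        return "smart-url"
    # Local directories and gs:// buckets are both file-system locations.
    return "filesystem-path"


def load_module(handle, tags=None):
    """Load a module via hub.load(handle, tags=None) as documented on this page."""
    import tensorflow_hub as hub  # lazy import: needs TF 2.x and tensorflow_hub

    # TFHUB_CACHE_DIR, when set, is where downloaded modules are cached.
    print("cache dir:", os.environ.get("TFHUB_CACHE_DIR", "<library default>"))
    return hub.load(handle, tags=tags)


if __name__ == "__main__":
    for h in (
        "https://tfhub.dev/google/nnlm-en-dim128/1",
        "/usr/local/mymodule",
        "gs://mymodule",
        "https://example.com/mymodule.tar.gz",
    ):
        print(h, "->", describe_handle(h))
```

Only `load_module` touches the network or the cache; calling it with a remote handle triggers a download unless a copy already exists in `TFHUB_CACHE_DIR`.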

#### Args:

* <b>`handle`</b>: (string) the Module handle to resolve.

* <b>`tags`</b>: A set of strings specifying the graph variant to use, if loading from

a v1 module.

#### Returns:

A trackable object (see tf.saved_model.load() documentation for details).

#### Raises:

* <b>`NotImplementedError`</b>: If the code is running against incompatible (1.x)

version of TF. | 31.52 | 86 | 0.718909 | eng_Latn | 0.975098 |

e913ef885cbebbd5fb973665aead2b9b4439e924 | 330 | md | Markdown | docs/2018/11/02.md | yzqcol/zaobao | 63f5c6b1b91833a3a6e7e748e2f41e551311a1ac | [

"MIT"

] | 2,088 | 2018-12-25T04:41:58.000Z | 2022-03-31T03:29:36.000Z | docs/2018/11/02.md | yzqcol/zaobao | 63f5c6b1b91833a3a6e7e748e2f41e551311a1ac | [

"MIT"

] | 94 | 2019-01-01T08:33:37.000Z | 2022-03-30T06:36:38.000Z | docs/2018/11/02.md | yzqcol/zaobao | 63f5c6b1b91833a3a6e7e748e2f41e551311a1ac | [

"MIT"

] | 163 | 2018-12-25T05:47:53.000Z | 2022-03-28T12:47:58.000Z | ### 2018.11.02

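[Tool] VS Code JavaScript Snippets greatly shorten common constructs such as `import ... from`. If you want more, search GitHub for VS Code Snippets: <https://github.com/xabikos/VS Code-javascript>

[Library] A second-level cache for Webpack that speeds up startup time: <https://github.com/mzgoddard/hard-source-webpack-plugin>

[Library] Draws charts in the command line. One scenario I can think of: when building several projects, you can see at a glance which project takes longest to build. <https://github.com/chunqiuyiyu/ervy>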

| 41.25 | 140 | 0.781818 | yue_Hant | 0.924436 |

e914446548f96b11f559ff56d3dcbd68be473def | 1,860 | md | Markdown | CHANGELOG.md | sbergwall/RobocopyPS | 22e38a3a08e1876e55e85c41cf52d39552bdebcd | [

"MIT"

] | 24 | 2019-06-25T13:24:10.000Z | 2022-03-14T18:29:59.000Z | CHANGELOG.md | sbergwall/RobocopyPS | 22e38a3a08e1876e55e85c41cf52d39552bdebcd | [

"MIT"

] | 9 | 2020-04-30T04:53:35.000Z | 2022-01-15T16:44:56.000Z | CHANGELOG.md | sbergwall/RobocopyPS | 22e38a3a08e1876e55e85c41cf52d39552bdebcd | [

"MIT"

] | 4 | 2020-02-07T16:10:41.000Z | 2021-09-03T15:44:53.000Z | # RobocopyPS Release History

## 0.2.7 - 2021-10-17

### Added

* Added parameters ExcludeDirectory and ExcludeFileName to Get-RoboItem

### Changed

* Fixed problem with parameters ExcludeDirectory and ExcludeFileName

## 0.2.6 - 2021-09-02

### Added

* Added new cmdlets Get-RoboItem, Remove-RoboItem, Copy-RoboItem, and Move-RoboItem.

### Changed

* Removed all ParameterSetName values in Invoke-Robocopy, including those for IncludeSubDirectories (/s) and IncludeEmptySubDirectories (/e), as they are not mutually exclusive.

* Changed Pester Tests to match Version 5 of Pester.

* Changed help file for Get-Help.

* Changed how we validate source directories.

## 0.2.5 - 2021-08-12

### Added

* Added parameters so the module is in line with native Robocopy, tested on Windows 10 21H1

### Changed

* Removed some of the forced parameters we use (example /v is not used if -Verbose is not specified)

* Changed some tests to be compatible with Pester version 5

* Changed documentation

### Removed

* Removed some tests

## 0.2.2 - 2019-07-18

### Fixed

* Fixed problem with parameter ExcludeFileName and ExcludeDirectory where you could not specify multiple files/directories.

### Added

* Added functionality to Exclude/IncludeAttribute and Remove/AddAttribute

## 0.2.0 - 2019-07-16

### Changed

* The module was rewritten to handle error codes better and more precisely. Function names also changed: Start-Robocopy became Invoke-Robocopy, and the internal logic that handles output from Robocopy.exe was extracted to Invoke-RobocopyParser.

* All other functions were removed in this release so they can be reworked to follow the new standard.

## 0.1.0 - 2019-05-30

### Fixed

* No fix as this is the first release

### Added

* Added function Start-Robocopy

* Added function Remove-RoboDirectory

### Changed

* No change as this is first release | 24.473684 | 260 | 0.754839 | eng_Latn | 0.997411 |

e915b8e08c7c110165b06b2c636bfe3f8d979621 | 821 | md | Markdown | _posts/pedingpost/2021-01-12-writeing.md | GinGu-Kang/GinGu-Kang.github.io | 868e9f0896c7e80e8c3d9d909cef90556334223b | [

"MIT"

] | null | null | null | _posts/pedingpost/2021-01-12-writeing.md | GinGu-Kang/GinGu-Kang.github.io | 868e9f0896c7e80e8c3d9d909cef90556334223b | [

"MIT"

] | null | null | null | _posts/pedingpost/2021-01-12-writeing.md | GinGu-Kang/GinGu-Kang.github.io | 868e9f0896c7e80e8c3d9d909cef90556334223b | [

"MIT"

] | null | null | null | ---

title: "A Beginner Programmer's Guide to Blog Posting"

date: 2022-01-12 13:45

categories: "Writing"

published: false

---

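# Overview

I want to put together the information I needed when creating my first blog and setting up a tech blog, the information I will need to keep running it, and my own thoughts about blogging. (I plan to keep refining this.)

<details>

<summary>Table of contents</summary>

<div markdown="1">

<ol >

<li>Why blog?</li>

<li>Things to consider when creating a blog</li>

<li>Things to consider when running a blog</li>

<li>Things to consider when posting</li>

<li>Things to consider when writing</li>

</ol>

</div>

</details>

<br>

# Why do I run a blog?

I think people run blogs for all sorts of reasons. Some do it to communicate, some to share knowledge, and others to make money. So why do I want to run one?

<br>

<br>

### First: keeping a record

The biggest reason I wanted to run a blog is to keep a record. To record something is to leave it behind: by writing things down, I want to revisit the moments that mattered to me and hold on to how I felt then for a long time. The other reason is that I want to preserve and organize what I have studied, and to document my growth as I work through the problems I found hard and the mistakes I made.

<br>

### Second: communication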

| 20.525 | 187 | 0.64799 | kor_Hang | 1.00001 |

e9160b3384e48fada79b37251159fc37e3ee9ee3 | 419 | md | Markdown | _posts/2005-12-19-55-voprosov-hr.md | alexriabtsev/alexriabtsev.github.io | be2bdeaf4c983cb1a531a9dc39d17a6250d4a6e3 | [

"MIT"

] | null | null | null | _posts/2005-12-19-55-voprosov-hr.md | alexriabtsev/alexriabtsev.github.io | be2bdeaf4c983cb1a531a9dc39d17a6250d4a6e3 | [

"MIT"

] | null | null | null | _posts/2005-12-19-55-voprosov-hr.md | alexriabtsev/alexriabtsev.github.io | be2bdeaf4c983cb1a531a9dc39d17a6250d4a6e3 | [

"MIT"

] | null | null | null | ---

id: 489

title: 55 HR questions

date: 2005-12-19T19:48:00+02:00

author: alexrb

layout: post

guid: http://alexrb.name/?p=489

permalink: /2005/12/55-voprosov-hr/

lj_itemid:

- "483"

lj_permalink:

- http://alexrb-aka-ral.livejournal.com/123855.html

post_views_count:

- "4"

categories:

- Lost-and-found

tags:

- work

---

http://www.techinterviews.com/?p=230

in English

with answers

might come in handy for someone | 18.217391 | 53 | 0.711217 | kor_Hang | 0.141842 |

e91686e9bf2a70a617f717d3e94931fa2d6665d2 | 2,477 | md | Markdown | README.md | FerramONG/ferramong-pay | 1bdd744fa79f4538e34f79b8007480b6b3a9df4b | [

"0BSD"

] | null | null | null | README.md | FerramONG/ferramong-pay | 1bdd744fa79f4538e34f79b8007480b6b3a9df4b | [

"0BSD"

] | null | null | null | README.md | FerramONG/ferramong-pay | 1bdd744fa79f4538e34f79b8007480b6b3a9df4b | [

"0BSD"

] | null | null | null | <p align='center'>

<img width="250px" src='https://raw.githubusercontent.com/FerramONG/ferramong-pay/master/docs/img/logo/logo.png?raw=true' />

</p>

<h1 align='center'>FerramONG - Pay</h1>

<p align='center'>Service responsible for managing users' purchases on the platform.</p>

<p align="center">

<a href="https://github.com/FerramONG/ferramong-pay/actions/workflows/windows.yml"><img src="https://github.com/FerramONG/ferramong-pay/actions/workflows/windows.yml/badge.svg" alt=""></a>

<a href="https://github.com/FerramONG/ferramong-pay/actions/workflows/macos.yml"><img src="https://github.com/FerramONG/ferramong-pay/actions/workflows/macos.yml/badge.svg" alt=""></a>

<a href="https://github.com/FerramONG/ferramong-pay/actions/workflows/ubuntu.yml"><img src="https://github.com/FerramONG/ferramong-pay/actions/workflows/ubuntu.yml/badge.svg" alt=""></a>

<a href="http://java.oracle.com"><img src="https://img.shields.io/badge/java-12+-D0008F.svg" alt="Java compatibility"></a>

<a href="https://github.com/FerramONG/ferramong-pay/blob/master/LICENSE"><img src="https://img.shields.io/badge/License-BSD0-919191.svg" alt="License"></a>

<a href="https://github.com/FerramONG/ferramong-pay/releases"><img src="https://img.shields.io/github/v/release/FerramONG/ferramong-pay" alt="Release"></a>

</p>

<hr />

## ❇ Introduction

This source code is a webservice that is used to manage users' purchases on the platform.

## ❓ How to use

See [here](https://ferramong-pay.herokuapp.com/swagger-ui/index.html?configUrl=/v3/api-docs/swagger-config) OpenAPI doc.

## ⚠ Warnings

The Heroku hosting service may take about a minute to start the application, so the website may be slow to load at first.

## 🚩 Changelog

Details about each version are documented in the [releases section](https://github.com/FerramONG/ferramong-pay/releases).

## 🗺 Project structure

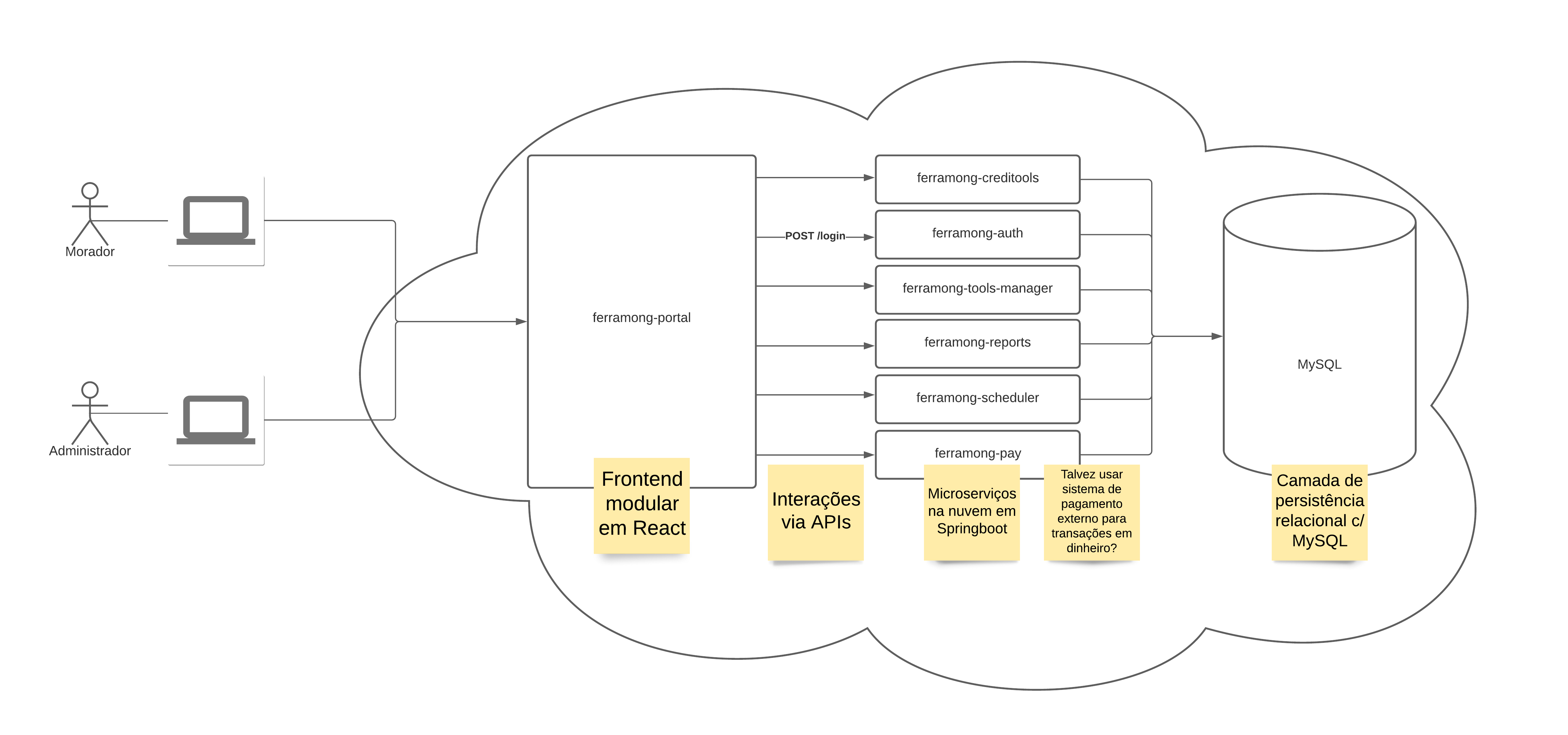

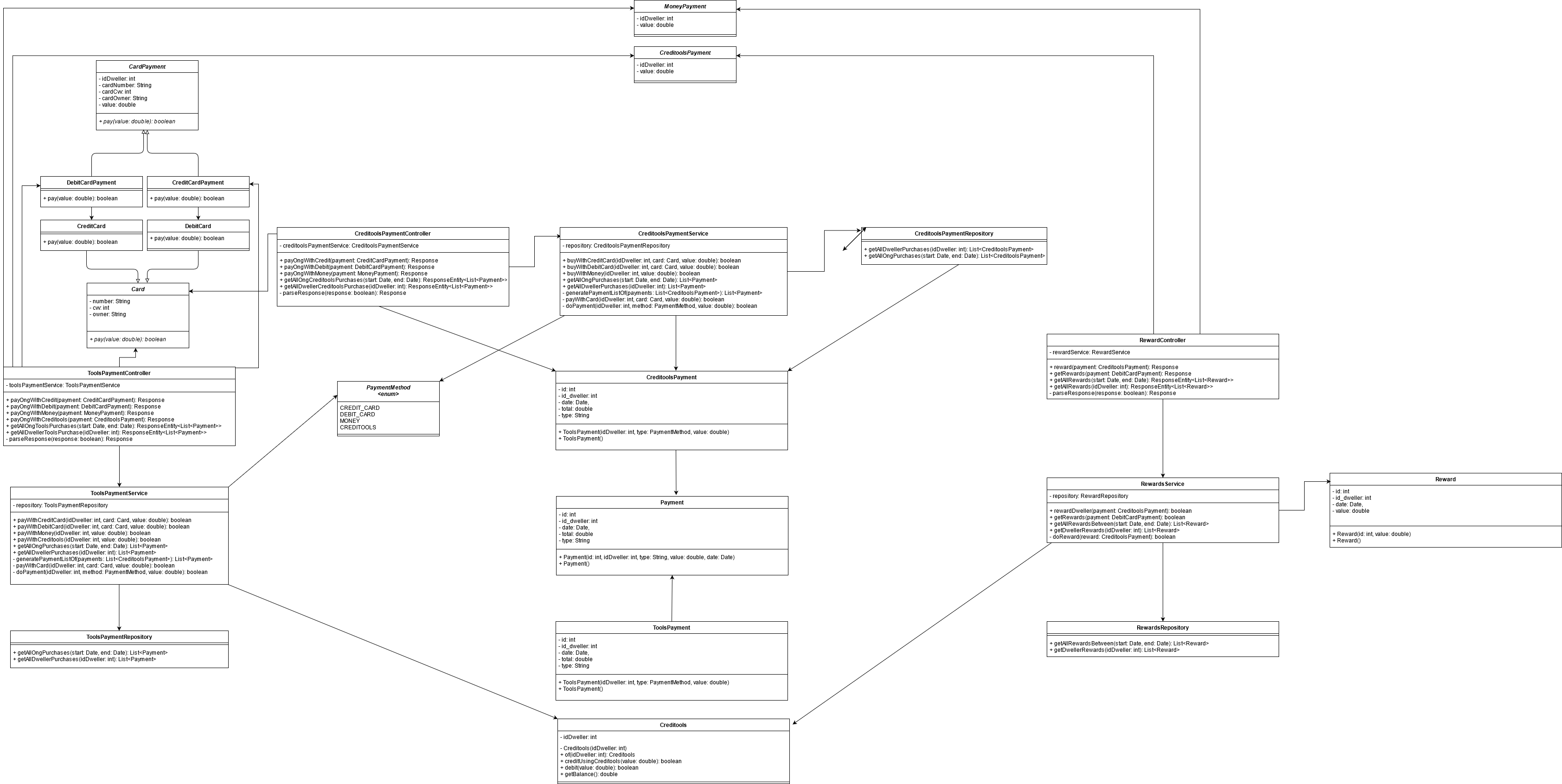

#### FerramONG architecture

#### Pay class diagram

## 📁 Files

### /

| Name |Type|Description|

|----------------|-------------------------------|-----------------------------|

|dist |`Directory`|Released versions|

|docs |`Directory`|Documentation files|

|src |`Directory`| Source files |

|test |`Directory`| Test files |

| 56.295455 | 189 | 0.716996 | yue_Hant | 0.540929 |

e9176b6e181e679e81903a14078bf81ede404d0d | 25,910 | md | Markdown | articles/cost-management-billing/costs/migrate-from-enterprise-reporting-to-azure-resource-manager-apis.md | kalleantero/azure-docs | 50585080657eabcecdb7fa01536ca5f5aecdc600 | [

"CC-BY-4.0",

"MIT"

] | 4 | 2021-11-22T02:45:17.000Z | 2022-03-22T07:08:33.000Z | articles/cost-management-billing/costs/migrate-from-enterprise-reporting-to-azure-resource-manager-apis.md | kalleantero/azure-docs | 50585080657eabcecdb7fa01536ca5f5aecdc600 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | articles/cost-management-billing/costs/migrate-from-enterprise-reporting-to-azure-resource-manager-apis.md | kalleantero/azure-docs | 50585080657eabcecdb7fa01536ca5f5aecdc600 | [

"CC-BY-4.0",

"MIT"

] | 1 | 2021-01-09T16:27:39.000Z | 2021-01-09T16:27:39.000Z | ---

title: Migrate from Enterprise Reporting to Azure Resource Manager APIs

description: This article helps you understand the differences between the Reporting APIs and the Azure Resource Manager APIs, what to expect when you migrate to the Azure Resource Manager APIs, and the new capabilities that are available with the new Azure Resource Manager APIs.

author: bandersmsft

ms.reviewer: adwise

ms.service: cost-management-billing

ms.subservice: common

ms.topic: reference

ms.date: 11/19/2020

ms.author: banders

---

# Migrate from Enterprise Reporting to Azure Resource Manager APIs

This article helps developers who have built custom solutions using the [Azure Reporting APIs for Enterprise Customers](../manage/enterprise-api.md) to migrate onto the Azure Resource Manager APIs for Cost Management. Service Principal support for the newer Azure Resource Manager APIs is now generally available. Azure Resource Manager APIs are in active development. Consider migrating to them instead of using the older Azure Reporting APIs for Enterprise Customers. The older APIs are being deprecated. This article helps you understand the differences between the Reporting APIs and the Azure Resource Manager APIs, what to expect when you migrate to the Azure Resource Manager APIs, and the new capabilities that are available with the new Azure Resource Manager APIs.

## API differences

The following information describes the differences between the older Reporting APIs for Enterprise Customers and the newer Azure Resource Manager APIs.

| **Use** | **Enterprise Agreement APIs** | **Azure Resource Manager APIs** |

| --- | --- | --- |

| Authentication | API Key provisioned in the Enterprise Agreement (EA) portal | Azure Active Directory (Azure AD) Authentication using User tokens or Service Principals. Service Principals take the place of API Keys. |

| Scopes and Permissions | All requests are at the Enrollment scope. The API Key permission assignments will determine whether data for the entire Enrollment, a Department, or a specific Account is returned. No user authentication. | Users or Service Principals are assigned access to the Enrollment, Department, or Account scope. |

| URI Endpoint | https://consumption.azure.com | https://management.azure.com |

| Development Status | In maintenance mode. On the path to deprecation. | Actively being developed |

| Available APIs | Limited to what is available currently | Equivalent APIs are available to replace each EA API. <p> Additional [Cost Management APIs](/rest/api/cost-management/) are also available to you, including: <p> <ul><li>Budgets</li><li>Alerts</li><li>Exports</li></ul> |

## Migration checklist

- Familiarize yourself with the [Azure Resource Manager REST APIs](/rest/api/azure).

- Determine which EA APIs you use and see which Azure Resource Manager APIs to move to at [EA API mapping to new Azure Resource Manager APIs](#ea-api-mapping-to-new-azure-resource-manager-apis).

- Configure service authorization and authentication for the Azure Resource Manager APIs:

  - If you're not already using Azure Resource Manager APIs, [register your client app with Azure AD](/rest/api/azure/#register-your-client-application-with-azure-ad). Registration creates a service principal for you to use to call the APIs.

  - Assign the service principal access to the scopes needed, as outlined below.

  - Update any programming code to use [Azure AD authentication](/rest/api/azure/#create-the-request) with your Service Principal.

- Test the APIs and then update any programming code to replace EA API calls with Azure Resource Manager API calls.

- Update error handling to use new error codes. Some considerations include:

  - Azure Resource Manager APIs have a timeout period of 60 seconds.

  - Azure Resource Manager APIs have rate limiting in place. If you exceed the rate limits, requests fail with a 429 throttling error. Build your solutions so that they don't make too many API calls in a short time period.

- Review the other Cost Management APIs available through Azure Resource Manager and assess for use later. For more information, see [Use additional Cost Management APIs](#use-additional-cost-management-apis).
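The throttling consideration in the checklist above can be sketched as a small retry helper. This is a minimal sketch, not an official client: the `send` callable and the `(status, headers, body)` tuple shape are hypothetical stand-ins for whatever HTTP library you use, and the sketch assumes that a `Retry-After` header, when the service sends one, carries a whole number of seconds and is looked up with that exact capitalization.

```python
import time
from typing import Callable, Mapping, Tuple

# Hypothetical response shape for this sketch: (status_code, headers, body).
Response = Tuple[int, Mapping[str, str], str]


def call_with_retry(
    send: Callable[[], Response],
    max_attempts: int = 5,
    base_delay: float = 1.0,
    sleep: Callable[[float], None] = time.sleep,
) -> Response:
    """Call send() and retry while the service answers 429 (throttled)."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    for attempt in range(max_attempts):
        status, headers, body = send()
        if status != 429:
            return status, headers, body
        if attempt == max_attempts - 1:
            break  # out of attempts; hand the 429 back to the caller
        # Prefer the server's Retry-After hint (assumed to be whole seconds);
        # otherwise fall back to exponential backoff.
        hint = headers.get("Retry-After", "")
        delay = float(hint) if hint.isdigit() else base_delay * (2 ** attempt)
        sleep(delay)
    return status, headers, body
```

Passing `sleep` as a parameter keeps the helper easy to exercise without real waiting. The 60-second timeout mentioned above would be configured separately on the HTTP client itself.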

## Assign Service Principal access to Azure Resource Manager APIs

After you create a Service Principal to programmatically call the Azure Resource Manager APIs, you need to assign it the proper permissions to authorize against and execute requests in Azure Resource Manager. There are two permission frameworks for different scenarios.

### Azure Billing Hierarchy Access

To assign Service Principal permissions to your Enterprise Billing Account, Departments, or Enrollment Account scopes, use [Billing Permissions](/rest/api/billing/2019-10-01-preview/billingpermissions), [Billing Role Definitions](/rest/api/billing/2019-10-01-preview/billingroledefinitions), and [Billing Role Assignments](/rest/api/billing/2019-10-01-preview/billingroleassignments) APIs.

- Use the Billing Permissions APIs to identify the permissions that a Service Principal already has on a given scope, like a Billing Account or Department.

- Use the Billing Role Definitions APIs to enumerate the available roles that can be assigned to your Service Principal.

  - Only Read-Only EA Admin and Read-Only Department Admin roles can be assigned to Service Principals at this time.

- Use the Billing Role Assignments APIs to assign a role to your Service Principal.

The following example shows how to call the Role Assignments API to grant a Service Principal access to your billing account. We recommend using [Postman](https://postman.com) to do these one-time permission configurations.

```json

POST https://management.azure.com/providers/Microsoft.Billing/billingAccounts/{billingAccountName}/createBillingRoleAssignment?api-version=2019-10-01-preview

```

#### Request Body

```json

{

"principalId": "00000000-0000-0000-0000-000000000000",

"billingRoleDefinitionId": "/providers/Microsoft.Billing/billingAccounts/{billingAccountName}/providers/Microsoft.Billing/billingRoleDefinition/10000000-aaaa-bbbb-cccc-100000000000"

}

```
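If you prefer to script the call rather than use a REST client, the request above could be assembled along these lines. This is only a sketch: the function and parameter names are hypothetical, the URL and body simply mirror the example shown above, and acquiring the Azure AD bearer token and sending the request are left out.

```python
import json

ARM_ENDPOINT = "https://management.azure.com"
API_VERSION = "2019-10-01-preview"  # version used in the example request above


def build_role_assignment_request(billing_account_name, principal_id, role_definition_guid):
    """Return (url, json_body) for the createBillingRoleAssignment POST."""
    account_path = f"/providers/Microsoft.Billing/billingAccounts/{billing_account_name}"
    url = (
        f"{ARM_ENDPOINT}{account_path}/createBillingRoleAssignment"
        f"?api-version={API_VERSION}"
    )
    body = {
        "principalId": principal_id,
        # Same path layout as the sample request body above.
        "billingRoleDefinitionId": (
            f"{account_path}/providers/Microsoft.Billing/"
            f"billingRoleDefinition/{role_definition_guid}"
        ),
    }
    return url, json.dumps(body)
```

The returned body would be sent with a `Content-Type: application/json` header and an `Authorization: Bearer <token>` header for a caller that is allowed to manage billing role assignments.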

### Azure role-based access control

New Service Principal support extends to Azure-specific scopes, like management groups, subscriptions, and resource groups. You can assign Service Principal permissions to these scopes directly [in the Azure portal](../../active-directory/develop/howto-create-service-principal-portal.md#assign-a-role-to-the-application) or by using [Azure PowerShell](../../active-directory/develop/howto-authenticate-service-principal-powershell.md#assign-the-application-to-a-role).

## EA API mapping to new Azure Resource Manager APIs

Use the table below to identify the EA APIs that you currently use and the replacement Azure Resource Manager API to use instead.

| **Scenario** | **EA APIs** | **Azure Resource Manager APIs** |

| --- | --- | --- |

| Balance Summary | [/balancesummary](/rest/api/billing/enterprise/billing-enterprise-api-balance-summary) |[Microsoft.Consumption/balances](/rest/api/consumption/balances/getbybillingaccount) |

| Price Sheet | [/pricesheet](/rest/api/billing/enterprise/billing-enterprise-api-pricesheet) | [Microsoft.Consumption/pricesheets/default](/rest/api/consumption/pricesheet) – use for negotiated prices <p> [Retail Prices API](/rest/api/cost-management/retail-prices/azure-retail-prices) – use for retail prices |

| Reserved Instance Details | [/reservationdetails](/rest/api/billing/enterprise/billing-enterprise-api-reserved-instance-usage) | [Microsoft.CostManagement/generateReservationDetailsReport](/rest/api/cost-management/generatereservationdetailsreport) |

| Reserved Instance Summary | [/reservationsummaries](/rest/api/billing/enterprise/billing-enterprise-api-reserved-instance-usage) | [Microsoft.Consumption/reservationSummaries](/rest/api/consumption/reservationssummaries/list#reservationsummariesdailywithbillingaccountid) |

| Reserved Instance Recommendations | [/SharedReservationRecommendations](/rest/api/billing/enterprise/billing-enterprise-api-reserved-instance-recommendation)<p>[/SingleReservationRecommendations](/rest/api/billing/enterprise/billing-enterprise-api-reserved-instance-recommendation) | [Microsoft.Consumption/reservationRecommendations](/rest/api/consumption/reservationrecommendations/list) |

| Reserved Instance Charges | [/reservationcharges](/rest/api/billing/enterprise/billing-enterprise-api-reserved-instance-charges) | [Microsoft.Consumption/reservationTransactions](/rest/api/consumption/reservationtransactions/list) |

## Migration details by API

The following sections show old API request examples with new replacement API examples.

### Balance Summary API

Use the following request URIs when calling the new Balance Summary API. Your enrollment number should be used as the billingAccountId.

#### Supported requests

[Get for Enrollment](/rest/api/consumption/balances/getbybillingaccount)

```json

https://management.azure.com/providers/Microsoft.Billing/billingAccounts/{billingAccountId}/providers/Microsoft.Consumption/balances?api-version=2019-10-01

```

#### Response body changes

_Old response body_:

```json

{

"id": "enrollments/100/billingperiods/201507/balancesummaries",

"billingPeriodId": 201507,

"currencyCode": "USD",

"beginningBalance": 0,

"endingBalance": 1.1,

"newPurchases": 1,

"adjustments": 1.1,

"utilized": 1.1,

"serviceOverage": 1,

"chargesBilledSeparately": 1,

"totalOverage": 1,

"totalUsage": 1.1,

"azureMarketplaceServiceCharges": 1,

"newPurchasesDetails": [

{

"name": "",

"value": 1

}

],

"adjustmentDetails": [

{

"name": "Promo Credit",

"value": 1.1

},

{

"name": "SIE Credit",

"value": 1.0

}

]

}

```

_New response body_:

The same data is now available in the `properties` field of the new API response. Some field names differ slightly. For example, `currencyCode` in the old response is `currency` in the new one.

```json

{

"id": "/providers/Microsoft.Billing/billingAccounts/123456/providers/Microsoft.Billing/billingPeriods/201702/providers/Microsoft.Consumption/balances/balanceId1",

"name": "balanceId1",

"type": "Microsoft.Consumption/balances",

"properties": {

"currency": "USD ",

"beginningBalance": 3396469.19,

"endingBalance": 2922371.02,

"newPurchases": 0,

"adjustments": 0,

"utilized": 474098.17,

"serviceOverage": 0,

"chargesBilledSeparately": 0,

"totalOverage": 0,

"totalUsage": 474098.17,

"azureMarketplaceServiceCharges": 609.82,

"billingFrequency": "Month",

"priceHidden": false,

"newPurchasesDetails": [

{

"name": "Promo Purchase",

"value": 1

}

],

"adjustmentDetails": [

{

"name": "Promo Credit",

"value": 1.1

},

{

"name": "SIE Credit",

"value": 1

}

]

}

}

```
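If downstream code still expects the old flat shape, a thin adapter over the new response may be enough during migration. The sketch below is based only on the two sample bodies above: it lifts `properties` to the top level and renames `currency` back to `currencyCode`. The function name is hypothetical, and any other naming differences in real responses would need their own entries.

```python
def to_legacy_balance(new_response):
    """Flatten a new-style balance response into the old field layout."""
    # Copy so the caller's response object is left untouched.
    legacy = dict(new_response.get("properties", {}))
    if "currency" in legacy:
        # The sample above shows a trailing space ("USD "), so trim it.
        legacy["currencyCode"] = legacy.pop("currency").strip()
    return legacy
```

Fields that exist only in the new response, such as `billingFrequency` and `priceHidden`, are passed through unchanged. The old `id` and `billingPeriodId` values aren't reconstructed.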

### Price Sheet

Use the following request URIs when calling the new Price Sheet API.

#### Supported requests

You can call the API using the following scopes:

- Enrollment: `providers/Microsoft.Billing/billingAccounts/{billingAccountId}`

- Subscription: `subscriptions/{subscriptionId}`

[_Get for current Billing Period_](/rest/api/consumption/pricesheet/get)

```json

https://management.azure.com/{scope}/providers/Microsoft.Consumption/pricesheets/default?api-version=2019-10-01

```

[_Get for specified Billing Period_](/rest/api/consumption/pricesheet/getbybillingperiod)

```json

https://management.azure.com/{scope}/providers/Microsoft.Billing/billingPeriods/{billingPeriodName}/providers/Microsoft.Consumption/pricesheets/default?api-version=2019-10-01

```

#### Response body changes

_Old response_:

```json

[

{

"id": "enrollments/57354989/billingperiods/201601/products/343/pricesheets",

"billingPeriodId": "201704",

"meterId": "dc210ecb-97e8-4522-8134-2385494233c0",

"meterName": "A1 VM",

"unitOfMeasure": "100 Hours",

"includedQuantity": 0,

"partNumber": "N7H-00015",

"unitPrice": 0.00,

"currencyCode": "USD"

},

{

"id": "enrollments/57354989/billingperiods/201601/products/2884/pricesheets",

"billingPeriodId": "201404",

"meterId": "dc210ecb-97e8-4522-8134-5385494233c0",

"meterName": "Locally Redundant Storage Premium Storage - Snapshots - AU East",

"unitOfMeasure": "100 GB",

"includedQuantity": 0,

"partNumber": "N9H-00402",

"unitPrice": 0.00,

"currencyCode": "USD"

},

...

]

```

_New response_:

Old data is now in the `pricesheets` field of the new API response. Meter details information is also provided.

```json

{

"id": "/subscriptions/00000000-0000-0000-0000-000000000000/providers/Microsoft.Billing/billingPeriods/201702/providers/Microsoft.Consumption/pricesheets/default",

"name": "default",

"type": "Microsoft.Consumption/pricesheets",

"properties": {

"nextLink": "https://management.azure.com/subscriptions/00000000-0000-0000-0000-000000000000/providers/microsoft.consumption/pricesheets/default?api-version=2018-01-31&$skiptoken=AQAAAA%3D%3D&$expand=properties/pricesheets/meterDetails",

"pricesheets": [

{

"billingPeriodId": "/subscriptions/00000000-0000-0000-0000-000000000000/providers/Microsoft.Billing/billingPeriods/201702",

"meterId": "00000000-0000-0000-0000-000000000000",

"unitOfMeasure": "100 Hours",

"includedQuantity": 100,

"partNumber": "XX-11110",

"unitPrice": 0.00000,

"currencyCode": "EUR",

"offerId": "OfferId 1",

"meterDetails": {

"meterName": "Data Transfer Out (GB)",

"meterCategory": "Networking",

"unit": "GB",

"meterLocation": "Zone 2",

"totalIncludedQuantity": 0,

"pretaxStandardRate": 0.000

}

}

]

}

}

```
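To keep existing consumers of the old flat list working, the new response can be flattened back into rows. The following is a sketch under the assumption that real responses follow the sample above. The function name is hypothetical, and paging through `nextLink` is deliberately left out.

```python
def flatten_pricesheet(new_response):
    """Turn the nested `pricesheets` entries into old-style flat rows."""
    rows = []
    entries = new_response.get("properties", {}).get("pricesheets", [])
    for entry in entries:
        # Copy every top-level field except the nested meter details.
        row = {key: value for key, value in entry.items() if key != "meterDetails"}
        # The old response carried meterName at the top level.
        details = entry.get("meterDetails") or {}
        row["meterName"] = details.get("meterName")
        rows.append(row)
    return rows
```

A complete client would keep requesting the URL in `nextLink` until it is absent and append each page's rows to the result.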

### Reserved instance usage details

Microsoft isn't actively working on synchronous-based Reservation Details APIs. We recommend that you move to the newer SPN-supported asynchronous API call pattern as part of the migration. Asynchronous requests handle large amounts of data better and reduce timeout errors.

#### Supported requests

Use the following request URIs when calling the new Asynchronous Reservation Details API. Your enrollment number should be used as the `billingAccountId`. You can call the API with the following scopes:

- Enrollment: `providers/Microsoft.Billing/billingAccounts/{billingAccountId}`

#### Sample request to generate a reservation details report

```json

POST

https://management.azure.com/providers/Microsoft.Billing/billingAccounts/{billingAccountId}/providers/Microsoft.CostManagement/generateReservationDetailsReport?startDate={startDate}&endDate={endDate}&api-version=2019-11-01

```

#### Sample request to poll report generation status

```json

GET

https://management.azure.com/providers/Microsoft.Billing/billingAccounts/{billingAccountId}/providers/Microsoft.CostManagement/reservationDetailsOperationResults/{operationId}?api-version=2019-11-01

```

#### Sample poll response

```json

{

"status": "Completed",

"properties": {

"reportUrl": "https://storage.blob.core.windows.net/details/20200911/00000000-0000-0000-0000-000000000000?sv=2016-05-31&sr=b&sig=jep8HT2aphfUkyERRZa5LRfd9RPzjXbzB%2F9TNiQ",

"validUntil": "2020-09-12T02:56:55.5021869Z"

}

}

```
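The generate-then-poll pattern above could be wrapped in a small loop like the one below. This is a sketch with several assumptions: `get_status` is a hypothetical callable that performs the GET shown above and returns the parsed JSON, any status other than `Completed` is treated as still in progress (the source shows only the `Completed` case), and the interval and poll count are arbitrary defaults.

```python
import time


def wait_for_report(get_status, interval_seconds=5.0, max_polls=60, sleep=time.sleep):
    """Poll until the report is ready and return its download URL."""
    for poll in range(max_polls):
        result = get_status()
        if result.get("status") == "Completed":
            return result["properties"]["reportUrl"]
        # Assumption: every other status means "keep waiting".
        if poll < max_polls - 1:
            sleep(interval_seconds)
    raise TimeoutError(f"report was not ready after {max_polls} polls")
```

The sample poll response also includes a `validUntil` timestamp alongside the report URL, so download the report promptly rather than storing the link.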

#### Response body changes

The response of the older synchronous-based Reservation Details API is shown below.

_Old response_:

```json

{

"reservationOrderId": "00000000-0000-0000-0000-000000000000",

"reservationId": "00000000-0000-0000-0000-000000000000",

"usageDate": "2018-02-01T00:00:00",

"skuName": "Standard_F2s",

"instanceId": "/subscriptions/00000000-0000-0000-0000-000000000000/resourcegroups/resourcegroup1/providers/microsoft.compute/virtualmachines/VM1",

"totalReservedQuantity": 18.000000000000000,

"reservedHours": 432.000000000000000,

"usedHours": 400.000000000000000

}

```

_New response_:

The new API creates a CSV file for you. See the following file fields.

| **Old Property** | **New Property** | **Notes** |

| --- | --- | --- |

| | InstanceFlexibilityGroup | New property for instance flexibility. |

| | InstanceFlexibilityRatio | New property for instance flexibility. |

| instanceId | InstanceName | |

| | Kind | It's a new property. Value is `None`, `Reservation`, or `IncludedQuantity`. |

| reservationId | ReservationId | |

| reservationOrderId | ReservationOrderId | |

| reservedHours | ReservedHours | |

| skuName | SkuName | |

| totalReservedQuantity | TotalReservedQuantity | |

| usageDate | UsageDate | |

| usedHours | UsedHours | |
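Code that consumed the old JSON fields can read the CSV report through a rename map built from the table above. This is a sketch that assumes the CSV header row uses the new property names exactly as listed. The function name is hypothetical, and every value comes back as a string because CSV carries no types.

```python
import csv
import io

# Old JSON property -> new CSV column, taken from the table above.
OLD_TO_NEW = {
    "instanceId": "InstanceName",
    "reservationId": "ReservationId",
    "reservationOrderId": "ReservationOrderId",
    "reservedHours": "ReservedHours",
    "skuName": "SkuName",
    "totalReservedQuantity": "TotalReservedQuantity",
    "usageDate": "UsageDate",
    "usedHours": "UsedHours",
}


def read_report_as_legacy(csv_text):
    """Parse the CSV report and return rows keyed by the old property names."""
    reader = csv.DictReader(io.StringIO(csv_text))
    return [
        {old: row.get(new) for old, new in OLD_TO_NEW.items()}
        for row in reader
    ]
```

The new-only columns (`InstanceFlexibilityGroup`, `InstanceFlexibilityRatio`, and `Kind`) are dropped by this adapter. Read them directly from the CSV if you need them.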

### Reserved Instance Usage Summary

Use the following request URIs to call the new Reservation Summaries API.

#### Supported requests

Call the API with the following scopes:

- Enrollment: `providers/Microsoft.Billing/billingAccounts/{billingAccountId}`

[_Get Reservation Summary Daily_](/rest/api/consumption/reservationssummaries/list#reservationsummariesdailywithbillingaccountid)

```json

https://management.azure.com/{scope}/providers/Microsoft.Consumption/reservationSummaries?grain=daily&$filter=properties/usageDate ge 2017-10-01 AND properties/usageDate le 2017-11-20&api-version=2019-10-01

```

[_Get Reservation Summary Monthly_](/rest/api/consumption/reservationssummaries/list#reservationsummariesmonthlywithbillingaccountid)

```json

https://management.azure.com/{scope}/providers/Microsoft.Consumption/reservationSummaries?grain=monthly&$filter=properties/usageDate ge 2017-10-01 AND properties/usageDate le 2017-11-20&api-version=2019-10-01

```
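The `$filter` expression contains spaces, so it usually needs to be URL-encoded before the request is sent. The sketch below builds the request URI for either grain. The function name is hypothetical, and it assumes the `providers/Microsoft.Consumption` path segment that the other Consumption URIs in this article use.

```python
from urllib.parse import quote


def reservation_summaries_url(billing_account_id, grain, start_date, end_date,
                              api_version="2019-10-01"):
    """Build the Reservation Summaries URI for the enrollment scope."""
    if grain not in ("daily", "monthly"):
        raise ValueError("grain must be 'daily' or 'monthly'")
    scope = f"providers/Microsoft.Billing/billingAccounts/{billing_account_id}"
    odata_filter = (
        f"properties/usageDate ge {start_date} "
        f"AND properties/usageDate le {end_date}"
    )
    # Encode spaces as %20 but keep the '/' inside the property path.
    encoded_filter = quote(odata_filter, safe="/")
    return (
        f"https://management.azure.com/{scope}"
        "/providers/Microsoft.Consumption/reservationSummaries"
        f"?grain={grain}&$filter={encoded_filter}&api-version={api_version}"
    )
```

Dates are passed as `YYYY-MM-DD` strings, matching the examples above.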

#### Response body changes

_Old response_:

```json

[

{

"reservationOrderId": "00000000-0000-0000-0000-000000000000",

"reservationId": "00000000-0000-0000-0000-000000000000",

"skuName": "Standard_F1s",

"reservedHours": 24,

"usageDate": "2018-05-01T00:00:00",

"usedHours": 23,

"minUtilizationPercentage": 0,

"avgUtilizationPercentage": 95.83,

"maxUtilizationPercentage": 100

}

]

```

_New response_:

```json

{

"value": [

{

"id": "/providers/Microsoft.Billing/billingAccounts/12345/providers/Microsoft.Consumption/reservationSummaries/reservationSummaries_Id1",

"name": "reservationSummaries_Id1",

"type": "Microsoft.Consumption/reservationSummaries",

"tags": null,

"properties": {

"reservationOrderId": "00000000-0000-0000-0000-000000000000",

"reservationId": "00000000-0000-0000-0000-000000000000",

"skuName": "Standard_B1s",

"reservedHours": 720,

"usageDate": "2018-09-01T00:00:00-07:00",

"usedHours": 0,

"minUtilizationPercentage": 0,

"avgUtilizationPercentage": 0,

"maxUtilizationPercentage": 0

}

}

]

}

```

### Reserved instance recommendations

Use the following request URIs to call the new Reservation Recommendations API.

#### Supported requests

Call the API with the following scopes:

- Enrollment: `providers/Microsoft.Billing/billingAccounts/{billingAccountId}`

- Subscription: `subscriptions/{subscriptionId}`

- Resource Groups: `subscriptions/{subscriptionId}/resourceGroups/{resourceGroupName}`

[_Get Recommendations_](/rest/api/consumption/reservationrecommendations/list)

Both the shared and the single scope recommendations are available through this API. You can also filter on the scope as an optional API parameter.

```json

https://management.azure.com/providers/Microsoft.Billing/billingAccounts/123456/providers/Microsoft.Consumption/reservationRecommendations?api-version=2019-10-01

```

#### Response body changes

Recommendations for Shared and Single scopes are combined into one API.

_Old response_:

```json

[{

"subscriptionId": "1111111-1111-1111-1111-111111111111",

"lookBackPeriod": "Last7Days",

"meterId": "2e3c2132-1398-43d2-ad45-1d77f6574933",

"skuName": "Standard_DS1_v2",

"term": "P1Y",

"region": "westus",

"costWithNoRI": 186.27634908960002,

"recommendedQuantity": 9,

"totalCostWithRI": 143.12931642978083,

"netSavings": 43.147032659819189,

"firstUsageDate": "2018-02-19T00:00:00"

}

]

```

_New response_:

```json

{

"value": [

{

"id": "billingAccount/123456/providers/Microsoft.Consumption/reservationRecommendations/00000000-0000-0000-0000-000000000000",

"name": "00000000-0000-0000-0000-000000000000",

"type": "Microsoft.Consumption/reservationRecommendations",

"location": "westus",

"sku": "Standard_DS1_v2",

"kind": "legacy",

"properties": {

"meterId": "00000000-0000-0000-0000-000000000000",

"term": "P1Y",

"costWithNoReservedInstances": 12.0785105,

"recommendedQuantity": 1,

"totalCostWithReservedInstances": 11.4899644807748,

"netSavings": 0.588546019225182,

"firstUsageDate": "2019-07-07T00:00:00-07:00",

"scope": "Shared",

"lookBackPeriod": "Last7Days",

"instanceFlexibilityRatio": 1,

"instanceFlexibilityGroup": "DSv2 Series",

"normalizedSize": "Standard_DS1_v2",

"recommendedQuantityNormalized": 1,

"skuProperties": [

{

"name": "Cores",

"value": "1"

},

{

"name": "Ram",

"value": "1"

}

]

}

}

]

}

```
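Because the old API exposed shared and single recommendations through separate endpoints, existing code may expect them separately. One way to keep that split is to group the combined response by `properties.scope`, as sketched below. The function name is hypothetical, and the `Single` scope value is assumed from the text above because the sample shows only `Shared`.

```python
from collections import defaultdict


def split_by_scope(new_response):
    """Group recommendation items by their `properties.scope` value."""
    grouped = defaultdict(list)
    for item in new_response.get("value", []):
        # Items without a scope land under "Unknown" instead of being dropped.
        scope = item.get("properties", {}).get("scope", "Unknown")
        grouped[scope].append(item)
    return dict(grouped)
```

Field names also changed between the two responses. For example, `costWithNoRI` became `costWithNoReservedInstances`, and `totalCostWithRI` became `totalCostWithReservedInstances`.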

### Reserved instance charges

Use the following request URIs to call the new Reserved Instance Charges API.

#### Supported requests

[_Get Reservation Charges by Date Range_](/rest/api/consumption/reservationtransactions/list)

```json

https://management.azure.com/providers/Microsoft.Billing/billingAccounts/{billingAccountId}/providers/Microsoft.Consumption/reservationTransactions?$filter=properties/eventDate+ge+2020-05-20+AND+properties/eventDate+le+2020-05-30&api-version=2019-10-01

```

#### Response body changes

_Old response_:

```json

[

{

"purchasingEnrollment": "string",

"armSkuName": "Standard_F1s",

"term": "P1Y",

"region": "eastus",

"PurchasingsubscriptionGuid": "00000000-0000-0000-0000-000000000000",

"PurchasingsubscriptionName": "string",

"accountName": "string",

"accountOwnerEmail": "string",

"departmentName": "string",

"costCenter": "",

"currentEnrollment": "string",

"eventDate": "string",

"reservationOrderId": "00000000-0000-0000-0000-000000000000",

"description": "Standard_F1s eastus 1 Year",

"eventType": "Purchase",

"quantity": int,

"amount": double,

"currency": "string",

"reservationOrderName": "string"

}

]

```

_New response_:

```json

{

"value": [

{

"id": "/billingAccounts/123456/providers/Microsoft.Consumption/reservationtransactions/201909091919",

"name": "201909091919",

"type": "Microsoft.Consumption/reservationTransactions",

"tags": {},

"properties": {

"eventDate": "2019-09-09T19:19:04Z",

"reservationOrderId": "00000000-0000-0000-0000-000000000000",

"description": "Standard_DS1_v2 westus 1 Year",

"eventType": "Cancel",

"quantity": 1,

"amount": -21,

"currency": "USD",

"reservationOrderName": "Transaction-DS1_v2",

"purchasingEnrollment": "123456",

"armSkuName": "Standard_DS1_v2",

"term": "P1Y",

"region": "westus",

"purchasingSubscriptionGuid": "11111111-1111-1111-1111-11111111111",

"purchasingSubscriptionName": "Infrastructure Subscription",

"accountName": "Microsoft Infrastructure",

"accountOwnerEmail": "admin@microsoft.com",

"departmentName": "Unassigned",

"costCenter": "",

"currentEnrollment": "123456",

"billingFrequency": "recurring"

}

}

]

}

```

## Use additional Cost Management APIs

After you've migrated to Azure Resource Manager APIs for your existing reporting scenarios, you can use many other APIs, too. The APIs are also available through Azure Resource Manager and can be automated using Service Principal-based authentication. Here's a quick summary of the new capabilities that you can use.

- [Budgets](/rest/api/consumption/budgets/createorupdate) - Use to set thresholds to proactively monitor your costs, alert relevant stakeholders, and automate actions in response to threshold breaches.

- [Alerts](/rest/api/cost-management/alerts) - Use to view alert information including, but not limited to, budget alerts, invoice alerts, credit alerts, and quota alerts.

- [Exports](/rest/api/cost-management/exports) - Use to schedule recurring data export of your charges to an Azure Storage account of your choice. It's the recommended solution for customers with a large Azure presence who want to analyze their data and use it in their own internal systems.

## Next steps

- Familiarize yourself with the [Azure Resource Manager REST APIs](/rest/api/azure).

- If needed, determine which EA APIs you use and see which Azure Resource Manager APIs to move to at [EA API mapping to new Azure Resource Manager APIs](#ea-api-mapping-to-new-azure-resource-manager-apis).

- If you're not already using Azure Resource Manager APIs, [register your client app with Azure AD](/rest/api/azure/#register-your-client-application-with-azure-ad).

- If needed, update any of your programming code to use [Azure AD authentication](/rest/api/azure/#create-the-request) with your Service Principal. | 42.47541 | 774 | 0.718641 | eng_Latn | 0.627252 |

e917757d4247bc14c2d017adb0b27972167119a1 | 594 | md | Markdown | README.md | xt0fer/COBOL-Lab2 | ef67b2623dc152a91df651fbb257a12eafa6f574 | [

"MIT"

] | null | null | null | README.md | xt0fer/COBOL-Lab2 | ef67b2623dc152a91df651fbb257a12eafa6f574 | [

"MIT"

] | null | null | null | README.md | xt0fer/COBOL-Lab2 | ef67b2623dc152a91df651fbb257a12eafa6f574 | [

"MIT"

] | null | null | null | # COBOL-Lab2

1) Modify your 'ask-name' program from Lab1 such that only the users Alice and Bob are greeted with their names

2) Write a program that asks the user for a number n and prints the sum of the numbers 1 to n

3) Guessing game (Too Large/ Too Small)

Write a guessing game where the user has to guess a secret number. After every guess the program tells the user whether their number was too large or too small. At the end the number of tries needed should be printed. It counts only as one try if they input the same number multiple times consecutively.

Submit a pull request.

| 49.5 | 303 | 0.777778 | eng_Latn | 0.99999 |

e91ac64cba91fcc0f16fc6e7fb59282dcfce54ad | 3,818 | md | Markdown | README.md | rogerjdeangelis/utl_using_sas_zip_qnd_unzip_engines | 0924d8ff9c13d30394247e3cc15ae16731fb0013 | [

"MIT"

] | null | null | null | README.md | rogerjdeangelis/utl_using_sas_zip_qnd_unzip_engines | 0924d8ff9c13d30394247e3cc15ae16731fb0013 | [

"MIT"

] | null | null | null | README.md | rogerjdeangelis/utl_using_sas_zip_qnd_unzip_engines | 0924d8ff9c13d30394247e3cc15ae16731fb0013 | [

"MIT"

] | null | null | null | # utl_using_sas_zip_and_unzip_engines

Using SAS zip and unzip engines. Keywords: sas sql join merge big data analytics macros oracle teradata mysql sas communities stackoverflow statistics artificial intelligence AI Python R Java Javascript WPS Matlab SPSS Scala Perl C C# Excel MS Access JSON graphics maps NLP natural language processing machine learning igraph DOSUBL DOW loop stackoverflow SAS community.

Using SAS zip and unzip engines

Not sure I understand the problem

github

https://github.com/rogerjdeangelis/utl_using_sas_zip_and_unzip_engines

see

https://goo.gl/snSxAp

https://communities.sas.com/t5/General-SAS-Programming/Export-zip-files-using-ods/m-p/429058

WPS had the following ERROR

ERROR: ZIP is not a valid access method

INPUT (csv file)

================

d:\csv\class.csv

NAME,SEX,AGE,HEIGHT,WEIGHT

Alfred,M,14,69,112.5

Alice,F,13,56.5,84

Barbara,F,13,65.3,98

Carol,F,14,62.8,102.5

Henry,M,14,63.5,102.5

James,M,12,57.3,83

Jane,F,12,59.8,84.5

....

PROCESS (all the code)

======================

ZIP the csv file

filename foo ZIP 'd:\zip\class.zip';

data _null_;

infile "d:\csv\class.csv";

input;

file foo(class);

put _infile_;

run;quit;

UNZIP the csv file

filename foo ZIP 'd:\zip\class.zip';

data _null_;

infile foo(class);

input;

put _infile_;

run;quit;

OUTPUT

======

ZIP: d:\zip\class.zip

Files in the ZIP file

MEMNAME

class

N = 1

UNZIP d:\zip\class.zip

This will appear in log

NAME,SEX,AGE,HEIGHT,WEIGHT

Alfred,M,14,69,112.5

Alice,F,13,56.5,84

Barbara,F,13,65.3,98

Carol,F,14,62.8,102.5

Henry,M,14,63.5,102.5

James,M,12,57.3,83

Jane,F,12,59.8,84.5

* _ _ _

_ __ ___ __ _| | _____ __| | __ _| |_ __ _

| '_ ` _ \ / _` | |/ / _ \ / _` |/ _` | __/ _` |

| | | | | | (_| | < __/ | (_| | (_| | || (_| |

|_| |_| |_|\__,_|_|\_\___| \__,_|\__,_|\__\__,_|

;

dm "dexport sashelp.class 'd:\csv\class.csv' replace";

or type on Classic editor command line

dexport sashelp.class 'd:\csv\class.csv' replace

* _ _ _

___ ___ | |_ _| |_(_) ___ _ __

/ __|/ _ \| | | | | __| |/ _ \| '_ \

\__ \ (_) | | |_| | |_| | (_) | | | |

|___/\___/|_|\__,_|\__|_|\___/|_| |_|

;

ZIP the csv file

filename foo ZIP 'd:\zip\class.zip';

data _null_;

infile "d:\csv\class.csv";

input;

file foo(class);

put _infile_;

run;quit;

UNZIP the csv file

filename foo ZIP 'd:\zip\class.zip';

data _null_;

infile foo(class);

input;

put _infile_;

run;quit;

* _ _ _

___(_)_ __ ___ ___ _ __ | |_ ___ _ __ | |_ ___

|_ / | '_ \ / __/ _ \| '_ \| __/ _ \ '_ \| __/ __|

/ /| | |_) | | (_| (_) | | | | || __/ | | | |_\__ \

/___|_| .__/ \___\___/|_| |_|\__\___|_| |_|\__|___/

|_|

;

filename inzip zip "d:\zip\class.zip";

/* Read the "members" (files) from the ZIP file */

data contents(keep=memname);

length memname $200;

fid=dopen("inzip");

if fid=0 then

stop;

memcount=dnum(fid);

do i=1 to memcount;

memname=dread(fid,i);

output;

end;

rc=dclose(fid);

run;

/* create a report of the ZIP contents */

title "Files in the ZIP file";

proc print data=contents noobs N;

run;

| 24.792208 | 369 | 0.509953 | yue_Hant | 0.356431 |

e91b7619a72fe9c233f318f0f9a9d6f7f3bfcfda | 3,122 | md | Markdown | airbyte-integrations/connectors/source-plaid/README.md | rajatariya21/airbyte | 11e70a7a96e2682b479afbe6f709b9a5fe9c4a8d | [

"MIT"

] | 6,215 | 2020-09-21T13:45:56.000Z | 2022-03-31T21:21:45.000Z | airbyte-integrations/connectors/source-plaid/README.md | rajatariya21/airbyte | 11e70a7a96e2682b479afbe6f709b9a5fe9c4a8d | [

"MIT"

] | 8,448 | 2020-09-21T00:43:50.000Z | 2022-03-31T23:56:06.000Z | airbyte-integrations/connectors/source-plaid/README.md | rajatariya21/airbyte | 11e70a7a96e2682b479afbe6f709b9a5fe9c4a8d | [

"MIT"

] | 1,251 | 2020-09-20T05:48:47.000Z | 2022-03-31T10:41:29.000Z | # Plaid Source

This is the repository for the Plaid source connector, written in JavaScript.

For information about how to use this connector within Airbyte, see [the documentation](https://docs.airbyte.io/integrations/sources/javascript-template).

## Local development

### Prerequisites

**To iterate on this connector, make sure to complete this prerequisites section.**

#### Build & Activate Virtual Environment

First, build the module by running the following from the `airbyte` project root directory:

```

./gradlew :airbyte-integrations:connectors:source-plaid:build

```

This will generate a virtualenv for this module in `source-plaid/.venv`. Make sure this venv is active in your

development environment of choice. To activate the venv from the terminal, run:

```

cd airbyte-integrations/connectors/source-plaid # cd into the connector directory

source .venv/bin/activate

```

If you are in an IDE, follow your IDE's instructions to activate the virtualenv.

#### Create credentials

**If you are a community contributor**, follow the instructions in the [documentation](https://docs.airbyte.io/integrations/sources/javascript-template)

to generate the necessary credentials. Then create a file `secrets/config.json` conforming to the `source_javascript_template/spec.json` file.

See `sample_files/sample_config.json` for a sample config file.

**If you are an Airbyte core member**, copy the credentials in RPass under the secret name `source-plaid-integration-test-config`

and place them into `secrets/config.json`.

### Locally running the connector

```

npm install

node source.js spec

node source.js check --config secrets/config.json

node source.js discover --config secrets/config.json

node source.js read --config secrets/config.json --catalog sample_files/configured_catalog.json

```

### Unit Tests (wip)

To run unit tests locally, from the connector directory run:

```

npm test

```

### Locally running the connector docker image

```

# in airbyte root directory

./gradlew :airbyte-integrations:connectors:source-plaid:airbyteDocker

docker run --rm airbyte/source-plaid:dev spec

docker run --rm -v $(pwd)/airbyte-integrations/connectors/source-plaid/secrets:/secrets airbyte/source-plaid:dev check --config /secrets/config.json

docker run --rm -v $(pwd)/airbyte-integrations/connectors/source-plaid/secrets:/secrets airbyte/source-plaid:dev discover --config /secrets/config.json

docker run --rm -v $(pwd)/airbyte-integrations/connectors/source-plaid/secrets:/secrets -v $(pwd)/airbyte-integrations/connectors/source-plaid/sample_files:/sample_files airbyte/source-plaid:dev read --config /secrets/config.json --catalog /sample_files/fullrefresh_configured_catalog.json

```

### Integration Tests

1. From the airbyte project root, run `./gradlew :airbyte-integrations:connectors:source-plaid:integrationTest` to run the standard integration test suite.

1. To run additional integration tests, place your integration tests in a new directory `integration_tests` and run them with `node test (wip)`.

## Dependency Management

All of your dependencies should go in `package.json`.

| 41.078947 | 289 | 0.785394 | eng_Latn | 0.925415 |

e91b9b04ae6be9df32234e82c06b0210d6a72a79 | 222 | md | Markdown | Compiled/Readme.md | SDXorg/ANTLR-collection | 790604794b7af8c75a9a8ea7a45a5fa437f46a2e | [

"MIT"

] | 1 | 2015-11-17T19:38:39.000Z | 2015-11-17T19:38:39.000Z | Compiled/Readme.md | SDXorg/ANTLR-collection | 790604794b7af8c75a9a8ea7a45a5fa437f46a2e | [

"MIT"

] | 1 | 2015-11-17T19:37:36.000Z | 2015-11-17T19:37:36.000Z | Compiled/Readme.md | SDXorg/ANTLR_collection | 790604794b7af8c75a9a8ea7a45a5fa437f46a2e | [

"MIT"

] | null | null | null | # Parsers and Lexers

This folder holds the ANTLR4 output from the model grammars, compiled with each of the various ANTLR bindings. We don't modify these directly, but extend them by constructing visitor/listener classes.

| 55.5 | 200 | 0.815315 | eng_Latn | 0.999546 |

e91c5cec506919bca19d7c72c228c0d4171bb213 | 2,394 | md | Markdown | docs/src/man/backends.md | UnofficialJuliaMirrorSnapshots/Gadfly.jl-c91e804a-d5a3-530f-b6f0-dfbca275c004 | d180d5760c758863f24e27e2bc42d7c669fc75ed | [

"MIT"

] | 996 | 2016-10-13T18:33:30.000Z | 2022-03-25T04:40:31.000Z | docs/src/man/backends.md | UnofficialJuliaMirrorSnapshots/Gadfly.jl-c91e804a-d5a3-530f-b6f0-dfbca275c004 | d180d5760c758863f24e27e2bc42d7c669fc75ed | [

"MIT"

] | 730 | 2016-10-11T03:23:01.000Z | 2022-03-31T18:20:39.000Z | docs/src/man/backends.md | UnofficialJuliaMirrorSnapshots/Gadfly.jl-c91e804a-d5a3-530f-b6f0-dfbca275c004 | d180d5760c758863f24e27e2bc42d7c669fc75ed | [

"MIT"

] | 189 | 2016-10-19T22:33:09.000Z | 2022-03-30T00:59:54.000Z | ```@meta

Author = "Daniel C. Jones, Tamas Nagy"

```

# Backends

Gadfly supports creating SVG images out of the box through the native Julian

renderer in [Compose.jl](https://github.com/GiovineItalia/Compose.jl). The

PNG, PDF, PS, and PGF formats, however, require Julia's bindings to

[cairo](https://www.cairographics.org/) and

[fontconfig](https://www.freedesktop.org/wiki/Software/fontconfig/), which can

be installed with

```julia

using Pkg

Pkg.add("Cairo")

Pkg.add("Fontconfig")

```

## Rendering to a file

In addition to the `draw` interface presented in the [Tutorial](@ref Tutorial):

```julia

p = plot(...)

draw(SVG("foo.svg", 6inch, 4inch), p)

```

one can more succinctly use Julia's function chaining syntax:

```julia

p |> SVG("foo.svg", 6inch, 4inch)

```

If you plan on drawing many figures of the same size, consider

setting it as the default:

```julia

set_default_plot_size(6inch, 4inch)

p1 |> SVG("foo1.svg")

p2 |> SVG("foo2.svg")

p3 |> SVG("foo3.svg")

```

## Choosing a backend

Drawing to different backends is easy. Simply swap `SVG` for one

of `SVGJS`, `PNG`, `PDF`, `PS`, or `PGF`:

```julia

# e.g.

p |> PDF("foo.pdf")

```
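
As a minimal sketch of swapping backends, the snippet below writes one plot to three formats. The data and file names are illustrative assumptions, and it presumes the Cairo and Fontconfig packages are installed and loaded so that the PNG and PDF backends are available.

```julia
using Gadfly
import Cairo, Fontconfig  # must be loaded for the PNG, PDF, PS, and PGF backends

# Illustrative data only; any plot object works here.
p = plot(x=1:10, y=(1:10).^2, Geom.line)

# Set the size once so each backend call only needs a file name.
set_default_plot_size(6inch, 4inch)

p |> SVG("foo.svg")
p |> PNG("foo.png")
p |> PDF("foo.pdf")
```

Only the backend constructor changes between the three calls; the plot object itself is reused as-is.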

## Interactive SVGs

The `SVGJS` backend writes SVG with embedded JavaScript. There are a couple of

subtleties with using the output from this backend.

Drawing to the backend works like any other:

```julia

draw(SVGJS("foo.svg", 6inch, 6inch), p)

```

If included with an `<img>` tag, however, the output will display as a static

SVG image:

```html

<img src="foo.svg"/>

```

For the [interactive](@ref Interactivity) JavaScript features to be enabled, it

either needs to be included inline in the HTML page, or included with an

`<object>` tag:

```html

<object data="foo.svg" type="image/svg+xml"></object>

```

For the latter, a `div` element must be placed, and the `draw` function

must be passed the id of this element, so it knows where in the

document to place the plot.
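
The `<object>` embedding described above can be sketched with a small helper that writes a wrapper page around an existing SVGJS file. The function name `object_page` and the output file `foo.html` are hypothetical and not part of Gadfly; the sketch uses only Julia's standard library and does not escape special characters in the path.

```julia
# Hypothetical helper (not part of Gadfly): build a minimal HTML page that
# embeds an SVG file through an <object> tag, so scripts inside the SVG can run.
function object_page(svg_path::AbstractString; title::AbstractString="Plot")
    return """
    <!DOCTYPE html>
    <html>
      <head><meta charset="utf-8"><title>$(title)</title></head>
      <body>
        <object data="$(svg_path)" type="image/svg+xml"></object>
      </body>
    </html>
    """
end

# Assumes foo.svg was written earlier with the SVGJS backend.
html = object_page("foo.svg")
write("foo.html", html)
```

Opening `foo.html` in a browser should then load `foo.svg` as an embedded document rather than as a static image.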

## IJulia

The [IJulia](https://github.com/JuliaLang/IJulia.jl) project adds Julia support

to [Jupyter](https://jupyter.org/). This includes a browser-based notebook

that can inline graphics and plots. Gadfly works out of the box with IJulia,

with or without drawing explicitly to a backend.

Without an explicit call to `draw` (i.e. just calling `plot` without a trailing

semicolon), the SVGJS backend is used with the default plot size, which can be

changed as described above.

| 24.428571 | 79 | 0.718463 | eng_Latn | 0.988638 |

e91c72790a0415deb0febd645a1910c3f8958601 | 2,674 | md | Markdown | sdk-api-src/content/snmp/nf-snmp-snmputilasnanyfree.md | amorilio/sdk-api | 54ef418912715bd7df39c2561fbc3d1dcef37d7e | [

"CC-BY-4.0",

"MIT"

] | null | null | null | sdk-api-src/content/snmp/nf-snmp-snmputilasnanyfree.md | amorilio/sdk-api | 54ef418912715bd7df39c2561fbc3d1dcef37d7e | [

"CC-BY-4.0",

"MIT"

] | null | null | null | sdk-api-src/content/snmp/nf-snmp-snmputilasnanyfree.md | amorilio/sdk-api | 54ef418912715bd7df39c2561fbc3d1dcef37d7e | [

"CC-BY-4.0",

"MIT"

] | null | null | null | ---

UID: NF:snmp.SnmpUtilAsnAnyFree

title: SnmpUtilAsnAnyFree function (snmp.h)

description: The SnmpUtilAsnAnyFree function frees the memory allocated for the specified AsnAny structure. This function is an element of the SNMP Utility API.

helpviewer_keywords: ["SnmpUtilAsnAnyFree","SnmpUtilAsnAnyFree function [SNMP]","_snmp_snmputilasnanyfree","snmp.snmputilasnanyfree","snmp/SnmpUtilAsnAnyFree"]

old-location: snmp\snmputilasnanyfree.htm

tech.root: SNMP

ms.assetid: b18c3722-398e-4659-ab1c-edd09d5c220d

ms.date: 12/05/2018

ms.keywords: SnmpUtilAsnAnyFree, SnmpUtilAsnAnyFree function [SNMP], _snmp_snmputilasnanyfree, snmp.snmputilasnanyfree, snmp/SnmpUtilAsnAnyFree

req.header: snmp.h

req.include-header:

req.target-type: Windows

req.target-min-winverclnt: Windows 2000 Professional [desktop apps only]

req.target-min-winversvr: Windows 2000 Server [desktop apps only]

req.kmdf-ver:

req.umdf-ver:

req.ddi-compliance:

req.unicode-ansi:

req.idl:

req.max-support:

req.namespace:

req.assembly:

req.type-library:

req.lib: Snmpapi.lib

req.dll: Snmpapi.dll

req.irql:

targetos: Windows

req.typenames:

req.redist:

ms.custom: 19H1

f1_keywords:

- SnmpUtilAsnAnyFree

- snmp/SnmpUtilAsnAnyFree

dev_langs:

- c++

topic_type:

- APIRef

- kbSyntax

api_type:

- DllExport

api_location:

- Snmpapi.dll

api_name:

- SnmpUtilAsnAnyFree

---

# SnmpUtilAsnAnyFree function

## -description

<p class="CCE_Message">[SNMP is available for use in the operating systems specified in the Requirements section. It may be altered or unavailable in subsequent versions. Instead, use <a href="/windows/desktop/WinRM/portal">Windows Remote Management</a>, which is the Microsoft implementation of WS-Man.]

The

<b>SnmpUtilAsnAnyFree</b> function frees the memory allocated for the specified

<a href="/windows/desktop/api/snmp/ns-snmp-asnany">AsnAny</a> structure. This function is an element of the SNMP Utility API.

## -parameters

### -param pAny [in]

Pointer to an

<a href="/windows/desktop/api/snmp/ns-snmp-asnany">AsnAny</a> structure whose memory should be freed.

## -returns

This function does not return a value.

## -remarks

Call the

<b>SnmpUtilAsnAnyFree</b> function to free the memory that the

<a href="/windows/desktop/api/snmp/nf-snmp-snmputilasnanycpy">SnmpUtilAsnAnyCpy</a> function allocates.

## -see-also

<a href="/windows/desktop/api/snmp/ns-snmp-asnany">AsnAny</a>

<a href="/windows/desktop/SNMP/snmp-functions">SNMP Functions</a>

<a href="/windows/desktop/SNMP/simple-network-management-protocol-snmp-">Simple Network Management Protocol (SNMP) Overview</a>

<a href="/windows/desktop/api/snmp/nf-snmp-snmputilasnanycpy">SnmpUtilAsnAnyCpy</a> | 29.711111 | 304 | 0.777861 | eng_Latn | 0.493286 |

e91cd6fd4af3ca680a390f937fdca882b433c07d | 3,572 | md | Markdown | docs/relational-databases/system-stored-procedures/sp-changesubscriptiondtsinfo-transact-sql.md | CeciAc/sql-docs.fr-fr | 0488ed00d9a3c5c0a3b1601a143c0a43692ca758 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | docs/relational-databases/system-stored-procedures/sp-changesubscriptiondtsinfo-transact-sql.md | CeciAc/sql-docs.fr-fr | 0488ed00d9a3c5c0a3b1601a143c0a43692ca758 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | docs/relational-databases/system-stored-procedures/sp-changesubscriptiondtsinfo-transact-sql.md | CeciAc/sql-docs.fr-fr | 0488ed00d9a3c5c0a3b1601a143c0a43692ca758 | [

"CC-BY-4.0",

"MIT"

] | 1 | 2020-03-04T05:50:54.000Z | 2020-03-04T05:50:54.000Z | ---

title: sp_changesubscriptiondtsinfo (Transact-SQL) | Microsoft Docs

ms.custom: ''

ms.date: 03/04/2017

ms.prod: sql

ms.prod_service: database-engine

ms.reviewer: ''

ms.technology: replication

ms.topic: language-reference

f1_keywords:

- sp_changesubscriptiondtsinfo

- sp_changesubscriptiondtsinfo_TSQL

helpviewer_keywords:

- sp_changesubscriptiondtsinfo

ms.assetid: 64fc085f-f81b-493b-b59a-ee6192d9736d

author: stevestein

ms.author: sstein

ms.openlocfilehash: a091df0cbbeb2883ff9905d7c5b3718d50efa86b

ms.sourcegitcommit: 728a4fa5a3022c237b68b31724fce441c4e4d0ab

ms.translationtype: MT

ms.contentlocale: fr-FR

ms.lasthandoff: 08/03/2019

ms.locfileid: "68762555"

---

# <a name="spchangesubscriptiondtsinfo-transact-sql"></a>sp_changesubscriptiondtsinfo (Transact-SQL)

[!INCLUDE[appliesto-ss-asdbmi-xxxx-xxx-md](../../includes/appliesto-ss-asdbmi-xxxx-xxx-md.md)]

Changes the Data Transformation Services (DTS) package properties of a subscription. This stored procedure is executed at the Subscriber on the subscription database.

[Transact-SQL Syntax Conventions](../../t-sql/language-elements/transact-sql-syntax-conventions-transact-sql.md)

## <a name="syntax"></a>Syntax

```

sp_changesubscriptiondtsinfo [ [ @job_id = ] job_id ]

[ , [ @dts_package_name= ] 'dts_package_name' ]

[ , [ @dts_package_password= ] 'dts_package_password' ]