hexsha stringlengths 40 40 | size int64 5 1.04M | ext stringclasses 6 values | lang stringclasses 1 value | max_stars_repo_path stringlengths 3 344 | max_stars_repo_name stringlengths 5 125 | max_stars_repo_head_hexsha stringlengths 40 78 | max_stars_repo_licenses listlengths 1 11 | max_stars_count int64 1 368k ⌀ | max_stars_repo_stars_event_min_datetime stringlengths 24 24 ⌀ | max_stars_repo_stars_event_max_datetime stringlengths 24 24 ⌀ | max_issues_repo_path stringlengths 3 344 | max_issues_repo_name stringlengths 5 125 | max_issues_repo_head_hexsha stringlengths 40 78 | max_issues_repo_licenses listlengths 1 11 | max_issues_count int64 1 116k ⌀ | max_issues_repo_issues_event_min_datetime stringlengths 24 24 ⌀ | max_issues_repo_issues_event_max_datetime stringlengths 24 24 ⌀ | max_forks_repo_path stringlengths 3 344 | max_forks_repo_name stringlengths 5 125 | max_forks_repo_head_hexsha stringlengths 40 78 | max_forks_repo_licenses listlengths 1 11 | max_forks_count int64 1 105k ⌀ | max_forks_repo_forks_event_min_datetime stringlengths 24 24 ⌀ | max_forks_repo_forks_event_max_datetime stringlengths 24 24 ⌀ | content stringlengths 5 1.04M | avg_line_length float64 1.14 851k | max_line_length int64 1 1.03M | alphanum_fraction float64 0 1 | lid stringclasses 191 values | lid_prob float64 0.01 1 |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

932938d7e009b526ca879939a912e115cf5ff362 | 858 | md | Markdown | src/pages/escuela/clases/2021-barra-coreografiada.md | denisprado/bambudanza | c5d881cc27a846a00227c53fc1633515b2cdf83f | [

"MIT"

] | null | null | null | src/pages/escuela/clases/2021-barra-coreografiada.md | denisprado/bambudanza | c5d881cc27a846a00227c53fc1633515b2cdf83f | [

"MIT"

] | null | null | null | src/pages/escuela/clases/2021-barra-coreografiada.md | denisprado/bambudanza | c5d881cc27a846a00227c53fc1633515b2cdf83f | [

"MIT"

] | null | null | null | ---

templateKey: programa-post

title: BARRA COREOGRAFIADA

order: 11

date: 2021-01-22T18:23:09.114Z

description: Técnicas fusionadas

featuredpost: true

featuredimage: /img/arnaldo-iv.jpg

tags:

- Barra Coreografiada

profesora: Arnaldo Iarsoli

tarifa:

- 1 X A LA SEMANA (1H) - 45 €

- 2 X A LA SEMANA ONLINE - 60 €

horarios:

- 19h00 a 20h00

dias:

- Martes y Jueves

estilo:

- Jazz y Ballet Clásico

nivel:

- Iniciación

- Intermedio

- Avanzado

---


This class aims to improve posture and coordination and to strengthen the upper and lower body through counterweight work, seeking the proper placement of each limb and fostering each student's creativity and exploration. We will blend the fundamentals of classical ballet with jazz, working on movement in an enjoyable way.


| 26.8125 | 352 | 0.75641 | spa_Latn | 0.938678 |

93294c61d9920cc2b68a2620bf642d8492de85d3 | 71 | md | Markdown | lib/README.md | cassidoxa/z3r-sramr | 9cd19460737a3f84a19d9aa7ff4db699a342aa71 | [

"MIT"

] | null | null | null | lib/README.md | cassidoxa/z3r-sramr | 9cd19460737a3f84a19d9aa7ff4db699a342aa71 | [

"MIT"

] | 3 | 2021-03-29T19:01:39.000Z | 2021-03-29T19:12:51.000Z | lib/README.md | cassidoxa/z3r-sramr | 9cd19460737a3f84a19d9aa7ff4db699a342aa71 | [

"MIT"

] | null | null | null | # z3r-sramr

## Installation

Add `z3r-sramr = "0.2"` under `[dependencies]` in your `Cargo.toml`.

| 11.833333 | 40 | 0.676056 | kor_Hang | 0.405698 |

93295286621450c4df26695ea6909b2eaa32f40d | 1,980 | md | Markdown | node_modules/@seregpie/claw/README.md | MaherSalama2020/GeniusExams | 8091cfaba95ec1747ebb14e6f3a32ea0295fb225 | [

"MIT"

] | null | null | null | node_modules/@seregpie/claw/README.md | MaherSalama2020/GeniusExams | 8091cfaba95ec1747ebb14e6f3a32ea0295fb225 | [

"MIT"

] | null | null | null | node_modules/@seregpie/claw/README.md | MaherSalama2020/GeniusExams | 8091cfaba95ec1747ebb14e6f3a32ea0295fb225 | [

"MIT"

] | null | null | null | # Claw

A very small gesture recognizer.

## demo

[Try it out!](https://seregpie.github.io/VueClaw/)

## setup

### npm

```shell

npm install @seregpie/claw

```

### ES module

```javascript

import Claw from '@seregpie/claw';

```

### browser

```html

<script src="https://unpkg.com/@seregpie/claw"></script>

```

## members

```

.constructor(target, {

delay = 500,

distance = 1,

})

```

| argument | description |

| ---: | :--- |

| `target` | The target element. |

| `delay` | The delay threshold to separate the event types. |

| `distance` | The distance threshold to separate the event types. |

```javascript

let element = document.getElementById('claw');

(new Claw(element))

.on('panStart', event => {

// handle

})

.on('pan', event => {

// handle

})

.on('panEnd', event => {

// handle

});

```

---

`.on(type, listener)`

*chainable*

Binds a listener to an event type.

```javascript

claw.on('tap', event => {

// handle tap event

});

```

---

`.off(type, listener)`

*chainable*

Unbinds a listener from an event type.

Omit the argument `listener` to unbind all listeners from an event type.

Omit the argument `type` to unbind all listeners from all event types.

```javascript

let tapListener = function(event) {

// handle tap event

claw.off('tap', tapListener);

};

claw.on('tap', tapListener);

```

---

`.isIdle`

Returns `true`, if there are no bound listeners.
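
To make the `on` / `off` / `isIdle` contract described above concrete, here is a minimal sketch of a listener registry that behaves the same way. `ListenerRegistry` is a hypothetical stand-in written for illustration; it is not Claw's source code and does no gesture recognition.

```javascript
// Hypothetical stand-in that mimics the documented on/off/isIdle contract.
// It is NOT Claw's implementation; it only illustrates the semantics.
class ListenerRegistry {
  constructor() {
    // Maps an event type (e.g. 'tap') to the set of listeners bound to it.
    this.listeners = new Map();
  }

  // Binds a listener to an event type. Returns `this` so calls can be chained.
  on(type, listener) {
    if (!this.listeners.has(type)) {
      this.listeners.set(type, new Set());
    }
    this.listeners.get(type).add(listener);
    return this;
  }

  // Unbinds one listener, all listeners of a type, or everything,
  // depending on which arguments are omitted.
  off(type, listener) {
    if (type === undefined) {
      this.listeners.clear();
    } else if (listener === undefined) {
      this.listeners.delete(type);
    } else if (this.listeners.has(type)) {
      const bound = this.listeners.get(type);
      bound.delete(listener);
      if (bound.size === 0) {
        this.listeners.delete(type);
      }
    }
    return this;
  }

  // True when no listener is bound to any event type.
  get isIdle() {
    return this.listeners.size === 0;
  }
}
```

With this sketch, `new ListenerRegistry().on('tap', fn).off('tap', fn).isIdle` evaluates to `true`, which mirrors how `.isIdle` is described above.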

## events

`holdStart`

```js

{

pointerType,

timeStamp,

x,

y,

}

```

---

`holdEnd`

```js

{

initialTimeStamp,

pointerType,

timeStamp,

x,

y,

}

```

---

`panStart`

```js

{

pointerType,

timeStamp,

x,

y,

}

```

---

`pan`

```js

{

initialTimeStamp,

initialX,

initialY,

pointerType,

previousTimeStamp,

previousX,

previousY,

timeStamp,

x,

y,

}

```

---

`panEnd`

```js

{

initialTimeStamp,

initialX,

initialY,

pointerType,

timeStamp,

x,

y,

}

```

---

`tap`

```js

{

pointerType,

timeStamp,

x,

y,

}

```

| 10.819672 | 72 | 0.6 | eng_Latn | 0.542967 |

9329ea19b1ae97cffb1c4a2f6112173dbd1ca2f4 | 1,035 | md | Markdown | junior_class/chapter-4-Object_Detection/README_en.md | wwhio/awesome-DeepLearning | 2cc92edcf0c22bdfc670c537cc819c8fadf33fac | [

"Apache-2.0"

] | 1,150 | 2021-06-01T03:44:21.000Z | 2022-03-31T13:43:42.000Z | junior_class/chapter-4-Object_Detection/README_en.md | wwhio/awesome-DeepLearning | 2cc92edcf0c22bdfc670c537cc819c8fadf33fac | [

"Apache-2.0"

] | 358 | 2021-06-01T03:58:47.000Z | 2022-03-28T02:55:00.000Z | junior_class/chapter-4-Object_Detection/README_en.md | wwhio/awesome-DeepLearning | 2cc92edcf0c22bdfc670c537cc819c8fadf33fac | [

"Apache-2.0"

] | 502 | 2021-05-31T12:52:14.000Z | 2022-03-31T02:51:41.000Z | # Computer Vision: Object_Detection[[简体中文](./README.md)]

## Introduction

In this chapter, you will learn the basic concepts of object detection, including bounding boxes, anchor boxes, IoU (intersection over union), non-maximum suppression, and mAP. The chapter also introduces the basic principles of the YOLOv3 object detection model and walks through model training and testing experiments on a forest pest and disease dataset. By the end of the chapter, you should have a basic understanding of the object detection task and of YOLOv3.
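
As a small illustration of one of the concepts listed above, the sketch below computes IoU for two axis-aligned boxes. The `[x1, y1, x2, y2]` box layout and the function name `iou` are assumptions made for this example; the chapter's own notebook may use a different representation.

```javascript
// Intersection over union for two axis-aligned boxes.
// Assumed box layout: [x1, y1, x2, y2] with x1 < x2 and y1 < y2.
function iou(boxA, boxB) {
  // Width and height of the overlapping region (zero if the boxes do not overlap).
  const interW = Math.max(0, Math.min(boxA[2], boxB[2]) - Math.max(boxA[0], boxB[0]));
  const interH = Math.max(0, Math.min(boxA[3], boxB[3]) - Math.max(boxA[1], boxB[1]));
  const intersection = interW * interH;

  const areaA = (boxA[2] - boxA[0]) * (boxA[3] - boxA[1]);
  const areaB = (boxB[2] - boxB[0]) * (boxB[3] - boxB[1]);
  const union = areaA + areaB - intersection;

  // Guard against degenerate boxes with zero area.
  return union > 0 ? intersection / union : 0;
}
```

Non-maximum suppression typically relies on a measure like this: among overlapping detections, boxes whose IoU with a higher-scoring box exceeds a chosen threshold are discarded.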

## Structure

This chapter is presented as a notebook and code:

- The notebook provides the tutorial for this chapter, with a complete text description. For a better reading experience, you can also view the [notebook document](https://aistudio.baidu.com/aistudio/education/group/info/9045/content) on the AIStudio platform.

- The code section provides the complete code for the chapter. For usage instructions, see the [README](./code/README.md) in the code section.

| 94.090909 | 451 | 0.795169 | eng_Latn | 0.997152 |

932b54cb71418679ea7982e75a27b10b271d8df8 | 5,053 | md | Markdown | README.md | hiroq/RxWebSocketClient | 17c874f77a50343fb32b9e28a52bffeddf97f452 | [

"MIT"

] | 1 | 2016-10-27T07:45:39.000Z | 2016-10-27T07:45:39.000Z | README.md | hiroq/RxWebSocketClient | 17c874f77a50343fb32b9e28a52bffeddf97f452 | [

"MIT"

] | null | null | null | README.md | hiroq/RxWebSocketClient | 17c874f77a50343fb32b9e28a52bffeddf97f452 | [

"MIT"

] | null | null | null | # RxWebSocketClient

Simple RxJava WebSocketClient

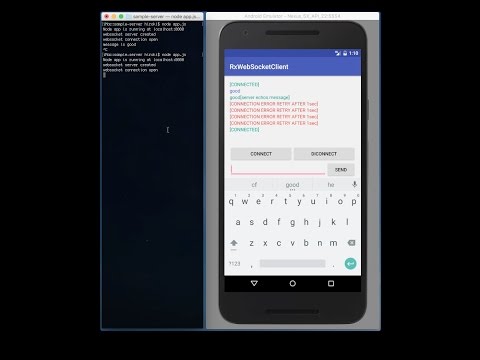

# DEMO Video

[Demo video](http://www.youtube.com/watch?v=831TLryJnR8)

# Install

```groovy

dependencies {

compile 'net.hiroq:rxwsc:0.1.7'

}

```

# Usage

If you use lambda, the code will be simpler!

```java

onResume(){

mSocketClient = new RxWebSocketClient();

mSubscription = mSocketClient.connect(Uri.parse("ws://hogehoge"))

.subscribeOn(Schedulers.newThread())

.observeOn(AndroidSchedulers.mainThread())

.subscribe(new Action1<RxWebSocketClient.Event>() {

@Override

public void call(RxWebSocketClient.Event event) {

Log.d(TAG, "== onNext ==");

switch (event.getType()) {

case CONNECT:

Log.d(TAG, " CONNECT");

mSocketClient.send("test");

break;

case DISCONNECT:

Log.d(TAG, " DISCONNECT");

break;

case MESSAGE_BINARY:

Log.d(TAG, " MESSAGE_BINARY : bytes = " + event.getBytes().length);

break;

case MESSAGE_STRING:

Log.d(TAG, " MESSAGE_STRING = " + event.getString());

break;

}

}

}, new Action1<Throwable>() {

@Override

public void call(Throwable throwable) {

Log.d(TAG, "== onError ==");

throwable.printStackTrace();

}

}, new Action0() {

@Override

public void call() {

Log.d(TAG, "== onComplete ==");

}

});

}

onPause(){

// After this call, connection will be disconnected.

mSubscription.unsubscribe();

}

onClick(){

mSocketClient.send("This is test message");

}

```

If you want to reconnect automatically, use retryWhen like below:

```java

mSocketClient = new RxWebSocketClient();

mSubscription = mSocketClient.connect(Uri.parse("ws://hogehoge"))

.retryWhen(new Func1<Observable<? extends Throwable>, Observable<?>>() {

@Override

public Observable<?> call(final Observable<? extends Throwable> observable) {

return observable.flatMap(new Func1<Throwable, Observable<?>>() {

@Override

public Observable<?> call(Throwable throwable) {

// If the exception is a ConnectException,

// retry with 5 second delay.

if (throwable instanceof ConnectException) {

Log.d(TAG, "retry with delay");

return Observable.timer(5, TimeUnit.SECONDS);

}else {

// Other exceptions are passed on to onError

return Observable.error(throwable);

}

}

});

}

})

.subscribeOn(Schedulers.newThread())

.observeOn(AndroidSchedulers.mainThread())

```

# Sample

You can run the sample project against the sample WebSocket server, which is implemented in JavaScript and runs on Node.js.

```

cd sample-server

npm install

node app.js

```

Then run the sample Android app on an emulator.

> If you want to run the sample on a real device, you have to change the connection URL.

# TODO

* make test

# Licence

```

MIT License

Copyright (c) 2016 Hiroki Oizumi

This library is ported from following libraries

1. Copyright (c) 2010-2016 James Coglan<br>

faye's faye-websocket-node<br>

https://github.com/faye/faye-websocket-node

2. Copyright (c) 2012 Eric Butler<br>

codebutler's android-websockets.<br>

https://github.com/codebutler/android-websockets

Permission is hereby granted, free of charge, to any person obtaining a copy

of this software and associated documentation files (the "Software"), to deal

in the Software without restriction, including without limitation the rights

to use, copy, modify, merge, publish, distribute, sublicense, and/or sell

copies of the Software, and to permit persons to whom the Software is

furnished to do so, subject to the following conditions:

The above copyright notice and this permission notice shall be included in all

copies or substantial portions of the Software.

THE SOFTWARE IS PROVIDED "AS IS", WITHOUT WARRANTY OF ANY KIND, EXPRESS OR

IMPLIED, INCLUDING BUT NOT LIMITED TO THE WARRANTIES OF MERCHANTABILITY,

FITNESS FOR A PARTICULAR PURPOSE AND NONINFRINGEMENT. IN NO EVENT SHALL THE

AUTHORS OR COPYRIGHT HOLDERS BE LIABLE FOR ANY CLAIM, DAMAGES OR OTHER

LIABILITY, WHETHER IN AN ACTION OF CONTRACT, TORT OR OTHERWISE, ARISING FROM,

OUT OF OR IN CONNECTION WITH THE SOFTWARE OR THE USE OR OTHER DEALINGS IN THE

SOFTWARE.

```

| 34.37415 | 110 | 0.584603 | eng_Latn | 0.350824 |

932b92ad38add1a6da2f3d8fe025c2ebf9020ea3 | 111 | md | Markdown | _news/new_2.md | npitsillos/npitsillos.github.io | 6aaba62ce7c9ea38da862d68624ae74892dbd26e | [

"MIT"

] | null | null | null | _news/new_2.md | npitsillos/npitsillos.github.io | 6aaba62ce7c9ea38da862d68624ae74892dbd26e | [

"MIT"

] | null | null | null | _news/new_2.md | npitsillos/npitsillos.github.io | 6aaba62ce7c9ea38da862d68624ae74892dbd26e | [

"MIT"

] | null | null | null | ---

layout: post

date: 2021-05-24

inline: true

---

[Paper](/publications/) accepted at ICDL-EpiRob 2021 :tada: | 15.857143 | 59 | 0.693694 | eng_Latn | 0.41249 |

932cbb4d691f2e090197ea74035afebaa33e364e | 2,300 | md | Markdown | docs/workflow-designer/messaging-activity-designers.md | klmnden/visualstudio-docs.tr-tr | 82aa1370dab4ae413f5f924dad3e392ecbad0d02 | [

"CC-BY-4.0",

"MIT"

] | 1 | 2020-09-01T20:45:52.000Z | 2020-09-01T20:45:52.000Z | docs/workflow-designer/messaging-activity-designers.md | klmnden/visualstudio-docs.tr-tr | 82aa1370dab4ae413f5f924dad3e392ecbad0d02 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | docs/workflow-designer/messaging-activity-designers.md | klmnden/visualstudio-docs.tr-tr | 82aa1370dab4ae413f5f924dad3e392ecbad0d02 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | ---

title: İş Akışı Tasarımcısı - Mesajlaşma etkinlik tasarımcıları

ms.date: 11/04/2016

ms.topic: reference

ms.assetid: 897e63cf-a42f-4edd-876f-c4ccfffaf6d6

author: gewarren

ms.author: gewarren

manager: jillfra

ms.workload:

- multiple

ms.openlocfilehash: a9868b5eb52edde8e12d6a3b4f5edab1a4a9e499

ms.sourcegitcommit: 12f2851c8c9bd36a6ab00bf90a020c620b364076

ms.translationtype: MT

ms.contentlocale: tr-TR

ms.lasthandoff: 06/06/2019

ms.locfileid: "66747098"

---

# <a name="messaging-activity-designers"></a>Messaging activity designers

The messaging activity designers are used to create and configure messaging activities that send and receive Windows Communication Foundation (WCF) messages from within a Windows Workflow Foundation (WF) application. Five messaging activities were introduced in .NET Framework 4. Workflow Designer provides two template designers that let you manage messaging within a workflow.

The topics in this section, listed below, provide guidance on using the template designers and activity designers in Workflow Designer.

- <xref:System.Activities.Activity>

- <xref:System.ServiceModel.Activities.CorrelationScope>

- <xref:System.ServiceModel.Activities.Receive>

- <xref:System.ServiceModel.Activities.Send>

- <xref:System.ServiceModel.Activities.ReceiveReply>

- <xref:System.ServiceModel.Activities.SendReply>

- <xref:System.ServiceModel.Activities.TransactedReceiveScope>

## <a name="related-sections"></a>Related sections

For other types of activity designers, see the following topics:

- [Control Flow](../workflow-designer/control-flow-activity-designers.md)

- [Using the Activity Designers](../workflow-designer/using-the-activity-designers.md)

- [Flowchart](../workflow-designer/flowchart-activity-designers.md)

- [Runtime](../workflow-designer/runtime-activity-designers.md)

- [Primitives](../workflow-designer/primitives-activity-designers.md)

- [Transaction](../workflow-designer/transaction-activity-designers.md)

- [Collection](../workflow-designer/collection-activity-designers.md)

- [Error Handling](../workflow-designer/error-handling-activity-designers.md)

## <a name="external-resources"></a>External resources

[Using the Activity Designers](../workflow-designer/using-the-activity-designers.md) | 38.333333 | 382 | 0.808696 | tur_Latn | 0.974589 |

932cd48fe14c8e8b5dfd28c8c6449c1151f2229e | 655 | md | Markdown | CHANGELOG.md | kudohamu/akashic-label | 6f61a7d0d0c95d75412641147ff929a55f0758d9 | [

"MIT"

] | null | null | null | CHANGELOG.md | kudohamu/akashic-label | 6f61a7d0d0c95d75412641147ff929a55f0758d9 | [

"MIT"

] | null | null | null | CHANGELOG.md | kudohamu/akashic-label | 6f61a7d0d0c95d75412641147ff929a55f0758d9 | [

"MIT"

] | null | null | null | # CHANGELOG

## 2.0.5

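* Handle the case where `glyph.surface` does not exist at draw time.

* Before calling `drawImage`, check `glyph.isSurfaceValid` and recreate the glyph if its surface has been destroyed.

## 2.0.4

* Fixed an issue where some surrogate-pair characters were not rendered correctly.

## 2.0.3

* Added the `lineBreakRule` option for specifying line-breaking rules (kinsoku shori).

## 2.0.2

* Fixed an issue where surrogate-pair characters were not rendered correctly.

* Aligned the sample's directory structure with the TypeScript template of akashic-cli-init.

## 2.0.1

* Fixed garbled rendering of surrogate-pair characters.

## 2.0.0

* Updated to follow akashic-engine@2.0.0 and bumped the version to 2.0.0 accordingly.

* Removed the deprecated `LabelParameterObject#bitmapFont` and `RubyOptions#rubyBitmapFont`.

## 0.3.5

* Removed unnecessary files from the published package.

## 0.3.4

* Changed the build tooling.

* Updated TypeScript.

## 0.3.3

* Added the `trimMarginTop` and `widthAutoAdjust` options.

## 0.3.2

* Initial release.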

| 14.23913 | 81 | 0.71145 | yue_Hant | 0.62428 |

932ce931103036aff702a6cff832b617be803ca6 | 2,112 | md | Markdown | CONTRIBUTING.md | hfaivre/synthetics-ci-github-action-1 | ca8612ffcfced6982be83e31fe061970146c0121 | [

"MIT"

] | 25 | 2022-01-18T15:17:10.000Z | 2022-03-17T21:52:25.000Z | CONTRIBUTING.md | hfaivre/synthetics-ci-github-action-1 | ca8612ffcfced6982be83e31fe061970146c0121 | [

"MIT"

] | 3 | 2022-01-07T14:06:48.000Z | 2022-03-04T09:29:42.000Z | CONTRIBUTING.md | hfaivre/synthetics-ci-github-action-1 | ca8612ffcfced6982be83e31fe061970146c0121 | [

"MIT"

] | 2 | 2022-01-05T22:27:57.000Z | 2022-02-17T09:25:17.000Z | # Contributing

First of all, thanks for contributing!

This document provides some basic guidelines for contributing to this repository. To propose improvements, feel free to submit a pull request.

## Submitting issues

GitHub issues are welcome; feel free to submit bug reports and feature requests!

- Ensure the bug was not already reported by searching on GitHub under [Issues](https://github.com/DataDog/synthetics-ci-github-action/issues).

- If you're unable to find an open issue addressing the problem, [open a new one](https://github.com/DataDog/synthetics-ci-github-action/issues/new/choose).

- Make sure to add enough details to explain your use case.

If you require further assistance, you can also contact [our support](https://docs.datadoghq.com/help/).

## Submitting pull requests

Have you fixed a bug or written a new feature and want to share it? Many thanks!

In order to ease/speed up our review, here are some items you can check/improve when submitting your pull request:

- **Write meaningful commit messages**

Messages should be concise but explanatory.

The commit message should describe the reason for the change, so that a reader can later understand quickly what you spent a day working on.

- **Keep it small and focused.**

Pull requests should contain only one fix, or one feature improvement.

Bundling several fixes or features in the same PR will make it harder to review, and eventually take more time to release.

- **Write tests for the code you wrote.**

Each module should be tested.

The tests for a module are located in the [`__tests__` folder](https://github.com/DataDog/synthetics-ci-github-action/tree/main/__tests__), under a file with the same name as the module.

Our CI is not (yet) public, so it may be difficult to understand why your pull request status is failing. Make sure that all tests pass locally, and we'll try to sort it out in our CI.

## Style guide

The code under this repository follows a format enforced by prettier, and a style guide enforced by eslint.

## Asking questions

Need help? Contact [Datadog support](https://docs.datadoghq.com/help/).

| 49.116279 | 186 | 0.777936 | eng_Latn | 0.998442 |

932e49a7eb4e4d32159b5cf5ee9e1143e50bf627 | 3,490 | md | Markdown | content/publication/mmhri2020_workshop/index.md | zedavid/website | 11b271a4e91c80b4a14ce49f0e88449e1b97bad3 | [

"MIT"

] | null | null | null | content/publication/mmhri2020_workshop/index.md | zedavid/website | 11b271a4e91c80b4a14ce49f0e88449e1b97bad3 | [

"MIT"

] | null | null | null | content/publication/mmhri2020_workshop/index.md | zedavid/website | 11b271a4e91c80b4a14ce49f0e88449e1b97bad3 | [

"MIT"

] | null | null | null | ---

title: "Natural Language Interaction to Facilitate Mental Models of Remote Robots"

# Authors

# If you created a profile for a user (e.g. the default `admin` user), write the username (folder name) here

# and it will be replaced with their full name and linked to their profile.

authors:

- Francisco Javier Chiyah Garcia

- admin

- Helen Hastie

# Author notes (optional)

#author_notes:

#- "Equal contribution"

#- "Equal contribution"

date: "2020-05-01T00:00:00Z"

doi: ""

# Schedule page publish date (NOT publication's date).

publishDate: "2020-12-01T00:00:00Z"

# Publication type.

# Legend: 0 = Uncategorized; 1 = Conference paper; 2 = Journal article;

# 3 = Preprint / Working Paper; 4 = Report; 5 = Book; 6 = Book section;

# 7 = Thesis; 8 = Patent

publication_types: ["1"]

# Publication name and optional abbreviated publication name.

publication: In *Mental Model of Robots Workshop at HRI*

publication_short: In *MM-HRI*

abstract: "Increasingly complex and autonomous robots are being deployed in real-world environments with far-reaching consequences. High-stakes scenarios, such as emergency response or offshore energy platform and nuclear inspections, require robot operators to have clear mental models of what the robots can and can't do. However, operators are often not the original designers of the robots and thus, they do not necessarily have such clear mental models, especially if they are novice users. This lack of mental model clarity can slow adoption and can negatively impact human-machine teaming. We propose that interaction with a conversational assistant, who acts as a mediator, can help the user with understanding the functionality of remote robots and increase transparency through natural language explanations, as well as facilitate the evaluation of operators' mental models."

# Summary. An optional shortened abstract.

summary: A position paper on mental models for HRI.

tags: []

# Display this page in the Featured widget?

featured: true

# Custom links (uncomment lines below)

# links:

# - name: Custom Link

# url: http://example.org

url_pdf: 'https://arxiv.org/pdf/2003.05870.pdf'

url_code: ''

url_dataset: ''

url_poster: ''

url_project: ''

url_slides: ''

url_source: ''

url_video: ''

# Featured image

# To use, add an image named `featured.jpg/png` to your page's folder.

#image:

# caption: "Image credit: [Mr Freeze's lab](https://www.flickr.com/photos/9842867@N04/8560981360)"

# focal_point: ""

# preview_only: false

# Associated Projects (optional).

# Associate this publication with one or more of your projects.

# Simply enter your project's folder or file name without extension.

# E.g. `internal-project` references `content/project/internal-project/index.md`.

# Otherwise, set `projects: []`.

#projects:

#- example

# Slides (optional).

# Associate this publication with Markdown slides.

# Simply enter your slide deck's filename without extension.

# E.g. `slides: "example"` references `content/slides/example/index.md`.

# Otherwise, set `slides: ""`.

#slides: example

---

{{% callout note %}}

Click the *Cite* button above to demo the feature to enable visitors to import publication metadata into their reference management software.

{{% /callout %}}

{{% callout note %}}

Create your slides in Markdown - click the *Slides* button to check out the example.

{{% /callout %}}

Supplementary notes can be added here, including [code, math, and images](https://wowchemy.com/docs/writing-markdown-latex/).

| 39.213483 | 885 | 0.751289 | eng_Latn | 0.980021 |

932e670e962932b9358111bbd25d41849359c8c6 | 24 | md | Markdown | _includes/04-lists.md | redlotus15/markdown-portfolio | d70b85e55e5b67d3365741dfa4e9d011a795e644 | [

"MIT"

] | null | null | null | _includes/04-lists.md | redlotus15/markdown-portfolio | d70b85e55e5b67d3365741dfa4e9d011a795e644 | [

"MIT"

] | 5 | 2019-09-09T21:44:07.000Z | 2019-09-10T19:12:46.000Z | _includes/04-lists.md | redlotus15/markdown-portfolio | d70b85e55e5b67d3365741dfa4e9d011a795e644 | [

"MIT"

] | null | null | null | - Pizza

- Burger

- Cake

| 6 | 8 | 0.625 | kor_Hang | 0.727405 |

932ef7fe492e13c111259a867117d10a7ab335e0 | 799 | md | Markdown | example/README.md | pavannntarget/pspdfkit-flutter | 3ad842d880a9e7e25131b785e43b3e35a0ce8d42 | [

"Apache-2.0"

] | null | null | null | example/README.md | pavannntarget/pspdfkit-flutter | 3ad842d880a9e7e25131b785e43b3e35a0ce8d42 | [

"Apache-2.0"

] | null | null | null | example/README.md | pavannntarget/pspdfkit-flutter | 3ad842d880a9e7e25131b785e43b3e35a0ce8d42 | [

"Apache-2.0"

] | 1 | 2021-01-15T17:46:38.000Z | 2021-01-15T17:46:38.000Z | # PSPDFKit Flutter Example

This is a brief example of how to use PSPDFKit with Flutter.

# Running the Example Project

1. Clone the repository `git clone https://github.com/PSPDFKit/pspdfkit-flutter.git`

2. Create a local property file in `pspdfkit-flutter/example/android/local.properties` and specify the following properties:

```local.properties

sdk.dir=/path/to/your/Android/sdk

flutter.sdk=/path/to/your/flutter/sdk

flutter.buildMode=debug

```

3. Run `cd pspdfkit-flutter/example`.

4. Run `flutter emulators --launch <EMULATOR_ID>` to launch the desired emulator. Optionally, you can repeat this step to launch multiple emulators.

5. The app is ready to start! Run `flutter run -d all` and the PSPDFKit Flutter example will be deployed on all your connected devices, both iOS and Android.

| 39.95 | 157 | 0.780976 | eng_Latn | 0.906668 |

edbfb87bbbfe83758cdf07cecdf7654893231eec | 3,277 | md | Markdown | _problems/hard/UPDTREE.md | captn3m0/codechef | 9b9a127365d1209893e94f8430b909433af6b5f9 | [

"WTFPL"

] | 14 | 2015-11-27T15:49:32.000Z | 2022-02-04T17:31:27.000Z | _problems/hard/UPDTREE.md | ashrafulislambd/codechef | b192550188e13d7edb211746103fddf049272027 | [

"WTFPL"

] | 40 | 2015-12-16T12:58:07.000Z | 2022-02-02T11:46:05.000Z | _problems/hard/UPDTREE.md | ashrafulislambd/codechef | b192550188e13d7edb211746103fddf049272027 | [

"WTFPL"

] | 18 | 2015-03-30T09:35:35.000Z | 2020-12-03T14:11:12.000Z | ---

category_name: hard

problem_code: UPDTREE

problem_name: 'Updating Edges on Trees'

languages_supported:

- ADA

- ASM

- BASH

- BF

- C

- 'C99 strict'

- CAML

- CLOJ

- CLPS

- 'CPP 4.3.2'

- 'CPP 4.9.2'

- CPP14

- CS2

- D

- ERL

- FORT

- FS

- GO

- HASK

- ICK

- ICON

- JAVA

- JS

- 'LISP clisp'

- 'LISP sbcl'

- LUA

- NEM

- NICE

- NODEJS

- 'PAS fpc'

- 'PAS gpc'

- PERL

- PERL6

- PHP

- PIKE

- PRLG

- PYTH

- 'PYTH 3.4'

- RUBY

- SCALA

- 'SCM guile'

- 'SCM qobi'

- ST

- TCL

- TEXT

- WSPC

max_timelimit: '3.5'

source_sizelimit: '50000'

problem_author: darkshadows

problem_tester: null

date_added: 15-09-2014

tags:

- cook50

- darkshadows

- dfs

- dynamic

- lca

- medium

editorial_url: 'http://discuss.codechef.com/problems/UPDTREE'

time:

view_start_date: 1411324200

submit_start_date: 1411324200

visible_start_date: 1411324200

end_date: 1735669800

current: 1493556895

layout: problem

---

### Read problem statements in [English](http://www.codechef.com/download/translated/COOK50/english/UPDTREE.pdf), [Mandarin Chinese](http://www.codechef.com/download/translated/COOK50/mandarin/UPDTREE.pdf) and [Russian](http://www.codechef.com/download/translated/COOK50/russian/UPDTREE.pdf) as well.

You have a [tree](http://en.wikipedia.org/wiki/Tree_(graph_theory)) consisting of **N** vertices numbered **1** to **N**.

Initially each edge has a value equal to zero. You have to first perform **M1** operations and then answer **M2** queries. Note you have to first perform all the operations and then answer all queries after all operations have been done.

Operations are defined by:

**A B C D**: On the path between nodes **A** and **B**, increase the value of each edge by **1**, except for those edges that also lie on the path between **C** and **D**. Note that there is a unique path between every pair of nodes, i.e. edge values are not considered when finding the path. All four values given in the input will be distinct.

Queries are of the following type:

**E F**: Print the sum of the values of all edges on the path between two distinct nodes **E** and **F**. Again, the path is unique.

### Input

Input description.

First line contains **N**, **M1** and **M2**. Each of the next **N-1** lines contain two integers **u v** denoting an undirected edge between node numbered **u** and **v**. Each of the next **M1** lines contain four integers **Ai Bi Ci Di**, denoting the operations. Each of the next **M2** lines contain two integers **Ei Fi** denoting the queries.

### Output

For each query, print the required answer in one line.

### Constraints

- **1** ≤ **N** ≤ **10<sup>5</sup>**

- **1** ≤ **M1, M2** ≤ **5\*10<sup>5</sup>**

- **1** ≤ **Ai, Bi, Ci, Di, Ei, Fi** ≤ **N**

### Example

<pre><b>Input:</b>

5 2 2

1 2

2 4

2 5

1 3

1 4 2 3

3 4 2 5

4 5

4 3

<b>Output:</b>

2

4

</pre>

### Explanation

On first operation, value of edge (2-4) is increased by one. On second operation, value of edges (1-3), (1-2), (2-4) are increased by one.
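
The statement and the sample can be checked with a brute-force sketch like the one below. It walks every path explicitly, so it is only a reference for small inputs and is far too slow for the stated limits; it is not the intended solution. The function name `solve`, its argument layout, and the choice of node 1 as the root are assumptions made for this example.

```javascript
// Brute-force reference: applies each operation and answers each query
// by walking the tree path edge by edge. Suitable for tiny inputs only.
function solve(n, edges, operations, queries) {
  // Build an adjacency list for nodes 1..n.
  const adj = Array.from({ length: n + 1 }, () => []);
  for (const [u, v] of edges) {
    adj[u].push(v);
    adj[v].push(u);
  }

  // Root the tree at node 1 and record parent and depth with an iterative DFS.
  const parent = new Array(n + 1).fill(0);
  const depth = new Array(n + 1).fill(0);
  const seen = new Array(n + 1).fill(false);
  const stack = [1];
  seen[1] = true;
  while (stack.length > 0) {
    const u = stack.pop();
    for (const v of adj[u]) {
      if (!seen[v]) {
        seen[v] = true;
        parent[v] = u;
        depth[v] = depth[u] + 1;
        stack.push(v);
      }
    }
  }

  // Returns the edges on the path a..b. Each edge is identified by its
  // child endpoint, i.e. the deeper of its two nodes.
  function pathEdges(a, b) {
    const result = [];
    while (a !== b) {
      if (depth[a] >= depth[b]) {
        result.push(a);
        a = parent[a];
      } else {
        result.push(b);
        b = parent[b];
      }
    }
    return result;
  }

  // value[x] is the value of the edge between x and parent[x].
  const value = new Array(n + 1).fill(0);
  for (const [a, b, c, d] of operations) {
    const excluded = new Set(pathEdges(c, d));
    for (const edge of pathEdges(a, b)) {
      if (!excluded.has(edge)) {
        value[edge] += 1;
      }
    }
  }

  return queries.map(([e, f]) =>
    pathEdges(e, f).reduce((sum, edge) => sum + value[edge], 0)
  );
}

// The sample from the statement.
const answers = solve(
  5,
  [[1, 2], [2, 4], [2, 5], [1, 3]],
  [[1, 4, 2, 3], [3, 4, 2, 5]],
  [[4, 5], [4, 3]]
);
console.log(answers.join('\n')); // prints 2 then 4, matching the sample output
```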

**Warning:** Use fast input/output. The input files are large.

| 25.80315 | 349 | 0.642356 | eng_Latn | 0.909907 |

edc07d19917a74affdcb875d6a05dcc93cff9dc4 | 1,184 | md | Markdown | _posts/4.CS_/CA/2021-12-21-Computer Architecture-lecture-1-6.md | candymask0712/candymask0712.github.io | 1e47e68639718a4f0ea423bcb4e5ab88b3c349a3 | [

"MIT"

] | null | null | null | _posts/4.CS_/CA/2021-12-21-Computer Architecture-lecture-1-6.md | candymask0712/candymask0712.github.io | 1e47e68639718a4f0ea423bcb4e5ab88b3c349a3 | [

"MIT"

] | 1 | 2021-11-14T09:17:23.000Z | 2021-11-14T09:17:23.000Z | _posts/4.CS_/CA/2021-12-21-Computer Architecture-lecture-1-6.md | candymask0712/candymask0712.github.io | 1e47e68639718a4f0ea423bcb4e5ab88b3c349a3 | [

"MIT"

] | null | null | null | ---

title: "[Computer Architecture] Computer Architecture - 6 - Boolean Algebra and Simplification of Logic Expressions"

excerpt:

toc: true

toc_sticky: true

toc_label: "Page contents"

categories:

- Computer Architecture

tags:

- Boolean Algebra

- Karnaugh map

last_modified_at:

---

### **1. Boolean Algebra**

- Expresses true/false logical propositions as mathematical expressions (G. Boole)

- Basic laws of Boolean algebra

- Commutative law

```JavaScript

A*B = B*A

A+B = B+A

```

- Associative law

```JavaScript

A*(B*C) = (A*B)*C

(A+B)+C = A+(B+C)

```

- Distributive law

```JavaScript

A*(B+C) = A*B + A*C

```

- De Morgan's laws

```JavaScript

(A + B)' = A' * B'

(A * B)' = A' + B'

```
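
De Morgan's laws say that the complement of a sum is the product of the complements, `(A + B)' = A' * B'`, and the complement of a product is the sum of the complements, `(A * B)' = A' + B'`. The short sketch below checks both identities against the full truth table. The function name `checkDeMorgan` is chosen for this example only.

```javascript
// Exhaustively check De Morgan's laws for every combination of A and B.
// In JavaScript, || plays the role of + (OR), && of * (AND), and ! of ' (NOT).
function checkDeMorgan() {
  const rows = [];
  for (const A of [false, true]) {
    for (const B of [false, true]) {
      rows.push({
        A,
        B,
        // (A + B)' = A' * B'
        complementOfSumHolds: !(A || B) === (!A && !B),
        // (A * B)' = A' + B'
        complementOfProductHolds: !(A && B) === (!A || !B),
      });
    }
  }
  return rows;
}

// Each of the four rows should report both identities as holding.
console.table(checkDeMorgan());
```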

[참고자료 - 드모르간의 법칙](https://ko.wikipedia.org/wiki/%EB%93%9C_%EB%AA%A8%EB%A5%B4%EA%B0%84%EC%9D%98_%EB%B2%95%EC%B9%99#:~:text=%EB%93%9C%20%EB%AA%A8%EB%A5%B4%EA%B0%84%EC%9D%98%20%EB%B2%95%EC%B9%99(%EC%98%81%EC%96%B4,%ED%95%9C%20%EA%B2%83%EC%9C%BC%EB%A1%9C%2C%20%EC%88%98%ED%95%99%EC%9E%90%20%EC%98%A4%EA%B1%B0%EC%8A%A4%ED%84%B0%EC%8A%A4)

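### **2. Karnaugh Map**

- A map that presents a logic expression in a simplified form

- How a Karnaugh map is drawn

- With n variables, the Karnaugh map has 2^n minterms (cells)

- Adjacent minterms must differ in exactly one variable

- Minterms corresponding to fundamental products whose output is 1 are marked 1; all others are marked 0

[Reference - Essential Computer Science Major Lectures (Fast Campus; no longer available)]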
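
The rule that adjacent cells differ in exactly one variable is why Karnaugh map rows and columns are usually labelled in Gray code order rather than plain binary order. A minimal sketch, assuming a helper named `grayCodeLabels` introduced only for this example:

```javascript
// Returns the 2^n labels for n variables in Gray code order, so that
// neighbouring labels (including last-to-first) differ in exactly one bit.
function grayCodeLabels(n) {
  const labels = [];
  for (let i = 0; i < 2 ** n; i++) {
    // Standard binary-to-Gray conversion: XOR the value with itself shifted right by one.
    const gray = i ^ (i >> 1);
    labels.push(gray.toString(2).padStart(n, '0'));
  }
  return labels;
}

console.log(grayCodeLabels(2)); // [ '00', '01', '11', '10' ]
```

For two variables this gives the familiar column order 00, 01, 11, 10 instead of 00, 01, 10, 11.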

| 19.733333 | 332 | 0.559966 | kor_Hang | 0.998815 |

edc0f8159715ea615d1184535296a2d55deedb22 | 1,523 | md | Markdown | docs/ProfessionalInputDTO.md | em-ad/kotlinsdk | be4c2e4a6222d467a51d9148555eed25264c7eb0 | [

"Condor-1.1"

] | null | null | null | docs/ProfessionalInputDTO.md | em-ad/kotlinsdk | be4c2e4a6222d467a51d9148555eed25264c7eb0 | [

"Condor-1.1"

] | null | null | null | docs/ProfessionalInputDTO.md | em-ad/kotlinsdk | be4c2e4a6222d467a51d9148555eed25264c7eb0 | [

"Condor-1.1"

] | null | null | null | # ProfessionalInputDTO

## Properties

Name | Type | Description | Notes

------------ | ------------- | ------------- | -------------

**aboutMe** | [**kotlin.String**](.md) | | [optional]

**address** | [**kotlin.String**](.md) | | [optional]

**capabilities** | [**kotlin.Array<Capability>**](Capability.md) | | [optional]

**city** | [**kotlin.String**](.md) | | [optional]

**dateOfIssue** | [**kotlin.String**](.md) | | [optional]

**degree** | [**inline**](#DegreeEnum) | | [optional]

**educations** | [**kotlin.Array<Education>**](Education.md) | | [optional]

**gender** | [**inline**](#GenderEnum) | | [optional]

**languages** | [**kotlin.Array<LanguageModel>**](LanguageModel.md) | | [optional]

**licenseCode** | [**kotlin.String**](.md) | | [optional]

**licenseFileId** | [**kotlin.String**](.md) | | [optional]

**personalInformation** | [**PersonalInformationReq**](PersonalInformationReq.md) | | [optional]

**profileImageId** | [**kotlin.String**](.md) | | [optional]

**province** | [**kotlin.String**](.md) | | [optional]

**researches** | [**kotlin.Array<Research>**](Research.md) | | [optional]

**resumes** | [**kotlin.Array<Resume>**](Resume.md) | | [optional]

**userId** | [**kotlin.String**](.md) | | [optional]

<a name="DegreeEnum"></a>

## Enum: degree

Name | Value

---- | -----

degree | ASSOCIATE, BACHELOR, DIPLOMA, DOCTORATE, MASTER, POSTDOCTORAL

<a name="GenderEnum"></a>

## Enum: gender

Name | Value

---- | -----

gender | FEMALE, MALE, NONE

| 43.514286 | 98 | 0.576494 | yue_Hant | 0.39899 |

edc1aa4a26429bcfac8dfb7eacf9e284ed7558c9 | 742 | md | Markdown | content/tentang.md | perkodi-org/perkodi | 41339bac76d1219c72be33543867c83002bf2b78 | [

"MIT"

] | 8 | 2017-10-24T04:19:44.000Z | 2020-03-07T00:35:29.000Z | content/tentang.md | perkodi-org/perkodi | 41339bac76d1219c72be33543867c83002bf2b78 | [

"MIT"

] | 10 | 2017-10-24T03:06:19.000Z | 2021-03-29T17:36:53.000Z | content/tentang.md | perkodi-org/perkodi | 41339bac76d1219c72be33543867c83002bf2b78 | [

"MIT"

] | 6 | 2017-10-24T04:19:00.000Z | 2018-11-19T07:11:52.000Z | ---

title: About PERKODI

---

# About PERKODI

PERKODI was born from the wish of a number of programming communities in Indonesia to hold an international-level conference on open source development languages in 2017.

PERKODI is a non-profit association with legal-entity status. Membership and management of PERKODI are voluntary, and the funding for its activities comes from sponsors, donors, and membership dues.

In its first year, PERKODI supported 2 international-scale events, and it will keep working to hold better events in the future to support the growth of programming culture in Indonesia, in line with PERKODI's vision.

| 61.833333 | 257 | 0.842318 | ind_Latn | 0.975986 |

edc1ac407ab36241c1c0d24220cc7ffeb37f268a | 10,112 | md | Markdown | PostnayaTriod1915/chapters/22sub4.md | slavonic/cu-md-sandbox | 34e07f78ee101d387e850123cf9469664ea9ed16 | [

"MIT"

] | 5 | 2018-06-14T15:04:18.000Z | 2021-04-13T11:38:32.000Z | PostnayaTriod1915/chapters/22sub4.md | slavonic/cu-md-sandbox | 34e07f78ee101d387e850123cf9469664ea9ed16 | [

"MIT"

] | 1 | 2018-06-11T16:37:54.000Z | 2018-07-13T01:18:29.000Z | PostnayaTriod1915/chapters/22sub4.md | slavonic/cu-md-sandbox | 34e07f78ee101d387e850123cf9469664ea9ed16 | [

"MIT"

] | 1 | 2021-04-13T11:38:36.000Z | 2021-04-13T11:38:36.000Z | =Въ сꙋббѡ́тꙋ д҃-ѧ седми́цы на ᲂу҆́трени,=

{{text_align=center}}

=А҆ллилꙋ́їа и҆ тропарѝ, гла́съ в҃:= ~А҆пⷭ҇ли, мч҃нцы: =Сла́ва:= ~Помѧнѝ, гдⷭ҇и: =И҆ ны́нѣ:= ~Мт҃и ст҃а́ѧ: =И҆ стїхосло́вїе.= =Та́же мч҃нчны сѣда́льны гла́са. По непоро́чныхъ же пое́мъ тропарѝ:= ~Ст҃ы́хъ ли́къ: =И҆ по є҆ктенїѝ сѣда́ленъ ме́ртвенъ, гла́съ є҃:= ~Поко́й, сп҃се на́шъ, съ првⷣными: =Сла́ва, коне́цъ: И҆ ны́нѣ, бг҃оро́диченъ:= ~Ѿ дв҃ы возсїѧ́вый: =Та́же канѡ́нъ мине́и, и҆ свѧта́гѡ ѡ҆би́тели, и҆ настоѧ́щыѧ четверопѣ́снцы по чи́нꙋ и҆́хъ. Четверопѣ́снецъ, є҆гѡ́же краестро́чїе: Пѣ́снь на мч҃нки. Творе́нїе господи́на і҆ѡ́сифа.=

=И҆ стїхосло́вѧтсѧ пѣ̑сни. Гла́съ д҃.=

{{text_align=center}}

=Пѣ́снь ѕ҃.=

{{text_align=center}}

=І҆рмо́съ:= ~Пꙋчи́ною жите́йскою:

~Преидо́сте предѣ́лы пло́ти мно́гимъ терпѣ́нїемъ, страда́льцы, поне́сше мꙋ̑ки и҆ бѡлѣ̑зни: тѣ́мже всѧ́кꙋю болѣ́знь и҆ ско́рбь ва́съ воспѣва́ющихъ ѡ҆блегча́ете.

~Тьма́мъ а҆́гг҃лъ совокꙋпи́сѧ во́инство ст҃ы́хъ стрⷭ҇тоте́рпєцъ, и҆ мо́литъ всест҃а́го бг҃а, ѿ согрѣше́нїй тьмочи́сленныхъ на́съ и҆зба́вити, ꙗ҆́кѡ хрⷭ҇тꙋ̀ ᲂу҆годи́вшїи.

=Оу҆=мерщвле́нъ бы́въ и҆ во гро́бѣ ᲂу҆снꙋ́въ, хрⷭ҇тѐ, возста́вилъ є҆сѝ мє́ртвыѧ, и҆ въ вѣ́рѣ ᲂу҆ме́ршымъ дае́ши бл҃гости бога́тство, хрⷭ҇тѐ, ᲂу҆покое́нїе со всѣ́ми ст҃ы́ми.

=Бг҃оро́диченъ:= ~Бж҃їе бг҃ъ сло́во, и҆скі́й ѡ҆божи́ти человѣ́ка чтⷭ҇аѧ, и҆з̾ тебє̀ воплоща́етсѧ и҆ человѣ́къ ꙗ҆влѧ́етсѧ: є҆го́же непреста́ннѡ молѝ, ѡ҆брѣстѝ на́мъ млⷭ҇ть во вре́мѧ ѿвѣ́та.

=И҆́ный, господи́на ѳео́дѡра, гла́съ то́йже.=

{{text_align=center}}

=І҆рмо́съ:= ~Бꙋ́рею грѣхо́вною:

~Пло́ти и҆ кро́ве не пощадѣ́вше, ст҃і́и, ко всѧ́комꙋ мꙋче́нїю ста́сте небоѧ́знени, хрⷭ҇та̀ не ѿмета́ющесѧ: тѣ́мже съ нб҃съ низпосла̀ хрⷭ҇то́съ ва́мъ вѣнцы̀.

~Мꙋ́чєникъ торжество̀ срѣта́имъ, просвѣти́вшесѧ дѣѧ́ньми, и҆ возопїи́мъ бг҃одꙋхнове́нными пѣ́сньми: вы̀ є҆стѐ вои́стиннꙋ денни̑цы на землѝ, хрⷭ҇тѡ́вы мч҃нцы.

=Трⷪ҇ченъ:= ~Трⷪ҇це ст҃а́ѧ, сла́влю тѧ̀, є҆стество̀ безнача́льное, є҆ди́наго бг҃а, є҆ди́наго гдⷭ҇а, трѝ лица̑, ѻ҆ц҃а̀, сн҃а и҆ дх҃а, нерожде́нна, рожде́нна, и҆сходѧ́ща, тꙋ́южде и҆ присносꙋ́щнꙋ.

=Бг҃оро́диченъ:= ~Ѽ бл҃же́ннаѧ бг҃оневѣ́сто, ка́кѡ родила̀ є҆сѝ без̾ мꙋ́жа, и҆ пребыла̀ є҆сѝ,ꙗ҆́коже пре́жде; бг҃а бо родила̀ є҆сѝ, чꙋ́до стра́шное. но молѝ сп҃са́тисѧ пою́щымъ тѧ̀.

=Сті́хъ:= ~Ди́венъ бг҃ъ во ст҃ы́хъ свои́хъ, бг҃ъ і҆и҃левъ.

=Мч҃нченъ: Оу҆=сѣчє́нїѧ ᲂу҆дѡ́въ ва́шихъ зрѧ́ще; наслажда́стесѧ кровьмѝ ра́дꙋющесѧ: но моли́те ѡ҆ на́съ прилѣ́жнѡ гдⷭ҇а, мч҃нцы присносла́внїи.

=Сті́хъ:= ~Дꙋ́шы и҆́хъ во бл҃ги́хъ водворѧ́тсѧ.

~Ѿ землѝ созда́вый мѧ̀, и҆ ѡ҆живи́вый мѧ̀, и҆ въ зе́млю возврати́тсѧ мнѣ̀ па́ки рекі́й, ꙗ҆̀же прїѧ́лъ є҆сѝ рабы̑ твоѧ̑ ᲂу҆поко́й, и҆ ѿ тлѣ́нїѧ сме́ртнагѡ возведѝ, гдⷭ҇и.

=І҆рмо́съ:= ~Бꙋ́рею грѣхо́вною погрꙋжа́емь, ꙗ҆́кѡ во чре́вѣ ки́товѣ содержи́мь, съ прⷪ҇ро́комъ зовꙋ́ ти: возведѝ ѿ тлѣ́нїѧ жи́знь мою, гдⷭ҇и, и҆ сп҃си́ мѧ.

=Конда́къ:= ~Со ст҃ы́ми ᲂу҆поко́й: =І҆́косъ:= ~Са́мъ є҆ди́нъ є҆сѝ безсме́ртенъ:

=Пѣ́снь з҃.=

{{text_align=center}}

=І҆рмо́съ:= ~Ѡ҆́бразꙋ злато́мꙋ:

~Ѡ҆блече́ни въ нетлѣ́нїе бж҃е́ственное ѡ҆бнаже́нїемъ тлѣ́нныѧ пло́ти, ны́нѣ свѣ́тли предстоитѐ на́съ ра́ди пло́ть ѿ нетлѣ́нныѧ жены̀ прїе́мшемꙋ, страда́льцы: сегѡ̀ ра́ди во ѡ҆де́ждꙋ сщ҃е́ннꙋю мѧ̀ ѡ҆блецы́те, ѕлѣ̀ ѡ҆бнаже́ннаго.

~Въ воздержа́нїи пожи́вый, стрⷭ҇тоте́рпєцъ собо́ръ ᲂу҆крѣплѧ́етъ на́съ воздержа́нїѧ по́прище тещѝ невозбра́ннѡ, ꙗ҆́кѡ проповѣ́давый хрⷭ҇та̀ на по́прищи мꙋ́жественнѡ, и҆ предстоѧ́й прⷭ҇то́лꙋ вѣнцено́сенъ наслажда́етсѧ мы́сленнѡ со а҆́гг҃лы.

~Мл҃твами твои́хъ ст҃ы́хъ мч҃нкъ, бж҃е, ᲂу҆со́пшыѧ вѣ̑рныѧ рабы̑ твоѧ̑ раѧ̀ жи́тєли покажѝ, и҆ свѣ́та мы́сленнагѡ сподо́би ѧ҆̀, непреста́ннѡ вопїю́щыѧ тебѣ̀: бл҃гослове́нъ бг҃ъ ѻ҆тє́цъ на́шихъ.

=Бг҃оро́диченъ:= ~Тѧ̀ є҆ди́нꙋ бл҃гꙋ́ю мо́лимъ, дв҃о, ѡ҆ѕло́блєнныѧ ны̀ бл҃ги ꙗ҆вѝ, и҆ хрⷭ҇та̀ є҆стество́мъ сꙋ́ща бл҃га́го прилѣ́жнѡ молѝ, воздержа́нїѧ вре́мѧ, бл҃га̑ѧ дѣ́ющымъ, и҆спо́лнити на́мъ пѣсносло́вѧщымъ є҆го̀: бл҃гослове́нъ бг҃ъ ѻ҆тє́цъ на́шихъ.

=И҆́ный.=

{{text_align=center}}

=І҆рмо́съ:= ~Глаго́лавый на горѣ̀:

~Возвели́чивый ст҃ы̑ѧ твоѧ̑ всѧ̑ и҆ зна́меньми ᲂу҆диви́вый ѧ҆̀ въ мі́рѣ, бл҃гослове́нъ є҆сѝ во вѣ́ки, гдⷭ҇и бж҃е ѻ҆тє́цъ на́шихъ.

~Всѧ́кїй ви́дъ ра́нъ проше́дше и҆ колѣ̑на ваа́лꙋ не поко́ршесѧ преклони́ти, вѣнцы̀ сла́вы взѧ́сте ѿ бг҃а, хрⷭ҇тѡ́вы мч҃нцы.

=Трⷪ҇ченъ:= ~Во є҆ди́ницѣ трⷪ҇це, покланѧ́емое сꙋщество̀, ѻ҆́ч҃е, сн҃е и҆ дш҃е, воспѣва́ющыѧ тѧ̀ сохранѧ́й, бж҃е ѻ҆тє́цъ на́шихъ.

=Бг҃оро́диченъ:= ~Дв҃о мт҃и ѻ҆трокови́це всесвѣ́тлаѧ, є҆ди́на къ бг҃ꙋ хода́таице, не преста́й, влⷣчце, моли́ти сп҃сти́сѧ на́мъ.

=Сті́хъ:= ~Ст҃ы̑мъ, и҆̀же сꙋ́ть на землѝ є҆гѡ̀:

~Безсме́ртномꙋ цр҃ю̀ во́инствовавше и҆ вѣ́рꙋ къ немꙋ̀ показа́вше соверше́ннꙋю, мч҃нцы, кро́вь свою̀ за него̀ и҆злїѧ́сте.

=Сті́хъ:= ~Бл҃же́ни, ꙗ҆̀же и҆збра́лъ и҆ прїѧ́лъ є҆сѝ, гдⷭ҇и.

~И҆дѣ́же жи́зни свѣ́тъ тво́й и҆стека́етъ, вселѝ вѣ̑рныѧ твоѧ̑ рабы̑, ꙗ҆̀же преста́вилъ є҆сѝ ѿ вре́менныхъ, гдⷭ҇и бж҃е ѻ҆тє́цъ на́шихъ.

=І҆рмо́съ:= ~Глаго́лавый на горѣ̀ съ мѡѷсе́емъ, и҆ ѡ҆́бразъ дв҃ы показа́вый кꙋпинꙋ̀, бл҃гослове́нъ є҆сѝ, гдⷭ҇и бж҃е ѻ҆тє́цъ на́шихъ.

=Пѣ́снь и҃.=

{{text_align=center}}

=І҆рмо́съ:= ~Во ѻ҆гнѝ пла́меннѣмъ:

~Великоимени́тїи хрⷭ҇тѡ́вы страда́льцы и҆ чтⷭ҇ні́и въ бз҃ѣ, всѧ̑ воспѣва́ющыѧ па̑мѧти ва́шѧ ѿ вели́кихъ согрѣше́нїй и҆ та́мошнихъ мꙋ́къ и҆зба́вите ва́шими вели́кими къ немꙋ̀ мл҃твами.

~Ст҃оизбра́нное и҆ тве́рдое вои́стиннꙋ во́инство хрⷭ҇та̀ бг҃а, мч҃нкъ собо́ри, ѡ҆ст҃и́те на́шъ ᲂу҆́мъ и҆ се́рдце въ сїѧ̑ ст҃ы̑ѧ дни̑ пѡ́стныѧ ва́шими ст҃ы́ми мл҃твами.

~И҆зба́ви, гдⷭ҇и ѿ че́рвїѧ мꙋ́чащагѡ и҆ скре́жета зꙋ́бнагѡ, и҆ кромѣ́шнїѧ тьмы̀ несвѣти́мыѧ, всѧ̑ ꙗ҆̀же вѣ́рою прїѧ́лъ є҆сѝ: и҆ ᲂу҆чинѝ сїѧ̑, и҆дѣ́же свѣ́тъ лица̀ твоегѡ̀, хрⷭ҇тѐ, сїѧ́етъ во вѣ́ки.

=Бг҃оро́диченъ:= ~Крⷭ҇тъ хрⷭ҇то́въ чтⷭ҇ны́й ви́дѣвшымъ и҆ ѿ се́рдца поклони́вшымсѧ сподо́би, бцⷣе чтⷭ҇аѧ, твои́ми ко влⷣцѣ мл҃твами, ви́дѣти на́мъ и҆ стрⷭ҇ти честны̑ѧ, ѿ страсте́й ѡ҆чищє́ннымъ.

=И҆́ный.=

{{text_align=center}}

=І҆рмо́съ:= ~Землѧ̀ и҆ всѧ̑ ꙗ҆̀же на не́й:

~Ѽ до́брыѧ мѣ́ны! є҆́юже ѡ҆брѣто́сте сме́ртїю жи́знъ, сщ҃енномч҃нцы хрⷭ҇тѡ́вы, ѻ҆гнѧ̀ и҆ меча̀, мра́за и҆ ѕвѣре́й ника́коже ᲂу҆боѧ́вшесѧ и҆ вопїю́ще: по́йте гдⷭ҇а и҆ превозноси́те во всѧ̑ вѣ́ки.

~Горѣ̀ ли́къ а҆́гг҃льскїй, до́лꙋ же мы̀ земні́и хва́лимъ, мч҃нцы хрⷭ҇тѡ́вы, ди̑внаѧ страда̑нїѧ и҆ по́двиги ва́шегѡ мꙋ́жества, бл҃гословѧ́ще, пою́ще гдⷭ҇а и҆ превозносѧ́ще во всѧ̑ вѣ́ки.

=Бл҃гослови́мъ ѻ҆ц҃а̀, и҆ сн҃а, и҆ ст҃а́го дх҃а, гдⷭ҇а.=

~Свѣ́тъ, жи́знъ и҆ жи̑зни почита́ю тѧ̀, ѻ҆ц҃а̀ и҆ сн҃а, и҆ дх҃а и҆схо́дна, є҆стество̀ є҆ди́но, трѝ ѵ҆поста̑си, бг҃а є҆ди́наго поѧ̀: бл҃гословлѧю́ тѧ̀, пою́ тѧ гдⷭ҇а, и҆ превозношꙋ́ тѧ во всѧ̑ вѣ́ки.

=Бг҃оро́диченъ:= ~Кто̀ ѿ земноро́дныхъ не воспое́тъ тѧ̀, нескве́рнаѧ чтⷭ҇аѧ голꙋби́це; ты́ бо родила̀ є҆сѝ на́мъ свѣ́тъ вели́кїй, жи́зни бога́тство, і҆и҃са сп҃са: є҆го́же пое́мъ хва́лѧще ꙗ҆́кѡ гдⷭ҇а, и҆ превозно́симъ во всѧ̑ вѣ́ки.

=Сті́хъ:= ~Ди́венъ бг҃ъ во ст҃ы́хъ свои́хъ, бг҃ъ і҆и҃левъ.

~По́двиги чꙋ̑дныѧ ва́шѧ сла́вѧще, мч҃нцы, бл҃годѣ́телѧ и҆ бг҃а бл҃гослови́мъ и҆ покланѧ́емсѧ, на по́прище подвигѡ́въ ва́съ ᲂу҆крѣпи́вшаго: є҆го́же превозно́симъ во всѧ̑ вѣ́ки.

=Сті́хъ:= ~Дꙋ́шы и҆́хъ во бл҃ги́хъ водворѧ́тсѧ.

~Ты̀, сме́рти и҆ жи́зни гдⷭ҇ь сы́й и҆ бг҃ъ, преста́вльшыѧсѧ бл҃гоче́стнѡ воздвиза́ѧй, положѝ та́мѡ въ селе́нїихъ првⷣныхъ, бл҃гословѧ́щыѧ, пою́щыѧ тѧ̀, гдⷭ҇и, и҆ превозносѧ́щыѧ во всѧ̑ вѣ́ки.

=Хва́лимъ, бл҃гослови́мъ, покланѧ́емсѧ гдⷭ҇еви:=

=І҆рмо́съ:= ~Землѧ̀ и҆ всѧ̑ ꙗ҆̀же на не́й, морѧ̀ и҆ всѝ и҆сто́чницы, небеса̀ небе́съ, свѣ́тъ и҆ тьма̀, мра́зъ и҆ зно́й, сы́нове человѣ́честїи, сщ҃е́нницы, бл҃гослови́те гдⷭ҇а и҆ превозноси́те во всѧ̑ вѣ́ки.

=Пѣ́снь ѳ҃.=

{{text_align=center}}

=І҆рмо́съ:= ~Ꙗ҆́кѡ сотворѝ мнѣ̀:

~Свѣти́льницы сꙋ́ще неле́стнїи, хрⷭ҇тѡ́вы страда́льцы, просвѣти́те на́шѧ по́мыслы ᲂу҆тверди́те твори́ти свѣтоза̑рнаѧ и҆ чтⷭ҇аѧ бж҃їѧ хотѣ̑нїѧ.

~Ѡ҆рꙋ̑жїѧ закала̑ющаѧ врагѝ зрите́сѧ, до́блїи хрⷭ҇тѡ́вы страда́льцы: но и҆зба́вите на́съ ѿ стрѣ́лъ лꙋка́вагѡ предста́тельствы ва́шими.

~Поко́й, ще́дре, въ нѣ́дрѣхъ а҆враа́мовыхъ рабы̑ твоѧ̑, вѣ́рою ѿ на́съ ѿше́дшыѧ къ тебѣ̀, всѧ́ческихъ зижди́телю и҆ пребл҃го́мꙋ.

=Бг҃оро́диченъ:= ~Пло́ти моеѧ̀ ᲂу҆мертвѝ движє́нїѧ, бг҃а пло́тїю ро́ждшаѧ па́че ᲂу҆ма̀, и҆ пода́ждь просвѣще́нїе мы́сли мое́й, чтⷭ҇аѧ, сꙋ́щи ѡ҆́блакъ свѣ́та.

=И҆́ный.=

{{text_align=center}}

=І҆рмо́съ:= ~Велича́емъ всѝ:

~Воспѣва́емъ всѝ па̑мѧти ва́шѧ, мч҃нцы прехва́льнїи, ви́дѧще по́двиги сщ҃е́нныхъ ва́шихъ страда́нїй, и҆ ᲂу҆диви́вшесѧ хрⷭ҇та̀ велича́емъ.

~Стра́ждꙋще дрꙋ́гъ ко дрꙋ́гꙋ глаго́лютъ стрⷭ҇тоте́рпцы: пло́ти не пощади́мъ: прїиди́те, ᲂу҆́мремъ за хрⷭ҇та, да во всѧ̑ вѣ́ки поживе́мъ ликꙋ́юще безконе́чнѡ.

=Трⷪ҇ченъ:= ~Ѽ трⷪ҇це во є҆ди́номъ є҆стествѣ̀, ѻ҆́ч҃е нерожде́нный, и҆ рожде́нный сн҃е, и҆ дш҃е и҆сходѧ́й, тѧ̀ воспѣва̑ющыѧ твое́ю млⷭ҇тїю невреди̑мы соблюдѝ.

=Бг҃оро́диченъ:= ~Ра́дꙋйсѧ, всечⷭ҇тна́ѧ, чтⷭ҇аѧ: дѣ́вства похвало̀, ма́терей ᲂу҆твержде́нїе,человѣ́кѡмъ по́мощь и҆ мі́ра ра́дость, мр҃і́е, мт҃и и҆ рабо̀ бг҃а на́шегѡ.

=Сті́хъ:= ~Ди́венъ бг҃ъ во ст҃ы́хъ свои́хъ, бг҃ъ і҆и҃левъ.

~Ли́че ст҃ы́хъ, прїимѝ мольбꙋ̀ мою̀: и҆ ꙗ҆́коже сподо́бихсѧ крⷭ҇тъ ѡ҆блобыза́ти, ᲂу҆ хрⷭ҇та̀ и҆спроси́те и҆ сп҃си́тельнѣй стрⷭ҇ти мнѣ̀ поклони́тисѧ.

=Сті́хъ:= ~Бл҃же́ни, ꙗ҆̀же и҆збра́лъ и҆ прїѧ́лъ є҆сѝ, гдⷭ҇и.

~Ѡ҆сла́би, ѡ҆ста́ви, ще́дре, къ тебѣ̀ чл҃вѣколю́бцꙋ преста́вльшымсѧ, и҆ сїѧ̑ ᲂу҆поко́й въ селе́нїихъ и҆збра́нныхъ: ты́ бо є҆сѝ жи́знь и҆ воскрⷭ҇нїе.

=І҆рмо́съ:= ~Велича́емъ всѝ чл҃вѣколю́бїе твоѐ, хрⷭ҇тѐ сп҃се на́шъ, сла́во ра̑бъ твои́хъ и҆ вѣ́нче вѣ́рныхъ, возвели́чивый па́мѧть ро́ждшїѧ тѧ̀.

=Є҆ѯапостїла́рїи, пи́саны въ сꙋббѡ́тꙋ в҃-ю поста̀. На хвали́техъ мч҃нчны гла́са. На стїхо́внѣ же подо́бны господи́на ѳеофа́на.=

=На +лїтꙋргі́и+: И҆з̾ѡбрази́тєльнаѧ и҆ бл҃жє́нны гла́са. Прокі́менъ а҆пⷭ҇ла ме́ртвенъ. А҆пⷭ҇лъ, зача́ло тг҃і. И҆ за ᲂу҆поко́й, къ корі́нѳѧнѡмъ, зача́ло рѯ҃г. А҆ллилꙋ́їа, ме́ртвенъ. Є҆ѵⷢ҇лїе ѿ ма́рка, зача́ло л҃а. И҆ за ᲂу҆поко́й, ѿ і҆ѡа́нна, зача́ло ѕ҃і. Прича́стенъ:= ~Ра́дꙋйтесѧ, првⷣнїи: =Дрꙋгі́й, за ᲂу҆поко́й:= ~Бл҃же́ни, ꙗ҆̀же и҆збра́лъ:

| 60.550898 | 548 | 0.662282 | ukr_Cyrl | 0.180464 |

edc1eb5251fb0d2d9d21d1f926da351c1bfc3348 | 1,577 | md | Markdown | README.md | catchr/tiktok-marketing-api | c5477baf2c976c130bc4f131ed59a2fe14df7296 | [

"Unlicense"

] | null | null | null | README.md | catchr/tiktok-marketing-api | c5477baf2c976c130bc4f131ed59a2fe14df7296 | [

"Unlicense"

] | null | null | null | README.md | catchr/tiktok-marketing-api | c5477baf2c976c130bc4f131ed59a2fe14df7296 | [

"Unlicense"

] | null | null | null | # TikTok Marketing API PHP client library

Convenient full-featured wrapper for [TikTok Marketing API](https://ads.tiktok.com/marketing_api/docs).

🚫 ️️Work In Progress. Not ready for use.


### Installation

```bash

$ composer require promopult/tiktok-marketing-api

```

or add it to the `require` section of your `composer.json`:

```

"require": {

// ...

"promopult/tiktok-marketing-api": "*"

// ...

}

```

### Usage

See [examples](/examples) folder.

```php

$credentials = \Promopult\TikTokMarketingApi\Credentials::build(

getenv('__ACCESS_TOKEN__'),

getenv('__APP_ID__'),

getenv('__SECRET__')

);

// Any PSR-18 HTTP-client

$httpClient = new \GuzzleHttp\Client();

$client = new \Promopult\TikTokMarketingApi\Client(

$credentials,

$httpClient

);

$response = $client->user->info();

print_r($response);

//Array

//(

// [message] => OK

// [code] => 0

// [data] => Array

// (

// [create_time] => 1614175583

// [display_name] => my_user

// [id] => xxx

// [email] => xxx

// )

//

// [request_id] => xxx

//)

```

| 25.031746 | 200 | 0.65948 | kor_Hang | 0.153066 |

edc209593e57a949095847f53a3c47b277381c9c | 506 | md | Markdown | README.md | bguyl/docker-stacks | 6800e07a495ff3c89178b8427102dd3bc7dc5826 | [

"MIT"

] | 1 | 2018-05-17T16:31:10.000Z | 2018-05-17T16:31:10.000Z | README.md | bguyl/docker-stacks | 6800e07a495ff3c89178b8427102dd3bc7dc5826 | [

"MIT"

] | 3 | 2018-06-28T11:05:09.000Z | 2018-06-28T11:18:24.000Z | README.md | bguyl/docker-stacks | 6800e07a495ff3c89178b8427102dd3bc7dc5826 | [

"MIT"

] | null | null | null | # docker-stacks

> Some useful stack files for Docker

### How to use it?

`latest` directories contain stacks whose images have no specific tags. Don't use these in production.

`stateless` directories contain stacks without volumes. Use them to run and try stacks quickly.

`stable` directories contain stacks pinned to the last tested version.

### Stacks

* [DAMP](damp/DESCRIPTION.md): Apache-MySQL-PHP stack

* [Wordpress](wordpress/DESCRIPTION.md)

* [Java-MySQL](java-mysql/DESCRIPTION.md)

* [Peertube](peertube/DESCRIPTION.md) | 31.625 | 106 | 0.752964 | eng_Latn | 0.766348 |

edc280d5d930aa946b757f5e9474f1fb2f05ff7d | 2,171 | md | Markdown | README.md | wagtaillabs/GRANT | 442fa8353e198a1a1fbac83b645be1915382fc85 | [

"Apache-1.1"

] | 19 | 2020-11-17T13:26:56.000Z | 2022-02-01T08:47:57.000Z | README.md | wagtaillabs/GRANT | 442fa8353e198a1a1fbac83b645be1915382fc85 | [

"Apache-1.1"

] | null | null | null | README.md | wagtaillabs/GRANT | 442fa8353e198a1a1fbac83b645be1915382fc85 | [

"Apache-1.1"

] | null | null | null | # GRANT

Graft, Reassemble, Answer delta, Neighbour sensitivity, Training delta (GRANT)

GRANT has been created by Wagtail Labs to remove the guesswork in using tree based models, assisting the creation of faster, more accurate models with satisfying explanations. It provides a deep understanding of the model's internal behaviour, shows prediction sensitivities, and helps data scientists improve inaccurate predictions.

For a detailed introduction to the theory of GRANT visit [wagtaillabs.com](https://wagtaillabs.com).

For a detailed introduction to the implementation of GRANT see the GRANT_walkthrough.ipynb notebook in this repo.

## Installation

Clone the WagtailLabs/GRANT/grant.py file.

## Usage

For a detailed walkthrough of the usage see the GRANT.ipynb notebook in this repo.

```python

from WagtailLabs.grant import grant

grant_rf = grant(rf, train_features, train_labels)

grant_rf.graft()

grafted_df = grant_rf.get_graft() #Returns the Graft (dataset containing all decision boundaries) of the Tree Ensemble

grafted_dt = grant_rf.reassemble() #Returns a decision tree that produces the exact same results as the Tree Ensemble in less time

grant_rf.amalgamate(threshold)

amalgamated_df = grant_rf.get_amalgamated() #Returns a pruned (simplified) copy of the Graft where contiguous decision boundaries with a prediction difference less than the supplied threshold are merged

sensitivities = grant_rf.neighbour_sensitivity(val_feature) #Returns the change in explanatory data required to reach each neighbouring decision boundary

trainer_delta = grant_rf.training_delta_trainer(trainer_feature, trainer_label) #Returns the incremental change in result from a given training record to all predictions

grant_rf.training_delta_trainee(trainee_feature, trainee_label) #Returns the incremental change in result from each training record that contributed to any given prediction

```

## Contributing

Pull requests are welcome. For major changes, please open an issue first to discuss what you would like to change.

Please make sure to update tests as appropriate.

## License

[Apache License 2.0](https://choosealicense.com/licenses/apache-2.0/)

| 48.244444 | 333 | 0.817135 | eng_Latn | 0.992934 |

edc2ac0328356b073daeb049639098c7caf78fba | 4,066 | md | Markdown | _posts/2021-07-15-3-alternatives-to-saying-i-dont-know-in-interviews.md | recursivefaults/recursivefaults.github.io | 87f92eeacf2d134f130019a432dc4130660e776f | [

"MIT"

] | null | null | null | _posts/2021-07-15-3-alternatives-to-saying-i-dont-know-in-interviews.md | recursivefaults/recursivefaults.github.io | 87f92eeacf2d134f130019a432dc4130660e776f | [

"MIT"

] | 2 | 2020-06-02T16:44:46.000Z | 2021-09-03T17:11:16.000Z | _posts/2021-07-15-3-alternatives-to-saying-i-dont-know-in-interviews.md | recursivefaults/recursivefaults.github.io | 87f92eeacf2d134f130019a432dc4130660e776f | [

"MIT"

] | null | null | null | ---

title: 3 Alternatives to Saying, “I Don’t Know” In Interviews

tags: [interview, question, technique]

categories: [career]

excerpt: Eventually you won’t know how to answer a question in an interview. In this article I walk through three alternatives to saying “I don’t know” that give much better results.

cover_image: https://source.unsplash.com/fg8tdcxrkrA/900x500

---

You’ll inevitably hear some question that you don’t know the answer to during an interview. How you handle that moment can either leave the interviewer nodding in approval or unsure if you’re ready.

Before I jump into how to handle this, I want to point out why this is a certainty:

The tech field is just too big to have all the answers in our heads.

Most interviews favor obscure, ridiculous, and trick questions that are all geared to mess you up.

There is a specific interviewing technique that I call “The probing question,” which specifically tries to get someone to the boundary of their knowledge.

In other words, you’ll get asked a question, and you will have no idea what the answer is.

So here’s what you can do about it:

# Technique 1: Focus on What You Can Do

When you hear that question and your brain immediately turns to jelly because it draws a total blank, you want to shift gears quickly.

Instead of focusing on the answer and how you don’t have it, focus instead on the process you’d follow to arrive at the solution.

In other words, tell them what you can do to get the answer.

A pretty easy way to launch into that is to say, “Oh, that’s interesting, I haven’t come across that before, but here’s what I’d do...”

By doing this, you’re keeping a conversation going and demonstrating your ability to solve problems, keep composure, and how you work.

# Technique 2: Tell A Story

Somewhat related to the first technique, you can instead tell a story about a time you solved a puzzle you didn’t have the answer to either.

Now, this one takes a little bit of extra work because you’ll have to relate your story back to the question. Thankfully this isn’t too hard to do. If you are getting asked about some obscure fact, tell a story where you had to learn an obscure fact. If it was a tricky coding problem, tell a story about solving a problem that felt obscure.

By telling a story, you’re using your past to show you’ve been through similar tricky situations in a deeply relatable way. Storytelling is a potent technique that builds relationships and rounds out rough spots in an interview.

# Technique 3: Reversal

This last technique is probably the toughest to pull off but can pay off pretty big. The idea is to reverse the question back on the interviewer. It might go something like this:

“Oh, that’s interesting. Have you bumped into something like that here?”

If you can get the interviewer to share their story, they will build a relationship with you. You can ask more questions and talk like two peers.

There is a risk with this technique since many questions people ask are genuinely unrelated to anything, so you can’t reverse it for them to tell a story. What this will do in this case is highlight that they just asked you an unrelated question. At this point, your best bet is likely to leverage technique #2 and tell a story about something you can relate to.

I’ll give an example from my past to show how this might play out.

Them: How would you solve this... (Some problem related to relational databases)

Me: Wow, do you all bump into this problem a lot?

Them: Yeah, almost every project. It’s a pain to deal with, and we need to know our developers can do it.

Me: Well, I’d use a NoSQL solution for this case, I think.

Them: Uh, we’ve never used NoSQL before. How would that work?

Me: No problem, let me walk you through how I’d do this.

I reversed the question, then moved back to technique #1 and focused on what I could do. I had no idea how to answer the question they asked, so I created a scenario that I could attempt.

There you have it, three ways to handle the moment when you don’t know the answer in an interview. | 67.766667 | 362 | 0.775947 | eng_Latn | 0.999896 |

edc3b80d1a06d84fa50bae6d5ef620f203c76de4 | 4,276 | md | Markdown | dynamicsax2012-technet/add-or-update-identification-information.md | MicrosoftDocs/DynamicsAX2012-technet | 4e3ffe40810e1b46742cdb19d1e90cf2c94a3662 | [

"CC-BY-4.0",

"MIT"

] | 9 | 2019-01-16T13:55:51.000Z | 2021-11-04T20:39:31.000Z | dynamicsax2012-technet/add-or-update-identification-information.md | MicrosoftDocs/DynamicsAX2012-technet | 4e3ffe40810e1b46742cdb19d1e90cf2c94a3662 | [

"CC-BY-4.0",

"MIT"

] | 265 | 2018-08-07T18:36:16.000Z | 2021-11-10T07:15:20.000Z | dynamicsax2012-technet/add-or-update-identification-information.md | MicrosoftDocs/DynamicsAX2012-technet | 4e3ffe40810e1b46742cdb19d1e90cf2c94a3662 | [

"CC-BY-4.0",

"MIT"

] | 32 | 2018-08-09T22:29:36.000Z | 2021-08-05T06:58:53.000Z | ---

title: Add or update identification information

TOCTitle: Add or update identification information

ms:assetid: 71bd6f16-e2b1-4011-88c8-a4f931124a12

ms:mtpsurl: https://technet.microsoft.com/library/Hh271561(v=AX.60)

ms:contentKeyID: 36384192

author: Khairunj

ms.author: daxcpft

ms.date: 04/18/2014

mtps_version: v=AX.60

f1_keywords:

- HcmEPPersonIdentificationNumberCreate

- HcmEPPersonIdentificationNumberEdit

- HcmEPPersonIdentificationNumberList

audience: Application User

ms.search.region: Global

---

# Add or update identification information

[!INCLUDE[archive-banner](includes/archive-banner.md)]

_**Applies To:** Microsoft Dynamics AX 2012 R3, Microsoft Dynamics AX 2012 R2, Microsoft Dynamics AX 2012 Feature Pack, Microsoft Dynamics AX 2012_

Use the **Identification** list page to maintain information about your identity or the identity of your personal contacts. You can use information from forms of identity such as a driver’s license, visa, passport, or another government issued identity card.

> [!NOTE]

> Depending on your role or the privileges that are assigned to you, you might need to go to your Employee services site before you complete the procedures in this topic.

## View your identification information

Click **Personal information** on the top link bar, and then click **Identification** on the Quick Launch to display the **Identification** list page, where you can view the forms of identification that you have on record with your company or organization.

## Add identification information

1. Click **Personal information** on the top link bar.

2. To add identification information for yourself, click **Identification** on the Quick Launch.

To add identification information for a personal contact, on the **Personal contacts** FastTab, select the personal contact to add identification information for, and then click **Edit**.

3. Click **Identification** to display the **New identification** page.

4. Select the type of identification. For example, if you are adding identification information from your driver’s license, select **Driver’s license**.

5. Enter the number that is associated with the identification type that you selected in step 4. For example, if you selected **Driver’s license** in step 4, enter your driver’s license number.

6. Enter additional information about the identification information in either the **Description** field or the **Entry type** field.

7. Select the **Primary** check box to indicate that the identification information is your primary form of identification.

8. Select the agency that issued the form of identification that you selected in step 4. For example, if you selected **Driver’s license** and your driver’s license was issued to you by a government entity, select the name of the government entity.

9. Enter the date that the form of identification that you selected in step 4 was issued.

10. Enter the expiration date of the form of identification that you selected in step 4.

11. Click **Save and close**.

## Modify identification information

1. Click **Personal information** on the top link bar.

2. To modify identification information for yourself, click **Identification** on the Quick Launch. Go to step 4.

To modify identification information for a personal contact, on the **Personal contacts** FastTab, select the personal contact to modify identification information for and then click **Edit**.

3. Click **Identification**.

4. Select the identification information to modify and then click **Edit**.

5. Modify the necessary information and then click **Save and close**.

## Delete identification information

1. Click **Personal information** on the top link bar.

2. To delete identification information for yourself, click **Identification** on the Quick Launch. Go to step 4.

To delete identification information for a personal contact, on the **Personal contacts** FastTab, select the personal contact to delete identification information for and then click **Edit**.

3. Click **Identification**.

4. Select the identification information to delete and then click **Remove**.

## See also

[Add and maintain your personal contacts](add-and-maintain-your-personal-contacts.md)

| 43.632653 | 258 | 0.77479 | eng_Latn | 0.984681 |

edc44a024e7571ac3575a1bd2b2b501e831a6114 | 110 | md | Markdown | README.md | jMac029/Bootstrap-Portfolio | 1a635b64845b79706bf3f5f10bdcea219ea2d474 | [

"MIT"

] | 2 | 2017-10-31T07:33:10.000Z | 2018-02-12T19:17:00.000Z | README.md | jMac029/Bootstrap-Portfolio | 1a635b64845b79706bf3f5f10bdcea219ea2d474 | [

"MIT"

] | null | null | null | README.md | jMac029/Bootstrap-Portfolio | 1a635b64845b79706bf3f5f10bdcea219ea2d474 | [

"MIT"

] | null | null | null | # Bootstrap-Portfolio

2nd Homework Assignment for UCI Coding Bootcamp - Make a Portfolio site using BootStrap

| 36.666667 | 87 | 0.827273 | eng_Latn | 0.54022 |

edc4ff692f5130d2b90d9457c9d807c62dd462b5 | 765 | md | Markdown | F1/windowactivate-event-project-vbapj-chm131140.md | CeptiveYT/VBA-Docs | 1d9c58a40ee6f2d85f96de0a825de201f950fc2a | [

"CC-BY-4.0",

"MIT"

] | 283 | 2018-07-06T07:44:11.000Z | 2022-03-31T14:09:36.000Z | F1/windowactivate-event-project-vbapj-chm131140.md | CeptiveYT/VBA-Docs | 1d9c58a40ee6f2d85f96de0a825de201f950fc2a | [

"CC-BY-4.0",

"MIT"

] | 1,457 | 2018-05-11T17:48:58.000Z | 2022-03-25T22:03:38.000Z | F1/windowactivate-event-project-vbapj-chm131140.md | CeptiveYT/VBA-Docs | 1d9c58a40ee6f2d85f96de0a825de201f950fc2a | [

"CC-BY-4.0",

"MIT"

] | 469 | 2018-06-14T12:50:12.000Z | 2022-03-27T08:17:02.000Z | ---

title: WindowActivate Event, Project [vbapj.chm131140]

keywords: vbapj.chm131140

f1_keywords:

- vbapj.chm131140

ms.prod: office

ms.assetid: a9fb0eaf-faec-4680-ab20-702a0f6856a7

ms.date: 06/08/2017

ms.localizationpriority: medium

---

# WindowActivate Event, Project [vbapj.chm131140]

Hi there! You have landed on one of our F1 Help redirector pages. Please select the topic you were looking for below.

[Application.WindowActivate Event (Project)](https://msdn.microsoft.com/library/b54d0956-7eab-db5f-394a-5120bc111afd%28Office.15%29.aspx)

[Application.WindowSidepaneTaskChange Event (Project)](https://msdn.microsoft.com/library/674a8134-1e34-2658-6c67-5eb92c628ed8%28Office.15%29.aspx)

[!include[Support and feedback](~/includes/feedback-boilerplate.md)] | 36.428571 | 147 | 0.797386 | eng_Latn | 0.317915 |

edc6cf775328051c19cc34624e01ac20bd7bc7a9 | 483 | md | Markdown | README.md | jpbonson/APIAuthenticationCourse | b9cf6239a5ece90044f6b274f74b76836334d073 | [

"BSD-2-Clause"

] | 3 | 2018-01-23T22:21:38.000Z | 2018-01-25T15:19:47.000Z | README.md | jpbonson/Course_APIAuthentication | b9cf6239a5ece90044f6b274f74b76836334d073 | [

"BSD-2-Clause"

] | null | null | null | README.md | jpbonson/Course_APIAuthentication | b9cf6239a5ece90044f6b274f74b76836334d073 | [

"BSD-2-Clause"

] | null | null | null | # API Authentication Course

API with examples of multiple authentication strategies (Python).

- Basic

- Simple Token

- OAuth 2.0 with JWT
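As a rough illustration of the first strategy in the list, the sketch below builds and parses an HTTP Basic `Authorization` header using only the standard library. It is not taken from the course code: the function names `basic_auth_header` and `parse_basic_auth` are hypothetical, and a real API would compare the parsed credentials against stored ones over HTTPS.

```python
import base64


def basic_auth_header(username: str, password: str) -> str:
    """Build the value of an Authorization header for HTTP Basic auth."""
    raw = f"{username}:{password}".encode("utf-8")
    return "Basic " + base64.b64encode(raw).decode("ascii")


def parse_basic_auth(header: str) -> tuple[str, str]:
    """Split a Basic Authorization header back into (username, password)."""
    scheme, _, token = header.partition(" ")
    if scheme.lower() != "basic":
        raise ValueError("not a Basic authorization header")
    decoded = base64.b64decode(token).decode("utf-8")
    # Only the first colon separates the fields; the password may contain colons.
    username, _, password = decoded.partition(":")
    return username, password


header = basic_auth_header("alice", "s3cret:with:colons")
print(header)
print(parse_basic_auth(header))
```

Base64 is an encoding, not encryption, so anyone who sees the header can recover the password. That is one reason the token-based strategies in the list exist.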

Python. Flask.

Slides at https://docs.google.com/presentation/d/1zWgwnl7QCW8svIQOgq28rlYA13sK3P7N82SH-Wceel0/edit?usp=sharing (Portuguese only)

### How to install? ###

```

pipenv install

```

### How to run? ###

```

gunicorn main:app

```

### Application ###

https://course-api-auth.herokuapp.com/

The routes are described in the slides.

| 17.25 | 128 | 0.724638 | eng_Latn | 0.748658 |

edc77a6416ffe83bcee47c2d50a335ff18311dcf | 4,175 | md | Markdown | articles/databox/data-box-disk-system-requirements.md | changeworld/azure-docs.nl-nl | bdaa9c94e3a164b14a5d4b985a519e8ae95248d5 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | articles/databox/data-box-disk-system-requirements.md | changeworld/azure-docs.nl-nl | bdaa9c94e3a164b14a5d4b985a519e8ae95248d5 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | articles/databox/data-box-disk-system-requirements.md | changeworld/azure-docs.nl-nl | bdaa9c94e3a164b14a5d4b985a519e8ae95248d5 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | ---

title: Microsoft Azure Data Box Disk system requirements | Microsoft Docs

description: Learn about the software and networking requirements for your Azure Data Box Disk

services: databox

author: alkohli

ms.service: databox

ms.subservice: disk

ms.topic: article

ms.date: 09/04/2019

ms.author: alkohli

ms.localizationpriority: high

ms.openlocfilehash: fb2fd89664517e44cf5128a5c82e583f03087061

ms.sourcegitcommit: 49c4b9c797c09c92632d7cedfec0ac1cf783631b

ms.translationtype: HT

ms.contentlocale: nl-NL

ms.lasthandoff: 09/05/2019

ms.locfileid: "70307687"

---

::: zone target="docs"

# <a name="azure-data-box-disk-system-requirements"></a>Azure Data Box Disk system requirements

This article describes the key system requirements for your Microsoft Azure Data Box Disk solution and for the clients that connect to the Data Box Disk. We recommend that you review the information carefully before you deploy your Data Box Disk. You can refer back to it whenever questions come up during deployment and subsequent operations.

The system requirements cover the supported platforms for clients that connect to the disks, the supported storage accounts, and the supported storage types.

::: zone-end

::: zone target="chromeless"

## <a name="review-prerequisites"></a>Review prerequisites

1. You must have ordered your Data Box Disk using [Tutorial: Order your Azure Data Box Disk](data-box-disk-deploy-ordered.md). You have received your disks and one connecting cable per disk.

2. You have a client computer available from which you can copy the data. The client computer must meet these requirements:

    - A supported operating system is installed.

    - The other required software is installed.

::: zone-end

## <a name="supported-operating-systems-for-clients"></a>Supported operating systems for clients

The following operating systems are supported for unlocking the disk and copying data via the clients connected to the Data Box Disk.

| **Operating system** | **Tested versions** |

| --- | --- |

| Windows Server |2008 R2 SP1 <br> 2012 <br> 2012 R2 <br> 2016 |

| Windows (64-bit) |7, 8, 10 |

|Linux <br> <li> Ubuntu </li><li> Debian </li><li> Red Hat Enterprise Linux (RHEL) </li><li> CentOS </li>| <br>14.04, 16.04, 18.04 <br> 8.11, 9 <br> 7.0 <br> 6.5, 6.9, 7.0, 7.5 |

## <a name="other-required-software-for-windows-clients"></a>Other required software for Windows clients

Windows clients must also have the following software installed.

| **Software**| **Version** |

| --- | --- |

| Windows PowerShell |5.0 |

| .NET Framework |4.5.1 |

| Windows Management Framework |5.0|

| BitLocker| - |

## <a name="other-required-software-for-linux-clients"></a>Other required software for Linux clients

For Linux clients, the Data Box Disk toolset installs the following required software:

- dislocker

- OpenSSL

## <a name="supported-connection"></a>Supported connection

The client computer that holds the data must have a USB 3.0 or later port. The disks connect to this client using the included cable.

## <a name="supported-storage-accounts"></a>Supported storage accounts

The following storage account types are supported for the Data Box Disk.

| **Storage account** | **Notes** |

| --- | --- |

| Classic | Standard |

| General Purpose |Standard; both V1 and V2 are supported. Both hot and cool tiers are supported. |

| Blob storage account | |

>[!NOTE]

> Azure Data Lake Storage Gen 2 accounts are not supported.

## <a name="supported-storage-types-for-upload"></a>Supported storage types for upload

The following storage types are supported for upload to Azure using Data Box Disk.

| **File format** | **Notes** |

| --- | --- |

| Azure block blob | |

| Azure page blob | |

| Azure Files | |

| Managed Disks | |

::: zone target="docs"

## <a name="next-step"></a>Next step

* [Deploy your Azure Data Box Disk](data-box-disk-deploy-ordered.md)

::: zone-end

| 39.386792 | 407 | 0.752096 | nld_Latn | 0.993273 |

edc947104f62ba5367b866e26ad8608e70675b05 | 76 | md | Markdown | README.md | auttawutsriprasan/myTODOs | 15e861dd5195d70234631b10eb601df0d019a4b3 | [

"MIT"

] | null | null | null | README.md | auttawutsriprasan/myTODOs | 15e861dd5195d70234631b10eb601df0d019a4b3 | [

"MIT"

] | null | null | null | README.md | auttawutsriprasan/myTODOs | 15e861dd5195d70234631b10eb601df0d019a4b3 | [

"MIT"

] | null | null | null | # myTODOs

This repo is a collection of all things I want to achieve.

momo

| 12.666667 | 58 | 0.75 | eng_Latn | 0.999872 |

edc95dfae80aa56470804cfb9d9f137896d5856e | 326 | md | Markdown | src/comments/mastering-paper/introduction-tool-guide/comment-1385076228000.md | Oxyenyos/web-proj | 37e321fcc45f13a87831d20a83ba797b29864ea0 | [

"MIT"

] | null | null | null | src/comments/mastering-paper/introduction-tool-guide/comment-1385076228000.md | Oxyenyos/web-proj | 37e321fcc45f13a87831d20a83ba797b29864ea0 | [

"MIT"

] | null | null | null | src/comments/mastering-paper/introduction-tool-guide/comment-1385076228000.md | Oxyenyos/web-proj | 37e321fcc45f13a87831d20a83ba797b29864ea0 | [

"MIT"

] | null | null | null | ---

replying_to: '14'

id: comment-1133682554

date: 2013-11-21T23:23:48Z

updated: 2013-11-21T23:23:48Z

_parent: /mastering-paper/introduction-tool-guide/

name: Michael Rose

url: https://alokprateek.in/

email: 1ce71bc10b86565464b612093d89707e

---

My pleasure. I learn something new every time I use Paper. It's such a fun app!

| 25.076923 | 79 | 0.766871 | yue_Hant | 0.373742 |

edc9751e8a38a6eee9f8372fb65b9265609134cd | 441 | md | Markdown | content/conditions/heat-rash/main-content-4.md | nhsalpha/betahealth | 9cc0bbf71000e5322a5dcedfe4e6b4473ef9313e | [

"MIT"

] | 4 | 2016-09-15T13:47:05.000Z | 2017-04-24T08:01:30.000Z | content/conditions/heat-rash/main-content-4.md | nhsuk/betahealth | 9cc0bbf71000e5322a5dcedfe4e6b4473ef9313e | [

"MIT"

] | 73 | 2016-07-28T10:52:06.000Z | 2017-06-07T14:58:49.000Z | content/conditions/heat-rash/main-content-4.md | nhsuk/betahealth | 9cc0bbf71000e5322a5dcedfe4e6b4473ef9313e | [

"MIT"

] | 7 | 2016-10-07T12:37:41.000Z | 2021-04-11T07:40:24.000Z | ## Causes of heat rash

Heat rash is usually caused by excessive sweating.

Sweat glands get blocked and the trapped sweat causes a rash to develop a few

days later.

Babies often get it because they can’t control their temperature as well as

adults and children.

Sweating is usually caused by hot or humid weather, but other things can cause

it too, such as being overweight or spending long periods in bed, perhaps

because of an illness.

| 31.5 | 77 | 0.795918 | eng_Latn | 0.999989 |

edc9bc90b63f7627caa645498335d22acfb9d392 | 16,442 | md | Markdown | 09-04-2020/13-56.md | preetham/greenhub | 7aac43f72d919533b7515bf016021fc6b9d023f6 | [

"MIT"

] | null | null | null | 09-04-2020/13-56.md | preetham/greenhub | 7aac43f72d919533b7515bf016021fc6b9d023f6 | [

"MIT"

] | null | null | null | 09-04-2020/13-56.md | preetham/greenhub | 7aac43f72d919533b7515bf016021fc6b9d023f6 | [

"MIT"

] | null | null | null | <h2>News Now</h2><table><tr><th>Title</th><th>Content</th><th>URL</th><th>Author</th></tr>