hexsha stringlengths 40 40 | size int64 5 1.04M | ext stringclasses 6 values | lang stringclasses 1 value | max_stars_repo_path stringlengths 3 344 | max_stars_repo_name stringlengths 5 125 | max_stars_repo_head_hexsha stringlengths 40 78 | max_stars_repo_licenses listlengths 1 11 | max_stars_count int64 1 368k ⌀ | max_stars_repo_stars_event_min_datetime stringlengths 24 24 ⌀ | max_stars_repo_stars_event_max_datetime stringlengths 24 24 ⌀ | max_issues_repo_path stringlengths 3 344 | max_issues_repo_name stringlengths 5 125 | max_issues_repo_head_hexsha stringlengths 40 78 | max_issues_repo_licenses listlengths 1 11 | max_issues_count int64 1 116k ⌀ | max_issues_repo_issues_event_min_datetime stringlengths 24 24 ⌀ | max_issues_repo_issues_event_max_datetime stringlengths 24 24 ⌀ | max_forks_repo_path stringlengths 3 344 | max_forks_repo_name stringlengths 5 125 | max_forks_repo_head_hexsha stringlengths 40 78 | max_forks_repo_licenses listlengths 1 11 | max_forks_count int64 1 105k ⌀ | max_forks_repo_forks_event_min_datetime stringlengths 24 24 ⌀ | max_forks_repo_forks_event_max_datetime stringlengths 24 24 ⌀ | content stringlengths 5 1.04M | avg_line_length float64 1.14 851k | max_line_length int64 1 1.03M | alphanum_fraction float64 0 1 | lid stringclasses 191 values | lid_prob float64 0.01 1 |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

6154f0993ef4cd260fb9240f3be39531b99e29fe | 1,839 | md | Markdown | CHANGELOG.md | snjypl/airflow-provider-great-expectations | 00219e18b2219ee7d22a7902cbe0258dfc45f8c2 | [

"Apache-2.0"

] | 91 | 2020-11-16T23:35:42.000Z | 2022-03-29T15:59:04.000Z | CHANGELOG.md | snjypl/airflow-provider-great-expectations | 00219e18b2219ee7d22a7902cbe0258dfc45f8c2 | [

"Apache-2.0"

] | 35 | 2020-11-23T20:59:54.000Z | 2022-03-28T19:50:11.000Z | CHANGELOG.md | snjypl/airflow-provider-great-expectations | 00219e18b2219ee7d22a7902cbe0258dfc45f8c2 | [

"Apache-2.0"

] | 31 | 2020-11-23T15:53:47.000Z | 2022-03-20T11:43:38.000Z | # Apache Airflow Provider for Great Expectations

## Upcoming

* (please add here)

## 0.0.8

* [FEATURE] Add explicit Airflow version handling - thanks, @jeffkpayne!

* [FEATURE] Add example DAG setup conveniences - thanks, @jeffkpayne!

* [FEATURE] Defer initialization of DataContext and other resources - thanks, @jeffkpayne!

* [DOCS] Update README with virtual env and testing process - thanks, @jeffkpayne!

* [MAINTENANCE] Mark additional vars templated in BigQuery operator - thanks, @jeffkpayne!

* [MAINTENANCE] Add type hints and update docstrings - thanks, @jeffkpayne!

* [MAINTENANCE] Add unit and integration test coverage - thanks, @jeffkpayne!

## 0.0.7

* [BUGFIX] Addressed a bug whereby the fail_task_on_validation_failure in the BigQuery operator was being shadowed by the parent class.

* [ENHANCEMENT] Add support for validation operators when running LegacyCheckpoints with the GreatExpectationsOperator

* [DOCS] Fixed typos in documentation - thanks @armandduijn and @sunkickr!

* [MAINTENANCE] Improve the package by updating setup.py dependencies, exposing the example_dags in the package, and adding an `__init__.py` to the example DAG directory - thanks @petedejoy and @pgzmnk!

## 0.0.6

* [BUGFIX] Update setup.py with appropriate versions

## 0.0.5

* [BREAKING] Updated GreatExpectations operator to work with class-based Checkpoints (Great Expectations >= 0.13.9)

* [ENHANCEMENT] Restructured project, provider metadata, examples, added "how to run" steps to README, and added tests

## 0.0.4

* [ENHANCEMENT] Adding BigQuery operator for easier use with BigQuery and Google Cloud Storage

## 0.0.3

* [ENHANCEMENT] Adding template fields

* [ENHANCEMENT] Adding fail callback function parameter

## 0.0.2

* [ENHANCEMENT] Updated Great Expectations examples

## 0.0.1

* Initial release of the provider

| 45.975 | 216 | 0.771615 | eng_Latn | 0.939772 |

61554ef385108c4c608474304af1001ea98990e9 | 1,533 | md | Markdown | index.md | nik1806/nik1806.github.io | 52d9692432feabeca2293d99394c411fa67a5603 | [

"Unlicense"

] | null | null | null | index.md | nik1806/nik1806.github.io | 52d9692432feabeca2293d99394c411fa67a5603 | [

"Unlicense"

] | null | null | null | index.md | nik1806/nik1806.github.io | 52d9692432feabeca2293d99394c411fa67a5603 | [

"Unlicense"

] | 1 | 2020-03-05T11:10:54.000Z | 2020-03-05T11:10:54.000Z | ## Portfolio

---

### Computer Vision and Robotics projects

---

[ multiple object tracking](https://github.com/nik1806/multi_obj_track_multiprocess)

<img src="images/multiple.jpeg?raw=true"/>

---

[ Motion-Analysis](https://github.com/nik1806/Motion-Analysis)

<img src="images/motionanal.jpeg?raw=true"/>

---

[sober_drunk_classification](https://github.com/nik1806/sober_drunk_classification)

<img src="images/sober.jpeg?raw=true"/>

---

[Human-Pose-Detection](https://github.com/nik1806/Human-Pose-Detection)

<img src="images/pose.png?raw=true"/>

---

[Plant-leaf-infection-detection](https://github.com/nik1806/Plant-leaf-infection-detection)

<img src="images/leaf.png?raw=true"/>

---

[Multi-UAV-collision-avoidance](https://github.com/nik1806/Multi-UAV-collision-avoidance)

<img src="images/uav.png?raw=true"/>

---

### Other projects

- [ multiple object tracking](https://github.com/nik1806/multi_obj_track_multiprocess)

- [ Motion-Analysis](https://github.com/nik1806/Motion-Analysis)

- [sober_drunk_classification](https://github.com/nik1806/sober_drunk_classification)

- [Human-Pose-Detection](https://github.com/nik1806/Human-Pose-Detection)

- [Plant-leaf-infection-detection](https://github.com/nik1806/Plant-leaf-infection-detection)

- [Multi-UAV-collision-avoidance](https://github.com/nik1806/Multi-UAV-collision-avoidance)

---

---

<p style="font-size:11px">Page template forked from <a href="https://github.com/evanca/quick-portfolio">evanca</a></p>

<!-- Remove above link if you don't want to attribute -->

| 29.480769 | 118 | 0.746902 | yue_Hant | 0.303105 |

6156a9c3b6a93952c9b1e41d8ae8668e50e3ef1b | 418 | md | Markdown | wiki/translations/zh-tw/Category:PartDesign.md | dwhr-pi/FreeCAD-documentation | 0c889672d80e7969dcabe83f5ddf503e72a4f5bb | [

"CC0-1.0"

] | null | null | null | wiki/translations/zh-tw/Category:PartDesign.md | dwhr-pi/FreeCAD-documentation | 0c889672d80e7969dcabe83f5ddf503e72a4f5bb | [

"CC0-1.0"

] | null | null | null | wiki/translations/zh-tw/Category:PartDesign.md | dwhr-pi/FreeCAD-documentation | 0c889672d80e7969dcabe83f5ddf503e72a4f5bb | [

"CC0-1.0"

] | null | null | null | # Category:PartDesign/zh-tw

This category lists pages related to the [PartDesign Workbench/zh-tw](PartDesign_Workbench/zh-tw.md).

### Contents:

[Template:PartDesign Tools navi/zh-tw](Template:PartDesign_Tools_navi/zh-tw.md) , [PartDesign Workbench/zh-tw](PartDesign_Workbench/zh-tw.md)

[Category:Workbenches/zh-tw](Category:Workbenches/zh-tw.md)

---

[documentation index](../README.md) > Category:PartDesign/zh-tw

| 34.833333 | 141 | 0.77512 | yue_Hant | 0.870403 |

6156be5044cb424b2898574f5de0300fef316714 | 13,276 | md | Markdown | docs/spark/tutorials/hdinsight-deployment.md | Jteve-Sobs/docs.de-de | 06092136f031dda8715cfe6928a4fdc0ec6c0899 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | docs/spark/tutorials/hdinsight-deployment.md | Jteve-Sobs/docs.de-de | 06092136f031dda8715cfe6928a4fdc0ec6c0899 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | docs/spark/tutorials/hdinsight-deployment.md | Jteve-Sobs/docs.de-de | 06092136f031dda8715cfe6928a4fdc0ec6c0899 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | ---

title: Deploy a .NET for Apache Spark application to Azure HDInsight

description: Learn how to deploy a .NET for Apache Spark application to HDInsight.

ms.date: 01/23/2020

ms.topic: tutorial

ms.custom: mvc

ms.openlocfilehash: 77b57463375c36444532bdd383ec4b3bfe3ab056

ms.sourcegitcommit: 7588136e355e10cbc2582f389c90c127363c02a5

ms.translationtype: HT

ms.contentlocale: de-DE

ms.lasthandoff: 03/15/2020

ms.locfileid: "77504167"

---

# <a name="tutorial-deploy-a-net-for-apache-spark-application-to-azure-hdinsight"></a>Tutorial: Deploy a .NET for Apache Spark application to Azure HDInsight

In this tutorial, you learn how to deploy your .NET for Apache Spark app to the cloud through an Azure HDInsight cluster. HDInsight makes it easier to create and configure a Spark cluster in Azure, because Spark clusters in HDInsight are compatible with Azure Storage and Azure Data Lake Storage.

In this tutorial, you learn how to:

> [!div class="checklist"]
>
> * Access your storage accounts using Azure Storage Explorer.
> * Create an Azure HDInsight cluster.
> * Publish your .NET for Apache Spark app.
> * Create and run an HDInsight script action.
> * Run a .NET for Apache Spark app on an HDInsight cluster.

## <a name="prerequisites"></a>Prerequisites

Before you start, do the following:

* If you don't have an Azure subscription, create a [free account](https://azure.microsoft.com/free/).

* Sign in to the [Azure portal](https://portal.azure.com/).

* Install Azure Storage Explorer on your [Windows](https://go.microsoft.com/fwlink/?LinkId=708343&clcid=0x409), [Linux](https://go.microsoft.com/fwlink/?LinkId=722418&clcid=0x409), or [MacOS](https://go.microsoft.com/fwlink/?LinkId=708342&clcid=0x409) computer.

* Complete the [.NET for Apache Spark: Get started in 10 minutes](https://dotnet.microsoft.com/learn/data/spark-tutorial/intro) tutorial.

## <a name="access-your-storage-accounts"></a>Access your storage accounts

1. Open Azure Storage Explorer.

2. Select **Add Account** in the left menu, and sign in to your Azure account.

After you sign in, you should see all storage accounts you own and any resources you have uploaded to them.

## <a name="create-an-hdinsight-cluster"></a>Create an HDInsight cluster

> [!IMPORTANT]
> Billing for HDInsight clusters is prorated per minute, whether or not you use them. Be sure to delete your cluster after you finish using it. For more information, see the [Clean up resources](#clean-up-resources) section of this tutorial.

1. Visit the [Azure portal](https://portal.azure.com).

2. Select **+ Create a resource**. Then, select **HDInsight** from the **Analytics** category.

3. Under **Basics**, provide the following values:

|Property |Description |
|---------|---------|
|Subscription | From the drop-down list, choose one of your active Azure subscriptions. |
|Resource group | Specify whether you want to create a new resource group or use an existing one. A resource group is a container that holds related resources for an Azure solution. |
|Cluster name | Give a name to your HDInsight Spark cluster.|
|Location | Select a location for the resource group. The template uses this location for creating the cluster as well as for the default cluster storage. |
|Cluster type| Select **Spark** as the cluster type.|
|Cluster version|This field is filled in automatically with the default version once the cluster type has been selected. Select a 2.3 or 2.4 version of Spark.|
|Cluster login username| Enter the cluster login username. The default name is *admin*. |
|Cluster login password| Enter the login password. |
|Secure Shell (SSH) username| Enter the SSH username. By default, this account shares the same password as the *Cluster login username* account. |

4. Select **Next: Storage >>** to continue to the **Storage** page. Under **Storage**, provide the following values:

|Property |Description |
|---------|---------|
|Primary storage type|Use the default value **Azure Storage**.|
|Selection method|Use the default value **Select from list**.|
|Primary storage account|Choose your subscription and one of your active storage accounts within that subscription.|
|Container|This container is the specific blob container in your storage account where your cluster looks for files to run your app in the cloud. You can give it any available name.|

5. Under **Review + create**, select **Create**. It takes about 20 minutes to create the cluster. The cluster must be created before you can proceed to the next step.

## <a name="publish-your-app"></a>Publish your app

Next, you publish the *mySparkApp* created in the [.NET for Apache Spark: Get started in 10 minutes](https://dotnet.microsoft.com/learn/data/spark-tutorial/intro) tutorial, which gives your Spark cluster access to all the files it needs to run your app.

1. Run the following commands to publish the *mySparkApp*:

**On Windows:**

```dotnetcli
cd mySparkApp
dotnet publish -c Release -f netcoreapp3.0 -r ubuntu.16.04-x64
```

**On Linux:**

```bash
cd mySparkApp
dotnet publish -c Release -f netcoreapp3.0 -r ubuntu.16.04-x64
```

2. Do the following to zip your published app files so that you can easily upload them to your HDInsight cluster.

**On Windows:**

Navigate to *mySparkApp/bin/Release/netcoreapp3.0/ubuntu.16.04-x64*. Then, right-click the **publish** folder and select **Send to > Compressed (zipped) folder**. Name the new folder **publish.zip**.

**On Linux, run the following command from the folder that contains the published files:**

```bash
zip -r publish.zip .
```

## <a name="upload-files-to-azure"></a>Upload files to Azure

Next, you use Azure Storage Explorer to upload the following five files to the blob container you chose for your cluster's storage:

* Microsoft.Spark.Worker
* install-worker.sh
* publish.zip
* microsoft-spark-2.3.x-0.3.0.jar
* input.txt

1. Open Azure Storage Explorer and navigate to your storage account from the left menu. Drill down to the blob container for your cluster under **Blob Containers** in your storage account.

2. *Microsoft.Spark.Worker* helps Apache Spark execute your app, for example any user-defined functions (UDFs) you may have written. Download [Microsoft.Spark.Worker](https://github.com/dotnet/spark/releases/download/v0.3.0/Microsoft.Spark.Worker.netcoreapp2.1.linux-x64-0.3.0.tar.gz). Then, select **Upload** in Azure Storage Explorer to upload the worker.

3. *install-worker.sh* is a script that lets you copy the files that .NET for Apache Spark depends on to the nodes of your cluster.

Create a new file named **install-worker.sh** on your local computer, and paste in the [install-worker.sh contents](https://raw.githubusercontent.com/dotnet/spark/master/deployment/install-worker.sh) located on GitHub. Then, upload *install-worker.sh* to your blob container.

4. Your cluster needs the publish.zip file that contains your app's published files. Navigate to your published folder, **mySparkApp/bin/Release/netcoreapp3.0/ubuntu.16.04-x64**, and locate **publish.zip**. Then, upload *publish.zip* to your blob container.

5. Your cluster needs the application code that was packaged into a jar file. Navigate to your published folder, **mySparkApp/bin/Release/netcoreapp3.0/ubuntu.16.04-x64**, and locate **microsoft-spark-2.3.x-0.3.0.jar**. Then, upload the jar file to your blob container.

There may be multiple jar files (for versions 2.3.x and 2.4.x of Spark). You need to choose the jar file that matches the version of Spark you chose during cluster creation. For example, choose *microsoft-spark-2.3.x-0.3.0.jar* if you chose Spark 2.3.2 during cluster creation.

6. Your cluster needs the input to your app. Navigate to your **mySparkApp** directory and locate **input.txt**. Upload the input file to the **user/sshuser** directory in your blob container. You will connect to your cluster through SSH, and this folder is where your cluster looks for its input. The *input.txt* file is the only file that is uploaded to a specific directory.
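
The jar selection rule in step 5 can be sketched in a few lines of Python. This is only an illustration: the helper name `spark_jar_name` is hypothetical, and the naming pattern is generalized from the single file name shown in this tutorial, so check the actual file names in your published folder.

```python
def spark_jar_name(spark_version: str, package_version: str) -> str:
    """Build the expected jar file name for a given Spark version.

    Assumption: jar names follow the pattern
    microsoft-spark-<major>.<minor>.x-<package version>.jar,
    as in the file name used in this tutorial.
    """
    parts = spark_version.split(".")
    if len(parts) < 2:
        raise ValueError(f"expected a version like '2.3.2', got {spark_version!r}")
    major, minor = parts[0], parts[1]
    return f"microsoft-spark-{major}.{minor}.x-{package_version}.jar"


# A cluster created with Spark 2.3.2 and package version 0.3.0.
print(spark_jar_name("2.3.2", "0.3.0"))
```

Only the major and minor parts of the Spark version matter for the choice, which is why the patch number is dropped.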

## <a name="run-the-hdinsight-script-action"></a>Run the HDInsight script action

Once your cluster is running and you've uploaded your files to Azure, you run the **install-worker.sh** script on the cluster.

1. Navigate to your HDInsight Spark cluster in the Azure portal, and then select **Script actions**.

2. Select **+ Submit new** and provide the following values:

|Property |Description |
|---------|---------|
| Script type |Custom|
| Name | Install Worker|
| Bash script URI |https://mystorageaccount.blob.core.windows.net/mycontainer/install-worker.sh </br> To confirm this URI, right-click install-worker.sh in Azure Storage Explorer and select Properties. |
| Node type(s)| Worker|
| Parameters | azure </br> wasbs://mycontainer@myStorageAccount.blob.core.windows.net/Microsoft.Spark.Worker.netcoreapp2.1.linux-x64-0.6.0.tar.gz </br> /usr/local/bin |

3. Select **Create** to submit your script.

## <a name="run-your-app"></a>Run your app

1. Navigate to your HDInsight Spark cluster in the Azure portal, and then select **SSH + Cluster login**.

2. Copy the SSH login information and paste it into a terminal. Sign in to your cluster using the password you set during cluster creation. You should see messages welcoming you to Ubuntu and Spark.

3. Use the **spark-submit** command to run your app on your HDInsight cluster. Remember to replace **mycontainer** and **mystorageaccount** in the example script with the actual names of your blob container and storage account.

```bash

$SPARK_HOME/bin/spark-submit \

--master yarn \

--class org.apache.spark.deploy.dotnet.DotnetRunner \

wasbs://mycontainer@mystorageaccount.blob.core.windows.net/microsoft-spark-2.3.x-0.6.0.jar \

wasbs://mycontainer@mystorageaccount.blob.core.windows.net/publish.zip mySparkApp

```
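
As a rough aid for the substitution described in step 3, the following Python sketch assembles the same command from a container name and a storage account name. The function names `wasbs_uri` and `spark_submit_command` are hypothetical helpers, not part of any Azure or Spark tooling, and the output is a single line rather than the backslash-continued form above.

```python
def wasbs_uri(container: str, account: str, blob_path: str) -> str:
    """Build a wasbs:// URI in the form used by this tutorial."""
    return f"wasbs://{container}@{account}.blob.core.windows.net/{blob_path}"


def spark_submit_command(container: str, account: str, jar_name: str,
                         app_name: str = "mySparkApp") -> str:
    """Assemble the spark-submit command line shown above as one string."""
    args = [
        "$SPARK_HOME/bin/spark-submit",
        "--master", "yarn",
        "--class", "org.apache.spark.deploy.dotnet.DotnetRunner",
        wasbs_uri(container, account, jar_name),       # the uploaded jar file
        wasbs_uri(container, account, "publish.zip"),  # the zipped app files
        app_name,
    ]
    return " ".join(args)


print(spark_submit_command("mycontainer", "mystorageaccount",
                           "microsoft-spark-2.3.x-0.6.0.jar"))
```

Generating the command this way keeps the container and account names in one place, so the jar and `publish.zip` URIs cannot drift apart.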

When your app runs, the same word count table from the local run in the getting started tutorial is written to the console. Congratulations, you've run your first .NET for Apache Spark application in the cloud!

## <a name="clean-up-resources"></a>Clean up resources

HDInsight saves your data in Azure Storage, so you can safely delete a cluster when it is not in use. You are charged for an HDInsight cluster even when it is not in use. Because the charges for the cluster are considerably higher than the charges for storage, it makes sense to delete clusters when they are not in use.

You can also select the resource group name to open the resource group page, and then select **Delete resource group**. By deleting the resource group, you delete both the HDInsight Spark cluster and the default storage account.

## <a name="next-steps"></a>Next steps

In this tutorial, you deployed a .NET for Apache Spark application to Azure HDInsight. To learn more, see the Azure HDInsight documentation.

> [!div class="nextstepaction"]
> [Azure HDInsight documentation](https://docs.microsoft.com/azure/hdinsight/)

| 69.507853 | 442 | 0.77433 | deu_Latn | 0.990295 |

61576892ac6d242afe5213f2c869ad5eae264652 | 1,613 | md | Markdown | exercises/alphametics/README.md | Hilife-Miller/elixir | d4fd59a42d8829fe8a46e813a6d01a85a3f1fa31 | [

"MIT"

] | null | null | null | exercises/alphametics/README.md | Hilife-Miller/elixir | d4fd59a42d8829fe8a46e813a6d01a85a3f1fa31 | [

"MIT"

] | null | null | null | exercises/alphametics/README.md | Hilife-Miller/elixir | d4fd59a42d8829fe8a46e813a6d01a85a3f1fa31 | [

"MIT"

] | 1 | 2018-07-19T23:43:56.000Z | 2018-07-19T23:43:56.000Z | # Alphametics

Write a function to solve alphametics puzzles.

[Alphametics](https://en.wikipedia.org/wiki/Alphametics) is a puzzle where

letters in words are replaced with numbers.

For example `SEND + MORE = MONEY`:

```text

S E N D

M O R E +

-----------

M O N E Y

```

Replacing these with valid numbers gives:

```text

9 5 6 7

1 0 8 5 +

-----------

1 0 6 5 2

```

This is correct because every letter is replaced by a different number and the

words, translated into numbers, then make a valid sum.

Each letter must represent a different digit, and the leading digit of

a multi-digit number must not be zero.
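
To make the rules concrete, here is a minimal brute-force sketch in Python (not the Elixir solution the exercise asks for). It assumes puzzles are written as `A + B == C` with uppercase letters; the function name `solve` and that input format are illustrative assumptions, not part of the exercise's test suite.

```python
import re
from itertools import permutations


def solve(puzzle: str):
    """Return a letter-to-digit mapping that satisfies the puzzle, or None."""
    left, right = puzzle.split("==")
    addends = re.findall(r"[A-Z]+", left)
    result = right.strip()
    words = addends + [result]
    letters = sorted(set("".join(words)))
    # The leading letter of a multi-digit word must not map to zero.
    leading = {word[0] for word in words if len(word) > 1}

    def value(word, mapping):
        number = 0
        for letter in word:
            number = number * 10 + mapping[letter]
        return number

    # Each permutation assigns a different digit to every letter.
    for digits in permutations(range(10), len(letters)):
        mapping = dict(zip(letters, digits))
        if any(mapping[letter] == 0 for letter in leading):
            continue
        if sum(value(word, mapping) for word in addends) == value(result, mapping):
            return mapping
    return None


print(solve("I + BB == ILL"))
```

Trying every assignment is slow for puzzles with many letters, but it maps directly onto the two rules above: distinct digits come from the permutations, and the leading-zero check is the explicit `continue`.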


## Running tests

Execute the tests with:

```bash

$ elixir alphametics_test.exs

```

### Pending tests

In the test suites, all but the first test have been skipped.

Once you get a test passing, you can unskip the next one by

commenting out the relevant `@tag :pending` with a `#` symbol.

For example:

```elixir

# @tag :pending

test "shouting" do

assert Bob.hey("WATCH OUT!") == "Whoa, chill out!"

end

```

Or, you can enable all the tests by commenting out the

`ExUnit.configure` line in the test suite.

```elixir

# ExUnit.configure exclude: :pending, trace: true

```

For more detailed information about the Elixir track, please

see the [help page](http://exercism.io/languages/elixir).

## Source

Wikipedia [https://en.wikipedia.org/wiki/Alphametics](https://en.wikipedia.org/wiki/Alphametics)

## Submitting Incomplete Solutions

It's possible to submit an incomplete solution so you can see how others have completed the exercise.

| 21.797297 | 101 | 0.723497 | eng_Latn | 0.990073 |

61584e0760cad492e2ad78310188fead4635f37c | 553 | md | Markdown | README.md | carlostojal/BarnabyChatbot | 7a09f256ffdf1393e9b3a023afc1e33a74e5db73 | [

"MIT"

] | 1 | 2020-03-30T22:38:05.000Z | 2020-03-30T22:38:05.000Z | README.md | carlostojal/BarnabyChatbot | 7a09f256ffdf1393e9b3a023afc1e33a74e5db73 | [

"MIT"

] | null | null | null | README.md | carlostojal/BarnabyChatbot | 7a09f256ffdf1393e9b3a023afc1e33a74e5db73 | [

"MIT"

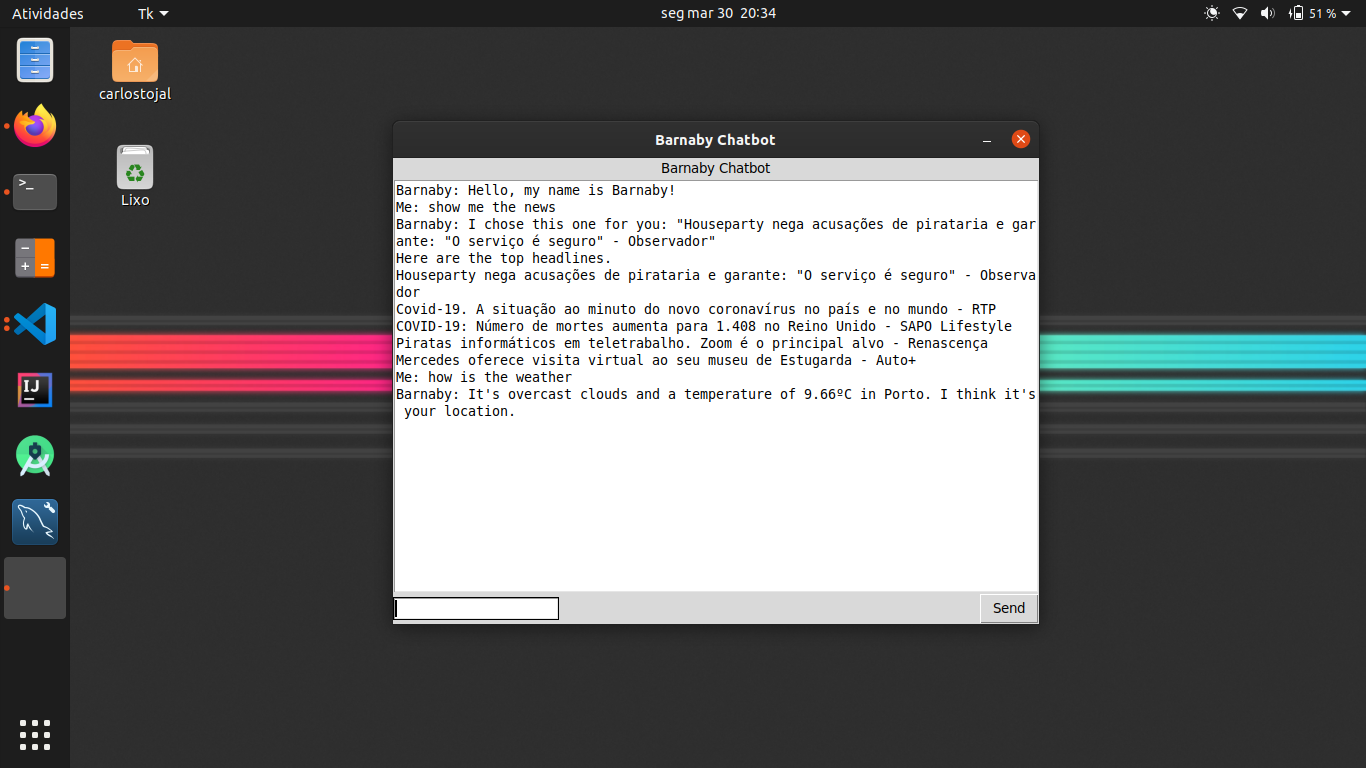

] | null | null | null | # BarnabyChatbot

BarnabyChatbot is a simple chatbot used as a demo for the [Barnaby API](https://github.com/carlostojal/Barnaby). Its goal is to show how easy it is to use the Barnaby API.

## How to use

* Run ```setup.sh``` to install all required dependencies.

* Start the Barnaby API (see the Barnaby API documentation) and check its address and port (default: 127.0.0.1:5000).

* Start the Barnaby Chatbot. (```barnaby.py```)
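
Before starting the chatbot, it can help to confirm that something is listening on the API address. The sketch below is a generic TCP check using only the Python standard library; the function name `api_is_reachable` is hypothetical, and it does not call any Barnaby endpoint, so a `True` result only means the port accepts connections.

```python
import socket


def api_is_reachable(host: str = "127.0.0.1", port: int = 5000,
                     timeout: float = 1.0) -> bool:
    """Return True if a TCP connection to host:port can be opened."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts, and unreachable hosts.
        return False


if __name__ == "__main__":
    if api_is_reachable():
        print("Something is listening on 127.0.0.1:5000")
    else:
        print("Nothing is listening on 127.0.0.1:5000; start the Barnaby API first")
```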

| 46.083333 | 158 | 0.768535 | eng_Latn | 0.638043 |

61588ff2deed54f7ce5697f9b582dbc4e7b87904 | 86 | md | Markdown | docs/src/index.md | kklot/Epi.jl | 13498e5d98d028b318c1bd0ce7540afef250521c | [

"MIT"

] | 1 | 2021-03-16T17:33:19.000Z | 2021-03-16T17:33:19.000Z | docs/src/index.md | kklot/Epi.jl | 13498e5d98d028b318c1bd0ce7540afef250521c | [

"MIT"

] | null | null | null | docs/src/index.md | kklot/Epi.jl | 13498e5d98d028b318c1bd0ce7540afef250521c | [

"MIT"

] | null | null | null | # Epi.jl Documentation

```@autodocs

Modules = [Epi]

Order = [:function, :type]

```

| 12.285714 | 28 | 0.616279 | yue_Hant | 0.752256 |

61589fbc68e783f1105c99b846ea78183ad1be10 | 644 | md | Markdown | README.md | Nixon120/go-api-docker-compose | b2ec99864cc07e91cc27033dd6aa5a9d572e6806 | [

"MIT"

] | 1 | 2022-01-22T15:49:03.000Z | 2022-01-22T15:49:03.000Z | README.md | Nixon120/go-api-docker-compose | b2ec99864cc07e91cc27033dd6aa5a9d572e6806 | [

"MIT"

] | 2 | 2021-06-02T23:33:56.000Z | 2021-06-02T23:51:56.000Z | README.md | abdulazeez-okteto/go-api-docker-compose | 4fdce652d678be27d0845d36c7040f9023b0bc2e | [

"MIT"

] | 1 | 2021-12-13T05:24:48.000Z | 2021-12-13T05:24:48.000Z | # Get Started with Docker Compose on Okteto

[](https://cloud.okteto.com/deploy?repository=https://github.com/okteto/go-api-docker-compose)

This example shows how to deploy a docker-compose application to Okteto. Read the detailed guide on [how you can deploy your docker-compose applications to Okteto](https://okteto.com/blog/a-step-by-step-guide-on-deploying-your-docker-compose-application-to-okteto/) and [how you can develop your docker-compose application remotely on Okteto Cloud](https://okteto.com/blog/how-to-develop-docker-compose-applications-remotely-in-okteto-cloud/).

| 107.333333 | 443 | 0.795031 | eng_Latn | 0.425346 |

6158e7c64966c97b1dd6d16b320c7bd4f0b2e08c | 5,037 | md | Markdown | treebanks/yo_ytb/yo_ytb-dep-compound.md | emmettstr/docs | 2d0376d6e07f3ffa828f6152d12cf260a530c64d | [

"Apache-2.0"

] | 204 | 2015-01-20T16:36:39.000Z | 2022-03-28T00:49:51.000Z | treebanks/yo_ytb/yo_ytb-dep-compound.md | emmettstr/docs | 2d0376d6e07f3ffa828f6152d12cf260a530c64d | [

"Apache-2.0"

] | 654 | 2015-01-02T17:06:29.000Z | 2022-03-31T18:23:34.000Z | treebanks/yo_ytb/yo_ytb-dep-compound.md | emmettstr/docs | 2d0376d6e07f3ffa828f6152d12cf260a530c64d | [

"Apache-2.0"

] | 200 | 2015-01-16T22:07:02.000Z | 2022-03-25T11:35:28.000Z | ---

layout: base

title: 'Statistics of compound in UD_Yoruba-YTB'

udver: '2'

---

## Treebank Statistics: UD_Yoruba-YTB: Relations: `compound`

This relation is universal.

There are 2 language-specific subtypes of `compound`: <tt><a href="yo_ytb-dep-compound-prt.html">compound:prt</a></tt>, <tt><a href="yo_ytb-dep-compound-svc.html">compound:svc</a></tt>.

77 nodes (1%) are attached to their parents as `compound`.

74 instances of `compound` (96%) are left-to-right (parent precedes child).

Average distance between parent and child is 1.48051948051948.

The following 11 pairs of parts of speech are connected with `compound`: <tt><a href="yo_ytb-pos-VERB.html">VERB</a></tt>-<tt><a href="yo_ytb-pos-SCONJ.html">SCONJ</a></tt> (30; 39% instances), <tt><a href="yo_ytb-pos-NOUN.html">NOUN</a></tt>-<tt><a href="yo_ytb-pos-NOUN.html">NOUN</a></tt> (16; 21% instances), <tt><a href="yo_ytb-pos-ADJ.html">ADJ</a></tt>-<tt><a href="yo_ytb-pos-ADP.html">ADP</a></tt> (8; 10% instances), <tt><a href="yo_ytb-pos-PRON.html">PRON</a></tt>-<tt><a href="yo_ytb-pos-PART.html">PART</a></tt> (6; 8% instances), <tt><a href="yo_ytb-pos-VERB.html">VERB</a></tt>-<tt><a href="yo_ytb-pos-NOUN.html">NOUN</a></tt> (6; 8% instances), <tt><a href="yo_ytb-pos-ADJ.html">ADJ</a></tt>-<tt><a href="yo_ytb-pos-ADJ.html">ADJ</a></tt> (3; 4% instances), <tt><a href="yo_ytb-pos-NOUN.html">NOUN</a></tt>-<tt><a href="yo_ytb-pos-ADJ.html">ADJ</a></tt> (2; 3% instances), <tt><a href="yo_ytb-pos-VERB.html">VERB</a></tt>-<tt><a href="yo_ytb-pos-ADV.html">ADV</a></tt> (2; 3% instances), <tt><a href="yo_ytb-pos-VERB.html">VERB</a></tt>-<tt><a href="yo_ytb-pos-PART.html">PART</a></tt> (2; 3% instances), <tt><a href="yo_ytb-pos-ADJ.html">ADJ</a></tt>-<tt><a href="yo_ytb-pos-ADV.html">ADV</a></tt> (1; 1% instances), <tt><a href="yo_ytb-pos-ADV.html">ADV</a></tt>-<tt><a href="yo_ytb-pos-ADV.html">ADV</a></tt> (1; 1% instances).
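
The counts above (number of instances, left-to-right share, average parent-child distance) can be recomputed from a CoNLL-U file. The following Python sketch shows one plausible way to do it; it is an assumption about how such figures are derived, not the script that generated this page. It expects tab-separated CoNLL-U columns and matches the relation label exactly, so subtypes such as `compound:svc` are not counted.

```python
def relation_stats(conllu_text: str, relation: str = "compound") -> dict:
    """Count a dependency relation and measure direction and distance."""
    count = 0
    left_to_right = 0
    total_distance = 0
    for line in conllu_text.splitlines():
        if not line.strip() or line.startswith("#"):
            continue  # skip blank lines and sentence-level comments
        cols = line.split("\t")
        # Skip malformed lines, multiword token ranges (1-2), and empty nodes (1.1).
        if len(cols) != 10 or not cols[0].isdigit():
            continue
        if cols[7] != relation:
            continue
        child, head = int(cols[0]), int(cols[6])
        count += 1
        total_distance += abs(child - head)
        if head < child:  # parent precedes child
            left_to_right += 1
    average = total_distance / count if count else None
    return {"count": count, "left_to_right": left_to_right,
            "average_distance": average}


# Four tokens taken from the examples on this page, with tabs restored.
SAMPLE = "\n".join([
    "3\twí\twí\tVERB\t_\t_\t0\troot\t_\t_",
    "4\tpé\tpé\tSCONJ\t_\t_\t3\tcompound\t_\t_",
    "",
    "9\tÒkè\tòkè\tNOUN\t_\t_\t7\tconj\t_\t_",
    "11\tÈjìgbò\tÈjìgbò\tNOUN\t_\t_\t9\tcompound\t_\t_",
])
print(relation_stats(SAMPLE))
```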

~~~ conllu

# visual-style 4 bgColor:blue

# visual-style 4 fgColor:white

# visual-style 3 bgColor:blue

# visual-style 3 fgColor:white

# visual-style 3 4 compound color:blue

1 Ọlọ́run ọlọ́run NOUN _ _ 3 nsubj _ Gloss=god|Ref=GEN_1.3

2 sì sì CCONJ _ _ 3 cc _ Gloss=then|Ref=GEN_1.3

3 wí wí VERB _ _ 0 root _ Gloss=said|Ref=GEN_1.3

4 pé pé SCONJ _ _ 3 compound _ Gloss=that|Ref=GEN_1.3|SpaceAfter=No

5 , , PUNCT _ _ 12 punct _ Gloss=,|Ref=GEN_1.3

6 “ “ PUNCT _ _ 12 punct _ Gloss=“|Ref=GEN_1.3|SpaceAfter=No

7 Jẹ́ jẹ́ VERB _ _ 12 ccomp _ Gloss=let|Ref=GEN_1.3

8 kí kí AUX _ _ 7 aux _ Gloss=|Ref=GEN_1.3

9 ìmọ́lẹ̀ ìmọ́lẹ̀ NOUN _ _ 12 nsubj _ Gloss=light|Ref=GEN_1.3

10 kí kí AUX _ _ 12 aux _ Gloss=let|Ref=GEN_1.3

11 ó ó PRON _ Case=Nom|Number=Sing|Person=3|PronType=Prs 12 expl _ Gloss=he|Ref=GEN_1.3

12 wà wà VERB _ _ 3 ccomp _ Gloss=was|Ref=GEN_1.3|SpaceAfter=No

13 , , PUNCT _ _ 12 punct _ Gloss=,|Ref=GEN_1.3|SpaceAfter=No

14 ” ” PUNCT _ _ 12 punct _ Gloss=”|Ref=GEN_1.3

15 ìmọ́lẹ̀ ìmọ́lẹ̀ NOUN _ _ 17 nsubj _ Gloss=light|Ref=GEN_1.3

16 sì sì CCONJ _ _ 17 cc _ Gloss=and|Ref=GEN_1.3

17 wà wà VERB _ _ 3 conj _ Gloss=was|Ref=GEN_1.3|SpaceAfter=No

18 . . PUNCT _ _ 3 punct _ Gloss=.|Ref=GEN_1.3

~~~

~~~ conllu

# visual-style 11 bgColor:blue

# visual-style 11 fgColor:white

# visual-style 9 bgColor:blue

# visual-style 9 fgColor:white

# visual-style 9 11 compound color:blue

1 Wọ́n Wọ́n PRON _ Case=Acc|Number=Plur|Person=3|PronType=Prs 7 nsubj _ _

2 bí bí SCONJ _ _ 7 mark _ _

3 Ẹ̀bùn Ẹ̀bùn NOUN _ _ 7 nsubj _ _

4 Olóyèdé Olóyèdé NOUN _ _ 3 nmod _ _

5 ní ní ADP _ _ 6 case _ _

6 ilú ilú NOUN _ _ 3 nmod _ _

7 Kẹ́nta Kẹ́nta ADV _ _ 0 root _ SpaceAfter=No

8 , , PUNCT _ _ 9 punct _ _

9 Òkè òkè NOUN _ _ 7 conj _ SpaceAfter=No

10 - - PUNCT _ _ 11 punct _ SpaceAfter=No

11 Èjìgbò Èjìgbò NOUN _ _ 9 compound _ _

12 Abẹ́òkúta Abẹ́òkúta NOUN _ _ 9 nmod _ SpaceAfter=No

13 . . PUNCT _ _ 7 punct _ SpacesAfter=\n\n

~~~

~~~ conllu

# visual-style 3 bgColor:blue

# visual-style 3 fgColor:white

# visual-style 1 bgColor:blue

# visual-style 1 fgColor:white

# visual-style 1 3 compound color:blue

1 Alábùkún Alábùkún ADJ _ _ 8 nsubj _ Ref=MATT_5.4|SpaceAfter=No|Gloss=blessed

2 - - PUNCT _ _ 3 punct _ Ref=MATT_5.4|SpaceAfter=No|Gloss=the

3 fún fún ADP _ _ 1 compound _ Ref=MATT_5.4|Gloss=unto

4 ni ni PART _ _ 6 case _ Ref=MATT_5.4|Gloss=is

5 àwọn àwọn PRON _ Case=Nom|Number=Plur|Person=3|PronType=Prs 6 nmod _ Ref=MATT_5.4|Gloss=they

6 tí tí PRON _ PronType=Rel 8 nmod _ Ref=MATT_5.4|Gloss=that

7 ń ń AUX _ _ 8 aux _ Ref=MATT_5.4|Gloss=that

8 ṣọ̀fọ̀ ṣọ̀fọ̀ VERB _ _ 0 root _ Ref=MATT_5.4|SpaceAfter=No|Gloss=mourn

9 , , PUNCT _ _ 13 punct _ Ref=MATT_5.4|Gloss=,

10 nítorí nítorí SCONJ _ _ 13 mark _ Ref=MATT_5.4|Gloss=for

11 a a PRON _ Case=Nom|Number=Plur|Person=1|PronType=Prs 13 nsubj _ Ref=MATT_5.4|Gloss=we

12 ó ó AUX _ _ 13 aux _ Ref=MATT_5.4|Gloss=will

13 tù tù VERB _ _ 8 advcl _ Ref=MATT_5.4|Gloss=comfort

14 wọ́n wọ́n PRON _ Case=Acc|Number=Plur|Person=3|PronType=Prs 13 obj _ Ref=MATT_5.4|Gloss=them

15 nínú nínú ADP _ _ 13 obl _ Ref=MATT_5.4|SpaceAfter=No|Gloss=inside

16 . . PUNCT _ _ 8 punct _ Ref=MATT_5.4|Gloss=.

~~~

| 51.927835 | 1,346 | 0.698233 | yue_Hant | 0.583257 |

615943c01026e549aa9248eedfdd9cf64fb5d3cf | 3,312 | md | Markdown | microsoft-365/bookings/schedule-closures-time-off-vacation.md | MicrosoftDocs/microsoft-365-docs-pr.es-ES | 90e5004934c592bb15f72edd88bec954c94ad710 | [

"CC-BY-4.0",

"MIT"

] | 19 | 2020-05-18T20:10:47.000Z | 2022-03-09T07:27:47.000Z | microsoft-365/bookings/schedule-closures-time-off-vacation.md | MicrosoftDocs/microsoft-365-docs-pr.es-ES | 90e5004934c592bb15f72edd88bec954c94ad710 | [

"CC-BY-4.0",

"MIT"

] | 2 | 2022-02-09T06:48:57.000Z | 2022-02-09T06:49:35.000Z | microsoft-365/bookings/schedule-closures-time-off-vacation.md | MicrosoftDocs/microsoft-365-docs-pr.es-ES | 90e5004934c592bb15f72edd88bec954c94ad710 | [

"CC-BY-4.0",

"MIT"

] | 2 | 2019-05-25T04:43:49.000Z | 2021-05-26T19:33:23.000Z | ---

title: Schedule business closures, time off, and vacation time

ms.author: kwekua

author: kwekuako

manager: scotv

audience: Admin

ms.topic: article

ms.service: bookings

ms.localizationpriority: medium

ms.assetid: e3c0a4ee-e3d8-4fbe-bd8f-16d1c712d1f4

description: Schedule office closures and employee time off from the Bookings calendar so that employees are marked as unavailable for bookings during the specified times.

ms.openlocfilehash: 30f43af083da6a41ab8458e377bdc9fc99690678

ms.sourcegitcommit: d4b867e37bf741528ded7fb289e4f6847228d2c5

ms.translationtype: MT

ms.contentlocale: es-ES

ms.lasthandoff: 10/06/2021

ms.locfileid: "60164073"

---

# <a name="schedule-business-closures-time-off-and-vacation-time"></a>Schedule business closures, time off, and vacation time

Occasionally, you'll want to close your business for holidays or team events, or your employees will need time off when they're sick, on vacation, or unavailable for other reasons. You can schedule time off from the Microsoft Bookings calendar, and the employee will be unavailable for bookings during the specified time. Once the business reopens or the employees return to work, everyone appears on the booking page again according to their established work hours.

Watch this video or follow the steps below to schedule business closures or employee time off.

> [!VIDEO https://www.microsoft.com/videoplayer/embed/RE2TxDC]

## <a name="schedule-ad-hoc-business-closures"></a>Schedule ad hoc business closures

1. In Microsoft 365, select the app launcher, and then select **Bookings**.

1. In the navigation pane, select **Calendar** \> **Time off**.

1. Fill in the details, including the title, start and end date and time, location, and additional notes.

1. Select **All day event**.

1. Select all staff members.

1. Select **Save**.

When a customer tries to schedule a service on a day that the office is closed, they'll see a message on the booking page.

## <a name="schedule-employee-time-off"></a>Schedule employee time off

1. In Microsoft 365, select the app launcher, and then select **Bookings**.

1. In the navigation pane, select **Calendar** \> **Time off**.

1. Fill in the details, including the title, start and end date and time, location, and additional notes. If the employee will be gone for a full day or for several days, select **All day event**.

1. Select the staff member or members who are taking the time off.

1. Select **Save**.

| 53.419355 | 520 | 0.785326 | spa_Latn | 0.99046 |

61599921520fc853e8f48d0e083bab6b55de0b4b | 1,766 | md | Markdown | sails-docs/reference/websockets/sails.io.js/socket.post.md | tindNan/sails | a29cab7ece19d744e98b39ef82e537ae67f59875 | [

"MIT"

] | null | null | null | sails-docs/reference/websockets/sails.io.js/socket.post.md | tindNan/sails | a29cab7ece19d744e98b39ef82e537ae67f59875 | [

"MIT"

] | 1 | 2021-02-23T17:52:34.000Z | 2021-02-23T17:52:34.000Z | sails-docs/reference/websockets/sails.io.js/socket.post.md | tindNan/sails | a29cab7ece19d744e98b39ef82e537ae67f59875 | [

"MIT"

] | null | null | null | # `io.socket.post()`

Send a socket request (virtual POST) to a Sails server using Socket.IO.

```js

io.socket.post(url, data, function (resData, jwres){

// ...

});

```

### Usage

| | Argument | Type | Details |

|---|------------|:------------:|---------|

| 1 | url | ((string)) | The destination URL path, e.g. "/checkout".

| 2 | _data_ | ((json?)) | Optional request data. If provided, it will be JSON-encoded and included as the virtual HTTP body.

| 3 | _callback_ | ((function?)) | Optional callback. If provided, it will be called when the server responds.

##### Callback

| | Argument | Type | Details |

|---|-----------|:------------:|---------|

| 1 | resData | ((json)) | Data received in the response from the Sails server (=== `jwres.body`, and also equivalent to the HTTP response body).

| 2 | jwres | ((dictionary)) | A [JSON WebSocket Response](https://github.com/balderdashy/sails/blob/master/sails-docs/PAGE_NEEDED.md) object. Has `headers`, a `body`, and a `statusCode`.

### Example

```html

<script>

io.socket.post('/users', { name: 'Timmy Mendez' }, function (resData, jwRes) {

jwRes.statusCode; // => 200

});

</script>

```
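As a rough sketch of how the two callback arguments described above might be used together, the handler below branches on `jwres.statusCode`. The `summarizeResponse` helper, the `/checkout` payload, and the "any 2xx is success" rule are illustrative assumptions, not part of the Sails API.

```js
// Hypothetical helper (not part of sails.io.js): turn the callback
// arguments into a small result object.
function summarizeResponse(resData, jwres) {
  // Assumption for this sketch: any 2xx status code counts as success.
  var ok = jwres.statusCode >= 200 && jwres.statusCode < 300;
  return ok
    ? { ok: true, data: resData }
    : { ok: false, status: jwres.statusCode, error: resData };
}

// Only runs in a page where sails.io.js has already defined `io`.
if (typeof io !== 'undefined') {
  io.socket.post('/checkout', { items: [42] }, function (resData, jwres) {
    var result = summarizeResponse(resData, jwres);
    if (!result.ok) {
      console.error('Request failed with status ' + result.status);
    }
  });
}
```

Keeping the status check in a separate function makes it possible to exercise the logic without a live socket connection.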

### Notes

> + Remember that you can communicate with _any of your routes_ using socket requests.

> + Need to customize request headers? Check out the slightly lower-level [`io.socket.request()`](https://sailsjs.com/documentation/reference/web-sockets/socket-client/io-socket-request) method. To set custom headers for _all_ outgoing requests, check out [`io.sails.headers`](https://sailsjs.com/documentation/reference/web-sockets/socket-client/io-sails).

<docmeta name="displayName" value="io.socket.post()">

<docmeta name="pageType" value="method">

| 37.574468 | 358 | 0.638165 | eng_Latn | 0.767344 |

6159fd6d58f0fb4115fa0229d6899cc41a34309e | 4,543 | md | Markdown | README.md | DanIulian/PeerToPeer-Torrent-Client | 80fe0eb76fa33c9a7746020d5ddd2042b1c84569 | [

"MIT"

] | 1 | 2018-07-23T09:01:07.000Z | 2018-07-23T09:01:07.000Z | README.md | DanIulian/PeerToPeer-Torrent-Client | 80fe0eb76fa33c9a7746020d5ddd2042b1c84569 | [

"MIT"

] | null | null | null | README.md | DanIulian/PeerToPeer-Torrent-Client | 80fe0eb76fa33c9a7746020d5ddd2042b1c84569 | [

"MIT"

] | null | null | null | # PeerToPeer-Torrent-Client

The purpose of this project is to design and implement a scalable and fully functional peer-to-peer torrent application for file transfer.

## Table of Contents

1. [Project overview](#project-overview)

2. [Requirements](#requirements)

3. [Architecture](#architecture)

- [Communication Protocol](#communication-protocol)

- [Main Node](#main-node)

- [Client](#client)

## Project Overview

The purpose of this project is to implement in Java a scalable and fully functional peer-to-peer torrent application for file transfer. The application consists of a Central Node, which stores all the information regarding the shared files, and a variable number of Clients (peers), which connect to the Central Node, search for information about a file, and download it from the peers that have the requested file.

Clients join and leave the network asynchronously and can choose to publish a file or to download one. Information about the published files is available on the Main Node. However, the actual file resides only at the client that published it, or at the clients that have downloaded it. Each file is uniquely identified by its name, and when it is first published it is split into fragments of equal size. The IP and port of each Client are variable and depend on when the Client connected to the network. The Main Node, however, has a fixed public IP and port, known in advance by every client that connects to it.

Every operation completed by the clients and the Main Node will be logged using the log4j API.

## Requirements

* A machine with 2 GB of RAM

* Ubuntu 14.04 or higher

* Java version 7 or higher and the log4j JAR

* Apache ANT for deployment

In order to run the application:

./ ant build

./ ant run-server

./ ant run-client1[run-client2, run-client3]

./ ant clean

## Architecture

#### Communication Protocol

The peers and the Main Node communicate with each other using a custom protocol described by the comm_proto package, which contains the classes FileDescription, FragmentDescription and FragmentFile. Each message exchanged between two entities in the network uses a 12-byte HEADER. The first 4 bytes contain the type of the message (Publish a Fragment, Request Information about a File, Publish a File). The next 4 bytes specify the length of the data message, and the final 4 bytes contain the port on which the client listens. The DATA field of the message contains the serialized representation of one of the three classes mentioned earlier, or the serialized representation of the name of the file that a client requests information about. Every time an entity in the network receives a message, it first reads the 12 bytes of the HEADER and then reads the DATA.
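The HEADER layout described above can be illustrated with a small encoder and decoder. This is only a sketch written in JavaScript for brevity (the project itself is in Java); the numeric message-type codes, the function names, and the big-endian byte order are assumptions that this README does not confirm.

```js
// Illustrative sketch only; not taken from the project's Java sources.
var HEADER_SIZE = 12;

// Hypothetical numeric codes; the real values are not given in this README.
var MessageType = { PUBLISH_FILE: 1, PUBLISH_FRAGMENT: 2, REQUEST_FILE_INFO: 3 };

// Pack the three 4-byte fields into a 12-byte header.
// DataView writes big-endian by default (an assumed byte order).
function encodeHeader(type, dataLength, listenPort) {
  var view = new DataView(new ArrayBuffer(HEADER_SIZE));
  view.setInt32(0, type);        // bytes 0-3: message type
  view.setInt32(4, dataLength);  // bytes 4-7: length of the DATA part
  view.setInt32(8, listenPort);  // bytes 8-11: port the client listens on
  return view.buffer;
}

// Read the three fields back from the first 12 bytes of a message.
function decodeHeader(buffer) {
  var view = new DataView(buffer, 0, HEADER_SIZE);
  return {
    type: view.getInt32(0),
    dataLength: view.getInt32(4),
    listenPort: view.getInt32(8)
  };
}
```

A receiver would decode the header first and then read exactly `dataLength` further bytes as the DATA part.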

#### Main Node

The Main Node is implemented in the central_nod_pkg package, which has three classes. The MainServer class starts the Main Node, the CentralNode class contains the implementation of the Main Node, and the PublishedFile class encapsulates all the required information about a published file.

The server keeps a list of all published files. Each element of the list holds the file name, the file length, and the length of the first fragment. There is also a list of FragmentFile objects that holds information about each fragment and all the clients that have that fragment.

The Main Node implementation uses asynchronous sockets and a selector. The server runs in a separate thread.

#### Client

The client uses a thread pool for uploading and downloading files. Each thread manages a single fragment of the file. There is also a dedicated thread that exchanges messages with the Main Node; the class ClientAsServer implements the behaviour of this thread. For each fragment downloaded, a new socket connection is established with the other peer; the connection is closed after the fragment is successfully received. For the thread pool I used the Executor class and set the maximum number of connections to 10. The class ClientRequest is in charge of processing a request for a fragment, and the class AskForFragment is in charge of downloading a fragment from another client. The fragment size depends on the length of the file and can vary from 2 KB to 512 KB.

Each client stores its files in a different directory.

## Copyright and License

This application is provided under the [MIT-license](https://github.com/DanIulian/PeerToPeer-Torrent-Client/blob/master/LICENSE).

| 57.506329 | 347 | 0.794849 | eng_Latn | 0.999624 |

615b8894fb47cf4c9878aa72f724b5e40a77cd6d | 1,694 | md | Markdown | _publications/2_tuple.md | oguztoragay/oguztoragay.github.io | 853e2f5fa9a90ed2dee94b9f6c1c4b762b8566b0 | [

"MIT"

] | 1 | 2021-08-30T15:20:02.000Z | 2021-08-30T15:20:02.000Z | _publications/2_tuple.md | oguztoragay/academicpages.github.io | 8fabe272e174577473807868f270014122736430 | [

"MIT"

] | null | null | null | _publications/2_tuple.md | oguztoragay/academicpages.github.io | 8fabe272e174577473807868f270014122736430 | [

"MIT"

] | null | null | null | ---

title: "Performance Evaluation of Faculty Departments by a Delphi Method Based on 2-Tuple fuzzy Linguistic Representation Model and TOPSIS"

collection: publications

permalink: /publication/2010-10-01-paper-title-number-2

excerpt: ''

date: 2015-10-10

venue: 'International Journal of Basic and Applied Sciences IJBAS-IJENS, Vol: 15, No: 05'

paperurl: 'http://ijens.org/Vol_15_I_05/150205-7373-IJBAS-IJENS.pdf'

citation: 'TORAGAY, Oguz, and Murat ARIKAN. "Performance Evaluation of Faculty Departments by a Delphi Method Based on 2-Tuple fuzzy Linguistic Representation Model and TOPSIS." International Journal of Basic & Applied Sciences 15 (2015): 1-10'

---

The development of and competition in educational facilities gradually increase the service quality’s importance. To accommodate this rapid process, educational organisations

attempt to measure their performance and to enhance their standards. In general, an organisation’s performance does not depend solely on one criterion; instead, it should be evaluated based on multiple criteria. In this study, the academic performances of the departments within the Engineering Faculty of one of the largest universities in Turkey, Gazi University, have been compared using a multi-attribute decision making (MADM) method, TOPSIS. The criteria weights for the TOPSIS method are determined dependent upon linguistically expressed expert opinions. Therefore, a Delphi method based on the 2-tuple fuzzy linguistic representation model for computing with words is proposed. A sensitivity analysis study is also performed to determine the most critical criterion.

[Download paper here](http://ijens.org/Vol_15_I_05/150205-7373-IJBAS-IJENS.pdf)

| 112.933333 | 775 | 0.815821 | eng_Latn | 0.980801 |

615be5e0199627ab6e7e7f627aa1f82c309d232b | 548 | md | Markdown | integrations/adlearn-open-platform/README.md | carrotquest/analytics.js-integrations | c129f4647bab6ff846618cc2192f33b8952d34e6 | [

"MIT"

] | 116 | 2015-01-13T01:38:37.000Z | 2022-03-25T09:21:16.000Z | integrations/adlearn-open-platform/README.md | carrotquest/analytics.js-integrations | c129f4647bab6ff846618cc2192f33b8952d34e6 | [

"MIT"

] | 495 | 2015-01-01T06:51:12.000Z | 2022-03-29T21:06:59.000Z | integrations/adlearn-open-platform/README.md | carrotquest/analytics.js-integrations | c129f4647bab6ff846618cc2192f33b8952d34e6 | [

"MIT"

] | 205 | 2015-01-05T16:00:28.000Z | 2022-03-23T11:32:41.000Z | # analytics.js-integration-adlearn-open-platform [![Build Status][ci-badge]][ci-link]

Adlearn Open Platform integration for [Analytics.js][].

## License

Released under the [MIT license](License.md).

[Analytics.js]: https://segment.com/docs/libraries/analytics.js/

[ci-link]: https://ci.segment.com/gh/segment-integrations/analytics.js-integration-adlearn-open-platform

[ci-badge]: https://ci.segment.com/gh/segment-integrations/analytics.js-integration-adlearn-open-platform.svg?style=svg&circle-token=b8a9d9117d4dd82c72334dcfd98e02b3a810b783

| 42.153846 | 173 | 0.791971 | hun_Latn | 0.193095 |

615e18b40c5831986e01981de4424370f1cb209e | 512 | md | Markdown | packages/sudoku-solver/README.md | christianjuth/lerna-monorepo | c004d8ae9a1888c9107b049c8ddb058340d926ab | [

"MIT"

] | 10 | 2021-12-11T03:13:44.000Z | 2021-12-12T16:15:18.000Z | packages/sudoku-solver/README.md | christianjuth/lerna-monorepo | c004d8ae9a1888c9107b049c8ddb058340d926ab | [

"MIT"

] | 1 | 2022-02-01T12:21:21.000Z | 2022-02-16T21:43:42.000Z | packages/sudoku-solver/README.md | christianjuth/monorepo | c004d8ae9a1888c9107b049c8ddb058340d926ab | [

"MIT"

] | null | null | null | # `@christianjuth/sudoku-solver`

Depth first search Sudoku solver.

[Demo](https://npm.christianjuth.com/sudoku-solver)

## Usage

```javascript

import { solve } from '@christianjuth/sudoku-solver'

const sudoku = [

0, 3, 0, 4, 9, 0, 0, 1, 0,

7, 4, 0, 0, 1, 8, 0, 0, 0,

1, 9, 6, 7, 0, 0, 0, 2, 4,

0, 0, 0, 5, 0, 1, 7, 6, 2,

0, 0, 3, 0, 2, 7, 0, 5, 9,

0, 0, 0, 0, 4, 0, 3, 0, 0,

0, 7, 8, 9, 0, 0, 0, 0, 0,

4, 2, 9, 0, 0, 0, 0, 7, 3,

0, 0, 0, 3, 7, 0, 0, 9, 8,

]

const { solution } = solve(sudoku)

```
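The README does not show how the search works internally, so the following is only a generic sketch of depth-first search with backtracking on the same flat 81-cell layout (0 marks an empty cell). `solveDfs` and `canPlace` are hypothetical names, and the package's actual `solve` may be implemented differently.

```javascript
// Check whether `digit` can go at flat index `pos` without repeating
// in its row, its column, or its 3x3 box.
function canPlace(grid, pos, digit) {
  var row = Math.floor(pos / 9);
  var col = pos % 9;
  var boxRow = row - (row % 3);
  var boxCol = col - (col % 3);
  for (var i = 0; i < 9; i++) {
    if (grid[row * 9 + i] === digit) return false; // same row
    if (grid[i * 9 + col] === digit) return false; // same column
    var r = boxRow + Math.floor(i / 3);
    var c = boxCol + (i % 3);
    if (grid[r * 9 + c] === digit) return false;   // same box
  }
  return true;
}

// Depth-first search: fill the first empty cell, recurse, undo on failure.
// Returns a solved copy of the grid, or null if the search finds no solution.
function solveDfs(puzzle) {
  var grid = puzzle.slice(); // work on a copy, leave the input untouched
  function search() {
    var pos = grid.indexOf(0);
    if (pos === -1) return true; // no empty cells left
    for (var digit = 1; digit <= 9; digit++) {
      if (canPlace(grid, pos, digit)) {
        grid[pos] = digit;
        if (search()) return true;
        grid[pos] = 0; // backtrack and try the next digit
      }
    }
    return false; // dead end: no digit fits this cell
  }
  return search() ? grid : null;
}
```

Always picking the first empty cell keeps the sketch short; choosing the cell with the fewest candidates is a common refinement that usually prunes the search much earlier.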

| 19.692308 | 51 | 0.496094 | krc_Cyrl | 0.998723 |

615e893c4e1d640c1422c39d59d69c36366fa063 | 1,153 | markdown | Markdown | _posts/2012-02-22-file-upload.markdown | jackoliver/wallymathieu.github.io | 50ae9b9854f9d9be659c5e3fedc633f6fd848ae0 | [

"MIT"

] | 1 | 2019-01-21T03:46:33.000Z | 2019-01-21T03:46:33.000Z | _posts/2012-02-22-file-upload.markdown | jackoliver/wallymathieu.github.io | 50ae9b9854f9d9be659c5e3fedc633f6fd848ae0 | [

"MIT"

] | 81 | 2019-01-06T21:52:15.000Z | 2022-02-26T12:39:38.000Z | _posts/2012-02-22-file-upload.markdown | wallymathieu/assertfail | 0a7e85a24084e4e767224e6dd14af46d443aae5e | [

"MIT"

] | 1 | 2015-08-11T16:05:56.000Z | 2015-08-11T16:05:56.000Z | ---

layout: post

title: "File upload"

date: 2012-02-22T21:00:00+01:00

tags: javascript

---

The alternatives right now (2012-02-22):<br><br><ol>

<li>Standard html: File select together in a form that is submitted to a page. (firefox, chrome, IE)</li>

<li>Iframe hack: File select together with hidden iframe used to upload file. (firefox, chrome, IE)</li>

<li>Flash (all flash enabled browsers)

</li>

<li>FileReader

(firefox, chrome)</li>

<ol>

<li>Drag and drop together with FileReader and ajax post. </li>

<li>File select together with FileReader and ajax post.</li>

</ol>

</ol>

<div>

When building ordinary web applications, the first alternative is definitely the best. It's simple and works well. If you are building a more JavaScript-intensive application, you are in a different mess. If you are supporting IE 7-9, then alternatives 2 and 3 are what you are looking for. To provide the best experience, you could detect whether the browser supports FileReader (since IE10 might support it) and enable the better experience in that case.</div>

<div>

<br>

</div>

<div>

Note: I've only considered firefox, chrome and IE. </div>

<div style="clear: both;"></div> | 42.703704 | 449 | 0.740676 | eng_Latn | 0.990724 |

615eba68bd106b5a95cbf67e30b920f191640738 | 14,786 | md | Markdown | articles/remote-rendering/how-tos/frontend-apis.md | ZetaPR/azure-docs.es-es | 0e2bf787d1d9ab12065fcb1091a7f13b96c6f8a2 | [

"CC-BY-4.0",

"MIT"

] | 1 | 2021-03-12T23:37:16.000Z | 2021-03-12T23:37:16.000Z | articles/remote-rendering/how-tos/frontend-apis.md | ZetaPR/azure-docs.es-es | 0e2bf787d1d9ab12065fcb1091a7f13b96c6f8a2 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | articles/remote-rendering/how-tos/frontend-apis.md | ZetaPR/azure-docs.es-es | 0e2bf787d1d9ab12065fcb1091a7f13b96c6f8a2 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | ---

title: Azure Frontend APIs for authentication

description: Explains how to use the C# frontend API for authentication

author: florianborn71

ms.author: flborn

ms.date: 02/12/2010

ms.topic: how-to

ms.custom: devx-track-csharp

ms.openlocfilehash: 5f0519b60d3b02c8312e15861441060ca89ab002

ms.sourcegitcommit: 692382974e1ac868a2672b67af2d33e593c91d60

ms.translationtype: HT

ms.contentlocale: es-ES

ms.lasthandoff: 10/22/2021

ms.locfileid: "130234139"

---

# <a name="use-the-azure-frontend-apis-for-authentication"></a>Use the Azure Frontend APIs for authentication

This section describes how to use the API for authentication and session management.

> [!CAUTION]

> The functions described in this chapter issue REST calls to the server internally. As with all REST calls, sending these commands too frequently will cause the server to throttle and eventually return a failure. In this case, the value of the `SessionGeneralContext.HttpResponseCode` member is 429 ("too many requests"). As a rule of thumb, there should be a delay of **5 to 10 seconds between subsequent calls**.

Some functions also return information about when it is safe to retry. For example, `RenderingSessionPropertiesResult.MinimumRetryDelay` specifies how many seconds to wait before attempting another check. When available, it is best to use such a returned value, because it lets you check as often as possible without being throttled.

## <a name="sessionconfiguration"></a>SessionConfiguration

SessionConfiguration is used to set up the authentication information for a ```RemoteRenderingClient``` instance in the SDK.

The important fields are:

```cs

public class SessionConfiguration

{

// Domain that will be used for account authentication for the Azure Remote Rendering service, in the form [region].mixedreality.azure.com.

// [region] should be set to the domain of the Azure Remote Rendering account.

public string AccountDomain;

// Domain that will be used to generate sessions for the Azure Remote Rendering service, in the form [region].mixedreality.azure.com.

// [region] should be selected based on the region closest to the user. For example, westus2.mixedreality.azure.com or westeurope.mixedreality.azure.com.

public string RemoteRenderingDomain;

// Can use one of:

// 1) ID and Key.

// 2) ID and AuthenticationToken.

// 3) ID and AccessToken.

public string AccountId = Guid.Empty.ToString();

public string AccountKey = string.Empty;

public string AuthenticationToken = string.Empty;

public string AccessToken = string.Empty;

}

```

The C++ counterpart looks like this:

```cpp

struct SessionConfiguration

{

std::string AccountDomain{};

std::string RemoteRenderingDomain{};

std::string AccountId{};

std::string AccountKey{};

std::string AuthenticationToken{};

std::string AccessToken{};

};

```

For the _region_ part of the domain, use a [region near you](../reference/regions.md).

The account information can be obtained from the portal, as described in the [Retrieve the account information](create-an-account.md#retrieve-the-account-information) paragraph.

## <a name="azure-frontend"></a>Azure Frontend

The relevant classes are ```RemoteRenderingClient``` and ```RenderingSession```. ```RemoteRenderingClient``` is used for account management and account-level functionality, which includes asset conversion and rendering session creation. ```RenderingSession``` is used for session-level functionality and includes session update, queries, renewal, and decommissioning.

Each opened or created ```RenderingSession``` keeps a reference to the frontend that created it. To shut down cleanly, all sessions must be deallocated before the frontend.

Deallocating a session does not stop the server on Azure; `RenderingSession.StopAsync` must be called explicitly.

Once a session has been created and its state has been marked as ready, it can connect to the remote rendering runtime with `RenderingSession.ConnectAsync`.

### <a name="threading"></a>Threading

All asynchronous calls to RenderingSession and RemoteRenderingClient complete on a background thread, not on the main application thread.

### <a name="conversion-apis"></a>Conversion APIs

For more information about the conversion service, see [the model conversion REST API](conversion/conversion-rest-api.md).

#### <a name="start-asset-conversion"></a>Start asset conversion

```cs

async void StartAssetConversion(RemoteRenderingClient client, string storageContainer, string blobinputpath, string bloboutpath, string modelName, string outputName)

{

var result = await client.StartAssetConversionAsync(

new AssetConversionInputOptions(storageContainer, blobinputpath, "", modelName),

new AssetConversionOutputOptions(storageContainer, bloboutpath, "", outputName)

);

}

```

```cpp

void StartAssetConversion(ApiHandle<RemoteRenderingClient> client, std::string storageContainer, std::string blobinputpath, std::string bloboutpath, std::string modelName, std::string outputName)

{

AssetConversionInputOptions input;

input.BlobContainerInformation.BlobContainerName = blobinputpath;

input.BlobContainerInformation.StorageAccountName = storageContainer;

input.BlobContainerInformation.FolderPath = "";

input.InputAssetPath = modelName;

AssetConversionOutputOptions output;

output.BlobContainerInformation.BlobContainerName = bloboutpath;

output.BlobContainerInformation.StorageAccountName = storageContainer;

output.BlobContainerInformation.FolderPath = "";

output.OutputAssetPath = outputName;

client->StartAssetConversionAsync(input, output, [](Status status, ApiHandle<AssetConversionResult> result) {

if (status == Status::OK)

{

//use result

}

else

{

printf("Failed to start asset conversion!");

}

});

}

```

#### <a name="get-conversion-status"></a>Get conversion status

```cs

async void GetConversionStatus(RemoteRenderingClient client, string assetId)

{

AssetConversionStatusResult status = await client.GetAssetConversionStatusAsync(assetId);

// do something with status (e.g. check current status etc.)

}

```

```cpp

void GetConversionStatus(ApiHandle<RemoteRenderingClient> client, std::string assetId)

{

client->GetAssetConversionStatusAsync(assetId, [](Status status, ApiHandle<AssetConversionStatusResult> result) {

if (status == Status::OK)

{

// do something with result (e.g. check current status etc.)

}

else

{

printf("Failed to get status of asset conversion!");

}

});

}

```

### <a name="rendering-apis"></a>Rendering APIs

See [the session management REST API](session-rest-api.md) for details about session management.

A rendering session can either be created dynamically on the service, or an already existing session ID can be "opened" into a RenderingSession object.

#### <a name="create-rendering-session"></a>Create rendering session

```cs

async void CreateRenderingSession(RemoteRenderingClient client, RenderingSessionVmSize vmSize, int maxLeaseInMinutes)

{

CreateRenderingSessionResult result = await client.CreateNewRenderingSessionAsync(

new RenderingSessionCreationOptions(vmSize, maxLeaseInMinutes / 60, maxLeaseInMinutes % 60));

// if the call was successful, result.Session holds a valid session reference, otherwise check result.Context for error information

}

```

```cpp

void CreateRenderingSession(ApiHandle<RemoteRenderingClient> client, RenderingSessionVmSize vmSize, int maxLeaseInMinutes)

{

RenderingSessionCreationOptions params;

params.MaxLeaseInMinutes = maxLeaseInMinutes;

params.Size = vmSize;

client->CreateNewRenderingSessionAsync(params, [](Status status, ApiHandle<CreateRenderingSessionResult> result) {

if (status == Status::OK && result->GetErrorCode() == Result::Success)

{

ApiHandle<RenderingSession> session = result->GetSession();

// use session

}

else

{

printf("Failed to create session!");

}

});

}

```

#### <a name="open-an-existing-rendering-session"></a>Open an existing rendering session

Opening an existing session is a synchronous call.

```cs

async void CreateRenderingSession(RemoteRenderingClient client, string sessionId)

{

CreateRenderingSessionResult result = await client.OpenRenderingSessionAsync(sessionId);

if (result.ErrorCode == Result.Success)

{

RenderingSession session = result.Session;

// Query session status, etc.

}

}

```

```cpp

void CreateRenderingSession(ApiHandle<RemoteRenderingClient> client, std::string sessionId)

{

client->OpenRenderingSessionAsync(sessionId, [](Status status, ApiHandle<CreateRenderingSessionResult> result) {

if (status == Status::OK && result->GetErrorCode()==Result::Success)

{

ApiHandle<RenderingSession> session = result->GetSession();

// Query session status, etc.

}

});

}

```

#### <a name="get-current-rendering-sessions"></a>Get current rendering sessions

```cs

async void GetCurrentRenderingSessions(RemoteRenderingClient client)

{

RenderingSessionPropertiesArrayResult result = await client.GetCurrentRenderingSessionsAsync();

if (result.ErrorCode == Result.Success)

{

RenderingSessionProperties[] properties = result.SessionProperties;

// Query session status, etc.

}

}

```

```cpp

void GetCurrentRenderingSessions(ApiHandle<RemoteRenderingClient> client)

{

client->GetCurrentRenderingSessionsAsync([](Status status, ApiHandle<RenderingSessionPropertiesArrayResult> result) {

if (status == Status::OK && result->GetErrorCode() == Result::Success)

{

std::vector<RenderingSessionProperties> properties;

result->GetSessionProperties(properties);

}

else

{

printf("Failed to get current rendering sessions!");

}

});

}

```

### <a name="session-apis"></a>Session APIs

#### <a name="get-rendering-session-properties"></a>Get rendering session properties

```cs

async void GetRenderingSessionProperties(RenderingSession session)

{

RenderingSessionPropertiesResult result = await session.GetPropertiesAsync();

if (result.ErrorCode == Result.Success)

{

RenderingSessionProperties properties = result.SessionProperties;

}

else

{

Console.WriteLine("Failed to get properties of session!");

}

}

```

```cpp

void GetRenderingSessionProperties(ApiHandle<RenderingSession> session)

{

session->GetPropertiesAsync([](Status status, ApiHandle<RenderingSessionPropertiesResult> result) {

if (status == Status::OK && result->GetErrorCode() == Result::Success)

{

RenderingSessionProperties properties = result->GetSessionProperties();

}

else

{

printf("Failed to get properties of session!");

}

});

}

```

#### <a name="update-rendering-session"></a>Update rendering session

```cs

async void UpdateRenderingSession(RenderingSession session, int updatedLeaseInMinutes)

{

SessionContextResult result = await session.RenewAsync(

new RenderingSessionUpdateOptions(updatedLeaseInMinutes / 60, updatedLeaseInMinutes % 60));

if (result.ErrorCode == Result.Success)

{

Console.WriteLine("Rendering session renewed succeeded!");

}

else

{

Console.WriteLine("Failed to renew rendering session!");

}

}

```

```cpp

void UpdateRenderingSession(ApiHandle<RenderingSession> session, int updatedLeaseInMinutes)

{

RenderingSessionUpdateOptions params;

params.MaxLeaseInMinutes = updatedLeaseInMinutes;

session->RenewAsync(params, [](Status status, ApiHandle<SessionContextResult> result) {

if (status == Status::OK && result->GetErrorCode() == Result::Success)

{

printf("Rendering session renewed succeeded!");

}

else

{

printf("Failed to renew rendering session!");

}

});

}

```

#### <a name="stop-rendering-session"></a>Stop rendering session

```cs

async void StopRenderingSession(RenderingSession session)

{

SessionContextResult result = await session.StopAsync();

if (result.ErrorCode == Result.Success)

{

Console.WriteLine("Rendering session stopped successfully!");

}

else

{

Console.WriteLine("Failed to stop rendering session!");

}

}

```

```cpp

void StopRenderingSession(ApiHandle<RenderingSession> session)

{

session->StopAsync([](Status status, ApiHandle<SessionContextResult> result) {

if (status == Status::OK && result->GetErrorCode() == Result::Success)

{

printf("Rendering session stopped successfully!");

}

else

{

printf("Failed to stop rendering session!");

}

});

}

```

#### <a name="connect-to-arr-inspector"></a>Connect to ARR inspector

```cs

async void ConnectToArrInspector(RenderingSession session)

{

string htmlPath = await session.ConnectToArrInspectorAsync();

#if WINDOWS_UWP

UnityEngine.WSA.Application.InvokeOnUIThread(async () =>

{

var file = await Windows.Storage.StorageFile.GetFileFromPathAsync(htmlPath);

await Windows.System.Launcher.LaunchFileAsync(file);

}, true);

#else

InvokeOnAppThreadAsync(() =>

{

System.Diagnostics.Process.Start("file:///" + htmlPath);

});

#endif

}

```

```cpp

void ConnectToArrInspector(ApiHandle<RenderingSession> session)

{

session->ConnectToArrInspectorAsync([](Status status, std::string result) {

if (status == Status::OK)

{

// Launch the html file with default browser

std::string htmlPath = "file:///" + result;

ShellExecuteA(NULL, "open", htmlPath.c_str(), NULL, NULL, SW_SHOWDEFAULT);

}

else

{

printf("Failed to connect to ARR inspector!");

}

});

}

```

## <a name="next-steps"></a>Next steps

* [Create an account](create-an-account.md)

* [Example PowerShell scripts](../samples/powershell-example-scripts.md)

| 36.781095 | 468 | 0.718382 | yue_Hant | 0.234082 |

615f52b38332f5d0ba6735809345505b049f3cc0 | 480 | md | Markdown | JavaScript/codewars/032_the_coupon_code.md | Gyubin/TIL | 9d855ba5376a0d3d58891bcfcc532e2c43496210 | [

"MIT"

] | 133 | 2016-01-05T14:40:48.000Z | 2021-11-16T16:23:54.000Z | JavaScript/codewars/032_the_coupon_code.md | Gyubin/TIL | 9d855ba5376a0d3d58891bcfcc532e2c43496210 | [

"MIT"

] | 1 | 2016-01-22T11:53:16.000Z | 2016-01-22T12:15:07.000Z | JavaScript/codewars/032_the_coupon_code.md | Gyubin/TIL | 9d855ba5376a0d3d58891bcfcc532e2c43496210 | [

"MIT"

] | 70 | 2016-07-03T02:01:22.000Z | 2021-12-20T03:47:58.000Z | # #32 The Coupon Code

The task is to check whether a coupon is valid: confirm that the entered code matches, and that the current date is not after the expiration date.

## 1. Code

```js

function checkCoupon(enteredCode, correctCode, currentDate, expirationDate){

return enteredCode === correctCode && Date.parse(expirationDate) >= Date.parse(currentDate)

}

```

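- The coupon code is checked for equality simply with `===`.

- For the dates, I looked it up and found the `Date.parse(dateString)` function. Given a date-formatted string, it returns a number (milliseconds since the Unix epoch) rather than a Date object, so the results can be compared with inequality operators.

- Looking at other solutions, you can also just create objects with `new Date(dateString)` and compare those.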
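The two approaches in the notes above can be compared side by side. This is a minimal sketch: the function name `checkCouponWithDates` is made up for illustration, and ISO-format date strings are assumed because parsing of other formats is implementation-dependent.

```js
// Date.parse returns a number (milliseconds since the Unix epoch), not a Date object.
const expirationMs = Date.parse("2015-07-09T00:00:00Z");
console.log(typeof expirationMs);

// Hypothetical variant that builds Date objects instead.
// Relational operators such as <= convert Date objects to their timestamps.
function checkCouponWithDates(enteredCode, correctCode, currentDate, expirationDate) {
  return enteredCode === correctCode &&
    new Date(currentDate) <= new Date(expirationDate);
}
```

Note that `===` on two Date objects compares object identity, not the date, so equality checks should go through `getTime()` or `Date.parse` instead.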


# Appendix C: Multi-peril model support <a id="multiperil"></a>

ktools now supports multi-peril models through the introduction of the coverage_id in the data structures.

Ground up losses apply at the “Item” level in the Kernel which corresponds to “interest coverage” in business terms, which is the element of financial loss that can be associated with a particular asset. In ktools, item_id represents the element of financial loss and coverage_id represents the asset with its associated total insured value. If there is more than one item per coverage (as defined in the items data) then each item represents an element of financial loss from a particular peril contributing to the total loss for the asset. For each item, the identification of the peril is held in the areaperil_id, which is a unique key representing a combination of the location (area) and peril.

#### Multi-peril damage calculation

Ground up losses are calculated by multiplying the damage ratio for an item by the total insured value of its associated coverage (defined in the coverages data). The questions are then: how are these losses combined across items, and how are they correlated?

There are a few ways in which losses can be combined and the first example in ktools uses a simple rule, which is to sum the losses for each coverage and cap the overall loss to the total insured value. This is what you get when you use the -c parameter in gulcalc to output losses by 'coverage'.

In v3.1.0 the method of combining losses became function-driven using the gulcalc command line parameter -a, as a few standard approaches have emerged. These are:

| Allocation option | Description |

|:------------------|:------------------------------------------------------------------------------------------------------|

| 0 | Do nothing (suitable for single sub-peril models with one item per coverage) |

| 1 | Sum damage ratios and cap to 1. Back-allocate in proportion to contributing subperil loss |

| 2 | Multiplicative method for combining damage. Back-allocate in proportion to contributing subperil loss |

| 3 | Total damage = maximum subperil damage. Back-allocate all to the maximum contributing subperil loss |

Allocation option 1 has been implemented in v3.1.0.
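As an illustration of allocation option 1 as described in the table, the sketch below sums the damage ratios for one coverage, caps the total at 1, and back-allocates the capped loss in proportion to each item's contribution. It is not the ktools implementation; the function name `allocateRule1` and its inputs are assumptions made for this example.

```js
// Hypothetical sketch of allocation option 1 for a single coverage.
// damageRatios: one damage ratio per item (sub-peril) on the coverage.
// tiv: total insured value of the coverage.
function allocateRule1(damageRatios, tiv) {
  // Sum the damage ratios across items and cap the total at 1.
  const totalRatio = damageRatios.reduce((sum, d) => sum + d, 0);
  const cappedRatio = Math.min(totalRatio, 1);
  const coverageLoss = cappedRatio * tiv;

  // Back-allocate the capped loss in proportion to each item's uncapped loss.
  const itemLosses = damageRatios.map((d) =>
    totalRatio > 0 ? (coverageLoss * d) / totalRatio : 0
  );
  return { coverageLoss, itemLosses };
}
```

For example, damage ratios of 0.5 and 0.75 on a coverage with a TIV of 1000 sum to 1.25, so the coverage loss is capped at 1000 and split 400 and 600 between the two items.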

Correlation of item damage is generic in ktools, as damage can either be 100% correlated or independent (see [Appendix A Random Numbers](RandomNumbers.md)). This is no different in the multi-peril case when items represent different elements of financial loss to the same asset, rather than different assets. More sophisticated methods of multi-peril correlation have been implemented for particular models, but as yet no standard approach has been implemented in ktools.

Note that ground up losses by item can be passed into the financial module unallocated (allocation method 0) using the gulcalc option -i, or allocated using the gulcalc option -a1 -i. If the item ground up losses are passed through unallocated then the limit of total insured value must be applied as part of the financial module calculations, to prevent the ground up loss exceeding the coverage TIV.

[Return to top](#multiperil)

[Back to Contents](Contents.md)

# Broker Calculator

## Original Notes

Planning to create a small broker calculator that will allow you to see the most relevant broker for you in terms of cost.

---------------------------------------

Current Balance

How often do you trade? - x/<period>

Do you trade outside the daily fixed time?

Trading

ISA

SIPP

Scale of self management

nice looking website

App

----------------------------------------

Name

Notes on the service

Self management ranking: 10 - mainly self managed, 0 - diy

Platform {

type: web, app

easeofuse:

design:

link:

note:

}

Fee {

type: percentage

amount: 0.9

min: 0

max: 123123

trigger: annual, funds, etfs, regular, entry, exit, monthly

notes:

waived: -1 (one free a month), 3 (waived after 3)

}

----------------------------------------
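One possible reading of the `Fee` notes above, sketched in JS. Everything here is an assumption for illustration: the object shape, the function name `feeCost`, and in particular the interpretation that a `percentage` amount of 0.9 means 0.9% of the balance, clamped between `min` and `max`.

```js
// Hypothetical fee record following the field names in the notes.
const exampleFee = { type: "percentage", amount: 0.9, min: 0, max: 123123, trigger: "annual" };

// Cost of a single fee for a given balance.
// Percentage fees are assumed to be a percent of the balance; any other type is a flat amount.
function feeCost(fee, balance) {
  const raw = fee.type === "percentage" ? (balance * fee.amount) / 100 : fee.amount;
  // Clamp to the fee's minimum and maximum charge.
  return Math.min(Math.max(raw, fee.min), fee.max);
}
```

Under these assumptions, a balance of 10000 with the example fee costs about 90 per year.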

## The plan

Hoping to write it all in JS so it can run in people's browsers, with the ability to be put into an iframe so people can embed it on their website. Hopefully in that case they can add the data in return for embedding it.

# Conic Gradient Polyfill

Please visit http://leaverou.github.io/conic-gradient/ for usage instructions and demos.

# Post

## [Website collection](website.md)

## [Tools](tools.md)

## [Open-source libraries](open\_source\_lib.md)

## [Collections](broken-reference)

## [Reading list](shu-dan.md)

# Flame of the Last Candle 🕯️

Just another blog from a guy.

## Workflow

Posts are written and pushed from [Forestry.io](https://forestry.io/).

New posts are sent from Forestry to the `staging` branch.

When it's time to publish one or more posts to the web, `staging` is merged to `main`.

Finally, Netlify sees the `main` branch update, and then builds and deploys the site to [https://fotlc.netlify.app/](https://fotlc.netlify.app/).

### CL commands

```

npx eleventy

```

Or build and host locally for local development

```

npx eleventy --serve

```

Or build automatically when a template changes:

```

npx eleventy --watch

```

Or in debug mode:

```

DEBUG=* npx eleventy

```

# Groups Sync

<p align="center">

<b>:loudspeaker: This page documents Kowl Business exclusive features.</b>

</p>

If you want to bind Roles to a set of users (e.g. GitHub teams or Google Groups) you need to grant Kowl a few additional permissions, so that it can resolve the memberships of these user sets. This page guides you through the required steps for each supported provider.

## Google

### Introduction

Google Groups is a Google service which belongs to GSuite. Businesses may use it to organize their employees in groups so that they can manage permissions on a group level. A group can be as simple as this:

```

Group: dev-team-checkout@mycompany.com

Members:

- employee.ab@mycompany.com

- employee.cd@mycompany.com

- employee.ef@mycompany.com

```

But it could also be a nested group like this:

```

Group: software-engineers@mycompany.com

Members:

- bi-reports@other-company.com (External Group, managed within a different organization)

- dev-team-checkout@mycompany.com (Group)

- dev-team-landing@mycompany.com (Group)

- security-officer@mycompany.com (User)

```

Kowl supports either case (externally managed groups being a bit tricky).
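To make the nested case concrete, here is a minimal sketch of how nested group membership could be flattened into a set of users. It is not Kowl's implementation: the `directory` map, the `resolveMembers` function, and the `dev-team-landing` member are hypothetical, and the externally managed group is left out because resolving it would require access to the other organization.

```js
// Hypothetical in-memory directory: group address -> direct members.
const directory = new Map([
  ["software-engineers@mycompany.com", [
    "dev-team-checkout@mycompany.com",
    "dev-team-landing@mycompany.com",
    "security-officer@mycompany.com",
  ]],
  ["dev-team-checkout@mycompany.com", [
    "employee.ab@mycompany.com",
    "employee.cd@mycompany.com",
    "employee.ef@mycompany.com",
  ]],
  // Made-up member for illustration only.
  ["dev-team-landing@mycompany.com", ["employee.gh@mycompany.com"]],
]);

// Recursively collect all users of a group, following nested groups.
// The `seen` set guards against groups that contain each other.
function resolveMembers(group, dir, seen = new Set()) {
  const users = new Set();
  if (seen.has(group)) return users;
  seen.add(group);
  for (const member of dir.get(group) ?? []) {
    if (dir.has(member)) {
      // The member is itself a group: merge its resolved users.
      for (const user of resolveMembers(member, dir, seen)) users.add(user);
    } else {
      users.add(member);
    }
  }
  return users;
}
```

Calling `resolveMembers("software-engineers@mycompany.com", directory)` on this sample data yields the three checkout employees, the landing employee, and the security officer.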

### Configuration