text stringlengths 0 30.5k | title stringclasses 1

value | embeddings listlengths 768 768 |

|---|---|---|

How do you get around this Ajax cross site scripting problem on FireFox 3?

If you're using jQuery it has a callback function to overcome this:

<http://docs.jquery.com/Ajax/jQuery.ajax#options>

> As of jQuery 1.2, you can load JSON

> data located on another domain if you

> specify a JSONP callback, which can be

> d... | [

0.021621422842144966,

-0.174272358417511,

0.47391605377197266,

-0.13947102427482605,

-0.41219863295555115,

-0.01540327351540327,

0.16531774401664734,

-0.0525263249874115,

-0.1500178426504135,

-0.7278923988342285,

-0.19818346202373505,

0.5116543173789978,

-0.3874285817146301,

-0.22632022202... | |

to a server-side script which does the cross-domain call for you, then passes the data back to your script | [

0.03881277143955231,

-0.15266811847686768,

0.14945533871650696,

0.31203964352607727,

0.03619268164038658,

-0.06313739717006683,

0.1152457445859909,

0.29355311393737793,

0.12529851496219635,

-0.5070646405220032,

-0.37820059061050415,

0.2437216341495514,

-0.004197878297418356,

0.315307676792... | |

I have a web application running on a Gentoo-based LAMP stack. My customers buy the software as a service and I host everything. However, there is some demand for on-site deployment inside the clients' own networks.

Currently, because I host the system, there is no built-in license management in the app. I bill based ... | [

1.0927587747573853,

0.4578256905078888,

0.36065319180488586,

-0.19078615307807922,

0.1398591548204422,

0.15030361711978912,

-0.06349805742502213,

-0.12732382118701935,

-0.03251729533076286,

-0.5323461294174194,

0.1671091914176941,

0.5248440504074097,

0.009669527411460876,

0.235620066523551... | |

for a license managment framework and/or typical approaches that you have implemented yourselves or have seen to work well elsewhere. My requirements are:

* "safe enough" rather than "military grade"

* very much non-obtrusive

* prevent the owner of a license from running the system in multiple plants when he has only ... | [

0.7596715688705444,

0.3659239411354065,

0.11030340194702148,

-0.045880354940891266,

0.5076342225074768,

-0.776457667350769,

0.4439912438392639,

-0.3338374197483063,

0.03922444209456444,

-0.18818967044353485,

-0.39645469188690186,

0.569344162940979,

0.022828899323940277,

-0.1605420857667923... | |

be a temporary connection during installation

For some of the on-site scenarios, there would be a requirement for some particular OS, typically some version of Windows Server, but various Linux distros are getting more popular as well, especially in the public sector.

From a user's point of view, I am quite satisfied... | [

0.35035911202430725,

0.16466544568538666,

0.2496710866689682,

0.2159610241651535,

0.3561687469482422,

-0.4738944470882416,

0.14281761646270752,

0.0970311313867569,

-0.1246948093175888,

-0.556166410446167,

-0.12235599011182785,

0.5003767013549805,

-0.32812392711639404,

-0.01199687086045742,... | |

you are not spending fixing bugs or adding features. By writing a license management system, you are spending resources in order to reduce the value of your product!

Copyright your code, have a lawyer and be ready to prosecute anyone who violates your copyright, and call it a day. | [

0.681920051574707,

0.32609283924102783,

-0.08851371705532074,

0.31136736273765564,

0.11263326555490494,

-0.6949906945228577,

0.36241698265075684,

0.02893608808517456,

-0.02956574223935604,

-0.3802703320980072,

-0.17372815310955048,

0.6201102137565613,

-0.201130211353302,

0.1869711875915527... | |

i have a list of products that are being displayed in particular order. store admin can reassign the display order, by moving the "hot" items to the top of the list. what's the best way of implementing the admin functionality **UI** [asp.net C#]? Products table has a [displayOrder(int)] filed which determines the displ... | [

0.1533677577972412,

0.03223363682627678,

0.6606930494308472,

0.03267365321516991,

-0.11610104888677597,

-0.04616048187017441,

0.217599555850029,

-0.3230605125427246,

-0.2386721521615982,

-0.8185759782791138,

-0.08072309195995331,

0.44357722997665405,

-0.12438502907752991,

-0.02186926081776... | |

can find more information here <http://www.asp.net/AJAX/AjaxControlToolkit/Samples/ReorderList/ReorderList.aspx>

Mauro

[http://www.brantas.co.uk](http://www.brantas.co.uk/) | [

-0.41106536984443665,

-0.008775130845606327,

0.31362104415893555,

-0.09304880350828171,

0.24444682896137238,

0.19990788400173187,

0.3072846531867981,

0.1511247605085373,

-0.4778484106063843,

-0.2167052924633026,

-0.39298954606056213,

0.39298516511917114,

0.26862481236457825,

-0.45239195227... | |

Do you use a formal event to get people talking in your IT department? Like a **monthly meetup** in a social place, a **internal wiki/chat** space or just a regular "information market" with some **presentations about technology or projects** made by your staff for your staff? Do you invite Sales people to participate ... | [

0.6739215850830078,

-0.054959628731012344,

-0.1734725534915924,

0.5945464968681335,

-0.11656228452920914,

-0.49298664927482605,

-0.07898073643445969,

0.021636473014950752,

-0.53350830078125,

-0.10303089767694473,

-0.2682570517063141,

0.5005075335502625,

0.2331557720899582,

0.06202420964837... | |

progress of knowledge transfer itself. How do you spot critical one-person spots of failure in your projects? There are several methods to avoid it, like staff swapping or the "fifo" attempt on bug fixing.

*Note:* Ok, this is a very very noisy question and I hope to fix it after a few comments. Sorry for the mixup.

*... | [

0.846062183380127,

0.08543183654546738,

0.2667825222015381,

0.30197209119796753,

0.15658003091812134,

-0.38825705647468567,

0.22332395613193512,

0.14928656816482544,

-0.2361525446176529,

-0.6364558935165405,

0.007029550615698099,

0.38276350498199463,

0.3690010905265808,

0.07139015197753906... | |

the developing staff. It's like people don't like our wiki, our document management system or the meeting. Maybe it's because it's all free-to-use and not forced by the management. But I don't like to force people into it - but is it the right way?

One example: Our wiki holds pages about projects, telling who worked o... | [

0.5993214845657349,

0.22102093696594238,

0.1179484948515892,

0.29497238993644714,

0.20022916793823242,

-0.09936163574457169,

-0.16490183770656586,

0.08728602528572083,

-0.0899319127202034,

-0.4252080023288727,

-0.05691505968570709,

0.5531669855117798,

0.36855921149253845,

0.345398724079132... | |

needed? All the time I use to bring others up to speed, what do I gain from it?

The best way to go about this is to be an example. Share your knowledge; in a wiki, blog about it, talk about it, make it easily accessible, and talk about the benefits you have from that: less people come to interupt and ask you stuff, as... | [

0.6466124653816223,

-0.03321298211812973,

0.15659373998641968,

0.3368074297904968,

0.014282784424722195,

-0.18156524002552032,

0.2770836353302002,

0.040657490491867065,

-0.525036633014679,

-0.5406888723373413,

0.29968005418777466,

0.45955008268356323,

0.28594258427619934,

-0.00844288244843... | |

on paying me 1/3 of my salary for another year after I left (on my own initiative), just to keep my knowledge-base up and running. Did he have to? No, it was his property anyway. But it motivated people still working for him to share their knowledge. | [

0.6239961981773376,

0.12173149734735489,

-0.06319288909435272,

0.272009015083313,

-0.1268380731344223,

-0.012801851145923138,

-0.06745603680610657,

0.13319623470306396,

-0.1536170095205307,

0.2163357138633728,

0.09570438414812088,

0.42255109548568726,

0.04562817141413689,

0.125233650207519... | |

I've encountered multiple third party .Net component-vendors that use a licensing scheme. On an evaluation copy, the components show up with a nag-screen or watermark or some such indicator. On a licensed machine, a **Licenses.licx** is created - with what appears to be *just* the assembly full name/identifiers. This f... | [

0.7895975112915039,

0.2090258151292801,

0.2911500632762909,

0.14505910873413086,

0.045027054846286774,

-0.3976300358772278,

-0.06295517086982727,

-0.08809534460306168,

-0.13374663889408112,

-0.5519757866859436,

-0.16442644596099854,

0.5304147005081177,

-0.0431610643863678,

0.28890454769134... | |

everything about .Net licensing is explained [here](http://www.developer.com/net/csharp/article.php/3074001). No need to rewrite, I think.

It is better to exclude license files from project in source control, if you can. Otherwise, editing visual components may be pain in the ass. Also, storing license files in source... | [

0.8077980279922485,

0.2190796583890915,

0.10644149035215378,

0.19061055779457092,

-0.010783597826957703,

-0.7852361798286438,

0.20964542031288147,

0.2349938303232193,

-0.21897044777870178,

-0.5892924666404724,

-0.09421376138925552,

0.6895114779472351,

-0.15521445870399475,

0.17201843857765... | |

The [Sun Documentation for DataInput.skipBytes](http://java.sun.com/j2se/1.4.2/docs/api/java/io/DataInput.html#skipBytes(int)) states that it "makes an attempt to skip over n bytes of data from the input stream, discarding the skipped bytes. However, it may skip over some smaller number of bytes, possibly zero. This ma... | [

0.11041662096977234,

-0.1086118146777153,

0.16250115633010864,

-0.009011432528495789,

0.0019376057898625731,

-0.17020590603351593,

0.0762401595711708,

-0.15121842920780182,

-0.6486946940422058,

-0.44898858666419983,

-0.037028536200523376,

0.021503441035747528,

-0.3556540012359619,

0.057501... | |

and throw an `EOFException` if this causes me to go to the end of the file, should I use `readFully()` and ignore the resulting byte array? Or is there a better way?

1) There might not be that much data available to read (the other end of the pipe might not have sent that much data yet), and the implementing class migh... | [

0.3435014486312866,

-0.13594430685043335,

-0.018994085490703583,

-0.10061787068843842,

-0.06485065072774887,

-0.17274342477321625,

0.45638179779052734,

-0.13964885473251343,

0.13870641589164734,

-0.5099239349365234,

-0.021134132519364357,

0.41165396571159363,

-0.40118131041526794,

0.261831... | |

option is simply that the file gets closed part-way through the read.

2) Either readFully() (which will always wait for enough input or else fail) or call skipBytes() in a loop. I think the former is probably better, unless the array is truly vast. | [

0.22638900578022003,

-0.1411847025156021,

0.001577408635057509,

-0.06409444659948349,

0.008418404497206211,

-0.16610293090343475,

0.2171139419078827,

0.03696375712752342,

-0.47096988558769226,

-0.25914835929870605,

-0.314951628446579,

0.30118173360824585,

-0.269288033246994,

0.007254818920... | |

How do you get a Media Type (MIME type) from a file using Java? So far I've tried JMimeMagic & Mime-Util. The first gave me memory exceptions, the second doesn't close its streams properly.

How would you probe the file to determine its actual type (not merely based on the extension)?

In Java 7 you can now just use [`F... | [

0.238043412566185,

-0.25303417444229126,

0.38215577602386475,

-0.05848059803247452,

-0.0429513119161129,

0.010196253657341003,

0.35705795884132385,

-0.4980495274066925,

-0.12123686075210571,

-0.6092262864112854,

0.07947863638401031,

0.6429288983345032,

-0.4107302725315094,

0.02041889913380... | |

what is the best method for inter process communication in a multithreaded java app.

It should be performant (so no JMS please) easy to implement and reliable,so that

objects & data can be bound to one thread only?

Any ideas welcome!

Assuming the scenario 1 JVM, multiple threads then indeed java.util.concurrent is th... | [

0.014732182957231998,

-0.21239690482616425,

0.34941282868385315,

0.39465147256851196,

-0.014206798747181892,

-0.1616741418838501,

0.015473887324333191,

0.25714999437332153,

-0.647054135799408,

-0.5932218432426453,

0.15370365977287292,

0.16634295880794525,

-0.405705064535141,

-0.09826997667... | |

In [PostgreSQL](http://en.wikipedia.org/wiki/PostgreSQL), I can do something like this:

```

ALTER SEQUENCE serial RESTART WITH 0;

```

Is there an Oracle equivalent?

Here is a good procedure for resetting any sequence to 0 from Oracle guru [Tom Kyte](http://asktom.oracle.com). Great discussion on the pros and cons in... | [

-0.016103653237223625,

-0.016998931765556335,

0.34263867139816284,

0.03181935101747513,

-0.03393782302737236,

0.1832602471113205,

0.21229852735996246,

-0.15585461258888245,

-0.2643500864505768,

-0.39066171646118164,

-0.06134066730737686,

0.6432481408119202,

-0.1936027556657791,

0.140614062... | |

' minvalue 0';

execute immediate

'select ' || p_seq_name || '.nextval from dual' INTO l_val;

execute immediate

'alter sequence ' || p_seq_name || ' increment by 1 minvalue 0';

end;

/

```

From this page: [Dynamic SQL to | [

-0.3591887056827545,

-0.45431774854660034,

0.7294102907180786,

-0.07296214252710342,

0.20933370292186737,

0.24700716137886047,

0.2644725739955902,

-0.12919777631759644,

-0.07502757012844086,

-0.4752998948097229,

-0.5274133086204529,

0.7873995900154114,

-0.24295136332511902,

0.0789569243788... | |

reset sequence value](http://asktom.oracle.com/pls/asktom/f?p=100:11:0::::P11_QUESTION_ID:951269671592)

Another good discussion is also here: [How to reset sequences?](http://asktom.oracle.com/pls/asktom/f?p=100:11:0::::P11_QUESTION_ID:1119633817597) | [

-0.041874635964632034,

0.07457143813371658,

-0.0791669562458992,

0.11383446305990219,

0.3591306507587433,

0.5552757382392883,

0.15706796944141388,

-0.362804651260376,

-0.4439021050930023,

-0.46595847606658936,

-0.029683401808142662,

0.6121681928634644,

-0.3122832775115967,

-0.0062160016968... | |

For me **usable** means that:

* it's being used in real-wold

* it has tools support. (at least some simple editor)

* it has human readable syntax (no angle brackets please)

Also I want it to be as close to XML as possible, i.e. there must be support for attributes as well as for properties. So, no [YAML](http://en.wi... | [

0.1257568895816803,

0.12185357511043549,

0.22118879854679108,

-0.043956458568573,

-0.4676622152328491,

-0.007893634028732777,

0.3900962769985199,

0.027099961414933205,

0.0677303895354271,

-0.7602301239967346,

-0.1341586709022522,

0.6905339360237122,

-0.2817682921886444,

-0.3084211051464081... | |

much more too (like references).

I can't think of anything XML can do that YAML can't, except to validate a document with a DTD, which in my experience has never been worth the overhead. But YAML is so much faster and easier to type and read than XML.

As for attributes or properties, if you think about it, they don't... | [

0.46787095069885254,

0.18678033351898193,

0.11396808922290802,

0.2826133072376251,

-0.4205262362957001,

-0.15939250588417053,

-0.10319200158119202,

0.1338910609483719,

-0.14742296934127808,

-0.5285822153091431,

-0.10707850009202957,

0.44840672612190247,

-0.0200695488601923,

0.0670107379555... | |

XML -->

<Director name="Spielberg">

<Movies>

<Movie title="Jaws" year="1975"/>

<Movie title="E.T." year="1982"/>

</Movies>

</Director>

# YAML

Director:

name: Spielberg

Movies:

- Movie: {title: E.T., year: 1975}

- Movie: {title: Jaws, year: 1982}

```

For me, the luxury of ... | [

-0.15041793882846832,

0.26421919465065,

0.45171770453453064,

0.26993611454963684,

0.0037950046826153994,

-0.21695691347122192,

-0.5017073154449463,

-0.01187607366591692,

-0.3309962749481201,

-0.1667591780424118,

-0.3605886697769165,

0.3361571133136749,

-0.009019066579639912,

0.285212993621... | |

as that always seemed to me like a gray area of XML that needlessly introduced two sets of syntax (both when writing and traversing) for essentially the same concept. YAML does away with that confusion altogether. | [

0.15682567656040192,

0.1639486402273178,

0.30601412057876587,

0.06259463727474213,

-0.23135361075401306,

-0.1011236384510994,

0.11390706896781921,

0.3408527076244354,

-0.14186280965805054,

-0.2507293224334717,

-0.09067069739103317,

0.22471226751804352,

0.10642702877521515,

0.31336137652397... | |

I have a list of structs and I want to change one element. For example :

```

MyList.Add(new MyStruct("john");

MyList.Add(new MyStruct("peter");

```

Now I want to change one element:

```

MyList[1].Name = "bob"

```

However, whenever I try and do this I get the following error:

> Cannot modify the return value of

>... | [

0.13026271760463715,

0.10467198491096497,

0.2673102021217346,

-0.3206464946269989,

0.09646422415971756,

0.057523757219314575,

0.4627898633480072,

-0.17106910049915314,

-0.36118564009666443,

-0.7307030558586121,

-0.20565684139728546,

0.48725631833076477,

-0.5846512317657471,

0.2492951899766... | |

classes and not structs?

```

MyList[1] = new MyStruct("bob");

```

structs in C# should almost always be designed to be immutable (that is, have no way to change their internal state once they have been created).

In your case, what you want to do is to replace the entire struct in specified array index, not to try to... | [

0.21285587549209595,

0.09094777703285217,

-0.08876535296440125,

0.040108438581228256,

-0.05419256165623665,

-0.18139906227588654,

0.26713359355926514,

0.16134746372699738,

-0.011275571770966053,

-0.42893144488334656,

-0.43860745429992676,

0.45483309030532837,

-0.4305802285671234,

0.0802299... | |

I need a real DBA's opinion. Postgres 8.3 takes 200 ms to execute this query on my Macbook Pro while Java and Python perform the same calculation in under 20 ms (350,000 rows):

```

SELECT count(id), avg(a), avg(b), avg(c), avg(d) FROM tuples;

```

Is this normal behaviour when using a SQL database?

The schema (the t... | [

0.04185659810900688,

0.32732143998146057,

0.4069412350654602,

-0.18980772793293,

-0.2161971628665924,

0.2990334928035736,

0.6692324280738831,

-0.4800330400466919,

-0.06810228526592255,

-0.5057233572006226,

-0.026952343061566353,

0.4037224352359772,

-0.3876286745071411,

0.005051480140537023... | |

ms

java 1.5 ~ 10 ms

python 2.5 numpy ~ 18 ms

python 2.5 ~ 370 ms

```

Even sqlite3 is competitive with Postgres despite it assumping all columns are strings (for contrast: even using just switching to numeric columns instead of integers in Postgres results in 10x slowdown)

Tunings i've t... | [

-0.3002191483974457,

-0.008475027047097683,

0.6161803007125854,

-0.2006819248199463,

-0.2858225703239441,

0.18432791531085968,

0.21383044123649597,

-0.23835274577140808,

-0.18847166001796722,

-0.5555290579795837,

0.07888022065162659,

0.342387318611145,

-0.14736558496952057,

0.0057705920189... | |

2MB

disabled connection and statement logging

used a stored procedure via CREATE FUNCTION ... LANGUAGE SQL

```

So my question is, is my experience here normal, and this is what I can expect when using a SQL database? I can understand that ACID must come with costs, but this is kind of crazy in my opinion. I'm not ask... | [

0.13498665392398834,

0.10679199546575546,

0.0351773239672184,

-0.08584266155958176,

-0.16027085483074188,

-0.3219543695449829,

0.3130743205547333,

-0.07944952696561813,

-0.378580778837204,

-0.27057015895843506,

0.18822528421878815,

0.6614421010017395,

-0.682136595249176,

-0.248818010091781... | |

and Pig + Hadoop but not super excited about maintaining yet another server application and not sure if they would even help.

---

No the Python code and Java code do all the work in house so to speak. I just generate 4 arrays with 350,000 random values each, then take the average. I don't include the generation in th... | [

0.4064224362373352,

-0.1961778849363327,

0.08197986334562302,

-0.0436117984354496,

-0.2830897271633148,

0.1338837742805481,

0.030787533149123192,

-0.09329959005117416,

-0.2327757477760315,

-0.6494563817977905,

0.5973051190376282,

0.4503316879272461,

-0.29077741503715515,

-0.273953884840011... | |

more behind the scenes, but most of that work doesn't matter to me since this is read only data.

The Postgres query doesn't change timing on subsequent runs.

I've rerun the Python tests to include spooling it off the disk. The timing slows down considerably to nearly 4 secs. But I'm guessing that Python's file handli... | [

0.3430030643939972,

-0.22227618098258972,

0.15719105303287506,

0.1561979055404663,

-0.30147457122802734,

-0.4417446255683899,

0.5101029872894287,

0.3782944679260254,

-0.18879614770412445,

-0.5363848805427551,

-0.0682566687464714,

0.7909277677536011,

-0.07589370757341385,

-0.209995806217193... | |

than it looks like (maintaining data consistency for a start!)

If the values don't have to be 100% spot on, or if the table is updated rarely, but you are running this calculation often, you might want to look into Materialized Views to speed it up.

(Note, I have not used materialized views in Postgres, they look at ... | [

0.020071113482117653,

-0.7017061710357666,

0.7736949920654297,

0.30607619881629944,

0.09458406269550323,

-0.09255467355251312,

0.2625921368598938,

0.0746021643280983,

-0.2583508789539337,

-0.9811763763427734,

0.0262761227786541,

0.7852346897125244,

0.08062085509300232,

-0.00124605419114232... | |

on my oracle server, the same table structure with about 500k rows and no indexes, takes about 1 - 1.5 seconds, which is almost all just oracle sucking the data off disk.

The real question is, is 200ms fast enough?

-------------- More --------------------

I was interested in solving this using materialized views, si... | [

-0.13182154297828674,

-0.12966276705265045,

0.8771015405654907,

-0.08914152532815933,

-0.1922987848520279,

-0.05771319940686226,

0.25703853368759155,

-0.1478111445903778,

-0.21541789174079895,

-0.9184119701385498,

-0.20231148600578308,

0.8800044059753418,

0.1646624058485031,

-0.06115176528... | |

00:00:00.00

```

Once it refreshes, its MUCH faster than doing the raw query

```

SQL> select count(*),avg(a),avg(b),avg(c),avg(d) from so_x;

COUNT(*) AVG(A) AVG(B) AVG(C) AVG(D)

---------- ---------- ---------- ---------- ----------

1899459 7495.38839 22.2905454 5.00276131 2.13432836

Elapsed: 0... | [

-0.23922891914844513,

0.04981774091720581,

0.8315673470497131,

-0.3362584412097931,

0.20666177570819855,

0.041889362037181854,

0.36245641112327576,

-0.6047125458717346,

-0.2180301398038864,

-0.744601309299469,

-0.44684624671936035,

0.6083393096923828,

0.00952175073325634,

0.049207348376512... | |

view the MV.

```

SQL> insert into so_x values (1,2,3,4,5);

1 row created.

Elapsed: 00:00:00.00

SQL> commit;

Commit complete.

Elapsed: 00:00:00.00

SQL> select * from mv_so_x;

COUNT(*) AVG(A) AVG(B) AVG(C) AVG(D)

---------- ---------- ---------- ---------- ----------

1899459 7495.38839 22.29054... | [

0.06119643524289131,

-0.10619861632585526,

1.172992467880249,

-0.23475614190101624,

0.005743001122027636,

0.04016413167119026,

0.4789291322231293,

-0.28407472372055054,

-0.4492049515247345,

-0.5559489130973816,

-0.336100310087204,

1.0809893608093262,

-0.2755318582057953,

0.0503699742257595... | |

---------- ---------- ----------

1899460 7495.35823 22.2905352 5.00276078 2.17647059

Elapsed: 00:00:00.00

SQL>

```

This isn't ideal. for a start, its not realtime, inserts/updates will not be immediately visible. Also, you've got a query running to update the MV whether you need it or not (this can be tune to wh... | [

-0.011674879118800163,

-0.09438345581293106,

0.9388422966003418,

-0.07992826402187347,

0.0941300317645073,

-0.326252818107605,

0.4463278353214264,

-0.2167081981897354,

-0.32688653469085693,

-0.5674720406532288,

-0.04512663185596466,

0.816243052482605,

-0.22123949229717255,

0.35392242670059... | |

Typically when writing new code you discover that you are missing a #include because the file doesn't compile. Simple enough, you add the required #include. But later you refactor the code somehow and now a couple of #include directives are no longer needed. How do I discover which ones are no longer needed?

Of cours... | [

0.7220247387886047,

-0.22475750744342804,

0.020283007994294167,

0.2550135850906372,

-0.07613256573677063,

-0.21488022804260254,

0.17575322091579437,

-0.1473148614168167,

-0.5033631920814514,

-0.7217898368835449,

-0.07962782680988312,

0.8003773093223572,

-0.22383594512939453,

-0.24896693229... | |

do that.

Unusually there isn't a free OS version of the tool available.

You can remove #includes by passing by reference instead of passing by value and forward declaring. This is because the compiler doesn't need to know the size of the object at compile time. This will require a large amount of manual work on your ... | [

0.22942106425762177,

0.2559811770915985,

-0.2821202278137207,

0.07039531320333481,

-0.0825507715344429,

-0.24795836210250854,

0.523445725440979,

-0.23455692827701569,

-0.5669832825660706,

-0.47868049144744873,

-0.14011400938034058,

0.5506260395050049,

-0.6079961657524109,

0.183021962642669... | |

Let's say I have the following class:

```

public class Test<E> {

public boolean sameClassAs(Object o) {

// TODO help!

}

}

```

How would I check that `o` is the same class as `E`?

```

Test<String> test = new Test<String>();

test.sameClassAs("a string"); // returns true;

test.sameClassAs(4); // return... | [

0.2197037637233734,

0.10460416972637177,

0.08021486550569534,

-0.1797119379043579,

0.051587577909231186,

-0.13858766853809357,

0.4124068021774292,

-0.3350493311882019,

0.1068863645195961,

-0.46576690673828125,

-0.14647804200649261,

0.4403844475746155,

-0.28415659070014954,

0.11130528897047... | |

has no information as to what `E` is at runtime. So, you need to pass a `Class<E>` to the constructor of Test.

```

public class Test<E> {

private final Class<E> clazz;

public Test(Class<E> clazz) {

if (clazz == null) {

throw new NullPointerException();

}

this.clazz = clazz;

... | [

0.11079150438308716,

0.00062952731968835,

0.39593395590782166,

-0.15306244790554047,

0.17397724092006683,

-0.12450763583183289,

0.37742000818252563,

-0.24566859006881714,

0.08256629854440689,

-0.6944973468780518,

-0.34662118554115295,

0.5360267162322998,

-0.18790161609649658,

0.23868609964... | |

public static <T> Test<T> create(Class<T> clazz) {

return new Test<T>(clazz);

}

public boolean sameClassAs(Object o) {

return o != null && o.getClass() == clazz;

}

}

```

If you want an "instanceof" relationship, use `Class.isAssignableFrom` instead of the `Class` comparison. Note, `E` will... | [

0.3336326479911804,

-0.1809544712305069,

-0.02616364136338234,

-0.07591172307729721,

0.081313356757164,

-0.011596731841564178,

0.2930232286453247,

-0.19224949181079865,

-0.008730903267860413,

-0.42889603972435,

-0.22308066487312317,

0.6042984127998352,

-0.40933308005332947,

0.1536753624677... | |

What is the easiest way to extract the original exception from an exception returned via Apache's implementation of XML-RPC?

It turns out that getting the cause exception from the Apache exception is the right one.

```

} catch (XmlRpcException rpce) {

Throwable cause = rpce.getCause();

if(cause != null) {

... | [

0.048739515244960785,

-0.09718631207942963,

0.08754958212375641,

0.032903041690588,

-0.12108407914638519,

-0.11806792765855789,

0.3160194754600525,

-0.5186727046966553,

-0.1446235179901123,

-0.36548903584480286,

-0.015621534548699856,

0.3584943413734436,

-0.1690387725830078,

0.270835161209... | |

else { throw(rpce); }

}

``` | [

0.23468497395515442,

0.20343215763568878,

-0.18874433636665344,

-0.47365602850914,

0.08857221156358719,

-0.45433923602104187,

0.29112163186073303,

-0.3281005024909973,

0.053597912192344666,

-0.36180007457733154,

-0.3526436686515808,

0.7295058369636536,

-0.20895786583423615,

0.1745092123746... | |

My server already runs IIS on TCP ports 80 and 443. I want to make a centralized "push/pull" Git repository available to all my team members over the Internet.

So I should use HTTP or HTTPS.

But I cannot use Apache because of IIS already hooking up listening sockets on ports 80 and 443! Is there any way to publish a ... | [

0.21117864549160004,

0.17394953966140747,

0.3040820360183716,

-0.13565945625305176,

-0.29320523142814636,

-0.14768175780773163,

0.41342201828956604,

-0.0035170544870197773,

-0.14950019121170044,

-0.7909042835235596,

-0.00016174268967006356,

0.2877700924873352,

-0.15774591267108917,

0.33169... | |

besides using SVN for that branch?

**Bonobo Git Server**

<https://bonobogitserver.com/>

---

**GitAspx** - By Jeremy Skinner

<https://github.com/JeremySkinner/git-dot-aspx/>

<https://github.com/JeremySkinner/git-dot-aspx/downloads>

*Install Instructions*

<https://www.jeremyskinner.co.uk/2010/10/19/gitaspx-0-3-ava... | [

0.16896338760852814,

0.027559803798794746,

0.39769884943962097,

-0.06191331148147583,

0.010937520302832127,

0.13675113022327423,

0.15972860157489777,

0.07607509940862656,

-0.30196648836135864,

-0.14810365438461304,

-0.06559832394123077,

0.21696116030216217,

-0.25262579321861267,

-0.2841117... | |

How can I discover any USB storage devices and/or CD/DVD writers available at a given time (using C# .Net2.0).

I would like to present users with a choice of devices onto which a file can be stored for physically removal - i.e. not the hard drive.

```

using System.IO;

DriveInfo[] allDrives = DriveInfo.GetDrives();

fo... | [

0.062231600284576416,

0.17706908285617828,

0.576192319393158,

0.1291651874780655,

0.3028874099254608,

-0.16485226154327393,

-0.25299760699272156,

0.07140155136585236,

-0.22812210023403168,

-0.7765921950340271,

-0.33434608578681946,

0.8626390099525452,

-0.3142257034778595,

0.208494633436203... | |

I have the situation where i use GIS software which stores the information about GIS objects into separate database table for each type/class of GIS object (road, river, building, sea, ...) and keeps the metadata table in which it stores info about the class name and its DB table.

Those GIS objects of different classe... | [

0.6369734406471252,

0.33519676327705383,

0.0181853249669075,

0.16535687446594238,

-0.36786842346191406,

0.0716724842786789,

-0.059619802981615067,

-0.10130080580711365,

-0.11387808620929718,

-0.7926424741744995,

0.05921647325158119,

0.3580869436264038,

-0.17879456281661987,

0.2969519197940... | |

of the given GIS class.

The problem for me is how to map those objects using NHibernate to explain to the NHibernate when creating a C# GisObject to receive and **use the table name as a parameter** which will be read from the meta table (it can be in two steps, i can manually fetch the table name in first step and th... | [

-0.12912029027938843,

0.07469627261161804,

-0.11920166015625,

0.12075120210647583,

-0.35261911153793335,

0.03435911983251572,

0.3959466814994812,

-0.0324796661734581,

0.052502185106277466,

-0.7139672040939331,

-0.06856728345155716,

0.5814703106880188,

-0.41115039587020874,

0.20464046299457... | |

are created dynamically in the application. Every GIS data of the same type should be a class, but my user has the possibility to get new set of data and put it in the database. I can't know in front which classes my user will have in the application. Therefore, the in-front per-class mapping model doesn't work because... | [

0.4112946093082428,

0.11843275278806686,

0.25140857696533203,

0.40197300910949707,

-0.10948333889245987,

0.2270795851945877,

0.11142924427986145,

-0.06108909845352173,

-0.2869020998477936,

-0.8299843072891235,

-0.2034188061952591,

0.43294885754585266,

-0.2714759409427643,

0.315298855304718... | |

class fetching that query using the

```

string qs = getSession().getNamedQuery(queryName);

```

and use the string replace to inject database name (by replacing some placeholder string) which i will pass as a parameter.

```

qs = qs.replace(":tablename:", tableName);

```

How do you feel about that solution? I kno... | [

-0.13455133140087128,

-0.38067230582237244,

0.4560394287109375,

0.24632102251052856,

-0.07924679666757584,

0.059328388422727585,

-0.06625860929489136,

-0.21426095068454742,

-0.01625289022922516,

-0.6556671857833862,

-0.06344608962535858,

0.7301421165466309,

-0.4276385009288788,

0.191736131... | |

objects. | [

0.20031897723674774,

0.1593267172574997,

0.03764091059565544,

0.45702314376831055,

-0.23505635559558868,

-0.01604924350976944,

-0.2971287667751312,

0.04860246554017067,

-0.26253828406333923,

-0.716981828212738,

-0.569365918636322,

0.1602056920528412,

-0.24588137865066528,

0.347059816122055... | |

Does anyone have a decent algorithm for calculating axis minima and maxima?

When creating a chart for a given set of data items, I'd like to be able to give the algorithm:

* the maximum (y) value in the set

* the minimum (y) value in the set

* the number of tick marks to appear on the axis

* an optional value that ... | [

0.33637669682502747,

-0.22015227377414703,

0.47379249334335327,

0.4076271057128906,

-0.07073015719652176,

0.20949412882328033,

0.07744786143302917,

0.07833319902420044,

-0.23421406745910645,

-0.5274465680122375,

0.30219948291778564,

0.43342217803001404,

-0.038425348699092865,

0.20772430300... | |

ticks)

* the interval size

The ticks should be at a regular interval should be of a "reasonable" size (e.g. 1, 3, 5, possibly even 2.5, but not any more sig figs).

The presence of the optional value will skew this, but without that value the largest item should appear between the top two tick marks, the lowest value... | [

0.2759113311767578,

-0.05379107594490051,

0.3048456907272339,

0.19599191844463348,

-0.12232381105422974,

0.34257668256759644,

0.16193781793117523,

0.004345751833170652,

-0.2715923488140106,

-0.6207369565963745,

0.030595937743782997,

0.1503380835056305,

-0.13134880363941193,

0.0760514736175... | |

and pinching some ideas from there. | [

0.20186354219913483,

0.23533932864665985,

0.013987140730023384,

0.19568610191345215,

-0.22957396507263184,

0.07093046605587006,

-0.07912340015172958,

0.2660807967185974,

-0.11926048249006271,

-0.019419968128204346,

0.23692479729652405,

0.33390605449676514,

0.12814080715179443,

-0.038423091... | |

Is it possible to deploy a native Delphi application with ClickOnce without a stub C# exe that would be used to launch the Delphi application?

The same question applies to VB6, C++ and other native Windows applications.

Personally, I build my own mechanism to kick off self update process when my application timestamp ... | [

0.1854313313961029,

-0.1985362023115158,

0.5918599367141724,

-0.18215534090995789,

-0.31524136662483215,

-0.3381389379501343,

0.05276923626661301,

0.04333309084177017,

-0.3074634075164795,

-0.8143488764762878,

-0.18189039826393127,

0.7628820538520813,

-0.26066818833351135,

0.05775115266442... | |

does is to validate its own file-time with the server.

1.2 If update is required then it will download the updated file to the file named "MyApp-YYYY-MM-DD-HH-MM-SS.exe"

1.3 Then it invoke "MyApp-YYYY-MM-DD-HH-MM-SS.exe" with command argument

```

MyApp-YYYY-MM-DD-HH-MM-SS.exe --update MyApp.EXE

```

1.4 Terminate... | [

0.6326586008071899,

0.20123335719108582,

1.2247182130813599,

-0.04150322079658508,

0.27991145849227905,

-0.15729448199272156,

0.24147668480873108,

-0.3699636161327362,

-0.08965384215116501,

-0.5008091330528259,

-0.09291670471429825,

0.3747332692146301,

-0.1649753600358963,

0.15560729801654... | |

for 7 years and it works well. It could be quite painful to debug when things goes wrong since the steps involve many processes. I suggest you make a lot of trace logging to allow simpler trouble-shooting.

Good Luck | [

0.4025587737560272,

-0.06465122848749161,

0.450299471616745,

0.06988473981618881,

0.4403541684150696,

-0.5258729457855225,

0.6862711906433105,

0.22917583584785461,

-0.2986786663532257,

-0.13276784121990204,

0.19643060863018036,

0.5900022387504578,

0.2473112940788269,

-0.20670269429683685,

... | |

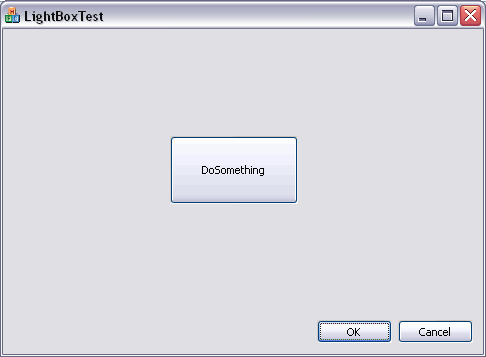

Has anyone implemented Lightbox style background dimming on a modal dialog box in a MFC/non .net app.

I think the procedure would have to be something like:

steps:

1. Get dialog parent HWND or CWnd\*

2. Get the rect of the parent window and draw an overlay with a translucency over that window

3. allow the dialog t... | [

0.45759138464927673,

-0.28773465752601624,

0.5780779123306274,

-0.11670847237110138,

-0.16262033581733704,

-0.3095201551914215,

0.12200617790222168,

-0.12656597793102264,

-0.14846213161945343,

-0.6558975577354431,

-0.06532469391822815,

0.5955625176429749,

-0.4674205482006073,

-0.1265136599... | |

**Some App**:

with a lightbox dialog box

Here's what I did\* based on Brian's links

First create a dialog resource with the properties:

* border **FALSE... | [

0.3463478684425354,

0.05249451845884323,

0.8941377997398376,

-0.14815418422222137,

-0.09643583744764328,

-0.07762737572193146,

0.46099501848220825,

-0.2489154040813446,

-0.3544405400753021,

-0.8536444306373596,

-0.0027440334670245647,

0.4342525601387024,

-0.4663291275501251,

-0.07369580864... | |

size;

GetParent()->GetWindowRect(&rect);

size.top = 0;

size.left = 0;

size.right = rect.right - rect.left;

size.bottom = rect.bottom - rect.top;

SetWindowPos(m_pParentWnd,rect.left,rect.top,size.right,size.bottom,NULL);

HWND hWnd=m_hWnd;

SetWindowLong (hWnd , GWL_EXSTYLE ,GetWindowLo... | [

-0.3362944424152374,

-0.3405154049396515,

0.8486133217811584,

-0.35463449358940125,

0.18006496131420135,

0.14850255846977234,

0.40650105476379395,

-0.583215057849884,

-0.2947802245616913,

-0.34198617935180664,

-0.9107864499092102,

0.5406413674354553,

-0.20131732523441315,

0.170543864369392... | |

if (pSetLayeredWindowAttributes != NULL)

{

/*

* Second parameter RGB(255,255,255) sets the colorkey

* to white LWA_COLORKEY flag indicates that color key

* is valid LWA_ALPHA indicates that ALphablend parameter

* is valid - here 100 is used

*/

pSetLayeredW... | [

-0.02142472192645073,

-0.11628255993127823,

0.24730873107910156,

-0.5868418216705322,

0.27857694029808044,

0.26366740465164185,

0.24094325304031372,

-0.4730031490325928,

0.03117719665169716,

-0.7861875891685486,

-0.388482928276062,

0.3111604154109955,

-0.41678717732429504,

0.16126562654972... | |

RGB(255,255,255), 100, LWA_COLORKEY|LWA_ALPHA);

}

return true;

}

```

then create a small black bitmap in an image editor (say 48x48) and import it as a bitmap resource (in this example IDB\_BITMAP1)

override the WM\_ERASEBKGND message with:

```

BOOL LightBoxDlg::OnEraseBkgnd(CDC* pDC)

{

BOOL bRet = ... | [

-0.07314390689134598,

-0.04435732588171959,

0.5386183857917786,

-0.2858833372592926,

0.24062755703926086,

0.16168835759162903,

0.2585378587245941,

-0.4563025236129761,

-0.21554726362228394,

-0.8245025873184204,

-0.5022014379501343,

0.527262270450592,

-0.45275622606277466,

0.166498005390167... | |

rect.top;

CBitmap cbmp;

cbmp.LoadBitmapW(IDB_BITMAP1);

BITMAP bmp;

cbmp.GetBitmap(&bmp);

CDC memDc;

memDc.CreateCompatibleDC(pDC);

memDc.SelectObject(&cbmp);

pDC->StretchBlt(0,0,size.right,size.bottom,&memDc,0,0,bmp.bmWidth,bmp.bmHeight,SRCCOPY);

return bRet;

}

```

Instantiate it... | [

0.21895048022270203,

-0.1369638741016388,

0.9862149357795715,

-0.3675480782985687,

0.06270571798086166,

-0.03419762849807739,

0.16733090579509735,

-0.4596107304096222,

-0.2327737957239151,

-0.887711763381958,

-0.4508350193500519,

0.4632855951786041,

-0.488700270652771,

0.12206891179084778,... | |

Dlg.ShowWindow(SW_SHOW);

BOOL ret = CDialog::DoModal();

Dlg.ShowWindow(SW_HIDE);

return ret;

}

```

and this results in something **exactly** like my mock up above

\*there are still places for improvment, like doing it without making a dialog box to begin with and some other general tidyups. | [

0.25981828570365906,

-0.015506968833506107,

0.6496609449386597,

-0.3440442681312561,

0.08555296808481216,

-0.33442237973213196,

0.4966162145137787,

-0.2486809343099594,

-0.03927107900381088,

-0.5071366429328918,

-0.4117598235607147,

0.49488216638565063,

-0.37238848209381104,

0.112449891865... | |

Recently I got IE7 crashed on Vista on jar loading (presumably) with the following error:

```

Problem signature:

Problem Event Name: BEX

Application Name: iexplore.exe

Application Version: 7.0.6001.18000

Application Timestamp: 47918f11

Fault Module Name: ntdll.dll

Fault Mo... | [

-0.2558026909828186,

0.13103245198726654,

0.6459340453147888,

-0.2941005825996399,

-0.17782819271087646,

-0.16695335507392883,

0.8385832905769348,

0.2623414993286133,

-0.20485655963420868,

-1.049533724784851,

-0.21215477585792542,

0.5513960123062134,

-0.16738969087600708,

0.187564194202423... | |

00087ba6

Exception Code: c000000d

Exception Data: 00000000

OS Version: 6.0.6001.2.1.0.768.3

Locale ID: 1037

Additional Information 1: fd00

Additional Information 2: ea6f5fe8924aaa756324d57f87834160

Additional Information 3: fd00

Additional Informat... | [

-0.4298892915248871,

0.48523247241973877,

0.14885751903057098,

-0.11536144465208054,

0.2987653315067291,

-0.004430827219039202,

0.5578550696372986,

0.07348133623600006,

-0.307005912065506,

-0.4650344252586365,

-0.25040680170059204,

0.3428822457790375,

-0.349092572927475,

0.3132610619068146... | |

problems [is](http://forums.microsoft.com/MSDN/ShowPost.aspx?PostID=1194672&SiteID=1) [common](http://support.mozilla.com/tiki-view_forum_thread.php?locale=eu&comments_parentId=101420&forumId=1) [for](http://www.eggheadcafe.com/software/aspnet/29930817/bex-problem.aspx) [Vista](http://www.microsoft.com/communities/news... | [

-0.29548195004463196,

0.36538317799568176,

0.1773625612258911,

-0.028642041608691216,

0.2452712059020996,

-0.0018043022137135267,

0.1158561035990715,

0.14955508708953857,

-0.5453082919120789,

-0.5317450761795044,

-0.3201097249984741,

0.345990926027298,

-0.4284818172454834,

0.28527075052261... | |

I am using .Net 2 and the normal way to store my settings. I store my custom object serialized to xml. I am trying to retrieve the default value of the property (but without reseting other properties). I use:

```

ValuationInput valuationInput = (ValuationInput) Settings.Default.Properties["ValuationInput"].DefaultValu... | [

0.40737253427505493,

0.15144558250904083,

0.45842525362968445,

-0.037606194615364075,

-0.2917936146259308,

-0.08749104291200638,

0.12332670390605927,

-0.6214367151260376,

0.0855894535779953,

-0.377663791179657,

0.12324880063533783,

1.1907109022140503,

-0.12631012499332428,

0.11222793161869... | |

the default value myself, I would like to read it the same way as I read the current value: `ValuationInput valuationInput = Settings.Default.ValuationInput;`

BEX=Buffer overflow exception. See <http://technet.microsoft.com/en-us/library/cc738483.aspx> for details. However, c000000d is STATUS\_INVALID\_PARAMETER; the t... | [

0.2912461757659912,

0.06237718090415001,

0.29915493726730347,

0.12994389235973358,

0.24804942309856415,

0.02197282947599888,

-0.2354142814874649,

-0.3182452917098999,

-0.05229765549302101,

-0.32436704635620117,

-0.08914215862751007,

1.0393494367599487,

0.13721437752246857,

0.25772550702095... | |

I have a ASP.Net website that is failing on AJAX postbacks (both with ASP.Net AJAX and a 3rd part control) in IE. FireFox works fine. If I install the website on another machine without .Net 3.5 SP1, it works as expected.

When it fails, Fiddler shows that I'm getting a 405 "Method Not Allowed". The form seems to be po... | [

0.16881594061851501,

0.1910378336906433,

0.7929760813713074,

-0.04318394139409065,

0.10531473159790039,

-0.1428079903125763,

0.8473963737487793,

-0.26372164487838745,

-0.33792567253112793,

-0.5993980169296265,

-0.12365984916687012,

0.5160208940505981,

-0.22206106781959534,

-0.0819438174366... | |

*nested* Foo tags:

```

<NewDataSet>

<Foo> <!-- Foo-Id: 0 -->

<Bar>abcd</Bar>

<Foo>efg</Foo> <!-- Foo-Id: 1, Parent-Id: 0 -->

</Foo>

<Foo> <!-- Foo-Id: 2 -->

<Bar>hijk</Bar>

<Foo>lmn</Foo> <!-- Foo-Id: 3, Parent-Id: 2 -->

</Foo>

</NewDataSet>

```

So this correct... | [

0.06558167934417725,

0.13253574073314667,

0.17067283391952515,

-0.22078217566013336,

0.22642828524112701,

0.3752860128879547,

-0.071263886988163,

-0.7025372982025146,

-0.18387295305728912,

-0.46103131771087646,

-0.19180984795093536,

0.05713093653321266,

-0.2021944373846054,

0.1590853035449... | |

<!-- Rec-Id: 0 -->

<Bar>abcd</Bar>

<Foo>efg</Foo>

</Rec>

<Rec> <!-- Rec-Id: 1 -->

<Bar>hijk</Bar>

<Foo>lmn</Foo>

</Rec>

</NewDataSet>

```

Which should result in:

```

Bar Foo Rec-Id

abcd efg 0

hijk lmn 1

``` | [

-0.001646058284677565,

0.18376430869102478,

0.5409844517707825,

-0.4240977466106415,

0.06571279466152191,

0.0008008884033188224,

0.1504158228635788,

-0.7160705327987671,

-0.14592769742012024,

-0.4720933437347412,

-0.5609149932861328,

0.4786319136619568,

-0.3365975320339203,

-0.003970016259... | |

As part of some error handling in our product, we'd like to dump some stack trace information. However, we experience that many users will simply take a screenshot of the error message dialog instead of sending us a copy of the full report available from the program, and thus I'd like to make some minimal stack trace i... | [

0.04067497327923775,

0.1437082290649414,

0.2845974564552307,

-0.009542366489768028,

0.277602881193161,

-0.12448570132255554,

0.1920538693666458,

-0.21292760968208313,

-0.17157495021820068,

-0.6479324698448181,

0.03349070996046066,

0.5321606397628784,

-0.21479745209217072,

0.224807918071746... | |

mode, FileAccess access, FileShare share, Int32 bufferSize, FileOptions options)

at System.IO.StreamReader..ctor(String path, Encoding encoding, Boolean detectEncodingFromByteOrderMarks, Int32 bufferSize)

at System.IO.StreamReader..ctor(String path)

at LVKWinFormsSandbox.MainForm.button1_Click(Object sender, EventArgs ... | [

-0.01618744432926178,

-0.3972715735435486,

0.674144983291626,

-0.043183594942092896,

0.009548122063279152,

-0.18448734283447266,

0.40086811780929565,

0.06677006930112839,

-0.09852386265993118,

-0.7334692478179932,

-0.5043255686759949,

0.783443808555603,

-0.2684454619884491,

0.1465642303228... | |

like this information from the above text:

```

C:\Dev\VS.NET\Gatsoft\LVKWinFormsSandbox\MainForm.cs:line 36

```

Any advice you can give will be helpful.

You should be able to get a StackTrace object instead of a string by saying

```

var trace = new System.Diagnostics.StackTrace(exception);

```

You can then look a... | [

0.3943440616130829,

0.03188246488571167,

0.46038708090782166,

0.11444808542728424,

0.19023722410202026,

-0.2975756824016571,

0.3155822455883026,

-0.4886753559112549,

-0.4107416868209839,

-0.6445566415786743,

-0.11443652212619781,

0.4134943187236786,

-0.3546604812145233,

-0.0755009055137634... | |

I have a class with a `ToString` method that produces XML. I want to unit test it to ensure it is producing valid xml. I have a DTD to validate the XML against.

**Should I include the DTD as a string within the unit test to avoid a dependency** on it, or is there a smarter way to do this?

If your program validates th... | [

0.6153883934020996,

0.029821552336215973,

0.12493999302387238,

0.2615104615688324,

-0.17324906587600708,

-0.022595198825001717,

0.10117476433515549,

-0.13888709247112274,

0.13970772922039032,

-0.52897047996521,

-0.07023809850215912,

0.5481846928596497,

0.1198534369468689,

0.104646138846874... | |

a string in your code is probably okay.

Otherwise, I'd put it in an external file and have your unit test read it from that file. | [

0.44409915804862976,

-0.05976890027523041,

-0.2571927011013031,

0.2639809846878052,

0.10674773901700974,

-0.12690627574920654,

0.3680708110332489,

0.026634253561496735,

-0.23105353116989136,

-0.2778095602989197,

0.09214707463979721,

0.29385003447532654,

-0.1135137751698494,

-0.137620344758... | |

I have a variable of type `Dynamic` and I know for sure one of its fields, lets call it `a`, actually is an array. But when I'm writing

```

var d : Dynamic = getDynamic();

for (t in d.a) {

}

```

I get a compilation error on line two:

> You can't iterate on a Dynamic value, please specify Iterator or Iterable

How ... | [

0.10586671531200409,

-0.0660921186208725,

0.09779590368270874,

-0.18698559701442719,

-0.22645097970962524,

-0.12613050639629364,

0.2801714241504669,

-0.3875097632408142,

-0.11132117360830307,

-0.36457669734954834,

-0.19684988260269165,

0.7412660121917725,

-0.4833581745624542,

0.09265370666... | |

also change `Dynamic` to the type of the contents of the array. | [

0.11985552310943604,

-0.25924938917160034,

-0.09050393104553223,

-0.05241187661886215,

-0.06935499608516693,

-0.13417328894138336,

0.1454392522573471,

0.16331660747528076,

-0.1684541404247284,

-0.6108902096748352,

-0.37367454171180725,

0.4692836105823517,

-0.49281221628189087,

0.1374494135... | |

I have always made a point of writing nice code comments for classes and methods with the C# xml syntax. I always expected to easily be able to export them later on.

Today I actually have to do so, but am having trouble finding out how. Is there something I'm missing? I want to go *Menu->Build->Build Code Documentatio... | [

0.6811619997024536,

0.29096361994743347,

0.04776685684919357,

-0.01949690468609333,

-0.18677696585655212,

0.004387475084513426,

0.23526981472969055,

0.20094606280326843,

-0.11850419640541077,

-0.617622435092926,

-0.009912930428981781,

0.45318445563316345,

0.08993592858314514,

0.17883971333... | |

[Sandcastle](http://www.microsoft.com/downloads/details.aspx?FamilyId=E82EA71D-DA89-42EE-A715-696E3A4873B2&displaylang=en). | [

-0.7201434373855591,

0.2629416584968567,

0.4145268499851227,

-0.04409480094909668,

0.11555033922195435,

-0.011279557831585407,

0.0353621207177639,

-0.15626955032348633,

-0.6574103236198425,

-0.47873854637145996,

-0.40292099118232727,

0.10038644075393677,

-0.22815372049808502,

0.37509298324... | |

Is the edit control I'm typing in now, with all its buttons and rules freely available for use?

My web project is also .Net based.

It's the [WMD](http://wmd-editor.com/) Markdown editor which is free and seems to be pretty easy to use. Just include the javascript for it and (in the easiest case), it just attaches to ... | [

0.43002817034721375,

-0.053836144506931305,

0.45009055733680725,

0.15483978390693665,

-0.2173769623041153,

-0.37056756019592285,

0.2697053551673889,

-0.05162888765335083,

-0.03856753557920456,

-0.5629062056541443,

0.08491553366184235,

0.4779873192310333,

-0.3718293011188507,

-0.22822661697... | |

license though.

> > > > "now completely free to use. The next release will be open source under an MIT-style license." | [

0.5558156967163086,

0.14653243124485016,

0.23131336271762848,

0.2977273166179657,

0.09410259872674942,

-0.8826165199279785,

0.060789529234170914,

0.33324024081230164,

-0.10724890232086182,

-0.3566690981388092,

-0.24840764701366425,

0.37664246559143066,

-0.17512843012809753,

0.4583889842033... | |

I want to ask how other programmers are producing Dynamic SQL strings for execution as the CommandText of a SQLCommand object.

I am producing parameterized queries containing user-generated WHERE clauses and SELECT fields. Sometimes the queries are complex and I need a lot of control over how the different parts are b... | [

0.4943687617778778,

0.16579219698905945,

-0.1912752091884613,

0.23101013898849487,

-0.2614503800868988,

-0.09952164441347122,

0.44460684061050415,

-0.03723004460334778,

-0.12800222635269165,

-0.35608434677124023,

-0.02722136303782463,

0.5049335360527039,

-0.4999611973762512,

0.173247992992... | |

detail to my previous post:

1. I cannot really template my query due to the requirements. It just changes too much.

2. I have to allow for aggregate functions, like Count(). This has consequences for the Group By/Having clause. It also causes nested SELECT statements. This, in turn, effects the column name used by

3. ... | [

0.024509647861123085,

0.22041726112365723,

0.3352268934249878,

-0.05561521276831627,

-0.24242758750915527,

-0.15721315145492554,

0.3675054907798767,

-0.4088154435157776,

-0.1462072879076004,

-0.4521920084953308,

0.10557045787572861,

0.35006290674209595,

-0.5182065963745117,

0.0311406739056... | |

that I have to use a Temp table and then get the @@rowcount, before selecting my subset, to avoid a second query.

I will show some code (the horror!) so that you guys have an idea of what I'm dealing with.

```

sqlCmd.CommandText = "DECLARE @t Table(ContactId int, ROWRANK int" + declare

+ ")INSERT INTO @t(Contac... | [

0.34872347116470337,

0.13983154296875,

0.34879598021507263,

0.0155633008107543,

0.02621750719845295,

-0.041809238493442535,

0.13427689671516418,

-0.2037522792816162,

0.01838308945298195,

-0.4312278628349304,

0.023668497800827026,

0.5781831741333008,

-0.309063196182251,

0.19691234827041626,... | |

rowrank for each row

+ outerFields

+ " FROM ( SELECT c.id AS ContactID"

+ coreFields

+ from // sometimes different tables are required

+ where + ") T " // user input goes here.

+ groupBy+ " "

+ havingClause //can be empty

+ ";" | [

-0.4394335150718689,

-0.11367882788181305,

0.41251856088638306,

-0.051527854055166245,

0.04246484488248825,

-0.01010774914175272,

-0.06556995213031769,

-0.41647815704345703,

-0.004512658342719078,

-0.501326322555542,

-0.15137575566768646,

0.4216347336769104,

-0.14511218667030334,

-0.022634... | |

+ "select @@rowcount as rCount;" // return 2 recordsets, avoids second query

+ " SELECT " + fields + ",field1,field2" // join onto the other cols n the table

+" FROM @t t INNER JOIN contacts c on t.ContactID = c.id"

+" WHERE ROWRANK BETWEEN " + ((pageIndex * pageSize) + 1) + " AND "

+ ( (pageI... | [

-0.19213367998600006,

0.006111393216997385,

0.8841353058815002,

-0.03259255737066269,

0.0149848572909832,

0.011986830271780491,

-0.25185462832450867,

-0.3484426438808441,

-0.055043578147888184,

-0.8137302398681641,

-0.19421765208244324,

0.40142327547073364,

-0.46964114904403687,

-0.0894123... | |

For purely relational data, the query is much more simple. Each of the section variables are StringBuilders. Where clauses are built like so:

```

// Add Parameter to SQL Command

AddParamToSQLCmd(sqlCmd, "@p" + z.ToString(), SqlDbType.VarChar, 50, ParameterDirection.Input, qc.FieldValue);

// Create SQL code Fragment

wh... | [

-0.044587332755327225,

0.18925024569034576,

0.317260205745697,

-0.009016702882945538,

-0.3200644552707672,

0.20498165488243103,

0.0012157815508544445,

-0.6269230842590332,

0.07678530365228653,

-0.38273245096206665,

-0.2047685980796814,

0.35697153210639954,

-0.23757672309875488,

0.362316191... | |

have the following classes: Query, SelectColumn, Join, WhereCondition, Sort, GroupBy. Each of these classes contains all details relating to that component of the query.

* The last five classes are all related to a Query object. So the Query object itself has collections of each class.

* Each class has a method that ca... | [

-0.04726317524909973,

-0.14930197596549988,

0.3897750675678253,

0.3091638684272766,

-0.2718569040298462,

0.08299645781517029,

-0.1424204409122467,

-0.17990943789482117,

-0.14391233026981354,

-0.47597071528434753,

-0.013418844901025295,

0.10814283788204193,

-0.16742342710494995,

0.218137949... | |

the SQL generation for each individual part of the query originates (and I don't think that there are any big switch statements). And don't forget to use StringBuilder. | [

0.2657760679721832,

-0.08157560974359512,

0.04291844740509987,

0.2850084900856018,

-0.15879835188388824,

-0.5258886814117432,

0.1525811105966568,

0.33257466554641724,

0.0025040251202881336,

-0.38176074624061584,

0.10329511761665344,

0.4903505742549896,

-0.1764868050813675,

0.05790195614099... | |

Does anyone have experience using makefiles for Visual Studio C++ builds (under VS 2005) as opposed to using the project/solution setup. For us, the way that the project/solutions work is not intuitive and leads to configuruation explosion when you are trying to tweak builds with specific compile time flags.

Under Uni... | [

0.5083149075508118,

-0.049579836428165436,

0.04933035746216774,

0.07474513351917267,

0.06660589575767517,

-0.16270147264003754,

0.0324130579829216,

-0.21795518696308136,

-0.4115937352180481,

-0.4763375520706177,

0.29773202538490295,

0.56293123960495,

-0.1324736326932907,

-0.265648603439331... | |

might have several configurations (for example debug, release, and several others). One of my goals on a newly formed project is to have a solution that can have all platform build living together, which makes building and testing code changes easier since you aren't having to open 3 different solutions just to test yo... | [

0.5294851064682007,

-0.07009375095367432,

0.035610996186733246,

0.22354882955551147,

0.1653948277235031,

0.05104135349392891,

0.14151163399219513,

-0.10260240733623505,

-0.2649213373661041,

-0.7674424648284912,

-0.023400912061333656,

0.47562918066978455,

-0.1672428846359253,

-0.01905231736... | |

to a minimum and only maintain a small set of files for all of the different builds that we have to do.

However, I have no experience with makefiles under visual studio and would like to know if others have experiences or issues that they can share.

Thanks.

(post edited to mention that these are C++ builds)

I've fou... | [

0.785301685333252,

-0.015087176114320755,

-0.0810125544667244,

0.07740198820829391,

-0.04967978596687317,

-0.07378896325826645,

0.2026185244321823,

0.010310129262506962,

-0.10743577033281326,

-0.6775975227355957,

0.022232213988900185,

0.7535139322280884,

0.22025087475776672,

-0.21130359172... | |

With multiple configurations, adding an include path means you need to make sure you update every config manually through Visual Studio's fiddly project properties, which can get pretty tedious as a project grows in size.

Projects which use a lot of custom build tools can be easier to manage too, such as if you need t... | [

0.7605779767036438,

-0.24973681569099426,

0.010595330037176609,

0.20980775356292725,

-0.18204374611377716,

-0.057542987167835236,

0.35272109508514404,

-0.3736034333705902,

-0.30140891671180725,

-0.7645736932754517,

0.02270008996129036,

0.5080817341804504,

-0.5335617065429688,

-0.0482113808... | |

make).

Immediate downsides that spring to mind:

* Slower builds: VS isn't particularly quick at invoking external tools, or even working out whether it needs to build a project in the first place.

* Awkward inter-project dependencies: It's fiddly to set up so that a dependee causes the base project to build, and fidd... | [

0.49175727367401123,

-0.3616950809955597,

0.3665018081665039,

0.17628373205661774,

-0.1495288461446762,

0.0074944947846233845,

0.2585921585559845,

-0.2493956983089447,

-0.12744976580142975,

-0.6418524980545044,

-0.10289584845304489,

0.7310385704040527,

-0.04475798085331917,

0.0113231437280... | |

project configurations, but more time coaxing Visual Studio to work properly with it. | [

0.24113821983337402,

-0.19103263318538666,

0.06569507718086243,

0.22074486315250397,

-0.09086266160011292,

0.16900622844696045,

0.09234041720628738,

-0.39453330636024475,

-0.3172057271003723,

-0.9840323328971863,

-0.36151084303855896,

0.4459828734397888,

0.08970081061124802,

-0.14104892313... |

Subsets and Splits

No community queries yet

The top public SQL queries from the community will appear here once available.